Data teams today are flooded with choices when it comes to modern AI and analytics platforms, and one of the most common debates is Dataiku vs Databricks. Both platforms promise to accelerate data-driven decision-making, but they approach the challenge in very different ways. As organizations invest heavily in artificial intelligence, machine learning, and large-scale data infrastructure, the decision between these two leaders has never been more critical.

In this blog, we’ll provide a clear, unbiased breakdown of Dataiku and Databricks—exploring their strengths, weaknesses, pricing models, and ideal use cases—to help both technical teams and business leaders make the right choice.

What is Dataiku?

Company Background: Founded in 2013, Dataiku creates software that helps business teams and data experts work together on analytics projects. The platform solves the problem of making advanced data analysis accessible to people with different skill levels, from business analysts to technical specialists.

Core Focus Areas:

Dataiku emphasizes complete data-to-insights workflows that guide users from raw information to useful business results without requiring extensive programming knowledge. The platform features point-and-click interfaces enabling business teams to participate directly in data projects while providing advanced tools for technical users. This approach helps organizations use their entire workforce for data-driven decision making.

Key Strengths:

The platform excels in making data accessible to everyone, breaking down barriers between technical and business teams through easy-to-use interfaces and shared workspaces. Visual workflow builders allow users to design complex data processing through drag-and-drop tools, making sophisticated analysis available to non-technical staff.

Additionally, automated model building handles technical tasks like choosing the right algorithms and optimizing settings, speeding up the path from data to actionable insights.

What is Databricks?

Company Background: Like Dataiku, Databricks was founded in 2013. Its founders are the original creators of Apache Spark, and the company grew out of research at UC Berkeley’s AMPLab on processing large amounts of data efficiently. The founding team’s expertise in handling massive datasets positioned the company to solve enterprise challenges around data scale and performance.

Platform Architecture:

Databricks operates as a unified data storage and processing platform that combines the performance and reliability of traditional data warehouses with the flexibility of data lakes, an approach often called a lakehouse. It provides one place for storing, processing, and analyzing all types of business information. The enterprise-ready system supports organizations handling enormous amounts of data with demanding performance needs and complex analysis requirements.

Core Strengths:

The platform’s main advantage lies in advanced data processing: optimized tools for data movement, real-time updates, and batch processing that handle enterprise workloads efficiently. Its large-scale analytics and automation capabilities include distributed computing, model management, and production deployment designed for big organizations.

Performance optimization through specialized storage formats, improved processing engines, and intelligent caching keeps results fast even on massive datasets, which makes the platform well suited to organizations with substantial data processing needs and complex analytical requirements.

Core Philosophy: Democratization vs Scale

Dataiku Philosophy: Bringing Analytics to Everyone

Dataiku was built on the principle that valuable business insights shouldn’t be locked away in technical departments. The company believes that the people who understand business problems best – marketing managers, operations analysts, and department heads – should be able to work directly with data to find solutions.

The Democratization Approach

- Empowering business users to build their own predictive models and automation without waiting for technical teams

- Creating citizen data scientists by providing intuitive tools that don’t require programming expertise

- Breaking down barriers between business knowledge and technical implementation

- Offering visual interfaces that make complex data processes understandable to everyone

- Supporting collaborative workflows where business experts can work alongside technical specialists as equals

This philosophy stems from Dataiku’s observation that many great ideas die in the gap between business needs and technical execution. By making analytics tools accessible to business users, they enable faster experimentation and more relevant solutions.

Databricks Philosophy: Unified Enterprise Data Infrastructure

Databricks takes a different approach, focusing on creating the most powerful and scalable data infrastructure possible. Their philosophy centers on the belief that modern enterprises need industrial-strength platforms that can handle massive complexity while maintaining performance and reliability.

The Enterprise Scale Approach

- Unifying data storage and processing by combining the flexibility of data lakes with the performance of traditional data warehouses

- Supporting enterprise engineers with advanced tools for building large-scale data systems

- Handling massive data volumes that would overwhelm simpler platforms

- Optimizing performance for organizations processing terabytes or petabytes of information daily

- Providing enterprise-grade security and governance for sensitive business data

This philosophy recognizes that large organizations often have complex data architectures and need platforms that can integrate seamlessly with existing enterprise systems while providing the scalability to grow.

The Analogy: Data Democratizer vs Data Powerhouse

Think of Dataiku as the “data democratizer” – like providing everyone in your organization with easy-to-use calculators and spreadsheets to solve their own problems. It puts powerful analytical capabilities into the hands of business users who previously had to rely on technical teams for every data request.

Conversely, Databricks serves as the “data powerhouse” – like building a state-of-the-art manufacturing facility that can handle massive production volumes with precision and efficiency. It provides the infrastructure that technical teams need to build sophisticated, enterprise-scale data solutions.

When Each Philosophy Works Best

The democratization approach works particularly well for organizations where business agility and widespread analytics adoption are priorities. Companies that want their marketing, sales, and operations teams to independently explore data and build predictive models benefit from Dataiku’s accessible approach.

The enterprise scale philosophy suits organizations dealing with complex data engineering challenges, massive datasets, or strict performance requirements. Companies with dedicated technical teams who need maximum power and flexibility typically gravitate toward Databricks’ infrastructure-focused approach.

Understanding these philosophical differences helps organizations choose not just a platform, but a partner whose approach aligns with their culture and strategic objectives.

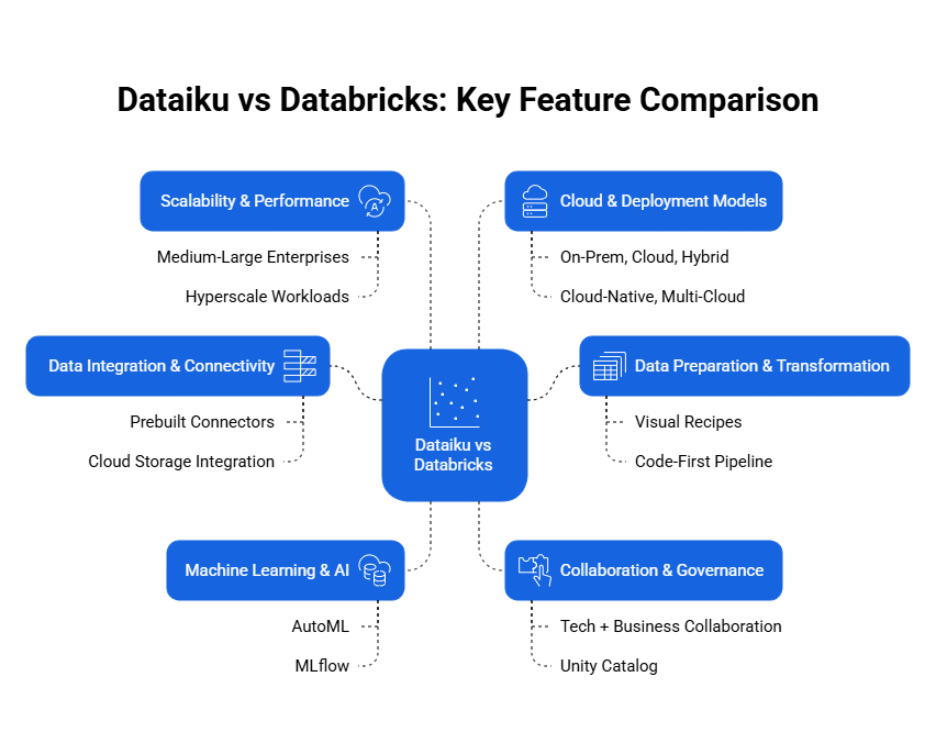

Dataiku vs Databricks: Key Feature Comparison

1. Data Integration & Connectivity

Dataiku’s Integration Approach

- More than 80 prebuilt connectors that work like plug-and-play adapters

- Easy for business analysts and non-technical users to access data from various sources

- Quick connection to popular business applications, databases, and cloud services without coding

- Excels at connecting business teams to familiar tools like Excel, Salesforce, and Google Analytics

- Designed for marketing platforms and common business applications

Databricks’ Integration Strategy

- Deep integration with cloud storage systems and optimized data ingestion for large-scale operations

- Delta Lake technology provides reliable data storage combining best features of data lakes and warehouses

- Auto Loader feature automatically processes new files as they arrive

- Ideal for organizations handling continuous data streams

- Seamless integration with major cloud storage services like Amazon S3, Azure Data Lake Storage, and Google Cloud Storage
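
To make the Auto Loader idea concrete, here is a minimal Python sketch of incremental file ingestion: each run picks up only the files it has not seen before. It is a conceptual illustration built on the standard library, not the Databricks API. The `ingest_new_files` function, the in-memory `checkpoint` set, and the file names are all hypothetical.

```python
import tempfile
from pathlib import Path


def ingest_new_files(landing_dir: Path, processed: set[str]) -> list[str]:
    """Return names of files not seen before and mark them as processed.

    `processed` is a hypothetical in-memory checkpoint; a production system
    would persist this state durably so it survives restarts.
    """
    new_files = sorted(
        p.name for p in landing_dir.iterdir()
        if p.is_file() and p.name not in processed
    )
    processed.update(new_files)
    return new_files


# Demo: two files arrive, then a third arrives later.
with tempfile.TemporaryDirectory() as tmp:
    landing = Path(tmp)
    checkpoint: set[str] = set()

    (landing / "orders_001.csv").write_text("id,amount\n1,10\n")
    (landing / "orders_002.csv").write_text("id,amount\n2,20\n")
    first_batch = ingest_new_files(landing, checkpoint)

    (landing / "orders_003.csv").write_text("id,amount\n3,30\n")
    second_batch = ingest_new_files(landing, checkpoint)

print(first_batch)   # the two files present on the first run
print(second_batch)  # only the file that arrived afterwards
```

The point of the pattern is that the second run does not reprocess the first two files, which is what keeps continuous ingestion cheap as the landing folder grows.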

When Each Excels

- Dataiku works better when business users need quick access to diverse data sources

- Databricks shines for organizations building large-scale data pipelines processing massive information volumes

2. Data Preparation & Transformation

Dataiku’s Visual Approach

- Uses “visual recipes” allowing users to clean and transform data through drag-and-drop interfaces

- Business analysts perform complex data preparation tasks without writing code

- Platform supports SQL, Python, and R for users who prefer coding

- Makes data preparation accessible to a broader range of users within organizations

- Visual interface reduces learning curve for non-technical staff

Databricks’ Code-First Pipeline

- Emphasizes code-based data transformation using Spark SQL and PySpark within interactive notebooks

- Delta Live Tables feature helps engineers build reliable data pipelines with automatic quality monitoring

- Integrates well with popular tools like dbt for data transformation workflows

- Provides maximum flexibility and performance for complex data processing tasks

- Requires technical expertise but offers greater customization options

Key Contrast

- Fundamental difference: drag-and-drop preparation versus code-first pipeline development

- Organizations should choose based on who will be doing the majority of the data preparation work
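
For a feel of what “code-first” preparation looks like in practice, the sketch below cleans and aggregates a small table with a single SQL query. It uses Python’s built-in `sqlite3` module as a stand-in so it runs anywhere; on Databricks, the same kind of query would typically be written in Spark SQL or PySpark inside a notebook. The table, columns, and values are made up for illustration.

```python
import sqlite3

# Hypothetical raw order rows, including a duplicate and a missing amount.
raw_orders = [
    (1, "north", 120.0),
    (1, "north", 120.0),  # exact duplicate of the row above
    (2, "south", None),   # missing amount
    (3, "south", 80.0),
    (4, "north", 50.0),
]

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE raw_orders (order_id INTEGER, region TEXT, amount REAL)"
)
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_orders)

# One query does the whole preparation step:
# drop duplicates, skip rows with no amount, then total per region.
query = """
    SELECT region, COUNT(*) AS orders, SUM(amount) AS revenue
    FROM (SELECT DISTINCT order_id, region, amount FROM raw_orders)
    WHERE amount IS NOT NULL
    GROUP BY region
    ORDER BY region
"""
revenue_by_region = conn.execute(query).fetchall()
conn.close()

for region, orders, revenue in revenue_by_region:
    print(region, orders, revenue)
```

A business analyst would build the same three steps as a chain of visual recipes instead, which is the trade-off in a nutshell: the query is compact and easy to version, but only to someone comfortable reading SQL.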

3. Analytics & Predictive Modeling

Dataiku’s Accessible Analytics

- Automated model building capabilities that create and test multiple predictive models

- Makes it easy for business users to create forecasting solutions

- Prebuilt templates help users quickly implement common use cases like customer churn prediction

- Visual modeling workflow makes it easy to understand and modify models without technical expertise

- Excellent for rapid prototyping and business experimentation

- Demand forecasting and sales prediction capabilities built-in
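
Automated model building can sound like magic, so here is a rough sketch of the core loop: try several candidate models, score each on held-out data, and keep the best one. This is a simplified standard-library illustration of the general idea, not Dataiku’s actual implementation. The three toy forecasters, the `walk_forward_mae` helper, and the sales figures are all assumptions.

```python
from statistics import mean
from typing import Callable, Sequence

# A forecaster takes the history so far and predicts the next value.
Forecaster = Callable[[Sequence[float]], float]

# Hypothetical candidate models, deliberately simple.
CANDIDATES: dict[str, Forecaster] = {
    "last_value": lambda history: history[-1],
    "overall_mean": lambda history: mean(history),
    "moving_avg_3": lambda history: mean(history[-3:]),
}


def walk_forward_mae(model: Forecaster, series: Sequence[float], holdout: int) -> float:
    """Mean absolute error of one-step-ahead forecasts over the last `holdout` points."""
    errors = []
    for t in range(len(series) - holdout, len(series)):
        prediction = model(series[:t])  # the model only sees data before point t
        errors.append(abs(series[t] - prediction))
    return mean(errors)


def select_best(series: Sequence[float], holdout: int = 3) -> tuple[str, dict[str, float]]:
    """Score every candidate on the holdout window and return the lowest-error one."""
    scores = {
        name: walk_forward_mae(model, series, holdout)
        for name, model in CANDIDATES.items()
    }
    best = min(scores, key=scores.get)
    return best, scores


# Made-up monthly sales with a steady upward trend.
monthly_sales = [100, 102, 104, 106, 108, 110, 112, 114]
best_name, scores = select_best(monthly_sales)
print(best_name, scores)
```

Real AutoML tools search over far richer model families and hyperparameters, but the selection principle is the same: the winner is whichever candidate performs best on data it was not fitted to.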

Databricks’ Advanced Analytics Infrastructure

- MLflow for comprehensive experiment tracking and model management

- Enables data science teams to manage complex analytics projects at scale

- Integration with cutting-edge technologies for natural language processing

- Designed for organizations building custom predictive solutions

- Supports training large language models and advanced automation applications at enterprise scale

- Requires dedicated technical teams for implementation

Application Focus

- Dataiku excels at democratizing analytics for business teams

- Databricks provides infrastructure needed for advanced custom model development and large-scale operations

4. Collaboration & Governance

Dataiku’s Cross-Functional Collaboration

- Breaks down silos between technical and business teams through collaborative workspaces

- Everyone can contribute their expertise using tools appropriate to their skill level

- Business users work alongside data scientists on shared projects

- Creates an environment where domain knowledge meets technical capability

- Shared project spaces with role-based access and permissions

Databricks’ Enterprise Governance

- Unity Catalog provides comprehensive governance solution managing data lineage and permissions

- Access controls across entire organization with detailed tracking

- Helps keep sensitive data secure and supports compliance with industry regulations

- Focuses on enterprise-grade security and audit capabilities

Comparison

- Dataiku focuses on “ease of use for many people”

- Databricks emphasizes “rigorous governance at scale”

- Choice depends on priority: widespread collaboration versus strict enterprise controls

5. Scalability & Performance

Dataiku’s Scaling Characteristics

- Scales effectively for medium to large enterprises

- Not designed for hyperscale computing environments

- Works well for organizations processing significant amounts of data

- May face limitations with extremely large datasets or real-time processing

- Provides solid performance for most business analytics needs

Databricks’ Hyperscale Architecture

- Built specifically for hyperscale workloads processing petabytes of data

- Handles real-time streaming applications with high performance

- Fortune 500 companies rely on it for mission-critical real-time data pipelines

- Can process millions of transactions per second in large deployments

- Serves organizations with the most demanding performance requirements

Performance Reality

- Organizations processing moderate data volumes will find Dataiku sufficient

- Those with massive datasets or strict performance requirements typically need Databricks’ industrial-strength architecture

6. Cloud & Deployment Flexibility

Dataiku’s Deployment Options

- Flexible deployment models including on-premises installations

- Cloud deployments and hybrid configurations available

- Appeals to organizations with specific security requirements

- Allows companies to maintain control over data location and security protocols

- Preserves existing infrastructure investments

Databricks’ Cloud-Native Approach

- Operates as a cloud-native platform across multiple cloud providers (AWS, Azure, Google Cloud)

- Architecture optimized for cloud scalability and cloud-native services

- Takes full advantage of cloud pricing models and latest innovations

- Lets organizations leverage cost efficiencies and automatic scaling

- Requires commitment to a cloud-first strategy

Strategic Difference

- Key difference: hybrid flexibility versus cloud-first scale

- Organizations with strict on-premises requirements may prefer Dataiku’s flexibility

- Those ready to embrace cloud computing benefit from Databricks’ cloud-optimized architecture

Dataiku vs Databricks: Pricing Models Comparison

Dataiku: Predictable Subscription Structure

How It Works

- Pay-per-user and computing power: Organizations pay based on the number of team members using the platform plus the computing resources they need

- Fixed monthly or annual fees: Costs stay the same each billing period, determined by team size and technical requirements

- Budget-friendly planning: Finance teams can easily predict and allocate technology expenses without surprises

Key Benefits

- Consistent monthly costs: Bills remain stable regardless of how much the platform is actually used during that period

- Simple budgeting: Organizations know their exact expenses upfront, making long-term planning straightforward

- No usage surprises: Teams can use the platform extensively without worrying about unexpected charges

Best Fit

- Medium-sized companies: Works especially well for organizations with stable team sizes and steady data processing requirements

- Traditional budgeting: Aligns perfectly with standard enterprise financial planning processes

- Growing businesses: Provides cost certainty for companies expanding their data capabilities

Databricks: Usage-Based Consumption Model

How It Works

- Pay for what you use: Customers are charged only for the actual computing resources and storage they consume

- Databricks Unit system: Costs are calculated using special units that represent the processing power used during data work

- Real-time billing: Charges fluctuate based on actual platform activity and resource consumption

Key Benefits

- Cost optimization: Organizations pay more during busy periods and less during quiet times

- Resource flexibility: Companies can scale their usage up or down based on current needs

- No waste: Ensures businesses only pay for resources they actually utilize

Potential Challenges

- Unpredictable bills: Monthly costs can vary significantly based on usage patterns

- Budget complexity: Requires more sophisticated financial planning due to variable expenses

Best Fit

- Large enterprises: Benefits organizations with massive, variable workloads and seasonal processing patterns

- Dynamic businesses: Perfect for companies that experience significant ups and downs in their data processing needs

- Cost-conscious operations: Allows organizations to optimize expenses by scaling resources based on demand

Who Should Choose Which Model

Choose Dataiku If You Are:

- Small to medium-sized business: Need budget certainty and simplified cost management

- Planning-focused organization: Prefer knowing exact monthly costs rather than dealing with variable billing

- Stable operations: Have consistent team sizes and predictable data processing requirements

Choose Databricks If You Are:

- Large enterprise: Have fluctuating data needs and can leverage economies of scale

- Variable workload business: Experience seasonal patterns or significant changes in data processing demands

- Resource optimization focused: Want to scale costs directly with actual usage and business cycles
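
To see how the two billing models behave over a year, the short Python sketch below compares a flat per-seat subscription with a bill based on Databricks Units (DBUs). Every number here is a hypothetical placeholder rather than a vendor list price, and the calculation ignores storage, cloud infrastructure charges, and negotiated discounts.

```python
# All figures are hypothetical placeholders, not vendor list prices.
SEATS = 20
PRICE_PER_SEAT_PER_MONTH = 500.0  # assumed flat subscription price per user
RATE_PER_DBU = 0.50               # assumed price per Databricks Unit (DBU)

# Assumed DBUs consumed each month by a seasonal workload (peak mid-year).
monthly_dbus = [8_000, 8_000, 10_000, 12_000, 20_000, 30_000,
                30_000, 20_000, 12_000, 10_000, 8_000, 8_000]

# Subscription model: the same bill every month, regardless of usage.
subscription_bills = [SEATS * PRICE_PER_SEAT_PER_MONTH] * len(monthly_dbus)

# Consumption model: each bill is DBUs consumed times the rate per DBU.
usage_bills = [dbus * RATE_PER_DBU for dbus in monthly_dbus]

# Monthly DBU volume at which both models cost the same.
break_even_dbus = SEATS * PRICE_PER_SEAT_PER_MONTH / RATE_PER_DBU

print(f"Subscription: {sum(subscription_bills):,.0f} per year, "
      f"every month {subscription_bills[0]:,.0f}")
print(f"Consumption:  {sum(usage_bills):,.0f} per year, "
      f"monthly range {min(usage_bills):,.0f} to {max(usage_bills):,.0f}")
print(f"Break-even:   {break_even_dbus:,.0f} DBUs per month")
```

With these made-up inputs, the consumption model is cheaper over the year (88,000 versus 120,000), but individual bills swing between 4,000 and 15,000, and the two peak months cost more than the flat subscription would. That is the trade-off finance teams need to weigh: lower expected cost against less predictable monthly invoices.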

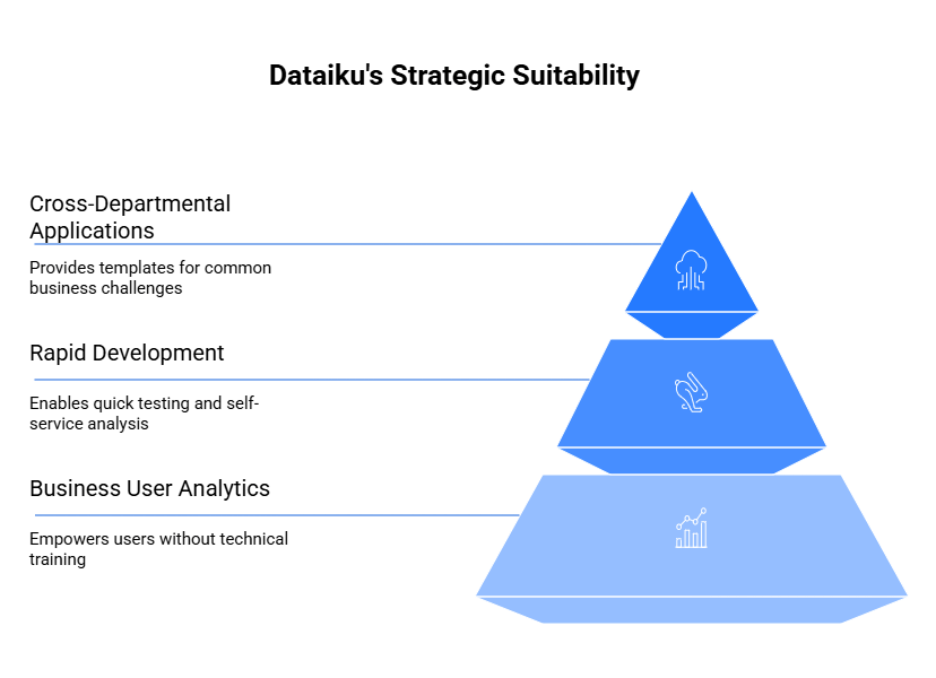

Dataiku vs Databricks: Ideal Use Cases

Dataiku Best Suited For

Business User Analytics Projects: Dataiku excels in business-led data projects where professionals need to analyze information without extensive technical training. The platform empowers marketing managers, financial analysts, and operations staff to build predictive tools and generate insights using simple visual interfaces rather than complex programming.

Rapid Development and Self-Service: The platform also suits quick testing and self-service analysis scenarios where teams need fast results. Business users can experiment with different approaches, test ideas, and build working solutions in days rather than weeks, enabling quick decision-making across departments.

Cross-Departmental Business Applications: Dataiku serves retail, finance, marketing, and operations teams particularly well, providing ready-made templates and workflows for common business challenges. The platform addresses real-world scenarios like customer grouping, sales forecasting, inventory management, and performance tracking without requiring technical expertise.

Practical Example: A typical use case involves a marketing team identifying customers likely to leave with minimal programming. Marketing analysts can upload customer information, use pre-built templates to spot at-risk customers, and create automated retention campaigns – all through visual workflows without writing code.

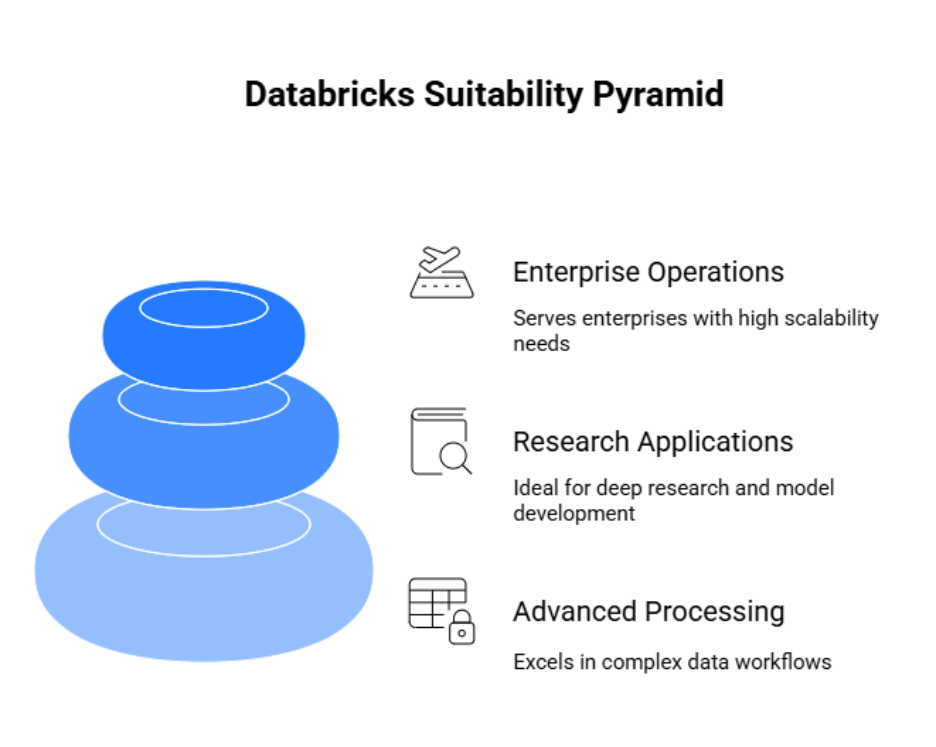

Databricks Best Suited For

Advanced Technical Processing: Databricks excels in complex data workflows, real-time information processing, and big data analysis requiring sophisticated technical capabilities. The platform handles scenarios where organizations process enormous datasets in near real time, requiring optimized performance and advanced engineering expertise.

Research and Development Applications: The platform is also well suited to deep research projects including advanced model development, experimental analysis, and cutting-edge automation. Data science teams use Databricks for research requiring significant computing power and technical flexibility.

Enterprise-Scale Technical Operations: Databricks serves enterprises with dedicated data engineering teams and substantial technology budgets who need maximum performance and scalability. These organizations typically have technical staff capable of using the platform’s advanced capabilities for complex business-critical applications.

Practical Example: A global banking institution running massive risk analysis systems represents a typical Databricks use case. The bank processes enormous amounts of transaction data, regulatory information, and market data to assess portfolio risks, requiring both technical expertise and substantial computing resources that Databricks provides efficiently.

Dataiku vs Databricks: Feature Comparison

| Feature | Dataiku | Databricks |
| --- | --- | --- |
| Main Purpose | AI and analytics for everyone | Data engineering with AI tools |
| Interface | Visual tools plus coding | Mostly code and notebooks |
| Machine Learning | Built-in templates and AutoML | Custom ML with MLflow and LLMOps |
| Data Governance | Team collaboration and clear ownership | Unity Catalog with data tracking |
| Scale | Good for mid-size companies | Built for huge enterprise setups |
| Best Fit | Business analysts and citizen data scientists | Data engineers and large AI teams |

Integration with the Modern Data Stack

Dataiku connects well with business intelligence tools. It works smoothly with Power BI and Tableau, making it easy for analysts to build dashboards from their models. The platform focuses on getting insights to business users quickly.

Databricks goes deeper into the engineering side. It connects with dbt for data transformation, Airflow for workflow management, and Kafka for real-time data streaming. Delta Lake provides reliable data storage that handles both batch and streaming data.

For AI applications, the two platforms take different approaches. Databricks offers MosaicML for training large language models and Lakehouse AI for building AI apps directly on your data. This setup works well for teams that want to build custom AI solutions from scratch.

Dataiku takes a simpler path. It provides AutoML workflows that guide you through model building, plus pre-built connectors for common AI tasks. This approach helps business teams get AI projects running without deep technical expertise.

The choice depends on your team’s skills and goals. Pick Databricks if you have strong engineering resources and want to build custom solutions. Choose Dataiku if you need business-friendly tools that work with existing BI workflows.

Dataiku vs Databricks: Strengths and Weaknesses

Dataiku

What it does well: Dataiku lets people build smart models without coding. Business analysts can drag and drop to create predictions, while tech people can still write code when they want to. Plus, you can run it on your own servers, in the cloud, or mix both approaches.

Where it falls short: However, it struggles with massive datasets that need serious computing power. Also, the chatbot and text generation features aren’t as strong as what you’ll find elsewhere. So if you’re building complex text apps, you might hit walls.

Databricks

What it does well: Databricks processes huge amounts of data fast. It has solid tools for building prediction models and text generation systems, including ways to train your own chatbots. Everything runs in one place, so you don’t need to move data around.

Where it falls short: But you need coding skills to use it well. The pricing gets confusing because costs depend on how much computing power, storage, and features you use. Business users often find it too technical.

The Bottom Line

Pick Dataiku if you want non-technical people building models quickly. It works well for companies that need flexibility in where they run their data work.

On the other hand, choose Databricks if you have big data problems and technical teams. It’s built for companies that want to create custom solutions and can handle the complexity.

Dataiku vs Databricks: Future Outlook

Dataiku is working to make text generation and chatbot building as simple as their current drag-and-drop tools. They want business users to create smart text apps without needing a computer science degree. This means more templates, visual workflows, and pre-built connections to popular text generation services.

Databricks, meanwhile, is doubling down on being the go-to platform for companies that want to build their own chatbots and text systems from scratch. They’re adding more tools for training custom models and managing them at scale. Plus, they’re making their data storage even faster and more reliable.

The Bigger Picture

Both companies are moving toward the same goal but taking different paths. They want to become the main platform where enterprises build all their smart applications.

However, they’re approaching this differently. Dataiku focuses on making advanced tech accessible to regular business people. They believe the future belongs to companies where marketing teams can build their own chatbots and sales teams can create their own prediction tools.

Databricks thinks the future belongs to companies with strong technical teams who want full control. They’re betting that enterprises will want to build custom solutions rather than use pre-made templates.

Kanerika: Powering Business Success with the Best of AI and ML Solutions

At Kanerika, we specialize in agentic AI and AI/ML solutions that empower businesses across industries like manufacturing, retail, finance, and healthcare. Our purpose-built AI agents and custom Gen AI models address critical business bottlenecks, driving innovation and elevating operations. With our expertise, we help businesses enhance productivity, optimize resources, and reduce costs.

Our AI solutions offer capabilities such as faster information retrieval, real-time data analysis, video analysis, smart surveillance, inventory optimization, sales and financial forecasting, arithmetic data validation, vendor evaluation, and smart product pricing, among many others.

Through our strategic partnership with Databricks, we leverage their powerful platform to build and deploy exceptional AI/ML models that address unique business needs. This collaboration ensures that we provide scalable, high-performance solutions that accelerate time-to-market and deliver measurable business outcomes. Partner with Kanerika today to unlock the full potential of AI in your business.

FAQs

What is the main difference between Dataiku and Databricks?

The main difference between Dataiku and Databricks lies in their core focus. Dataiku is an end-to-end data science platform designed for collaborative analytics and machine learning workflows, emphasizing visual interfaces for diverse teams. Databricks is a unified analytics platform built on Apache Spark, optimized for large-scale data engineering and lakehouse architecture. While Dataiku prioritizes accessibility for business analysts, Databricks excels at high-performance distributed computing for data engineers. Kanerika helps enterprises evaluate both platforms against their specific analytics requirements—schedule a consultation to determine the right fit.

Is Dataiku similar to Databricks?

Dataiku and Databricks share overlap in enabling data analytics and machine learning but differ significantly in approach. Dataiku provides a visual, low-code environment suited for collaborative data science across technical and non-technical users. Databricks focuses on scalable data engineering with its Spark-based lakehouse platform, targeting engineers who need distributed processing power. Both platforms support ML workflows, yet their architectures serve different organizational needs. Organizations often use them together for complementary capabilities. Kanerika’s data platform specialists can assess which solution aligns with your team’s skill set and infrastructure—connect with us today.

Who are Dataiku's main competitors?

Dataiku’s main competitors include Databricks, Alteryx, DataRobot, H2O.ai, and SAS. Each platform addresses collaborative data science and machine learning workflows differently. Databricks competes on scalable lakehouse analytics, while Alteryx targets self-service analytics automation. DataRobot and H2O.ai focus heavily on automated machine learning capabilities. SAS remains a legacy competitor in enterprise analytics. Choosing between these data science platforms depends on your team composition, existing infrastructure, and ML maturity. Kanerika helps enterprises navigate the competitive landscape and implement the right analytics stack—reach out for an unbiased platform assessment.

What is the biggest competitor of Databricks?

Snowflake stands as Databricks’ biggest competitor in the enterprise data platform space. Both platforms compete for lakehouse and cloud data warehouse dominance, though they approach the market differently. Databricks emphasizes Apache Spark-based analytics and ML engineering, while Snowflake prioritizes ease of use and SQL-based data warehousing. Microsoft Fabric and Google BigQuery also challenge Databricks in unified analytics. AWS with its native services presents additional competition. Kanerika implements both Databricks and Snowflake solutions and can help you evaluate which unified data platform best serves your enterprise goals.

Which platform is better for non-technical users?

Dataiku is better suited for non-technical users compared to Databricks. Dataiku’s visual interface enables business analysts and citizen data scientists to build machine learning workflows without writing extensive code. Its drag-and-drop functionality simplifies data preparation, feature engineering, and model deployment. Databricks, while powerful, requires stronger programming skills in Python, SQL, or Scala for effective use. Organizations seeking to democratize analytics across business teams typically find Dataiku more accessible. Kanerika implements both platforms and can design training programs that accelerate adoption for your specific user personas—let’s discuss your team’s needs.

Can Dataiku and Databricks be used together?

Dataiku and Databricks integrate effectively and many enterprises use them together. Dataiku can connect to Databricks as a compute backend, allowing data scientists to leverage Databricks’ Spark clusters for processing while using Dataiku’s collaborative visual interface. This combination provides Databricks’ scalable data engineering with Dataiku’s accessible ML workflow management. The integration enables teams to build models in Dataiku and execute at scale on Databricks infrastructure. Kanerika architects integrated data stacks that maximize both platforms’ strengths—contact us to design a unified analytics architecture for your organization.

How do Dataiku and Databricks handle scalability?

Databricks handles scalability through its native Apache Spark architecture, enabling distributed computing across massive datasets with auto-scaling clusters. It excels at petabyte-scale data engineering and real-time analytics workloads. Dataiku approaches scalability differently by offloading computation to external engines like Spark, Kubernetes, or cloud platforms while managing orchestration through its interface. Dataiku scales collaboration across teams; Databricks scales compute power. For enterprises processing large data volumes, Databricks offers superior raw performance. Organizations prioritizing team scalability find Dataiku valuable. Kanerika helps enterprises design scalable data architectures using both platforms—schedule a technical discussion with our team.

How do pricing models compare between Dataiku and Databricks?

Dataiku and Databricks use different pricing models that significantly affect total cost. Dataiku typically charges per-user licensing with tiered editions based on features and deployment options. Databricks uses consumption-based pricing calculated in Databricks Units (DBUs) tied to compute usage and cluster runtime. Dataiku costs remain more predictable with user-based fees, while Databricks costs scale with workload intensity. Deployment options also affect pricing: Dataiku can be self-managed on-premises or in the cloud, while Databricks runs on the major public clouds (AWS, Azure, and Google Cloud) rather than on-premises. Enterprise agreements vary substantially based on scale and commitments. Kanerika provides TCO analysis comparing both platforms against your usage patterns. Request a pricing assessment to understand true implementation costs.
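To make the difference between the two pricing models concrete, here is a minimal Python sketch. Every figure in it (user count, fee per user, units per cluster-hour, unit price, cluster hours) is a hypothetical placeholder, not a quoted price from either vendor, and the class names are made up for illustration. It also ignores cloud infrastructure charges and negotiated discounts.

```python
from dataclasses import dataclass


@dataclass
class SeatLicense:
    """Per-user licensing: cost depends only on head count."""
    users: int
    annual_fee_per_user: float  # hypothetical figure, not a vendor price

    def annual_cost(self) -> float:
        return self.users * self.annual_fee_per_user


@dataclass
class ConsumptionPlan:
    """Usage-based billing: cost depends on how long clusters run."""
    units_per_cluster_hour: float  # hypothetical consumption rate
    price_per_unit: float          # hypothetical unit price

    def annual_cost(self, cluster_hours: float) -> float:
        return cluster_hours * self.units_per_cluster_hour * self.price_per_unit


seats = SeatLicense(users=20, annual_fee_per_user=5_000.0)
usage = ConsumptionPlan(units_per_cluster_hour=4.0, price_per_unit=0.5)

seat_cost = seats.annual_cost()         # unchanged by workload
light_cost = usage.annual_cost(10_000)  # a light year of cluster usage
heavy_cost = usage.annual_cost(80_000)  # a heavy year of cluster usage

print(f"Per-user licence:            {seat_cost:,.0f}")
print(f"Consumption, light workload: {light_cost:,.0f}")
print(f"Consumption, heavy workload: {heavy_cost:,.0f}")
```

With these made-up inputs the per-user cost stays flat, while the consumption cost falls below it for the light workload and rises above it for the heavy one. Swapping in your own seat counts and cluster hours shows where the two models cross over for your team.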

Which should my data team choose — Dataiku or Databricks?

Your data team should choose based on composition and primary use cases. Select Databricks if your team consists mainly of data engineers working on large-scale ETL pipelines, lakehouse architecture, and Spark-based analytics. Choose Dataiku if your team includes business analysts and data scientists who need collaborative ML workflows with visual tools. Teams handling both heavy data engineering and accessible analytics often implement both platforms together. Consider existing infrastructure, cloud partnerships, and skill gaps when deciding. Kanerika evaluates organizational needs and recommends the optimal platform strategy—book a discovery session to align technology with your team’s capabilities.

Is Dataiku an ETL tool?

Dataiku includes ETL capabilities but is not primarily an ETL tool. It functions as a comprehensive data science and analytics platform that incorporates data preparation, transformation, and pipeline orchestration alongside machine learning and visualization features. Dataiku’s visual recipes enable users to build data transformation workflows without extensive coding, making it useful for ETL tasks within broader analytics projects. However, dedicated ETL tools like Informatica or Talend offer deeper integration features. For complex enterprise data integration, specialized tools may complement Dataiku’s strengths. Kanerika implements end-to-end data pipelines combining Dataiku with optimal integration tools—contact us to design your architecture.

Is Databricks a database or ETL tool?

Databricks is neither a traditional database nor a dedicated ETL tool—it functions as a unified analytics platform built on lakehouse architecture. It combines data lake storage flexibility with data warehouse performance, supporting both structured and unstructured data. Databricks handles ETL workloads through Apache Spark but offers far more, including machine learning, real-time streaming, and collaborative notebooks. Delta Lake provides ACID transactions typically associated with databases. This unified approach eliminates separate systems for data engineering and analytics. Kanerika specializes in Databricks lakehouse implementations—reach out to explore how unified analytics can modernize your data infrastructure.

What is Dataiku primarily used for?

Dataiku is primarily used for collaborative data science and machine learning workflow management. Organizations deploy Dataiku to enable cross-functional teams—including data scientists, analysts, and business users—to build, deploy, and monitor ML models together. Its visual interface supports data preparation, feature engineering, model training, and production deployment without requiring extensive coding. Dataiku excels at operationalizing analytics projects, providing governance and reproducibility across the ML lifecycle. Industries like finance, retail, and healthcare use it for predictive analytics and automation initiatives. Kanerika implements Dataiku for enterprises seeking democratized data science—let’s discuss your ML operationalization goals.

What is a major weakness of Databricks?

A major weakness of Databricks is its steep learning curve for non-technical users. The platform requires proficiency in Python, SQL, or Scala, making it less accessible for business analysts without engineering backgrounds. Additionally, Databricks’ consumption-based pricing can become unpredictable and expensive for organizations with fluctuating or poorly optimized workloads. Cluster management and performance tuning demand experienced administrators, so organizations without strong data engineering teams may struggle to extract full value. Cost governance and user adoption require careful planning. Kanerika helps enterprises overcome Databricks adoption challenges through training, optimization, and managed services. Connect with us to maximize your platform investment.

What are the alternatives to Databricks?

Key alternatives to Databricks include Snowflake, Microsoft Fabric, Google BigQuery, and Amazon Redshift for unified analytics workloads. Snowflake offers simpler SQL-based analytics with strong data sharing capabilities. Microsoft Fabric provides end-to-end integration within the Microsoft ecosystem. BigQuery excels at serverless analytics with automatic scaling. For teams that prefer to run open-source Spark themselves or through a managed service, Apache Spark on Kubernetes or Amazon EMR delivers similar distributed computing. Dataiku serves as an alternative for teams prioritizing accessible ML workflows over raw compute power. Each platform suits different organizational needs and technical environments. Kanerika evaluates alternatives against your requirements. Request a platform comparison to identify your optimal solution.

Which platform offers better AI and ML capabilities?

Databricks offers stronger AI and ML capabilities for advanced practitioners, while Dataiku provides better accessibility for broader teams. Databricks excels with MLflow for experiment tracking, distributed training on Spark clusters, and deep integration with popular ML frameworks. Its Mosaic AI features support large language model development. Dataiku simplifies ML workflows with visual model building, AutoML, and one-click deployment—ideal for operationalizing models quickly. Both platforms support the full ML lifecycle, but Databricks suits organizations pushing technical boundaries while Dataiku accelerates time-to-value for standard use cases. Kanerika implements AI solutions on both platforms—consult with us to match capabilities to your ML ambitions.

Which big companies use Databricks?

Major enterprises using Databricks include Shell, Comcast, Regeneron, CVS Health, and ABN AMRO. Technology and media companies like Block, Atlassian, and Condé Nast leverage Databricks for large-scale analytics. Financial institutions including HSBC and Nationwide deploy it for risk analytics and fraud detection. Retailers like Walgreens and pharmaceutical companies like AstraZeneca use Databricks for data engineering and ML initiatives. These organizations chose Databricks for its scalable lakehouse architecture and unified analytics capabilities handling petabyte-scale workloads. Kanerika delivers enterprise Databricks implementations following proven patterns from global deployments. Talk to us about achieving similar outcomes for your organization.

Why is Dataiku so slow?

Dataiku performance issues typically stem from infrastructure configuration rather than inherent platform limitations. Common causes include undersized compute resources, unoptimized data pipelines processing large datasets locally, or misconfigured connections to external processing engines. Dataiku performs best when computation is pushed to scalable backends like Spark, Kubernetes, or cloud services. Running complex transformations on local execution engines creates bottlenecks. Memory allocation, dataset sampling strategies, and recipe optimization significantly impact speed. Proper architecture design eliminates most slowness complaints. Kanerika optimizes Dataiku deployments for enterprise performance—contact us to diagnose and resolve your platform speed issues.
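The point about pushing computation to a backend instead of processing data locally can be illustrated with a small, tool-agnostic Python sketch. It does not use Dataiku or its API. As a stand-in, it assumes an in-memory SQLite database plays the role of the external engine, and the `orders` table and its contents are invented for the example.

```python
import sqlite3

# In-memory SQLite stands in for an external processing engine (an assumption
# made purely for illustration).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, amount INTEGER)")
rows = [("north", i) for i in range(1000)] + [("south", i) for i in range(500)]
conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)

# Local execution: pull every row out of the engine, then aggregate in Python.
all_rows = conn.execute("SELECT region, amount FROM orders").fetchall()
local_totals = {}
for region, amount in all_rows:
    local_totals[region] = local_totals.get(region, 0) + amount

# Pushed-down execution: the engine aggregates and returns one row per region.
pushed_rows = conn.execute(
    "SELECT region, SUM(amount) FROM orders GROUP BY region"
).fetchall()
pushed_totals = dict(pushed_rows)

print(f"Rows transferred, local aggregation:  {len(all_rows)}")
print(f"Rows transferred, pushed aggregation: {len(pushed_rows)}")
print(f"Same result: {local_totals == pushed_totals}")
```

Both approaches produce the same totals, but the pushed-down version moves two rows instead of 1,500. On real datasets that gap in data movement and local memory use is one reason locally executed transformations tend to become the bottleneck.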

Is Databricks a Palantir competitor?

Databricks competes with Palantir in enterprise data analytics, though their approaches differ substantially. Palantir focuses on data integration and operational intelligence with products like Foundry, targeting government and defense sectors heavily. Databricks emphasizes scalable lakehouse analytics and ML engineering for broader enterprise markets. Both platforms enable large-scale data processing and analytics, creating competitive overlap in commercial sectors. Palantir offers more pre-built operational applications; Databricks provides flexible infrastructure for custom analytics solutions. Organizations evaluate them based on industry fit and build-versus-buy preferences. Kanerika implements both Databricks and alternative enterprise platforms—reach out to determine which architecture serves your analytical objectives.