Enterprise AI sits behind a wall most teams keep building higher. Large language models reason well, write well, and write code well, yet they cannot reach the systems where work happens: your CRM, your data warehouse, your ticketing tool, your SharePoint folder. Teams paper over this with custom function-calling code, brittle plugins, and one-off integrations that break when models change. Anthropic released the Model Context Protocol in November 2024 to fix this directly, with an open standard for how AI applications connect to tools and data. Adoption has since spread to OpenAI, Google DeepMind, and Microsoft. In this article, we’ll cover what MCP is, how it works, who is using it, and how to evaluate it for an enterprise rollout.

Key Takeaways

- MCP is an open protocol from Anthropic that standardizes how AI applications connect to external tools, data, and services using JSON-RPC 2.0.

- It replaces brittle one-off integrations with a single client and server model that any compliant AI host can call.

- Servers expose three primitives, tools (actions), resources (data), and prompts (templates), to any connected client.

- MCP is being adopted across the major AI vendors and platforms, including OpenAI, Google DeepMind, Microsoft Copilot Studio, GitHub, Replit, and Block, which makes vendor lock-in less of an issue.

- It does not replace RAG, APIs, or agent-to-agent protocols; it sits alongside them and handles a different layer of the stack.

- For enterprises, MCP makes context-aware AI agents practical without rebuilding integrations every time a model or vendor changes.

What is Model Context Protocol (MCP)?

Model Context Protocol is an open standard, released by Anthropic in November 2024, that defines how AI applications connect to the external tools and data sources they need to do useful work. It plays a role similar to what HTTP did for the web or USB did for peripherals, a shared communication contract that any compliant client and server can use.

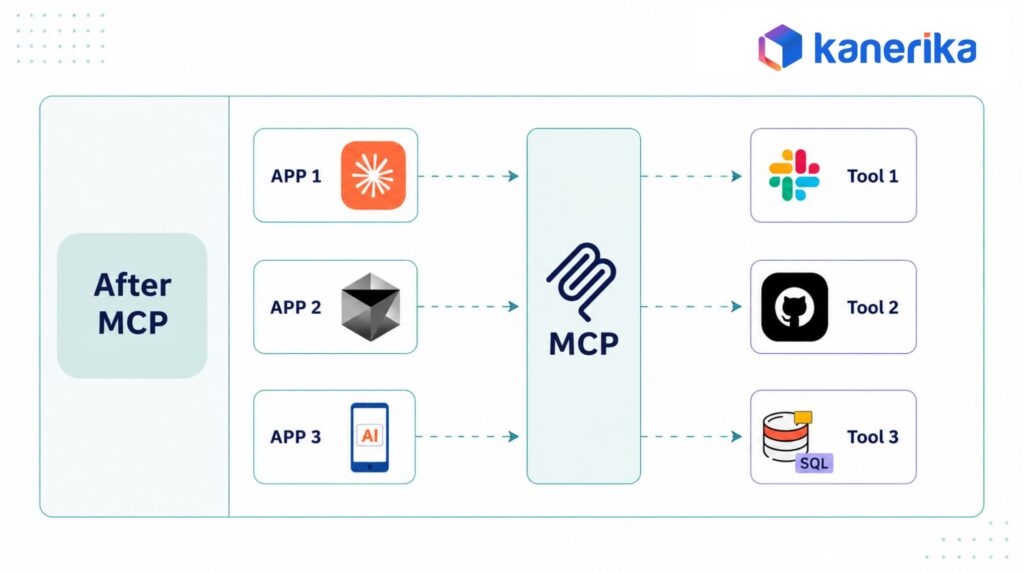

Without MCP, every AI vendor builds its own function-calling format, and every tool builder writes a custom integration for each AI platform they want to support. With MCP, the tool builder writes one MCP server, and any MCP-compliant AI host can use it. That includes Claude Desktop, ChatGPT, Copilot Studio, Cursor, and a growing list of IDEs and agent platforms.

MCP is not a model, not a framework, and not a hosted service. It is a protocol specification with reference SDKs in Python, TypeScript, Java, Kotlin, C#, and Swift, all open source. The spec is published at modelcontextprotocol.io and the reference implementation is on GitHub.

In December 2025, Anthropic donated MCP to the Agentic AI Foundation, a Linux Foundation directed fund co-founded with Block and OpenAI and backed by Google, Microsoft, AWS, Cloudflare, and Bloomberg. That move shifts MCP from single-vendor stewardship to neutral open governance, which removes one of the last enterprise concerns about adopting it.


Why MCP Matters: the Five AI Integration Problems it Solves

Before MCP, every team building AI agents hit the same wall. The model knew what to do, but had no clean way to actually do it inside the customer’s environment.

1. Stale, Training-Bound Context

A pretrained model knows the world up to its cutoff date and nothing after. Without a live connection to systems, it cannot quote today’s sales numbers, look up the active ticket queue, or read the most recent commit. MCP gives the model a way to fetch current state from databases, calendars, file stores, and APIs at request time.

2. One-Off Integrations That Don’t Scale

A typical enterprise AI rollout connects to a handful of tools, then ten, then thirty. Each connector is custom code that has to be maintained for every model version, every authentication change, every API revision. MCP collapses that to one integration per tool, reusable across every compliant AI client.

3. No Shared Way to Describe Tools

Function-calling formats from OpenAI, Anthropic, Google, and other vendors all describe tools differently. A tool built for one model has to be reformatted for another. MCP defines a single schema for tool descriptions, arguments, and return shapes, so the same tool runs across hosts.
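As a rough illustration of why a single description format helps, the sketch here takes one MCP-style tool definition (using the `inputSchema` field from the MCP spec) and reshapes it for two vendor function-calling formats. The tool is hypothetical, and the two target shapes reflect the vendors' public documentation as understood at the time of writing, so treat them as assumptions to verify against current API references.

```python
# One MCP-style tool definition; the tool itself is a made-up example.
MCP_TOOL = {
    "name": "create_ticket",
    "description": "Open a ticket in the ticketing system.",
    "inputSchema": {
        "type": "object",
        "properties": {"title": {"type": "string"}},
        "required": ["title"],
    },
}


def to_openai_function(tool: dict) -> dict:
    """Reshape for an OpenAI-style function tool (assumed shape)."""
    return {
        "type": "function",
        "function": {
            "name": tool["name"],
            "description": tool["description"],
            "parameters": tool["inputSchema"],
        },
    }


def to_anthropic_tool(tool: dict) -> dict:
    """Reshape for an Anthropic-style tool (assumed shape)."""
    return {
        "name": tool["name"],
        "description": tool["description"],
        "input_schema": tool["inputSchema"],
    }


openai_tool = to_openai_function(MCP_TOOL)
anthropic_tool = to_anthropic_tool(MCP_TOOL)
```

Without a shared format, every tool builder maintains adapters like these. With MCP, the host owns that translation once, and the server author only writes the first dictionary.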

4. Inconsistent Context Across Turns

Long agent workflows lose context between calls. The model forgets which file it was reading, which user it was acting for, or which permissions applied. MCP sessions carry that state explicitly through resources and session metadata, so the model can pick up where it left off.

5. Security and Authorization Gaps

Hardcoding API keys into model prompts or passing user credentials through the LLM is a security failure most enterprises will not accept. MCP separates the model from the credential: the host application handles authentication, and the model only sees tool capability descriptions. That makes audit and access control workable for regulated industries.
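A minimal sketch of that separation, assuming a hypothetical CRM tool and an in-memory credential store: the model is shown only the tool description, and the host attaches the credential when it executes the call. The names `get_account`, `CRM_TOKEN`, and `host_execute` are illustrative, not part of MCP.

```python
import os

# What the model is shown: capability descriptions only, no secrets.
MODEL_VISIBLE_TOOLS = [
    {"name": "get_account", "description": "Fetch an account by id."}
]


def crm_get_account(arguments: dict, token: str) -> dict:
    """Hypothetical server-side handler; the token never appears in its result."""
    if not token:
        raise PermissionError("missing credential")
    return {"account_id": arguments["account_id"], "tier": "gold"}


def host_execute(model_call: dict, credential_store: dict) -> dict:
    # The host looks up the credential; the model only supplied the arguments.
    token = credential_store.get("crm")
    return crm_get_account(model_call["arguments"], token)


# Demo fallback token so the sketch runs without any environment setup.
store = {"crm": os.environ.get("CRM_TOKEN", "demo-token")}
model_call = {"name": "get_account", "arguments": {"account_id": "A-9"}}
result = host_execute(model_call, store)
```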

The Technical Architecture of MCP

MCP follows a client and server model with three explicit layers, the host application, the client, and the server. Understanding how these pieces fit together explains both what MCP can do and what it deliberately leaves out.

1. Hosts, Clients, and Servers

The host is the AI application the user interacts with, such as Claude Desktop, ChatGPT, an IDE, or a custom enterprise agent. Inside the host, one or more clients open connections to MCP servers. Each server fronts a tool or data source: a database connector, a file system bridge, a SaaS API wrapper, or a custom enterprise integration.

This separation keeps the host responsible for the AI experience and authentication, while servers focus only on exposing their capabilities cleanly.

2. JSON-RPC 2.0 As the Wire Protocol

MCP uses JSON-RPC 2.0 for all messages between client and server. The wire format supports requests, responses, and notifications, with full bidirectional communication.
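The three message kinds can be sketched with the Python standard library alone. The method names `tools/list` and `notifications/resources/updated` come from the MCP specification; the helper functions, id values, and example URI are illustrative assumptions.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # request ids should be unique within a session


def make_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request; the id lets the reply be matched to it."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def make_response(request: dict, result: dict) -> dict:
    """Build the response for a request by echoing its id."""
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


def make_notification(method: str, params: Optional[dict] = None) -> dict:
    """Build a notification: it has no id, so no response is expected."""
    msg = {"jsonrpc": "2.0", "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def is_notification(msg: dict) -> bool:
    return "method" in msg and "id" not in msg


list_tools = make_request("tools/list")
reply = make_response(list_tools, {"tools": []})
update = make_notification(
    "notifications/resources/updated", {"uri": "ticket://example/123"}
)

for message in (list_tools, reply, update):
    print(json.dumps(message))
```

Either side can send requests and notifications, which is what makes the channel bidirectional.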

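The spec defines two transports: stdio for local servers running on the same machine as the host, and Streamable HTTP for remote servers. Streamable HTTP replaced the original HTTP with Server-Sent Events (HTTP+SSE) transport in the 2025-03-26 revision of the spec, and servers can still use SSE to stream messages over it. Local stdio is the default for desktop tools like Claude Desktop. Remote HTTP is what enterprise deployments use when servers run in a separate environment.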
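For the stdio transport, the MCP spec delimits messages with newlines, one JSON-RPC message per line. A minimal sketch of that framing follows, using an in-memory `io.StringIO` as a stand-in for the real pipe between host and server; the helper names are assumptions.

```python
import io
import json


def write_message(stream, message: dict) -> None:
    """Write one message per line; json.dumps escapes newlines inside strings."""
    stream.write(json.dumps(message) + "\n")


def read_messages(stream):
    """Yield each non-empty line as a decoded message."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


pipe = io.StringIO()  # stand-in for the server's stdin
write_message(pipe, {"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
write_message(pipe, {"jsonrpc": "2.0", "method": "notifications/initialized"})
pipe.seek(0)
received = list(read_messages(pipe))
```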

3. The Three Server Primitives

Every MCP server exposes some combination of three primitive types.

- Tools are actions the model can call, like `query_database`, `create_ticket`, or `send_email`. Each tool has a name, a description, and a JSON schema for its arguments.

- Resources are data the model can read, like file contents, database rows, or knowledge base articles. Resources are identified by URI and can be subscribed to for updates.

- Prompts are templates the user can invoke, often surfaced as slash commands in the host UI, that combine instructions and context into a ready-to-run task.

The model sees these three primitives consistently across every server, which is what makes the protocol portable.
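To make the three primitives concrete, here is a sketch of what a server might declare for each. The field names (`inputSchema`, `uri`, `mimeType`, `arguments`) follow the MCP spec; the specific tool, URI scheme, and prompt are hypothetical, and `check_arguments` is a simplified stand-in for full JSON Schema validation.

```python
TOOL = {
    "name": "create_ticket",
    "description": "Open a ticket in the ticketing system.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "title": {"type": "string"},
            "priority": {"type": "string", "enum": ["low", "medium", "high"]},
        },
        "required": ["title"],
    },
}

RESOURCE = {
    "uri": "kb://articles/password-reset",  # hypothetical URI scheme
    "name": "Password reset article",
    "mimeType": "text/markdown",
}

PROMPT = {
    "name": "summarize_ticket",
    "description": "Summarize a ticket for handoff.",
    "arguments": [{"name": "ticket_id", "required": True}],
}


def check_arguments(tool: dict, arguments: dict) -> list[str]:
    """Return a list of problems with a proposed tool call (empty if none)."""
    schema = tool["inputSchema"]
    problems = []
    for key in schema.get("required", []):
        if key not in arguments:
            problems.append(f"missing required argument: {key}")
    for key, value in arguments.items():
        spec = schema["properties"].get(key)
        if spec is None:
            problems.append(f"unknown argument: {key}")
        elif "enum" in spec and value not in spec["enum"]:
            problems.append(f"{key} must be one of {spec['enum']}")
    return problems
```

A host could run a check like this before routing a model-generated call, so malformed arguments are rejected before they reach the server.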

4. The Tool Invocation Flow

When a user asks an MCP-enabled host to do something, the flow runs in five steps.

- The host lists available tools from all connected servers and includes their descriptions in the model context.

- The model decides which tool to call and returns a structured tool call with arguments.

- The host validates the call and routes it to the right server via the client connection.

- The server executes the action and returns a result.

- The host feeds the result back into the model so it can compose a final response or chain into the next tool.

The user can require explicit approval before each tool call, which is how Claude Desktop and most enterprise hosts handle sensitive actions today.
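The five steps, plus the approval gate, can be sketched as a single host-side function. Everything here is a simulation: `fake_model` stands in for the LLM, and `SERVER_TOOLS` stands in for a connected MCP server. None of these names come from an SDK.

```python
from typing import Callable

# Hypothetical in-memory "server": tool name -> (description, handler).
SERVER_TOOLS = {
    "create_ticket": (
        "Open a ticket with the given title.",
        lambda args: {"ticket_id": "T-1", "title": args["title"]},
    ),
}


def fake_model(user_request: str, tool_descriptions: dict) -> dict:
    """Stand-in for the LLM: always chooses create_ticket."""
    return {"name": "create_ticket", "arguments": {"title": user_request}}


def run_turn(user_request: str, approve: Callable[[dict], bool]) -> str:
    # Step 1: list tools and put their descriptions in the model context.
    descriptions = {name: desc for name, (desc, _) in SERVER_TOOLS.items()}
    # Step 2: the model returns a structured tool call.
    call = fake_model(user_request, descriptions)
    # Step 3: the host validates the call and asks for approval before routing.
    if call["name"] not in SERVER_TOOLS:
        return f"Unknown tool: {call['name']}"
    if not approve(call):
        return "Tool call declined by user."
    # Step 4: the server executes the action.
    _, handler = SERVER_TOOLS[call["name"]]
    result = handler(call["arguments"])
    # Step 5: the result goes back to the model; here it is simply formatted.
    return f"Created {result['ticket_id']}: {result['title']}"


approved = run_turn("Printer is down", approve=lambda call: True)
declined = run_turn("Printer is down", approve=lambda call: False)
```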

Core Capabilities MCP Provides for AI Agents

Once a model is connected to tools and resources through MCP, five capability categories open up that a standalone LLM cannot deliver. Each one moves the agent further from a smart text generator and closer to a system that does real work.

1. Real-Time Data Access

The model can pull live information at request time, current inventory, today’s sales figures, an open support ticket, a fresh log entry. Responses reflect the present state of the business, not the model’s training cutoff.

This matters most for time-sensitive decisions. A treasury analyst asking about cash position needs today’s bank balances, not last quarter’s. A site reliability engineer asking about an active incident needs the current alert state, not yesterday’s. MCP makes the difference between an agent that quotes stale numbers and one that answers from the system of record.

2. Action Execution Inside Real Systems

The model can do more than describe what to do; it can do it. Creating a Jira ticket, posting a Slack message, updating a CRM record, or kicking off a CI build all become single tool calls.

For enterprise rollouts, the action layer is where governance lives. Hosts can route every action through approval workflows before execution, mark certain tools as read-only, or require a second human sign-off for high-impact calls. The protocol does not dictate the policy, but it makes the policy enforceable in one consistent place.
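One way a host might encode such a policy is a small table keyed by tool name, consulted before every call. This is a sketch under assumptions: the tool names, the `ToolPolicy` fields, and the deny-by-default rule are illustrative choices, not something the protocol prescribes.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class ToolPolicy:
    read_only: bool = False    # tool never writes to a system of record
    high_impact: bool = False  # requires a human sign-off
    enabled: bool = True


# Hypothetical policy table keyed by tool name.
POLICIES = {
    "query_database": ToolPolicy(read_only=True),
    "update_crm_record": ToolPolicy(high_impact=True),
    "delete_account": ToolPolicy(enabled=False),
}


def decide(tool_name: str) -> Decision:
    policy = POLICIES.get(tool_name)
    if policy is None or not policy.enabled:
        return Decision.DENY          # unknown or disabled tools are denied
    if policy.read_only and not policy.high_impact:
        return Decision.ALLOW         # safe reads run automatically
    return Decision.NEEDS_APPROVAL    # anything that writes goes to a human
```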

3. Persistent Session State

Resources and session metadata let the model maintain working memory across many turns. An agent debugging a production incident can keep the current incident ID, the affected service, and the active runbook in context without re-asking the user.

Resources also support subscriptions, which means the server can push updates to the client when underlying data changes. An agent watching an open ticket gets the new comment as soon as it lands, without polling.
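The subscription pattern can be sketched with a toy in-memory server. The `notifications/resources/updated` method name is from the MCP spec; the `ResourceServer` class, its callbacks, and the ticket URI are assumptions made for illustration. In a real session the notification travels over the transport, and the client typically follows up with a `resources/read` request to fetch the new content.

```python
from collections import defaultdict


class ResourceServer:
    """Toy in-memory server; a real one would send these over a transport."""

    def __init__(self):
        self._subscribers = defaultdict(list)  # uri -> list of callbacks

    def subscribe(self, uri, on_notification):
        # Plays the role of a resources/subscribe request from the client.
        self._subscribers[uri].append(on_notification)

    def resource_changed(self, uri):
        # Push a notification to every client subscribed to this URI.
        note = {
            "jsonrpc": "2.0",
            "method": "notifications/resources/updated",
            "params": {"uri": uri},
        }
        for callback in self._subscribers[uri]:
            callback(note)


inbox = []
server = ResourceServer()
server.subscribe("ticket://example/123", inbox.append)
server.resource_changed("ticket://example/123")  # delivered to the subscriber
server.resource_changed("ticket://example/999")  # no subscribers, nothing sent
```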

4. Multi-Server Orchestration

A single host can connect to multiple MCP servers at once. An AI agent for finance operations might query the data warehouse through one server, post to Slack through another, and update Workday through a third, in one workflow.

Multi-server orchestration is what makes MCP useful for cross-functional automations. A sales-ops agent might combine Salesforce data, internal product analytics, and an email server in a single flow that drafts a renewal outreach, files the activity in the CRM, and queues a follow-up task, all from one user prompt.
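A host that talks to several servers needs some way to route each tool call to the right one. The sketch here prefixes tool names with the server name; that namespacing is a host-side convention assumed for this example, not a rule in the MCP spec, and the servers are simple lambdas rather than real connections.

```python
class Host:
    """Toy host that merges tools from several servers and routes calls."""

    def __init__(self):
        self._routes = {}  # qualified tool name -> handler

    def connect(self, server_name, tools):
        # Prefix each tool with its server name to avoid name collisions.
        for tool_name, handler in tools.items():
            self._routes[f"{server_name}.{tool_name}"] = handler

    def list_tools(self):
        return sorted(self._routes)

    def call(self, qualified_name, arguments):
        if qualified_name not in self._routes:
            raise KeyError(f"no server exposes {qualified_name}")
        return self._routes[qualified_name](arguments)


host = Host()
host.connect("warehouse", {"query": lambda a: [{"region": a["region"], "revenue": 1200}]})
host.connect("slack", {"post": lambda a: {"ok": True, "channel": a["channel"]}})

# One workflow, two servers: read from the warehouse, then post the result.
rows = host.call("warehouse.query", {"region": "EMEA"})
message = f"EMEA revenue: {rows[0]['revenue']}"
posted = host.call("slack.post", {"channel": "#finance", "text": message})
```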

5. Feedback and Tool Chaining

Tool outputs feed directly into the next model turn, so the agent can react to what the system returned. Failed call returned a permission error? The agent can prompt the user for elevated access. Empty result set? It can adjust the query and try again.

This loop is what separates an agent from a script. Scripts run a fixed sequence and fail when reality does not match the plan. MCP-enabled agents can read the failure, reason about what to try next, and recover, with each attempt logged for audit.
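The recover-and-retry loop can be sketched as follows. The model is replaced by a hard-coded `fake_model_next_step`, and `search_orders` is a hypothetical tool. The `isError` flag mirrors the field MCP tool results carry, while `rows` is a simplification of the real `content` payload.

```python
def search_orders(arguments):
    """Hypothetical tool: only the wider 90-day window returns any rows."""
    if arguments["window"] == "last_90_days":
        return {"isError": False, "rows": [{"order_id": 42}]}
    return {"isError": False, "rows": []}


def fake_model_next_step(last_call, last_result):
    """Stand-in for the model reasoning over the previous tool result."""
    if last_result["isError"]:
        return None                         # stop and report the error
    if not last_result["rows"] and last_call["window"] == "last_30_days":
        return {"window": "last_90_days"}   # empty result: widen and retry
    return None                             # result is usable, stop calling


def run_agent(max_steps=3):
    audit_log = []
    call = {"window": "last_30_days"}
    result = None
    for _ in range(max_steps):
        result = search_orders(call)
        audit_log.append({"arguments": call, "rows": len(result["rows"])})
        call = fake_model_next_step(call, result)
        if call is None:
            break
    return result, audit_log


final_result, log = run_agent()
```

The `max_steps` cap matters in practice: without it, an agent that keeps adjusting its query could loop indefinitely.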

What MCP Connects To: Integration Categories

The MCP ecosystem at the time of writing covers six broad integration categories, with hundreds of community-built servers in the official registry.

1. Databases and Data Warehouses

Servers exist for PostgreSQL, MySQL, SQLite, MongoDB, BigQuery, Snowflake, and Microsoft SQL Server. These let an AI agent read schemas, run parameterized queries, and pull live business data into its working context.
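What a database server does behind its tools can be sketched with Python's built-in `sqlite3` module. The table, data, and handler names are invented for this example; the point is that model-supplied values are bound as parameters rather than spliced into the SQL string.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, region TEXT, total REAL)")
conn.executemany(
    "INSERT INTO orders (region, total) VALUES (?, ?)",
    [("EMEA", 120.0), ("EMEA", 80.0), ("APAC", 50.0)],
)


def read_schema(arguments=None):
    """Tool handler: list table definitions so the model can see the schema."""
    rows = conn.execute("SELECT name, sql FROM sqlite_master WHERE type = 'table'")
    return [{"table": name, "ddl": sql} for name, sql in rows]


def revenue_by_region(arguments):
    """Tool handler: the region is bound as a parameter, never concatenated."""
    row = conn.execute(
        "SELECT COALESCE(SUM(total), 0) FROM orders WHERE region = ?",
        (arguments["region"],),
    ).fetchone()
    return {"region": arguments["region"], "revenue": row[0]}


emea = revenue_by_region({"region": "EMEA"})
# An injection-style value is treated as a literal string and matches nothing.
hostile = revenue_by_region({"region": "x' OR '1'='1"})
```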

2. Web Search and Retrieval

Connectors for Brave Search, Google search, Exa, and Tavily let agents pull external research on demand. For enterprise knowledge bases, servers for Confluence, Notion, and SharePoint expose internal documents the same way.

3. Code Execution and Developer Tools

GitHub, GitLab, Bitbucket, and various sandbox runtimes have MCP servers. Agents can read repositories, open pull requests, run tests, and inspect CI output without leaving the conversation.

4. Document and File Processing

Servers expose local file systems, cloud storage like Google Drive and OneDrive, and parsing engines for PDF, DOCX, and structured data. Document intelligence workflows that used to need a custom pipeline now run as a multi-tool agent flow.

5. Enterprise SaaS

Salesforce, HubSpot, ServiceNow, Workday, Slack, Microsoft Teams, and Zendesk all have MCP servers, some maintained by the vendor, others by the community. This is where most enterprise pilots start.

6. Specialized and Domain-Specific Servers

Beyond the categories above, MCP servers exist for IoT brokers, BI tools like Tableau and Power BI, observability platforms, and even hardware control for robotics labs. Anything that can be wrapped behind an API can become an MCP server.

The registry grows weekly, and the bar to publish is low, which makes the ecosystem look noisy from outside. The signal in the noise is who maintains a server: official servers from Anthropic, Microsoft, Block, GitHub, and Cloudflare get the most production use. For an enterprise rollout, prefer those, then community servers with active commit history, then anything else only after security review.

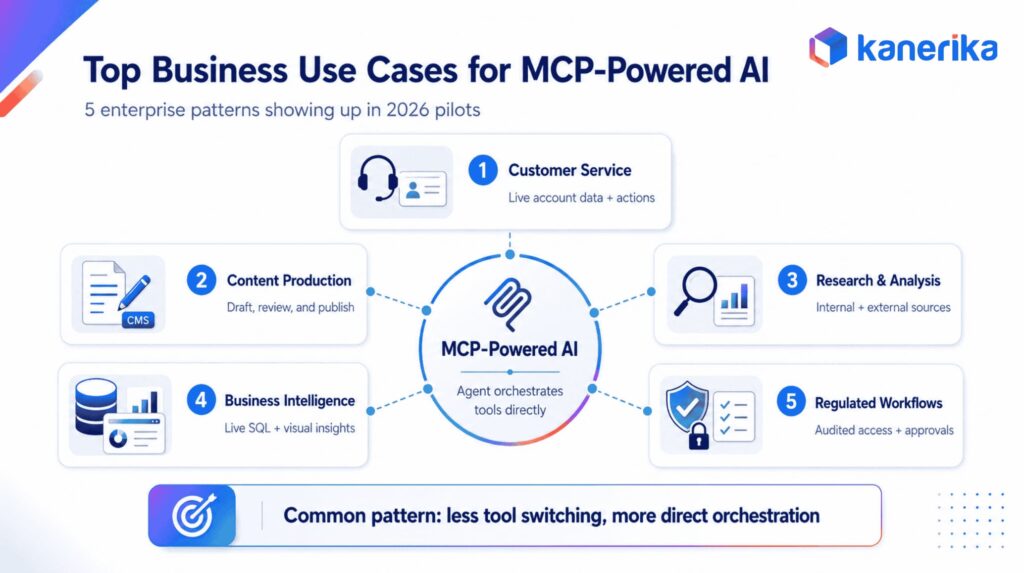

Top Business Use Cases for MCP-Powered AI

The protocol is general purpose, but five business use cases are showing up consistently in enterprise pilots in 2026. Each one shares the same pattern, an existing workflow that depends on people switching between tools, replaced by an agent that orchestrates the tools directly.

1. Customer Service Automation With Live Account Data

Support agents built on MCP can pull live order history, subscription state, and ticket logs while answering, then act on them, applying a credit, escalating a case, or scheduling a callback. The answer is grounded in the current account, not a stale FAQ.

The business case is measurable. Teams using context-aware support agents typically report faster first response, higher first-contact resolution, and lower escalation rates, because the agent has the same data the human would have looked up manually. Combine the live data with an action layer, and routine tickets close without human touch.

2. Content Production Workflows

Marketing and editorial teams use MCP-connected agents that read brand guidelines from a knowledge base, draft in a CMS, run grammar checks, and publish on approval. The agent stays inside the content stack instead of asking the writer to copy and paste between tools.

The same pattern works for documentation, sales enablement, and internal comms. Whoever owns the workflow defines the servers, the model is interchangeable, and the team gets a tool chain that respects their existing standards rather than forcing a switch to a single vendor’s content platform.

Kanerika in practice: Kanerika’s own marketing team runs this stack daily. Claude Desktop sits as the host, wired via MCP to WordPress, Google Search Console, Bing Webmaster, and Semrush, with a Chrome browser server for live SERP and DOM checks. Keyword research, cannibalization detection, draft audits, and publish-prep all happen in one conversational workflow instead of five separate tools.

3. Research and Analysis

Analysts use agents that query internal data warehouses, pull external market data, and cross-check both against research notes. What took two hours of tool switching becomes a single conversational session.

For investment, consulting, and strategy teams, the value is in the cross-tool reasoning. The agent can join a Bloomberg-style external feed with internal pricing models and a SharePoint folder of prior analyses, then produce a memo that cites every source. Manual research workflows that fail audit because sources are scattered become traceable by default.

4. Business Intelligence With Live Numbers

Finance and operations teams ask natural-language questions that resolve through MCP to live SQL queries against Snowflake or BigQuery, then return narrated insights. Power BI and Tableau MCP servers can deliver the visual on top.

The interesting capability is iteration. A BI question rarely lands in one shot. The user asks, sees the answer, asks a follow-up, drills into a segment. With MCP, every follow-up is another tool call against the same warehouse, so the agent maintains the analytical thread without rebuilding context.

5. Regulated Industry Workflows

Healthcare, banking, and insurance teams use MCP to keep sensitive systems behind a controlled boundary, the model never sees PHI or credentials directly, only the responses returned by an audited server. That makes HIPAA, SOC 2, and GDPR posture manageable.

Architecturally, the regulated-industry pattern is consistent. The host runs in a trusted environment. The servers wrap legacy systems with strict allow-lists. Every tool call is logged with the requesting user and the data accessed. Approval workflows gate any action that writes to a system of record. This is the deployment shape Kanerika ships most often into BFSI and healthcare clients.
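A minimal sketch of the allow-list and audit-log part of that pattern follows. The tool, user, and log format are hypothetical, and a production deployment would write to an append-only store and likely redact sensitive argument values before logging.

```python
import json
from datetime import datetime, timezone

ALLOWED_TOOLS = {"lookup_claim_status"}  # hypothetical allow-list
AUDIT_LOG = []                           # stand-in for an append-only store


def lookup_claim_status(arguments):
    return {"claim_id": arguments["claim_id"], "status": "approved"}


HANDLERS = {"lookup_claim_status": lookup_claim_status}


def audited_call(user, tool_name, arguments):
    allowed = tool_name in ALLOWED_TOOLS
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "tool": tool_name,
        "arguments": arguments,
        "allowed": allowed,
    }
    AUDIT_LOG.append(json.dumps(entry))  # log first, so denials are recorded too
    if not allowed:
        raise PermissionError(f"{tool_name} is not on the allow-list")
    return HANDLERS[tool_name](arguments)


status = audited_call("analyst@example.com", "lookup_claim_status", {"claim_id": "C-7"})
```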

MCP Compared to APIs, RAG, and Agent-to-Agent Protocols

MCP is often confused with adjacent technologies. Each handles a different layer of the stack, and the easiest way to think about MCP is alongside the others, not instead of them.

| Layer | What it does | When to use it | Replaces MCP? |
|---|---|---|---|
| Traditional REST or GraphQL API | Lets any client talk to a backend service over HTTP | When the consumer is software, not an AI agent | No, APIs sit underneath MCP servers |
| Function calling (OpenAI, Anthropic native) | Lets a specific model invoke tools defined in its own format | Single-model, single-vendor deployments | MCP is the cross-vendor superset |
| RAG (Retrieval-Augmented Generation) | Retrieves text passages from a vector store and injects them into the prompt | Knowledge grounding from documents | No, MCP exposes RAG retrievers as resources or tools |
| A2A (Agent-to-Agent) protocol | Lets autonomous agents discover each other and delegate tasks | Multi-agent orchestration | No, A2A handles agent-to-agent, MCP handles agent-to-tool |
| WebMCP | A browser-side variant of MCP for web app tool exposure | Browser-native agent integrations | Different deployment surface |

The short version

APIs are the backbone, RAG is one technique for grounding text, A2A is for multi-agent communication, and MCP is the standard for connecting an AI host to tools and data. If you want a head-to-head deep dive, read MCP vs RAG for the retrieval comparison, MCP vs A2A for the multi-agent comparison, the WebMCP implementation guide for the browser-side variant, and MCP and context-aware AI agents for applied agent patterns.

A practical example shows how these layers stack. An MCP-enabled support agent might call a knowledge-base server (which internally uses RAG to retrieve articles), a CRM server (which internally wraps a REST API), and a Slack server (which wraps the Slack Web API). The model never sees REST endpoints or vector indexes. It only sees the MCP-defined tools, with stable shapes and consistent behavior across the entire stack. That is the abstraction MCP earns.

What MCP Does Not Do: Five Common Misconceptions

The protocol has been over-marketed in places, which has produced some predictable confusion. Knowing what MCP does not do is as useful as knowing what it does.

1. MCP is not a Replacement for Agent Logic

The protocol defines how tools are described and called, not how the agent decides which tool to call next. That decision logic still lives in the host application and the model. A poorly designed agent loop will still loop poorly with MCP plugged in.

2. MCP does not Handle Authentication for you

The host application is responsible for authenticating users and authorizing tool calls. MCP provides OAuth 2.1 patterns and recommends specific flows for remote servers, but the deployment team owns identity, access policy, and credential storage. There is no shortcut here.

3. MCP is not a Real-Time Streaming Protocol

The wire format supports notifications, but MCP is built for request-response interactions and resource subscriptions, not for streaming high-frequency data. For trading-floor latency, sensor telemetry at scale, or live video, use a purpose-built streaming protocol underneath and expose summaries through MCP if needed.

4. MCP Servers are not Automatically Secure

A community MCP server you install is code running with whatever permissions your host gives it. Treat third-party servers like any other supply-chain dependency, with security review, version pinning, and runtime sandboxing. The official Anthropic and vendor-published servers have a higher trust baseline, but verification is still your job.

5. MCP is not the Same as the Agents SDK or other Frameworks

OpenAI Agents SDK, LangGraph, and similar frameworks build on MCP or sit alongside it. MCP is the lower-layer protocol for tool communication. Frameworks are higher-layer scaffolding for agent design, memory, planning, and orchestration. The two complement each other rather than compete.

Who Is Using MCP: Real-World Adoption

Adoption widened sharply through 2025. By the end of the year, Anthropic reported over 10,000 active public MCP servers and adoption by every major AI platform, with Block, OpenAI, Google, Microsoft, AWS, Cloudflare, and Bloomberg joining as founding backers of the Agentic AI Foundation that now governs the protocol.

Anthropic, OpenAI, and Google DeepMind

Anthropic released MCP and ships native support in Claude Desktop and the Claude API. OpenAI announced support for MCP in March 2025, adding it to the Agents SDK, ChatGPT desktop, and the Responses API. Google DeepMind confirmed MCP support across Gemini models and SDKs in April 2025.

Block and Apollo

Early enterprise adopters Block (formerly Square) and Apollo used MCP to wire AI assistants into internal data and tooling, with Block contributing servers and reference implementations to the open registry. Block’s engineering team has published detailed write-ups of the patterns they use, including the Layered Tool Pattern for MCP servers and their playbook for designing MCP servers at scale across 60-plus internal deployments.

Replit, Codeium, and Sourcegraph

Developer tooling companies were among the fastest to adopt MCP, plugging it into coding agents that can read repositories, run tests, and edit files inside the user’s existing dev environment.

Microsoft Copilot Studio

Microsoft added MCP support to Copilot Studio in May 2025, letting builders connect agents to MCP servers inside Microsoft 365, Dynamics 365, and Power Platform with the same governance the rest of Copilot uses. For teams running Microsoft Fabric as their data backbone, this means agents in Copilot Studio can pull live data from Fabric workspaces and write back through the same MCP layer.

Getting Started With MCP

A first MCP project does not need a custom server, the public registry has hundreds, and a few hours of setup are enough to demonstrate working integrations. For a production enterprise rollout, the path runs longer and involves three decisions.

First, pick the host. Claude Desktop and Cursor are simple to test with. Copilot Studio, the OpenAI Agents SDK, and Gemini hosts each suit different vendor environments. Most enterprises pick the host based on the AI vendor they already use for general LLM work, since that minimizes the security review surface and lets the same model run across MCP and non-MCP workflows.

Second, pick or build servers. Use the registry for common SaaS. Build custom servers for proprietary internal tools, with an SDK in Python or TypeScript. The reference implementations are at github.com/modelcontextprotocol. For most pilots, two or three servers are enough to deliver a meaningful workflow, one connector to the data source, one to the action surface (Slack, Jira, or a CRM), and one to a knowledge base.

Third, treat security as a first-class workstream. Decide which tools the model can call automatically and which need a human in the loop. Audit every tool call. Run servers in an environment with least-privilege access to the underlying systems. The protocol does not solve identity or access control, that is on the deployment, but it does give a clean seam to attach those controls.

A common mistake in early MCP pilots is wiring too many servers at once. Two well-scoped servers with a clear workflow ship faster and prove the value better than ten servers in a half-built agent. Start narrow, measure, then expand.

For a deeper implementation walkthrough, see Kanerika’s WebMCP implementation guide. For applied agent-building patterns with MCP, see MCP and context-aware AI agents.

Kanerika: Your Partner for Building Context-Aware AI With MCP

Kanerika builds production AI agents for mid-market and enterprise clients in BFSI, healthcare, retail, manufacturing, and logistics through its Agentic AI and AI/ML services practices. The team has shipped six named agents to date: Karl for real-time analytics, DokGPT for document intelligence, Alan for legal summarization, Susan for PII redaction, Mike for quantitative proofreading, and Jennifer for voice scheduling. Several of them were deployed against MCP-style architectures before MCP was named. Technical direction comes from Amit Chandak, Kanerika’s Chief Analytics Officer and a Microsoft MVP in Power BI, who leads the firm’s stance on agent architectures and Microsoft Fabric integration.

Proof Points

Recent applied work makes the value concrete. For an investment bank, Kanerika deployed DokGPT, a context-aware document agent built on a retrieval and tool-calling architecture compatible with MCP, with measured results of 43 percent faster information retrieval, 35 percent reduction in manual review hours, and 100 percent role-based compliance. A separate deployment for a U.S. fuel distributor cut accounts payable manual intervention by 90 percent and saved over 400 monthly man-hours through agent-driven invoice and AP automation. A third engagement built a real-time compliance and risk detection agent that flags policy violations during the transaction itself rather than after the fact. These are real engagements, not pilot demos.

The delivery approach follows a four-phase pattern. First, an architecture review against the existing AI and data stack. Second, a scoped pilot with one or two MCP servers and a single named workflow. Third, security and governance review with the client’s risk team. Fourth, scaled rollout with monitoring, approval workflows, and an audit trail across every tool call. The pilot phase typically runs four to six weeks. The rollout phase scales based on the number of servers and the integration surface, with most enterprise programs reaching production inside a quarter.

The credentials behind the work include Microsoft Solutions Partner status for Data and AI with Analytics Specialization, Microsoft Fabric Featured Partner, Databricks and Snowflake Consulting Partner, ISO 27001 and 27701 certification, SOC 2 Type II, and CMMI Level 3 appraisal. Kanerika has served 100-plus enterprise clients across 10 years with 98 percent client retention.

For teams evaluating MCP for production AI, Kanerika takes the work from architecture review through pilot, security review, and scaled rollout, with engineers who have built and operated context-aware agents in regulated environments.

Bringing MCP Into Your AI Roadmap

MCP is not a research curiosity; it is the standardization layer enterprise AI was missing. Adoption across Anthropic, OpenAI, Google, and Microsoft and the December 2025 transition to Linux Foundation governance remove both the vendor lock-in argument and the single-steward risk. Open-source SDKs and a public registry of 10,000-plus servers remove the build-from-scratch cost. The hard work that remains is the same hard work as any enterprise integration: security review, change management, and clean architecture for the systems being exposed.

Frequently Asked Questions

What is Model Context Protocol?

Model Context Protocol is an open standard from Anthropic, released in November 2024, that defines how AI applications connect to external tools, data sources, and services. It uses JSON-RPC 2.0 as its wire format and supports both local and remote server deployments, with reference SDKs in Python, TypeScript, Java, Kotlin, C#, and Swift.

What is MCP vs API?

MCP and APIs serve different purposes in system connectivity. Traditional APIs require developers to build specific integrations for each service, handling authentication, data formatting, and error management individually. MCP provides a standardized protocol layer specifically designed for AI models, enabling them to discover and interact with multiple tools through a single interface. While APIs are request-response based, MCP supports bidirectional communication with context preservation. APIs remain essential for general software integration, but MCP optimizes AI-specific workflows. Kanerika’s architects can help you determine the right approach for your AI infrastructure.

What is Model Context Protocol in ChatGPT?

Model Context Protocol in ChatGPT refers to the integration capability that allows ChatGPT to connect with external tools and data sources through the MCP standard. OpenAI has incorporated MCP support, enabling ChatGPT to access real-time information, execute functions in connected systems, and maintain richer contextual awareness during conversations. This means ChatGPT can query databases, trigger workflows, or pull live data without custom plugin development for each service. The standardized MCP approach simplifies enterprise ChatGPT deployments significantly. Kanerika helps organizations configure MCP-enabled ChatGPT implementations for enterprise use cases.

Is MCP Open Source?

Yes, the specification, reference SDKs, and the official server registry are all open source under permissive licenses, published at modelcontextprotocol.io and on GitHub. Any developer or vendor can implement an MCP server or client without licensing fees, and the protocol is governed openly with public RFCs for changes proposed by Anthropic and the broader community.

What is the difference between MCP and LLM?

MCP and LLMs operate at entirely different layers of AI systems. A Large Language Model (LLM) is the AI engine itself: a trained neural network, such as GPT-4 or Claude, that processes and generates human language. MCP is a communication protocol that connects these LLMs to external resources. The LLM provides intelligence and reasoning capabilities, while MCP provides access to real-time data and tools the LLM can utilize. Without MCP, an LLM relies solely on its training data; with MCP, it can query live systems and perform actions. Kanerika integrates LLMs with MCP-enabled enterprise systems; explore our AI solutions to learn more.

Can ChatGPT use MCP?

ChatGPT can use MCP following OpenAI’s adoption of the protocol. This integration allows ChatGPT to connect with MCP-compliant servers, accessing external tools, databases, and services through standardized interfaces. Users can configure MCP connections to enable ChatGPT to retrieve real-time data, execute workflows, and interact with enterprise systems dynamically. The implementation varies between ChatGPT versions and deployment types, with enterprise configurations offering more extensive MCP capabilities. This support positions ChatGPT as interoperable with the growing MCP ecosystem alongside Claude and other MCP-enabled models. Kanerika configures MCP connections for ChatGPT enterprise deployments.

What are the key benefits of adopting Model Context Protocol integration?

Model Context Protocol integration delivers several enterprise benefits. First, it eliminates redundant integration work: one MCP implementation connects to any compliant server. Second, it enables real-time data access, moving AI systems beyond static training data limitations. Third, standardized tool discovery allows AI agents to dynamically understand and use available capabilities. Fourth, bidirectional communication supports complex multi-step workflows. Fifth, the protocol supports secure, auditable connections essential for enterprise compliance. Finally, MCP future-proofs investments as the ecosystem grows across major AI platforms. These benefits compound as organizations scale AI initiatives across departments. Kanerika accelerates MCP adoption with proven implementation frameworks.

What are the features of MCP?

MCP includes several core features designed for AI-system connectivity. The protocol supports tool exposure, letting servers declare capabilities AI models can invoke. Resource access enables structured data retrieval from connected systems. Prompt templates provide reusable interaction patterns for common operations. Bidirectional messaging allows servers to send updates to clients proactively. Authentication and authorization mechanisms secure connections between AI clients and MCP servers. Capability negotiation ensures clients and servers agree on a protocol version and on the optional features each side supports. Sampling support lets servers request LLM completions from the client when needed. Together, these features give teams a consistent integration layer between AI applications and the systems they depend on.
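Capability negotiation is easiest to see in the first message exchange. The sketch below assumes the `initialize` request and result shapes from the published MCP specification; the client and server names, the version list, and the `negotiate_version` helper are illustrative, and the version rule is deliberately simplified.

```python
import json

# Protocol revisions this hypothetical server understands, newest last.
SERVER_VERSIONS = ["2024-11-05", "2025-03-26"]


def build_initialize_request(request_id, protocol_version):
    """Build the JSON-RPC 2.0 `initialize` request a client sends first."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": protocol_version,
            # The client declares the optional features it supports.
            "capabilities": {"sampling": {}},
            "clientInfo": {"name": "example-client", "version": "0.1.0"},
        },
    }


def negotiate_version(requested, supported=SERVER_VERSIONS):
    """Simplified rule: echo the requested version if the server supports it,
    otherwise offer the newest version the server knows."""
    return requested if requested in supported else supported[-1]


def build_initialize_result(request):
    """Build the server's reply, declaring which primitives it offers."""
    version = negotiate_version(request["params"]["protocolVersion"])
    return {
        "jsonrpc": "2.0",
        "id": request["id"],  # replies reuse the request id
        "result": {
            "protocolVersion": version,
            "capabilities": {"tools": {}, "resources": {}, "prompts": {}},
            "serverInfo": {"name": "example-server", "version": "0.1.0"},
        },
    }


request = build_initialize_request(1, "2025-03-26")
response = build_initialize_result(request)
print(json.dumps(response, indent=2))
```

After this exchange, each side knows which features the other supports and can avoid calling anything that was not declared.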

What are MCP tools?

MCP tools are executable functions that AI models can invoke through the Model Context Protocol. A tool might query a database, send an email, create a record, or trigger a workflow. Each tool is defined with a structured description, including its name, a JSON Schema for its input parameters, and an explanation of its purpose, which AI models interpret to decide when and how to use it. MCP servers expose collections of related tools, while AI clients discover and call these tools based on user requests. This tool-based architecture lets AI agents take action rather than only converse, enabling real business process automation.
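As a rough illustration, here is what a tool definition and a matching call can look like. The field names (`name`, `description`, `inputSchema`) and the `tools/call` method follow the MCP specification; the `lookup_order` tool, its parameters, and the helper functions are hypothetical.

```python
import json

# A hypothetical tool definition, as a server might return it from `tools/list`.
lookup_order_tool = {
    "name": "lookup_order",
    "description": "Fetch the status of a customer order by its ID.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "order_id": {"type": "string", "description": "Order identifier"},
        },
        "required": ["order_id"],
    },
}


def missing_arguments(tool, arguments):
    """Return required argument names absent from `arguments`.
    This is a minimal check, not full JSON Schema validation."""
    required = tool["inputSchema"].get("required", [])
    return [name for name in required if name not in arguments]


def build_tool_call(request_id, tool, arguments):
    """Build the JSON-RPC 2.0 `tools/call` request that invokes a tool."""
    missing = missing_arguments(tool, arguments)
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool["name"], "arguments": arguments},
    }


call = build_tool_call(7, lookup_order_tool, {"order_id": "A-1001"})
print(json.dumps(call, indent=2))
```

The model never sees the server's code. It sees the description and schema, decides the tool fits the user's request, and supplies the arguments.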

What is the use of MCP?

MCP is used to connect AI applications with enterprise systems, enabling intelligent automation across business processes. Common uses include enabling AI assistants to query CRM data in real time, automating document workflows by connecting AI to content management systems, and building AI agents that execute multi-step tasks across multiple applications. MCP also supports customer service automation where AI accesses order history and account information dynamically. Development teams use MCP to create AI-powered tools that interact with code repositories and deployment pipelines. The protocol transforms AI from isolated chatbots into integrated enterprise assistants.

What are the benefits of MCP?

MCP benefits organizations by reducing AI integration complexity through standardization. Development teams save significant time since one protocol works across multiple AI platforms and data sources. Real-time connectivity ensures AI responses reflect current business data rather than stale training information. The standardized approach improves security through consistent authentication and access control patterns. Interoperability increases as the MCP ecosystem grows, protecting integration investments. Maintenance burden decreases since protocol updates apply universally rather than requiring per-integration fixes. Scalability improves because adding new tools follows established patterns. These benefits accelerate AI deployment timelines and improve ROI on AI initiatives.

Is MCP a tool or framework?

MCP is a protocol specification rather than a tool or framework. A protocol defines the communication rules and data formats systems use to interact, like HTTP for web traffic or SMTP for email. MCP specifies how AI clients and servers exchange messages, discover capabilities, and invoke functions. Tools are software applications; frameworks provide code structures for building applications. MCP provides the communication standard that tools and frameworks implement. SDKs exist to simplify MCP implementation in various languages, but these are implementations of the protocol, not MCP itself. Understanding this distinction helps organizations plan integration approaches correctly.

Will RAG be replaced by MCP?

RAG will not be replaced by MCP because they solve different problems and often work together. Retrieval-Augmented Generation handles knowledge retrieval, finding relevant documents to inform AI responses. MCP handles system connectivity, enabling AI to invoke tools and access live data. RAG excels at knowledge-intensive tasks requiring document search and synthesis. MCP excels at action-oriented tasks requiring system interaction. A customer service AI might use RAG to find policy documents while using MCP to access account records and process requests. Future AI architectures will likely combine both approaches rather than choosing one.
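The customer service example can be sketched in a few lines. Everything here is a stand-in: the word-overlap ranking substitutes for a real vector search, `call_account_tool` substitutes for an MCP `tools/call` round trip, and the documents and account data are invented for illustration.

```python
POLICY_DOCS = [
    "The refund window is 30 days from delivery.",
    "Shipping is free on every order above 50 dollars.",
    "Accounts can be closed through the support portal.",
]


def tokens(text):
    """Lowercase words with surrounding punctuation stripped."""
    return {word.strip(".,?!") for word in text.lower().split()}


def retrieve(question, docs, top_k=1):
    """RAG side (toy version): rank documents by words shared with the question."""
    question_words = tokens(question)
    ranked = sorted(
        docs,
        key=lambda doc: len(question_words & tokens(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def call_account_tool(customer_id):
    """MCP side (stand-in): a real client would send `tools/call` to a server.
    Canned data keeps the sketch self-contained."""
    accounts = {"C-42": {"last_order_days_ago": 12}}
    return accounts[customer_id]


def build_context(question, customer_id):
    """Combine retrieved knowledge with live account data for the model."""
    return {
        "policy": retrieve(question, POLICY_DOCS),
        "account": call_account_tool(customer_id),
    }


context = build_context("What is the refund window for my order?", "C-42")
print(context)
```

The retrieval step answers "what does the policy say", and the tool call answers "what is true for this customer right now". The model needs both to give a correct reply.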

Is MCP server like an API?

An MCP server shares similarities with API servers but includes AI-specific capabilities. Both expose functionality over network connections and handle requests from clients. However, MCP servers provide structured capability descriptions that AI models interpret directly, support bidirectional communication channels, and maintain context across multiple interactions. Traditional API servers respond to explicitly coded requests; MCP servers enable AI models to discover and dynamically invoke appropriate functions. MCP servers also support the full protocol feature set including resource management and prompt templates. Think of MCP servers as API servers enhanced specifically for AI client interaction patterns.
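To make the discovery difference concrete, here is a minimal in-memory sketch of a server-side dispatcher. It is not the official SDK and has no transport; the `tools/list` and `tools/call` methods and the result shape follow the MCP specification, while `get_ticket_count`, its data, and `handle_request` are hypothetical.

```python
def get_ticket_count(team):
    """Hypothetical business function backing a tool (canned data)."""
    counts = {"support": 12, "billing": 3}
    return counts.get(team, 0)


TOOLS = {
    "get_ticket_count": {
        "handler": get_ticket_count,
        "definition": {
            "name": "get_ticket_count",
            "description": "Return the number of open tickets for a team.",
            "inputSchema": {
                "type": "object",
                "properties": {"team": {"type": "string"}},
                "required": ["team"],
            },
        },
    }
}


def error(request, code, message):
    """Build a JSON-RPC 2.0 error reply."""
    return {"jsonrpc": "2.0", "id": request["id"],
            "error": {"code": code, "message": message}}


def handle_request(request):
    """Route a JSON-RPC 2.0 request to discovery or invocation."""
    method = request["method"]
    params = request.get("params", {})
    if method == "tools/list":
        # Discovery: the client learns what exists and how to call it.
        result = {"tools": [tool["definition"] for tool in TOOLS.values()]}
    elif method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return error(request, -32602, "Unknown tool")
        value = tool["handler"](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": str(value)}],
                  "isError": False}
    else:
        return error(request, -32601, "Method not found")
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


# The client discovers tools first, then calls one by the name it found.
listing = handle_request({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
tool_name = listing["result"]["tools"][0]["name"]
reply = handle_request({
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": tool_name, "arguments": {"team": "support"}},
})
print(reply["result"]["content"][0]["text"])
```

With a traditional API, a developer reads the documentation and hard-codes the call. Here the client learns the tool name and input schema at runtime, so adding a tool on the server requires no client-side code change.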

What is Model Context Protocol for Copilot Studio?

Model Context Protocol for Copilot Studio enables Microsoft’s AI development platform to connect with external tools and data sources through standardized MCP interfaces. This integration allows Copilot Studio builders to extend their AI assistants with capabilities from MCP-compliant servers without custom connector development. Copilots can access enterprise databases, trigger business processes, and retrieve real-time information through MCP connections. Microsoft’s MCP support reflects the protocol’s growing adoption across major AI platforms. For organizations invested in Microsoft’s ecosystem, this capability streamlines building powerful, connected AI assistants within familiar tooling.