Did you know that AI can not only understand and respond to human language, but also create entirely new content, from realistic images to catchy music? Many such incredible features are powered by two closely related categories of AI: Generative AI and Large Language Models (LLMs). But what sets them apart, and how are they changing the world around us?

Generative AI encompasses a spectrum of technologies capable of generating original content, from gripping novels to captivating paintings. LLMs are the language specialists within the vast Generative AI landscape: they process and generate human-like text by learning from large volumes of language data. Both are already transforming industries. Generative AI produces lifelike product designs, while LLMs power chatbots that answer your customer service questions with uncanny fluency.

While generative AI systems have a broader application range and can generate various types of content autonomously, LLMs are tailor-made for language tasks. They leverage vast amounts of data to learn the intricacies of languages, such as grammar and style, creating coherent and contextually relevant material. In applications where comprehending and generating human-like language is critical, LLMs are often the underlying technology that enhances the capability of generative AI systems to understand complex prompts and provide suitable responses or content.

These two domains offer unique features and benefits, making them essential in the realm of artificial intelligence. Let’s delve into the world of Generative AI vs. LLMs, exploring their key differences and diverse applications that are shaping the future of intelligent automation.

Generative AI vs Large Language Models (LLMs): An Overview

What is Generative AI?

Generative AI (generative artificial intelligence) is a subfield of AI focused on creating entirely new content. Unlike traditional AI models trained for tasks like classification or prediction, generative AI learns the underlying patterns and relationships within a dataset (text, images, code, etc.) and uses that knowledge to produce original content that closely resembles the training data.

Popular Generative AI Models and Their Applications

1. Generative Adversarial Networks (GANs)

GANs, a class of competing neural network models, were first introduced in 2014. The first network, the generator, produces new data, while the second network, the discriminator, receives data and classifies it as real or generated. A feedback loop penalizes the discriminator for each misclassification and the generator for each sample the discriminator catches, so over the course of training the generator learns to produce content that is increasingly hard to tell apart from real data.
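To make the feedback loop concrete, here is a minimal sketch of the two competing objectives. It is not a full GAN: there are no neural networks, and the probabilities passed in are made-up stand-ins for a discriminator's output. The function names are hypothetical, and the generator objective shown is the commonly used "non-saturating" variant.

```python
import math

def bce(prob, label):
    """Binary cross-entropy for one prediction; prob is the discriminator's P(real)."""
    eps = 1e-12  # keep log() away from zero
    prob = min(max(prob, eps), 1 - eps)
    return -(label * math.log(prob) + (1 - label) * math.log(1 - prob))

def discriminator_loss(p_real, p_fake):
    # The discriminator wants real samples scored near 1 and generated ones near 0.
    return bce(p_real, 1) + bce(p_fake, 0)

def generator_loss(p_fake):
    # The generator wants its samples to be scored as real (label 1).
    return bce(p_fake, 1)

# Early in training the discriminator easily spots the fake (P(real) = 0.1).
early = generator_loss(p_fake=0.1)
# Later the fake fools the discriminator about half the time (P(real) = 0.5).
later = generator_loss(p_fake=0.5)
print(early > later)  # the generator's loss falls as its fakes improve
```

In a real GAN, both losses would be backpropagated through the two networks in alternating steps.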

Use Cases

- Image generation: Create photorealistic images of anything imaginable, from landscapes and portraits to fantastical creatures.

- Image editing: Enhance existing images, manipulate styles, and create artistic variations.

- Product design: Generate design concepts for various products, allowing for faster iteration and exploration of ideas.

- Medical imaging: Create synthetic medical data to train medical AI models and improve diagnostic accuracy.

2. Variational Autoencoders (VAEs)

VAEs are AI models that consist of neural networks for encoding and decoding data, enabling them to learn techniques for generating new data. The encoder compresses data into a condensed representation, and the decoder then uses this condensed form to reconstruct the input data. VAEs can complete a variety of content generation tasks.
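As a rough illustration of the step between the encoder and the decoder, the sketch below shows two ingredients commonly found in VAE implementations: sampling the condensed representation from a Gaussian described by the encoder, and a KL penalty that keeps that Gaussian close to a standard normal. The encoder output here is a hypothetical hard-coded value, and no actual encoder or decoder network is included.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps, with eps drawn from a standard normal."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """KL divergence between N(mu, sigma^2) and N(0, 1), summed over dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv
                     for m, lv in zip(mu, log_var))

rng = random.Random(0)
mu, log_var = [0.5, -1.0], [-2.0, -2.0]   # hypothetical encoder output for one input
z = reparameterize(mu, log_var, rng)      # condensed representation handed to the decoder
penalty = kl_to_standard_normal(mu, log_var)
```

New data is generated by drawing `z` from a standard normal and passing it through the trained decoder.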

Use Cases

- Image compression: Compress images significantly without losing crucial details, making storage and transmission more efficient.

- Anomaly detection: Identify unusual patterns in data sets, helping to detect fraud, equipment failure, or other anomalies.

- Music generation: Generate new music that adheres to a specific style or genre, inspiring musicians and composers.

- Drug discovery: Design new drug molecules with desired properties, accelerating the process of drug development.

3. Diffusion Models

Diffusion models use a probabilistic approach to generate new data by gradually refining a random noise input until it resembles the target data. These models are especially helpful for generating high-quality images and videos.
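The noising and denoising idea can be sketched in a few lines. This toy example mixes a clean signal with Gaussian noise and then inverts that mix in a single step. It assumes a perfect noise prediction (the true noise is reused), whereas a real diffusion model trains a neural network to predict the noise and repeats the refinement over many steps. The function names and the `alpha_bar` value are illustrative.

```python
import math
import random

def add_noise(x0, alpha_bar, rng):
    """Forward step: blend clean data with Gaussian noise. Returns (noisy, noise)."""
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    noisy = [math.sqrt(alpha_bar) * x + math.sqrt(1.0 - alpha_bar) * n
             for x, n in zip(x0, noise)]
    return noisy, noise

def remove_noise(noisy, predicted_noise, alpha_bar):
    """Estimate the clean data from a noisy sample and a noise prediction."""
    return [(y - math.sqrt(1.0 - alpha_bar) * n) / math.sqrt(alpha_bar)
            for y, n in zip(noisy, predicted_noise)]

rng = random.Random(0)
x0 = [1.0, -0.5, 0.25]                  # stand-in for image pixel values
noisy, noise = add_noise(x0, alpha_bar=0.3, rng=rng)
# A trained network would predict the noise; here the true noise is reused.
recovered = remove_noise(noisy, noise, alpha_bar=0.3)
```

With an exact noise prediction, `recovered` matches `x0` up to floating-point error. The quality of a real model depends on how well its network approximates that prediction.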

Use Cases

- Image Generation: Turn text descriptions into stunningly realistic images.

- Image Editing: Seamlessly remove objects, restore damage, or complete creations.

- Denoising: Sharpen blurry images and videos, removing unwanted noise.

- Video Generation: Create short videos or add animations.

- Beyond Images: Potential for text-to-3D and complex text generation.

4. WaveNet

WaveNet is a generative AI system developed by DeepMind. It generates realistic, human-like speech, enabling applications such as voice assistants and interactive storytelling.

Use Cases

- Ultra-realistic Speech Synthesis: Generate natural-sounding voices that can be used for audiobooks, chatbots, and even personalized assistants.

- Voice Cloning: Replicate a specific speaker’s voice, potentially aiding those who have lost their ability to speak or creating new characters for audiobooks.

- Voice Editing and Enhancement: Improve the quality of existing audio recordings by removing noise or manipulating voice characteristics.

- Music Generation: WaveNet can be used to generate musical pieces, potentially inspiring new compositions or creating unique sound effects.

5. DALL-E

Developed by OpenAI, DALL-E is a generative AI model that creates images from textual descriptions. Its major applications include visual content generation and design.

Use Cases

- Visual Content creation: DALL-E generates visual content for blogs, social media, and website design.

- Custom art: It creates custom art pieces for interior design, providing unique and affordable decor.

- Alternative to stock photos: It offers an alternative to cheesy stock photos, providing more visually appealing and unique images for websites and other materials.

What are Large Language Models (LLMs)?

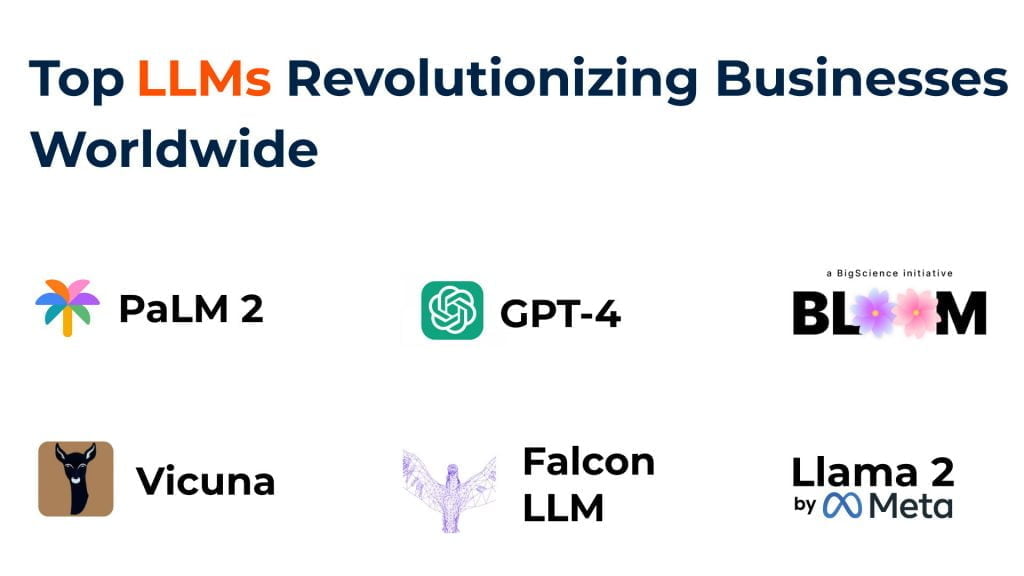

Large Language Models (LLMs) are AI models that operate primarily on human language, learning patterns in text and using them to predict what comes next. They have many parameters and are trained on large datasets, which gives them broad applicability. Some popular LLMs include OpenAI’s GPT-3, Google’s PaLM 2, Meta’s Llama 2, and Vicuna.
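The idea of learning patterns from text and predicting what comes next can be shown with a deliberately tiny stand-in. The sketch below is a bigram counter, not an LLM: it simply picks the word that most often followed the current one in its training text. Real LLMs replace these counts with a neural network and far longer context, but the predict-the-next-token framing is the same. The corpus and function names are made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count which word follows which in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    return followers.most_common(1)[0][0] if followers else None

corpus = "the cat sat on the mat and the cat slept"
model = train_bigram(corpus)
print(predict_next(model, "the"))  # prints "cat", which follows "the" twice
```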

1. OpenAI GPT-4

OpenAI’s GPT-4 (Generative Pre-trained Transformer 4) is a powerful language model that excels at generating realistic and creative text in many formats, including poems, code, scripts, and informative answers to your questions.

Use Cases

- Content Creation: Generate different creative text formats like poems, code, scripts, musical pieces, emails, and more.

- Writing Assistance: Improve writing quality by suggesting different phrasings, correcting grammar, and completing sentences.

- Chatbots: It elevates customer service with chatbots that understand natural language. It facilitates smooth interactions, answers questions effectively, and personalizes support experiences.

- Code Generation: GPT-4 assists programmers by generating code snippets, automating repetitive tasks, and writing clear code documentation.

- Machine Translation: Translate languages accurately and fluently, aiding communication across borders.

2. Google PaLM 2

Google’s PaLM 2 is a next-generation LLM pushing the boundaries of reasoning and code comprehension. It tackles complex scientific tasks, answers intricate questions, and even generates human-quality code.

Use Cases

- Scientific Research: Analyze and understand complex scientific data, accelerating research efforts in various fields.

- Question Answering: Provide comprehensive and informative answers to complex questions.

- Code Generation: Generate human-quality code for different programming languages, aiding software development.

- Med-PaLM 2 (Specialized Version): Answer medical questions and summarize medical text, assisting healthcare professionals. Google has also described exploring multimodal capabilities such as interpreting X-rays.

3. Meta’s Llama 2

Meta’s Llama 2 is a versatile LLM focused on making information accessible. It can create clear summaries of text, generate different creative text formats, and answer your questions in a helpful and informative way.

Use Cases

- Text Summarization: Create concise and accurate summaries of large amounts of text, making information easier to understand.

- Creative Writing: Generate different creative text formats like poems, code snippets, scripts, and more.

- Question Answering: Provide helpful and informative answers to your questions in a user-friendly way.

- Code Generation: While not its main focus, Llama 2 shows potential for generating basic code.

4. Vicuna

Vicuna is an open-source chat model fine-tuned from Meta’s LLaMA on user-shared conversations. It can summarize information and answer questions, which makes it a helpful tool for research or for gathering concise information on specific topics.

Use Cases

- Text Summarization: Like the LLMs above, Vicuna can create summaries of factual topics.

- Question Answering: Provide helpful answers to factual queries, particularly useful for research or information gathering.

- Writing Assistance: It can be used to improve writing quality and suggest alternative phrasings.

5. BLOOM (Hugging Face)

BLOOM is a multilingual open-source large language model (LLM) created by BigScience, a large research collaboration coordinated by Hugging Face. It can be used to generate human-quality text in various languages, translate between languages, and even help with research by summarizing information across different languages.

Use Cases

- Multilingual Content Creation: Generate creative text formats like poems, scripts, and marketing copy in various languages.

- Code Generation and Assistance: Analyze existing code and suggest improvements, generate code snippets for repetitive tasks, and even translate code from one language to another.

- Data Analytics: Researchers can use BLOOM to analyze vast amounts of textual data (like scientific papers) to identify patterns and trends.

- Machine Translation: Excels at machine translation, accurately converting text from one language to another while capturing the nuances of each tongue. This can be crucial for communication and collaboration across international borders.

6. Falcon LLM

Falcon LLM is a powerful open-source large language model (LLM) developed by the Technology Innovation Institute (TII). Its strong language processing capabilities allow it to tackle complex tasks.

Use Cases

- Craft Compelling Content: It can generate creative text formats (like poems or scripts) and brainstorm engaging content ideas, helping marketing teams stand out.

- Machine Translation: It can translate text accurately, even for complex languages. While fine-tuning for specific language pairs can further enhance accuracy, it provides a strong foundation for multilingual communication.

- Build Smarter Chatbots: It can be integrated into chatbots, enabling them to understand natural language and respond in a helpful and informative way.

- Data Labeling: It can analyze text data and help pre-classify or suggest labels for data points, streamlining the data labeling process.

Generative AI vs LLM: Key Differences

| Criteria | Generative AI | LLMs |
| --- | --- | --- |
| Learning Approach | Learns from diverse datasets to produce original content. Excels in creative tasks like generating art, music, or text. | Trained on vast amounts of text data, enabling them to understand and generate human-like language. Excel in tasks like translation, summarization, and conversation. |
| Output Generation | Creates completely new and unique content, making it ideal for creative projects and generating novel ideas. | Generate text based on input and context, producing coherent and contextually relevant responses. Proficient in generating human-like language across domains. |
| Complexity | Can handle complex tasks that require creativity and originality, such as composing music or writing poetry. Mimics human creativity to a certain extent. | Designed to understand and generate nuanced language, making them suitable for sentiment analysis, natural language understanding, and dialogue generation. |
| Computational Power | Typically requires moderate to high computational resources, especially for complex tasks like image or video generation. | Demand high computational power during training but can operate efficiently during inference for tasks like text generation and language understanding. |
| Fine-tuning | Can be fine-tuned for specific tasks or styles, allowing customization and optimization for particular creative endeavors. | Fine-tuned for domain-specific tasks, improving their performance and accuracy in specialized applications such as medical text analysis or legal document summarization. |
| Real-time Response | Has some limitations in real-time response due to processing time, especially for computationally intensive tasks or large-scale content generation. | Offer faster response times due to pre-trained capabilities and efficient inference mechanisms. Provide quick and accurate responses in chatbots and virtual assistants. |
| Applications | Finds applications in creative writing, art generation, storytelling, and content creation for media such as music and video. | Used for a wide range of language tasks, including language translation, text summarization, question answering, and chatbots. Power applications like virtual assistants and automated content generation systems. |

Generative AI vs LLM: A Comparative Analysis

1. Content Creation

Generative AI encompasses AI systems designed to autonomously produce new content, while LLMs such as GPT specialize in generating human-like text. Generative AI can predict and generate text, images, and audio, whereas LLMs primarily focus on text-based tasks, including content creation and summarization.

2. Code Generation and Automation

LLMs like OpenAI’s Codex, which powers GitHub Copilot, are proficient in automating coding tasks by translating natural language prompts into code. Generative AI expands upon this by generating code and automating a broader range of functions through understanding and creating diverse content.

3. Language Translation Efficiency

LLMs play a central role in language translation, leveraging NLP to understand grammar and context. Generative AI systems may enhance translation efficiency by integrating multimodal data to provide enriched language services.

4. Conversational AI and Virtual Assistants

LLMs are essential for conversational AI, powering chatbots and virtual assistants such as OpenAI’s ChatGPT. Developers use prompt engineering to improve interaction quality, while the broader scope of generative AI can introduce additional modalities into conversations.

5. Creative Uses in Art and Design

While LLMs contribute to art and design through textual descriptions, generative AI tools such as DALL-E, Midjourney, and Stable Diffusion have revolutionized these fields by providing robust image generation capabilities.

6. Applications in Science and Education

In science and education, generative AI empowers intelligent systems that can aid in diverse learning experiences and data analysis. LLMs contribute by making complex information accessible through summarization and explanation in natural language.

7. Industry-Specific AI Solutions

LLMs offer tailored text generation for industries like marketing, enhancing productivity with automated content creation. Generative AI widens the scope, providing industry-specific solutions by generating customized content across various media formats.

8. Future Trends and Scalability

The scalability of generative AI and LLMs lies in their architectural design, with some models boasting billions of parameters. Future trends indicate that as these models grow, so will their capabilities and the breadth of tasks they can perform, enhancing both specificity and contextuality in various applications.

Applications of LLMs and Generative AI

1. Healthcare Industry

- Medical Records Analysis: LLMs can analyze medical records for patterns, trends, and insights to support diagnosis and treatment planning.

- Drug Discovery: Gen AI can identify potential drug candidates and predict their efficacy by analyzing vast amounts of biomedical data.

- Personalized Medicine: Gen AI leverages genetic data to provide personalized treatment plans and predict disease risks.

2. Finance Industry

- Risk Assessment: LLMs can assess risks in investments, loans, and portfolios.

- Fraud Detection: They can detect fraudulent activities by analyzing patterns and anomalies in financial transactions.

- Algorithmic Trading: Gen AI can develop and optimize trading algorithms based on market data and trends.

3. Marketing and Advertising Industry

- Market Research: LLMs can analyze consumer data and trends to provide insights for marketing strategies.

- Content Generation: They can generate content such as articles, social media posts, and product descriptions.

- Ad Campaign Optimization: Gen AI can optimize ad campaigns by analyzing performance metrics and user behavior data.

4. Legal Industry

- Contract Analysis: LLMs can efficiently review and analyze contracts, identifying key clauses and potential risks.

- Legal Research: They can assist legal professionals in researching case law, statutes, and regulations, saving time and improving accuracy.

- Document Generation: LLMs can generate legal documents such as briefs, pleadings, and contracts based on input data.

5. Education Industry

- Personalized Learning: LLMs can create personalized learning experiences by analyzing student data and adapting content accordingly.

- Automated Grading: They can automate grading processes for assignments and exams, providing quick and accurate feedback to students.

- Curriculum Design: Gen AI can analyze educational trends and student outcomes to inform curriculum design and improvement.

6. Manufacturing Industry

- Predictive Maintenance: LLMs can analyze sensor data from equipment to predict maintenance needs and prevent downtime.

- Quality Control: They help identify defects and improve manufacturing processes by analyzing product quality data.

- Supply Chain Optimization: Gen AI can optimize supply chains by analyzing demand forecasts, inventory levels, and logistics data.

7. Hospitality and Tourism Industry

- Personalized Recommendations: LLMs can analyze customer preferences and behavior to provide personalized recommendations for travel, accommodations, and activities.

- Revenue Management: They can analyze pricing data and demand patterns to optimize revenue and occupancy rates.

- Customer Service Automation: Gen AI can automate customer service tasks such as reservations, inquiries, and feedback analysis.

Empower Your Business with Kanerika’s Advanced LLM and Gen AI Solutions

Kanerika is a leading technical services provider that offers unique technical solutions tailored to your business needs. By leveraging the immense capabilities of Gen AI and LLM, Kanerika ensures that your business achieves the desired results. Whether you are looking to automate your operations, minimize business expenses, streamline workflows, or optimize your workforce and other resources, Kanerika can help you by providing effective and innovative solutions.

Having successfully implemented numerous LLM and Gen AI technologies in various industries for renowned clients, Kanerika assures exceptional outcomes no matter what your business challenges or requirements are. Get in touch with us today to find out how we can help you transform your business.

Frequently Asked Questions

Is generative AI the same as LLM?

Generative AI and LLMs are not the same, though they overlap significantly. Generative AI is a broad category of artificial intelligence that creates new content including text, images, audio, and video. Large language models are a specific type of generative AI focused exclusively on understanding and producing human language. Think of LLMs as one powerful subset within the larger generative AI landscape. Other generative models handle visuals or music without using language architectures at all. Kanerika helps enterprises identify whether LLM-based solutions or broader generative AI applications best fit their use cases—schedule a consultation to explore your options.

Is ChatGPT a generative AI?

ChatGPT is both a generative AI and an LLM-powered application. Built on OpenAI’s GPT architecture, ChatGPT generates human-like text responses by predicting the most contextually appropriate words based on massive training datasets. As a generative AI chatbot, it creates original content rather than retrieving pre-written answers. This makes ChatGPT ideal for content creation, coding assistance, and conversational interfaces. The underlying large language model enables its natural dialogue capabilities and creative text generation. Kanerika integrates LLM-based solutions like ChatGPT into enterprise workflows—connect with our AI specialists to build your custom implementation.

Is an LLM technically AI?

An LLM is absolutely a form of artificial intelligence, specifically falling under the machine learning and deep learning branches of AI. Large language models use neural network architectures with billions of parameters, trained on massive text datasets, to understand context, generate responses, and perform language tasks. They demonstrate AI capabilities through pattern recognition, contextual understanding, and adaptive responses without explicit programming for each scenario. LLMs represent one of the most advanced applications of AI technology available today, powering everything from chatbots to code generation tools. Kanerika deploys enterprise-grade LLM solutions built on proven AI foundations. Reach out to discuss your transformation roadmap.

What is the difference between generative AI and AI/ML?

Generative AI is a specialized subset within the broader AI and machine learning ecosystem. Traditional AI/ML focuses on classification, prediction, and pattern recognition from existing data. Generative AI goes further by creating entirely new content—text, images, code, or audio—that did not previously exist. Machine learning models typically analyze and categorize; generative models synthesize and produce. Both rely on training data, but generative AI uses architectures like transformers and diffusion models specifically designed for content creation rather than pure analysis. Kanerika implements both predictive ML models and generative AI solutions tailored to your business objectives—book a discovery session today.

What is the key role of LLM in generative AI?

LLMs serve as the primary engine powering text-based generative AI applications. Their key role involves understanding natural language input, processing contextual meaning across thousands of tokens, and generating coherent, contextually relevant text outputs. Large language models enable generative AI systems to write articles, answer questions, translate languages, summarize documents, and produce code. The transformer architecture underlying most LLMs allows parallel processing that makes real-time text generation practical at scale. Without LLMs, generative AI would lack sophisticated language understanding capabilities. Kanerika leverages LLM capabilities to automate document processing and content workflows—let us demonstrate what is possible for your organization.

What is the difference between GPT and LLM?

GPT is a specific family of LLMs developed by OpenAI, while LLM is the broader category encompassing all large language models. Think of LLM as the product category and GPT as one prominent brand within it. GPT stands for Generative Pre-trained Transformer, describing its training approach and architecture. Other LLMs include Google’s PaLM, Meta’s LLaMA, and Anthropic’s Claude models, each with its own training data and methods while still qualifying as large language models. GPT popularized transformer-based language generation, but the LLM landscape now includes many competitive alternatives. Kanerika evaluates multiple LLM options to recommend the best fit for your enterprise requirements. Contact us for an unbiased assessment.

What is the difference between NLP, LLM, and generative AI?

NLP, LLM, and generative AI represent three interconnected but distinct concepts. Natural language processing is the field focused on enabling machines to understand human language. LLMs are advanced NLP systems trained on massive text datasets using deep learning architectures. Generative AI is the broader capability of creating new content, with LLMs handling the text generation component. NLP provides foundational techniques; LLMs apply those techniques at unprecedented scale; generative AI encompasses all AI that produces original outputs across modalities. These technologies work together in modern applications like intelligent chatbots and content automation platforms. Kanerika combines NLP expertise with LLM implementation to build enterprise-ready generative AI solutions—schedule a technical consultation.

Is there any difference between AI and generative AI?

Generative AI is a specialized subset within the larger artificial intelligence domain. Traditional AI encompasses systems that perceive, reason, learn, and act—including recommendation engines, fraud detection, and predictive analytics. Generative AI specifically focuses on creating new content rather than analyzing existing information. Conventional AI might classify an image as containing a cat; generative AI creates entirely new cat images that never existed. Both use machine learning foundations, but generative AI employs architectures optimized for synthesis and creation. The distinction matters when selecting solutions for business problems. Kanerika helps enterprises determine whether predictive AI or generative AI better addresses their specific challenges—request a free consultation.

What is generative AI in simple terms?

Generative AI is artificial intelligence that creates new content rather than just analyzing existing data. It produces original text, images, music, video, and code by learning patterns from massive training datasets and generating outputs that follow those patterns. When you ask ChatGPT a question, it generates a unique response rather than retrieving a pre-written answer. Generative AI powers tools that write marketing copy, design graphics, compose music, and automate coding tasks. The technology learns what content should look like, then creates something new matching those learned characteristics. Kanerika implements generative AI solutions that automate content-heavy workflows across enterprises—explore what is achievable for your business.

What is an example of a generative AI?

ChatGPT stands as the most recognized generative AI example, using large language model architecture to produce human-like text responses. Other prominent examples include DALL-E and Midjourney for image generation, GitHub Copilot for code creation, and Runway for video synthesis. Music generation tools like AIVA compose original scores, while Synthesia creates AI-generated video content with virtual presenters. Each example demonstrates generative AI creating new, original content across different modalities—text, images, code, audio, and video. These applications share the common capability of producing outputs that did not previously exist. Kanerika deploys enterprise-grade generative AI solutions including LLM-based document automation—see our capabilities in action.

What are LLM examples?

Leading LLM examples include OpenAI’s GPT-4, Google’s Gemini, Anthropic’s Claude, and Meta’s LLaMA. Microsoft’s Copilot leverages GPT technology for productivity applications, while Amazon’s Titan powers AWS generative AI services. Open-source options like Mistral and Falcon provide alternatives for enterprises requiring on-premises deployment. Each large language model differs in parameter count, training data, context window size, and specialized capabilities. GPT-4 excels at reasoning tasks; Claude emphasizes safety and long-context processing; LLaMA offers customizable open-weight deployment. Choosing the right LLM depends on your specific use case requirements and infrastructure constraints. Kanerika evaluates and implements the optimal LLM for your enterprise needs—talk to our AI team.

Is Claude an LLM or generative AI?

Claude is both an LLM and a generative AI, as these categories overlap rather than conflict. Developed by Anthropic, Claude is a large language model trained on text data using transformer architecture, making it definitionally an LLM. Because Claude generates original text responses rather than retrieving stored answers, it also qualifies as generative AI. Claude distinguishes itself through constitutional AI training methods emphasizing helpfulness and harmlessness. Its extended context window handles documents exceeding 100,000 tokens, making it suitable for enterprise document analysis and summarization tasks. Kanerika integrates Claude and other LLMs into business workflows where safety and accuracy matter most—discuss your requirements with our team.

What are the two main types of generative models?

The two main types of generative models are Generative Adversarial Networks and Variational Autoencoders. GANs use two competing neural networks—a generator creating content and a discriminator evaluating authenticity—to produce increasingly realistic outputs. VAEs learn compressed representations of data and generate new samples by decoding from that latent space. More recently, diffusion models have emerged as a third major category, powering image generators like Stable Diffusion and DALL-E. Transformer-based LLMs represent another architectural approach dominating text generation. Each model type suits different content creation tasks and quality requirements. Kanerika selects and implements the appropriate generative model architecture for your specific enterprise applications—reach out for guidance.

Is generative AI a branch of AI?

Generative AI is indeed a branch within the broader artificial intelligence field. The AI hierarchy flows from general AI concepts down through machine learning, then deep learning, with generative AI representing a specialized application area focused on content creation. Other AI branches include computer vision, robotics, expert systems, and natural language processing. Generative AI intersects with several of these—LLMs combine NLP foundations with generative capabilities, while image generators merge computer vision with content synthesis. This positioning makes generative AI both a distinct specialty and a capability that enhances other AI domains. Kanerika brings expertise across AI branches to build comprehensive solutions—let us map the right approach for your objectives.

Can LLMs generate images?

Standard text-only LLMs cannot generate images because they process and produce text exclusively. Large language models use architectures optimized for sequential token prediction, modeling language patterns rather than visual content. Multimodal models like GPT-4V and Gemini combine LLM capabilities with image understanding, but image output typically still relies on a separate image generation component. Image generation is most often handled by diffusion models or GANs, although transformer-based image generators also exist. Some text-to-image systems, DALL-E among them, pair a language component that interprets the prompt with a model that generates the visuals. The distinction highlights how generative AI encompasses multiple specialized model types beyond LLMs alone. Kanerika implements both text and image generation solutions for enterprises needing comprehensive content automation. Explore our multimodal AI capabilities.

Are generative AI and NLP the same?

Generative AI and NLP are related but fundamentally different concepts. Natural language processing is a field focused on enabling machines to understand, interpret, and manipulate human language—encompassing tasks like sentiment analysis, translation, and entity recognition. Generative AI is the capability of creating new content across any modality, including text, images, and audio. LLMs represent the intersection where generative AI applies NLP foundations to produce original text. Traditional NLP analyzes existing language; generative AI synthesizes new content. Many NLP applications are non-generative, simply classifying or extracting information without creating anything new. Kanerika combines NLP analytics with generative AI production capabilities for comprehensive language solutions—contact us to build your custom implementation.

What is generative AI also known as?

Generative AI goes by several alternative names depending on context and application. Common synonyms include creative AI, synthetic content generation, and AI content creation technology. In academic literature, you may encounter terms like generative modeling or deep generative networks. Marketing contexts sometimes use phrases like AI-powered content generation or intelligent content synthesis. When focused on text specifically, terms like conversational AI and LLM-based generation apply. Image-focused applications often use synthetic media or AI-generated imagery terminology. These variations describe the same fundamental capability—AI systems that produce original content rather than simply analyzing existing data. Kanerika stays current with evolving generative AI terminology and technology—partner with us to implement solutions using proven approaches.

What comes after LLM AI?

The next evolution beyond current LLMs points toward agentic AI systems capable of autonomous reasoning and action. These AI agents use LLMs as reasoning cores while adding planning, tool use, and memory capabilities for multi-step task execution. Multimodal foundation models combining text, vision, audio, and video understanding represent another frontier. Smaller, specialized models optimized for specific domains may complement massive general-purpose LLMs. Research into world models—AI that understands physical reality and causation—promises more grounded reasoning capabilities. The trajectory moves toward AI systems that not only generate content but actively accomplish complex real-world objectives. Kanerika already deploys agentic AI solutions built on LLM foundations—explore how autonomous AI agents can transform your operations.