Do you really need a data analytics pipeline? In the heart of Silicon Valley, nestled among the towering tech giants, a small startup was on the cusp of a breakthrough. Armed with a visionary product and a dedicated team, they were poised to disrupt the industry. Yet, as they navigated the labyrinth of data generated by their platform, they faced an unexpected hurdle: the deluge of information was overwhelming, and crucial insights seemed to slip through their fingers like sand.

This scenario is not unique; it resonates with companies of all sizes and industries in today’s data-driven landscape. In an era where information is king, businesses are recognizing the critical need for robust data analytics pipelines. These pipelines act as the circulatory system of an organization, ensuring that data flows seamlessly from its raw form to actionable insights.

In this article, we look at why companies of every size and domain increasingly rely on data analytics pipelines, not only to survive but to thrive in a fiercely competitive market. The benefits range from faster, better-informed decisions to finally extracting real value from large volumes of data.

“Good analytics pipelines are crucial for organizations.

When done right, they help meet strategic goals faster.”

We'll take a deep dive into what a data analytics pipeline is, its various components, how it's useful in real-life business scenarios, and a lot more.

Transform Your Business with Data Analytics Solutions!

Partner with Kanerika for Expert Data Analytics Services

What is a data analytics pipeline?

A data analytics pipeline is a structured framework that facilitates the end-to-end processing of data for extracting meaningful insights and making informed decisions.

Data analytics pipelines are used in different fields, including data science, machine learning, and business intelligence, to streamline the process of turning data into actionable information. They help ensure data quality, consistency, and repeatability in the analysis process.

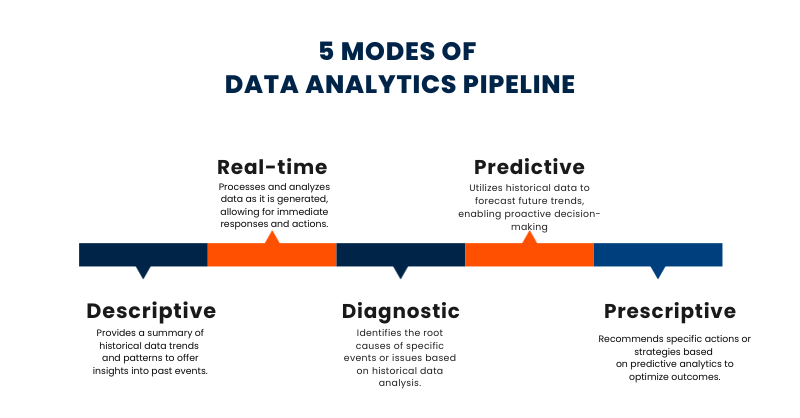

What are the stages of the data analytics pipeline?

A data analysis pipeline involves several stages. The key ones are:

Stage 1 – Capture: In this initial stage, data is collected from various sources such as databases, sensors, websites, or any other data generators. This can be in the form of structured data (e.g., databases) or unstructured data (e.g., text, images).

Stage 2 – Process: Once the data is captured, it often needs to be cleaned, transformed, and pre-processed. This step involves tasks like data cleaning to handle missing values or outliers, data normalization, and feature engineering to make the data suitable for analysis.

Stage 3 – Store: Processed data is then stored in a data repository or database for easy and efficient access. This step ensures that the data is organized and readily available for analysis, typically using technologies like databases or data lakes.

Stage 4 – Analyze: In the analysis stage, data scientists and analysts apply various statistical, machine learning, or data mining techniques to extract valuable insights and patterns from the data. This can include exploratory data analysis, hypothesis testing, and predictive modeling to answer specific questions or solve problems.

Stage 5 – Use: The ultimate goal of a data analysis pipeline is to derive actionable insights that can inform decision-making. The results of the analysis are used to make informed business decisions, optimize processes, or drive strategies, contributing to the organization’s goals and objectives.

A well-structured data analysis pipeline is crucial for ensuring that data-driven insights are generated efficiently and consistently, enabling organizations to leverage their data for competitive advantage and innovation.
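To make the five stages concrete, here is a minimal Python sketch of a toy pipeline that measures time spent on web pages. It is an illustration under simplifying assumptions, not a production design. The records in `RAW_EVENTS` and all function names are hypothetical, an in-memory SQLite database stands in for a real data store, and the "use" stage simply turns the result into a recommendation string.

```python
import sqlite3

# Stage 1 input: hypothetical raw page-view records, as they might arrive from a website or API.
RAW_EVENTS = [
    {"user_id": "a", "page": "/home", "seconds": "12"},
    {"user_id": "b", "page": "/pricing", "seconds": ""},  # missing value
    {"user_id": "c", "page": "/pricing", "seconds": "45"},
    {"user_id": "a", "page": "/pricing", "seconds": "30"},
]


def capture():
    """Stage 1 - Capture: collect raw records (here, from an in-memory list)."""
    return list(RAW_EVENTS)


def process(records):
    """Stage 2 - Process: drop rows with a missing value and convert text to numbers."""
    return [
        (r["user_id"], r["page"], int(r["seconds"]))
        for r in records
        if r["seconds"].strip()
    ]


def store(rows, conn):
    """Stage 3 - Store: persist processed rows in a SQLite table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS visits (user_id TEXT, page TEXT, seconds INTEGER)"
    )
    conn.executemany("INSERT INTO visits VALUES (?, ?, ?)", rows)
    conn.commit()


def analyze(conn):
    """Stage 4 - Analyze: average time spent on each page."""
    cur = conn.execute(
        "SELECT page, AVG(seconds) FROM visits GROUP BY page ORDER BY page"
    )
    return dict(cur.fetchall())


def use(insights):
    """Stage 5 - Use: turn the numbers into an actionable recommendation."""
    top_page = max(insights, key=insights.get)
    return f"Visitors spend the most time on {top_page}; prioritize it in the next redesign."


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    store(process(capture()), conn)
    insights = analyze(conn)
    print(insights)  # {'/home': 12.0, '/pricing': 37.5}
    print(use(insights))
```

In practice, each stage would usually be a separate job or service. Keeping the stages as small functions with clear inputs and outputs makes the hand-offs between them easy to see and test.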

What are the components of the data analytics pipeline?

A data analysis pipeline consists of several key components that facilitate the end-to-end process of turning raw data into valuable insights. Here’s an elaboration on each of these components:

1. Data Sources: Data sources are the starting point of any data analysis pipeline. They can be diverse, including databases, logs, APIs, spreadsheets, or any other repositories of raw data. These sources may be internal (within the organization) or external (third-party data).

2. Data Ingestion: Data ingestion involves the process of collecting data from various sources and bringing it into a centralized location for analysis. It often includes data extraction, data loading, and data transportation.

3. Data Storage: Once data is ingested, it needs to be stored efficiently. This typically involves databases, data warehouses, or data lakes. Data storage systems must be scalable, secure, and designed to handle the volume and variety of data.

4. Data Processing: Data processing involves cleaning and preparing the data for analysis. This step includes validating records, handling missing values, and ensuring data quality. Common techniques include validation rules, data sampling, and data aggregation.

5. Data Transformation: Data transformation converts raw data into a format suitable for analysis. This may include feature engineering, data normalization, and data enrichment. Transformations can be written in programming languages like Python or run as part of ETL (Extract, Transform, Load) processes.

6. Data Analysis: This is the core of the pipeline, where data is analyzed to derive insights. It includes various statistical and machine-learning techniques. Exploratory data analysis, hypothesis testing, and modeling are commonly used methods in this stage.

7. Data Delivery: Once insights are derived, the results need to be presented to stakeholders. This can be through reports, dashboards, or other visualization tools. Data delivery can also involve automating the process of making data-driven decisions.

8. Data Governance and Security: Throughout the pipeline, data governance and security must be maintained. This includes ensuring data privacy, compliance with regulations (e.g., GDPR), and data access control.

9. Monitoring and Maintenance: After the initial analysis, the pipeline should be monitored for changes in data patterns, and it may require updates to adapt to evolving data sources and business needs.

10. Scalability and Performance: As data volumes grow, the pipeline must be scalable to handle increased loads while maintaining acceptable performance.

11. Documentation: Proper documentation of the pipeline, including data sources, transformations, and analysis methods, is crucial for knowledge sharing and troubleshooting.

12. Version Control: For code and configurations used in the pipeline, version control is important to track changes and ensure reproducibility.
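Components 4 (Data Processing) and 9 (Monitoring and Maintenance) both rely on automated data-quality checks. The sketch below shows one minimal way to write such checks in plain Python. The `check_batch` function, the `QualityReport` fields, and the 5% missing-value threshold are hypothetical choices made for illustration; they are not a standard.

```python
from dataclasses import dataclass, field


@dataclass
class QualityReport:
    """Counts of common data-quality problems found in one batch."""
    total_rows: int = 0
    missing: dict = field(default_factory=dict)  # column -> rows where it is empty
    out_of_range: int = 0  # rows whose numeric field falls outside [low, high]
    duplicates: int = 0  # rows whose key was already seen in this batch

    def passed(self, max_missing_ratio=0.05):
        """Hypothetical acceptance rule: few missing values, no range errors, no duplicates."""
        if self.total_rows == 0:
            return False
        worst = max(self.missing.values(), default=0)
        return (
            worst / self.total_rows <= max_missing_ratio
            and self.out_of_range == 0
            and self.duplicates == 0
        )


def check_batch(rows, required, key, numeric_field, low, high):
    """Scan a batch of dict rows and count missing, out-of-range, and duplicate records."""
    report = QualityReport(total_rows=len(rows), missing={col: 0 for col in required})
    seen_keys = set()
    for row in rows:
        for col in required:
            if row.get(col) in (None, ""):
                report.missing[col] += 1
        value = row.get(numeric_field)
        if isinstance(value, (int, float)) and not low <= value <= high:
            report.out_of_range += 1
        if row.get(key) in seen_keys:
            report.duplicates += 1
        seen_keys.add(row.get(key))
    return report


if __name__ == "__main__":
    batch = [
        {"order_id": 1, "amount": 25.0, "region": "EU"},
        {"order_id": 2, "amount": -4.0, "region": "US"},  # negative amount
        {"order_id": 2, "amount": 10.0, "region": ""},  # duplicate key, missing region
    ]
    report = check_batch(
        batch, required=["order_id", "amount", "region"],
        key="order_id", numeric_field="amount", low=0, high=10_000,
    )
    print(report)
    print("passed:", report.passed())  # passed: False
```

A pipeline might run a check like this on every incoming batch and halt or quarantine the batch when `passed()` returns False. Tracking the same counts over time is a simple way to notice that a source's data patterns have changed.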

Data pipeline vs ETL pipeline

ETL and data pipelines are both crucial for managing and processing data, but they serve slightly different purposes within data engineering.

Data pipelines are a broader concept encompassing the processes involved in moving, processing, and managing data from source to destination. They can include ETL processes but are not limited to them. Data pipelines handle data ingestion, transformation, storage, and data movement within an organization, and they are designed to handle a wide variety of data, including real-time streams and batch data.

ETL pipelines, on the other hand, focus specifically on the Extract, Transform, and Load process. ETL is a subset of data pipelines that extracts data from source systems, transforms it into a suitable format, and loads it into a destination such as a data warehouse. ETL pipelines are essential for cleaning, structuring, and preparing data for analytics and reporting. They are typically used when data from multiple sources needs to be consolidated into a unified format for analysis. In summary, data pipelines cover a broader set of data processing tasks, while ETL pipelines concentrate on extracting, transforming, and loading data into an analysis-ready destination.
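The difference between transforming data before loading it and transforming it after loading can be sketched in a few lines of Python. The example below is an illustrative assumption, not a reference implementation. An in-memory SQLite database plays the role of the warehouse, and `SOURCE_CSV` is a made-up export. The `etl` function cleans rows in Python and then loads them. The `elt` function implements the load-first variant often used with cloud warehouses: it loads the raw rows first and then transforms them with SQL inside the database.

```python
import csv
import io
import sqlite3

# Hypothetical source extract: a CSV export from an operational system.
SOURCE_CSV = """order_id,country,amount
1,us,19.99
2,DE,5.00
3,us,
"""


def extract(text):
    """Extract: read raw rows from the source as dictionaries of strings."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform in Python: drop incomplete rows, normalize types and country codes."""
    return [
        (int(r["order_id"]), r["country"].upper(), float(r["amount"]))
        for r in rows
        if r["amount"]
    ]


def etl(conn, text):
    """ETL: transform first, then load only the cleaned rows into the target table."""
    conn.execute("CREATE TABLE orders (order_id INTEGER, country TEXT, amount REAL)")
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", transform(extract(text)))


def elt(conn, text):
    """ELT: load raw rows as-is, then transform inside the database with SQL."""
    conn.execute("CREATE TABLE raw_orders (order_id TEXT, country TEXT, amount TEXT)")
    conn.executemany(
        "INSERT INTO raw_orders VALUES (:order_id, :country, :amount)", extract(text)
    )
    conn.execute(
        """CREATE TABLE orders AS
           SELECT CAST(order_id AS INTEGER) AS order_id,
                  UPPER(country) AS country,
                  CAST(amount AS REAL) AS amount
           FROM raw_orders
           WHERE amount <> ''"""
    )


if __name__ == "__main__":
    query = "SELECT order_id, country, amount FROM orders ORDER BY order_id"
    for strategy in (etl, elt):
        conn = sqlite3.connect(":memory:")
        strategy(conn, SOURCE_CSV)
        print(strategy.__name__, conn.execute(query).fetchall())
```

Both paths produce the same `orders` table here. The ELT version also keeps the untouched `raw_orders` table, which makes it possible to re-run transformations later without extracting the data again.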

Use cases of data analytics pipelines

Data pipelines have numerous real-life use cases across various industries. Here are some detailed examples of how data pipelines are applied in practice:

1. Retail and E-commerce

- Inventory Management: Data pipelines help retailers manage inventory by collecting real-time data on sales, restocking, and suppliers, so products can be replenished before they run out.

- Recommendation Systems: E-commerce sites use data pipelines to process user behavior data and provide personalized product recommendations to increase sales and user engagement.

2. Healthcare

- Patient Data Integration: Data pipelines merge patient data from different sources (electronic health records, wearable devices) for comprehensive patient profiles, aiding in diagnosis and treatment decisions.

- Health Monitoring: Wearable devices send health data to data pipelines, enabling continuous monitoring and early intervention for patients with chronic conditions.

3. Finance

- Risk Assessment: Financial institutions use data pipelines to collect and process market data, customer transactions, and external information to assess and mitigate financial risks in real time.

- Fraud Detection: Data pipelines analyze transaction data to detect unusual patterns and flag potential fraudulent activities, helping prevent financial losses.

4. Manufacturing

- Predictive Maintenance: Data pipelines process sensor data from machinery to predict when maintenance is needed, reducing downtime and improving production efficiency.

- Quality Control: Data pipelines monitor and analyze product quality in real time, identifying defects so that only high-quality products reach customers.

5. Logistics and Supply Chain

- Route Optimization: Data pipelines collect and analyze data on traffic conditions, weather, and order volumes to optimize delivery routes, reducing fuel costs and delivery times.

- Inventory Tracking: Real-time data pipelines track inventory levels and locations, ensuring accurate inventory management and reducing stockouts or overstocking.

6. Social Media and Advertising

- Ad Targeting: Data pipelines process user data to target ads based on demographics, behavior, and interests, increasing the effectiveness of advertising campaigns.

- User Engagement Analytics: Social media platforms use data pipelines to track user engagement metrics, improving content recommendations and platform performance.

7. Energy and Utilities

- Smart Grid Management: Data pipelines manage data from smart meters and sensors, enabling utilities to optimize energy distribution, detect outages, and reduce energy waste.

- Energy Consumption Analysis: Data pipelines analyze data from buildings and industrial sites to identify energy-saving opportunities and reduce operational costs.

8. Agriculture

- Precision Agriculture: Data pipelines process data from drones, sensors, and satellites to monitor soil conditions, crop health, and weather for precise decision-making in farming.

9. Government and Public Services

- Crisis Response: Data pipelines collect and analyze real-time data during disasters or public health crises to aid in response efforts and resource allocation.

- Citizen Services: Governments use data pipelines to process citizen data for services such as taxation, welfare distribution, and urban planning.

10. Gaming

- Game Analytics: Data pipelines process in-game user data to provide insights into player behavior, helping game developers improve game character design and user experience.

- In-Game Events: Real-time data pipelines manage and trigger in-game events, enhancing player engagement and immersion.

Data Analytics Pipeline Example – Twitter, Now X

Twitter stands out for its ability to curate a personalized feed that draws users back for their daily dose of updates and interactions.

As a data-centric platform, Twitter grounds its decisions in data analysis. At the heart of its operation is a data pipeline that gathers, aggregates, processes, and transmits data at enormous scale:

- Twitter processes billions of events and multiple terabytes of data every day.

- During peak hours, the platform processes millions of events, so the pipeline must absorb sudden surges in activity.

- The pipeline carries diverse streams of information, including user tweets, interactions, trending topics, and more.

Twitter also depends on real-time analytics that often surface insights within moments of an event, and that speed keeps users engaged and informed. The scale of the operation is extensive and continues to grow.

On the infrastructure side, Twitter runs multiple clusters of servers and storage systems dedicated to this data flow. Together they carry the immense data load behind a fast, engaging user experience.
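Several of these use cases, such as in-game events, fraud flags, and real-time engagement metrics, depend on aggregating a stream of events over short time windows. The generator below is a minimal Python sketch of a tumbling (fixed, non-overlapping) window count. It assumes that events arrive in timestamp order as `(timestamp_seconds, event_type)` pairs. The function name and event types are illustrative only.

```python
from collections import Counter


def tumbling_windows(events, window_seconds=60):
    """Yield (window_start, Counter of event types) for fixed, non-overlapping windows.

    Assumes `events` arrives in timestamp order. A window is emitted as soon as the
    first event of a later window arrives, so results flow out while the stream
    is still being read.
    """
    current_start, counts = None, Counter()
    for ts, event_type in events:
        start = int(ts // window_seconds) * window_seconds
        if current_start is not None and start != current_start:
            yield current_start, counts  # the previous window is complete
            counts = Counter()
        current_start = start
        counts[event_type] += 1
    if current_start is not None:
        yield current_start, counts  # flush the last, still-open window


if __name__ == "__main__":
    stream = [(0, "like"), (5, "retweet"), (59, "like"), (61, "like"), (130, "reply")]
    for window_start, counts in tumbling_windows(stream, window_seconds=60):
        print(window_start, dict(counts))
```

Real streams are rarely perfectly ordered. Stream processors such as Apache Flink or Spark Structured Streaming use watermarks to handle late events, which this sketch deliberately omits.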

How do you create a Data Analysis Pipeline?

Creating a data analysis pipeline involves a series of steps to collect, clean, process, and analyze data in a structured and efficient manner. Here’s a high-level overview of the process:

1. Define Objectives: Start by clearly defining your analysis goals and objectives. What are you trying to discover or achieve through data analysis?

2. Data Collection: Gather the data needed for your analysis. This can include data from various sources like databases, APIs, spreadsheets, or external datasets. Ensure the data is relevant and of good quality.

3. Data Cleaning: Clean and preprocess the data to handle missing values, outliers, and inconsistencies. This step is crucial to ensure the data is accurate and ready for analysis.

4. Data Transformation: Perform data transformation and feature engineering to create variables that are suitable for analysis. This might involve aggregating, merging, or reshaping the data as needed.

5. Exploratory Data Analysis (EDA): Conduct exploratory data analysis to understand the data’s characteristics, relationships, and patterns. Visualization tools are often used to gain insights.

6. Model Building: Depending on your objectives, build statistical or machine learning models to analyze the data. This step may involve training, testing, and tuning models.

7. Data Analysis: Apply the chosen analysis techniques to derive meaningful insights from the data. This could involve hypothesis testing, regression analysis, classification, clustering, or other statistical methods.

8. Visualization: Create visualizations to present your findings effectively. Visualizations such as charts, graphs, and dashboards can make complex data more understandable.

9. Interpretation: Interpret the results of your analysis in the context of your objectives. What do the findings mean, and how can they be used to make decisions or solve problems?

10. Documentation and Reporting: Document your analysis process and results. Create a report or presentation that communicates your findings to stakeholders clearly and concisely.

11. Automation and Deployment: If your analysis is ongoing or needs to be updated regularly, consider automating the pipeline to fetch, clean, and analyze new data automatically.

12. Testing and Validation: Test the pipeline to ensure it works correctly and validate its results. Revalidate regularly as new data arrives to maintain data quality and accuracy.

13. Feedback Loop: Establish a feedback loop for continuous improvement. Take feedback from stakeholders and adjust the pipeline as needed to provide more valuable insights.

14. Security and Compliance: Ensure that your data analysis pipeline complies with data security and privacy regulations, especially if it involves sensitive or personal information.
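Steps 3 (Data Cleaning) and 6 (Model Building) can be illustrated with a small, self-contained Python sketch that uses only the standard library. Everything here is an assumption for illustration. The spend and sales figures are made up. Missing values are filled with the median, and outliers are dropped using Tukey's fences (1.5 times the interquartile range beyond the quartiles). The "model" is an ordinary least-squares trend line.

```python
import statistics


def impute_median(values):
    """Step 3 (cleaning): replace missing values (None) with the median of the observed ones."""
    median = statistics.median([v for v in values if v is not None])
    return [median if v is None else v for v in values]


def iqr_bounds(values, k=1.5):
    """Step 3 (cleaning): Tukey fences; values outside them are treated as outliers."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    spread = q3 - q1
    return q1 - k * spread, q3 + k * spread


def fit_line(xs, ys):
    """Step 6 (model building): ordinary least-squares line y = slope * x + intercept."""
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def analyze_spend_vs_sales(spend, sales):
    """Clean the sales series, drop outliers, and fit a trend line.

    Returns ((slope, intercept), number_of_rows_dropped).
    """
    sales = impute_median(sales)
    low, high = iqr_bounds(sales)
    kept = [(x, y) for x, y in zip(spend, sales) if low <= y <= high]
    xs = [x for x, _ in kept]
    ys = [y for _, y in kept]
    return fit_line(xs, ys), len(spend) - len(kept)


if __name__ == "__main__":
    weekly_spend = [1, 2, 3, 4, 5, 6, 7, 8]             # hypothetical ad spend (thousands)
    weekly_sales = [12, 14, None, 18, 20, 22, 24, 500]  # one gap, one suspicious spike
    (slope, intercept), dropped = analyze_spend_vs_sales(weekly_spend, weekly_sales)
    print(f"dropped {dropped} row(s); sales = {slope:.2f} * spend + {intercept:.2f}")
```

With the example data, the value 500 falls outside the fences and is excluded, so one row is dropped before the line is fitted. Whether to drop, cap, or investigate outliers is a business decision, which is why step 9 (Interpretation) matters.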

Stay Ahead with Kanerika’s Advanced Data Analytics

In today’s business environment, organizations that fail to leverage powerful data analytics risk falling behind. Data is the foundation of decision-making, customer engagement, and operational efficiency. A well-designed data analytics pipeline ensures that businesses can efficiently collect, process, analyze, and visualize data to derive meaningful insights that drive success.

At Kanerika, we specialize in building future-ready data analytics solutions that empower organizations to harness the full potential of their data. Our expertise spans multiple industries, including retail, manufacturing, banking, finance, and healthcare. By utilizing advanced tools and technologies, we design custom solutions that seamlessly integrate your data, transforming it into actionable insights that fuel growth and innovation.

Our comprehensive approach ensures that data flows smoothly through every stage of the pipeline—from collection and cleansing to transformation, analysis, and visualization. This enables businesses to make faster, data-driven decisions while staying agile and responsive to market and regulatory changes.

With Kanerika’s data analytics pipeline solutions, businesses can unlock the true value of their data, streamline operations, and stay ahead of the competition. We focus on creating tailored pipelines that align with your specific needs, ensuring that data is processed efficiently and securely, driving sustainable success across industries.

Transform Your Business with AI-Powered Solutions!

Partner with Kanerika for Expert AI Implementation Services

FAQs

What is a data analytics pipeline?

A data analytics pipeline is an automated workflow that moves raw data from source systems through processing stages to deliver actionable business insights. It encompasses ingestion, transformation, validation, and delivery to analytics platforms or dashboards. Unlike basic data movement, an analytics pipeline specifically optimizes data for reporting, machine learning, and decision-making. Enterprise pipelines handle structured and unstructured data at scale while maintaining data quality and governance throughout the flow. Kanerika designs end-to-end data analytics pipelines that accelerate time-to-insight for enterprises across industries—connect with our team to discuss your requirements.

What is an example of a data pipeline?

A retail sales data pipeline is a practical example where point-of-sale transactions flow from store systems into a centralized data warehouse. The pipeline extracts daily sales records, transforms them by standardizing currency formats and calculating margins, then loads aggregated data into Power BI dashboards for regional managers. Another common example involves IoT sensor data from manufacturing equipment feeding real-time analytics for predictive maintenance. Each data pipeline example follows the same pattern: source extraction, transformation logic, and destination loading. Kanerika builds custom data pipelines tailored to your specific business workflows—schedule a discovery session today.
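The transformation step of this retail example can be sketched in Python. The exchange rates in `RATES_TO_USD`, the record fields, and the function name are hypothetical. A real pipeline would look up dated rates from a reference table and load the output into the warehouse behind the dashboards. `Decimal` is used instead of floats so that currency arithmetic does not pick up binary rounding errors.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical fixed exchange rates; a real pipeline would use dated reference rates.
RATES_TO_USD = {"USD": Decimal("1"), "EUR": Decimal("1.10"), "GBP": Decimal("1.25")}


def transform_sales(records):
    """Convert each sale to USD, compute its margin, and total revenue and margin per region."""
    totals = defaultdict(lambda: {"revenue": Decimal("0"), "margin": Decimal("0")})
    for r in records:
        rate = RATES_TO_USD[r["currency"]]  # standardize the currency
        revenue = Decimal(r["price"]) * rate
        cost = Decimal(r["cost"]) * rate
        totals[r["region"]]["revenue"] += revenue
        totals[r["region"]]["margin"] += revenue - cost
    # Assumes every region has non-zero revenue; otherwise the percentage is undefined.
    return {
        region: {**t, "margin_pct": (t["margin"] / t["revenue"] * 100).quantize(Decimal("0.1"))}
        for region, t in totals.items()
    }


if __name__ == "__main__":
    sales = [
        {"region": "West", "currency": "USD", "price": "100", "cost": "60"},
        {"region": "West", "currency": "EUR", "price": "50", "cost": "40"},
        {"region": "UK", "currency": "GBP", "price": "80", "cost": "50"},
    ]
    for region, summary in transform_sales(sales).items():
        print(region, summary)
```

For these sample records, the West region totals 155 USD of revenue and 51 USD of margin, or about 32.9%.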

What are the 5 stages of a data pipeline?

A common way to divide a data pipeline into five stages is ingestion, processing, transformation, storage, and delivery. Ingestion captures data from sources like databases, APIs, or streaming platforms. Processing handles initial cleansing and deduplication. Transformation applies business logic, aggregations, and schema mappings. Storage persists processed data in warehouses or lakehouses for durability. Delivery pushes refined datasets to analytics tools, dashboards, or downstream applications. Each stage requires monitoring and error handling to maintain pipeline reliability at enterprise scale. Kanerika implements robust data pipeline architectures covering all five stages with built-in governance. Reach out for a technical consultation.

What is the difference between ETL and a data pipeline?

ETL is a specific type of data pipeline focused on extract, transform, and load operations, while a data pipeline is the broader architecture encompassing any automated data flow. All ETL processes are data pipelines, but not all pipelines use the ETL pattern. Modern pipelines may follow ELT approaches, handle real-time streaming, or orchestrate complex multi-step workflows beyond traditional batch extraction. Data pipelines also include orchestration, monitoring, and governance layers that extend past ETL’s core functions. Kanerika helps enterprises choose between ETL, ELT, and hybrid pipeline architectures based on your data strategy—let’s assess your needs together.

Is ETL a data pipeline?

Yes, ETL is a data pipeline, specifically one that follows the extract, transform, and load methodology. ETL pipelines pull data from source systems, apply transformations like cleansing and aggregation, then load results into target destinations such as data warehouses. However, ETL represents just one pipeline pattern among many. Streaming pipelines, ELT pipelines, and event-driven architectures serve different use cases within the broader data pipeline category. The distinction matters when designing solutions for real-time versus batch processing requirements. Kanerika’s data engineers evaluate whether ETL or alternative pipeline patterns best fit your analytics goals—book a free assessment.

What is an ETL pipeline in data analytics?

An ETL pipeline in data analytics automates the extraction of raw data from operational systems, transforms it into analysis-ready formats, and loads it into warehouses or BI platforms. The transformation stage is where analytics value emerges—applying calculations, creating aggregations, and enforcing data quality rules. ETL pipelines feed dashboards, reports, and machine learning models with consistent, validated datasets. They typically run on scheduled batches, though modern implementations support micro-batching for near-real-time analytics. Proper ETL pipeline design ensures data lineage tracking and compliance with governance policies. Kanerika builds ETL pipelines optimized for platforms like Microsoft Fabric and Databricks—contact us for a demo.
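Micro-batching can be sketched as grouping an incoming stream into small batches and loading each batch as soon as it fills. In the Python sketch below, `micro_batches` groups records by count. The transformation (trimming and lower-casing strings) and the `load` callback are hypothetical placeholders for real logic.

```python
from itertools import islice


def micro_batches(records, batch_size):
    """Yield consecutive lists of at most `batch_size` records from any iterable."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    iterator = iter(records)
    while batch := list(islice(iterator, batch_size)):
        yield batch


def run_micro_batch_etl(stream, batch_size, load):
    """Transform and load each micro-batch as soon as it fills; return the rows loaded."""
    loaded = 0
    for batch in micro_batches(stream, batch_size):
        cleaned = [r.strip().lower() for r in batch if r.strip()]  # placeholder transform
        if cleaned:
            load(cleaned)
        loaded += len(cleaned)
    return loaded


if __name__ == "__main__":
    warehouse = []  # stand-in for a real sink such as a warehouse table
    incoming = [" Click", "VIEW ", "", "click", "purchase"]
    total = run_micro_batch_etl(incoming, batch_size=2, load=warehouse.append)
    print(total, warehouse)
```

Engines such as Spark Structured Streaming apply the same idea but usually trigger a micro-batch on a time interval rather than after a fixed number of records.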

What are the key components of a data pipeline?

The key components of a data pipeline include data sources, ingestion mechanisms, processing engines, transformation logic, storage systems, orchestration tools, and monitoring frameworks. Data sources range from databases and APIs to streaming platforms and file systems. Processing engines like Spark or Databricks handle computation at scale. Orchestration tools such as Airflow or Azure Data Factory manage workflow scheduling and dependencies. Monitoring components track pipeline health, data quality metrics, and SLA compliance. Together, these components create reliable, automated data flows from source to consumption. Kanerika architects data pipeline solutions using enterprise-grade components tailored to your infrastructure—schedule a consultation.
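At their core, orchestration tools run tasks in an order that respects their dependencies. The sketch below illustrates that idea with Python's standard `graphlib` module (Python 3.9+). It does not use the API of Airflow or Azure Data Factory, and the task names in `PIPELINE` are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
PIPELINE = {
    "extract_orders": set(),
    "extract_customers": set(),
    "clean_orders": {"extract_orders"},
    "join_orders_customers": {"clean_orders", "extract_customers"},
    "load_warehouse": {"join_orders_customers"},
    "refresh_dashboard": {"load_warehouse"},
}


def run_pipeline(dag, run_task):
    """Run tasks in dependency order and return the order in which they ran."""
    sorter = TopologicalSorter(dag)
    sorter.prepare()  # raises graphlib.CycleError if the dependencies contain a cycle
    order = []
    while sorter.is_active():
        # Every task whose dependencies are finished; these could run in parallel.
        for task in sorted(sorter.get_ready()):
            run_task(task)
            order.append(task)
            sorter.done(task)
    return order


if __name__ == "__main__":
    print(run_pipeline(PIPELINE, lambda task: print("running", task)))
```

Each pass of the loop collects every task whose dependencies have finished, and an orchestrator could run those tasks in parallel. A dependency cycle is rejected up front with `graphlib.CycleError`.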

What are the key stages in a data pipeline?

The key stages in a data pipeline are data collection, data preparation, data integration, data storage, and data consumption. Collection involves connecting to diverse sources and extracting raw datasets. Preparation encompasses cleansing, deduplication, and validation to ensure quality. Integration merges data from multiple sources using consistent schemas and business rules. Storage places processed data in optimized repositories like data warehouses or lakehouses. Consumption delivers refined data to analysts, applications, and AI models for actionable insights. Each stage requires proper error handling and logging for production reliability. Kanerika implements data pipeline stages with governance built in from day one—talk to our architects.

Which tool is used for data pipelines?

Popular data pipeline tools include Apache Airflow for orchestration, Apache Spark for distributed processing, and cloud-native services like Azure Data Factory, AWS Glue, and Google Cloud Dataflow. Microsoft Fabric and Databricks offer unified platforms combining ingestion, transformation, and analytics capabilities. For ETL-specific workflows, Informatica, Talend, and Alteryx remain widely deployed across enterprises. Tool selection depends on data volume, latency requirements, existing infrastructure, and team expertise. Most organizations use multiple tools integrated within their pipeline architecture for specialized functions. Kanerika implements data pipeline tools across Microsoft, Databricks, and Snowflake ecosystems—request a tool assessment for your environment.

Is Databricks a data pipeline tool?

Yes, Databricks functions as a comprehensive data pipeline tool through its unified Lakehouse platform. It provides Delta Live Tables for declarative pipeline development, Apache Spark for large-scale data processing, and built-in orchestration for workflow management. Databricks handles both batch and streaming pipelines within the same environment, simplifying architecture complexity. Its integration with cloud storage, data catalogs, and BI tools makes it a complete solution for enterprise analytics pipelines. The platform also includes data quality monitoring and lineage tracking essential for production workloads. Kanerika specializes in Databricks implementations for Lakehouse analytics—explore how we can modernize your data pipeline infrastructure.

How many types of data pipelines are there?

Data pipelines are commonly grouped into four types: batch pipelines, streaming pipelines, ETL/ELT pipelines, and event-driven pipelines. These categories overlap; an ETL pipeline, for example, can run on a batch schedule or on streaming data. Batch pipelines process data at scheduled intervals, ideal for large-volume historical analysis. Streaming pipelines handle continuous data flows for real-time analytics and monitoring. ETL and ELT pipelines focus on transformation workflows for data warehousing. Event-driven pipelines trigger processing based on specific data events or conditions. Many enterprises combine multiple pipeline types within their architecture to address different latency and processing requirements across use cases. Kanerika designs hybrid pipeline architectures that balance real-time and batch processing needs. Connect with us to explore your options.
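An event-driven pipeline runs work only when a specific event occurs, for example when a new file lands in storage. The minimal Python sketch below shows the pattern with an in-process router. The `EventRouter` class, the `file_uploaded` event name, and the file path are hypothetical. In production, a message broker or a cloud service's event triggers usually plays this role.

```python
from collections import defaultdict


class EventRouter:
    """Minimal event-driven trigger: handlers run only when a matching event arrives."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def on(self, event_type):
        """Decorator that registers a handler for one event type."""
        def register(func):
            self._handlers[event_type].append(func)
            return func
        return register

    def publish(self, event_type, payload):
        """Run every handler registered for event_type and return their results."""
        return [handler(payload) for handler in self._handlers.get(event_type, [])]


if __name__ == "__main__":
    router = EventRouter()

    @router.on("file_uploaded")
    def ingest_file(payload):
        # Hypothetical handler: a real one would read and load the file.
        return f"ingesting {payload['path']}"

    print(router.publish("file_uploaded", {"path": "landing/sales.csv"}))
    print(router.publish("schema_changed", {}))  # no handlers registered, so nothing runs
```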

Will ETL be replaced by AI?

AI will augment ETL rather than replace it entirely, automating complex tasks like schema mapping, data quality detection, and transformation code generation. Machine learning already powers intelligent data matching, anomaly identification, and pipeline optimization in modern platforms. However, ETL’s core functions of extraction, transformation, and loading remain essential for moving data between systems. AI enhances these processes by reducing manual coding effort and improving accuracy, but human oversight stays critical for business logic validation and governance compliance. The future combines AI-powered automation with foundational ETL architecture. Kanerika integrates AI capabilities into ETL workflows through intelligent automation solutions—discover how we can modernize your pipelines.

Is ETL obsolete?

ETL is not obsolete but has evolved significantly alongside modern data architectures. Traditional batch ETL now coexists with ELT patterns that leverage cloud warehouse computing power for transformations. Real-time ETL and streaming alternatives address latency requirements legacy approaches cannot meet. The core principle of extracting, transforming, and loading data remains fundamental to every analytics pipeline. What has changed is the tooling, scale, and flexibility of implementation. Cloud-native platforms like Microsoft Fabric and Databricks modernize ETL without abandoning its essential methodology. Kanerika helps enterprises modernize legacy ETL infrastructure to current best practices—reach out for a migration assessment.

What are the main 3 stages in a data pipeline?

The main three stages in a data pipeline are extraction, transformation, and loading. Extraction pulls data from source systems including databases, APIs, files, and streaming platforms. Transformation applies business rules, cleanses records, standardizes formats, and aggregates data for analytical consumption. Loading delivers processed datasets to target destinations such as data warehouses, lakehouses, or visualization tools. These three stages form the foundation of every data pipeline regardless of complexity. Additional stages like validation, monitoring, and orchestration enhance reliability but extraction, transformation, and loading remain the core workflow. Kanerika streamlines all three pipeline stages with automated DataOps practices—let’s discuss your implementation.

Which ETL tool is in demand in 2026?

Microsoft Fabric leads ETL tool demand in 2026, driven by its unified analytics platform combining data integration, warehousing, and BI capabilities. Databricks continues strong adoption for organizations prioritizing Lakehouse architectures and Apache Spark workloads. Snowflake’s native data transformation features gain traction alongside its cloud data warehouse dominance. Traditional tools like Informatica and Talend maintain enterprise presence, particularly in regulated industries requiring mature governance. Azure Data Factory and AWS Glue remain popular for cloud-native ETL within their respective ecosystems. Tool selection increasingly depends on existing platform investments and AI integration roadmaps. Kanerika delivers ETL implementations across leading platforms—contact us to evaluate which tool fits your enterprise strategy.

Do data analysts build data pipelines?

Data analysts occasionally build simple data pipelines, though data engineers typically handle complex pipeline architecture. Analysts commonly create lightweight pipelines using tools like Power Query, Alteryx, or Python scripts for specific reporting needs. Self-service analytics platforms increasingly enable analysts to assemble basic data flows without engineering support. However, production-grade data pipelines requiring scalability, fault tolerance, and governance remain data engineering responsibilities. The collaboration between analysts defining requirements and engineers building robust infrastructure produces the most effective pipeline implementations. Organizations benefit from clear role definitions while enabling appropriate self-service capabilities. Kanerika empowers both analysts and engineers with the right pipeline tools and training—explore our data analytics services.

How to prepare a data pipeline?

Preparing a data pipeline starts with defining clear business objectives and identifying required data sources. Document data schemas, volumes, and quality characteristics for each source system. Design the transformation logic that converts raw data into analytics-ready formats meeting business requirements. Select appropriate tools based on latency needs, scale, and existing infrastructure. Build incrementally, starting with a minimum viable pipeline before adding complexity. Implement comprehensive monitoring, logging, and alerting from the beginning. Test thoroughly with production-like data volumes and establish rollback procedures. Document everything for maintainability and knowledge transfer across teams. Kanerika provides end-to-end data pipeline development services with proven methodologies—start with a free architecture review.
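The advice to build in monitoring, logging, and alerting from the beginning can start as small as a retry wrapper around each step. The Python sketch below shows one hypothetical approach. `run_with_retries` retries a failing step with exponential backoff and logs every failure through the standard `logging` module. Before giving up, it logs an error as a stand-in for a real alert.

```python
import logging
import time

log = logging.getLogger("pipeline")


def run_with_retries(step, *, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Run a pipeline step, retrying with exponential backoff and logging every failure.

    `sleep` is injectable so the function can be tested without real waiting.
    """
    for attempt in range(1, attempts + 1):
        try:
            result = step()
            log.info("step succeeded on attempt %d", attempt)
            return result
        except Exception as exc:  # a real pipeline would catch narrower, retryable errors
            log.warning("attempt %d/%d failed: %s", attempt, attempts, exc)
            if attempt == attempts:
                # Stand-in for a real alert (pager, email, or chat notification).
                log.error("step failed after %d attempts; alerting on-call", attempts)
                raise
            sleep(base_delay * 2 ** (attempt - 1))  # wait 1x, 2x, 4x ... base_delay


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    attempts_seen = []

    def flaky_extract():
        # Hypothetical source that fails twice before succeeding.
        attempts_seen.append(1)
        if len(attempts_seen) < 3:
            raise ConnectionError("source unavailable")
        return ["row1", "row2"]

    print(run_with_retries(flaky_extract, base_delay=0.1))
```

Catching only errors known to be transient, such as timeouts and connection failures, is safer than catching every exception as this sketch does.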

What are the 4 pillars of data engineering?

The four pillars of data engineering are data architecture, data pipelines, data storage, and data governance. Data architecture establishes the blueprint for how data flows across the organization. Data pipelines automate the movement and transformation of data between systems. Data storage encompasses databases, data warehouses, and lakehouses optimized for different workloads. Data governance ensures data quality, security, compliance, and proper access controls throughout the lifecycle. These pillars work together to create reliable, scalable foundations for analytics and AI initiatives. Mastering all four enables organizations to derive consistent value from their data assets. Kanerika delivers expertise across all four data engineering pillars—partner with us to strengthen your data foundation.