“With data collection, ‘the sooner the better’ is always the best answer.” Marissa Mayer’s quote on data is as relevant today as it was a few years ago. Global data creation is projected to reach 181 zettabytes in 2025, a massive jump from 79 zettabytes in 2021. The debate around data lake vs. data warehouse has never been more critical.

Take Tesla, for example. The company processes vast amounts of real-time sensor data from its vehicles, which calls for a data lake to store unstructured data such as video feeds and telemetry. Its financial reports, sales metrics, and supply chain data, on the other hand, are structured and neatly stored in a data warehouse for business intelligence.

Data lakes offer the flexibility to store raw data of any type, while data warehouses provide structured, processed data optimized for quick decision-making. Understanding the strengths of each helps businesses harness the full potential of their data strategy.

Key Learnings

- A data warehouse is built for trusted analytics – It centralizes structured data from multiple business systems, providing a single source of truth for reporting and decision-making.

- Schema-on-write ensures consistency and performance – By structuring data before storage, data warehouses deliver faster queries and reliable, repeatable reports.

- Data warehouses are optimized for BI and reporting – They are best suited for dashboards, financial reporting, and operational analytics that require accuracy and governance.

- Strong governance is a core advantage – Built-in controls for data quality, security, and compliance make data warehouses ideal for regulated enterprise environments.

- Data warehouses remain critical in modern data architectures – Even with data lakes and lakehouses, warehouses continue to play a key role in enterprise analytics strategies.

What is a Data Lake?

A data lake is a centralized storage system that can house a vast amount of structured, semi-structured, and unstructured data. It’s designed to store data in its raw format, with no initial processing required.

Because data is kept in its raw form, various types of analytics, such as big data processing, real-time analytics, and machine learning, can be performed directly on the data within the lake. A typical Data Lake architecture includes layers such as the Unified Operations Tier, Processing Tier, Distillation Tier, and HDFS.

The primary goal of a Data Lake is to give data scientists and analysts a comprehensive view of an organization’s data, enabling them to derive insights and make informed decisions. It is a flexible solution that supports the exploration and analysis of large datasets from multiple sources.

What is a Data Warehouse?

A data warehouse is a central repository designed to store, organize, and analyze large amounts of structured business information. It integrates data from various sources, such as ERP, CRM, finance, and operations systems, into one trusted place for reporting and analytics. This gives organizations reliable, consistent data to use in their decision-making.

Unlike some other data platforms, a data warehouse uses schema-on-write: data is cleaned, transformed, and structured before it is stored. As a result, queries run faster and reports stay consistent. Data warehouses are designed primarily for structured data organized in tables, rows, and columns, which makes them well suited to business intelligence workloads.
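To make schema-on-write concrete, here is a minimal Python sketch. The `SALES_SCHEMA` table definition and the `conform` and `write_to_warehouse` helpers are hypothetical, and a plain Python list stands in for a warehouse table; a real warehouse enforces this through its table definitions and load pipelines. The point it illustrates is that records are cleaned and type-checked before storage, and anything that does not fit the predefined schema is rejected rather than stored.

```python
from datetime import date

# Hypothetical warehouse table definition, fixed before any data is written.
SALES_SCHEMA = {"order_id": int, "region": str, "amount": float, "order_date": date}


def conform(record: dict) -> dict:
    """Clean and type-cast one raw record to SALES_SCHEMA, or raise ValueError/TypeError."""
    missing = SALES_SCHEMA.keys() - record.keys()
    if missing:
        raise ValueError(f"missing columns: {sorted(missing)}")
    row = {}
    for column, col_type in SALES_SCHEMA.items():
        value = record[column]
        if col_type is date:
            row[column] = date.fromisoformat(str(value))
        elif col_type is str:
            row[column] = str(value).strip().upper()  # standardize text values
        else:
            row[column] = col_type(value)  # int/float casts fail on bad input
    return row  # columns outside the schema are dropped


def write_to_warehouse(raw_records, table):
    """Schema-on-write: only conforming rows are stored; the rest are returned as rejects."""
    rejected = []
    for record in raw_records:
        try:
            table.append(conform(record))
        except (ValueError, TypeError) as exc:
            rejected.append((record, str(exc)))
    return rejected


sales_table = []  # stand-in for a warehouse table
rejects = write_to_warehouse(
    [
        {"order_id": "1", "region": " emea ", "amount": "19.99", "order_date": "2025-01-15"},
        {"order_id": "2", "region": "apac", "order_date": "2025-01-16"},  # no amount
    ],
    sales_table,
)
```

The first record is cast and standardized on the way in; the second never reaches the table because it lacks a required column.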

The basic data warehouse structure consists of data sources, ETL/ELT pipelines, a centralized storage tier, and analytics or BI tools on top. Together, these elements deliver data accuracy, performance, and governance.

Enterprises often use data warehouses for reporting, dashboards, financial analysis, and operational insights. Altogether, a data warehouse offers a reliable, controlled foundation for analytics that helps companies monitor their performance, meet compliance requirements, and support data-driven decision-making.

Data Lake vs Data Warehouse: Process and Strategy

Data Lakes are flexible and suited for raw, expansive data exploration, while Data Warehouses are structured and optimized for specific, routine business intelligence tasks.

Both play crucial roles in a comprehensive data strategy, often complementing each other within an organization’s data ecosystem.

Data Lake Process and Strategy

Data lakes are ideal for data scientists and analysts who need to perform in-depth analysis, build predictive models, and understand large datasets in their raw form.

- The process begins with ingesting raw data from various sources. These include transactional databases, social media, IoT devices, logs, and more.

- Data ingestion tools such as Apache Kafka, Apache NiFi or custom scripts are employed to receive and transfer data to the data lake.

- The raw data is stored in its native format in distributed storage, e.g., Hadoop Distributed File System (HDFS), Amazon S3, or Azure Data Lake Storage (ADLS). There is no upfront schema, so the lake can flexibly store a wide variety of data.

- Data analysts, data scientists, and other stakeholders then use analytics tools and frameworks, such as SQL engines, machine learning libraries, and visualization tools, to explore and examine the data in the lake.
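The sketch below shows the schema-on-read idea behind this process, under simplifying assumptions: an in-memory list (`raw_zone`) stands in for object storage such as S3 or ADLS, and `ingest` and `read_with_schema` are hypothetical helpers, not part of any real tool. Records land exactly as received, and each query decides which fields it needs and how to type them.

```python
import json

# Stand-in for the lake's raw zone (e.g. files in object storage): payloads land verbatim.
raw_zone = []


def ingest(raw_line: str) -> None:
    """Store the payload exactly as received: no parsing, validation, or schema."""
    raw_zone.append(raw_line)


def read_with_schema(schema: dict) -> list:
    """Schema-on-read: parse raw payloads and project them onto `schema` at query time.

    `schema` maps output column -> (source key, type). Missing or uncastable
    fields become None instead of failing the whole query.
    """
    rows = []
    for line in raw_zone:
        try:
            doc = json.loads(line)
        except json.JSONDecodeError:
            continue  # non-JSON payloads stay in the lake; this query just skips them
        row = {}
        for column, (key, cast) in schema.items():
            try:
                row[column] = cast(doc[key])
            except (KeyError, TypeError, ValueError):
                row[column] = None
        rows.append(row)
    return rows


# Heterogeneous data lands without any upfront modeling.
ingest('{"device": "t-101", "temp_c": "21.5", "ts": "2025-03-01T10:00"}')
ingest('{"device": "t-102", "temp_c": 19.0, "humidity": 40}')
ingest("raw log line, not JSON")

# Two different questions apply two different schemas to the same raw data.
temps = read_with_schema({"device": ("device", str), "temp": ("temp_c", float)})
humidity = read_with_schema({"device": ("device", str), "humidity": ("humidity", int)})
```

Nothing is lost at ingestion time; the cost of interpreting the data is paid by each reader instead.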

Data Warehouse Process and Strategy

Data Warehouses are best suited for business professionals and decision makers. They require operational data in a structured system for analytics and easy querying.

- Data is extracted from multiple operational systems, such as CRM, ERP, and financial systems, using ETL (Extract, Transform, Load) processes. This involves identifying relevant data sources, extracting data using predefined queries or APIs, and moving it to the data warehouse.

- Extracted data undergoes transformation and cleansing processes to ensure consistency, integrity, and quality. Data is standardized, normalized, and aggregated to make it suitable for analysis and reporting.

- Transformed data is loaded into the data warehouse, typically using batch processing techniques. The data is organized into dimensional models, which optimize query performance and facilitate analytical processing.

- Business users, analysts, and decision-makers query the data warehouse using BI (Business Intelligence) tools, SQL queries, or reporting interfaces. They can generate reports, dashboards, and visualizations to gain better insights.
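A compressed version of these steps, using Python's built-in `sqlite3` module as a stand-in for a warehouse, might look like this. The source records, table names, and cleansing rules are hypothetical; production pipelines would use an ETL tool and a real warehouse engine, but the extract, transform, load, and query sequence is the same.

```python
import sqlite3

# Extract: hypothetical rows pulled from a CRM and an ERP system.
crm_customers = [
    {"id": "C1", "name": "Acme Corp ", "country": "us"},
    {"id": "C2", "name": "Globex", "country": "DE"},
    {"id": "C1", "name": "Acme Corp", "country": "US"},  # duplicate from a second sync
]
erp_orders = [
    {"order": 1001, "customer": "C1", "amount": "250.00"},
    {"order": 1002, "customer": "C2", "amount": "99.50"},
    {"order": 1003, "customer": "C1", "amount": "50.00"},
]


def transform_customers(rows):
    """Cleanse and standardize: trim names, upper-case country codes, keep first row per ID."""
    unique = {}
    for r in rows:
        unique.setdefault(r["id"], (r["id"], r["name"].strip(), r["country"].upper()))
    return list(unique.values())


def transform_orders(rows):
    """Cast text amounts to numbers so they can be aggregated."""
    return [(r["order"], r["customer"], float(r["amount"])) for r in rows]


def load(conn, customers, orders):
    """Load into a minimal star schema: one dimension table and one fact table."""
    conn.executescript("""
        CREATE TABLE dim_customer (customer_id TEXT PRIMARY KEY, name TEXT, country TEXT);
        CREATE TABLE fact_sales (
            order_id INTEGER PRIMARY KEY,
            customer_id TEXT REFERENCES dim_customer (customer_id),
            amount REAL
        );
    """)
    conn.executemany("INSERT INTO dim_customer VALUES (?, ?, ?)", customers)
    conn.executemany("INSERT INTO fact_sales VALUES (?, ?, ?)", orders)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(conn, transform_customers(crm_customers), transform_orders(erp_orders))

# Query: the kind of aggregate a BI dashboard would run.
report = conn.execute("""
    SELECT c.country, SUM(f.amount) AS revenue
    FROM fact_sales AS f JOIN dim_customer AS c USING (customer_id)
    GROUP BY c.country
    ORDER BY revenue DESC
""").fetchall()
```

Because the cleansing happens before loading, the final query can rely on consistent country codes and numeric amounts without any further checks.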

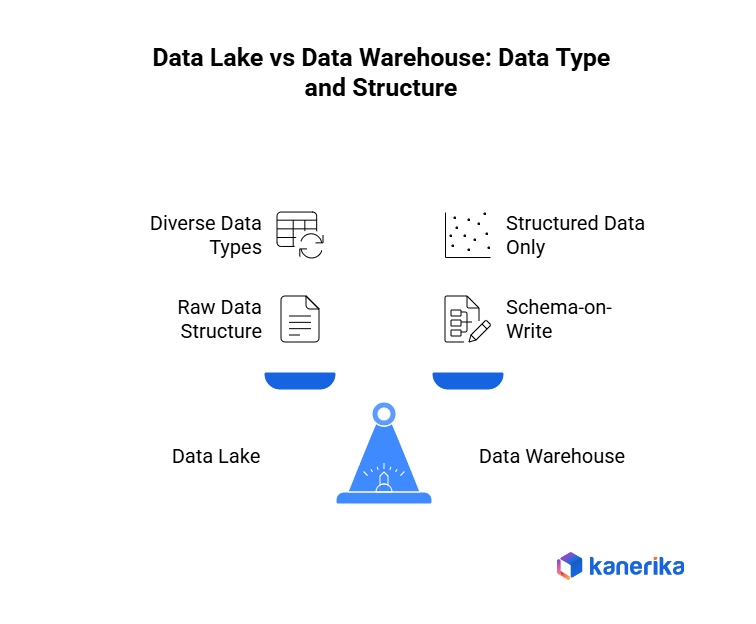

Data Lake vs Data Warehouse: Data Type and Structure Used

Data lakes are good at managing diverse and unstructured data for exploration and advanced analytics. Data warehouses, by contrast, are best suited to storing and analyzing structured information to support operational reporting and business intelligence.

Data Lake Data Type and Structure Used

Data Type: Data Lakes are designed to support a wide range of data types, including structured, semi-structured, and unstructured data. This means they can store traditional database records, JSON files, and multimedia files alike.

Data Structure: Within a Data Lake, data is often stored in its raw form without a predefined schema. The lake uses a flat structure in which every piece of data is assigned a unique identifier and tagged with a set of extended metadata. When a business question arises, the relevant data can be retrieved and transformed to suit the analysis.
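A toy model of that flat, metadata-tagged layout follows. `FlatObjectStore` is a hypothetical in-memory class written for illustration, not the API of any real object store; it only shows the idea of unique keys with no folder hierarchy, plus lookup by metadata tags.

```python
import uuid


class FlatObjectStore:
    """Toy flat namespace: every object gets a unique ID plus free-form metadata tags."""

    def __init__(self):
        self._objects = {}  # object_id -> (payload bytes, metadata dict)

    def put(self, payload: bytes, **tags: str) -> str:
        object_id = str(uuid.uuid4())  # no folders or hierarchy, just a unique key
        self._objects[object_id] = (payload, dict(tags))
        return object_id

    def get(self, object_id: str) -> bytes:
        return self._objects[object_id][0]

    def find(self, **criteria: str) -> list:
        """Return IDs (in insertion order) of objects whose tags match all criteria."""
        return [
            oid
            for oid, (_, meta) in self._objects.items()
            if all(meta.get(key) == value for key, value in criteria.items())
        ]


store = FlatObjectStore()
clip_id = store.put(b"\x00\x01\x02", source="vehicle-cam", format="mp4", day="2025-03-01")
log_id = store.put(b'{"speed": 88}', source="telemetry", format="json", day="2025-03-01")

same_day = store.find(day="2025-03-01")    # both objects
telemetry = store.find(source="telemetry")  # only the JSON log
```

A video clip and a JSON log sit side by side; the tags, not a table schema, are what make them findable later.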

Data Warehouse Data Type and Structure Used

Data Type: Data Warehouses mainly contain well-structured, processed, and formatted data. This data usually comes from transactional systems and other relational databases.

Data Structure: Data in a Data Warehouse is highly structured and organized on a schema-on-write basis. This means the schema (data model) is defined before data is loaded into the warehouse. Data is organized into tables and columns and is usually aggregated, summarized, and indexed to support efficient querying and reporting.

Data Lake vs Data Warehouse: Cost, Security and Accessibility

Although data lakes and data warehouses are both useful for managing data, they address different requirements, with different implications for cost, security, and accessibility. The major contrasts are the following:

Cost

- Data Lake: Storing data in a data lake is normally cheaper than in a data warehouse, particularly when large volumes of raw and unstructured data must be stored. Because data is kept in its raw format, expensive upfront pre-processing and schema definition are avoided. Data lakes can also manage large amounts of unstructured data at a lower cost than a data warehouse.

- Data Warehouse: Typically involves higher upfront costs for infrastructure setup, licensing, and maintenance, particularly for on-premises deployments. Storing data in an organized, structured form usually requires more processing power, which can increase compute costs. Although a data warehouse can cost more than a data lake for storage, it may still be the cheaper option for analyzing structured data.

Security

- Data Lake: Companies should adopt solid safeguards against misuse of, or unauthorized access to, sensitive data. Data lakes typically include granular access controls and security mechanisms to protect data at rest and in transit.

- Data Warehouse: Generally offers stronger security. Access controls and user permissions are easier to implement because the schema is structured and predefined, and security is usually built in from the start. Built-in features such as role-based access control (RBAC) and row-level security (RLS) allow fine-grained control over data access.
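The logic behind RBAC and RLS can be sketched in a few lines of Python. The roles, the `ROLE_TABLES` mapping, and the `query` helper are hypothetical; real warehouses express the same ideas declaratively through SQL grants and engine-specific row-level security policies. RBAC decides whether a role may read a table at all, and RLS then filters which rows that user can see.

```python
# Hypothetical role model: which tables each role may read (RBAC).
ROLE_TABLES = {"analyst": {"sales"}, "regional_manager": {"sales"}, "intern": set()}


def row_filter(user: dict):
    """RLS: return a predicate deciding which rows this user may see."""
    if user["role"] == "regional_manager":
        return lambda row: row["region"] == user["region"]
    return lambda row: True  # other permitted roles see every row


def query(user: dict, table_name: str, tables: dict) -> list:
    # RBAC check first: is this role allowed to read the table at all?
    if table_name not in ROLE_TABLES.get(user["role"], set()):
        raise PermissionError(f"role {user['role']!r} may not read {table_name!r}")
    visible = row_filter(user)
    return [row for row in tables[table_name] if visible(row)]


tables = {"sales": [{"region": "EMEA", "amount": 100}, {"region": "APAC", "amount": 70}]}
analyst = {"role": "analyst"}
manager = {"role": "regional_manager", "region": "EMEA"}
intern = {"role": "intern"}

all_rows = query(analyst, "sales", tables)   # both rows
emea_rows = query(manager, "sales", tables)  # only the EMEA row
```

Keeping the two checks separate mirrors how warehouses layer them: table-level grants first, then row policies on top.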

Accessibility

- Data Lake: It is open to a broader group of users, especially those comfortable working with raw data, such as data scientists and analysts. These users can explore the data without being restricted to predefined formats. However, using and interpreting raw data typically requires technical skills.

- Data Warehouse: It is designed to be easier to access for business users and analysts who may not have deep technical expertise. Its structured format and predefined queries make it faster to retrieve specific data points for reporting and analysis. However, this upfront organization can make it harder to discover unexpected relationships in the data.

Use Cases: Data Lake vs Data Warehouse

Data Lakes offer a more flexible setup that is better suited to storing and analyzing large amounts of varied data in its original form, which is useful for machine learning and real-time analytics. Conversely, Data Warehouses are more structured and focused on standard, recurring, and complex queries, as well as regular operational reporting.

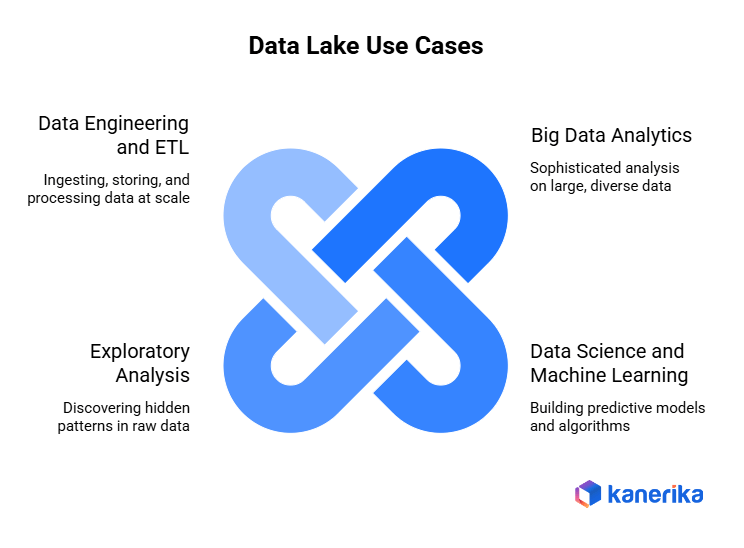

Data Lake Use Cases

- Big Data Analytics: Data lakes are suited to sophisticated analytics on huge quantities of varied and unstructured information. Examples include customer behavior analysis, sentiment analysis of social media data, and pattern detection in IoT sensor data.

- Data Science and Machine Learning: Data lakes provide a rich source of raw data for data scientists and machine learning engineers to build predictive models, perform clustering analysis, and conduct feature engineering tasks. Use cases include predictive maintenance, fraud detection, and recommendation systems.

- Exploratory Analysis: Data lakes allow data analysts and researchers to explore and learn from raw data without established schemas or models. Use cases include exploratory data analysis, data visualization, and hypothesis testing, in which an investigator tries to discover hidden patterns and correlations in the data.

- Data Engineering and ETL: Lakes serve as a foundational component for data engineering workflows, allowing organizations to ingest, store, and process data at scale. Use cases include data ingestion from various sources, data transformation pipelines, and real-time stream processing.

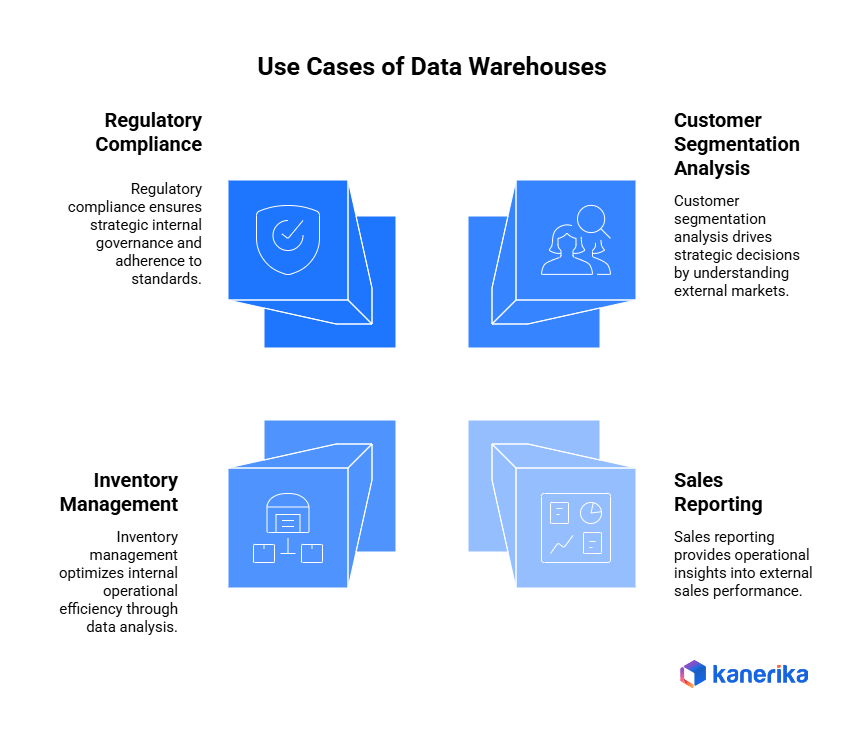

Data Warehouse Use Cases

- Business Intelligence (BI) and Reporting: Warehouses are well suited to creating standardized reports, dashboards, and KPI measures for business users and executives. Examples include sales reporting, financial analysis, and operational performance monitoring.

- Operational Analytics: Data warehouses support ad-hoc and interactive querying of historical data to find trends in operational metrics. Examples include inventory management, supply chain optimization, and workforce planning.

- Regulatory Compliance and Governance: Warehouses offer centralized control and auditing functions that help ensure regulatory requirements, data governance policies, and industry standards are met. Examples include GDPR compliance, HIPAA compliance, and internal auditing.

- Data-driven Decision Making: Data warehouses allow stakeholders to make informed decisions using accurate, consistent data. Use cases include customer segmentation, market segmentation analysis, and churn prediction, which help accelerate business strategy and decision-making.

Data Lake vs Data Warehouse: Comparison Summary

This table provides a concise comparison of key aspects of Data Lakes and Data Warehouses, highlighting their differences and their suitability for various applications.

| Aspect | Data Lake | Data Warehouse |
| --- | --- | --- |
| Definition | A storage repository for raw data in its native format. | A system for reporting and analysis, storing processed data. |
| Data Types | Structured, semi-structured, unstructured. | Primarily structured data. |
| Data Structure | No predefined schema (schema-on-read). | Predefined schema (schema-on-write). |
| Cost | More cost-effective for large volumes of diverse data. | Higher due to sophisticated hardware/software requirements. |
| Security | Can be challenging due to the variety/volume of data. | Generally easier due to structured nature. |
| Accessibility | Flexible, ideal for data scientists/analysts. | User-friendly for business users with standard reporting tools. |
| Primary Use Cases | Big data analytics, machine learning, data discovery. | Structured business reporting, business intelligence. |
| Data Processing | Suitable for real-time data processing and analytics. | Optimized for batch processing and complex queries. |
| Storage Approach | Cost-effective, scalable for large data volumes. | Structured, requires data transformation and cleaning. |
| User Skill Level | Requires more advanced analytical skills. | More accessible for non-technical users. |
| Maintenance | Requires robust management to avoid becoming a data swamp. | Easier to maintain due to structured nature. |

Data Lake vs Data Warehouse: Which One is Right for You?

Choosing between a Data Lake and a Data Warehouse depends heavily on your business needs and intended applications.

Here’s a breakdown to assist you:

Data Lake: It excels at handling vast quantities of diverse data types, including raw and unstructured data. Its scalability and support for advanced analytics and machine learning make it an attractive option. Considerations:

- Opt for a Data Lake if your business demands flexibility in data processing and exploration.

- It’s suitable for scenarios where storing data in its native format is essential for potential future use cases.

- Choose a Data Lake for analytics requiring intricate, non-standardized data processing.

Data Warehouse: It specializes in managing structured, processed data, supporting routine and complex queries with stable, fast data retrieval and analysis. Considerations:

- If your business heavily relies on consistent, structured data for reporting and business intelligence, a Data Warehouse is ideal.

- Data Warehouses offer quick, dependable data access for operational insights and decision-making processes.

- Opt for a Data Warehouse if your data strategy prioritizes structured data analysis and historical reporting.

Case Study 1: Transforming Enterprise Data with Automated Migration from Informatica to Talend

Client Challenge

The client used a large Informatica setup that became costly and slow to manage. Licensing fees kept rising. Workflows were complex, and updates needed heavy manual work. Modernization stalled because migrations took too long.

Kanerika’s Solution

Kanerika used FLIP to automate the conversion of Informatica mappings and logic into Talend. FIRE extracted repository metadata so the team could generate Talend jobs with minimal manual rework. Outputs were validated through controlled test runs and prepared for a cloud-ready environment.

Impact Delivered

• 70% reduction in manual migration effort

• 60% faster time to delivery

• 45% lower migration cost

• Better stability through accurate logic preservation and smooth cutover

Case Study 2: Migrating Data Pipelines from SSIS to Microsoft Fabric

Client Challenge

The client’s SSIS-based pipelines were slow, expensive, and hard to maintain. Reports refreshed slowly, and the platform needed too much manual intervention.

Kanerika’s Solution

Kanerika rebuilt the client’s pipelines using PySpark and Power Query inside Microsoft Fabric. SSIS logic was mapped to the right Fabric components and transformed into Dataflows and PySpark notebooks. A unified Lakehouse structure improved performance and simplified monitoring.

Impact Delivered

• 30% faster data processing

• 40% reduction in operational cost

• 25% less manual maintenance

• Improved scalability through a modern Fabric-based architecture

Kanerika: Empowering Seamless Data Warehouse to Data Lake Migration

At Kanerika, we help enterprises modernize their data landscape by choosing the right setup for their operational needs, data complexity, and long-term analytics goals. Traditional data warehouses are effective for managing structured, historical data used in reporting and business intelligence, but they often fall short in today’s dynamic, real-time environments. This is where data lakes and data fabric setups come into play, offering the flexibility to handle diverse, unstructured, and streaming data sources efficiently.

As a Microsoft Solutions Partner for Data & AI and an early user of Microsoft Fabric, Kanerika delivers unified, future-ready data platforms. Furthermore, we focus on designing intelligent setups that combine the strengths of data warehouses and data lakes. For clients focused on structured analytics and reporting, we establish robust warehouse models. For those managing distributed, real-time, or unstructured data, we create scalable data lake and fabric layers that ensure easy access, automated governance, and AI readiness.

All our implementations comply with global standards, including ISO 27001, ISO 27701, SOC 2, and GDPR, ensuring security and compliance throughout the migration process. Moreover, with our deep expertise in both traditional and modern systems, Kanerika helps organizations transition from fragmented data silos to unified, intelligent platforms, unlocking real-time insights and accelerating digital transformation without compromise.

FAQs

What is the main difference between data lakes and data warehouses?

Data lakes store raw, unstructured data in its native format, while data warehouses store processed, structured data optimized for analytics. Data lakes use schema-on-read, applying structure only when data is accessed, whereas data warehouses enforce schema-on-write during ingestion. This fundamental difference means data lakes handle diverse data types including logs, images, and IoT streams, while data warehouses excel at structured business intelligence queries. Cost structures also differ significantly, with data lakes typically offering lower storage costs for massive volumes. Kanerika helps enterprises architect the right data storage strategy for their specific analytics needs—connect with our team for expert guidance.

Can a data lake replace a data warehouse?

A data lake cannot fully replace a data warehouse for most enterprises. While data lakes excel at storing massive volumes of raw, diverse data, they lack the performance optimization and governance features data warehouses provide for structured analytics. Business intelligence tools and SQL-based reporting perform significantly better on warehouse architectures with pre-defined schemas. Many organizations adopt both, using data lakes for exploration and machine learning while maintaining warehouses for production dashboards and compliance reporting. Kanerika designs hybrid data architectures that leverage each platform’s strengths—schedule a consultation to optimize your data infrastructure.

What is a data lake used for?

A data lake stores vast amounts of raw data in native formats for advanced analytics, machine learning, and data science workloads. Organizations use data lakes to consolidate structured, semi-structured, and unstructured data from multiple sources including IoT sensors, social media, clickstreams, and enterprise applications. Data scientists access this repository for exploratory analysis, training ML models, and discovering patterns impossible to find in siloed systems. Data lakes also serve as staging areas for data transformations before loading into downstream systems. Kanerika builds scalable data lake solutions on platforms like Databricks and Azure—reach out to modernize your analytics foundation.

What are the disadvantages of a data lake?

Data lakes present several challenges including data swamp risk, where poor governance transforms repositories into unusable chaos. Without proper metadata management and cataloging, finding relevant data becomes nearly impossible. Query performance suffers compared to optimized data warehouses, especially for complex analytical workloads. Security and compliance implementation requires additional tooling since data lakes lack built-in access controls found in enterprise warehouses. Data quality issues compound over time without validation rules enforced at ingestion. Skilled personnel for managing distributed storage systems remain scarce and expensive. Kanerika implements robust data lake governance frameworks that prevent these pitfalls—talk to our data platform experts today.
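One common guard against the data swamp problem is to refuse to register a dataset without minimal catalog metadata. The sketch below assumes a hypothetical in-memory catalog and a made-up set of required fields (`owner`, `description`, `source_system`); real deployments would use a dedicated catalog service, but the rule it enforces is the same.

```python
# Hypothetical governance rule: a dataset is only registered with these catalog fields.
REQUIRED_FIELDS = {"owner", "description", "source_system"}
catalog = {}


def register_dataset(path: str, **metadata: str) -> None:
    """Refuse to catalog a dataset that nobody could later find or trust."""
    missing = REQUIRED_FIELDS - set(metadata)
    if missing:
        raise ValueError(f"{path}: missing catalog fields {sorted(missing)}")
    catalog[path] = metadata


def search(term: str) -> list:
    """Find dataset paths whose description mentions `term` (case-insensitive)."""
    term = term.lower()
    return sorted(path for path, meta in catalog.items() if term in meta["description"].lower())


register_dataset(
    "raw/iot/sensors/2025-03-01/",
    owner="plant-ops",
    description="Temperature and vibration readings from line 3 sensors",
    source_system="scada",
)
try:
    register_dataset("raw/misc/dump.csv", owner="unknown")
except ValueError as err:
    print(err)  # the undocumented file is rejected instead of silently piling up
```

Datasets that pass the check stay discoverable through `search`, which is exactly what an uncataloged swamp loses.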

Is Databricks a data warehouse or lakehouse?

Databricks is a lakehouse platform that combines data lake flexibility with data warehouse performance and governance. The Databricks Lakehouse architecture uses Delta Lake technology to bring ACID transactions, schema enforcement, and time travel capabilities to data stored in open formats. This unified approach eliminates the need for separate lake and warehouse infrastructure while supporting both SQL analytics and machine learning workloads. Databricks handles structured and unstructured data equally well, making it ideal for organizations consolidating fragmented data environments. Kanerika delivers enterprise Databricks implementations with optimized lakehouse architectures—contact us to accelerate your Databricks journey.

Is Snowflake a data lake or data warehouse?

Snowflake is primarily a cloud data warehouse with data lake capabilities through its external tables and data sharing features. Built for structured and semi-structured analytics, Snowflake separates compute from storage, enabling elastic scaling and concurrent workload handling. While Snowflake excels at SQL-based analytics on structured data, it can query external data lake storage using its external tables functionality. The platform supports JSON, Parquet, and Avro formats natively, bridging traditional warehouse and modern lake requirements. For pure unstructured data workloads, dedicated data lakes remain preferable. Kanerika delivers comprehensive Snowflake implementations tailored to your analytics requirements—request a free architecture assessment.

Can you use a data lake and data warehouse together?

Using a data lake and data warehouse together represents the optimal architecture for most enterprises. In this hybrid approach, the data lake serves as a centralized repository for raw data ingestion and storage, while the data warehouse handles curated, business-ready datasets for reporting and dashboards. Data flows from lake to warehouse through ETL or ELT pipelines that cleanse, transform, and aggregate information. This combination supports both exploratory data science on raw data and reliable business intelligence on governed datasets. Kanerika architects integrated lake-warehouse solutions that maximize each platform’s strengths—connect with us to design your unified data ecosystem.

What is the difference between a data lake and a data lakehouse?

A data lakehouse adds data warehouse capabilities directly onto data lake infrastructure, eliminating the need for separate systems. Traditional data lakes store raw data without built-in governance, ACID transactions, or query optimization. A lakehouse architecture introduces these enterprise features while maintaining open file formats and low-cost storage benefits of lakes. Technologies like Delta Lake, Apache Iceberg, and Apache Hudi enable transaction support, schema evolution, and time travel on lake storage. Lakehouses deliver both exploratory analytics flexibility and production-grade reliability in one platform. Kanerika implements lakehouse architectures on Databricks and Microsoft Fabric—explore how we can modernize your data platform.
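To illustrate what time travel and schema enforcement mean, here is a toy `VersionedTable` that records every commit in a log and rebuilds any earlier version by replaying it. This is a conceptual sketch only: Delta Lake, Apache Iceberg, and Apache Hudi implement these features with transaction logs and metadata over files in object storage, with far more machinery (concurrency control, compaction, and so on).

```python
from __future__ import annotations


class VersionedTable:
    """Toy table that keeps every commit in a log, so older versions stay readable."""

    def __init__(self, columns: set[str]):
        self.columns = set(columns)
        self._log: list[tuple[str, object]] = []  # ("add", rows) or ("delete", predicate)

    def append(self, rows: list[dict]) -> int:
        # Schema enforcement: reject the whole commit if any row has the wrong columns.
        for row in rows:
            if set(row) != self.columns:
                raise ValueError(f"row {row} does not match columns {sorted(self.columns)}")
        self._log.append(("add", [dict(r) for r in rows]))
        return len(self._log) - 1  # version number of this commit

    def delete_where(self, predicate) -> int:
        self._log.append(("delete", predicate))
        return len(self._log) - 1

    def read(self, version: int | None = None) -> list[dict]:
        """Time travel: replay the log up to `version` (inclusive); None means latest."""
        last = len(self._log) - 1 if version is None else version
        rows: list[dict] = []
        for action, payload in self._log[: last + 1]:
            if action == "add":
                rows.extend(payload)
            else:
                rows = [r for r in rows if not payload(r)]
        return rows


orders = VersionedTable({"id", "status"})
v0 = orders.append([{"id": 1, "status": "new"}, {"id": 2, "status": "new"}])
v1 = orders.delete_where(lambda r: r["id"] == 1)

current = orders.read()     # only order 2
snapshot = orders.read(v0)  # both orders, as of the first commit
```

Because old commits are never overwritten, reading "as of" a version is just replaying a shorter prefix of the log.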

Can a lakehouse replace a data warehouse?

A lakehouse can replace a data warehouse for many use cases, though some scenarios still benefit from dedicated warehouse infrastructure. Lakehouse platforms now deliver comparable SQL query performance, ACID compliance, and governance capabilities that previously required traditional warehouses. Organizations running Databricks or Microsoft Fabric lakehouses successfully consolidate analytics workloads without separate warehouse systems. However, enterprises with heavy reliance on legacy BI tools, extensive stored procedures, or strict regulatory requirements may find traditional warehouses easier to maintain. Migration complexity and team expertise also influence this decision significantly. Kanerika evaluates lakehouse readiness and builds migration roadmaps for enterprises—schedule your assessment today.

Why use a data lake instead of a data warehouse?

Choose a data lake when handling diverse data types, massive volumes, or advanced analytics requirements that warehouses struggle to address. Data lakes accommodate unstructured data like documents, images, audio, and video alongside traditional structured records. Storage costs run significantly lower than warehouses, especially for petabyte-scale repositories using object storage. Data lakes support machine learning workloads with native access to raw training data without preprocessing constraints. Schema flexibility enables faster data onboarding since you define structure at query time rather than ingestion. Kanerika helps enterprises determine when data lakes deliver superior value over warehouses—reach out for a personalized architecture review.

What is the difference between ETL and ELT?

ETL extracts data, transforms it on a separate processing server, then loads it into the target system, while ELT loads raw data first and transforms it within the destination platform. ETL suits traditional data warehouses where transformation before loading ensures data quality and schema compliance. ELT leverages modern cloud platform processing power, ideal for data lakes and lakehouses that handle raw data ingestion efficiently. ELT preserves original data, enabling reprocessing when business requirements change without re-extracting from sources. Cloud scalability makes ELT increasingly popular for big data workloads. Kanerika designs ETL and ELT pipelines optimized for your target platform—discuss your data integration needs with our specialists.
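The difference is easiest to see side by side. This sketch uses an in-memory SQLite database as the target system and hypothetical sales records; `etl` cleans data in Python before loading, while `elt` loads the raw rows first and transforms them with SQL inside the database, which leaves the raw table available for reprocessing later.

```python
import sqlite3

# Hypothetical raw sales events: (day, amount as text, possibly messy).
raw_events = [("2025-01-15", "  49.90 "), ("2025-01-15", "n/a"), ("2025-01-16", "10")]


def etl(conn):
    """ETL: transform outside the target (here in Python), then load only clean rows."""
    clean = []
    for day, amount in raw_events:
        try:
            clean.append((day, float(amount)))  # float() tolerates surrounding spaces
        except ValueError:
            pass  # bad values are dropped before they ever reach the target
    conn.execute("CREATE TABLE sales_etl (day TEXT, amount REAL)")
    conn.executemany("INSERT INTO sales_etl VALUES (?, ?)", clean)


def elt(conn):
    """ELT: load the raw rows untouched, then transform inside the target with SQL."""
    conn.execute("CREATE TABLE sales_raw (day TEXT, amount TEXT)")
    conn.executemany("INSERT INTO sales_raw VALUES (?, ?)", raw_events)
    # Deliberately simple validity check for this sketch: keep values starting with a digit.
    conn.execute("""
        CREATE TABLE sales_elt AS
        SELECT day, CAST(TRIM(amount) AS REAL) AS amount
        FROM sales_raw
        WHERE TRIM(amount) GLOB '[0-9]*'
    """)


conn = sqlite3.connect(":memory:")
etl(conn)
elt(conn)

etl_rows = conn.execute("SELECT day, amount FROM sales_etl ORDER BY day").fetchall()
elt_rows = conn.execute("SELECT day, amount FROM sales_elt ORDER BY day").fetchall()
raw_count = conn.execute("SELECT COUNT(*) FROM sales_raw").fetchone()[0]  # raw data is kept
```

Both paths end with the same clean table, but only ELT still holds all three original rows, so a changed business rule can be reapplied without re-extracting from the source.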

Is a data lake ETL or ELT?

Data lakes predominantly use ELT processing, loading raw data first and transforming it later when needed. This approach aligns with data lake philosophy of storing data in native formats without upfront schema requirements. ELT leverages the distributed processing power of lake platforms like Spark to handle transformations at scale within the storage environment. However, some organizations implement hybrid approaches with light ETL validation before lake ingestion to prevent data quality degradation. The transformation step occurs on-demand when analysts query data or when pipelines prepare datasets for specific use cases. Kanerika builds efficient ELT pipelines for enterprise data lakes—let us optimize your data processing architecture.

What is a data lake in ETL?

Within ETL architecture, a data lake serves as either a landing zone for extracted raw data or a staging area between extraction and transformation phases. Modern data pipelines extract from diverse sources and load directly into data lakes, deferring transformation until consumption. This positions the lake as the central repository in an ELT pattern rather than traditional ETL. Some architectures use lakes for intermediate storage, running transformations that produce warehouse-ready datasets. The lake retains original data, enabling new transformations without re-extraction when business needs evolve. This flexibility makes data lakes valuable components in modern data integration workflows. Kanerika architects data lake solutions that streamline your entire data pipeline—contact us for implementation support.

Do you need a data warehouse if you have a data lake?

Having a data lake does not eliminate the need for a data warehouse in most enterprise scenarios. Data warehouses deliver optimized performance for business intelligence queries, governed metrics, and production reporting that data lakes cannot match natively. However, lakehouse architectures increasingly bridge this gap by adding warehouse capabilities to lake storage. Organizations with mature BI requirements, regulatory compliance needs, or complex dimensional models typically maintain dedicated warehouses fed from their data lakes. Startups and analytics-forward companies may successfully operate with lakehouse-only architectures. Kanerika assesses your specific requirements to recommend optimal architecture combinations—start with a complimentary data strategy consultation.

What are examples of data warehouses?

Leading data warehouse examples include Snowflake, Amazon Redshift, Google BigQuery, Azure Synapse Analytics, and Teradata. Snowflake pioneered cloud-native architecture with separated compute and storage. Redshift provides tight AWS ecosystem integration for Amazon-centric organizations. BigQuery offers serverless analytics with automatic scaling and machine learning features built in. Azure Synapse combines warehouse capabilities with Spark-based big data processing. Traditional on-premises options like Teradata, Oracle Exadata, and IBM Db2 Warehouse remain common in large enterprises with existing investments. Each platform presents distinct pricing models, performance characteristics, and integration capabilities. Kanerika implements and migrates enterprise data warehouses across all major platforms—discuss your warehouse requirements with our experts.

What are the 4 components of a data warehouse?

The four core data warehouse components are the data source layer, staging area, storage layer, and presentation layer. The data source layer connects to operational systems, applications, and external feeds supplying raw information. The staging area temporarily holds extracted data during cleansing and transformation before warehouse loading. The storage layer contains the structured repository with dimensional models, fact tables, and optimized indexes for analytical queries. The presentation layer provides user access through BI tools, dashboards, and SQL interfaces. Metadata management and ETL tooling support these components throughout the data lifecycle. Kanerika designs comprehensive data warehouse architectures addressing all components—reach out to modernize your analytics infrastructure.

Is Databricks a data lake?

Databricks is not a data lake itself but a unified analytics platform that operates on data lake storage. Databricks processes data stored in cloud object storage like AWS S3, Azure Data Lake Storage, or Google Cloud Storage using Apache Spark. The platform adds lakehouse capabilities through Delta Lake, enabling ACID transactions and governance features on top of standard data lake infrastructure. Organizations deploy Databricks to build, manage, and analyze data within their data lakes rather than as the storage layer itself. This distinction matters when architecting cloud data solutions. Kanerika implements Databricks solutions integrated with enterprise data lake infrastructure—connect with our team to plan your lakehouse deployment.

What is ETL in a data warehouse?

ETL in a data warehouse refers to the Extract, Transform, Load process that moves and prepares data from source systems into the warehouse. Extraction pulls data from operational databases, APIs, files, and applications. Transformation applies business rules, cleanses records, standardizes formats, and structures data into dimensional models optimized for analytics. Loading inserts processed data into warehouse tables with appropriate partitioning and indexing. ETL pipelines run on schedules or triggers, keeping warehouse data current while maintaining quality standards. Tools like Informatica, Talend, and Azure Data Factory automate these workflows at enterprise scale. Kanerika builds robust ETL pipelines that ensure reliable data warehouse delivery—talk to our data integration specialists today.