Companies like Uber handle millions of trips and user interactions every day, relying heavily on optimized data pipelines to provide real-time ride data and recommendations. A slow or inefficient data pipeline could disrupt their entire service, leading to customer dissatisfaction and lost revenue. While many enterprises struggle with data bottlenecks, slow insights, and escalating costs, companies that master data pipeline optimization gain a decisive competitive edge.

What separates organizations drowning in data from those turning it into strategic value? The answer lies not in more tools but in smarter pipelines. As data volumes grow exponentially, the efficiency of your data infrastructure becomes the hidden factor determining whether your analytics deliver timely insights or outdated noise.

This guide unpacks the essential practices reshaping data pipeline optimization in 2025, revealing how modern enterprises can transform data infrastructure from a cost center into their most valuable competitive advantage.

What is Data Pipeline Optimization?

Data pipeline optimization is the process of refining data pipelines so that they run efficiently, accurately, and at scale. By streamlining how data is collected, processed, and analyzed, businesses can turn raw data into actionable insights faster, driving smarter decisions and more effective outcomes.

Data Pipelines: An Overview

1. Components and Architecture

A data pipeline is a crucial system that automates the collection, organization, movement, transformation, and processing of data from a source to a destination. The primary goal of a data pipeline is to ensure data arrives in a usable state that enables a data-driven culture within your organization. A standard data pipeline consists of the following components:

- Data Source: The origin of the data, which can be structured, semi-structured, or unstructured data

- Data Integration: The process of ingesting and combining data from various sources

- Data Transformation: Converting data into a common format for improved compatibility and ease of analysis

- Data Processing: Handling the data based on specific computations, rules, or business logic

- Data Storage: A place to store the results, typically in a database, data lake, or data warehouse

- Data Presentation: Providing the processed data to end-users through reports, visualization, or other means

The architecture of a data pipeline varies depending on specific requirements and the technologies utilized. However, the core principles remain the same, ensuring seamless data flow and maintaining data integrity and consistency.
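To make the components concrete, here is a minimal single-process sketch in Python. It assumes a CSV string as the data source and uses an in-memory dict as a stand-in for a warehouse; the function names and column names are hypothetical and not tied to any particular tool.

```python
import csv
import io

def extract(raw_csv: str) -> list[dict]:
    """Data source + integration: ingest rows from a CSV export."""
    return list(csv.DictReader(io.StringIO(raw_csv)))

def transform(rows: list[dict]) -> list[dict]:
    """Transformation: normalize names and types into a common format."""
    return [
        {"region": r["Region"].strip().lower(), "amount": float(r["Amount"])}
        for r in rows
    ]

def process(rows: list[dict]) -> dict[str, float]:
    """Processing: apply business logic (total sales per region)."""
    totals: dict[str, float] = {}
    for r in rows:
        totals[r["region"]] = totals.get(r["region"], 0.0) + r["amount"]
    return totals

def store(result: dict[str, float], sink: dict) -> None:
    """Storage: write results to the destination (a dict stands in for a warehouse)."""
    sink.update(result)

def present(sink: dict) -> str:
    """Presentation: render a plain-text report for end users."""
    return "\n".join(f"{region}: {total:.2f}" for region, total in sorted(sink.items()))

warehouse: dict = {}
raw = "Region,Amount\nEast,10.5\nWest,4\n east ,2.5\n"
store(process(transform(extract(raw))), warehouse)
print(present(warehouse))
```

Real pipelines replace each function with a connector, engine, or service, but keeping the stages as separate, composable steps is what makes it possible to measure, scale, and optimize them independently.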

2. Types of Data Handled

Data pipelines handle various types of data, which can be classified into three main categories:

- Structured Data: Data that is organized in a specific format, such as tables or spreadsheets, making it easier to understand and process. Examples include data stored in relational databases (RDBMS) and CSV files

- Semi-structured Data: Data that has some structure but may lack strict organization or formatting. Examples include JSON, XML, and YAML files

- Unstructured Data: Data without any specific organization or format, such as text documents, images, videos, or social media interactions

These different data formats require custom processing and transformation methods to ensure compatibility and usability within the pipeline. By understanding the various components, architecture, and data types handled within a data pipeline, you can more effectively optimize and scale your data processing efforts to meet the needs of your organization.
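As an illustration of format-specific handling, the sketch below maps a structured CSV source and a semi-structured JSON source onto one common record shape. The field names (`id`, `name`, `profile`, `tags`) are hypothetical. Unstructured data such as images or free text usually needs an extraction step (OCR, parsing, or NLP) before it can be mapped this way, which is not shown here.

```python
import csv
import io
import json

def from_csv(text: str) -> list[dict]:
    """Structured source: every row has the same flat columns."""
    return [
        {"id": int(r["id"]), "name": r["name"], "tags": []}
        for r in csv.DictReader(io.StringIO(text))
    ]

def from_json(text: str) -> list[dict]:
    """Semi-structured source: fields may be nested or missing."""
    records = []
    for doc in json.loads(text):
        records.append({
            "id": int(doc["id"]),
            # Flatten the nested profile; use None when it is absent.
            "name": doc.get("profile", {}).get("name"),
            # Optional list field defaults to empty.
            "tags": doc.get("tags", []),
        })
    return records

combined = from_csv("id,name\n1,Ada\n") + from_json(
    '[{"id": 2, "profile": {"name": "Lin"}, "tags": ["vip"]}, {"id": 3}]'
)
```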

Identifying Data Pipeline Inefficiencies

1. Performance Bottlenecks

CPU and memory constraints: Insufficient computing power or RAM limits pipeline throughput, causing processing queues and delays when handling complex transformations or large datasets.

I/O limitations: Slow disk operations create bottlenecks as data moves between storage and processing layers, particularly with high-volume batch processes or frequent small reads/writes.

Network transfer issues: Bandwidth constraints and latency problems slow data movement between distributed systems, especially in cross-region or multi-cloud architectures.

Poor query performance: Inefficient SQL queries or unoptimized NoSQL operations drain resources and create processing delays, often due to missing indexes or suboptimal join operations.
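Before fixing a bottleneck, it helps to measure where time actually goes. This minimal sketch times each stage with a context manager and reports the slowest one; the stage names and the `time.sleep` call standing in for slow I/O are illustrative assumptions.

```python
import time
from contextlib import contextmanager

# Accumulated wall-clock seconds per stage for one pipeline run.
timings: dict[str, float] = {}

@contextmanager
def timed(stage: str):
    """Add the time spent inside the `with` block to timings[stage]."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[stage] = timings.get(stage, 0.0) + time.perf_counter() - start

def slowest_stage(t: dict[str, float]) -> str:
    """Name of the stage that consumed the most time."""
    return max(t, key=t.get)

# Toy stages; the sleep stands in for a slow write to storage (I/O-bound).
with timed("extract"):
    rows = list(range(10_000))
with timed("transform"):
    rows = [r * 2 for r in rows]
with timed("load"):
    time.sleep(0.1)

print(slowest_stage(timings))
```

In production, the same per-stage durations would typically be exported to a monitoring system rather than kept in a dict.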

2. Cost Inefficiencies

Overprovisioned resources: Allocating excessive computing capacity “just in case” leads to significant waste, commonly seen when static resource allocation doesn’t match actual workload patterns.

Underutilized compute power: Purchased computing resources sit idle during low-demand periods, particularly problematic with fixed-capacity clusters that can’t scale down automatically.

Redundant data processing: Multiple teams unknowingly reprocess the same data multiple times, duplicating effort and creating unnecessary copies that waste storage and compute resources.

Storage inefficiencies: Improper data formats, compression settings, and retention policies bloat storage costs for data that delivers minimal or no business value.

3. Reliability Issues

Single points of failure: Critical pipeline components without redundancy create vulnerability to outages, commonly seen in master-node dependencies or singleton service architectures.

Error handling weaknesses: Poor exception management leads to silent failures or pipeline crashes, causing data loss or quality issues when unexpected input formats appear.

Monitoring blind spots: Insufficient observability into pipeline operations prevents early problem detection, leaving teams reacting to failures rather than preventing them.

Data quality problems: Lack of validation at pipeline entry points allows corrupted or non-conforming data to pollute downstream systems, creating cascading reliability issues.

Best Data Pipeline Optimization Strategies

1. Optimize Resource Allocation

Resource allocation optimization ensures you’re using exactly what you need, when you need it. By right-sizing compute resources and implementing auto-scaling, organizations can significantly reduce costs while maintaining performance. This approach aligns computing power with actual workload demands rather than peak requirements.

- Implement auto-scaling based on workload patterns

- Use spot/preemptible instances for non-critical workloads

- Right-size resources based on historical usage patterns
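A simple way to reason about auto-scaling is to size the worker pool from the current backlog. The sketch below assumes a hypothetical queue-based pipeline in which each worker handles a known number of items per second; managed autoscalers add smoothing and cooldown periods on top of a rule like this.

```python
import math

def desired_workers(backlog: int, items_per_worker_per_sec: float, target_sec: float,
                    min_workers: int = 1, max_workers: int = 20) -> int:
    """Workers needed to drain `backlog` within `target_sec`, clamped to
    [min_workers, max_workers]. All parameters are hypothetical tuning knobs."""
    capacity_per_worker = items_per_worker_per_sec * target_sec
    needed = math.ceil(backlog / capacity_per_worker)
    return max(min_workers, min(max_workers, needed))

print(desired_workers(12_000, items_per_worker_per_sec=50, target_sec=60))
```

The floor keeps one worker warm for low-demand periods, and the ceiling caps spend when a backlog spike would otherwise scale the pool without limit.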

2. Improve Data Processing Efficiency

Efficient data processing minimizes the work required to transform raw data into valuable insights. By implementing incremental processing and optimizing data formats, organizations can dramatically reduce processing time and resource consumption while maintaining or improving output quality.

- Convert to columnar formats (Parquet, ORC) for analytical workloads

- Implement data partitioning strategies for faster query performance

- Use appropriate compression algorithms based on access patterns
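Incremental processing is often implemented with a high-water mark: each run processes only rows modified since the last successful run. This sketch assumes every row carries an `updated_at` timestamp (a hypothetical column) and keeps the watermark in memory; a real pipeline would persist it between runs.

```python
from datetime import datetime

def incremental_batch(rows: list[dict], watermark: datetime) -> tuple[list[dict], datetime]:
    """Return rows changed after `watermark` and the watermark for the next run.
    Assumes each row has an `updated_at` timestamp (hypothetical column)."""
    fresh = [r for r in rows if r["updated_at"] > watermark]
    # If nothing changed, keep the old watermark.
    new_watermark = max((r["updated_at"] for r in fresh), default=watermark)
    return fresh, new_watermark

source = [
    {"id": 1, "updated_at": datetime(2025, 1, 1)},
    {"id": 2, "updated_at": datetime(2025, 1, 3)},
]
# The previous run finished at 2025-01-02, so only id 2 is reprocessed.
batch, watermark = incremental_batch(source, datetime(2025, 1, 2))
```

A timestamp watermark can miss rows that arrive late with older timestamps, which is why change data capture (CDC) from the source's transaction log is often preferred for critical tables.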

3. Enhance Pipeline Architecture

Architectural improvements focus on the structural design of your data pipelines for better scalability and maintainability. Modern pipeline architectures leverage parallelization and modular components to process data more efficiently and adapt to changing requirements with minimal disruption.

- Break monolithic pipelines into modular, reusable components

- Implement parallel processing where dependencies allow

- Select appropriate processing frameworks for specific workload types
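Parallel processing pays off when partitions are independent. This sketch fans independent partitions out to a thread pool and then combines the partial results; the workload itself is a toy stand-in.

```python
from concurrent.futures import ThreadPoolExecutor

def summarize_partition(partition: list[int]) -> int:
    """Independent unit of work: reads nothing from other partitions."""
    return sum(x * x for x in partition)

# Four independent partitions of 1,000 values each.
partitions = [list(range(start, start + 1000)) for start in range(0, 4000, 1000)]

with ThreadPoolExecutor(max_workers=4) as pool:
    # map() returns results in input order, so they line up with partitions.
    partial_sums = list(pool.map(summarize_partition, partitions))

total = sum(partial_sums)  # combine step runs after all partitions finish
```

Threads suit I/O-bound work such as API calls or object-storage reads. For CPU-bound pure-Python transformations, `ProcessPoolExecutor` is usually the better fit because of the global interpreter lock, and distributed engines such as Spark apply the same idea across machines.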

4. Streamline Data Workflows

Streamlining workflows eliminates unnecessary steps and optimizes the path data takes through your systems. By reducing transformation complexity and optimizing job scheduling, organizations can minimize processing time while maintaining data quality and integrity.

- Eliminate redundant transformations and unnecessary data movement

- Implement checkpoints for efficient failure recovery

- Optimize job scheduling based on dependencies and resource availability
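Checkpointing lets a failed run resume instead of starting over. The sketch below stores the index of the next unprocessed item in a small JSON file (the file name and format are assumptions) and simulates a crash to show the resume behavior.

```python
import json
import os
import tempfile

def run_with_checkpoint(items: list, process, checkpoint_path: str) -> None:
    """Process items in order, persisting the index of the next unprocessed item
    so that a restarted run resumes where the failed run stopped."""
    start = 0
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            start = json.load(f)["next_index"]
    for i in range(start, len(items)):
        process(items[i])
        # Write to a temp file, then atomically replace the checkpoint so a
        # crash mid-write never leaves a corrupt checkpoint behind.
        tmp = checkpoint_path + ".tmp"
        with open(tmp, "w") as f:
            json.dump({"next_index": i + 1}, f)
        os.replace(tmp, checkpoint_path)

# Demo: the first run crashes on item 3; the second run skips items 1 and 2.
processed = []
state = {"crashed": False}

def flaky(item):
    if item == 3 and not state["crashed"]:
        state["crashed"] = True
        raise RuntimeError("simulated crash")
    processed.append(item)

path = os.path.join(tempfile.mkdtemp(), "checkpoint.json")
try:
    run_with_checkpoint([1, 2, 3, 4], flaky, path)
except RuntimeError:
    pass
run_with_checkpoint([1, 2, 3, 4], flaky, path)
```

Because the checkpoint is written after each item is processed, a crash between those two steps reprocesses one item, so the processing step should be idempotent.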

5. Implement Caching Strategies

Strategic caching reduces redundant processing by storing frequently accessed or expensive-to-compute results. Properly implemented caching layers can dramatically improve response times and reduce computational load, especially for read-heavy analytical workloads.

- Cache frequently accessed or computation-heavy results

- Implement appropriate invalidation strategies to maintain freshness

- Use distributed caching for scalability in high-volume environments
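A minimal sketch of caching with time-based expiry plus explicit invalidation. The `TTLCache` class and the `daily_revenue` function are hypothetical; in a high-volume environment the dict would be replaced by a distributed cache shared across workers.

```python
import time

class TTLCache:
    """Cache for expensive results. Entries expire after `ttl` seconds and can
    also be invalidated explicitly, e.g. when upstream data is reloaded."""

    def __init__(self, ttl: float, clock=time.monotonic):
        self.ttl = ttl
        self.clock = clock  # injectable so expiry can be tested deterministically
        self._entries: dict = {}

    def get_or_compute(self, key, compute):
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[1] < self.ttl:
            return entry[0]  # fresh hit
        value = compute()  # miss or expired: recompute and store
        self._entries[key] = (value, now)
        return value

    def invalidate(self, key) -> None:
        self._entries.pop(key, None)

# Demo with a controllable clock.
fake_time = [0.0]
cache = TTLCache(ttl=60, clock=lambda: fake_time[0])
computations = []

def daily_revenue():
    computations.append(fake_time[0])  # record when the expensive query ran
    return 1234.5

cache.get_or_compute("revenue:2025-01-01", daily_revenue)  # computed
cache.get_or_compute("revenue:2025-01-01", daily_revenue)  # served from cache
fake_time[0] = 61.0
cache.get_or_compute("revenue:2025-01-01", daily_revenue)  # expired, recomputed
```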

6. Adopt Data Quality Management

Proactive data quality management prevents downstream issues that can cascade into major pipeline failures. By implementing validation at ingestion points and throughout the pipeline, organizations can catch and address problems before they impact business decisions.

- Implement schema validation at data entry points

- Create automated data quality checks with alerting

- Develop clear protocols for handling non-conforming data
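Schema validation at the entry point can be as simple as checking required fields and types, then routing failures to a quarantine list instead of dropping them silently. The schema below is a hypothetical example; validation libraries such as jsonschema or pydantic offer richer rules.

```python
# Hypothetical schema: field name -> required Python type.
SCHEMA = {"order_id": int, "amount": float, "currency": str}

def validate(record: dict) -> list[str]:
    """Return a list of problems; an empty list means the record conforms."""
    errors = []
    for field, expected in SCHEMA.items():
        if field not in record:
            errors.append(f"missing {field}")
        elif not isinstance(record[field], expected):
            errors.append(
                f"{field}: expected {expected.__name__}, got {type(record[field]).__name__}"
            )
    return errors

def split_valid(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Pass conforming records on; quarantine the rest with their errors."""
    valid, quarantined = [], []
    for record in records:
        errors = validate(record)
        if errors:
            quarantined.append({"record": record, "errors": errors})
        else:
            valid.append(record)
    return valid, quarantined

valid, quarantined = split_valid([
    {"order_id": 1, "amount": 9.99, "currency": "USD"},
    {"order_id": "2", "amount": 5.0, "currency": "EUR"},
    {"order_id": 3, "amount": 1.0},
])
```

Keeping the error messages alongside quarantined records gives the handling protocol something concrete to act on, whether that is an alert, a reprocessing job, or a ticket to the source team.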

7. Implement Continuous Monitoring

Comprehensive monitoring provides visibility into pipeline performance and helps identify optimization opportunities. With proper observability tooling, organizations can detect emerging issues before they become critical and measure the impact of optimization efforts.

- Monitor end-to-end pipeline health with key performance indicators

- Set up alerting for performance degradation and failures

- Implement logging that facilitates root cause analysis
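Logs are far more useful for root cause analysis when every line is machine-readable and tagged with the run and stage it came from. This sketch uses the standard `logging` module with a custom JSON formatter; the `run_id` and `stage` fields are assumed conventions, not a standard.

```python
import json
import logging
import uuid

class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "level": record.levelname,
            "message": record.getMessage(),
            # Context fields passed through `extra=`; None if a call omits them.
            "run_id": getattr(record, "run_id", None),
            "stage": getattr(record, "stage", None),
        })

logger = logging.getLogger("pipeline")
handler = logging.StreamHandler()
handler.setFormatter(JsonFormatter())
logger.addHandler(handler)
logger.setLevel(logging.INFO)

run_id = str(uuid.uuid4())  # one id per pipeline run, shared by all stages
logger.info("loaded %d rows", 1200, extra={"run_id": run_id, "stage": "load"})
logger.error("schema mismatch in orders feed", extra={"run_id": run_id, "stage": "validate"})
```

Filtering on a single `run_id` then reconstructs everything one failed run did, stage by stage.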

8. Leverage Infrastructure as Code

Infrastructure as Code (IaC) brings consistency and repeatability to pipeline deployment and management. This approach enables organizations to version-control their infrastructure configurations and quickly deploy optimized pipeline components across environments.

- Use templates to ensure consistent resource provisioning

- Version-control infrastructure configurations

- Automate deployment and scaling operations

Implementing a Data Pipeline Optimization Framework

1. Assessment Phase

Pipeline performance auditing: Systematically measure and analyze current pipeline metrics against benchmarks to identify bottlenecks, using tools like execution logs, resource utilization monitors, and end-to-end latency trackers.

Identifying optimization opportunities: Map pipeline components against performance data to pinpoint specific areas for improvement, focusing on resource utilization gaps, processing inefficiencies, and architectural limitations.

Prioritizing improvements by impact: Evaluate potential optimizations based on business impact, implementation effort, and resource requirements to create a ranked priority list that delivers maximum value first.

2. Implementation Roadmap

Quick wins vs. long-term improvements: Balance immediate high-ROI optimizations like query tuning against strategic architectural changes, allowing for visible progress while building toward sustainable improvements.

Phased implementation approach: Break optimization efforts into sequenced sprints that minimize disruption to production environments, starting with low-risk components and gradually addressing more complex pipeline segments.

Testing and validation strategies: Implement rigorous testing protocols including performance benchmarking, regression testing, and canary deployments to verify optimizations deliver expected improvements without introducing new issues.

3. Continuous Optimization Culture

Establishing pipeline performance SLAs: Define clear, measurable performance targets for each pipeline component, creating accountability and objective criteria for ongoing optimization efforts.

Creating feedback loops: Implement systematic review cycles where pipeline performance data feeds back into planning, ensuring optimization becomes an iterative process rather than a one-time project.

Building optimization into the development cycle: Integrate performance considerations into development practices through code reviews, performance testing gates, and optimization-focused training for engineering teams.
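A performance SLA is easiest to enforce when it is stated as a percentile. This sketch checks a hypothetical target of "p95 run duration under 20 minutes" using the nearest-rank percentile method; the durations are illustrative sample values, not measurements.

```python
import math

def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of values at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

def check_sla(durations_min: list[float], p95_target_min: float) -> dict:
    """Compare the p95 run duration against the SLA target."""
    p95 = percentile(durations_min, 95)
    return {"p95": p95, "target": p95_target_min, "breached": p95 > p95_target_min}

# Illustrative sample: 18 normal runs and two slow ones.
runs = [10.0] * 18 + [25.0, 50.0]
print(check_sla(runs, p95_target_min=20.0))
```

A percentile target tolerates the occasional outlier while still catching a sustained slowdown, which an average would blur.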

Handling Data Quality and Consistency

1. Ensuring Accuracy and Reliability

Maintaining high data quality and consistency is essential for your data pipeline’s efficiency and effectiveness. To ensure accuracy and reliability, conduct regular data quality audits: detailed examinations of the data in your system to confirm that it meets quality standards, compliance obligations, and business requirements. Schedule these audits at regular intervals to check your data’s accuracy, completeness, and consistency.

Another strategy for improving data quality is monitoring and logging the flow of data through the pipeline. This gives you insight into bottlenecks that may be slowing the data flow or consuming resources. By identifying these issues, you can optimize your pipeline and improve your data’s reliability.
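Completeness is the easiest of the audit dimensions to automate. This sketch scores each column by the share of populated values and flags columns below a threshold; the 95% threshold and the column names are assumptions. Accuracy checks usually need trusted reference data and are not shown.

```python
def completeness(rows: list[dict], columns: list[str]) -> dict[str, float]:
    """Share of rows with a non-empty value per column (1.0 = fully populated)."""
    if not rows:
        return {c: 0.0 for c in columns}
    return {
        c: sum(1 for r in rows if r.get(c) not in (None, "")) / len(rows)
        for c in columns
    }

def audit(rows: list[dict], columns: list[str], threshold: float = 0.95) -> dict[str, float]:
    """Columns whose completeness falls below `threshold` (hypothetical default)."""
    return {c: s for c, s in completeness(rows, columns).items() if s < threshold}

customers = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": ""},
    {"id": 3, "email": None},
    {"id": 4, "email": "d@example.com"},
]
print(audit(customers, ["id", "email"]))
```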

2. Handling Redundancy and Deduplication

Data pipelines often encounter redundant data and duplicate records, and handling them properly is vital for data consistency and compliance. Redundancy also has an infrastructure side: design your pipeline for fault tolerance by running multiple instances of critical components and resources. This improves the resiliency of your pipeline and helps it recover from failures, but failover and retries can deliver the same record more than once, which is one more reason to deduplicate.

Implement data deduplication techniques to remove duplicate records and maintain data quality. This process involves:

- Identifying duplicates: Use matching algorithms to find similar records

- Merging duplicates: Combine the information from the duplicate records into a single, accurate record

- Removing duplicates: Eliminate redundant records from the dataset
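The three deduplication steps above can be sketched with the standard library. This version treats records as duplicates when emails match exactly or names are near-identical, using `difflib.SequenceMatcher` as a simple stand-in for dedicated matching algorithms; the field names and the 0.9 threshold are assumptions.

```python
import difflib

def normalize(name: str) -> str:
    """Lowercase and collapse whitespace before comparing names."""
    return " ".join(name.lower().split())

def is_duplicate(a: dict, b: dict, threshold: float = 0.9) -> bool:
    """Identify: same email (case-insensitive) or near-identical names."""
    if a["email"].lower() == b["email"].lower():
        return True
    ratio = difflib.SequenceMatcher(None, normalize(a["name"]), normalize(b["name"])).ratio()
    return ratio >= threshold

def merge(a: dict, b: dict) -> dict:
    """Merge: keep the first non-empty value for each field."""
    return {k: a.get(k) or b.get(k) for k in a.keys() | b.keys()}

def deduplicate(records: list[dict]) -> list[dict]:
    """Remove: fold each record into the first matching survivor."""
    survivors: list[dict] = []
    for rec in records:
        for i, kept in enumerate(survivors):
            if is_duplicate(kept, rec):
                survivors[i] = merge(kept, rec)
                break
        else:
            survivors.append(rec)
    return survivors

records = [
    {"name": "Jane Doe", "email": "jane@example.com", "phone": ""},
    {"name": "jane  doe", "email": "JANE@example.com", "phone": "555-0100"},
    {"name": "John Smith", "email": "john@example.com", "phone": "555-0199"},
]
clean = deduplicate(records)
```

The pairwise loop is quadratic, so at scale records are usually grouped by a blocking key (for example, postal code) and compared only within each group.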

Security, Privacy, and Compliance of Data Pipelines

1. Data Governance and Compliance

Effective data governance plays a crucial role in ensuring compliance with various regulations such as GDPR and CCPA. It is essential for your organization to adopt a robust data governance framework, which typically includes:

- Establishing data policies and standards

- Defining roles and responsibilities related to data management

- Implementing data classification and retention policies

- Regularly auditing and monitoring data usage and processing activities

By adhering to data governance best practices, you can reduce your organization’s exposure to data breaches, misconduct, and non-compliance penalties.

2. Security Measures and Data Protection

In order to maintain the security and integrity of your data pipelines, it is essential to implement appropriate security measures and employ effective data protection strategies. Some common practices include:

- Encryption: Use encryption to safeguard data throughout its lifecycle, both in transit and at rest. This helps keep sensitive information unreadable even if storage or network traffic is accessed without authorization

- Access Control: Implement strict access control management to limit data access based on the specific roles and responsibilities of employees in your organization

- Data Sovereignty: Consider data sovereignty requirements when building and managing data pipelines, especially for cross-border data transfers. Be aware of the legal and regulatory restrictions concerning the storage, processing, and transfer of certain types of data

- Anomaly Detection: Implement monitoring and anomaly detection tools to identify and respond swiftly to potential security threats or malicious activities within your data pipelines

- Fraud Detection: Leverage advanced analytics and machine learning techniques to detect fraud patterns or unusual behavior in your data pipeline
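Role-based access control can be illustrated as a deny-by-default column policy. The roles, columns, and `POLICY` mapping below are hypothetical; in practice these rules are usually enforced in the warehouse or data catalog through grants and masking policies rather than in application code.

```python
# Hypothetical role -> visible columns policy (deny by default).
POLICY = {
    "analyst": {"order_id", "amount", "region"},
    "support": {"order_id", "customer_email"},
    "admin": {"order_id", "amount", "region", "customer_email"},
}

def authorized_view(rows: list[dict], role: str) -> list[dict]:
    """Strip every column the role is not explicitly allowed to see."""
    allowed = POLICY.get(role, set())  # unknown roles see no columns
    return [{k: v for k, v in row.items() if k in allowed} for row in rows]

orders = [{"order_id": 1, "amount": 20.0, "region": "EU", "customer_email": "a@example.com"}]
print(authorized_view(orders, "analyst"))
```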

Tools and Technologies for Data Pipeline Optimization

Data Processing Frameworks

1. Apache Spark

A unified analytics engine offering in-memory processing that significantly accelerates data processing tasks. Spark optimizes pipelines through its Catalyst query optimizer, which rewrites query plans into an efficient physical plan, and its DAG execution engine, which schedules the resulting stages. Its ability to cache intermediate results in memory dramatically reduces I/O bottlenecks for iterative workflows.

2. Apache Flink

A stream processing framework built for high-throughput, low-latency data streaming applications. Flink optimizes data pipelines through stateful computations, exactly-once processing semantics, and advanced windowing capabilities. Its checkpoint mechanism ensures fault tolerance without sacrificing performance, making it ideal for real-time pipelines.

3. Databricks

A unified data analytics platform built on Spark that enhances pipeline optimization through its Delta Lake architecture. Databricks offers automatic cluster management, query optimization, and Delta caching for improved performance. Its optimized runtime provides significant speed improvements over standard Spark deployments and integrates ML workflows seamlessly.

4. Google Cloud Dataflow

A fully managed service implementing Apache Beam for both batch and streaming workloads. Dataflow optimizes pipelines by dynamically rebalancing work across compute resources, auto-scaling resources to match processing demands, and offering templates for common pipeline patterns. Its serverless approach eliminates cluster management overhead.

Orchestration Platforms

1. Apache Airflow

An open-source workflow management platform that optimizes pipeline orchestration through directed acyclic graphs (DAGs). Airflow enables pipeline optimization by allowing detailed dependency management, task parallelization, automatic retries, and resource pooling to prevent overloading downstream systems.

2. Dagster

A data orchestrator focused on developer productivity and observability. Dagster optimizes pipelines through its asset-based approach, type checking, and structured error handling. Its ability to track data dependencies and visualize lineage helps identify optimization opportunities and eliminate redundant processing.

3. Prefect

A workflow management system designed for modern infrastructure. Prefect optimizes pipelines through dynamic task mapping, caching mechanisms, and state handlers. Its hybrid execution model allows seamless scaling between local development and distributed production environments, with detailed visibility into task performance.

4. AWS Step Functions

A serverless orchestration service that coordinates multiple AWS services. Step Functions optimizes pipelines by managing state transitions, handling error conditions, and enabling parallel processing branches. Its visual workflow editor and built-in integrations simplify complex pipeline management without infrastructure overhead.

Monitoring Solutions

1. Prometheus

An open-source monitoring and alerting toolkit designed for reliability and scalability. Prometheus optimizes data pipelines by providing detailed time-series metrics, custom query language for analysis, and targeted alerts for performance degradation. Its pull-based architecture is lightweight and adaptable to diverse pipeline environments.

2. Datadog

A comprehensive monitoring platform that unifies metrics, traces, and logs. Datadog enables pipeline optimization through end-to-end visibility, anomaly detection, and correlation analysis across distributed systems. Its pre-built integrations with data processing tools provide immediate insights into pipeline performance without extensive setup.

3. New Relic

An observability platform with deep application performance monitoring capabilities. New Relic optimizes data pipelines through distributed tracing, real-time analytics, and ML-powered anomaly detection. Its ability to connect pipeline performance directly to business metrics helps prioritize optimization efforts based on impact.

4. Grafana

An open-source analytics and visualization platform. Grafana optimizes pipelines by consolidating metrics from multiple sources, enabling custom visualizations tailored to specific pipeline components, and supporting alerting based on complex conditions. Its flexible dashboard system adapts to different team needs and monitoring requirements.

Informatica to DBT Migration

When optimizing your data pipeline, migrating from Informatica to DBT can provide significant benefits in terms of efficiency and modernization.

Informatica has long been a staple for data management, but as technology evolves, many companies are transitioning to DBT (data build tool) for more agile, version-controlled data transformation. This migration reflects a shift towards modern, code-first approaches that enhance collaboration and adaptability in data teams.

In practice, the migration moves transformation work from a traditional ETL (Extract, Transform, Load) platform to a framework that defines transformations in SQL and runs them directly on top of a data warehouse. The aim is to modernize the data stack with a more agile, transparent, and collaborative approach to data engineering.

Here’s What the Migration Typically Delivers

- Enhanced Agility and Innovation: DBT transforms how data teams operate, enabling faster insights delivery and swift adaptation to evolving business needs. Its developer-centric approach and use of familiar SQL syntax foster innovation and expedite data-driven decision-making

- Scalability and Elasticity: Because DBT runs transformations inside modern cloud data warehouses, it inherits their scalability. Organizations can manage growing data volumes and expand their analytics capabilities without re-architecting the transformation layer

- Cost Efficiency and Optimization: Switching to DBT, an open-source tool with a cloud-native framework, reduces reliance on expensive infrastructure and licensing fees associated with traditional ETL tools like Informatica. This shift not only trims costs but also optimizes data transformations, enhancing the ROI of data infrastructure investments

- Improved Collaboration and Transparency: DBT encourages better teamwork across data teams by centralizing SQL transformation logic and utilizing version-controlled coding. This environment supports consistent, replicable, and dependable data pipelines, enhancing overall effectiveness and data value delivery

Key Areas to Focus On

- Innovation: Embrace new technologies and methods to enhance your data pipeline. Adopting cutting-edge tools can result in improvements related to data quality, processing time, and scalability

- Compatibility: Ensure that your chosen technology stack aligns with your organization’s data infrastructure and can be integrated seamlessly

- Scalability: When selecting new technologies, prioritize those that can handle growing data volumes and processing requirements with minimal performance degradation

When migrating your data pipeline, keep in mind that DBT also emphasizes testing and documentation. Make use of DBT’s built-in features to validate your data sources and transformations, ensuring data correctness and integrity. Additionally, maintain well-documented data models, allowing for easier collaboration amongst data professionals in your organization.

8 Best Data Modeling Tools to Elevate Your Data Game

Explore the top 8 data modeling tools that can streamline your data architecture, improve efficiency, and enhance decision-making for your business.

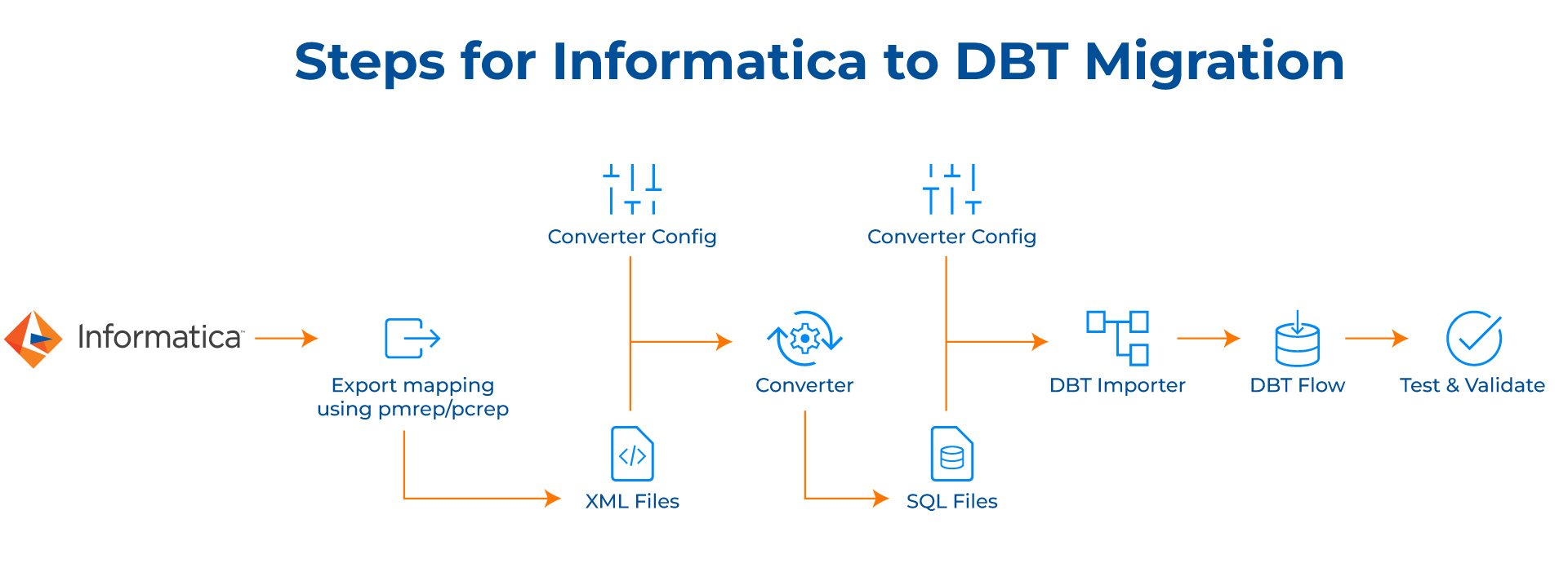

Migration Approach for Transitioning from Informatica to DBT

1. Inventory and Analysis

Catalog all Informatica mappings, including both PowerCenter and IDQ. Perform a detailed analysis of each mapping to decipher its structure, dependencies, and transformation logic.

2. Export Informatica Mappings

Utilize the pmrep command for PowerCenter and pcrep for IDQ mappings to export them to XML format. Organize the XML files into a structured directory hierarchy for streamlined access and processing.

3. Transformation to SQL Conversion

Develop a conversion tool or script to parse XML files and convert each transformation into individual SQL files. Ensure the conversion script accounts for complex transformations by mapping Informatica functions to equivalent Snowflake functions. Structure SQL files using standardized naming conventions and directories for ease of management.
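The conversion step might look like the sketch below. It assumes a heavily simplified mapping XML (real PowerCenter exports contain far more elements and attributes), handles only Expression transformations, and maps just three functions: Informatica's `IIF` to Snowflake's `IFF`, `ISNULL(x)` to `x IS NULL`, and `SYSDATE` to `CURRENT_TIMESTAMP()`. The output file naming and the `stg_orders` upstream model are hypothetical.

```python
import re
import xml.etree.ElementTree as ET

# Hypothetical, heavily simplified mapping export used for illustration only.
SAMPLE_XML = """
<MAPPING NAME="m_orders">
  <TRANSFORMATION NAME="exp_clean" TYPE="Expression">
    <TRANSFORMFIELD NAME="STATUS_FLAG" EXPRESSION="IIF(ISNULL(STATUS), 'N', 'Y')"/>
    <TRANSFORMFIELD NAME="LOAD_TS" EXPRESSION="SYSDATE"/>
  </TRANSFORMATION>
</MAPPING>
"""

# Informatica -> Snowflake rewrites, applied in order (a deliberately tiny subset).
REWRITES = [
    (re.compile(r"\bISNULL\(([^()]*)\)"), r"(\1 IS NULL)"),
    (re.compile(r"\bIIF\("), "IFF("),
    (re.compile(r"\bSYSDATE\b"), "CURRENT_TIMESTAMP()"),
]

def to_snowflake(expr: str) -> str:
    for pattern, replacement in REWRITES:
        expr = pattern.sub(replacement, expr)
    return expr

def mapping_to_sql(xml_text: str, source_model: str) -> dict[str, str]:
    """Return {file_name: sql}, one DBT-style model per Expression transformation."""
    root = ET.fromstring(xml_text.strip())
    files = {}
    for t in root.iter("TRANSFORMATION"):
        if t.get("TYPE") != "Expression":
            continue
        columns = [
            f"    {to_snowflake(field.get('EXPRESSION'))} as {field.get('NAME')}"
            for field in t.iter("TRANSFORMFIELD")
        ]
        sql = "select\n" + ",\n".join(columns) + f"\nfrom {{{{ ref('{source_model}') }}}}\n"
        # Standardized naming: <mapping>__<transformation>.sql
        files[f"{root.get('NAME')}__{t.get('NAME')}.sql".lower()] = sql
    return files

for name, sql in mapping_to_sql(SAMPLE_XML, "stg_orders").items():
    print(f"-- {name}\n{sql}")
```

The regex rewrites do not handle nested parentheses inside `ISNULL`, and timestamp functions can differ in time-zone behavior, so a real converter needs a proper expression parser and a reviewed function-mapping table.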

4. DBT Importer Configuration

Create a DBT importer script to load the SQL files into DBT. Configure the importer to sequence the SQL files according to their dependencies and to read Snowflake connection details from a configuration file.
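Sequencing files by dependency is a topological sort. This sketch reads `ref()` calls out of each model's SQL and orders the models with Python's `graphlib`; the model names are hypothetical, and only single-quoted `ref('...')` calls are recognized.

```python
import re
from graphlib import CycleError, TopologicalSorter

# Matches {{ ref('model_name') }} with single-quoted names only.
REF = re.compile(r"\{\{\s*ref\('([^']+)'\)\s*\}\}")

def load_order(models: dict[str, str]) -> list[str]:
    """Order models so each one comes after every model it ref()s."""
    graph = {name: set(REF.findall(sql)) for name, sql in models.items()}
    return list(TopologicalSorter(graph).static_order())

models = {
    "fct_orders": "select * from {{ ref('stg_orders') }} join {{ ref('stg_customers') }} using (customer_id)",
    "stg_orders": "select * from raw.orders",
    "stg_customers": "select * from raw.customers",
}
print(load_order(models))

# A circular dependency is reported instead of silently mis-ordering files.
try:
    load_order({"a": "select * from {{ ref('b') }}", "b": "select * from {{ ref('a') }}"})
    cycle_detected = False
except CycleError:
    cycle_detected = True
```

Once models use `ref()`, DBT builds this dependency graph itself, so an explicit sort mainly helps the importer validate converted files early, for example by catching circular dependencies before the first DBT run.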

5. Data Model and Project Setup

Define the data model and organize the DBT project structure, including schemas, models, and directories, adhering to DBT best practices.

6. Test and Validate

Conduct comprehensive testing of the SQL files and DBT project setup to confirm their correctness and efficiency. Validate all data transformations and ensure seamless integration with the Snowflake environment.

7. Migration Execution

Proceed with the migration, covering the export of mappings, their conversion to SQL, and importing them into DBT, while keeping transformations well-sequenced. Monitor the process actively, addressing any issues promptly to maintain migration integrity.

8. Post-Migration Validation

Perform a thorough validation to verify data consistency and system performance post-migration. Undertake performance tuning and optimizations to enhance the efficiency of the DBT setup.
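A basic consistency check compares row counts and an order-independent content hash between the legacy output and the migrated table. This sketch works on in-memory rows for illustration; with large tables the hashing would normally run inside the warehouse (Snowflake offers `HASH_AGG`, for example).

```python
import hashlib

def table_fingerprint(rows: list[tuple]) -> tuple[int, str]:
    """Row count plus an order-independent hash of all row contents."""
    row_hashes = sorted(hashlib.sha256(repr(row).encode()).hexdigest() for row in rows)
    combined = hashlib.sha256("".join(row_hashes).encode()).hexdigest()
    return len(rows), combined

def compare(legacy_rows: list[tuple], migrated_rows: list[tuple]) -> dict[str, bool]:
    legacy_count, legacy_hash = table_fingerprint(legacy_rows)
    migrated_count, migrated_hash = table_fingerprint(migrated_rows)
    return {
        "row_count_match": legacy_count == migrated_count,
        "content_match": legacy_hash == migrated_hash,
    }

# Same rows in a different order still match; a changed value does not.
print(compare([(1, "a"), (2, "b")], [(2, "b"), (1, "a")]))
print(compare([(1, "a"), (2, "b")], [(1, "a"), (2, "B")]))
```

Both sides must be normalized to the same types first (for example, decimals versus floats), or identical data will hash differently.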

9. Monitoring and Maintenance

Establish robust monitoring systems to keep a close watch on DBT workflows and performance. Schedule regular maintenance checks to preemptively address potential issues.

10. Continuous Improvement

Foster a culture of continuous improvement by regularly updating the DBT environment and processes based on new insights, business needs, and evolving data practices.

Choosing Kanerika for Efficient Data Modernization Services

Businesses today face critical challenges when operating with legacy data systems. Outdated infrastructure limits data accessibility, compromises reporting accuracy, prevents real-time analytics, and incurs excessive maintenance costs. Kanerika, a leading data and AI solutions firm, helps organizations transform these limitations into competitive advantages through modern data platforms that enable advanced analytics, cloud scalability, and AI-driven insights.

The migration journey, however, presents significant risks. Traditional manual approaches are resource-intensive and error-prone, potentially disrupting business continuity. Even minor mistakes in data mapping or transformation logic can cascade into serious problems—inconsistent outputs, permanent data loss, or extended system downtime.

Kanerika addresses these challenges through purpose-built automation solutions that streamline complex migrations with precision. Our specialized tools facilitate seamless transitions across multiple platform pairs: SSRS to Power BI, SSIS/SSAS to Fabric, Informatica to Talend/DBT, and Tableau to Power BI. This automation-first approach dramatically reduces manual effort while maintaining data integrity throughout the migration process.

Frequently Asked Questions

What is data pipeline optimization?

Data pipeline optimization is the systematic process of improving how data flows from source systems to destinations by enhancing speed, reliability, and resource efficiency. It involves identifying bottlenecks, reducing latency, minimizing failed jobs, and ensuring data quality throughout the pipeline architecture. Effective optimization reduces infrastructure costs while increasing throughput and data freshness for downstream analytics and applications. Organizations prioritize pipeline performance tuning to support real-time decision-making and scalable data operations. Kanerika’s DataOps specialists help enterprises streamline their data pipelines for maximum efficiency—schedule a consultation to identify your optimization opportunities.

What are data pipeline optimization techniques?

Data pipeline optimization techniques include parallel processing to distribute workloads, incremental loading to process only changed data, partitioning large datasets for faster queries, and implementing caching strategies for frequently accessed information. Additional methods involve optimizing transformation logic, right-sizing compute resources, implementing data compression, and scheduling jobs during low-traffic periods. Monitoring pipeline metrics helps identify slow stages requiring attention. Schema design improvements and index optimization also contribute to faster data movement. Kanerika implements proven optimization techniques tailored to your specific data architecture—connect with our team to accelerate your pipeline performance.

What is the difference between ETL and data pipeline?

A data pipeline is the broader infrastructure that moves data between systems, while ETL is a specific pattern within that pipeline focusing on Extract, Transform, and Load operations. Data pipelines can include streaming, batch processing, ELT, or hybrid approaches beyond traditional ETL. Pipelines encompass orchestration, monitoring, and error handling across the entire data journey. ETL specifically transforms data before loading into the target system, whereas modern pipelines often defer transformation using ELT patterns. Understanding this distinction helps architects choose appropriate data integration strategies. Kanerika designs end-to-end data pipeline solutions that incorporate the right processing patterns for your needs—let’s discuss your architecture.

What is the main purpose of a data pipeline?

The main purpose of a data pipeline is to automate the reliable movement and transformation of data from source systems to destinations where it can be analyzed and used for decision-making. Pipelines eliminate manual data transfers, ensure consistency, and enable timely access to accurate information across the organization. They handle data ingestion, validation, cleansing, and delivery while maintaining data integrity throughout the process. Well-designed pipelines support both batch and real-time data processing needs for analytics platforms and operational systems. Kanerika builds robust data pipelines that deliver trusted data to your business users—reach out for a pipeline assessment.

What is a data pipeline example?

A common data pipeline example involves extracting sales transactions from multiple retail point-of-sale systems, transforming the data by standardizing formats and calculating metrics, then loading it into a data warehouse for business intelligence reporting. Another example is a real-time streaming pipeline that ingests IoT sensor data, processes it through Apache Kafka, applies anomaly detection algorithms, and triggers alerts in operational dashboards. E-commerce companies use pipelines to sync customer behavior data from websites to recommendation engines. Each pipeline addresses specific business requirements through automated data flow management. Kanerika has implemented data pipelines across retail, manufacturing, and finance—explore how we can build yours.

What are the main 3 stages in a data pipeline?

The three main stages in a data pipeline are ingestion, transformation, and delivery. Ingestion involves extracting data from various sources including databases, APIs, files, and streaming platforms into the pipeline. Transformation encompasses cleansing, validating, enriching, and restructuring data to meet target schema requirements and business rules. Delivery loads the processed data into destination systems such as data warehouses, lakes, or operational applications where end users consume it. Optimizing each stage independently while ensuring smooth handoffs between them is critical for overall pipeline performance. Kanerika optimizes all three pipeline stages to ensure your data arrives fast, accurate, and ready for analysis—contact us to get started.
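Here is a minimal sketch of the three stages as chained Python generators. It assumes a small, hypothetical point-of-sale CSV export and uses a list as a stand-in for the warehouse table. Each stage consumes the previous one lazily, so records are handed off one at a time rather than fully materialized between stages.

```python
import csv
import io
from typing import Iterable, Iterator

# Hypothetical CSV export from a point-of-sale system.
RAW_CSV = """store,date,amount
north,2025-01-05,12.50
south,2025-01-05,
north,2025-01-06,7.25
"""


def ingest(text: str) -> Iterator[dict[str, str]]:
    """Stage 1 (ingestion): read raw rows from the source, a CSV string here."""
    yield from csv.DictReader(io.StringIO(text))


def transform(rows: Iterable[dict[str, str]]) -> Iterator[dict]:
    """Stage 2 (transformation): validate and restructure each row."""
    for row in rows:
        if not row["amount"]:
            continue  # a real pipeline would route this row to a reject/quarantine table
        yield {"store": row["store"], "date": row["date"], "amount": float(row["amount"])}


def deliver(rows: Iterable[dict], destination: list) -> int:
    """Stage 3 (delivery): load rows into the destination and return the count."""
    count = 0
    for row in rows:
        destination.append(row)
        count += 1
    return count


warehouse: list = []
loaded = deliver(transform(ingest(RAW_CSV)), warehouse)
print(loaded, warehouse)
```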

What is the data pipeline lifecycle?

The data pipeline lifecycle encompasses design, development, testing, deployment, monitoring, and maintenance phases. Design involves defining sources, transformations, destinations, and scheduling requirements based on business needs. Development builds the pipeline components using appropriate tools and frameworks. Testing validates data quality, performance, and error handling before production deployment. Once live, continuous monitoring tracks pipeline health, latency, and throughput metrics. Maintenance includes updating pipelines for schema changes, optimizing performance, and scaling infrastructure as data volumes grow. This lifecycle approach ensures sustainable, reliable data operations over time. Kanerika manages the complete data pipeline lifecycle for enterprises seeking operational excellence—let’s discuss your pipeline roadmap.
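During the testing phase, data-quality checks can run against a small fixture before deployment. The `validate` helper below is hypothetical and is not any specific framework's API. It checks only completeness and primary-key uniqueness. A real test suite would also add accuracy and consistency rules.

```python
from typing import Any


def validate(rows: list[dict[str, Any]], required: list[str], key: str) -> list[str]:
    """Return human-readable data-quality failures; an empty list means the data passed.

    Checks completeness (required fields present and non-null) and
    uniqueness of the primary key. The rule set is illustrative, not exhaustive.
    """
    failures: list[str] = []
    seen: set = set()
    for i, row in enumerate(rows):
        for field in required:
            if row.get(field) is None:
                failures.append(f"row {i}: missing {field}")
        if row.get(key) in seen:
            failures.append(f"row {i}: duplicate {key}={row.get(key)!r}")
        seen.add(row.get(key))
    return failures


# Example pre-deployment check against a small test fixture.
fixture = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": None},
    {"id": 1, "email": "c@example.com"},
]
problems = validate(fixture, required=["id", "email"], key="id")
for p in problems:
    print(p)
```

A deployment gate would typically fail the release whenever `problems` is non-empty.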

How many types of data pipelines are there?

There are several types of data pipelines, categorized by processing approach and use case. Batch pipelines process data at scheduled intervals and are well suited to high-volume historical analysis. Streaming pipelines process data continuously as it arrives, enabling immediate insights. ETL pipelines transform data before loading, while ELT pipelines load raw data first and then transform it within the destination. Lambda architecture combines batch and streaming layers for comprehensive processing. Delta pipelines use change data capture (CDC) to propagate only inserted, updated, and deleted records as incremental updates. Cloud-native pipelines leverage managed services for scalability. Choosing the right type depends on latency requirements and data characteristics. Kanerika architects the optimal pipeline type for your specific data strategy. Schedule a discovery call today.
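To show how a delta (CDC) pipeline applies incremental updates, this sketch replays hypothetical insert, update, and delete events onto a keyed target table. The event shape (`op`, `id`, `row`) is an assumption, since real CDC tools each define their own change-event format.

```python
from typing import Any

# Hypothetical change events, in commit order, as a CDC tool might emit them.
changes = [
    {"op": "insert", "id": 1, "row": {"name": "Ada", "tier": "free"}},
    {"op": "insert", "id": 2, "row": {"name": "Lin", "tier": "free"}},
    {"op": "update", "id": 1, "row": {"name": "Ada", "tier": "pro"}},
    {"op": "delete", "id": 2, "row": None},
]


def apply_changes(target: dict[int, dict[str, Any]], events: list[dict[str, Any]]) -> None:
    """Apply insert/update/delete events to a keyed target table in place."""
    for event in events:
        if event["op"] in ("insert", "update"):
            # Treating both as an upsert means replaying the same ordered events is harmless.
            target[event["id"]] = event["row"]
        elif event["op"] == "delete":
            target.pop(event["id"], None)  # ignore deletes for rows already gone
        else:
            raise ValueError(f"unknown op: {event['op']}")


table: dict[int, dict[str, Any]] = {}
apply_changes(table, changes)
print(table)
```

Only the four change events are processed, not the full source table. This is the main saving of CDC over full refreshes.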

What are common data pipeline tools?

Common data pipeline tools include Apache Airflow for workflow orchestration, Apache Kafka for real-time streaming, and Apache Spark for large-scale data processing. Cloud-native options include Azure Data Factory, AWS Glue, and Google Cloud Dataflow for managed pipeline services. Databricks provides unified analytics pipelines while Snowflake offers built-in data sharing and transformation capabilities. Informatica and Talend remain popular enterprise ETL platforms. dbt has gained adoption for transformation-focused workflows within modern data stacks. Tool selection depends on scale, team expertise, cloud strategy, and integration requirements. Kanerika is certified across leading pipeline platforms including Microsoft Fabric and Databricks—let us recommend the right tools for your environment.

What are the 5 pillars of data pipeline monitoring?

The five pillars of data pipeline monitoring are latency, throughput, data quality, availability, and error rates. Latency measures how quickly data moves from source to destination, critical for time-sensitive applications. Throughput tracks volume processed per time period to ensure capacity meets demand. Data quality monitoring validates completeness, accuracy, and consistency of pipeline outputs. Availability measures uptime and reliability of pipeline components. Error rate tracking identifies failed jobs, transformation issues, and rejected records requiring attention. Together, these metrics provide comprehensive visibility into pipeline health and optimization opportunities. Kanerika implements holistic pipeline monitoring frameworks that keep your data flowing reliably—connect with us to strengthen your observability.
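Each of the five pillars can be reduced to a headline number computed from run metadata. This sketch assumes a hypothetical `RunRecord` structure and a list of periodic up/down health probes. Real observability stacks collect these values from logs or metrics systems, and they usually track latency percentiles rather than a single maximum.

```python
from dataclasses import dataclass


@dataclass
class RunRecord:
    """Metadata for one pipeline run (hypothetical fields)."""
    succeeded: bool
    records_in: int
    records_rejected: int
    duration_s: float  # processing time for the run
    lag_s: float       # source event time to availability at the destination


def pipeline_health(runs: list[RunRecord], probes: list[bool]) -> dict[str, float]:
    """Compute one headline number per monitoring pillar.

    `probes` is a hypothetical list of periodic up/down health checks.
    """
    succeeded = [r for r in runs if r.succeeded]
    if not succeeded or not probes:
        raise ValueError("need at least one successful run and one probe")
    processed = sum(r.records_in for r in succeeded)
    busy_s = sum(r.duration_s for r in succeeded)
    return {
        "latency_s": max(r.lag_s for r in succeeded),  # worst-case end-to-end lag
        "throughput_rps": processed / busy_s if busy_s else 0.0,
        # Share of records rejected by validation, used as a data-quality proxy.
        "reject_rate": sum(r.records_rejected for r in succeeded) / processed if processed else 0.0,
        "availability": sum(probes) / len(probes),
        "error_rate": (len(runs) - len(succeeded)) / len(runs),
    }


runs = [
    RunRecord(True, 10_000, 50, 200.0, 240.0),
    RunRecord(True, 12_000, 0, 300.0, 330.0),
    RunRecord(False, 0, 0, 15.0, 0.0),
    RunRecord(True, 8_000, 30, 100.0, 150.0),
]
health = pipeline_health(runs, probes=[True] * 19 + [False])
for metric, value in health.items():
    print(f"{metric}: {value:.4g}")
```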

How to optimize an ETL pipeline?

Optimizing an ETL pipeline starts with profiling current performance to identify bottlenecks in the extraction, transformation, or loading phase. Implement parallel processing to distribute workloads across multiple threads or nodes. Use incremental extraction to process only changed records rather than running full refreshes. Optimize transformation logic by pushing computations down to the source database where possible. Partition large tables to improve query performance during extraction. Tune database connections and batch sizes for efficient loading. Schedule resource-intensive jobs during off-peak hours. Add indexes strategically on frequently joined columns. Kanerika’s ETL optimization services have reduced processing times by over fifty percent for enterprise clients. Request a pipeline performance review.
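Two of the techniques above, incremental extraction and tuned batch loading, can be sketched with `sqlite3`. The sketch assumes that the source table has an ISO-8601 `updated_at` column, so that string comparison matches time order, and that the watermark is persisted between runs. The table, columns, and watermark value are all hypothetical.

```python
import sqlite3


def extract_incremental(src: sqlite3.Connection, watermark: str) -> list[tuple]:
    """Pull only rows modified after the last successful watermark."""
    return src.execute(
        "SELECT id, amount, updated_at FROM sales WHERE updated_at > ? ORDER BY updated_at",
        (watermark,),
    ).fetchall()


def load_in_batches(dst: sqlite3.Connection, rows: list[tuple], batch_size: int = 500) -> None:
    """Upsert rows in fixed-size batches inside a single transaction."""
    with dst:  # commits on success, rolls back if any batch raises
        for start in range(0, len(rows), batch_size):
            dst.executemany(
                "INSERT OR REPLACE INTO sales (id, amount, updated_at) VALUES (?, ?, ?)",
                rows[start:start + batch_size],
            )


src = sqlite3.connect(":memory:")
dst = sqlite3.connect(":memory:")
for db in (src, dst):
    db.execute("CREATE TABLE sales (id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT)")
src.executemany("INSERT INTO sales VALUES (?, ?, ?)", [
    (1, 10.0, "2025-01-01T09:00"),
    (2, 20.0, "2025-01-02T09:00"),
    (3, 30.0, "2025-01-03T09:00"),
])
src.commit()

watermark = "2025-01-01T12:00"  # hypothetical value saved after the previous run
new_rows = extract_incremental(src, watermark)
load_in_batches(dst, new_rows, batch_size=1)
if new_rows:
    watermark = new_rows[-1][2]  # advance the watermark only after a successful load
print(len(new_rows), watermark)
```

A strict `>` watermark has one caveat. A row committed late with a timestamp at or before the stored watermark is skipped. Production pipelines therefore often re-read a small overlap window or use log-based change data capture instead.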

What is pipeline in ETL?

A pipeline in ETL refers to the automated sequence of processes that extracts data from source systems, transforms it according to business rules, and loads it into target destinations. The pipeline orchestrates each step, managing dependencies, scheduling, error handling, and logging throughout execution. Modern ETL pipelines include data validation checkpoints, retry logic for failures, and alerting mechanisms for anomalies. Pipeline architecture determines how data flows between extraction connectors, transformation engines, and loading components. Well-designed ETL pipelines ensure repeatable, auditable data processing that scales with growing data volumes. Kanerika builds enterprise ETL pipelines with built-in governance and quality controls—talk to our integration specialists today.
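The orchestration duties described above (dependency management, retry logic, and logging) can be sketched with the standard library's `graphlib.TopologicalSorter`, available in Python 3.9+. The task names, retry count, and backoff values are hypothetical. Production orchestrators such as Apache Airflow provide these features with far more control.

```python
import logging
import time
from graphlib import TopologicalSorter
from typing import Callable

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
log = logging.getLogger("etl")


def run_pipeline(
    tasks: dict[str, Callable[[], None]],
    deps: dict[str, set[str]],
    max_retries: int = 2,
    backoff_s: float = 0.1,
) -> list[str]:
    """Run tasks in dependency order, retrying each failure with exponential backoff.

    `deps` maps each task to the tasks it depends on. Returns the execution
    order, and re-raises the error once a task exhausts its retries.
    """
    order = list(TopologicalSorter(deps).static_order())
    for name in order:
        for attempt in range(max_retries + 1):
            try:
                tasks[name]()
                log.info("%s succeeded (attempt %d)", name, attempt + 1)
                break
            except Exception:  # a real orchestrator would retry only transient errors
                if attempt == max_retries:
                    log.error("%s failed after %d attempts", name, attempt + 1)
                    raise
                log.warning("%s failed, retrying", name)
                time.sleep(backoff_s * 2 ** attempt)
    return order


calls = {"extract": 0}


def flaky_extract() -> None:
    """Hypothetical extract step that fails once with a simulated transient error."""
    calls["extract"] += 1
    if calls["extract"] == 1:
        raise ConnectionError("source temporarily unavailable")


order = run_pipeline(
    tasks={"extract": flaky_extract, "transform": lambda: None, "load": lambda: None},
    deps={"transform": {"extract"}, "load": {"transform"}},
)
print(order)
```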

Will AI replace ETL?

AI will not replace ETL, but it will significantly augment and automate it. Machine learning is already enhancing ETL through intelligent schema mapping, automated data quality detection, and self-tuning performance optimization. AI-powered tools can suggest transformations, identify anomalies, and predict pipeline failures before they occur. However, the fundamentals of extracting, transforming, and loading data remain essential regardless of the level of automation. Human oversight is still required for complex business logic, compliance requirements, and strategic data decisions. The future combines AI capabilities with traditional ETL foundations for smarter data integration. Kanerika leverages AI-enhanced data integration approaches while maintaining robust ETL foundations. Explore our intelligent automation solutions.

Is ETL obsolete?

ETL is not obsolete but has evolved alongside modern data architectures. While ELT patterns have gained popularity with cloud data warehouses, traditional ETL remains essential for scenarios requiring data transformation before landing in target systems, particularly for compliance-sensitive data or resource-constrained destinations. Many organizations run hybrid approaches combining ETL and ELT based on specific use cases. The principles of extracting, transforming, and loading data remain fundamental regardless of where transformation occurs. Modern ETL tools now support both patterns with enhanced automation and cloud-native capabilities. Kanerika helps enterprises modernize legacy ETL workflows while preserving business logic—schedule an assessment to evaluate your current architecture.

What are the basics of data pipelines?

Data pipeline basics involve understanding sources, transformations, destinations, and orchestration. Sources include databases, APIs, files, and streaming platforms from which data originates. Transformations clean, validate, enrich, and restructure data to meet target requirements. Destinations are warehouses, lakes, or applications where processed data lands for consumption. Orchestration coordinates job scheduling, dependency management, and error handling across pipeline components. Additional fundamentals include data lineage tracking, monitoring for failures, and implementing retry logic for resilience. Mastering these basics enables organizations to build reliable, scalable data flows supporting analytics and operations. Kanerika helps teams establish strong data pipeline foundations before scaling—start with a free architecture consultation.

What are the 4 pillars of data engineering?

The four pillars of data engineering are data ingestion, data storage, data transformation, and data serving. Ingestion handles collecting data from diverse sources through batch or streaming methods into the data platform. Storage involves selecting appropriate repositories like data lakes, warehouses, or lakehouses based on access patterns and cost requirements. Transformation processes raw data into analytics-ready formats through cleansing, modeling, and aggregation. Serving delivers processed data to consumers through APIs, dashboards, or direct queries. Mastering all four pillars ensures robust data infrastructure supporting enterprise analytics initiatives. Kanerika provides expertise across all data engineering pillars to build cohesive, optimized data platforms—discuss your engineering challenges with our team.

What are the 4 pillars of data strategy?

The four pillars of data strategy are data governance, data architecture, data quality, and data literacy. Governance establishes policies, ownership, and compliance frameworks ensuring responsible data use across the organization. Architecture defines technical infrastructure including pipelines, storage, and integration patterns supporting business requirements. Quality encompasses processes for validating accuracy, completeness, and consistency of data throughout its lifecycle. Literacy develops organizational capabilities for understanding and leveraging data effectively in decision-making. Together these pillars create sustainable data capabilities driving business value beyond individual projects. Kanerika aligns data pipeline optimization with broader enterprise data strategy—engage our consultants to build your comprehensive data roadmap.

What are the best ETL tools?

The best ETL tools depend on your specific requirements, but leading options include Microsoft Azure Data Factory for cloud-native Azure environments, Informatica PowerCenter for enterprise-scale deployments, and Talend for broad integration and data quality features. Databricks excels at unified analytics and lakehouse architectures, while Snowflake provides built-in transformation capabilities. Apache Airflow offers powerful orchestration for custom pipelines. Fivetran and Airbyte simplify data ingestion with pre-built connectors. dbt has become widely adopted for transformation workflows in modern data stacks. Evaluation criteria should include scalability, connector availability, team expertise, and total cost of ownership. Kanerika is certified across leading ETL platforms and helps enterprises select and implement the right tools. Request a personalized tool recommendation.