Big data has become the backbone of modern business strategy and the foundation on which modern AI implementations stand. According to Statista, 75% of businesses worldwide use data to drive innovation, while 50% report that data helps them compete in the market. But with great data come great challenges.

According to Research and Markets, the big data and analytics services market size will grow to $365.42 billion in 2029 at a compound annual growth rate of 21.3%.

Enterprise data volumes grow by an average of 63% each month, with 12% of organizations reporting increases of 100% or more, according to a survey by Matillion and IDG. This explosive growth brings both opportunities and obstacles that can make or break your data strategy. The opportunities range from implementing agentic AI to gaining a major market advantage.

Bottom Line: While big data offers tremendous potential for driving innovation and business growth, most organizations struggle with 11 core challenges that prevent them from extracting real value from their data investments.

What is Big Data?

Big data refers to datasets that are too large, complex, or fast-moving for traditional data processing tools to handle effectively. It’s characterized by the “5 Vs”:

- Volume: Petabytes or exabytes of information

- Velocity: Real-time or near real-time data streams

- Variety: Structured, semi-structured, and unstructured data types

- Veracity: Data quality and accuracy concerns

- Value: The ability to extract meaningful insights

Transform Your Business with Data & AI-Powered Solutions!

Partner with Kanerika for Expert Data & AI Implementation Services

The 11 Biggest Big Data Challenges

1. Data Volume Explosion

The Challenge:

Organizations are drowning in data. Companies now handle petabytes or even exabytes of information, growing faster than their infrastructure can support.

Real Impact:

- Storage costs can reach millions annually

- Query performance degrades as datasets expand

- Infrastructure struggles under massive data loads

Solutions:

- Cloud storage adoption: AWS S3, Azure Blob, Google Cloud Storage offer scalable solutions

- Data compression: Reduce storage needs by 50-80%

- Automated lifecycle management: Archive old data, delete unnecessary files

- Data lakes: Store raw data cheaply until needed
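
How much compression saves depends heavily on the data, so it is worth measuring on a representative sample before planning capacity. The sketch below uses Python's standard `gzip` module; the sample rows are hypothetical, log-like CSV data invented for illustration, and repetitive data like this tends to compress better than already-compressed formats such as images or video.

```python
import gzip


def compression_savings(raw: bytes) -> float:
    """Return the fraction of bytes saved by gzip (0.0 means no savings)."""
    if not raw:
        return 0.0
    compressed = gzip.compress(raw)
    return 1 - len(compressed) / len(raw)


# Hypothetical sample: 10,000 rows of repetitive, log-like CSV data.
rows = [f"{i},sensor-{i % 10},OK,21.5\n" for i in range(10_000)]
sample = "".join(rows).encode("utf-8")

savings = compression_savings(sample)
print(f"gzip saved {savings:.0%} of {len(sample):,} bytes")
```

Running the same function over a sample of your own files gives a rough, data-specific estimate to compare against the general range quoted above.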

Example:

Netflix processes over 15 petabytes of data daily but uses cloud-native solutions and intelligent data tiering to manage costs effectively.

2. Data Integration Complexity

The Challenge:

Data comes from everywhere – databases, APIs, IoT devices, social media, third-party sources. Integrating these disparate systems creates massive headaches.

Pain Points:

- Data silos prevent comprehensive analysis

- Complex ETL processes increase costs and delays

- Inconsistent formats make data unusable

- Integration projects can take months or years

Solutions:

- Modern integration platforms: Apache Kafka, Snowflake, Talend

- API-first architecture: Standardize data access methods

- Data virtualization: Query data without moving it

- Schema-on-read approaches: Handle diverse data types flexibly
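
As a rough illustration of schema-on-read, the sketch below stores raw JSON lines untouched and applies one target shape only when the data is read. The field names (`user_id`, `userId`, `id`, `contact`) and the sample records are hypothetical, chosen to mimic three sources that describe the same entity differently.

```python
import json
from typing import Any

# Hypothetical raw records from three sources, stored exactly as received.
RAW_LINES = [
    '{"user_id": 1, "email": "a@example.com"}',
    '{"userId": "2", "contact": {"email": "b@example.com"}}',
    '{"id": 3}',
]


def read_user(line: str) -> dict[str, Any]:
    """Apply the schema at read time: map source-specific names to one shape."""
    rec = json.loads(line)
    user_id = rec.get("user_id", rec.get("userId", rec.get("id")))
    email = rec.get("email") or rec.get("contact", {}).get("email")
    return {"user_id": int(user_id), "email": email}


users = [read_user(line) for line in RAW_LINES]
print(users)
```

The trade-off is that every reader has to know the mapping, so in practice the read-time logic usually lives in one shared module rather than in each consumer.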

Success Story:

Walmart integrated over 200 data sources using Apache Kafka, reducing integration time from months to weeks while improving data freshness.

3. Data Quality Issues

The Challenge:

“Garbage in, garbage out” is the biggest threat to big data success. Poor quality data leads to wrong decisions and wasted resources.

Common Problems:

- Inconsistent data formats across systems

- Missing or incomplete records

- Duplicate entries inflating datasets

- Outdated information skewing analysis

Solutions:

- Automated data validation: Real-time quality checks

- Data profiling tools: Identify quality issues automatically

- Master data management: Single source of truth for key entities

- Data cleansing pipelines: Fix issues before analysis
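
The common problems listed above (missing values, duplicates, outdated records) can be checked mechanically. The following is a minimal sketch of such a validation step, assuming hypothetical customer records with `customer_id`, `email`, and `updated` fields and a one-year freshness threshold; real pipelines would typically add format and range checks.

```python
from datetime import date

REQUIRED = ("customer_id", "email", "updated")


def quality_report(records: list[dict], today: date, max_age_days: int = 365) -> dict:
    """Count missing, duplicate, and stale records and collect the clean rows."""
    seen = set()
    clean = []
    counts = {"missing": 0, "duplicate": 0, "stale": 0}
    for rec in records:
        if any(rec.get(name) in (None, "") for name in REQUIRED):
            counts["missing"] += 1
        elif rec["customer_id"] in seen:
            counts["duplicate"] += 1
        elif (today - rec["updated"]).days > max_age_days:
            counts["stale"] += 1
        else:
            seen.add(rec["customer_id"])
            clean.append(rec)
    return {"counts": counts, "clean": clean}


# Hypothetical sample data.
records = [
    {"customer_id": 1, "email": "a@example.com", "updated": date(2024, 5, 1)},
    {"customer_id": 1, "email": "a@example.com", "updated": date(2024, 5, 1)},
    {"customer_id": 2, "email": "", "updated": date(2024, 5, 1)},
    {"customer_id": 3, "email": "c@example.com", "updated": date(2020, 1, 1)},
]
report = quality_report(records, today=date(2024, 6, 1))
print(report["counts"])
```

In this sample, one record passes and each of the other three fails a different check, which makes the counts easy to track as a quality metric over time.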

Impact:

According to IBM, poor data quality costs the US economy $3.1 trillion annually. Companies with high-quality data see 23x more customer acquisition and 6x higher profits.

4. Real-Time Processing Demands

The Challenge:

Business moves at the speed of light. Organizations need insights immediately, not hours or days later.

Industry Requirements:

- E-commerce: Real-time recommendations and fraud detection

- Finance: Millisecond trading decisions and risk monitoring

- Healthcare: Patient monitoring and emergency alerts

- Manufacturing: Predictive maintenance and quality control

Solutions:

- Stream processing: Apache Flink, Apache Storm, Amazon Kinesis

- Edge computing: Process data closer to the source

- In-memory databases: Redis, SAP HANA for faster queries

- Event-driven architecture: React to data changes instantly
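
Stream processors such as those named above often evaluate rules over a moving time window. The sketch below shows the idea in plain Python rather than in any particular framework: a hypothetical fraud rule that flags a card when it produces more than `limit` events within `window_s` seconds. It assumes events arrive in timestamp order, which real streams do not guarantee.

```python
from collections import defaultdict, deque


class SlidingWindowCounter:
    """Flag a key that produces more than `limit` events within `window_s` seconds."""

    def __init__(self, window_s: float, limit: int) -> None:
        self.window_s = window_s
        self.limit = limit
        self._events: dict[str, deque] = defaultdict(deque)

    def observe(self, key: str, ts: float) -> bool:
        """Record one event and return True if the key is now over the limit."""
        q = self._events[key]
        q.append(ts)
        # Evict timestamps that have fallen out of the window.
        while q and ts - q[0] > self.window_s:
            q.popleft()
        return len(q) > self.limit


detector = SlidingWindowCounter(window_s=60, limit=3)
timestamps = [0, 10, 20, 30, 200]
flags = [detector.observe("card-123", ts) for ts in timestamps]
print(flags)
```

Here the fourth event trips the rule because four events fall inside one 60-second window, while the event at 200 seconds starts a fresh window and is not flagged.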

Case Study:

Capital One processes over 1 billion events daily in real-time using Apache Kafka and custom streaming applications to detect fraud and improve customer experience.

5. Scalability and Performance Bottlenecks

The Challenge:

Systems that work fine with small datasets crash when scaled up. Performance degrades as data and users increase.

Scaling Problems:

- Slow query response times frustrate users

- System crashes during peak usage

- Adding more hardware doesn’t always help

- Legacy systems can’t handle modern workloads

Solutions:

- Distributed computing: Apache Spark, Hadoop clusters

- Query optimization: Indexing, partitioning, caching

- Auto-scaling infrastructure: Cloud-native solutions that grow with demand

- Load balancing: Distribute work across multiple servers
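
Partitioning and load balancing both rest on the same idea: route each record to one of several workers in a way that is repeatable and reasonably even. A minimal sketch of hash partitioning follows; the key format and the partition count of 8 are assumptions for illustration. It uses `hashlib` rather than Python's built-in `hash()`, because the built-in is randomized per process for strings and would send the same key to different partitions on different runs.

```python
import hashlib
from collections import Counter


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition with a stable hash (same result on every run)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


# Hypothetical keys spread across 8 partitions.
keys = [f"trip-{i}" for i in range(10_000)]
load = Counter(partition_for(k, 8) for k in keys)
print(sorted(load.items()))
```

One known limitation of simple modulo partitioning is that changing the partition count remaps most keys; consistent hashing is the usual alternative when partitions are added or removed often.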

Technology Stack:

Companies like Uber use Apache Spark clusters with thousands of nodes to process billions of trips and deliver insights in seconds.

6. Data Security and Privacy Concerns

The Challenge:

Big data is a big target for cybercriminals. Data breaches can cost millions and destroy reputations.

Security Threats:

- Healthcare data breaches cost $10.93 million on average

- GDPR fines can reach €20 million or 4% of global annual turnover, whichever is higher

- Ransomware attacks target data-rich companies

- Insider threats from employees and contractors

Compliance Requirements:

- GDPR: European data protection laws

- CCPA: California privacy regulations

- HIPAA: Healthcare data protection

- SOX: Financial reporting standards

Solutions:

- Encryption: Protect data at rest and in transit

- Access controls: Role-based permissions and multi-factor authentication

- Data masking: Hide sensitive information in non-production environments

- Regular audits: Monitor access and detect suspicious activity

- Privacy by design: Build security into systems from the start
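
Data masking for non-production environments can be sketched with the standard library alone. The example below shows two hypothetical helpers: `mask_email` hides most of an address for display, and `pseudonymize` replaces a value with a keyed hash (HMAC-SHA256) so that equal inputs still match across tables. The secret shown is a placeholder; in practice it would come from a secrets manager, and this sketch is not a substitute for a reviewed anonymization policy.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret: bytes) -> str:
    """Replace a sensitive value with a keyed hash so equal inputs still join."""
    digest = hmac.new(secret, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def mask_email(email: str) -> str:
    """Keep the first character and the domain; hide the rest of the local part."""
    local, _, domain = email.partition("@")
    if not local or not domain:
        return "***"
    return f"{local[0]}***@{domain}"


SECRET = b"placeholder-secret"  # assumption: loaded from a secrets manager in practice
print(mask_email("jane.doe@example.com"))
print(pseudonymize("jane.doe@example.com", SECRET))
```

The first call prints `j***@example.com`. The keyed hash matters because an unkeyed hash of a low-entropy value such as an email address can often be reversed by guessing.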

7. Cost Management and ROI Challenges

The Challenge:

Big data projects can quickly spiral out of control financially. Cloud costs, infrastructure investments, and staffing expenses add up fast.

Cost Drivers:

- Cloud storage and compute expenses growing 50-100% annually

- Specialized talent commands premium salaries

- Infrastructure over-provisioning wastes resources

- Failed projects provide no return on investment

Cost Optimization Strategies:

- Right-sizing resources: Match capacity to actual needs

- Serverless architectures: Pay only for what you use

- Reserved instances: Commit to long-term usage for discounts

- Cost monitoring tools: AWS Cost Explorer, Azure Cost Management

- Data compression and deduplication: Reduce storage requirements
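
A quick calculation shows why right-sizing and tiering matter. The per-GB prices below are hypothetical placeholders, not quotes from any provider, and the 100 TB split across tiers is an assumed workload; substitute your own figures.

```python
# Hypothetical monthly prices per GB; real prices vary by provider and region.
PRICE_PER_GB = {"hot": 0.023, "cool": 0.010, "archive": 0.002}


def monthly_cost(gb_by_tier: dict[str, float]) -> float:
    """Sum storage cost across tiers for one month."""
    return sum(PRICE_PER_GB[tier] * gb for tier, gb in gb_by_tier.items())


# Assumed workload: 100,000 GB, either all in hot storage or split by access pattern.
all_hot = monthly_cost({"hot": 100_000})
tiered = monthly_cost({"hot": 20_000, "cool": 30_000, "archive": 50_000})
savings = 1 - tiered / all_hot

print(f"all hot: ${all_hot:,.0f}/month, tiered: ${tiered:,.0f}/month, saved {savings:.0%}")
```

The sketch ignores retrieval and request fees, which colder tiers usually charge, so it overstates the savings for data that is read frequently.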

Budget Planning:

Successful organizations allocate 15-20% of their IT budget to data and analytics initiatives, with clear ROI metrics defined upfront.

8. Skills Gap and Talent Shortage

The Challenge:

Demand for data professionals far exceeds supply. Finding qualified people to implement and manage big data systems is extremely difficult.

Talent Shortage Statistics:

- 85% of organizations report difficulty finding qualified data professionals

- Data scientist salaries have increased 30-50% in recent years

- Average time to fill data roles: 4-6 months

- 60% of data teams are understaffed

Solutions:

- Internal training programs: Upskill existing employees

- Low-code/no-code platforms: Enable business users to work with data

- Outsourcing and partnerships: Work with specialized firms

- University partnerships: Build talent pipelines

- Remote work options: Access global talent pools

Training Investment:

Companies that invest in employee data training see 5x higher productivity and 20% lower turnover rates.

9. Data Governance and Compliance

The Challenge:

Without proper governance, data becomes chaotic. Teams work with conflicting information, security risks increase, and compliance violations occur.

Governance Problems:

- Different departments using different definitions for the same metrics

- No clear data ownership or accountability

- Inconsistent security policies across systems

- Lack of data lineage and audit trails

Governance Framework:

- Data catalog: Inventory of all data assets

- Data stewardship: Clear ownership and responsibilities

- Policy enforcement: Automated compliance monitoring

- Metadata management: Documentation and lineage tracking

- Quality monitoring: Continuous data health checks
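
To make the catalog, stewardship, and lineage ideas concrete, here is a minimal in-memory sketch. The `Dataset` and `Catalog` classes and the dataset names are hypothetical; commercial and open-source catalogs store far more metadata, but the core structure is a registry of assets with owners and upstream links.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Dataset:
    name: str
    owner: str  # data steward accountable for this asset
    upstream: list[str] = field(default_factory=list)


class Catalog:
    def __init__(self) -> None:
        self._datasets: dict[str, Dataset] = {}

    def register(self, ds: Dataset) -> None:
        self._datasets[ds.name] = ds

    def lineage(self, name: str) -> list[str]:
        """Return every upstream dataset, nearest first, without repeats."""
        ordered: list[str] = []
        queue = deque(self._datasets[name].upstream)
        while queue:
            current = queue.popleft()
            if current in ordered:
                continue  # also protects against cycles
            ordered.append(current)
            if current in self._datasets:
                queue.extend(self._datasets[current].upstream)
        return ordered


catalog = Catalog()
catalog.register(Dataset("raw_orders", owner="sales-ops"))
catalog.register(Dataset("raw_customers", owner="crm-team"))
catalog.register(Dataset("clean_orders", owner="data-eng", upstream=["raw_orders"]))
catalog.register(
    Dataset("revenue_report", owner="finance", upstream=["clean_orders", "raw_customers"])
)
print(catalog.lineage("revenue_report"))
```

With lineage recorded this way, an auditor can ask which raw sources feed a report, and an engineer can see which downstream assets a schema change might break.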

Success Metric:

Organizations with strong data governance report 23% higher data quality scores and 47% fewer compliance issues.

10. Technology Selection and Integration

The Challenge:

The big data technology landscape changes constantly. Choosing the right tools and making them work together is complex and risky.

Technology Overload:

- Hundreds of vendors and solutions to evaluate

- Open source vs. commercial tool decisions

- Integration complexity between different platforms

- Risk of vendor lock-in

Selection Criteria:

- Scalability: Can it handle future growth?

- Performance: Does it meet speed requirements?

- Integration: How well does it work with existing systems?

- Support: What level of vendor/community support is available?

- Cost: What’s the total cost of ownership?
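
One common way to apply these five criteria is a weighted scoring matrix. The sketch below is illustrative only: the weights, the 1 to 5 scores, and the tool names are hypothetical and should be replaced with values agreed by your own stakeholders.

```python
# Hypothetical weights for the five criteria; they must sum to 1.0.
WEIGHTS = {
    "scalability": 0.25,
    "performance": 0.25,
    "integration": 0.20,
    "support": 0.15,
    "cost": 0.15,
}


def weighted_score(scores: dict[str, int]) -> float:
    """Combine 1-5 scores per criterion into a single weighted score."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Hypothetical candidates scored from 1 (poor) to 5 (excellent).
candidates = {
    "tool_a": {"scalability": 5, "performance": 4, "integration": 3, "support": 4, "cost": 2},
    "tool_b": {"scalability": 3, "performance": 4, "integration": 5, "support": 3, "cost": 4},
}
ranked = sorted(candidates, key=lambda n: weighted_score(candidates[n]), reverse=True)
for name in ranked:
    print(name, round(weighted_score(candidates[name]), 2))
```

A close result, as in this sample, is a signal to run the proof-of-concept recommended below rather than to trust the arithmetic alone.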

Best Practices:

- Start with proof-of-concept projects

- Choose platforms with strong ecosystems

- Prioritize open standards and APIs

- Plan for multi-cloud and hybrid deployments

11. Organizational Change Management

The Challenge:

Technology is often the easy part. Getting people to change how they work and make data-driven decisions is much harder.

Change Resistance:

- Employees comfortable with existing processes

- Fear of job displacement due to automation

- Lack of understanding about data benefits

- Cultural preference for gut-feeling decisions

Change Management Strategy:

- Executive sponsorship: Leadership must champion data initiatives

- Training and education: Help employees understand benefits

- Quick wins: Demonstrate value early and often

- Incentive alignment: Reward data-driven decision making

- Communication: Regular updates on progress and success stories

Culture Transformation:

Data-driven organizations are 23x more likely to acquire customers and 19x more likely to be profitable.

Industry-Specific Big Data Challenges

Healthcare

- Patient privacy compliance (HIPAA)

- Integrating electronic health records

- Real-time patient monitoring

- Drug discovery and clinical trials

Financial Services

- Fraud detection in milliseconds

- Regulatory reporting requirements

- Risk management and stress testing

- Algorithmic trading systems

Retail and E-commerce

- Real-time personalization

- Inventory optimization

- Supply chain visibility

- Customer journey analytics

Manufacturing

- Predictive maintenance

- Quality control automation

- Supply chain optimization

- Energy efficiency monitoring

Gen AI Paradox

The Gen AI Paradox defines the current era of AI adoption—where the technology’s greatest strengths are inseparably linked to its greatest risks.

Why Choose Kanerika to Solve Your Big Data Challenges

At Kanerika, we understand that big data challenges aren’t just technical problems – they’re business transformation opportunities. Here’s why leading organizations trust us with their most critical data initiatives:

Proven Track Record Across Industries

We’ve successfully delivered big data & generative AI solutions for healthcare systems, financial institutions, manufacturing companies, and technology firms. Our cross-industry experience means we understand both technical requirements and business contexts.

Client Results:

- 70% reduction in data processing time for a major healthcare provider

- $2.3M annual cost savings through cloud optimization for a manufacturing client

- 90% improvement in real-time analytics performance for a financial services firm

End-to-End Big Data Expertise

Unlike vendors who specialize in just one area, Kanerika provides comprehensive solutions covering:

- Data Strategy and Assessment: We evaluate your current state and design a roadmap for success

- Architecture and Implementation: Cloud-native solutions built for scale and performance

- Data Integration: Seamlessly connect all your data sources

- Analytics and AI/ML: Turn raw data into actionable insights and get a clear roadmap with our AI assessment

- Governance and Security: Ensure compliance and protect sensitive information

Technology-Agnostic Approach

We’re not tied to any single vendor or platform. Our experts work with:

- Cloud Platforms: AWS, Azure, Google Cloud

- Big Data Technologies: Spark, Hadoop, Kafka, Snowflake

- Analytics Tools: Power BI, Tableau, Qlik

- AI/ML Frameworks: TensorFlow, PyTorch, scikit-learn

This flexibility ensures you get the best solution for your specific needs, not what’s best for our profit margins.

Accelerated Time-to-Value

Our proven methodologies and pre-built accelerators mean faster deployments and quicker ROI:

- 30-60 day proof-of-concepts to demonstrate value quickly

- Reusable frameworks that reduce development time by 40-60%

- Best practice templates for governance, security, and compliance

- Change management support to ensure user adoption

Scalable Solutions Built for Growth

We design systems that grow with your business:

- Cloud-native architectures that scale automatically

- Microservices approach for flexibility and maintainability

- API-first design for easy integration with new systems

- Future-ready platforms that adapt to changing requirements

Dedicated Success Partnership

Your success is our success. We provide:

- 24/7 support for critical systems

- Continuous optimization to improve performance and reduce costs

- Regular health checks to prevent issues before they occur

- Training and knowledge transfer to build internal capabilities

- Strategic consulting to align technology with business goals

Our Big Data Services

Data Strategy and Consulting

- Current state assessment and gap analysis

- Future state architecture design

- Technology selection and roadmap planning

- ROI modeling and business case development

Data Engineering and Integration

- ETL/ELT pipeline development

- Real-time streaming data processing

- Data lake and warehouse implementation

- API development and integration

Analytics and Business Intelligence

- Interactive dashboards and reports

- Self-service analytics platforms

- Predictive modeling and machine learning

- Natural language query interfaces

Data Governance and Security

- Data catalog and metadata management

- Access control and security implementation

- Compliance automation (GDPR, HIPAA, SOX)

- Data quality monitoring and remediation

Cloud Migration and Optimization

- Multi-cloud strategy and implementation

- Legacy system modernization

- Cost optimization and performance tuning

- Disaster recovery and business continuity

Case Studies of Successful Big Data Implementations in Healthcare

At Kanerika, we have led big data implementation for global healthcare organizations. Our clients have approached us with requirements for data transformation, data analytics, and data management.

Leading Clinical Research Company – Improved Data Analytics for Better Research Outcomes

This clinical research company struggled with manual SAS programming delays and inefficient data cleaning, which affected productivity and research outcomes.

By adopting Trifacta, Kanerika’s data team was able to transform complex datasets more efficiently and streamline reporting and data preparation.

This led to a 40% increase in operational efficiency, a 45% improvement in user productivity, and a 60% faster data integration process.

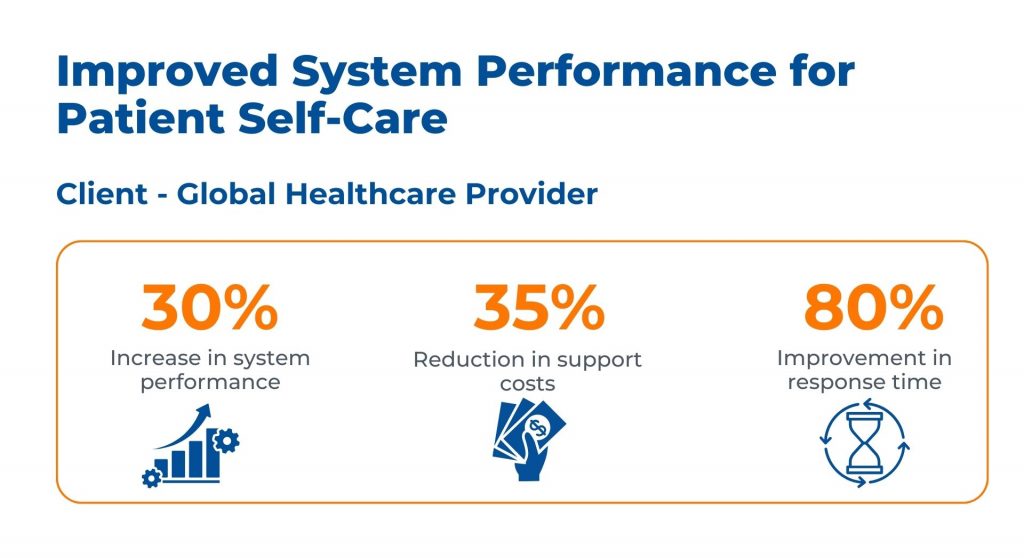

Global Healthcare Provider – Improved System Performance for Patient Self-Care

Inadequate system architecture was impeding patient self-care and system performance for this healthcare provider.

Kanerika’s team re-architected the system on Microsoft Azure to improve response time and scalability. The results were a 30% increase in system performance, a 35% reduction in support costs, and an 80% improvement in response time.

These summaries highlight the key challenges, solutions, and outcomes for each case study, focusing on the significant improvements made post-intervention.

FAQs

What is a big data challenge?

A big data challenge refers to any obstacle that prevents organizations from effectively capturing, storing, processing, or analyzing massive datasets. Common big data challenges include managing exponential data growth, ensuring data quality across disparate sources, maintaining security and privacy compliance, and extracting actionable insights within acceptable timeframes. These hurdles intensify as data volumes scale into petabytes and data sources multiply across cloud, on-premise, and edge environments. Kanerika helps enterprises navigate complex big data challenges with tailored data platform strategies—connect with our team to assess your specific needs.

What are the key challenges of big data?

The key challenges of big data include data storage scalability, processing speed, data quality management, security vulnerabilities, and integration complexity. Organizations struggle with siloed systems that fragment insights, skill gaps that delay implementation, and compliance requirements that restrict data movement. Real-time analytics demands strain legacy infrastructure, while unstructured data from IoT sensors, social feeds, and logs complicates traditional ETL pipelines. Addressing these big data obstacles requires unified platforms and automated governance frameworks. Kanerika’s data integration specialists help enterprises overcome these challenges with modern architectures—schedule a consultation to explore solutions.

What are the weaknesses of big data?

Big data weaknesses center on quality inconsistencies, privacy risks, and interpretation difficulties. Raw data often contains duplicates, missing values, and outdated records that corrupt analytical models. Privacy concerns escalate when sensitive information spans multiple jurisdictions with conflicting regulations. Additionally, correlation-based insights can mislead decision-makers who mistake patterns for causation. Infrastructure costs balloon without proper optimization, and talent shortages leave organizations unable to leverage their data investments effectively. These big data limitations demand robust governance and skilled execution. Kanerika addresses these weaknesses through comprehensive data governance frameworks—reach out to strengthen your data foundation.

What are the 4 V's of big data?

The 4 V’s of big data are Volume, Velocity, Variety, and Veracity. Volume describes the massive scale of data generated daily across enterprise systems. Velocity refers to the speed at which data arrives and requires processing, often in real-time streams. Variety encompasses structured databases, semi-structured logs, and unstructured content like videos and emails. Veracity addresses data accuracy and trustworthiness, a persistent big data challenge. Understanding these dimensions helps organizations architect appropriate storage and analytics solutions. Kanerika designs data platforms that handle all four V’s efficiently—talk to our experts about your requirements.

What are the 5 V's of big data?

The 5 V’s of big data expand the original framework by adding Value to Volume, Velocity, Variety, and Veracity. Value represents the business worth extracted from data through analytics and intelligence. Without demonstrable value, big data investments become cost centers rather than competitive advantages. Organizations must balance infrastructure spending against measurable outcomes like improved customer retention, operational efficiency, or revenue growth. This fifth dimension transforms big data from a technical exercise into a strategic asset. Kanerika helps enterprises maximize data value through purpose-built analytics solutions—contact us to calculate your potential ROI.

What are the 7 V's of big data?

The 7 V’s of big data include Volume, Velocity, Variety, Veracity, Value, Variability, and Visualization. Variability captures the inconsistent flow rates and format changes that complicate data pipelines, while Visualization addresses the challenge of presenting complex datasets in comprehensible formats for decision-makers. Each V introduces distinct big data challenges requiring specialized tools and expertise. Enterprises must architect systems that accommodate all seven dimensions without creating technical debt or operational bottlenecks. Kanerika builds comprehensive data platforms addressing every V with scalable, governed solutions—let us assess your big data maturity today.

How to overcome big data challenges?

Overcoming big data challenges requires a structured approach combining technology modernization, governance implementation, and talent development. Start by auditing existing data sources and quality issues, then establish clear data ownership and stewardship roles. Migrate to scalable cloud platforms like Databricks or Snowflake that handle volume spikes without manual intervention. Implement automated data quality checks within pipelines and deploy self-service analytics to reduce bottlenecks. Prioritize use cases by business impact rather than attempting enterprise-wide transformation simultaneously. Kanerika’s proven methodology helps organizations systematically address big data obstacles—request a free assessment to start your journey.

Why did big data fail?

Big data initiatives fail primarily due to unclear business objectives, poor data quality, and organizational resistance. Many projects prioritize technology acquisition over problem definition, resulting in expensive infrastructure without measurable outcomes. Data silos persist when governance frameworks lack executive sponsorship, while analytics teams produce reports nobody acts upon. Skill gaps leave sophisticated tools underutilized, and unrealistic timelines create disillusionment before value materializes. Failed big data projects share common patterns: weak stakeholder alignment, inadequate change management, and missing success metrics. Kanerika prevents these failures through outcome-focused implementation—partner with us to ensure your initiative succeeds.

What is a major challenge for storage of big data?

A major challenge for big data storage is balancing cost efficiency with performance requirements as data volumes scale exponentially. Organizations face difficult tradeoffs between hot storage for frequently accessed data and cold archives for compliance retention. Traditional storage architectures cannot handle the velocity of incoming streams or the variety of unstructured formats like video, sensor data, and documents. Data lakes often become data swamps without proper cataloging and lifecycle management policies. Storage costs multiply when redundancy and disaster recovery requirements apply. Kanerika architects cost-optimized storage solutions on platforms like Microsoft Fabric—discuss your storage challenges with our specialists.

What are the challenges of data growth?

Data growth challenges include infrastructure scalability, cost management, and maintaining performance as datasets expand. Organizations generating terabytes daily struggle with storage procurement cycles that cannot keep pace. Query performance degrades when databases exceed optimal sizes, frustrating analysts and delaying decisions. Backup windows extend beyond acceptable limits, creating recovery risks. Compliance requirements mandate retaining data longer while privacy regulations demand deletion capabilities. Data growth also strains network bandwidth and processing capacity across distributed architectures. Without proactive capacity planning, enterprises face emergency upgrades and budget overruns. Kanerika helps organizations scale sustainably with modern data platforms—contact us for growth planning guidance.

What are the challenges of variety in big data?

Variety challenges in big data arise from integrating structured databases, semi-structured JSON and XML files, and unstructured content like images, audio, and text documents. Traditional relational databases cannot efficiently store or query these diverse formats, requiring specialized systems for each type. Schema evolution across source systems breaks downstream pipelines, while inconsistent naming conventions complicate joins and aggregations. Extracting insights from unstructured data demands natural language processing and computer vision capabilities that many organizations lack. Data variety increases transformation complexity and extends development timelines significantly. Kanerika’s data integration expertise handles complex variety challenges—reach out to unify your diverse data sources.

What are the challenges in big data visualization?

Big data visualization challenges include rendering performance, cognitive overload, and maintaining accuracy at scale. Standard charting tools struggle when datasets contain millions of rows, causing dashboards to freeze or timeout. Aggregating data for display risks hiding important outliers and patterns, while showing too much detail overwhelms users. Real-time visualizations demand streaming architectures that refresh without disrupting analysis. Choosing appropriate chart types for multidimensional data requires expertise many organizations lack, leading to misleading representations. Mobile accessibility adds responsive design complexity. Kanerika builds high-performance dashboards using Power BI and modern visualization platforms—let us transform your data into actionable insights.

What is big data in simple words?

Big data refers to datasets so large, fast-moving, or complex that traditional software cannot process them effectively. It encompasses the massive digital footprint generated by websites, sensors, transactions, social media, and connected devices every second. Big data requires specialized storage systems, processing frameworks, and analytics tools designed for scale. Organizations leverage big data to discover patterns, predict outcomes, and make data-driven decisions that improve operations and customer experiences. The value lies not in the data itself but in the insights extracted through advanced analytics. Kanerika transforms raw big data into strategic business intelligence—explore our analytics services today.

What are some examples of big data?

Big data examples span every industry and function. Social media platforms process billions of posts, comments, and interactions daily. E-commerce companies analyze clickstream data from millions of concurrent shoppers. Healthcare systems aggregate electronic health records, imaging files, and genomic sequences. Financial institutions monitor real-time transaction streams for fraud detection. Manufacturing sensors generate continuous telemetry from production lines. Telecommunications providers track network performance across millions of devices. Smart cities collect traffic, energy, and environmental data from IoT sensors throughout urban infrastructure. Each example presents distinct big data challenges requiring tailored solutions. Kanerika delivers industry-specific big data implementations—discuss your use case with our specialists.

What are big data types?

Big data types are categorized as structured, semi-structured, and unstructured. Structured data resides in relational databases with defined schemas, including transaction records and customer tables. Semi-structured data contains organizational markers but lacks rigid schemas, such as JSON files, XML documents, and email metadata. Unstructured data has no predefined format and includes videos, images, audio files, PDFs, and social media content. Each data type requires different storage, processing, and analysis approaches, creating integration challenges when combining insights across categories. Understanding these types helps organizations select appropriate technologies for their data mix. Kanerika handles all data types through unified platform solutions—connect with us for architecture guidance.

What are the 4 big data strategies?

The four big data strategies are performance optimization, data exploration, analytics enhancement, and monetization. Performance optimization uses big data to improve operational efficiency through predictive maintenance, supply chain optimization, and resource allocation. Data exploration enables discovery of hidden patterns and correlations that inform new business opportunities. Analytics enhancement integrates big data into existing BI systems for deeper insights and better forecasting accuracy. Monetization creates new revenue streams by packaging data products or enabling data-driven services. Each strategy addresses different business objectives and requires distinct technical capabilities. Kanerika develops customized big data strategies aligned with your goals—schedule a strategy session with our team.

What are the factors affecting big data?

Factors affecting big data success include data quality, infrastructure readiness, organizational culture, and talent availability. Poor data quality undermines analytics accuracy regardless of tool sophistication. Legacy infrastructure creates bottlenecks when processing volumes exceed design limits. Organizations lacking data-driven cultures resist adopting insights that challenge established practices. Skill shortages in data engineering, analytics, and data science delay implementations and increase costs. Regulatory requirements constrain data collection and usage across jurisdictions. Budget limitations force tradeoffs between capability and coverage, while executive sponsorship determines priority and resource allocation. Kanerika helps organizations address all factors through comprehensive big data consulting—request an assessment to identify your gaps.

What are the best tools for big data analytics?

The best big data analytics tools include Apache Spark for distributed processing, Databricks for unified analytics, Snowflake for cloud data warehousing, and Microsoft Fabric for end-to-end data integration. Power BI excels at visualization while Tableau offers advanced charting capabilities. Kafka handles real-time streaming data, and Airflow orchestrates complex data pipelines. Cloud platforms like Azure and AWS provide managed services that reduce operational overhead. Tool selection depends on data volumes, latency requirements, existing infrastructure, and team skills. No single tool addresses all big data challenges—successful implementations combine multiple technologies strategically. Kanerika has deep expertise across leading analytics platforms—consult with us to build your optimal technology stack.