Most manufacturers are not short on data. They are short on data they can actually use. Every shift generates machine telemetry, quality records, production orders, and maintenance logs. But these sit across systems that were never built to share information. The OT side speaks one language. The IT side speaks another.

Somewhere in the gap between them, the insights that could prevent failures, reduce waste, and improve margins go unnoticed. 83% of manufacturers say data silos between departments prevent them from understanding the true cause of downtime, while unscheduled downtime costs the world’s 500 largest companies $1.4 trillion annually. The data to fix that already exists inside the plant. The infrastructure to connect it usually does not.

That is the problem a data lakehouse solves. It brings OT and IT data together on one governed platform, combining the storage flexibility of a data lake with the query performance and audit reliability of a data warehouse. Sensor feeds, ERP transactions, quality inspection outputs, and supply chain data all land in one place. Analytics, AI models, and dashboards all run on top of it, without moving data between systems first.

This article covers the architecture behind that, the use cases that deliver measurable ROI, a comparison of Databricks, Microsoft Fabric, and Snowflake for manufacturing environments, and a phased implementation roadmap for teams at any stage of the journey.

Key Takeaways

- The OT/IT gap is the core problem. Sensor data and business data were never designed to communicate, and the cost shows up as unplanned downtime, quality escapes, and excess inventory.

- A data lakehouse closes both gaps that data lakes and data warehouses each fail to close separately, combining flexible storage with warehouse-grade governance and query performance.

- Discrete and process manufacturers need different architectures. Schema design, ingestion latency, and compliance requirements differ fundamentally between automotive assembly and pharmaceutical batch manufacturing.

- Start with one use case, one plant, one win. Phased implementation produces business outcomes in 6 to 12 weeks. Trying to connect everything at once is the most common failure mode.

- The lakehouse is the AI prerequisite. Manufacturing AI initiatives that fail usually do so because the data foundation was not ready, not because the AI was not capable.

Why Manufacturing Plants Cannot Act on the Data They Already Have

Picture a plant operations director walking into the Monday morning review. She has OEE numbers from the MES, downtime data from a spreadsheet the maintenance team keeps separately, quality reject rates from the QMS, and ERP production reports. All of them cover the same week, and none of them agree.

The data exists. It just lives in systems that were never designed to talk to each other.

This is the real manufacturing data problem: not a shortage of data, but fragmentation so severe that analysts spend three days answering questions that should take three minutes. Unplanned downtime alone costs industrial manufacturers an estimated $50 billion annually. The root cause is rarely the machine itself. It’s the disconnected data around it: a data consolidation problem hiding inside an operations problem.

A data lakehouse for manufacturing is built to fix exactly that. It combines the schema-flexible, low-cost storage of a data lake with ACID transactions, query performance, and governance of a data warehouse, on one platform. For manufacturers, that means one place to land machine telemetry, ERP transactions, quality records, and supply chain feeds, and act on all of it without moving data between systems first.

This is the foundation that IoT analytics, smart manufacturing, and Industry 4.0 initiatives all depend on. It’s also consistently the piece that gets underbuilt. McKinsey’s research on capturing value at scale in discrete manufacturing makes the point clearly: manufacturers pulling ahead are not the ones with better machines. They are the ones with better data infrastructure underneath their AI investments.


Data Lakehouse vs. Data Lake vs. Data Warehouse for Manufacturing

This is the first question every manufacturing data team asks. And the answer matters, because choosing the wrong architecture means rebuilding it when scale and governance demands arrive.

The core tension: data lakes offer flexibility but collapse under audit pressure. Data warehouses offer governance but break when sensor streams arrive. For years, most manufacturers ran both, with analysts manually bridging the gap in Excel. The lakehouse eliminates that middle layer.

| Capability | Data Warehouse | Data Lake | Data Lakehouse |
| --- | --- | --- | --- |
| Handles sensor/time-series data | Poor, schema-on-write is rigid | Yes, schema-on-read | Yes, schema-on-read with optional enforcement |
| SQL analytics performance | Excellent | Poor without optimization | Excellent, Delta Lake / Iceberg indexing |
| ACID transactions | Yes | No | Yes |
| Regulatory audit trail | Yes | No | Yes, time travel, row-level lineage |
| ML/AI model training data | Limited, structured only | Yes | Yes, all data types |
| OT historian ingestion | No, transform required first | Yes | Yes, raw and transformed |
| Total cost at scale | High, separate compute and storage | Low storage, high query cost | Optimized, decoupled compute and storage |
| Real-world manufacturing fit | ERP reporting only | Raw data archive only | Full OT/IT analytics and AI |

A data warehouse handles ERP reporting well but breaks when manufacturing teams need to analyze sensor streams alongside it. A data lake stores everything but has no governance, which creates serious problems under ISO, FDA, or aerospace audit. The lakehouse handles both. That is why it has become the manufacturing analytics platform of choice for operations building AI-grade data foundations in 2026.

OT/IT Data Integration: Why Manufacturing’s Data Problem Is Different

Most industries struggle with data silos. Manufacturing has a version that’s structurally different, and more expensive to solve without the right approach.

The OT/IT Divide and the Industrial Protocols Behind It

Operational Technology (PLCs, SCADA systems, DCS, vibration sensors, MES platforms) was built for uptime and safety, not data sharing. Information Technology (SAP, Oracle ERP, CRM, WMS) was built for transactional business data. These two worlds almost never communicate in real time. That’s by design.

The ISA-95 model, the international standard for integrating enterprise IT with manufacturing OT systems, defines exactly where this translation happens: between Level 3 (MES) and Level 4 (ERP/analytics). A lakehouse architecture aligned with ISA-95 makes governance defensible and integration predictable. IT service management frameworks need to account for this OT/IT boundary explicitly. Most ITSM implementations in manufacturing treat it as an afterthought.

The result: a maintenance engineer cannot correlate a quality defect with the specific machine parameter that caused it, because the data lives in systems that have never been connected. Fixing it requires a Silver-layer integration step that most manufacturers have never completed.

Historian Data Migration: The Hidden Bottleneck

Most process manufacturers store 10 to 20 years of machine data in proprietary historians: OSIsoft PI (now AVEVA PI), AspenTech IP.21, or Honeywell Uniformance. These systems are deeply embedded and operationally irreplaceable. They are also completely isolated from modern analytics platforms.

Migrating historian data to the lakehouse Bronze layer is specialized work. It involves handling proprietary export formats, time-series compression artifacts, timestamp normalization, and clock drift correction. It’s also frequently the single longest task in a lakehouse implementation.

Kanerika’s FLIP platform automates up to 80% of this migration work, compressing what typically takes 6 months into 6 to 8 weeks. Historian migration is the most underestimated task in manufacturing lakehouse projects, consistently.
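To make the timestamp normalization and clock drift correction described above more concrete, here is a minimal Python sketch. It is not how FLIP or any historian export tool works. The function name, the fixed UTC-5 plant offset, and the 5-second drift value are all illustrative assumptions, and a production pipeline would more likely use a named time zone so that daylight saving transitions are handled.

```python
from datetime import datetime, timedelta, timezone


def normalize_timestamp(local_text, plant_tz, drift_seconds=0.0):
    """Convert a naive local historian timestamp to drift-corrected UTC.

    local_text    -- ISO-style string without offset, e.g. "2024-03-01 08:00:05"
    plant_tz      -- tzinfo for the plant's local time (assumed known)
    drift_seconds -- how far the historian clock runs ahead of the
                     reference clock (assumed to be measured separately)
    """
    naive = datetime.fromisoformat(local_text)
    local = naive.replace(tzinfo=plant_tz)
    corrected = local - timedelta(seconds=drift_seconds)
    return corrected.astimezone(timezone.utc)


# Hypothetical plant running at a fixed UTC-5 offset, with a historian
# clock that is 5 seconds fast.
plant_tz = timezone(timedelta(hours=-5))
utc_ts = normalize_timestamp("2024-03-01 08:00:05", plant_tz, drift_seconds=5)
print(utc_ts.isoformat())
```

Storing every Bronze timestamp in UTC, with the applied drift correction recorded alongside it, keeps the later OT/IT join from depending on each source system's local clock.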

Discrete vs. Process Manufacturing: Two Different Data Architectures

An automotive assembly plant and a pharmaceutical facility have fundamentally different data profiles. A lakehouse designed for one will fail in the other if it is not built accordingly.

| Dimension | Discrete Manufacturing | Process Manufacturing |
| --- | --- | --- |
| Examples | Automotive, aerospace, electronics, machinery | Pharma, chemicals, food and beverage, oil and gas |
| Primary data type | Structured event data, cycle times, torque, BOM completions | Continuous time-series, temperature, pressure, pH, batch records |
| Regulatory drivers | IATF 16949, AS9100 | FDA 21 CFR Part 11, GMP, ALCOA |
| Primary AI use case | Defect detection, cycle time optimization | Batch yield optimization, deviation detection |
| Governance priority | Serial number traceability | Complete batch record integrity |
| ERP integration pattern | SAP PP production planning order completion events | SAP PM plant maintenance and batch management records |

Kanerika has implemented lakehouses across both: Dr. Reddy’s pharmaceutical manufacturing on Databricks and Southern States Material Handling on Microsoft Fabric. The architectural choices differ meaningfully between them.

Manufacturing Data Lakehouse Architecture: The Medallion Layer Model

The manufacturing lakehouse is organized around three layers known as the Medallion Architecture, a design pattern developed by Databricks that progressively improves data quality from raw ingestion to business-ready analytics. Each layer has a specific job.

1. Bronze Layer: Raw Data Landing Zone for OT and IT Sources

Everything lands here exactly as it arrives: SCADA historian dumps, ERP batch exports, vision inspection images, supplier EDI files. No transformation, no filtering. Regulatory investigations and ML model retraining both require the original raw record, so nothing gets discarded. Streaming ingestion feeds Bronze continuously with real-time sensor data from PLCs, smart meters, and environmental monitors, at rates that batch processes cannot keep up with.
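One way to picture a Bronze landing record is as an untouched payload wrapped in a small metadata envelope. The sketch below is an assumption about what such an envelope could look like, not a prescribed schema: the `BronzeRecord` class, its field names, and the `scada-line-1` source label are all hypothetical.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class BronzeRecord:
    """Hypothetical Bronze envelope: metadata plus the raw payload, unmodified."""
    source_system: str
    protocol: str
    ingested_at: str      # UTC ingestion time, ISO-8601
    payload_sha256: str   # checksum so later audits can detect tampering
    raw_payload: str      # stored exactly as received


def to_bronze(raw_payload, source_system, protocol, now=None):
    """Wrap a raw payload without parsing, filtering, or transforming it."""
    now = now or datetime.now(timezone.utc)
    digest = hashlib.sha256(raw_payload.encode("utf-8")).hexdigest()
    return BronzeRecord(source_system, protocol, now.isoformat(), digest, raw_payload)


# Illustrative MQTT message from a made-up SCADA source.
message = '{"tag": "T101", "value": 71.3}'
record = to_bronze(message, source_system="scada-line-1", protocol="MQTT")
print(record.source_system, record.payload_sha256[:12])
```

Using one envelope for every OT and IT source is also a simple way to keep ingestion consistent as new sources are added.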

2. Silver Layer: Where OT/IT Integration Actually Happens

This is the most important layer and the most underinvested. Deduplication, quality rules, and schema enforcement happen here, along with the OT/IT join: matching a machine event timestamp to the production order active in SAP at that moment. Out-of-order sensor events, clock drift between OT and IT systems, and backfill gaps all need explicit handling rules before downstream models can trust the data.

Poor Silver layer design is the most common cause of lakehouse failure in manufacturing. Mapping which data joins which, at what cadence, and with what latency tolerance before implementation prevents the most expensive mid-project surprises.
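The OT/IT join described in this section can be sketched as an interval lookup: for each machine event, find the production order that was active on that machine at the event's timestamp. The example below uses only the Python standard library and makes several assumptions that real data may violate: timestamps are already normalized to a common clock, orders on one machine do not overlap, and the field names (`machine`, `tag`, `ts`, `order_id`) are hypothetical.

```python
from bisect import bisect_right
from datetime import datetime


def build_order_index(orders):
    """Group orders by machine, sorted by start time, for fast lookup."""
    grouped = {}
    for order in orders:
        grouped.setdefault(order["machine"], []).append(order)
    index = {}
    for machine, machine_orders in grouped.items():
        machine_orders.sort(key=lambda o: o["start"])
        starts = [o["start"] for o in machine_orders]
        index[machine] = (starts, machine_orders)
    return index


def active_order_id(index, machine, ts):
    """Return the order whose [start, end) interval contains ts, else None."""
    starts, machine_orders = index.get(machine, ([], []))
    pos = bisect_right(starts, ts) - 1
    if pos < 0:
        return None
    candidate = machine_orders[pos]
    return candidate["order_id"] if ts < candidate["end"] else None


def join_events_to_orders(events, orders):
    """Sort late-arriving events, drop exact duplicates, attach order_id."""
    index = build_order_index(orders)
    seen = set()
    joined = []
    for event in sorted(events, key=lambda e: e["ts"]):
        key = (event["machine"], event["tag"], event["ts"])
        if key in seen:
            continue
        seen.add(key)
        order_id = active_order_id(index, event["machine"], event["ts"])
        joined.append({**event, "order_id": order_id})
    return joined


orders = [
    {"order_id": "PO-1001", "machine": "M1",
     "start": datetime(2024, 3, 1, 8, 0), "end": datetime(2024, 3, 1, 12, 0)},
    {"order_id": "PO-1002", "machine": "M1",
     "start": datetime(2024, 3, 1, 12, 0), "end": datetime(2024, 3, 1, 16, 0)},
]
events = [
    {"machine": "M1", "tag": "torque", "ts": datetime(2024, 3, 1, 12, 30), "value": 41.8},
    {"machine": "M1", "tag": "torque", "ts": datetime(2024, 3, 1, 9, 15), "value": 42.1},
    {"machine": "M1", "tag": "torque", "ts": datetime(2024, 3, 1, 9, 15), "value": 42.1},
    {"machine": "M1", "tag": "torque", "ts": datetime(2024, 3, 1, 17, 0), "value": 40.2},
]
joined = join_events_to_orders(events, orders)
for row in joined:
    print(row["ts"], row["order_id"])
```

Events with no matching order are kept with `order_id` set to `None` rather than dropped, so gaps in the join stay visible for a later completeness audit.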

3. Gold Layer: Business-Ready Manufacturing Analytics

Aggregated, analytics-ready datasets live here: OEE dashboards, demand forecasts, supplier risk scores, quality scorecards. This is what Power BI, Tableau, or an AI agent queries. Financial planning teams can pull cost-per-unit and WIP data directly, without waiting on ERP batch cycles. Open table formats like Delta Lake and Apache Iceberg underpin this with ACID transactions and time travel, essential for FDA audits and root cause investigations.

4. Edge-to-Lakehouse: Where the Data Journey Starts

High-frequency sensor data at 10kHz or thermal imaging cannot all be sent to cloud storage cost-effectively. Edge compute nodes run lightweight inference for anomaly detection and compression, forwarding pre-processed event summaries to Bronze rather than raw streams. For manufacturers with OT data residency requirements, the Bronze layer can sit within the plant network, with Silver and Gold in the public cloud.
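As a rough illustration of the edge pre-processing idea, the sketch below reduces one window of raw vibration samples to a compact summary with a simple anomaly flag. It is a simplified stand-in for whatever inference an edge node actually runs. The RMS-based z-score, the threshold of 3.0, and the baseline values are assumptions chosen for the example.

```python
import math


def summarize_window(samples, baseline_rms, baseline_std, z_threshold=3.0):
    """Reduce a window of raw samples to one summary record.

    baseline_rms and baseline_std are assumed to come from a period of
    known-healthy operation for the same sensor.
    """
    if not samples:
        raise ValueError("window must contain at least one sample")
    rms = math.sqrt(sum(x * x for x in samples) / len(samples))
    z_score = (rms - baseline_rms) / baseline_std if baseline_std > 0 else 0.0
    return {
        "count": len(samples),
        "min": min(samples),
        "max": max(samples),
        "rms": rms,
        "z_score": z_score,
        "anomaly": abs(z_score) > z_threshold,
    }


# Tiny illustrative windows; a real 10 kHz window would hold thousands of samples.
normal = summarize_window([0.1, -0.1, 0.1, -0.1], baseline_rms=0.1, baseline_std=0.02)
spike = summarize_window([0.5, -0.5, 0.5, -0.5], baseline_rms=0.1, baseline_std=0.02)
print(normal["anomaly"], spike["anomaly"])
```

Only the summary dictionary would be forwarded to Bronze. The raw window could be kept locally for a limited time if the anomaly flag is set and engineers need the full waveform.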

The inventory below covers the major manufacturing data sources Kanerika’s architects encounter across implementations.

| Data Source | System of Origin | Protocol / Format | Lakehouse Layer | Notes |
| --- | --- | --- | --- | --- |
| Machine sensor readings | PLC / SCADA | OPC-UA, MQTT, Modbus | Bronze to Silver | Requires OT gateway for cloud ingestion |
| Historian time-series | OSIsoft PI, AspenTech IP.21 | Proprietary export / REST API | Bronze | Needs format normalization, clock drift correction |
| Production orders | SAP PP / Oracle MFG | BAPI / REST / batch export | Bronze to Silver | Key join key for OT/IT integration |
| Quality inspection records | QMS / Vision systems | CSV, JSON, image files | Bronze | Image metadata joins to production order in Silver |
| Maintenance work orders | SAP PM / CMMS | REST / batch export | Bronze to Silver | Links to asset master data for predictive ML |
| Energy consumption | Smart meters / BMS | MQTT, CSV | Bronze to Silver | Normalize to kWh per unit for Gold-layer KPIs |
| Supplier / logistics data | EDI, vendor portals | EDIFACT, XML, REST | Bronze | Lead time variability feeds demand forecast models |
| ERP financial/inventory | SAP FI/CO, Oracle EBS | BAPI, JDBC | Bronze to Silver | Cost allocation and WIP reporting in Gold |
| Environmental / compliance | EHS systems | CSV, REST | Bronze to Gold | Regulatory reporting; append-only governance required |

This is a starting point, not a complete list. Every manufacturer has edge cases: legacy SCADA historians running protocols no longer in active development, custom MES integrations that export flat files on a schedule, ERP systems with non-standard field mappings. The value of building this inventory early is that it surfaces those surprises before they become timeline risks.

Manufacturing Data Lakehouse Use Cases with Documented ROI

1. Predictive Maintenance: Reducing Unplanned Downtime with Unified Sensor and Maintenance Data

Traditional maintenance is binary: run on schedule or wait for failure. Neither uses the data machines are already generating. With the Silver-layer OT/IT join in place, sensor readings can be correlated with historical failure records, and ML models predict failure 24 to 72 hours before it occurs. The decision intelligence layer on top is what moves teams from a flagged anomaly to a recommended action with a clear owner, which is where most predictive maintenance programs stall.

2. AI-Powered Quality Control and Inline Defect Detection

Vision inspection systems generate thousands of images per shift, but most manufacturers only store pass/fail classifications. The correlation between defect patterns and upstream process conditions is never analyzed. In a lakehouse, raw inspection images land in Bronze and Silver-layer joins link each defect to the machine parameters, operator, shift, and material lot active at the time. Computer vision models score inline, flagging products before the next station, not at end-of-line.

3. Real-Time OEE Optimization Across Lines, Cells, and Shifts

OEE requires availability, performance, and quality data in one view, but those three streams live in separate systems: MES, sensors, and QMS. No single system sees all three, which is why most OEE dashboards are approximations, not actuals. A manufacturing data lakehouse connects machine cycle times from OT/MES with production orders from ERP and quality outcomes from QMS, producing true OEE by line, cell, shift, and operator.
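For readers who want the arithmetic, OEE is conventionally computed as availability × performance × quality. The sketch below applies that standard formula to one shift. The input values are invented for illustration, and the comments noting which system each input would come from are assumptions based on the description above.

```python
def oee(planned_minutes, downtime_minutes, ideal_cycle_seconds, total_count, good_count):
    """Compute OEE and its three factors for one line and one shift."""
    run_minutes = planned_minutes - downtime_minutes
    if planned_minutes <= 0 or run_minutes <= 0 or total_count <= 0:
        raise ValueError("planned time, run time, and total count must be positive")
    availability = run_minutes / planned_minutes
    performance = (ideal_cycle_seconds * total_count) / (run_minutes * 60)
    quality = good_count / total_count
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


shift = oee(
    planned_minutes=480,      # planned production time, e.g. from ERP/MES
    downtime_minutes=60,      # stops recorded by machine or maintenance data
    ideal_cycle_seconds=30,   # engineering standard for the part
    total_count=756,          # units produced, from machine cycle counts
    good_count=720,           # units passing inspection, from QMS
)
print({name: round(value, 3) for name, value in shift.items()})
```

With these inputs, availability is 0.875, performance is 0.9, and quality is about 0.952, giving an OEE of 0.75. Each factor draws on a different source system, which is why the Silver-layer join has to be in place before the number can be trusted.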

4. Demand Forecasting and Inventory Optimization with Unified Supply Chain Data

Disconnected demand signals across CRM, sales order history, and distributor portals produce inventory plans with 10 to 15% excess carrying cost. ERP-native forecasting models run on ERP data alone, missing everything the supply chain and market are already signaling. A lakehouse consolidates all demand signals into Gold-layer training data for ML forecasting models, improving forecast accuracy by 15 to 25% versus ERP-native approaches.

Choosing the Right Manufacturing Data Platform for Your Enterprise

Most articles recommending a lakehouse platform for manufacturing are written by a vendor or a vendor partner advocating for one platform. The comparison below is based on documented capabilities against manufacturing-specific requirements.

| Capability | Databricks | Microsoft Fabric | Snowflake |
| --- | --- | --- | --- |
| Best manufacturing fit | ML/AI-heavy workloads, pharma R&D, data science teams | Microsoft-centric enterprises, Power BI users, Azure-native shops | SQL-first analytics, multi-cloud environments |
| Streaming / IoT ingestion | Excellent, Spark Structured Streaming, Delta Live Tables | Good, Eventstream, Real-Time Intelligence hub | Moderate, Snowpipe Streaming, less native for OT data |
| ML / AI workloads | Industry-leading, MLflow, AutoML, Feature Store native | Growing, Fabric ML and Azure AI integration | Snowpark ML, improving but younger |
| Pharma/compliance | Strong, documented FDA Part 11 architecture patterns | Adequate, needs custom governance configuration | Adequate, needs custom governance work |
| SAP/ERP integration | Via Spark connectors and third-party tools | Native, SAP connector in Fabric Data Factory | Via Fivetran, dbt connectors |
| Historian migration support | Strong, Databricks and partner ecosystem for PI/IP.21 | Growing, Azure IoT and Fabric pipeline patterns | Limited, requires custom ETL tooling |
| Cost model | Compute-based DBU pricing, scales with workload | Capacity unit F-SKU, more predictable | Storage and compute credits, variable |

As a certified Databricks partner, a Microsoft Solutions Partner for Data and AI with Analytics Specialization, and a Snowflake partner, Kanerika recommends platforms based on enterprise context, not commercial preference.

- Microsoft Fabric best suits shops that are heavily SAP and Azure-centric, with native connectors and predictable capacity pricing. Microsoft licensing optimization matters here: Fabric F-SKU pricing requires careful capacity planning to avoid overspend.

- Databricks leads for ML-intensive operations and pharmaceutical manufacturing, with the strongest MLflow and feature store capabilities.

- Snowflake works best for SQL-first teams in multi-cloud environments. The Snowflake Manufacturing Data Cloud is maturing, but historian migration support still requires custom tooling.

Hybrid cloud deployment considerations matter especially for manufacturers running some OT infrastructure on-premises. Private cloud configurations are sometimes required for OT data residency compliance in regulated sectors.


Kanerika’s IMPACT Framework for Lakehouse Implementation

Most lakehouse projects fail not because the technology is wrong, but because the implementation ignores how manufacturing plants actually operate. Plant engineers distrust IT initiatives. OT teams have uptime SLAs that make data integration projects feel threatening. IT architects design for elegance before they design for adoption.

Change management is the underrated variable in manufacturing lakehouse implementations. The technical design can be perfect and the project still stalls if OT team adoption is not engineered from Day 1, not addressed retroactively after deployment.

Kanerika structures manufacturing lakehouse engagements around the IMPACT framework, six phases that ensure the architecture produces a business outcome, not just a technical deliverable.

- Identify: Map all OT and IT data sources. Quantify data volumes and velocity. Prioritize 3 to 5 highest-value use cases based on operational pain, not technology preference. Process mapping at this stage creates a visual foundation that OT and IT teams can both read and agree on.

- Map: Design the target architecture, ingestion patterns, medallion layer schema, governance model, and platform selection based on existing enterprise context.

- Prove: A 4 to 6 week pilot on one production line. One data integration. One measurable outcome. Business case for full rollout built on real numbers, not projections.

- Analyze: Quantify pilot ROI against pre-established baselines: downtime hours prevented, defects caught, analyst hours recovered.

- Create: Full implementation roadmap with phased milestones, resource plans, and governance requirements.

- Transform: Agile delivery with dedicated team, OT/IT change management support, and knowledge transfer so the client’s team can extend the platform independently.

The pilot-first approach matters more than any architectural decision. Organizational trust in the platform comes from a concrete win in week 8, not a technically sophisticated architecture reviewed in a slide deck.

| Phase | Duration | Key Activities | Deliverable | Success Criterion |
| --- | --- | --- | --- | --- |
| Identify | Weeks 1-2 | Data source mapping, use case scoring, stakeholder alignment | Prioritized use case list and data source inventory | 3-5 validated use cases with baseline metrics defined |
| Map | Weeks 2-4 | Architecture design, platform selection, governance model design | Target state architecture blueprint | Signed-off architecture with OT team involvement |
| Prove | Weeks 4-10 | Bronze/Silver build for one data source, pilot use case deployment | Live pilot with one measurable business outcome | Quantified ROI figure from production data |
| Analyze | Weeks 10-12 | Pilot ROI quantification, gap analysis, Phase 2 scoping | Business case document for full rollout | Stakeholder approval for Phase 2 investment |
| Create | Weeks 12-14 | Full roadmap, resource plan, governance requirements finalization | Detailed project plan with milestones | Approved roadmap and resourcing |
| Transform | Months 4-18 | Multi-site rollout, AI layer build, team capability transfer | Production lakehouse, AI use cases, trained internal team | Client team can extend platform independently |

The Prove phase is where most engagements either accelerate or stall. A pilot that produces a real number, not a projected estimate, in week 10 changes the internal conversation about Phase 2 budget from a debate into a business decision.

Real Outcomes from Kanerika Manufacturing Implementations

Southern States Material Handling (TOYOTAlift): Kanerika implemented Microsoft Fabric with unified multi-source data integration, delivering a single analytics framework across manufacturing and distribution operations that had previously relied on disconnected reporting systems.

Dr. Reddy’s Laboratories: Kanerika built a unified data platform on Databricks for pharmaceutical manufacturing operations, making R&D and process optimization cycles possible that siloed data infrastructure had blocked entirely.

ABX Innovative Packaging Solutions: Kanerika modernized ABX’s manufacturing data management, unifying production and operational data that had been fragmented across legacy systems, replacing manual reporting processes with a consolidated analytics foundation.

Data Lakehouse Implementation Roadmap for Manufacturing: Three Phases

Phase 1: Connect Priority Data Sources and Validate ROI (Weeks 1 to 12)

Connect 3 to 5 priority data sources. Build Bronze and Silver layers for one production line, including the OT/IT timestamp join and protocol translation from OPC-UA or MQTT. Deploy one business use case (an OEE dashboard or a predictive maintenance pilot), not both. Establish a governance baseline: data catalog, role-based access, audit logging.

The goal is one quantifiable business outcome before committing Phase 2 budget. Shadow IT risks surface here: the spreadsheets and disconnected tools OT teams have built to compensate for missing integration need to be included in the Bronze-layer source inventory, not ignored.

Phase 2: Multi-Site Rollout and Self-Service Analytics (Months 4 to 9)

Roll the architecture to all production sites. Add supply chain, quality, and energy data sources. Build Gold-layer models: quality scorecards, demand forecasts, supplier scorecards. Onboard operations and quality teams on self-service analytics tools against the Gold layer, without requiring SQL skills or analyst intermediaries.

Data literacy programs run in parallel with Phase 2 rollout. The best Gold-layer architecture produces zero value if the operations teams using it do not trust or understand what they are looking at.

Phase 3: Predictive Maintenance and Manufacturing AI (Months 9 to 18)

With reliable Gold-layer data established, production-grade AI becomes achievable. Predictive maintenance at scale. Computer vision defect detection integrated inline. Demand forecasting ML replacing ERP-native rule-based models. AI agents querying the lakehouse in natural language for operations teams.

Multi-agent workflows coordinate across quality, maintenance, and supply chain simultaneously, not just responding to queries but monitoring KPIs autonomously and escalating anomalies without human prompting. Advanced RAG architectures built on top of the Gold layer let AI agents pull answers from structured manufacturing data and unstructured maintenance documentation in the same query.

A note for mid-market manufacturers: Phase 1 can be completed with a team of 3 to 5 people and cloud-native infrastructure, no multi-million dollar upfront commitment required. Kanerika has implemented manufacturing lakehouses for facilities running 2 production lines and for global manufacturers with 40+ plants. The architecture scales both ways.

| Phase | Technical Readiness | Organizational Readiness | Common Gap and Fix |
| --- | --- | --- | --- |
| Phase 1 – Foundation | OT gateway or IoT hub exists; cloud storage provisioned; at least one data source owner identified | Plant operations and IT have agreed on pilot scope; executive sponsor named | OT team not yet engaged: run a 1-day ISA-95 mapping workshop before starting Bronze layer design |
| Phase 2 – Expand | Bronze/Silver layers stable for Phase 1 sources; data catalog in place; role-based access enforced | Analytics users trained on Gold-layer tools; data governance owner designated | No governance owner: assign a data steward role (can be part-time at this stage) |
| Phase 3 – AI Layer | Gold-layer data quality above threshold; feature store or equivalent in place; model deployment pipeline defined | Business owners for each AI use case identified; feedback loop for model monitoring established | Business owners not engaged in model validation: run a 2-week model output review with operations teams before production deployment |

The readiness check catches organizational gaps more often than technical ones. Every Phase 3 delay Kanerika has seen in manufacturing came from a missing business owner for AI use case validation, not from a data pipeline problem.


3 Common Manufacturing Lakehouse Implementation Failures and How to Avoid Them

Starting with the Technology, Not the Use Case

‘We need a lakehouse’ is not a business case. ‘We lose significant revenue per year in unplanned downtime we can predict with existing sensor data’ is. Platform selection before use case prioritization produces architectures that are technically impressive and operationally irrelevant.

Under-Investing in the Silver Layer

This layer is unglamorous and technically tedious. The OT/IT timestamp join, data quality enforcement, clock drift correction, and out-of-order event handling that live here determine whether every downstream ML model is reliable or not. Most teams rush through it to get to dashboards faster, and pay for it when the AI models built on that data produce unreliable outputs.

Treating Governance as an Afterthought

Regulatory audits happen without warning. Building governance retroactively into a live manufacturing data platform is three times harder than building it in from Day 1. For any manufacturer subject to ISO, FDA, or aerospace quality standards, governance is a Phase 1 requirement, not a Phase 3 project.

| What You’re Observing | Likely Failure Mode | Root Cause | What to Fix |
| --- | --- | --- | --- |
| Platform selected, 6 months in, no business outcome yet | Technology-first sequencing | Use cases were not defined before architecture was designed | Pause. Define 3 prioritized use cases with measurable baselines. Rebuild scope around them. |
| ML models built but producing unreliable outputs | Silver layer under-investment | OT/IT join incomplete; out-of-order events unhandled; clock drift uncorrected | Audit Silver layer for timestamp integrity and join completeness before any new model work |
| Analytics exist but teams do not trust the numbers | Silver layer under-investment | Data quality rules never enforced; multiple versions of truth in Gold layer | Implement data quality scoring; trace each Gold-layer metric back to a single Silver source |
| Regulatory audit findings on data lineage | Governance as afterthought | Audit trail not built; row-level lineage missing; no time-travel capability | Implement Delta Lake time travel; build data catalog with lineage metadata |
| Pilot succeeded, Phase 2 budget blocked | Technology-first sequencing | Pilot ROI was not quantified against a pre-established baseline | Establish baselines in Phase 1 documentation; present Phase 2 as a return on a proven investment |
| Data engineers cannot keep up with new source requests | Architecture brittleness | No standard ingestion pattern defined; every source is a custom build | Define a standard Bronze ingestion template for OT and IT sources; enforce it for all new connections |

Accurately identifying which failure mode you’re in changes the conversation. ‘Our Silver layer has clock drift problems’ gets fixed. ‘Something seems off with our data’ does not.
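The data quality scoring recommended in the diagnostic table can start very simply: a set of named rules and the share of records that pass them. The sketch below is one possible shape for that, with hypothetical rule names, field names, and a made-up temperature range. Real rules would come from process engineers and the governance model.

```python
def quality_score(records, rules):
    """Return per-rule pass rates and the share of records passing every rule."""
    if not records:
        raise ValueError("at least one record is required")
    total = len(records)
    per_rule = {
        name: sum(1 for r in records if check(r)) / total
        for name, check in rules.items()
    }
    passing_all = sum(1 for r in records if all(check(r) for check in rules.values()))
    return {"per_rule": per_rule, "overall": passing_all / total}


# Hypothetical Silver-layer rules: joined to an order, and a plausible reading.
rules = {
    "has_order_id": lambda r: r.get("order_id") is not None,
    "temp_in_range": lambda r: r.get("temp_c") is not None and 0.0 <= r["temp_c"] <= 250.0,
}
records = [
    {"order_id": "PO-1001", "temp_c": 71.3},
    {"order_id": None, "temp_c": 70.9},        # fails the OT/IT join rule
    {"order_id": "PO-1001", "temp_c": 999.0},  # fails the range rule
    {"order_id": "PO-1002", "temp_c": 72.0},
]
score = quality_score(records, rules)
print(score)
```

Publishing the per-rule rates next to each Gold-layer metric gives teams a specific thing to point at when a number looks wrong.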

ROI Benchmarks: What Manufacturing Lakehouses Actually Deliver

| Use Case | Documented Improvement | How to Measure |
| --- | --- | --- |
| Predictive maintenance | 25-30% unplanned downtime reduction | Downtime cost 6 months pre vs. post-deployment |
| Quality defect detection | 15-40% escape rate reduction | Inspect-to-pass ratio per production run, before and after |
| Demand forecasting | 10-20% MAPE improvement | Forecast vs. actual by SKU, monthly rolling comparison |
| Energy management | 8-15% energy intensity reduction | kWh per unit produced vs. production throughput |
| Data infrastructure TCO | 40-60% reduction vs. separate lake and warehouse | Annual platform cost including labor |
| Analytics cycle time | 80-90% faster time-to-insight | Analyst request queue depth and turnaround time |

Kanerika’s IMPACT framework establishes baseline metrics in the Identify phase specifically so ROI can be quantified, not estimated, after implementation. This is what makes Phase 2 budget approvals straightforward: the numbers come from Phase 1 actuals, not analyst projections.
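As a worked example of measuring one of these benchmarks against a baseline, the sketch below computes MAPE (mean absolute percentage error) for a baseline forecast and a new forecast, then the relative improvement between them. The demand figures are invented. The table does not say whether its improvement range is relative or in percentage points, so the example reports both.

```python
def mape(actuals, forecasts):
    """Mean absolute percentage error, skipping periods with zero actual demand."""
    pairs = [(a, f) for a, f in zip(actuals, forecasts) if a != 0]
    if not pairs:
        raise ValueError("need at least one period with non-zero actual demand")
    return 100.0 * sum(abs(a - f) / abs(a) for a, f in pairs) / len(pairs)


def relative_improvement(baseline, current):
    """Percentage reduction of a lower-is-better metric versus its baseline."""
    return 100.0 * (baseline - current) / baseline


# Invented monthly demand for one SKU, with two competing forecasts.
actuals = [100, 200, 400]
erp_forecast = [120, 170, 440]   # baseline captured before the pilot
ml_forecast = [115, 176, 436]    # forecast produced after the pilot

baseline_mape = mape(actuals, erp_forecast)
pilot_mape = mape(actuals, ml_forecast)
print(round(baseline_mape, 2), round(pilot_mape, 2))
print(round(baseline_mape - pilot_mape, 2), "percentage points")
print(round(relative_improvement(baseline_mape, pilot_mape), 2), "% relative")
```

Here the baseline MAPE is 15% and the pilot MAPE is 12%, which is a 3-point drop or a 20% relative improvement. Stating which of the two is being reported avoids confusion when the figure reaches a budget discussion.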

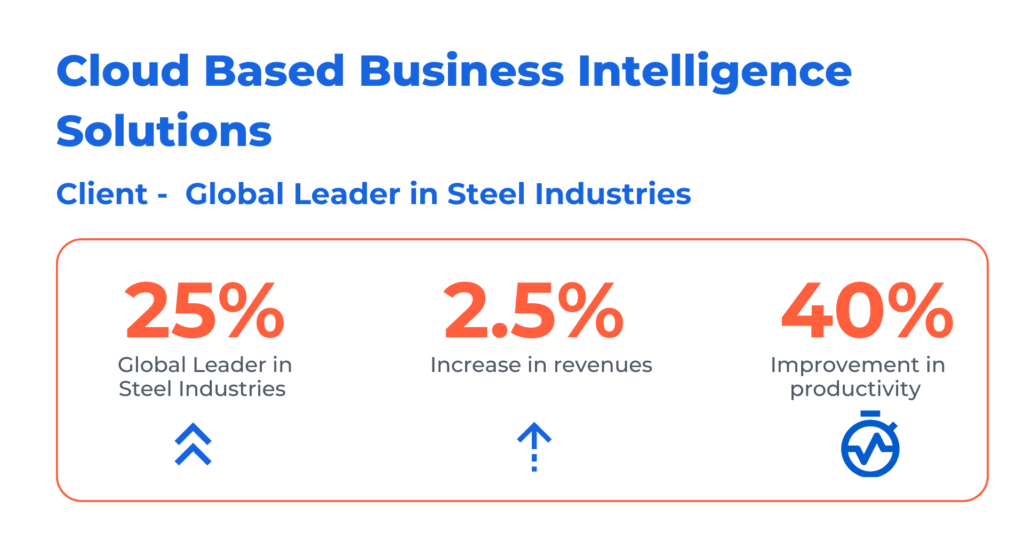

Case Study: Cloud Based Business Intelligence Solutions For Global Leader in Steel Industries

Challenges

- Complex interface, lack of drag and drop features, and inefficient analysis hindered data manipulation & decisions

- Legacy system did not support all encryption types, posing risks to data security and compliance

- Existing solution required significant processing power and was highly priced, straining the company’s resources

Solutions

- Organized, optimized, and analyzed data using Dynamics AX and Azure, improving data management and efficiency

- Transformed data into interactive dashboards using Power BI, enabling analysis for improved decision-making

- Implemented scalable, cost-effective BI platform with Azure data engineering and Power BI, boosting efficiency

Results

- 25% Reduction in storage expenses

- 2.5% Increase in revenues

- 40% Improvement in productivity

Why Manufacturers Choose Kanerika for Data Lakehouse Implementation

Kanerika is a premier provider of data-driven software solutions and services that facilitate digital transformation. Specializing in Data Integration, Analytics, AI/ML, and Cloud Management, Kanerika prides itself on its expertise in employing cutting-edge technologies and agile methodologies to ensure exceptional outcomes.

As a Microsoft Solutions Partner for Data and AI, a certified Databricks partner, and a Snowflake partner, Kanerika brings platform-neutral expertise to manufacturing data lakehouse engagements. Platform recommendations are based on enterprise context, existing technology stack, and workload type, not commercial preference. Whether the environment is SAP-heavy, Azure-native, or multi-cloud, the architecture is designed around what the manufacturer actually needs.

For manufacturers looking to add AI on top of a governed data foundation, Kanerika’s KARL AI Data Insights Agent connects directly with lakehouse datasets to enable natural language querying across production, quality, and supply chain data. Operations teams get answers in seconds without SQL knowledge or analyst involvement. Kanerika’s FLIP migration platform handles the heavy lifting on the technical side, automating up to 80% of pipeline and historian migration work and compressing timelines that typically run six months down to six to eight weeks.

Partner with Kanerika to Modernize Your Enterprise Operations with High-Impact Data & AI Solutions

FAQs

What is a data lakehouse for manufacturing?

A data lakehouse for manufacturing is a unified data platform that consolidates OT data (sensors, SCADA, MES), IT data (ERP, CRM, WMS), and external data (supply chain, logistics) into a single governed repository. It makes analytics, machine learning, and AI possible across production, quality, maintenance, and supply chain — from one place. It replaces the disconnected data lake and data warehouse setup most manufacturers currently operate. Unlike a pure data lake, it adds ACID transactions, row-level governance, and warehouse-grade query performance. Unlike a data warehouse, it handles unstructured sensor data, historian exports, and inspection images natively.

What is the difference between a data lake and a data lakehouse for manufacturing?

A data lake stores raw manufacturing data cheaply — but has no governance, no ACID transactions, and poor query performance without heavy optimization. Sensor data and ERP exports can land there, but no audit trail exists, data quality is unenforced, and analysts wait minutes for simple queries. A data lakehouse adds warehouse-grade governance, ACID transactions, and query optimization on top of the same low-cost storage. Manufacturers get the flexibility of a lake without giving up the reliability a data warehouse provides. For regulated manufacturers — pharma, aerospace, food and beverage — the critical addition is time travel and row-level audit lineage, which a data lake simply can’t provide.

How do OT systems connect to a manufacturing data lakehouse?

OT systems communicate via industrial protocols — OPC-UA, MQTT, and Modbus primarily. These don’t connect natively to cloud data platforms. An OT-to-cloud ingestion layer — Azure IoT Hub, AWS IoT Greengrass, or an on-premises industrial edge gateway — translates these protocols and forwards data to the Bronze layer. For legacy historians like OSIsoft PI or AspenTech IP.21, a separate migration and streaming connector normalizes proprietary time-series formats before they reach the lakehouse. Getting this ingestion layer right is the first architectural decision in any manufacturing lakehouse project, and the one most commonly underspecified.
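As a rough illustration of what the ingestion layer hands to Bronze, the Python sketch below wraps one raw OT message in an envelope without altering the payload. The topic layout (`site/line/machine/tag`), the field names, and the `to_bronze_record` function are assumptions for this example, not a schema defined by any of the gateways named above.

```python
import json
from datetime import datetime, timezone


def to_bronze_record(topic, payload, protocol, received_at=None):
    """Wrap a raw OT message for the Bronze layer; the payload is kept as-is."""
    received_at = received_at or datetime.now(timezone.utc)
    # Assumed topic layout: site/line/machine/tag. Missing parts become None.
    parts = topic.split("/", 3)
    parts += [None] * (4 - len(parts))
    site, line, machine, tag = parts
    return {
        "source_protocol": protocol,
        "topic": topic,
        "site": site,
        "line": line,
        "machine": machine,
        "tag": tag,
        # Raw text is stored untouched so the record can be replayed or audited.
        "raw_payload": payload.decode("utf-8", errors="replace"),
        "ingested_at": received_at.isoformat(),
    }


# Hypothetical message as it might arrive from an edge gateway.
record = to_bronze_record(
    topic="plant1/line3/press7/temperature",
    payload=b'{"value": 71.5, "ts": "2024-01-01T00:00:00Z"}',
    protocol="mqtt",
    received_at=datetime(2024, 1, 1, tzinfo=timezone.utc),
)
print(json.dumps(record, indent=2))
```

Parsing and type casting are deliberately left out: in this sketch they belong to the Silver layer, so Bronze stays a faithful copy of what the device sent.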

How long does it take to implement a data lakehouse for manufacturing?

A focused Phase 1 — one plant, 3–5 data sources, one primary use case — typically takes 6–12 weeks with experienced implementation partners. Full multi-site deployment with AI use cases completes in 6–18 months depending on data complexity, legacy historian migration requirements, and organizational readiness. Kanerika’s FLIP migration platform compresses typical timelines by automating up to 80% of pipeline migration work, reducing historian migration from 6 months to 6–8 weeks.

Which platform is best for manufacturing: Databricks, Microsoft Fabric, or Snowflake?

The right platform depends on the existing technology stack and the primary workload type. Databricks leads for ML-heavy workloads and data science teams, particularly in pharmaceutical and process manufacturing with complex SAP PM integration requirements. Microsoft Fabric is the strongest choice for Microsoft-centric enterprises using Azure and Power BI, with native SAP connectors through Fabric Data Factory. Snowflake serves SQL-first analytics teams in multi-cloud environments well via Fivetran and dbt connectors. A platform-neutral assessment of current systems before committing is the most important step.

Can a data lakehouse support both discrete and process manufacturing?

Yes — but the architecture has to be designed for the manufacturing type. Discrete manufacturers need event-driven schema design optimized for high-volume structured data and serial number traceability, with SAP PP integration patterns. Process manufacturers need time-series-first schema design with batch record integrity and ALCOA compliance for FDA 21 CFR Part 11. Kanerika has implemented lakehouses for both — Dr. Reddy’s for pharmaceutical process manufacturing and SSMH for distribution and discrete operations — and the architectural choices differ meaningfully between them.

Is a data lakehouse viable for mid-size manufacturers, or only large enterprises?

It’s viable for mid-size manufacturers. Phase 1 can be completed with a team of 3–5 people using cloud-native infrastructure — no multi-million dollar upfront commitment required. The architecture scales from 2-line facilities to 40+ plant global operations. The phased approach means mid-market manufacturers start with one use case, prove ROI in 6–12 weeks, and expand investment on the back of demonstrated results.

What ROI can manufacturers realistically expect from a data lakehouse?

Documented outcomes include: 25–30% reduction in unplanned downtime through predictive maintenance on unified sensor and maintenance data, 15–40% reduction in quality defect escape rates through inline AI inspection, 10–20% improvement in demand forecast accuracy, and 40–60% reduction in data infrastructure total cost of ownership compared to maintaining separate lake and warehouse systems. Kanerika establishes pre-implementation baselines specifically so these figures are measured against actuals — not estimated.

Why do manufacturing data lakehouse projects fail?

Three failure modes dominate. First, starting with platform selection before defining business use cases — producing technically impressive architecture with no operational relevance. Second, under-investing in the Silver layer, where the OT/IT data integration happens. Clock drift correction and out-of-order event handling here determine whether downstream ML models are reliable or not. Third, treating governance as an afterthought rather than a Phase 1 requirement — which creates serious problems when regulatory audits arrive. Most failures are implementation and sequencing failures, not technology failures.
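To make the Silver-layer point concrete, here is a minimal Python sketch of clock drift correction followed by out-of-order handling. It assumes per-device clock offsets are already known (for example, measured against a reference clock) and uses a simple watermark buffer. `CLOCK_OFFSET_S`, `ReorderBuffer`, and the sample events are hypothetical, and a production pipeline would normally rely on the streaming engine's own watermarking rather than hand-rolled code.

```python
import heapq


class ReorderBuffer:
    """Holds events until they are older than the watermark, then emits them in time order."""

    def __init__(self, allowed_lateness_s):
        self.allowed_lateness_s = allowed_lateness_s
        self.late = []                 # events that arrived after their window closed
        self._heap = []
        self._max_seen = float("-inf")
        self._seq = 0                  # tie-breaker so events themselves are never compared

    def push(self, event_time_s, event):
        """Add one event; return the events that are now safe to emit."""
        if event_time_s < self._max_seen - self.allowed_lateness_s:
            self.late.append(event)    # too late to reorder; handle separately
            return []
        self._seq += 1
        heapq.heappush(self._heap, (event_time_s, self._seq, event))
        self._max_seen = max(self._max_seen, event_time_s)
        watermark = self._max_seen - self.allowed_lateness_s
        ready = []
        while self._heap and self._heap[0][0] <= watermark:
            ready.append(heapq.heappop(self._heap)[2])
        return ready

    def flush(self):
        """Emit everything still buffered, in time order."""
        return [heapq.heappop(self._heap)[2] for _ in range(len(self._heap))]


# Assumed, pre-measured clock offsets in seconds (added to the device timestamp).
CLOCK_OFFSET_S = {"plc_a": -2.0, "plc_b": 0.5}

# (device, device timestamp in seconds, label) in arrival order.
arrivals = [
    ("plc_a", 12.0, "a1"),
    ("plc_b", 10.5, "b1"),
    ("plc_a", 11.5, "a0"),   # arrives after a1 but happened before it
    ("plc_b", 14.5, "b2"),
]

buffer = ReorderBuffer(allowed_lateness_s=3.0)
ordered = []
for device, device_ts, label in arrivals:
    corrected_ts = device_ts + CLOCK_OFFSET_S[device]   # drift correction
    ordered.extend(buffer.push(corrected_ts, label))
ordered.extend(buffer.flush())

print(ordered)  # ['a0', 'a1', 'b1', 'b2']
```

The allowed lateness is the trade-off to tune: a larger value tolerates slower devices and networks but delays every downstream feature an ML model depends on.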

What is the Medallion Architecture in manufacturing data lakehouses?

The Medallion Architecture organizes a manufacturing data lakehouse into three layers. Bronze is the raw data landing zone — sensor readings, historian exports, ERP batches stored as-is for regulatory traceability. Silver is the curated and integrated layer — where the OT/IT join happens, data quality rules are enforced, and time-series issues like clock drift are corrected. Gold is the business-ready layer — aggregated OEE dashboards, demand forecasts, and quality scorecards optimized for analyst and AI agent queries. The Silver layer is the most technically demanding and the most consequential for downstream AI model reliability.
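The three layers can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not a reference implementation: the field names, the `to_silver` and `to_gold` functions, and the sample rows are invented for the example, and a real lakehouse would express the same steps as table transformations on the chosen platform. The Gold step uses the standard OEE definition (availability × performance × quality).

```python
# Bronze: raw rows stored as received, everything still a string.
bronze_rows = [
    {"machine": "press7", "planned_min": "480", "run_min": "432",
     "ideal_rate_per_min": "2", "total_units": "760", "good_units": "722"},
    {"machine": "press9", "planned_min": "480", "run_min": "n/a",
     "ideal_rate_per_min": "2", "total_units": "700", "good_units": "690"},
]

NUMERIC_FIELDS = ("planned_min", "run_min", "ideal_rate_per_min",
                  "total_units", "good_units")


def to_silver(rows):
    """Cast fields to numbers and keep only rows that pass basic quality rules."""
    silver = []
    for row in rows:
        try:
            clean = {"machine": row["machine"]}
            for field in NUMERIC_FIELDS:
                clean[field] = float(row[field])
        except (KeyError, TypeError, ValueError):
            continue  # a real pipeline would quarantine the row, not drop it
        if min(clean[f] for f in NUMERIC_FIELDS[:4]) <= 0:
            continue  # zero or negative values would break the ratios below
        silver.append(clean)
    return silver


def to_gold(rows):
    """Compute OEE per machine, assuming one row per machine per shift."""
    gold = {}
    for r in rows:
        availability = r["run_min"] / r["planned_min"]
        performance = r["total_units"] / (r["run_min"] * r["ideal_rate_per_min"])
        quality = r["good_units"] / r["total_units"]
        gold[r["machine"]] = {
            "availability": availability,
            "performance": performance,
            "quality": quality,
            "oee": availability * performance * quality,
        }
    return gold


silver_rows = to_silver(bronze_rows)
gold = to_gold(silver_rows)
for machine, kpis in gold.items():
    print(machine, f"OEE = {kpis['oee']:.1%}")
```

Note that the malformed `press9` row never reaches Gold. Where that filtering happens, and whether rejected rows are retained for audit, is exactly the kind of Silver-layer decision the answer above describes.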