What do “YouTube,” “Amazon,” “Facebook,” “Weather,” and “Google” have in common? They are among the most popular searches feeding Google’s massive data engine. Yet these searches are just the tip of an enormous data iceberg that is transforming how businesses operate, and a large part of why every modern enterprise needs a cloud data warehouse strategy.

According to a survey, every single day, 402.74 million terabytes of data are generated globally, while 90% of the world’s data was created in just the last two years. This overwhelming data explosion poses a critical challenge: how do organizations harness this immense wealth of information for competitive advantage?

Traditional on-premises data warehouses simply cannot handle this exponential growth, creating bottlenecks that slow decision-making and inflate infrastructure costs. Consequently, enterprises are rapidly migrating to cloud-native analytics platforms that offer instant scalability and flexible pricing models.

The market response is extraordinary: the cloud data warehouse market is projected to grow from $11.78 billion in 2025 to $39.91 billion by 2030 (Mordor Intelligence). Cloud data warehouses have therefore become indispensable assets for businesses that depend on data-driven decision-making, offering secure, scalable, and efficient ways to store and analyze vast volumes of information.

Key Learnings

- Choosing the right platform depends on business needs – Each cloud data warehouse has strengths, so enterprises must align platform choice with use cases, data volume, and existing cloud ecosystems.

- Cloud data warehouses enable scalable, modern analytics – They separate storage and compute, allowing enterprises to scale on demand and handle growing data volumes without infrastructure limits.

- Cost efficiency is a major driver of cloud data warehousing – Pay-as-you-go pricing and reduced maintenance help organizations lower upfront and operational costs compared to on-prem systems.

- Cloud data warehouses support faster insights – Elastic compute and optimized query engines deliver high performance, enabling near real-time analytics and reporting.

- Security and governance are built into cloud platforms – Modern cloud data warehouses offer strong access controls, encryption, and compliance support for regulated industries.


What is a Cloud Data Warehouse?

A cloud data warehouse is essentially a data storage and analytics platform hosted entirely in the cloud. Unlike traditional systems, it eliminates physical hardware requirements while providing instant access to massive computational power and storage capacity.

Key Differences from Traditional Systems

Conventional data warehouses require high upfront investment in servers, storage, and maintenance staff. Cloud-based warehouses, by contrast, use pay-as-you-go pricing and scale automatically. Cloud solutions can also be deployed within hours rather than months, letting the business respond quickly.

Moreover, traditional systems struggle with sudden bursts of data, whereas cloud warehouses scale automatically in response to demand.

Fully managed cloud warehouses are maintained, updated, and optimized automatically, so IT teams can focus on business value rather than system administration. Self-managed options, on the other hand, offer more control over customization but demand more technical expertise.

Role in Modern Data Architecture

Today, organizations use cloud data warehouses as the central hub for analytics, business intelligence, and machine learning programs. These platforms connect smoothly to existing applications and support real-time data processing and advanced analytics. As a result, cloud data warehouses convert raw data into actionable business insights without the limits of traditional infrastructure.

How Do Cloud Data Warehouses Work?

Ever wondered how a cloud data warehouse can instantly process massive datasets while you sleep? Behind the scenes, these systems orchestrate complex operations that would overwhelm traditional databases. The magic lies in their revolutionary architecture that treats storage and computing as completely separate resources.

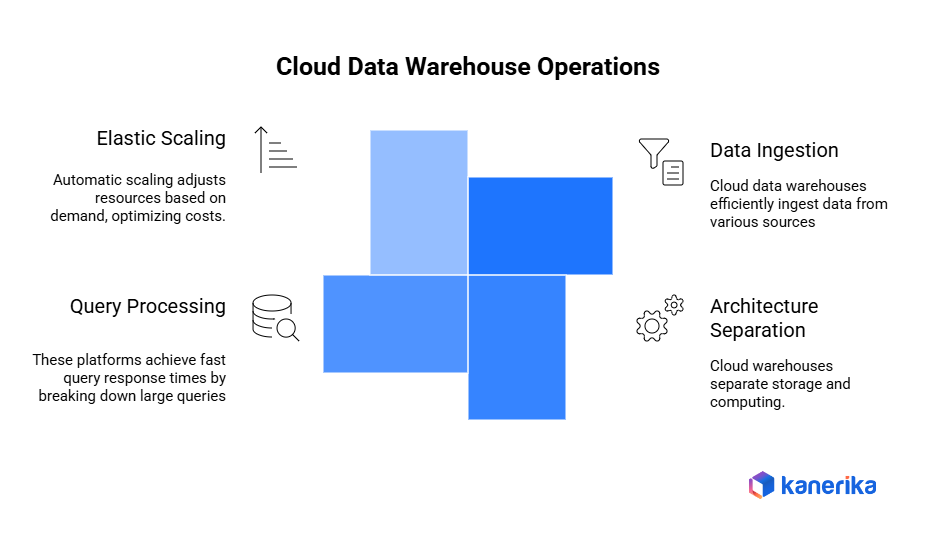

1. Data Ingestion and Integration

First, cloud data warehouses excel at data ingestion from multiple sources simultaneously. Whether you’re pulling information from databases, APIs, or file systems, these platforms handle everything automatically. Additionally, they process both real-time streams and batch uploads without breaking a sweat.

2. Compute-Storage Architecture Separation

Here’s where things get interesting. Traditional systems force you to buy storage and computing power together. However, cloud warehouses separate these completely. Therefore, you can store massive amounts of data cheaply while only paying for computing power when you actually run queries.

This separation means your data stays put while computing resources scale up or down instantly based on demand.
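
To make the billing consequence of this separation concrete, here is a minimal sketch of a cost model in which storage and compute are metered independently. The `Rates` class, the `monthly_cost` function, and every price in it are hypothetical illustrations, not figures from any vendor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Hypothetical prices; real rates vary by platform and region."""
    storage_per_tb_month: float  # billed on data stored
    compute_per_hour: float      # billed only while compute is running


def monthly_cost(rates: Rates, stored_tb: float, compute_hours: float) -> float:
    """Storage and compute are billed independently and simply added."""
    storage = rates.storage_per_tb_month * stored_tb
    compute = rates.compute_per_hour * compute_hours
    return storage + compute


rates = Rates(storage_per_tb_month=20.0, compute_per_hour=4.0)

# Same 50 TB of data, very different query activity.
quiet_month = monthly_cost(rates, stored_tb=50, compute_hours=10)
busy_month = monthly_cost(rates, stored_tb=50, compute_hours=200)
print(quiet_month, busy_month)
```

Under these assumed rates the storage portion is identical in both months; only the compute portion moves with query activity.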

3. Query Processing and Performance Optimization

Leading platforms achieve 1-30 second response times for common queries while processing over 200TB of data daily (Google Cloud). Furthermore, these systems break large queries into smaller pieces that run simultaneously across multiple processors.

Modern cloud warehouses use massively parallel processing to distribute workloads across hundreds of nodes simultaneously.
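
The scatter-and-gather shape of massively parallel processing can be sketched in a few lines. This is only an illustration of the idea, not how any particular warehouse implements it: the `split`, `partial_sum`, and `parallel_total` helpers are hypothetical, and Python threads stand in for what would be separate nodes in a real system.

```python
from concurrent.futures import ThreadPoolExecutor


def split(rows, parts):
    """Divide rows into roughly equal chunks, one per worker."""
    size = max(1, -(-len(rows) // parts))  # ceiling division, at least 1
    return [rows[i:i + size] for i in range(0, len(rows), size)]


def partial_sum(chunk):
    """Each worker aggregates only its own chunk."""
    return sum(amount for _, amount in chunk)


def parallel_total(rows, workers=4):
    """Scatter chunks to workers, then combine the partial results."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(partial_sum, split(rows, workers)))


# 100 made-up orders with amounts 1.0 through 100.0.
sales = [("order-%d" % i, float(i)) for i in range(1, 101)]
print(parallel_total(sales))
```

Because a sum can be computed per chunk and then combined, the final answer does not depend on how the rows were divided. CPython threads will not speed up this CPU-bound work; the point is the structure of the computation.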

4. Elastic Scaling and Cost Optimization

The real magic happens with automatic scaling. When demand spikes, more resources automatically activate. When things slow down, resources scale back. BigQuery automatically scales slot usage up or down based on how much parallelism each query stage can use (ClickHouse), so you avoid paying for idle capacity.

Organizations using pay-as-you-go models avoid up to 40% in unnecessary infrastructure costs (Singdata) while maintaining peak performance during busy periods.
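
A scaling decision of this kind can be reduced to a small policy function. The sketch below is a deliberately simplified assumption: the `target_clusters` function, its parameters, and the queue-depth rule are hypothetical, and real platforms use richer signals than a single queue length.

```python
def target_clusters(queued_queries, per_cluster_capacity,
                    min_clusters=0, max_clusters=8):
    """Return how many compute clusters to run for the current queue depth."""
    if per_cluster_capacity <= 0:
        raise ValueError("per_cluster_capacity must be positive")
    needed = -(-queued_queries // per_cluster_capacity)  # ceiling division
    return max(min_clusters, min(max_clusters, needed))


print(target_clusters(0, 10))    # idle: scale to the minimum
print(target_clusters(25, 10))   # moderate load
print(target_clusters(500, 10))  # spike: capped at max_clusters
```

With the defaults shown, the three calls yield 0, 3, and 8 clusters. The upper bound matters as much as the scaling itself, because it caps spend during an unexpected spike.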

Operational Dynamics of Data Warehouse in the Cloud

Modern cloud-based ETL tools are scalable, automated, and able to keep up with growing data volumes, which changes how organizations manage their data infrastructure.

1. Automated Data Processing and ETL Operations

Cloud data warehouses efficiently automate data loading from numerous sources at the same time. Their ETL processes also scale to support growing data volumes without losing performance (Rivery). In addition, companies can schedule ETL jobs during off-peak hours to reduce the impact on operational systems.

2. Dynamic Resource Allocation and Scaling

These systems automatically allocate computing resources on demand. Elastic scaling maintains performance during heavy traffic and minimizes costs during idle periods, so businesses do not have to overprovision costly hardware.

3. Intelligent Query Processing and Optimization

Cloud warehouses rely on advanced query optimizers that automatically choose the most efficient execution paths. As a result, complex analytical queries run faster thanks to parallel processing and intelligent caching.

4. Proactive Monitoring and Maintenance

Cloud-based ETL tools come with automated connectors and require almost zero maintenance (Binmile), which supports faster real-time analytics. Automated monitoring then tracks pipeline health and raises alerts so that issues can be addressed immediately.

5. Comprehensive Security and Access Control

Modern cloud data warehouses apply automated security measures such as encryption, identity management, and compliance monitoring. Moreover, role-based access control protects data while keeping distributed teams productive.

These operational dynamics reinforce one another, producing largely self-managing data platforms that deliver stable performance with minimal administrative overhead.

Key Benefits of Cloud Data Warehousing

In today’s business environment, intelligent use of data is essential to compete. Cloud data warehousing has become one of the most influential tools changing how organizations store, manage, and analyze their data, and it offers many benefits that traditional systems cannot match.

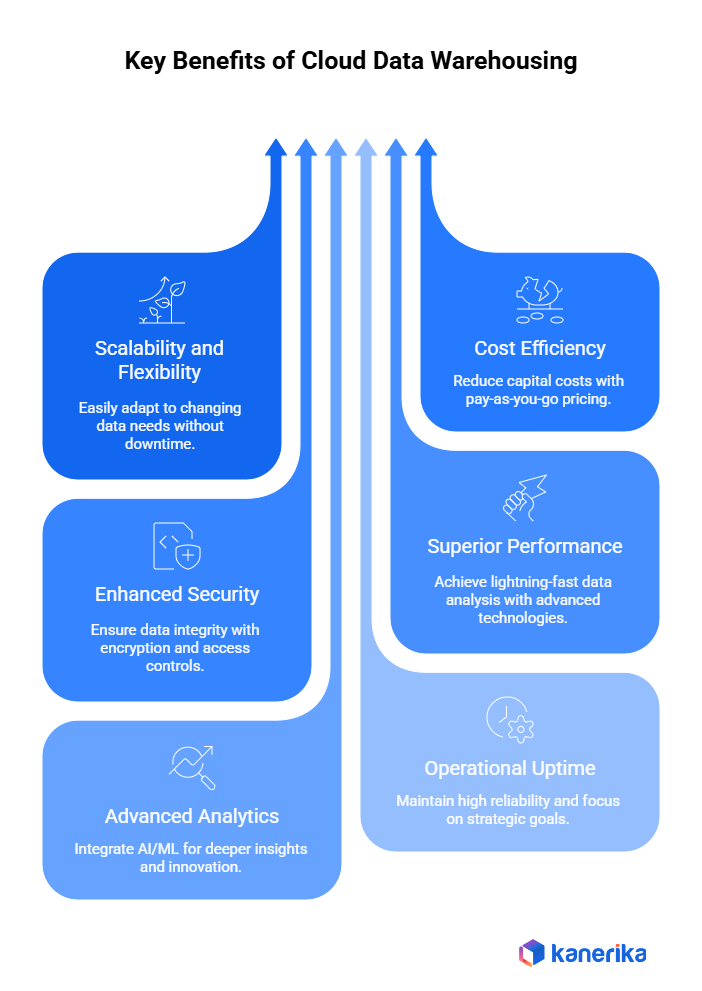

1. Scalability and Flexibility for Organizations

To begin with, cloud data warehouses are extremely scalable. These systems adapt easily as your business grows and data volumes increase. They scale up or down according to your requirements without downtime or expensive hardware purchases, so organizations can respond quickly to market changes or seasonal demand.

2. Cost Efficiency Through Pay-As-You-Go Models

Cloud solutions also eliminate expensive capital expenditure on physical infrastructure. Instead, organizations use a pay-as-you-go pricing model and pay only for what they actually use. Businesses thus lower their upfront capital costs and align ongoing expenditure with actual usage, which can yield substantial operational savings over time.

3. Enhanced Security with Encrypted Storage

Security is a top priority for any data solution. Cloud data warehouses provide strong protection, including encryption of data both at rest and in transit. They also offer role-based access controls and full auditing features. Consequently, organizations can safeguard data integrity and meet demanding regulatory compliance requirements.

4. Superior Performance

Performance is another strength. Cloud platforms use columnar storage, in-memory computing, and parallel processing to deliver fast results. This lets companies run sophisticated analyses efficiently and gain near real-time insights that traditional warehousing solutions struggle to offer.
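
Why columnar storage helps analytical queries can be shown with a toy example. The sketch below uses plain Python lists and made-up order data as stand-ins for on-disk layouts; it illustrates the access pattern only, not any real storage engine.

```python
rows = [
    {"order_id": 1, "region": "EU", "amount": 120.0},
    {"order_id": 2, "region": "US", "amount": 80.0},
    {"order_id": 3, "region": "EU", "amount": 50.0},
]

# Columnar layout: one contiguous list per column instead of one dict per row.
columns = {name: [row[name] for row in rows] for name in rows[0]}

# Row layout: every row object is visited to total a single field.
row_total = sum(row["amount"] for row in rows)

# Columnar layout: only the "amount" column is read.
column_total = sum(columns["amount"])

print(row_total, column_total)
```

Both totals are the same, but the columnar version never touches `order_id` or `region`. On wide tables with many columns, skipping the unused ones is where much of the saving comes from.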

5. Support for Advanced Analytics with AI/ML

These platforms are also well suited to artificial intelligence and machine learning projects. They offer robust data processing features and easy integration with analytical tools. As a result, organizations gain deeper insights and can innovate across their operations.

6. Operational Uptime and Reliability

Cloud providers handle all infrastructure management, upgrades, and maintenance. Businesses therefore get better uptime and reliability with fewer worries about disruption, and teams can devote their time to core activities and strategic goals.

7. Collaborative Efficiency for Teams

A significant advantage of cloud data warehouses is the facilitation of collaboration. With web-based interfaces, these platforms make it easier for teams to access, query, and visualize data collaboratively, leading to faster insights and more informed decision-making.

Cloud Data Warehouse Architecture

Understanding cloud data warehouse architecture helps businesses make smarter infrastructure decisions. This modern design approach separates data systems into distinct layers that work together.

1. Core Components: Storage, Compute, and Metadata

The foundation has three main components. First, the storage layer holds all your data in a scalable format. Second, the compute layer receives queries and runs analytics independently of storage. Third, the metadata layer tracks data definitions, lineage, and quality measures. This separation lets each component scale independently according to your needs.

2. Integration with Data Lakes and Lakehouses

Cloud warehouses do not operate alone. They connect well with data lakes, where raw and unstructured information is stored. Moreover, modern lakehouse designs combine the consistency of warehouses with the flexibility of lakes. This integration creates a unified ecosystem for both structured and unstructured data, so organizations can keep all their information on one platform.

3. ELT and ETL in Cloud Data Warehousing

Conventional ETL (Extract, Transform, Load) processes transform data before loading it. Cloud environments, however, tend to favor ELT (Extract, Load, Transform). With ELT, raw data is loaded first and then transformed inside the warehouse using its compute resources. This approach is often quicker and more agile because the transformations run where processing capacity is greatest.
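
The ELT pattern can be sketched with Python’s built-in `sqlite3` module standing in for a cloud warehouse. The table names (`raw_orders`, `sales_by_region`) and the sample rows are hypothetical; the point is that the raw data lands untouched and the cleanup happens afterwards in SQL, inside the database.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Extract + Load: land the source records untouched in a raw table.
raw_orders = [
    ("1", " eu ", "120.50"),
    ("2", "US", "80.00"),
    ("3", "eu", "49.50"),
]
conn.execute("CREATE TABLE raw_orders (order_id TEXT, region TEXT, amount TEXT)")
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_orders)

# Transform: clean and aggregate inside the database, using its own engine.
conn.execute(
    """
    CREATE TABLE sales_by_region AS
    SELECT UPPER(TRIM(region)) AS region,
           SUM(CAST(amount AS REAL)) AS total_amount
    FROM raw_orders
    GROUP BY UPPER(TRIM(region))
    """
)

result = conn.execute(
    "SELECT region, total_amount FROM sales_by_region ORDER BY region"
).fetchall()
print(result)
```

Keeping `raw_orders` intact means a transformation can be corrected and re-run later without going back to the source systems, which is one reason ELT is considered more agile.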

4. Access Control Layers and Security

Security operates on several levels in cloud designs. The security layer covers encryption, network isolation, and threat detection. Access control mechanisms, meanwhile, define who is allowed to view or edit data. Role-based permissions give employees access only to the information relevant to them, and audit logs record all activity to support compliance audits.
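
Role-based permissions and audit logging fit together naturally, as the following minimal sketch suggests. The roles, table names, and the `is_allowed` helper are hypothetical; production warehouses express the same idea through their own grant statements and system audit views.

```python
from datetime import datetime, timezone

# Hypothetical roles and the (table, action) pairs each may perform.
ROLE_PERMISSIONS = {
    "analyst": {("sales", "read")},
    "finance": {("sales", "read"), ("ledger", "read"), ("ledger", "write")},
}

audit_log = []


def is_allowed(role, table, action):
    """Check the request against the role's grants and record the decision."""
    allowed = (table, action) in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "role": role,
        "table": table,
        "action": action,
        "allowed": allowed,
    })
    return allowed


print(is_allowed("analyst", "sales", "read"))
print(is_allowed("analyst", "ledger", "write"))
```

The first call is permitted and the second is denied. Both decisions are written to `audit_log`, so denied attempts remain visible to a later compliance review.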

5. Multi-Cluster and Auto-Scaling

Intelligent scaling is built into modern cloud warehouses. Auto-scaling automatically adds compute resources during peak demand and removes them when demand is low. Multi-cluster configurations let different teams run workloads concurrently without affecting one another, so marketing can build reports while finance processes month-end data without delay. Together, these capabilities optimize both performance and cost.


Challenges in Cloud Data Warehousing

Despite these benefits, organizations face a number of challenges when implementing and operating a cloud data warehouse. Understanding these obstacles helps teams devise workable solutions.

1. Data Migration Complexity

Migrating existing data to the cloud is a technical challenge. Legacy systems tend to use a variety of formats and structures, and large datasets take time to transfer. Migrations should be planned carefully to prevent data loss and business disruption. Many companies migrate in stages to minimize risk.

2. Cost Management and Optimization

Without proper management, cloud costs can easily spiral out of control. The pay-as-you-go model must be monitored to avoid unnecessary spending. Idle resources, inefficient queries, and redundant data storage all inflate the bill. Companies should therefore adopt transparent cost optimization measures and review their cloud spending patterns frequently.

3. Data Governance and Compliance

Governing data in cloud settings is tricky. Organizations have to track data lineage and enforce quality standards and policies consistently. Compliance requirements also vary by industry and region. Meeting regulations and standards such as GDPR, HIPAA, or SOC 2 requires strong controls and documentation. Failure to comply may lead to heavy fines and reputational damage.

4. Performance Tuning

Achieving the best performance takes continuous effort. Poorly designed queries slow down the entire system, and inappropriate indexing or partitioning schemes waste compute resources. Teams should monitor performance metrics constantly and adjust accordingly. This requires specialized skills that many organizations lack in-house.
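
To see why the partitioning scheme matters, consider a toy model of partition pruning. The event data and the `total_for_day` helper below are hypothetical; the sketch only shows that a query filtering on the partition key can skip every partition that cannot match.

```python
from collections import defaultdict

events = [
    {"day": "2025-01-01", "amount": 10.0},
    {"day": "2025-01-01", "amount": 15.0},
    {"day": "2025-01-02", "amount": 7.5},
    {"day": "2025-01-03", "amount": 30.0},
]

# Partition the table by day, as a date-partitioned warehouse table would be.
partitions = defaultdict(list)
for event in events:
    partitions[event["day"]].append(event)


def total_for_day(day):
    """Scan only the matching partition; return (total, rows_scanned)."""
    scanned = partitions.get(day, [])
    return sum(e["amount"] for e in scanned), len(scanned)


print(total_for_day("2025-01-01"))
```

The query for a single day scans two rows rather than all four. A table partitioned on a column that queries rarely filter by would gain nothing from this, which is the kind of mismatch performance tuning looks for.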

5. Vendor Lock-In Concerns

Changing cloud providers is difficult. Each platform has proprietary tools and formats that are not easily transferable, so companies become dependent on particular vendors, which limits their options. High migration costs and technical complexity make switching providers highly challenging. Companies should therefore weigh the implications of a long-term vendor relationship carefully before investing in a specific platform.

Best Practices for Implementing a Cloud Data Warehouse

Implementing a cloud data warehouse effectively requires careful planning. Companies that follow established best practices deploy faster, achieve stronger performance, and see higher user adoption, while avoiding the many pitfalls that plague such projects.

1. Establish Specific Business Objectives

Begin with concrete business use cases rather than technical requirements. Identify which analytics processes, reporting requirements, and data sources can deliver value quickly. Then prioritize use cases by business impact and implementation complexity to achieve early wins.

2. Plan for Long-Term Growth

Design the cloud data warehouse architecture for scale, with a governance approach in place from the very beginning. Define data quality standards, access controls, and naming conventions before loading production data. Also deploy role-based security structures that support organizational growth and compliance needs.

3. Build Robust Data Pipelines

Set up data ingestion and validation mechanisms to keep data flows consistent and dependable. Next, build error handling and data quality checks into all pipelines to catch errors before they affect business users. In addition, put automated tests in place that regularly verify data accuracy and completeness.
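
A data quality gate of this kind can be as simple as the sketch below. The field names (`customer_id`, `amount`), the rules, and the `validate` and `run_checks` helpers are hypothetical examples; a real pipeline would encode whatever rules the business data actually requires.

```python
def validate(record):
    """Return a list of problems found in one record (empty means valid)."""
    problems = []
    if not record.get("customer_id"):
        problems.append("missing customer_id")
    amount = record.get("amount")
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        problems.append("amount is not a number")
    elif amount < 0:
        problems.append("amount is negative")
    return problems


def run_checks(records):
    """Split a batch into loadable rows and quarantined rows with reasons."""
    clean, quarantined = [], []
    for record in records:
        problems = validate(record)
        if problems:
            quarantined.append((record, problems))
        else:
            clean.append(record)
    return clean, quarantined


batch = [
    {"customer_id": "c1", "amount": 10.0},
    {"customer_id": "", "amount": 5},
    {"customer_id": "c3", "amount": -1},
    {"customer_id": "c4", "amount": "12"},
]
clean, quarantined = run_checks(batch)
print(len(clean), len(quarantined))
```

Quarantining bad rows together with the reason they failed, rather than silently dropping them, lets the rest of the batch load while leaving a trail someone can follow up on.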

4. Create a Culture of Ongoing Optimization

Track performance metrics and costs continuously to find optimization opportunities. Set up automated notifications for degrading query performance and unexpected cost increases. In addition, periodically analyze consumption patterns to right-size resources and eliminate avoidable costs.
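
An unexpected cost increase can be detected by comparing the latest day against a trailing baseline. The `cost_alert` function, the seven-day window, and the 1.5x threshold below are assumptions chosen for illustration, not recommended values.

```python
from statistics import mean


def cost_alert(daily_costs, window=7, threshold=1.5):
    """Flag the latest day if it exceeds threshold x the trailing average."""
    if len(daily_costs) <= window:
        return False  # not enough history to form a baseline
    baseline = mean(daily_costs[-window - 1:-1])  # the window before today
    return daily_costs[-1] > threshold * baseline


steady = [100, 100, 100, 100, 100, 100, 100, 110]
spike = [100, 100, 100, 100, 100, 100, 100, 220]
print(cost_alert(steady), cost_alert(spike))
```

The steady series does not trigger an alert, while the day that more than doubles the baseline does. The same comparison could be applied to query latency instead of cost.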

5. Prioritize Security and Compliance

Establish thorough security and compliance standards in the initial stages of implementation. Set up encryption, access controls, and audit logging in line with industry requirements and regulatory provisions. In addition, define data classification guidelines to safeguard confidential data while allowing legitimate access.

Selecting the Right Cloud Data Warehouse Solution

Here’s a comparative table for the major cloud data warehouse platforms:

| Feature/Platform | Amazon Redshift | Azure Synapse Analytics | Google BigQuery | Snowflake |
| --- | --- | --- | --- | --- |
| Best For | Big data warehousing | Enterprise data warehousing | Cost-effective storage for large volumes with infrequent queries | Cloud-agnostic data warehousing |
| Key Features | SQL querying of exabytes of data, Federated querying, Integration with Amazon EMR and ML services | Integration with 90+ data sources, Advanced indexing and query performance, Integration with Azure Machine Learning | Exabyte-scale storage, More than 100 data source connectors, Federated querying support | Separate scaling of storage and compute resources, Support for multiple cloud vendors, Automated database maintenance |
| Pricing Model | On-demand, Reserved instances | On-demand, Reserved instances | Storage based, Pay-per-query | On-demand, Pre-purchase |
| Storage Costs | Separate charges for RA3 node type | $23/TB/month | $0.02/GB/month (active), $0.01/GB/month (long-term) | Separate billing of storage and compute |
| Scalability | Handles terabytes to petabytes of data | Ideal for data more than 1TB, Supports billion-row tables | Suitable for large-scale data storage | Scales without affecting performance |
| Suitability | Companies dealing with exabytes of data, Advanced querying needs | Large enterprises, Extensive data integration needs | Storing vast data cost-effectively, Variable querying needs | Flexibility in cloud provider choice, Balance of performance and cost efficiency |
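
The storage rates in the table above can be turned into a rough monthly estimate. This is a back-of-the-envelope sketch: it covers storage only and ignores compute and query charges, it assumes decimal terabytes, and the rates are simply those quoted in the table, which may not match current list prices. The function names are hypothetical.

```python
GB_PER_TB = 1000  # assumption: decimal terabytes; some bills use 1024

# Rates from the table above, in US cents to avoid floating-point drift.
BIGQUERY_ACTIVE_CENTS_PER_GB = 2     # $0.02/GB/month
BIGQUERY_LONG_TERM_CENTS_PER_GB = 1  # $0.01/GB/month
SYNAPSE_CENTS_PER_TB = 2300          # $23/TB/month


def bigquery_storage_usd(active_tb, long_term_tb):
    """Monthly BigQuery storage estimate in US dollars."""
    cents = (active_tb * GB_PER_TB * BIGQUERY_ACTIVE_CENTS_PER_GB
             + long_term_tb * GB_PER_TB * BIGQUERY_LONG_TERM_CENTS_PER_GB)
    return cents / 100


def synapse_storage_usd(stored_tb):
    """Monthly Azure Synapse storage estimate in US dollars."""
    return stored_tb * SYNAPSE_CENTS_PER_TB / 100


# 10 TB in total, of which 6 TB qualifies for the long-term rate.
print(bigquery_storage_usd(active_tb=4, long_term_tb=6))
print(synapse_storage_usd(stored_tb=10))
```

For this hypothetical 10 TB footprint the estimates come to $140 and $230 per month respectively. Storage is usually the smaller part of the bill, so a comparison like this should not drive platform choice on its own.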

Case Study: Healthcare Giant Streamlines Analytics with Snowflake and Power BI

Challenge: A leading healthcare organization, grappling with a complex and fragmented data landscape, sought help extracting actionable insights from their extensive patient data. The data, scattered across numerous departments and geographical locations, presented a significant hurdle to effective decision-making and the enhancement of patient care.

Solution: Kanerika helped the organization embark on a data modernization project, implementing a two-pronged approach:

- Centralized Data Management with Snowflake: We adopted Snowflake’s cloud-based data warehouse solution. Snowflake’s ability to handle massive datasets and its inherent scalability made it ideal for consolidating data from disparate sources.

- Empowering Data Visualization with Power BI: Once the data was centralized in Snowflake, we leveraged Power BI, a business intelligence tool, to gain insights from the unified data set.

Results:

By leveraging Snowflake and Power BI together, Kanerika helped the healthcare organization achieve significant improvements:

- Enhanced Patient Care: Having a holistic view of patient data allowed for improved care coordination and the development of more personalized treatment plans.

- Faster and More Accurate Insights: Snowflake’s unified data platform and Power BI’s user-friendly interface enabled faster and more accurate analysis of patient data.

- Improved Decision-Making: Data-driven insights from Power BI empowered healthcare professionals to make better decisions regarding patient care, resource allocation, and overall healthcare strategy.

Kanerika: Your Trusted Partner for Cloud Data Warehouse Solutions

Kanerika empowers businesses to unlock the full potential of their data through expert cloud data warehouse consulting and implementation services. As a leading data analytics firm, we help organizations modernize legacy systems and build scalable, cost-effective cloud-based data warehouses that drive real business value.

Our team specializes in implementing industry-leading platforms including Snowflake, Microsoft Fabric, and Databricks. Moreover, we guide you through every step from migration planning to optimization. With proven expertise across healthcare, retail, manufacturing, and financial services, we design solutions tailored to your unique needs.

Furthermore, our services include data integration, real-time analytics, security implementation, and performance optimization. We leverage our proprietary frameworks to ensure smooth transitions with minimal disruption. Additionally, our experts help reduce infrastructure costs by 40-60% while improving query performance and data accessibility.

Whether you’re moving from on-premises systems or consolidating multiple data sources, Kanerika delivers reliable, secure, and future-ready cloud data warehouse solutions that transform how you work with data.

FAQs

What is a cloud data warehouse?

A cloud data warehouse is a managed data storage and analytics platform hosted on cloud infrastructure that centralizes structured data from multiple sources for reporting and business intelligence. Unlike traditional on-premises data warehouses, cloud-based solutions offer elastic scalability, pay-as-you-go pricing, and reduced maintenance overhead. Enterprises use platforms like Snowflake, Databricks, and Microsoft Fabric to store petabytes of data while enabling fast SQL queries. Kanerika helps organizations architect and migrate to modern cloud data warehouse solutions that align with their analytics goals—connect with our team to explore your options.

What is the best cloud data warehouse?

The best cloud data warehouse depends on your workload, existing ecosystem, and budget. Snowflake excels in multi-cloud flexibility and workload isolation. Databricks suits organizations prioritizing unified analytics and machine learning on a Lakehouse architecture. Microsoft Fabric integrates seamlessly with Power BI and Azure services for end-to-end analytics. Google BigQuery delivers serverless simplicity, while Amazon Redshift fits AWS-centric enterprises. Evaluating query performance, concurrency, and total cost of ownership is critical before selecting a platform. Kanerika’s data platform experts assess your requirements and recommend the ideal cloud data warehouse—schedule a free consultation today.

Is Snowflake a cloud data warehouse?

Snowflake is a fully managed cloud data warehouse built natively for the cloud with support across AWS, Azure, and Google Cloud. It separates compute from storage, allowing independent scaling and cost optimization. Snowflake handles structured and semi-structured data, making it versatile for analytics, data sharing, and application development. Its architecture eliminates infrastructure management, enabling teams to focus on insights rather than maintenance. Organizations choosing Snowflake benefit from near-unlimited concurrency and consumption-based pricing. Kanerika delivers Snowflake implementation and data migration services—reach out to accelerate your cloud data warehouse deployment.

How much does a cloud data warehouse cost?

Cloud data warehouse costs vary based on storage volume, compute usage, and platform choice. Snowflake and Databricks use consumption-based pricing where you pay for credits or processing units. Redshift and BigQuery offer reserved capacity and on-demand models. Typical enterprise deployments range from a few hundred dollars monthly for small workloads to hundreds of thousands annually at scale. Hidden costs include data egress, concurrency spikes, and third-party integrations. Accurate cost modeling requires analyzing query patterns and growth projections. Kanerika’s migration ROI calculator helps enterprises estimate cloud data warehouse expenses—request your assessment now.

What is the difference between cloud data warehouse and cloud data lake?

A cloud data warehouse stores structured, processed data optimized for SQL queries and business intelligence, while a cloud data lake holds raw, unstructured, and semi-structured data at scale for diverse analytics workloads. Data warehouses enforce schemas on write for fast reporting; data lakes use schema-on-read for flexibility. Modern Lakehouse architectures on platforms like Databricks and Microsoft Fabric combine both, delivering structured query performance with data lake economics. Choosing between them depends on your analytics maturity and use cases. Kanerika guides enterprises in designing unified data platforms—contact us to build your optimal architecture.

What are the 4 components of a data warehouse?

The four core components of a data warehouse are the data source layer, ETL processes, the central repository, and the analytics or presentation layer. Data sources feed raw information from operational systems. ETL extracts, transforms, and loads data into the warehouse. The central repository stores cleansed, integrated data in a structured format. The analytics layer enables reporting, dashboards, and business intelligence queries. Modern cloud data warehouses bundle these components with managed services for simplified operations. Kanerika designs data warehouse architectures that integrate these components seamlessly—let us help you modernize your data infrastructure.

What is the difference between a data warehouse and a database?

A database is designed for transactional operations with fast reads and writes for day-to-day applications, while a data warehouse aggregates historical data from multiple databases for analytical queries and reporting. Databases use normalized schemas to minimize redundancy; data warehouses employ denormalized or star schemas for query performance. Databases handle OLTP workloads; data warehouses support OLAP workloads with complex joins and aggregations. Cloud data warehouses like Snowflake and Redshift scale analytics independently from production databases. Kanerika helps enterprises integrate databases with modern cloud data warehouse platforms—talk to us about your data strategy.

Is Snowflake a database or ETL tool?

Snowflake is a cloud data warehouse, not an ETL tool. It functions as an analytical database optimized for storing and querying large datasets across structured and semi-structured formats. Snowflake supports data transformation using SQL, Snowpark, and stored procedures, but it does not replace dedicated ETL platforms like Informatica, Talend, or Azure Data Factory. Organizations typically pair Snowflake with ETL or ELT tools to ingest and transform data before analysis. Snowflake’s architecture separates storage and compute for elastic scalability. Kanerika implements Snowflake alongside modern ETL pipelines—reach out to streamline your data integration.

What is the 3-tier architecture of a data warehouse?

The 3-tier architecture of a data warehouse includes the bottom tier for data storage, the middle tier for the OLAP server, and the top tier for front-end analytics tools. The bottom tier contains the relational database or cloud data warehouse storing integrated data. The middle tier processes multidimensional queries using OLAP engines for fast aggregation. The top tier presents insights through dashboards, reports, and BI applications like Power BI. This separation enables scalability and optimized performance at each layer. Kanerika architects cloud data warehouse solutions using proven multi-tier designs—schedule a consultation to discuss your requirements.

What is the difference between cloud computing and data warehousing?

Cloud computing is the delivery of computing resources like servers, storage, and networking over the internet, while data warehousing is a discipline focused on consolidating data for analytics and reporting. A cloud data warehouse combines both, running data warehouse workloads on cloud infrastructure for scalability and cost efficiency. Cloud computing enables services like IaaS, PaaS, and SaaS; data warehousing provides structured repositories for business intelligence. Platforms like Snowflake and Databricks are cloud data warehouses that leverage cloud computing foundations. Kanerika helps enterprises leverage cloud computing for modern data warehousing—contact us to begin your transformation.

Is Snowflake better than Redshift?

Snowflake and Redshift both deliver enterprise-grade cloud data warehouse capabilities, but differ in architecture and flexibility. Snowflake separates compute and storage completely, enabling independent scaling and multi-cloud deployment across AWS, Azure, and GCP. Redshift ties closely to the AWS ecosystem with provisioned clusters and newer serverless options. Snowflake handles concurrency better out of the box; Redshift offers deeper AWS integration and cost advantages for committed usage. The better choice depends on your cloud strategy, workload patterns, and existing tooling. Kanerika evaluates both platforms against your needs—request a comparative assessment today.

What is the difference between a data warehouse and big data?

A data warehouse is a structured repository optimized for SQL-based analytics and business intelligence, while big data refers to massive, varied datasets that require distributed processing technologies. Data warehouses handle structured data with defined schemas; big data encompasses structured, semi-structured, and unstructured data at petabyte scale. Big data platforms like Hadoop and Spark process raw data, often feeding refined outputs into cloud data warehouses for reporting. Modern Lakehouse solutions blur this boundary by unifying big data processing with warehouse query performance. Kanerika integrates big data pipelines with cloud data warehouse platforms—let us design your unified architecture.

What are the 4 pillars of a data warehouse?

The four pillars of a data warehouse are subject orientation, integration, time variance, and non-volatility. Subject orientation means data is organized around business subjects like customers or sales rather than applications. Integration ensures data from diverse sources is cleansed and unified. Time variance captures historical data to support trend analysis. Non-volatility means data remains stable once loaded, supporting consistent reporting. Cloud data warehouses maintain these principles while adding scalability, elasticity, and managed infrastructure benefits. Kanerika builds cloud data warehouse solutions grounded in these foundational pillars—connect with us to strengthen your analytics foundation.
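Time variance and non-volatility can be illustrated with a small sketch. It uses Python's built-in `sqlite3` module as a stand-in for a warehouse engine, and the `customer_history` table, its columns, and both helper functions are hypothetical names chosen for illustration. Each load appends dated rows and never updates earlier ones, so a question such as "what segment was this customer in on a given date?" stays answerable.

```python
import sqlite3


def load_snapshot(conn, load_date, rows):
    """Append one snapshot; rows that were loaded earlier are never modified."""
    conn.executemany(
        "INSERT INTO customer_history (customer_id, segment, load_date) "
        "VALUES (?, ?, ?)",
        [(customer_id, segment, load_date) for customer_id, segment in rows],
    )
    conn.commit()


def segment_as_of(conn, customer_id, as_of_date):
    """Return the segment recorded on or before as_of_date, or None."""
    row = conn.execute(
        "SELECT segment FROM customer_history "
        "WHERE customer_id = ? AND load_date <= ? "
        "ORDER BY load_date DESC LIMIT 1",
        (customer_id, as_of_date),
    ).fetchone()
    return row[0] if row else None


# In-memory database used purely for illustration.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customer_history "
    "(customer_id INTEGER, segment TEXT, load_date TEXT)"
)

# Two loads for the same customer: history is kept, not overwritten.
load_snapshot(conn, "2024-01-01", [(1, "trial")])
load_snapshot(conn, "2024-02-01", [(1, "enterprise")])
```

Because dates are stored as ISO-formatted strings, plain string comparison orders them correctly. A production warehouse would typically use a native date type and a slowly changing dimension design instead.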

What is the difference between a data store and a data warehouse?

A data store is any repository that holds data, including databases, file systems, and object storage, while a data warehouse is a specialized analytical data store designed for reporting and business intelligence. Data stores serve varied purposes from transactional processing to archival storage. Data warehouses specifically consolidate, transform, and structure data for complex queries and historical analysis. Cloud data warehouses like Snowflake and BigQuery are purpose-built analytical data stores with optimized columnar storage and query engines. Kanerika helps enterprises migrate from disparate data stores to unified cloud data warehouse platforms—reach out to simplify your data landscape.

Is Google Cloud Storage a data warehouse?

Google Cloud Storage is not a data warehouse; it is an object storage service for storing unstructured files like images, backups, and raw data. BigQuery is Google Cloud’s data warehouse, purpose-built for large-scale SQL analytics with serverless architecture and columnar storage. Cloud Storage often serves as a data lake staging area that feeds into BigQuery for analysis. Understanding this distinction helps enterprises architect appropriate solutions for storage versus analytics workloads. Kanerika designs data architectures integrating Google Cloud Storage with BigQuery and other cloud data warehouse platforms. Contact us to optimize your Google Cloud analytics stack.

Is AWS a data warehouse?

AWS is not a data warehouse itself; it is a cloud platform offering various services including Amazon Redshift, which is AWS’s cloud data warehouse solution. Redshift provides petabyte-scale data warehousing with columnar storage, massively parallel processing, and integration with the broader AWS ecosystem. AWS also offers Redshift Serverless for automatic scaling without cluster management. Other AWS services like S3 and Athena complement Redshift for data lakes and ad-hoc querying. Selecting the right combination depends on your analytics requirements and existing infrastructure. Kanerika implements and optimizes Redshift-based cloud data warehouse solutions—talk to our AWS specialists today.

What is the Gold layer of data?

The Gold layer is the final, business-ready data tier in a medallion architecture, a pattern commonly used in lakehouse and cloud data warehouse environments. Raw data enters the Bronze layer, undergoes cleansing in the Silver layer, and reaches the Gold layer as curated, aggregated datasets optimized for reporting and analytics. Gold layer tables follow business logic, enforce data quality standards, and serve dashboards, machine learning models, and downstream applications. Platforms like Databricks and Microsoft Fabric implement this pattern for scalable data governance. Kanerika architects medallion-based data pipelines on modern cloud data warehouse platforms. Let us structure your data for maximum value.
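The Bronze, Silver, and Gold flow can be sketched in a few lines of plain Python. This is a minimal illustration, not how any particular platform implements the pattern: the record fields (`order_id`, `region`, `amount`) and the functions `to_silver` and `to_gold` are hypothetical, and a real pipeline would run on tables rather than in-memory lists.

```python
from collections import defaultdict


def to_silver(bronze_rows):
    """Cleanse raw records: drop incomplete rows and duplicates, normalize values."""
    silver = []
    seen_order_ids = set()
    for row in bronze_rows:
        order_id = row.get("order_id")
        region = row.get("region")
        amount = row.get("amount")
        if order_id is None or not region or amount in (None, ""):
            continue  # skip records that are missing a required field
        if order_id in seen_order_ids:
            continue  # skip duplicate orders
        seen_order_ids.add(order_id)
        silver.append(
            {
                "order_id": order_id,
                "region": region.strip().lower(),
                "amount": float(amount),
            }
        )
    return silver


def to_gold(silver_rows):
    """Aggregate cleansed records into a business-ready view: revenue per region."""
    totals = defaultdict(float)
    for row in silver_rows:
        totals[row["region"]] += row["amount"]
    return dict(totals)


# Bronze: raw data exactly as it arrived, including a duplicate and a bad row.
bronze = [
    {"order_id": 1, "region": " EMEA ", "amount": "100.50"},
    {"order_id": 1, "region": " EMEA ", "amount": "100.50"},
    {"order_id": 2, "region": "emea", "amount": "49.50"},
    {"order_id": 3, "region": "APAC", "amount": None},
    {"order_id": 4, "region": "apac", "amount": "20"},
]

gold = to_gold(to_silver(bronze))
print(gold)
```

Keeping the Bronze data untouched means the Silver and Gold layers can be rebuilt whenever cleansing rules or business logic change.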

Can ETL be done in Snowflake?

ETL can be performed in Snowflake using SQL transformations, stored procedures, streams, tasks, and Snowpark for Python-based processing. However, Snowflake primarily functions as an ELT platform where raw data loads first and transforms inside the warehouse using its scalable compute. This approach leverages Snowflake’s processing power rather than external ETL servers. For complex orchestration, enterprises often integrate Snowflake with tools like dbt, Informatica, or Azure Data Factory. Native features like dynamic tables further simplify transformation workflows. Kanerika builds efficient ELT pipelines on Snowflake tailored to enterprise requirements—connect with us to optimize your data transformation strategy.
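The ELT ordering described above (load raw data first, then transform inside the engine) can be sketched as follows. The example deliberately uses Python's built-in `sqlite3` module as a stand-in for the warehouse rather than any Snowflake API, and the table names `raw_events` and `user_revenue` are hypothetical. The point is only the sequence: raw rows land unchanged, and the transformation is a SQL statement executed by the database itself.

```python
import sqlite3


def extract_and_load(conn, raw_events):
    """Load step: land the raw rows as-is, with no cleaning or typing."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS raw_events "
        "(user_id TEXT, event TEXT, amount TEXT)"
    )
    conn.executemany("INSERT INTO raw_events VALUES (?, ?, ?)", raw_events)
    conn.commit()


def transform(conn):
    """Transform step: runs inside the database engine, using its own compute."""
    conn.execute("DROP TABLE IF EXISTS user_revenue")
    conn.execute(
        "CREATE TABLE user_revenue AS "
        "SELECT user_id, SUM(CAST(amount AS REAL)) AS revenue "
        "FROM raw_events "
        "WHERE event = 'purchase' "
        "GROUP BY user_id"
    )
    conn.commit()


# In-memory database used purely for illustration.
conn = sqlite3.connect(":memory:")
extract_and_load(
    conn,
    [
        ("u1", "purchase", "10.0"),
        ("u1", "view", "0"),
        ("u2", "purchase", "5.5"),
        ("u1", "purchase", "2.5"),
    ],
)
transform(conn)

result = dict(conn.execute("SELECT user_id, revenue FROM user_revenue").fetchall())
print(result)
```

In a traditional ETL flow, the casting and filtering would instead happen in an external process before the insert, so only the transformed rows would ever reach the warehouse.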