Snowflake Cortex stopped being a Snowflake-only product on February 23, 2026. That was the day Snowflake announced that Cortex Code CLI would be available as a standalone monthly subscription, without requiring customers to run workloads on Snowflake. It also extended support to dbt and Apache Airflow, the two tools most data engineers live inside every day.

For buyers evaluating Cortex, this changes the pitch. Part of the AI layer now works without Snowflake consumption, which makes the product easier to adopt and harder to compare against Databricks or Microsoft Fabric. In this article, we’ll cover what Cortex includes, which product fits which job, where bills go wrong, and where accuracy breaks in production.

Key Takeaways

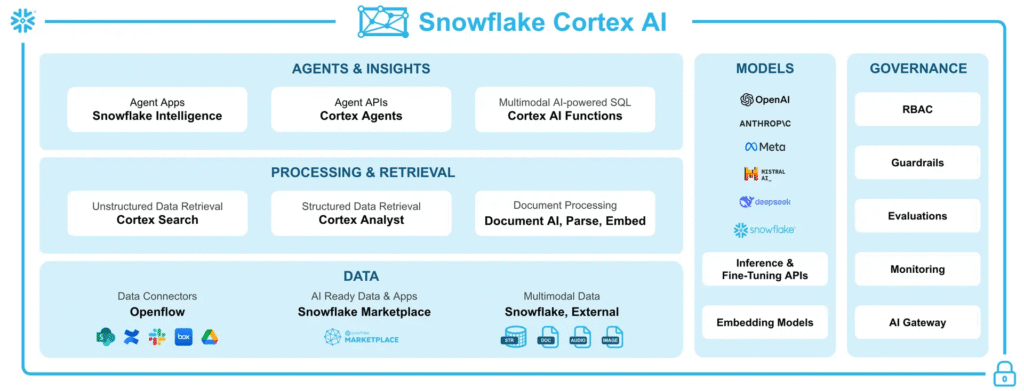

- Snowflake Cortex is now a product line of five components: Cortex Code, Cortex Analyst, Cortex Search, AISQL functions, and Cortex Agents.

- The February 23, 2026 standalone subscription decouples Cortex Code from Snowflake consumption, a first for the vendor.

- Cortex bills on tokens and serving compute, so standard warehouse cost monitors do not apply.

- Cortex Analyst accuracy depends on a clean semantic view more than model choice.

- Cortex beats Databricks and Fabric when data already lives in Snowflake, and the opposite is true when it does not.

What Is Snowflake Cortex?

Snowflake Cortex is the AI layer inside the Snowflake Data Cloud. It gives enterprises a way to run LLM calls, text-to-SQL queries, semantic search, and agentic workflows directly against Snowflake data without sending that data to external APIs.

Cortex ships as SQL functions and REST APIs that invoke models from OpenAI, Anthropic, Meta, Mistral, DeepSeek, and Snowflake’s own Arctic family. The compute runs inside Snowflake’s security perimeter, so prompts, responses, and source data stay inside the governance boundary.

At a high level, Cortex handles four kinds of work:

- Text-to-SQL for business users asking questions in plain English

- RAG and semantic search across structured and unstructured data

- Inline AI calls for summarization, classification, extraction, and translation inside regular SQL queries

- Multi-step agentic workflows that combine structured and unstructured sources

Snowflake Cortex After the Feb 2026 Reset

Cortex started in 2023 as a set of SQL-callable LLM functions. By early 2026 it had grown into five distinct products and a standalone subscription, with native access to OpenAI GPT-5.2 and Claude Opus 4.6 following the $200 million Snowflake and OpenAI partnership announced on February 2, 2026.

Cortex now competes as a full product line. The February 23 announcement was the structural change. Before that date, buying Cortex meant buying Snowflake. Now a developer at an enterprise that runs pipelines in dbt and Airflow can subscribe to Cortex Code CLI and use it without a Snowflake account.

1. Cortex is not just an add-on anymore

Through 2024 and 2025, Cortex was packaged within Snowflake credits. That positioning has shifted: Snowflake now treats Cortex as a standalone revenue stream with its own subscription model, and partners such as Kanerika report that Cortex increasingly comes up in consulting discussions, even in deals without an existing Snowflake footprint.

2. Five components under the Cortex umbrella today

Cortex contains five products in 2026, each with a different user and use case.

- Cortex Code (CLI and Snowsight surface). AI coding agent for SQL, Python, pipeline design, and account admin tasks.

- Cortex Analyst. Text-to-SQL for business users, grounded in a semantic view.

- Cortex Search. Managed hybrid vector and keyword search for RAG pipelines.

- AISQL functions. Inline AI calls such as AI_COMPLETE, AI_CLASSIFY, and AI_EXTRACT inside SQL queries.

- Cortex Agents. Multi-step agentic workflows that combine structured and unstructured data.

Which Cortex Product Fits Your Enterprise

Most enterprises pick the wrong Cortex product on the first try. The products look similar on the docs page, but each solves a different problem. The decision starts with who is asking the question and which data they are asking about.

| Product | Best for | Primary user | Data type |
| --- | --- | --- | --- |
| Cortex Code | Generating SQL and Python, fixing broken pipelines | Data engineers and analysts | Code and metadata |
| Cortex Analyst | Natural language questions against business metrics | Business users | Structured |
| Cortex Search | RAG, document lookup, customer support bots | App developers | Unstructured |
| AISQL functions | Inline enrichment, classification, extraction at scale | Data engineers | Structured and text |
| Cortex Agents | Multi-step workflows across tools and data types | Application developers | Mixed |

The matrix clears up most of the confusion enterprises hit in the first two weeks of evaluation. It does not solve the harder question, which is where each product hits its accuracy and cost limits. That comes in the next two sections.

1. Cortex Code for developers

Cortex Code is the agent that understands the Snowflake account it runs in. It reads schema, permissions, warehouse history, and governance policies, then writes code against that context. The Feb 2026 update pushed it into dbt and Airflow, which is where most production pipelines actually live.

2. Cortex Analyst for business

Cortex Analyst turns “what was Q3 revenue in the Northeast” into SQL against a semantic view. The semantic view drives accuracy far more than the LLM choice does. Snowflake’s benchmarks report accuracy above 90%, but enterprises that skip the semantic model and point Cortex at raw tables typically see 60 to 70% in real-world use.
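To make the idea concrete, here is a minimal Python sketch of what a semantic layer contributes: one governed SQL expression per business term, a list of synonyms, and a refusal when a term has no definition. The `Metric` class, the `order_lines` table, and its columns are hypothetical, and this is not Snowflake’s semantic view format. It only illustrates why a governed definition constrains what a model can generate.

```python
"""Toy semantic layer: one governed SQL expression per business term.

Hypothetical structure for illustration; this is not Snowflake's semantic
view syntax, and the table and column names are invented.
"""
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    sql: str  # the single governed expression for this metric
    description: str


# Hypothetical governed definitions and the synonyms users actually type.
METRICS = {
    "revenue": Metric(
        "revenue",
        "SUM(order_lines.net_amount)",
        "Net revenue after discounts and returns",
    ),
}
SYNONYMS = {"sales": "revenue", "net sales": "revenue"}


def resolve(term: str) -> Metric:
    """Map a user's business term to its governed metric, or refuse."""
    key = term.strip().lower()
    key = SYNONYMS.get(key, key)
    if key not in METRICS:
        # Refusing is safer than letting a model guess from raw columns.
        raise KeyError(f"No governed definition for {term!r}")
    return METRICS[key]


def build_query(term: str, region: str, quarter: str) -> str:
    """Assemble SQL from the governed expression.

    Filters are inlined only to keep the sketch short; production code
    should use bind parameters instead of string formatting.
    """
    metric = resolve(term)
    return (
        f"SELECT {metric.sql} AS {metric.name} FROM order_lines "
        f"WHERE region = '{region}' AND fiscal_quarter = '{quarter}'"
    )


if __name__ == "__main__":
    print(build_query("Sales", "Northeast", "Q3"))
```

Asking for “sales” or “revenue” yields the same SQL, while a term such as “churn” raises an error until someone defines it. That refusal is exactly what a raw-schema setup lacks.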

3. Cortex Search for unstructured retrieval and RAG

Cortex Search indexes documents, PDFs, images, and text columns into a hybrid vector plus keyword store. It handles embeddings, chunking, and refresh automatically. The catch is serving cost, covered in the billing section below.
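“Hybrid” means two rankings are combined: one from keyword matching and one from vector similarity. The Python sketch below shows the general idea with reciprocal rank fusion (RRF), a common fusion technique. It is an illustration of hybrid retrieval in general, not a description of how Cortex Search ranks results. The three-dimensional “embeddings” are hand-made stand-ins for a real embedding model.

```python
"""Toy hybrid retrieval: keyword overlap plus vector cosine similarity,
merged with reciprocal rank fusion (RRF).

RRF is used for illustration only; it is not Cortex Search's internal
ranking. The vectors are hand-made stand-ins for real embeddings.
"""
import math
from collections import Counter


def keyword_scores(query: str, docs: dict[str, str]) -> dict[str, int]:
    """Count query words that appear in each document (a crude BM25 stand-in)."""
    terms = set(query.lower().split())
    return {doc_id: len(terms & set(text.lower().split())) for doc_id, text in docs.items()}


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank(scores: dict) -> list[str]:
    """Order ids by descending score, breaking ties by id for determinism."""
    return [doc_id for doc_id, _ in sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))]


def rrf(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Reciprocal rank fusion: each ranking contributes 1 / (k + position)."""
    fused: Counter = Counter()
    for ranking in rankings:
        for position, doc_id in enumerate(ranking, start=1):
            fused[doc_id] += 1.0 / (k + position)
    return rank(fused)


def hybrid_search(query: str, query_vec: list[float],
                  docs: dict[str, str], doc_vecs: dict[str, list[float]]) -> list[str]:
    keyword_rank = rank(keyword_scores(query, docs))
    vector_rank = rank({d: cosine(query_vec, v) for d, v in doc_vecs.items()})
    return rrf([keyword_rank, vector_rank])


if __name__ == "__main__":
    docs = {
        "a": "refund policy for damaged or broken items",
        "b": "shipping times for international orders",
        "c": "product catalog overview",
    }
    # Hand-made vectors over (returns, shipping, damage) "topics".
    doc_vecs = {"a": [0.9, 0.0, 1.0], "b": [0.0, 1.0, 0.0], "c": [0.2, 0.1, 0.0]}
    print(hybrid_search("refund broken product", [1.0, 0.0, 1.0], docs, doc_vecs))
```

Fusing ranks rather than raw scores sidesteps the fact that keyword counts and cosine similarities live on different scales.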

4. AISQL functions for inline AI inside queries

Functions like AI_COMPLETE, AI_CLASSIFY, AI_SENTIMENT, and AI_EXTRACT run against entire columns in a SELECT statement. They work well for enrichment jobs, but a poorly scoped query can send millions of tokens through Claude Opus or GPT-5.2 and cost thousands of dollars before anyone notices.
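Because these functions run once per row, token spend scales with row count times tokens per row. The Python sketch below shows that arithmetic. The per-million-token prices are placeholders in USD, not Snowflake list prices (check Snowflake’s published consumption table for actual credit-based rates), and the row and token counts are assumptions you would replace with averages measured on a small sample of the column.

```python
"""Back-of-envelope cost estimate for an AI function applied to a whole column.

All prices are hypothetical placeholders in USD per million tokens; real
Cortex rates are published by Snowflake in credits.
"""
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    input_per_million: float   # USD per 1M input tokens (placeholder)
    output_per_million: float  # USD per 1M output tokens (placeholder)


def estimate_cost(rows: int, avg_input_tokens: int, avg_output_tokens: int,
                  price: ModelPrice) -> float:
    """Every row sends a prompt and receives a completion, so cost is linear in rows."""
    input_tokens = rows * avg_input_tokens
    output_tokens = rows * avg_output_tokens
    return (input_tokens * price.input_per_million
            + output_tokens * price.output_per_million) / 1_000_000


def check_budget(estimate: float, budget: float) -> None:
    """Refuse to launch a job whose estimate exceeds the approved budget."""
    if estimate > budget:
        raise RuntimeError(f"Estimated ${estimate:,.0f} exceeds budget ${budget:,.0f}")


if __name__ == "__main__":
    large = ModelPrice(input_per_million=15.0, output_per_million=75.0)  # hypothetical large-model rates
    small = ModelPrice(input_per_million=0.5, output_per_million=1.5)    # hypothetical small-model rates
    rows, prompt_tokens, completion_tokens = 2_000_000, 400, 100
    print(f"large model: ${estimate_cost(rows, prompt_tokens, completion_tokens, large):,.0f}")  # $27,000
    print(f"small model: ${estimate_cost(rows, prompt_tokens, completion_tokens, small):,.0f}")  # $700
```

With these placeholder numbers, the same two-million-row job costs nearly 40 times more on the larger model, and output tokens make up more than half of that bill even though completions are a quarter the length of prompts.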

5. Cortex Agents for multi-step workflows

Agents combine Cortex Analyst, Cortex Search, and custom tools into workflows that can reason, plan, and act. The Siemens Energy and Nissan deployments referenced in Snowflake documentation are public examples. For most mid-market enterprises, agentic AI implementations start with Analyst and Search before moving to Agents.
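The orchestration pattern can be sketched in a few lines of Python. The tool functions and the keyword-based planner below are hypothetical stand-ins: in Cortex Agents a model does the planning and the tools are real services, whereas here they are stubs that only show how one question can fan out to a structured tool and an unstructured tool.

```python
"""Toy agent loop: plan which tools a question needs, then call them in order.

The planner is a keyword heuristic standing in for an LLM, and both tools
are stubs; none of these names are Snowflake APIs.
"""
from typing import Callable


def analyst_tool(question: str) -> str:
    # Stand-in for a text-to-SQL call against governed metrics.
    return f"[structured] metric answer for: {question}"


def search_tool(question: str) -> str:
    # Stand-in for a retrieval call over documents.
    return f"[unstructured] supporting passages for: {question}"


TOOLS: dict[str, Callable[[str], str]] = {"analyst": analyst_tool, "search": search_tool}

STRUCTURED_HINTS = ("revenue", "total", "count", "average", "how many")
UNSTRUCTURED_HINTS = ("why", "contract", "policy", "document", "ticket")


def plan(question: str) -> list[str]:
    """Return the ordered tool names to call; default to search if unsure."""
    q = question.lower()
    steps = []
    if any(hint in q for hint in STRUCTURED_HINTS):
        steps.append("analyst")
    if any(hint in q for hint in UNSTRUCTURED_HINTS):
        steps.append("search")
    return steps or ["search"]


def run_agent(question: str) -> list[str]:
    """Execute the plan and collect each tool's output for a final answer step."""
    return [TOOLS[name](question) for name in plan(question)]


if __name__ == "__main__":
    for line in run_agent("Why did Q3 revenue drop after the new contract terms?"):
        print(line)
```

A question about a metric alone routes only to the structured tool; a question that also asks “why” pulls in documents, which is the mixed case that needs Agents rather than Analyst alone.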

Why Cortex Bills Are Catching Enterprises Off Guard

Cost is the biggest complaint about Cortex in 2026, with accuracy a distant second. Snowflake’s traditional warehouse-based billing is predictable and controllable through resource monitors, but Cortex bills mostly on tokens, which those monitors were not built to cap. In one documented case from Seemore Data, an enterprise processed 1.18 billion records with AISQL and incurred nearly $5,000 for a single query, driven primarily by token usage rather than compute.

| Service | Cost basis | Hidden charge | Control lever |
| --- | --- | --- | --- |
| Cortex Code | Tokens per turn | Context cache fills on turn 1 | Daily per-user credit limits |
| AISQL functions | Input plus output tokens | Output tokens cost more | Pick smallest model that works |
| Cortex Search | Serving compute per GB plus embedding tokens | Idle serving charges | Suspend services when not in use |
| Cortex Analyst | Tokens plus warehouse for SQL execution | Two billing streams | Dedicated warehouse plus monitoring |
| Document AI | Per page plus warehouse plus storage | Storage of parsed results | Batch processing |

Each Cortex service uses a different billing model. The common mistake is assuming token-based pricing behaves like compute-based pricing: token spend grows with the volume of text processed and the model chosen, not with warehouse size or uptime.
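To see why two billing streams and idle serving matter, the following Python sketch models Cortex Analyst and Cortex Search separately. Every rate is a hypothetical placeholder, and each formula compresses the billing described in the table to one line per stream.

```python
"""Monthly cost sketch for services with more than one billing stream.

Every rate is a hypothetical placeholder; substitute your contracted
credit price and the published per-service rates.
"""


def analyst_monthly(questions: int, tokens_per_question: int,
                    usd_per_million_tokens: float,
                    warehouse_hours: float, usd_per_warehouse_hour: float) -> float:
    """Cortex Analyst: tokens to generate SQL plus warehouse time to run it."""
    token_cost = questions * tokens_per_question * usd_per_million_tokens / 1_000_000
    warehouse_cost = warehouse_hours * usd_per_warehouse_hour
    return token_cost + warehouse_cost


def search_monthly(indexed_gb: float, usd_per_gb_month: float,
                   embedding_tokens: int, usd_per_million_tokens: float,
                   running_fraction: float = 1.0) -> float:
    """Cortex Search: serving charge while the service runs plus embedding tokens.

    running_fraction is the share of the month the service is not suspended;
    an idle but running service still accrues the serving charge.
    """
    serving = indexed_gb * usd_per_gb_month * running_fraction
    embedding = embedding_tokens * usd_per_million_tokens / 1_000_000
    return serving + embedding


if __name__ == "__main__":
    print(analyst_monthly(10_000, 3_000, 2.0, 40, 3.0))              # 60 tokens + 120 warehouse = 180.0
    print(search_monthly(50, 10.0, 0, 0.1))                          # always running: 500.0
    print(search_monthly(50, 10.0, 0, 0.1, running_fraction=0.25))  # suspended 75% of the month: 125.0
```

In this made-up Analyst example, the warehouse stream costs twice as much as the tokens, so a token-only dashboard would miss two thirds of the bill.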

Where Cortex Accuracy Breaks Down in Production

Snowflake reports more than 90% accuracy for Cortex Analyst on benchmarks, but performance in real-world use is lower, largely because of inconsistencies in semantic definitions.

Benchmarks assume a clean, well-structured schema, whereas real data warehouses contain overlapping versions of sales data, ambiguous customer segments, and multiple definitions of churn.

| Scenario | Typical accuracy | Why |
| --- | --- | --- |
| Clean semantic view with governed metrics | 85 to 90% | LLM has business context |
| Raw schema, no semantic view | 60 to 70% | LLM guesses business definitions |
| Ambiguous question, classifier allows through | Lower | Confident but wrong answer |
| Multi-step question spanning structured and unstructured data | Variable | Needs Cortex Agents, not Analyst alone |
| Sensitive metric with multiple definitions | Low | Semantic view must define canonical version |

Data readiness governs accuracy far more than model quality does. The same LLM can produce 90% accuracy on one account and 60% on another because the semantic layer differs.
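Figures like these only mean something when you measure them on your own questions. One common approach is execution accuracy: run the generated SQL and a hand-written gold query, and count the question as correct only when the result sets match. The Python sketch below uses the standard-library `sqlite3` module as a stand-in for a Snowflake connection. The `sales` table and both question pairs are invented, and Snowflake’s own benchmark methodology may differ.

```python
"""Execution-accuracy check for text-to-SQL output.

sqlite3 stands in for a warehouse connection; the schema, rows, and
question pairs are hypothetical.
"""
import sqlite3
from collections import Counter


def result_set(conn: sqlite3.Connection, sql: str) -> Counter:
    """Rows as an order-insensitive multiset, so ORDER BY differences do not count."""
    return Counter(conn.execute(sql).fetchall())


def execution_accuracy(conn: sqlite3.Connection, cases: list[tuple[str, str]]) -> float:
    """Share of (generated_sql, gold_sql) pairs whose results match."""
    correct = 0
    for generated_sql, gold_sql in cases:
        try:
            if result_set(conn, generated_sql) == result_set(conn, gold_sql):
                correct += 1
        except sqlite3.Error:
            pass  # SQL that fails to execute counts as wrong
    return correct / len(cases)


def build_demo() -> tuple[sqlite3.Connection, list[tuple[str, str]]]:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (region TEXT, quarter TEXT, gross REAL, discount REAL)")
    conn.executemany(
        "INSERT INTO sales VALUES (?, ?, ?, ?)",
        [("Northeast", "Q3", 100.0, 10.0), ("Northeast", "Q3", 50.0, 0.0), ("West", "Q3", 80.0, 5.0)],
    )
    gold = "SELECT SUM(gross - discount) FROM sales WHERE region = 'Northeast' AND quarter = 'Q3'"
    cases = [
        # Uses the governed definition (net of discounts): counted as correct.
        ("SELECT SUM(gross - discount) FROM sales WHERE quarter = 'Q3' AND region = 'Northeast'", gold),
        # Valid SQL that assumes "revenue" means gross: it runs, but the answer is wrong.
        ("SELECT SUM(gross) FROM sales WHERE region = 'Northeast' AND quarter = 'Q3'", gold),
    ]
    return conn, cases


if __name__ == "__main__":
    conn, cases = build_demo()
    print(execution_accuracy(conn, cases))  # 0.5
```

The second pair is the failure mode this section describes: the query executes cleanly but encodes the wrong business definition, so only a comparison against a governed answer catches it.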

Cortex Accuracy Depends on Semantics and Clarity

Cortex accuracy issues largely stem from weak semantic foundations and unresolved ambiguity in user queries. Even when the system generates technically correct SQL, mismatches between business definitions and underlying logic can lead to incorrect answers. These gaps become more pronounced in real-world environments with inconsistent metrics, poor data governance, and loosely defined questions.

- Missing semantic layer leads to misreads: Without a well-defined semantic layer, Cortex can misinterpret business terms. For example, a query for “forecast accuracy” may return a valid SQL calculation for percentage difference, even if the correct business definition is absolute variance, resulting in a technically correct query but a wrong answer.

- High accuracy depends on clean definitions: Reported accuracy levels rely on well-structured metric definitions. While Semantic View Autopilot helps automate semantic modeling, it still depends on the quality of underlying metadata. Organizations with complex schemas and weak governance tend to see lower accuracy.

- Ambiguity still slips through: Cortex’s classification agent filters out clearly vague queries but is less effective with subtle ambiguity. As a result, questions that appear reasonable but lack precise definitions can pass through and generate confident yet incorrect responses.
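The forecast-accuracy example is easy to reproduce. In the Python sketch below, which uses invented numbers for two hypothetical regions, the two definitions disagree about which region forecast worst. SQL that is correct for one definition therefore gives a wrong answer under the other.

```python
"""Two defensible definitions of forecast error that rank regions differently.

Region names and figures are invented for illustration.
"""


def percentage_error(forecast: float, actual: float) -> float:
    """Relative miss, in percent of the actual value."""
    return abs(forecast - actual) / actual * 100


def absolute_variance(forecast: float, actual: float) -> float:
    """Miss in the metric's own units."""
    return abs(forecast - actual)


def worst_region(results: dict[str, tuple[float, float]], error_fn) -> str:
    """Region with the largest error under the chosen definition."""
    return max(results, key=lambda region: error_fn(*results[region]))


if __name__ == "__main__":
    # region -> (forecast, actual)
    results = {"Region A": (110.0, 100.0), "Region B": (1_050.0, 1_000.0)}
    print(worst_region(results, percentage_error))   # Region A: 10% vs 5%
    print(worst_region(results, absolute_variance))  # Region B: 50 vs 10
```

Both functions are valid SQL-expressible calculations; only a canonical definition in the semantic view says which one the business means.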

Plan Your Cortex Strategy with Real-World Insights!

Partner with Kanerika for Expert AI implementation Services

Does Snowflake Cortex Beat Databricks and Fabric?

Every evaluator asks this question, and most vendor content dodges it. The honest answer depends on where the data already lives. Feature parity matters less than fit.

| Dimension | Snowflake Cortex | Databricks | Microsoft Fabric |
| --- | --- | --- | --- |
| Best-fit customer | Data already in Snowflake | ML engineering enterprises | Microsoft-centric orgs |
| Strongest feature | Native LLM inside SQL | MLflow, model training, open lakehouse | Power BI and M365 integration |
| AI cost model | Token-based, can surprise | Compute-based, predictable | Capacity-based |
| Agentic workflows | Cortex Agents | Mosaic AI Agents | Fabric AI skills |
| Governance | Horizon Catalog | Unity Catalog | Microsoft Purview |

1. How to choose the right platform

Switching platforms for Cortex access alone rarely makes sense. The sharper question is whether AI should run where the data sits or where the BI tools sit. For a detailed feature-by-feature comparison, see our Databricks vs Snowflake vs Fabric analysis.

Cortex wins when data already sits in Snowflake, SQL is the primary query language, and workloads are analytics-heavy with occasional AI. Zero data movement, native governance, and inline SQL make Cortex the path of least resistance for existing Snowflake customers.

Databricks with Mosaic AI and MLflow is the stronger platform in these cases:

- Model training, fine-tuning, and MLOps are primary use cases

- Multi-modal data spans text, images, audio, and video in one pipeline

- Existing team skills sit in PySpark, MLflow, or Mosaic

- An open lakehouse with Delta or Iceberg matters for vendor neutrality

Microsoft Fabric fits best when:

- Power BI is already the primary BI tool

- Microsoft 365 and Dynamics anchor the enterprise stack

- Microsoft Purview already governs data and a second catalog adds overhead

- Existing Microsoft enterprise agreements shift the licensing math

Pick Cortex for SQL-first enterprises with analytics workloads, Databricks for ML-first enterprises building custom models, and Fabric for Microsoft-first enterprises with Power BI as the reporting layer.

Case Study: Real-Time Insights with Snowflake and Cortex

1. The problem

A logistics operator running distributed operations across geographies needed faster visibility into field events, inventory, and delivery performance. Siloed reporting and batch pipelines meant decisions lagged operations by hours.

Each region ran on its own warehouse tools, so consolidating numbers for headquarters took manual work and introduced reconciliation errors. Cost control and governance were inconsistent across the geographies.

2. The solution

Kanerika led a Snowflake engagement that enabled real-time insights across geographies, shortening the path from event to decision. Regional data was consolidated into a single Snowflake account with Cortex AI functions used for inline enrichment and classification directly inside SQL.

Token consumption was sized per use case before any production workload ran. CORTEX_AI_FUNCTIONS_USAGE_HISTORY and per-user daily credit limits were wired into the admin dashboard from day one, which gave the platform team cost visibility before spending hit budget thresholds.
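The per-user monitoring described above reduces to a small aggregation. The Python sketch below assumes usage rows have already been queried into (user, day, credits) tuples; the real usage view’s column names and granularity may differ, and the user names, dates, and 3-credit limit are hypothetical.

```python
"""Flag users whose AI credit consumption exceeds a daily limit.

Input rows are assumed to be (user, day, credits) tuples already pulled
from a usage view; mapping the view's columns is left to the caller.
"""
from collections import defaultdict
from datetime import date


def daily_credits_by_user(rows: list[tuple[str, date, float]]) -> dict[tuple[str, date], float]:
    """Sum credits per (user, day), since one user can run many queries a day."""
    totals: dict[tuple[str, date], float] = defaultdict(float)
    for user, day, credits in rows:
        totals[(user, day)] += credits
    return dict(totals)


def over_limit(rows: list[tuple[str, date, float]], daily_limit: float) -> list[tuple[str, date, float]]:
    """Return (user, day, total) for every user-day above the limit, sorted for stable alerts."""
    return sorted(
        (user, day, total)
        for (user, day), total in daily_credits_by_user(rows).items()
        if total > daily_limit
    )


if __name__ == "__main__":
    rows = [
        ("ana", date(2026, 3, 2), 1.5),
        ("ana", date(2026, 3, 2), 2.0),
        ("raj", date(2026, 3, 2), 0.5),
        ("ana", date(2026, 3, 3), 0.4),
    ]
    print(over_limit(rows, daily_limit=3.0))  # [('ana', datetime.date(2026, 3, 2), 3.5)]
```

Running a check like this daily is what turns a monthly invoice surprise into a same-day alert.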

3. The results

- Reporting cycles moved from hours to minutes across all regions

- Distributed operations visibility consolidated into a single analytics surface

- Token costs controlled through model selection and per-user daily credit limits

- Governance maintained through Horizon Catalog plus Kanerika’s KANGovern layer

- Platform team gained same-day visibility into AI Services spend rather than monthly invoice surprises

How Kanerika Implements Cortex Without the Surprise Bills

Kanerika is a Snowflake Consulting Partner with documented delivery across AI and ML, data analytics, and generative AI projects. The implementation pattern we use is built to avoid the token billing trap and the semantic accuracy gap that most enterprises hit in the first quarter.

Every Cortex engagement starts with a cost model before the first feature is built. We size token consumption per use case, pick the smallest model that meets accuracy targets, and wire CORTEX_AI_FUNCTIONS_USAGE_HISTORY queries into the Snowsight admin dashboard on day one. Cost visibility exists before anyone runs the first AISQL function at scale.
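The “smallest model that meets accuracy targets” rule can be written as a short selection function. In the Python sketch below, the model names, blended per-million-token prices, and accuracy scores are all hypothetical; in practice the accuracy values would come from running each candidate on a labeled sample of your own data.

```python
"""Pick the cheapest candidate model that clears an accuracy target.

Names, prices, and scores are hypothetical placeholders.
"""
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    name: str
    usd_per_million_tokens: float  # blended input/output rate (placeholder)
    eval_accuracy: float           # share correct on your labeled sample


def cheapest_meeting_target(candidates: list[Candidate], target: float) -> Optional[Candidate]:
    """Filter by accuracy first, then minimize price; None means no model qualifies."""
    eligible = [c for c in candidates if c.eval_accuracy >= target]
    return min(eligible, key=lambda c: c.usd_per_million_tokens, default=None)


if __name__ == "__main__":
    candidates = [
        Candidate("small-model", 0.5, 0.82),
        Candidate("mid-model", 3.0, 0.91),
        Candidate("frontier-model", 40.0, 0.93),
    ]
    choice = cheapest_meeting_target(candidates, target=0.90)
    print(choice.name if choice else "no model meets the target")  # mid-model
```

Filtering on accuracy before price is the point: ranking by price alone would pick a model that misses the target, while ranking by accuracy alone would pay for a frontier model the workload does not need.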

The logistics engagement described in the case study above followed this pattern, pairing Snowflake core with Cortex AI functions under a governed cost model.

Across 100+ enterprise clients and 10+ years of delivery, Kanerika brings the following to Cortex work.

- Snowflake Consulting Partner status with named Cortex deployments

- In-house Microsoft MVP for cross-platform design decisions

- ISO 27001, SOC 2 Type II, and CMMI Level 3 certifications

- 98% client retention and 520+ KPIs delivered across verticals

- Governance suite (KANGovern, KANComply, KANGuard) that pairs with Horizon Catalog

- FLIP platform for data migration from legacy warehouses into Snowflake

Wrapping Up

Snowflake Cortex in 2026 is a product line with a standalone subscription, native access to frontier models, and five distinct components that each solve a different problem.

The enterprises getting value from it picked the right component, set up token-level cost monitoring before scaling, and invested in a proper semantic view before trusting text-to-SQL. The enterprises struggling treated Cortex as a single product and expected warehouse-style billing. The right implementation partner closes that gap in weeks.