More than 31,000 organizations now run Microsoft Fabric in production, including 74% of Fortune 500 companies. But most of the agentic AI applications inside those environments are still stuck at the pilot stage. Moving an agent from sandbox to production requires more than orchestration. It requires knowing what the agent did, whether it was safe, and whether it moved a business metric.

That’s the production problem agentic AI on Microsoft Fabric is built to solve. In this article, we’ll cover what a governed, production-grade agentic architecture looks like on Fabric, which patterns actually work at enterprise scale, and where the hard architectural decisions still fall on the team building the system.

Key Takeaways

- Microsoft Fabric provides a unified, governed data plane that captures agent behavior as structured, queryable data rather than opaque logs.

- The multi-agent pattern using a coordinator-and-specialists architecture is the dominant production approach in Fabric-based agentic applications.

- OneLake acts as the shared data foundation across operational, analytical, and AI workloads, making agent observability and evaluation continuous rather than reactive.

- Fabric Data Agents are now generally available (as of FabCon 2026) and operate within the same permission model and semantic layer already used by reporting teams.

- MCP integration (Local GA, Remote in preview) lets AI tools from GitHub Copilot to Claude operate against Fabric’s live APIs within existing RBAC and audit boundaries.

Why POC Success Does Not Predict Production Success

Getting an AI agent to respond correctly in a test environment is not the hard part. The hard part is knowing what it did in production, whether that action was safe and within policy, and whether it actually moved a business metric.

Agentic applications generate rich operational data during execution. Every session produces user prompts, routing decisions, tool calls, model outputs, token usage, latency readings, and safety signals. Most teams treat that data as logs at best or discard it entirely, making debugging reactive and governance impossible.

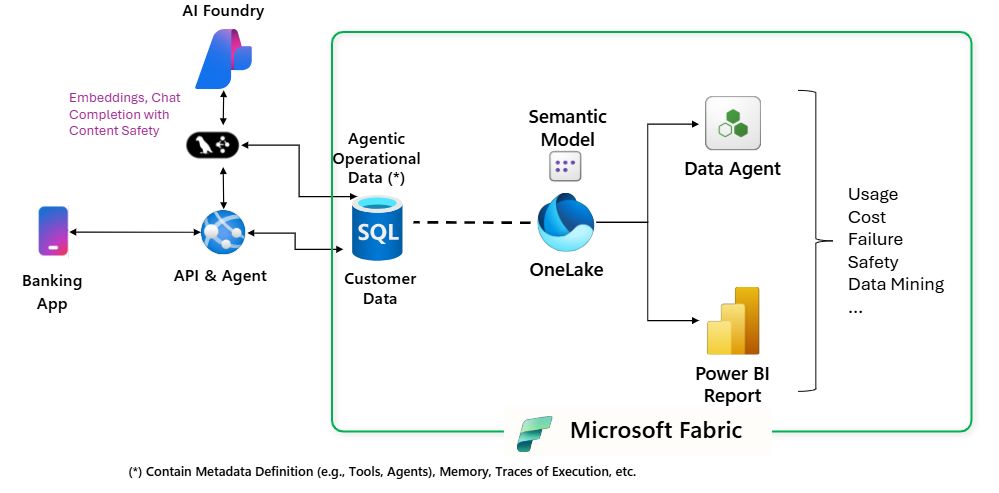

Microsoft’s own reference implementation, the Agentic Banking App, was designed specifically to surface this problem and show what solving it looks like in practice. The architecture it demonstrates is not banking-specific. The patterns apply across any domain where agents act on real systems and need to be observed continuously.

How Microsoft Fabric Changes the Agentic AI Infrastructure Problem

1. OneLake as the Single Data Foundation

Traditional agentic application stacks require stitching together separate systems: a database for transactions, a logging platform for telemetry, a monitoring tool for safety signals, and a BI layer for analytics. Each handoff creates latency, governance gaps, and operational overhead.

Microsoft Fabric replaces that stack with a shared data plane built on OneLake. Operational data, agent telemetry, safety events, and business analytics all live in the same governed storage layer. Agents can be observed and evaluated against the same data foundation that powers production reporting, with no ETL overhead or governance seams between them.

As of FabCon 2026, more than 31,000 organizations are running Fabric in production, with 74% of Fortune 500 companies on the platform.

| Component | Traditional Fragmented Stack | Fabric-Unified Approach |
| --- | --- | --- |
| Transactional data | Separate operational DB | SQL Database in Fabric |
| Agent session memory | Custom store or Redis | Cosmos DB in Fabric |
| Safety event streaming | External SIEM or log aggregator | Eventstream into Eventhouse (KQL) |
| Analytics and BI | Separate warehouse + BI tool | Lakehouse + semantic model + Power BI |
| Governance policy | Applied per system | OneLake-level, consistent across all workloads |
| Evaluation notebooks | Disconnected data science environment | Fabric notebooks, same data, same governance |

The unified approach reduces governance seams from five to one. Teams audit, observe, and evaluate agents from the same platform they use for everything else.

2. Agent Operational Data as a First-Class Asset

The most common architectural mistake in early agentic deployments is treating agent execution data as logs. Logs are unstructured, hard to query at scale, and nearly impossible to correlate across multi-step workflows.

In the Fabric model, agent sessions, routing decisions, tool calls, model metadata (token counts, latency per call), and safety outcomes are captured as relational data in SQL Database in Fabric. End-to-end traces can be reconstructed, failures correlated with specific routing decisions, and agent behavior tied directly to business outcomes.

A team can ask, with a SQL query, which agents were invoked on a given request, what tools they called, how long each step took, and whether a safety block fired. That is a fundamentally different debugging experience from combing through log files.
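As a rough illustration of that kind of trace query, the sketch below uses an in-memory SQLite database as a stand-in for SQL Database in Fabric. The `agent_steps` table, its columns, and the sample rows are all hypothetical; a real deployment would define its own schema.

```python
import sqlite3

# Hypothetical schema and sample rows, for illustration only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE agent_steps (
    request_id   TEXT,
    step_no      INTEGER,
    agent_name   TEXT,
    tool_name    TEXT,
    latency_ms   INTEGER,
    safety_block INTEGER  -- 1 if a safety block fired on this step
);
INSERT INTO agent_steps VALUES
    ('req-1', 1, 'coordinator', 'classify_intent', 120, 0),
    ('req-1', 2, 'account',     'get_balance',     340, 0),
    ('req-2', 1, 'coordinator', 'classify_intent', 110, 1);
""")

def trace(request_id):
    """Return the ordered steps recorded for one request."""
    return conn.execute(
        "SELECT step_no, agent_name, tool_name, latency_ms, safety_block "
        "FROM agent_steps WHERE request_id = ? ORDER BY step_no",
        (request_id,),
    ).fetchall()

# Which agents ran, which tools they called, how long each step took,
# and whether a safety block fired.
for step in trace("req-1"):
    print(step)
```

The same shape of query, filtered on `safety_block = 1`, would list every request where a block fired.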

3. Real-Time Safety Monitoring via Eventstream and KQL

Every user prompt going through a Fabric-based agentic application can be evaluated for content safety, and the resulting signals can be streamed in real time through Fabric’s Eventstream into Eventhouse (KQL). This makes it possible to detect safety anomalies as they happen rather than after the fact.

KQL databases in Fabric support low-latency querying over event streams, which means dashboards like “safety flags over time” or “top categories of blocked content” are live views, not scheduled refreshes.
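To make the "safety flags over time" view concrete, here is a minimal Python sketch of the underlying aggregation: counting blocked events per minute and category. In Eventhouse this would typically be expressed in KQL with a `summarize count() by bin(...)` query; the event fields and sample values below are assumptions made for illustration.

```python
from collections import Counter
from datetime import datetime

# Hypothetical safety events; field names are illustrative only.
events = [
    {"ts": "2026-05-01T10:00:12", "category": "self_harm", "blocked": True},
    {"ts": "2026-05-01T10:00:48", "category": "violence", "blocked": True},
    {"ts": "2026-05-01T10:01:05", "category": "violence", "blocked": False},
    {"ts": "2026-05-01T10:01:30", "category": "violence", "blocked": True},
]

def flags_per_minute(events):
    """Count blocked events per (minute, category) bucket."""
    counts = Counter()
    for event in events:
        if not event["blocked"]:
            continue
        # Truncate the timestamp to the start of its minute.
        minute = datetime.fromisoformat(event["ts"]).replace(second=0, microsecond=0)
        counts[(minute.isoformat(), event["category"])] += 1
    return dict(counts)

print(flags_per_minute(events))
```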

For enterprises in regulated industries, real-time safety monitoring is not optional. It is a precondition for production deployment in most enterprise governance frameworks.

The Multi-Agent Architecture That Works in Production

1. Coordinator-and-Specialists: The Dominant Pattern

Single-agent architectures break at scale. When one agent is responsible for routing, executing, answering questions, and performing transactions, it becomes unpredictable under load and nearly impossible to debug when something goes wrong.

The coordinator-and-specialists pattern solves this by splitting responsibilities. A coordinator agent handles intent classification and routing. Specialist agents handle specific domains with defined toolsets and restricted permissions.

In the Agentic Banking App reference implementation, this looks like:

- Coordinator agent routes each incoming request to the right specialist and acts as the single policy enforcement point before any specialist is invoked.

- Account agent executes banking operations via parameterized SQL, with read/write access scoped to account data only.

- Support agent answers service questions using RAG grounded in bank documentation, with no access to transactional systems.

- Visualization agent produces and persists user-specific UI configurations, operating entirely on a separate data scope from the operational agents.

Each agent runs with scoped permissions, not inherited parent access. The result is a system where failures are isolated, routing decisions are traceable, and each specialist can be evaluated independently.
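The routing flow described above can be sketched in a few lines of Python. This is not the reference implementation's code: the keyword classifier, the scope strings, the blocked-term list, and the handler functions are all hypothetical stand-ins, and a production coordinator would call a model for intent classification.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List

@dataclass(frozen=True)
class Specialist:
    name: str
    scopes: FrozenSet[str]          # data scopes this agent may touch
    handle: Callable[[str], str]    # the specialist's entry point

# Hypothetical specialists with deliberately narrow scopes.
SPECIALISTS: Dict[str, Specialist] = {
    "account": Specialist(
        "account", frozenset({"accounts:read", "accounts:write"}),
        lambda p: f"[account] handled: {p}"),
    "support": Specialist(
        "support", frozenset({"docs:read"}),
        lambda p: f"[support] handled: {p}"),
    "visualization": Specialist(
        "visualization", frozenset({"ui_config:read", "ui_config:write"}),
        lambda p: f"[visualization] handled: {p}"),
}

BLOCKED_TERMS = ("drop table",)  # toy policy check, illustrative only

def classify_intent(prompt: str) -> str:
    """Toy keyword classifier standing in for a model call."""
    text = prompt.lower()
    if any(w in text for w in ("balance", "transfer", "payment")):
        return "account"
    if any(w in text for w in ("chart", "dashboard", "layout")):
        return "visualization"
    return "support"

def coordinate(prompt: str, trace: List[dict]) -> str:
    """Enforce policy, route to one specialist, and record the decision."""
    if any(term in prompt.lower() for term in BLOCKED_TERMS):
        trace.append({"decision": "blocked"})
        return "Request blocked by policy."
    agent = SPECIALISTS[classify_intent(prompt)]
    trace.append({"decision": "routed", "agent": agent.name,
                  "scopes": sorted(agent.scopes)})
    return agent.handle(prompt)

trace: List[dict] = []
print(coordinate("What is my balance?", trace))
print(trace)
```

Because every routing decision is appended to `trace`, each request leaves a record that could be persisted as a row of relational data rather than a log line.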

2. Durable Session Memory with Cosmos DB in Fabric

Agent conversations need to be stateful. A customer who starts a support interaction in one session should not be forced to re-explain their situation the next time they interact with the agent. Most early implementations store conversation state in temporary in-memory structures that evaporate when a session ends.

Cosmos DB in Fabric handles high-velocity, semi-structured session data and restores conversation state instantly on reconnect. User-specific UI configurations and preferences are persisted the same way, so personalization accumulates across sessions rather than resetting each time.

Fabric’s governance boundary extends to Cosmos DB, which means session memory falls under the same access controls and audit trail as everything else.
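The save-and-restore behavior described in this section can be sketched as follows. The `SessionStore` class is a hypothetical in-memory stand-in for a document store such as Cosmos DB in Fabric, not its SDK; the document shape (`messages`, `prefs`) is also an assumption.

```python
import json
from typing import Dict, List

class SessionStore:
    """In-memory stand-in for a document store keyed by user ID."""

    def __init__(self) -> None:
        self._docs: Dict[str, str] = {}

    def save(self, user_id: str, messages: List[dict], prefs: dict) -> None:
        # Serialize to JSON to mimic writing a semi-structured document.
        self._docs[user_id] = json.dumps({"messages": messages, "prefs": prefs})

    def restore(self, user_id: str) -> dict:
        """Return the saved state, or an empty state for a new user."""
        raw = self._docs.get(user_id)
        if raw is None:
            return {"messages": [], "prefs": {}}
        return json.loads(raw)

store = SessionStore()
store.save("user-42",
           [{"role": "user", "content": "My card was declined."}],
           {"theme": "dark"})

# A later session picks up where the previous one ended.
state = store.restore("user-42")
print(state["messages"][-1]["content"], state["prefs"])
```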

3. Fabric Data Agents for Governed Natural Language Queries

Fabric Data Agents (generally available as of FabCon 2026) are read-only, conversational agents grounded in semantic models and OneLake-backed data. They answer questions using SQL, DAX, or KQL under the hood, but cannot modify data.

This makes them suited for a specific and important class of enterprise use cases: scenarios where business users need natural language access to data without the risk of unintended side effects from write operations.

Because they operate inside Fabric, they inherit workspace-level permissions, access controls, and audit logging with no additional configuration. For regulated environments, that alignment with existing governance infrastructure is a real operational advantage.

| Dimension | Detail |
| --- | --- |
| Query methods | SQL, DAX, KQL under the hood |
| Data mutation | Not supported (read-only) |
| Permission model | Inherits workspace RBAC |
| Data sources | Semantic models, configured tables in OneLake |
| Governance | Full audit logging, same as portal and API operations |
| Integration | Azure AI Foundry, Copilot Studio, custom apps via public endpoints |
| GA status | Generally available as of FabCon 2026 |

MCP Integration: How AI Tools Operate Against Fabric Infrastructure

The Model Context Protocol (MCP), an open standard created by Anthropic and adopted across the industry, gives AI agents a standard way to discover and operate external systems. Microsoft has implemented MCP for Fabric in two modes.

Fabric Local MCP (generally available) is an open-source server that lets AI coding assistants like GitHub Copilot, Claude, and Cursor interact with Fabric’s APIs from a local development environment. An agent can look up the correct API spec, generate grounded code, upload data to OneLake, and inspect table schemas within a single conversation without relying on stale training data.

Fabric Remote MCP (currently in preview) is a cloud-hosted server that allows AI agents to perform authenticated operations directly in a Fabric environment without local setup. An autonomous agent in Copilot Studio can use Remote MCP to manage workspaces and permissions on behalf of a team, with every operation recorded in Fabric Audit Logs.

Both modes enforce RBAC at the user permission level. There is no elevation, no bypass. Destructive operations are flagged before execution, and bulk data access goes through OneLake’s own security boundaries rather than the MCP layer.

For enterprise teams building agentic workflows, MCP matters because it removes the need for custom integrations for each AI tool. Any MCP-compatible client connects to the same Fabric surface with the same security model.

Where Agentic AI Apps on Microsoft Fabric Are Running Today

1. Financial Services: Traceability at the Transaction Level

The financial services pattern is the most developed. Microsoft’s Agentic Banking App reference implementation shows how to capture every routing decision, tool call, and model output as relational data that compliance teams can audit without custom tooling.

When a customer disputes a transaction or a regulator asks what an agent did during a specific session, the answer is a SQL query, not a manual log investigation.

Kanerika has built this pattern for regulated financial clients. The real-time compliance AI agent case study covers how a compliance-grade agent was deployed with full decision path logging and exception-based review workflows replacing manual output review.

2. Manufacturing: From Dashboards to Agentic Operations

In April 2026 at Hannover Messe, Accenture and Avanade demonstrated an agentic factory intelligence system built on Microsoft Fabric and Azure AI Foundry. The system combines structured data from manufacturing execution systems and machine telemetry with unstructured maintenance logs and operator manuals.

AI agents analyze operational context and historical machine behavior to suggest likely causes and recommended actions when production lines deviate. Kruger, a major North American paper and tissue producer, is an early adopter. Their COO noted that a 10 to 15 percent reduction in mean-time-to-repair translates into multimillion-dollar savings at scale across production lines.

That outcome depends on Fabric as the unified data foundation. Structured sensor data and unstructured maintenance records live in the same OneLake layer, accessible to agents reasoning across both without custom connectors or ETL jobs between them.

For teams evaluating the manufacturing pattern, Kanerika’s supply chain AI implementation case study and Karl inventory analysis case study show what governed, production-grade agent deployment looks like in this vertical.

3. Multi-Industry Pattern: Evaluation and Continuous Improvement

Across industries, the pattern that separates production deployments from pilots is systematic evaluation. Fabric notebooks make it practical to run recurring LLM-as-judge workflows that score agent responses on intent resolution, relevance, coherence, and fluency.

Those scores are stored in OneLake, queryable alongside business KPIs, and visualized in Power BI alongside token usage and latency data. Teams can track quality over time rather than relying on anecdotal feedback.
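A recurring evaluation job of this kind might look roughly like the sketch below. The `stub_judge` function is a placeholder for a real LLM-as-judge call, and its scoring rule and the 1 to 5 scale are assumptions; only the four metric names come from the text above.

```python
from statistics import mean
from typing import Callable, Dict, List

METRICS = ("intent_resolution", "relevance", "coherence", "fluency")

def stub_judge(prompt: str, response: str) -> Dict[str, int]:
    """Placeholder for a model call; returns a 1-5 score per metric."""
    score = 4 if response.strip() else 1  # toy rule, illustrative only
    return {metric: score for metric in METRICS}

def evaluate(samples: List[dict],
             judge: Callable[[str, str], Dict[str, int]] = stub_judge) -> Dict[str, float]:
    """Score each sample and return the mean score per metric."""
    scored = [judge(s["prompt"], s["response"]) for s in samples]
    return {metric: mean(row[metric] for row in scored) for metric in METRICS}

samples = [
    {"prompt": "What is my balance?", "response": "Your balance is shown in the app."},
    {"prompt": "Reset my PIN", "response": ""},
]
print(evaluate(samples))
```

Writing the returned dictionary to a table once per run, with a run date, is what would make the scores trackable over time next to token usage and latency.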

Kanerika’s AI member support agent case study shows this evaluation layer in practice, with continuous monitoring built into the deployment from day one.

| Use Case | Fabric Fit | Primary Fabric Components |
| --- | --- | --- |
| Financial services compliance tracing | High | SQL DB, Lakehouse, Power BI, Purview |
| Manufacturing operations and diagnostics | High | OneLake, Eventstream, Eventhouse (KQL), Foundry |
| Customer support with governed data access | High | Fabric Data Agents, semantic models, RBAC |
| Developer tooling and CI/CD automation | High | Local MCP, Remote MCP, Git integration |
| Real-time safety monitoring | High | Eventstream, KQL, Power BI |
| Regulated document processing | Medium-High | RAG via Azure AI Search, Purview, DLP policies |
| Multi-agent orchestration at enterprise scale | Medium | Copilot Studio, A2A protocol, Fabric multi-agent support |

Five Architecture Decisions That Determine Production Success

1. Multi-Agent Coordination at Scale Is Not Fully Solved

Fabric multi-agent support (via Copilot Studio and the A2A protocol) lets agents delegate to each other and share enterprise data context. But this is still maturing. Teams building complex, multi-tier agent hierarchies will find that the platform provides the infrastructure while the coordination logic remains their responsibility.

Agent-to-agent communication protocols, failure handling between agent layers, and state synchronization across concurrent subagent executions are architectural decisions that require deliberate design, not just platform defaults.

2. Self-Healing Pipelines Are Coming, Not Here Yet

Microsoft acquired Osmos in January 2026 to bring autonomous data engineering to Fabric. Self-healing pipelines, AI-generated data quality rules, and intelligent schema change adaptation are on the roadmap. As of May 2026, these capabilities are in various stages of preview or planned release, and teams building production pipelines today should design them to accommodate these features without treating them as currently available.

3. OneLake Security Configuration Needs an Audit Before Agent Access

OneLake Security, which unifies Row-Level Security, Column-Level Security, and Object-Level Security across all Fabric workloads into a single model, reached general availability in 2026. But existing Fabric deployments with workload-level security configurations may need remediation before agentic workloads inherit consistent access controls automatically.

Teams adding agentic applications to existing Fabric environments should audit their security configuration before giving agents access to OneLake-backed data.

4. Human-in-the-Loop Escalation Needs Explicit Design

Fabric provides the infrastructure for observability, but it does not automatically implement human review workflows. For regulated enterprises, escalation paths, human review queues for flagged agent outputs, and exception handling workflows all need to be built into the application layer.

This is not a criticism of Fabric. It is an architectural reality: governance frameworks for agentic AI require design decisions that a data platform cannot make for you.
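One way the application layer might implement such an escalation path is sketched below. The `AgentOutput` fields, the confidence threshold, and the holding message are all hypothetical; the point is only that flagged or low-confidence outputs are held in a review queue instead of being returned.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class AgentOutput:
    session_id: str
    text: str
    safety_flagged: bool
    confidence: float  # assumed to be in the range 0.0 to 1.0

@dataclass
class ReviewQueue:
    items: List[AgentOutput] = field(default_factory=list)

HOLD_MESSAGE = "Your request has been passed to a human reviewer."

def dispatch(output: AgentOutput, queue: ReviewQueue,
             min_confidence: float = 0.7) -> str:
    """Return the agent's text, or hold it for human review."""
    if output.safety_flagged or output.confidence < min_confidence:
        queue.items.append(output)
        return HOLD_MESSAGE
    return output.text

queue = ReviewQueue()
print(dispatch(AgentOutput("s1", "Your balance is $120.", False, 0.95), queue))
print(dispatch(AgentOutput("s2", "Transfer approved.", False, 0.40), queue))
print(len(queue.items))
```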

5. Data Agent Scope Decisions Require Cross-Functional Governance

Because Fabric Data Agents rely on explicitly configured tables and models rather than full schema access, teams need a process for deciding what data should be queryable in natural language. That process involves data owners, compliance stakeholders, and technical architects. In most organizations, that cross-functional decision-making takes longer than the technical setup.

How Kanerika Builds Governed Agentic AI on Microsoft Fabric

The gap between what Microsoft Fabric makes possible and what a production enterprise deployment requires is precisely where Kanerika operates. As a Microsoft Fabric Featured Partner and Microsoft Solutions Partner for Data and AI, Kanerika brings certified depth to every Fabric engagement.

1. Certified Expertise Across the Entire Fabric Stack

Kanerika’s deployment team holds DP-600 (Fabric Analytics Engineer) and DP-700 (Fabric Data Engineer) certifications, ensuring every implementation reflects the platform’s current capabilities and best practices. The team also includes Amit Chandak, Chief Analytics Officer and Microsoft MVP, alongside Fabric Superusers with deep, hands-on platform knowledge.

Kanerika is one of the earliest global implementors of Microsoft Fabric, which means the team encountered and solved the platform’s early-stage edge cases before they became known failure modes. That experience carries forward into every production deployment.

As an official FAIAD (Fabric Analyst in a Day) and RTIAD (Real-Time Intelligence in a Day) delivery partner, Kanerika is also Microsoft-recognized to conduct certified training programs, giving enterprise teams the knowledge transfer they need to operate their Fabric environments independently after implementation.

2. Karl: A Production Agentic AI App Built Natively on Fabric

Karl, Kanerika’s data analytics AI agent, is now generally available as a native Microsoft Fabric workload. Karl integrates directly into the Fabric environment, giving business users conversational access to lakehouse data in plain English, with no SQL required.

What Karl represents is more than a product feature. It is evidence of Kanerika’s ability to build production agentic AI applications that operate within Fabric’s governance model, respect its security boundaries, and deliver measurable value to end users at enterprise scale. Karl was recognized at FabCon 2026 as part of Kanerika’s broader Fabric innovation track.

Beyond Karl, Kanerika has deployed five additional production AI agents: DokGPT, Alan, Susan, Mike, and Jennifer. Each is purpose-built for a specific enterprise function and deployed in live client environments.

3. Migration Accelerators That Remove the Biggest Barrier to Fabric Adoption

Building agentic AI on Fabric requires the data foundation to already be on Fabric. For many enterprises, that means migrating from legacy platforms before the agentic layer can go live. Kanerika’s automated migration accelerators support moves from SSIS, SSAS, Azure Data Factory, Informatica, and Synapse Analytics to Fabric, cutting timelines from months to weeks.

The Azure to Fabric Migration Workload is available as a native Fabric workload, featured by Microsoft at Ignite 2025. It automates schema conversions, dependency mapping, connection reconfiguration, and workspace organization, covering the tasks that consume most of the manual effort in legacy migrations.

Kanerika also holds Microsoft’s Data Warehouse Migration to Azure and Analytics on Azure Advanced Specializations, which validate end-to-end capability across the data modernization path that precedes most Fabric deployments.

SSMH Case Study: Microsoft Fabric Deployed for Real Operational Impact

Southern States Material Handling (SSMH), a Toyota Material Handling dealer operating across multiple states, needed a unified data and analytics platform to support faster, more accurate operational decisions across a distributed business.

Kanerika implemented Microsoft Fabric and Power BI to consolidate fragmented data sources, build real-time reporting pipelines, and deliver actionable intelligence to SSMH’s operational and leadership teams. The engagement earned direct recognition from SSMH’s CIO, Delano Gordon: “Kanerika’s flexibility in aligning Microsoft Fabric with our business needs ensures that we are building a system that will drive even better results across our operations.”

The SSMH engagement shows how Kanerika approaches Fabric: not as a platform choice, but as an operational architecture decision. Teams get value when they govern the implementation and connect it to the workflows that actually move the business.

Measurable Impact

- 85% Increased Operational Visibility

- 90% Data Accuracy and KPI Reliability

- 100% Scalability and Support

| Dimension | Default Platform Deployment | Kanerika Enterprise Deployment |
| --- | --- | --- |
| Data access controls | Platform-level defaults | Role-based, Purview-enforced |
| Agent decision traceability | Depends on application design | Full decision path logging |
| Compliance coverage | Requires manual configuration | Mapped to regulatory requirements |
| Human escalation | Not implemented by default | Built into application workflow |
| Governance audit trail | Requires explicit logging setup | End-to-end, audit-ready |
| Time to production readiness | Weeks to months (self-managed) | Accelerated with 10+ years of enterprise patterns |

Kanerika works with enterprise teams across financial services, healthcare, manufacturing, logistics, and retail to design and build agentic AI systems that operate within real business constraints: governed, traceable, and built to survive contact with production data.

Wrapping Up

Microsoft Fabric gives enterprises a serious platform for building agentic AI that is observable, governed, and connected to business outcomes. OneLake as a shared data foundation, structured agent telemetry, real-time safety monitoring, Fabric Data Agents as governed query surfaces, and Local MCP integration are available for production use today, while Remote MCP is still in preview.

What Fabric does not do is make the architectural decisions that production agentic AI requires. Multi-agent coordination logic, data access scoping, human escalation workflows, and governance framework alignment are design responsibilities that belong to the implementation team. The platform enables them. It does not resolve them.

For enterprises with regulated workflows or customer-facing accountability, the gap between platform capability and production readiness is where the real work happens.

FAQs

What is agentic AI in Microsoft Fabric?

Agentic AI in Microsoft Fabric refers to autonomous agent applications that use Fabric’s unified data platform to execute, observe, and govern AI-driven workflows. Fabric acts as the operational backbone, capturing agent behavior as structured data in OneLake, monitoring safety signals in real time, and connecting agent activity to business analytics in Power BI. Unlike isolated agent deployments, Fabric-based agents operate within a single governance boundary covering data access, audit logging, and security policy.

How do Fabric Data Agents work?

Fabric Data Agents are read-only, conversational AI agents grounded in semantic models and OneLake-backed data. They answer natural language questions using SQL, DAX, or KQL under the hood, but cannot modify data. They inherit workspace-level permissions and audit controls from the Fabric platform, which means access management and compliance logging require no additional setup. Teams configure which tables and models are queryable, rather than exposing raw schema access. Fabric Data Agents reached general availability at FabCon 2026.

What is the coordinator-and-specialists multi-agent pattern?

The coordinator-and-specialists pattern separates routing from execution in multi-agent systems. A coordinator agent classifies intent and routes each request to the appropriate specialist. Specialist agents handle specific domains with scoped toolsets and restricted permissions. This approach makes failures easier to isolate, routing decisions traceable, and each specialist independently evaluable. It is the dominant production pattern in Fabric-based agentic applications, as demonstrated in Microsoft’s Agentic Banking App reference implementation.

What is Fabric MCP and why does it matter for enterprise teams?

Fabric MCP (Model Context Protocol) integration allows AI tools like GitHub Copilot, Claude, and Cursor to interact with Fabric’s live APIs using a standard protocol rather than custom integrations. Fabric Local MCP is generally available and runs locally. Fabric Remote MCP is in preview and allows cloud-based agents to perform authenticated operations in Fabric environments. Both enforce RBAC at the user permission level and log every operation in Fabric Audit Logs. This matters for enterprises because it removes the need for separate authentication stacks for each AI tool that needs to interact with Fabric.

What are the biggest challenges in running agentic AI on Microsoft Fabric in production?

The most common challenges are multi-agent coordination logic (which the platform supports but does not design for you), OneLake security configuration for existing deployments, human-in-the-loop escalation workflows that require application-layer design, and data governance decisions about what data Fabric Data Agents should be allowed to query. Teams that treat these as platform defaults rather than explicit architectural decisions encounter them as failure modes after deployment.

How does OneLake support agentic AI workloads?

OneLake is the shared data lake at the core of Microsoft Fabric. For agentic AI workloads, it acts as the single storage layer for operational data, agent telemetry, session state, and business analytics. Because all Fabric workloads read from the same OneLake layer, agent behavior can be observed and evaluated using the same data that powers production reporting, with no ETL overhead or governance gaps between systems. Fabric’s unified security model, including Row-Level, Column-Level, and Object-Level Security, applies consistently across all OneLake-backed workloads.

What regulated industry use cases is agentic AI on Fabric suited for?

Financial services, healthcare, and manufacturing are the most mature enterprise verticals for Fabric-based agentic AI. Financial services benefits from traceable routing decisions and compliance-grade audit trails. Healthcare benefits from governed data access and Purview-enforced classification policies. Manufacturing benefits from the combination of structured sensor data and unstructured documentation in the same OneLake layer, which agents can reason across for operational diagnostics. Regulated deployments in all three sectors still require explicit human-in-the-loop escalation design and compliance framework mapping beyond platform defaults.

How is Microsoft Fabric different from building agentic AI on a standalone AI platform?

Standalone AI platforms handle inference and orchestration but leave teams to solve data governance, observability, and business analytics integration separately. Microsoft Fabric provides those capabilities in the same governed workspace. Agent telemetry, safety events, and business outcomes all land in OneLake, queryable with the same tools used for reporting and data science. The tradeoff is that Fabric’s integrated model works best when the enterprise data estate is already on Fabric or moving there. Teams with mature multi-cloud data estates may need to evaluate OneLake interoperability with their existing Snowflake, Databricks, or Azure SQL environments before committing to Fabric as the agentic AI backbone.