Enterprise migrations are not getting easier. Legacy stacks have grown denser, integrations have piled up, and cutover windows that used to span quarters now have to fit a sprint. Yet the failure rate has barely moved. McKinsey research still shows around 70 percent of large-scale transformations fall short of their goals.

That gap between ambition and execution is where migration accelerators have become a category. They are packaged toolkits that handle discovery, mapping, conversion, and validation as one connected system, so teams stop rebuilding the same wiring on every project.

In this article, we’ll cover what migration accelerators are, the five types enterprises use, the components that matter, how AI is changing the work, and how to evaluate vendors.

Key Takeaways

- Migration accelerators package automation, templates, and validation into one connected system, not separate tools you stitch together

- Around 70 percent of digital transformations still miss their goals, and poor migration execution is one of the recurring causes

- Five accelerator categories matter: cloud, data, application, BI/reporting, and AI/analytics

- AI and LLMs now handle schema mapping, code conversion, dependency detection, and test generation, work that used to be manual

- Manual rebuild can take quarters; Kanerika reports that its FLIP accelerator automates 70 to 80 percent of conversion work and compresses timelines from months to weeks

- Picking the right accelerator comes down to migration path coverage, validation depth, governance, and marketplace presence


Why Most Enterprise Migrations Miss Their Goals

Migration projects rarely fail for one clean reason. They fail because several smaller problems compound at once.

Common failure patterns include:

- Legacy complexity is underestimated at planning time: Discovery turns up integrations nobody documented and dependencies nobody owns. The project scope expands before the first cutover even runs.

- Manual processes scale badly: A typo in a mapping spreadsheet does not surface until reports break in production. Validation is treated as a final-stage activity instead of a continuous check.

- Timelines slip because everything is connected: Touch one system, three others break. The architect who built half the stack is no longer at the company.

- Governance is bolted on after the fact: Audit teams ask for lineage and access logs that the migration team never built in.

Researchers at McKinsey have tracked this pattern across hundreds of transformations and consistently find that about 70 percent of organizations fail to hit their transformation targets. BCG’s 2020 study of 825 companies put the success rate at roughly one in three. The numbers have not improved much since.

This is the gap migration accelerators are designed to close. The point is not to replace engineers. It is to remove work that should not be manual in the first place.

What A Migration Accelerator Actually Does

A migration accelerator is a packaged toolkit of scripts, templates, connectors, and validation logic that handles a migration end to end, from discovery to cutover, as one connected workflow.

The difference between a tool and an accelerator comes down to scope:

- A migration tool handles a single task. Schema conversion. Data extraction. Report rebuild. You buy several and stitch them together.

- A migration accelerator covers the full lifecycle. Discovery, mapping, transformation, validation, monitoring, and rollback under one roof.

| Migration Tool | Migration Accelerator |
|---|---|
| Single-purpose (extract, convert, or validate) | Covers discovery to cutover |
| Manual handoffs between stages | Connected workflow with shared state |
| Validation is a separate purchase | Validation is built in |
| Generic, not migration-specific | Tuned for specific migration paths |
| You assemble the process | The process is pre-assembled |

What makes an accelerator worth using:

- Automation that runs unattended: If an engineer has to sit next to it the whole time, it is not an accelerator.

- Consistent output: A migration in Q2 should look like a migration in Q4. Standardization is the point.

- Built-in governance: Lineage, access logs, and audit trails captured during the migration, not reverse-engineered after.

- Live dashboards: Status, errors, throughput, and validation gaps visible in real time. Not weekly reports.

- A learning loop: What broke last time should be captured and prevented next time.

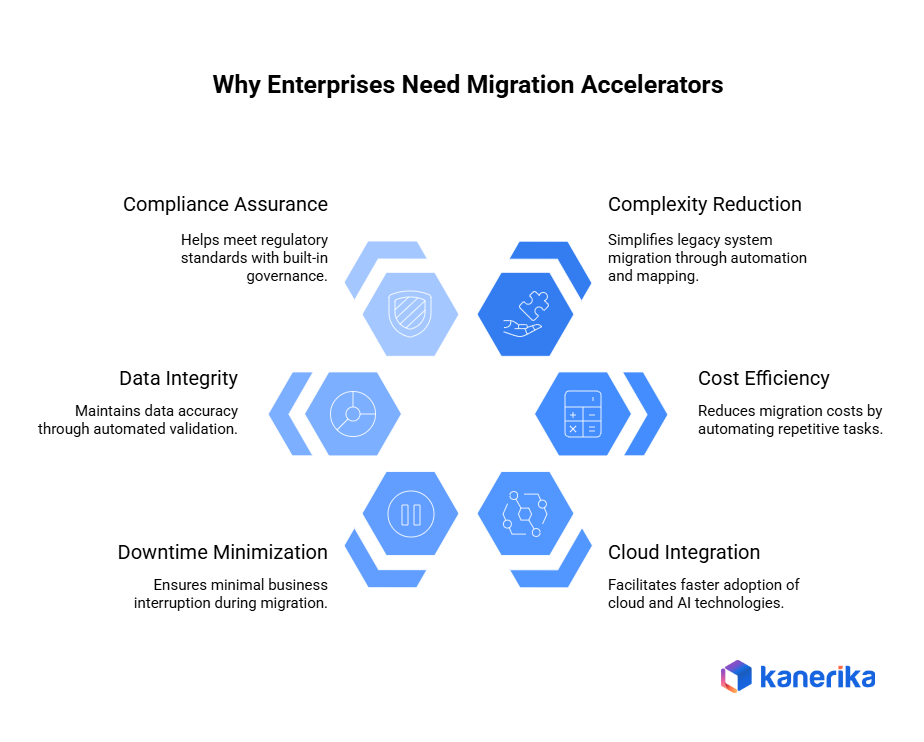

Why Enterprises Need Migration Accelerators Now

Several shifts have made the old approach unworkable.

1. Legacy Systems Got Older And Harder To Document

That mainframe from 1998 is still running. The COBOL specialist retired in 2019. Half the integrations were marked temporary and never replaced. Accelerators scan the environment first and surface the real inventory before anyone touches it. Surprises during discovery are useful information. Surprises during migration are expensive.

2. Deadlines Got Tighter

Executives read about Microsoft Fabric, Databricks, and Snowflake. They see competitors moving faster. They want results next quarter, not next year. Pre-built connectors and proven migration patterns let teams hit the speed leadership expects without skipping the validation steps that protect data integrity.

3. Mistakes Got More Expensive

One bad record in a manual process cascades through reports for weeks before anyone notices. Compliance frameworks like GDPR and CCPA require traceability that manual workflows struggle to provide. Validation tools catch problems early, before they reach production.

4. Downtime Tolerance Dropped To Zero

Customers expect always-on systems. Partners need real-time data feeds. Maintenance windows have shrunk. Phased migration patterns and automated cutovers allow piece-by-piece transition without taking the business offline.

5. Compliance Requirements Multiplied

Regulators have added new categories and tightened existing ones. Audit trails showing what data moved where and when are not optional. Accelerators generate that documentation as they work, not as a separate workstream.

The 5 Types Of Migration Accelerators

Most enterprise migration work falls into one of five categories. Each has its own toolkit.

1. Cloud Migration Accelerators

These move workloads from on-premise infrastructure to AWS, Azure, or GCP.

- Lift-and-shift templates move existing applications to the cloud with minimal architecture changes

- Infrastructure-as-code provisioning through Terraform, ARM templates, or CloudFormation eliminates manual configuration drift

- Cloud architecture blueprints for common patterns like three-tier apps, microservices, and data platforms

These tools cut the time to establish cloud environments and bake in security and cost controls from day one.

2. Data Migration Accelerators

These handle data movement between systems while protecting quality.

- Schema mapping tools analyze source and target databases and suggest mappings between different data models

- ETL/ELT automation generates transformation code from mapping rules, removing manual coding for common patterns

- Data validation scripts compare migrated data against source through automated checks, surfacing discrepancies early

- CDC (change data capture) frameworks keep old and new systems in sync during transition by tracking and applying source changes to the target

The CDC pattern matters because it lets organizations run both environments in parallel and roll back if something breaks.
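To make the CDC idea concrete, here is a minimal sketch of applying an ordered stream of change events to a target table. The event shape (`lsn`, `op`, `key`, `row`) is a hypothetical format invented for illustration; real CDC tools each define their own. Tracking the last applied log position is what lets the target keep pace with the source, and safely replay batches, while both environments run in parallel.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class ChangeEvent:
    # Hypothetical change-event shape; real CDC tools define their own formats.
    lsn: int                    # monotonically increasing log position
    op: str                     # "insert", "update", or "delete"
    key: int                    # primary key of the affected row
    row: Optional[dict] = None  # new row image (None for deletes)

def apply_changes(target: dict, events: list, last_lsn: int = 0) -> int:
    """Apply events newer than last_lsn to target (a dict keyed by primary key).

    Returns the new high-water mark so the next run can resume from it.
    """
    for ev in sorted(events, key=lambda e: e.lsn):
        if ev.lsn <= last_lsn:
            continue  # already applied; skipping makes replays safe
        if ev.op in ("insert", "update"):
            target[ev.key] = dict(ev.row)
        elif ev.op == "delete":
            target.pop(ev.key, None)
        else:
            raise ValueError(f"unknown op: {ev.op}")
        last_lsn = ev.lsn
    return last_lsn

# Usage: replaying the same batch a second time leaves the target unchanged.
target = {}
events = [
    ChangeEvent(1, "insert", 10, {"name": "Ada"}),
    ChangeEvent(2, "insert", 11, {"name": "Lin"}),
    ChangeEvent(3, "update", 10, {"name": "Ada L."}),
    ChangeEvent(4, "delete", 11),
]
mark = apply_changes(target, events)
mark = apply_changes(target, events, last_lsn=mark)
```

Persisting `mark` somewhere durable is what would allow a real sync process to restart without re-applying or missing changes.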

3. Application Modernization Accelerators

These transform legacy applications into modern architectures.

- Refactoring toolkits analyze existing code, surface technical debt, and apply common refactoring patterns automatically

- API enablement frameworks add API layers to legacy applications so modern systems can integrate without rewrites

- Containerization accelerators convert applications to Docker containers and generate Kubernetes deployment configurations

Instead of demanding a full rewrite, these enable incremental modernization while business operations continue.

4. BI And Reporting Migration Accelerators

These automate the transition between reporting platforms, which is one of the most labor-intensive migration types.

- Tableau to Power BI converts dashboards, extracts data connections, and rebuilds visualizations

- SSRS to Fabric or Power BI migrates SQL Server Reporting Services reports to modern cloud platforms

- Cognos to Power BI handles IBM Cognos migrations, converting reports and analytics

- Crystal Reports to Power BI moves legacy reports to modern platforms, which matters more now that SAP has set Crystal Reports 2025 mainstream maintenance to end on December 31, 2027

These accelerators address the worst part of BI migration, the manual rebuild of hundreds or thousands of reports.

5. AI And Analytics Migration Accelerators

These move advanced analytics workloads to modern platforms.

- ML model migration toolkits convert machine learning models between frameworks, preserving accuracy

- Databricks and Fabric modernization accelerators migrate notebooks, pipelines, and analytics code, handling syntax conversions and library mappings

Rebuilding analytics pipelines from scratch is slow and risky. These accelerators preserve existing investments while enabling the move to modern platforms.

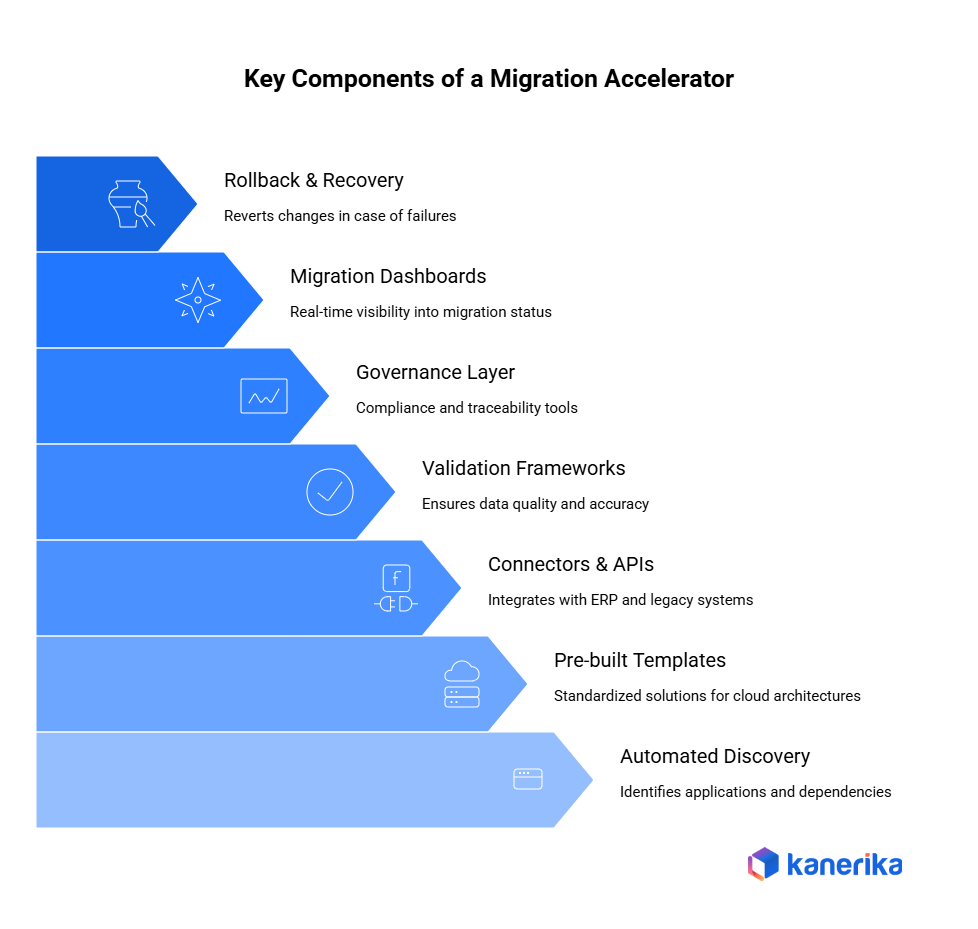

Key Components of a Migration Accelerator

A strong migration accelerator is built on several components that work together to make enterprise modernization faster, safer, and more predictable.

1. Automated Discovery Tools

To begin with, discovery tools identify existing applications, data sources, and dependencies. They create an application inventory and perform dependency mapping, which helps teams understand what needs to be migrated and how systems are connected.

2. Pre-built Templates

Migration accelerators include ready-made templates for cloud architectures, data pipelines, dashboards, and security policies. These templates ensure standardization and reduce the time spent designing solutions from scratch.

3. Connectors & APIs

Enterprises often rely on ERP, CRM, databases, and legacy systems. Accelerators provide connectors and APIs to integrate with these systems easily, reducing manual setup and enabling faster data movement.

4. Validation Frameworks

Reliable migration requires verified and accurate data. Validation frameworks offer data quality checks, reconciliation scripts, and transformation logs to ensure that migrated data matches source data and meets defined standards.

5. Governance Layer

Governance is essential in regulated industries. A strong accelerator includes tools for data lineage, access control, and audit logs, ensuring compliance and traceability across the entire migration process.

6. Migration Dashboards

These dashboards provide real-time visibility into migration status, progress, errors, and performance metrics. They help teams make informed decisions quickly and track overall project health.

7. Rollback & Recovery Mechanisms

Finally, rollback and recovery tools allow teams to revert changes safely in case of failures. This reduces migration risk and ensures business continuity.
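One common way to make a load step reversible is to run it inside a database transaction and roll back if a post-load check fails. The sketch below assumes an SQLite target, a hypothetical `orders` table, and a hypothetical `expected_count` check; production targets would rely on their own transaction, snapshot, or time-travel features.

```python
import sqlite3

class ValidationError(Exception):
    pass

def load_batch(conn: sqlite3.Connection, rows: list, expected_count: int) -> None:
    """Insert rows atomically; roll back the whole batch if validation fails."""
    with conn:  # commits on success, rolls back if an exception escapes the block
        conn.executemany("INSERT INTO orders (id, amount) VALUES (?, ?)", rows)
        (count,) = conn.execute("SELECT COUNT(*) FROM orders").fetchone()
        if count != expected_count:
            raise ValidationError(f"expected {expected_count} rows, found {count}")

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL)")
load_batch(conn, [(1, 9.5), (2, 12.0)], expected_count=2)   # passes, commits
try:
    load_batch(conn, [(3, 4.0)], expected_count=10)          # fails validation
except ValidationError:
    pass  # row 3 was rolled back; the table is back to its pre-batch state
```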

Architecture Of An Enterprise Migration Accelerator

Most production-grade accelerators follow the same modular architecture. Six layers, each handling a distinct stage. The architecture matters because gaps between layers are where most migration failures originate. A strong accelerator treats these as a connected system, not separate tools that hand off in batches.

1. Discovery And Assessment Layer

This is the entry point. The discovery layer scans the source environment to build a complete inventory before any work starts. Without it, planning is guesswork and timelines slip the moment an undocumented dependency surfaces.

What the layer typically captures:

- Application inventory across legacy and modern systems

- Database schemas, table structures, stored procedures, and views

- Data volumes, growth rates, and refresh patterns

- Integration points with upstream and downstream systems

- Custom code, business rules, and undocumented workflows

Output is a dependency map and a baseline that planning teams can size against.
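A minimal discovery pass can be sketched against any database that exposes its catalog. This example uses SQLite's `sqlite_master` table plus `PRAGMA table_info` and `PRAGMA foreign_key_list` to inventory tables, columns, row counts, and foreign-key dependencies; other engines expose similar metadata through `INFORMATION_SCHEMA` or system views. The `customers` and `orders` schema is hypothetical.

```python
import sqlite3

def inventory(conn: sqlite3.Connection) -> dict:
    """Return {table: {"columns": [(name, type), ...], "rows": n, "depends_on": [...]}}."""
    result = {}
    tables = [r[0] for r in conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name")]
    for t in tables:
        # PRAGMA table_info rows: (cid, name, type, notnull, dflt_value, pk)
        cols = [(c[1], c[2]) for c in conn.execute(f'PRAGMA table_info("{t}")')]
        (rows,) = conn.execute(f'SELECT COUNT(*) FROM "{t}"').fetchone()
        # PRAGMA foreign_key_list rows: (id, seq, table, from, to, ...)
        deps = sorted({fk[2] for fk in conn.execute(f'PRAGMA foreign_key_list("{t}")')})
        result[t] = {"columns": cols, "rows": rows, "depends_on": deps}
    return result

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE orders (id INTEGER PRIMARY KEY,
                     customer_id INTEGER REFERENCES customers(id),
                     amount REAL);
INSERT INTO customers VALUES (1, 'Ada');
INSERT INTO orders VALUES (1, 1, 9.5), (2, 1, 3.0);
""")
inv = inventory(conn)
```

The `depends_on` lists are the raw material for the dependency map; undocumented integrations outside the database still need other sources such as job schedulers and connection logs.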

2. Planning And Mapping Engine

Once discovery is complete, the planning layer defines how everything will move. This is where source-to-target mappings, transformation rules, and migration standards get codified so the rest of the pipeline can execute against them.

Key functions:

- Source-to-target schema mapping with type conversions handled automatically

- Transformation rules for data cleansing, format conversion, and business logic

- Migration sequencing based on dependencies (what must move before what)

- Risk scoring per object so high-risk items get extra validation

- Standards enforcement so every migration follows the same patterns

A strong planning engine removes the ambiguity that makes review cycles drag.
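Dependency-based sequencing is a topological sort. The sketch below uses Python's standard-library `graphlib.TopologicalSorter` (Python 3.9+) on a hypothetical dependency map. Each wave it produces contains only objects whose prerequisites have already moved, so objects within a wave could migrate in parallel. A circular dependency raises `graphlib.CycleError`, which is itself a useful planning signal.

```python
from graphlib import TopologicalSorter

def migration_waves(depends_on: dict) -> list:
    """Group objects into waves; every object's dependencies land in earlier waves."""
    ts = TopologicalSorter(depends_on)
    ts.prepare()  # raises graphlib.CycleError if dependencies are circular
    waves = []
    while ts.is_active():
        ready = sorted(ts.get_ready())  # sorted only for deterministic output
        waves.append(ready)
        ts.done(*ready)
    return waves

# Hypothetical dependency map: object -> objects that must migrate first.
deps = {
    "orders": {"customers", "products"},
    "invoices": {"orders"},
    "customers": set(),
    "products": set(),
    "sales_report": {"invoices", "customers"},
}
waves = migration_waves(deps)
# [["customers", "products"], ["orders"], ["invoices"], ["sales_report"]]
```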

3. Automation Execution Layer

The execution layer runs the actual migration. Extraction, loading, schema creation, pipeline generation, configuration updates, and code conversion all happen here using scripts, workflows, and templates.

What separates a real execution layer from a script bundle:

- Idempotent operations so reruns do not corrupt state

- Parallelization for high-volume workloads

- Checkpointing so failures resume from the last good state, not the start

- Configuration-driven execution so the same engine handles different migration paths

- Built-in retry logic for transient failures

This is the layer that determines how fast the migration runs and how predictable cutover is.
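The idempotency, checkpointing, and retry properties listed above can be sketched in a few lines. Several assumptions apply: batches are upserted into a hypothetical SQLite table `t` with `INSERT OR REPLACE` so reruns do not duplicate rows, a plain dict stands in for a durable checkpoint store, and `TransientError` is a hypothetical exception type representing recoverable failures such as timeouts.

```python
import sqlite3
import time

class TransientError(Exception):
    """Hypothetical marker for recoverable failures such as timeouts or throttling."""

def with_retry(fn, attempts: int = 3, base_delay: float = 0.01):
    """Call fn, retrying TransientError with exponential backoff."""
    for attempt in range(attempts):
        try:
            return fn()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of retries: let the caller escalate
            time.sleep(base_delay * 2 ** attempt)

def upsert_batch(conn: sqlite3.Connection, rows: list) -> None:
    # INSERT OR REPLACE keyed on the primary key makes reruns idempotent.
    with conn:  # one transaction per batch: all rows commit or none do
        conn.executemany("INSERT OR REPLACE INTO t (id, v) VALUES (?, ?)", rows)

def run_migration(conn: sqlite3.Connection, batches: list, checkpoint: dict) -> None:
    """Load batches in order, resuming after the last committed batch."""
    start = checkpoint.get("next_batch", 0)
    for i in range(start, len(batches)):
        with_retry(lambda: upsert_batch(conn, batches[i]))
        checkpoint["next_batch"] = i + 1  # advance only after the batch commits

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE t (id INTEGER PRIMARY KEY, v TEXT)")
batches = [[(1, "a"), (2, "b")], [(3, "c")]]
checkpoint = {}
run_migration(conn, batches, checkpoint)  # loads both batches
run_migration(conn, batches, checkpoint)  # resumes after batch 2: nothing left to do
run_migration(conn, batches, {})          # full rerun: upserts leave 3 rows, not 6

# A call that fails once with a transient error, then succeeds on retry.
attempts = {"n": 0}
def flaky_once():
    attempts["n"] += 1
    if attempts["n"] == 1:
        raise TransientError("simulated timeout")
    return "loaded"
result = with_retry(flaky_once)
```

Because the checkpoint advances only after a batch commits, a crash mid-batch means that batch is simply reloaded, and the upsert makes that reload harmless.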


4. Data Transformation Layer

Source data rarely arrives in the shape the target needs. The transformation layer applies cleansing, formatting, restructuring, and type conversions so the target receives clean, compatible data.

Common transformations handled here:

- Type casting (string to date, numeric precision adjustments)

- Encoding conversions (UTF-8, code page changes)

- Null handling and default value population

- Restructuring (denormalization, pivoting, flattening nested structures)

- Reference data lookups against master data tables

- Business rule application (currency conversion, unit standardization)

Done well, this layer eliminates most of the data quality issues that surface in production after migration.
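Several of the transformations above (type casting, null handling, and a business rule) can be expressed as one small, testable function per record. The legacy field names (`ORD_DT`, `RGN`, `AMT`, `CCY`), the `YYYYMMDD` date format, the `UNKNOWN` default, and the fixed exchange-rate table are all hypothetical; a real pipeline would pull rates and reference data from governed master tables.

```python
from datetime import date, datetime
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical reference data; in practice this comes from a master-data table.
FX_TO_USD = {"USD": Decimal("1"), "EUR": Decimal("1.10")}

def transform(src: dict) -> dict:
    """Map one legacy record to the target shape."""
    # Type casting: the legacy system stores dates as 'YYYYMMDD' strings.
    order_date: date = datetime.strptime(src["ORD_DT"], "%Y%m%d").date()
    # Null handling: default a missing region instead of loading NULL.
    region = (src.get("RGN") or "UNKNOWN").strip().upper()
    # Business rule: normalize amounts to USD, rounded half-up to cents.
    amount = Decimal(src["AMT"]) * FX_TO_USD[src["CCY"]]
    amount_usd = amount.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    return {"order_date": order_date, "region": region, "amount_usd": amount_usd}

row = transform({"ORD_DT": "20240315", "RGN": None, "AMT": "100.005", "CCY": "EUR"})
```

Using `Decimal` rather than `float` for money keeps the rounding rule explicit, which matters when reports are later compared against the source to the cent.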

5. Validation And Testing Layer

Validation is what protects against silent data drift after cutover. The validation layer performs data quality checks, record comparisons, reconciliation, and functional testing to verify accuracy and completeness.

Validation depth that matters:

- Row count and checksum reconciliation between source and target

- Field-level comparison for sampled records

- Business rule validation (do calculated fields match)

- Aggregate parity testing for reports and dashboards

- Functional testing of downstream applications consuming the migrated data

- Performance benchmarks against the source system

This is the dimension where most accelerators differ from one another. Row counts and checksums are baseline. Business rule and parity testing are what separate enterprise-grade tools from glorified scripts.
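Row-count and checksum reconciliation, plus a simple aggregate parity check, can be sketched as follows. The checksum approach here (hashing each row with SHA-256 and summing the digests modulo 2^256 so row order does not matter) is one reasonable choice among many, not a standard. Source and target are plain lists of tuples for illustration; a real tool would normalize types per column before hashing, since `"9.50"` and `9.5` hash differently.

```python
import hashlib
from decimal import Decimal

def row_digest(row: tuple) -> int:
    # Canonical text form of the row, fields separated by the ASCII unit separator.
    text = "\x1f".join("" if v is None else str(v) for v in row)
    return int.from_bytes(hashlib.sha256(text.encode("utf-8")).digest(), "big")

def table_fingerprint(rows) -> tuple:
    """Return (row_count, order-independent checksum)."""
    count, total = 0, 0
    for r in rows:
        count += 1
        total = (total + row_digest(r)) % (1 << 256)
    return count, total

def reconcile(source, target, amount_idx: int) -> dict:
    src_fp, tgt_fp = table_fingerprint(source), table_fingerprint(target)
    src_sum = sum(Decimal(str(r[amount_idx])) for r in source)
    tgt_sum = sum(Decimal(str(r[amount_idx])) for r in target)
    return {
        "row_count_match": src_fp[0] == tgt_fp[0],
        "checksum_match": src_fp[1] == tgt_fp[1],
        "aggregate_match": src_sum == tgt_sum,
    }

source = [(1, "Ada", "9.50"), (2, "Lin", "12.00")]
same_rows_reordered = [(2, "Lin", "12.00"), (1, "Ada", "9.50")]
drifted = [(1, "Ada", "9.50"), (2, "Lin", "12.01")]
ok = reconcile(source, same_rows_reordered, amount_idx=2)
bad = reconcile(source, drifted, amount_idx=2)
```

The drifted case shows why row counts alone are only a baseline: the counts match while the checksum and the aggregate both catch the one-cent change.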

6. Monitoring And Reporting Layer

The monitoring layer provides real-time visibility into the migration as it runs. Dashboards, error tracking, throughput metrics, and progress reporting all flow through here.

What strong monitoring provides:

- Real-time pipeline status and throughput

- Error tracking with root cause classification

- SLA tracking against migration milestones

- Audit logs for compliance handoff

- Alerts for failures or threshold breaches

- Historical reporting for retrospectives and future migration planning
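At its core, the monitoring described above comes down to counting events, classifying errors, and comparing rates against thresholds. The sketch below is a hypothetical in-process tracker; the error categories, the exception-type mapping, the 2 percent threshold, and the alert wording are illustrative assumptions, and a production system would ship these metrics to an existing observability stack instead.

```python
from collections import Counter
from dataclasses import dataclass, field

def classify(exc: Exception) -> str:
    """Rough root-cause buckets; real classifiers use error codes, not types alone."""
    if isinstance(exc, TimeoutError):
        return "transient"
    if isinstance(exc, (ValueError, TypeError)):
        return "data_quality"
    if isinstance(exc, KeyError):
        return "schema_mismatch"
    return "unknown"

@dataclass
class MigrationMonitor:
    error_rate_threshold: float = 0.02  # hypothetical alert threshold (2%)
    processed: int = 0
    errors: Counter = field(default_factory=Counter)

    def record_success(self, n: int = 1) -> None:
        self.processed += n

    def record_error(self, exc: Exception) -> None:
        self.processed += 1
        self.errors[classify(exc)] += 1

    def error_rate(self) -> float:
        return sum(self.errors.values()) / self.processed if self.processed else 0.0

    def alerts(self) -> list:
        rate = self.error_rate()
        if rate > self.error_rate_threshold:
            top, count = self.errors.most_common(1)[0]
            return [f"error rate {rate:.1%} exceeds {self.error_rate_threshold:.0%}; "
                    f"top cause: {top} ({count})"]
        return []

mon = MigrationMonitor()
mon.record_success(95)
for exc in [ValueError("bad date"), ValueError("bad amount"), KeyError("CUST_ID"),
            TimeoutError(), ValueError("null key")]:
    mon.record_error(exc)
```

Here 5 of 100 records failed, so `mon.alerts()` reports a 5.0% error rate with `data_quality` as the top cause, pointing the team at mapping rules rather than infrastructure.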

Underneath these six layers, modern accelerators use microservices, API-first design, and cloud-native infrastructure to scale, integrate with enterprise systems, and run parallel migrations. The microservices model lets each layer scale independently. API-first design means the accelerator slots into existing CI/CD, monitoring, and governance stacks instead of forcing teams to work around it.

How AI And LLMs Are Changing Migration Work

AI and LLMs have moved several manual migration tasks into the automation layer. The change is not theoretical; it is already in production tooling. The work that used to require senior engineers reading legacy documentation, tracing dependencies through codebases, and writing test cases manually is now handled by models that do it faster and more consistently. The engineers shift to oversight, validation, and the edge cases that still need human judgment.

1. NLP For Schema Mapping And Code Conversion

Natural language processing interprets legacy schemas, column descriptions, and code logic that often lacks formal documentation. Models read column names, comments, and surrounding code to infer intent, then generate target mappings and convert SQL, ETL scripts, and config files into modern formats.

Where this shows up in real migrations:

- Mapping cryptic legacy column names (CUST_ID_NBR, TRANS_AMT_USD) to modern, well-named target fields

- Converting Informatica or SSIS transformations to Databricks notebooks or Fabric pipelines

- Translating SQL dialects between vendors (Oracle PL/SQL to T-SQL, Teradata to Snowflake)

- Interpreting business logic embedded in stored procedures and converting to modern equivalents

The work that used to take weeks of manual code review now happens in hours with human review of the output.
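Production tools use language models for this step, but the core idea of turning a cryptic legacy name into a readable one and matching it against target fields can be shown with a rule-based stand-in. The sketch below is explicitly not an LLM: it expands names using a hypothetical abbreviation dictionary, then uses the standard-library `difflib.get_close_matches` to propose a candidate that a human (or a model) would confirm. Unmatched columns come back as `None` for manual review.

```python
import difflib

# Hypothetical abbreviation dictionary built from legacy naming conventions.
ABBREVIATIONS = {
    "CUST": "customer", "ID": "id", "NBR": "number", "TRANS": "transaction",
    "AMT": "amount", "USD": "usd", "DT": "date", "ORD": "order",
}

def expand(legacy_name: str) -> str:
    """CUST_ID_NBR -> customer_id_number (unknown tokens are lower-cased as-is)."""
    return "_".join(ABBREVIATIONS.get(tok, tok.lower()) for tok in legacy_name.split("_"))

def suggest_mapping(legacy_cols: list, target_fields: list, cutoff: float = 0.6) -> dict:
    """Propose one target field per legacy column, or None when nothing is close enough."""
    suggestions = {}
    for col in legacy_cols:
        matches = difflib.get_close_matches(expand(col), target_fields, n=1, cutoff=cutoff)
        suggestions[col] = matches[0] if matches else None
    return suggestions

targets = ["customer_id", "transaction_amount_usd", "order_date", "region_code"]
mapping = suggest_mapping(["CUST_ID_NBR", "TRANS_AMT_USD", "ORD_DT", "ZZ_FLAG"], targets)
```

`ZZ_FLAG` has no plausible counterpart and is left unmapped, which is exactly the kind of output that should land in a human review queue rather than be guessed.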

2. AI Agents For Multi-Step Tasks

AI agents execute end-to-end workflows that previously required human orchestration. They plan tasks, run scripts, validate results, escalate issues, monitor pipelines, and self-correct routine errors without waiting for an engineer to intervene.

Typical agent responsibilities in migration:

- Sequencing migration jobs based on dependencies discovered at runtime

- Running validation suites and triaging failures by type

- Restarting failed jobs with appropriate retry strategies

- Escalating non-recoverable errors to human reviewers with full context attached

- Generating status reports and updating tracking systems

The shift is from engineers running pipelines to engineers supervising agents that run pipelines.
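The supervise-retry-escalate loop these agents run can be illustrated without any model at all. The sketch below is a rule-based stand-in, not an AI agent: each hypothetical job is a zero-argument callable; `TimeoutError` is treated as transient and retried once; anything else is escalated with context, and jobs that depend on a failed job are held back instead of running on bad inputs. An agent framework would replace the fixed rules with model-driven planning, but the control flow it supervises looks similar.

```python
from dataclasses import dataclass, field

@dataclass
class Escalation:
    job: str
    error: str
    attempts: int
    blocked_downstream: list = field(default_factory=list)

def supervise(jobs: dict, order: list, depends_on: dict, max_retries: int = 1):
    """Run jobs in order; retry transient failures, escalate the rest."""
    done, failed, escalations = [], set(), []
    for name in order:
        if depends_on.get(name, set()) & failed:
            failed.add(name)  # upstream broke; do not run on bad inputs
            continue
        attempts = 0
        while True:
            attempts += 1
            try:
                jobs[name]()
                done.append(name)
                break
            except TimeoutError as exc:  # treated as transient
                if attempts <= max_retries:
                    continue
                err = exc
            except Exception as exc:  # everything else goes to a human
                err = exc
            failed.add(name)
            blocked = [j for j in order if name in depends_on.get(j, set())]
            escalations.append(Escalation(name, repr(err), attempts, blocked))
            break
    return done, escalations

calls = {"extract": 0}
def flaky_extract():
    calls["extract"] += 1
    if calls["extract"] == 1:
        raise TimeoutError("source busy")

def bad_transform():
    raise ValueError("unmapped column RGN_CD")

jobs = {"extract": flaky_extract, "transform": bad_transform,
        "load": lambda: None, "docs": lambda: None}
depends_on = {"transform": {"extract"}, "load": {"transform"}, "docs": set()}
done, escalations = supervise(jobs, ["extract", "transform", "load", "docs"], depends_on)
```

In this run, `extract` recovers on retry, `transform` is escalated with `load` listed as blocked, and the independent `docs` job still completes.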

3. LLMs For Documentation And Validation

Documentation has historically been the lowest-priority deliverable on migration projects. It gets cut when timelines slip. LLMs change those economics by generating migration documents, data dictionaries, runbooks, and user guides automatically as the migration runs.

What LLMs document automatically:

- Source-to-target field mappings with transformation logic

- Data dictionaries with column descriptions, data types, and example values

- Runbooks for cutover, rollback, and incident response

- User guides for the new platform with screenshots and walkthroughs

- Audit trails showing what changed, when, and why

LLMs also validate logic by summarizing differences between source and target outputs and flagging missing components for review.
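Much of a mapping document is structured metadata rendered as text, which is why it can be produced while the migration runs. The sketch below covers only the deterministic half of that job: rendering hypothetical mapping records into a Markdown source-to-target table. An LLM-based tool would typically layer prose descriptions on top; that part is not shown here.

```python
from dataclasses import dataclass

@dataclass
class FieldMapping:
    source: str
    target: str
    target_type: str
    rule: str = "direct copy"

def _cell(text: str) -> str:
    return text.replace("|", "\\|")  # keep pipes in rule text from breaking the table

def mapping_doc(table: str, mappings: list) -> str:
    """Render source-to-target mappings as a Markdown table."""
    lines = [
        f"### {table}",
        "",
        "| Source | Target | Type | Transformation |",
        "|---|---|---|---|",
    ]
    for m in mappings:
        lines.append(f"| {_cell(m.source)} | {_cell(m.target)} | "
                     f"{_cell(m.target_type)} | {_cell(m.rule)} |")
    return "\n".join(lines)

doc = mapping_doc("orders", [
    FieldMapping("ORD_DT", "order_date", "DATE", "parse YYYYMMDD"),
    FieldMapping("AMT", "amount_usd", "DECIMAL(18,2)", "convert to USD"),
    FieldMapping("CUST_ID_NBR", "customer_id", "BIGINT"),
])
```

Because the document is generated from the same mapping records the pipeline executes, it cannot silently drift from what was actually migrated.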


4. Predictive Analytics For Risk Scoring

Predictive models analyze legacy systems to identify data quality gaps, dependency conflicts, and likely performance issues before they cause delays. Risk scoring lets teams prioritize the parts of the migration that need extra attention.

What gets scored:

- Data quality risk per source table (null rates, duplicates, referential integrity)

- Dependency complexity per object (how many downstream consumers exist)

- Conversion risk based on legacy code complexity and rare constructs

- Performance risk based on historical query patterns and target platform characteristics

- Business impact if the object fails post-migration

High-risk items get extra validation, parallel runs, and senior review. Low-risk items move through the standard pipeline.
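A simple version of per-object risk scoring is a weighted sum of normalized signals. The signals mirror the list above, but the weights, the normalization caps (20 consumers, 50 complex constructs), the 0.6 high-risk threshold, and the object names are hypothetical; predictive tools would learn weights from past migrations rather than fix them by hand.

```python
from dataclasses import dataclass

# Hypothetical weights; they sum to 1 so the score stays in [0, 1].
WEIGHTS = {"null_rate": 0.25, "duplicate_rate": 0.15, "consumers": 0.30, "complexity": 0.30}
HIGH_RISK = 0.6

@dataclass
class ObjectStats:
    name: str
    null_rate: float          # share of nulls in key columns, 0..1
    duplicate_rate: float     # share of duplicate keys, 0..1
    downstream_consumers: int
    complexity: int           # e.g. count of rare or nested constructs in its code

def risk_score(s: ObjectStats) -> float:
    signals = {
        "null_rate": min(s.null_rate, 1.0),
        "duplicate_rate": min(s.duplicate_rate, 1.0),
        "consumers": min(s.downstream_consumers / 20, 1.0),  # caps at 20 consumers
        "complexity": min(s.complexity / 50, 1.0),           # caps at 50 constructs
    }
    return round(sum(WEIGHTS[k] * v for k, v in signals.items()), 3)

def triage(objects: list) -> dict:
    """Split objects into high-risk (extra validation) and standard pipelines."""
    scored = sorted(objects, key=risk_score, reverse=True)
    return {
        "high_risk": [o.name for o in scored if risk_score(o) >= HIGH_RISK],
        "standard": [o.name for o in scored if risk_score(o) < HIGH_RISK],
    }

plan = triage([
    ObjectStats("dim_date", 0.0, 0.0, 3, 2),
    ObjectStats("fact_sales", 0.10, 0.02, 20, 48),
    ObjectStats("stg_legacy_claims", 0.40, 0.20, 6, 30),
])
```

Here `fact_sales` scores about 0.616 (many consumers, complex logic) and is routed to extra validation, while the messier but less connected staging table stays in the standard pipeline at 0.4.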

5. Automated Test Case Generation

Test coverage is usually the gap that causes migration projects to ship with hidden defects. AI generates unit tests, integration tests, reconciliation scripts, and test datasets, cutting manual QA effort while expanding coverage.

What gets generated:

- Unit tests for transformations and business rules

- Integration tests for end-to-end pipeline runs

- Reconciliation scripts comparing source and target outputs

- Synthetic test datasets covering edge cases (nulls, boundary values, malformed inputs)

- Performance test scenarios benchmarked against source system baselines

The combination of generated tests and AI-driven validation means migration projects ship with materially better coverage than they used to, even on tighter timelines.
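Generated tests often come down to enumerating edge cases systematically and checking each against an expected result. The sketch below hand-writes that enumeration for a hypothetical `normalize_amount` transformation (nulls, blanks, boundary values, malformed strings); an AI-based generator would propose such cases automatically, but the resulting tests look much like this.

```python
from decimal import Decimal, InvalidOperation
from typing import Optional

def normalize_amount(raw: Optional[str]) -> Optional[Decimal]:
    """Hypothetical transformation under test: parse a legacy amount string."""
    if raw is None or raw.strip() == "":
        return None
    try:
        return Decimal(raw.strip().replace(",", "")).quantize(Decimal("0.01"))
    except InvalidOperation:
        raise ValueError(f"malformed amount: {raw!r}") from None

# Edge cases a generator would enumerate: nulls, blanks, boundaries, malformed input.
CASES = [
    (None, None),
    ("   ", None),
    ("0", Decimal("0.00")),
    ("-0.01", Decimal("-0.01")),
    ("1,234.5", Decimal("1234.50")),
    ("99999999999.99", Decimal("99999999999.99")),
    ("12abc", ValueError),
]

def run_cases(fn, cases) -> list:
    """Return failure descriptions (an empty list means every case passed)."""
    failures = []
    for raw, expected in cases:
        try:
            got = fn(raw)
        except ValueError:
            got = ValueError
        if got != expected:
            failures.append(f"{raw!r}: expected {expected!r}, got {got!r}")
    return failures

failures = run_cases(normalize_amount, CASES)
```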

Real-World Use Cases

Migration accelerators are widely used across industries to modernize systems faster, reduce project risk, and improve long-term performance. The most common enterprise use cases are below, each with its business value and typical impact.

1. Cloud Modernization

Many enterprises still run critical workloads on on-premise servers. Migration accelerators help move these workloads to AWS, Azure, or GCP much faster by using automated discovery, infrastructure templates, and pre-built scripts.

Business Value: Lower infrastructure cost, faster scaling, improved security.

Impact: Migration timelines shrink from months to weeks, with minimal disruption.

2. Data Warehouse Modernization

Enterprises often need to shift from legacy warehouses like Netezza, Teradata, or Oracle to modern cloud warehouses such as Snowflake, BigQuery, Microsoft Fabric, or Databricks. Accelerators provide schema converters, ELT templates, and validation scripts.

Business Value: Better performance, lower storage cost, support for AI workloads.

Impact: Reduced risk and fast cutover with high data accuracy.

3. BI Reporting Migration

Organizations often migrate from Tableau, Cognos, or SSRS to Power BI. Migration accelerators automate report mapping, metadata extraction, and visual conversion.

Business Value: Standardized reporting, unified analytics, lower licensing costs.

Impact: Faster migration with consistent dashboards across teams.

4. Application Transformation

Enterprises modernize old systems by breaking monoliths into microservices or converting legacy applications into containerized, API-enabled services.

Business Value: Improved scalability, faster deployments, and easier updates.

Impact: Reduced refactoring time and smoother application upgrades.

5. ERP/CRM Migration

Accelerators support migrations from SAP or Oracle to cloud-based versions with automated data extraction, mapping, and validation.

Business Value: Better integration, enhanced performance, future-ready architecture.

Impact: Lower transition risk and fewer business interruptions.

6. AI & Analytics Migration

Machine learning pipelines and analytics workloads are moved to cloud AI platforms. Accelerators automate pipeline conversion, model deployment, and testing.

Business Value: Faster experimentation, real-time insights, support for LLM and AI agents.

Impact: Reduced engineering effort and quicker adoption of advanced analytics.


How To Evaluate A Migration Accelerator Vendor

Most accelerator pitches sound similar. The differences show up in five areas.

1. Coverage For Your Specific Migration Path

Generic accelerators handle a few common paths well and the rest poorly. Confirm the vendor has a documented, productized accelerator for your exact source and target combination.

2. Validation Depth

Ask how the accelerator validates output. Row counts and checksums are baseline. Business rule validation, sample comparisons, and parity testing are what protect against silent data drift post-migration.

3. Governance And Compliance Features

Lineage, access controls, and audit trails should be generated during migration. If they are produced as a separate deliverable, the vendor is selling consulting hours wrapped around a tool, not an accelerator.

4. Marketplace And Procurement Path

Accelerators listed on Azure Marketplace or AWS Marketplace are usually procurement-eligible through committed cloud spend. This matters for budget approval and reduces out-of-pocket tooling cost.

5. Industry Experience

Healthcare, financial services, manufacturing, and retail have different compliance and integration constraints. A vendor with case studies in your industry will have already solved problems specific to your stack.

Kanerika: Your Trusted Partner for Data Migrations

Kanerika is a Microsoft Solutions Partner for Data and AI with Analytics Specialization, a Microsoft Fabric Featured Partner, and a Databricks Consulting Partner. Compliance posture includes ISO 27001, ISO 27701, SOC 2 Type II, CMMI Level 3, and GDPR.

Kanerika’s migration practice runs on FLIP, a proprietary AI-enabled low-code migration accelerator. FLIP automates the most time-intensive phases of data migration: discovery, asset extraction, logic mapping, format conversion, and validation. The platform supports twelve migration paths across data, BI, and RPA categories and is listed on the Azure Marketplace, where it is eligible to count toward a Microsoft Azure Consumption Commitment (MACC).

FLIP supported migration paths include:

- Tableau, Cognos, Crystal Reports, and SSRS to Power BI

- Informatica PowerCenter to Microsoft Fabric, Databricks, Alteryx, or Talend

- Azure Data Factory to Microsoft Fabric

- SQL Server and SSIS to Microsoft Fabric

- UiPath to Microsoft Power Automate

Typical FLIP outcomes include 70 to 80 percent automation of conversion work, 60 to 70 percent reduction in labor cost, and migration timelines compressed from months to weeks.

Case Study: Performance Gain with Cognos to Power BI Migration

The client is a global retail enterprise that delivers merchandising, supply chain, and sales management solutions across multiple regions. Its operations span store operations, procurement, finance, logistics, marketing, and human resources. With hundreds of BI and performance reports supporting daily decisions across departments, maintaining data accuracy, consistency, and agility had become increasingly difficult.

Client’s Challenges

- Faced limited interactivity and slow decision-making due to static, outdated Cognos dashboards.

- Incurred high operational costs from legacy BI licensing, impacting scalability and flexibility.

- Struggled with inconsistent data access across departments, leading to inefficiencies and delayed insights.

Solutions

- Migrated to Power BI using automated accelerators that replicated data structures, converted formulas into DAX, and recreated visuals for faster, consistent reporting.

- Reduced maintenance and licensing overheads, achieving significant cost savings while enhancing performance.

- Enabled centralized data accessibility, ensuring faster, more reliable insights across business units.

Results

- 65% Increase in Operational Performance

- 27% Overall Reduction in BI-Related Costs

- 2X Scalable Data and BI Capabilities

Wrapping Up

Migration accelerators have moved from a nice-to-have to the default approach for any enterprise modernization with serious scope. Manual migration still works for small, contained projects, but it does not scale for the kind of multi-system, multi-region, deadline-bound work most enterprises now face. The vendors worth evaluating are the ones with documented coverage for your specific path, validation built into the workflow, and enough industry experience to anticipate the problems your stack will surface.


FAQs

1. What are migration accelerators for enterprises?

A migration accelerator is a set of tools, frameworks, and automation assets designed to speed up large-scale data or platform migrations. It reduces manual effort by automating assessment, code conversion, validation, and testing. For enterprises, accelerators help minimize risk, ensure consistency, and shorten migration timelines. They are especially valuable for complex, multi-system environments.

2. How do migration accelerators reduce enterprise migration risk?

Migration accelerators enforce standardized migration patterns and proven workflows. They include built-in checks for data accuracy, schema consistency, and dependency mapping. By automating validation and reconciliation, they reduce human error. This ensures data integrity and business continuity during enterprise migrations.

3. What types of migrations benefit most from accelerators?

Accelerators are highly effective for migrations involving legacy systems such as SQL Server, Informatica, on-prem data warehouses, and BI platforms. They are also useful for cloud migrations to platforms like Microsoft Fabric, Azure Databricks, and cloud data lakes. Enterprises with high data volumes, complex dependencies, and repeatable workloads see the greatest value.

4. How do migration accelerators improve migration speed and efficiency?

Accelerators automate repetitive tasks such as code translation, pipeline creation, and test case execution. This significantly reduces manual development and rework. As a result, migration cycles are faster and more predictable. Enterprises can move from planning to production in shorter timelines without compromising quality.

5. Do migration accelerators support validation and post-migration testing?

Yes, enterprise-grade migration accelerators include automated validation, reconciliation, and performance testing. They compare source and target results to ensure accuracy after migration. Post-migration testing confirms that reports, dashboards, and analytics perform as expected. This builds confidence in the migrated environment.

6. Can migration accelerators be customized for enterprise needs?

Most accelerators are designed to be flexible and configurable. Enterprises can adapt them to specific business rules, compliance requirements, and system architectures. Customization ensures alignment with industry regulations and internal standards. This makes accelerators suitable for healthcare, retail, finance, and logistics enterprises.

7. How do migration accelerators support long-term value beyond migration?

Beyond initial migration, accelerators help standardize architecture, improve governance, and enable scalability. They create reusable migration patterns that support future modernization initiatives. Enterprises benefit from lower technical debt and faster onboarding of new workloads. This ensures sustained value long after migration is complete.

8. What's the difference between a migration tool and a migration accelerator?

A tool handles one task, such as data extraction or schema conversion. An accelerator covers the whole journey from discovery through validation and monitoring. Kanerika’s FLIP platform connects these stages so you’re not stitching together separate products and hoping they work together.

9. How do accelerators handle data validation during migration?

Automated reconciliation compares source and target data throughout the process. Row counts, checksums, business rule validation, sample comparisons. Kanerika’s FLIP platform generates validation reports automatically so teams can verify accuracy without manual spot-checking.

10. What should we look for when evaluating migration accelerator vendors?

Coverage for your specific migration path, integration with your existing systems, governance and compliance features, and proven experience in your industry. Kanerika offers pre-built accelerators for the most common enterprise migrations plus the flexibility to handle custom scenarios through the FLIP platform.