Modern organizations are under immense pressure to turn vast amounts of data into actionable insights—quickly, securely, and at scale. The challenge isn’t just about storage or pipelines anymore; it’s about accessibility, governance, and adaptability. That’s where the debate around Data Mesh vs. Data Fabric gains relevance. As companies adopt AI and self-service analytics, choosing the right architecture depends on how they balance centralized control with distributed ownership. Both models offer powerful benefits—but aligning them with your business goals is what truly unlocks value.

Consider Airbnb, which deals with vast volumes of data across products, operations, marketing, and customer service. To overcome data silos and let teams operate autonomously yet consistently, Airbnb implemented Data Mesh principles: domain teams treat data as a product, own its lifecycle, and keep it usable organization-wide. In contrast, other large enterprises are turning to Data Fabric to centralize governance and deliver consistent data access across hybrid environments.

While both frameworks aim to democratize data and improve agility, their execution models vary significantly. Data Fabric relies on a unified architecture with centralized governance, whereas Data Mesh emphasizes decentralized ownership and domain-specific responsibility.

In this blog, we’ll break down the key differences, challenges, and ideal use cases of Data Fabric vs Data Mesh—so you can make informed decisions about the right data strategy for your business in 2025 and beyond.

What is Data Fabric?

Imagine using a single, easy-to-use interface to instantly access all your company’s data, whether it is kept in cloud storage, legacy systems, or a combination of both. Data Fabric provides just that. By linking disparate data sources, it lets companies access and manage information without the usual integration complications.

Key Features

- Centralized Data Integration brings together scattered information from multiple sources into one accessible place. Instead of jumping between different systems, users work through a single interface that shows all company data in one view.

- Automation handles routine tasks like finding data, managing permissions, and controlling access without manual work. This means less time spent on administrative tasks and more focus on using the data effectively.

- Data Security protects information with strong safeguards built into the system. Companies maintain complete control over who can access what data, ensuring privacy rules and regulations are always followed.

- Data Virtualization provides instant access to information without moving it from its original location. Users can search and analyze data immediately while it stays where it belongs, saving storage space and time.
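
To make the virtualization idea concrete, here is a minimal Python sketch. It assumes a hypothetical `VirtualCatalog` class and two in-memory lists standing in for a cloud store and a legacy system; none of these names come from a real product. The only point it illustrates is that the access layer keeps references to sources and reads from them at query time, rather than copying the data into a central store.

```python
from typing import Callable, Dict, Iterable, List

# A "source" is any callable that returns rows on demand (a hypothetical
# stand-in for a cloud warehouse, a legacy database, a file share, and so on).
Row = Dict[str, object]
Source = Callable[[], Iterable[Row]]


class VirtualCatalog:
    """Single access point that maps logical dataset names to live sources."""

    def __init__(self) -> None:
        self._sources: Dict[str, Source] = {}

    def register(self, name: str, source: Source) -> None:
        # Only the reference to the source is stored; no rows are copied.
        self._sources[name] = source

    def query(self, name: str, predicate: Callable[[Row], bool]) -> List[Row]:
        # Rows are fetched from the original location at query time.
        return [row for row in self._sources[name]() if predicate(row)]


# Illustrative data living in two different "systems".
cloud_orders = [{"id": 1, "total": 120.0}, {"id": 2, "total": 35.5}]
legacy_customers = [{"id": 7, "region": "EU"}, {"id": 8, "region": "US"}]

catalog = VirtualCatalog()
catalog.register("orders", lambda: cloud_orders)
catalog.register("customers", lambda: legacy_customers)

large_orders = catalog.query("orders", lambda r: r["total"] > 100)
eu_customers = catalog.query("customers", lambda r: r["region"] == "EU")
```

Because the catalog holds only references, a row added to `cloud_orders` later shows up in the next query without any reload step. A real fabric layer would add query pushdown, caching, and access control on top of this routing idea.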

Benefits for Your Business

- Simplified Data Management eliminates the hassle of working with multiple systems. Because everything is available from a single location, confusion and training time decrease.

- Scalability means the system grows with your company, whether you’re expanding on-premises systems or adding cloud services. There is no need to rebuild everything as you expand.

- Improved Decision-Making gives leaders access to complete, up-to-date information from across the organization, leading to better strategic choices and faster responses to market changes.

What is Data Mesh?

Data Mesh is a decentralized approach to data architecture where data is managed as a product by autonomous teams rather than through centralized data platforms. This paradigm shift treats data as a distributed system, with individual domain teams taking ownership of their data products while maintaining organizational coherence through shared standards and governance frameworks.

Key Features

- Decentralization represents the fundamental difference from Data Fabric approaches. Instead of centralizing data management, Data Mesh distributes responsibility to domain teams who best understand their specific data contexts and business requirements. This eliminates bottlenecks created by centralized data teams.

- Domain-Oriented Design treats data as a product managed by teams closest to its creation and business context. Each domain team acts as both producer and steward of their data products, ensuring relevance, quality, and accessibility based on deep domain expertise and direct stakeholder relationships.

- Interoperability ensures seamless integration between different data products across domains through standardized interfaces and protocols. Despite decentralized ownership, data products can communicate and integrate effectively, maintaining organizational data coherence.

- Self-Service Data Infrastructure empowers domain teams with platforms and tools to manage their data independently. Teams can provision, deploy, and maintain their data products without depending on central IT resources, accelerating innovation cycles.
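
One way to picture "data as a product" with standardized interfaces is a small descriptor that every domain publishes to a shared registry. The sketch below is only an illustration: `DataProduct`, `ProductRegistry`, and all field names are hypothetical, and a real platform would carry far richer metadata.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class DataProduct:
    """Hypothetical descriptor a domain team publishes for its data product."""
    domain: str
    name: str
    owner: str                      # accountable team or contact
    schema: Dict[str, str]          # column name -> type, the shared contract
    tags: List[str] = field(default_factory=list)

    @property
    def address(self) -> str:
        # A stable, organization-wide identifier makes the product addressable.
        return f"{self.domain}.{self.name}"


class ProductRegistry:
    """Shared registry so products from any domain stay discoverable."""

    def __init__(self) -> None:
        self._products: Dict[str, DataProduct] = {}

    def publish(self, product: DataProduct) -> None:
        if product.address in self._products:
            raise ValueError(f"{product.address} is already published")
        self._products[product.address] = product

    def find_by_tag(self, tag: str) -> List[str]:
        return sorted(a for a, p in self._products.items() if tag in p.tags)


registry = ProductRegistry()
registry.publish(DataProduct(
    domain="marketing", name="campaign_performance", owner="marketing-data",
    schema={"campaign_id": "string", "clicks": "int"}, tags=["customer"],
))
registry.publish(DataProduct(
    domain="finance", name="invoices", owner="finance-data",
    schema={"invoice_id": "string", "amount": "decimal"},
    tags=["customer", "revenue"],
))
customer_products = registry.find_by_tag("customer")
```

Each domain keeps ownership of its product, while the shared descriptor format and registry are what keep products interoperable across domains.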

Benefits

- Scalability improves significantly through decentralized management, as organizations can scale data capabilities by adding domain teams rather than expanding centralized infrastructure. Each team operates independently without creating system-wide bottlenecks.

- Faster Data Access results from eliminating intermediary processes. Domain teams can respond directly to data needs without routing requests through centralized teams, dramatically reducing time-to-insight and enabling rapid data-driven innovation.

- Domain Expertise ensures higher data quality and relevance since teams managing data products possess intimate knowledge of business context, data semantics, and user requirements, leading to more accurate and useful data products.

Data Fabric vs Data Mesh: Key Differences

| Aspect | Data Fabric | Data Mesh |
| --- | --- | --- |
| Centralization | Centralized data access across systems | Decentralized data management by domain teams |
| Data Ownership | Centralized ownership of data management | Domain-specific ownership of data products |
| Scalability | Scalable but can become complex as data grows | Highly scalable for large organizations with multiple teams |
| Data Governance | Centralized governance and security | Governance is decentralized; each domain manages its own data |
| Integration Complexity | Can be complex to integrate with legacy systems | Easier integration for domain-specific needs but requires coordination |
| Implementation Time | Faster setup, especially in smaller organizations | Longer setup due to the need for infrastructure for each domain |
| Flexibility | Less flexible, as it depends on a centralized model | Highly flexible for domain-specific requirements |
| Data Silos | Reduces data silos by integrating all data sources | Can introduce silos as data is handled by individual domains |
| Operational Speed | Slower as data is processed centrally | Faster data access and updates for domain teams |
| Use Case | Ideal for smaller organizations or unified data needs | Best for larger organizations with diverse teams and complex data needs |
| Compliance | Easier to maintain compliance with centralized control | Can be challenging due to decentralized control and varying data standards |
| Autonomy | Limited autonomy for individual teams | Full autonomy for domain teams over their own data |
| Data Discovery | Centralized data discovery and access | Decentralized discovery, handled by domain teams, can be more tailored |
| Cost Efficiency | Potentially more expensive at scale due to centralization | More cost-efficient for large organizations with multiple domains, as each domain manages its own data |
| Operational Control | Centralized management, which can become a bottleneck | Distributed control can lead to faster decision-making and operations within domains |
| Technology Stack | Uses standardized tools across the entire organization | Uses domain-specific technologies and tools, tailored to each team’s needs |

Centralization vs. Decentralization

Data Fabric:

- Emphasizes centralization and integration of all data sources into a unified platform

- Creates a single, coherent layer that abstracts complexity of underlying data systems

- Provides users with consistent interface regardless of where data physically resides

- Enables standardized access patterns and unified governance across entire data landscape

Data Mesh:

- Decentralizes data management with individual domain teams taking full responsibility

- Each domain operates as an independent data provider, managing everything from data quality to access controls

- Distributed model treats data as federated system rather than centralized resource

- Teams manage everything within their specific business context

Data Management

Data Fabric:

- Provides single point of access through virtualization and automation technologies

- Uses intelligent automation for data discovery, cataloging, and integration

- Maintains centralized metadata management across platforms

- Users interact with one unified interface presenting data from multiple sources

Data Mesh:

- Creates independent, self-contained data products accessible across domains

- Uses standardized APIs and interfaces for data sharing

- Each data product is discoverable, addressable, and interoperable

- Functions as standalone service with own lifecycle management and documentation

Scalability

Data Fabric:

- Provides scalability by simplifying data access through unified architecture

- Reduces complexity for end users through centralized management

- Can become bottleneck as data complexity increases

- Central fabric layer may struggle with growing volumes, variety, and velocity

Data Mesh:

- Naturally scales with organizational growth through decentralized responsibilities

- Additional teams can independently manage data products without impacting existing systems

- Particularly benefits larger companies with diverse domains

- Each team can scale data capabilities independently

Governance and Security

Data Fabric:

- Offers centralized governance and security frameworks

- Easier to implement consistent policies and control data access

- Maintains compliance across entire organization from single control point

- Ensures organizational consistency in security measures and data quality standards

Data Mesh:

- Relies on individual domain teams to govern their own data products

- Can create challenges for maintaining consistency in security and compliance

- Enables teams to implement governance measures tailored to specific needs

- Requires robust federated governance frameworks and shared standards
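
The contrast between central policy and domain-level governance can be sketched in a few lines of Python. The example below assumes a very simplified model in which a data product is a plain dictionary and a rule is a function that returns an error message or `None`; the rule names and fields (`contains_pii`, `refresh`, and so on) are hypothetical. Organization-wide rules apply to every product, and each domain may add its own.

```python
from typing import Callable, Dict, List, Optional

Product = Dict[str, object]
# A rule inspects a product description and returns an error message or None.
Rule = Callable[[Product], Optional[str]]


def require_owner(product: Product) -> Optional[str]:
    return None if product.get("owner") else "missing owner"


def require_pii_masking(product: Product) -> Optional[str]:
    if product.get("contains_pii") and not product.get("pii_masked"):
        return "PII must be masked"
    return None


def require_daily_refresh(product: Product) -> Optional[str]:
    # Example of a rule only one domain cares about.
    return None if product.get("refresh") == "daily" else "refresh must be daily"


# Shared standards set centrally, plus rules chosen by individual domains.
GLOBAL_RULES: List[Rule] = [require_owner, require_pii_masking]
DOMAIN_RULES: Dict[str, List[Rule]] = {"finance": [require_daily_refresh]}


def check(product: Product) -> List[str]:
    """Apply organization-wide rules first, then the owning domain's rules."""
    rules = GLOBAL_RULES + DOMAIN_RULES.get(str(product.get("domain")), [])
    return [msg for rule in rules if (msg := rule(product)) is not None]


finance_issues = check({
    "domain": "finance", "owner": "finance-data",
    "contains_pii": True, "pii_masked": False, "refresh": "weekly",
})
marketing_issues = check({
    "domain": "marketing", "owner": "marketing-data", "contains_pii": False,
})
```

In a purely centralized setup only `GLOBAL_RULES` would exist. The federated variant keeps that shared baseline and lets domains layer their own rules on top, which is where the consistency challenge described above comes from.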

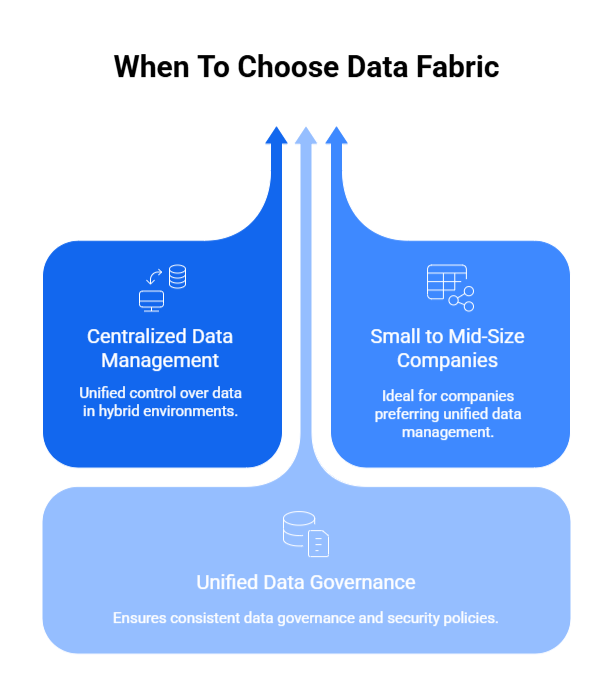

When to Choose Data Fabric?

1. Ideal for Centralized Data Management

Data Fabric is the optimal choice when organizations need centralized access to data distributed across hybrid environments. It suits companies seeking to eliminate data silos while maintaining unified control over data assets. It also enables seamless integration between cloud and on-premises systems through a single access layer and provides a consistent data experience regardless of underlying infrastructure complexity.

2. Small to Mid-Size Companies

Data Fabric is well suited to businesses without complex organizational structures or multiple autonomous domains, and to companies that prefer a unified approach to data management over distributed ownership models. It reduces overhead by eliminating the need for multiple specialized data teams across different business units.

It also simplifies data operations through centralized management, reducing administrative complexity. This makes it a cost-effective solution for organizations that lack the resources to maintain multiple independent data products.

3. Unified Data Governance

Data Fabric is essential when consistent data governance and security policies across multiple platforms are organizational priorities. It provides a single point of control for implementing enterprise-wide data quality standards and enables uniform compliance monitoring and reporting across all data sources.

Moreover, it facilitates consistent metadata management and data lineage tracking, and it supports standardized access controls and audit trails throughout the data ecosystem.

Examples

Regulated Industries

- Financial services require consistent security protocols and regulatory compliance across all data touchpoints

- Healthcare organizations need unified patient data access while maintaining HIPAA compliance

- Insurance companies require integrated risk assessment data from multiple sources

- Government agencies need secure, centralized access to sensitive information

Centralized Data Layer Requirements

- Retail organizations seek an integrated view of customer data across online and offline channels

- Manufacturing companies require unified operational data from multiple facilities and systems

- Technology companies need a centralized analytics platform for product development insights

- Educational institutions seek integrated student information systems across multiple campuses

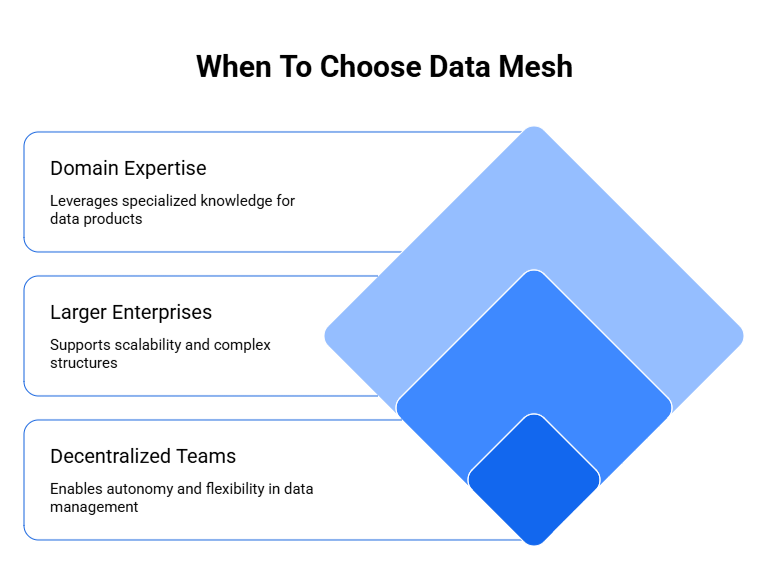

When to Choose Data Mesh?

1. Ideal for Decentralized Teams

Data Mesh excels in organizations with autonomous teams managing different data domains. It enables flexibility by allowing domain teams to make independent decisions about their data products.

Additionally, it facilitates faster decision-making by eliminating centralized bottlenecks and approval processes. It empowers teams to respond quickly to changing business requirements within their specific domains, and it supports agile development practices where teams can iterate on data products independently.

2. Larger Enterprises

Data Mesh is perfect for companies operating at scale with multiple data teams across various departments. It is more efficient for organizations where centralized data management creates operational bottlenecks. Moreover, it is ideal when different business units have distinct data requirements and use cases.

It supports complex organizational structures with diverse data needs and technical capabilities. New domains can be added without impacting existing data products, which enables horizontal scaling, reduces dependencies on central IT resources, and allows parallel development across teams.

3. Need for Domain Expertise

Data Mesh is essential when individual business units possess specialized knowledge about their data contexts. Marketing teams can better manage customer segmentation and campaign performance data, and finance departments can optimize their financial reporting and risk assessment data products.

Likewise, R&D units can control their research data and intellectual property more effectively. Teams become data product owners with end-to-end responsibility for data quality and relevance.

Examples

Large E-commerce Platforms

- Inventory management teams managing real-time stock data and supply chain information

- Customer service departments handling support tickets and customer interaction data

- Recommendation engine teams managing user behavior and preference data

- Payment processing units handling transaction and fraud detection data

- Each domain requires real-time updates and specialized expertise for optimal performance

Multinational Organizations

- Regional offices managing localized customer and market data according to local regulations

- Product divisions across different countries managing their specific product performance data

- Dispersed teams operating in different time zones requiring autonomous data management capabilities

- Global supply chain teams managing regional logistics and vendor data independently

- Organizations with diverse regulatory requirements across different geographical regions

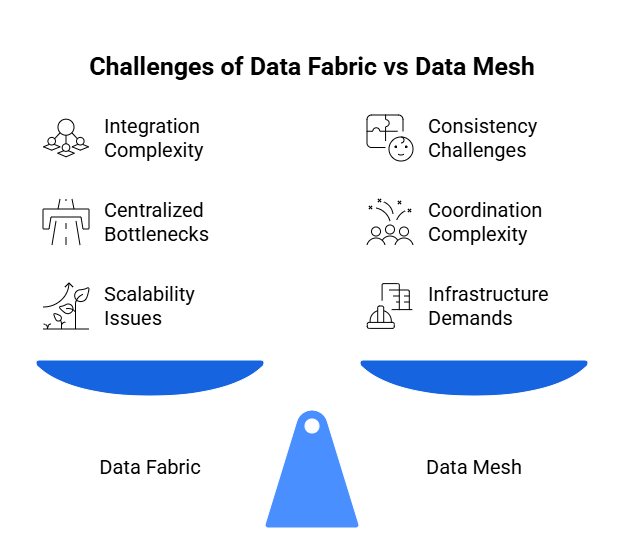

Challenges of Data Fabric vs Data Mesh

Data Fabric Challenges

- Complexity in Integration presents significant hurdles as organizations attempt to connect diverse data sources with varying formats, protocols, and access methods. This integration process demands substantial technical expertise and resources, often requiring custom connectors and extensive mapping efforts.

- Potential Bottlenecks emerge from centralized control mechanisms, particularly in larger organizations where multiple departments compete for data access. This centralization can create performance constraints and slow data flow, especially during peak usage periods or when processing large datasets.

- Scalability Issues become pronounced as organizations expand, making it increasingly difficult and expensive to maintain a unified data layer. The centralized architecture may struggle to accommodate growing data volumes and user demands while preserving performance standards.

Data Mesh Challenges

- Consistency becomes problematic when multiple domain teams independently manage their data products. Ensuring uniform governance policies, security standards, and data quality across decentralized teams requires constant coordination and monitoring.

- Complex Coordination is necessary to maintain interoperability between domain teams and their data products. This requires careful planning, standardized interfaces, and ongoing communication to prevent fragmentation and ensure seamless data sharing.

- Infrastructure Demands require significant upfront investment to establish the technological foundation supporting autonomous data products. Organizations must invest in platforms, tools, and training to enable each domain team to operate independently while maintaining overall system coherence.

Real-World Examples: Data Fabric vs Data Mesh

Data Fabric Example – Deloitte

- Deloitte implemented a Data Fabric architecture to unify its data sources and provide real-time insights across its global operations

- Use Case Benefits: Ensuring consistent compliance and enhanced analytics across multiple systems and platforms

- Key Outcomes: Improved client service delivery through unified access to comprehensive business data while maintaining strict security and governance standards.

- Operational Impact: Facilitated real-time decision-making by providing consultants seamless access to organizational knowledge and client information regardless of source system

Additional Data Fabric Applications

- Major financial institutions leverage data fabric to unify trading data, risk management systems, and customer information across global operations

- Healthcare organizations implement data fabric for integrated patient care systems with unified compliance frameworks

- Use Case Benefits: Ensures consistent regulatory compliance and real-time risk assessment across multiple platforms and geographical locations

Data Mesh Example – Zalando E-commerce Platform

- Zalando, a leading e-commerce platform, adopted Data Mesh to decentralize their data management and improve the quality and speed of decision-making

- Use Case Implementation: Enabling autonomous data management within each domain (e.g., marketing, inventory) to respond quickly to customer needs

- Key Benefits: Teams can develop and deploy data products independently, reducing bottlenecks and improving responsiveness to market changes

- Operational Impact: Enhanced data quality through domain expertise and faster innovation cycles in customer experience optimization

Additional Data Mesh Applications

- Netflix implemented data mesh architecture to manage diverse data domains across content creation, recommendation algorithms, and user engagement analytics

- Large technology companies use data mesh for managing product development data, user analytics, and operational metrics across autonomous teams

- Key Outcomes: Faster time-to-market for data products and enhanced scalability for organizations with diverse business requirements

Comparative Outcomes

Data Fabric Success Factors

- Unified compliance and governance frameworks across complex regulatory environments

- Consistent security policies and audit trails for sensitive data across multiple systems

- Simplified data access for organizations with centralized decision-making structures

Data Mesh Success Factors

- Improved data quality through domain expertise and specialized knowledge

- Faster time-to-market for data products and analytics capabilities

- Enhanced scalability for large organizations with diverse business requirements and autonomous operational teams

How to Choose Between Data Fabric and Data Mesh

1. Consider Your Organization’s Size and Complexity

- Smaller or Centralized Organizations: Data Fabric is ideal as it offers a simplified and unified approach to managing data. Centralized control makes it easier to integrate data from various sources, providing a single point of access.

- Larger or Distributed Organizations: Data Mesh is better suited for large-scale organizations with multiple distributed teams. It offers more flexibility and scalability, allowing different teams to manage their data autonomously and efficiently.

2. Evaluate Governance Needs

- Uniform Data Governance: If maintaining consistent governance across the entire organization is a priority, Data Fabric is the better choice. It ensures centralized control over data security, compliance, and access, making it easier to manage risks.

- Autonomy for Domain Teams: If your teams need more control over their data and can manage it independently, Data Mesh might be a better fit. It provides domain-specific autonomy, giving teams the flexibility to handle their own data products while still ensuring interoperability.
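
The two questions above can be turned into a rough checklist. The Python sketch below is a deliberately crude illustration, not a scoring model from any framework: `OrgProfile`, its three yes/no fields, and the one-vote-per-answer weighting are all assumptions made for the example. A tie is reported as "Hybrid", since the two approaches can be combined.

```python
from dataclasses import dataclass


@dataclass
class OrgProfile:
    # All fields are illustrative simplifications of the questions above.
    large_and_distributed: bool      # many autonomous teams or business units
    needs_uniform_governance: bool   # one set of policies must apply everywhere
    teams_can_own_data: bool         # domains have the skills to run data products


def suggest_architecture(profile: OrgProfile) -> str:
    """Return a starting point for discussion, not a verdict."""
    mesh_votes = 0
    fabric_votes = 0

    # Question 1: size and complexity.
    if profile.large_and_distributed:
        mesh_votes += 1
    else:
        fabric_votes += 1

    # Question 2: governance needs.
    if profile.needs_uniform_governance:
        fabric_votes += 1
    if profile.teams_can_own_data:
        mesh_votes += 1

    if mesh_votes > fabric_votes:
        return "Data Mesh"
    if fabric_votes > mesh_votes:
        return "Data Fabric"
    return "Hybrid"


small_regulated = suggest_architecture(OrgProfile(False, True, False))
large_autonomous = suggest_architecture(OrgProfile(True, False, True))
large_but_central = suggest_architecture(OrgProfile(True, True, False))
```

The value of writing the checklist down is less the answer it returns than the conversation it forces about which of the three inputs actually holds for your organization.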

Enhance Your Analytics with Kanerika’s Microsoft Fabric Expertise

Implementing Microsoft Fabric the right way can make a significant difference in how teams automate pipelines, reduce manual work, and keep data up to date across systems. At Kanerika, we specialize in helping organizations achieve just that.

As a certified Microsoft solutions partner with deep expertise in data and AI, Kanerika works closely with businesses to integrate Microsoft Fabric into real-world workflows. Whether it’s setting up multi-capacity environments or designing efficient, scalable models, we build practical solutions tailored to your unique goals.

With extensive hands-on experience across industries, we don’t just recommend best practices—we implement them quickly and effectively. Whether you’re modernizing reporting, consolidating data, or building long-term scale, we ensure your Microsoft Fabric environment is set up to deliver measurable results from day one.

Partner with Kanerika and take the next step toward faster insights, cleaner architecture, and smarter decision-making.

Frequently Asked Questions

What is the difference between a data mesh and a data fabric?

Data fabric is a technology-driven architecture that uses automation and metadata to integrate data across distributed environments, while data mesh is an organizational approach that decentralizes data ownership to domain teams. Data fabric prioritizes unified access through a centralized integration layer, whereas data mesh emphasizes treating data as a product with federated governance. The key distinction lies in execution: fabric relies on tools and AI-driven automation, whereas mesh depends on cultural and structural changes. Kanerika helps enterprises evaluate both architectures to determine the optimal fit for their data strategy.

Can data mesh and data fabric be used together?

Data mesh and data fabric can absolutely work together as complementary approaches. Organizations often implement data fabric’s automated integration layer to support data mesh’s decentralized domain ownership model. The fabric provides the technical infrastructure for metadata management and data discovery, while mesh establishes governance principles and team accountability. This hybrid approach delivers both technological efficiency and organizational scalability for complex enterprise environments. Kanerika’s data architects specialize in designing hybrid data architectures that combine the strengths of both paradigms—connect with us to explore your options.

What are the 4 pillars of data mesh?

The four pillars of data mesh are domain-oriented ownership, data as a product, self-serve data platform, and federated computational governance. Domain ownership assigns data responsibility to business units closest to the data source. Treating data as a product ensures quality, discoverability, and usability standards. Self-serve platforms enable teams to manage data independently without bottlenecks. Federated governance balances autonomy with enterprise-wide compliance standards. These principles distinguish data mesh from centralized approaches like data fabric. Kanerika guides organizations through implementing these pillars effectively—schedule a consultation to begin your data mesh journey.

Is data mesh obsolete?

Data mesh is not obsolete but has evolved beyond initial hype into practical, selective adoption. Organizations now recognize that pure data mesh implementation requires significant cultural transformation and mature engineering capabilities. Many enterprises adopt hybrid approaches, combining data mesh principles with data fabric technology for pragmatic results. The core concepts of domain ownership and data products remain valuable, particularly for large organizations with distributed teams. Data mesh continues to influence modern data architecture thinking. Kanerika helps enterprises assess whether data mesh principles align with their organizational readiness—reach out for an honest evaluation.

Is mesh better than fabric?

Neither data mesh nor data fabric is universally better; the right choice depends on your organization’s structure and needs. Data mesh suits enterprises with strong domain expertise, mature engineering teams, and willingness to decentralize data ownership. Data fabric excels when you need rapid integration across disparate sources with automated governance and limited organizational change. Companies prioritizing technology-led solutions lean toward fabric, while those embracing cultural transformation prefer mesh. Most successful implementations blend elements of both. Kanerika assesses your specific environment to recommend the architecture that delivers measurable outcomes—request a free assessment today.

Who needs a data mesh?

Data mesh is ideal for large, complex organizations with multiple business domains generating and consuming data independently. Companies experiencing bottlenecks from centralized data teams, struggling with data ownership ambiguity, or seeking to empower domain experts benefit most. Technology companies, financial institutions, and enterprises with distributed operations often find data mesh addresses their scaling challenges. However, successful implementation requires strong engineering culture and executive commitment to decentralization. Smaller organizations or those needing quick wins may prefer data fabric alternatives. Kanerika evaluates your organizational maturity to determine if data mesh fits your enterprise—let’s discuss your situation.

What problems does data mesh solve?

Data mesh solves critical challenges including centralized data team bottlenecks, unclear data ownership, poor data quality from distant producers, and slow time-to-insight for business domains. By distributing ownership to domain teams who understand the data best, mesh improves accountability and quality at source. It eliminates the monolithic data platform problem where central teams cannot scale with organizational growth. Data mesh also addresses the disconnect between data producers and consumers that plagues traditional architectures. Kanerika implements data mesh strategies that directly target your specific pain points—contact us to identify which problems we can solve together.

Why is it called data fabric?

Data fabric gets its name from the concept of weaving together disparate data sources into a unified, interconnected layer, much as threads form a cohesive fabric. The architecture creates a seamless integration layer that spans on-premises systems, cloud platforms, and hybrid environments. This woven approach enables consistent data access regardless of where data physically resides. The fabric metaphor emphasizes how metadata, integration tools, and governance policies interweave to create a cohesive data management layer. Kanerika designs data fabric implementations that truly unify your enterprise data landscape. Reach out to explore how fabric architecture transforms data accessibility.

What is data fabric used for?

Data fabric is used to create unified data access across heterogeneous environments, automate data integration, and enforce consistent governance enterprise-wide. Organizations deploy data fabric to break down data silos, enable real-time data discovery, and reduce manual integration efforts through AI-driven automation. It serves analytics acceleration by providing a single semantic layer over distributed data sources. Data fabric also supports compliance by applying consistent security policies across all data assets. Enterprises managing multi-cloud or hybrid infrastructures particularly benefit from fabric’s unified approach. Kanerika builds data fabric solutions that deliver immediate integration value—talk to our architects about your use case.

Is Microsoft Fabric a data mesh?

Microsoft Fabric is not a data mesh but rather a unified analytics platform that aligns more closely with data fabric principles. Fabric provides integrated services for data engineering, data science, real-time analytics, and business intelligence within a single SaaS environment. While it supports some decentralized capabilities through workspaces and domains, it fundamentally operates as a centralized, technology-driven platform—characteristic of data fabric architecture. Organizations can implement data mesh governance principles on top of Microsoft Fabric, but the platform itself is a data fabric implementation. Kanerika is a Microsoft partner specializing in Fabric deployments—connect with us for expert implementation guidance.

How do data fabric and data mesh handle data governance?

Data fabric implements centralized, automated governance using metadata management, AI-driven policy enforcement, and unified access controls across all data sources. Data mesh takes a federated governance approach where domain teams own governance within their boundaries while adhering to enterprise-wide standards set by a central governance board. Fabric excels at consistent, technology-enforced compliance, while mesh distributes accountability to those closest to the data. Many organizations combine both: fabric’s automation with mesh’s federated ownership model. Kanerika designs governance frameworks that balance control with agility—schedule a consultation to optimize your data governance strategy.

Between data fabric and data mesh, which is easier to implement?

Data fabric is generally easier to implement because it relies primarily on technology deployment rather than organizational transformation. You can adopt data fabric tools and platforms incrementally without restructuring teams or changing ownership models. Data mesh requires significant cultural change, cross-functional alignment, and mature platform engineering capabilities—making implementation more complex and time-intensive. However, data fabric’s ease of deployment does not guarantee success if organizational issues remain unaddressed. The right choice depends on your starting point and transformation appetite. Kanerika accelerates implementation of both architectures with proven methodologies—contact us for a realistic implementation roadmap.

What are the cost implications for both data fabric and data mesh?

Data fabric costs concentrate on technology investments: integration platforms, metadata tools, and AI-powered automation software, with potentially faster time-to-value. Data mesh costs spread across organizational change management, platform engineering teams, domain team training, and longer implementation timelines. Fabric may have higher upfront licensing costs but lower personnel overhead. Mesh requires sustained investment in people and processes but can reduce long-term tool sprawl. Hidden costs in mesh include coordination overhead and potential productivity dips during transition. Kanerika provides transparent cost analyses for both approaches—use our ROI calculator or speak with our team for detailed projections.

Is data mesh suitable for real-time analytics?

Data mesh can support real-time analytics when domain teams implement appropriate streaming infrastructure and treat real-time data products with the same rigor as batch data. The decentralized model allows domains to choose optimal technologies for their latency requirements. However, cross-domain real-time analytics becomes challenging without strong interoperability standards. Data fabric often handles real-time scenarios more seamlessly through its unified integration layer. For enterprise-wide real-time analytics, combining mesh governance principles with fabric’s technical integration typically delivers better results. Kanerika architects real-time data solutions across both paradigms—discuss your analytics latency requirements with our specialists.

What is the difference between data mesh, data fabric, and data vault?

Data mesh is an organizational paradigm decentralizing data ownership to domains. Data fabric is a technology architecture automating integration across distributed sources. Data vault is a data modeling methodology for building scalable, auditable data warehouses using hubs, links, and satellites. These serve different purposes: mesh addresses organizational structure, fabric solves integration challenges, and vault provides modeling patterns for historical data storage. Organizations often use vault modeling within mesh domains or fabric-integrated warehouses. They are complementary rather than competing approaches. Kanerika implements solutions spanning all three approaches—reach out to design an architecture leveraging the right combination.
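For readers unfamiliar with data vault modeling, the hub, link, and satellite structures can be sketched in a few lines of Python. This is an illustrative model only: the class and field names are assumptions, and the use of SHA-256 over normalized business keys is one possible hashing choice rather than a requirement of the methodology.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


def hash_key(*business_keys: str) -> str:
    """Derive a deterministic key from one or more normalized business keys."""
    normalized = "|".join(k.strip().upper() for k in business_keys)
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class Hub:
    """One row per unique business entity (for example, a customer)."""
    hub_key: str
    business_key: str
    record_source: str
    load_ts: datetime


@dataclass(frozen=True)
class Link:
    """A relationship between two or more hubs."""
    link_key: str
    hub_keys: tuple
    record_source: str
    load_ts: datetime


@dataclass(frozen=True)
class Satellite:
    """Descriptive attributes for a hub or link, versioned by load time."""
    parent_key: str
    attributes: tuple  # (name, value) pairs
    record_source: str
    load_ts: datetime


now = datetime.now(timezone.utc)

customer = Hub(hash_key("C-001"), "C-001", "crm", now)
order = Hub(hash_key("O-9001"), "O-9001", "erp", now)

# The link records that this customer placed this order.
placed = Link(hash_key("C-001", "O-9001"), (customer.hub_key, order.hub_key), "erp", now)

# A changed attribute becomes a new satellite row, never an update in place,
# which is what keeps the full history auditable.
profile_v1 = Satellite(customer.hub_key, (("tier", "silver"),), "crm", now)
profile_v2 = Satellite(customer.hub_key, (("tier", "gold"),), "crm", datetime.now(timezone.utc))
```

Because hubs hold only keys and satellites hold only attributes, new sources or attributes can be added as new tables without restructuring what is already loaded.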

What is the difference between data mesh, data lake, and data warehouse?

Data mesh is an architectural paradigm defining how organizations manage data ownership and governance. Data lakes and data warehouses are storage technologies that can exist within mesh or fabric implementations. Data lakes store raw data in its native format, whether structured, semi-structured, or unstructured, for flexible analytics exploration. Data warehouses store structured, processed data optimized for business intelligence queries. Under data mesh, domain teams might own their data lakes or warehouses as data products. Data fabric can integrate across both storage types. These concepts operate at different architectural levels. Kanerika helps enterprises determine the right storage and governance combination. Contact us for architecture guidance.

What is the difference between data fabric and data lakehouse?

Data fabric is an integration architecture that unifies access across multiple data sources, while data lakehouse is a storage platform combining data lake flexibility with data warehouse performance. Data fabric operates as a virtual layer connecting disparate systems including lakehouses, warehouses, and operational databases. A lakehouse is a specific destination where data resides, featuring ACID transactions and schema enforcement on top of object storage. Organizations often deploy data fabric to integrate their lakehouse with other enterprise data assets. Kanerika implements both Databricks lakehouse solutions and data fabric integrations—talk to us about building your modern data platform.
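The "virtual layer" idea can be illustrated with a small Python sketch: a catalog that exposes datasets under logical names while the data stays in its source system. The `FabricCatalog` class, the dataset names, and the two stand-in reader functions are all hypothetical; in practice the readers would wrap real connectors to a lakehouse table or an operational database.

```python
from typing import Callable, Dict, Iterable, List

Row = Dict[str, object]


class FabricCatalog:
    """Maps logical dataset names to readers; data stays in its source system."""

    def __init__(self) -> None:
        self._readers: Dict[str, Callable[[], Iterable[Row]]] = {}

    def register(self, dataset: str, reader: Callable[[], Iterable[Row]]) -> None:
        self._readers[dataset] = reader

    def read(self, dataset: str) -> List[Row]:
        if dataset not in self._readers:
            raise KeyError(f"unknown dataset: {dataset}")
        return list(self._readers[dataset]())


def lakehouse_orders() -> List[Row]:
    # Stand-in for a query against a lakehouse table.
    return [
        {"order_id": 1, "customer_id": "C-001", "total": 120.0},
        {"order_id": 2, "customer_id": "C-002", "total": 75.5},
    ]


def crm_customers() -> List[Row]:
    # Stand-in for a query against an operational database.
    return [
        {"customer_id": "C-001", "name": "Acme"},
        {"customer_id": "C-002", "name": "Globex"},
    ]


catalog = FabricCatalog()
catalog.register("sales.orders", lakehouse_orders)
catalog.register("crm.customers", crm_customers)

# Consumers join across systems through one interface, without knowing
# where each dataset physically lives.
names = {c["customer_id"]: c["name"] for c in catalog.read("crm.customers")}
enriched = [
    {**o, "customer_name": names[o["customer_id"]]}
    for o in catalog.read("sales.orders")
]
```

The lakehouse here is just one registered source among several, which mirrors the distinction above: the lakehouse is where data resides, and the fabric is the layer that connects it to everything else.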

Between data fabric and data mesh, which is better for large, complex organizations?

Large, complex organizations often benefit from combining data fabric and data mesh rather than choosing one exclusively. Data mesh addresses organizational complexity by distributing ownership to domains that understand their data best. Data fabric handles technical complexity by automating integration across diverse systems and geographies. Enterprises with mature engineering cultures and strong domain expertise tend to lean toward mesh. Those prioritizing rapid integration with existing centralized governance tend to prefer fabric. A common pattern in large organizations is to implement fabric’s technology layer while adopting mesh’s ownership principles. Kanerika specializes in enterprise-scale data architecture for complex organizations. Schedule an assessment to identify your optimal approach.