TLDR

Microsoft Fabric Eventhouse is the real-time analytics engine inside Fabric, built for streaming, time-series, and event-based workloads. It supports a full medallion architecture (bronze, silver, gold) using update policies and materialized views, with no external orchestration required. Cost control comes from per-table retention and cache policies, not capacity changes. Eventhouse also connects directly to AI workflows as a real-time context source for LLM prompts, Copilot queries, and agentic pipelines.


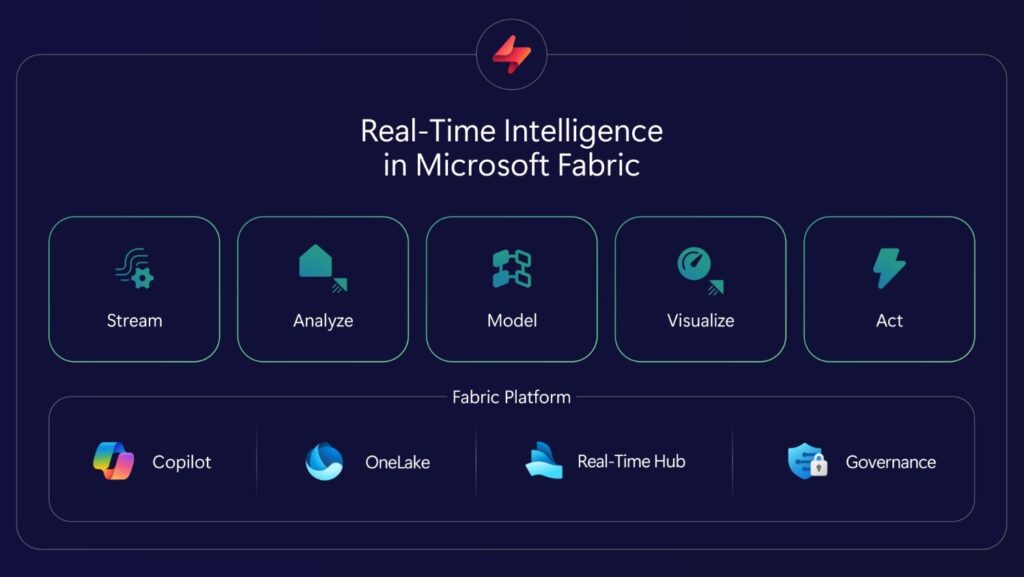

Why Eventhouse Is Getting More Attention in 2026

Microsoft Fabric has crossed 31,000 customers and is the fastest-growing data platform in Microsoft’s history. At FabCon 2026 in Atlanta, Microsoft’s principal PMs dedicated an entire session to Eventhouse patterns for real-time intelligence at scale.

That focus makes sense. As more enterprises move their analytics stack into Fabric, the gap between batch processing and real-time decision-making becomes harder to ignore. Eventhouse fills that gap for teams dealing with IoT telemetry, application logs, security events, or financial ticks.

In this article, we’ll cover how Eventhouse fits into Fabric’s architecture, the medallion pattern for real-time data, materialized views, cost optimization, and how Eventhouse connects to AI workflows.

Key Takeaways

- Eventhouse is Microsoft Fabric’s real-time analytics engine, built for streaming, time-series, and event-based data that needs sub-second query response

- A medallion architecture (bronze, silver, gold) can run entirely inside Eventhouse using update policies and materialized views, with no external orchestration

- Granular retention and cache policies per table are the primary cost control levers, not capacity SKU changes

- Eventhouse now works as an endpoint for Lakehouse and Data Warehouse, enabling KQL queries on data that was never ingested into Eventhouse directly

- AI agents and Copilot can pull live context from Eventhouse via KQL, making it a real-time memory layer for grounded AI workflows

What Is Microsoft Fabric Eventhouse?

Eventhouse is an item in a Microsoft Fabric workspace that hosts one or more KQL (Kusto Query Language) databases. Think of it as a container that manages compute, storage, and monitoring for all the KQL databases under it.

Each KQL database holds tables optimized for append-only, time-based data. Fabric automatically indexes every column and partitions data by ingestion time. This means queries against recent data are fast by default, without manual index tuning.

1. Eventhouse vs. Lakehouse vs. Data Warehouse in Fabric

Fabric has three main data storage options, and each one is built for a different access pattern. Eventhouse handles data in motion. Lakehouse handles large-scale analytical data at rest. Data Warehouse handles structured BI workloads with T-SQL.

| Capability | Eventhouse | Lakehouse | Data Warehouse |
| --- | --- | --- | --- |
| Primary data pattern | Streaming, time-series, events | Large-scale analytical data at rest | Structured BI and reporting |
| Query language | KQL (primary), limited T-SQL | Spark SQL, T-SQL via SQL endpoint | T-SQL |
| Ingestion model | Streaming and micro-batch | Batch, Spark, Dataflows | Batch, pipelines, COPY INTO |
| Schema flexibility | Dynamic columns for semi-structured JSON | Schema-on-read with Delta | Fixed schema |
| Ideal latency | Sub-second to seconds | Minutes to hours | Seconds to minutes |
| Best for | Telemetry, IoT, logs, security events, financial ticks | Data engineering, ML training data, historical analytics | Enterprise BI, dimensional modeling |

The decision comes down to how your data arrives and how quickly you need answers. If data streams continuously and decisions depend on the last few minutes or hours, Eventhouse is the right fit.

2. What Data Types Does Eventhouse Handle?

A single IoT device might send battery levels as integers, temperature as floats, and metadata as nested JSON objects. Eventhouse accepts all of these, including free-text log entries and error messages, without requiring a rigid schema upfront. This matters because real-world streaming data is rarely clean or uniform.

How Eventhouse Handles Data Ingestion

Choosing the right ingestion pattern is one of the first decisions you make when setting up an Eventhouse. There are two paths, and each one has different tradeoffs around latency, cost, and reliability.

1. Streaming Ingestion for Low-Latency Workloads

This is the default for Fabric’s Real-Time Intelligence workload. Data arrives and becomes queryable in under 10 seconds. It works through Azure Event Hubs, Eventstream, SDKs, or direct API calls.

Streaming ingestion is the right choice when freshness matters more than cost efficiency. Real-time dashboards, anomaly detection, and operational monitoring all benefit from sub-10-second latency. But it can experience backpressure at extreme volumes, and you need to handle schema drift and deduplication downstream.

2. Batch Ingestion for High-Volume Workloads

This is the default for Azure Data Explorer (the engine behind Eventhouse). The Kusto ingestion service batches incoming data and handles partitioning, compression, and indexing automatically.

Batch ingestion works well for high-volume workloads where throughput and cost efficiency matter more than sub-10-second freshness. It supports Parquet, CSV, JSON, XML, and other file formats from Blob storage or ADLS via managed identity. Schema evolution is easier here because mappings and update policies can transform data during ingestion.

| Factor | Streaming Ingestion | Batch Ingestion |
| --- | --- | --- |
| Latency | Under 10 seconds | Seconds to minutes |
| Cost efficiency | Lower (more compute per event) | Higher (batched, optimized) |
| Backpressure risk | Yes, at extreme volumes | Minimal |
| Schema handling | Must handle drift downstream | Mappings and update policies available |
| Best use case | Real-time dashboards, alerts, monitoring | High-volume historical loads, cost-sensitive pipelines |

Many production deployments use both patterns. Streaming handles the live operational feed, while batch loads bring in historical backfill or reference data.

Medallion Architecture Inside Eventhouse

The medallion pattern (bronze, silver, gold layers) is a well-known approach in data engineering. What makes Eventhouse interesting is that all three layers can run inside a single Eventhouse, using update policies and materialized views instead of external orchestration tools.

This keeps the entire real-time pipeline self-contained. No Spark jobs, no external schedulers, no separate compute clusters.

1. Bronze Layer

The bronze layer is where raw data lands first. The goal here is simple. Capture everything, lose nothing.

Data arrives in its original format, often as JSON payloads with a timestamp, device ID, and a dynamic column holding the telemetry payload.

The questions to answer at this layer are practical ones. How long do you keep raw data? Do you need transformations here, or is this purely a staging area? Should you flatten nested JSON now, or defer that to silver?

For most real-time workloads, the bronze table should have a short retention policy (sometimes zero days if the silver layer captures everything needed). This saves storage cost and keeps the Eventhouse lean.

2. Silver Layer

The silver layer is where data gets cleaned, enriched, and deduplicated. This is typically the main analytical layer for most use cases.

Update policies in Eventhouse trigger automatically when new data lands in the bronze table. They run a function that extracts fields from dynamic columns, performs lookups against dimension tables (using materialized views), normalizes text casing, and adds computed columns based on business logic.

The result is a typed, structured table with explicit columns for every field that analysts and dashboards need. Deduplication happens here through materialized views using the take_any() aggregation function.
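To make the silver step concrete, here is a minimal Python sketch that mimics the logic outside Eventhouse: it pulls typed fields out of a raw payload, normalizes casing, and keeps one row per key, much as a take_any() dedup view would. The field names (device_id, ts, payload, temp_c, battery) and the to_silver and dedup helpers are hypothetical; in Eventhouse this logic would live in a KQL function attached to an update policy, plus a materialized view.

```python
import json

def to_silver(bronze_row):
    """Turn one raw bronze record into a typed silver row."""
    payload = json.loads(bronze_row["payload"])  # stands in for the dynamic column
    return {
        "device_id": bronze_row["device_id"].strip().upper(),  # normalize casing
        "ts": bronze_row["ts"],
        "temp_c": float(payload["temp_c"]),  # explicit types instead of raw JSON
        "battery": int(payload["battery"]),
    }

def dedup(rows, key=("device_id", "ts")):
    """Keep one arbitrary row per key, similar in spirit to take_any(*) by key."""
    seen = {}
    for row in rows:
        seen.setdefault(tuple(row[k] for k in key), row)
    return list(seen.values())

# Hypothetical bronze rows; the first two are the same event delivered twice.
bronze = [
    {"device_id": "dev-1", "ts": "2026-01-01T00:00:00Z",
     "payload": '{"temp_c": "21.5", "battery": 90}'},
    {"device_id": "DEV-1", "ts": "2026-01-01T00:00:00Z",
     "payload": '{"temp_c": "21.5", "battery": 90}'},
    {"device_id": "dev-2", "ts": "2026-01-01T00:00:05Z",
     "payload": '{"temp_c": 19, "battery": 75}'},
]
silver = dedup([to_silver(r) for r in bronze])
```

Note that normalizing the device ID before deduplication is what lets the two copies of the first event collapse into one row.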

3. Gold Layer

The gold layer holds pre-aggregated data for dashboards, trend analysis, and long-term retention. Materialized views in Eventhouse handle this automatically.

A common pattern is an hourly aggregate materialized view that sits on top of the silver dedup view. It computes averages, counts, or sums grouped by device, model, and time bucket. This pre-aggregation makes dashboard queries fast because they scan a fraction of the data.

For daily or weekly aggregates, you do not need separate materialized views. Build the hourly aggregate, and convert to daily granularity at query time. This avoids the overhead of maintaining too many materialized views.
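One detail worth checking when rolling hourly data up at query time is that averages do not re-aggregate cleanly from other averages. The Python sketch below assumes the hourly aggregate keeps a sum and a count per bucket (a hypothetical layout, not something the platform requires), so the daily average can be rebuilt exactly.

```python
from collections import defaultdict

# Hypothetical hourly gold rows: (device_id, hour as "YYYY-MM-DDTHH", sum, count)
hourly = [
    ("dev-1", "2026-01-01T00", 100.0, 4),
    ("dev-1", "2026-01-01T01", 30.0, 1),
    ("dev-1", "2026-01-02T00", 50.0, 2),
]

def daily_average(hourly_rows):
    """Roll hourly sums and counts up to a per-device daily average."""
    totals = defaultdict(lambda: [0.0, 0])
    for device_id, hour, total, count in hourly_rows:
        day = hour[:10]  # keep only "YYYY-MM-DD"
        totals[(device_id, day)][0] += total
        totals[(device_id, day)][1] += count
    return {key: s / c for key, (s, c) in totals.items()}

daily = daily_average(hourly)
```

For 2026-01-01 this gives 130 / 5 = 26.0, whereas averaging the two hourly averages (25.0 and 30.0) would give 27.5, because the hours hold different numbers of readings.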

| Aspect | Bronze | Silver | Gold |
| --- | --- | --- | --- |
| Purpose | Raw capture, staging | Cleaned, enriched, deduplicated | Pre-aggregated for dashboards |
| Typical retention | 0 to 1 day | 1 to 7 days | 30 to 365 days |
| Transformation method | None (raw ingest) | Update policies with lookups | Materialized views with bin() |
| Schema | Dynamic columns, minimal typing | Typed columns, explicit fields | Aggregated metrics by time bucket |
| Primary consumers | Pipeline debugging, replay | Analysts, data scientists | Dashboards, BI, trend reports |

Each layer serves a different audience and retention need. Designing these upfront prevents the common problem of storing everything at full granularity indefinitely, which drives up both storage and cache costs.

How Materialized Views Speed Up Eventhouse Queries

Materialized views are one of Eventhouse’s most useful features, but they also have constraints that are easy to overlook. Understanding the patterns and anti-patterns saves debugging time later.

1. Patterns That Work

Deduplication using take_any() is the most common pattern. It picks one record per unique key combination, removing duplicates that are common in streaming pipelines.

Aggregation and downsampling using bin() groups data into time buckets (hourly, daily) and computes summary statistics. This is the standard gold layer pattern.

Latest value using arg_max(timestamp, *) returns the most recent record for each entity. This is useful for “current state” dashboards that show the last known reading per device or sensor.

Update records using arg_max(ingestion_time(), *) returns the record that was ingested most recently for a given key. This handles late-arriving corrections.

| Pattern | Function | Use Case | Can Stack Aggregate On Top? |
| --- | --- | --- | --- |
| Deduplication | take_any() | Remove duplicate events from streaming | Yes |
| Aggregation | summarize with bin() | Hourly or daily rollups for dashboards | N/A (this is the aggregate) |
| Latest value | arg_max(timestamp, *) | Current state per device or entity | Yes |
| Update records | arg_max(ingestion_time(), *) | Late-arriving corrections | No |

Choosing the right pattern depends on whether you need deduplication, summarization, or state tracking. The stacking constraint on update records views is the most common gotcha in production setups.
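The difference between the latest value and update records patterns is easiest to see on a tiny dataset. The Python sketch below imitates arg_max semantics with a hypothetical arg_max_by helper and made-up events; it illustrates the idea and is not how Eventhouse implements it.

```python
# Hypothetical events: event timestamp (ts) and ingestion time are plain integers here.
events = [
    {"device_id": "dev-1", "ts": 10, "ingested": 11, "reading": 20.0},
    {"device_id": "dev-1", "ts": 12, "ingested": 13, "reading": 22.0},
    # Late-arriving correction for ts=10, ingested after everything else.
    {"device_id": "dev-1", "ts": 10, "ingested": 15, "reading": 20.5},
]

def arg_max_by(rows, key_fields, order_field):
    """Return, per key, the whole row with the largest order_field value."""
    best = {}
    for row in rows:
        key = tuple(row[f] for f in key_fields)
        if key not in best or row[order_field] > best[key][order_field]:
            best[key] = row
    return best

# Latest value: one row per device, chosen by event timestamp.
latest_state = arg_max_by(events, ["device_id"], "ts")

# Update records: one row per (device, ts), chosen by ingestion time,
# so the correction replaces the original reading for ts=10.
corrected = arg_max_by(events, ["device_id", "ts"], "ingested")
```

The first call answers "what is the device doing now", while the second answers "what is the most trusted version of each event".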

2. Constraints to Know

Aggregate materialized views can run on top of a base table or on top of a dedup materialized view. Both work fine. But you cannot stack an aggregate materialized view on top of an update records (arg_max on ingestion_time()) view. This is a hard platform constraint.

When a dashboard can tolerate a short lag, query through materialized_view('ViewName') instead of the view name. The function reads only the part of the view that has already been materialized, while querying the view by name also folds in records that have not been materialized yet, which costs more at query time.

And the biggest anti-pattern is creating a separate materialized view for every possible aggregation. If you have an hourly aggregate, you do not need a daily aggregate view too. Aggregate the hourly data at query time instead. Over-creating views wastes compute and increases materialization lag.

Eventhouse Cost Optimization Strategies

Eventhouse costs are driven by two things: the amount of data sitting in hot cache (premium storage) and how long your Eventhouse stays active (compute uptime). Controlling both requires per-table configuration, not global settings.

1. Retention Policy Per Table

The retention policy controls how long compressed data stays in OneLake storage. Setting different retention periods per table is the single biggest cost lever.

A practical tiered approach works like this: zero days on the raw bronze table (data flows through to silver via update policy, then gets dropped), one day on the silver table, 30 days on the dedup materialized view, and 365 days on the hourly aggregate materialized view.

This way, you keep granular data for short-term debugging and aggregated data for long-term trending, without paying to store raw events indefinitely.

2. Cache Policy Per Table

The cache policy determines how much data stays in premium (hot) storage for fast queries. Each Eventhouse SKU includes a fixed amount of hot cache. If your cached data exceeds that amount, the Eventhouse auto-scales to the next size up, which increases cost.

Setting cache policies per table prevents this. The silver table might need one day of hot cache. The dedup view might need seven days. The hourly aggregate might need 90 days. Everything else drops to standard OneLake storage, which is significantly cheaper.

| Layer | Retention | Hot Cache | Rationale |
| --- | --- | --- | --- |
| Raw bronze table | 0 days | 0 days | Data passes through to silver, then drops |
| Silver table | 1 day | 1 day | Short-term debugging and troubleshooting |
| Dedup materialized view | 30 days | 7 days | Operational queries on clean data |
| Hourly aggregate MV | 365 days | 90 days | Long-term trending and dashboards |

This tiered setup keeps costs predictable. Raw data does not accumulate. Granular data stays available for a short window. And aggregated data, which is orders of magnitude smaller, carries the long-term retention burden.
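One way to keep the tiers reviewable is to hold them as data and generate the policy commands from it. The Python sketch below does that with hypothetical table and view names. The command strings follow the Kusto management-command shapes for retention (.alter-merge ... policy retention softdelete) and caching (.alter ... policy caching hot) as we understand them, so treat the exact syntax as an assumption to check against the current documentation before running anything.

```python
# Hypothetical entity names; the periods mirror the tier table above.
TIERS = [
    # (entity kind, name, retention days, hot cache days)
    ("table", "BronzeRaw", 0, 0),
    ("table", "SilverEvents", 1, 1),
    ("materialized-view", "SilverDedup", 30, 7),
    ("materialized-view", "GoldHourly", 365, 90),
]

def policy_commands(kind, name, retention_days, cache_days):
    """Build the retention and caching commands for one entity.

    The command text is an assumption based on Kusto management-command
    syntax; verify it before use.
    """
    return [
        f".alter-merge {kind} {name} policy retention softdelete = {retention_days}d",
        f".alter {kind} {name} policy caching hot = {cache_days}d",
    ]

commands = [cmd for tier in TIERS for cmd in policy_commands(*tier)]
for cmd in commands:
    print(cmd)
```

Keeping the tiers in one list makes it easy to review retention and cache windows side by side, and to spot a table whose hot cache window is longer than its retention.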

3. Capacity Planner and Always-On

Eventhouse suspends automatically when idle to save cost. Reactivation takes a few seconds. For time-sensitive systems that cannot tolerate this latency, the Always-On (Capacity Planner) mode keeps the service running continuously.

With Always-On enabled, you get 100% uptime and cache storage is included in capacity charges. You can also set a minimum capacity floor to prevent auto-scale from dropping below a certain size during unpredictable query loads.

Hybrid Queries That Combine Real-Time and Historical Data

One of Eventhouse’s more practical capabilities is querying across real-time and historical data in a single request. This eliminates the need to move data between systems for time-spanning analysis.

1. How the Storage Tiers Work

Eventhouse organizes data into three tiers. The hot layer holds recent data in premium cache (hours or days, depending on your cache policy). The warm layer holds older data in standard Eventhouse storage, outside the hot cache (weeks or months). The cold layer consists of OneLake shortcuts pointing to Delta or Parquet tables produced by Spark, Data Factory, or other Fabric workloads.

All three tiers are queryable from a single Eventhouse using KQL. No data movement is required. The KQL union operator combines results from a live table and an archive shortcut in one query.

2. When This Pattern Matters

Anomaly detection against a historical baseline is a common use case. You compare the last hour of sensor readings against 90 days of history to spot deviations. Regulatory dashboards that combine live operational data with audit trails also benefit from this pattern.

Predictive ML pipelines use it too. Training features come from historical data, while inference inputs come from the real-time feed. Both are accessible through one query surface.

The OneLake shortcut is what makes this work without ETL. External Delta or Parquet tables become queryable instantly through the shortcut, with no data duplication or separate ingestion pipeline.
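As a rough illustration of the baseline comparison, the Python sketch below flags a recent window whose mean sits far from the historical mean. The z-score rule, the threshold of 3.0, and the sample readings are all assumptions chosen for the example; in Eventhouse the two inputs would come from a single KQL query over the live table and the archive shortcut.

```python
from statistics import mean, stdev

def is_anomalous(recent, history, threshold=3.0):
    """Flag the recent window if its mean is more than `threshold`
    sample standard deviations away from the historical mean."""
    baseline, spread = mean(history), stdev(history)
    if spread == 0:
        # Flat history: any deviation from the baseline counts as anomalous.
        return mean(recent) != baseline
    return abs(mean(recent) - baseline) / spread > threshold

# Hypothetical readings: `history` stands in for 90 days of archive data,
# the other two lists for the last hour from the live table.
history = [20.0, 21.0, 19.5, 20.5, 20.0, 19.0, 21.5, 20.5]
normal_hour = [20.2, 20.8, 19.9]
hot_hour = [27.0, 28.5, 29.0]

print(is_anomalous(normal_hour, history))
print(is_anomalous(hot_hour, history))
```

A production detector would usually account for seasonality and sensor noise, but the shape is the same: a short live window compared against a long historical one.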

How Eventhouse Handles JSON and Schema Evolution

Real-world streaming data rarely arrives in a fixed schema. Devices get firmware updates. APIs add fields. New event types appear without warning. Eventhouse handles this through dynamic columns, which accept any JSON structure without breaking ingestion.

1. Dynamic Columns for Flexibility

When the payload is mapped to a dynamic-typed column, a JSON field that Eventhouse has not seen before simply lands inside that column. No schema change is needed. No pipeline restarts. The data is there, queryable immediately using dot notation or functions like todynamic() and bag_unpack().

2. Evolving the Schema Over Time

The recommended pattern is to start with a wide table that has a few typed columns (timestamp, device ID) and a dynamic column for everything else. As the data matures and you identify which fields are queried frequently, you promote those fields to typed columns through update policies.

This gives you the best of both worlds. New fields arrive without any disruption. High-frequency fields get the indexing and compression benefits of typed columns. The schema evolves gradually, driven by actual usage patterns rather than upfront guesswork.
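The promotion step can be pictured with a small Python sketch. The promote helper, the field names, and the choice of temp_c as the frequently queried field are hypothetical; in Eventhouse the equivalent logic would sit in the update policy function that writes the typed table.

```python
def promote(row, typed_fields):
    """Move selected payload fields into typed columns; keep the rest dynamic."""
    payload = dict(row["payload"])  # copy so the source row is left untouched
    promoted = {name: payload.pop(name, None) for name in typed_fields}
    return {"ts": row["ts"], "device_id": row["device_id"], **promoted, "payload": payload}

# Hypothetical rows; the second one carries a new "fw" field after a firmware update.
rows = [
    {"ts": 1, "device_id": "dev-1", "payload": {"temp_c": 21.5, "battery": 90}},
    {"ts": 2, "device_id": "dev-1", "payload": {"temp_c": 21.7, "fw": "2.1"}},
]

typed_fields = ["temp_c"]  # assumed to be the field analysts query most
promoted_rows = [promote(r, typed_fields) for r in rows]
```

The new fw field still arrives without any change to the function: it stays in the dynamic payload until it earns a typed column of its own.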

AI Integration with Eventhouse

Eventhouse’s role in AI workflows is becoming more defined with each Fabric release. Three patterns are emerging in production deployments, each using Eventhouse as a live context source rather than a static data store.

Real-Time RAG (Retrieval-Augmented Generation) stores structured context like product details, device states, and customer records as queryable Eventhouse tables. At inference time, a KQL query retrieves relevant rows and feeds them into the LLM prompt alongside the user’s question. This solves the stale data problem in traditional RAG setups because the context always reflects the current state of the business.

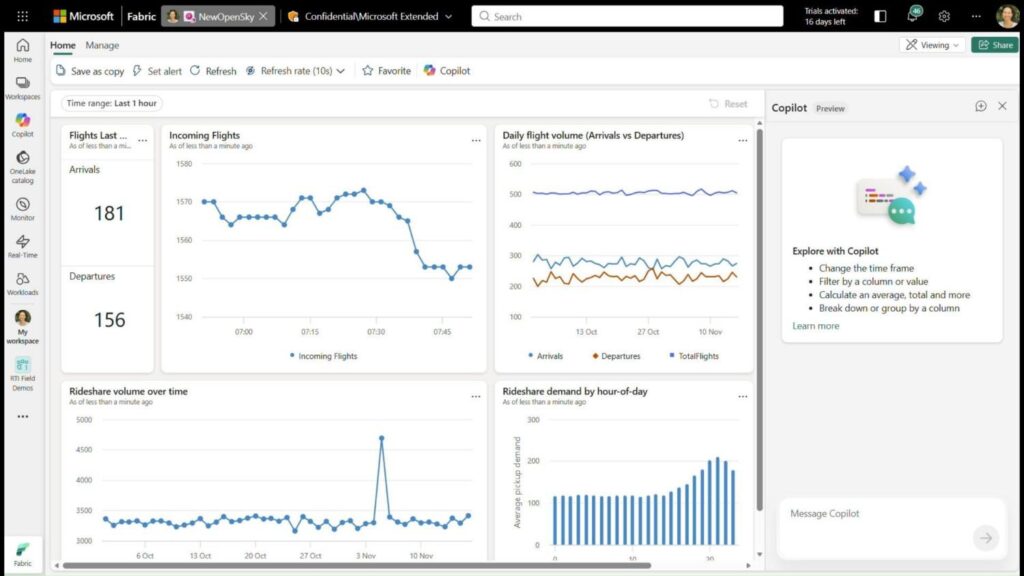

Copilot in Real-Time Dashboards can generate KQL queries from natural language questions and narrate results. Business users ask questions about live data without writing KQL. Copilot translates the question, runs the query against the Eventhouse, and returns a visual or text explanation. This is currently in public preview.

Agentic workflows let AI agents call Eventhouse via the KQL MCP (Model Context Protocol) server or REST API. The agent retrieves the latest device state, compares it to historical norms, and decides what action to take. Eventhouse acts as the agent’s persistent, queryable, time-aware memory layer. The Remote MCP for Eventhouse is in public preview.

For structured real-time data, these patterns can remove the need for a separate vector database, because the context is retrieved with a query rather than a similarity search. The data is already indexed, queryable, and fresh.
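The real-time RAG pattern comes down to turning query results into prompt context. The Python sketch below shows that step only, using a hypothetical build_prompt helper and hard-coded rows in place of the output of a KQL query; the call to the model itself is left out.

```python
def build_prompt(question, rows, max_rows=5):
    """Format the freshest rows as context and append the user's question."""
    # ISO 8601 UTC timestamps in the same format sort correctly as strings.
    freshest = sorted(rows, key=lambda r: r["ts"], reverse=True)[:max_rows]
    context = "\n".join(
        f"- device={r['device_id']} ts={r['ts']} state={r['state']}" for r in freshest
    )
    return f"Context (latest device states):\n{context}\n\nQuestion: {question}"

# Stand-in for rows that a KQL query would return at inference time.
rows = [
    {"device_id": "dev-1", "ts": "2026-01-01T10:00:00Z", "state": "ok"},
    {"device_id": "dev-2", "ts": "2026-01-01T10:05:00Z", "state": "overheating"},
]

prompt = build_prompt("Which devices need attention?", rows)
print(prompt)
```

Capping the number of rows keeps the prompt within the model's context budget, and sorting by timestamp puts the freshest state first.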

Eventhouse Endpoint for Lakehouse and Data Warehouse

A newer capability in Fabric lets you create an Eventhouse endpoint on top of an existing Lakehouse or Data Warehouse. This means you can run KQL queries against Lakehouse or Warehouse tables without ingesting that data into Eventhouse.

1. How It Works

When you enable the endpoint, Eventhouse creates a mirrored schema with OneLake shortcuts to the source tables. Schema changes sync within seconds. The data stays in its original location.

This gives you access to KQL’s analytical operators, Copilot’s natural language querying, and Real-Time Intelligence dashboards on data that lives in a Lakehouse or Warehouse. Cache policies control how much data gets pulled into premium storage for fast queries.

2. When to Use It

The endpoint makes sense when you have existing Lakehouse or Warehouse tables and want to run time-series analysis, text search, or KQL-specific operations without duplicating data. It also enables Copilot-powered exploration of Warehouse data, which is not natively available through the Warehouse’s T-SQL interface.

It does not replace native Lakehouse or Warehouse querying for their primary workloads. It adds a complementary query surface for scenarios where KQL is a better fit than Spark SQL or T-SQL.

How SSMH Built a Fabric Analytics Framework with Kanerika

Southern States Material Handling (SSMH) operates a network of TOYOTAlift dealerships across multiple locations. Their operational reporting was fragmented, with data scattered across SQL Server, SharePoint, and separate semantic models. Managers had no unified view of service, parts, or fleet performance.

Kanerika built a data analytics framework for SSMH using Microsoft Fabric and Power BI. The implementation used OneLake as the centralized data foundation, with Azure Data Factory pipelines handling ingestion from multiple source systems. The reporting layer followed a 1:3:10 methodology, with one executive dashboard, three managerial scorecards, and ten operational reports.

The results, published on Microsoft’s customer stories page, were concrete. SSMH reached 90% data accuracy and 85% higher operational visibility. Inventory costs dropped by 8 to 10%, labor utilization improved by 3 to 5%, and customer satisfaction ratings went up by more than 5%.

Delano Gordon, CIO of SSMH, noted that the ability to bring in many data sources and shape a strong analytics setup would be a game changer for SSMH.

How Kanerika Implements Eventhouse for Enterprise Clients

Kanerika is an AI-first data and analytics company and a trusted Microsoft Fabric Featured Partner. As a Microsoft Solutions Partner for Data and AI with an Analytics Specialization, Kanerika has been implementing Fabric across enterprise clients since the platform’s early access period.

The team includes a Microsoft MVP for Power BI in a senior leadership role, and has delivered Fabric implementations across logistics, manufacturing, financial services, and healthcare. Kanerika is recognized for efficient, structured implementations that move from proof-of-concept to production without extended timelines.

Kanerika runs a Real-Time Intelligence in a Day workshop program in partnership with Microsoft. These hands-on sessions walk teams through Eventhouse, Eventstream, Data Activator, and real-time dashboards in a single day.

For organizations evaluating Eventhouse or broader Fabric adoption, Kanerika’s FLIP Migration Accelerator reduces migration effort by up to 75%. The Microsoft Azure Accelerate program also provides up to $100K in development funding for qualifying projects.


Wrapping Up

Microsoft Fabric Eventhouse fills a specific gap in the analytics stack. It handles streaming, time-series, and event-based data with the speed and flexibility that batch-oriented systems were never designed for.

The medallion architecture pattern works natively inside Eventhouse through update policies and materialized views. Cost control comes from granular retention and cache policies per table. And the AI integration patterns, from real-time RAG to agentic workflows, position Eventhouse as more than a data store.

For teams already invested in Fabric, Eventhouse is the component that closes the real-time gap.

Frequently Asked Questions

What is an Eventhouse in Fabric?

An Eventhouse in Microsoft Fabric is a specialized database optimized for real-time analytics on streaming and time-series data. It stores event-driven data from IoT devices, application logs, and telemetry streams, enabling sub-second query performance using Kusto Query Language. Unlike traditional warehouses, Eventhouse excels at handling high-velocity data ingestion while maintaining low-latency analysis capabilities. It integrates seamlessly with other Fabric components like Power BI for instant visualization. Kanerika’s Fabric specialists can architect your Eventhouse implementation for maximum real-time analytics performance—schedule a consultation today.

What is Microsoft Fabric Eventhouse?

Microsoft Fabric Eventhouse is a real-time analytics engine designed for processing streaming events and time-series data at scale. Built on Azure Data Explorer technology, it provides near-instant query responses on billions of records using KQL. Eventhouse automatically handles data ingestion from sources like Event Hubs, IoT Hub, and Kafka while compressing data efficiently for cost-effective storage. It powers use cases including operational intelligence, log analytics, and IoT monitoring within Fabric’s unified analytics platform. Kanerika helps enterprises deploy Eventhouse solutions that deliver actionable insights in milliseconds—connect with our team to explore your options.

How does Eventhouse differ from Lakehouse and Data Warehouse in Fabric?

Eventhouse handles real-time streaming analytics while Lakehouse and Data Warehouse serve different purposes within Microsoft Fabric. Lakehouse combines raw data lake storage with structured analytics using Delta Lake format, ideal for exploratory data science workloads. Data Warehouse delivers traditional SQL-based analytics with T-SQL compatibility for structured business intelligence. Eventhouse specializes in high-velocity event ingestion and sub-second KQL queries on time-series data. Each component shares OneLake storage but optimizes for distinct query patterns and latency requirements. Kanerika architects unified Fabric solutions that leverage each component’s strengths—let us design your optimal data architecture.

What is the difference between Eventhouse and Azure Data Explorer?

Eventhouse is essentially Azure Data Explorer integrated natively into Microsoft Fabric with enhanced platform connectivity. Both use Kusto Query Language and share the same high-performance analytics engine for time-series and streaming data. However, Eventhouse benefits from Fabric’s unified OneLake storage, automatic data sharing across workloads, and simplified capacity management under a single billing model. Azure Data Explorer remains a standalone Azure service requiring separate infrastructure management. Eventhouse also integrates directly with Fabric’s Power BI and Data Activator without additional configuration. Kanerika migrates Azure Data Explorer workloads to Fabric Eventhouse seamlessly—reach out to plan your transition.

Can I use SQL to query data in an Eventhouse?

Eventhouse primarily uses Kusto Query Language for queries, though it supports a subset of T-SQL through translation. KQL delivers superior performance for time-series analytics, log exploration, and streaming data aggregations compared to traditional SQL. For users familiar with SQL syntax, KQL shares similar concepts like filtering, aggregation, and joins but optimizes specifically for event-based data patterns. Microsoft provides SQL-to-KQL conversion tools to ease the learning curve. Complex analytical queries that would require multiple SQL statements often condense into single KQL expressions. Kanerika’s data engineers train teams on KQL while building production-ready Eventhouse solutions—contact us to accelerate your adoption.

How can I optimize Eventhouse costs?

Optimizing Eventhouse costs requires strategic data management and query efficiency practices. Implement materialized views to pre-aggregate frequently queried data, reducing compute consumption per query. Configure retention policies to automatically purge outdated event data that no longer provides analytical value. Use update policies to transform data during ingestion rather than at query time. Partition large datasets by time columns to enable efficient data pruning. Monitor cache hit ratios and adjust hot cache windows based on actual query patterns rather than defaults. Right-size your Fabric capacity based on peak concurrent query loads. Kanerika’s Fabric optimization assessments identify cost reduction opportunities specific to your workloads—request your free analysis.

What data sources can I connect to an Eventhouse?

Eventhouse connects to diverse streaming and batch data sources through native connectors and ingestion pipelines. Real-time sources include Azure Event Hubs, IoT Hub, Apache Kafka, and change data capture streams from operational databases. Batch ingestion supports Azure Blob Storage, ADLS Gen2, Amazon S3, and local file uploads in formats like JSON, CSV, Parquet, and Avro. Eventstream in Fabric routes data from multiple sources into Eventhouse with transformation capabilities. You can also query external OneLake tables through shortcuts without data movement. Kanerika designs comprehensive data ingestion architectures connecting all your sources to Eventhouse—let us map your integration strategy.

Does Eventhouse work with Power BI and Copilot?

Eventhouse integrates seamlessly with Power BI and Microsoft Copilot within the Fabric ecosystem. Power BI connects directly to Eventhouse databases for real-time dashboard visualizations that refresh in seconds rather than minutes. DirectQuery mode enables live queries against streaming data without importing into Power BI datasets. Copilot in Fabric uses natural language to generate KQL queries against Eventhouse data, making analytics accessible to non-technical users. Data Activator monitors Eventhouse streams and triggers automated alerts or workflows based on defined conditions. Kanerika builds end-to-end Fabric solutions connecting Eventhouse analytics to Power BI dashboards—reach out to visualize your real-time data.

How do I create an Eventhouse in Microsoft Fabric?

Creating an Eventhouse in Microsoft Fabric takes minutes through the workspace interface. Navigate to your Fabric workspace, select New Item, and choose Eventhouse from the Real-Time Intelligence category. Name your Eventhouse and specify the workspace location. Fabric automatically provisions the database with default settings including cache policies and retention periods. Next, create KQL databases within the Eventhouse to organize your data by domain or use case. Configure data connections through Eventstream or direct ingestion methods. Set up materialized views and functions for common analytical patterns. Kanerika accelerates Eventhouse deployments with proven implementation frameworks—connect with us to launch your real-time analytics capability.

What exactly is Microsoft Fabric?

Microsoft Fabric is a unified analytics platform that consolidates data engineering, warehousing, science, and business intelligence into one SaaS environment. It eliminates the complexity of managing separate Azure services by providing integrated workloads including Data Factory, Synapse, and Power BI under a single capacity model. OneLake serves as the centralized storage layer accessible across all Fabric components, eliminating data duplication and silos. Fabric supports end-to-end analytics from raw data ingestion through real-time dashboards with unified governance and security. Organizations benefit from simplified administration and predictable costs. Kanerika guides enterprises through Fabric adoption strategies tailored to their analytics maturity—schedule your assessment today.

What is the difference between Microsoft Fabric and Azure?

Microsoft Fabric is a SaaS analytics platform built on Azure infrastructure but differs fundamentally in deployment and management models. Azure provides individual PaaS and IaaS services requiring separate provisioning, configuration, and billing management. Fabric bundles multiple analytics capabilities including Data Factory, Synapse Analytics, and Power BI into one unified experience with shared capacity pricing. While Azure demands significant infrastructure expertise, Fabric abstracts complexity for faster time-to-value. Fabric workloads store data in OneLake, which itself runs on Azure storage. Organizations can use both together, with Azure handling custom workloads alongside Fabric’s managed analytics. Kanerika helps enterprises determine the right mix of Azure and Fabric services—contact us for a strategic consultation.

Is Microsoft Fabric a PaaS or SaaS?

Microsoft Fabric operates as a SaaS platform, meaning Microsoft manages all infrastructure, updates, and maintenance automatically. Unlike PaaS services where customers configure virtual networks, scaling rules, and security patches, Fabric abstracts these complexities entirely. Users access Fabric through a web-based interface and purchase capacity units rather than individual compute or storage resources. This SaaS model enables organizations to focus on analytics outcomes rather than infrastructure operations. Fabric’s automatic scaling adjusts resources based on workload demands without manual intervention. Security, compliance certifications, and feature updates deploy seamlessly across all tenants. Kanerika maximizes your Fabric SaaS investment with implementation best practices—reach out to optimize your deployment.

What problems does Microsoft Fabric solve?

Microsoft Fabric addresses the data fragmentation, tool sprawl, and governance complexity that plague enterprise analytics environments. Organizations traditionally juggle separate services for ingestion, transformation, warehousing, and visualization, creating integration overhead and data silos. Fabric unifies these capabilities under one platform, with OneLake providing single-copy storage accessible across all workloads. Because all components share the same data foundation, much of the ETL that would otherwise move data between analytics services is no longer needed. Capacity-based pricing replaces the hard-to-predict costs of multiple service meters. Unified governance applies consistent security policies across all data assets. Kanerika implements Fabric solutions that address these pain points in your environment. Let us assess your current challenges.

Is Microsoft Fabric the same as Snowflake?

Microsoft Fabric and Snowflake serve overlapping but distinct purposes in the modern data stack. Snowflake specializes as a cloud data warehouse with strong SQL query performance and data sharing capabilities. Fabric provides a broader unified platform encompassing data engineering, real-time analytics with Eventhouse, data science, and business intelligence alongside warehousing. Snowflake deployments typically rely on external tools for ETL and BI visualization, while Fabric integrates these natively. The two differ on cloud footprint: Snowflake runs on AWS, Azure, and Google Cloud, whereas Fabric runs on Azure, although OneLake shortcuts can reference data stored in Amazon S3 and Google Cloud Storage. Fabric also benefits from deep Microsoft 365 and Azure integration. Pricing models differ significantly, with Snowflake using consumption-based credits and Fabric using capacity-based pricing, available as pay-as-you-go or reserved. Kanerika implements both platforms and helps organizations choose based on their specific requirements. Connect with us for an objective comparison.

Is Fabric built on Azure?

Microsoft Fabric runs entirely on Azure infrastructure while presenting a distinct SaaS experience to users. Azure provides the underlying compute, storage, networking, and security foundations that power Fabric workloads. OneLake, Fabric’s unified storage layer, leverages Azure Data Lake Storage Gen2 technology internally. Fabric services like Eventhouse evolved from Azure Data Explorer, and Data Factory capabilities derive from Azure Data Factory. However, Fabric simplifies access by removing direct Azure subscription management requirements. Organizations can use Fabric without deep Azure expertise while still benefiting from Azure’s global scale and compliance certifications. Kanerika leverages expertise across both Azure and Fabric to design optimal analytics architectures—request your consultation today.

What is the purpose of a Lakehouse?

A Lakehouse in Microsoft Fabric combines data lake flexibility with data warehouse reliability for unified analytics workloads. It holds raw, semi-structured, and structured data, storing tables in the open Delta Lake format with the ACID transactions typically found only in warehouses. Data scientists access the same data as business analysts without maintaining separate copies or complex pipelines. Lakehouse tables support schema evolution, time travel queries, and efficient upserts that traditional data lakes lack. Unlike Eventhouse, which is optimized for real-time streaming, Lakehouse serves batch analytics, machine learning feature engineering, and exploratory analysis. Kanerika architects Fabric solutions that leverage both Lakehouse and Eventhouse for comprehensive analytics. Talk to us about your data strategy.

Who needs Microsoft Fabric?

Microsoft Fabric benefits organizations seeking to consolidate fragmented analytics tools and eliminate data silos across their enterprise. Companies already invested in Microsoft ecosystems gain significant advantages from native Power BI, Teams, and Microsoft 365 integration. Enterprises struggling with escalating costs from multiple Azure analytics services find Fabric’s unified capacity model more predictable. Teams requiring real-time analytics through Eventhouse alongside traditional warehousing reduce complexity by standardizing on one platform. Organizations lacking dedicated data engineering staff appreciate Fabric’s low-code capabilities and managed infrastructure. Mid-market companies can access enterprise-grade analytics without building specialized teams. Kanerika evaluates whether Fabric aligns with your organization’s analytics maturity and goals—schedule your free assessment.

Is Microsoft Fabric like Databricks?

Microsoft Fabric and Databricks overlap in data engineering and lakehouse capabilities but serve different strategic purposes. Databricks excels in advanced machine learning, MLOps workflows, and Apache Spark-based data processing with deep Python ecosystem integration. Fabric provides broader unified analytics, including Power BI visualization, real-time analytics via Eventhouse, and Activator (formerly Data Activator) for automated actions. Databricks deployments commonly pair with external BI tools, while Fabric includes Power BI natively. Both support the Delta Lake format, enabling data portability between platforms. Databricks offers multi-cloud deployment, whereas Fabric runs exclusively on Azure. Many enterprises use both, with Databricks handling ML workloads and Fabric managing analytics consumption. Kanerika implements both platforms and helps determine optimal workload placement. Contact us for guidance.