Capital One shut down eight on-premises data centers across an 8-year migration, scaling its technology team to 11,000 people along the way. It became the first U.S. bank to report a full exit from legacy infrastructure to the public cloud. Most enterprises do not attempt migrations at this scale, and the data explains why. Gartner estimates 83% of data migration projects fail outright or overrun their budgets and schedules, and McKinsey finds that 75% of cloud migrations run over budget.

Data migration is the process of moving data between systems, storage, or formats, usually during a platform change or cloud move. The tool you pick shapes cost, downtime risk, and whether your schema and business logic survive the trip intact. In this article, we’ll cover the 11 best data migration tools for 2026 and show how to match one to your business needs.

Key Takeaways

- 83% of data migration projects fail or overrun, and the root cause is usually legacy code and schema debt, not the transfer itself.

- Cloud-native tools (AWS DMS, Azure DMS) are free or low-cost for same-platform moves, but limit flexibility.

- ETL and ELT tools (Fivetran, Matillion, Stitch) are built for ongoing pipelines, not one-time migrations.

- Legacy BI and ETL conversion needs accelerators. Manual SSIS-to-Fabric or Informatica-to-Talend rewrites take months per pipeline.

- Kanerika’s FLIP reduces migration effort by 50 to 60% and cuts licensing costs by 75% on legacy BI and ETL modernization projects.

What is Data Migration?

Data migration is the transfer of data from one system, storage device, or format to another. It is essential for businesses that are upgrading IT infrastructure, consolidating data centers, adopting cloud-based platforms, or combining information from various sources. A properly implemented migration keeps data accessible, secure, accurate, and useful both during and after the transition.

A migration can cover structured and unstructured data, such as databases, files, applications, and documents. The transfer can be intra-system, such as a software version upgrade, or inter-system, such as replacing an on-premises server with a cloud-based one. The process usually involves data cleansing, transformation, validation, and testing to ensure data integrity.

Common reasons for data migration include:

- System Upgrades: Moving from legacy systems to modern, high-performance systems.

- Cloud Adoption: Moving data and applications to the cloud for scalability, flexibility, and cost-effectiveness.

- Data Consolidation: Combining information from multiple sources to enable centralized reporting and analytics.

- Mergers and Acquisitions: Bringing an acquired company’s data onto one platform.


What Are Data Migration Tools?

Data migration tools are programs that help you move data from one place to another without manual effort. Think of them as smart assistants that handle all the technical work. They schedule transfers, connect different systems, change data formats when needed, double-check everything moved correctly, and show you what’s happening. These tools prevent costly mistakes, keep your business running smoothly during transfers, and protect your data from damage or loss.

1. On-Premise Data Migration Tools

These tools get installed directly on your company’s computers and servers. All the work happens inside your building, so your data stays on your network the entire time.

Key Features

- You control everything about how the migration works, from security rules to exactly how data gets handled.

- Great for companies with strict regulations or sensitive data that legally can’t leave their facilities.

- They plug right into your existing databases, software, and older systems without needing internet connections.

- Your IT team needs solid technical skills to install, configure, and maintain these tools properly.

Examples

- Informatica PowerCenter: A business platform that many large companies use for complex data moves that require lots of quality checking and transformation.

- IBM InfoSphere DataStage: A heavy-duty tool that processes huge amounts of data fast and handles complicated changes between very different systems.

2. Cloud Data Migration Tools

Cloud companies like Amazon, Microsoft, and Google offer these as online services. They focus on getting your data into the cloud or moving it between different cloud services.

Key Features

- Built to work perfectly with AWS, Microsoft Azure, Google Cloud, and similar platforms right out of the box.

- Simple interfaces that regular business users can understand, plus connections to popular software already set up.

- Handle massive data volumes and can keep systems talking to each other continuously.

- Your data security depends on whatever the cloud provider offers, which might not fit every company’s needs.

Examples

- AWS Database Migration Service: Amazon’s service that moves databases to their cloud safely while keeping your business running normally.

- Azure Database Migration Service: Microsoft’s tool for getting databases into Azure with barely any interruption to your daily operations.

3. Open-Source Data Migration Tools

These are free programs whose source code you can inspect and change however you want. Companies choose them to save money and get exactly what they need.

Key Features

- It costs nothing to use, and you can customize every detail to match your specific business requirements.

- Way more flexible than paid options since you can modify anything that doesn’t work for your setup.

- Require more technical knowledge to install, monitor, and troubleshoot than commercial alternatives.

- Backed by communities of programmers who constantly improve the tools and help solve problems.

Examples

- Apache Kafka: An extremely powerful real-time data streaming system that offers unlimited flexibility but needs expert developers to implement successfully.

- Airbyte: A free platform that makes building data connections simple through an easy interface, even for non-technical people.

What are the Core Capabilities of Data Migration Tools?

Vendor feature lists often overstate capabilities. The items below are the characteristics that determine whether a migration tool performs reliably on a real project. Test each one against your specific workload before signing a contract.

| Capability | Why It Matters | What to Test |
|---|---|---|
| Schema conversion | Most migration pain comes from data type mismatches and schema differences between source and target | Run a sample migration on a table with custom types, nested JSON, and nullable fields |
| Change Data Capture | CDC keeps source and target in sync during cutover, reducing downtime from hours to minutes | Verify CDC latency under load, not in a quiet test environment |
| Error recovery | Long-running migrations fail. The question is whether the tool resumes or restarts | Terminate the process mid-migration and observe how it recovers |
| Monitoring and alerting | Silent data loss is worse than visible failure. Good tools log row counts, mismatches, and throughput in real time | Force a row mismatch and see if it triggers an alert |
| Security controls | Encryption in transit and at rest is table stakes. Role-based access and audit logs are not | Check SOC 2, HIPAA, and GDPR compliance documentation |
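The "force a row mismatch" test can be rehearsed before any vendor tool is involved. The sketch below is one possible approach, not how any product in this list does it: it compares a row count and a content hash for the same table in two SQLite databases. The `orders` table, the helper names, and the in-memory databases are all illustrative assumptions.

```python
import hashlib
import sqlite3


def table_fingerprint(conn, table, key):
    """Return (row_count, sha256_hex) for a table, reading rows in key order."""
    # Table and column names are interpolated directly. That is acceptable
    # for a sketch with trusted names, not for untrusted input.
    rows = conn.execute(f"SELECT * FROM {table} ORDER BY {key}").fetchall()
    digest = hashlib.sha256()
    for row in rows:
        digest.update(repr(row).encode("utf-8"))
    return len(rows), digest.hexdigest()


def compare_tables(source, target, table, key):
    """Compare row counts and content hashes between source and target."""
    src_count, src_hash = table_fingerprint(source, table, key)
    tgt_count, tgt_hash = table_fingerprint(target, table, key)
    return {
        "row_count_match": src_count == tgt_count,
        "content_match": src_hash == tgt_hash,
    }


def make_db(rows):
    """Build a hypothetical in-memory 'orders' table for the demonstration."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL)")
    conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)
    return conn


source = make_db([(1, 10.0), (2, 20.0), (3, 30.0)])
target = make_db([(1, 10.0), (2, 20.5), (3, 30.0)])  # forced mismatch on row 2

result = compare_tables(source, target, "orders", "id")
print(result)  # {'row_count_match': True, 'content_match': False}
```

The forced mismatch leaves the row counts equal while the content hash differs, which is exactly the kind of silent divergence a count-only check would miss.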

Top 11 Data Migration Tools

1. FLIP by Kanerika

FLIP converts legacy BI and ETL codebases into modern platforms. It reads metadata from SSIS, SSRS, Informatica, Tableau, Cognos, and Crystal Reports, then regenerates equivalent logic in Talend, Power BI, or Microsoft Fabric. The conversion is automated, but a human engineer validates every pipeline before cutover.

Most migration tools only move data. FLIP rewrites the code that processes it. On documented projects, 50 to 100 pipelines convert in 2 to 3 weeks, and 500+ pipeline migrations complete in 6 to 8 weeks. Manual rewrites of the same scope usually take 6 to 12 months. Available on Azure Marketplace.

Best for: Enterprises operating on legacy BI or ETL platforms that need to move to Microsoft Fabric, Power BI, Databricks, or Talend without rebuilding every pipeline from scratch.

2. Stitch Data

Stitch is the entry-level cloud pipeline tool from Talend. It handles simple source-to-warehouse moves with minimal setup. Row-count pricing is transparent and suitable for small data volumes.

The connector library is smaller than Fivetran’s and transformation capability is limited. Teams typically outgrow Stitch within 18 to 24 months and move to Fivetran or Matillion.

Best for: Startups and mid-market teams running fewer than 20 data sources into a single warehouse.

3. AWS Database Migration Service (DMS)

AWS DMS handles database-to-database moves where the destination is AWS. It supports same-engine migrations (Oracle to Oracle) and cross-engine work (Oracle to PostgreSQL, SQL Server to Aurora). Pricing is hourly plus data transfer, which keeps small moves inexpensive.

Change Data Capture keeps source and target in sync, so cutover windows remain short. The limitation is scope. DMS moves data and schema. It does not rewrite stored procedures, ETL code, or BI reports. For complex workloads, organizations pair it with AWS Schema Conversion Tool or with accelerators like FLIP. For detailed guidance on moving SQL Server workloads specifically, see the SQL Server data migration guide.

Best for: Teams moving production databases into AWS with minimal downtime tolerance.

4. Azure Database Migration Service

Azure DMS is the Microsoft counterpart. It moves SQL Server, MySQL, PostgreSQL, MongoDB, and Oracle workloads into Azure SQL, Azure Database for PostgreSQL, or Cosmos DB. The standard SKU is free per Microsoft Learn documentation.

Online migration keeps both systems live until cutover, which is useful for 24/7 workloads. Offline migration is faster but requires a maintenance window. The tool’s limitation is the same as AWS DMS. It does not address code modernization. If you are moving off SSIS or SSRS, you still need a separate accelerator path. Dedicated SSIS to Microsoft Fabric and SSRS to Power BI migration services are built for those conversions.

Best for: Azure-bound database moves in organizations already invested in Microsoft infrastructure.

5. XMigrate

XMigrate is a free, open-source tool that works through command-line interfaces. It suits technical teams who want full control over every aspect of the migration process. You can customize how it transforms data, write custom scripts, and modify the source code as needed to meet specific requirements. There are no licensing fees, though you’ll need skilled developers to implement and maintain it properly.

Best for: Technical teams who prefer command-line tools and want a solution they can fully customize for unique business requirements.

6. Ingestro

Ingestro is an AI-powered data migration platform that helps software companies ingest customer data from spreadsheets and legacy system exports into their products. It streamlines file-based data extraction, schema mapping, validation, and transformation before data is loaded into the target environment. This helps improve data quality and consistency, enabling smoother implementations and faster customer onboarding.

Best for: Service and product teams at HR, payroll, and software companies looking to manage customer data onboarding at scale.

7. Talend Open Studio

Talend Open Studio is the free, open-source version of Talend Data Integration. It has a visual component-based pipeline builder, 900+ connectors, and an active user community. Code generation produces Java internally, which teams can export and run anywhere. For a deeper comparison of Talend’s positioning, see Talend vs Informatica PowerCenter.

The free version lacks enterprise features like scheduling, monitoring, and collaborative development. Most organizations using Open Studio at scale eventually upgrade to the paid Talend Data Fabric, where the licensing costs begin.

Best for: Teams running one-off ETL jobs or evaluating Talend before committing to the paid tier.

8. Fivetran

Fivetran is built for continuous ELT pipelines between SaaS apps and cloud warehouses. It has 500+ prebuilt connectors for Salesforce, HubSpot, Stripe, and other business systems. Once configured with a source and destination, it handles schema drift, incremental loading, and retries automatically. For a detailed breakdown, see data migration vs data integration.

Pricing is based on Monthly Active Rows (MAR), which can spike unpredictably. Teams report bills 3x to 10x higher than initial estimates on high-volume sources. Fivetran is not a migration tool in the traditional sense. It is a pipeline platform that also handles one-time moves.

Best for: Continuous replication from SaaS apps to Snowflake, BigQuery, or Redshift.

9. Matillion

Matillion runs enterprise ETL on Snowflake, Databricks, BigQuery, Redshift, and Microsoft Fabric. The visual pipeline builder is closer to SSIS than to Fivetran’s automated model, so engineering teams with transformation logic tend to prefer it.

It handles large data volumes and complex joins natively on the target platform. Pricing is credit-based and more predictable than Fivetran’s MAR model, but the learning curve is steeper.

Best for: Enterprise ETL on cloud warehouses where transformation logic matters more than connector breadth.

10. Airbyte

Airbyte is the open-source ELT challenger to Fivetran. It has 350+ connectors, a self-hosted deployment option, and a cloud version for teams that do not want to run infrastructure. Connectors are written in a standardized SDK, so building custom ones is easier than with most platforms.

Self-hosted Airbyte requires engineering time to operate. Cloud Airbyte is priced by volume and competitive with Fivetran on many workloads. Stability remains a consideration. Community-maintained connectors fail more frequently than Fivetran’s vendor-maintained ones.

Best for: Data teams with engineering capacity who want connector flexibility without vendor lock-in.

11. SnapLogic

SnapLogic uses pre-built connectors called “Snaps” to automate migration workflows through a drag-and-drop interface. It handles real-time data integration scenarios where data needs to flow continuously between systems, and it works for various migration patterns, including cloud-to-cloud transfers, on-premises-to-cloud moves, and hybrid environments. It also includes monitoring tools to track data flow and catch problems before they impact business operations.

Best for: Quick migrations using ready-made connections when you need real-time data syncing and don’t want to build custom integrations from scratch.

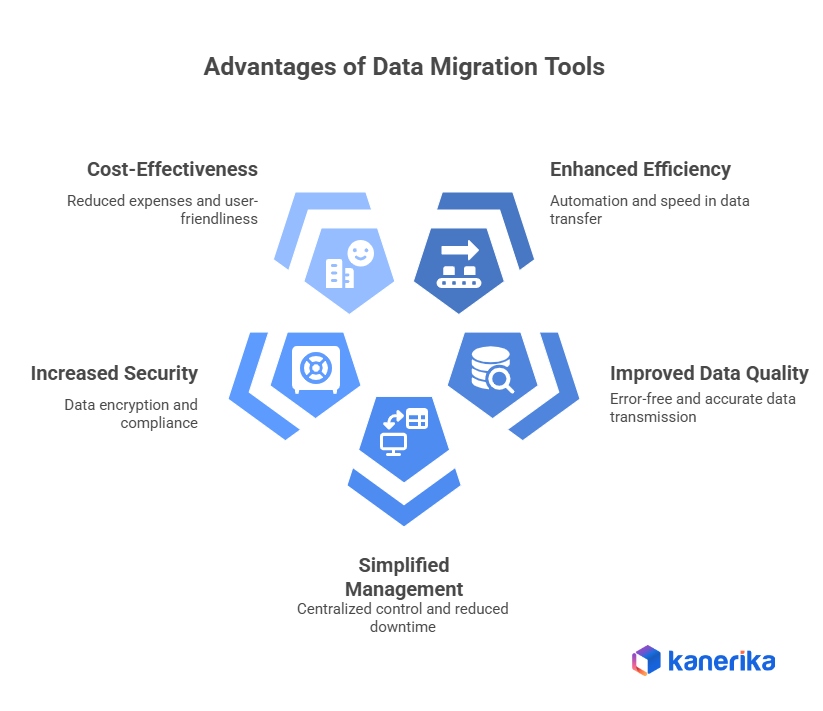

What Are the Advantages of Using Data Migration Tools?

1. Enhanced Efficiency and Automation

Data migration tools excel at accelerating the process and reducing workload. They automate repetitive tasks like data extraction, transformation, and loading (ETL), eliminating the need for error-prone manual scripting and coding. Additionally, these tools efficiently handle large data volumes, significantly speeding up migration times.

2. Improved Data Quality and Accuracy

Data migration tools improve data quality, which reduces errors in transit. Before migration, they fix formatting errors, find and eliminate duplicate records, and check data integrity. They can also reshape data to conform to the structure of the target system, so it is represented accurately in the new environment.

3. Simplified Management and Reduced Risk

These tools give you a central platform for managing the entire migration, with real-time monitoring of its progress. Automation also reduces the downtime that comes with data movement, which limits the impact on your company’s operations. Built-in error handling and rollback features let you recover from problems and resume the migration if needed, which further lowers risk.

4. Increased Security and Compliance

Data migration tools prioritize data security and compliance. They encrypt sensitive data during transfer to safeguard it, and access control mechanisms restrict unauthorized access. Furthermore, they provide detailed audit trails and logs of the migration process, ensuring compliance with data security regulations and facilitating troubleshooting if needed. Many tools even cater to specific industry regulations and compliance standards.

5. Cost-Effectiveness and User-Friendliness

Data migration tools are more affordable than manual techniques. They lower project expenses by freeing up critical IT resources through task automation and a reduction in human labor. Furthermore, a lot of tools have drag-and-drop functionality and user-friendly interfaces, making them usable even by non-technical people. The automation and data validation features offered by these tools can significantly reduce the risk of errors, leading to potential cost savings in the long run by avoiding the need to fix errors or re-migrate data due to inaccuracies.

How to Select the Right Data Migration Tool in 2026?

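The right tool depends on four variables, and most failed migrations come from optimizing for the wrong one. Price is the most tempting factor to prioritize and the least predictive of success. The first variable is what you are migrating, because each tool category handles a different kind of workload.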

| If You Are Migrating | Start With | Avoid |
|---|---|---|
| Databases into AWS | AWS DMS + Schema Conversion Tool | Generic ETL platforms |
| Databases into Azure | Azure DMS | Third-party tools when free native option works |
| Legacy BI (Tableau, Cognos, Crystal Reports) | FLIP or manual rebuild | AWS DMS or Azure DMS (they don’t convert reports) |
| Legacy ETL (SSIS, Informatica) | FLIP or Matillion with engineering support | Fivetran (pipeline tool, not conversion tool) |
| SaaS apps to warehouse | Fivetran or Airbyte | AWS/Azure DMS |
| Continuous real-time integration | SnapLogic or Matillion | One-time migration tools like AWS DMS |

The second variable is data volume. Tools that scale to 10 million rows often break at 1 billion. Always test with production-scale data before committing. For Azure-specific planning, the Azure data migration guide covers the architectural decisions that precede tool selection. For SQL Server workloads, see the SQL Server data migration guide.

The third variable is your team’s technical depth. Open-source tools save money on licensing and spend it on engineering hours. Budget accordingly. The fourth is compliance. If you handle PHI, PII, or financial records, verify certifications (SOC 2 Type II, HIPAA, GDPR) before the technical evaluation starts.


Best Practices for Successful Data Migration

The 83% failure rate holds across every tool category, which means the problem sits upstream of tool choice. Bloor Research puts average time overruns at 41% and cost overruns at 30%, while McKinsey’s survey of 450 CIOs found only 15% of cloud migrations finish on time and within budget. Four practices consistently separate the projects that land on time from the ones that don’t.

1. Source Data Quality Determines Migration Success More Than Tool Selection

Most migration plans assume source data is cleaner than it is. Experian research shows 48% of APAC organizations cite data quality as the primary cause of migration delays, and only 43% of project teams report having a solid grasp of migration best practices before kickoff. Duplicate records, inconsistent date formats, and orphaned foreign keys surface during migration and stall the project by weeks.

Run a data quality audit 4 to 6 weeks before cutover. Profile at least 15 to 20% of source records across every critical table. Expect 5 to 15% of records to need cleanup on legacy systems that have run for more than 5 years. Fix issues in the source, not in the pipeline. Source-side fixes cost 3 to 5x less than pipeline-side workarounds because workarounds compound across every downstream system.
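A source-side audit can start small. The following is a minimal sketch of the three problems named above (duplicate records, inconsistent date formats, and orphaned foreign keys), run against plain Python records. The `customers` and `orders` structures, field names, and the choice of ISO dates as the expected format are assumptions made for illustration. A real audit would query the source system directly.

```python
import re
from collections import Counter

# Assumed target format for this sketch: ISO 8601 dates (YYYY-MM-DD).
ISO_DATE = re.compile(r"^\d{4}-\d{2}-\d{2}$")


def profile(customers, orders):
    """Report duplicate emails, non-ISO dates, and orphaned foreign keys."""
    # Normalize before counting so 'A@x.com ' and 'a@x.com' count as duplicates.
    email_counts = Counter(c["email"].strip().lower() for c in customers)
    duplicate_emails = sorted(e for e, n in email_counts.items() if n > 1)

    non_iso_dates = [o["id"] for o in orders if not ISO_DATE.match(o["order_date"])]

    customer_ids = {c["id"] for c in customers}
    orphaned_orders = [o["id"] for o in orders if o["customer_id"] not in customer_ids]

    return {
        "duplicate_emails": duplicate_emails,
        "non_iso_dates": non_iso_dates,
        "orphaned_orders": orphaned_orders,
    }


# Hypothetical sample records.
customers = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": "A@example.com "},
    {"id": 3, "email": "b@example.com"},
]
orders = [
    {"id": 100, "customer_id": 1, "order_date": "2024-01-15"},
    {"id": 101, "customer_id": 9, "order_date": "15/01/2024"},
    {"id": 102, "customer_id": 3, "order_date": "2024-02-01"},
]

report = profile(customers, orders)
print(report)
```

On this sample, the report flags one duplicated email, and order 101 appears twice: once for its date format and once for pointing at a customer that does not exist.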

2. Rollback Planning Must Come Before Migration Execution

Teams that succeed assume the migration will partially fail and plan for it. That means keeping the source system live for 30 to 90 days post-cutover, maintaining bi-directional sync where possible, and defining clear rollback triggers before go-live. Rollback triggers should be numeric, not subjective. Examples include data reconciliation gaps above 0.5%, query latency increases above 20%, or critical workflow failure rates above 2%.
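Numeric triggers are easy to encode, which is part of their value. As a rough sketch, the example thresholds above could be checked like this. The metric names and the `CutoverMetrics` structure are hypothetical, and the values would come from your own monitoring.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CutoverMetrics:
    """Post-cutover measurements, all expressed as percentages."""
    reconciliation_gap_pct: float
    latency_increase_pct: float
    workflow_failure_pct: float


# Example thresholds taken from the text; tune them to your own risk tolerance.
THRESHOLDS = {
    "reconciliation_gap_pct": 0.5,
    "latency_increase_pct": 20.0,
    "workflow_failure_pct": 2.0,
}


def breached_triggers(metrics):
    """Return the names of all rollback triggers the metrics exceed."""
    return [
        name
        for name, limit in THRESHOLDS.items()
        if getattr(metrics, name) > limit
    ]


observed = CutoverMetrics(
    reconciliation_gap_pct=0.2,
    latency_increase_pct=35.0,
    workflow_failure_pct=1.0,
)
breaches = breached_triggers(observed)
print(breaches)  # ['latency_increase_pct']
```

Any non-empty result is a signal to start the rollback conversation, with no debate about whether the situation "feels" bad enough.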

Budget 10 to 15% of total migration cost for parallel-run infrastructure. Teams that skip this line item to save money end up paying 2 to 4x more when rollback is needed mid-cutover and the source environment has already been partially decommissioned.

3. Parallel Validation Catches Issues That Post-Cutover Testing Misses

Row counts match but business logic does not. This is the pattern that causes post-migration validation failures. Common symptoms include reports showing matching totals but divergent breakdowns by region or product, or aggregates that match while underlying line items are off by 1 to 3%.

Run the source and target in parallel for 2 to 4 weeks before cutover. Compare 100% of critical reports end-to-end, not just row counts. Automate daily reconciliation checks on the top 20 to 30 business metrics. Only cut over when reconciliation variance sits below 0.1% for 10 consecutive business days. Teams that compress validation to less than 1 week see rollback rates 4 to 5x higher than teams that hold the full window.
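The cutover rule above (variance below 0.1% for 10 consecutive business days) can be expressed as a simple gate. This is a sketch under the assumption that you record, for each business day, the worst variance across your tracked metrics. The function names and sample history are illustrative.

```python
def variance_pct(source_value, target_value):
    """Absolute difference between target and source, as a percentage of source."""
    if source_value == 0:
        return 0.0 if target_value == 0 else float("inf")
    return abs(target_value - source_value) / abs(source_value) * 100.0


def ready_for_cutover(daily_worst_variance_pct, threshold_pct=0.1, required_days=10):
    """True only if the most recent `required_days` entries are all below threshold."""
    if len(daily_worst_variance_pct) < required_days:
        return False
    recent = daily_worst_variance_pct[-required_days:]
    return all(v < threshold_pct for v in recent)


# Hypothetical history: two noisy days, then ten clean ones.
clean_run = [0.4, 0.3] + [0.05] * 10
# Same window, but the latest day drifted above the threshold.
late_drift = [0.4, 0.3] + [0.05] * 9 + [0.2]

print(ready_for_cutover(clean_run))   # True
print(ready_for_cutover(late_drift))  # False
```

Because the gate looks only at the most recent window, a single bad day resets the clock, which matches the intent of holding the full validation period.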

4. Team Sizing Should Reflect Code Complexity Rather Than Data Volume

Moving 10 terabytes of clean tabular data needs fewer engineers than moving 500 gigabytes of Cognos reports with custom SQL. A useful rule of thumb: budget 1 senior engineer per 50 to 80 legacy pipelines for manual migrations, or 1 engineer per 200 to 400 pipelines when using automation accelerators.
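To make the rule of thumb concrete, here is a small worked calculation. It is a sketch that applies the ratios quoted above to a hypothetical estate of 500 pipelines. The function and constant names are illustrative.

```python
import math

# Pipelines one engineer can handle, as (low, high) ranges from the rule of thumb.
MANUAL = (50, 80)
ACCELERATED = (200, 400)


def engineers_needed(pipelines, pipelines_per_engineer):
    """Return (best_case, worst_case) engineer counts, rounded up."""
    low, high = pipelines_per_engineer
    best_case = math.ceil(pipelines / high)   # each engineer covers the most pipelines
    worst_case = math.ceil(pipelines / low)   # each engineer covers the fewest
    return best_case, worst_case


manual_team = engineers_needed(500, MANUAL)
accelerated_team = engineers_needed(500, ACCELERATED)
print(manual_team)       # (7, 10)
print(accelerated_team)  # (2, 3)
```

For 500 pipelines, the rule suggests 7 to 10 senior engineers for a manual rewrite versus 2 to 3 with an accelerator, regardless of how many terabytes sit behind those pipelines.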

Sizing by data volume consistently under-resources legacy code conversion projects by 40 to 60%. When internal engineering capacity is short, data migration companies with accelerator IP close the gap 3 to 6x faster than hiring. Time-to-productivity for a new hire on legacy ETL code averages 8 to 12 weeks, versus 1 to 2 weeks for an external team that has run the same migration pattern 10+ times before.

How Kanerika Delivers Seamless Data Platform Migration Through Automation

Kanerika can help your business seamlessly migrate to modern data platforms using our FLIP migration accelerators. We specialize in transforming data pipelines from legacy systems like Informatica to Talend, SSIS to Fabric, Tableau to Power BI, and SSRS to Power BI. Our expertise ensures your data migration is smooth and minimizes disruptions to your operations.

Moving to modern platforms offers significant advantages, including enhanced performance, scalability, and better integration with emerging technologies such as AI and machine learning. These platforms allow for faster data processing, real-time analytics, and a more user-friendly interface, empowering your teams to make data-driven decisions with greater efficiency.

By partnering with Kanerika, businesses can streamline the migration process, reduce manual effort, and lower the risk of errors. Our tailored automation solutions are designed to meet your specific needs, ensuring that the migration is not just efficient but also aligned with your business goals. With our experience across various industries, we provide end-to-end support from planning to execution, helping you optimize costs, improve productivity, and unlock the full potential of your data in a modern, agile environment. Let us be your trusted partner in your data platform transformation.

Case Study: How Kanerika Migrated from Informatica to Talend

The Problem

A mid-size financial services enterprise running Informatica PowerCenter across multiple business units faced a challenge common to organizations in regulated industries. The environment had been built up over eight years and contained hundreds of pipelines, custom transformations, and compliance-sensitive business logic that had never been formally documented.

A manual migration to Talend was scoped at well over a year. It would require a large team of developers to rebuild pipelines from scratch while simultaneously learning a new platform. For a regulated business, the risk of losing compliance logic during that process was not acceptable. Neither was the timeline.

The Solution

Kanerika deployed FLIP, a purpose-built migration tool that automates the conversion from Informatica to Talend, to handle the engagement. FLIP works in two steps. First, a built-in export tool connects directly to the client’s Informatica repository and packages all pipelines, workflows, and dependencies into a structured file ready for conversion.

Second, FLIP processed that file and converted everything into working Talend jobs automatically, flagging any complex custom logic for human review rather than attempting to convert it blindly. The team focused entirely on validation and testing rather than rebuilding pipelines from scratch.

The Results

- Migration completed in 90 days against a manual estimate of 12 to 18 months

- 75% reduction in annual licensing costs after switching to Talend

- 75% reduction in overall migration effort compared to a manual approach

- Business logic preserved throughout with no post-go-live logic failures

- Talend environment was cloud-ready from day one

Conclusion

The data migration tool market has matured, but the core problem has not changed. Moving data is straightforward. Preserving business logic, validating transformation accuracy, and avoiding the 83% failure rate require tools that match the specific shape of the migration. Cloud-native DMS tools are best for moves into a single vendor’s cloud. ETL platforms handle continuous pipelines. Migration accelerators like FLIP are the answer for legacy BI and ETL modernization where code conversion is the primary cost driver. Evaluate tools against your actual workload rather than generic feature checklists.


FAQs

Which tool is used for data migration?

Common tools used for data migration include Microsoft Fabric, Informatica PowerCenter, Talend, Databricks, and Azure Data Factory. The right choice depends on your source systems, target platforms, data volume, and transformation requirements. Enterprise migrations often require specialized migration accelerators that automate schema mapping, data validation, and error handling. Cloud-native tools like Microsoft Fabric excel at consolidating analytics infrastructure while maintaining data integrity throughout the transfer process. Kanerika’s migration accelerators automate complex platform transitions with built-in validation—connect with our team to explore the right tool for your environment.

What are the 7 migration strategies?

The seven migration strategies, often called the 7 Rs, are Rehost (lift-and-shift), Replatform (lift-tinker-and-shift), Repurchase (replace with SaaS), Refactor (re-architect), Retire (decommission), Retain (keep as-is), and Relocate (hypervisor-level migration). Each strategy balances speed, cost, and modernization goals differently. Rehosting delivers quick wins while refactoring maximizes long-term value. Enterprises typically combine multiple strategies across their application portfolio based on business criticality and technical debt. Kanerika helps organizations evaluate workloads and select optimal migration strategies—schedule a discovery session to map your modernization roadmap.

What are ETL tools in data migration?

ETL tools in data migration are software platforms that Extract data from source systems, Transform it to match target requirements, and Load it into destination databases or data platforms. Leading ETL tools include Informatica PowerCenter, Talend, Microsoft Fabric, and Databricks. These tools handle schema conversions, data cleansing, format standardization, and business logic preservation during migration. Modern ETL platforms also support real-time streaming and automated pipeline orchestration for continuous data synchronization. Kanerika specializes in ETL pipeline development and Informatica-to-modern-platform transitions—reach out for a technical consultation.

What's the best migration tool?

The best migration tool depends on your specific source platform, target environment, and data complexity. Microsoft Fabric excels for organizations consolidating analytics infrastructure, while Databricks suits enterprises building lakehouse architectures. Informatica remains strong for complex ETL transformations, and Talend offers flexibility for hybrid deployments. Evaluate tools based on connector availability, transformation capabilities, automation features, and vendor support. The ideal solution often combines platform-native tools with specialized migration accelerators that automate conversion and validation. Kanerika’s certified consultants help enterprises select and implement the right migration toolset—request a free assessment today.

What are the four types of data migration?

The four types of data migration are storage migration, database migration, application migration, and cloud migration. Storage migration moves data between physical or virtual storage systems. Database migration transfers data between database platforms like SQL Server to Microsoft Fabric. Application migration relocates entire applications along with their associated data. Cloud migration shifts on-premises data and workloads to cloud platforms like Azure or Databricks. Each type requires different tooling, validation approaches, and risk mitigation strategies. Kanerika delivers end-to-end migration services across all four types—contact us to discuss your specific migration scenario.

What is ETL in data migration?

ETL in data migration refers to the Extract, Transform, Load process that moves data between systems while ensuring compatibility and quality. Extract pulls data from source databases, applications, or files. Transform applies business rules, cleanses records, converts formats, and restructures schemas. Load writes the processed data into the target platform. ETL processes handle the heavy lifting during database migrations, ensuring referential integrity and data accuracy. Modern platforms like Microsoft Fabric and Databricks support both batch ETL and real-time streaming approaches. Kanerika builds automated ETL pipelines that preserve business logic during complex migrations—let us streamline your data transformation.
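The three stages can be seen in a few lines of code. This is a toy sketch using only the Python standard library, not a depiction of how any platform named above implements ETL. The CSV content, the `customers` table, and the assumption that slash-separated dates are DD/MM/YYYY are all illustrative.

```python
import csv
import io
import sqlite3

# Hypothetical source export with messy whitespace and mixed date formats.
RAW_CSV = """id,name,signup_date
1, Alice ,15/01/2024
2,Bob,2024-02-01
"""


def extract(text):
    """Extract: read rows from the source export."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: trim names and normalize dates to YYYY-MM-DD."""
    cleaned = []
    for row in rows:
        date = row["signup_date"].strip()
        if "/" in date:  # assumption: slash-separated dates are DD/MM/YYYY
            day, month, year = date.split("/")
            date = f"{year}-{month}-{day}"
        cleaned.append((int(row["id"]), row["name"].strip(), date))
    return cleaned


def load(conn, rows):
    """Load: write the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, signup_date TEXT)"
    )
    conn.executemany("INSERT INTO customers VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(conn, transform(extract(RAW_CSV)))
loaded = conn.execute("SELECT * FROM customers ORDER BY id").fetchall()
print(loaded)  # [(1, 'Alice', '2024-01-15'), (2, 'Bob', '2024-02-01')]
```

Keeping each stage as a separate function makes it possible to test the transform rules on their own before any data reaches the target.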

What are the best ETL tools for data migration?

The best ETL tools for data migration include Microsoft Fabric, Informatica PowerCenter, Talend, Databricks, Azure Data Factory, and Alteryx. Microsoft Fabric provides unified analytics with built-in data integration capabilities. Informatica offers enterprise-grade transformation and governance features. Talend delivers open-source flexibility with strong connector libraries. Databricks excels for lakehouse ETL pipelines handling massive data volumes. Selection depends on existing infrastructure, team expertise, and scalability requirements. Many enterprises use multiple tools across different migration projects. Kanerika implements and optimizes ETL solutions across all major platforms—talk to our data engineers about your integration needs.

Is ETL the same as data migration?

ETL and data migration are related but distinct concepts. Data migration is the broader process of moving data from one system to another, encompassing planning, execution, validation, and cutover activities. ETL is a specific method used within data migration that extracts, transforms, and loads data. While ETL handles the technical data movement and transformation, data migration includes additional concerns like downtime planning, rollback strategies, stakeholder coordination, and business continuity. Many migrations use ETL processes alongside other techniques like direct database replication or API-based transfers. Kanerika architects comprehensive migration programs that leverage ETL alongside complementary approaches—reach out to plan your migration strategy.

What is a database migration tool?

A database migration tool is software that automates moving data, schemas, and objects between database platforms. These tools handle schema conversion, data type mapping, stored procedure translation, and referential integrity validation. Examples include Azure Database Migration Service, AWS Database Migration Service, and specialized accelerators for transitions like SQL Server to Microsoft Fabric. Database migration tools reduce manual coding effort, minimize errors, and accelerate project timelines. They typically include assessment features to identify compatibility issues before migration begins and validation capabilities to ensure data accuracy post-migration. Kanerika’s database migration accelerators automate complex platform transitions—start with a free migration assessment.
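Post-migration validation often comes down to comparing the source and target table by table. Here is a minimal sketch of that idea using row counts and a checksum. It does not reproduce how Azure DMS or AWS DMS validate data. The orders table, the helper names, and the use of SQLite on both sides are assumptions made for illustration. Across two different database engines, you would normalize values before hashing, since the same value can be represented differently.

```python
import hashlib
import sqlite3


def table_fingerprint(conn, table, key):
    """Return (row_count, sha256 hex digest) for a table, ordered by its key.

    `table` and `key` are interpolated into the SQL, so pass trusted
    identifiers only.
    """
    rows = conn.execute(f"SELECT * FROM {table} ORDER BY {key}").fetchall()
    digest = hashlib.sha256()
    for row in rows:
        digest.update(repr(row).encode("utf-8"))
    return len(rows), digest.hexdigest()


def validate_migration(source, target, table, key):
    """Compare row counts and checksums between source and target."""
    src_count, src_hash = table_fingerprint(source, table, key)
    tgt_count, tgt_hash = table_fingerprint(target, table, key)
    return {
        "row_count_match": src_count == tgt_count,
        "checksum_match": src_hash == tgt_hash,
    }


def make_db(rows):
    """Create an in-memory database with a hypothetical orders table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL)")
    conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)
    conn.commit()
    return conn
```

A matching row count with a mismatched checksum is the case worth watching for. It means every row arrived, but at least one value changed in transit.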

What is data migration software?

Data migration software automates the process of transferring data between storage systems, databases, applications, or cloud platforms. This software category includes ETL tools, database migration utilities, cloud migration services, and specialized migration accelerators. Key capabilities include data extraction, transformation, validation, error handling, and progress monitoring. Enterprise-grade data migration software also provides audit trails, rollback capabilities, and parallel processing for large-scale transfers. Solutions range from platform-specific tools like Microsoft Fabric’s data integration features to cross-platform solutions like Informatica and Talend. Kanerika implements and customizes data migration software to match your enterprise requirements—contact us for a solution demo.
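Error handling and rollback are the capabilities that most affect how safely a load can fail. The sketch below shows one simple way to get that behavior: load rows in batches inside a single transaction and undo everything if any batch is rejected. It is an assumption-laden illustration, not a description of any vendor's implementation. The accounts table, the function names, and the batch size are hypothetical, and SQLite stands in for the target platform.

```python
import sqlite3


def load_in_batches(target, rows, batch_size=2):
    """Insert rows batch by batch in one transaction; roll back all on error."""
    loaded = 0
    try:
        for start in range(0, len(rows), batch_size):
            batch = rows[start:start + batch_size]
            target.executemany(
                "INSERT INTO accounts (id, email) VALUES (?, ?)", batch
            )
            loaded += len(batch)  # simple progress counter
        target.commit()
        return {"status": "committed", "rows_loaded": loaded}
    except sqlite3.IntegrityError as exc:
        # A constraint violation in any batch undoes the earlier batches too.
        target.rollback()
        return {"status": "rolled_back", "rows_loaded": 0, "error": str(exc)}


def make_target():
    """Create an in-memory target with a hypothetical accounts table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE accounts (id INTEGER PRIMARY KEY, email TEXT NOT NULL)"
    )
    return conn


def count_rows(conn):
    """Return the number of rows currently in the accounts table."""
    return conn.execute("SELECT COUNT(*) FROM accounts").fetchone()[0]
```

The all-or-nothing design keeps the target free of half-loaded tables. For very large transfers, committing per batch and recording a restart checkpoint is the usual alternative.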

Is Informatica a data migration tool?

Informatica is a comprehensive data management platform that includes powerful data migration capabilities. Informatica PowerCenter and Informatica Cloud handle ETL processes, data transformation, and large-scale migrations across on-premises and cloud environments. The platform excels at complex enterprise migrations requiring sophisticated business logic preservation and data quality rules. However, many organizations now migrate from Informatica to modern platforms like Microsoft Fabric, Databricks, or Talend for improved cost efficiency and cloud-native capabilities. Informatica remains valuable for organizations with significant existing investments in its ecosystem. Kanerika specializes in Informatica-to-modern-platform migrations—explore our accelerators to modernize your data infrastructure.

What are the 6 Rs of data migration?

The 6 Rs of data migration are Rehost, Replatform, Repurchase, Refactor, Retire, and Retain. The framework, popularized by AWS for cloud migration planning, assigns one strategy to each application and the data it owns. Rehost moves applications without modification, often called lift and shift. Replatform makes minor optimizations during migration. Repurchase replaces existing systems with SaaS alternatives. Refactor re-architects applications to take advantage of cloud-native services. Retire decommissions obsolete systems. Retain keeps certain workloads where they are for now. Some frameworks expand this to 7 Rs by adding Relocate. Organizations typically apply different strategies across their portfolio based on application criticality, technical debt, and business value. Kanerika guides enterprises through strategic migration planning using these frameworks. Schedule a workshop to assess your application portfolio.