According to Gartner, 80% of data and analytics projects fail to deliver business value—a statistic that highlights a persistent flaw in centralized data architectures. Enter data mesh, a decentralized model that shifts data ownership to domain experts across functions like marketing, sales, and operations. By treating data as a product and enabling teams to govern, document, and share their own datasets, organizations can overcome bottlenecks, improve data quality, and scale insights faster.

First introduced by Zhamak Dehghani of ThoughtWorks, data mesh is rapidly gaining traction as a practical solution for large enterprises navigating complex data ecosystems.

One of the key benefits of Data Mesh is that it addresses data security challenges through decentralized ownership. Organizations typically have multiple data sources from different lines of business that must be integrated for analytics. With Data Mesh, data owners are responsible for the quality, access, and distribution of their data products. This allows for greater accountability and transparency while reducing the bottlenecks and silos that can occur in traditional centralized data architectures.

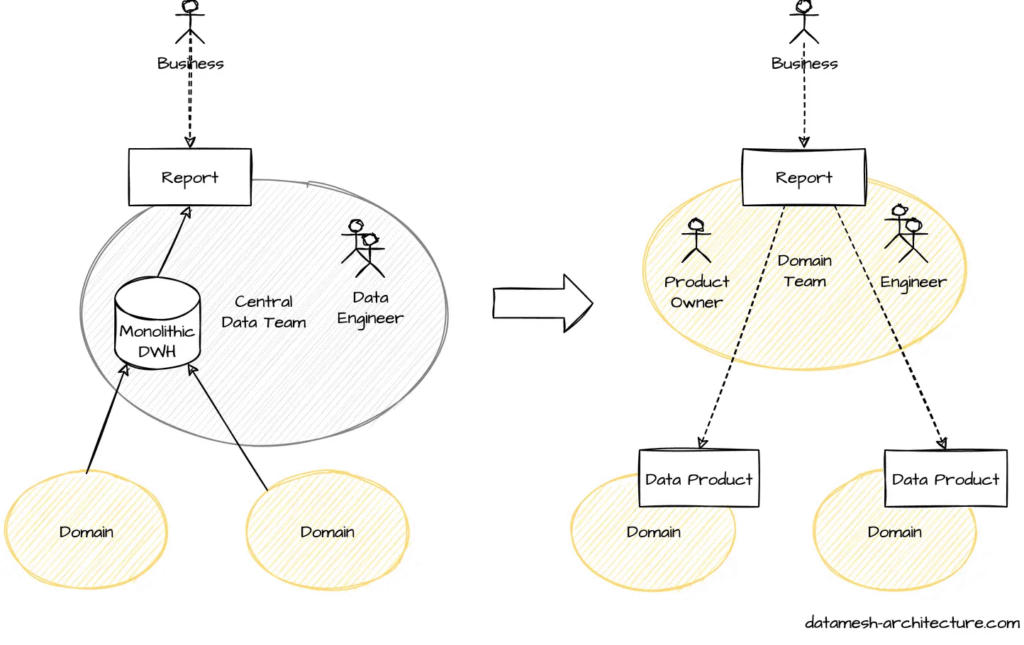

How Data Mesh Distributes Control and Responsibility

A data mesh fundamentally changes how organizations perceive and manage data—it’s no longer a passive by-product but a valuable product in itself. In this model, data ownership shifts from a centralized infrastructure team to the domain experts who generate and understand the data best. These producers become data product owners, responsible not only for creating usable datasets but also for understanding the needs of data consumers and designing APIs that support seamless, self-service access.

While domain teams handle the transformation, documentation, and stewardship of their data—including defining semantics, managing metadata, and setting access policies—a central governance function still plays a key role. It ensures consistency, compliance, and interoperability across the organization. Similarly, a central data engineering team continues to provide shared infrastructure guidance, focusing more on platform support than direct ownership of pipelines.
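One way to picture this split between domain autonomy and central governance is a small policy check: each domain declares its own access policy, and a shared set of organization-wide rules validates it. The sketch below is illustrative only. `GLOBAL_RULES`, `DomainPolicy`, `check_policy`, and the rule names are hypothetical and do not come from any specific platform.

```python
from dataclasses import dataclass, field

# Hypothetical organization-wide rules owned by the central governance function.
GLOBAL_RULES = {
    "max_retention_days": 365,     # no domain may keep data longer than this
    "pii_requires_masking": True,  # PII columns must be masked for consumers
}


@dataclass
class DomainPolicy:
    """Access policy declared by a domain team for one data product."""
    domain: str
    product: str
    retention_days: int
    pii_columns: set = field(default_factory=set)
    masked_columns: set = field(default_factory=set)
    allowed_roles: set = field(default_factory=set)


def check_policy(policy, rules=GLOBAL_RULES):
    """Return a list of violations; an empty list means the policy complies."""
    violations = []
    if policy.retention_days > rules["max_retention_days"]:
        violations.append("retention exceeds organization-wide maximum")
    if rules["pii_requires_masking"]:
        unmasked = policy.pii_columns - policy.masked_columns
        if unmasked:
            violations.append(f"unmasked PII columns: {sorted(unmasked)}")
    if not policy.allowed_roles:
        violations.append("no consumer roles declared")
    return violations


# A domain-owned policy that breaks two of the shared rules.
marketing = DomainPolicy(
    domain="marketing",
    product="campaign_events",
    retention_days=400,
    pii_columns={"email"},
    allowed_roles={"analyst"},
)
print(check_policy(marketing))
```

The domain team still decides who may read its product and which columns it exposes. The central function only supplies the rules every domain is checked against.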

Much like microservices in software architecture, data mesh promotes modularity by aligning data around business functions. This domain-driven structure enables scalable, real-time integration across decentralized sources, empowering users—from analysts to data scientists—to access and leverage data products independently and efficiently.

Understanding Data Mesh

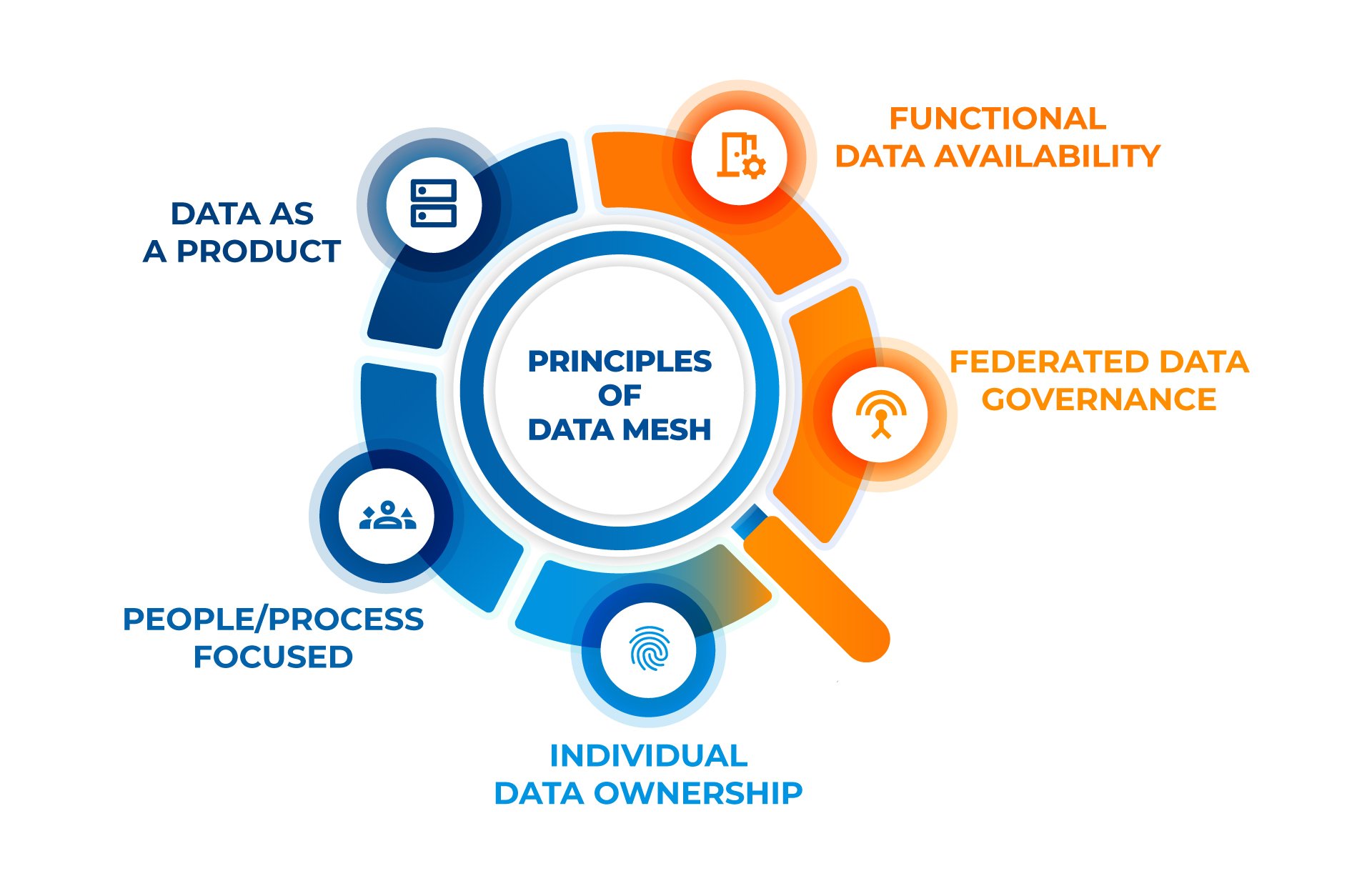

Data Mesh is a holistic approach involving people and processes. It is adaptable, allowing organizations to tailor it to their unique needs. The following principles provide a framework for more efficient and effective data management:

- Functional Data Availability: Ensures that data is available and functionally usable across different domains. This principle emphasizes the need for data to be easily accessible and in a format suitable for various functional requirements, enhancing its utility for diverse business applications.

- Federated Governance: Governance is spread across various domains, allowing each team to manage its data while aligning with the broader organizational standards. This promotes faster decision-making and collaborative efforts.

- Individual Data Ownership: Data is organized around business domains, not just technical functions. Each domain is managed by a data product owner, ensuring the data is accurate, timely, and relevant for business decisions.

- People/Process Focused: Self-serve data platforms empower users across the organization to access and use data independently, fostering a more agile and responsive data environment.

- Treating Data as a Product: Data is viewed as a product with its own lifecycle, from creation to maintenance, ensuring it remains discoverable, usable, and high-quality.

Data Mesh vs. Data Lake vs. Data Fabric

When managing large amounts of data, several modern frameworks are available, including Data Mesh, Data Lake, and Data Fabric. While all three concepts are used to improve data management, their approach and functionality differ.

Data Lake

Data Lake is a centralized repository that stores raw data from multiple sources in its native format. The primary goal of Data Lake is to provide a cost-effective way to store and process large amounts of data. Additionally, it is designed to be flexible and scalable, making it easier to store and analyze data from multiple sources.

Data Fabric

Data Fabric is a design concept and architecture that addresses the complexity of data management. It minimizes disruption to data consumers while ensuring that data on any platform from any location can be effectively combined, accessed, shared, and governed. Moreover, it is designed to be agile and flexible, allowing organizations to adapt quickly to changing business needs.

Data Mesh

Data Mesh is a new data management approach emphasizing decentralization and domain-driven design. In a Data Mesh architecture, data is treated as a product, and each domain owns and manages its data products. The goal of Data Mesh is to improve data quality, reduce data silos, and increase data ownership and autonomy.

Benefits of Data Mesh

Implementing a data mesh architecture can bring several benefits to your organization. Here are some of the most significant benefits:

1. Improved Data Access

Data mesh architecture promotes decentralized data ownership, which means data is owned and managed by the domain or business function that understands it best. This approach allows faster and more efficient access to data, as it eliminates the need for data consumers to access data through a centralized team.

2. Better Scalability

Data mesh architecture enables better scalability by allowing individual domain teams to manage their data and data pipelines. This approach ensures that the data infrastructure can scale as the organization grows without requiring a centralized team to manage everything.

3. Enhanced Data Security

Data mesh architecture promotes data security by allowing domain teams to manage their data and pipelines. This approach ensures that sensitive data is only accessible to those who need it and that data is protected from unauthorized access.

4. Increased Agility

Data mesh architecture promotes agility by enabling domain teams to move faster and experiment with new data products without requiring approval from a centralized team. This approach allows organizations to respond quickly to changing business needs and market conditions.

5. Improved Data Quality

Data mesh architecture promotes data quality by allowing domain teams to take ownership of their own data and data pipelines. This approach ensures that data is accurate, up-to-date, and relevant to the business needs of each domain team.

Taken together, improved data access, better scalability, enhanced data security, increased agility, and improved data quality make a strong case for adopting a data mesh architecture.

Top Use Cases for Data Mesh

Data Mesh is a decentralized data architecture gaining popularity due to its ability to enable self-service access and provide more ownership to the producers of a given dataset. Here are some top use cases for Data Mesh:

1. Analytics

Data Mesh is particularly useful for analytics because it allows organizations to integrate multiple data sources from different lines of business. Dedicated data product owners can manage and maintain data quality by treating data as a product, making it easier to analyze. This approach also enables business units to take ownership of their data, which can lead to better decision-making.

2. Data Governance

Data Mesh can help organizations improve their data governance by providing a framework for federated governance. Simply put, each domain has its own governance policies and practices, enforced by the data owners within organization-wide standards. By treating data as a product, organizations can ensure data quality alongside ethical and responsible usage.

3. Data Science

Data Mesh can also be used in data science to improve the accuracy and reliability of models. When data is treated as a product, data scientists can be more confident that the data they train on is high quality and properly curated. Better inputs in turn support more accurate and reliable models, which is crucial for better predictions and decisions.

4. Collaboration

Data Mesh can also facilitate collaboration between different business units. By treating data as a product, data product owners can work together to ensure that data is properly integrated and used in a consistent, standardized way. This can improve communication between business units, ultimately leading to better decision-making and improved business outcomes.

How to Set Up a Data Mesh for Your Organization

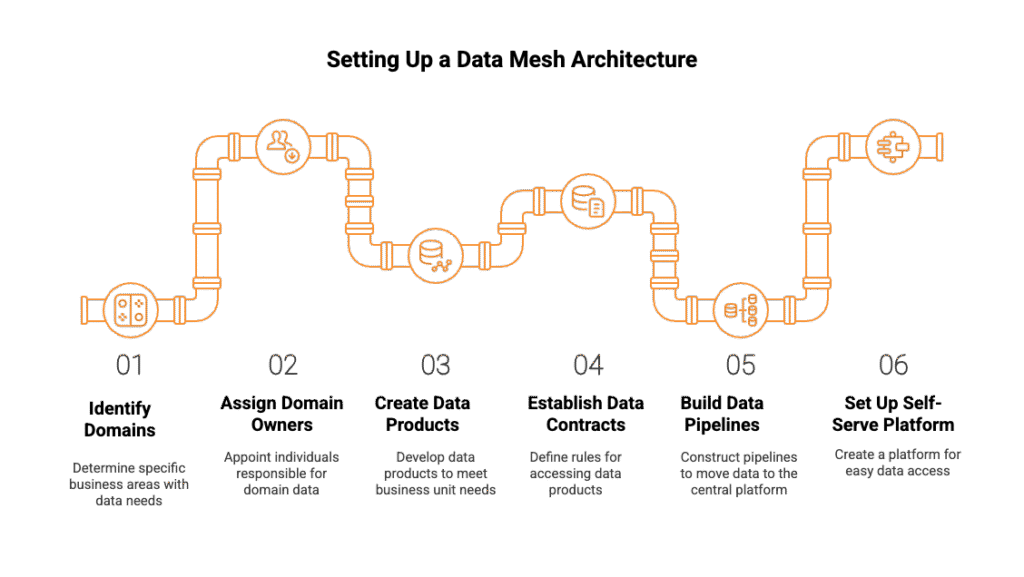

Implementing a data mesh architecture can be complex, but it can also provide significant benefits to your organization. Here are some steps to help you set up a data mesh for your organization:

1. Identify your domains:

The first step in setting up a data mesh is identifying your organization’s domains. A domain is a specific business area with data needs and requirements. For example, you may have marketing, sales, finance, and customer service domains.

2. Assign domain owners:

Once you have identified your domains, you must assign owners to each domain. Domain owners are responsible for the data within their domain and ensuring that it meets the needs of their business unit.

3. Create data products:

Each domain owner should create data products that meet the needs of their business unit. Data products can include raw data, cleaned data, and aggregated data. These data products should be stored in the domain’s data lake.
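To make the three kinds of data product concrete, here is a minimal sketch that derives a cleaned and an aggregated product from raw records using only the Python standard library. The `raw_orders` data, the field names, and the cleaning rules are invented for illustration. A real domain would apply its own business rules.

```python
from collections import defaultdict

# Hypothetical raw order events as a sales domain might receive them.
raw_orders = [
    {"order_id": "A1", "region": "EU", "amount": "120.50"},
    {"order_id": "A2", "region": "eu ", "amount": "80"},
    {"order_id": "A3", "region": "US", "amount": None},      # missing amount
    {"order_id": "A1", "region": "EU", "amount": "120.50"},  # duplicate
]


def clean(rows):
    """Drop duplicates and rows without an amount; normalize types and casing."""
    seen, cleaned = set(), []
    for row in rows:
        if row["amount"] is None or row["order_id"] in seen:
            continue
        seen.add(row["order_id"])
        cleaned.append({
            "order_id": row["order_id"],
            "region": row["region"].strip().upper(),
            "amount": float(row["amount"]),
        })
    return cleaned


def aggregate_by_region(rows):
    """Sum order amounts per region."""
    totals = defaultdict(float)
    for row in rows:
        totals[row["region"]] += row["amount"]
    return dict(totals)


cleaned_orders = clean(raw_orders)                       # cleaned data product
revenue_by_region = aggregate_by_region(cleaned_orders)  # aggregated data product
print(revenue_by_region)  # {'EU': 200.5}
```

Each stage can be published as its own data product, so consumers can pick the level of refinement they need.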

4. Establish data contracts:

Data contracts define the available data products within each domain and the rules for accessing them. Moreover, domain owners should set clear agreements about data use. These agreements need periodic review to ensure they still fit the needs of the business.
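A data contract can be as simple as a small, versionable object that states the schema, the owner, and who may consume the product. The following is a sketch under assumptions: `DataContract`, `validate_record`, `can_access`, and the `review_after_days` field are hypothetical names chosen for this example and not a standard contract format.

```python
from dataclasses import dataclass


@dataclass
class DataContract:
    """Hypothetical contract published by a domain for one data product."""
    product: str
    owner: str
    schema: dict                  # column name -> expected Python type
    allowed_consumers: frozenset  # teams covered by the agreement
    review_after_days: int = 180  # reminder to revisit the agreement


def validate_record(contract, record):
    """Return schema violations for one record (empty list if it conforms)."""
    errors = []
    for column, expected_type in contract.schema.items():
        if column not in record:
            errors.append(f"missing column: {column}")
        elif not isinstance(record[column], expected_type):
            errors.append(f"{column}: expected {expected_type.__name__}")
    return errors


def can_access(contract, consumer):
    """Check whether a consumer is covered by the contract's access rules."""
    return consumer in contract.allowed_consumers


orders_contract = DataContract(
    product="sales.orders",
    owner="sales-domain-team",
    schema={"order_id": str, "amount": float},
    allowed_consumers=frozenset({"finance", "marketing"}),
)
print(validate_record(orders_contract, {"order_id": "A1", "amount": "12"}))
print(can_access(orders_contract, "hr"))
```

Keeping the contract in code or configuration makes the periodic review concrete: a change to the schema or the consumer list becomes a visible, reviewable change.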

5. Build data pipelines:

Once the data products and contracts have been established, you must build pipelines to move the data from the domain’s data lake to the central data platform. Data engineers should work with the domain owners to build these pipelines.

6. Set up a self-serve data platform:

The final step is to set up a self-serve data platform. This platform should allow business units to access the needed data products without going through IT or data engineering. The self-serve data platform should also provide data discovery, exploration, and visualization tools.
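The discovery part of a self-serve platform can be sketched as a catalog where domains register product metadata and consumers search it. This toy version keeps everything in memory. The `DataCatalog` class and the product names are hypothetical, and a real platform would sit on a proper metadata store.

```python
class DataCatalog:
    """Toy in-memory catalog of data product metadata."""

    def __init__(self):
        self._products = {}

    def register(self, name, domain, description, tags=()):
        """Called by a domain team to publish a data product's metadata."""
        self._products[name] = {
            "domain": domain,
            "description": description,
            "tags": {t.lower() for t in tags},
        }

    def search(self, keyword):
        """Find products whose name, description, or tags match the keyword."""
        keyword = keyword.lower()
        return sorted(
            name
            for name, meta in self._products.items()
            if keyword in name.lower()
            or keyword in meta["description"].lower()
            or keyword in meta["tags"]
        )

    def by_domain(self, domain):
        """List all products published by one domain."""
        return sorted(n for n, m in self._products.items() if m["domain"] == domain)


catalog = DataCatalog()
catalog.register("sales.orders", "sales", "Cleaned order lines", tags=["revenue"])
catalog.register("marketing.campaigns", "marketing", "Campaign spend and reach")
catalog.register("finance.revenue_daily", "finance", "Daily rollup", tags=["revenue"])
print(catalog.search("revenue"))  # ['finance.revenue_daily', 'sales.orders']
```

Here a consumer finds both revenue-related products without asking IT or data engineering which tables exist.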

Pitfalls to Avoid for Data Mesh

1. Failure to Follow DATSIS Principles

The DATSIS acronym stands for Discoverable, Addressable, Trustworthy, Self-describing, Interoperable, and Secure. These principles are essential for successful data mesh implementation. Failure to implement any part of DATSIS could doom your data mesh.

- Discoverable: Consumers must be able to research and identify data products from different domains.

- Addressable: Data products must be accessible through a standard interface.

- Trustworthy: Data must be reliable and accurate.

- Self-describing: Data products must be self-describing, including metadata and documentation.

- Interoperable: Data products must be able to work with other products and systems.

- Secure: Data must be protected from unauthorized access.
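The six properties above can be turned into a simple pre-publication checklist. The sketch below is one possible reading, not an official DATSIS test: the `DataProduct` fields, the `AGREED_FORMATS` set, and the way each property is mapped to a field are assumptions made for illustration.

```python
from dataclasses import dataclass, field

AGREED_FORMATS = {"parquet", "delta", "csv"}  # assumption for this sketch


@dataclass
class DataProduct:
    """Hypothetical descriptor for one data product."""
    name: str
    catalog_entry: bool = False           # Discoverable: listed in a catalog
    address: str = ""                     # Addressable: stable URI or endpoint
    quality_checks_passing: bool = False  # Trustworthy: checks defined and green
    schema_doc: str = ""                  # Self-describing: schema and docs attached
    output_format: str = ""               # Interoperable: uses an agreed format
    access_roles: set = field(default_factory=set)  # Secure: explicit roles


def datsis_gaps(product):
    """Return the DATSIS properties the product does not yet satisfy."""
    checks = {
        "Discoverable": product.catalog_entry,
        "Addressable": bool(product.address),
        "Trustworthy": product.quality_checks_passing,
        "Self-describing": bool(product.schema_doc),
        "Interoperable": product.output_format in AGREED_FORMATS,
        "Secure": bool(product.access_roles),
    }
    return [name for name, ok in checks.items() if not ok]


draft = DataProduct(
    name="sales.orders",
    catalog_entry=True,
    address="mesh://sales/orders",  # made-up address scheme
    output_format="parquet",
)
print(datsis_gaps(draft))  # ['Trustworthy', 'Self-describing', 'Secure']
```

A check like this could run before a product is published, so gaps surface early instead of being discovered by consumers.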

2. Ignoring Data Observability

Data observability is the ability to monitor and understand the behavior of data in a system. It is essential for ensuring data quality, reliability, and accuracy. Ignoring data observability can let data quality issues go undetected, which undermines the effectiveness of the data mesh.
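As a rough illustration, observability often starts with a few basic signals per data product: freshness, volume, and null rate. The function below is a minimal sketch. The name `observe`, the thresholds, and the `amount` column are illustrative assumptions, not recommended values.

```python
from datetime import datetime, timedelta, timezone


def observe(rows, loaded_at, now, *, max_age=timedelta(hours=24),
            min_rows=1, max_null_rate=0.05, column="amount"):
    """Compute simple health signals for one data product snapshot."""
    row_count = len(rows)
    nulls = sum(1 for r in rows if r.get(column) is None)
    # Treat an empty snapshot as fully null so it fails the null check too.
    null_rate = nulls / row_count if row_count else 1.0
    return {
        "fresh": now - loaded_at <= max_age,      # loaded recently enough?
        "volume_ok": row_count >= min_rows,       # enough rows arrived?
        "null_rate": null_rate,                   # share of missing values
        "nulls_ok": null_rate <= max_null_rate,   # within tolerated limit?
    }


now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
snapshot = [{"amount": 10.0}, {"amount": None}, {"amount": 7.5}, {"amount": 3.0}]
report = observe(snapshot, loaded_at=now - timedelta(hours=30), now=now)
print(report)
```

In this example the snapshot is 30 hours old and a quarter of its values are missing, so both the freshness and null checks fail while the volume check passes.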

3. Lack of Governance

A data mesh requires strong governance to ensure security and privacy. Without governance, there is a risk of data misuse, which can lead to legal and reputational damage.

4. Overlooking the Importance of Culture

The success of a mesh depends on a culture of collaboration and transparency. Data owners must be willing to share their data, and business users must be willing to work with data in new ways. Overlooking the importance of culture can lead to resistance to change and a lack of adoption.

5. Underestimating Technical Complexity

Implementing a mesh can be technically complex, requiring significant system and process changes. Underestimating technical complexity can lead to delays, cost overruns, and implementation failure.

By avoiding these pitfalls, you can ensure that your data mesh implementation is successful and that you can reap the benefits of improved data access and usage.

Applying Data Mesh Principles Through Smart Engineering

Implementing a data mesh is more than a conceptual shift. It requires strong data engineering foundations to decentralize ownership, ensure interoperability, and maintain governance across domains. Kanerika has delivered measurable outcomes by applying these principles in real-world scenarios.

Logistics & Supply Chain: Enabling Federated Data Ownership

A leading logistics firm was grappling with fragmented systems that delayed access to critical insights and exposed security gaps. Kanerika addressed this by engineering a unified, domain-aligned data infrastructure, enabling business teams to own and govern their data while maintaining centralized oversight.

Results achieved:

- 42% increase in decision-making precision

- 54% improvement in data accuracy

- 61% growth in data-driven decisions

By decentralizing operational data through scalable pipelines and domain-specific reporting, this solution mirrors the self-serve, federated approach at the heart of data mesh.

Media Enterprise: Scaling Analytics Across Domains

A global media company needed to unify siloed data from Salesforce and external systems. Kanerika restructured their architecture to support domain-centric integration, allowing different business units to create and consume their own data products while aligning with organizational standards.

Results delivered:

- 75% increase in data integration capacity

- 50% reduction in report customization time

- 65% improvement in scalability for analytics

This outcome demonstrates how decentralized engineering and thoughtful orchestration enable a mesh-style architecture—where teams can move fast without sacrificing control or context.

Kanerika: Your Trusted Data Strategy Partner

At Kanerika, we specialize in transforming complex data landscapes into scalable, business-ready ecosystems. As a certified Microsoft Data & AI Solutions partner and a strategic collaborator with Databricks, we deliver end-to-end data solutions that empower organizations to harness the full potential of modern architectures like data mesh.

Our expertise spans data integration, advanced analytics, AI/ML, and cloud-native platform development. Leveraging tools such as Microsoft Fabric, Azure Synapse, and Databricks Lakehouse, we help businesses break down silos, unify data across domains, and enable real-time decision-making.

Whether you’re just beginning your data modernization journey or looking to scale a decentralized architecture, Kanerika combines strategic consulting with deep technical delivery. Our solutions are designed to be secure, future-proof, and aligned with your unique business objectives—ensuring your data not only flows efficiently but delivers measurable impact.

Partnering with Kanerika for your data mesh strategy can provide numerous benefits. Some of these benefits include:

- Increased efficiency and agility in data management

- Reduced bottlenecks and silos in data management

- Improved data security and governance

- Better alignment between business objectives and data strategy

With Kanerika as your data strategy partner, you’re equipped to build a truly data-driven organization—one domain, one product, and one insight at a time.

FAQs

What is data mesh in simple terms?

Data mesh is a decentralized data architecture that treats data as a product, owned and managed by individual business domains rather than a central IT team. Instead of funneling all enterprise data into one monolithic warehouse, each domain such as sales, marketing, or operations takes responsibility for its own data products. This distributed approach enables faster access, better scalability, and improved data quality because domain experts understand their data best. Kanerika helps enterprises implement data mesh architectures that align with their organizational structure—schedule a consultation to explore what domain-driven data ownership looks like for your business.

What is a data mesh vs data lake?

A data lake is a centralized repository storing raw data in various formats, while data mesh is an architectural paradigm that decentralizes data ownership across business domains. Data lakes consolidate everything into one location managed by a central team, often creating bottlenecks. Data mesh distributes accountability so each domain publishes its own data products with clear ownership and quality standards. Organizations frequently use data lakes within a data mesh framework, where each domain maintains its own lake or lakehouse. Kanerika designs hybrid architectures combining data mesh principles with modern lakehouse platforms—connect with our team to assess your current data landscape.

What are the 4 principles of data mesh?

Data mesh is built on four core principles: domain-oriented ownership, data as a product, self-serve data platform, and federated computational governance. Domain ownership assigns data responsibility to business units closest to the source. Treating data as a product ensures discoverability, quality, and usability. A self-serve platform empowers domains to manage data without heavy IT dependency. Federated governance balances autonomy with enterprise-wide standards for security and compliance. Together, these principles enable scalable, trustworthy data ecosystems. Kanerika’s data architects specialize in operationalizing these four pillars—reach out to build a governance framework tailored to your enterprise.

Is data mesh obsolete?

Data mesh is not obsolete; it remains highly relevant for enterprises struggling with centralized data bottlenecks and scaling challenges. While initial hype has stabilized, organizations continue adopting data mesh principles selectively to improve agility and domain accountability. Many enterprises now implement hybrid approaches, combining data mesh concepts with data fabric automation or lakehouse architectures. The key is practical implementation rather than dogmatic adherence. Successful adoption requires organizational readiness, clear domain boundaries, and robust governance foundations. Kanerika evaluates your enterprise maturity to determine if data mesh principles align with your goals—request a free assessment today.

Is data mesh an agile approach?

Data mesh embodies agile principles by decentralizing ownership, enabling autonomous domain teams, and promoting iterative delivery of data products. Like agile software development, it breaks down monolithic structures into smaller, manageable units where cross-functional teams own end-to-end delivery. Domains can evolve their data products independently without waiting on centralized bottlenecks, accelerating time-to-insight. This autonomy mirrors agile’s emphasis on self-organizing teams and continuous improvement. At the same time, federated governance keeps domains aligned with enterprise standards without sacrificing speed. Kanerika helps organizations apply agile data practices through domain-driven data mesh implementations. Let us show you how to accelerate your data delivery cycles.

What is the difference between data fabric and data mesh?

Data fabric is a technology-driven approach using automation, AI, and metadata management to integrate data across sources, while data mesh is an organizational approach decentralizing ownership to business domains. Data fabric emphasizes unified access through intelligent orchestration, typically managed centrally. Data mesh prioritizes people and processes, assigning accountability to domain experts who understand their data best. Many enterprises combine both: using data fabric’s automated integration layer to support data mesh’s distributed ownership model. The choice depends on organizational culture and technical maturity. Kanerika architects solutions leveraging both paradigms—contact us to determine the right fit for your enterprise.

What problems does data mesh solve?

Data mesh addresses centralized data team bottlenecks, poor data quality, slow time-to-insight, and scalability limitations in traditional architectures. When one team manages all enterprise data, request backlogs grow while business agility suffers. Data mesh distributes ownership so domain experts maintain data products they understand deeply, improving quality and relevance. It reduces dependencies on overstretched central teams and enables faster innovation. Organizations also gain clearer accountability since each domain owns its data’s accuracy and availability. Kanerika helps enterprises identify which pain points data mesh can realistically solve—book a discovery session to map your specific challenges.

What is the purpose of data mesh?

The purpose of data mesh is to scale data management by distributing ownership to business domains while maintaining enterprise-wide interoperability and governance. Traditional centralized architectures struggle when data volumes and consumer demands grow exponentially. Data mesh enables organizations to treat data as a strategic product, with each domain responsible for delivering high-quality, discoverable datasets to internal consumers. This approach reduces bottlenecks, improves accountability, and accelerates analytics delivery across the enterprise. It shifts data from a technical burden to a business asset. Kanerika partners with enterprises to define clear data product strategies—connect with our team to start your transformation.

Who needs a data mesh?

Data mesh benefits large, complex organizations with multiple business domains generating diverse datasets and experiencing bottlenecks with centralized data teams. Enterprises in banking, insurance, healthcare, retail, and manufacturing where distinct departments like sales, operations, and finance need autonomous data access are prime candidates. If your organization struggles with slow analytics delivery, unclear data ownership, or scaling challenges as data volumes grow, data mesh may be appropriate. Smaller companies with simpler structures may not need this level of decentralization. Kanerika assesses organizational readiness to determine if data mesh aligns with your enterprise needs—request a maturity evaluation.

What companies use data mesh?

Major enterprises including Zalando, JPMorgan Chase, Intuit, Netflix, and PayPal have adopted data mesh principles to scale their data operations. These organizations faced challenges with centralized data platforms struggling to keep pace with diverse business demands. By decentralizing data ownership across domains, they achieved faster analytics delivery and improved data quality. Financial services, e-commerce, and technology sectors lead adoption due to their complex, multi-domain environments and high data volumes. Implementation varies from full adoption to selective application of mesh principles. Kanerika has guided enterprises across industries in implementing practical data mesh strategies—explore how we can support your journey.

How does data mesh differ from traditional data architectures?

Traditional data architectures centralize data management under one IT team responsible for warehousing, integration, and governance, while data mesh decentralizes these responsibilities to individual business domains. Conventional approaches create monolithic data platforms where requests queue through a single team, causing delays. Data mesh distributes ownership so each domain publishes data products independently while adhering to federated governance standards. This shift moves from technology-centric to domain-centric organization, treating data as a product rather than a byproduct. Traditional architectures suit smaller organizations, but enterprises benefit from mesh scalability. Kanerika modernizes legacy data architectures toward distributed models—contact us for a migration roadmap.

How does data mesh handle data governance and compliance?

Data mesh implements federated computational governance, balancing domain autonomy with enterprise-wide standards for security, compliance, and interoperability. Instead of a centralized governance team dictating all rules, domains adhere to shared policies enforced through automated guardrails embedded in the self-serve data platform. This includes standardized metadata schemas, access controls, data quality thresholds, and lineage tracking. Regulatory requirements like GDPR or HIPAA are codified as global policies applied consistently across domains. The approach ensures compliance without creating bottlenecks or stifling domain innovation. Kanerika builds governance frameworks that scale with your data mesh—talk to our compliance specialists today.

How does data mesh improve data quality?

Data mesh improves data quality by assigning ownership to domain experts who understand their data’s context, usage, and nuances better than centralized teams. When domains treat data as a product, they become accountable for accuracy, completeness, timeliness, and usability. Quality standards are embedded into data product specifications with automated validation at ingestion and publishing. Consumers can provide feedback directly to domain owners, creating continuous improvement loops absent in centralized models. This accountability-driven approach catches issues faster and prevents low-quality data from propagating across the enterprise. Kanerika implements data quality frameworks within mesh architectures—reach out to enhance your data reliability.

What is the difference between DDD and data mesh?

Domain-Driven Design is a software engineering approach organizing code around business domains, while data mesh applies similar domain-centric principles specifically to data architecture. DDD focuses on bounded contexts for application development, ensuring software models reflect business realities. Data mesh borrows this philosophy, structuring data ownership around business domains rather than technical functions. Where DDD produces domain-aligned microservices, data mesh produces domain-owned data products. Data mesh explicitly acknowledges its intellectual debt to DDD, extending domain thinking from operational systems to analytical data. Kanerika applies DDD principles when architecting data mesh implementations—consult with us to align your data and application domains.

What is the difference between microservices and data mesh?

Microservices architecture decentralizes application functionality into independently deployable services, while data mesh decentralizes data ownership into domain-managed data products. Both share principles of autonomy, bounded contexts, and distributed responsibility, but microservices address operational systems and data mesh addresses analytical data. Microservices produce and consume transactional data, whereas data mesh organizes how analytical datasets are published, discovered, and governed across the enterprise. Organizations often adopt both patterns together, with domain teams owning both microservices and corresponding data products for complete end-to-end accountability. Kanerika helps enterprises align microservices and data mesh strategies—schedule a consultation to unify your architecture approach.

Is Microsoft Fabric a data mesh?

Microsoft Fabric is not a data mesh but a unified analytics platform that can support data mesh implementations. Fabric provides integrated services for data engineering, warehousing, science, and business intelligence within a single SaaS environment. Organizations can leverage Fabric’s workspaces and domains feature to implement data mesh principles, assigning ownership to business units while using Fabric’s underlying infrastructure. OneLake serves as the shared storage layer while domains manage their data products independently. Fabric enables mesh-like decentralization without requiring separate platform instances per domain. Kanerika implements data mesh architectures on Microsoft Fabric—contact us to explore domain-driven design on this platform.

Is Databricks a data mesh?

Databricks is not a data mesh but a lakehouse platform that can serve as infrastructure for data mesh implementations. The platform provides tools like Unity Catalog for centralized governance, workspaces for team isolation, and Delta Sharing for cross-domain data product distribution. Organizations adopt Databricks to build domain-owned data products while maintaining enterprise governance standards through Unity Catalog’s fine-grained access controls and lineage tracking. Databricks enables the self-serve platform principle essential to data mesh, allowing domains to independently build and publish datasets. Kanerika architects data mesh solutions on Databricks—reach out to design your domain-driven lakehouse strategy.

Is Databricks a data lake or lakehouse?

Databricks is a lakehouse platform, combining data lake storage flexibility with data warehouse reliability and performance. The lakehouse architecture uses open formats like Delta Lake to store structured, semi-structured, and unstructured data while enabling ACID transactions, schema enforcement, and SQL analytics traditionally limited to warehouses. Unlike pure data lakes that require separate processing engines, Databricks provides unified compute for ETL, streaming, machine learning, and BI on one platform. This eliminates data duplication between lake and warehouse systems. Organizations implementing data mesh often use Databricks as their domain-level storage and processing layer. Kanerika accelerates Databricks lakehouse implementations—connect with us to modernize your data infrastructure.