When Instacart’s data engineering team migrated from Amazon Redshift to Snowflake, their clusters were running at 85% disk capacity and internal teams were raising flags about data freshness. Getting the types of data migration right, and matching the approach to the environment, was what made the project work.

According to Oracle (as cited by Forbytes), more than 80% of data migration projects fail to meet deadlines or stay within budget. A large part of that comes down to picking the wrong approach for the environment. Storage migrations, database migrations, application migrations, and cloud-to-cloud moves each carry different risks, timelines, and tooling requirements.

In this blog, we cover the main types of data migration, when each applies, and how a data migration framework helps teams choose the right approach before execution begins.

Key Takeaways

- Data migration is not a one-size-fits-all effort; the right approach depends on data structure, system criticality, business impact, and tolerance for downtime.

- Structured, unstructured, and semi-structured data each bring different risks, validation needs, and cleanup requirements that must be planned upfront.

- Migration strategy matters as much as technology, with big-bang, phased, and hybrid approaches offering different trade-offs among speed, risk, and operational stability.

- Storage, database, application, cloud, and business-driven migrations solve very different problems and should not be treated as interchangeable projects.

- Many migration failures happen because teams underestimate dependencies, performance behavior, or ongoing data changes during the move.

- Kanerika’s FLIP platform and migration accelerators reduce manual effort, preserve business logic, and help enterprises execute complex migrations faster with lower risk.


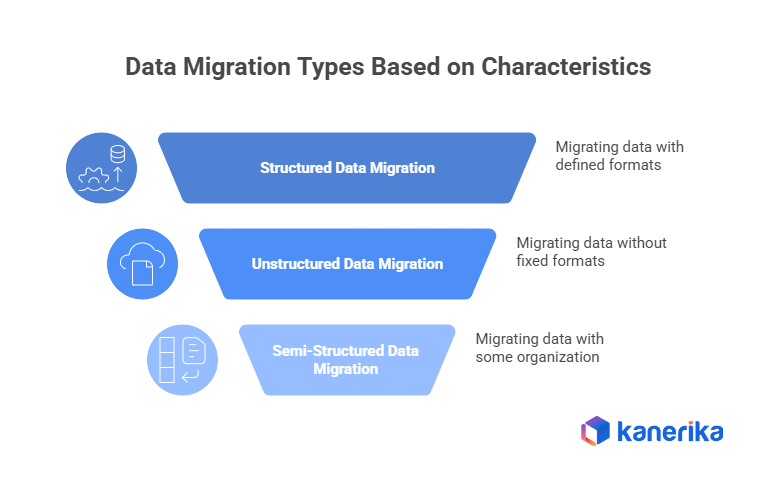

Data Migration Types Based on Data Structure

1. Structured Data Migration

Structured data migration deals with information that is stored in a clearly defined format. This usually refers to data kept in relational databases or spreadsheets, such as customer records, transaction histories, inventory systems, payroll data, or employee master tables. Because the structure is known in advance, this kind of migration is generally easier to monitor and validate. At the same time, it leaves very little room for error. When something breaks, the impact is often immediate and visible.

In most business environments, structured data is tightly connected. Tables rarely stand on their own. They are linked through keys, references, and dependencies, which means a mistake in one place can ripple across reports, applications, and downstream systems.

When teams work on structured data migration, they usually pay attention to things like:

- Ensuring table relationships remain intact so linked records continue to point to the correct entities after migration

- Maintaining transaction consistency, especially when systems are still active and data continues to change

- Managing performance carefully when large volumes are involved, since poorly planned migrations can slow down live workloads

- Verifying results using practical checks such as record counts, financial totals, or reconciliation reports

A common example is financial data migration. Teams often run parallel reports in the old and new systems and compare totals line by line. Even small differences raise red flags and are investigated before the migration is signed off.
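A minimal sketch of that count-and-total comparison in Python, assuming both systems can export rows as dictionaries. The field names `account_id` and `amount` are hypothetical. It groups rows by key and flags every group whose row count or summed amount differs between source and target; in a real project these aggregates would usually be computed in SQL on each side.

```python
from collections import defaultdict
from decimal import Decimal


def summarize(rows, group_key, amount_key):
    """Return {group: (row_count, total_amount)} for a list of dict rows."""
    counts = defaultdict(int)
    totals = defaultdict(Decimal)
    for row in rows:
        key = row[group_key]
        counts[key] += 1
        # Decimal avoids float rounding noise when comparing financial totals.
        totals[key] += Decimal(str(row[amount_key]))
    return {key: (counts[key], totals[key]) for key in counts}


def reconcile(source_rows, target_rows, group_key="account_id", amount_key="amount"):
    """List (group, source_summary, target_summary) for every group that does not match."""
    src = summarize(source_rows, group_key, amount_key)
    tgt = summarize(target_rows, group_key, amount_key)
    empty = (0, Decimal("0"))
    mismatches = []
    for key in sorted(set(src) | set(tgt)):
        if src.get(key, empty) != tgt.get(key, empty):
            mismatches.append((key, src.get(key, empty), tgt.get(key, empty)))
    return mismatches
```

An empty result means every group matched on both count and total; anything else is a list of groups to investigate before sign-off.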

2. Unstructured Data Migration

Unstructured data migration focuses on content that does not follow a fixed format. This includes documents, emails, images, videos, scanned files, shared folders, and collaboration data. In many organizations, unstructured data accounts for most of what is stored, yet it is often the least controlled.

Unlike structured systems, unstructured data tends to grow in an unplanned way. Files are created, copied, renamed, shared, and forgotten. Ownership becomes unclear, and rules that once made sense may no longer apply. As a result, unstructured data migrations often turn into cleanup exercises as much as technical ones.

Teams commonly run into challenges such as:

- Moving very large volumes of files without interrupting day-to-day access

- Preserving metadata like file ownership, timestamps, and version history

- Keeping folder structures recognizable so users can still find what they need

- Making sure access permissions still reflect current roles and responsibilities

A typical case is moving shared network drives to a document management system. During these projects, teams often discover duplicate files, outdated folders, or permissions that were granted years ago and never reviewed.
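Exact duplicates like these can be found before the move by hashing file contents. The sketch below uses only the Python standard library and groups files by size first, so only same-size candidates are hashed. It is an illustration, not a full deduplication tool: it catches byte-for-byte copies only, not near-duplicates or edited versions of the same document.

```python
import hashlib
from collections import defaultdict
from pathlib import Path


def content_hash(path, chunk_size=1 << 20):
    """SHA-256 of a file's bytes, read in 1 MiB chunks to keep memory use flat."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_duplicates(root):
    """Return groups (sorted lists of paths) of files under `root` with identical content."""
    by_size = defaultdict(list)
    for path in Path(root).rglob("*"):
        if path.is_file():
            by_size[path.stat().st_size].append(path)

    by_hash = defaultdict(list)
    for candidates in by_size.values():
        if len(candidates) < 2:
            continue  # a file with a unique size cannot have an exact duplicate
        for path in candidates:
            by_hash[content_hash(path)].append(path)

    return [sorted(paths) for paths in by_hash.values() if len(paths) > 1]
```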

3. Semi-Structured Data Migration

Semi-structured data sits somewhere between structured and unstructured formats. It does not rely on rigid tables, but it still contains markers that provide some organization. Examples include JSON files, XML data, configuration files, application logs, and event records.

This type of data has become far more common with modern applications and cloud platforms. While its flexibility makes it easy to generate and adapt, that same flexibility introduces complexity during migration.

Things teams usually need to think through include:

- Variations in structure across records that belong to the same dataset

- Nested or layered data that needs careful parsing

- Converting semi-structured data into structured formats for reporting or analysis

- Handling new data that continues to be generated while migration is underway

For example, when migrating application logs into a centralized monitoring tool, teams often find that different services log similar events in slightly different formats. Making that data usable usually requires extra normalization work.
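A minimal sketch of that normalization step, assuming the logs are JSON lines. Nested objects are flattened into dotted keys, and a hypothetical alias table maps each service's field names (`ts`, `severity`, `msg`, and so on) onto one common schema. Fields that do not map are kept under `extra` rather than dropped, so nothing is lost while the mapping is refined.

```python
import json

# Hypothetical aliases: common field name -> names different services use for it.
FIELD_ALIASES = {
    "timestamp": ("timestamp", "ts", "time"),
    "level": ("level", "severity", "lvl"),
    "message": ("message", "msg", "text"),
}


def flatten(record, prefix=""):
    """Flatten nested dicts into dotted keys, e.g. {"http": {"status": 504}} -> {"http.status": 504}."""
    flat = {}
    for key, value in record.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=f"{name}."))
        else:
            flat[name] = value
    return flat


def normalize(line):
    """Parse one JSON log line and map known aliases onto the common field names."""
    flat = flatten(json.loads(line))
    out = {}
    for field, aliases in FIELD_ALIASES.items():
        for alias in aliases:
            if alias in flat:
                out[field] = flat.pop(alias)
                break
        else:
            out[field] = None  # this service does not log the field at all
    if isinstance(out["level"], str):
        out["level"] = out["level"].upper()  # "error" and "ERROR" become one value
    out["extra"] = flat
    return out
```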

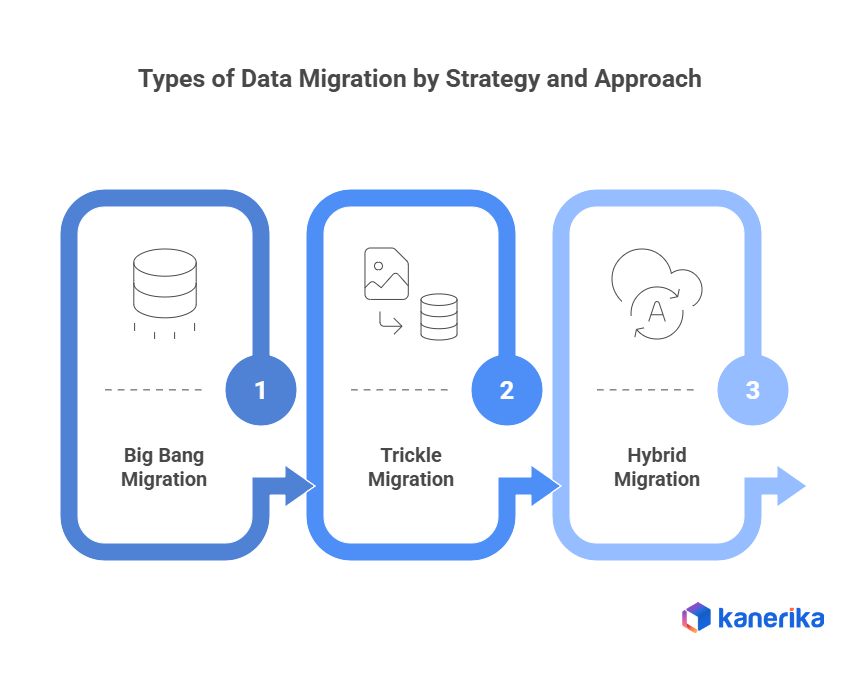

Types of Data Migration by Strategy and Approach

1. Big Bang Migration

Big bang migration is an approach where all data is moved from the existing system to the new system in one planned execution window. The old system is shut down, the migration is carried out, and once it completes, users move entirely to the new environment. There is no overlap period where both systems run side by side.

This approach is usually chosen when timelines are tight or when running two systems in parallel is not realistic due to cost, licensing, or operational overhead. Because everything happens at once, success depends heavily on preparation.

Big bang migrations typically involve:

- A short, clearly defined migration window, often scheduled during weekends or holidays

- A firm cutover point, after which all users and processes operate only in the new system

- Extensive testing and rehearsal runs, since any issue missed in rehearsal surfaces only after go-live, when it is hardest to fix

- Limited rollback options once new data starts flowing into the target system

In practice, big bang migration works best when the scope is tightly controlled. A mid-sized company migrating an internal reporting tool with few integrations might complete the move over a weekend. Larger ERP or customer-facing systems often struggle with this approach due to their complexity.
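Whether a move really fits a weekend can be checked roughly from a rehearsal run. The sketch below assumes load time scales linearly with volume and that validation time stays about the same; the 30% contingency and all numbers are hypothetical placeholders, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class Rehearsal:
    data_gb: float           # volume moved during the rehearsal run
    load_hours: float        # wall-clock time the rehearsal load took
    validation_hours: float  # time spent on post-load checks and sign-off


def fits_window(rehearsal, production_gb, window_hours, contingency=0.3):
    """Return (fits, hours_needed, hours_usable) for a big bang cutover.

    A `contingency` share of the window is held back for troubleshooting
    and a possible rollback decision.
    """
    throughput = rehearsal.data_gb / rehearsal.load_hours  # GB per hour
    needed = production_gb / throughput + rehearsal.validation_hours
    usable = window_hours * (1 - contingency)
    return needed <= usable, round(needed, 1), round(usable, 1)


# Hypothetical: a 500 GB rehearsal took 5 hours to load and 6 hours to validate.
rehearsal = Rehearsal(data_gb=500, load_hours=5, validation_hours=6)
print(fits_window(rehearsal, production_gb=2000, window_hours=48))
```

Linear scaling is optimistic: indexes, constraints, and network contention often slow a full-size load, so rehearsals on production-scale data give a more trustworthy throughput figure.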

This strategy is generally suitable for:

- Smaller systems with manageable data volumes

- Applications that are not central to daily operations

- Projects where downtime is planned and accepted

- Environments with limited dependencies

2. Trickle or Phased Migration

Trickle, or phased, migration moves data gradually while both old and new systems continue to operate. Instead of switching everything at once, data is migrated in stages, giving teams time to validate results along the way.

This approach is common in large organizations where availability is critical. Experience from enterprise projects consistently shows that phased migrations reduce the risk of major outages, especially for systems that operate continuously.

Phased migration usually involves:

- Migrating data in logical segments, such as older records first and recent data later

- Keeping source and target systems synchronized so users see consistent information

- Validating and reconciling data after each phase before moving forward

- Accepting a longer overall timeline due to parallel system operation

While phased migration lowers risk, it requires more coordination. Teams must manage synchronization, user access, and change control carefully to avoid confusion or inconsistency.
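The segment-then-reconcile loop can be sketched as follows, migrating one year per phase, oldest first, and stopping at the first phase whose counts do not match. The `load` and `count_in_target` hooks and the `created` field are hypothetical stand-ins for whatever the real source and target systems provide.

```python
from datetime import date


def year_segments(first_year, last_year):
    """Yield [start, end) date ranges, oldest year first."""
    for year in range(first_year, last_year + 1):
        yield date(year, 1, 1), date(year + 1, 1, 1)


def run_phases(source_rows, load, count_in_target, first_year, last_year):
    """Migrate one year per phase and reconcile row counts before moving on.

    `load(batch)` writes a batch to the target and `count_in_target(start, end)`
    returns how many rows the target holds for that range. Both are hypothetical
    hooks the caller supplies for its own systems.
    """
    completed = []
    for start, end in year_segments(first_year, last_year):
        batch = [row for row in source_rows if start <= row["created"] < end]
        load(batch)
        loaded = count_in_target(start, end)
        if loaded != len(batch):
            # Halt so later phases never build on an unreconciled one.
            raise RuntimeError(f"{start.year}: expected {len(batch)} rows, found {loaded}")
        completed.append((start.year, len(batch)))
    return completed
```

This only checks counts and does not keep the systems synchronized: rows that change in the source after their segment is copied would need change data capture or a similar mechanism on top.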

It is commonly chosen for:

- Mission-critical systems where downtime is unacceptable

- Large datasets that cannot be moved in one window

- Systems with many integrations or reporting dependencies

- Organizations that prefer gradual change

A familiar example is customer data migration in telecom or banking systems, where historical data is migrated first while live transactions continue without interruption.

3. Hybrid Migration

Hybrid migration combines elements of both big bang and phased approaches. Instead of applying a single strategy everywhere, different parts of the system are migrated differently based on risk, data usage, and business impact.

In practice, this often means migrating historical or inactive data in phases well before go-live, then moving active transactional data during a short final cutover.
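The split between the early historical load and the final cutover can be sketched like this. The `business_date` and `updated_at` fields, the cutoff, and the snapshot time are hypothetical. The key point is that historical rows corrected after the historical load was taken must also move at cutover, or the target silently keeps the stale version.

```python
from datetime import datetime


def plan_hybrid(rows, cutoff, snapshot_time):
    """Split rows into a historical pre-load and the set that must move at cutover.

    - Rows with a business date before `cutoff` are historical and can be
      loaded in phases ahead of go-live.
    - At cutover, the active rows move, plus any historical row that was
      modified after `snapshot_time`, when the historical load was taken.
    """
    historical = [r for r in rows if r["business_date"] < cutoff]
    active = [r for r in rows if r["business_date"] >= cutoff]
    late_changes = [r for r in historical if r["updated_at"] > snapshot_time]
    return historical, active + late_changes
```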

Common hybrid patterns include:

- Migrating several years of historical data in stages

- Performing a one-time cutover for live transactional data

- Using phased migration for non-critical components while switching tightly coupled systems together

- Allowing limited parallel operation where necessary, while keeping the user transition simple

Hybrid migration is increasingly common in large transformation programs, particularly cloud initiatives, where archival data is moved early and only recent data is handled at cutover.

It works well in:

- Large organizations with mixed system criticality

- Environments where different data types behave differently

- Projects with strict downtime limits but flexible preparation timelines

- Situations where teams have different levels of risk tolerance

Types of Data Migration by Scope and Purpose

1. Storage Migration

Storage migration involves moving data from one storage system to another while keeping applications, file formats, and access methods unchanged. The intent is usually operational rather than functional. Performance improvements, cost reduction, hardware refresh, or vendor changes are the most common triggers.

Storage migration is one of the most frequent forms of migration. Most enterprises refresh storage infrastructure every 3 to 5 years, and growing data volumes make this unavoidable. Even when applications stay the same, storage changes can affect how quickly users access data.

What teams usually deal with during storage migration:

- Transferring very large volumes of data without changing directory structures or file formats, so applications continue to work without modification

- Managing bandwidth limits, especially when data is moved between physical locations or data centers

- Preventing migration jobs from competing with live workloads, which can slow down business systems

- Validating data after migration to ensure files are complete, readable, and accessible

For example, organizations upgrading from older disk-based storage to solid-state systems often expect immediate performance gains. In practice, performance only improves after access patterns, caching, and tiering policies are adjusted post-migration.
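Post-migration file validation is often done by comparing checksums between the old and new storage. A minimal sketch, assuming both locations are reachable as mounted directory trees: it builds a path-to-SHA-256 manifest for each side and reports missing, unexpected, and changed files. It does not compare ownership, permissions, or timestamps, which would need separate checks.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in 1 MiB chunks so large files never load fully into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def manifest(root):
    """Map each file's path relative to `root` (POSIX style) to its SHA-256 digest."""
    root = Path(root)
    return {
        path.relative_to(root).as_posix(): sha256_of(path)
        for path in root.rglob("*")
        if path.is_file()
    }


def compare_trees(source_root, target_root):
    """Report files missing from the target, extra in the target, or with different content."""
    src, tgt = manifest(source_root), manifest(target_root)
    return {
        "missing": sorted(src.keys() - tgt.keys()),
        "unexpected": sorted(tgt.keys() - src.keys()),
        "changed": sorted(p for p in src.keys() & tgt.keys() if src[p] != tgt[p]),
    }
```

Because paths are compared relative to each root, the same check also confirms that directory structures were preserved.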

2. Database Migration

Database migration involves moving data between database platforms or upgrading existing versions. This may include switching vendors, adopting managed database services, or replacing unsupported systems.

Because databases sit at the center of most applications, these migrations tend to be high-impact. Issues after migration are often linked to performance changes rather than missing data, especially when the new database behaves differently under real workloads.

Teams typically deal with:

- Differences in how databases handle data types, indexes, and query execution

- Stored procedures and custom logic that require partial or full rewrites

- The need for performance testing using real usage patterns

- Careful downtime planning for systems that must stay available

Organizations migrating away from costly proprietary databases often achieve savings, but usually spend significant time tuning queries and indexes before performance stabilizes.
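Data type differences are usually handled with an explicit mapping that fails loudly on anything it does not recognize. The text names no specific platforms, so this sketch assumes an Oracle source and a PostgreSQL target purely for illustration, and the mapping is a small subset rather than a complete or authoritative list. Integer narrowing is based on digit capacity: `integer` safely holds any 9-digit value and `bigint` any 18-digit value.

```python
import re

# Illustrative subset of Oracle -> PostgreSQL mappings; not exhaustive.
SIMPLE_TYPES = {
    "VARCHAR2": "varchar",
    "NVARCHAR2": "varchar",
    "CHAR": "char",
    "CLOB": "text",
    "BLOB": "bytea",
    "RAW": "bytea",
    "DATE": "timestamp(0)",  # Oracle DATE also stores a time of day
}
LENGTH_TYPES = {"VARCHAR2", "NVARCHAR2", "CHAR"}


def map_number(precision, scale):
    """Pick an integer type when the declared digits fit, else keep exact numeric."""
    if precision is None:
        return "numeric"
    if scale in (None, 0):
        if precision <= 9:
            return "integer"
        if precision <= 18:
            return "bigint"
    return f"numeric({precision},{scale or 0})"


def map_type(oracle_type):
    """Translate e.g. 'NUMBER(10,2)' or 'VARCHAR2(50)'; raise on anything unmapped."""
    m = re.fullmatch(r"([A-Z0-9]+)\s*(?:\(\s*(\d+)\s*(?:,\s*(\d+)\s*)?\))?",
                     oracle_type.strip().upper())
    if not m:
        raise ValueError(f"Unparseable type, review manually: {oracle_type}")
    base = m.group(1)
    precision = int(m.group(2)) if m.group(2) else None
    scale = int(m.group(3)) if m.group(3) else None
    if base == "NUMBER":
        return map_number(precision, scale)
    if base in SIMPLE_TYPES:
        target = SIMPLE_TYPES[base]
        if precision is not None and base in LENGTH_TYPES:
            return f"{target}({precision})"  # carry the declared length across
        return target
    raise ValueError(f"No mapping for {base}, review manually")
```

Raising on unknown types is deliberate: a silent fallback to `text` is how subtle precision and sorting differences reach production.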

3. Application Migration

Application migration occurs when data moves as part of replacing or modernizing an application, such as during SaaS adoption or legacy system retirement.

This type of migration directly affects users. Even when data transfers correctly, changes in workflows, screens, or reports can disrupt how teams work.

Key focus areas include:

- Mapping data between old and new application models

- Preserving historical data for audits and reference

- Verifying business rules and automated processes

- Testing usability, not just data accuracy

In CRM migrations, for example, records may move cleanly, but teams often struggle if dashboards or activity history behave differently.
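Mapping between old and new application models is easiest to review when it lives in one explicit table. In this sketch the legacy and new CRM field names and the status codes are all hypothetical; fields with no destination are returned separately so someone decides whether they need to be preserved for history or audit.

```python
# Hypothetical status codes used by the legacy CRM.
STATUS_CODES = {"A": "active", "I": "inactive"}


def convert_status(code):
    """Translate a legacy status code, failing on values nobody has mapped yet."""
    if code not in STATUS_CODES:
        raise ValueError(f"Unknown status code {code!r}")
    return STATUS_CODES[code]


# Hypothetical legacy field -> (new field, optional converter).
FIELD_MAP = {
    "cust_name": ("account_name", str.strip),
    "cust_email": ("email", str.lower),
    "acct_status": ("status", convert_status),
    "created_dt": ("created_at", None),
}


def map_record(legacy):
    """Return (mapped_record, unmapped_fields) for one legacy record."""
    mapped, unmapped = {}, {}
    for field, value in legacy.items():
        if field not in FIELD_MAP:
            unmapped[field] = value  # surface for review instead of dropping silently
            continue
        target, convert = FIELD_MAP[field]
        mapped[target] = convert(value) if convert else value
    return mapped, unmapped
```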


4. Cloud Migration

Cloud migration involves moving data from on-premises systems to cloud platforms or between cloud providers. Most large organizations now operate in hybrid environments, with some workloads in the cloud and others still on-premises.

While cloud adoption offers flexibility, it introduces new operational considerations that are easy to underestimate.

Common challenges include:

- Redesigning security and access controls

- Managing data transfer time for large datasets

- Understanding pricing tied to storage, access, and data movement

- Updating backup and recovery practices

Frequently accessed data can cost more than expected if usage patterns and egress charges are not planned upfront.
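A back-of-the-envelope egress estimate makes this concrete. Every number in the sketch is a hypothetical placeholder rather than any provider's actual price; real egress pricing is usually tiered and varies by provider, region, and destination.

```python
def monthly_egress_cost(gb_read_per_day, share_leaving_cloud, price_per_gb,
                        free_gb_per_month=0.0, days=30):
    """Rough monthly cost of data read out of a cloud provider's network.

    All inputs are caller-supplied assumptions; treat the result as an
    order-of-magnitude check, not a quote.
    """
    egress_gb = gb_read_per_day * days * share_leaving_cloud
    billable_gb = max(0.0, egress_gb - free_gb_per_month)
    return round(billable_gb * price_per_gb, 2)


# Hypothetical: dashboards pull 200 GB/day, half of it by on-premises users,
# at a placeholder rate of $0.09/GB with 100 GB/month free.
print(monthly_egress_cost(200, 0.5, 0.09, free_gb_per_month=100))
```

Running the same estimate for each frequently read dataset before migration shows which workloads should sit next to their consumers rather than across a billable network boundary.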

5. Business Process Migration

Business process migration is driven by organizational change, such as mergers, restructuring, or standardization efforts, rather than technology upgrades alone. Here, data supports a new way of working, which adds governance and alignment complexity on top of the technical work.

Teams commonly face:

- Aligning data definitions across systems with different naming conventions and business rules

- Resolving duplicate or conflicting records from previously separate business units

- Maintaining regulatory and contractual boundaries

- Coordinating across business, legal, and IT teams with different definitions of success

In regulated industries like pharma, banking, and healthcare, compliance obligations shape the migration plan from the start. GDPR, HIPAA, SOX, and PCI-DSS carry specific requirements on data residency, retention, and access during transition that must be addressed before data moves, not after.

Post-merger data consolidation is a common example where technical migration is only part of the challenge.

6. Data Center Migration

Data center migration relocates or consolidates entire IT environments, including servers, storage, and networks. It is often triggered by expiring leases, cost pressure, or plans to reduce physical infrastructure, and it carries higher risk than most migration types because of system interdependencies.

Typical challenges include:

- Hidden dependencies discovered late, after sequencing decisions are already made

- Network and security issues that only surface once systems are in the new environment

- Coordinating cutovers across multiple systems

- Extensive post-migration testing before the old environment can be decommissioned

A single oversight in dependency mapping can cause simultaneous downtime across multiple systems, which is why data center migrations are almost always executed in carefully sequenced stages.
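Once dependencies are mapped, the staged sequencing described above can be derived with a topological sort. This sketch uses Python's standard `graphlib` module (Python 3.9+); the system names are hypothetical, and it assumes a system should move only after everything it depends on is running in the new site. A circular dependency is reported as an error, since those systems usually have to move together in one wave.

```python
from graphlib import CycleError, TopologicalSorter


def migration_waves(depends_on):
    """Group systems into waves; each system moves after everything it depends on.

    `depends_on` maps a system to the set of systems it needs in place first.
    """
    sorter = TopologicalSorter(depends_on)
    try:
        sorter.prepare()
    except CycleError as err:
        # err.args[1] holds the list of nodes forming the cycle.
        raise ValueError(f"Circular dependency, plan these together: {err.args[1]}") from err
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything whose dependencies are done
        waves.append(ready)
        sorter.done(*ready)
    return waves


# Hypothetical dependency map.
deps = {
    "crm": {"db_cluster", "auth"},
    "reporting": {"db_cluster", "crm"},
    "db_cluster": {"storage"},
    "auth": set(),
    "storage": set(),
}
print(migration_waves(deps))
```

The output is only as good as the dependency map fed into it, which is why discovery tooling and interviews with system owners matter more than the sort itself.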

| Migration type | Primary trigger | Typical output | Key risk |
|---|---|---|---|
| Storage | Hardware refresh, cost reduction | Faster, cheaper storage | Performance gap post-move |
| Database | Vendor switch, version upgrade | Modern, supported platform | Query and procedure rewrites |
| Application | SaaS adoption, legacy retirement | Modern application stack | User workflow disruption |
| Cloud | Scalability, agility, cost | Cloud-native environment | Egress costs, security gaps |
| ETL / Pipelines | Platform modernization | Modern pipeline stack | Embedded business logic loss |
| Data warehouse | Cloud analytics, AI readiness | Scalable analytics platform | Semantic model re-validation |
| Business process | Merger, restructuring | Unified data model | Conflicting definitions, governance |
| Data center | Lease expiry, consolidation | Reduced infrastructure footprint | Hidden dependencies, downtime |

How to Choose the Right Type of Data Platform Migration

Before selecting a strategy, assess the source data. Teams that skip this step consistently find quality problems mid-migration. A basic profiling pass should cover:

- Volume and completeness: total record counts, null rates, and missing fields

- Referential integrity: whether foreign keys and linked records are consistent across systems

- Regulated data location: where PII, PHI, or compliance-sensitive data lives before it moves
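The first two checks can be scripted against any SQL source. This sketch uses Python's built-in `sqlite3` so it runs anywhere; the table and column names in the usage are hypothetical, and identifiers are interpolated directly into SQL, so they must come from a trusted schema catalog, never from user input.

```python
import sqlite3


def profile(conn, table, columns):
    """Return (row_count, {column: null_rate}) for one table."""
    total = conn.execute(f"SELECT COUNT(*) FROM {table}").fetchone()[0]
    null_rates = {}
    for col in columns:
        nulls = conn.execute(f"SELECT COUNT(*) FROM {table} WHERE {col} IS NULL").fetchone()[0]
        null_rates[col] = nulls / total if total else 0.0
    return total, null_rates


def orphan_count(conn, child, fk, parent, pk):
    """Count child rows whose non-null foreign key matches no parent row."""
    sql = (
        f"SELECT COUNT(*) FROM {child} c LEFT JOIN {parent} p ON c.{fk} = p.{pk} "
        f"WHERE c.{fk} IS NOT NULL AND p.{pk} IS NULL"
    )
    return conn.execute(sql).fetchone()[0]
```

Orphans matter because many target platforms enforce foreign keys that the source never did, so these rows fail on load rather than during profiling.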

From there, a few factors shape which type and strategy fits:

| Factor | What to assess | Approach to consider |
|---|---|---|
| Data volume | Total size and whether it fits in a single migration window | Large volumes favor phased or hybrid over big bang |
| Downtime tolerance | Maximum acceptable offline time for the system | Zero tolerance drives phased or parallel-run strategies |
| System criticality | Whether the system is core to operations or a supporting tool | Critical systems need phased approaches with rollback planning |
| Dependency count | Number of integrations and downstream systems relying on this data | High dependency count increases big bang risk significantly |
| Data quality | Current state of source data and extent of known gaps | Poor quality requires a cleanse-first phase regardless of type |
| Compliance | Regulatory, contractual, or audit obligations on the data | Compliance drives sequencing and adds validation checkpoints |

In most projects, the final approach is a combination. What matters is choosing a path that keeps systems usable, reduces surprises at go-live, and maintains trust in the data afterward.
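The factors table above can be turned into a rough first-pass screen. This sketch encodes only a few of its rules; the dependency threshold of 10 is a hypothetical placeholder, and the output is a starting point for discussion, not a substitute for a proper assessment.

```python
def suggest_strategy(load_hours, window_hours, downtime_ok, critical, dependencies,
                     poor_quality=False, dependency_limit=10):
    """Return (ordered_steps, risks) following the factors table.

    - No tolerated downtime or a critical system -> phased
    - Volume or dependency risk but some downtime allowed -> hybrid
    - Otherwise -> big bang
    - Poor source quality adds a cleanse-first step whatever the strategy
    """
    risks = []
    if load_hours > window_hours:
        risks.append("volume exceeds a single window")
    if dependencies > dependency_limit:  # hypothetical threshold
        risks.append("high dependency count")

    if not downtime_ok or critical:
        strategy = "phased"
    elif risks:
        strategy = "hybrid"
    else:
        strategy = "big bang"

    steps = (["cleanse source data first"] if poor_quality else []) + [strategy]
    return steps, risks
```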

Kanerika’s AI-Powered FLIP Platform and Migration Accelerators

At the core of Kanerika’s migration approach is FLIP, a low-code platform designed to simplify and speed up complex data migrations. Instead of relying heavily on manual scripting, FLIP automates key stages such as discovery, schema mapping, transformation, validation, lineage extraction, and cutover. In practical terms, this means teams can automate a large portion of repetitive migration work, often around 70–80%, which helps reduce timelines and lowers the risk of human error while keeping business logic intact.

What makes FLIP useful in real projects is its set of pre-built accelerators for common enterprise migration paths. FLIP reduces migration effort by 75%, and most migrations complete in 2 to 8 weeks depending on pipeline volume. These accelerators are designed to reduce rebuild effort while preserving how data is actually used by the business.

Supported Accelerators

- Cognos / Crystal Reports / SSRS / Tableau → Microsoft Power BI: streamlines the migration of reports, dashboards, calculations, and filters into Power BI while preserving reporting intent and usability.

- Informatica → Alteryx / Databricks / Microsoft Fabric / Talend: automates the conversion of Informatica workflows and transformations into modern data engineering and analytics platforms.

- Microsoft Azure → Microsoft Fabric: aligns existing Azure data pipelines and workloads with Fabric’s unified analytics architecture.

- SQL Services → Microsoft Fabric: modernizes legacy SQL Server workloads into scalable, governed Fabric-based solutions.

- UiPath → Microsoft: transitions automation workflows into Microsoft-native environments for tighter integration across the data and analytics stack.

These accelerators help organizations modernize faster, reduce dependency on manual rebuilds, and move confidently toward cloud-ready, analytics-driven platforms.

Case Study: 45% Performance Gain for a Multinational Pharmaceutical Enterprise

A multinational pharmaceutical company operating across 50+ countries was running its analytics function on Tableau. As the organization scaled, high licensing costs, restricted data access, and static dashboards made it difficult to support fast, self-service decision-making.

Challenges

- Tableau dashboards lacked interactivity, limiting their usefulness for real-time operational decisions

- High licensing and infrastructure costs were straining the BI budget across a 50-country operation

- Restricted data access meant teams waited on centralized reports rather than exploring data independently

Solutions

- Migrated all Tableau reports to Power BI using FLIP’s migration utility, preserving dashboard logic and minimizing rebuild effort

- Replaced static dashboards with interactive, real-time Power BI visuals for direct operational data access

- Improved data governance and access controls, enabling secure self-service analytics across geographies

Results

- 45% increase in operational performance across business units

- 30% reduction in BI-related costs from optimized licensing and infrastructure

- 100% scalable analytics capability, supporting growing data volumes without performance constraints

Conclusion

Data migration is a critical step in any modernization effort, but success depends on choosing the right type and approach for your specific context. Each migration type comes with its own risks, validation needs, and operational impact, making upfront planning essential.

Organizations that align their strategy with data complexity, system dependencies, and business goals are far more likely to stay on schedule and avoid costly rework. With the right combination of strategy, tools, and execution discipline, data migration can become a smooth transition rather than a disruptive challenge.


FAQs

What are the types of data migration?

The primary types of data migration include storage migration, database migration, application migration, cloud migration, and data warehouse migration. Storage migration moves data between physical or virtual storage systems. Database migration transfers data between different database platforms or versions. Application migration shifts data when replacing or upgrading enterprise software. Cloud migration moves on-premises data to cloud environments like Azure or AWS. Data warehouse migration consolidates analytics infrastructure into modern platforms. Each type requires distinct planning, validation protocols, and execution frameworks. Kanerika delivers end-to-end data migration services across all these categories—connect with our team to plan your migration roadmap.

What are some data migration strategies?

Common data migration strategies include big bang migration, phased migration, parallel adoption, and hybrid approaches. Big bang migration transfers all data in a single operation during a defined downtime window. Phased migration moves data incrementally across scheduled stages, reducing risk exposure. Parallel adoption runs old and new systems simultaneously until full validation completes. The right strategy depends on data volume, acceptable downtime, compliance requirements, and business continuity needs. Enterprise data platform migrations often combine multiple approaches for optimal results. Kanerika’s migration accelerators help enterprises execute these strategies with precision—schedule a discovery call to explore your options.

What are the 6 Rs of data migration?

The 6 Rs of data migration are Rehost, Replatform, Repurchase, Refactor, Retire, and Retain. Rehosting lifts and shifts applications without modification. Replatforming makes minimal optimizations during the move. Repurchasing replaces existing systems with cloud-native solutions. Refactoring re-architects applications for better cloud performance. Retiring eliminates redundant systems no longer needed. Retaining keeps certain workloads on-premises when migration isn’t viable. These strategies help organizations categorize workloads and determine the most efficient migration path for each application or dataset. Kanerika uses this framework to build customized migration plans—reach out for a free workload assessment.

Is ETL the same as data migration?

ETL and data migration are related but distinct concepts. ETL (Extract, Transform, Load) is a process that extracts data from sources, transforms it into a usable format, and loads it into a destination system. Data migration refers to the broader initiative of moving data between storage systems, databases, or platforms. ETL serves as a common methodology within data migration projects, particularly when data requires cleansing, restructuring, or format conversion during transfer. However, some migrations use direct replication without transformation. Understanding this distinction helps organizations select appropriate tools and approaches. Kanerika implements ETL-driven migrations on platforms like Databricks and Microsoft Fabric—let’s discuss your requirements.

How do I choose the right type of data migration for my organization?

Choosing the right type of data migration requires assessing your current infrastructure, target environment, data complexity, and business objectives. Start by inventorying all data sources, documenting dependencies, and defining acceptable downtime windows. Evaluate whether you need storage migration for infrastructure consolidation, database migration for platform upgrades, or cloud migration for scalability. Consider regulatory compliance, data sensitivity, and integration requirements with existing applications. Budget constraints and timeline expectations also influence your decision between big bang and phased approaches. Kanerika conducts comprehensive migration assessments to match the optimal migration type to your enterprise needs—request your free evaluation today.

What is the difference between cloud migration and data warehouse migration?

Cloud migration moves data, applications, and workloads from on-premises infrastructure to cloud environments like Azure, AWS, or Google Cloud. Data warehouse migration specifically transfers analytics data and reporting structures from legacy warehouses to modern platforms like Microsoft Fabric, Snowflake, or Databricks. Cloud migration focuses on infrastructure modernization, while data warehouse migration emphasizes analytics optimization, schema transformation, and BI tool compatibility. A cloud migration project may include data warehouse migration as one component, but data warehouse migrations can also occur between cloud platforms. Kanerika specializes in both migration types with dedicated accelerators—connect with us to discuss your modernization goals.

Can a data migration involve more than one type?

Yes, enterprise data migration projects frequently involve multiple migration types simultaneously. A digital transformation initiative might combine cloud migration with database migration and application migration within a single program. For example, moving from on-premises SQL Server to Microsoft Fabric involves storage migration, database migration, and cloud migration elements. Organizations consolidating legacy systems often execute data warehouse migration alongside application modernization. These complex migrations require careful orchestration, dependency mapping, and phased execution to prevent data loss or system conflicts. Kanerika manages multi-type migration programs with integrated governance frameworks—talk to our architects about coordinating your enterprise migration.

What are common challenges in data migration?

Common data migration challenges include data quality issues, schema incompatibilities, extended downtime, data loss risks, and integration failures. Poor source data with duplicates, missing values, or inconsistent formats creates validation headaches during transfer. Legacy system dependencies often surface unexpectedly, causing application breakdowns. Underestimating data volumes leads to timeline overruns and budget escalations. Security and compliance requirements add complexity when migrating sensitive information across environments. Inadequate testing before cutover results in production failures. Successful migrations require thorough discovery, comprehensive mapping, and rigorous validation protocols. Kanerika’s migration accelerators address these challenges with automated profiling and validation—explore our proven methodology for risk-free migrations.

How long does a data migration project take?

Data migration project timelines vary significantly based on scope, complexity, and migration type. Simple storage migrations with clean data may complete in weeks, while enterprise-wide database migrations or cloud transformations typically span three to twelve months. Factors affecting duration include data volume, number of source systems, integration complexity, testing requirements, and organizational readiness. Phased migration approaches extend timelines but reduce risk exposure. Regulatory compliance validation and user training add additional time beyond technical completion. Accurate timeline estimation requires detailed discovery and dependency mapping. Kanerika’s migration ROI calculator helps enterprises estimate realistic project timelines—use our free assessment tool to plan your migration schedule.

How can automation and AI help with data migration?

Automation and AI dramatically accelerate data migration by handling repetitive tasks, identifying patterns, and reducing manual errors. Automated data profiling quickly catalogs source systems and detects quality issues. AI-powered schema mapping suggests optimal transformations between incompatible structures. Machine learning algorithms identify anomalies during validation that humans might miss. Automated testing frameworks run thousands of validation checks in hours instead of weeks. Intelligent process automation handles ETL workflow conversion and documentation generation. These technologies reduce migration timelines by 40-60% while improving accuracy. Kanerika’s FLIP platform leverages AI-driven automation for enterprise migrations—see how our intelligent accelerators transform migration efficiency.

What are the 7 migration strategies?

The 7 migration strategies, often called the 7 Rs, are Rehost, Relocate, Replatform, Refactor, Repurchase, Retire, and Retain. Rehosting lifts and shifts workloads without changes. Relocating moves infrastructure to cloud with minimal modification. Replatforming optimizes during migration for better cloud utilization. Refactoring re-architects applications for cloud-native capabilities. Repurchasing replaces legacy systems with SaaS alternatives. Retiring decommissions outdated applications no longer needed. Retaining keeps specific workloads on-premises when migration isn’t practical. Organizations typically apply different strategies across their application portfolio based on business value and technical feasibility. Kanerika helps enterprises categorize workloads and select optimal strategies—schedule a portfolio assessment with our migration experts.

What is the most common type of migration?

Cloud migration is currently the most common type of data migration as organizations accelerate digital transformation initiatives. Enterprises worldwide are moving on-premises data and applications to cloud platforms like Microsoft Azure, AWS, and Google Cloud for improved scalability, cost efficiency, and modern analytics capabilities. Database migration follows closely, driven by organizations upgrading from legacy systems to platforms like Snowflake, Databricks, or Microsoft Fabric. Application modernization projects also drive significant migration activity as businesses replace outdated software with cloud-native solutions. Market trends show cloud and data warehouse migrations dominating enterprise IT budgets. Kanerika delivers rapid cloud migration implementations with zero data loss guarantees—explore our cloud migration accelerators.

What are the main types of data migration?

The main types of data migration encompass storage migration, database migration, application migration, cloud migration, and data warehouse migration. Storage migration transfers data between physical drives, SAN systems, or virtual storage environments. Database migration moves data across database platforms like Oracle to SQL Server or SQL Server to Databricks. Application migration accompanies software upgrades or replacements requiring data restructuring. Cloud migration shifts on-premises workloads to cloud infrastructure. Data warehouse migration consolidates analytics into modern platforms like Microsoft Fabric or Snowflake. Each migration type addresses specific business objectives and technical requirements. Kanerika provides specialized accelerators for every major migration type—contact us to identify your optimal approach.

What are the 5 Rs of migration?

The 5 Rs of migration are Rehost, Refactor, Revise, Rebuild, and Replace. Rehosting moves applications to new infrastructure without code changes, ideal for rapid cloud migration. Refactoring modifies applications to leverage cloud-native features while preserving core functionality. Revising extends or optimizes applications during the migration process. Rebuilding completely rewrites applications using modern architectures and technologies. Replacing retires legacy systems entirely in favor of commercial off-the-shelf or SaaS solutions. This framework helps organizations evaluate each application’s migration path based on technical debt, business criticality, and modernization goals. Kanerika applies the 5 Rs methodology to optimize your migration portfolio—start with our workload assessment service.