“Without data, you’re just another person with an opinion.” – W. Edwards Deming

Among the many business intelligence tools out there, such as Tableau, Qlik, and Looker, Power BI continues to lead because of its deep integration with Microsoft services, cost-effectiveness, and flexibility. In the 2024 Gartner Magic Quadrant for Analytics and BI Platforms, Microsoft was named a Leader for the 17th consecutive year, with Power BI praised for its scalability and accessibility across skill levels.

With the March 2025 update, Microsoft added a major feature: the ability to create Direct Lake Semantic Models directly in Power BI Desktop — and across multiple lakehouses or warehouses. This means you can build and analyze large-scale models without constantly refreshing data or juggling manual steps.

In this blog, you’ll learn how to turn on the Direct Lake feature, connect multiple lakehouses in a single model, create calculated measures, and build reports — all inside Power BI Desktop. We’ll also cover how this method stacks up against traditional import and DirectQuery modes.

What is a Direct Lake Semantic Model in Power BI?

Direct Lake is a storage mode for Power BI semantic models in Microsoft Fabric. It lets you build a semantic model directly on top of Fabric’s OneLake storage, and since the March 2025 update you can create such models in Power BI Desktop. Instead of importing data or relying on refresh-heavy connections, the model reads data straight from lakehouses or warehouses, even across multiple sources.

You’re no longer limited to a single data source or dependent on scheduled refreshes. Power BI reads the data directly from where it’s stored, keeping your model up to date without duplication or delay.

Key Features of Direct Lake Semantic Models in Power BI

- Model Creation in Power BI Desktop: You can build and manage your semantic model fully inside the desktop app, without needing to jump into the Power BI Service.

- Multiple Lakehouse Connections: Combine data from more than one lakehouse or warehouse. Earlier Direct Lake models were limited to a single source.

- No Data Import Required: Models run on live data — which means no duplication, no manual syncing, and no size bloating.

- Fast Performance: Because the model reads directly from OneLake, it loads faster and responds more quickly during report generation.

- Auto-Version Tracking: Any changes made to the model can be tracked over time, useful for debugging and collaboration.

- Full Integration with Fabric Workspaces: Once created, your semantic model is immediately saved to your Fabric workspace, making it available in the Power BI Service for reuse.

Steps to Enable Direct Lake Semantic Model Support in Power BI

Before you can start building a Direct Lake Semantic Model, you need to turn on a specific preview feature in Power BI Desktop. This setting unlocks the ability to create models that pull data live from multiple lakehouses or warehouses using Direct Lake mode.

Follow these steps to enable it:

Step 1: Launch Power BI Desktop

Make sure you’re using the latest version of Power BI Desktop. The feature is part of the March 2025 release, so older versions won’t have this option.

Step 2: Go to Options

Once Power BI Desktop is open, click on the File tab in the top-left corner, then choose Options and Settings, and click on Options.

Step 3: Find the Preview Features Section

In the Options window, look on the left-hand side and scroll down to Preview Features.

Step 4: Enable the Feature

Look for the checkbox labeled:

“Create semantic models in Direct Lake storage mode from one or more Fabric artifacts”

Tick this box to enable it.

Step 5: Save and Restart

Click OK to save the setting. Then close and reopen Power BI Desktop. This step is essential — the feature won’t take effect until Power BI restarts.

This isn’t just a minor switch. Enabling this feature changes how Power BI Desktop behaves, giving you access to a more powerful modeling experience. Without it, the Direct Lake model creation option simply won’t show up in your interface.

How to Connect to Your First Lakehouse in Power BI

Now that you’ve enabled the preview feature, you’re ready to build your first Direct Lake Semantic Model. In this section, you’ll connect Power BI Desktop to a lakehouse in Microsoft Fabric and create your base model using live data.

Here’s how to do it:

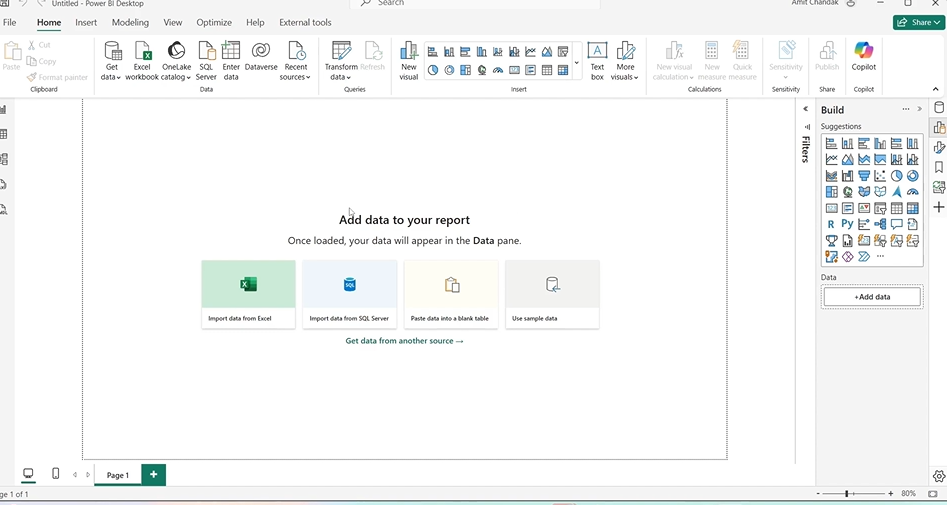

Step 1: Open Power BI Desktop

Launch Power BI Desktop after enabling the preview feature. You’ll land on a blank report canvas.

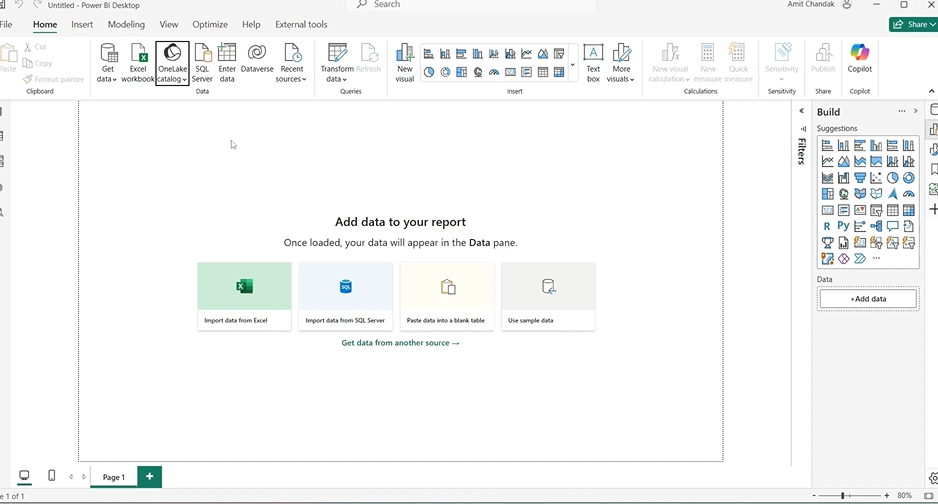

Step 2: Access the OneLake Catalog

On the Home ribbon, click OneLake catalog (labeled OneLake data hub in older builds). This is where your available lakehouses and warehouses are listed.

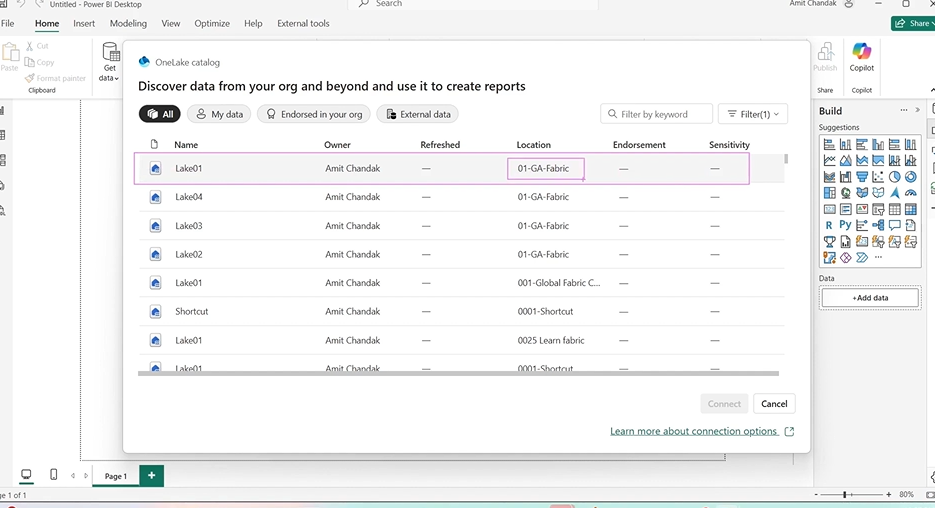

Step 3: Choose a Lakehouse

From the list of available resources, select your target lakehouse. For example, choose lake01 under a workspace like 01GA fabric.

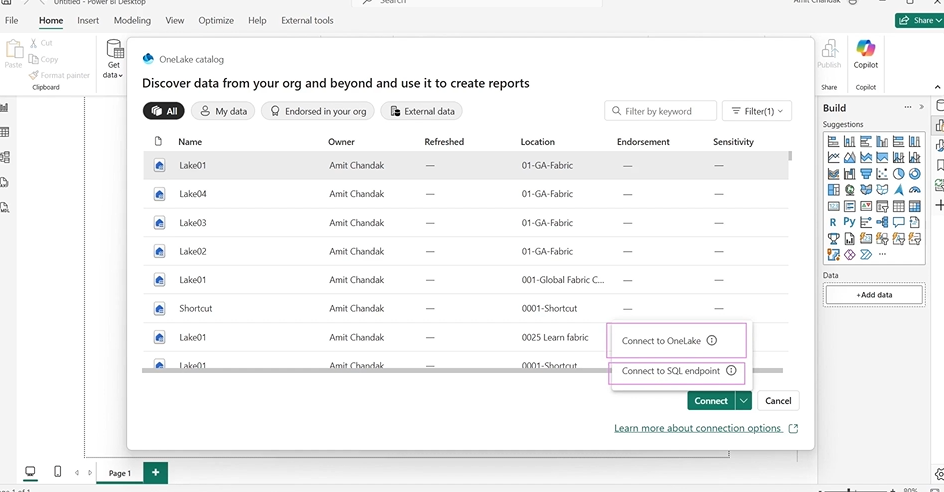

Step 4: Click Connect

Once you select the lakehouse, click the arrow next to the Connect button. You’ll see two options:

- Connect to OneLake

- Connect to SQL Endpoint

Choose Connect to OneLake.

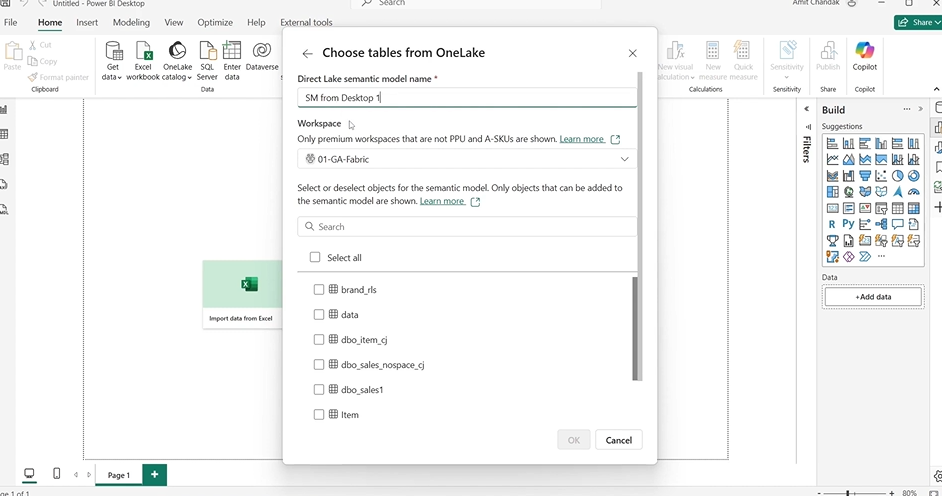

Step 5: Name Your Semantic Model

You’ll be prompted to give your semantic model a name. Use something simple and meaningful like:

SemanticModel_Desktop_01

Step 6: Select Your First Table

A list of tables from the selected lakehouse will appear. Pick a starting table — for example, Item.

Step 7: Click OK

Once the table is selected, click OK to confirm. Power BI will now create your semantic model in Direct Lake mode.

Steps to Add Tables from Multiple Lakehouses in Power BI

You might have sales data in one lakehouse, product info in another, and regional insights stored separately. In the past, this meant merging all of it before analysis — either through ETL processes or workarounds that slowed you down.

With Direct Lake Semantic Models in Power BI, you don’t need to do that anymore. You can pull data from multiple lakehouses or warehouses, combine them into one model, and work on it live — all from Power BI Desktop.

Let’s look at why this matters and how to do it step by step.

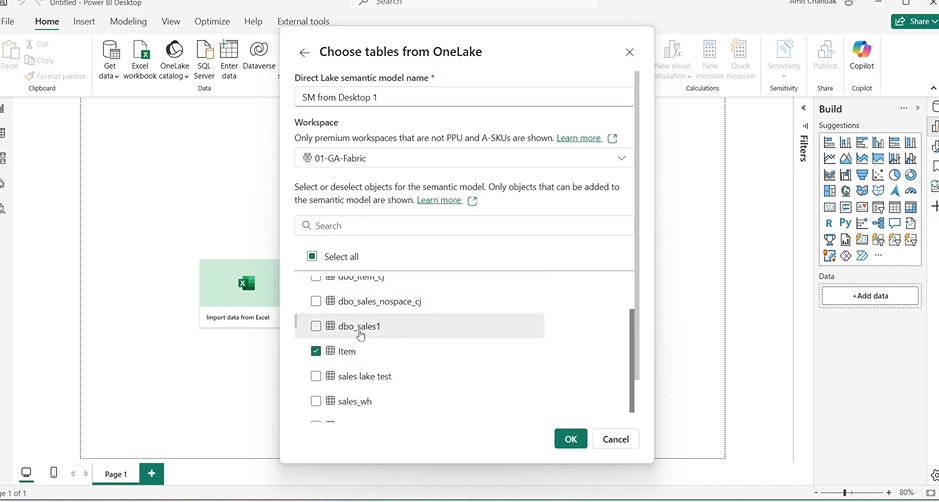

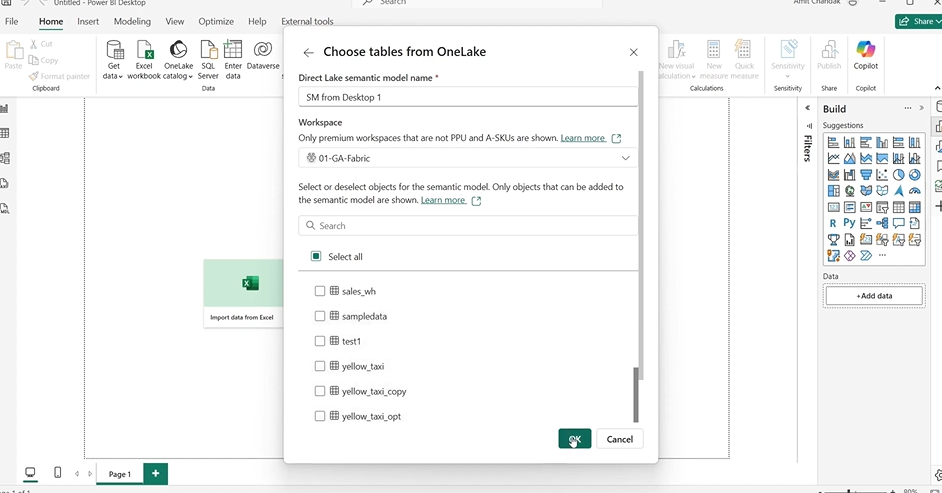

Step 1: Stay Inside Your Current Model

Make sure you’re still in the model you created earlier in Power BI Desktop. You don’t need to start a new file.

Step 2: Open the OneLake Catalog Again

On the left pane, go to the OneLake catalog. This shows all the available lakehouses and warehouses you have access to.

Step 3: Pick a Second Lakehouse

Select a different lakehouse (e.g., lake04). This can be from the same workspace or another one, as long as you have permission.

Step 4: Connect and Select a Table

Click the arrow next to Connect, then choose Connect to OneLake. Pick the table you need — for example, Sales — and confirm.

Step 5: Check That It’s Added

The new table will now appear in your model view, alongside the table from the first lakehouse (e.g., Item).

Step 6: Create Relationships

To link the data:

- Drag a common field (e.g., Item ID in Sales) to its counterpart in the other table (Item).

- In the dialog that opens, set the relationship as:

  - Many-to-One

  - Single Direction

  - Make it Active

Then click OK.
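A Many-to-One relationship only behaves as expected when the key is unique on the "one" side. As a rough illustration of that check outside Power BI, here is a minimal Python sketch. The rows, the `item_id` column name, and both helper functions are hypothetical stand-ins, not data or APIs from the lakehouses above.

```python
from collections import Counter

# Hypothetical sample rows standing in for the Item and Sales tables.
items = [
    {"item_id": 1, "brand": "Contoso"},
    {"item_id": 2, "brand": "Fabrikam"},
]
sales = [
    {"item_id": 1, "quantity": 3},
    {"item_id": 1, "quantity": 2},
    {"item_id": 2, "quantity": 5},
    {"item_id": 9, "quantity": 1},  # no matching row in Item
]


def duplicate_keys(rows, key):
    """Return key values that appear more than once in rows."""
    counts = Counter(row[key] for row in rows)
    return sorted(k for k, n in counts.items() if n > 1)


def orphan_keys(many_rows, one_rows, key):
    """Return key values on the 'many' side with no match on the 'one' side."""
    known = {row[key] for row in one_rows}
    return sorted({row[key] for row in many_rows} - known)


item_duplicates = duplicate_keys(items, "item_id")
sales_orphans = orphan_keys(sales, items, "item_id")
print("Duplicate Item IDs in Item:", item_duplicates)
print("Sales Item IDs missing from Item:", sales_orphans)
```

An empty duplicates list means Item can safely sit on the "one" side. Any orphaned Item IDs are worth investigating before you build visuals on the relationship.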

Step 7: Save Your Model

Save the changes. The model is now live and supports querying across lakehouses.

Why Add Tables from Multiple Lakehouses?

- Cross-domain analysis: Connect sales with product, customer, and supply chain data in one place.

- Faster decision-making: Avoid manual joins or exports from different teams or tools.

- Cleaner architecture: No ETL or staging layers needed just to combine two sources.

- Modular modeling: Keep data domains separate but use them together when needed.

How to Verify and Use Your Direct Lake Semantic Model in Power BI

After building your Direct Lake Semantic Model in Power BI Desktop, it’s essential to verify its successful deployment in the Power BI Service. This step ensures that your model is accessible for collaboration, report creation, and further analysis within your organization.

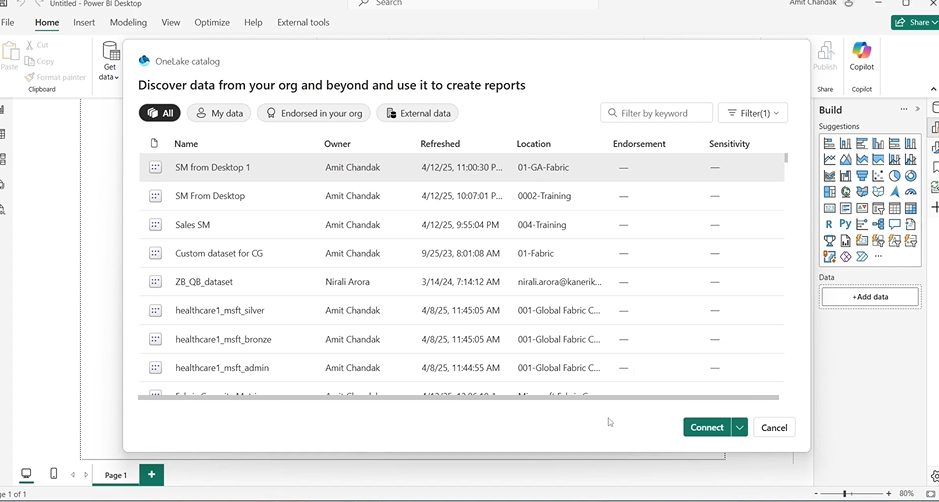

Step 1: Open Power BI Service

Navigate to Power BI Service in your web browser and sign in with your organizational credentials.

Step 2: Access Your Workspace

In the left-hand navigation pane, click on Workspaces and select the workspace where you saved your semantic model, such as 01GA fabric.

Step 3: Locate the Semantic Model

Within the workspace, filter the item list to semantic models (older tenants may still show a Datasets + Dataflows tab). Here, you should see your newly created semantic model listed, for example, SemanticModel_Desktop_01.

Step 4: Open the Semantic Model

Click on the semantic model name to open it. This action allows you to view the model’s structure, including tables, relationships, and measures.

Step 5: Create a New Report

From the semantic model view, click on Create Report. This option opens a new report canvas where you can start building visualizations using the data from your Direct Lake Semantic Model.

Step 6: Build Visualizations

In the report canvas:

- Drag fields from your model into the Values, Axis, and Legend areas to create visuals.

- Use slicers and filters to interact with your data dynamically.

- Customize visuals to suit your analytical needs.

Step 7: Save and Share the Report

Once your report is ready:

- Click on File > Save to store the report within the workspace.

- Use the Share option to distribute the report to colleagues or stakeholders, ensuring they have the necessary permissions to view it.

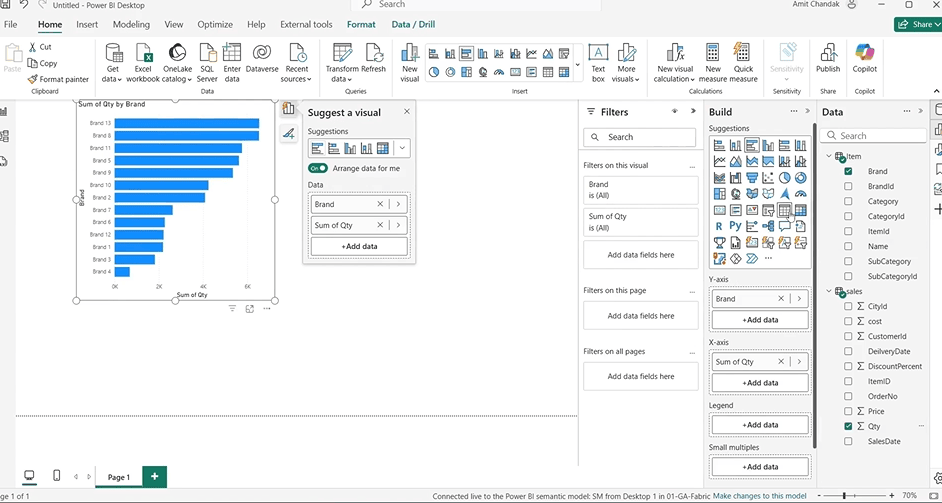

Building Reports Using Your Direct Lake Semantic Model

Once you’ve created your Direct Lake Semantic Model and connected data from one or more lakehouses, the next step is where the real magic happens — building reports.

With traditional Power BI workflows, you often had to wait for data imports, manage refresh cycles, or pre-process data to make reports possible. Now, with Direct Lake, you can query data live, right from OneLake, and build interactive visuals without delay.

Here’s how to get started with your first report, using the model you just built.

Step 1: Open a New Report

In Power BI Desktop, open a new blank report. This report will connect to the semantic model you just created rather than defining a new model from scratch.

Step 2: Connect to the Semantic Model

Go to Home → Get Data → Power BI semantic models (labeled Power BI datasets in older versions), or use the OneLake catalog if you prefer.

Look for your semantic model, named something like SemanticModel_Desktop_01.

Step 3: Choose Connection Mode

When prompted, choose the default read-only mode. This is ideal for building reports without making changes to the model itself.

Tip: If you do want to edit the model again later, use the edit mode. But for reporting only, read-only is perfect.

Step 4: Add Visuals

Start dragging fields into the canvas:

- From the Item table, drag in a column like Brand

- From Sales, drag in a metric like Quantity

Power BI will automatically detect relationships and visualize the data.

Step 5: Format and Customize

Change the visual type to suit your needs — e.g., table, bar chart, or matrix. Add filters, slicers, or formatting options to make the data easier to read.

Step 6: Save the Report

Once done, save the report as a .pbix file. You can also publish it to Power BI Service if you want to share it with others.

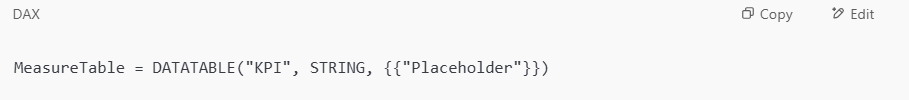

Creating a Central Measure Table in Power BI

Once your model is set up and your tables are connected, the next step is to calculate meaningful metrics — like Gross Sales, Profit, or KPIs. But instead of scattering measures across different fact tables, it’s cleaner and more scalable to store them in a dedicated measure table.

This is a common Power BI best practice. It keeps your model organized and your report-building process smoother.

Let’s walk through how to set this up — especially in Direct Lake mode, where a few traditional options (like Enter Data) aren’t available.

Step 1: Use the “New Table” Option

Since “Enter Data” is not available in Direct Lake models, go to the Home ribbon and click on New Table.

Step 2: Define a Dummy Table

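Name the table MeasureTable and use a basic table expression that creates a placeholder row, for example a DAX ROW or DATATABLE expression that returns a single column named KPI.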

You’ll now see a table named MeasureTable with one row and one column called KPI.

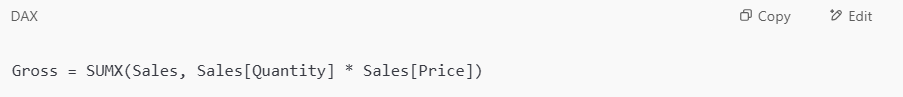

Step 3: Add a New Measure

Click on the MeasureTable, and then click New Measure.

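For example, to calculate Gross Sales, define a measure named Gross that multiplies Quantity by Price for each row of the Sales table and sums the results.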

This measure will now live inside the MeasureTable, separate from your data tables.
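The arithmetic behind a Gross Sales measure is quantity multiplied by price for each sales row, then summed. The Python sketch below illustrates that calculation. It is not DAX: the sample rows are invented, and `gross_sales` and `naive_gross` are hypothetical names used only to contrast the row-by-row calculation with a common mistake.

```python
# Hypothetical sample rows standing in for the Sales table.
sales = [
    {"quantity": 2, "price": 10.0},
    {"quantity": 1, "price": 25.0},
    {"quantity": 4, "price": 5.0},
]


def gross_sales(rows):
    """Multiply quantity by price per row, then sum the row results."""
    return sum(row["quantity"] * row["price"] for row in rows)


def naive_gross(rows):
    """Sum each column first, then multiply. This is NOT the same calculation."""
    total_quantity = sum(row["quantity"] for row in rows)
    total_price = sum(row["price"] for row in rows)
    return total_quantity * total_price


print(gross_sales(sales))  # 2*10 + 1*25 + 4*5
print(naive_gross(sales))  # (2+1+4) * (10+25+5)
```

The two functions return different totals, which is why a gross sales measure needs to iterate over rows instead of multiplying two column sums.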

Step 4: Hide the Placeholder Column

To clean things up, right-click on the KPI column and select Hide in Report View. This keeps your model tidy and focused only on usable fields.

How to Use Your Measures in Power BI Reports

Now that you’ve created a dedicated measure table and added some DAX calculations like Gross, it’s time to use those measures in your reports.

This step confirms that your Direct Lake Semantic Model is not only functional, but also ready for business use — live, fast, and accurate.

Let’s walk through how to bring your new measures into a Power BI report.

Step 1: Return to Your Open Report

Go back to the report file you created earlier that’s already connected to your semantic model.

Step 2: Refresh the Model

Click Refresh from the toolbar. This ensures any new objects — like your MeasureTable or added DAX measures — appear in the fields pane.

Step 3: Locate Your Measure Table

In the fields pane on the right, find the MeasureTable. If you hid the placeholder column earlier, you’ll only see the actual measures inside.

Step 4: Add a Measure to a Visual

Drag the measure — for example, Gross — into a visual like:

- A table

- A card

- A column chart

Combine it with dimensions from other tables (e.g., Brand from the Item table or Region from the Sales table) to get segmented insights.

Step 5: Confirm Output

Power BI should render the results immediately. Since this is Direct Lake mode, the query is sent live to OneLake — no waiting, no refresh required.

If your measure references fields that don’t exist or relationships aren’t set properly, Power BI will throw an error. Double-check your table links if anything seems off.

Real-World Example: Bringing It All Together

Let’s say you’re building a sales dashboard for your company. You’ve already connected two tables:

- Item (from one lakehouse), which includes product details like Brand and Item ID

- Sales (from another lakehouse), which includes Quantity and Price

You’ve also created a custom measure named Gross in your MeasureTable.

This measure calculates Gross Sales by multiplying quantity sold by price — row by row — and summing the result.

Now you want to see gross sales by brand. Here’s how:

- In your report, add a Table visual.

- Drag Brand (from the Item table) to the rows.

- Drag the Gross measure (from your MeasureTable) to the values.

Power BI now shows a clean breakdown of gross sales per brand — live, accurate, and instantly calculated using data from two different lakehouses. No refresh needed. No data copy. Just smart modeling.
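To make the breakdown concrete, the following Python sketch mimics what the visual computes: each Sales row follows its link to Item, and gross is totaled per brand. The rows, column names, and the `gross_by_brand` helper are illustrative assumptions. In Power BI, the relationship and the measure do this work for you.

```python
from collections import defaultdict

# Hypothetical rows: Item comes from one lakehouse, Sales from another.
items = [
    {"item_id": 1, "brand": "Contoso"},
    {"item_id": 2, "brand": "Fabrikam"},
    {"item_id": 3, "brand": "Contoso"},
]
sales = [
    {"item_id": 1, "quantity": 2, "price": 10.0},
    {"item_id": 2, "quantity": 1, "price": 25.0},
    {"item_id": 3, "quantity": 4, "price": 5.0},
    {"item_id": 1, "quantity": 1, "price": 10.0},
]


def gross_by_brand(item_rows, sales_rows):
    """Follow the many-to-one link from Sales to Item and total gross per brand."""
    brand_of = {row["item_id"]: row["brand"] for row in item_rows}
    totals = defaultdict(float)
    for row in sales_rows:
        # Assumes every sale has a matching item; an unmatched ID raises KeyError.
        brand = brand_of[row["item_id"]]
        totals[brand] += row["quantity"] * row["price"]
    return dict(totals)


result = gross_by_brand(items, sales)
for brand, gross in sorted(result.items()):
    print(f"{brand}: {gross:.2f}")
```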

Direct Lake vs Import vs DirectQuery: Which Power BI Mode Should You Use?

Power BI now supports three main data access modes — and choosing the right one can make or break your model’s performance, flexibility, and maintenance effort.

Here’s a side-by-side comparison to help you decide:

| Feature | Import Mode | DirectQuery | Direct Lake (New) |
| --- | --- | --- | --- |
| Data Refresh Needed | Yes | No | No |
| Performance | Fast (cached data) | Can be slow (live calls) | Fast (live from OneLake) |
| Multi-source Modeling | Possible but messy | Limited and rigid | Yes, built-in support |
| Transformation Support | Full via Power Query | Limited | None (for now) |
| Offline Availability | Yes | No | No |
| Data Duplication | Yes | No | No |
| Data Size Limits | File-based constraints | Backend limits apply | Limits depend on Fabric capacity size |

Key Takeaways

- Use Import Mode if you need full transformation control and don’t mind managing refreshes.

- Use DirectQuery when connecting to real-time systems like SQL Server — but expect some performance trade-offs.

- Use Direct Lake when your data is in Microsoft Fabric (OneLake) and you want speed, simplicity, and zero refresh cycles.

Direct Lake isn’t a replacement for every scenario, but it’s now the best fit for live reporting across Fabric-based storage.
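The takeaways above can be condensed into a rough rule of thumb. The Python sketch below is a deliberately simplified illustration, not an official decision procedure. The function name, its three yes/no inputs, and the order of the checks are all assumptions made for this example, and real projects weigh more factors, such as capacity, security, and data volume.

```python
def suggest_storage_mode(data_in_onelake, needs_power_query_transforms,
                         needs_live_source_queries):
    """Suggest a storage mode from three yes/no answers (simplified heuristic)."""
    if needs_power_query_transforms:
        # Full transformation control is the main reason to accept refreshes.
        return "Import"
    if data_in_onelake:
        # Data already in Fabric: read it in place.
        return "Direct Lake"
    if needs_live_source_queries:
        # Live queries against a system such as SQL Server.
        return "DirectQuery"
    return "Import"


print(suggest_storage_mode(True, False, False))
print(suggest_storage_mode(False, False, True))
print(suggest_storage_mode(True, True, False))
```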

Direct Lake Semantic Models: Real-World Scenarios

Understanding the technical “how” is important — but just as crucial is knowing when and why to use Direct Lake Semantic Models in your actual business environment.

Below are real-world examples that show how organizations across different industries are applying this feature to streamline reporting, reduce manual work, and get faster insights.

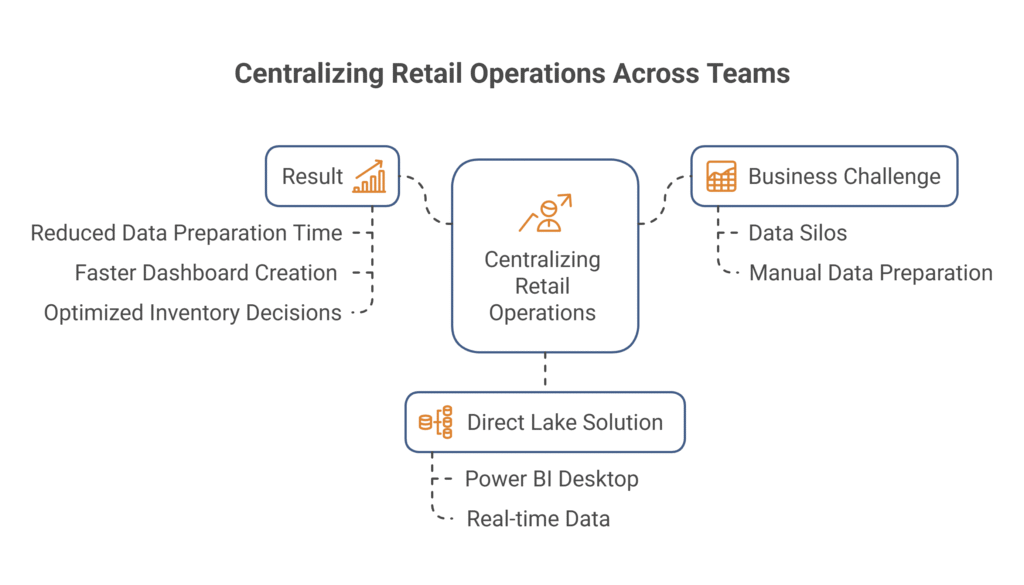

Scenario 1: Centralizing Retail Operations Across Teams

Business Challenge:

In retail, different teams manage data in silos — sales in one lakehouse, inventory in another. As a result, analysts often spend hours manually exporting, merging, and cleaning datasets before they can start reporting.

How Direct Lake Helps:

With a Direct Lake Semantic Model, both sources can be connected in Power BI Desktop — live and in one model. Analysts can track real-time sales against stock levels, flag out-of-stock issues quickly, and optimize reorder cycles without needing IT support or nightly refresh jobs.

Result:

The company reduces data preparation time, speeds up dashboard creation, and makes faster pricing and inventory decisions.

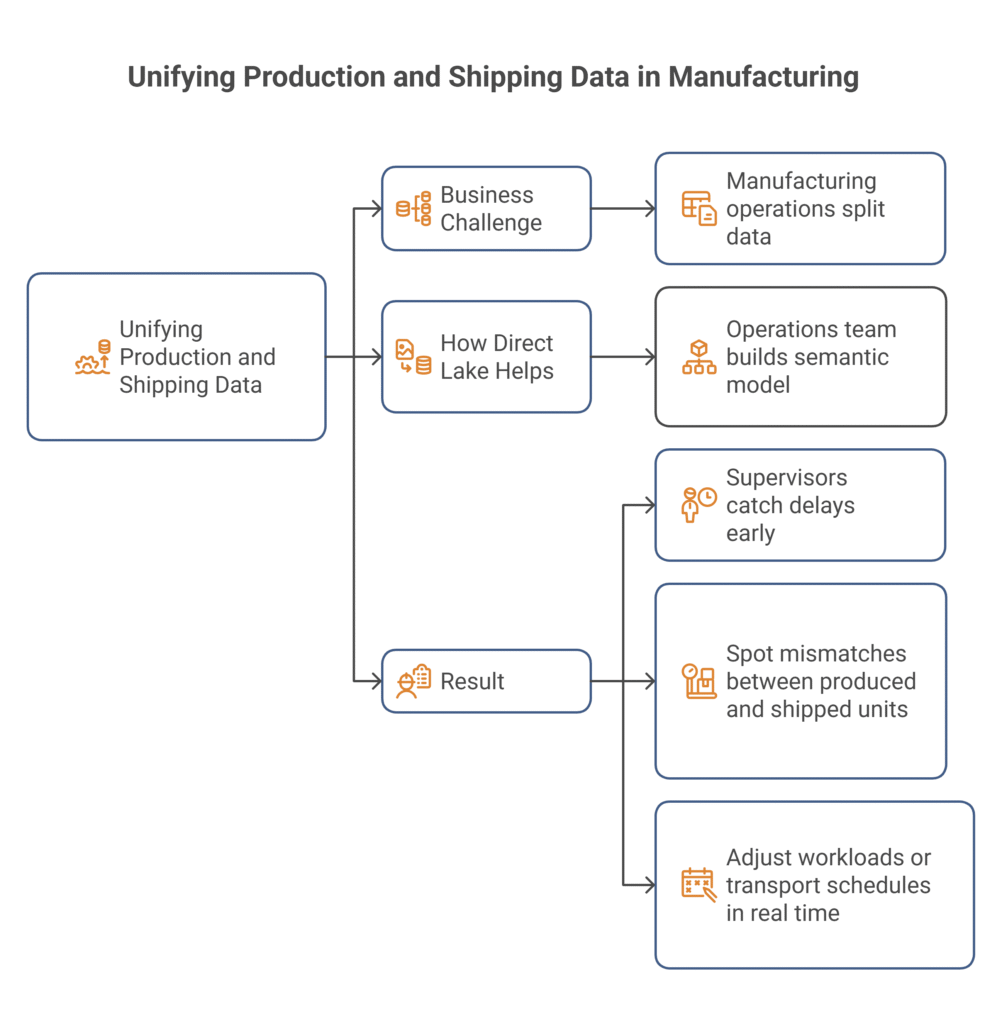

Scenario 2: Unifying Production and Shipping Data in Manufacturing

Business Challenge:

Manufacturing operations often split data between systems — production logs in a structured warehouse, and shipping/tracking details in a separate lakehouse. Visibility across this pipeline is difficult and often delayed.

How Direct Lake Helps:

By using Direct Lake mode, the operations team builds a semantic model that combines live data from both sources. They create dashboards showing production timelines next to shipping ETAs — updated instantly.

Result:

Supervisors can catch delays early, spot mismatches between produced and shipped units, and adjust workloads or transport schedules in real time.

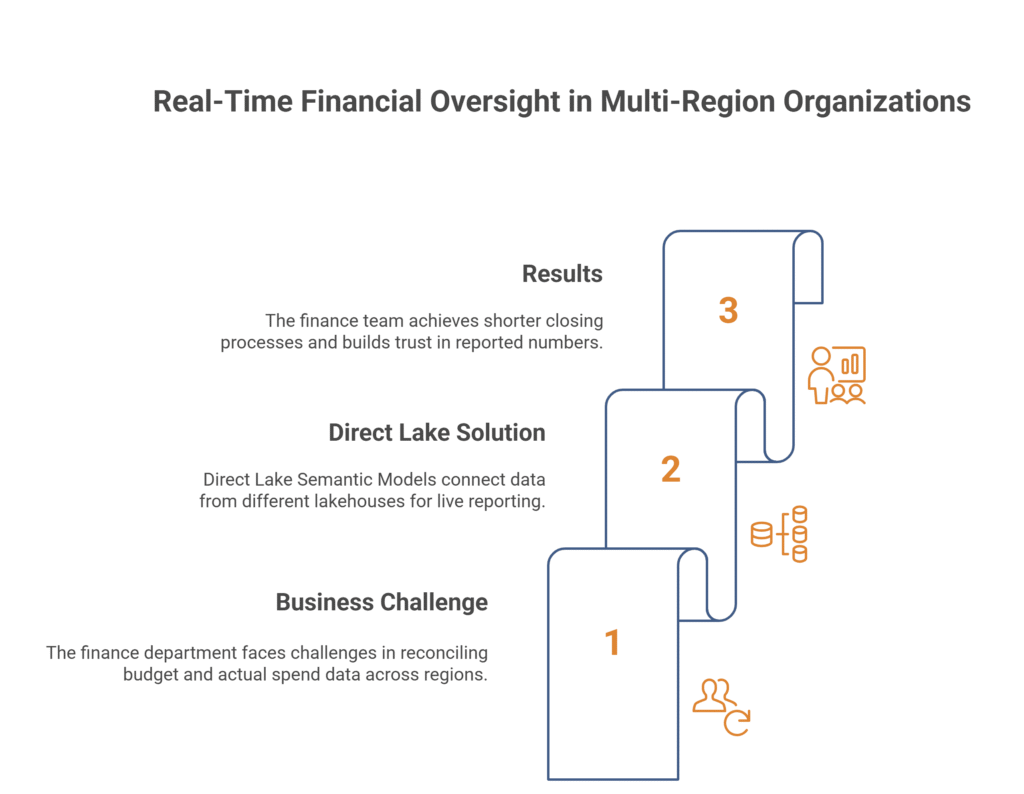

Scenario 3: Real-Time Financial Oversight in Multi-Region Organizations

Business Challenge:

The finance department of a multinational company manages budget planning in one data store and actual spend across regional teams in others. Reconciling both for reports involves offline exports, Excel hacks, and coordination between teams.

How Direct Lake Helps:

Direct Lake Semantic Models let them connect both budget and actual spend tables from different lakehouses, building a single live report that shows performance against plan, per region — without duplicating data.

Result:

The finance team shortens month-end closing processes, improves accuracy, and builds trust in the numbers being reported.

Stay Ahead of the Competition with Kanerika’s Advanced Analytics Solutions

Kanerika is a premier data and AI solutions company that helps businesses unlock the full potential of their data with cutting-edge analytics solutions. Our expertise enables organizations to extract fast, accurate, and actionable insights from their vast data estate, empowering smarter decision-making.

As a certified Microsoft Data and AI solutions partner, we leverage the power of Microsoft Fabric and Power BI to develop tailored analytics solutions that solve business challenges and optimize data operations for better efficiency, performance, and scalability.

Whether you need real-time insights, AI-driven analytics, or advanced BI capabilities, Kanerika delivers customized solutions that drive growth and innovation. Our deep expertise in data engineering, visualization, and AI ensures that your business stays ahead in an increasingly data-driven world.

Partner with Kanerika today and transform your data into a strategic advantage for long-term success!

FAQs

What is a Direct Lake semantic model?

A Direct Lake semantic model is a Power BI dataset that reads Delta tables directly from OneLake without importing data or requiring DirectQuery. It combines the performance benefits of import mode with the real-time freshness of DirectQuery by loading column data on demand into memory. This approach eliminates data duplication, reduces storage costs, and ensures reports always reflect current lakehouse data. Direct Lake semantic models are native to Microsoft Fabric and ideal for enterprise analytics at scale. Kanerika’s Fabric specialists can help you design Direct Lake models optimized for your reporting needs.
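The idea of loading column data on demand can be pictured with a toy model. The Python sketch below is a conceptual analogy only and does not represent how the Power BI engine is implemented. The `LazyColumnStore` class and the sample data are hypothetical.

```python
class LazyColumnStore:
    """Toy model: a column is read from storage only when a query first needs it."""

    def __init__(self, storage):
        self._storage = storage  # stands in for column data stored in OneLake
        self._memory = {}        # columns already loaded into memory
        self.loads = []          # names of columns loaded so far, in order

    def column(self, name):
        if name not in self._memory:
            self._memory[name] = list(self._storage[name])
            self.loads.append(name)
        return self._memory[name]

    def total(self, name):
        return sum(self.column(name))


storage = {
    "quantity": [2, 1, 4],
    "price": [10.0, 25.0, 5.0],
    "notes": ["a", "b", "c"],
}
store = LazyColumnStore(storage)
store.total("quantity")
store.total("quantity")  # second query reuses the in-memory copy
print(store.loads)
```

Only the column a query touches is loaded, and repeated queries reuse it. Columns that no report uses, such as `notes` here, never occupy memory.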

How to create a Direct Lake semantic model?

Creating a Direct Lake semantic model starts in Microsoft Fabric by navigating to your lakehouse or warehouse and selecting tables for your model. Fabric automatically generates a default semantic model when you create a lakehouse. You can customize it by adding relationships, measures, and hierarchies in the model view. Ensure your Delta tables are properly structured with appropriate data types before modeling. The semantic model inherits Direct Lake connectivity automatically when sourced from OneLake artifacts. Kanerika helps enterprises build production-ready Direct Lake semantic models with optimized DAX and governance controls.

What is the difference between Direct Lake on OneLake and Direct Lake on SQL?

Direct Lake on OneLake reads data directly from Delta Parquet files stored in your lakehouse, providing fastest query performance for analytical workloads. Direct Lake on SQL endpoint queries the SQL analytics endpoint of your lakehouse or warehouse, enabling T-SQL compatibility and row-level security enforcement. OneLake mode offers superior speed for large-scale aggregations, while SQL mode supports more complex security scenarios and relational query patterns. Choose based on your performance requirements and security architecture. Kanerika’s data architects can assess your workload to recommend the optimal Direct Lake configuration for your enterprise.

What are examples of semantic models?

Semantic models include Power BI datasets, Azure Analysis Services models, and SQL Server Analysis Services tabular models. In Microsoft Fabric, Direct Lake semantic models represent the latest evolution, connecting directly to lakehouse Delta tables. Common examples include sales analytics models with revenue measures, customer segmentation models with demographic hierarchies, and financial reporting models with time intelligence calculations. Each semantic model defines relationships, measures, and business logic that transform raw data into meaningful insights. Kanerika builds enterprise semantic models across industries, from retail analytics to healthcare reporting—connect with us to modernize your BI layer.

How to create a new semantic model?

To create a new semantic model in Microsoft Fabric, open your workspace and select New, then Semantic model. Choose your data source—lakehouse, warehouse, or external connections. Define tables, create relationships between entities, and add DAX measures for business calculations. For Direct Lake connectivity, source your model from Fabric lakehouse or warehouse artifacts. Configure refresh settings, apply row-level security rules, and publish to your workspace for report consumption. Test with Power BI Desktop before deploying to production environments. Kanerika delivers end-to-end semantic model development with performance tuning and governance best practices built in.

How to select a semantic model in Direct Lake mode?

Selecting Direct Lake mode happens automatically when your semantic model sources data from a Fabric lakehouse or warehouse. In Power BI Desktop, connect using the OneLake data hub and choose your lakehouse tables. Verify Direct Lake mode by checking the model’s storage mode property in the model view—it should display DirectLake rather than Import or DirectQuery. For existing models, you cannot switch modes; create a new model from lakehouse artifacts instead. Monitor framing behavior in Fabric capacity metrics to ensure optimal performance. Kanerika guides enterprises through Direct Lake migrations, ensuring seamless transitions from legacy import models.

What is the difference between semantic model and Direct Lake?

A semantic model is a business-friendly data structure containing tables, relationships, and measures that Power BI uses for reporting. Direct Lake is a storage mode that defines how that semantic model accesses underlying data. Traditional semantic models use Import mode (cached data) or DirectQuery (live queries). Direct Lake is a third storage mode exclusive to Microsoft Fabric that reads Delta tables directly from OneLake without importing or querying a database. Think of semantic model as the what and Direct Lake as the how. Kanerika helps organizations design semantic models with the right storage mode for their performance and freshness requirements.

Why use Direct Lake?

Direct Lake eliminates the traditional trade-off between data freshness and query performance in Power BI. Unlike Import mode, you avoid scheduled refreshes and data duplication across storage layers. Unlike DirectQuery, you get in-memory performance without database query overhead. Direct Lake semantic models automatically reflect lakehouse changes, reducing data latency from hours to minutes. This simplifies architecture, lowers storage costs, and accelerates time-to-insight for enterprise analytics. Organizations with large datasets benefit most from Direct Lake’s efficient columnar loading. Kanerika implements Direct Lake strategies that maximize Fabric capacity utilization—schedule a consultation to evaluate your use case.

What is the difference between Delta Lake and Direct Lake?

Delta Lake is an open-source storage format that adds ACID transactions, schema enforcement, and time travel to Parquet files. Direct Lake is a Microsoft Fabric connectivity mode that reads Delta Lake tables directly into Power BI semantic models. Delta Lake defines how data is stored and versioned in your lakehouse. Direct Lake defines how Power BI accesses that stored data without importing or issuing SQL queries. They work together—Direct Lake semantic models require Delta format tables in OneLake to function. Understanding both is essential for Fabric analytics architecture. Kanerika architects solutions that leverage Delta Lake storage with Direct Lake reporting for unified analytics.

How to connect semantic model to lakehouse?

Connecting a semantic model to a lakehouse in Microsoft Fabric requires selecting OneLake as your data source. Open Power BI Desktop, choose Get Data, then select Microsoft OneLake. Browse to your Fabric workspace and select the target lakehouse or its SQL analytics endpoint. Choose specific tables or views to include in your model. This connection automatically enables Direct Lake mode for optimal performance. Define relationships and measures after loading table metadata. Publish the semantic model to your Fabric workspace for report development. Kanerika streamlines lakehouse-to-semantic-model connections with standardized patterns for enterprise deployments.

How to create a semantic model from a lakehouse?

Creating a semantic model from a lakehouse begins when Fabric automatically generates a default model upon lakehouse creation. Access it through your workspace by selecting the semantic model artifact linked to your lakehouse. To build a custom model, open the lakehouse, select tables from the explorer, and choose Create semantic model. Add relationships between tables, define measures using DAX, and configure hierarchies for drill-down analysis. The resulting Direct Lake semantic model reads Delta tables without data movement. Set appropriate permissions before sharing with report developers. Kanerika delivers lakehouse-native semantic models engineered for performance and governed for enterprise compliance.

When should you use DirectQuery instead of Import mode?

Use DirectQuery when data freshness requirements demand real-time or near-real-time reporting and scheduled refreshes cannot meet business needs. DirectQuery suits scenarios where source data exceeds Power BI dataset size limits or when security must be enforced at the database level. However, DirectQuery introduces query latency and database load concerns. In Microsoft Fabric, Direct Lake often provides a better alternative—combining Import-like performance with DirectQuery-level freshness. Evaluate your data volume, refresh frequency needs, and infrastructure constraints when choosing. Kanerika assesses your analytics requirements to recommend the optimal storage mode for each use case.

What is the difference between a data model and a semantic model?

A data model describes the technical structure of data—tables, columns, data types, and relationships at the database level. A semantic model adds a business-meaning layer with friendly names, hierarchies, measures, and calculations that translate raw data into understandable metrics. Semantic models abstract complexity so business users can explore data without writing queries. In Power BI and Microsoft Fabric, semantic models serve as the analytical layer between storage and visualization. Direct Lake semantic models specifically connect this business layer directly to lakehouse Delta tables. Kanerika builds semantic models that bridge technical data structures with business intelligence needs.

Why is it called a semantic model?

The term semantic model refers to data structures that carry meaning beyond raw technical definitions. Semantic comes from the study of meaning—these models add business context like descriptive names, logical groupings, calculated measures, and defined relationships that give data significance. Microsoft adopted this terminology to distinguish business-ready analytical models from raw data models. A semantic model enables users to ask questions in business terms rather than database terminology. Direct Lake semantic models in Fabric maintain this meaningful layer while accessing lakehouse data directly. Kanerika creates semantic models that translate complex data into intuitive analytics experiences for your organization.

Why use semantic models?

Semantic models provide a single source of truth for business metrics, ensuring consistent calculations across all reports and dashboards. They abstract complex joins and transformations so business users can self-serve analytics without SQL knowledge. Semantic models enforce governance through defined measures, preventing ad-hoc calculation errors. They optimize query performance by pre-defining aggregation paths and relationships. In Microsoft Fabric, Direct Lake semantic models extend these benefits with real-time lakehouse connectivity and reduced infrastructure complexity. Organizations achieve faster insights, better data quality, and lower maintenance overhead. Kanerika develops governed semantic models that scale with your enterprise analytics demands.

What is a data lake vs lakehouse?

A data lake stores raw, unstructured, and semi-structured data in its native format without enforcing schema—flexible but challenging for analytics. A lakehouse combines data lake storage economics with data warehouse structure by adding schema enforcement, ACID transactions, and SQL query capabilities. Microsoft Fabric’s OneLake is a lakehouse architecture using Delta Lake format. Direct Lake semantic models specifically leverage lakehouse structures, reading Delta tables directly for Power BI reporting. Lakehouses enable both data science workloads and business intelligence from unified storage. Kanerika helps enterprises migrate from legacy data lakes to modern lakehouse architectures with Direct Lake-ready analytics.

Does Direct Lake support RLS?

Direct Lake semantic models support row-level security. RLS and object-level security roles defined in the semantic model are applied in Direct Lake mode; define the roles in your model and assign users through the Power BI service. If security is instead defined on the SQL analytics endpoint, a Direct Lake on SQL model falls back to DirectQuery so the endpoint can enforce those rules, which impacts performance. Monitor your capacity metrics to track fallback frequency. Kanerika implements secure Direct Lake deployments with properly architected RLS strategies; contact us for a security assessment.

How to enable Direct Lake in Power BI?

Direct Lake is automatically enabled when you create a semantic model sourced from Microsoft Fabric lakehouse or warehouse artifacts. No manual toggle exists—the storage mode is determined by your data source connection. Ensure you have Fabric capacity (F64 or higher for production workloads) and appropriate workspace permissions. Connect through OneLake data hub in Power BI Desktop or create models directly in Fabric workspace. Verify Direct Lake is active by checking the storage mode property in model view. Legacy Power BI Pro workspaces cannot use Direct Lake; migration to Fabric is required. Kanerika accelerates Fabric adoption with Direct Lake enablement roadmaps tailored to your infrastructure.