Data migration in 2026 looks nothing like it did three years ago. Teams that used to plan one big cutover now run continuous migration programs across multiple clouds. AI assists the planning. Fabric platforms replaced siloed warehouses. Streaming replication replaced overnight batch jobs. Security and governance are designed in from day one instead of bolted on at cutover.

Gartner still finds 83% of data migration projects fail or exceed budgets, which tells you the work is harder than the tooling makes it look. The shift in 2026 is structural. Migration is no longer a project to finish. It’s a capability to run, and the companies treating it that way are pulling ahead.

This article covers the top 10 data migration trends shaping 2026 and includes a readiness scorecard you can run in ten minutes.

Key Takeaways

- AI is now used for migration planning, dependency mapping, and code conversion, not autonomous execution. The “set and forget” pitch lost credibility in 2025.

- Cloud-native fabric and lakehouse platforms (Microsoft Fabric, Databricks, Snowflake) are replacing siloed warehouses as the default migration target, with cloud-to-cloud projects now outnumbering on-prem-to-cloud.

- Real-time streaming and zero-downtime cutover replaced batch transfers as the default for customer-facing systems.

- Security, governance, and compliance are designed in from day one rather than bolted on at cutover.

- Enterprises treating migration as a long-term capability are increasingly using accelerators like Kanerika FLIP to standardize execution, reduce risk, and speed up delivery.

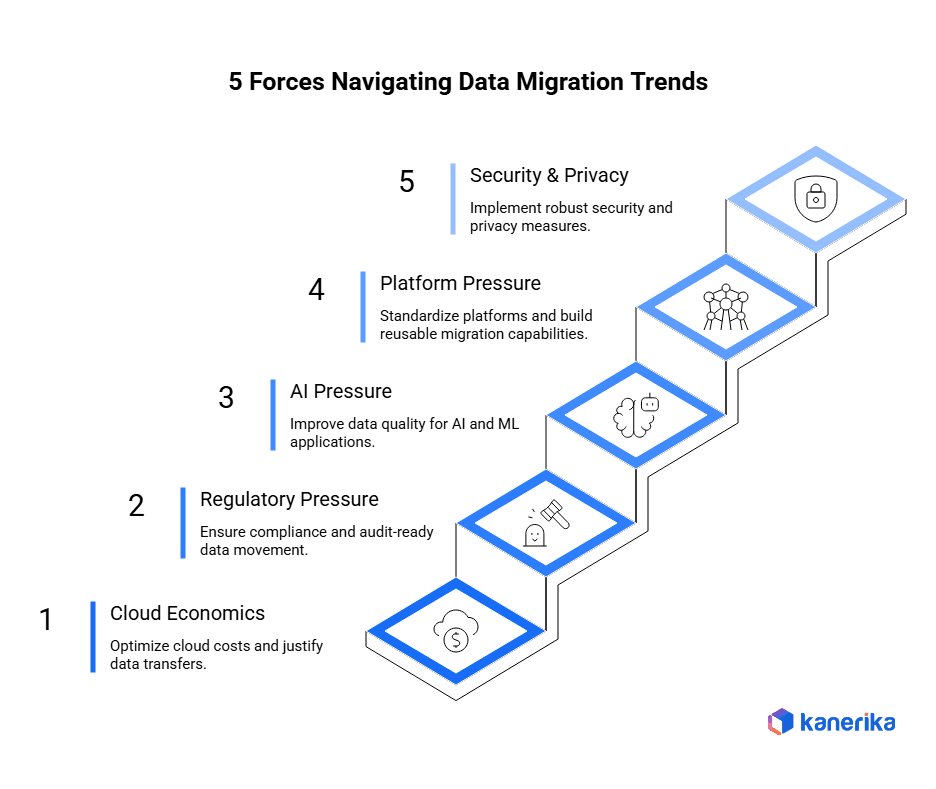

Trend Radar: 5 Forces Reshaping Data Migration Decisions

Five forces are converging on enterprise migration programs in 2026, and they’re changing how teams plan, sequence, and run the work. Each one creates pressure that older migration playbooks don’t handle well.

1. Cloud Economics Constraints

Cloud bills got large enough in 2025 that finance teams now have a permanent seat at the migration table. FinOps governance demands a clear answer to which workloads move to cloud, which stay on premises, and what each move actually costs.

What this looks like in practice:

- Migration plans without a TCO model attached no longer get signed off

- Sequencing follows financial impact rather than technical convenience

- Cloud waste from poorly planned migrations gets flagged before the next wave starts

- Workloads that don’t justify cloud economics stay on premises or get retired

2. Regulatory Requirements

Regulators want audit-ready data movement with complete traceability, and the bar keeps rising. Manual audit trails can’t keep up anymore. Compliance by default, automated and embedded in the pipeline, has become the operating standard.

The frameworks driving this in 2026:

- GDPR requires documented evidence of where personal data travels

- SOX mandates financial data lineage across migration boundaries

- HIPAA expects proof of protection throughout the migration lifecycle

- DORA raises the bar on operational resilience and incident reporting in financial services

- Industry regulators expect automated tracking, not retrospective audits

3. AI Expectations

AI workloads are exposing data quality issues that older systems hid. Models trained on dirty or poorly classified data fail in production, and leadership teams chasing AI use cases are discovering that the data isn’t ready. Migration is now the cheapest moment to fix that.

What this changes about migration scope:

- Data quality cleanup moves from post-migration to pre-migration

- Sensitive data classification happens during migration, not after

- Metadata enrichment becomes a core deliverable rather than a follow-on

- Vector embeddings and AI-specific structures are designed into the target from day one

- Governance includes AI-specific policies (training data lineage, model access controls)

4. Platform Standardization

Enterprises are consolidating onto fewer platforms to reduce complexity and tool sprawl. Microsoft Fabric, Databricks, and Snowflake are absorbing workloads that used to live across three or four systems. Standardization makes migration repeatable, but it also means teams migrate to the same target multiple times across different business units.

What standardization enables:

- Reusable migration frameworks across business units

- Consistent governance applied to every migration regardless of owner

- Lower cost per migration because the second one reuses the first

- Faster onboarding for new teams running their first migration

- Centralized expertise rather than scattered tribal knowledge

5. Security and Privacy Risk

Migration windows are now considered a high-risk attack surface. Cyber insurance policies ask migration-specific questions before renewal. Breaches during transitions are expensive, and regulators no longer accept “we were in the middle of a migration” as an explanation.

What’s changed in security posture:

- Lineage and access logs capture every transformation automatically

- Security teams are in design reviews from day one, not at cutover

- Encryption in transit and at rest is mandatory, not a discussion point

- Zero-trust architectures shape how migration pipelines authenticate

- Data loss prevention rules are embedded in the pipeline itself

Trend #1: AI Now Assists the Planning

The “fully autonomous migration” pitch from 2024 is dead. Vendors who promised set-and-forget AI lost credibility after enough production failures, and 2026 buyers are skeptical of any tool that claims it can do the whole migration without human approval. What replaced it is more useful and more honest. AI assists with planning and humans approve the critical calls.

The shift matters because the bottleneck in most migrations isn’t execution speed. It’s the upfront discovery, dependency mapping, and impact analysis that takes months when done manually. AI compresses that work to days, but only when humans review the output before it drives a decision.

Where AI is actually earning its keep in 2026:

- Dependency discovery across legacy systems where documentation is missing or wrong

- Impact analysis on schema changes before they hit downstream systems

- First-draft documentation that humans clean up rather than write from scratch

- Code conversion for SQL dialects, ETL workflows, and report logic

- Anomaly detection during reconciliation, flagging records that need human review

The teams getting the most out of AI migration tools treat them like a senior analyst with no judgment. The output is fast and mostly right, but nothing ships without review. That posture produces better results than either fully manual planning or fully autonomous execution.
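Dependency discovery and impact analysis both come down to walking a dependency graph. The sketch below is a minimal illustration, not how any particular AI tool works: it assumes a tool has already produced a map of which objects read from which, and every table and report name in it is hypothetical. A human would still review both outputs before they drive a sequencing decision.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each object lists the objects it reads from.
DEPENDS_ON = {
    "raw_orders": set(),
    "raw_customers": set(),
    "stg_orders": {"raw_orders"},
    "dim_customer": {"raw_customers"},
    "fact_sales": {"stg_orders", "dim_customer"},
    "sales_report": {"fact_sales"},
}


def migration_order(depends_on):
    """Return an order in which every object follows its dependencies."""
    return list(TopologicalSorter(depends_on).static_order())


def impacted_by(changed, depends_on):
    """Return every object that directly or transitively reads from `changed`."""
    impacted = set()
    frontier = [changed]
    while frontier:
        current = frontier.pop()
        for obj, deps in depends_on.items():
            if current in deps and obj not in impacted:
                impacted.add(obj)
                frontier.append(obj)
    return impacted


order = migration_order(DEPENDS_ON)
blast_radius = impacted_by("raw_orders", DEPENDS_ON)
```

Here `order` gives a safe migration sequence and `blast_radius` lists what a schema change on `raw_orders` would touch downstream.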

Trend #2: Fabric and Lakehouse Platforms Replace Siloed Warehouses

The default migration target changed in 2026. Five years ago, the answer was “move to a cloud data warehouse.” Today, it’s “move to a unified analytics platform that combines warehouse, lakehouse, streaming, and governance in one place.”

Microsoft Fabric, Databricks, and Snowflake all converged on this model. The driver is that enterprises got tired of stitching together separate systems for batch warehousing, real-time analytics, ML feature stores, and governance. Each integration point was a failure mode. Each tool had its own access model, its own metadata, and its own bill.

What’s pulling migrations toward fabric platforms in 2026:

- One copy of the data serves warehousing, lakehouse, real-time, and AI workloads

- Native governance through tools like Microsoft Purview removes the need for a separate catalog

- Built-in semantic layers reduce the metric-mismatch problem that plagues migrated reporting

- Pricing models are unified, which makes FinOps tracking easier

- AI workloads run on the same platform as the data, not in a separate environment

The complication is that the migration target is now a moving target. Fabric, Databricks, and Snowflake all release new capabilities quarterly. Teams that lock their migration design too early end up rebuilding pieces of it before they go live.

Trend #3: Cloud-to-Cloud Migrations Now Outnumber On-Prem-to-Cloud

The default migration in 2026 is cloud-to-cloud, not on-prem-to-cloud. Most large enterprises did their initial cloud migration between 2018 and 2023, and now they’re moving between clouds, between regions, between platforms within the same vendor, or between data warehouse generations.

The drivers are different from the original wave. Cost optimization pushes workloads from one hyperscaler to another. Vendor consolidation programs collapse three cloud accounts into one. Modernization pulls workloads off first-generation cloud warehouses (Redshift, Synapse, BigQuery v1) onto lakehouse platforms.

Where cloud-to-cloud migrations are most active in 2026:

- Redshift or Synapse onto Snowflake, Databricks, or Microsoft Fabric

- Multi-region consolidation driven by data sovereignty rules

- Hyperscaler-to-hyperscaler shifts driven by AI workload pricing

- ETL platform consolidation (Informatica or Talend onto cloud-native equivalents)

- BI tool migration (Tableau, Cognos, or Crystal Reports onto Power BI)

The discipline these projects require is different. Source systems are documented, APIs exist, and tooling is mature. The hard part is not extraction. It’s reconciliation, semantic alignment, and avoiding the trap of carrying old design decisions into the new platform unchanged.

Trend #4: Real-Time Streaming Replaces Batch Cutover

Big-bang cutover with overnight batch transfers is dying for customer-facing systems. Most enterprise migrations in 2026 use change data capture (CDC) and streaming replication to keep source and target in sync during the migration window, then cut over with minimal downtime.

The reason is that the business no longer tolerates the migration weekend. Banks can’t take their core systems offline. Healthcare providers can’t pause patient records. E-commerce can’t be down during a promotion. Streaming-based cutover keeps both systems alive, lets traffic shift gradually, and reduces the cost of mistakes.

What modern cutover looks like in 2026:

- CDC streams capture changes on the source system as they happen

- Target system stays in sync within seconds of the source during the migration window

- Reconciliation runs continuously, not just at the end

- Traffic shifts in stages (blue-green, canary, or shadow modes)

- Rollback is fast because the source system is still live and current

The trade-off is operational complexity. Streaming cutover requires more infrastructure, more monitoring, and more test cycles than a batch approach. The teams that pull it off either invest heavily in observability or partner with someone who already has the playbook.
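The core loop behind streaming cutover can be sketched in a few lines: apply each change event to the target, then reconcile source and target continuously instead of once at the end. This is a toy, in-memory illustration with plain dictionaries standing in for tables. The event shape (`op`, `key`, `row`) and all sample rows are assumptions for the example, not the format of any specific CDC tool.

```python
import hashlib


def apply_change(target, event):
    """Apply one CDC event (insert, update, or delete) to the target table."""
    if event["op"] == "delete":
        target.pop(event["key"], None)
    else:  # insert and update are both treated as upserts
        target[event["key"]] = event["row"]


def row_digest(row):
    """Stable fingerprint of a row, independent of column order."""
    canonical = repr(sorted(row.items()))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def reconcile(source, target):
    """Return keys that are missing, extra, or different on the target."""
    mismatched = set(source.keys() ^ target.keys())
    for key in source.keys() & target.keys():
        if row_digest(source[key]) != row_digest(target[key]):
            mismatched.add(key)
    return mismatched


# Hypothetical current state of the source system.
source = {
    1: {"name": "Ada", "tier": "gold"},
    2: {"name": "Lin", "tier": "silver"},
}
# Hypothetical change stream that led to that state.
events = [
    {"op": "insert", "key": 1, "row": {"name": "Ada", "tier": "gold"}},
    {"op": "insert", "key": 2, "row": {"name": "Lin", "tier": "basic"}},
    {"op": "insert", "key": 3, "row": {"name": "Tmp", "tier": "basic"}},
    {"op": "update", "key": 2, "row": {"name": "Lin", "tier": "silver"}},
    {"op": "delete", "key": 3},
]

target = {}
for event in events[:3]:
    apply_change(target, event)
lagging = reconcile(source, target)    # target has not caught up yet

for event in events[3:]:
    apply_change(target, event)
in_sync = reconcile(source, target)    # empty once the stream is drained
```

A non-empty result from `reconcile` mid-stream is expected while the target lags; the signal worth alerting on is a mismatch set that does not shrink as the stream drains.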

Trend #5: Security and Compliance Are Designed In

Security used to be a checklist at the end of a migration. In 2026, security and compliance teams are in design reviews from the start. The shift is partly regulatory pressure and partly the cost of getting it wrong.

GDPR, HIPAA, SOX, and DORA all expect documented evidence throughout the migration lifecycle. SOC 2 audits look for proof that data was protected during transit, not just at rest in the new system. Cyber insurance policies now ask migration-specific questions before renewal. For a large enterprise, the cost of bolting security on after the fact can run into the millions.

What “designed in” actually means in practice:

- Encryption in transit and at rest is mandatory, not a question

- Zero-trust architectures shape how migration pipelines authenticate

- Data loss prevention rules are embedded in the pipeline, not applied after

- Lineage and access logs capture every transformation automatically

- Compliance officers participate in design reviews, not just final audits

Programs that build governance in from day one spend less on remediation and clear audit reviews faster. The ones that retrofit it after cutover usually discover gaps during the first compliance audit and spend the next two quarters fixing them.

Trend #6: Embedded Governance Replaces Bolt-On Catalogs

Data governance used to live in a separate tool. You ran the migration, then you bought a catalog, mapped the lineage manually, and hoped someone kept it up to date. By 2026, that pattern is being abandoned in favor of governance baked into the migration pipeline itself.

The driver is that governance only works when it’s automatic. A catalog that gets updated quarterly is a catalog nobody trusts. Lineage that requires manual curation falls behind the first time someone changes a pipeline without telling the data steward. Access rules that live outside the platform get forgotten the first time a new user is provisioned.

What embedded governance looks like in 2026:

- Lineage is captured automatically as data moves, not entered manually

- Access policies are enforced at query time through the platform itself

- Data classification is applied at ingestion, not retrofitted

- Catalog updates trigger automatically when schemas change

- Governance tools like Microsoft Purview integrate directly with migration pipelines

Tools matter less than the pattern. The teams getting governance right treat it as part of the migration deliverable, not a follow-on project. If governance ships after cutover, it usually never catches up.
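Classification at ingestion can start as simply as tagging columns by rule the moment a table lands. The sketch below is a deliberately naive illustration: the name patterns, the tag vocabulary (`restricted`, `pii`, `internal`), and the column names are all assumptions for the example, and real classifiers usually inspect values as well as names.

```python
import re

# Hypothetical rules: column-name patterns mapped to a sensitivity tag.
# Earlier rules win, so the most restrictive patterns come first.
RULES = [
    (re.compile(r"ssn|social_security", re.IGNORECASE), "restricted"),
    (re.compile(r"email|phone|birth", re.IGNORECASE), "pii"),
]


def classify_columns(columns, rules=RULES, default="internal"):
    """Tag each column with the first matching rule, or the default tag."""
    tags = {}
    for column in columns:
        tags[column] = next(
            (tag for pattern, tag in rules if pattern.search(column)),
            default,
        )
    return tags


tags = classify_columns(["customer_email", "SSN", "order_total"])
```

Running the same function whenever a schema change is detected is one way to keep tags current without a manual curation step.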

Trend #7: AI Readiness Becomes the Real Goal of Migration

Migration in 2026 is rarely just about modernizing infrastructure. The actual driver in most enterprise programs is AI readiness. Leadership wants the new platform because the old one can’t support the AI use cases the business is asking for, and the data quality problems that AI exposes can’t be fixed without restructuring the underlying data estate.

The shift means migration scope expands. You’re not just moving data. You’re cleaning it, normalizing it, classifying it, and making sure it’s structured the way an AI model needs it to be. Teams that ignore this end up with a working migration that fails the first AI project that touches it.

What AI-ready migration looks like in 2026:

- Data quality is fixed before migration, not after

- Sensitive data is classified and tagged so AI training respects it

- Vector embeddings and semantic search are designed into the platform from day one

- Metadata is rich enough that an AI agent can understand what fields mean

- Governance includes AI-specific policies (training data lineage, model access controls)

The harder truth is that most enterprises don’t discover their data isn’t AI-ready until they try to use it for AI. Migration is the cheapest moment to fix that. After cutover, the cleanup costs multiply.

Trend #8: FinOps Decides What Migrates First

Through 2024 and most of 2025, migration sequencing was a technical decision. Easiest workloads first, hardest last, dependencies mapped on a Gantt chart. In 2026, finance teams are in the room before sequencing is finalized, and they’re often changing the order.

Cloud bills got large enough that CFOs care about them line by line. A migration plan without a TCO model attached doesn’t get approved anymore. Once you start modeling the financial impact of each wave, the sequence often inverts. Expensive legacy systems that nobody wanted to touch first become priority one because they’re costing seven figures a year.

A FinOps-aware migration plan now includes:

- Cost-per-wave modeled before kickoff (data transfer, storage, compute, licensing)

- Guardrails set per pipeline and per job, with real-time alerts on threshold breaches

- Unit economics tracked across the program (cost-per-gigabyte-migrated, cost-per-table-converted)

- Spend attributed to the business unit that owns the workload

- ROI calculated for each migration wave, sequencing based on financial impact

A pattern we see often: the workload finance flags as expensive has 30 to 40% of its cost tied up in licensing for tools the new platform replaces natively. Modeling that swap before sequencing pulls a previously bottom-of-list workload into the first wave.
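The cost-per-wave and ROI modeling described above can be sketched as a small calculation. Everything in this example is an assumption for illustration: the wave names, the dollar figures, and the choice of a simple first-year ROI as the ranking metric. A real TCO model would carry more cost lines and a longer horizon.

```python
from dataclasses import dataclass


@dataclass
class Wave:
    name: str
    data_gb: float
    transfer: float        # one-off migration costs, by category
    storage: float
    compute: float
    licensing: float
    annual_savings: float  # expected yearly run-cost reduction after the move

    @property
    def cost(self):
        return self.transfer + self.storage + self.compute + self.licensing

    @property
    def cost_per_gb(self):
        return self.cost / self.data_gb

    @property
    def first_year_roi(self):
        return (self.annual_savings - self.cost) / self.cost


def sequence_by_roi(waves):
    """Order waves so the highest first-year ROI migrates first."""
    return sorted(waves, key=lambda w: w.first_year_roi, reverse=True)


# Hypothetical waves with made-up figures.
waves = [
    Wave("crm_reporting", 500, 2_000, 3_000, 10_000, 5_000, 30_000),
    Wave("legacy_erp", 4_000, 20_000, 30_000, 100_000, 50_000, 600_000),
    Wave("marketing_mart", 1_000, 5_000, 5_000, 20_000, 10_000, 50_000),
]
plan = [w.name for w in sequence_by_roi(waves)]
```

With these made-up numbers the expensive legacy ERP workload moves to the front of the plan, which is the inversion the paragraph above describes.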

Trend #9: Migration Observability Becomes Standard

The shift from blind migration to monitored migration is happening across enterprise programs in 2026. The pattern is familiar to anyone who watched application performance monitoring become standard a decade ago. The difference is that migration observability is operational, not retrospective.

The control loop is straightforward. Track throughput, transformation success rates, validation failures, and reconciliation status in real time. Detect data drift the moment a source system changes mid-migration. Trigger alerts on threshold breaches before issues compound. Surface a single dashboard for executives so they stop demanding status meetings.

What migration observability covers in practice:

- Real-time throughput, error rates, and reconciliation status

- Data drift detection on source systems during the migration window

- Automated alerts on SLO breaches before they cascade

- Predictive completion forecasts based on current velocity

- Audit trails that double as compliance evidence
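Three of the checks in this list are simple enough to sketch directly: threshold alerts, schema drift detection, and a completion forecast from current velocity. The metric names, limits, and schemas below are hypothetical, and the forecast is a naive linear estimate rather than anything a production observability tool would rely on alone.

```python
def check_thresholds(metrics, limits):
    """Return the names of metrics that exceed their configured limit."""
    return sorted(
        name for name, limit in limits.items()
        if metrics.get(name, 0.0) > limit
    )


def detect_schema_drift(baseline, current):
    """Compare two {column: type} snapshots of a source table."""
    return {
        "added": sorted(current.keys() - baseline.keys()),
        "removed": sorted(baseline.keys() - current.keys()),
        "retyped": sorted(
            col for col in baseline.keys() & current.keys()
            if baseline[col] != current[col]
        ),
    }


def forecast_hours_remaining(rows_total, rows_done, rows_per_hour):
    """Estimate hours to completion at current velocity; None if stalled."""
    if rows_per_hour <= 0:
        return None
    return max(rows_total - rows_done, 0) / rows_per_hour


# Hypothetical readings from a running migration.
breaches = check_thresholds(
    {"error_rate": 0.03, "reconciliation_mismatch_rate": 0.001},
    {"error_rate": 0.01, "reconciliation_mismatch_rate": 0.005},
)
drift = detect_schema_drift(
    {"id": "int", "email": "varchar", "signup": "date"},
    {"id": "bigint", "email": "varchar", "region": "varchar"},
)
hours_left = forecast_hours_remaining(1_000_000, 400_000, 150_000)
```

In practice these checks would run on a schedule against live pipeline metrics, with `breaches` and `drift` feeding the alerting channel.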

The real value is operational, not informational. Teams adjust strategy mid-flight when reconciliation rates drop, instead of discovering the problem at UAT. In FLIP-led migrations, observability is built into the pipeline rather than added on top, which cuts the time to detect data drift from days to under an hour.

Trend #10: The Migration Factory Model Goes Mainstream

Migration factories in 2026 are no longer a McKinsey diagram. Mid-market and large enterprises with 20 or more migrations a year are operationalizing the model and treating migration as a productized capability rather than a series of one-off projects.

The core idea is industrialization. The recurring components of every migration become reusable assets. You build them once, maintain them centrally, and deploy them per project. The factory doesn’t reduce the expertise required. It concentrates that expertise in fewer people who run more migrations more reliably.

What sits inside a migration factory:

- Reusable templates for common patterns (database-to-cloud, BI consolidation, ETL modernization)

- Pre-configured validation packs with quality gates already wired up

- A shared governance model so every migration follows the same approval path

- Scheduled release windows with documented rollback playbooks

- A center of excellence that owns the institutional knowledge and onboards new teams

Any organization with serious migration volume will eventually build something resembling this. The question is whether you build it in-house, buy it as a platform, or partner with someone who already runs one. Kanerika’s FLIP is structured exactly this way: accelerators, validation packs, and rollback procedures maintained as a product with versioning and a release cycle, which is why the second migration costs less than the first and the tenth costs a fraction of either.

A Readiness Scorecard You Can Run in 10 Minutes

Score each area on a 0 to 3 scale. 0 means ad-hoc, 1 means inconsistent, 2 means standardized, 3 means measured and improving.

| Capability area | What “3” looks like |
|---|---|
| AI in planning | AI-assisted dependency discovery, impact analysis, and code conversion with human review gates |
| Target platform | Migration target is a unified fabric or lakehouse platform, not a siloed warehouse |
| Multi-cloud readiness | Migration tooling is portable across at least two clouds |
| Real-time cutover | CDC and streaming replication used for customer-facing systems |
| Security by design | Encryption, zero-trust, and DLP designed in from day one |
| Embedded governance | Lineage, classification, and access controls integrated into the pipeline |
| AI-readiness | Data is cleaned, classified, and structured for AI before migration |
| FinOps integration | Cost-per-wave modeled before kickoff, guardrails enforced |
| Observability | Real-time dashboards, drift detection, and reconciliation alerts |
| Factory model | Reusable templates, validation packs, and shared governance |

Total your score out of 30. Most enterprises we see come in between 10 and 18 on first measurement. Anything below 15 means migration is still being run as a project. Anything above 22 means you have a working capability and the question shifts to scaling it.

The diagnostic value isn’t the score. It’s seeing which two areas drag the average down. Those are the investment priorities for the next two quarters.
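If you want to tally the scorecard in a script rather than on paper, a minimal sketch looks like this. The area names come from the table above; the sample scores are invented for illustration.

```python
AREAS = [
    "AI in planning", "Target platform", "Multi-cloud readiness",
    "Real-time cutover", "Security by design", "Embedded governance",
    "AI-readiness", "FinOps integration", "Observability", "Factory model",
]


def summarize(scores):
    """Return the total out of 30 and the two lowest-scoring areas."""
    missing = [area for area in AREAS if area not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    for area in AREAS:
        if scores[area] not in (0, 1, 2, 3):
            raise ValueError(f"{area}: score must be 0, 1, 2, or 3")
    total = sum(scores[area] for area in AREAS)
    # sorted() is stable, so ties keep the table's order.
    weakest = sorted(AREAS, key=lambda area: scores[area])[:2]
    return total, weakest


# Invented sample scores, in the same order as AREAS.
sample = dict(zip(AREAS, [2, 3, 1, 2, 2, 0, 2, 2, 2, 2]))
total, weakest = summarize(sample)
```

For this sample, `total` is 18 and `weakest` names embedded governance and multi-cloud readiness as the two areas to prioritize.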

How Kanerika Approaches Modern Migrations

Kanerika runs enterprise migration programs across Microsoft Fabric, Databricks, Snowflake, and Power BI ecosystems. Delivery centers on FLIP, a migration accelerator that bundles automation, validation, governance, and observability into a single platform.

Supported migration paths include Tableau to Power BI, Crystal Reports to Power BI, SSRS to Power BI, SQL Services to Microsoft Fabric, Cognos to Power BI, Informatica to Talend, Informatica to Microsoft Fabric, Informatica to Databricks, Alteryx to Microsoft Fabric, and Azure to Microsoft Fabric. The Azure to Fabric Migration Accelerator is generally available as a Microsoft Fabric workload.

Most of what’s in this article reflects patterns we see directly in delivery. The trends are real because the failure modes that drive them are real. If you’re planning a migration program for 2026 or auditing one already in flight, we offer a structured readiness assessment that maps directly to the scorecard above.

Case Study: Crystal Reports to Power BI Migration for a Clinical Lab Organization

A leading clinical lab organization running on SAP Crystal Reports needed to modernize before end-of-support cut off their reporting continuity. The legacy reporting stack was holding back analytics adoption, slowing decisions, and creating compliance risk in a regulated business environment.

Challenges:

- SAP Crystal Reports 2016 reached end of support in December 2024, with no extended path forward.

- Hundreds of operational and clinical reports built up over a decade, many undocumented and tightly coupled to legacy schemas.

- Manual report rewrites would have taken months and risked reporting downtime during regulated business cycles.

- Clinical analysts needed governed self-service BI on the new platform, not just a like-for-like rebuild.

Solutions:

- Used Kanerika’s FLIP migration accelerator to automate conversion of report logic, calculations, and data sources into Power BI on Microsoft Fabric.

- Built a phased migration approach with parallel running, keeping legacy and new reports in sync until cutover.

- Implemented automated validation packs to reconcile report outputs row by row and catch logic mismatches before go-live.

- Designed the Power BI semantic model from day one so downstream metrics stayed consistent across clinical and operational teams.

Results:

- Foundation in place for self-service BI and AI-driven analytics on Microsoft Fabric.

- 80% reduction in report preparation effort on the new platform.

- Zero reporting downtime during cutover, even in regulated business cycles.

- Faster analytics adoption across clinical and operational teams within the first quarter post-migration.

Wrapping Up

Migration in 2026 is less about tools and more about how the work is organized. The teams getting it right run migration as a permanent capability, use AI for planning rather than execution, target unified fabric platforms, build security and governance in from day one, and treat each migration as a wave in a longer program rather than a one-off project. The trends above aren’t predictions. They’re descriptions of what already separates the teams shipping reliably from the teams paying the rework tax.

Frequently Asked Questions

How is AI changing data migration in 2026?

AI is mostly used for planning where humans verify the output, not for end-to-end automation. The high-value use cases are dependency discovery across legacy systems, impact analysis on schema changes, code conversion for SQL and ETL workflows, anomaly detection during reconciliation, and first-draft documentation. Vendors who promised fully autonomous migration in 2024 lost credibility. The pattern that’s working is AI as planning assistance with human approval gates on critical decisions.

Why are migration strategies different in 2026 than in 2025?

Three specific changes. Compliance teams now participate in design reviews rather than reviewing at the end. FinOps modeling is required before sequencing decisions get made. AI readiness, not just infrastructure modernization, is the actual driver in most enterprise programs. Lift-and-shift is rare. Phased, governance-first migrations with continuous validation are the default.

How does security shape modern data migration?

Security teams are involved from day one rather than reviewing at cutover. Encryption in transit and at rest is mandatory. Zero-trust architectures shape how migration pipelines are designed. DLP rules are embedded in the pipeline rather than applied after. Compliance frameworks like GDPR, HIPAA, and SOC 2 require documented evidence throughout the migration lifecycle, not just at endpoints.

What role does scalability play in modern migration?

Data volumes are growing faster than migration windows are expanding. Scalable architectures let teams handle terabytes or petabytes without proportional resource growth. Cloud-native platforms like Microsoft Fabric and Databricks scale elastically during peak load and scale down to control cost. Programs that don’t plan for scale hit performance walls and miss cutover windows.

Why is governance central to migration decisions now?

Regulatory penalties and reputational risk now exceed the cost of building proper controls. Organizations need demonstrable lineage, access control, and audit trails throughout the migration. Tools like Microsoft Purview integrate governance into the pipeline so policies are enforced automatically. Programs that bolt on governance after the fact spend significantly more on remediation than programs that build it in from the start.

What is a migration factory and why is the model spreading?

A migration factory is a standardized, repeatable framework that treats migration as a product line rather than a series of one-off projects. It includes pre-built templates, automation, reusable validation packs, and a shared governance model. The model spreads because enterprises with hundreds of legacy applications can’t migrate them one bespoke project at a time. Factories also reduce dependency on scarce specialist skills by codifying expertise into reusable components.

How should an enterprise prepare for the next wave of migration trends?

Build modular, cloud-native architectures that adapt as platforms evolve. Develop internal capability around AI-assisted planning tools. Stand up a governance framework now rather than retrofitting one. Evaluate partners on platform expertise across Microsoft Fabric, Databricks, and Snowflake. Run regular data audits to identify migration candidates before technical debt makes them harder.

What’s the most reliable approach to a complex migration?

Discovery first, then phased execution with continuous validation. Start with data profiling to understand volumes, dependencies, and quality issues. Define SLOs and acceptance criteria before moving anything. Migrate in waves with operational checkpoints. Validate continuously rather than at the end. Build the rollback playbook before you need it.