As organizations modernize legacy systems and move to cloud or hybrid environments, data migration is no longer a one-time technical task. Failed or delayed migrations have made it clear that success depends on structure, not just tools. This is where a data migration framework becomes essential: it provides a repeatable, governed approach to planning, executing, and validating data movement across systems while minimizing risk and disruption.

The need for structure is backed by numbers. According to Gartner, nearly 83% of data migration projects either fail outright or exceed their original budget and timeline. Additionally, organizations that follow a formal migration framework report faster execution, fewer data quality issues, and up to 40% reduction in migration errors, especially in large-scale cloud and multi-platform migrations.

In this blog, we explain what a data migration framework is, its key phases and components, and how it helps organizations deliver predictable, secure, and scalable migration outcomes.

Key Takeaways

- A data migration framework treats migration as a governed business process with defined ownership, checkpoints, and success criteria.

- Most migration failures trace back to poor assessment, unclear data ownership, and the absence of a formal validation process.

- The five core phases (assessment, preparation, execution, validation, and post-migration optimization) each carry specific risks that a framework helps contain.

- Strategy decisions around approach, downtime tolerance, and automation level should be locked in before execution begins.

- Data quality work done before migration consistently reduces post-go-live defects and speeds up user adoption.

- Kanerika’s FLIP platform and migration accelerators enable faster, repeatable, and lower-risk migrations by automating conversion while preserving business logic.

What Is a Data Migration Framework?

A data migration framework is a structured approach that defines how data gets assessed, prepared, moved, validated, and stabilized when transitioning from one system to another. It brings defined ownership, checkpoints, and success criteria to a process that often runs on instinct alone.

Migrations have grown more complex as organizations move toward cloud platforms, modern ERPs, data lakehouses, and analytics systems like Microsoft Fabric. A single enterprise migration can involve terabytes of data, dozens of source systems, and hundreds of business rules. Without a framework, these variables stack up silently until they surface as failures mid-execution.

A solid framework covers:

- Clear migration objectives tied to specific business outcomes

- Defined scope with documented data ownership per domain

- Standard processes for data profiling, cleansing, and transformation

- Documented mapping rules and validation acceptance criteria

- Security, compliance, and access controls embedded from the start

Organizations using structured frameworks report fewer delays, lower rework costs, and faster adoption of the new system. More practically, they build stakeholder trust in the migrated data from day one.

Why Most Data Migrations Fail Without a Framework

Migration failure follows predictable patterns. The same causes repeat across industries and project types. Understanding them is the first step to designing a framework that actively prevents them.

1. Poor Planning and Incomplete Assessment

Teams consistently underestimate data volumes, system dependencies, and transformation complexity. Legacy systems often carry years of undocumented logic, inconsistent data structures, and hidden dependencies. When these surface during execution, the result is last-minute fixes and missed deadlines.

Research from IBM shows that nearly 40% of migration delays trace directly to insufficient upfront analysis. A thorough assessment phase closes this gap before it costs the project.

2. Data Quality Issues Carried Forward

Enterprise data is rarely clean. Duplicates, missing values, outdated records, and inconsistent formats are common in any system that has been in production for more than a few years. Migrating without addressing quality first carries existing problems into the new environment, where they become harder to diagnose.

IBM’s Cost of Poor Data Quality report estimates that bad data costs organizations between 15% and 25% of revenue annually. A framework with a formal data preparation phase catches these issues before they migrate.

3. Lack of Clear Ownership

When migrations run outside a formal framework, data ownership gets assumed rather than assigned. IT focuses on technical execution. Business teams assume someone else handles accuracy. The gap between those two assumptions is where validation failures accumulate.

A framework assigns explicit data owners per domain, defines who approves validation results, and sets escalation paths when discrepancies arise. Even technically sound migrations will fail business acceptance without this structure.

4. Over-reliance on Manual Processes

Manual extraction, transformation, and validation are manageable for small datasets. At enterprise scale, they introduce compounding error risk. Each handoff between individuals creates an opportunity for inconsistency.

A framework pushes teams toward automation and repeatability. Consistent, automated processes produce audit trails, catch errors earlier, and scale across multiple migration cycles without degrading quality.

5. Cost Overruns and Timeline Slippages

Unstructured migrations discover issues late. Late discovery means repeated testing cycles, extended parallel system operation, and emergency fixes under pressure. Industry data shows that projects running without a formal framework regularly exceed budget by 30% to 50%.

A framework front-loads the discovery work. Teams resolve known issues on a schedule rather than scrambling to address surprises under deadline pressure.

Common Migration Failure Causes:

| Failure cause | Root issue | Framework fix |
| --- | --- | --- |
| Poor upfront assessment | Teams skip discovery, assume scope is smaller than it is | Mandatory assessment phase with documented scope sign-off |
| Data quality carried forward | Dirty data migrates into the new environment unchanged | Data preparation phase with profiling, cleansing, and validation rules |
| Unclear data ownership | Accountability is assumed rather than assigned | Governance layer with named owners per data domain |
| Manual processes at scale | Human handling introduces inconsistency and error | Automation embedded across extraction, transformation, and validation |
| Issues discovered too late | No checkpoints before final cutover | Phase gates with go/no-go criteria throughout the lifecycle |

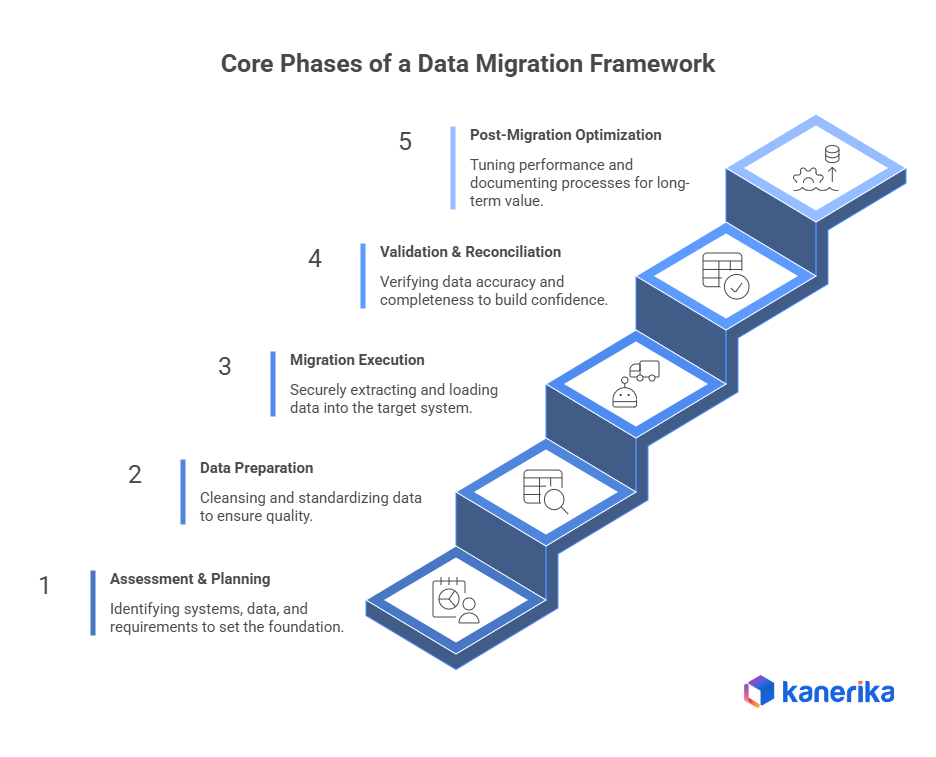

Core Phases of a Data Migration Framework

A well-built framework structures migration into five phases. Each phase has a specific objective, defined outputs, and a gate condition that determines whether the team can proceed. Compressing or skipping phases is where most migrations get into trouble.

Phase 1: Assessment and Planning

This phase defines scope, identifies risks, and sets the foundation for every decision that follows. The output is a complete picture of what data exists, where it lives, how it is used, and what moving it will require.

Key activities include:

- Map source and target systems, including all data formats, volumes, and inter-system dependencies

- Catalog business-critical datasets and flag any with regulatory or compliance requirements

- Define success metrics, cutover criteria, and rollback conditions before execution starts

- Run a pilot migration in a staging environment using representative data to surface schema mismatches and hidden dependencies early

Teams that skip the assessment phase consistently run into scope surprises mid-execution. A staging pilot is where most of those surprises surface at a cost that is still manageable.

Phase 2: Data Preparation and Cleansing

Most enterprise data carries quality problems built up over years of normal operation. Migrating it without cleaning first transfers those problems into the new environment, where they become harder to trace and fix.

Key activities include:

- Profile source data to identify duplicates, missing values, and format inconsistencies

- Remove records that are obsolete, redundant, or outside the migration scope

- Standardize field values, date formats, and reference data to match target schemas

- Apply business transformation rules and validate them against expected outputs before the migration window opens

Organizations that invest in this phase consistently report faster user adoption and fewer post-go-live defects.

Phase 3: Migration Execution

Execution is where data moves from source to target based on predefined, validated rules. It follows a controlled, monitored process with clear error handling at every stage.

Execution typically covers:

- Extract data securely from source systems using access-controlled, auditable methods

- Transform records to match target schemas, applying validated business rules throughout

- Load data in incremental or batch strategies depending on volume and downtime tolerance

- Monitor continuously and log errors in real time, with pre-defined thresholds for pause or rollback

A structured execution framework makes the process repeatable and auditable, enabling staged rollouts and clean recovery from failures.
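To make the idea of pre-defined thresholds concrete, here is a minimal Python sketch of a batch load that logs errors as they occur and halts once the running error rate crosses a limit. The names `load_in_batches`, `write_row`, and `LoadReport`, along with the batch size and the 25% limit, are illustrative assumptions rather than part of any specific tool.

```python
from dataclasses import dataclass, field


@dataclass
class LoadReport:
    loaded: int = 0
    failed: int = 0
    errors: list = field(default_factory=list)
    halted: bool = False


def load_in_batches(records, write_row, batch_size=2, max_error_rate=0.25):
    """Load records batch by batch; halt when the running error rate exceeds the limit."""
    report = LoadReport()
    for start in range(0, len(records), batch_size):
        for row in records[start:start + batch_size]:
            try:
                write_row(row)
                report.loaded += 1
            except ValueError as exc:
                report.failed += 1
                # Keep the failing row and message so the error can be diagnosed later.
                report.errors.append({"row": row, "error": str(exc)})
        attempted = report.loaded + report.failed
        if report.failed / attempted > max_error_rate:
            report.halted = True  # the caller decides whether to pause or roll back
            break
    return report


target = []


def write_row(row):
    # Hypothetical target rule: every row needs a non-empty id.
    if not row.get("id"):
        raise ValueError("missing id")
    target.append(row)


rows = [{"id": 1}, {"id": 2}, {"id": None}, {"id": None}, {"id": 5}]
report = load_in_batches(rows, write_row)
print(report.loaded, report.failed, report.halted)  # 2 2 True
```

The check runs after each batch rather than after each row, so a single bad record early in the run does not stop the load on its own. In a real pipeline the threshold would come from the criteria agreed during planning.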

Phase 4: Validation and Reconciliation

Validation confirms that migrated data is accurate, complete, and usable for business operations. It is the phase most often compressed under deadline pressure, and the one where technically successful migrations start failing in practice.

Validation activities include:

- Run record count and completeness checks against source system baselines

- Validate field-level accuracy across all migrated datasets, including edge cases and nulls

- Verify that business rules applied during transformation produced expected outputs

- Conduct user acceptance testing with domain stakeholders who know what the data should look like

Running validation continuously across the migration lifecycle, rather than only at cutover, catches issues earlier and significantly reduces last-minute risk.
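As an illustration of record count and field-level checks, the sketch below compares source and target rows by key and fingerprints the selected fields with a hash. It is a simplified example, assuming both datasets fit in memory and share a primary key. The function names and sample rows are hypothetical.

```python
import hashlib

NULL_MARKER = "<NULL>"  # keeps a missing value distinct from an empty string


def row_fingerprint(row, fields):
    """Hash the selected fields so two rows can be compared in one step."""
    parts = [NULL_MARKER if row.get(f) is None else str(row[f]) for f in fields]
    return hashlib.sha256("\x1f".join(parts).encode("utf-8")).hexdigest()


def reconcile(source_rows, target_rows, key, fields):
    """Compare source and target by key; report count gaps and field-level mismatches."""
    source = {r[key]: row_fingerprint(r, fields) for r in source_rows}
    target = {r[key]: row_fingerprint(r, fields) for r in target_rows}
    shared = source.keys() & target.keys()
    return {
        "source_count": len(source),
        "target_count": len(target),
        "missing_in_target": sorted(source.keys() - target.keys()),
        "unexpected_in_target": sorted(target.keys() - source.keys()),
        "mismatched": sorted(k for k in shared if source[k] != target[k]),
    }


source_rows = [
    {"id": 1, "name": "Ada", "city": "Paris"},
    {"id": 2, "name": "Bo", "city": None},
    {"id": 3, "name": "Cy", "city": "Oslo"},
]
target_rows = [
    {"id": 1, "name": "Ada", "city": "Paris"},
    {"id": 2, "name": "Bo", "city": ""},       # null became an empty string
    {"id": 4, "name": "Di", "city": "Rome"},   # row with no source counterpart
]

result = reconcile(source_rows, target_rows, key="id", fields=["name", "city"])
print(result)
```

Here the record counts match (three rows on each side), yet the comparison still surfaces one missing row, one unexpected row, and one null-handling mismatch. That is why count checks alone are not enough.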

Phase 5: Post-Migration Optimization

Migration does not end at go-live. The final phase focuses on stabilization, performance tuning, and making sure the new environment is ready to operate without hand-holding.

This phase typically includes:

- Optimize queries and pipelines for the target environment’s architecture and scale

- Fine-tune access controls, security policies, and monitoring configurations

- Decommission or archive legacy systems once data integrity is confirmed

- Document the migration process and transfer institutional knowledge to operations teams

This is also where lessons learned get captured. Organizations running multiple migrations see measurably faster execution on later projects as that knowledge compounds.

When Does Your Migration Need a Full Framework?

A small internal table move to a new schema can be handled with a well-documented runbook. A full migration framework is warranted when the complexity, risk, or regulatory exposure crosses certain thresholds.

You need a formal framework if any of the following apply:

- Moving to AWS, Azure, or GCP from on-premises infrastructure

- Consolidating data platforms after a merger or acquisition

- Replacing a core database or analytics platform: Oracle to Snowflake, SQL Server to Microsoft Fabric, Teradata to Databricks

- Migrating terabytes or more of historical data

- Operating in a regulated industry where HIPAA, GDPR, SOC 2, or PCI-DSS governs data handling

- Running systems that must stay live during migration because any downtime has direct revenue impact

If one or more of these apply, an informal approach will expose the project to scope failures, compliance gaps, and cutover risk that a framework is specifically designed to prevent.

Designing the Right Migration Strategy for Your Business

A data migration strategy translates the framework into execution. The framework defines what needs to be done. In contrast, the strategy defines how and in what sequence it happens. Choosing the wrong strategy can increase downtime, inflate costs, and disrupt core business operations. An effective migration strategy balances technical feasibility with business priorities.

1. Aligning Strategy with Business Objectives

Migration strategy should start with business intent, not tooling. Different objectives require different approaches.

- A cost-driven migration may prioritize moving cold or archival data first to reduce licensing spend.

- A customer-experience initiative may require near-zero downtime throughout the transition.

- A compliance-driven migration may need additional audit controls and validation checkpoints built into every phase.

Aligning strategy to objectives also determines which datasets are critical path, which can be deprioritized, and which data should stay in the source system entirely. This prevents unnecessary data movement and contains scope creep.

2. Choosing the Right Migration Approach

The migration approach determines how data moves and how risk is distributed across the timeline. The choice should be driven by system sensitivity, downtime tolerance, and team capacity.

| Approach | How it works | Best for | Key risk | Downtime |
| --- | --- | --- | --- | --- |
| Big bang | All data migrated in a single cutover window | Small datasets or when speed is the priority | High risk; limited rollback window | Planned window required |
| Phased | Data migrated in logical groups by module, region, or business unit | Large, complex environments where teams need incremental validation | Slower overall; dependencies between phases | Minimal per phase |
| Incremental (trickle) | Ongoing sync between source and target until final cutover | Systems that cannot tolerate downtime; real-time environments | Sync complexity and drift risk | Near-zero |
| Zero downtime | Parallel systems run simultaneously until cutover is complete | Business-critical systems with no acceptable outage window | Highest infrastructure cost; complex consistency management | None |

For most large enterprise migrations, phased or incremental approaches offer the best balance of risk management and operational continuity. Big bang migrations carry the most cutover risk and require the most rigorous pre-migration testing to be viable.
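One common way to implement the incremental (trickle) approach is a watermark: each sync run copies only the rows changed since the previous run. The sketch below assumes every source row carries an `updated_at` timestamp in ISO 8601 format and a primary key named `id`. Both are illustrative assumptions, as is the function name `sync_increment`.

```python
def sync_increment(source_rows, target, watermark):
    """Copy rows changed after `watermark` into `target`; return the new watermark."""
    changed = [r for r in source_rows if r["updated_at"] > watermark]
    for row in sorted(changed, key=lambda r: r["updated_at"]):
        target[row["id"]] = dict(row)  # upsert by primary key
        watermark = row["updated_at"]
    return watermark


source_rows = [
    {"id": 1, "status": "open", "updated_at": "2024-01-01T10:00:00"},
    {"id": 2, "status": "open", "updated_at": "2024-01-01T11:00:00"},
]
target = {}

# First run: an empty watermark sorts before every timestamp, so all rows are copied.
watermark = sync_increment(source_rows, target, "")

# A source row changes after the first run.
source_rows[0] = {"id": 1, "status": "closed", "updated_at": "2024-01-02T09:00:00"}

# Second run: only the changed row is picked up.
watermark = sync_increment(source_rows, target, watermark)
print(target[1]["status"], watermark)  # closed 2024-01-02T09:00:00
```

This simple version does not detect deleted rows and can miss rows that share the exact watermark timestamp, which is part of the sync complexity and drift risk noted in the table. Production setups often rely on change data capture for that reason.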

3. Deciding Between Manual and Automated Migration

Manual migration methods remain common for small or one-off data moves, but they become increasingly risky as data volumes grow. The core problem is consistency. Manual processes create dependency on specific individuals and introduce variation across migration runs.

Automated migration approaches deliver:

- Consistent execution across multiple migration cycles

- Built-in logging and audit trails

- Faster error detection and resolution

- Scalability for organizations planning future migrations

For enterprises running large-scale modernization programs, automation is a structural investment rather than a convenience.

4. Planning for Downtime, Risk, and Rollback

Every migration strategy must plan for something going wrong. Downtime windows need business stakeholder sign-off alongside IT approval. Rollback procedures need to be tested well before the cutover date.

A mature strategy defines:

- Clear go/no-go checkpoints during cutover

- Pre-tested rollback procedures linked to specific failure conditions

- Communication plans for business users and leadership

- Documented recovery timelines with accountable owners

Rollback planning signals responsible risk management. Teams that build it in before go-live are consistently better positioned to handle cutover issues cleanly.

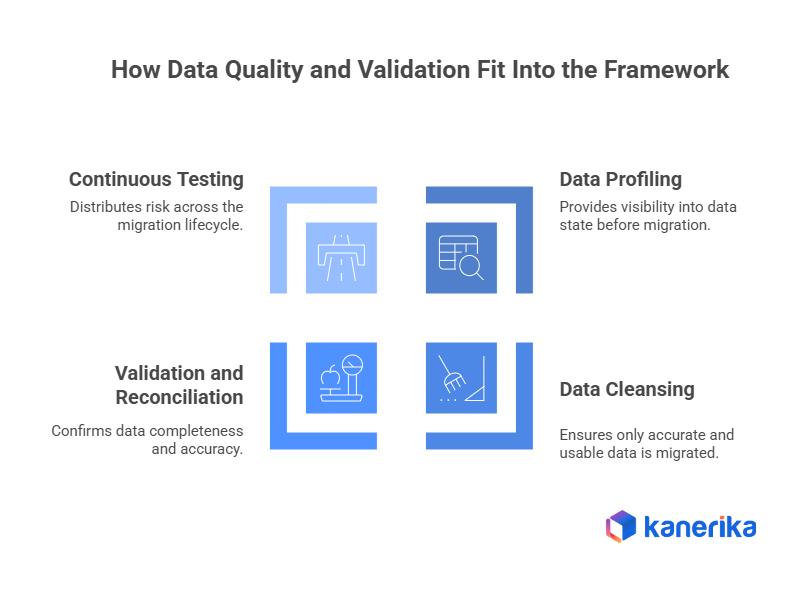

How Data Quality and Validation Fit Into the Framework

Data quality and validation aren’t supporting activities. Instead, they’re central to the success of the entire migration framework. Without them, even a technically successful migration can fail from a business perspective. Poor data quality leads to incorrect reports, broken workflows, and loss of confidence in the new system.

1. Data Profiling as the Foundation of Quality Control

Data profiling provides visibility into the actual state of the data before migration. Essentially, it replaces assumptions with evidence.

Through profiling, teams can:

- Identify duplicate records and conflicting values

- Detect missing or incomplete fields

- Understand data distribution and anomalies

- Reveal hidden dependencies between datasets

This insight is essential for creating accurate mappings and transformation rules. Moreover, skipping profiling often results in incorrect assumptions that surface late in the migration.
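A first profiling pass does not need specialized tooling. The following Python sketch counts duplicate keys, missing values, and value distributions for a small in-memory sample. The field names and sample records are made up for illustration, and a real profile would run against the source system or an extract of it.

```python
from collections import Counter


def profile(rows, key, fields):
    """Summarize duplicate keys, missing values, and value distributions."""
    key_counts = Counter(r.get(key) for r in rows)
    duplicates = sorted(k for k, n in key_counts.items() if k is not None and n > 1)
    missing = {f: sum(1 for r in rows if r.get(f) in (None, "")) for f in fields}
    distribution = {
        f: dict(Counter(r[f] for r in rows if r.get(f) not in (None, "")))
        for f in fields
    }
    return {
        "row_count": len(rows),
        "duplicate_keys": duplicates,
        "missing": missing,
        "distribution": distribution,
    }


rows = [
    {"customer_id": "C1", "email": "a@x.com", "country": "US"},
    {"customer_id": "C2", "email": "", "country": "us"},
    {"customer_id": "C1", "email": "a@x.com", "country": "US"},
    {"customer_id": "C3", "email": None, "country": None},
]

report = profile(rows, key="customer_id", fields=["email", "country"])
print(report["duplicate_keys"])           # ['C1']
print(report["missing"])                  # {'email': 2, 'country': 1}
print(report["distribution"]["country"])  # {'US': 2, 'us': 1}
```

Even on four rows, the output shows the kinds of evidence profiling produces: a duplicated key, two missing emails, and a country code stored in two different cases.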

2. Data Cleansing to Reduce Downstream Risk

Cleansing ensures that only relevant, accurate, and usable data gets migrated. Migrating poor-quality data simply transfers existing problems into the new environment.

Effective cleansing involves:

- Removing outdated or unused records that no longer serve business needs

- Standardizing formats, codes, and reference values

- Correcting invalid or inconsistent entries based on business rules

- Resolving duplicates using defined matching logic

Organizations that prioritize cleansing before migration consistently report fewer post-go-live issues and faster system adoption.
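The standardization and duplicate-resolution steps above can be sketched in a few lines of Python. This example assumes three source date formats and uses the normalized email address as the matching rule. Both choices are hypothetical and would be replaced by whatever the data owners define.

```python
from datetime import datetime

DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y%m%d")  # assumed source formats


def standardize_date(value):
    """Return an ISO 8601 date string, or None when no known format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def cleanse(rows):
    """Standardize fields, then keep the first row seen for each match key."""
    seen, clean, rejected = set(), [], []
    for row in rows:
        fixed = dict(row)
        fixed["email"] = row["email"].strip().lower()
        fixed["signup_date"] = standardize_date(row["signup_date"])
        if fixed["signup_date"] is None:
            rejected.append(row)    # route to a data owner instead of guessing
            continue
        if fixed["email"] in seen:  # matching rule: normalized email
            continue
        seen.add(fixed["email"])
        clean.append(fixed)
    return clean, rejected


rows = [
    {"email": " Ada@Example.com ", "signup_date": "2021-03-05"},
    {"email": "ada@example.com", "signup_date": "05/03/2021"},
    {"email": "bo@example.com", "signup_date": "20220110"},
    {"email": "cy@example.com", "signup_date": "sometime in 2020"},
]

clean, rejected = cleanse(rows)
print([r["email"] for r in clean])  # ['ada@example.com', 'bo@example.com']
print(len(rejected))                # 1
```

Rows that cannot be standardized are set aside rather than dropped or silently corrected, so the decision about them stays with the business rather than the pipeline.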

3. Validation and Reconciliation to Build Trust

Validation confirms that the migrated data is complete and accurate. Meanwhile, reconciliation ensures alignment between source and target systems.

A comprehensive validation process includes:

- Comparing record counts across systems

- Verifying field-level accuracy for critical attributes

- Ensuring business rules and calculations behave as expected

- Involving business users in acceptance testing

Trust in migrated data is built through systematic validation, not informal checks or assumptions.

4. Continuous Testing Instead of End-Only Testing

Testing only at the end of migration concentrates risk at the most critical moment. In contrast, continuous testing distributes risk across the lifecycle.

Benefits of continuous testing include:

- Early detection of mapping or transformation issues

- Reduced rework and remediation effort

- More predictable cutover timelines

- Lower stress during final migration phases

Embedding validation into each phase strengthens the overall framework and improves outcomes.

Best Practices to Build a Scalable and Repeatable Migration Framework

A strong migration framework should be reusable. Organizations that treat migration as a one-time exercise often struggle when future migrations arise. Scalability and repeatability should be designed into the framework from the beginning.

1. Governance and Accountability

Migrations without clear ownership create approval bottlenecks and slow decision-making. Every domain requires a defined decision-maker who validates outputs and unblocks execution when conflicts arise.

- Assign data owners per domain with explicit sign-off authority at each phase gate

- Establish standardized data handling policies early to guide consistent decisions across teams

- Run structured checkpoints with documented outcomes, ownership clarity, and escalation paths

- Align technical and business stakeholders on accountability to prevent delays during execution

2. Automation for Consistency

Manual intervention introduces variance where consistency drives success. Each deviation in extraction, transformation, or validation increases the risk of downstream defects and rework.

- Build templated pipelines that execute consistently across dev, staging, and production environments

- Automate record count, field-level, and business rule validation to ensure full coverage

- Capture detailed error context at the point of failure to accelerate diagnosis and resolution

- Standardize logging and monitoring to maintain visibility across all migration stages
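Business rule validation is often the hardest of these checks to automate, so a small example may help. The sketch below expresses each rule as a named predicate and collects the keys of the rows that fail it. The two rules, the field names, and the sample orders are invented for illustration.

```python
# Hypothetical rules; real ones come from the documented mapping and acceptance criteria.
RULES = {
    "order total is non-negative": lambda r: r["total"] >= 0,
    "shipped orders have a ship date": (
        lambda r: r["status"] != "shipped" or r["ship_date"] is not None
    ),
}


def check_rules(rows, rules, key="order_id"):
    """Return, for each rule, the keys of the rows that violate it."""
    failures = {name: [] for name in rules}
    for row in rows:
        for name, rule in rules.items():
            if not rule(row):
                failures[name].append(row[key])
    return failures


orders = [
    {"order_id": "A1", "total": 120.0, "status": "shipped", "ship_date": "2024-02-01"},
    {"order_id": "A2", "total": -5.0, "status": "open", "ship_date": None},
    {"order_id": "A3", "total": 40.0, "status": "shipped", "ship_date": None},
]

failures = check_rules(orders, RULES)
print(failures)
```

Because each rule has a readable name, the output can go straight into a validation report that data owners review at a phase gate.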

3. Documentation as an Asset

Documentation created during migration preserves critical context and accelerates future initiatives. Decisions made under pressure gain long-term value when captured systematically.

- Record transformation logic, mappings, and schema conflict resolutions in real time

- Maintain versioned runbooks covering extraction, load, rollback, and cutover procedures

- Log incidents with root cause, impact, and resolution to build an institutional knowledge base

- Ensure documentation remains accessible and structured for reuse across teams and projects

4. Rollback and Contingency Planning

Defined contingency planning strengthens execution confidence and reduces risk during cutover. Prepared teams respond faster and maintain control under pressure.

- Set clear error rate and time thresholds that trigger rollback actions

- Take verified, restorable backups immediately before cutover to preserve system integrity

- Conduct full rollback simulations during testing to validate readiness

- Define ownership and communication protocols for contingency execution
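Rollback triggers work best when they are written down as explicit numbers before cutover. As a rough sketch, the Python below encodes an error rate limit and a time limit in a policy object and returns a decision with a reason. The class name, function name, and threshold values are placeholders, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RollbackPolicy:
    max_error_rate: float  # fraction of failed records that triggers rollback
    max_minutes: int       # cutover window agreed with the business


def cutover_decision(policy, processed, failed, elapsed_minutes):
    """Return ('rollback' or 'continue', reason) based on the policy thresholds."""
    error_rate = failed / processed if processed else 0.0
    if error_rate > policy.max_error_rate:
        return "rollback", f"error rate {error_rate:.2%} exceeds {policy.max_error_rate:.2%}"
    if elapsed_minutes > policy.max_minutes:
        return "rollback", f"elapsed {elapsed_minutes} min exceeds {policy.max_minutes} min"
    return "continue", "within thresholds"


policy = RollbackPolicy(max_error_rate=0.01, max_minutes=240)

on_track = cutover_decision(policy, processed=10_000, failed=50, elapsed_minutes=60)
too_many_errors = cutover_decision(policy, processed=10_000, failed=250, elapsed_minutes=60)
over_time = cutover_decision(policy, processed=10_000, failed=50, elapsed_minutes=300)

print(on_track)         # ('continue', 'within thresholds')
print(too_many_errors)  # ('rollback', 'error rate 2.50% exceeds 1.00%')
print(over_time)        # ('rollback', 'elapsed 300 min exceeds 240 min')
```

Returning the reason alongside the decision gives the team something concrete to communicate when a rollback is called.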

5. Security and Compliance Controls

Migration phases increase data exposure, making embedded security controls essential from the start. A proactive approach ensures compliance and reduces operational risk.

- Classify data by sensitivity before pipeline design to apply appropriate handling standards

- Encrypt data in transit and at rest, with secure and separate key management practices

- Maintain detailed activity logs to meet audit requirements across regulatory frameworks

- Implement role-based access controls to restrict data exposure throughout migration

6. Stakeholder Communication

Successful migration depends on user readiness as much as technical execution. Early and structured communication drives adoption and minimizes disruption.

- Involve data owners early in scope definition and validation criteria to align expectations

- Share pre-go-live communication outlining changes, continuity, and escalation contacts

- Deliver role-based training tailored to user responsibilities and system interactions

- Establish feedback channels to capture user input and address issues quickly post-go-live

Kanerika: Your Trusted Partner for Seamless Data Platform Migrations

Kanerika is a trusted partner for organizations looking to modernize their data platforms efficiently and securely. Modernizing legacy systems unlocks enhanced data accessibility, real-time analytics, scalable cloud solutions, and AI-driven decision-making. Traditional migration approaches can be complex, resource-intensive, and prone to errors, but Kanerika addresses these challenges through purpose-built migration accelerators and our FLIP platform, ensuring smooth, accurate, and reliable transitions.

Our migration services cover 12 automated migration paths, including Tableau to Power BI, Crystal Reports to Power BI, SSRS to Power BI, SSIS to Fabric, SSAS to Fabric, Cognos to Power BI, Informatica to Talend, and Azure to Fabric. Each path uses pre-built automation, standardized templates, and documented transformation logic.

We hold Microsoft Solutions Partner status for Data and AI with Analytics Specialization, and are ISO 27001, SOC 2 Type II, and CMMI Level 3 certified.

Case Study 1: SQL Server Services to Microsoft Fabric

Challenge

A manufacturing client ran SSIS pipelines, SSAS semantic models, and SSRS reports as separate systems. Each operated independently, maintenance costs were rising, and reporting delivery was slow. A manual migration to Microsoft Fabric carried substantial risk of logic breakage and extended timelines.

Solution

Using our FLIP migration accelerator, we converted SSIS packages, SSAS models, and SSRS reports into a unified Microsoft Fabric environment. Business logic, security configurations, and data structures were preserved throughout the automated conversion. The client received validated pipelines, models, and reports ready to operate inside a single Fabric workspace.

Results

- One unified analytics environment replaced multiple legacy tools.

- Migration completed in weeks through automated conversion.

- Maintenance effort dropped because Fabric runs on a scalable cloud model.

- Teams delivered analytics faster due to unified workflows.

Case Study 2: Informatica to Talend Migration

Challenge

A global manufacturer depended on complex Informatica PowerCenter workflows. Licensing costs were rising, update cycles were slow, and scaling required disproportionate effort. Manual reconstruction of mappings was estimated to take 12 to 18 months and carried high risk of logic errors.

Solution

We automated the conversion of Informatica mappings and workflows into Talend jobs, with all transformation rules recreated accurately. Business teams were able to test and validate Talend jobs without rewriting logic by hand, which compressed the review cycle substantially.

Results

- Complete preservation of workflow logic throughout the transition

- 70% reduction in manual migration effort

- 60% faster overall delivery compared to the manual approach estimate

- 45% lower total migration cost

Wrapping Up

Data migration is one of the highest-risk activities an enterprise IT team takes on. The organizations that get it right share one thing: a framework that defines what to do, in what order, with clear ownership at every step.

The five phases covered here (assessment, preparation, execution, validation, and post-migration optimization) are the structure that prevents the most common and most costly migration failures. The strategy decisions around approach, downtime, and automation are what make that structure practical for your specific environment.

If your organization is planning a migration and wants to understand what a structured, accelerated approach would look like, we would be glad to walk through it.

FAQs

What is a data migration framework?

A data migration framework is a structured methodology that guides the planning, execution, and validation of moving data between systems, storage formats, or environments. It defines processes for data extraction, transformation, loading, and quality assurance while establishing governance protocols and risk mitigation strategies. Unlike ad-hoc approaches, a well-designed framework ensures consistency, minimizes downtime, and maintains data integrity throughout the migration lifecycle. Enterprise organizations rely on frameworks to standardize complex migrations across platforms like cloud environments or modern data warehouses. Kanerika’s data platform migration services deliver proven frameworks tailored to your infrastructure—schedule a consultation to explore your options.

Why is a data migration framework important?

A data migration framework is important because it reduces project failure risk, prevents data loss, and ensures business continuity during complex transitions. Without a structured approach, organizations face extended downtime, corrupted records, and compliance violations that can cost millions. A robust framework establishes clear milestones, validation checkpoints, and rollback procedures that keep migrations on track and within budget. It also enables teams to document dependencies and maintain audit trails for regulatory requirements. Enterprises moving to modern analytics platforms benefit significantly from standardized processes. Kanerika helps organizations implement migration frameworks that protect critical data assets—connect with our specialists today.

What are the key components of a data migration framework?

The key components of a data migration framework include data profiling, mapping and transformation rules, extraction protocols, validation procedures, and governance policies. Data profiling assesses source quality and identifies anomalies before migration begins. Mapping defines how fields translate between source and target systems, while transformation rules handle format conversions and business logic. Validation procedures verify accuracy post-migration through reconciliation and testing. Governance ensures compliance, security, and proper documentation throughout the process. A comprehensive framework also incorporates rollback mechanisms and stakeholder communication plans. Kanerika builds frameworks incorporating all these elements for seamless enterprise migrations—request a free assessment to get started.

What are the common types of data migration frameworks?

Common types of data migration frameworks include big bang migration, phased migration, parallel adoption, and hybrid approaches. Big bang frameworks execute complete cutover in a single event, suitable for smaller datasets with acceptable downtime windows. Phased frameworks migrate data incrementally, reducing risk by validating each segment before proceeding. Parallel adoption runs source and target systems simultaneously, enabling real-time comparison and gradual transition. Hybrid frameworks combine elements based on specific business requirements and system constraints. Each type addresses different organizational needs regarding risk tolerance, timeline, and complexity. Kanerika evaluates your environment to recommend the optimal framework type—reach out for expert guidance on your migration strategy.

What is an example of data migration?

A common example of data migration is moving an enterprise data warehouse from on-premises SQL Server infrastructure to Microsoft Fabric or Snowflake in the cloud. This involves extracting historical transactional data, customer records, and analytics workloads from legacy databases, transforming schemas to match the target platform’s architecture, and loading validated datasets into the new environment. Another example includes migrating BI tools from Cognos or Tableau to Power BI while preserving dashboard logic and report configurations. Each scenario requires careful planning to maintain data integrity and minimize operational disruption. Kanerika has executed hundreds of such migrations across industries—explore our case studies to see real transformation results.

What is the best approach for data migration?

The best approach for data migration combines thorough planning, incremental execution, and continuous validation within a structured framework. Start with comprehensive data profiling to understand source quality, dependencies, and transformation requirements. Develop detailed mapping documentation and establish success criteria before moving any data. Execute in controlled phases with validation checkpoints rather than risky single-cutover events. Implement automated testing to verify record counts, data accuracy, and business rule compliance at each stage. Maintain rollback capabilities until target system stability is confirmed. This risk-managed methodology ensures successful outcomes for complex enterprise migrations. Kanerika’s migration specialists design custom approaches matching your specific environment—schedule a discovery call today.

How does AI help in a data migration framework?

AI enhances data migration frameworks by automating data profiling, intelligent mapping, anomaly detection, and quality validation processes. Machine learning algorithms can analyze source schemas and recommend transformation rules, dramatically reducing manual mapping effort for complex migrations. AI-powered tools detect data quality issues and inconsistencies that human reviewers might miss, flagging problems before they propagate to target systems. Natural language processing assists in understanding unstructured data and legacy documentation. Predictive analytics help estimate migration timelines and identify potential failure points. These capabilities accelerate execution while improving accuracy across enterprise-scale projects. Kanerika integrates AI-driven automation into migration frameworks for faster, more reliable outcomes—discover how our intelligent solutions transform your migration journey.

What are best practices for implementing a data migration framework?

Best practices for implementing a data migration framework include establishing clear ownership, conducting thorough source data assessment, and creating detailed documentation before execution begins. Define success metrics and validation criteria upfront, then build automated testing into every phase. Engage business stakeholders early to verify transformation logic matches operational requirements. Implement incremental migration waves rather than single cutover events to contain risk. Maintain comprehensive audit trails for compliance and troubleshooting. Execute multiple dry runs in non-production environments before touching live data. Finally, plan for post-migration support and optimization to address issues that emerge after go-live. Kanerika applies these proven practices across all migration engagements—partner with us to ensure your framework delivers results.
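The wave-based execution and audit-trail practices above might look like this in outline. It is a sketch under stated assumptions: records are plain dictionaries, the target is an in-memory list, and `migrate_in_waves`, `WaveResult`, and the `has_email` rule are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class WaveResult:
    """One audit-trail entry per migration wave."""
    wave: int
    loaded: int
    passed: bool


def migrate_in_waves(records, wave_size, target, validate):
    """Load records in fixed-size waves, validating each wave before commit.

    A wave that fails validation is not written to the target, and the run
    stops so the problem stays contained to that wave.
    """
    audit = []
    for start in range(0, len(records), wave_size):
        wave_number = start // wave_size + 1
        staged = records[start:start + wave_size]
        passed = all(validate(record) for record in staged)
        if passed:
            target.extend(staged)
        audit.append(WaveResult(wave_number, len(staged) if passed else 0, passed))
        if not passed:
            break
    return audit


def has_email(record):
    """Hypothetical business rule: every customer needs an email address."""
    return bool(record.get("email"))


customers = [
    {"id": 1, "email": "ada@example.com"},
    {"id": 2, "email": "grace@example.com"},
    {"id": 3, "email": None},  # fails validation, so wave 2 is held back
    {"id": 4, "email": "alan@example.com"},
]
migrated = []
for entry in migrate_in_waves(customers, wave_size=2, target=migrated, validate=has_email):
    print(entry)
```

Running the same function against a copy of production data in a non-production environment is one way to stage a dry run before the live cutover.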

Is ETL the same as data migration?

ETL and data migration are not the same, though they share overlapping techniques. ETL (Extract, Transform, Load) is a specific process pattern for moving and transforming data between systems, typically used in ongoing data integration workflows. Data migration is a broader initiative that relocates data from one environment to another, often as a one-time or phased project. Migration frameworks frequently incorporate ETL processes as execution mechanisms but also include planning, governance, validation, and cutover management components that ETL alone does not address. Understanding this distinction helps organizations allocate appropriate resources and select proper tooling. Kanerika provides both ETL pipeline development and comprehensive migration framework services—contact us to discuss your specific requirements.
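For readers who have not seen the pattern, a minimal ETL pass can be sketched as below. The CSV content, the `customers` table, and the function names are hypothetical, and SQLite stands in for the target system. Everything a migration framework adds (planning, governance, validation, cutover) sits outside these three functions.

```python
import csv
import io
import sqlite3

# Hypothetical export from a legacy system, with inconsistent name formatting.
RAW_EXPORT = "id,name,signup\n1, Ada ,2021-03-01\n2,grace,2022-11-15\n"


def extract(text):
    """Extract: parse the raw CSV export into dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: cast ids to integers and normalize name formatting."""
    return [(int(row["id"]), row["name"].strip().title(), row["signup"]) for row in rows]


def load(conn, rows):
    """Load: write the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers "
        "(id INTEGER PRIMARY KEY, name TEXT, signup TEXT)"
    )
    conn.executemany("INSERT INTO customers VALUES (?, ?, ?)", rows)
    conn.commit()


warehouse = sqlite3.connect(":memory:")
load(warehouse, transform(extract(RAW_EXPORT)))
print(warehouse.execute("SELECT * FROM customers ORDER BY id").fetchall())
```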

Which tool is best for data migration?

The best tool for data migration depends on your source and target platforms, data complexity, and enterprise requirements. Some of the names that come up most often are target platforms with their own ingestion and pipeline features rather than dedicated migration tools: Microsoft Fabric suits organizations consolidating analytics infrastructure within the Microsoft ecosystem, Databricks provides Lakehouse capabilities for complex transformations and large-scale data processing, and Snowflake is a common destination for cloud data warehouse migrations. Informatica and Talend, by contrast, offer mature ETL functionality for moving data between diverse systems. Rather than selecting tools in isolation, successful migrations require tooling aligned with a comprehensive framework that addresses governance, validation, and business continuity. Kanerika maintains deep expertise across leading migration platforms. Request a free assessment to identify the optimal toolset for your environment.

What are the four types of data migration?

The four types of data migration are storage migration, database migration, application migration, and cloud migration. Storage migration moves data between physical or virtual storage systems while maintaining accessibility. Database migration transfers data between database platforms, often requiring schema transformation and query optimization. Application migration relocates data alongside software systems, ensuring integrated functionality in new environments. Cloud migration moves on-premises data to cloud infrastructure, enabling scalability and modern analytics capabilities. Each type presents unique challenges regarding data integrity, performance, and business continuity that a structured migration framework must address. Kanerika has delivered all four migration types across enterprise environments—talk to our team about your specific migration category.

What are the 6 Rs migration strategies?

The 6 Rs of migration are Rehost, Replatform, Repurchase, Refactor, Retain, and Retire. Rehosting, often called lift and shift, moves applications without modification. Replatforming makes minor optimizations during migration to gain cloud benefits. Repurchasing replaces an existing system with a different product, typically a SaaS offering. Refactoring re-architects applications to take full advantage of modern platform capabilities. Retaining keeps certain workloads in their current environments when migration offers insufficient value. Retiring decommissions applications that are no longer needed. Within a data migration framework, these strategies guide decision-making for each workload based on business value, technical complexity, and transformation goals. Kanerika helps enterprises evaluate and execute the right R-strategy for each system. Connect with us for strategic migration planning.

How can Kanerika help with a data migration framework?

Kanerika delivers end-to-end data migration framework services from assessment through execution and optimization. Our specialists conduct thorough source analysis, design transformation architectures, and build validation protocols tailored to your environment. We leverage migration accelerators for platforms like Microsoft Fabric, Databricks, and Snowflake that reduce project timelines by automating repetitive tasks. Our frameworks incorporate AI-powered data quality checks, governance controls, and comprehensive testing designed to catch data loss before cutover. With experience across industries including banking, healthcare, manufacturing, and retail, Kanerika applies proven methodologies that minimize risk while maximizing business value. Start with a free migration assessment to understand your path forward.

Will ETL be replaced by AI?

AI will not replace ETL but will significantly change how ETL processes operate within data migration frameworks. Traditional ETL requires extensive manual coding for transformations, mapping, and error handling, much of which AI can increasingly automate. Machine learning models can now draft transformation logic, detect schema changes, and propose fixes for data quality issues, reducing the amount of hands-on work. However, the ETL fundamentals of extracting, transforming, and loading data remain essential operations that AI enhances rather than eliminates. The future combines AI-augmented automation with human oversight for complex business logic and governance requirements. Kanerika integrates intelligent automation into ETL pipelines and migration frameworks. Explore how our AI-powered solutions accelerate your data initiatives.