Consider a retail giant processing millions of daily transactions across stores, e-commerce platforms, and POS systems. Without a structured pipeline, valuable data remains isolated in separate systems, obscuring the insights needed for inventory management and customer experience. This is where an efficient ETL (Extract, Transform, Load) framework plays a pivotal role: it integrates data from diverse sources, transforms it, and loads it into centralized systems for analysis.

The ETL tool market is expected to surpass $16 billion by 2025, underscoring the critical need for streamlined data integration to stay competitive. An ETL framework is essential for managing, cleaning, and processing data to deliver actionable insights and support smarter, data-driven decisions.

This blog will explore the core components of an ETL framework, its growing importance in modern data management, and best practices for implementation, helping businesses optimize data pipelines and drive digital transformation.

What Is an ETL Framework?

An ETL (Extract, Transform, Load) framework is a technology infrastructure that enables organizations to move data from multiple sources into a central repository through a defined process. It serves as the backbone for data integration, allowing businesses to convert raw, disparate data into standardized, analysis-ready information that supports decision-making.

Purpose and Value of an ETL Framework

ETL frameworks deliver significant organizational value by:

- Ensuring data quality through systematic validation and cleaning

- Streamlining integration of multiple data sources into a unified view

- Enabling historical analysis by maintaining consistent data over time

- Improving data accessibility for business users through standardized formats

- Enhancing operational efficiency by automating repetitive data processing tasks

- Supporting regulatory compliance through documented data lineage

As data volumes grow, robust ETL frameworks become increasingly essential for deriving meaningful insights from complex information ecosystems.

Why Do Businesses Need an ETL Framework?

In today’s data-driven environment, an Extract, Transform, Load (ETL) framework plays a vital role in enabling organizations to manage, consolidate, and analyze data efficiently. Below are key reasons why businesses rely on ETL frameworks:

1. Data Integration

- ETL frameworks enable seamless extraction of data from diverse systems such as legacy databases, cloud applications, CRMs, and IoT platforms. They then consolidate this data into a centralized repository, ensuring consistency across departments.

- By integrating data across marketing, sales, finance, and operations, organizations gain a holistic view of their business. This integration breaks down data silos and helps uncover insights that would otherwise be hidden in disconnected systems.

2. Data Quality

- Raw data typically contains errors, duplicates, or inconsistencies that reduce its reliability. ETL frameworks apply cleansing and validation rules to correct inaccuracies and standardize formats.

- These processes enforce business logic and ensure that the data used for analysis is accurate and dependable. Consistent transformation rules across datasets promote a “single version of truth,” minimizing the risk of conflicting insights.

3. Scalability

- ETL frameworks are built to accommodate increasing data volumes and growing complexity as businesses scale. They offer modular and distributed processing capabilities that support expansion without requiring complete system overhauls.

- New data sources and processing logic can be added without disrupting existing pipelines. This scalability ensures long-term adaptability of the data infrastructure as business needs evolve.

4. Real-Time Data Processing

- Modern ETL frameworks support real-time or near real-time data integration in addition to traditional batch processing. This gives businesses access to up-to-date information for time-sensitive decisions, such as tracking transactions or monitoring user activity.

- Real-time processing delivers continuous visibility into key metrics, improving responsiveness and agility. In fast-moving industries, this capability provides a significant competitive edge by enabling quicker, data-driven actions.

Enhance Data Accuracy and Efficiency With Expert Integration Solutions!

Partner with Kanerika Today.

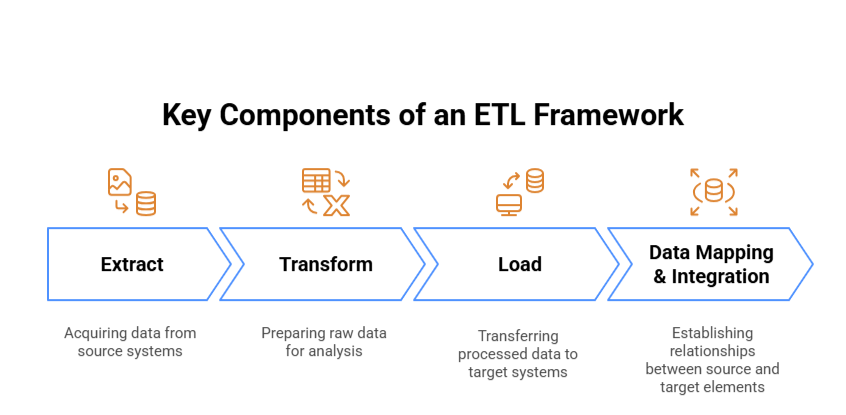

Key Components of an ETL Framework

Extract

The extraction phase acquires data from source systems like databases, APIs, and flat files. Organizations typically pull from multiple sources, using either full extraction (re-reading the entire dataset) or incremental extraction (pulling only records added or changed since the last run). Common challenges include connecting to legacy systems, managing diverse data formats, and handling API rate limits. These can be addressed with extraction schedulers, custom connectors, and retry mechanisms.
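To make incremental extraction concrete, here is a minimal Python sketch of the common high-watermark approach. It assumes a hypothetical `orders` source table with an ISO-formatted `updated_at` column and uses an in-memory SQLite database as a stand-in for a real source system; the table and column names are illustrative only.

```python
from __future__ import annotations

import sqlite3


def extract_incremental(conn: sqlite3.Connection, last_watermark: str) -> tuple[list[tuple], str]:
    """Pull only rows changed since the previous run (hypothetical `orders` table).

    Returns the new rows plus the watermark to persist for the next run.
    """
    rows = conn.execute(
        "SELECT id, amount, updated_at FROM orders "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_watermark,),
    ).fetchall()
    # Advance the watermark to the newest timestamp seen; keep the old one if nothing changed.
    new_watermark = rows[-1][2] if rows else last_watermark
    return rows, new_watermark


# In-memory stand-in for the source system.
source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT)")
source.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, 10.0, "2024-01-01T09:00"), (2, 25.5, "2024-01-02T10:30"), (3, 7.25, "2024-01-03T08:15")],
)

batch, watermark = extract_incremental(source, "2024-01-01T23:59")
print(batch)      # only orders 2 and 3
print(watermark)  # 2024-01-03T08:15
```

The strict `>` comparison assumes timestamps are unique and increase monotonically; dedicated change data capture tools handle ties and late-committing transactions more carefully.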

Transform

Transformation prepares raw data for analysis through cleansing, standardization, and enrichment. Key operations include:

- Removing duplicates and correcting inconsistencies

- Enriching data with additional information

- Standardizing formats across data points

Common tasks involve aggregating transactions, filtering irrelevant records, validating against business rules, and converting data types to match target requirements.
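The transformation steps above can be sketched in plain Python. This example assumes hypothetical raw transaction records (dictionaries with `txn_id`, `store`, `amount`, and `date` fields) and a hypothetical `STORE_REGIONS` lookup for enrichment. It removes duplicates, standardizes store codes and dates, converts amounts to `Decimal`, and routes records that fail a simple business rule to a rejected list instead of dropping them silently.

```python
from datetime import datetime
from decimal import Decimal, InvalidOperation

# Hypothetical reference data used for enrichment.
STORE_REGIONS = {"NYC01": "East", "SFO02": "West"}


def transform(raw_records):
    """Deduplicate, standardize, validate, and enrich raw transaction records.

    Returns (clean, rejected) so bad rows can be reviewed rather than lost.
    """
    seen_ids = set()
    clean, rejected = [], []
    for rec in raw_records:
        if rec["txn_id"] in seen_ids:  # remove duplicates on the business key (first one wins)
            continue
        seen_ids.add(rec["txn_id"])
        try:
            amount = Decimal(str(rec["amount"]).strip())  # convert to the target numeric type
            raw_date = rec["date"].strip()
            fmt = "%m/%d/%Y" if "/" in raw_date else "%Y-%m-%d"  # accept US or ISO dates
            date = datetime.strptime(raw_date, fmt).date().isoformat()
        except (InvalidOperation, ValueError) as exc:
            rejected.append({**rec, "reason": f"unparseable field: {exc}"})
            continue
        if amount <= 0:  # business rule: amounts must be positive
            rejected.append({**rec, "reason": "non-positive amount"})
            continue
        store = rec["store"].strip().upper()  # standardize store codes
        clean.append({
            "txn_id": rec["txn_id"],
            "store": store,
            "region": STORE_REGIONS.get(store, "UNKNOWN"),  # enrichment via lookup
            "amount": amount,
            "date": date,
        })
    return clean, rejected


raw = [
    {"txn_id": "T1", "store": " nyc01 ", "amount": "19.99", "date": "03/15/2024"},
    {"txn_id": "T1", "store": "NYC01", "amount": "19.99", "date": "2024-03-15"},  # duplicate
    {"txn_id": "T2", "store": "sfo02", "amount": "-5", "date": "2024-03-16"},
    {"txn_id": "T3", "store": "sfo02", "amount": "abc", "date": "2024-03-16"},
]
clean, rejected = transform(raw)
print(clean)
print([(r["txn_id"], r["reason"]) for r in rejected])
```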

Load

The loading phase involves transferring the processed data into target systems for storage and analysis. Loading strategies vary based on business requirements and destination systems:

- Batch loading processes data at scheduled intervals, suitable for reporting that doesn’t require real-time updates

- Real-time loading continuously streams transformed data to destinations, essential for operational analytics

- Incremental loading focuses on adding only new or changed records to optimize system resources

The approach must consider factors like system constraints, data volumes, and business SLAs to determine appropriate loading frequency and methods.
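Incremental loading is often implemented as an upsert: insert rows with new keys and update rows whose keys already exist. The sketch below uses SQLite's `INSERT ... ON CONFLICT ... DO UPDATE` (available in SQLite 3.24 and later) as a stand-in for a warehouse `MERGE` statement; the `sales` target table and its columns are hypothetical.

```python
import sqlite3


def load_incremental(conn, records):
    """Upsert records into a hypothetical `sales` table keyed on txn_id."""
    with conn:  # commit on success, roll back on error
        conn.executemany(
            """
            INSERT INTO sales (txn_id, store, amount)
            VALUES (:txn_id, :store, :amount)
            ON CONFLICT(txn_id) DO UPDATE SET
                store = excluded.store,
                amount = excluded.amount
            """,
            records,
        )


target = sqlite3.connect(":memory:")
target.execute("CREATE TABLE sales (txn_id TEXT PRIMARY KEY, store TEXT, amount REAL)")

# First run loads two new records.
load_incremental(target, [
    {"txn_id": "T1", "store": "NYC01", "amount": 19.99},
    {"txn_id": "T2", "store": "SFO02", "amount": 42.00},
])
# A later run delivers one changed record and one new record.
load_incremental(target, [
    {"txn_id": "T2", "store": "SFO02", "amount": 45.00},
    {"txn_id": "T3", "store": "NYC01", "amount": 8.50},
])
result = target.execute("SELECT * FROM sales ORDER BY txn_id").fetchall()
print(result)  # [('T1', 'NYC01', 19.99), ('T2', 'SFO02', 45.0), ('T3', 'NYC01', 8.5)]
```

Because rerunning the same batch overwrites rather than duplicates rows, the load step is idempotent, which makes retries after a failure much safer.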

Data Mapping & Integration

Data mapping establishes relationships between source and target elements, serving as the ETL blueprint. Proper mapping documentation includes field transformations, business rules, and data lineage. Integration mechanisms ensure referential integrity while supporting business processes, often through metadata repositories that track origins, transformations, and quality metrics.
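A mapping document can also be expressed as configuration that the pipeline executes directly, which keeps the blueprint and the running code in sync. The sketch below assumes hypothetical source field names (`cust_nm`, `dob`, `ctry_cd`) and records simple lineage entries (source field, target field, rule name) for every mapped value; it illustrates the idea rather than any particular tool's metadata format.

```python
from datetime import datetime

# Hypothetical mapping spec: target field -> (source field, rule name, rule function).
FIELD_MAP = {
    "customer_name": ("cust_nm", "trim_title_case", lambda v: v.strip().title()),
    "birth_date": ("dob", "yyyymmdd_to_iso",
                   lambda v: datetime.strptime(v, "%Y%m%d").date().isoformat()),
    "country": ("ctry_cd", "upper_case", lambda v: v.upper()),
}


def apply_mapping(source_row):
    """Map one source row to the target schema and record lineage for each field."""
    target_row, lineage = {}, []
    for target_field, (source_field, rule_name, rule) in FIELD_MAP.items():
        target_row[target_field] = rule(source_row[source_field])
        lineage.append({"source": source_field, "target": target_field, "rule": rule_name})
    return target_row, lineage


row, lineage = apply_mapping({"cust_nm": "  jane doe ", "dob": "19850412", "ctry_cd": "us"})
print(row)  # {'customer_name': 'Jane Doe', 'birth_date': '1985-04-12', 'country': 'US'}
print(lineage)
```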

Effective ETL frameworks balance these components while considering scalability, performance, and maintenance requirements. Modern solutions increasingly incorporate automation and self-service capabilities to reduce technical overhead while maintaining data quality and governance standards.

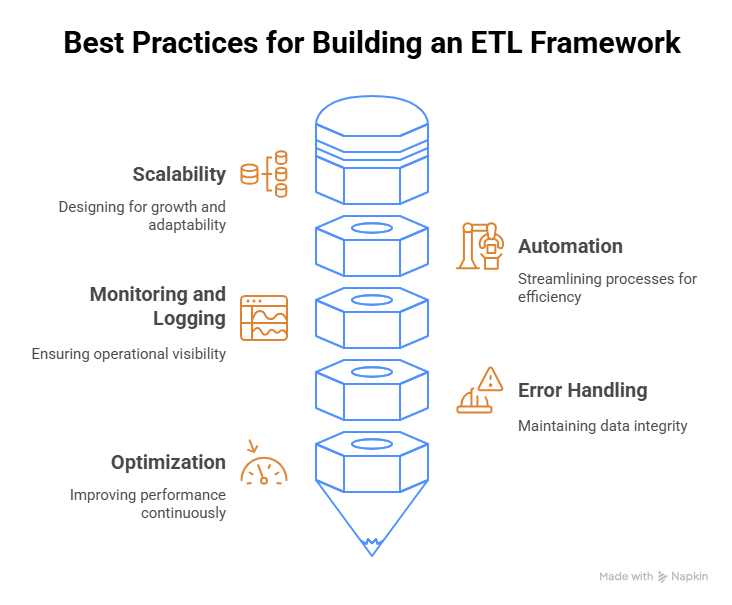

Best Practices for Building an ETL Framework

1. Scalability

Design your ETL framework with scalability as a foundational principle. Implement modular components that can be independently scaled based on workload demands. Use distributed processing technologies for large datasets and consider cloud-based solutions that offer elastic resources. Design data partitioning strategies to enable horizontal scaling as volumes grow.
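Hash partitioning is one common way to split work so that partitions can be processed independently. The sketch below assumes records keyed by a hypothetical `customer_id` and uses a local thread pool as a stand-in for a distributed engine such as Spark. It hashes with `zlib.crc32` because Python's built-in `hash()` is randomized per process for strings, which would send the same key to different partitions on different workers.

```python
import zlib
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def partition(records, num_partitions, key):
    """Assign each record to a partition using a stable hash of its key."""
    parts = defaultdict(list)
    for rec in records:
        parts[zlib.crc32(str(rec[key]).encode()) % num_partitions].append(rec)
    return parts


def process_partition(records):
    """Placeholder per-partition work: total amount per customer."""
    totals = defaultdict(float)
    for rec in records:
        totals[rec["customer_id"]] += rec["amount"]
    return dict(totals)


records = [
    {"customer_id": "C1", "amount": 10.0},
    {"customer_id": "C2", "amount": 5.0},
    {"customer_id": "C1", "amount": 2.5},
    {"customer_id": "C3", "amount": 7.0},
]
parts = partition(records, num_partitions=2, key="customer_id")

# Threads keep the sketch portable; CPU-heavy work would use processes or a cluster.
with ThreadPoolExecutor(max_workers=2) as pool:
    results = list(pool.map(process_partition, parts.values()))

# A key never spans partitions, so per-partition results can simply be merged.
combined = {k: v for part in results for k, v in part.items()}
print(combined)
```

Adding capacity then means raising `num_partitions` and workers rather than redesigning the job, which is the horizontal scaling the paragraph describes.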

2. Automation

Automate repetitive tasks throughout the ETL pipeline to enhance efficiency and reliability. Implement workflow orchestration tools to manage dependencies between tasks. Use configuration-driven approaches rather than hard-coding parameters. Automate validation checks at each stage to verify data quality without manual intervention.
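Workflow orchestrators such as Airflow resolve task dependencies into a valid execution order. The core idea can be sketched with Python's standard-library `graphlib.TopologicalSorter` (Python 3.9+). The task names and the configuration dictionary below are hypothetical; a real orchestrator adds scheduling, retries, and parallel execution on top of this ordering.

```python
from graphlib import TopologicalSorter

# Hypothetical configuration-driven pipeline: task -> upstream dependencies.
PIPELINE_CONFIG = {
    "extract_orders": [],
    "extract_customers": [],
    "transform_orders": ["extract_orders"],
    "join_customers": ["transform_orders", "extract_customers"],
    "validate": ["join_customers"],
    "load_warehouse": ["validate"],
}


def run_pipeline(config, tasks):
    """Run each task only after all of its upstream tasks have completed."""
    executed = []
    for name in TopologicalSorter(config).static_order():
        tasks[name]()  # each task is a zero-argument callable
        executed.append(name)
    return executed


# Stand-in tasks that only print; real tasks would call extract/transform/load code.
tasks = {name: (lambda n=name: print(f"running {n}")) for name in PIPELINE_CONFIG}
order = run_pipeline(PIPELINE_CONFIG, tasks)
```

Because the dependency graph lives in configuration, adding a source means adding an entry rather than editing control flow.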

3. Monitoring and Logging

Establish comprehensive monitoring and logging systems to maintain operational visibility. Track key metrics including job duration, data volume processed, and error rates. Implement alerting mechanisms for performance anomalies and failed jobs. Create dashboards visualizing ETL performance trends to identify potential bottlenecks proactively.
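A lightweight way to capture these metrics is to wrap each job step so that it logs duration, rows processed, and error rate. The decorator below is a sketch: it assumes each step returns a pair `(ok_rows, failed_rows)`, and the 5% alert threshold is an illustrative assumption. Production setups would typically export these metrics to a monitoring system rather than only writing log lines.

```python
import functools
import logging
import time

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
log = logging.getLogger("etl")

ERROR_RATE_ALERT = 0.05  # hypothetical threshold: alert when more than 5% of rows fail


def monitored(step):
    """Wrap a step that returns (ok_rows, failed_rows) and log its run metrics."""
    @functools.wraps(step)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        ok_rows, failed_rows = step(*args, **kwargs)
        total = len(ok_rows) + len(failed_rows)
        metrics = {
            "duration_s": time.perf_counter() - start,
            "rows": total,
            "errors": len(failed_rows),
            "error_rate": len(failed_rows) / total if total else 0.0,
        }
        wrapper.last_metrics = metrics  # keep the latest run's metrics for dashboards/tests
        log.info("step=%s duration_s=%.3f rows=%d errors=%d error_rate=%.3f",
                 step.__name__, metrics["duration_s"], total, metrics["errors"],
                 metrics["error_rate"])
        if metrics["error_rate"] > ERROR_RATE_ALERT:
            log.warning("ALERT: step=%s error rate %.1f%% exceeds threshold",
                        step.__name__, metrics["error_rate"] * 100)
        return ok_rows, failed_rows
    return wrapper


@monitored
def parse_amounts(raw):
    """Example step: parse strings to floats, collecting values that fail."""
    ok, failed = [], []
    for value in raw:
        try:
            ok.append(float(value))
        except ValueError:
            failed.append(value)
    return ok, failed


parse_amounts(["1.5", "2", "oops", "4.25"])  # logs metrics and an alert (25% errors)
print(parse_amounts.last_metrics)
```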

4. Error Handling

Develop robust error handling strategies to maintain data integrity. Implement retry mechanisms with exponential backoff for transient failures. Design graceful degradation patterns to handle partial failures without stopping entire pipelines. Create clear error messages that facilitate quick diagnosis and implement circuit breakers to prevent cascading failures.
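The retry and circuit-breaker patterns can each be sketched in a few lines. This is an illustrative implementation, not a specific library's API: `TransientError`, the delay values, and the failure threshold are assumptions. The `sleep` and `clock` functions are injectable so the behavior can be tested without real waiting.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical error type for failures worth retrying (timeouts, throttling)."""


class CircuitOpenError(Exception):
    """Raised while the breaker is open and calls are being short-circuited."""


def retry_with_backoff(func, max_attempts=5, base_delay=0.5, max_delay=30.0, sleep=time.sleep):
    """Call func, retrying TransientError with exponential backoff plus jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return func()
        except TransientError:
            if attempt == max_attempts:
                raise  # give up and surface the error
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))  # 0.5, 1, 2, 4, ...
            sleep(delay * random.uniform(0.5, 1.0))  # jitter avoids synchronized retries


class CircuitBreaker:
    """Stop calling a failing dependency after `threshold` consecutive failures."""

    def __init__(self, threshold=3, reset_after=60.0, clock=time.monotonic):
        self.threshold = threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def call(self, func):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("dependency marked unhealthy; skipping call")
            self.opened_at = None  # half-open: allow one trial call
        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.failures >= self.threshold:
                self.opened_at = self.clock()  # open (or re-open) the circuit
            raise
        self.failures = 0  # a success closes the circuit
        return result


# Usage: an extraction call that hits a rate limit twice before succeeding.
attempts = {"n": 0}


def flaky_extract():
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise TransientError("API rate limit")
    return ["row1", "row2"]


delays = []
rows = retry_with_backoff(flaky_extract, sleep=delays.append)  # record delays instead of sleeping
print(rows, delays)
```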

5. Optimization

Continuously optimize your ETL framework for improved performance. Profile jobs regularly to identify bottlenecks and implement incremental processing where possible instead of full reloads. Consider pushdown optimization to perform filtering at source systems. Use appropriate data compression techniques and implement caching strategies for frequently accessed information.
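Pushdown and caching are easiest to see side by side. In the sketch below, an in-memory SQLite database stands in for a source system, and the `events` table and the `region_for_store` lookup are hypothetical. The first function pulls every row and filters in Python; the second pushes the filter into the SQL query so only matching rows leave the source. `functools.lru_cache` memoizes a frequently repeated reference-data lookup.

```python
import functools
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (id INTEGER, store TEXT, event_date TEXT)")
conn.executemany(
    "INSERT INTO events VALUES (?, ?, ?)",
    [(i, f"S{i % 3}", f"2024-01-{(i % 28) + 1:02d}") for i in range(1000)],
)


def extract_without_pushdown(since):
    # Transfers every row, then discards most of them client-side.
    rows = conn.execute("SELECT id, store, event_date FROM events").fetchall()
    return [r for r in rows if r[2] >= since]


def extract_with_pushdown(since):
    # The source engine applies the filter; only matching rows are transferred.
    return conn.execute(
        "SELECT id, store, event_date FROM events WHERE event_date >= ?", (since,)
    ).fetchall()


LOOKUP_CALLS = 0


@functools.lru_cache(maxsize=1024)
def region_for_store(store):
    """Hypothetical slow reference-data lookup; cached after the first call per store."""
    global LOOKUP_CALLS
    LOOKUP_CALLS += 1
    return {"S0": "East", "S1": "West", "S2": "Central"}[store]


recent = extract_with_pushdown("2024-01-25")
enriched = [(r[0], region_for_store(r[1])) for r in recent]
print(len(recent), LOOKUP_CALLS)  # 140 rows, but only 3 actual lookups
```

Both extraction functions return the same rows; the difference is how much data crosses the network, which matters far more against a remote database than against this in-memory stand-in.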

Tools and Technologies for ETL Frameworks

1. ETL Tools Overview

The ETL landscape offers diverse tools to match varying business needs:

- Enterprise Solutions: Informatica PowerCenter and IBM DataStage provide robust, mature platforms with comprehensive features for complex enterprise data integration needs, though with higher licensing costs.

- Open-Source Options: Apache NiFi offers visual dataflow management, while Apache Airflow excels at workflow orchestration with Python-based DAGs. These tools provide flexibility without licensing fees.

- Mid-Market Tools: Talend and Microsoft SSIS deliver user-friendly interfaces with strong connectivity options, balancing functionality and cost for medium-sized organizations.

2. Cloud-Based ETL Solutions

Cloud-native ETL services have evolved to meet modern data processing needs:

AWS offers Glue (serverless ETL) and Data Pipeline (orchestration tool), Azure provides Data Factory, and Google Cloud features Dataflow supporting both batch and streaming workloads.

These solutions typically feature consumption-based pricing, simplified infrastructure management, and native connectivity to cloud data services. Most provide visual interfaces with underlying code generation.

3. Custom ETL Frameworks

Organizations develop custom ETL solutions when:

- Existing tools lack specific functionality for unique business requirements

- Processing extremely specialized data formats requires custom parsers

- Performance optimization needs exceed off-the-shelf capabilities

- Integration with proprietary systems demands custom connectors

- Cost concerns make open-source foundations with tailored components more attractive

Custom frameworks typically leverage programming languages like Python, Java, or Scala, often built on distributed processing frameworks such as Apache Spark.
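At its core, a custom framework is a set of reusable, pluggable stages. The sketch below is a hypothetical minimal skeleton (the `Pipeline` class and its stage signatures are illustrative, not taken from any library): every stage is a plain function, so a team can drop in a custom parser or connector without touching the runner. Real frameworks would add logging, error handling, and configuration around this core, often delegating heavy lifting to an engine like Spark.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, List, Optional


@dataclass
class Pipeline:
    """Hypothetical minimal ETL framework: one extractor, ordered transforms, one loader."""

    extract: Callable[[], Iterable[dict]]
    load: Callable[[List[dict]], int]
    transforms: List[Callable[[dict], Optional[dict]]] = field(default_factory=list)

    def run(self) -> int:
        rows = []
        for record in self.extract():
            for step in self.transforms:
                record = step(record)
                if record is None:  # a transform may drop a record by returning None
                    break
            else:
                rows.append(record)
        return self.load(rows)  # loader returns the number of rows written


# Hypothetical custom parser for a pipe-delimited legacy format.
def extract_legacy():
    raw = "1|widget|4\n2|gadget|0\n3|gizmo|7"
    for line in raw.splitlines():
        sku_id, name, qty = line.split("|")
        yield {"id": int(sku_id), "name": name, "qty": int(qty)}


warehouse = []  # stand-in for a real target system


def load_to_list(rows):
    warehouse.extend(rows)
    return len(rows)


pipeline = Pipeline(
    extract=extract_legacy,
    load=load_to_list,
    transforms=[
        lambda r: r if r["qty"] > 0 else None,       # filter out-of-stock items
        lambda r: {**r, "name": r["name"].upper()},  # standardize names
    ],
)
written = pipeline.run()
print(written, warehouse)
```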

4. Integrating with Data Warehouses

Modern ETL tools provide seamless integration with destination systems:

Cloud data warehouses like Snowflake, Redshift, and BigQuery offer optimized connectors for efficient data loading. The ELT pattern has gained popularity for cloud implementations, leveraging the computing power of modern data warehouses to perform transformations after loading raw data.

ETL vs. ELT: Understanding the Difference

1. ETL vs. ELT

ETL (Extract, Transform, Load) processes data on a separate server before loading to the target system. ELT (Extract, Load, Transform) loads raw data directly into the target system where transformations occur afterward. ETL represents the traditional approach, while ELT emerged with cloud computing and big data technologies.
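The practical difference is where the transformation runs. In the ELT sketch below, raw records are loaded untouched into a staging table and then cleaned, deduplicated, and aggregated with SQL inside the target engine. An in-memory SQLite database stands in for a cloud warehouse, and the table names are hypothetical; on a platform like Snowflake or BigQuery, the final step would be a similar `CREATE TABLE ... AS SELECT` executed on the warehouse's own compute.

```python
import sqlite3

wh = sqlite3.connect(":memory:")  # stand-in for a cloud data warehouse

# Load: land raw data as-is in a staging table (messy values included).
wh.execute("CREATE TABLE raw_orders (order_id TEXT, region TEXT, amount TEXT)")
wh.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [("A1", " east", "120.50"), ("A2", "WEST ", "80"), ("A3", "east", "n/a"), ("A1", " east", "120.50")],
)

# Transform: clean, deduplicate, and aggregate inside the target using SQL.
wh.execute(
    """
    CREATE TABLE sales_by_region AS
    SELECT UPPER(TRIM(region)) AS region,
           SUM(CAST(amount AS REAL)) AS total_amount,
           COUNT(*) AS order_count
    FROM (SELECT DISTINCT order_id, region, amount FROM raw_orders)
    WHERE amount GLOB '[0-9]*'   -- crude numeric check: keep values starting with a digit
    GROUP BY UPPER(TRIM(region))
    """
)
result = wh.execute("SELECT * FROM sales_by_region ORDER BY region").fetchall()
print(result)  # [('EAST', 120.5, 1), ('WEST', 80.0, 1)]
```

Because the raw staging table is kept, the transformation can be rewritten and rerun later without re-extracting from the source, which is part of ELT's appeal for exploratory work.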

2. ETL for Structured Data

ETL excels when working with structured data that requires complex transformations before storage. It’s particularly valuable when:

- Data quality issues must be addressed before loading

- Sensitive data requires masking or encryption during the transfer process

- Target systems have limited computing resources

- Integration involves legacy systems with specific data format requirements

- Strict data governance requires validation before entering the data warehouse
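Masking sensitive data in flight can be as simple as replacing direct identifiers before records leave the transformation step. This sketch assumes hypothetical `email`, `ssn`, and `card_number` fields. It pseudonymizes email with a keyed HMAC-SHA256 (deterministic, so joins on the same customer still work downstream) and partially redacts the other fields. The key shown is a placeholder; in practice it would come from a secrets manager.

```python
import hashlib
import hmac

MASKING_KEY = b"replace-with-secret-from-vault"  # placeholder: never hard-code real keys


def pseudonymize(value: str) -> str:
    """Deterministic keyed hash: the same normalized input always maps to the same token."""
    return hmac.new(MASKING_KEY, value.strip().lower().encode(), hashlib.sha256).hexdigest()


def mask_record(rec: dict) -> dict:
    """Return a copy of the record with sensitive fields masked; the input is not modified."""
    masked = dict(rec)
    masked["email"] = pseudonymize(rec["email"])
    masked["ssn"] = "***-**-" + rec["ssn"][-4:]
    masked["card_number"] = "*" * (len(rec["card_number"]) - 4) + rec["card_number"][-4:]
    return masked


row = {"customer_id": 42, "email": "Jane@Example.com", "ssn": "123-45-6789",
       "card_number": "4111111111111111"}
safe = mask_record(row)
print(safe["ssn"], safe["card_number"])  # ***-**-6789 ************1111
```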

3. ELT for Big Data

ELT has gained popularity in modern data architectures because it:

- Handles large volumes of raw, unstructured, or semi-structured data

- Utilizes the processing power of cloud data warehouses and data lakes

- Supports exploratory analysis on raw data

- Provides flexibility in transformation logic after data is centralized

- Enables faster initial data loading

ELT excels in cloud environments with cost-effective storage and scalable computing resources for transformations.

Challenges in Implementing an ETL Framework

1. Data Complexity

Implementing ETL solutions often involves integrating heterogeneous data sources with varying formats, schemas, and quality standards. Organizations struggle with reconciling inconsistent metadata across systems and handling evolving source structures.

Semantic differences between similar-looking data elements can lead to incorrect transformations if not properly mapped. Schema drift—where source systems change without notice—requires building adaptive extraction processes.
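A first line of defense against schema drift is to compare each incoming batch with an expected schema before transforming it. The sketch below assumes a hypothetical expected schema expressed as field-name-to-Python-type pairs and reports added fields, missing fields, and type changes, so the pipeline can alert, quarantine the batch, or adapt instead of silently mis-mapping data.

```python
# Hypothetical expected schema for an orders feed.
EXPECTED_SCHEMA = {"order_id": int, "customer_id": str, "amount": float}


def detect_drift(records, expected=EXPECTED_SCHEMA):
    """Return schema differences observed in a batch of dict records."""
    expected_fields = set(expected)
    added, missing, mismatches = set(), set(), {}
    for rec in records:
        added |= set(rec) - expected_fields    # new columns the target does not know about
        missing |= expected_fields - set(rec)  # expected columns absent from this record
        for name, want in expected.items():
            value = rec.get(name)
            if value is not None and not isinstance(value, want):
                mismatches.setdefault(name, set()).add(type(value).__name__)
    return {
        "added": sorted(added),
        "missing": sorted(missing),
        "type_mismatches": {k: sorted(v) for k, v in mismatches.items()},
    }


batch = [
    {"order_id": 1, "customer_id": "C9", "amount": 12.5, "channel": "web"},
    {"order_id": "2", "customer_id": "C7", "channel": "store"},
]
print(detect_drift(batch))
# {'added': ['channel'], 'missing': ['amount'], 'type_mismatches': {'order_id': ['str']}}
```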

2. Real-Time Processing

Traditional batch-oriented ETL frameworks face challenges when adapting to real-time requirements. Stream processing demands different architectural approaches designed around tight latency budgets.

Technical difficulties include managing backpressure when downstream systems can’t process data quickly enough, handling out-of-order events, and ensuring exactly-once processing semantics.
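Two of these difficulties, out-of-order events and duplicate delivery, are commonly handled with a watermark and idempotent processing. The sketch below is a simplified, single-process illustration and not the API of Kafka, Flink, or any other engine. Events carry a hypothetical `event_id` and integer timestamp `ts`; they are buffered until the watermark (highest timestamp seen minus an allowed lateness) passes them, then emitted in timestamp order. Redelivered IDs are skipped. Real exactly-once guarantees also require checkpointing and transactional sinks, which this sketch does not attempt.

```python
import heapq


class ReorderBuffer:
    """Emit events in timestamp order once they fall behind the watermark."""

    def __init__(self, allowed_lateness):
        self.allowed_lateness = allowed_lateness
        self.max_ts = float("-inf")
        self.heap = []          # min-heap of (ts, event_id, event)
        self.seen_ids = set()   # dedup state; real systems expire old IDs
        self.dropped_late = []  # events that arrived after their window closed

    def add(self, event):
        """Accept one event and return any events that are now safe to emit."""
        if event["event_id"] in self.seen_ids:
            return []  # duplicate delivery: ignore
        if event["ts"] < self.max_ts - self.allowed_lateness:
            self.dropped_late.append(event)  # too late to place in order
            return []
        self.seen_ids.add(event["event_id"])
        heapq.heappush(self.heap, (event["ts"], event["event_id"], event))
        self.max_ts = max(self.max_ts, event["ts"])
        return self._drain(self.max_ts - self.allowed_lateness)

    def _drain(self, watermark):
        ready = []
        while self.heap and self.heap[0][0] <= watermark:
            ready.append(heapq.heappop(self.heap)[2])
        return ready

    def flush(self):
        """Emit everything still buffered, e.g. at the end of a stream."""
        return self._drain(float("inf"))


buf = ReorderBuffer(allowed_lateness=5)
stream = [
    {"event_id": "a", "ts": 10},
    {"event_id": "c", "ts": 14},
    {"event_id": "b", "ts": 12},  # out of order, but within the allowed lateness
    {"event_id": "c", "ts": 14},  # redelivered duplicate
    {"event_id": "d", "ts": 21},  # advances the watermark to 16
    {"event_id": "e", "ts": 11},  # too late: behind the watermark
]
emitted = []
for ev in stream:
    emitted.extend(buf.add(ev))
emitted.extend(buf.flush())
print([e["event_id"] for e in emitted])  # ['a', 'b', 'c', 'd']
```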

3. Data Security

Security concerns permeate every aspect of the ETL process, particularly in regulated industries. Challenges include securely extracting data while respecting access controls, protecting sensitive information during transit, and implementing data masking during transformation. Compliance requirements may dictate specific data handling protocols that complicate ETL design.

4. Managing Large Data Volumes

Processing massive datasets strains computational resources and network bandwidth. ETL frameworks must implement efficient partitioning strategies to enable parallel processing while managing dependencies between related data elements. Organizations frequently underestimate infrastructure requirements, leading to performance bottlenecks that can cause processing windows to be missed.
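One way to keep memory use flat as volumes grow is to stream data in fixed-size chunks instead of loading a whole table at once. This sketch uses the DB-API `cursor.fetchmany()` method against an in-memory SQLite table standing in for a large source; the `readings` table and the chunk size are illustrative assumptions.

```python
import sqlite3
from typing import Iterator, List, Tuple


def iter_chunks(conn: sqlite3.Connection, query: str, chunk_size: int = 10_000) -> Iterator[List[Tuple]]:
    """Yield query results in chunks so only one chunk is held in memory at a time."""
    cursor = conn.execute(query)
    while True:
        chunk = cursor.fetchmany(chunk_size)
        if not chunk:
            break
        yield chunk


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE readings (sensor_id INTEGER, value REAL)")
conn.executemany("INSERT INTO readings VALUES (?, ?)", ((i % 50, i * 0.1) for i in range(25_000)))

chunk_count, row_count, running_total = 0, 0, 0.0
for chunk in iter_chunks(conn, "SELECT sensor_id, value FROM readings", chunk_size=10_000):
    chunk_count += 1
    row_count += len(chunk)
    running_total += sum(value for _, value in chunk)  # aggregate incrementally per chunk

print(chunk_count, row_count)  # 3 25000
```

Each chunk could equally be handed to a separate worker, combining this bounded-memory pattern with the partitioning described above.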

Real-World Implementation Examples of ETL Frameworks

1. Financial Services Industry

JPMorgan Chase implemented an enterprise ETL framework to consolidate customer data across their numerous legacy systems. Using Informatica PowerCenter, they created a customer 360 view by extracting data from mainframe systems, CRM databases, and transaction processing platforms.

Their framework included robust change data capture mechanisms that significantly reduced processing time through incremental updates. The implementation enforced strict data quality rules to maintain regulatory compliance while encryption protocols secured data throughout the pipeline.

2. E-commerce Platform

Amazon developed a sophisticated ETL framework using Apache Airflow to process their massive daily transaction volumes. Their implementation includes real-time inventory management through Kafka streams connected to warehouse systems across global fulfillment centers.

The framework features intelligent data partitioning that distributes processing across multiple AWS EMR clusters during peak shopping periods like Prime Day, automatically scaling based on load. Their specialized transformations support dynamic pricing algorithms by combining sales history, competitor data, and seasonal trends.

3. Healthcare Provider

Cleveland Clinic built an ETL solution with Microsoft SSIS and Azure Data Factory to integrate electronic health records, billing systems, and clinical research databases. Their implementation includes advanced data anonymization during the transformation phase to enable research while maintaining HIPAA compliance.

The framework processes nightly batch updates for analytical systems while supporting near-real-time data feeds for clinical dashboards. Custom validation rules ensure data quality for critical patient information.

4. Manufacturing Company

Siemens implemented Talend for IoT sensor data processing across their smart factory floors. Their ETL framework ingests time-series data from thousands of production line sensors, applies statistical quality control algorithms during transformation, and loads results into both operational systems and a Snowflake data warehouse.

The implementation features edge processing that filters anomalies before transmission to central systems, reducing bandwidth requirements significantly. Automated alerts trigger maintenance workflows when patterns indicate potential equipment failures.

The Future of ETL Frameworks

1. Cloud Adoption

ETL frameworks are rapidly migrating to cloud environments with organizations embracing platforms like AWS Glue, Azure Data Factory, and Google Cloud Dataflow. This shift eliminates infrastructure management burdens while enabling elastic scaling. Cloud-native ETL solutions offer consumption-based pricing models that optimize costs by aligning expenses with actual usage rather than peak capacity requirements.

2. Real-Time Data Processing

The demand for real-time insights is transforming ETL architectures from batch-oriented to stream-based processing. Stream processing technologies like Apache Kafka, Apache Flink, and Databricks Delta Live Tables are becoming core components of modern ETL pipelines, enabling businesses to reduce decision latency from days to seconds.

3. AI and Automation

Machine learning is revolutionizing ETL processes through automated data discovery, classification, and quality management. AI-powered tools now suggest optimal transformation logic based on historical patterns. Natural language interfaces make ETL accessible to business users without deep technical expertise.

4. Serverless ETL

Serverless architectures eliminate the need to provision and manage ETL infrastructure, automatically scaling resources in response to workload demands. Function-as-a-Service approaches enable granular cost control with per-execution pricing models. Event-driven triggers are replacing rigid scheduling, allowing ETL processes to respond immediately to new data.
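Event-driven triggers typically hand the function a description of the new data rather than the data itself. The sketch below shows the general shape of an AWS Lambda-style Python handler reacting to an S3 object-created notification. The `Records[].s3.bucket.name` and `Records[].s3.object.key` fields follow the S3 event notification format; `process_object` is a hypothetical placeholder for the actual extract-transform-load work, and the return value is illustrative.

```python
from urllib.parse import unquote_plus


def process_object(bucket: str, key: str) -> dict:
    """Hypothetical placeholder: a real job would read, transform, and load the file."""
    return {"bucket": bucket, "key": key, "status": "processed"}


def handler(event, context):
    """Entry point invoked per notification batch; no polling schedule is needed."""
    results = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded in S3 notifications (spaces become '+').
        key = unquote_plus(record["s3"]["object"]["key"])
        results.append(process_object(bucket, key))
    return {"processed": len(results), "results": results}


sample_event = {
    "Records": [
        {"s3": {"bucket": {"name": "raw-sales"}, "object": {"key": "2024/03/daily+sales.csv"}}}
    ]
}
response = handler(sample_event, context=None)
print(response)
```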

Simplify Your Data Management With Powerful Integration Services!

Partner with Kanerika Today.

Experience Next-Level Data Integration with Kanerika

Kanerika is a global consulting firm that specializes in providing innovative and effective data integration services. We offer expertise in data integration, analytics, and AI/ML, focusing on enhancing operational efficiency through cutting-edge technologies. Our services aim to empower businesses worldwide by driving growth, efficiency, and intelligent operations through hyper-automated processes and well-integrated systems.

Our flagship product, FLIP, an AI-powered data operations platform, revolutionizes data transformation with its flexible deployment options, pay-as-you-go pricing, and intuitive interface. With FLIP, businesses can streamline their data processes effortlessly, making data management a breeze.

Kanerika also offers exceptional AI/ML and RPA services, empowering businesses to outsmart competitors and propel them towards success. Experience the difference with Kanerika and unleash the true potential of your data. Let us be your partner in innovation and transformation, guiding you towards a future where data is not just information but a strategic asset driving your success.

Frequently Asked Questions

What are ETL frameworks?

ETL frameworks are structured systems that standardize how data is extracted from source systems, transformed into usable formats, and loaded into target destinations like data warehouses or lakes. These frameworks provide reusable components, error handling, logging, and scheduling capabilities that accelerate data pipeline development. Unlike standalone scripts, a well-designed ETL framework enforces consistency across projects, reduces maintenance overhead, and improves reliability. Modern frameworks support both batch and streaming workloads. Kanerika designs enterprise ETL frameworks tailored to your data architecture—connect with our team to modernize your data integration strategy.

Will ETL be replaced by AI?

ETL will not be replaced by AI but rather enhanced by it. Artificial intelligence is transforming ETL frameworks by automating schema mapping, detecting anomalies, and optimizing transformation logic—tasks that previously required extensive manual coding. AI-powered ETL tools can suggest data quality rules and predict pipeline failures before they occur. However, the fundamental extract-transform-load process remains essential for moving and preparing data. The future is intelligent ETL, not the elimination of it. Kanerika integrates AI capabilities into ETL solutions to accelerate your data operations—let us show you how.

Is ETL the same as SQL?

ETL and SQL are not the same, though they work together frequently. ETL refers to the complete process of extracting data from sources, transforming it, and loading it into targets—a workflow methodology. SQL is a query language used to interact with relational databases. Within an ETL framework, SQL often handles extraction queries and transformation logic, but ETL encompasses much more: orchestration, scheduling, error handling, and cross-platform data movement. SQL is one tool within the broader ETL toolkit. Kanerika builds ETL frameworks leveraging SQL and modern technologies—reach out to optimize your data workflows.

Is ETL the same as API?

ETL and API serve different purposes in data architecture. An API is an interface that enables applications to communicate and exchange data in real-time. ETL is a process framework for systematically extracting, transforming, and loading data between systems, typically in batch operations. APIs often serve as data sources within ETL pipelines, providing the extraction mechanism for pulling data from SaaS applications or web services. Modern ETL frameworks incorporate API connectors as standard components for cloud data integration. Kanerika builds ETL solutions with robust API integration capabilities—contact us to streamline your data connectivity.

Why do businesses need an ETL framework?

Businesses need an ETL framework to ensure consistent, reliable, and scalable data movement across their organization. Without a structured framework, data pipelines become fragmented, error-prone, and difficult to maintain. An ETL framework standardizes development practices, enforces data quality rules, provides centralized logging, and enables faster delivery of new pipelines. It reduces technical debt by creating reusable components and simplifies compliance with data governance requirements. For enterprises managing multiple data sources, a robust framework is essential. Kanerika develops enterprise ETL frameworks that drive operational efficiency—schedule a consultation to assess your needs.

What are the core components of an ETL process?

The core components of an ETL process include extraction modules that connect to diverse data sources, transformation engines that cleanse and reshape data, and loading mechanisms that write to target systems. Supporting these are metadata repositories tracking data lineage, scheduling systems for orchestration, error handling routines for fault tolerance, and logging components for monitoring pipeline health. Advanced ETL frameworks also incorporate data quality validation layers and configuration management for environment portability. Each component must integrate seamlessly for reliable data operations. Kanerika architects comprehensive ETL frameworks with all essential components—talk to our data engineers today.

How does an ETL framework improve data quality?

An ETL framework improves data quality by embedding validation rules, cleansing routines, and standardization logic directly into the transformation layer. During extraction, the framework identifies missing or malformed records. Transformation steps apply deduplication, format normalization, referential integrity checks, and business rule validation. Rejected records route to exception handling queues for review rather than corrupting downstream systems. Built-in data profiling detects anomalies early, while audit trails maintain quality metrics over time. This systematic approach prevents garbage-in-garbage-out scenarios. Kanerika implements ETL frameworks with robust data quality controls—reach out to improve your data reliability.

Can ETL frameworks handle real-time data?

Modern ETL frameworks can handle real-time data through streaming ETL capabilities, though traditional batch-oriented designs require adaptation. Real-time ETL processes data continuously as it arrives using technologies like Apache Kafka, Spark Streaming, or cloud-native services. These frameworks apply transformations with minimal latency, enabling use cases like fraud detection, live dashboards, and operational analytics. Many organizations adopt hybrid approaches, using streaming for time-sensitive data and batch for historical processing within the same framework. Kanerika designs ETL frameworks supporting both batch and real-time data processing—contact us to enable streaming analytics in your environment.

How does an ETL framework support scalability?

An ETL framework supports scalability through parallel processing, partitioned workloads, and distributed architecture patterns. Well-designed frameworks separate compute from storage, allowing independent scaling of each layer. They implement incremental loading to process only changed data rather than full reloads. Cloud-native ETL frameworks leverage auto-scaling infrastructure that expands during peak loads and contracts during idle periods, optimizing costs. Modular framework design enables adding new data sources without redesigning existing pipelines. These architectural principles ensure performance remains consistent as data volumes grow. Kanerika builds scalable ETL frameworks on modern cloud platforms—let us architect a solution that grows with your business.

What are the benefits of using a custom ETL framework over off-the-shelf tools?

A custom ETL framework offers precise alignment with your specific data architecture, business logic, and performance requirements that generic tools cannot match. Custom frameworks eliminate licensing costs, avoid vendor lock-in, and integrate seamlessly with existing technology stacks. They provide complete control over optimization, security implementation, and feature development priorities. However, custom solutions require deeper technical investment and ongoing maintenance expertise. Organizations with unique data processing needs or regulatory constraints often find custom frameworks deliver superior long-term value. Kanerika builds custom ETL frameworks tailored to enterprise requirements—schedule a discovery session to evaluate your options.

What are the 5 steps of the ETL process?

The five steps of the ETL process are: extraction, where data is pulled from source systems; data profiling, where source data quality and structure are analyzed; cleansing and transformation, where data is standardized, deduplicated, and reshaped; loading, where transformed data moves to the target destination; and validation, where loaded data is verified for accuracy and completeness. Some frameworks add orchestration as a sixth step, managing dependencies and scheduling across pipeline stages. Each step requires proper error handling and logging. Kanerika implements comprehensive ETL processes following industry best practices—connect with us to optimize your data workflows.

Is ETL outdated?

ETL is not outdated but has evolved significantly to address modern data challenges. Traditional batch-only ETL approaches have expanded into real-time streaming, ELT patterns for cloud data warehouses, and AI-augmented pipelines. The core principle of systematically moving and transforming data remains fundamental to every data architecture. What has changed is how ETL frameworks execute—leveraging cloud scalability, parallel processing, and intelligent automation. Organizations still require structured data integration; the tools and patterns simply matured. Kanerika modernizes legacy ETL implementations with contemporary frameworks and cloud-native approaches—reach out to future-proof your data infrastructure.

What will replace ETL?

Nothing will fully replace ETL, but the approach continues evolving. ELT has gained prominence for cloud data warehouses, loading raw data first then transforming in-place using destination compute power. Data virtualization offers query-time integration without physical movement. Reverse ETL pushes analytics data back to operational systems. AI-driven data integration automates mapping and transformation logic. These patterns complement rather than eliminate traditional ETL—most enterprises use multiple approaches based on specific requirements. The need to integrate, transform, and deliver quality data persists regardless of methodology. Kanerika helps organizations adopt the right data integration patterns—consult with our architects to design your optimal approach.

Which ETL tool is used most?

The most widely used ETL tools vary by enterprise segment, but Microsoft SSIS, Informatica PowerCenter, and Talend consistently rank among the most deployed. In cloud environments, Azure Data Factory, AWS Glue, and Google Cloud Dataflow dominate. For big data workloads, Apache Spark-based frameworks lead adoption. Open-source options like Apache Airflow handle orchestration across many organizations. Tool selection depends on existing technology investments, cloud strategy, and specific use cases rather than universal rankings. Kanerika has expertise across leading ETL platforms including Informatica, Microsoft Fabric, and Databricks—contact us to evaluate the right tool for your environment.

Which is the best ETL tool?

The best ETL tool depends entirely on your specific requirements, existing infrastructure, and technical capabilities. Microsoft Azure Data Factory excels for Microsoft-centric environments. Databricks offers superior performance for large-scale data engineering. Informatica provides enterprise-grade governance and broad connectivity. Talend balances open-source flexibility with enterprise features. Fivetran and Airbyte simplify SaaS data extraction. Evaluating tools requires considering total cost of ownership, learning curve, scalability needs, and vendor roadmap alignment with your strategy. There is no universal best—only best fit. Kanerika helps enterprises select and implement optimal ETL solutions—request a personalized assessment based on your requirements.

Can I use SQL for ETL?

SQL can absolutely be used for ETL operations, particularly for extraction queries and transformation logic within relational databases. Many ETL frameworks generate SQL statements to execute transformations directly in source or target systems, leveraging database engine optimization. ELT patterns rely heavily on SQL for in-database transformations. However, SQL alone lacks orchestration, scheduling, error handling, and cross-platform connectivity that complete ETL frameworks provide. Most practitioners combine SQL with orchestration tools like Airflow or platform-native schedulers for production-grade pipelines. Kanerika develops SQL-optimized ETL solutions that maximize database performance—reach out to streamline your transformation workflows.

Is ETL backend or frontend?

ETL is a backend process that operates behind the scenes, invisible to end users. It runs on servers, cloud infrastructure, or dedicated data platforms to move and transform data between systems. Users interact with the results of ETL—clean data in dashboards, reports, or applications—but never directly with the ETL process itself. ETL frameworks typically include administrative interfaces for developers and data engineers to design, monitor, and troubleshoot pipelines, but these are operational tools rather than user-facing applications. The actual data processing remains firmly in backend infrastructure. Kanerika architects robust backend ETL frameworks that power your analytics—connect with us to strengthen your data foundation.

Is ETL a coding language?

ETL is not a coding language—it is a methodology and process for data integration. ETL stands for Extract, Transform, Load, describing the workflow of moving data between systems. ETL frameworks and tools may use various programming languages including Python, SQL, Scala, or Java to implement pipeline logic. Some platforms offer visual, low-code interfaces that generate underlying code automatically. The confusion arises because ETL work often involves coding, but ETL itself describes what you accomplish rather than how you write it. Understanding this distinction helps in selecting appropriate tools and skills. Kanerika builds ETL solutions using best-fit technologies for your environment—contact us to discuss your implementation approach.