As Microsoft Copilot becomes a core part of Microsoft 365, organizations are seeing major productivity gains. From drafting emails to summarizing complex data, Copilot is changing how we work, saving time and boosting efficiency. However, as with any powerful tool, businesses must address Microsoft Copilot security concerns to ensure that sensitive data is protected and compliance standards are met.

Recent innovations, like Agent Flows in Copilot Studio, further expand Copilot’s capabilities. While Microsoft has embedded strong security features within Copilot, organizations must remain proactive. By understanding and managing potential risks such as data access, privacy, and user behavior, organizations can confidently embrace the power of AI without sacrificing security.

In this blog, we’ll explore the key security considerations of Microsoft Copilot and provide actionable best practices to help businesses securely implement it, ensuring sensitive information stays protected.

What is Microsoft Copilot?

Microsoft Copilot is an AI tool embedded into Microsoft 365 apps such as Word, Excel, PowerPoint, Outlook, and Teams. It helps users complete tasks faster by offering suggestions, generating text, and performing repetitive tasks. For example, you can tell Copilot to compose an email instead of creating one from scratch. As a result, it saves time and reduces the effort needed for everyday work.

What makes Copilot even more powerful is its seamless integration into the tools you use every day. It can rewrite a paragraph, check grammar in Word, explain a formula, or surface insights from data in Excel. Moreover, it keeps learning over time, so its help improves the more you use it.

Security Concerns with Microsoft Copilot

Microsoft Copilot, integrated into Microsoft 365 applications, offers significant productivity benefits but also introduces notable security and privacy risks. Below is a detailed breakdown of the primary concerns:

1. Data Protection and Privacy

Security Concern:

Copilot can access and summarize sensitive information such as emails, documents, and spreadsheets. Poor access controls can expose sensitive data such as internal financial details or personal records to unauthorized users. Moreover, external queries issued by Copilot can inadvertently expose sensitive information.

Mitigation:

Ensure proper access controls are in place to limit who can access sensitive information. You should periodically audit user permissions and apply data loss prevention (DLP) policies. Limit external interactions such as web queries and log any outbound data requests for signs of exposure.
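
To make the DLP idea concrete, here is a minimal Python sketch of a pattern-based check that could run before text leaves the organization, for example in a web query. The pattern set and the function names `find_sensitive` and `allow_outbound` are hypothetical and only for illustration. Real DLP policies in Microsoft Purview use far richer detectors than two regular expressions.

```python
import re

# Hypothetical patterns for illustration only; production DLP uses richer detectors.
SENSITIVE_PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}

def find_sensitive(text: str) -> dict[str, list[str]]:
    """Return the matches for each pattern that appears in the text."""
    hits = {}
    for name, pattern in SENSITIVE_PATTERNS.items():
        matches = pattern.findall(text)
        if matches:
            hits[name] = matches
    return hits

def allow_outbound(text: str) -> bool:
    """Allow an outbound request only when no sensitive pattern matches."""
    return not find_sensitive(text)
```

A check like this is best treated as one logging and blocking signal among several, since simple patterns miss context and can produce false positives.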

2. Prompt Injection Attacks

Security Concern:

Hackers can exploit prompt injection techniques, inserting hidden commands into emails or documents processed by Copilot. These malicious commands could trigger Copilot to perform unauthorized actions, such as retrieving sensitive emails or summarizing confidential documents.

Mitigation:

To reduce the risk of prompt injection, regularly train employees on secure document handling and on validating content before sharing it. Implement input validation and other security measures to detect and block malicious instructions hidden in content.
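
As a rough illustration of input validation, the Python sketch below flags two common warning signs in a document: instruction-like phrases and invisible Unicode format characters that can hide text from a human reader. The phrase list and function names are assumptions made for this example. Attackers can rephrase freely, so a heuristic like this is a signal for review, not a complete defense.

```python
import re
import unicodedata

# Hypothetical phrase list; attackers can rephrase, so treat hits as signals only.
SUSPICIOUS_PHRASES = [
    r"ignore (all |any )?(previous|prior|above) instructions",
    r"disregard (the )?(system|previous) prompt",
    r"do not (tell|inform) the user",
]
PHRASE_RE = re.compile("|".join(SUSPICIOUS_PHRASES), re.IGNORECASE)

def hidden_characters(text: str) -> list[str]:
    """Names of invisible format characters (Unicode category Cf), such as zero-width spaces."""
    return [
        unicodedata.name(ch, "UNKNOWN")
        for ch in text
        if unicodedata.category(ch) == "Cf"
    ]

def injection_signals(text: str) -> list[str]:
    """Return a list of human-readable warning signs found in the text."""
    signals = []
    if PHRASE_RE.search(text):
        signals.append("instruction-like phrase")
    if hidden_characters(text):
        signals.append("hidden format characters")
    return signals
```

Documents that return any signal could be routed to a manual review queue before Copilot is allowed to process them.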

3. Over-Permissioned Access

Security Concern:

With the addition of Agent Flows and other new agents in Copilot Studio, the scope of what Copilot can do expands significantly. However, this also increases the risk of over-permissioned access, where Copilot may gain access to data that isn’t necessary for a user’s role.

Mitigation:

Use role-based access control (RBAC) to limit data access according to each user’s role. Grant permissions on a need-to-know basis and audit them regularly so they stay aligned with job functions. Enforce the principle of least privilege so that Copilot only accesses what the user needs to do their job.
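
A least-privilege audit boils down to comparing what a user has been granted with what their role requires. The Python sketch below shows that comparison. The role names, permission strings, and the `ROLE_PERMISSIONS` table are hypothetical; in practice this data would come from your identity provider rather than a hard-coded dictionary.

```python
# Hypothetical role definitions; real deployments would read these from the identity provider.
ROLE_PERMISSIONS = {
    "hr_analyst": {"hr_records:read", "org_chart:read"},
    "sales_rep": {"crm:read", "crm:write"},
}

def excess_permissions(role: str, granted: set[str]) -> set[str]:
    """Permissions a user holds beyond what their role requires.

    An unknown role requires nothing, so every granted permission is reported.
    """
    required = ROLE_PERMISSIONS.get(role, set())
    return granted - required
```

Any non-empty result is a candidate for removal during the next access review.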

4. Flawed Data Classification

Security Concern:

Microsoft Purview’s sensitivity labels are crucial for protecting sensitive data. However, Copilot-generated content may not always inherit these labels correctly, leaving unprotected data exposed. Mislabeling files or inconsistent classification can lead to data breaches.

Mitigation:

Ensure that sensitivity labels are applied consistently across all documents, including those generated by Copilot. Use Microsoft Purview to enforce classification policies and audit Copilot’s document creation process to make sure it inherits the correct protections.
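
One way to reason about label inheritance is to assume that generated content should carry the most restrictive label among its sources. The Python sketch below encodes that rule. The label names, their ordering, and the `inherited_label` function are assumptions for illustration, not Microsoft Purview’s actual label taxonomy or API.

```python
from enum import IntEnum

class Label(IntEnum):
    """Hypothetical sensitivity labels, ordered from least to most restrictive."""
    PUBLIC = 0
    GENERAL = 1
    CONFIDENTIAL = 2
    HIGHLY_CONFIDENTIAL = 3

def inherited_label(source_labels: list[Label], default: Label = Label.GENERAL) -> Label:
    """Pick the most restrictive source label, or a default when there are no sources."""
    return max(source_labels, default=default)
```

An audit could compare the label actually applied to a generated document against this expected value and flag any document that ends up less restricted than its sources.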

5. Amplification of Existing Security Weaknesses

Security Concern:

Copilot doesn’t just retrieve data—it can also summarize and present it. As a result, poorly secured or overlooked content, such as old emails or shared files, may be exposed quickly, even if they were previously forgotten or improperly secured.

Mitigation:

Conduct regular security audits to identify and secure old data or forgotten documents. Implement strict data retention policies and ensure that access controls are consistently enforced across all files, even those less frequently accessed.
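
A security audit of forgotten content can start with a simple filter: files that nobody has opened for a long time but that are still broadly shared. The Python sketch below assumes a hypothetical `FileRecord` inventory exported from your environment; the field names and the one-year threshold are illustrative choices.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class FileRecord:
    """Hypothetical inventory row for one file."""
    path: str
    last_accessed: date
    shared_with_everyone: bool

def stale_and_overshared(
    files: list[FileRecord], today: date, max_age_days: int = 365
) -> list[str]:
    """Paths of files that are broadly shared and not accessed within max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [
        f.path
        for f in files
        if f.shared_with_everyone and f.last_accessed < cutoff
    ]
```

The resulting list is a starting point for tightening access or applying a retention policy before Copilot can surface those files.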

6. Intellectual Property Risks

Security Concern:

Copilot’s ability to generate content based on internal data may inadvertently expose proprietary information, trade secrets, or internal strategies. If this generated content is shared externally or stored insecurely, it can lead to intellectual property theft.

Mitigation:

Implement IP protection protocols, including encryption and access controls, to secure Copilot-generated content. Ensure sensitive documents, such as proprietary algorithms or internal strategies, are flagged and protected before sharing or distribution.

7. Overreliance and Lack of Review

Security Concern:

Users may over-rely on Copilot’s AI-generated outputs, taking them at face value without verifying the results. This could lead to the dissemination of incorrect, biased, or incomplete information.

Mitigation:

Promote a culture of verification where employees double-check AI-generated content before acting on it. Encourage critical thinking and provide training on the limitations and potential inaccuracies of AI tools.

Upcoming Webinar: Secure Your AI Environment with Microsoft Purview

As AI tools like Microsoft Copilot become more common in the workplace, understanding how to protect your data is critical. Join our upcoming webinar, “Microsoft Purview for Data and AI,” where leading experts will share insights on building a secure, compliant AI strategy using Microsoft Purview.

Hear from Naren Babu, Head of Data Governance & Compliance at Kanerika, who brings over 16 years of experience helping organizations achieve regulatory compliance and data privacy. Alongside him, Pedro Ferreira from Concentric AI will share practical strategies for aligning cybersecurity solutions with business needs.

Register Now — Limited spots available.

Microsoft Copilot vs. ChatGPT vs. Google Gemini: Security Comparison

1. Data Protection

Microsoft Copilot: Ensures that prompts and responses are not saved or used to train models. It features encryption for data at rest and in transit and offers commercial data protection with Entra ID for eligible users.

ChatGPT: Uses encryption for data transfer and undergoes annual security audits. It also has a bug bounty program to identify vulnerabilities.

Google Gemini: Emphasizes data privacy with features like data deletion controls, user activity logs, and confidential computing protections for sensitive workloads.

2. Access Controls

Microsoft Copilot: Provides strict access controls through Microsoft Entra ID and integrates with Microsoft 365’s role-based permissions to limit data exposure.

ChatGPT: Implements strict access controls to protect sensitive areas of its codebase and user interactions.

Google Gemini: Offers advanced IAM (Identity and Access Management) recommendations and integrates with Google Workspace for granular access control.

3. Compliance

Microsoft Copilot: Complies with GDPR, HIPAA, and other global standards, ensuring enterprise-grade compliance within the Microsoft ecosystem.

ChatGPT: Lacks specific enterprise compliance certifications but adheres to general privacy principles.

Google Gemini: Operates on Google Cloud, which is known for its robust compliance framework, including certifications like ISO/IEC 27001 and SOC 2.

4. Threat Detection

Microsoft Copilot: Uses AI-powered tools to detect insider risks and external threats within the Microsoft environment.

ChatGPT: Relies on its bug bounty program and external audits but lacks specialized threat detection capabilities.

Google Gemini: Integrates Mandiant’s threat intelligence for real-time threat detection and analysis, making it strong in cybersecurity applications.

5. Privacy Concerns

Microsoft Copilot: Accesses organizational data through Microsoft Graph but ensures suggestions are relevant without retaining user inputs beyond diagnostics.

ChatGPT: Collects interaction data for improvement but does not save prompts or responses permanently.

Google Gemini: Provides tools for secure file sharing and document access but has potential risks related to Google Workspace extensions being exploited by attackers.

| Feature | Microsoft Copilot | ChatGPT | Google Gemini |
| --- | --- | --- | --- |
| Data Protection | Encrypted; no prompt retention | Encrypted; annual audits | Data deletion & activity logs |
| Access Controls | Role-based via Entra ID | Strict internal controls | Advanced IAM & Workspace tools |
| Compliance | GDPR, HIPAA | General privacy principles | ISO/IEC 27001, SOC 2 |
| Threat Detection | AI-powered risk detection | Bug bounty program | Mandiant threat intelligence |
| Privacy | Limited diagnostic data collection | No prompt retention | Risks in Workspace extensions |

What Steps Has Microsoft Taken to Address Security Concerns with Copilot?

Microsoft has implemented several measures to address security concerns associated with Microsoft 365 Copilot:

1. Strict Data Privacy and Access Control

- Copilot can only access and show data that the user already has permission to view. It doesn’t go beyond existing access controls. This means it won’t pull in private chats, documents, or emails that the user isn’t supposed to see.

- Microsoft 365’s built-in permissions (like those in SharePoint and Teams) are respected fully. Organizations don’t need to create new permission rules just for Copilot—it follows what’s already in place.

- In cases where teams work across companies or tenants—such as in shared Microsoft Teams channels—Copilot won’t expose anything beyond what the user is allowed to access. This helps reduce accidental data sharing between different organizations.

Source: Microsoft

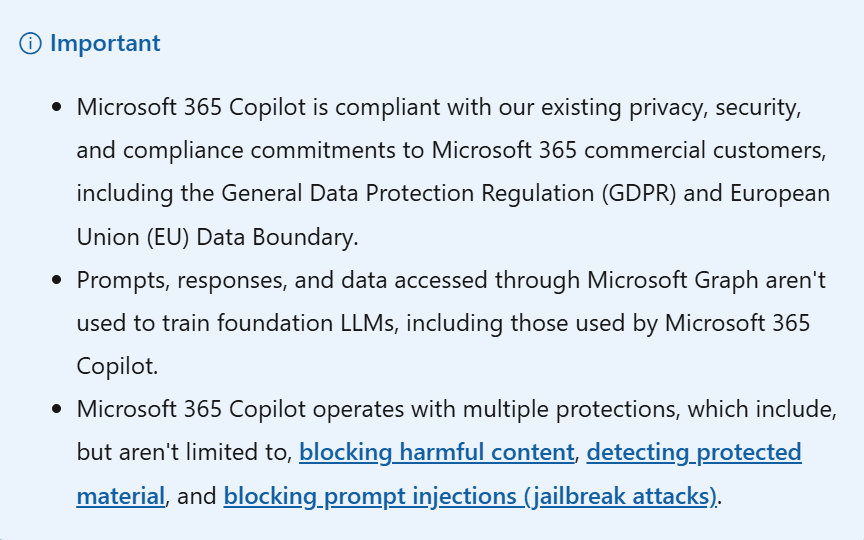
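
The permission behavior described in the list above is often called security trimming: results are filtered against the requesting user’s existing access before anything is shown. The Python sketch below illustrates the idea with a hypothetical `Document` type and an in-memory access list. It is a simplified model, not a description of how Microsoft implements this internally.

```python
from dataclasses import dataclass, field

@dataclass
class Document:
    """Hypothetical document with an explicit list of users allowed to view it."""
    title: str
    allowed_users: set[str] = field(default_factory=set)

def visible_documents(user: str, candidates: list[Document]) -> list[str]:
    """Keep only the documents the user already has permission to view."""
    return [d.title for d in candidates if user in d.allowed_users]
```

The sketch also shows why oversharing matters: if `allowed_users` is too broad, the filter faithfully returns content the user was never meant to see.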

2. No Use of Customer Data to Train AI Models

- Microsoft has made it clear that none of the user prompts, responses, or accessed organizational content is used to train the foundation models that power Copilot.

- The data remains within Microsoft 365’s secure environment. Unlike public tools from OpenAI, Copilot uses Azure OpenAI services, which do not store or reuse customer content for model training.

- This helps prevent your organization’s internal information from being reused in any way outside your environment.

3. Content Filtering to Block Harmful or Unsafe Output

- Microsoft uses a content filtering system that checks both user inputs and AI responses. It works to block anything that falls into categories like hate speech, violence, sexual content, or self-harm.

- These filters are built to recognize and stop content that’s offensive, discriminatory, or inappropriate in any context, even across different languages.

- This helps keep interactions safe and professional, especially in work environments where inappropriate AI responses could cause problems.

4. Protection Against Prompt Injection (Jailbreak Attacks)

- Prompt injection is when someone tries to trick the AI into breaking its rules by using specially written inputs. These attacks can force AI tools to reveal restricted content or behave in unsafe ways.

- Microsoft 365 Copilot is built to defend against such attacks using specialized filters and monitoring tools through Azure AI Content Safety.

- These defenses are designed to catch and block such manipulation before any damage is done.

5. Data Residency and EU Data Boundary Compliance

- Microsoft routes Copilot data to the closest data centers based on the user’s region. For users in the European Union, data is kept inside the EU boundary as much as possible.

- During high usage, data may temporarily move across regions, but safeguards ensure it’s still handled according to EU privacy laws.

- These practices are important for companies that have strict requirements about where their data is processed and stored.

6. End-to-End Encryption

- All data, whether it’s being transferred or stored, is encrypted using methods like TLS, BitLocker, and IPsec. This prevents unauthorized access, even if data is intercepted or stored in the cloud.

- Microsoft uses a layered approach to encryption, meaning even if one system is compromised, additional barriers are in place to keep the data safe.

7. Plugin and Extensibility Controls

- Organizations often use external tools along with Microsoft 365. When Copilot connects with these tools using plugins or Graph connectors, strict rules are in place.

- Only admins can enable or approve plugins. Users can’t just install them on their own. Even when a plugin is used, it can only access data the user is already allowed to see.

- This prevents third-party tools from reaching private or sensitive company data without admin review and control.

8. Activity History and User Data Management

- Copilot keeps a log of user interactions, including prompts and responses. This helps users go back and review what Copilot generated.

- Admins can manage, monitor, or delete this data using tools like Microsoft Purview. They can also apply retention policies to control how long it stays in the system.

- Users also have the option to delete their own Copilot activity history at any time through the My Account portal.

9. Compliance with Global Privacy and Security Laws

- Microsoft 365 Copilot meets global regulations like the GDPR and privacy standards like ISO/IEC 27018.

- These rules are not optional. Copilot’s design is built around them, ensuring that users and organizations stay compliant without needing to take extra steps.

- Microsoft also commits to keeping up with future AI laws as they evolve, especially around transparency and data protection.

10. Responsible AI and Copyright Protection

- Microsoft is working to reduce issues like misinformation, unfair bias, or harmful content in Copilot responses. These areas are part of its Responsible AI standards.

- While users still need to review what Copilot creates, Microsoft provides support and clear guidelines to help users avoid problems.

- If a customer is sued for copyright issues based on Copilot’s output, and the built-in filters and safety systems were in use, Microsoft will step in to defend the customer and cover costs. This is part of its “Copilot Copyright Commitment.”

Kanerika: Your Trusted Partner for Strong, Practical Data Governance Solutions

At Kanerika, we know that good data governance is essential for any business that depends on data. With companies making more data-driven decisions than ever, it’s important to manage, protect, and maintain the quality of that data. At the same time, growing concerns around privacy, security, and compliance make it critical to have clear strategies in place for handling sensitive information.

As a trusted Microsoft Data & AI Solutions Partner, we help businesses set up solid data governance systems using Microsoft Purview. Our team has deep experience in deploying Microsoft Purview to create secure, scalable, and regulation-friendly frameworks. This allows our clients to stay compliant, improve data security, and run more efficiently — all while keeping full control over their data.

We also understand that every organization’s data environment is unique. That’s why we take a hands-on approach — assessing your current setup, aligning with your business goals, and customizing solutions that work in the real world. Whether you’re just starting with governance or looking to strengthen an existing setup, Kanerika brings the tools, expertise, and support to get it right.

Frequently Asked Questions

Is Microsoft Copilot a high-risk AI system?

Microsoft Copilot may fall into the high-risk category of the EU AI Act when it is used in regulated contexts such as healthcare, finance, or HR decision-making. The classification depends on deployment context rather than the tool itself. Organizations must conduct risk assessments, maintain transparency logs, and implement human oversight for compliance. Microsoft provides enterprise controls, but businesses remain accountable for how Copilot accesses and processes sensitive data within their environments. Kanerika helps enterprises navigate Copilot security concerns with governance frameworks tailored to their regulatory landscape. Schedule a compliance consultation today.

What is the controversy with Microsoft Copilot?

The primary controversy with Microsoft Copilot centers on data exposure risks and overpermissioning. Copilot indexes all content users can access, meaning poorly configured SharePoint permissions can inadvertently surface confidential documents in AI responses. Organizations have discovered sensitive HR records, financial data, and executive communications appearing in Copilot outputs due to legacy permission sprawl. Critics also raise concerns about training data usage and regulatory compliance gaps. These Copilot security issues require proactive governance before deployment. Kanerika’s data governance specialists audit your Microsoft 365 environment to eliminate exposure risks—connect with us for a security assessment.

Does Copilot expose your data?

Copilot can expose your data if Microsoft 365 permissions are not properly configured. It inherits existing access controls, meaning any document a user can view may appear in Copilot-generated responses. This creates significant risks when organizations have accumulated overshared folders, orphaned permissions, or outdated access groups. Sensitive contracts, personnel files, or strategic plans become searchable through natural language queries. The exposure is not a Copilot flaw—it amplifies existing permission gaps within your environment. Kanerika conducts comprehensive permission audits to secure your data before Copilot deployment—reach out for a free assessment.

How safe is Microsoft Copilot?

Microsoft Copilot is built on enterprise-grade Azure infrastructure with encryption at rest and in transit, role-based access controls, and compliance certifications including SOC 2 and ISO 27001. However, Copilot safety depends heavily on your organization’s data governance maturity. Without proper sensitivity labels, access reviews, and permission hygiene, Copilot becomes a searchable interface for every overshared document. Microsoft provides the security foundation; organizations must configure it correctly. The tool is safe when deployed with proper controls but risky without preparation. Kanerika ensures your Copilot implementation is secure from day one—talk to our security team.

What are the risks of Copilot for Microsoft 365?

Copilot for Microsoft 365 introduces risks including data oversharing, compliance violations, and shadow AI exposure. Because Copilot surfaces content based on user permissions, misconfigured SharePoint sites or Teams channels can leak confidential information across departments. Regulatory risks emerge when Copilot processes personal data without proper GDPR or HIPAA safeguards in place. Additionally, employees may unknowingly share AI-generated summaries containing sensitive details externally. These Copilot risks require pre-deployment governance reviews, sensitivity labeling, and access control audits. Kanerika helps enterprises mitigate Copilot for Microsoft 365 risks through structured security frameworks—request your risk assessment today.

Can Copilot see my files?

Copilot can see any files you have permission to access within Microsoft 365, including OneDrive documents, SharePoint libraries, and Teams conversations. It does not bypass security controls but operates within your existing access boundaries. If you can open a file, Copilot can read and reference it when generating responses. This means sensitive documents stored in shared folders become part of Copilot’s searchable index for all permitted users. Organizations must review and tighten permissions before rollout to prevent unintended file visibility. Kanerika helps enterprises configure Copilot file access securely—contact us for a permissions audit.

What is the security problem with Copilot?

The core security problem with Copilot is permission inheritance combined with data sprawl. Copilot accesses everything users can reach, exposing years of accumulated oversharing, broken inheritance, and forgotten group memberships. Organizations often discover confidential executive communications, merger documents, or salary data surfacing through innocent queries. Unlike traditional search, Copilot synthesizes information across sources, making leaks harder to trace. The security problem is not Copilot’s architecture—it’s unprepared data environments. Enterprises need thorough permission reviews and sensitivity labeling before deployment. Kanerika identifies and resolves Copilot security problems before they become breaches—schedule your security assessment now.

Does Microsoft Copilot collect data?

Microsoft Copilot collects interaction data including prompts, responses, and usage patterns to deliver personalized experiences and improve service quality. For enterprise customers, Microsoft states it does not use business data to train foundation models. However, Copilot does process and temporarily store conversation context during sessions. Diagnostic data collection settings can be configured through Microsoft 365 admin controls. Organizations subject to strict data residency requirements should review Microsoft’s data processing agreements carefully. Understanding what Copilot collects is essential for compliance planning. Kanerika helps enterprises configure Copilot data collection settings aligned with privacy policies—reach out for guidance.

Is Microsoft Copilot compliant with data laws like GDPR?

Microsoft Copilot is designed with GDPR compliance capabilities, including data processing agreements, EU data residency options, and privacy controls. Microsoft acts as a data processor under GDPR, but your organization remains the data controller responsible for lawful use. Compliance depends on how you configure Copilot—enabling sensitivity labels, managing consent, and ensuring proper retention policies. Organizations must also address data subject access requests that may include Copilot-generated content. GDPR compliance is achievable but requires deliberate configuration and governance. Kanerika ensures your Copilot deployment meets GDPR and other regulatory requirements—contact us for compliance planning.

Is Copilot more secure than ChatGPT?

Copilot offers stronger enterprise security than ChatGPT consumer versions because it operates within Microsoft 365’s compliance boundary with data encryption, access controls, and tenant isolation. Microsoft commits to not training models on enterprise customer data, unlike free ChatGPT tiers. Copilot inherits Microsoft Entra ID (formerly Azure Active Directory) authentication, conditional access policies, and audit logging. However, ChatGPT Enterprise also provides robust security features for business use. The key difference is Copilot’s native integration with Microsoft 365 governance tools and existing security investments. Kanerika helps enterprises evaluate Copilot vs ChatGPT security against their specific requirements. Talk to our AI specialists.

What are the downsides of Microsoft Copilot?

Microsoft Copilot downsides include significant licensing costs, data governance prerequisites, and occasional response inaccuracies. Organizations unprepared for Copilot often discover overshared content surfacing in employee queries, creating compliance and confidentiality risks. The learning curve frustrates users expecting instant productivity gains without proper training. Copilot can also generate hallucinated information, requiring human verification for critical outputs. Integration gaps exist with non-Microsoft applications, limiting utility in heterogeneous environments. These downsides are manageable with proper planning but catch unprepared enterprises off guard. Kanerika helps organizations address Copilot limitations through strategic implementation planning—connect with our team today.

Can Copilot see your screen?

Copilot does not see your screen through continuous monitoring or screen capture. It accesses content within Microsoft 365 applications you actively use, such as the document open in Word or the email you’re composing in Outlook. Copilot reads application context, not screen pixels. When using Windows Copilot features like Recall, screenshot-based functionality exists but operates under separate privacy controls users can configure. Standard Microsoft 365 Copilot does not perform screen surveillance or capture visual data outside its application context. Kanerika helps enterprises understand and configure Copilot privacy settings appropriately—reach out for a configuration review.

Does Copilot listen to you?

Copilot does not passively listen to conversations or record ambient audio. Voice interaction requires explicit activation through microphone-enabled features in Microsoft 365 apps or Teams meetings. During Teams meetings with transcription enabled, Copilot can process audio content, but this requires meeting organizer configuration and participant awareness. Outside active voice commands or transcription features, Copilot has no audio capture capability. Organizations concerned about listening should review Teams meeting policies and disable transcription features where appropriate. Understanding Copilot audio access is essential for privacy compliance. Kanerika assists enterprises with Copilot privacy configuration aligned to your security policies—contact us for guidance.

Is Microsoft moving away from Copilot?

Microsoft is not moving away from Copilot—it remains central to the company’s AI strategy across Windows, Microsoft 365, and Azure. Microsoft continues investing billions in Copilot development, expanding capabilities with autonomous agents, deeper application integration, and industry-specific features. Recent organizational changes and product consolidations reflect strategic refinement, not retreat. Copilot branding may evolve, but the underlying AI assistant functionality is being embedded more deeply into Microsoft’s product ecosystem. Enterprises should plan for long-term Copilot adoption rather than expecting discontinuation. Kanerika helps organizations build sustainable Copilot strategies aligned with Microsoft’s roadmap—schedule a consultation with our team.