In 2024, Starbucks used analytics to personalize customer recommendations based on buying behavior, weather, and location—boosting both engagement and sales. Walmart improved inventory accuracy by integrating real-time data analytics across its supply chain, reducing overstock and enhancing shelf availability. Meanwhile, Netflix continued refining its recommendation engine, leveraging viewer behavior to increase watch time and retention.

These examples show that when used strategically, data analytics is more than just reporting—it’s a competitive advantage. But success doesn’t come from tools alone. It comes from applying data analytics best practices that align insights with business goals, ensure quality at every stage, and make analytics accessible across teams.

Whether you’re building dashboards, forecasting demand, or driving customer engagement, following proven practices ensures your analytics are actionable, scalable, and impactful. In this blog, we’ll explore key best practices—from choosing the right tools and defining clear objectives to promoting data literacy and building a culture of insight-driven decision-making.

10 Data Analytics Best Practices

Let’s explore 10 best practices for data analytics that can help you navigate the data landscape with confidence.

1. Start with a Clear Business Question

Organizations often fall into the trap of collecting vast quantities of information without a clear purpose, leading to “data-rich but insight-poor” operations. The most successful data initiatives begin not with data collection or analysis, but with a well-defined business question.

The Purpose-Driven Approach

Data should serve a specific purpose rather than merely populating dashboards or reports. When data collection and analysis are untethered from clear business objectives, the result is often wasted resources and missed opportunities. By starting with a precise business question, organizations can:

- Focus data collection efforts on relevant information

- Design appropriate analytical approaches

- Measure success against tangible business outcomes

- Communicate findings in ways that drive action

Defining Effective Business Questions

An effective business question should be:

- Specific: Narrowly focused rather than broad or vague

- Measurable: Answerable through quantifiable metrics

- Actionable: Capable of driving decisions and changes

- Relevant: Directly connected to business objectives

- Time-bound: Defined within a specific timeframe

Examples of Clear Business Questions

- Marketing

- “Which digital marketing channel delivers the highest customer lifetime value for our premium product line?”

- “How does a 10% increase in email frequency affect customer retention rates across different segments?”

- “What is the optimal content mix to maximize conversion rates for our millennial target audience?”

- Operations

- “Where are the top three bottlenecks in our current fulfillment process driving the longest delays?”

- “Which suppliers account for 80% of our quality control rejections in the past quarter?”

- “How would implementing Just-In-Time inventory affect our stockout rates during seasonal demand peaks?”

- Product

- “Which three features of our enterprise software drive the highest usage rates among our top-tier customers?”

- “What is the correlation between specific product attributes and customer return rates?”

- “How does product usage behavior differ between customers who renew versus those who churn?”

- Financial Performance

- “What are the primary cost drivers that account for our margin variance compared to last year?”

- “Which customer segments generate the highest contribution margin after accounting for support costs?”

- “How do different payment terms affect our days sales outstanding and cash conversion cycle?”
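A well-scoped question usually maps onto a concrete computation. The supplier question above, for example, is a Pareto analysis. The Python sketch below assumes a hypothetical rejection log that records one supplier ID per rejected shipment. The function name, the threshold parameter, and the sample data are illustrative only.

```python
from collections import Counter


def pareto_suppliers(rejections: list[str], threshold: float = 0.8) -> list[str]:
    """Return the fewest suppliers, largest first, that together account for
    at least `threshold` of all rejections."""
    counts = Counter(rejections)
    total = sum(counts.values())
    selected, cumulative = [], 0
    for supplier, n in counts.most_common():
        # Stop once the suppliers already selected cover the threshold.
        if cumulative / total >= threshold:
            break
        selected.append(supplier)
        cumulative += n
    return selected


# Hypothetical rejection log: one entry per rejected shipment, tagged by supplier.
rejections = ["A"] * 50 + ["B"] * 30 + ["C"] * 10 + ["D"] * 6 + ["E"] * 4
print(pareto_suppliers(rejections))  # ['A', 'B']
```

Because the answer is a short, ranked list of suppliers, it points directly at where a quality intervention should start.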

Common Pitfalls to Avoid

- Data-first thinking: Starting with available data rather than business needs

- Analysis paralysis: Getting lost in excessive detail without clear direction

- Vague questions: Pursuing questions too broad to drive specific actions

- Unmeasurable inquiries: Asking questions that can’t be answered with available data

- Insights without application: Generating insights without a path to implementation

2. Define Clear Business Objectives

Successful analytics initiatives begin by aligning data efforts with strategic business priorities. Before diving into technical implementation, organizations must:

- Identify core business challenges and opportunities that analytics can address

- Establish specific, measurable objectives with clear business value

- Develop key performance indicators (KPIs) that directly connect to business outcomes

Effective business objectives for analytics should be:

- Specific enough to guide implementation decisions

- Measurable with quantifiable success metrics

- Achievable within resource constraints

- Relevant to stakeholders across departments

- Time-bound with clear evaluation periods

Examples of well-defined analytics objectives include:

- Reduce customer churn by 15% within 12 months by identifying at-risk segments

- Increase operational efficiency by optimizing inventory levels to reduce carrying costs by 10%

- Improve marketing ROI by identifying highest-performing channels and reallocating spend
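An objective like the churn example only works if the KPI and its target are computed the same way every time. Here is a minimal Python sketch. It assumes “reduce churn by 15%” means a relative reduction (15% below the baseline rate, not 15 percentage points), and it uses made-up customer counts.

```python
def churn_rate(customers_at_start: int, customers_lost: int) -> float:
    """Share of customers present at the start of a period who left during it."""
    if customers_at_start <= 0:
        raise ValueError("customers_at_start must be positive")
    return customers_lost / customers_at_start


def churn_target(baseline_rate: float, relative_reduction: float = 0.15) -> float:
    """Target churn rate for a relative reduction from the baseline."""
    return baseline_rate * (1 - relative_reduction)


# Illustrative numbers, not real data.
baseline = churn_rate(customers_at_start=2000, customers_lost=300)  # 15% baseline churn
target = churn_target(baseline)                                     # 12.75% target
current = churn_rate(customers_at_start=2100, customers_lost=260)   # latest period

print(f"baseline={baseline:.2%} target={target:.2%} "
      f"current={current:.2%} on_track={current <= target}")
```

Encoding the interpretation in code forces the objective's ambiguity to be resolved up front. Under the percentage-point reading, the same 15% baseline would imply a target of 0%, which is a very different goal.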

By starting with clear business objectives rather than technological solutions, organizations ensure analytics investments deliver tangible value. This approach also facilitates cross-functional alignment, helps prioritize data initiatives, and provides a framework for measuring success beyond technical implementation metrics.

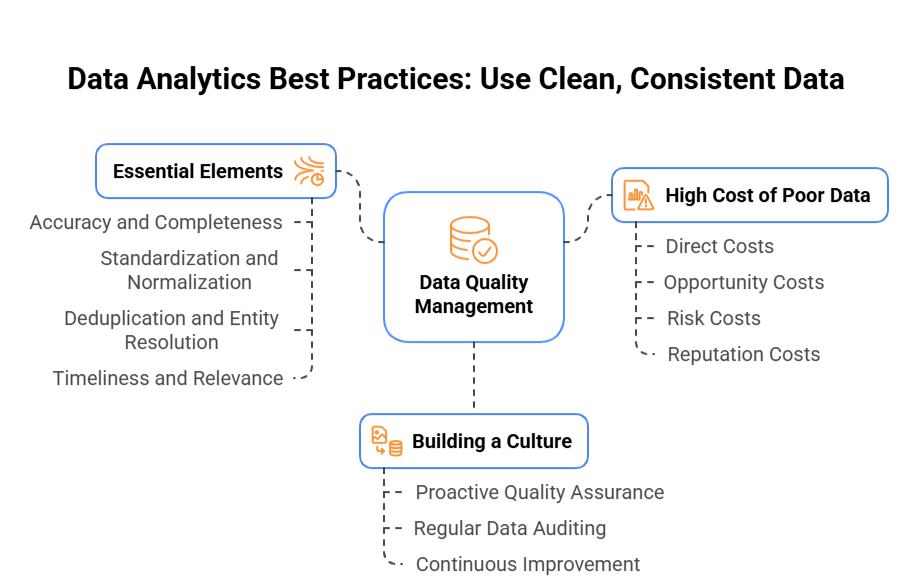

3. Use Clean, Consistent Data

The age-old computing principle “garbage in, garbage out” remains the fundamental truth of data analytics. Even the most sophisticated analytical models and AI systems cannot overcome the limitations of poor-quality data.

1. The Foundation of Reliable Insights

Clean, consistent data serves as the bedrock of effective analytics. Organizations that prioritize data quality experience:

- Greater decision confidence: Stakeholders trust insights built on reliable data

- Faster time-to-insight: Less time wasted reconciling inconsistencies

- Higher analytical ROI: More valuable outcomes from analytical investments

- Improved cross-functional alignment: Consistent data creates “one version of the truth”

2. Essential Elements of Data Quality

1. Accuracy and Completeness

- Ensure data correctly represents the real-world entities or events it describes

- Address missing values through appropriate collection procedures or imputation methods

- Validate inputs at collection points to prevent errors from entering systems
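Entry-point validation and a simple imputation rule fit in a few lines of Python. The field names, the positive-quantity rule, and the use of median imputation are assumptions for illustration. The right rules depend on the data and how it will be used.

```python
from statistics import median

REQUIRED_FIELDS = ("order_id", "quantity")  # hypothetical schema


def validate(record: dict) -> list[str]:
    """Return the problems found in one incoming record; an empty list means valid."""
    problems = [f"missing {field}" for field in REQUIRED_FIELDS if record.get(field) is None]
    quantity = record.get("quantity")
    if quantity is not None and quantity <= 0:
        problems.append("quantity must be positive")
    return problems


def impute_median(records: list[dict], field: str) -> list[dict]:
    """Fill missing values of an optional numeric field with the median of observed values."""
    observed = [r[field] for r in records if r.get(field) is not None]
    fill = median(observed)  # raises StatisticsError if no values are observed
    return [{**r, field: r[field] if r.get(field) is not None else fill} for r in records]


orders = [
    {"order_id": 1, "quantity": 2, "delivery_days": 3},
    {"order_id": 2, "quantity": 0, "delivery_days": 5},     # rejected: bad quantity
    {"order_id": 3, "quantity": 1, "delivery_days": None},  # accepted, value imputed
    {"order_id": None, "quantity": 4, "delivery_days": 4},  # rejected: no order_id
    {"order_id": 5, "quantity": 1, "delivery_days": 7},
]
valid = [o for o in orders if not validate(o)]
cleaned = impute_median(valid, "delivery_days")
print(cleaned)
```

Rejected records would normally be routed back to their source for correction rather than silently dropped.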

2. Standardization and Normalization

- Establish consistent formats for dates, addresses, product codes, and other common fields

- Define and enforce naming conventions across systems and departments

- Implement master data management to maintain coherent reference data
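Standardizing formats usually means mapping every source variant to one canonical form. This minimal Python sketch assumes ISO 8601 (YYYY-MM-DD) as the house date format. It also assumes a hypothetical product-code convention: uppercase, with no spaces or hyphens.

```python
from datetime import datetime

# Hypothetical date formats observed across source systems, tried in order.
KNOWN_DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d.%m.%Y", "%Y%m%d")


def to_iso_date(raw: str) -> str:
    """Parse a date string in any known format and return it as YYYY-MM-DD."""
    for fmt in KNOWN_DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue  # not this format; try the next one
    raise ValueError(f"unrecognized date format: {raw!r}")


def normalize_product_code(raw: str) -> str:
    """Canonical product code under the assumed convention: uppercase, no spaces or hyphens."""
    return raw.strip().upper().replace(" ", "").replace("-", "")


print([to_iso_date(d) for d in ["2024-03-15", "03/15/2024", "15.03.2024", "20240315"]])
print(normalize_product_code(" sku-00 12 "))
```

The order of `KNOWN_DATE_FORMATS` matters. A value such as 03/04/2024 is ambiguous between US and European conventions, so each source system's convention should be documented rather than guessed.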

3. Deduplication and Entity Resolution

- Identify and merge duplicate records to prevent skewed analyses

- Establish reliable matching rules to recognize when different records represent the same entity

- Create unique identifiers that persist across systems and processes
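Deduplication combines a matching rule with a merge policy. The Python sketch below assumes a deliberately simple rule: records with the same normalized email belong to the same customer. It derives the identifier from a hash of that key, so a customer gets the same ID regardless of which system or load order produced the record. Real entity resolution often needs fuzzier matching than this.

```python
import hashlib


def match_key(record: dict) -> str:
    """Assumed matching rule: same email, ignoring case and surrounding spaces."""
    return record["email"].strip().lower()


def customer_key(key: str) -> str:
    """Deterministic ID derived from the match key, so it is reproducible across systems."""
    return "CUST-" + hashlib.sha256(key.encode("utf-8")).hexdigest()[:12]


def deduplicate(records: list[dict]) -> list[dict]:
    """Merge records sharing a match key, keeping the first non-empty value for each field."""
    merged: dict[str, dict] = {}
    for record in records:
        key = match_key(record)
        target = merged.setdefault(key, {"customer_key": customer_key(key)})
        for field, value in record.items():
            if value not in (None, "") and target.get(field) in (None, ""):
                target[field] = value
    return list(merged.values())


customers = [
    {"email": "Ana@Example.com", "name": "Ana Silva", "phone": ""},
    {"email": " ana@example.com", "name": "A. Silva", "phone": "555-0101"},
    {"email": "bo@example.com", "name": "Bo Chen", "phone": None},
]
for row in deduplicate(customers):
    print(row)
```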

4. Timeliness and Relevance

- Ensure data is refreshed at appropriate intervals for its intended use

- Archive or clearly label historical data that may no longer represent current conditions

- Maintain metadata about collection times and update frequencies

3. Building a Culture of Data Quality

1. Proactive Quality Assurance

- Implement automated validation rules at data entry points

- Create data quality dashboards to monitor key metrics

- Establish clear ownership for data quality across the organization

2. Regular Data Auditing

- Conduct periodic comprehensive reviews of critical data assets

- Use statistical methods to identify anomalies and potential quality issues

- Document and track common quality problems to address root causes
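Tukey's fences are one common statistical method for the anomaly check: values far outside the interquartile range get flagged for review. Below is a minimal standard-library Python sketch. The daily order counts are invented, and k = 1.5 is the conventional default rather than a universal rule.

```python
from statistics import quantiles


def iqr_outliers(values: list[float], k: float = 1.5) -> list[tuple[int, float]]:
    """Return (index, value) pairs lying outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = quantiles(values, n=4)  # quartiles; needs at least two values
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [(i, v) for i, v in enumerate(values) if v < low or v > high]


daily_orders = [102, 98, 105, 110, 97, 101, 99, 340, 104, 100, 12, 103]
print(iqr_outliers(daily_orders))  # [(7, 340), (10, 12)]
```

A flagged value is a candidate for investigation, not an automatic deletion. A spike may be a data-entry error or a genuine promotion-driven surge.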

3. Continuous Improvement

- Establish feedback loops from data consumers to data producers

- Prioritize quality issues based on business impact

- Invest in tools and training to systematically enhance data quality

4. The High Cost of Poor Data

Organizations often underestimate the true cost of poor data quality:

- Direct costs: Time spent cleaning and reconciling data

- Opportunity costs: Delayed or missed insights due to data limitations

- Risk costs: Poor decisions based on flawed data

- Reputation costs: Lost credibility when analyses prove unreliable

By treating data quality as a strategic priority rather than a technical concern, organizations create the essential foundation for analytics that drive meaningful business impact.

4. Choose the Right Tools and Tech Stack

Selecting the optimal analytics technology stack is critical to data success, but many organizations fall into the trap of tool infatuation rather than business alignment. The most effective approach matches tools to specific business requirements and existing team capabilities.

1. Match Tools to Business Needs

Begin by clearly defining your analytics objectives:

- For basic reporting and analysis, Microsoft Excel or Google Sheets may be entirely sufficient

- For interactive dashboards and visualizations, consider Tableau, Microsoft Power BI, or Looker

- For complex statistical analysis, consider R or Python with libraries like Pandas and scikit-learn

- For large-scale data processing, evaluate solutions like Spark or Snowflake

2. Consider Team Capabilities

Your team’s skills should heavily influence technology choices:

- Business analysts may excel with SQL and visualization tools like Tableau

- Data scientists typically leverage Python or R for statistical modeling

- Engineers might prefer programmatic approaches with frameworks like Apache Airflow

3. Common Tool Categories

Data Storage

- Databases: PostgreSQL, MySQL, SQL Server

- Data Warehouses: Snowflake, BigQuery, Redshift

- Data Lakes: Azure Data Lake, AWS S3

Data Transformation

- SQL-based: dbt, Dataform

- Python/Java: Apache Spark, Pandas

- GUI-based: Alteryx, Talend

Visualization

- Self-service: Tableau, Power BI, Looker

- Developer-focused: D3.js, Plotly

- Embedded: Sisense, Logi Analytics

Machine Learning

- AutoML: DataRobot, H2O.ai

- Libraries: scikit-learn, TensorFlow, PyTorch

- Cloud Services: Amazon SageMaker, Azure Machine Learning

4. Start Simple, Scale Thoughtfully

Avoid over-engineering your initial solution:

- Begin with the minimum viable stack that delivers value

- Add complexity only when business needs demand it

- Prioritize integration capabilities for future expansion

Common Pitfalls

- Shiny Object Syndrome: Choosing cutting-edge tools without clear use cases

- Over-specialization: Selecting tools that only one team member can use

- Under-investment: Trying to solve enterprise problems with personal tools

- Fragmentation: Creating disconnected analytics silos across departments

The right tool stack enables your team to answer business questions efficiently while creating a foundation for future analytics maturity.

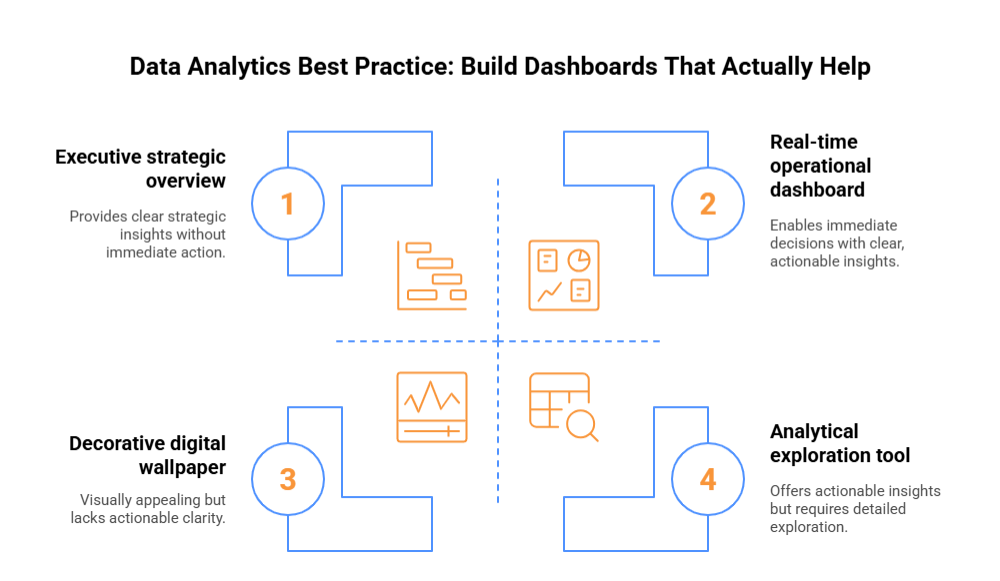

5. Build Dashboards That Actually Help

Too many dashboards become digital wallpaper—impressive to look at but rarely driving action. The difference between a decorative dashboard and one that creates business value lies in thoughtful design focused on decision support, not just data display.

Focus on Actionable Metrics, Not Vanity KPIs

Even the most elegant dashboard, if it is filled with vanity metrics like total page views or social media followers, rarely drives meaningful business decisions. Instead:

- Highlight metrics directly tied to business outcomes (conversion rates, customer acquisition costs, profit margins)

- Include leading indicators that enable proactive responses

- Show context through targets, benchmarks, and historical comparisons

- Make clear what “good” and “bad” look like for each metric

- Include guidance on potential actions when metrics deviate from expectations
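“Good” and “bad” are easier to make explicit when each metric carries its own target, warning threshold, direction, and suggested action. Here is a minimal Python sketch. The metric names, thresholds, and actions are invented for illustration, and many BI tools offer conditional formatting or alerts that serve the same purpose.

```python
from dataclasses import dataclass


@dataclass
class MetricRule:
    name: str
    target: float            # value that counts as "good"
    warn_at: float           # beyond target but not yet critical
    higher_is_better: bool
    action: str              # suggested next step when the metric is off-target


def status(rule: MetricRule, value: float) -> str:
    """Classify a metric value as 'good', 'watch', or 'act' against its thresholds."""
    if rule.higher_is_better:
        if value >= rule.target:
            return "good"
        return "watch" if value >= rule.warn_at else "act"
    if value <= rule.target:
        return "good"
    return "watch" if value <= rule.warn_at else "act"


rules = [
    MetricRule("conversion_rate", target=0.035, warn_at=0.03,
               higher_is_better=True, action="Review checkout funnel drop-off"),
    MetricRule("customer_acquisition_cost", target=40.0, warn_at=50.0,
               higher_is_better=False, action="Pause lowest-ROI campaigns"),
]
current = {"conversion_rate": 0.028, "customer_acquisition_cost": 45.0}

for rule in rules:
    value = current[rule.name]
    label = status(rule, value)
    hint = f" -> {rule.action}" if label != "good" else ""
    print(f"{rule.name}: {value} [{label}]{hint}")
```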

Keep It Clean: Clarity Trumps Complexity

Visual clutter creates cognitive overload, making it harder to extract insights:

- Limit each dashboard to 5-7 key metrics that tell a cohesive story

- Embrace white space to improve readability and focus attention

- Use color purposefully to highlight exceptions or critical information

- Choose appropriate visualizations for each metric (avoid fancy charts when simple ones work better)

- Remove decorative elements that don’t enhance understanding

- Minimize filters and interactive elements to those that serve essential analysis paths

Tailor Dashboards to Specific Audiences

Different stakeholders need different views of the data:

- Executive dashboards: High-level KPIs with strategic focus and minimal detail

- Operational dashboards: Real-time metrics enabling daily decisions with clear thresholds for action

- Analytical dashboards: More detailed views with exploration capabilities for analysts and managers

- Team-specific dashboards: Metrics directly relevant to specific functions (marketing, sales, product)

Design for Action, Not Just Information

The ultimate test of a dashboard is whether it changes behavior:

- Include clear calls to action based on data thresholds

- Provide drill-down capabilities for root cause analysis

- Highlight exceptions and anomalies that require attention

- Enable annotations to document context around unusual patterns

- Schedule regular reviews to discuss insights and necessary actions

- Continuously refine based on user feedback and actual usage patterns

Questions to Ask Before Building

Before creating a new dashboard, ask:

- What specific decisions will this dashboard inform?

- Who needs to make these decisions and what context do they require?

- What is the minimum information needed to drive those decisions?

- How will we know if this dashboard is actually being used?

- What actions should be taken when metrics change significantly?

Remember: The best dashboard isn’t the one with the most data or the fanciest visualizations—it’s the one that helps people make better decisions faster.

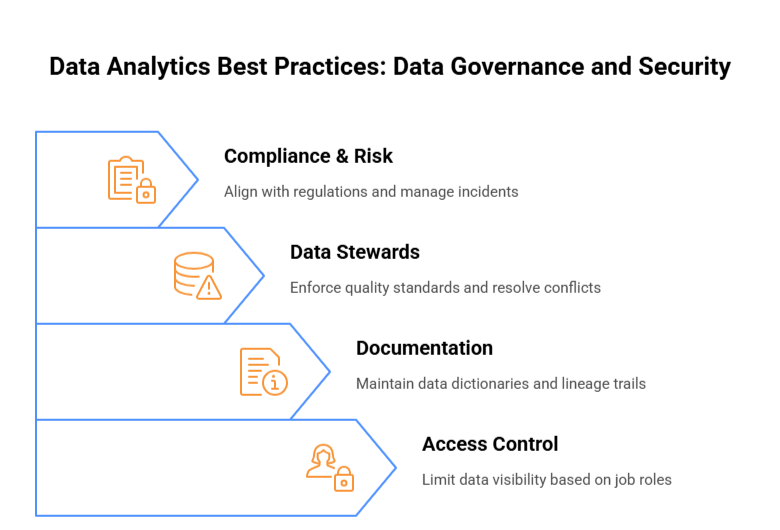

6. Ensure Data Governance and Security

Effective data analytics requires robust governance and security frameworks to protect valuable information assets while enabling productive use. Organizations must implement comprehensive measures to maintain data integrity and compliance.

1. Access Control Essentials

- Implement role-based access controls (RBAC) to limit data visibility based on job requirements

- Employ the principle of least privilege, granting only necessary permissions

- Create data classification systems that categorize information by sensitivity level

- Utilize strong authentication mechanisms, including multi-factor authentication for sensitive datasets

- Maintain detailed access logs to track who accessed what data and when
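The access-control practices above work together. Roles grant access to specific datasets, a classification level caps what each role may see, anything not explicitly granted is denied, and every attempt is logged. This minimal Python sketch uses hypothetical roles, datasets, and sensitivity levels. Production systems would enforce these rules in the database, warehouse, or BI layer rather than in application code.

```python
from datetime import datetime, timezone

# Hypothetical roles mapped to the datasets and maximum sensitivity they may read.
ROLE_PERMISSIONS = {
    "marketing_analyst": {"datasets": {"campaigns", "web_traffic"}, "max_sensitivity": 1},
    "finance_analyst": {"datasets": {"general_ledger", "invoices"}, "max_sensitivity": 2},
    "data_steward": {"datasets": {"campaigns", "web_traffic", "customers"}, "max_sensitivity": 3},
}

# Hypothetical classification: 1 = internal, 2 = confidential, 3 = restricted (personal data).
DATASET_SENSITIVITY = {"campaigns": 1, "web_traffic": 1, "general_ledger": 2,
                       "invoices": 2, "customers": 3}

access_log: list[dict] = []


def can_read(user: str, role: str, dataset: str) -> bool:
    """Deny by default: allow only if the role lists the dataset and is cleared for
    its sensitivity. Unclassified datasets are treated as restricted. Every attempt is logged."""
    perms = ROLE_PERMISSIONS.get(role)
    allowed = (
        perms is not None
        and dataset in perms["datasets"]
        and DATASET_SENSITIVITY.get(dataset, 3) <= perms["max_sensitivity"]
    )
    access_log.append({"user": user, "role": role, "dataset": dataset, "allowed": allowed,
                       "at": datetime.now(timezone.utc).isoformat()})
    return allowed


print(can_read("ana", "marketing_analyst", "campaigns"))  # granted by role
print(can_read("ana", "marketing_analyst", "customers"))  # not granted: denied
print(can_read("lee", "data_steward", "customers"))       # cleared for restricted data
```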

2. Documentation Requirements

- Maintain comprehensive data dictionaries documenting field definitions and relationships

- Create clear data lineage trails showing the origin and transformation of all datasets

- Document processing rules and algorithms to ensure transparency in data manipulation

- Establish version control for datasets and analytics models

- Develop standardized metadata frameworks that facilitate discovery and proper usage

3. The Critical Role of Data Stewards

- Assign dedicated data stewards responsible for specific domains or datasets

- Empower stewards to enforce quality standards and governance policies

- Task stewards with resolving data discrepancies and definition conflicts

- Position stewards as the bridge between technical and business stakeholders

- Require regular stewardship reviews to ensure ongoing compliance and quality

4. Compliance and Risk Management

- Align governance practices with relevant regulations (GDPR, CCPA, HIPAA, etc.)

- Conduct regular privacy impact assessments on data collection and usage

- Implement data retention policies that balance analytical needs with compliance requirements

- Develop incident response plans specifically for data breaches or governance failures

- Perform regular audits of governance practices and security controls

By establishing strong governance and security protocols, organizations create the foundation for trusted analytics while protecting both their data assets and stakeholder privacy.

7. Establish a Single Source of Truth

Data fragmentation across organizational silos creates significant challenges for analytics initiatives. When departments maintain separate data stores with inconsistent definitions and metrics, decision-makers struggle with contradictory insights and eroding trust in analytics.

A centralized data infrastructure provides the foundation for reliable analytics by:

- Creating consensus on data definitions and business rules

- Eliminating redundant, outdated data copies

- Providing consistent metrics across departments

- Simplifying access for authorized users

Organizations should invest in integrated data platforms—whether cloud data warehouses, data lakes, or lakehouse architectures—to consolidate information from disparate sources. This centralization requires:

- Strong data governance practices

- Standardized ETL/ELT processes

- Clear data ownership and stewardship

- Robust master data management
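At its simplest, a single source of truth for metrics means each business definition lives in one place, with an owner, and every report calls it instead of re-implementing it. Here is a minimal Python sketch of such a shared registry. The 90-day definition of “active customer,” the owner name, and the registry layout are all hypothetical. In practice, the semantic layers in BI and transformation tools often play this role.

```python
from datetime import date, timedelta

ACTIVE_WINDOW = timedelta(days=90)  # hypothetical agreed definition


def active_customers(purchases: list[tuple[str, date]], as_of: date) -> int:
    """Distinct customers with at least one purchase in the ACTIVE_WINDOW up to as_of."""
    return len({
        customer for customer, purchased_on in purchases
        if timedelta(0) <= as_of - purchased_on <= ACTIVE_WINDOW
    })


# One registry shared by every department: definition, owner, and logic together.
METRICS = {
    "active_customers": {
        "definition": "Distinct customers with a purchase in the last 90 days",
        "owner": "customer data steward",
        "compute": active_customers,
    },
}


def compute_metric(name: str, *args):
    """Look up a metric by name so every report uses the same logic."""
    return METRICS[name]["compute"](*args)


purchases = [
    ("c1", date(2024, 5, 20)),
    ("c1", date(2024, 1, 5)),
    ("c2", date(2024, 2, 1)),
    ("c3", date(2024, 3, 10)),
]
print(compute_metric("active_customers", purchases, date(2024, 6, 1)))  # 2
```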

While implementation requires significant coordination across teams, the benefits are substantial: unified reporting, consistent decision-making, reduced maintenance costs, and simplified compliance. Most importantly, a single source of truth establishes the credibility needed for analytics to drive strategic business decisions.

8. Make It a Team Sport: Cross-Functional Collaboration

Data analytics thrives on collaboration. While data teams bring technical expertise, they need business context that only comes from cross-functional partnerships.

Product managers offer insights on user behavior, marketing teams provide campaign performance context, and sales representatives understand customer pain points. These perspectives transform raw data into actionable intelligence.

Successful organizations create structured collaboration through:

- Weekly syncs between data and business teams

- Shared KPI dashboards reflecting department-specific and company-wide goals

- Data literacy workshops to empower non-technical stakeholders

- Joint problem-solving sessions addressing business challenges

When analytics becomes a team sport, organizations avoid the common pitfall of data-rich but insight-poor analysis. Cross-functional collaboration ensures analyses answer the right questions and findings translate into meaningful action.

The most valuable analytics insights often emerge at the intersection of technical expertise and domain knowledge—making collaboration not just beneficial, but essential.

9. Track and Measure Data Analytics Performance

Effective analytics programs require rigorous performance tracking to ensure they deliver business value. Implementing feedback loops is essential—regularly assess whether dashboards and reports are genuinely influencing decisions or merely creating digital dust. If stakeholders aren’t acting on insights, recalibrate your approach.

Establish specific KPIs for your data team that go beyond technical metrics. Track report usage statistics, time-to-insight, issue resolution speed, and stakeholder satisfaction. Set targets for how quickly insights translate to business actions.
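Two of these team KPIs, report usage and time-to-insight, can be computed from request and usage logs. Here is a minimal Python sketch over hypothetical logs. The field names and report names are assumptions.

```python
from datetime import datetime
from statistics import median

# Hypothetical log of analytics requests: when a question was asked and answered.
requests = [
    {"asked": datetime(2024, 5, 1, 9), "delivered": datetime(2024, 5, 2, 15)},
    {"asked": datetime(2024, 5, 3, 10), "delivered": datetime(2024, 5, 3, 16)},
    {"asked": datetime(2024, 5, 6, 8), "delivered": datetime(2024, 5, 9, 8)},
]

# Hypothetical dashboard view log: one (report, user) pair per view.
views = [("sales_weekly", "ana"), ("sales_weekly", "raj"),
         ("sales_weekly", "ana"), ("ops_daily", "li")]
catalog = ["sales_weekly", "ops_daily", "churn_monthly"]


def median_time_to_insight_hours(log: list[dict]) -> float:
    """Median hours between a question being asked and the answer being delivered."""
    return median([(r["delivered"] - r["asked"]).total_seconds() / 3600 for r in log])


def distinct_viewers(view_log: list[tuple[str, str]], reports: list[str]) -> dict[str, int]:
    """Number of distinct users who opened each published report."""
    return {report: len({user for r, user in view_log if r == report}) for report in reports}


usage = distinct_viewers(views, catalog)
unused = [report for report, n in usage.items() if n == 0]
print(f"median time-to-insight: {median_time_to_insight_hours(requests):.1f} h")
print("distinct viewers:", usage)
print("candidates for review or retirement:", unused)
```

Reports nobody opens are the “digital dust” mentioned above. Each one is a prompt to ask whether it should be redesigned or retired.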

Each analytics cycle presents learning opportunities. Document what worked, what didn’t, and why. Were insights actionable? Did they address the right business questions? Was data delivered at the right time to the right people?

This continuous improvement mindset ensures analytics efforts remain aligned with evolving business needs while building credibility across the organization as a value-driving function rather than a cost center.

10. Train Teams on Data Literacy

In today’s business environment, tools are only as useful as the people using them. While companies invest heavily in advanced analytics platforms, the true ROI comes from ensuring teams can effectively leverage these resources.

Comprehensive data literacy training transforms how organizations operate. By offering structured education on interpreting reports, navigating dashboards, and understanding basic analytical concepts, companies empower employees to make informed decisions independently.

This approach creates several advantages:

- Reduces the analytics bottleneck where data teams become overwhelmed with basic requests

- Enables faster decision-making as teams don’t wait for analyst support

- Improves data quality as more users understand proper data practices

- Builds organizational confidence in using metrics for everyday decisions

Most importantly, data literacy training helps create a culture where evidence-based thinking becomes the norm rather than the exception. When employees across departments—from marketing to operations—can confidently interact with data, the entire organization becomes more agile and responsive to market changes.

The most successful companies recognize that data literacy isn’t a specialized skill—it’s a core competency for the modern workforce.

Why AI and Data Analytics Are Critical to Staying Competitive

AI and data analytics empower businesses to make informed decisions, optimize operations, and anticipate market trends, ensuring they maintain a strong competitive edge.

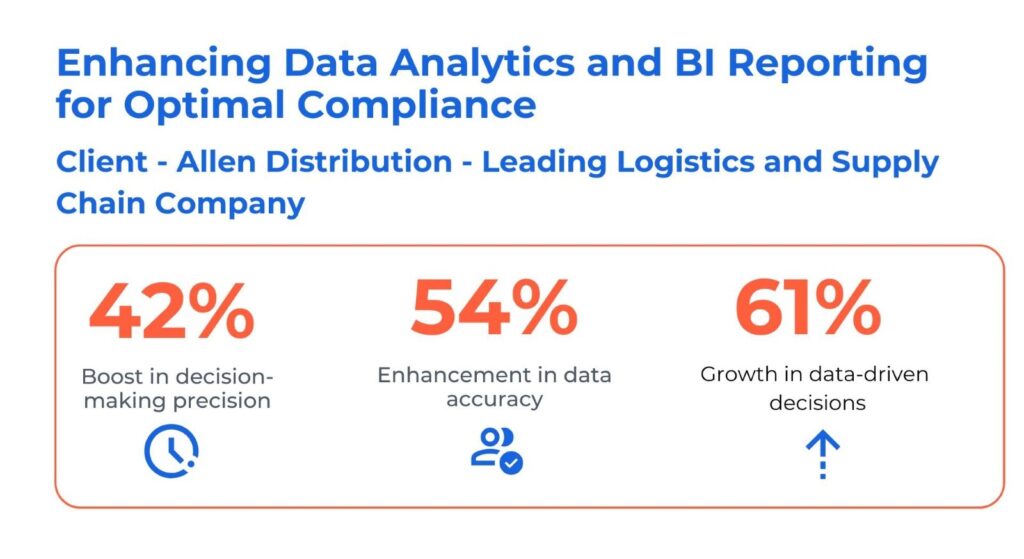

Case Study: How Allen Distribution Transformed Analytics with Microsoft Fabric & Power BI

Allen Distribution, a leading logistics and supply chain company, faced major roadblocks in its analytics journey. Data was scattered across disconnected systems, which slowed decision-making and, without a unified view, exposed the business to security risks. Manual reporting processes further created inefficiencies in performance tracking and operational planning.

To overcome these challenges, Allen Distribution partnered with Kanerika and implemented a powerful analytics stack using Microsoft Fabric Data Lakehouse and Power BI. They centralized data to eliminate fragmentation and enabled a unified view across operations. Additionally, row-level security (RLS) was implemented to enforce role-based access, enhancing compliance and data privacy.

With real-time reporting in Power BI, teams could access key metrics faster and make quicker, more informed decisions.

Outcomes:

- 42% boost in decision-making precision

- 61% growth in data-driven decisions

This transformation highlights the power of aligning modern tools with analytics best practices to unlock business intelligence at scale.

Case Study: Modernizing Financial Analytics for an Agro-Manufacturing Leader

A leading agro-manufacturing company faced serious data challenges—including poor data quality, integration issues, and performance lags—leading to inaccurate financial reporting and compliance risks. The complexity intensified post-acquisitions, making financial consolidation even more difficult.

To address this, the company partnered with Kanerika to implement a modern analytics stack. They used Informatica Cloud to improve data integration and Snowflake with Power BI for reliable access to over 70 KPIs and financial reports. Additionally, Oracle GoldenGate was deployed to streamline general ledger reporting and post-acquisition financial processes.

Outcomes:

- 40% decrease in response time

- 100% improvement in data integration

- 60% boost in operational performance

This transformation demonstrates the power of aligning the right tools with strategic analytics practices for scalable, high-impact results.

Level Up Your Enterprise Data Strategy with Kanerika’s Advanced Data Analytics Solutions

Kanerika is a premier data and AI solutions company offering innovative data analytics services that help businesses gain fast, accurate insights from their vast data estates. As a certified Microsoft Data and AI solutions partner, we utilize Microsoft’s powerful analytics and BI tools, including Fabric and Power BI, to deliver robust solutions. These tools help businesses not only address current challenges but also enhance their data operations, driving growth and innovation.

Advanced analytics plays a pivotal role in enabling businesses across various industries to overcome operational pitfalls. By optimizing resources, reducing costs, and increasing efficiency, our solutions help you make informed decisions that improve productivity and profitability. Whether it’s streamlining processes, enhancing customer experiences, or boosting decision-making capabilities, Kanerika’s advanced analytics solutions empower your business to thrive in a competitive marketplace. Let us help you unlock the full potential of your data and fuel sustainable growth.


Frequently Asked Questions

What are the best practices in data analytics?

Data analytics best practices include establishing clear business objectives, ensuring data quality through validation and cleansing, implementing robust data governance frameworks, and fostering cross-functional collaboration. Organizations should also invest in scalable infrastructure, maintain comprehensive documentation, and prioritize data security throughout the analytics lifecycle. Effective analytics strategies combine automated pipelines with human oversight to deliver actionable insights. Regular audits and continuous improvement cycles keep your analytics capabilities aligned with evolving business needs. Kanerika helps enterprises implement end-to-end data analytics best practices that drive measurable ROI—connect with our team for a tailored assessment.

What are the 7 steps of data analysis?

The seven steps of data analysis are: defining objectives, collecting relevant data, cleaning and preparing datasets, exploring data through descriptive analysis, performing in-depth analysis using statistical methods, visualizing findings for stakeholders, and interpreting results to drive decisions. Each step builds upon the previous one, ensuring analytical rigor and business relevance. Skipping steps often leads to flawed conclusions and wasted resources. A structured data analysis process transforms raw information into strategic intelligence that powers competitive advantage. Kanerika’s analytics specialists guide organizations through each step with proven methodologies—reach out to accelerate your data analysis journey.

What are the 4 pillars of data analytics?

The four pillars of data analytics are descriptive analytics, diagnostic analytics, predictive analytics, and prescriptive analytics. Descriptive analytics reveals what happened, diagnostic explains why, predictive forecasts future outcomes, and prescriptive recommends optimal actions. Together, these pillars form a comprehensive analytics framework that moves organizations from reactive reporting to proactive decision-making. Mature enterprises leverage all four pillars simultaneously, embedding insights across operations, finance, and customer engagement. Understanding these foundational pillars helps prioritize investments and build analytics capabilities systematically. Kanerika designs analytics architectures that strengthen each pillar—schedule a consultation to assess your current maturity.

What are the 5 V's of data analytics?

The five V’s of data analytics are Volume, Velocity, Variety, Veracity, and Value. Volume refers to the massive scale of data generated; Velocity addresses the speed of data creation and processing; Variety encompasses structured, unstructured, and semi-structured formats; Veracity concerns data accuracy and trustworthiness; Value represents the business insights extracted. Managing these dimensions effectively determines big data analytics success. Organizations must architect solutions that handle high-volume, real-time data while maintaining quality standards. Kanerika builds scalable data platforms addressing all five V’s—contact us to modernize your data infrastructure.

What are the six phases of data analytics?

The six phases of data analytics are Ask, Prepare, Process, Analyze, Share, and Act. During Ask, you define business questions; Prepare involves gathering and organizing data; Process includes cleaning and transformation; Analyze applies statistical and computational techniques; Share communicates insights through visualizations and reports; Act implements data-driven decisions. This cyclical framework ensures continuous improvement and alignment between analytics efforts and strategic goals. Each phase requires specific tools, skills, and governance protocols to execute effectively. Kanerika partners with enterprises to optimize every phase of their analytics lifecycle—let us help you build a repeatable framework.

What are the 7 data principles?

The seven data principles guide responsible data management: data should be treated as an asset, shared across the organization, accessible to authorized users, managed with clear accountability, defined consistently through common vocabulary, protected with appropriate security, and governed by documented policies. These principles establish the foundation for enterprise data governance and analytics excellence. Organizations adhering to these principles experience fewer compliance issues, improved data quality, and faster time-to-insight. Embedding these principles into culture requires executive sponsorship and continuous training. Kanerika implements data governance frameworks built on these core principles—engage our experts to strengthen your data foundation.

Why are data analytics best practices important?

Data analytics best practices are important because they ensure consistent, reliable insights that drive confident business decisions. Without standardized processes, organizations face data quality issues, compliance risks, duplicated efforts, and conflicting reports across departments. Best practices reduce analytical errors, accelerate time-to-insight, and maximize return on technology investments. They also foster trust in data among stakeholders, encouraging broader adoption of analytics across the enterprise. Companies following rigorous analytics standards outperform competitors in operational efficiency and market responsiveness. Kanerika helps organizations establish and scale analytics best practices—connect with us to benchmark your current capabilities.

What is the first step in applying data analytics best practices?

The first step in applying data analytics best practices is clearly defining your business objectives and key questions. Without well-articulated goals, analytics initiatives lack direction and often deliver irrelevant insights. Start by identifying specific outcomes you want to achieve, whether improving customer retention, optimizing supply chains, or reducing operational costs. Collaborate with stakeholders to prioritize use cases based on business impact and data availability. This alignment ensures subsequent steps in data collection, preparation, and analysis remain focused and valuable. Kanerika conducts discovery workshops that translate business priorities into actionable analytics roadmaps—schedule yours today.

What tools support data analytics best practices?

Tools supporting data analytics best practices include Microsoft Fabric for unified data integration, Power BI for interactive visualization, Databricks for scalable lakehouse analytics, and Snowflake for cloud-based data warehousing. Data governance platforms like Microsoft Purview ensure compliance and security. Automation tools streamline data pipelines, reducing manual errors and accelerating delivery. The right toolset depends on your data volumes, technical capabilities, and integration requirements. Selecting best-of-breed platforms that work together creates a cohesive analytics ecosystem driving enterprise-wide insights. Kanerika is a certified partner across leading analytics platforms—consult with us to design your optimal technology stack.

What role does data governance play?

Data governance plays a critical role in ensuring data accuracy, security, compliance, and accessibility across the organization. It establishes policies, standards, and accountability structures that maintain data integrity throughout its lifecycle. Effective governance prevents unauthorized access, reduces regulatory risk, and creates a single source of truth for analytics. Without governance, organizations struggle with inconsistent definitions, duplicated datasets, and unreliable reports. Strong data governance frameworks support scalable analytics by building trust in the underlying data assets. Kanerika implements comprehensive data governance solutions tailored to your compliance and operational requirements—reach out to establish governance that enables growth.
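
To make the policy idea concrete, here is a minimal Python sketch of how a governance catalog might record an accountable owner for a dataset, the roles allowed to read it, and the columns that must be masked. The dataset name `sales.orders`, the roles, and the helpers `DatasetPolicy` and `read_row` are hypothetical. Platforms such as Microsoft Purview manage policies through their own interfaces, which this sketch does not model.

```python
from dataclasses import dataclass, field

# Hypothetical catalog entry: dataset names, roles, and columns are illustrative only.
@dataclass
class DatasetPolicy:
    owner: str                                           # accountable data steward
    readers: set[str]                                    # roles allowed to read the dataset
    pii_columns: set[str] = field(default_factory=set)   # columns masked for most readers
    pii_roles: set[str] = field(default_factory=set)     # roles that may see PII unmasked

CATALOG = {
    "sales.orders": DatasetPolicy(
        owner="sales-data-steward",
        readers={"analyst", "finance", "sales-ops"},
        pii_columns={"customer_email"},
        pii_roles={"sales-ops"},
    ),
}

def read_row(dataset: str, role: str, row: dict) -> dict:
    """Enforce the catalog policy: deny unknown datasets or roles, mask PII otherwise."""
    policy = CATALOG.get(dataset)
    if policy is None or role not in policy.readers:
        raise PermissionError(f"role {role!r} may not read {dataset!r}")
    if role in policy.pii_roles:
        return dict(row)
    return {k: ("***" if k in policy.pii_columns else v) for k, v in row.items()}

row = {"order_id": "A1", "customer_email": "ana@example.com", "amount": 120.0}
print(read_row("sales.orders", "analyst", row))
# {'order_id': 'A1', 'customer_email': '***', 'amount': 120.0}
```

Denying by default (an unknown dataset or role raises `PermissionError`) is a deliberate choice here: a gap in the catalog fails safe instead of exposing data.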

How can I ensure the quality of my data?

Ensuring data quality requires implementing validation rules at ingestion, establishing data profiling routines, and conducting regular cleansing processes to remove duplicates and correct errors. Define clear data quality metrics including accuracy, completeness, consistency, timeliness, and uniqueness. Automated monitoring tools flag anomalies before they propagate through analytics pipelines. Assign data stewards responsible for maintaining quality standards within their domains. Document data lineage to trace issues back to their source and implement corrections systematically. High-quality data is the foundation of trustworthy analytics and confident decision-making. Kanerika deploys end-to-end data quality frameworks that maintain enterprise-grade standards—talk to our specialists today.
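
The validation-at-ingestion and deduplication steps above can be sketched in a few lines of Python. This is an illustrative example under assumed conditions: the `Order` record, its fields, and the three rules are hypothetical. A real pipeline might load its rules from configuration and route rejected records to a quarantine table for data stewards to review.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical order record; field names are illustrative assumptions.
@dataclass(frozen=True)
class Order:
    order_id: str
    customer_email: str
    amount: float
    order_date: date

# Validation rules: each returns an error message, or None if the record passes.
def check_required(o: Order):
    return "missing required field" if not o.order_id or not o.customer_email else None

def check_amount(o: Order):
    return "negative amount" if o.amount < 0 else None

def check_email(o: Order):
    return "malformed email" if "@" not in o.customer_email else None

RULES = [check_required, check_amount, check_email]

def ingest(records):
    """Apply the rules at ingestion and drop duplicate order_ids.

    Returns (accepted, rejected); each rejected entry pairs a record with its reasons."""
    accepted, rejected, seen = [], [], set()
    for rec in records:
        errors = [msg for msg in (rule(rec) for rule in RULES) if msg]
        if errors:
            rejected.append((rec, errors))
        elif rec.order_id in seen:
            rejected.append((rec, ["duplicate order_id"]))
        else:
            seen.add(rec.order_id)
            accepted.append(rec)
    return accepted, rejected

def uniqueness(records):
    """Share of records with a distinct order_id (1.0 means no duplicates)."""
    return len({r.order_id for r in records}) / len(records) if records else 1.0

batch = [
    Order("A1", "ana@example.com", 120.0, date(2024, 5, 1)),
    Order("A1", "ana@example.com", 120.0, date(2024, 5, 1)),    # duplicate
    Order("A2", "bob-at-example.com", 80.0, date(2024, 5, 2)),  # malformed email
    Order("A3", "cy@example.com", -5.0, date(2024, 5, 3)),      # negative amount
]
accepted, rejected = ingest(batch)
print(len(accepted), len(rejected))  # 1 3
print(uniqueness(batch))             # 0.75
```

Measuring uniqueness on the raw batch, before deduplication, gives a quality metric you can track over time alongside the rejection reasons.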

How do I avoid data silos?

Avoiding data silos requires implementing unified data platforms, establishing cross-functional governance, and promoting data sharing through standardized APIs and integration layers. Silos emerge when departments manage data independently without enterprise-wide coordination. Break them down by creating centralized data repositories, adopting common data models, and incentivizing collaboration across teams. Cloud-based architectures like lakehouses enable seamless access while maintaining security controls. Executive sponsorship is crucial for driving cultural change toward data democratization. Organizations without silos achieve faster insights, reduced redundancy, and better customer experiences. Kanerika specializes in data platform consolidation that eliminates silos—contact us for a free architecture review.
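
As a rough illustration of a common data model, the sketch below maps two departments' differently named customer fields onto one shared `Customer` shape and merges them on an ID. The source field names, the email normalization, and the rule that the sales record wins on conflict are all assumptions chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical common data model shared by every department.
@dataclass
class Customer:
    customer_id: str
    name: str
    email: str

# Adapters translate each department's own field names into the common model.
def from_sales(row: dict) -> Customer:
    return Customer(row["cust_no"], row["full_name"], row["email_addr"].strip().lower())

def from_support(row: dict) -> Customer:
    return Customer(row["customerId"], row["displayName"], row["contactEmail"].strip().lower())

def unify(sales_rows: list[dict], support_rows: list[dict]) -> dict[str, Customer]:
    """Merge both sources on customer_id; the sales record wins on conflict (an assumption)."""
    merged: dict[str, Customer] = {}
    for c in map(from_sales, sales_rows):
        merged[c.customer_id] = c
    for c in map(from_support, support_rows):
        merged.setdefault(c.customer_id, c)  # only adds customers sales has not seen
    return merged

sales = [{"cust_no": "C1", "full_name": "Ana Diaz", "email_addr": "Ana@Example.com"}]
support = [
    {"customerId": "C1", "displayName": "Ana D.", "contactEmail": "ana@example.com"},
    {"customerId": "C2", "displayName": "Bo Li", "contactEmail": "bo@example.com"},
]
customers = unify(sales, support)
print(sorted(customers))     # ['C1', 'C2']
print(customers["C1"].name)  # Ana Diaz
```

In practice, the precedence rule is a governance decision agreed on by data owners rather than something the code should settle on its own.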

What are the 5 C's of data analytics?

The five C’s of data analytics are Clean, Connected, Comprehensive, Current, and Compliant. Clean data is free from errors and inconsistencies; Connected data integrates across systems for holistic views; Comprehensive data covers all relevant dimensions; Current data reflects real-time or near-real-time states; Compliant data adheres to regulatory and privacy requirements. Mastering these characteristics ensures analytics outputs are reliable and actionable. Organizations neglecting any C risk flawed insights, regulatory penalties, or missed opportunities. Building systems that maintain all five C’s requires deliberate architecture and governance investments. Kanerika engineers data ecosystems optimized for all five C’s—partner with us to elevate your analytics foundation.

What are the 4 types of data analytics?

The four types of data analytics are descriptive, diagnostic, predictive, and prescriptive. Descriptive analytics summarizes historical data to show what happened. Diagnostic analytics investigates causes behind observed patterns. Predictive analytics uses statistical models and machine learning to forecast future outcomes. Prescriptive analytics recommends specific actions to optimize results. Most organizations begin with descriptive capabilities and progressively mature toward prescriptive applications. Each type builds upon the previous, requiring increasingly sophisticated data infrastructure and analytical talent. A balanced analytics strategy leverages all four types appropriately. Kanerika helps enterprises advance through each analytics maturity stage—request an assessment to identify your next steps.
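
The four types can be seen side by side on a toy dataset. The Python sketch below uses hypothetical monthly sales figures, a hypothetical promotion calendar, a simple least-squares trend line as the predictive model, and a made-up safety-stock rule as the prescriptive step. Real predictive and prescriptive work would use richer models and business constraints.

```python
from statistics import mean

# Hypothetical monthly unit sales for one product (oldest first).
monthly_sales = [100, 110, 125, 130, 145, 150]
promo_months = {2, 4}  # hypothetical: indexes of months that ran a promotion

# Descriptive: what happened?
average = mean(monthly_sales)

# Diagnostic: why? Compare promotion months with the rest (a correlation, not proof of cause).
promo_avg = mean(s for i, s in enumerate(monthly_sales) if i in promo_months)
other_avg = mean(s for i, s in enumerate(monthly_sales) if i not in promo_months)

# Predictive: what will happen? Fit an ordinary least-squares line and extrapolate one month.
def linear_forecast(y: list[float]) -> float:
    n = len(y)
    x_bar, y_bar = (n - 1) / 2, mean(y)
    slope = sum((i - x_bar) * (v - y_bar) for i, v in enumerate(y)) / sum(
        (i - x_bar) ** 2 for i in range(n)
    )
    intercept = y_bar - slope * x_bar
    return intercept + slope * n  # x = n is the next month

# Prescriptive: what should we do? Order enough to cover the forecast plus a safety buffer.
def recommend_order(forecast: float, on_hand: int, safety_stock: int = 20) -> int:
    return max(0, round(forecast + safety_stock - on_hand))

forecast = linear_forecast(monthly_sales)
print(round(average, 1), promo_avg - other_avg)  # 126.7 12.5
print(round(forecast, 1))                        # 162.7
print(recommend_order(forecast, on_hand=60))     # 123
```

Each step reuses the output of the one before it, which mirrors the maturity progression described above.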

What are the 6 C's of data quality?

The six C’s of data quality are Completeness, Consistency, Conformity, Currency, Correctness, and Coverage. Completeness ensures no critical data is missing; Consistency maintains uniformity across datasets; Conformity aligns data with defined formats and standards; Currency keeps data up-to-date; Correctness verifies factual accuracy; Coverage confirms data represents the full scope needed for analysis. Measuring and monitoring these dimensions identifies quality gaps before they impact analytics outcomes. Strong data quality programs embed these checks throughout ingestion, transformation, and reporting processes. Kanerika implements automated data quality monitoring aligned with these six dimensions—connect with us to strengthen your data foundation.
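
Three of these dimensions are straightforward to score automatically. This Python sketch computes completeness, conformity, and currency as shares between 0 and 1 over a few hypothetical customer rows. The five-digit postcode pattern and the 90-day freshness window are assumed thresholds. Correctness and coverage usually need reference data or business context, and consistency needs a second dataset to compare against, so they are left out.

```python
import re
from datetime import date

# Hypothetical customer rows; None marks a missing value.
rows = [
    {"id": "C1", "postcode": "10115", "updated": date(2024, 6, 1)},
    {"id": "C2", "postcode": None,    "updated": date(2024, 6, 3)},
    {"id": "C3", "postcode": "1011",  "updated": date(2023, 1, 15)},
    {"id": "C4", "postcode": "80331", "updated": date(2024, 5, 20)},
]

def completeness(rows, field):
    """Share of rows where `field` is present."""
    return sum(r[field] is not None for r in rows) / len(rows)

def conformity(rows, field, pattern):
    """Share of present values that fully match the expected format."""
    values = [r[field] for r in rows if r[field] is not None]
    return sum(re.fullmatch(pattern, v) is not None for v in values) / len(values)

def currency(rows, as_of, max_age_days):
    """Share of rows updated within `max_age_days` of `as_of`."""
    return sum((as_of - r["updated"]).days <= max_age_days for r in rows) / len(rows)

report = {
    "completeness": completeness(rows, "postcode"),
    "conformity": conformity(rows, "postcode", r"\d{5}"),  # assumed 5-digit format
    "currency": currency(rows, as_of=date(2024, 6, 10), max_age_days=90),
}
print({k: round(v, 2) for k, v in report.items()})
# {'completeness': 0.75, 'conformity': 0.67, 'currency': 0.75}
```

Running a report like this on every load, and alerting when a score drops below an agreed target, is one way to catch quality gaps before they reach dashboards.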

How can I promote data literacy across my organization?

Promoting data literacy requires executive commitment, structured training programs, and embedding analytics into daily workflows. Start with baseline assessments to understand current skill levels, then deliver role-specific training covering data interpretation, visualization, and critical thinking. Create self-service analytics tools that empower non-technical users to explore data safely. Establish data champions within departments who mentor colleagues and advocate for data-driven practices. Recognize and reward teams that effectively leverage analytics in their decisions. A data-literate workforce maximizes technology investments and accelerates organizational transformation. Kanerika offers data literacy workshops and enablement programs—engage us to build analytics capabilities across your teams.

What are the top 3 trends in data analytics?

The top three trends in data analytics are AI-powered analytics, real-time data processing, and augmented analytics. AI and machine learning automate pattern discovery and predictive modeling at scale. Real-time analytics enables instant decision-making through streaming data architectures. Augmented analytics uses natural language processing and automated insights to democratize analysis for business users. These trends converge to make analytics faster, smarter, and more accessible across organizations. Enterprises adopting these capabilities gain competitive advantages through faster innovation cycles and superior customer experiences. Kanerika implements cutting-edge analytics solutions incorporating these trends—schedule a demo to see AI-powered analytics in action.
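
Real-time analytics often comes down to maintaining aggregates over a moving time window as events arrive. The Python sketch below counts events in a sliding 60-second window and flags bursts. The event timestamps and the alert threshold are hypothetical, and the class assumes events arrive in time order. Production streaming engines also handle late or out-of-order events and state distributed across machines, which this sketch ignores.

```python
from collections import deque

class SlidingWindowCounter:
    """Counts events seen in the last `window_s` seconds of event time.

    Assumes timestamps arrive in non-decreasing order."""

    def __init__(self, window_s: float):
        self.window_s = window_s
        self.events: deque[float] = deque()

    def add(self, ts: float) -> int:
        self.events.append(ts)
        # Evict timestamps outside the window (ts - window_s, ts].
        while self.events[0] <= ts - self.window_s:
            self.events.popleft()
        return len(self.events)

stream = [0, 10, 30, 45, 65, 200]  # hypothetical event timestamps in seconds
counter = SlidingWindowCounter(window_s=60)
counts = [counter.add(ts) for ts in stream]
alerts = [ts for ts, c in zip(stream, counts) if c > 3]  # hypothetical alert threshold
print(counts)  # [1, 2, 3, 4, 4, 1]
print(alerts)  # [45, 65]
```

Because each event is appended and evicted at most once, the counter does constant work per event on average, which is what lets windowed metrics keep pace with a live stream.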

What are the 4 big data strategies?

The four big data strategies are performance management optimization, data exploration and discovery, enhanced customer experience, and operational efficiency improvement. Performance management uses analytics dashboards to monitor KPIs and drive accountability. Data exploration enables discovery of hidden patterns and new opportunities through advanced analytics. Customer experience strategies leverage data for personalization and journey optimization. Operational efficiency focuses on process automation and cost reduction through data-driven insights. Successful organizations align their big data strategy with specific business outcomes rather than pursuing technology for its own sake. Kanerika develops tailored big data strategies that deliver measurable business impact—consult with our strategists to define your roadmap.