TL;DR: Kubeflow, Apache Airflow, and Prefect are not the same tool wearing different names. They serve different teams, different infrastructure realities, and different stages of ML maturity. Picking the wrong one doesn’t just slow you down — it creates the kind of technical debt that compounds quietly until it’s expensive to fix. This guide goes beyond feature tables: total cost of ownership, CI/CD integration, practitioner feedback, migration realities, and a decision framework built around organizational context, not hype.

Key Takeaways

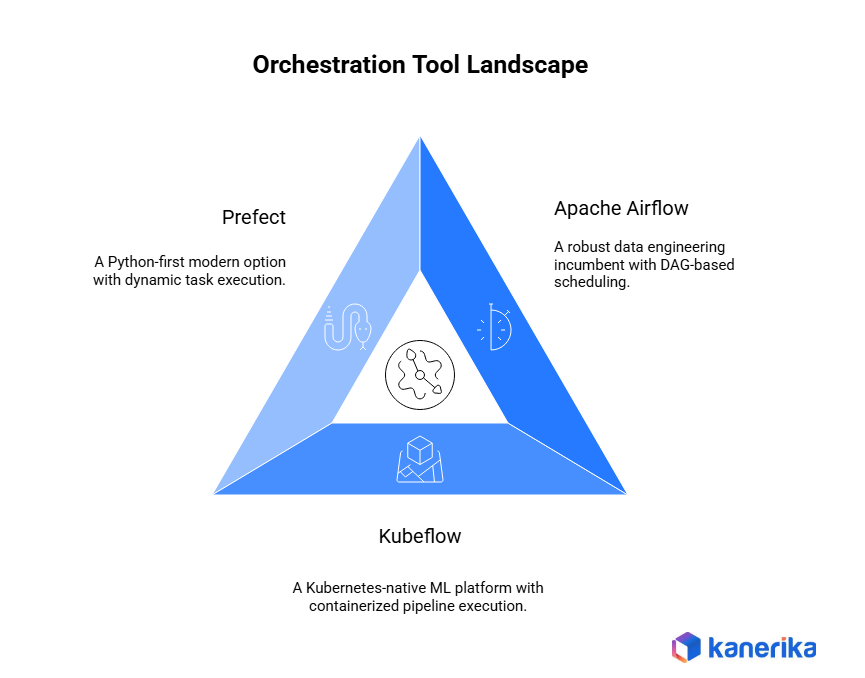

- Apache Airflow is the data engineering incumbent. Best for teams with existing Airflow expertise and complex scheduling needs. Airflow 3.0 builds out asset-aware scheduling and adds event-driven triggers, closing some of the gap with newer tools.

- Kubeflow is ML-native and Kubernetes-first. Right for large-scale distributed training when a dedicated DevOps function already exists. Kubeflow Pipelines v2 improved the Python SDK and artifact lineage.

- Prefect is the Python-first modern option. Fastest time to productivity, best built-in observability, and growing use for AI agent and LLM pipeline work. Prefect 3.0 introduced autonomous task execution and significant performance gains.

- All three are open-source, but “free” understates real TCO. Infrastructure, people, and maintenance costs vary significantly.

- Migrating between orchestrators mid-project is a project in itself. Teams consistently underestimate the scope.

- The orchestration decision is organizational, not purely technical. Team composition, ML maturity, and infrastructure readiness matter more than feature checklists.

- If none of the three fit cleanly, Dagster, Flyte, and Metaflow are worth a look before committing.

Three Tools, One Heated Conference Room

Marcus, a data engineering lead at a mid-size financial firm, had three pipeline proposals on the table and a meeting already forty-five minutes past its scheduled end. His data team swore by Airflow: two years in production, no plans to change. The ML team wanted Kubeflow because one senior data scientist had used it at his last company. A new hire, three weeks in, had already started a Prefect pilot on his laptop and was ready to demo it to anyone who would sit still long enough.

Everyone had strong opinions. Nobody agreed on much except that the current setup wasn’t working.

This happens constantly. According to VentureBeat, citing Algorithmia’s 2020 State of Enterprise Machine Learning report, 87% of data science projects never reach production, and orchestration gaps are one recurring reason. Meanwhile, the MLOps market is projected to hit $13 billion by 2027 at a 49.4% CAGR, per MarketsandMarkets. Decisions made today about ML pipeline infrastructure compound over time, for better or worse.

This guide covers what these tools actually cost to run, how they plug into CI/CD, what practitioners say after a year of living with them, how to think about switching, and a practical decision framework that matches tool to team.

Partner with Kanerika to Modernize Your Enterprise Operations with High-Impact Data & AI Solutions

Why Orchestration Is the Spine of MLOps

Most teams treat orchestration as something to sort out after the models are built. That instinct tends to cost them later.

ML pipeline orchestration handles the unglamorous work that keeps models alive: scheduling retraining, managing step dependencies, surfacing failures before they cascade, tracking data lineage, and handling retries when infrastructure wobbles. Without it, even a technically solid model becomes operationally fragile. This is a decision intelligence problem as much as a technical one — choices at the infrastructure layer ripple directly into business outcomes.
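To make that list concrete, here is a minimal pure-Python sketch of what any orchestrator does at its core: run steps in dependency order and retry transient failures with exponential backoff. It is not the API of Airflow, Kubeflow, or Prefect; `run_pipeline`, the step names, and the retry policy are hypothetical.

```python
import time
from graphlib import TopologicalSorter


def run_pipeline(steps, deps, max_retries=2, backoff_s=0.0):
    """Run each step after its upstream steps, retrying failures.

    steps: step name -> callable taking the dict of results produced so far
    deps:  step name -> set of upstream step names
    """
    sorter = TopologicalSorter()
    for name in steps:
        sorter.add(name, *deps.get(name, ()))
    results = {}
    for name in sorter.static_order():  # raises CycleError if the deps loop
        for attempt in range(max_retries + 1):
            try:
                results[name] = steps[name](results)
                break
            except Exception as exc:
                if attempt == max_retries:
                    raise RuntimeError(
                        f"step {name!r} failed after {attempt + 1} attempts"
                    ) from exc
                time.sleep(backoff_s * 2 ** attempt)  # exponential backoff
    return results


# Usage: "extract" fails once with a transient error, then succeeds on retry.
calls = {"extract": 0}


def extract(results):
    calls["extract"] += 1
    if calls["extract"] == 1:
        raise ConnectionError("transient network blip")
    return [1, 2, 3]


def train(results):
    return sum(results["extract"])  # reads the upstream step's output


out = run_pipeline({"extract": extract, "train": train}, {"train": {"extract"}})
print(out)  # {'extract': [1, 2, 3], 'train': 6}
```

Real orchestrators add persistence, scheduling, and distributed execution on top, but dependency ordering plus retry policy is the part every one of them has to get right.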

One distinction most comparison articles blur: data orchestration manages ETL pipelines, moving and transforming data between systems. ML orchestration manages the full lifecycle — training, evaluation, experiment tracking, versioning, and deployment cycles. Related problems, but not the same problem. A tool built for one won’t automatically serve the other well.

The orchestration decision is also an organizational call. Asking which tool is most feature-rich is the wrong starting question. The right question is which tool fits the team that has to operate it day to day.

What Each Tool Actually Is

Every tool’s origin story explains its strengths and its blind spots.

1. Apache Airflow: The Data Engineering Incumbent

Airflow was born at Airbnb in 2014, open-sourced in 2015, entered the Apache Incubator in 2016, and became an Apache top-level project in 2019. Its core concept is the Directed Acyclic Graph (DAG): a pipeline defined as Python code, whose tasks run in dependency order under Airflow’s scheduling logic.

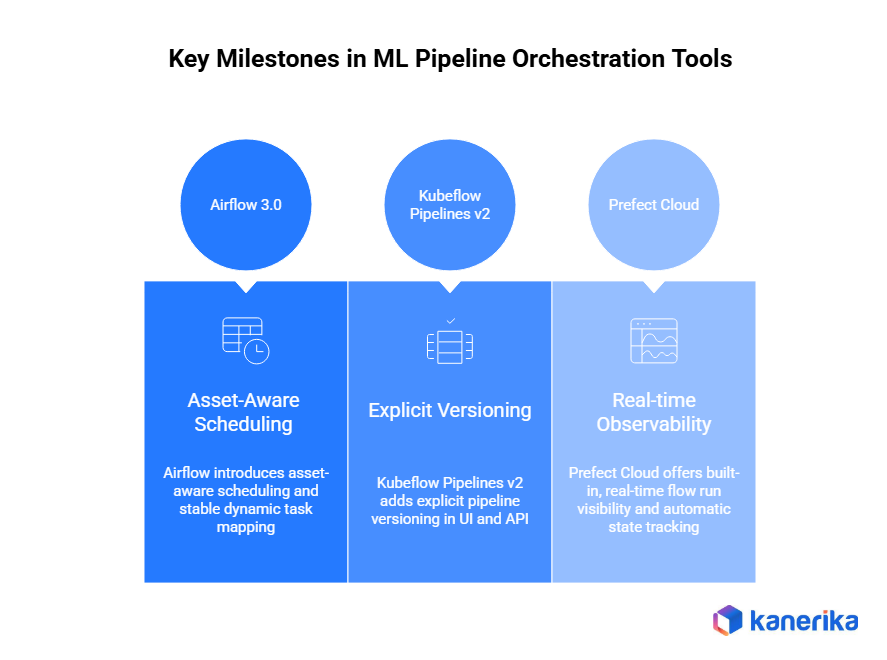

Airflow 3.0 is a meaningful release. It extends data-aware scheduling (introduced as Datasets in Airflow 2.4) into asset-aware scheduling, so DAGs can be triggered by data changes and not just by time. It also brings an overhauled UI, improved scheduler performance, and event-driven triggers as a first-class feature. Teams evaluating Airflow based on articles from a year or two ago may be working with an outdated picture.
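The idea behind asset-aware scheduling fits in a few lines. This toy scheduler, written in plain Python rather than Airflow’s API, triggers a consumer pipeline once every asset it depends on has been updated since its last trigger, which loosely mirrors Airflow’s default behavior when a DAG is scheduled on a list of assets. The pipeline name and asset URIs are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AssetScheduler:
    """Trigger a consumer pipeline once every asset it depends on has been updated."""

    # consumer pipeline -> asset URIs it depends on (all names here are hypothetical)
    subscriptions: dict
    # consumer pipeline -> assets updated since that consumer was last triggered
    seen: dict = field(default_factory=dict)

    def asset_updated(self, uri):
        """Record one asset update and return the consumers that are now ready to run."""
        ready = []
        for consumer, needed in self.subscriptions.items():
            if uri not in needed:
                continue
            updated = self.seen.setdefault(consumer, set())
            updated.add(uri)
            if updated >= needed:  # every upstream asset is fresh
                ready.append(consumer)
                self.seen[consumer] = set()  # start waiting for the next round
        return ready


sched = AssetScheduler({"retrain_model": {"s3://lake/features", "s3://lake/labels"}})
first = sched.asset_updated("s3://lake/features")
second = sched.asset_updated("s3://lake/labels")
print(first, second)  # [] ['retrain_model']
```

The retraining pipeline runs when its inputs change, not at 2 AM whether or not anything is new.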

Airflow’s ideal home: a data engineering team with existing expertise that needs to orchestrate ETL and ML workloads under one scheduling framework. For teams managing complex enterprise data flows, it also integrates naturally with data consolidation strategies spanning multiple source systems.

2. Kubeflow: The Kubernetes-Native ML Platform

Google open-sourced Kubeflow in late 2017. It’s not purely an orchestrator but a full ML platform: pipelines, notebook servers, model serving via KServe, hyperparameter tuning via Katib. Pipeline steps run as containerized pods on Kubernetes, with Argo Workflows handling execution underneath.

Kubeflow Pipelines v2 improved the Python SDK, artifact lineage tracking, and integration with the broader Kubeflow ecosystem. The platform is now a CNCF project, which has stabilized its governance and roadmap — relevant if your organization has a procurement process that cares about project health.

The Kubernetes requirement and operational overhead haven’t changed. Kubeflow makes sense for ML-heavy teams with strong DevOps capability that treat distributed training orchestration as the primary workload.

3. Prefect: The Python-First Modern Option

Prefect takes a different approach. Its core abstraction is flows and tasks with dynamic execution — workflows can be created and modified at runtime based on actual data conditions, not just at definition time. That distinction matters more than it sounds.

Prefect 3.0 added autonomous task execution (tasks running independently without a parent flow), significant server performance improvements, and better event-driven capabilities. Prefect is available as open-source software you host yourself or as Prefect Cloud with usage-based pricing. Best fit: a Python-first ML engineering team scaling up that values developer speed and doesn’t want to manage Kubernetes to get there.

For teams already embedded in the Databricks ecosystem, Databricks Lakeflow is worth understanding alongside these three — it offers a complementary orchestration perspective for Databricks-native workloads.

Feature Comparison: What Actually Separates These Tools

Feature tables are only useful if you know what to look for before reading them. The real differentiators aren’t scheduling syntax or UI design. They’re the things that surface at 2 AM when a retraining job fails, or when a new ML engineer needs to debug a pipeline they’ve never seen before.

Five dimensions dominate practitioner discussions: how dynamic the execution model is, whether the tool is ML-native or general-purpose, how painful local development is, how well it versions pipelines, and what debugging looks like under pressure.

| Dimension | Apache Airflow | Kubeflow | Prefect |
| --- | --- | --- | --- |
| Pipeline Model | Static DAG (asset-aware in 3.0) | Static (containerized K8s pods) | Dynamic Flows |
| ML-Native Features | No (integrations required) | Yes (Katib, KServe built-in) | Partial (community integrations) |
| Kubernetes Required | No (optional executor) | Yes (hard requirement) | No |
| Observability | Moderate (log drilling required) | Low (extra tooling needed) | High (built-in, real-time) |
| Local Testability | Medium (TaskFlow API helps) | Low (Kind/Minikube required) | High (pure Python, pytest) |
| Pipeline Versioning | Git-based (informal) | Built-in version registry | Deployment-based |
| Learning Curve | High | Very High | Low–Medium |
| Scheduling Maturity | Best-in-class | Basic | Good |
| Scale Ceiling | High | Very High | High |
| Self-Host Base Cost | Low | Medium–High | Low |
| Managed Option | MWAA, Composer, Astronomer | Limited | Prefect Cloud |
| Community Size | Largest (37K+ GitHub stars) | Medium (14K+, CNCF) | Growing (16K+) |
| Event-Driven Scheduling | Yes (Airflow 3.0) | Limited | Yes (native) |
| CI/CD Integration Ease | High (mature patterns) | Low (container build cycle) | High (simple deploy) |

Kubeflow’s scale ceiling is genuinely the highest of the three — it’s the only tool in this group designed for multi-GPU distributed training at enterprise scale. But that ceiling comes with the highest floor: standing up and maintaining Kubeflow takes more infrastructure investment than the other two combined.

Prefect’s observability advantage reflects the out-of-the-box experience. Airflow and Kubeflow can reach comparable observability with the right monitoring stack, but that stack requires separate configuration and ongoing maintenance.

Static vs. Dynamic Execution

Airflow 3.0 has made progress here. Asset-aware scheduling lets DAGs react to data changes, and dynamic task mapping (available since Airflow 2.3) lets the number of task instances be decided at runtime. But the underlying model is still static: DAG structure is defined at parse time, and runtime branching has real limits.

Kubeflow compiles pipeline definitions to YAML or JSON before execution, so the graph’s shape is fixed at compile time; runtime variation is limited to constructs declared up front, such as conditions and parallel-for loops. Prefect is genuinely dynamic: tasks can be created at runtime based on data conditions. For ML teams whose pipelines need to adapt to data characteristics (variable training subset sizes, conditional preprocessing steps), this difference shows up every sprint.
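A toy illustration of the difference in plain Python, not any orchestrator’s API: in the static model the task list exists before any data is read; in the dynamic model, the number of training tasks and an optional preprocessing step are decided from the data at runtime. Function and task names are hypothetical. Airflow’s dynamic task mapping sits in between: the count of mapped instances is decided at runtime, but the mapped task itself must already be declared in the DAG.

```python
# Static model: the task list is fixed when the pipeline is defined or compiled,
# before any data has been read.
STATIC_TASKS = [f"train_shard_{i}" for i in range(4)]


def dynamic_tasks(rows, rows_per_subset=1000):
    """Dynamic model: decide the task list at runtime from the data itself."""
    n_subsets = max(1, len(rows) // rows_per_subset)
    tasks = [f"train_subset_{i}" for i in range(n_subsets)]
    if any(r is None for r in rows):
        tasks.insert(0, "impute_missing")  # conditional preprocessing step
    return tasks


small = dynamic_tasks([0.5] * 1500)
large = dynamic_tasks([0.5] * 4200 + [None])
print(len(STATIC_TASKS), small)  # 4 ['train_subset_0']
print(len(large), large[0])      # 5 impute_missing
```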

ML-Native Capabilities vs. General Scheduling

Airflow has no native model registry or experiment tracking. Everything needs custom integration with tools like MLflow. Kubeflow is ML-native by design — hyperparameter tuning and model serving are first-class features. Prefect is ML-friendly but not ML-native; MLflow and Weights & Biases integrations exist as community packages.

This boundary also matters for teams building cognitive computing applications, where pipelines handle iterative model refinement rather than simple linear ETL flows.

Pipeline Versioning in Production

Production ML teams run multiple pipeline versions simultaneously — retraining in staging, live version in production, hotfix in testing. How each tool handles this matters, especially in regulated environments.

Airflow handles versioning at the DAG level. DAG files live in Git; Airflow tracks run history per DAG ID. There’s no built-in concept of pipeline version promotion. Teams manage this via Git branching conventions and deployment tooling.

Kubeflow Pipelines supports explicit pipeline versioning in its UI and API, and v2 carries it forward. A pipeline can have multiple registered versions, and experiments can reference specific versions. This is the most mature model of the three, and directly useful in regulated environments where audit trails connecting model versions to pipeline versions are a compliance requirement.

Prefect tracks versions via deployment objects. Each deployment can point to a specific flow code version, and Prefect Cloud surfaces deployment history. Practical, though less formal than Kubeflow’s registry.
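Whatever the tool, version promotion reduces to a small amount of state: immutable versions plus one pointer per stage. The sketch below is a hypothetical, tool-agnostic version of the layer Airflow teams usually assemble from Git conventions, and roughly what Kubeflow’s version registry and Prefect’s deployments provide natively. Logging the resolved (version, SHA) pair with every run is what produces the audit trail regulated teams need.

```python
from dataclasses import dataclass, field


@dataclass
class PipelineRegistry:
    """Toy version registry with per-stage promotion (hypothetical, tool-agnostic)."""

    versions: dict = field(default_factory=dict)  # pipeline -> [git SHA of v1, v2, ...]
    stages: dict = field(default_factory=dict)    # (pipeline, stage) -> version number

    def register(self, pipeline, git_sha):
        """Record a new immutable version and return its 1-based number."""
        self.versions.setdefault(pipeline, []).append(git_sha)
        return len(self.versions[pipeline])

    def promote(self, pipeline, version, stage):
        """Point a stage (e.g. 'staging', 'production') at an existing version."""
        if not 1 <= version <= len(self.versions.get(pipeline, [])):
            raise ValueError(f"{pipeline!r} has no version {version}")
        self.stages[(pipeline, stage)] = version

    def resolve(self, pipeline, stage):
        """Return the (version, git SHA) a run in this stage should execute and log."""
        version = self.stages[(pipeline, stage)]
        return version, self.versions[pipeline][version - 1]


reg = PipelineRegistry()
v1 = reg.register("churn_retrain", "a1b2c3d")
v2 = reg.register("churn_retrain", "e4f5a6b")
reg.promote("churn_retrain", v1, "production")
reg.promote("churn_retrain", v2, "staging")
print(reg.resolve("churn_retrain", "production"))  # (1, 'a1b2c3d')
print(reg.resolve("churn_retrain", "staging"))     # (2, 'e4f5a6b')
```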

Observability and Debugging

Airflow logs are visible in the UI, but debugging failed runs is notoriously slow — practitioners describe it as digging through logs across multiple places. Kubeflow needs additional setup for observability, and its UI draws consistent criticism for being unintuitive. Prefect delivers the best out-of-the-box experience: real-time flow run visibility, automatic state tracking, and clean failure surfacing without extra configuration.
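Much of that difference comes down to whether state tracking happens automatically. This hypothetical decorator shows the idea in plain Python: every call records Running, Completed, or Failed along with the failure reason, which is the raw material a run-visibility UI is built from. It illustrates the concept; it is not Prefect’s implementation.

```python
import functools
import time

RUN_LOG = []  # (task name, state, detail) tuples in the order they happened


def tracked(fn):
    """Record Running / Completed / Failed transitions for every call to fn."""

    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        RUN_LOG.append((fn.__name__, "Running", None))
        start = time.perf_counter()
        try:
            result = fn(*args, **kwargs)
        except Exception as exc:
            RUN_LOG.append((fn.__name__, "Failed", repr(exc)))
            raise  # surface the failure; the log already holds the reason
        elapsed = time.perf_counter() - start
        RUN_LOG.append((fn.__name__, "Completed", f"{elapsed:.3f}s"))
        return result

    return wrapper


@tracked
def load_rows():
    return 3


@tracked
def validate(n_rows):
    if n_rows < 5:
        raise ValueError("too few rows")


try:
    validate(load_rows())
except ValueError:
    pass

for name, state, detail in RUN_LOG:
    print(name, state, detail if state == "Failed" else "")
```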

Local Development: Where Daily Friction Accumulates

This dimension rarely appears in orchestration comparisons. But it’s where day-to-day friction builds up quietly over months.

A tool can have excellent production characteristics and still frustrate engineers during development. The inner loop — write, test, debug, repeat — determines how fast a team can move on ML pipeline work.

Prefect wins clearly here. A Prefect flow is standard Python, so you can unit test it with pytest without any infrastructure running. Functions decorated with @flow and @task run locally as the same Python code that runs in production; only the execution infrastructure differs. The test command is just pytest. Teams that invest in data literacy across their engineering function tend to gravitate toward Prefect’s local testability because it lowers the barrier for non-specialist contributors to understand and validate pipeline logic.
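A habit that preserves this advantage in any orchestrator: keep pipeline logic in plain functions and let the decorators (Prefect’s @task and @flow, or Airflow’s TaskFlow @task) wrap them. A hypothetical example of the kind of test that then runs under plain pytest, with no orchestrator involved:

```python
def split_train_eval(rows, eval_fraction=0.2):
    """Hold out the last eval_fraction of rows (deterministic and order-preserving)."""
    if not 0 < eval_fraction < 1:
        raise ValueError("eval_fraction must be between 0 and 1")
    cut = len(rows) - round(len(rows) * eval_fraction)
    return rows[:cut], rows[cut:]


# pytest collects functions named test_*; no server, cluster, or database is involved.
def test_split_keeps_every_row_once():
    train, evaluation = split_train_eval(list(range(10)))
    assert (len(train), len(evaluation)) == (8, 2)
    assert train + evaluation == list(range(10))


def test_rejects_bad_fraction():
    try:
        split_train_eval([1, 2, 3], eval_fraction=1.5)
    except ValueError:
        return
    raise AssertionError("expected ValueError")
```

With pytest installed, `pytest.raises(ValueError)` is the more idiomatic way to write the second test; the plain try/except keeps the sketch dependency-free.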

Airflow has improved meaningfully with the TaskFlow API. Individual tasks are now testable as Python functions. But testing full DAG integrity still requires the Airflow metadata database — either a local Airflow instance or the DagBag testing pattern. It works, but it adds setup overhead that slows iteration.
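The DagBag pattern boils down to structural checks in CI: every DAG file imports cleanly, dependencies reference real tasks, and there are no cycles. Here is a pure-Python sketch of the dependency half of that check, independent of Airflow; the `{task: set_of_upstream_tasks}` format is an assumption, and a real DagBag test would load the actual DAG files instead.

```python
from graphlib import CycleError, TopologicalSorter


def check_dag_integrity(deps):
    """Return a list of structural problems in a {task: set_of_upstream_tasks} mapping."""
    problems = []
    known = set(deps)
    for task, upstream in deps.items():
        for missing in sorted(set(upstream) - known):
            problems.append(f"{task}: unknown upstream task {missing!r}")
    try:
        TopologicalSorter(deps).prepare()  # prepare() raises CycleError on a loop
    except CycleError as exc:
        cycle = exc.args[1]  # graphlib reports the cycle as a list of nodes
        problems.append("cycle detected: " + " -> ".join(cycle))
    return problems


print(check_dag_integrity({"extract": set(), "train": {"extract"}}))  # []
print(check_dag_integrity({"a": {"b"}, "b": {"a"}, "c": {"missing"}}))
```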

Kubeflow is the most painful for local development. Every pipeline step runs as a container in a Kubernetes cluster. Recent KFP SDK releases add a local execution mode (kfp.local) that narrows the gap for testing individual components, but running a pipeline the way it runs in production still requires Kind or Minikube, which means 30 to 60 minutes of environment setup before writing a single line of pipeline logic. The gap between local development and production is real, and ML engineers feel it every time they iterate on pipeline code.


CI/CD Integration: What It Actually Looks Like

Getting an orchestrator running locally is one thing. Getting it wired into a repeatable automated delivery pipeline is another — and this is where teams spend time that rarely shows up in vendor documentation.

| CI/CD Dimension | Apache Airflow | Kubeflow | Prefect |
| --- | --- | --- | --- |
| Local Test Command | pytest with DagBag or TaskFlow | Kind/Minikube cluster required | pytest (pure Python) |
| Container Build Required | No (for DAG logic changes) | Yes (every component change) | No (for flow logic changes) |
| Deployment Mechanism | S3 sync, Astro deploy, Cloud Build | KFP SDK upload + image push | prefect deploy |
| CI Step Complexity | Medium (DAG validation + pytest) | High (build → push → compile → upload) | Low (pytest + deploy) |
| GitHub Actions Support | Yes (community templates) | Yes (custom, no official template) | Yes (official guide) |
| GitLab CI Support | Yes | Yes (custom setup) | Yes (official guide) |
| Managed Deploy Integration | MWAA, Composer, Astronomer | Vertex AI Pipelines | Prefect Cloud |
| CI/CD Documentation Quality | High (Astronomer ecosystem) | Medium (community-maintained) | High (official guides) |

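The Kubeflow row tells the most important story here. Because every pipeline step is a container, changing even a single import statement in a containerized component means rebuilding and pushing a Docker image before the change is testable in CI. (Lightweight Python components, which embed the function source in the compiled pipeline, avoid some rebuilds at the cost of installing dependencies at runtime.) Teams mitigate this by architecting components to minimize rebuilds, but that discipline takes time to establish and maintain.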
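One way teams enforce that discipline is to rebuild only the components whose source actually changed. A hypothetical CI helper, assuming each component lives in its own directory and that the previous build’s digests are stored somewhere between runs (for example, as image tags):

```python
import hashlib
import tempfile
from pathlib import Path


def component_digest(component_dir):
    """SHA-256 over every file's relative path and bytes, in sorted order."""
    root = Path(component_dir)
    h = hashlib.sha256()
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        h.update(path.relative_to(root).as_posix().encode())
        h.update(path.read_bytes())
    return h.hexdigest()


def components_to_rebuild(component_dirs, previous_digests):
    """Return (changed component names, current digests) for a CI build step."""
    current = {name: component_digest(d) for name, d in component_dirs.items()}
    changed = sorted(n for n, dig in current.items() if previous_digests.get(n) != dig)
    return changed, current


with tempfile.TemporaryDirectory() as tmp:
    dirs = {}
    for name in ("preprocess", "train"):
        dirs[name] = Path(tmp) / name
        dirs[name].mkdir()
        (dirs[name] / "main.py").write_text(f"print({name!r})\n")
    first_build, digests = components_to_rebuild(dirs, {})
    (dirs["train"] / "main.py").write_text("import json\nprint('train')\n")
    second_build, _ = components_to_rebuild(dirs, digests)

print(first_build)   # ['preprocess', 'train']
print(second_build)  # ['train']
```

Tagging each image with its digest also makes the decision reproducible: if an image with that tag already exists in the registry, the build step can be skipped.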

Airflow’s CI/CD story is the most mature overall. Community-maintained GitHub Actions templates handle automated DAG testing and deployment. A typical pipeline lints DAGs, runs unit tests, validates DAG integrity, and deploys on merge. The API integration patterns for connecting Airflow to external deployment targets are also the most thoroughly documented of the three.

Prefect is the simplest. Deployments are defined as code in prefect.yaml, so deployment configuration lives in Git alongside the flow. CI is just pytest plus prefect deploy.

Ecosystem Integration: What Connects to What

The orchestrator sits in a stack alongside experiment tracking tools, feature stores, model registries, and cloud ML services. Integration gaps tend to surface after the orchestrator is already in production.

| Integration | Apache Airflow | Kubeflow | Prefect |
| --- | --- | --- | --- |
| MLflow (Experiment Tracking) | Provider package (community-maintained) | External server support | Community package |
| Weights & Biases | Custom operator required | Python SDK in components | Community collection |
| Feast / Tecton (Feature Store) | Python SDK calls in tasks | Python SDK in components | Python SDK in tasks |
| Amazon SageMaker | First-class provider (AWS-maintained) | Via SageMaker Pipelines bridge | prefect-aws collection |
| Google Vertex AI | First-class provider (Google-maintained) | Native (Vertex AI Pipelines) | prefect-gcp collection |
| Azure ML | Provider package available | Via AKS deployment | prefect-azure collection |
| MLflow Model Registry | Via Python SDK in tasks | External server support | Via Python SDK in tasks |
| Hugging Face Hub | Custom operator | Python SDK in components | Community integration |
| DVC (Data Versioning) | Via BashOperator/Python | Via component scripts | Via task Python calls |
| Provider Breadth | Highest (hundreds of providers) | Medium | Growing |

The cloud ML service row matters. Airflow’s SageMaker and Vertex AI providers are maintained by AWS and Google directly — not community packages that fall out of date when cloud APIs change. For teams building on cloud-native ML infrastructure, this vendor-maintained support translates to reliability and faster compatibility updates.

Vertex AI Pipelines deserves specific mention. It’s essentially managed Kubeflow Pipelines: teams on GCP can use the same Kubeflow Pipelines SDK while offloading the Kubernetes operational burden to Google. This changes the TCO calculation significantly for GCP-centric organizations.

None of the three treats a model registry as a core orchestration concept. Kubeflow has added a separate Model Registry component, but in practice all three commonly connect to external registries like MLflow via Python SDK calls. The orchestrator triggers the registration step; the registry itself lives outside it.
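Because the registry sits outside the orchestrator, the orchestration-side code is usually a thin step that decides whether to register and then calls a client. A hypothetical sketch: `ModelRegistry` is an illustrative interface, a production implementation might wrap MLflow’s `mlflow.register_model`, and the in-memory class stands in for tests. The model name, artifact URIs, and AUC gate are assumptions.

```python
from typing import Optional, Protocol


class ModelRegistry(Protocol):
    """Hypothetical interface; a real implementation might wrap mlflow.register_model."""

    def register(self, name: str, artifact_uri: str, metrics: dict) -> int: ...


class InMemoryRegistry:
    """Test double for an external registry: stores versions in a dict."""

    def __init__(self):
        self.entries = {}

    def register(self, name, artifact_uri, metrics):
        versions = self.entries.setdefault(name, [])
        versions.append({"uri": artifact_uri, "metrics": dict(metrics)})
        return len(versions)  # 1-based version number


def register_if_better(
    registry: ModelRegistry, name, artifact_uri, metrics, baseline_auc
) -> Optional[int]:
    """Pipeline step: register only if the candidate beats the current baseline AUC."""
    if metrics["auc"] <= baseline_auc:
        return None  # keep serving the current model
    return registry.register(name, artifact_uri, metrics)


model_registry = InMemoryRegistry()
promoted = register_if_better(
    model_registry, "churn", "s3://models/churn/run-42", {"auc": 0.91}, baseline_auc=0.88
)
skipped = register_if_better(
    model_registry, "churn", "s3://models/churn/run-43", {"auc": 0.80}, baseline_auc=0.88
)
print(promoted, skipped)  # 1 None
```

Keeping the registry behind an interface like this also makes the step portable if the orchestrator changes later.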


What Practitioners Say After a Year

Vendor documentation tells you what a tool does. Practitioner communities tell you what it costs to live with.

1. Apache Airflow

The DAG model creates friction for ML teams used to notebook-style iteration. No native model registry or experiment tracking means every integration is custom plumbing. Metadata database performance becomes a bottleneck as pipeline volume grows. A common refrain from the r/mlops community: “Airflow is great if you’re a data engineer. If you’re an ML engineer, you spend half your time fighting the tool.” Airflow 3.0 addresses some of this — but teams on 2.x represent a large installed base, and upgrading is its own project.

2. Kubeflow

G2 reviewers consistently flag that maintaining Kubeflow requires a dedicated DevOps team. Version compatibility issues between Kubeflow, Kubernetes versions, and Argo Workflows create recurring instability. Cluster resource consumption runs higher than initial estimates. Multi-tenancy setup is complex and under-documented. One senior ML engineer’s summary from r/mlops: “Kubeflow does exactly what it promises, but the promise includes maintaining a Kubernetes platform forever.”

3. Prefect

The Prefect 1.0 to 2.0 migration introduced breaking changes that early adopters found genuinely painful. The 2.x to 3.0 upgrade was considerably smoother, but some teams remain cautious about version stability. Advanced features are gated behind paid Cloud tiers. The operator ecosystem is much smaller than Airflow’s, so teams with niche integrations sometimes hit gaps that need custom work.

Total Cost of Ownership: The Real Numbers

“Open source” is sometimes the most expensive phrase in enterprise infrastructure when no one accounts for what comes after the download. All three tools have a licensing cost of zero at their core. But direct costs diverge quickly, and hidden costs are where decisions get distorted.

| Cost Category | Apache Airflow | Kubeflow | Prefect |
| --- | --- | --- | --- |
| Licensing | Free (open-source) | Free (open-source) | Free self-host / Cloud pricing |
| Infrastructure (self-host) | Metadata DB + compute | K8s cluster + GPU compute ($2K–$10K+/mo) | Minimal (lightweight server + workers) |
| Managed Option | Astronomer $500+/mo; MWAA usage-based | Vertex AI Pipelines (GCP usage-based) | Prefect Cloud (usage-based) |
| People Required | 1 data engineer can own it | 2–3 platform engineers minimum | 1 ML engineer can own it |
| Upgrade Overhead | Medium (metadata DB migrations) | High (K8s + Argo compatibility) | Low |
| Migration Cost (switching in or out) | Medium (large DAG libraries accumulate and must be rewritten when leaving) | High (full containerization required when adopting) | Medium (existing DAGs must be rewritten as flows when adopting) |
| Hidden Scaling Cost | Metadata DB performance at volume | GPU cluster right-sizing | Prefect Cloud tier escalation |

The people cost row is the most underestimated line across all three tools. Kubeflow is only operationally justified when a team has at least two to three dedicated platform engineers. Running it with one shared DevOps resource creates a knowledge risk — one departure creates a vacuum that can take months to fill. Good change management planning before adopting Kubeflow should include succession planning for Kubernetes operations, not just user onboarding.

The infrastructure cost for Kubeflow deserves context. The $2,000 to $10,000+ monthly range assumes meaningful GPU compute. CPU-only Kubeflow clusters can run cheaper, but a CPU-only Kubeflow deployment gives up the tool’s main advantage over simpler alternatives. If the workload is CPU-bound, the Kubernetes overhead is hard to justify.

Migration cost is the other line teams consistently underestimate. They misjudge the scope, start mid-production, and discover the true cost after the project is already underway.
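Because people costs dominate, it helps to put rough numbers side by side. This is a back-of-the-envelope sketch in which every input is an illustrative assumption. Only the $2K/month Kubeflow infrastructure floor and the $500/month Astronomer starting point come from the table above; the loaded cost per engineer and the share of time spent on operations are placeholders to replace with your own figures.

```python
def annual_tco(monthly_infra, monthly_managed_fee, engineers, share_of_time, loaded_cost):
    """Rough annual cost: 12 months of infrastructure and fees plus people time.

    share_of_time: fraction of each engineer's year spent operating the platform.
    loaded_cost:   fully loaded annual cost per engineer (an assumption you supply).
    """
    infra_and_fees = 12 * (monthly_infra + monthly_managed_fee)
    people = engineers * share_of_time * loaded_cost
    return infra_and_fees + people


LOADED_COST = 150_000  # hypothetical placeholder, not a benchmark

kubeflow_self_hosted = annual_tco(2_000, 0, engineers=2, share_of_time=1.0, loaded_cost=LOADED_COST)
airflow_managed = annual_tco(0, 500, engineers=1, share_of_time=0.25, loaded_cost=LOADED_COST)
print(f"{kubeflow_self_hosted:,.0f} vs {airflow_managed:,.0f}")  # 324,000 vs 43,500
```

Even at the cheapest infrastructure assumption, two dedicated platform engineers outweigh the Kubeflow cluster bill by more than ten to one in this example.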


Enterprise Considerations That Change the Decision

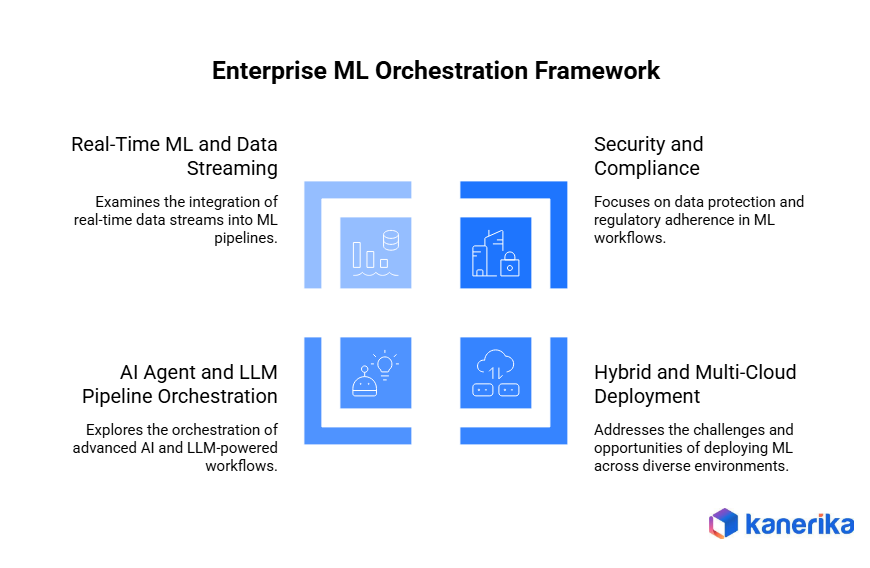

Security, Compliance, and Multi-Tenancy

Airflow’s secrets management works via environment variables and Vault integrations, but requires deliberate configuration. Kubeflow’s Kubernetes-native RBAC and namespace isolation provide strong workload separation — better positioned for regulated industries with strict data access controls. Multi-tenancy in Kubeflow runs through Kubernetes namespace isolation with Dex-based authentication; powerful, but complex to configure and maintain. Prefect Cloud has built-in secrets management and workspace-level isolation for multi-team use; self-hosted deployments need additional configuration.

For HIPAA, SOC 2, and GDPR contexts, Kubeflow’s isolation model offers more granular control — but that comes with real operational complexity as the trade-off. Understanding cloud security posture management is essential before assuming any tool is inherently compliant by default.

Hybrid and Multi-Cloud Deployment

Airflow is cloud-agnostic by design, with managed offerings on AWS, GCP, and Azure. Kubeflow runs anywhere Kubernetes runs, making it viable for private cloud or on-premises GPU clusters. Prefect’s hybrid model is a first-class design principle: workers running in each environment pull scheduled runs from a single control plane.
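A conceptual sketch of the pull model behind that design: runs are queued per pool on the control plane, and workers in each environment poll for work instead of being pushed to, so an on-premises worker only needs outbound network access. Class and pool names are hypothetical; this is not Prefect’s API.

```python
from collections import defaultdict, deque


class ControlPlane:
    """Toy control plane: queues scheduled runs per work pool until a worker asks."""

    def __init__(self):
        self.queues = defaultdict(deque)

    def schedule(self, flow_name, pool):
        self.queues[pool].append(flow_name)

    def poll(self, pool):
        """Called by workers; returns the next run for that pool, or None."""
        queue = self.queues[pool]
        return queue.popleft() if queue else None


class Worker:
    """Runs inside one environment and only makes outbound calls to the control plane."""

    def __init__(self, plane, pool):
        self.plane, self.pool, self.ran = plane, pool, []

    def tick(self):
        run = self.plane.poll(self.pool)
        if run is not None:
            self.ran.append(run)  # a real worker would start a process, container, or pod here
        return run


plane = ControlPlane()
plane.schedule("nightly_etl", pool="cloud-batch")
plane.schedule("gpu_finetune", pool="onprem-gpu")
cloud, onprem = Worker(plane, "cloud-batch"), Worker(plane, "onprem-gpu")
print(onprem.tick(), cloud.tick(), cloud.tick())  # gpu_finetune nightly_etl None
```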

Teams operating across hybrid cloud environments should weigh deployment posture explicitly. The process control implications of hybrid orchestration — ensuring consistent pipeline behavior across cloud and on-premises execution — deserve scoping before the decision is made.

AI Agent and LLM Pipeline Orchestration

Orchestrating LLM inference, RAG workflows, and AI agent pipelines is a growing production use case. Airflow is usable but architecturally awkward for async, long-running LLM tasks. Kubeflow’s GPU scheduling makes it viable specifically for LLM fine-tuning jobs. Prefect is gaining real traction for AI agent orchestration — its dynamic task execution model and event-driven triggers fit generative AI patterns well.

Teams building AI agent workflows or advanced RAG pipelines should treat this as a first-order consideration. The OpenAI AgentKit integration patterns emerging in 2025 lean toward orchestrators with dynamic task creation — another point in Prefect’s favor for teams building at the generative AI edge.
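The pattern that makes dynamic, async-friendly orchestration matter here is fan-out over I/O-bound model calls with a concurrency cap. A minimal asyncio sketch: `call_llm` is a hypothetical stand-in that sleeps instead of calling any real API, and the cap of 3 is an arbitrary stand-in for a provider rate limit. Prefect accepts async flows and tasks, so a function shaped like this can be wrapped without restructuring.

```python
import asyncio


async def call_llm(doc: str) -> str:
    """Hypothetical stand-in for a slow, I/O-bound model call."""
    await asyncio.sleep(0.01)
    return doc[:10]


async def summarize_all(docs, max_concurrency=3):
    """One call per document (count known only at runtime), at most max_concurrency at once."""
    sem = asyncio.Semaphore(max_concurrency)
    in_flight = peak = 0

    async def one(doc):
        nonlocal in_flight, peak
        async with sem:
            in_flight += 1
            peak = max(peak, in_flight)
            try:
                return await call_llm(doc)
            finally:
                in_flight -= 1

    summaries = await asyncio.gather(*(one(d) for d in docs))  # preserves input order
    return summaries, peak


docs = [f"document number {i}" for i in range(10)]
summaries, peak = asyncio.run(summarize_all(docs))
print(summaries[0], peak)
```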

Real-Time ML and Data Streaming

None of the three is a native streaming tool. Airflow integrates with Kafka and Flink via operators. Kubeflow handles streaming inputs, but pipeline execution is fundamentally batch-oriented. Prefect’s event-driven triggers enable near-real-time pipeline kicks without a full streaming infrastructure.

For teams building real-time ML scoring or monitoring pipelines, data streaming integration should be an explicit evaluation criterion. Teams in AI-driven supply chain contexts — where demand signals must trigger real-time retraining — will hit this constraint quickly in production.
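A sketch of what such a trigger evaluates, assuming a monitoring job emits drift events that carry a population stability index (PSI). The event names, the 0.2 threshold, and the cooldown rule are hypothetical choices; the cooldown keeps a burst of drifted batches from launching a burst of retraining runs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    name: str
    payload: dict


@dataclass
class DriftTrigger:
    """Fire a retraining run when a drift metric crosses a threshold, with a cooldown."""

    event_name: str
    threshold: float
    cooldown_events: int = 0
    since_fire: int = 10**9  # large, so the first qualifying event can fire

    def observe(self, event):
        if event.name != self.event_name:
            return False  # unrelated events are ignored entirely
        self.since_fire += 1
        drifted = event.payload.get("psi", 0.0) >= self.threshold
        if drifted and self.since_fire > self.cooldown_events:
            self.since_fire = 0
            return True
        return False


trigger = DriftTrigger("features.drift.measured", threshold=0.2, cooldown_events=2)
stream = [
    Event("features.drift.measured", {"psi": 0.05}),
    Event("orders.ingested", {}),
    Event("features.drift.measured", {"psi": 0.25}),
    Event("features.drift.measured", {"psi": 0.30}),
    Event("features.drift.measured", {"psi": 0.31}),
    Event("features.drift.measured", {"psi": 0.40}),
]
fired = [trigger.observe(e) for e in stream]
print(fired)  # [False, False, True, False, False, True]
```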

Alternatives Worth Evaluating Before You Commit

Most comparison articles ignore this question. But for a meaningful slice of teams, the answer to “Airflow vs Kubeflow vs Prefect” is actually “none of the above.” Worth naming that possibility directly.

- Dagster is the leading alternative for teams that want data asset lineage baked into the orchestration model from the start, not added on later. Its software-defined assets concept makes lineage and data quality a core abstraction. Teams building data mesh architectures often find Dagster a better fit than any of the three primary tools.

- Flyte targets the same Kubernetes-native ML use case as Kubeflow, but with a more opinionated, developer-friendly SDK. Teams that want Kubeflow’s scalability without Kubeflow’s operational overhead often find Flyte the better trade-off.

- Metaflow takes a different approach entirely — designed to feel like ordinary Python data science code with minimal orchestration ceremony. For research-oriented ML teams that want to scale from laptop to cloud without rewriting workflows, it’s worth evaluating before committing to any of the three primary tools.

- ZenML acts as an abstraction layer over other orchestrators. It can run on top of Kubeflow, Airflow, or Vertex AI Pipelines, providing a consistent ML pipeline API while keeping the underlying orchestrator swappable. For teams that want orchestrator portability, ZenML deserves a look before locking in.

The point isn’t that alternatives are better. It’s that forcing a choice between three options when your context doesn’t fit any of them well is a mistake worth avoiding.

How to Choose: A Decision Framework Built Around Organizational Context

The best orchestrator for a given organization is determined by five factors: ML maturity, team composition, infrastructure reality, pipeline complexity, and budget versus time-to-productivity. The framework below maps organizational profile to tool recommendation with a one-line rationale.

| Organizational Profile | Recommended Tool | Why |
| --- | --- | --- |
| Data engineering-led, existing Airflow expertise, complex scheduling | Apache Airflow | Build on what the team already knows; Airflow 3.0 closes the ML pipeline gap |
| Data engineering team, no existing orchestrator, mixed ETL + ML | Apache Airflow | Largest ecosystem, best scheduling maturity, one engineer can own it |
| ML-first team, Kubernetes already in prod, dedicated DevOps, large-scale training | Kubeflow | Only tool with native distributed training + model serving at K8s scale |
| ML-first team, GCP-native, wants managed Kubeflow Pipelines | Vertex AI Pipelines | Managed Kubeflow Pipelines: K8s benefits without the operational burden |
| Python-first ML team, no Kubernetes, scaling up, 2–8 engineers | Prefect | Fastest time-to-productivity, best observability, lowest operational overhead |
| AI agent / LLM pipeline orchestration, dynamic workflows | Prefect | Dynamic task execution and event-driven triggers fit generative AI patterns |
| Team wants data asset lineage as a core concept, data mesh | Dagster | Purpose-built asset awareness; the others treat it as an afterthought |
| ML team wants K8s-native scale without Kubeflow’s complexity | Flyte | Kubeflow scalability with materially better developer ergonomics |
| Research-first team, minimal orchestration ceremony | Metaflow | Scales Python notebook code to cloud without structural rewrites |
| Team wants orchestrator portability across cloud backends | ZenML | Abstraction over Airflow/Kubeflow/Vertex AI; swap backends without rewriting |
| Large enterprise, strict workload isolation (HIPAA, SOC 2, GDPR) | Kubeflow (if K8s exists) or Airflow with Vault | Kubeflow’s namespace RBAC is most mature; Airflow is proven in regulated industries |
| Small team (2–4 people), no DevOps engineer, needs fast iteration | Prefect | Lowest operational overhead; CI/CD simple enough for a small team to own |

Reading down the table, one pattern is clear: team composition is the single most decisive factor. The same pipeline complexity can be handled by any of the three tools. But only the right tool can be operated well by the team that actually exists. A technically superior choice that the team can’t sustain isn’t superior at all.

Quick decision tree:

- Does Kubernetes already exist in production with DevOps support?
  - Yes: is ML the primary workload?
    - Yes: evaluate Kubeflow or Flyte.
    - No: Airflow.
  - No: is scheduling complexity the dominant requirement?
    - Yes: Airflow.
    - No: is the team Python-first and ML-focused?
      - Yes: Prefect.
      - No: Airflow or Dagster.
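For teams that want to record the outcome in a planning notebook or internal tool, the quick decision tree reduces to a few conditionals. The parameter names are hypothetical shorthand for its questions, and the result is a shortlist to evaluate, not a verdict.

```python
def shortlist(k8s_in_prod_with_devops, ml_is_primary, scheduling_dominates, python_first_ml_team):
    """Return the tools the quick decision tree suggests evaluating."""
    if k8s_in_prod_with_devops:
        return ["Kubeflow", "Flyte"] if ml_is_primary else ["Airflow"]
    if scheduling_dominates:
        return ["Airflow"]
    if python_first_ml_team:
        return ["Prefect"]
    return ["Airflow", "Dagster"]


# A small ML team with no Kubernetes and modest scheduling needs:
print(shortlist(False, True, False, True))  # ['Prefect']
```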

There’s also an organizational dimension the table doesn’t capture. Mapping the current ML pipeline architecture with business process modeling before evaluating tools often reveals that the pain attributed to the orchestration layer is actually upstream, in data quality or team structure. Solving the right problem is worth more than choosing the best orchestrator for the wrong one.

Migration Paths and the Cost of Switching

Choosing a tool is one decision. Moving from an existing orchestrator to a new one is a different project entirely — and the scope is the most consistently underestimated number in MLOps planning.

Airflow to Prefect

All DAGs must be rewritten as Prefect flows. No automated migration tool exists. The standard approach: run both platforms in parallel and migrate pipeline by pipeline. The most common failure mode is underestimating the scale of rewriting large DAG libraries accumulated over the years. Start with the pipelines causing the most operational pain, not the simplest ones. Proving value early builds the organizational support to finish.
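Ranking the backlog by operational pain is easy to make explicit. This hypothetical sketch scores each DAG by engineer-hours lost to failures per month and breaks ties by size, so the first migrations are also the quickest wins. Both the metric and the tie-break are assumptions to adapt, and the sample figures are made up.

```python
from dataclasses import dataclass


@dataclass
class DagStats:
    name: str
    failures_per_month: float
    debug_hours_per_failure: float
    task_count: int

    @property
    def pain_hours(self):
        """Engineer-hours per month lost to this DAG's failures."""
        return self.failures_per_month * self.debug_hours_per_failure


def migration_order(dags):
    """Most painful first; among equally painful DAGs, the smaller one first."""
    return [d.name for d in sorted(dags, key=lambda d: (-d.pain_hours, d.task_count))]


backlog = [
    DagStats("daily_sales_etl", 1, 1.0, 12),
    DagStats("risk_model_retrain", 6, 3.0, 40),
    DagStats("feature_backfill", 3, 6.0, 8),
]
print(migration_order(backlog))  # ['feature_backfill', 'risk_model_retrain', 'daily_sales_etl']
```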

Airflow to Kubeflow

Every pipeline step must be containerized before migration begins. That alone is a multi-week project for complex pipelines. Kubernetes operational readiness must exist before migration starts. Trying to build Kubernetes capability and migrate orchestrators simultaneously is a reliable path to failure.

Kubeflow to Prefect

Usually driven by a desire to reduce Kubernetes operational overhead, which is a legitimate reason. Prefect’s worker model genuinely simplifies infrastructure management post-migration. The main challenge is re-architecting GPU workloads designed around Kubernetes scheduling primitives. Teams migrating for this reason should consider Flyte as an intermediate option: it may deliver similar operational simplification while retaining Kubernetes-native GPU scheduling.

The same universal principles apply across all three scenarios: never migrate orchestrators and rewrite pipeline architecture at the same time; establish an observability baseline before migration so you can measure performance afterward; treat migration as a scoped project with its own timeline and budget. The failure patterns parallel what shows up in broader data migration failures.
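An observability baseline can be as simple as the same few metrics computed from run history before and after the switch. A minimal sketch, assuming run history can be exported as (duration in seconds, succeeded) pairs; the sample numbers are made up for illustration.

```python
from statistics import median


def summarize(runs):
    """runs: list of (duration_seconds, succeeded) pairs exported from run history."""
    durations = [duration for duration, _ in runs]
    failures = sum(1 for _, succeeded in runs if not succeeded)
    return {"median_s": median(durations), "failure_rate": failures / len(runs)}


def compare(before, after):
    """Change in each metric after migration (negative means lower)."""
    b, a = summarize(before), summarize(after)
    return {metric: a[metric] - b[metric] for metric in b}


baseline = [(600, True), (660, False), (620, True), (700, True)]
post_migration = [(540, True), (560, True), (580, True), (900, True)]
print(compare(baseline, post_migration))  # {'median_s': -70.0, 'failure_rate': -0.25}
```

Recording the baseline before cutover matters more than the exact metrics chosen: without it, the migration’s payoff can only be argued, not measured.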

Real-World MLOps: What This Looks Like in Practice

Kanerika and ABX Innovative Packaging Solutions

ABX faced fragmented data workflows and high manual effort across business units. Kanerika delivered an end-to-end data management transformation covering pipeline automation, workflow orchestration, and cross-functional data accessibility. The result: reduced manual effort in data operations and meaningfully improved data accessibility across the organization.

The lesson from ABX is precise: the right orchestration approach was determined by the team’s existing infrastructure and operational maturity — not which tool had the longest feature list.

Hybrid Orchestration in Financial Services (Illustrative Scenario)

A mid-size financial services firm running 30+ Airflow DAGs for risk model retraining was experiencing real pain. The static DAG model created constant friction for the quant team’s iterative retraining workflows. Debugging failed runs was slow enough to affect incident response time for risk systems.

The assessment showed that a full platform migration would be both expensive and disruptive. The recommended approach: keep Airflow for ETL scheduling, migrate ML-specific pipelines to Prefect. The hybrid avoided a full rewrite, cut pipeline debugging time by roughly 40%, and improved ML team productivity without disrupting existing data engineering operations.

Both scenarios reflect the same principle: the right starting point is always the organization’s actual context, not the tool generating the most buzz. Kanerika has hands-on implementation experience across all three orchestration platforms and across industries from manufacturing to financial services. As a Microsoft Solutions Partner for Data and AI, Kanerika integrates orchestration decisions with Azure ML, Databricks, and cloud-native data platforms.

The Right Tool Is the One Your Team Can Actually Operate

Kubeflow, Airflow, and Prefect aren’t competing for the same user. They serve different organizational contexts — and the teams failing to get ML into production aren’t choosing bad tools. They’re choosing tools mismatched to their team and infrastructure reality.

The 87% failure-to-production number is a people and process problem as much as a technology one. The orchestration layer is where that mismatch becomes visible — in debugging sessions that run too long, in pipelines that break under scale, in ML engineers spending more time on infrastructure than on models.

The right answer starts with the team that has to operate the tool, the infrastructure that actually exists, and the organization’s ML maturity — not which tool generated the most conference talks this year. And if Airflow, Kubeflow, and Prefect don’t fit cleanly? The answer might be Dagster, Flyte, or ZenML. That’s a legitimate outcome, not a failure of the evaluation.

FAQs

What is orchestration in MLOps?

Orchestration in MLOps is the automated coordination of machine learning workflows, including data ingestion, model training, validation, and deployment. It ensures each pipeline stage executes in the correct sequence with proper dependency management and error handling. Effective ML orchestration eliminates manual handoffs between teams, can cut deployment cycles from weeks to hours, and maintains reproducibility across environments. Depending on the tool, workflow orchestrators also allocate compute resources, integrate with experiment tracking, and trigger retraining based on data drift or performance degradation. Kanerika designs enterprise-grade MLOps orchestration frameworks that scale with your AI ambitions. Connect with our team to streamline your ML pipelines.
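
To make "correct sequence, dependency management, and error handling" concrete, here is a toy orchestrator in plain Python. It is not how Airflow, Kubeflow, or Prefect are implemented; it only shows the core idea of running tasks in topological order and retrying transient failures. The task names and the simulated failure are hypothetical.

```python
import time
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def run_pipeline(tasks, deps, max_retries=2, backoff_s=0.0):
    """Run tasks in dependency order, retrying each failure up to max_retries times.

    tasks: name -> zero-argument callable
    deps:  name -> set of names that must finish first
    """
    results = {}
    for name in TopologicalSorter(deps).static_order():
        for attempt in range(max_retries + 1):
            try:
                results[name] = tasks[name]()
                break
            except Exception:
                if attempt == max_retries:
                    raise  # retries exhausted: fail the pipeline
                time.sleep(backoff_s * 2 ** attempt)  # exponential backoff between attempts
    return results


attempts = {"validate": 0}


def validate():
    attempts["validate"] += 1
    if attempts["validate"] == 1:
        raise RuntimeError("simulated transient failure")
    return "schema ok"


tasks = {
    "ingest": lambda: "raw rows",
    "validate": validate,
    "train": lambda: "model v2",
    "deploy": lambda: "deployed",
}
deps = {"validate": {"ingest"}, "train": {"validate"}, "deploy": {"train"}}
results = run_pipeline(tasks, deps)
print(list(results), attempts["validate"])
```

Real orchestrators add what this toy omits: passing data between tasks, running independent branches in parallel, persisting state, and surfacing every attempt in a UI.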

What are the best ML orchestration tools?

The best ML orchestration tools include Apache Airflow for complex DAG-based workflows, Kubeflow for Kubernetes-native ML pipelines, and Prefect for modern Python-first orchestration. Dagster offers data-aware pipeline management, Argo Workflows excels in container-native environments, and AWS Step Functions suits serverless architectures. MLflow is not an orchestrator itself, but it is commonly paired with these tools for experiment tracking. Selection depends on your infrastructure, team expertise, and scaling requirements. Enterprise teams often combine multiple tools, for example using Kubeflow for training pipelines and Airflow for broader data orchestration. Kanerika evaluates your ML stack and implements the optimal orchestration tool combination. Schedule a technical consultation today.

What is the best tool for MLOps?

The best tool for MLOps depends on your specific workflow requirements, but leading platforms include MLflow for experiment tracking, Kubeflow for end-to-end ML pipeline orchestration, and Databricks for unified analytics and ML. Azure Machine Learning and AWS SageMaker provide managed MLOps capabilities for cloud-native teams. For workflow automation, Airflow and Prefect are widely used in enterprise deployments. Most production environments require a toolchain rather than a single solution, combining orchestration, versioning, monitoring, and deployment tools. Kanerika helps enterprises architect integrated MLOps platforms using best-in-class tools tailored to your environment. Reach out for a comprehensive assessment.

Is MLOps just DevOps?

MLOps is not just DevOps; it extends DevOps principles to address machine learning’s unique challenges. While DevOps focuses on code deployment and infrastructure automation, MLOps adds data versioning, model training pipelines, experiment tracking, and continuous model monitoring. ML systems require managing three kinds of artifacts (code, data, and models), whereas traditional DevOps pipelines are built around code alone: the same commit retrained on new data can produce a different model. MLOps introduces concepts like feature stores, model registries, and drift detection that don’t exist in standard DevOps. The orchestration layer also becomes more complex, coordinating data preparation, training, and inference workflows. Kanerika bridges DevOps and MLOps practices. Let us help you operationalize your ML initiatives effectively.
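
The three-artifact point is easy to see in code. In the minimal sketch below (assuming the code version is a Git commit SHA and the training data fits in memory as bytes), a run is fingerprinted by hashing its code, data, and model configuration, so the same commit with different data yields a different run ID. The function name, manifest fields, and commit SHA are hypothetical; a model registry would also store a hash of the trained model artifact itself.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def run_fingerprint(code_version: str, training_data: bytes, hyperparams: dict) -> dict:
    """Fingerprint a training run by its code, data, and model configuration."""
    manifest = {
        "code": code_version,                 # e.g. a Git commit SHA
        "data": sha256_hex(training_data),    # content hash of the training set
        # sort_keys makes the hash independent of dict insertion order
        "model_config": sha256_hex(json.dumps(hyperparams, sort_keys=True).encode()),
    }
    # The run ID changes if any of the three inputs changes.
    manifest["run_id"] = sha256_hex(json.dumps(manifest, sort_keys=True).encode())[:12]
    return manifest


params = {"lr": 0.01, "epochs": 5}
run_a = run_fingerprint("3f2c1e9", b"id,label\n1,0\n2,1\n", params)      # hypothetical commit
run_b = run_fingerprint("3f2c1e9", b"id,label\n1,0\n2,1\n3,1\n", params)  # same commit, new rows
print(run_a["code"] == run_b["code"], run_a["run_id"] == run_b["run_id"])
```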

How do Airflow, Kubeflow, and Prefect handle CI/CD integration?

Airflow integrates with CI/CD through DAG deployment automation, supporting Git-sync for version-controlled workflows and a REST API for triggering pipelines from Jenkins or GitHub Actions. Kubeflow runs on Kubernetes, so pipelines deploy as containerized workloads that fit Kubernetes-native CI/CD and GitOps tooling such as Tekton or Argo CD, and Kubeflow Pipelines records artifact lineage for each run. Prefect offers CI/CD through its deployment system, allowing workflows to be registered with CLI commands (for example, prefect deploy) in any CI pipeline. All three support programmatic pipeline definitions, making infrastructure-as-code practices straightforward. Because Prefect flows and tasks are ordinary decorated Python functions, they are often simpler to unit-test than Airflow DAGs, which are also Python but usually need an Airflow installation and runtime context to exercise. Kanerika implements CI/CD-integrated ML orchestration that accelerates your deployment velocity. Contact us to modernize your ML delivery pipeline.
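
For the Airflow route, a CI job typically triggers a DAG run through the REST API. The sketch below builds (but does not send) that request using only the standard library. It assumes Airflow 2.x's stable REST API (POST /api/v1/dags/{dag_id}/dagRuns) with basic authentication enabled; Airflow 3 moves the public API to /api/v2 and handles authentication differently, so check your version's API reference. The host, DAG id, commit SHA, and credentials are placeholders.

```python
import base64
import json
import urllib.request


def build_dag_trigger(base_url: str, dag_id: str, conf: dict,
                      user: str, password: str) -> urllib.request.Request:
    """Build a POST request that asks Airflow (2.x API assumed) to start a run of dag_id."""
    url = f"{base_url.rstrip('/')}/api/v1/dags/{dag_id}/dagRuns"
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    return urllib.request.Request(
        url,
        data=json.dumps({"conf": conf}).encode(),  # conf is passed to the DAG run
        headers={"Content-Type": "application/json", "Authorization": f"Basic {token}"},
        method="POST",
    )


# Placeholder values; in CI, read credentials from the runner's secret store.
req = build_dag_trigger("https://airflow.example.com", "risk_model_retrain",
                        {"git_sha": "3f2c1e9"}, "ci-bot", "change-me")
print(req.get_method(), req.full_url)
# To actually trigger the run: urllib.request.urlopen(req)
```

Passing the commit SHA in conf lets the DAG run record exactly which code version the CI pipeline validated.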

What are the best alternatives to Airflow, Kubeflow, and Prefect?

Strong alternatives to Airflow, Kubeflow, and Prefect include Dagster for data-aware orchestration with built-in observability, Argo Workflows for Kubernetes-native container orchestration, and Flyte for type-safe ML pipelines at scale. Luigi offers lightweight Python orchestration, while AWS Step Functions and Azure Data Factory provide managed serverless options. Metaflow from Netflix excels at data science workflows with versioning built in. Temporal handles long-running workflows with durability guarantees. For simpler use cases, Kedro provides pipeline abstraction without infrastructure complexity. Your choice depends on scale, cloud strategy, and team familiarity. Kanerika evaluates orchestration alternatives against your requirements—book a discovery session to find your optimal fit.

Is Prefect suitable for LLM and AI agent pipeline orchestration?

Prefect is well-suited for LLM and AI agent pipeline orchestration because of its native async support and dynamic workflow capabilities. LLM calls are slow, rate-limited, and occasionally fail, and Prefect handles this with task retries (including exponential backoff), timeouts, and concurrent task execution for parallel API requests. Prefect’s task-level caching reduces redundant LLM API costs during development. For AI agent workflows that require conditional branching and human-in-the-loop steps, Prefect’s imperative Python syntax is often easier to work with than static DAG definitions. The same deployment model covers both simple single-prompt flows and more complex multi-agent systems. Kanerika builds production-ready LLM orchestration pipelines using Prefect and other modern tools. Let us architect your AI agent infrastructure.
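
The retry-and-cache pattern described above can be sketched without any framework. This is not Prefect's implementation: in Prefect you would typically use the retries and retry_delay_seconds options on @task, plus task caching, instead. The sketch assumes a hypothetical complete() function standing in for an LLM client and simulates one rate-limit error to exercise the retry path.

```python
import functools
import random
import time


class TransientAPIError(Exception):
    """Stand-in for a rate-limit or timeout error from an LLM provider."""


def with_backoff(max_attempts=4, base_delay_s=1.0):
    """Retry on TransientAPIError, doubling the delay each attempt, plus random jitter."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            for attempt in range(max_attempts):
                try:
                    return fn(*args, **kwargs)
                except TransientAPIError:
                    if attempt == max_attempts - 1:
                        raise
                    delay = base_delay_s * 2 ** attempt
                    time.sleep(delay + random.uniform(0, delay / 2))
        return wrapper
    return decorator


api_calls = []


@functools.lru_cache(maxsize=256)  # repeated prompts are served from cache, not the API
@with_backoff(max_attempts=3, base_delay_s=0.01)
def complete(prompt: str) -> str:
    """Hypothetical LLM call: fails once with a simulated 429, then succeeds."""
    api_calls.append(prompt)
    if len(api_calls) == 1:
        raise TransientAPIError("simulated 429 Too Many Requests")
    return f"summary of: {prompt}"


first = complete("Q3 risk report")
second = complete("Q3 risk report")
print(first == second, len(api_calls))
```

The cache sits outside the retry wrapper, so only successful responses are cached; a failed call is never stored and replayed.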

What is the purpose of orchestration?

The purpose of orchestration is to automate and coordinate complex workflows across multiple systems, ensuring tasks execute in the correct sequence with proper dependency management. In MLOps, orchestration eliminates manual intervention between pipeline stages—from data extraction through model deployment. It provides centralized monitoring, automatic failure recovery, and resource optimization across distributed infrastructure. Orchestration ensures reproducibility by codifying workflow logic, making pipelines auditable and version-controlled. Without orchestration, teams waste hours on manual coordination, experience inconsistent deployments, and struggle to scale ML operations. Kanerika implements intelligent orchestration frameworks that transform fragmented processes into automated, observable workflows—discuss your automation goals with our engineers.

What is an example of orchestration?

A common MLOps orchestration example is an automated model retraining pipeline. When new data arrives in your data lake, the orchestrator triggers data validation, then executes feature engineering transformations. Next, it launches distributed model training across GPU clusters, followed by automated model evaluation against baseline metrics. If performance thresholds pass, the orchestrator deploys the model to staging, runs integration tests, and promotes to production with traffic shifting. Throughout, it logs metadata, sends alerts on failures, and maintains full audit trails. This entire workflow runs without human intervention, triggered on schedule or by events. Kanerika builds these end-to-end orchestrated ML pipelines—start with a proof of concept to see orchestration in action.
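
The "if performance thresholds pass" step is usually a small, explicit gate that the orchestrator evaluates before promotion. A minimal sketch, assuming higher-is-better metrics; the metric names, values, and margins are hypothetical:

```python
from __future__ import annotations


def should_promote(candidate: dict[str, float], baseline: dict[str, float],
                   min_gain: dict[str, float]) -> tuple[bool, list[str]]:
    """Promote only if every gated metric beats the baseline by its required margin.

    Assumes higher is better for every gated metric (e.g. AUC, recall).
    """
    failures = []
    for metric, margin in min_gain.items():
        if candidate[metric] - baseline[metric] < margin:
            failures.append(
                f"{metric}: {candidate[metric]:.3f} vs baseline {baseline[metric]:.3f}"
            )
    return (not failures, failures)


baseline = {"auc": 0.871, "recall": 0.640}
gates = {"auc": 0.005, "recall": 0.0}  # hypothetical: AUC must improve, recall must not regress

improved = {"auc": 0.879, "recall": 0.652}
regressed = {"auc": 0.874, "recall": 0.630}
print(should_promote(improved, baseline, gates))
print(should_promote(regressed, baseline, gates))
```

Returning the list of failed checks, not just a boolean, gives the alerting step something useful to report when promotion is blocked.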

Is MLflow an orchestrator?

MLflow is not primarily an orchestrator—it’s an ML lifecycle management platform focused on experiment tracking, model versioning, and deployment. While MLflow Projects can define and run multi-step workflows, its orchestration capabilities are limited compared to dedicated tools like Airflow or Prefect. MLflow excels at tracking metrics, parameters, and artifacts across experiments, then packaging models for deployment. Most enterprise teams pair MLflow with a workflow orchestrator: Airflow triggers training jobs while MLflow logs experiment data and stores models. This combination separates concerns effectively, using each tool for its strengths. Kanerika integrates MLflow into comprehensive MLOps architectures with proper orchestration layers—reach out to optimize your ML infrastructure.

Is MLflow an MLOps tool?

MLflow is a core MLOps tool widely adopted for experiment tracking, model registry, and deployment management. It addresses critical MLOps requirements: versioning models and datasets, comparing experiment results, packaging models in reproducible formats, and serving predictions via REST APIs. MLflow integrates with major platforms including Databricks, Azure ML, and AWS SageMaker. However, MLflow handles specific MLOps functions rather than the entire lifecycle—teams typically combine it with orchestration tools for pipeline automation and monitoring solutions for production observability. Its open-source nature and language-agnostic design make it a foundational component in most enterprise ML stacks. Kanerika deploys MLflow as part of integrated MLOps platforms—contact us to design your complete ML infrastructure.

Is Kubernetes an orchestration platform?

Kubernetes is a container orchestration platform that automates deployment, scaling, and management of containerized applications. It schedules workloads across clusters, handles service discovery, manages storage, and maintains desired state through self-healing. In MLOps, Kubernetes provides the infrastructure layer where ML orchestration tools like Kubeflow and Argo Workflows run. It orchestrates compute resources—spinning up GPU nodes for training, scaling inference endpoints based on traffic—while higher-level ML orchestrators manage workflow logic and dependencies. This separation creates a powerful combination: Kubernetes handles infrastructure orchestration while MLOps tools handle pipeline orchestration. Kanerika implements Kubernetes-native ML platforms that maximize resource efficiency—explore our cloud solutions for scalable AI infrastructure.
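
Self-healing comes from reconciliation: controllers repeatedly compare the desired state with what is actually running and act on the difference. The toy version below models workloads as replica counts rather than Pod objects, and the workload names are hypothetical; it illustrates the idea, not the Kubernetes API.

```python
from __future__ import annotations


def reconcile(desired: dict[str, int], observed: dict[str, int]) -> list[str]:
    """One pass of a controller-style loop: actions that move observed toward desired."""
    actions = []
    for name in sorted(desired.keys() | observed.keys()):
        want, have = desired.get(name, 0), observed.get(name, 0)
        if have < want:
            actions.append(f"create {want - have} replica(s) of {name}")
        elif have > want:
            actions.append(f"delete {have - want} replica(s) of {name}")
    return actions


desired = {"inference-api": 3, "feature-server": 2}
# Observed: two inference pods have crashed, and a stale workload is still running.
observed = {"inference-api": 1, "feature-server": 2, "old-batch-job": 1}
print(reconcile(desired, observed))
```

A real controller runs this comparison continuously, so a crashed pod is replaced on the next pass without anyone intervening.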

Is Kubernetes part of MLOps?

Kubernetes plays a significant role in MLOps as the underlying infrastructure platform for scalable ML workloads. It provides containerized execution environments ensuring reproducibility across development and production. Kubernetes-native tools like Kubeflow, KServe, and Seldon deploy directly on clusters, offering distributed training, model serving, and pipeline orchestration. Teams use Kubernetes for autoscaling inference endpoints, managing GPU resources efficiently, and implementing canary deployments for models. While not strictly required for MLOps, Kubernetes has become the standard for enterprise-scale machine learning operations due to its flexibility and ecosystem. Kanerika architects Kubernetes-based MLOps platforms optimized for performance and cost—schedule a consultation to modernize your ML infrastructure.
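
Autoscaling an inference endpoint on Kubernetes usually means the Horizontal Pod Autoscaler, whose documented core rule is desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue), with no change when the ratio is within a tolerance (0.1 by default). The sketch below implements just that rule plus min/max clamping; the real controller also applies stabilization windows and scaling policies, and the requests-per-second figures are illustrative.

```python
import math


def hpa_desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                         min_replicas: int = 1, max_replicas: int = 10,
                         tolerance: float = 0.1) -> int:
    """Replica count from the HPA's core scaling rule (no stabilization windows)."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: skip scaling
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# 4 pods averaging 90 req/s each against a 50 req/s target, then 52, then 10.
print(hpa_desired_replicas(4, 90, 50),
      hpa_desired_replicas(4, 52, 50),
      hpa_desired_replicas(4, 10, 50))
```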

What tools do you use for MLOps or CI/CD?

Enterprise MLOps and CI/CD toolchains typically combine multiple specialized platforms. For orchestration, teams deploy Airflow, Kubeflow, or Prefect to manage pipeline execution. MLflow or Weights & Biases handle experiment tracking and model versioning. CI/CD relies on GitHub Actions, GitLab CI, or Jenkins for automated testing and deployment triggers. Infrastructure provisioning uses Terraform or Pulumi, while container registries store versioned Docker images. Model serving leverages KServe, Seldon, or managed endpoints like SageMaker. Monitoring combines Prometheus with Grafana or specialized tools like Evidently for drift detection. The specific stack depends on cloud provider, scale, and team expertise. Kanerika implements end-to-end MLOps toolchains tailored to your enterprise requirements—let us build your production ML platform.
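
Drift detectors compare a reference distribution with recent production data. One widely used statistic is the Population Stability Index (PSI); the sketch below computes it over equal-width bins of the reference range. It is a standalone illustration, not Evidently's API, and the bin count, the epsilon for empty bins, and the synthetic data are assumptions. A common rule of thumb treats PSI above roughly 0.25 as significant drift.

```python
from __future__ import annotations

import math


def psi(expected: list[float], actual: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a reference sample and a current sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference sample

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values into edge bins
        return [max(c / len(values), eps) for c in counts]  # eps avoids log(0)

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


reference = [i / 100 for i in range(1000)]  # synthetic feature values from training time
shifted = [x + 3 for x in reference]        # synthetic production values after a shift
print(psi(reference, reference), psi(reference, shifted) > 0.25)
```

In a pipeline, a check like this would run on a schedule and, above the chosen threshold, trigger the retraining flow rather than just an alert.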