Power BI users have had to choose between speed and flexibility for years. Direct Lake gave you fast access to lakehouse data but no Power Query transformations. Import gave you transformations but forced a full refresh cycle. The choice was binary.

The new Power BI composite model breaks that trade-off. You can now combine Direct Lake tables with Import tables in a single semantic model, keeping large datasets in OneLake while loading smaller external datasets through Power Query. This makes it easier to share modeling work between IT (heavy data lifting in the lakehouse) and business teams (smaller, business-specific datasets layered on top).

In this article, we’ll cover what Power BI composite models do, the two ways to build them, current preview limitations, the step-by-step setup for both approaches, and where they fit in real reporting workflows.

Key Takeaways

- Power BI composite models combine Direct Lake and Import tables in one semantic model, with each storage mode handling what it does best.

- The feature is in public preview. Direct Lake on SQL endpoints cannot participate. Only Direct Lake on OneLake works in composite models.

- Power BI Desktop supports live editing, but cannot add new tables. Add-table operations require web modeling.

- Cross-source relationships default to many-to-many cardinality and have DAX function restrictions.

- OneLake Catalog adds Direct Lake tables. Get Data brings in Import mode tables. Tables from both modes work together in reports.

What Are Composite Models in Power BI?

A composite model is a Power BI semantic model where multiple storage modes coexist. In Microsoft Fabric, this currently means combining Direct Lake on OneLake tables with Import mode tables.

Direct Lake queries data directly from OneLake without importing it, which keeps performance high for large datasets. Import mode loads data into memory, which works well for smaller datasets or external sources that don’t live in OneLake. Combining both in one model means you stop choosing one over the other. Each storage mode is used where it fits.

Direct Lake vs Import vs Composite vs DirectQuery

| Capability | Direct Lake | Import | Composite (Direct Lake + Import) | DirectQuery |

|---|---|---|---|---|

| Data location | OneLake (Delta tables) | In-memory copy | Mixed | Source system |

| Refresh model | Auto-synced with OneLake | Scheduled refresh | Per table | Live at query time |

| Power Query transformations | No | Yes | Yes (on Import tables only) | Limited |

| Best for | Large fact tables in lakehouse | Smaller dimensions, external data | Mix of both | Real-time source data |

| Power BI Desktop add-table | Yes (web modeling) | Yes | No (web modeling only) | Yes |

| Calculated columns | No | Yes | Yes (on Import tables) | Yes |

| GA status | Generally available | Generally available | Public preview | Generally available |

Key Characteristics of Composite Semantic Models

- Mix of Direct Lake and Import mode tables: Enables combining high-performance Direct Lake data with selectively imported datasets.

- Unified semantic layer: All tables, regardless of storage mode, exist within a single model for reporting and analysis.

- Flexible data integration: Supports combining data from Fabric sources and external sources through Power Query.

- Optimized for different use cases: Large datasets can stay in Direct Lake, while smaller or custom datasets can be imported.

Current Preview Limitations You Should Know

The public preview label alone does not tell you what is constrained. Test the limitations below against your reporting requirements before committing.

- Direct Lake on SQL endpoints cannot participate. Only Direct Lake on OneLake supports composite models. If your model uses Direct Lake on SQL, you cannot mix it with Import tables in the same model.

- Power BI Desktop cannot add new tables. You can live edit a Direct Lake plus Import composite model in Desktop, but adding tables requires web modeling. The OneLake Catalog and Get Data buttons are web-only.

- Cross-source relationships default to many-to-many. Even when the actual cardinality is one-to-many, the system creates a many-to-many relationship between Direct Lake and Import tables. You can change this manually, but the cross-source behavior persists.

- DAX restrictions across source groups. You cannot use DAX functions to retrieve values on the one side from the many side across source groups. Some measures behave differently than they would in a single-storage-mode model.

- Import table editing in Desktop requires external tools. For some operations, Tabular Editor, Fabric Studio, or Semantic Link Labs are needed.

These limitations are documented on Microsoft Learn and the Power BI blog. None of them block production use, but each can surprise teams that assume composite models behave like a regular Power BI model.
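The limitations above can be turned into a quick planning checklist. The sketch below is only an illustration: the storage-mode labels, the `Relationship` class, and `check_preview_limits` are hypothetical names invented here, not part of any Power BI or Fabric API. It flags two of the constraints for a planned model: Direct Lake on SQL mixed with Import, and relationships that cross storage modes.

```python
from dataclasses import dataclass

# Storage-mode labels are hypothetical strings chosen for this sketch,
# not values returned by any Power BI or Fabric API.
DIRECT_LAKE_ONELAKE = "direct_lake_onelake"
DIRECT_LAKE_SQL = "direct_lake_sql"
IMPORT = "import"


@dataclass(frozen=True)
class Relationship:
    from_table: str
    to_table: str


def check_preview_limits(table_modes, relationships):
    """Return warnings for a planned model, based on the limitations listed above."""
    warnings = []
    modes = set(table_modes.values())

    # Direct Lake on SQL endpoints cannot be mixed with Import tables.
    if DIRECT_LAKE_SQL in modes and IMPORT in modes:
        warnings.append("Direct Lake on SQL tables cannot be combined with Import tables.")

    # Relationships that span storage modes default to many-to-many.
    for rel in relationships:
        if table_modes[rel.from_table] != table_modes[rel.to_table]:
            warnings.append(
                f"{rel.from_table} -> {rel.to_table} crosses storage modes: "
                "review its cardinality and any DAX that depends on it."
            )
    return warnings


plan = {
    "Customer": DIRECT_LAKE_ONELAKE,
    "Item": DIRECT_LAKE_ONELAKE,
    "Sales": IMPORT,
}
rels = [Relationship("Sales", "Customer"), Relationship("Sales", "Item")]

for message in check_preview_limits(plan, rels):
    print(message)
```

Running a check like this on paper before building helps surface which measures and relationships deserve extra testing.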

Import Mode Flexibility in Composite Semantic Models

One of the more practical aspects of this setup is how easily you can add Import mode tables to a model that already contains Direct Lake data. You are not locked into a single source or storage type.

You can pull Import tables from hundreds of connectors through Power Query, including web APIs, flat files, databases, and more. This approach lets you enrich an existing Direct Lake model with external or business-specific data without modifying the underlying data architecture.

Key Capabilities of Import Mode Integration

- Add data from multiple sources: Import tables can be added from a wide range of sources, such as web APIs, files, and databases, using Power Query. This allows you to bring in external or supplemental data that is not available in your Direct Lake environment, making the model more complete.

- Use Power Query for data transformation: Before loading the data, you can clean and shape it using Power Query. This includes steps like renaming columns, filtering rows, changing data types, and preparing the data so it fits well with your existing model.

- Combine same or different data sources: Import tables can come from the same system as your Direct Lake tables or from entirely different external sources. This flexibility allows you to blend enterprise data with external or ad-hoc datasets in a single model.

- Support for business-level data additions: Business users can add smaller datasets, such as manually maintained files or external data extracts, without changing the core data model managed by IT. This makes it easier to extend analysis without impacting the main pipeline.

Two Ways to Build Composite Semantic Models in Power BI

Power BI supports two starting points depending on your existing setup. You can begin with Direct Lake and add Import tables, or start with Import mode and layer in Direct Lake tables later. Neither choice locks you in: you can extend the model as the use case grows.

Approach 1: Start with Direct Lake and Add Import Tables

- Begin by creating a Direct Lake semantic model using data from OneLake

- Use Get Data to bring in additional tables in Import mode

- Apply transformations using Power Query if needed

- Combine both datasets within the same model

This approach works well when your core data already exists in Fabric.

Approach 2: Start with Import and Add Direct Lake Tables

- Create a semantic model using Import mode tables

- Use OneLake Catalog to add Direct Lake tables

- Connect these tables to your existing model

- Build relationships and extend analysis

This is the right starting point when you already have external or smaller datasets ready to work with.

Which Approach Should You Use?

Start with Direct Lake first when your enterprise data is already in OneLake (lakehouses, warehouses, mirrored databases) and you want to add smaller business datasets on top. This is the IT-led pattern.

Start with Import first when your base model is built on external sources (web APIs, files, on-prem databases) and you want to layer in Fabric data later. This is the business-led pattern.

Both produce the same end state. The starting point only changes which UI button you click first.

How Power BI Composite Models Work: Tools and UI Overview

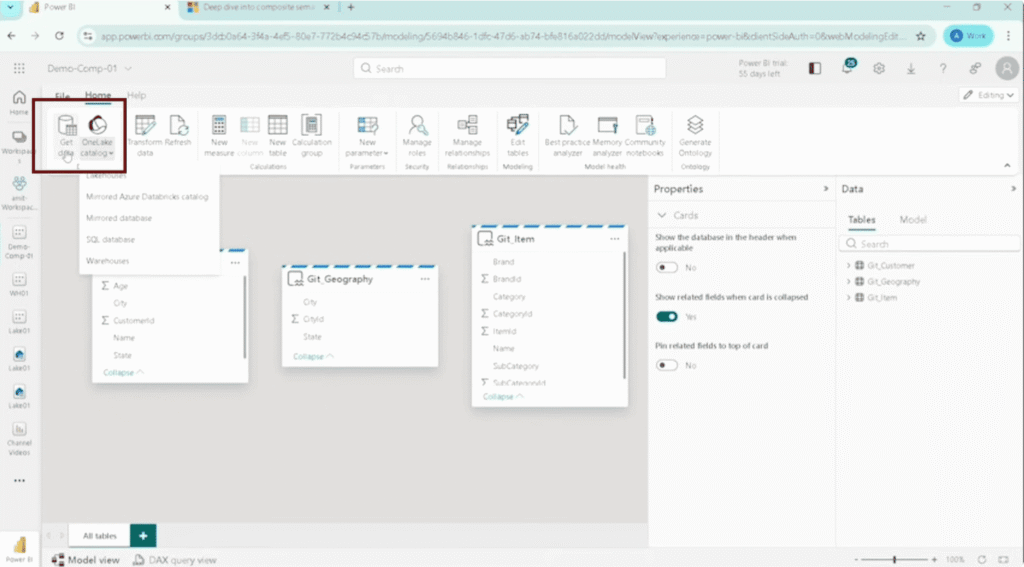

Building a composite model involves two main tools: OneLake Catalog and Get Data. Both are accessible directly from the semantic model editing interface. No switching between tools or workspaces.

| Tool | Description |

|---|---|

| OneLake Catalog | Add Direct Lake tables from Fabric sources such as lakehouses. It connects your model directly to data stored in OneLake. |

| Get Data | Add Import mode tables from various sources using Power Query. |

| Transform Data (Power Query Online) | Clean, shape, and prepare imported data before adding it to the semantic model. |

How These Tools Work Together

- Start with an existing semantic model: Begin with a base model, either created using Direct Lake or Import mode. This acts as the foundation where all additional tables and logic will be added.

- Use OneLake Catalog to bring in Direct Lake tables: Add tables directly from Fabric sources such as lakehouses using OneLake Catalog. These tables stay connected to OneLake and provide high-performance access without importing data.

- Use Get Data to import additional datasets: Bring in external or supplementary data using the Get Data option. These tables are loaded in Import mode and can come from various sources like APIs, files, or databases.

- Apply transformations where needed: Use Power Query to clean and prepare imported data. This step ensures consistency in data types, column names, and structure before combining it with existing tables.

- Combine all tables into a single model: Once all tables are added, create relationships and organize the model. This allows Direct Lake and Import tables to work together seamlessly within the same semantic layer.

Semantic Modeling Capabilities in Composite Models

Core Modeling Capabilities

| Capability | What You Actually Do | Practical Impact |

|---|---|---|

| Rename tables and columns | Rename tables like get_customer → Customer, or columns to business-friendly names | Makes fields easier for report users to understand and avoids confusion in visuals |

| Create relationships | Link Import table (Sales) with Direct Lake tables (Customer, Item, Geography) using keys like CustomerID, ItemID | Ensures filters from dimensions correctly apply to fact data across both storage modes |

| Add measures | Create DAX measures like Gross = Quantity × Price using Import table data | Allows you to build KPIs and calculations even when data comes from mixed sources |

| Add calculated columns/tables | Add calculated fields for derived logic (e.g., category grouping, flags) | Helps extend the model when source data does not include required logic |

Data Organization Features

| Capability | What You Actually Do | Practical Impact |

|---|---|---|

| Hide unnecessary columns | Hide columns like CustomerID, ItemID, or technical fields not needed in reports | Keeps report field pane clean and prevents users from selecting irrelevant fields |

| Create hierarchies | Create hierarchies like Country → City using fields from the Direct Lake Geography table | Enables drill-down in visuals without manually adding multiple fields |

| Use display folders | Group measures like Total Sales, Quantity, and Profit into folders like “Sales Metrics” | Makes it easier to find and use measures in large models |

Security and Model Management

| Capability | What You Actually Do | Practical Impact |

|---|---|---|

| Row-level security (RLS) | Define rules like Region = “East” so users only see their region’s data in reports | Ensures users only access relevant data when reports are shared |

| Version history | Track changes when tables, relationships, or measures are updated in the model | Helps revert changes if something breaks in reports or visuals |
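To make the RLS row above concrete, here is a conceptual sketch of what a rule like Region = “East” does: it filters a dimension, and that filter restricts the related fact rows. The sample rows, the placement of Region on a Customer table, and the `visible_sales` helper are assumptions made for illustration. This is not how the engine evaluates security, and cross-source behavior should still be tested in your own model.

```python
# Hypothetical sample rows; putting Region on the Customer table is an assumption.
customers = [
    {"CustomerID": 1, "Region": "East"},
    {"CustomerID": 2, "Region": "West"},
]
sales = [
    {"CustomerID": 1, "Quantity": 5},
    {"CustomerID": 2, "Quantity": 3},
    {"CustomerID": 1, "Quantity": 2},
]


def visible_sales(customers, sales, region):
    """Apply a Region filter on the dimension, then keep only matching fact rows."""
    allowed_ids = {c["CustomerID"] for c in customers if c["Region"] == region}
    return [row for row in sales if row["CustomerID"] in allowed_ids]


east_rows = visible_sales(customers, sales, "East")
print(sum(row["Quantity"] for row in east_rows))  # 7
```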

Real-world Scenarios

| Scenario | What You Actually Do | Practical Impact |

|---|---|---|

| Mixing Direct Lake + Import | Combine the Direct Lake tables (Customer, Item) with the Import table (Sales) in one model | Lets you analyze live and imported data together in one report |

| Building reports | Use fields like Brand (Direct Lake) and Gross (Import measure) in the same visual | Enables seamless reporting across different storage modes |

| Performance + flexibility | Keep large datasets in Direct Lake and import only small or custom datasets | Improves performance while allowing flexibility for business logic |

Step-by-Step: Create Composite Semantic Models in Power BI Starting with Direct Lake

Here’s how to build a composite model using the Direct Lake-first approach. You start with OneLake data and then extend it with Import mode tables.

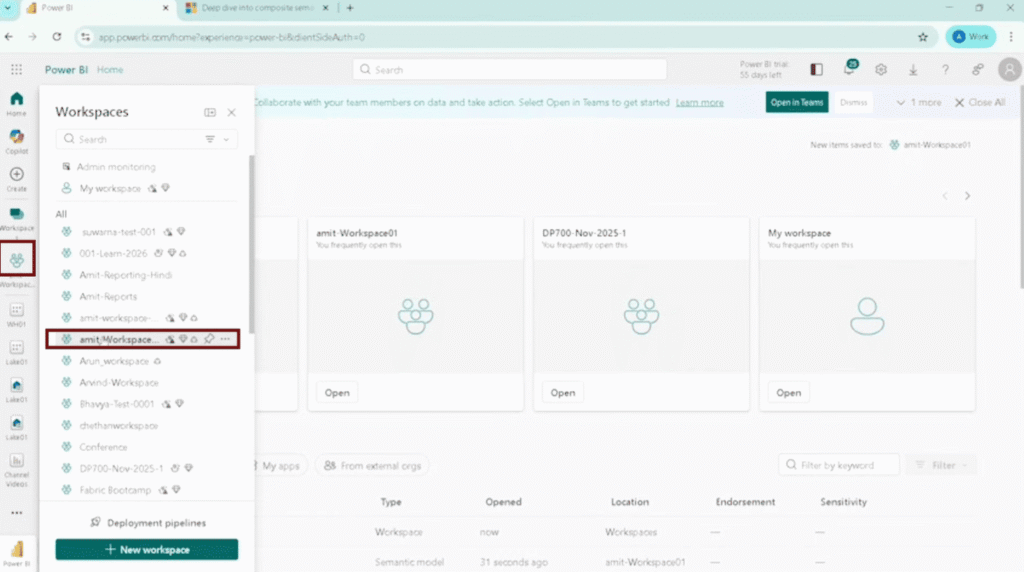

Step 1: Create a Direct Lake Semantic Model from a Lakehouse

- Go to your Fabric workspace.

- Open your Lakehouse (e.g., lake01).

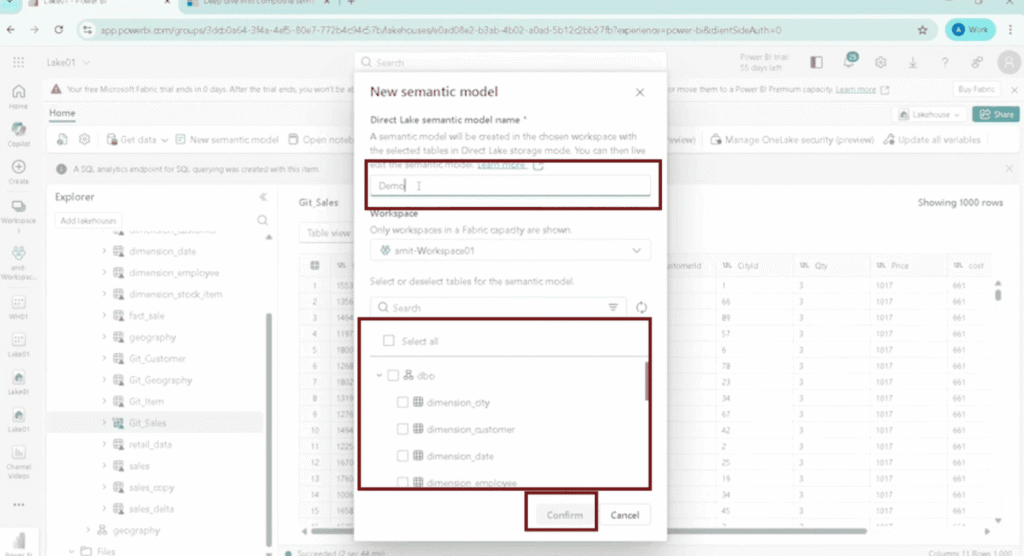

- Select tables: Customer, Geography, Item.

- Click New Semantic Model.

- Enter a name (e.g., Demo_Composite_01) and click Confirm.

The model is now created with Direct Lake tables.

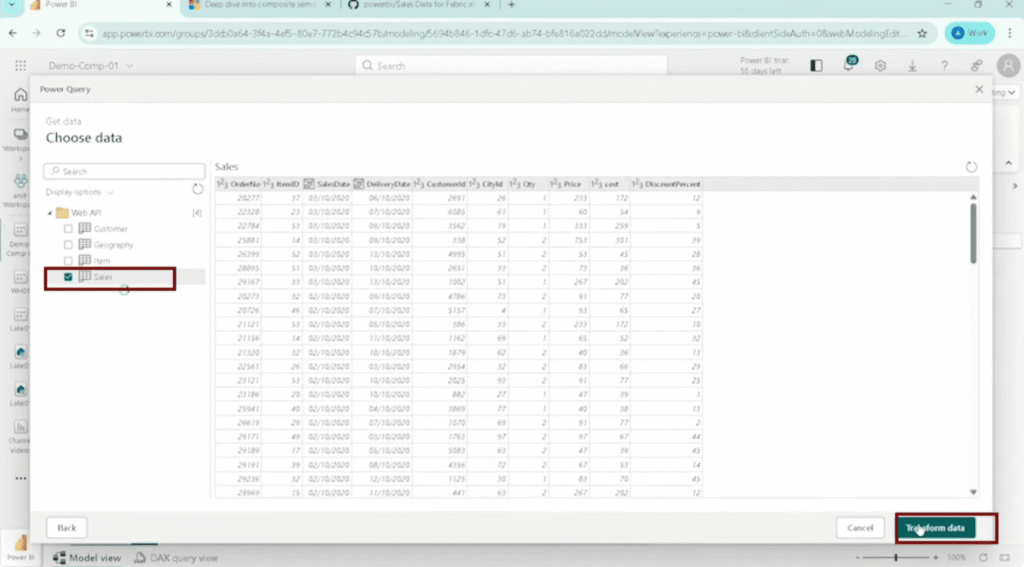

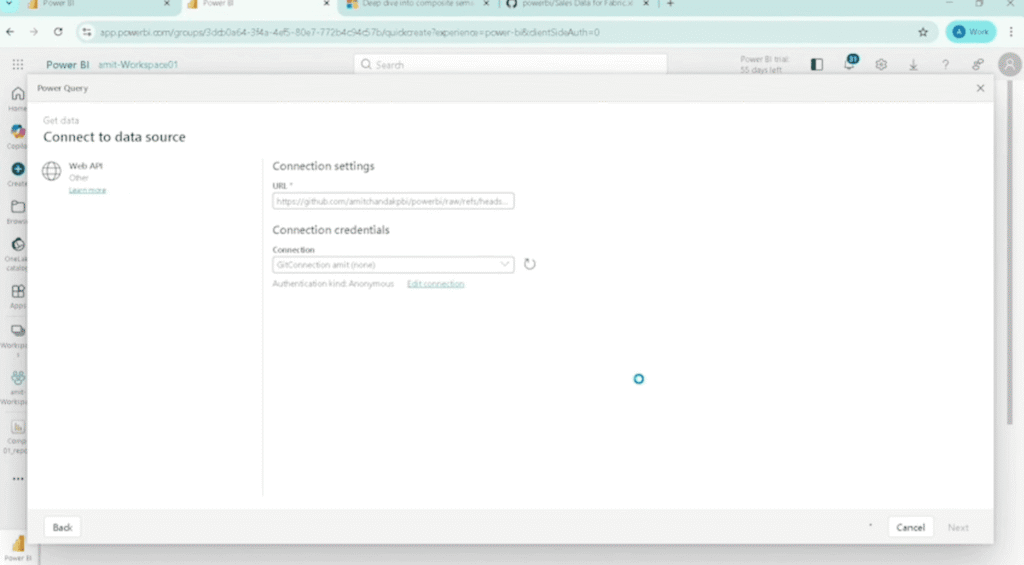

Step 2: Add an Import Mode Table via Get Data

- Open the semantic model.

- Click Get Data.

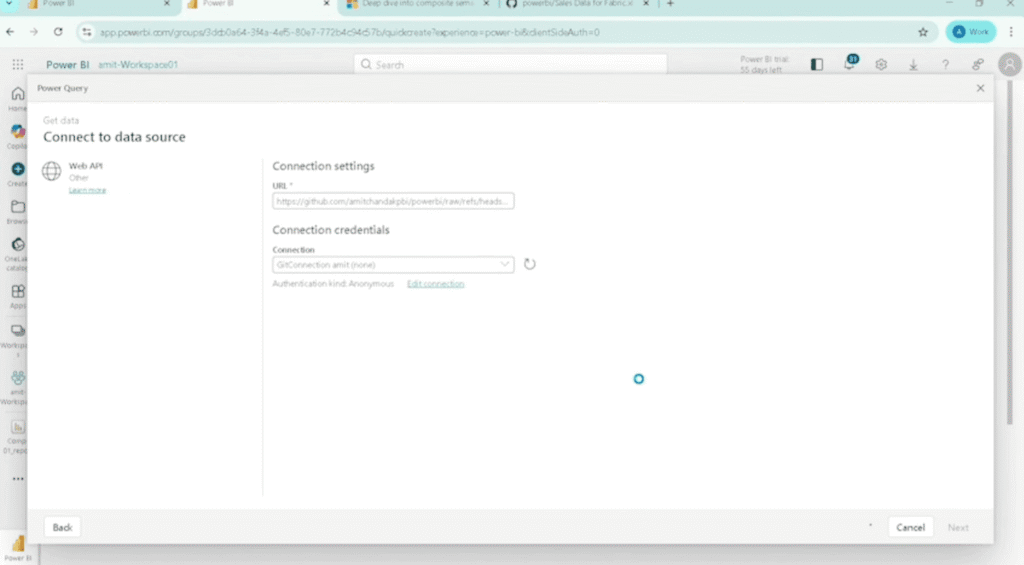

- Choose a source (e.g., Web or API).

- Paste the dataset URL and proceed.

A new table is added in Import mode.

Step 3: Transform Data Using Power Query

- Open Transform Data.

- Apply required changes: use first row as header, detect data types, rename columns if needed.

- Click Save.

The transformed table is added to the model.
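The three shaping steps in this stage (promote the first row to headers, detect data types, rename columns) can be sketched outside Power Query to show what each one does to the data. This is a simplified Python illustration, not Power Query M: the function names and the sample extract are hypothetical, and type detection here works value by value rather than per column.

```python
def promote_headers(rows):
    """Use the first row as column names and return the rest as dicts."""
    header, *data = rows
    return [dict(zip(header, row)) for row in data]


def detect_type(value):
    """Convert a text value to int or float when possible, else keep the text."""
    for cast in (int, float):
        try:
            return cast(value)
        except ValueError:
            continue
    return value


def transform(rows, renames):
    """Promote headers, detect types, then rename columns."""
    records = promote_headers(rows)
    typed = [{k: detect_type(v) for k, v in r.items()} for r in records]
    return [{renames.get(k, k): v for k, v in r.items()} for r in typed]


# Hypothetical raw extract, as text, before any shaping.
raw = [
    ["item_id", "qty", "price"],
    ["101", "2", "9.50"],
    ["102", "1", "20"],
]
sales = transform(raw, {"item_id": "ItemID", "qty": "Quantity", "price": "Price"})
print(sales[0])  # {'ItemID': 101, 'Quantity': 2, 'Price': 9.5}
```

Renaming key columns at this stage so they match the Direct Lake tables (for example ItemID) makes the relationship step later more straightforward.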

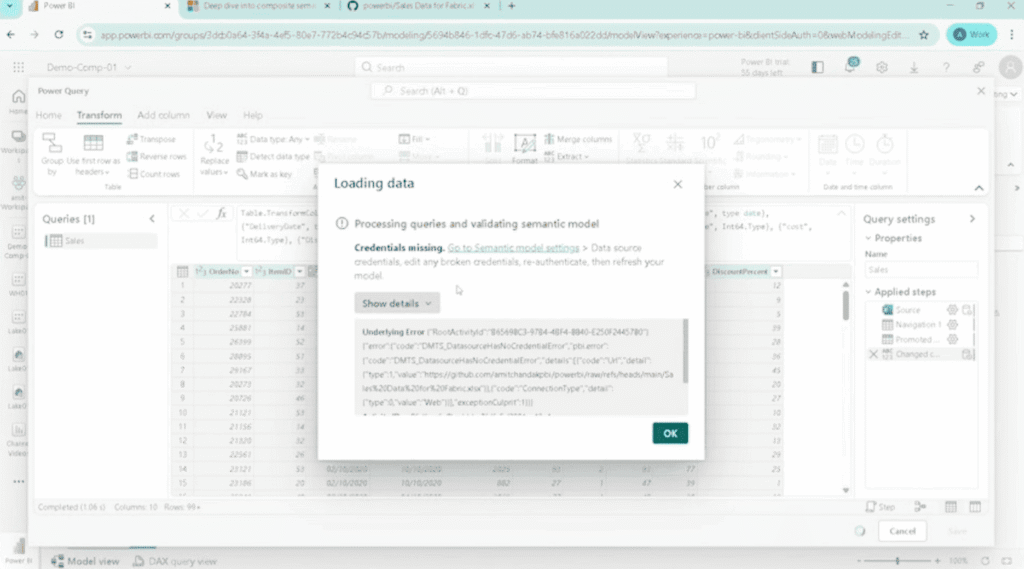

Step 4: Fix Credential Issues (If Required)

- Go to Semantic Model Settings.

- Locate the failed connection and click Edit Credentials.

- Select the authentication method, save, and validate.

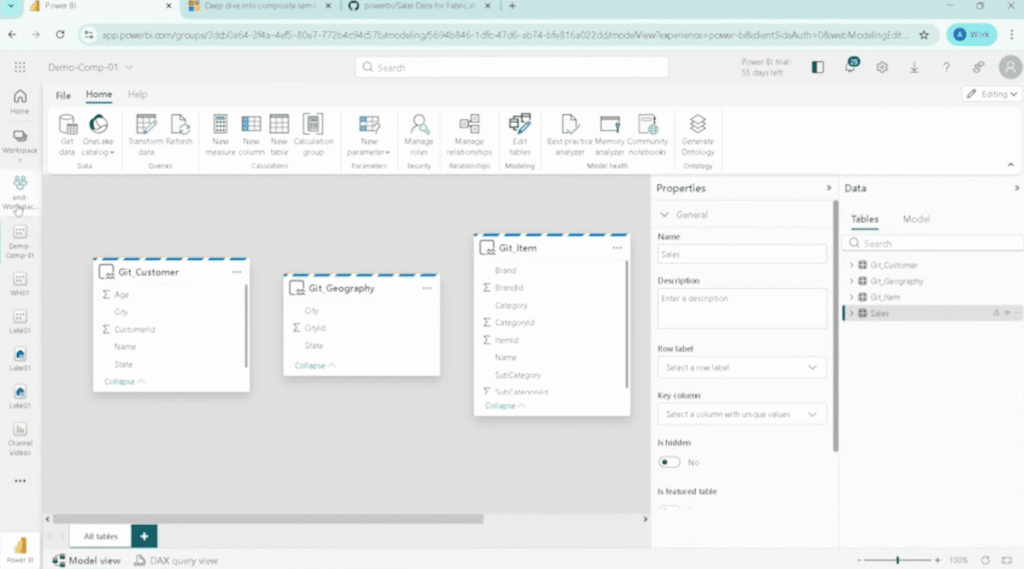

Step 5: Validate the Model Structure

Confirm tables in the model:

- Customer, Geography, Item → Direct Lake

- Sales → Import

Both storage modes are now active in the same model.

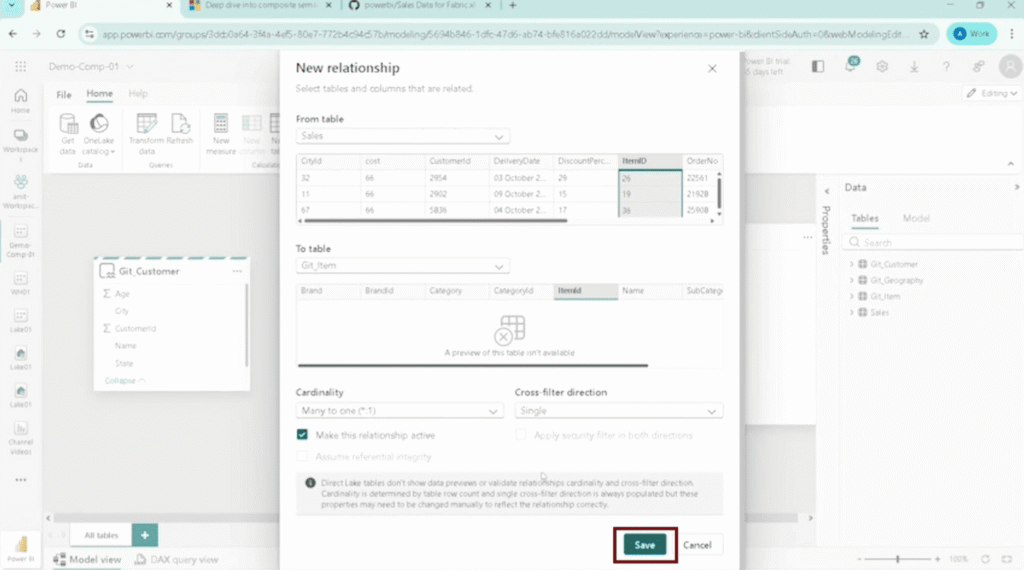

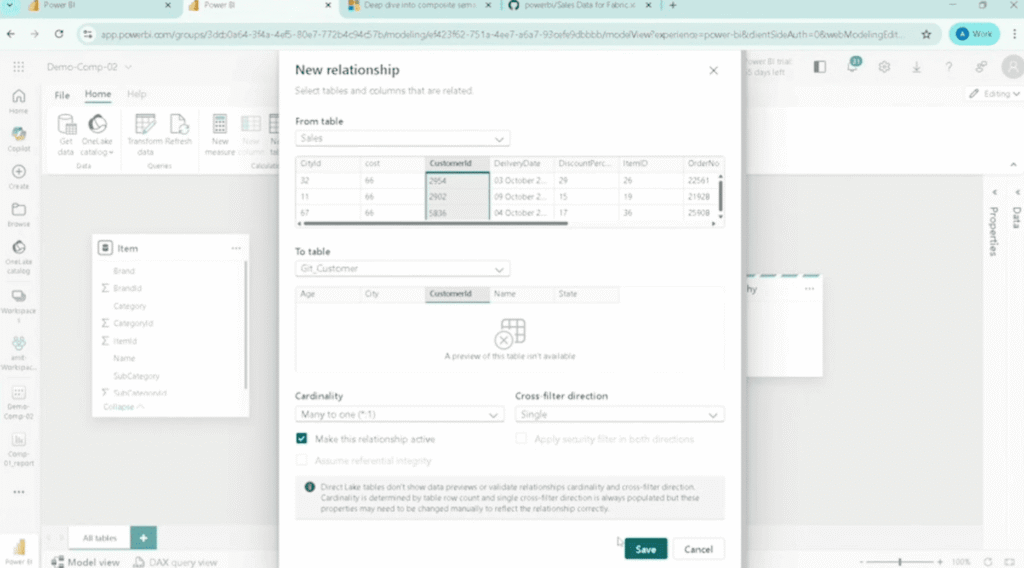

Step 6: Create Relationships

- Sales → Item (ItemID)

- Sales → Geography (CityID)

- Sales → Customer (CustomerID)

- Cross-source relationships default to many-to-many, so set the cardinality to Many-to-One manually and save.
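Before changing a relationship to Many-to-One, it is worth confirming that the key really is unique on the dimension side and that every fact key has a match. The sketch below shows that check on sample rows. The helper names and the data are hypothetical, and in practice you would run an equivalent check against your own tables.

```python
from collections import Counter


def duplicate_keys(rows, key):
    """Return key values that appear more than once in the given rows."""
    counts = Counter(row[key] for row in rows)
    return sorted(value for value, n in counts.items() if n > 1)


def orphan_keys(fact_rows, dim_rows, key):
    """Return fact keys that have no matching row in the dimension."""
    known = {row[key] for row in dim_rows}
    return sorted({row[key] for row in fact_rows} - known)


# Hypothetical sample data for the Item dimension and the Sales fact table.
item = [{"ItemID": 101}, {"ItemID": 102}, {"ItemID": 103}]
sales = [{"ItemID": 101}, {"ItemID": 101}, {"ItemID": 104}]

print(duplicate_keys(item, "ItemID"))      # [] -> Item can be the "one" side
print(orphan_keys(sales, item, "ItemID"))  # [104] -> Sales rows with no matching Item
```

An empty duplicates list supports Many-to-One. Orphan keys do not block the relationship, but they are worth investigating before you trust the report totals.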

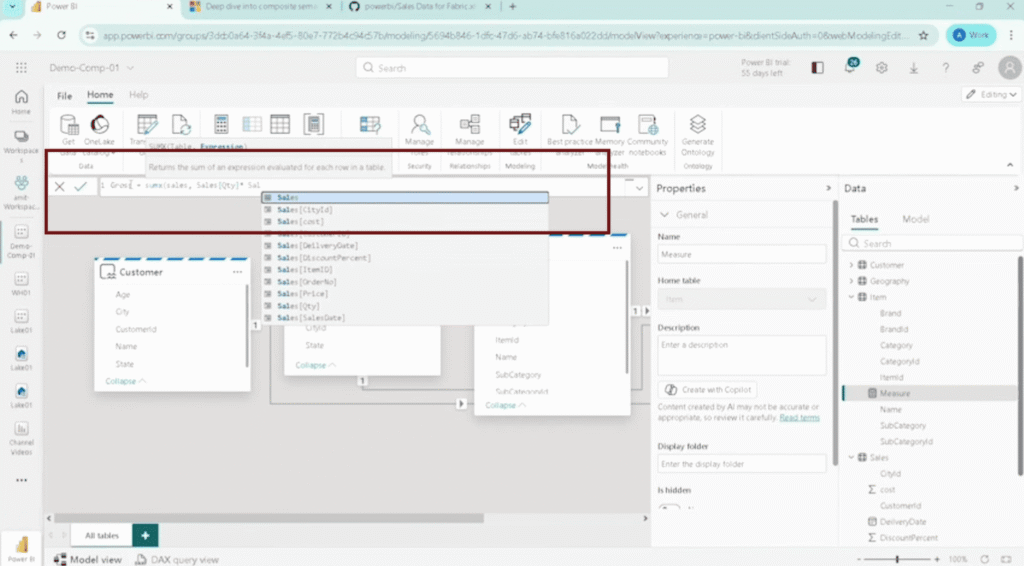

Step 7: Add a Measure

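Create a measure on the Sales table. Assuming Quantity and Price are both columns of Sales, the DAX is: Gross = SUMX(Sales, Sales[Quantity] * Sales[Price])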
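A measure is an aggregation, so Quantity × Price has to be evaluated row by row and then summed (in DAX, typically with an iterator such as SUMX). The sketch below uses hypothetical Sales rows to show why the order matters: multiplying the totals gives a very different number.

```python
# Hypothetical Sales rows; only the Quantity and Price columns matter here.
sales = [
    {"Quantity": 2, "Price": 10.0},
    {"Quantity": 1, "Price": 25.0},
    {"Quantity": 4, "Price": 5.0},
]

# Row by row: multiply within each row, then add the results.
gross_row_wise = sum(row["Quantity"] * row["Price"] for row in sales)

# Aggregate first: total quantity times total price, which overstates the result.
gross_of_totals = sum(r["Quantity"] for r in sales) * sum(r["Price"] for r in sales)

print(gross_row_wise)   # 65.0
print(gross_of_totals)  # 280.0
```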

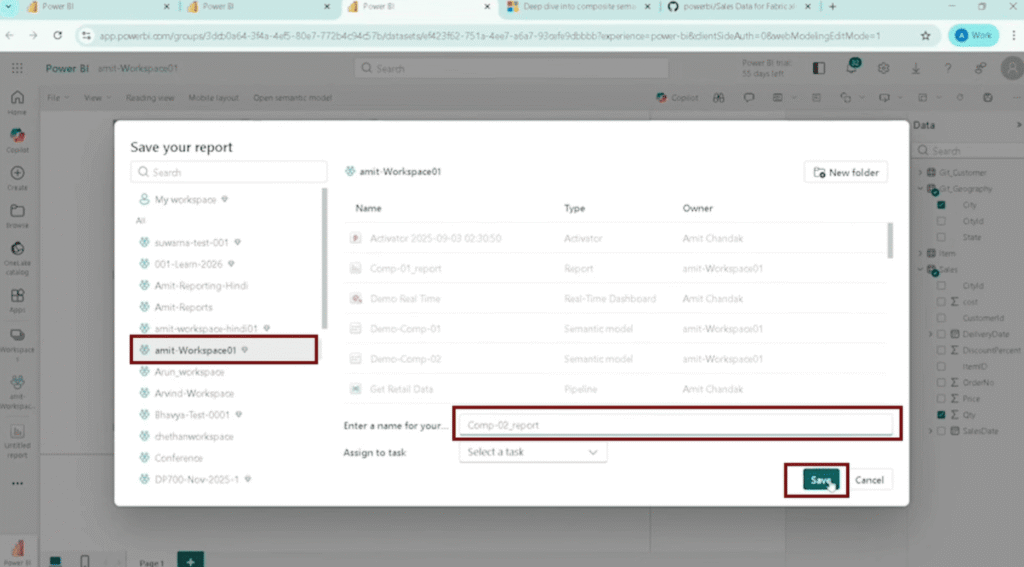

Step 8: Build a Report to Test the Model

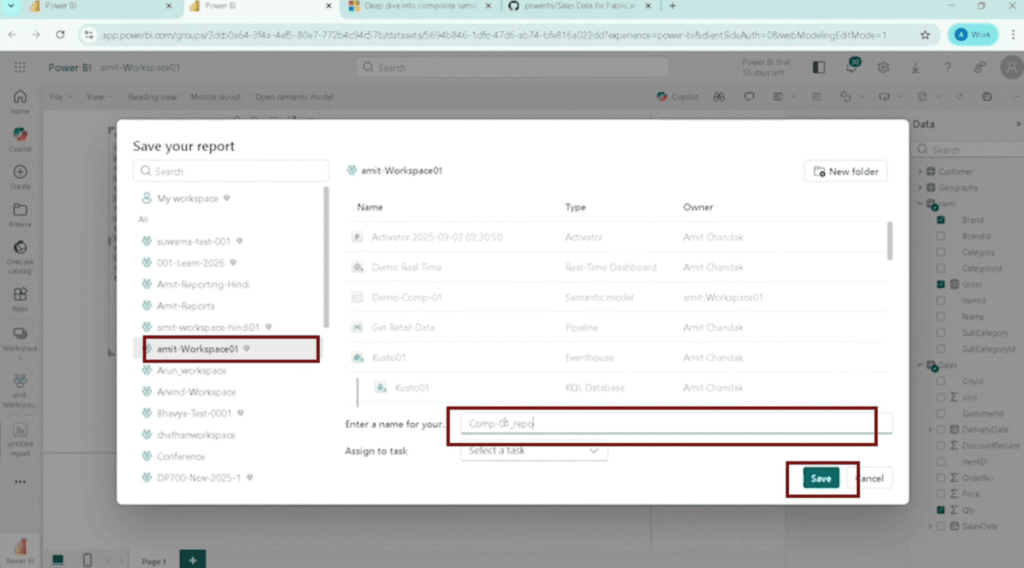

- Click Create New Report.

- Add fields: Brand (Direct Lake), Gross (Import measure), Quantity.

- Save the report.

Step-by-Step: Create Composite Semantic Models in Power BI Starting with Import Mode

This approach works well when your base data comes from external sources and you want to add Fabric data later.

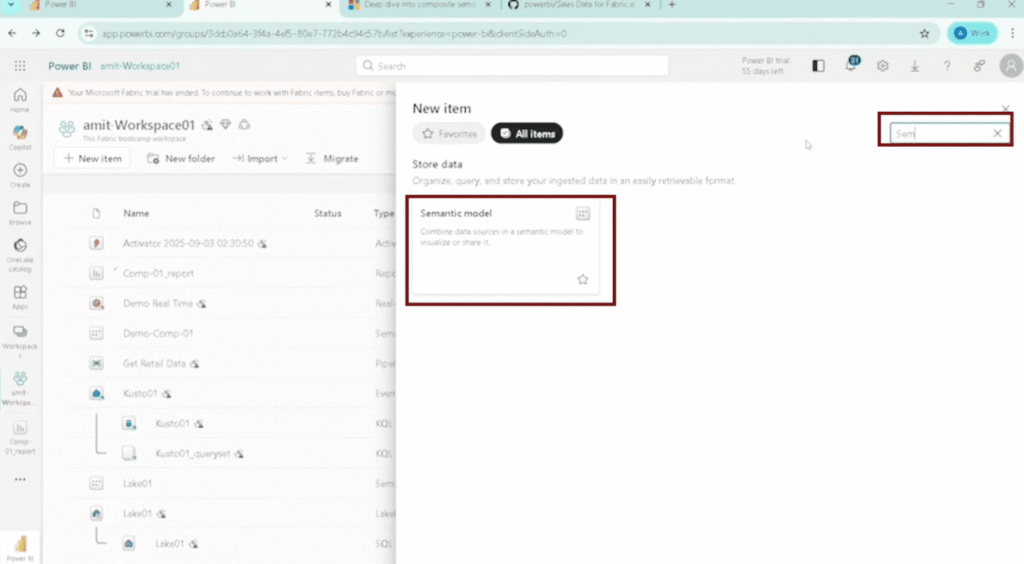

Step 1: Create an Import Mode Semantic Model

- Go to your Fabric workspace and click New Item.

- Select Semantic Model, then click Get Data.

- Choose a source (e.g., Web API) and provide the dataset URL.

- Select required tables: Sales, Item.

- Click Transform Data, then validate headers and data types.

- Click Create Semantic Model, name it (e.g., Demo_Composite_02), and save.

The model is created with Import mode tables.

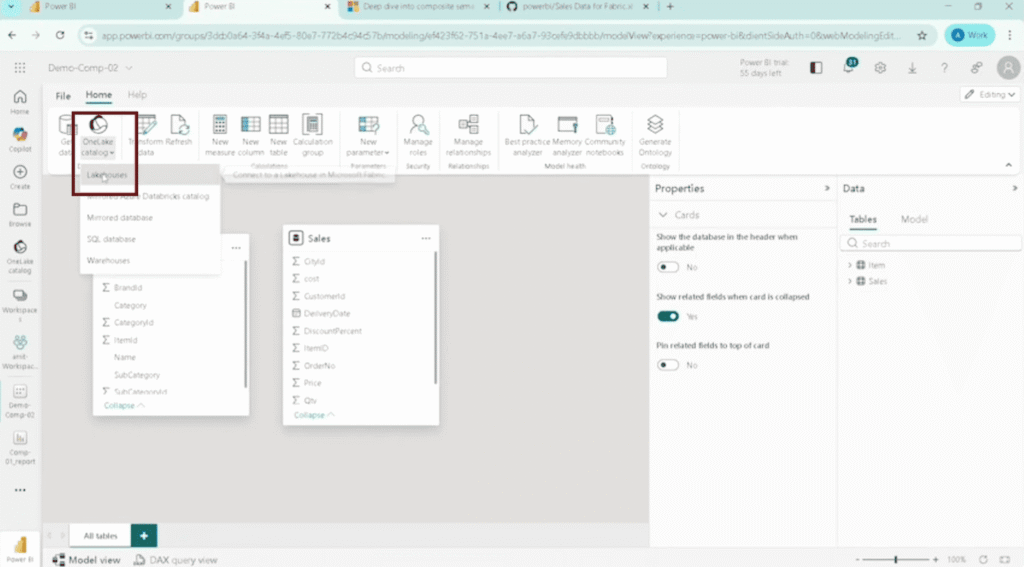

Step 2: Add Direct Lake Tables via OneLake Catalog

- Open the semantic model.

- Click OneLake Catalog.

- Navigate to your Lakehouse (e.g., lake01).

- Select Customer and Geography, then click Connect.

The tables are added as Direct Lake.

Step 3: Create Relationships

- Sales → Customer (CustomerID)

- Sales → Geography (CityID)

- Sales → Item (ItemID)

- Set cardinality to Many-to-One and save.

Step 4: Build a Report to Validate

- Click Create New Report.

- Add City (Geography, Direct Lake) and Quantity (Sales, Import).

- Use a table visual and save.
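Conceptually, the table visual in this step resolves each Sales row to a City through the CityID relationship and totals Quantity per city. The sketch below reproduces that logic on hypothetical rows so you have an expected result to compare against. The city names, values, and `quantity_by_city` helper are made up for illustration.

```python
from collections import defaultdict

# Hypothetical rows standing in for Geography (Direct Lake) and Sales (Import).
geography = [{"CityID": 1, "City": "Austin"}, {"CityID": 2, "City": "Denver"}]
sales = [
    {"CityID": 1, "Quantity": 5},
    {"CityID": 2, "Quantity": 3},
    {"CityID": 1, "Quantity": 2},
]


def quantity_by_city(geography, sales):
    """Look up each sale's city through CityID and total Quantity per city."""
    city_of = {g["CityID"]: g["City"] for g in geography}
    totals = defaultdict(int)
    for row in sales:
        totals[city_of[row["CityID"]]] += row["Quantity"]
    return dict(totals)


print(quantity_by_city(geography, sales))  # {'Austin': 7, 'Denver': 3}
```

If the visual shows one blank or repeated total across all cities instead of a breakdown like this, recheck the relationship between Sales and Geography.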

Performance and Benefits of Power BI Composite Models

The core value of composite models is that you stop forcing all data into a single storage mode. Large datasets that belong in Direct Lake stay there. External or custom datasets come in through Import. You get speed and flexibility in the same model.

Performance and Benefits Breakdown

| Area | Benefit | Practical Impact |

|---|---|---|

| Query performance | Direct Lake provides fast access to large datasets without full import | Reports load quickly even with large data volumes |

| Data flexibility | Import mode allows adding external or smaller datasets | Extend models without modifying core data pipelines |

| Reduced data duplication | Large datasets stay in OneLake instead of being copied | Saves memory and avoids unnecessary storage usage |

| Faster development | Add new tables using Get Data without rebuilding the model | Speeds up report development and iteration |

| Hybrid modeling | Combine live and imported data in one model | Mixes enterprise and business data in one model |

| Reporting across modes | Use fields from both Direct Lake and Import in the same visual | No need to create separate models or reports |

| Scalability | Direct Lake handles large-scale data while Import handles targeted datasets | Supports both enterprise-scale and small-scale use cases |

When to Use Power BI Composite Models?

Composite models earn their complexity when you genuinely need both storage modes. They cost you complexity if you only need one.

Use a composite model when:

- Your enterprise fact data lives in OneLake but you need to enrich it with external data (commission rates, third-party benchmarks, manually maintained reference tables).

- IT and business want to share a single model. IT manages the lakehouse layer, business owns the supplementary Import tables.

- You’re migrating from a classic Import-only model to Fabric incrementally and want to move large tables to OneLake first while keeping smaller dimensions in Import.

- Refresh cost matters. Composite models can reduce refresh-related capacity consumption because only Import tables participate in scheduled refreshes.

Avoid composite models when:

- All your data fits comfortably in Direct Lake or comfortably in Import. You don’t need the extra surface area.

- Your DAX measures need to cross storage modes for one-side-from-many-side calculations. The cross-source restrictions will bite you.

- Your team uses Direct Lake on SQL endpoints. Composite models only work with Direct Lake on OneLake.

- You depend on Power BI Desktop for the entire authoring workflow. Add-table operations require web modeling.

Build Production-Ready Power BI Models With Kanerika

Kanerika is a Microsoft Solutions Partner for Data and AI with Analytics specialization, and a Microsoft Fabric Featured Partner. We design and build Power BI semantic models, Microsoft Fabric pipelines, and lakehouse architectures for enterprises across pharma, healthcare, banking, retail, and manufacturing.

Our work with Direct Lake and composite semantic models includes large fact-table dashboards, IT-and-business shared models where enterprise data lives in OneLake and supplementary data comes through Import, and migrations from classic Import-only models to Direct Lake. We’ve delivered 520+ KPIs across client engagements, with 98% client retention and 5.0 ratings on Clutch, Capterra, and GoodFirms.

If you’re rebuilding semantic models on Fabric or planning a Direct Lake migration, our FLIP migration accelerator cuts migration effort by 75%. Most migrations land in 2 to 8 weeks depending on pipeline volume.

Wrapping Up

Power BI composite models give you a real way to mix performance and flexibility in the same semantic model, but the public preview status matters. Test the limitations against your real reporting needs before building production work on top of them. The cross-source DAX restrictions and the missing Power BI Desktop add-table support are the two constraints most teams underestimate.

For the IT-and-business shared workflow, this feature is genuinely useful. For everything else, a single-mode model is usually still the right call until GA.

FAQs

How Is a Power BI Composite Model Different From a Regular Model?

A standard Power BI model uses one storage mode: Import, DirectQuery, or Direct Lake. A composite model mixes two or more storage modes in the same model, so different tables can use whichever mode fits their data size, refresh needs, and source. Direct Lake plus Import is the newest composite mode and is in public preview.

Can I Mix Direct Lake and Import Mode in the Same Power BI Report?

Yes. Once you build a composite semantic model with both Direct Lake and Import tables, you can use fields from either table type in the same visual and the same report. No additional configuration needed. Just be aware that DAX measures crossing source groups behave differently than single-source measures.

What Is Direct Lake Mode and When Should I Use It?

Direct Lake is a storage mode in Microsoft Fabric that reads data directly from OneLake without importing it into memory. It works best for large fact tables where you need fast query performance and don’t want the overhead of a full import. Composite models extend Direct Lake by letting you add Import tables alongside it.

What Types of Data Sources Can I Add as Import Mode Tables?

Import mode tables can come from any source supported by Power Query: web APIs, flat files, SQL databases, cloud services, and more. If it has a connector in Power Query, it can be added as an Import table in a composite model. The 100+ connector library opens up almost any external source.

Do I Need to Rebuild My Existing Model to Use Composite Features?

No. You can start with an existing Direct Lake model and add Import tables through Get Data, or start with an existing Import model and add Direct Lake tables through OneLake Catalog. Neither approach requires rebuilding from scratch. Both add-table operations require web modeling, not Power BI Desktop.

Does Mixing Storage Modes Affect Report Performance?

Model design determines the outcome. Direct Lake tables stay fast for large datasets. Import tables load into memory, which works well for smaller datasets. Performance issues usually appear when DAX measures cross source groups. Test your measures specifically across the boundary before deploying to production.

How Do Relationships Work Between Direct Lake and Import Tables?

Cross-source relationships default to many-to-many cardinality, even when the actual data relationship is one-to-many. You can change the cardinality manually, but cross-source relationships have different behavior than same-source ones. DAX functions cannot retrieve values on the one side from the many side across source groups, which affects some measure patterns.