As of 2025, businesses continue to face a major disconnect between data collection and actual insight. According to recent research, 68% of enterprise data still goes unused in analytics workflows. This isn’t due to lack of access, it’s because the data often lives in unstructured, all-in-one tables that aren’t fit for analysis at scale.

Compounding the issue, 71% of organizations now report that their business intelligence tools struggle to scale with growing data, and 76% say slow or cluttered dashboards are affecting decision-making. The root cause? Poor data modeling. Without clear fact and dimension separation, even modern BI tools can’t perform efficiently.

Addressing these challenges, Microsoft Build 2025 introduced significant enhancements to Power BI and unveiled Microsoft Fabric, a unified data platform designed to streamline data management and analytics. These advancements aim to empower organizations to transform raw data into structured, insightful information efficiently.

That’s why, in this blog, we focus on one of the most practical and powerful modeling approaches available in Power BI, creating a Star Schema from a Single Table using Power Query. We’ll show you how to take a flat table and break it into a clean, scalable data model, step by step, using native Power BI tools.

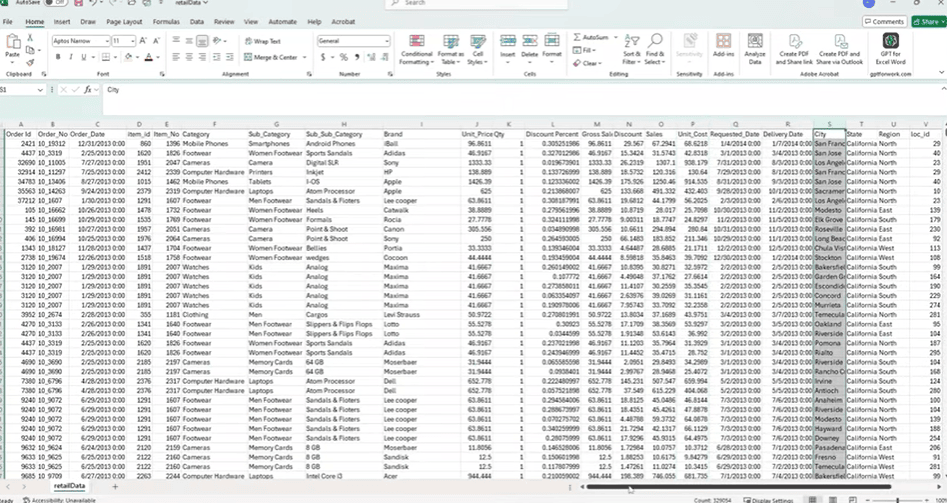

Step 1: Understanding the Dataset to Create Star Schema

Many business datasets are delivered as flat tables, single sheets containing a mix of transactional and descriptive data. These tables often include sales values, product details, customer information, payment methods, and geographic fields, all combined in one structure.

While this format is easy to work with initially, it creates several issues in Power BI. Mixing repeated descriptive fields with transactional data leads to larger file sizes, unnecessary redundancy, and slower performance. It also complicates relationship management and limits the flexibility of the data model.

To resolve this, the data needs to be restructured into a star schema. In this approach:

- A fact table holds transactional data such as sales, quantity, cost, and discount.

- Dimension tables store descriptive attributes like product categories, regions, or payment methods.

The dataset used in this example contains:

- Sales details: Order ID, Order Date, Unit Price, Quantity, Discount

- Product attributes: Item ID, Category, Subcategory, Brand

- Geography: City, State, Region, Location ID

- Business descriptors: Payment Method, Order Type, Customer ID

This structure makes it suitable for building a star schema, which improves performance and supports scalable reporting in Power BI. In the next steps, Power Query will be used to transform this flat table into a clean, relational model.

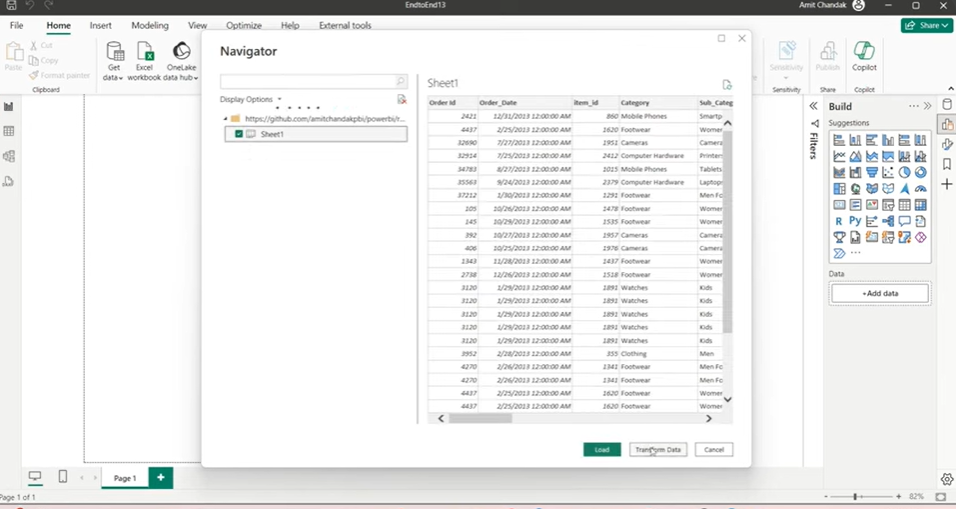

Step 2: Loading the Flat File into Power BI Using Power Query

To begin building the star schema, the first step is importing the dataset into Power BI using Power Query. Here’s how the process works, broken down for clarity.

1. Open Power BI and Get the Data

- Launch Power BI Desktop.

- Go to the Home tab and select Get Data.

- Choose your data source:

- Use Web if the file is hosted online (e.g. a GitHub or Dropbox link).

- Use Excel if it’s stored locally.

2. Select the Table

- After connecting, Power BI will show a list of available sheets or tables.

- Select the one that contains the raw data.

- Do not load the data yet, click Transform Data instead.

- This opens the Power Query Editor.

3. Review the Table Structure

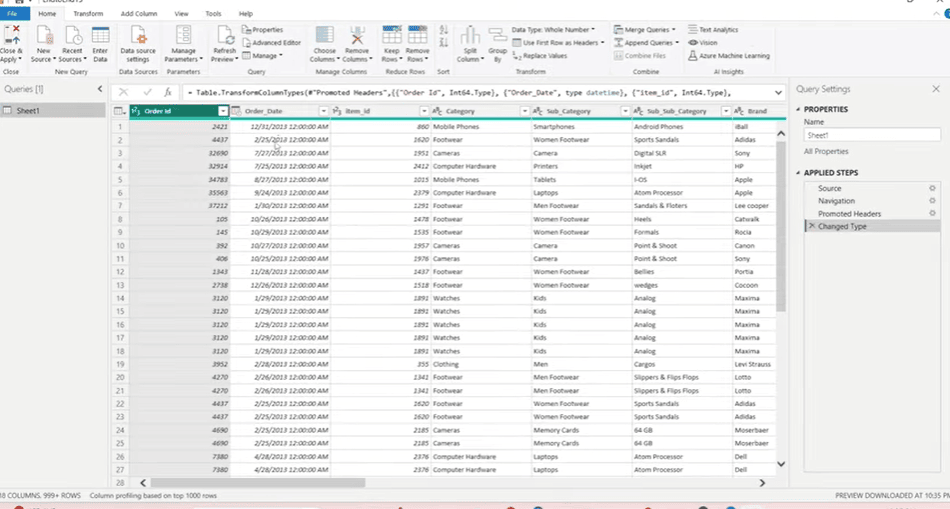

The table should now be open in Power Query. It contains:

- Transactional fields – Order ID, Order Date, Unit Price, Quantity, Discounts

- Product attributes – Item ID, Category, Subcategory, Brand

- Geographic fields – City, State, Region, Location ID

- Business fields – Payment Method, Order Type, Customer ID

This flat structure includes both descriptive fields and numeric values. It’s not optimized for reporting but provides everything we need to build a structured model.

4. Why Use Power Query

Power Query lets you:

- Split one table into many (facts and dimensions)

- Remove duplicates

- Create index columns

- Merge and join tables

- Apply all transformations before the data reaches the model

This gives you full control over how the data is shaped and ensures the final model in Power BI is clean, fast, and reliable.

Step 3: Creating Dimension Tables for Power BI Star Schema

Once the data is loaded into Power Query, the next step is to break the flat table into dimension tables. These dimensions will store repeated descriptive values such as product info, locations, and payment types. This improves performance and helps create a proper star schema.

We’ll create dimension tables using the Reference option in Power Query.

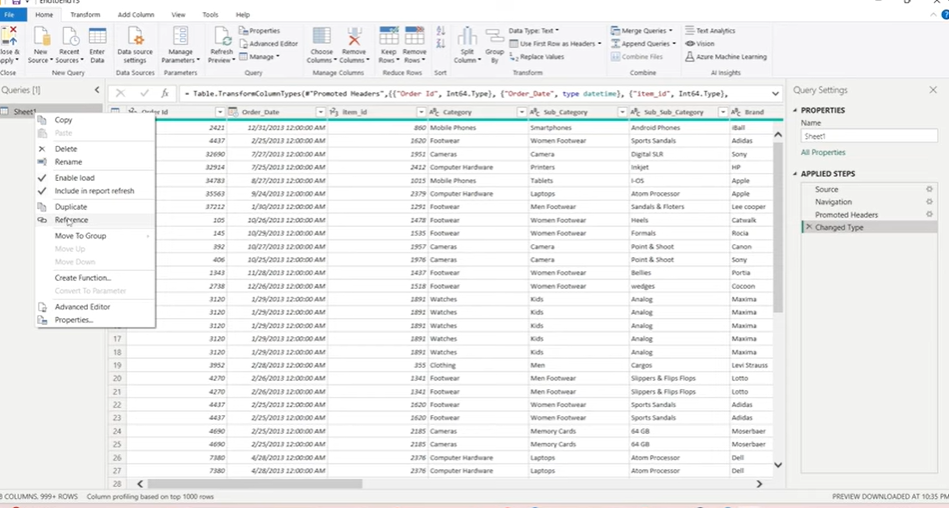

1. Use “Reference” Instead of “Duplicate”

In Power Query, you can either:

- Duplicate a table – creates a separate copy with no link to the original

- Reference a table – creates a linked version that shares the data source

Always use Reference when building dimensions. It loads data only once and reduces memory usage.

To create a reference:

- Right-click the main table

- Select Reference

- A new query will be created, linked to the original

2. Build the Product (Item) Dimension

This table contains product-related attributes.

Steps:

- Reference the main table

- Select the following columns:

- Item ID

- Category

- Subcategory

- Sub-subcategory

- Brand

- Right-click → Remove Other Columns

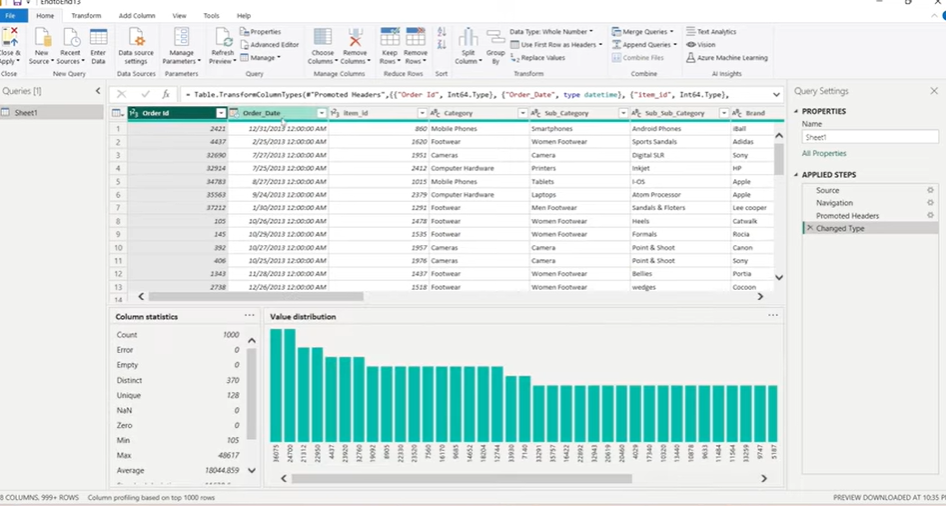

- Use Remove Duplicates to ensure uniqueness

- Go to View > Column Distribution to confirm Item ID is unique

- Rename this query to Item

This becomes your Item Dimension.

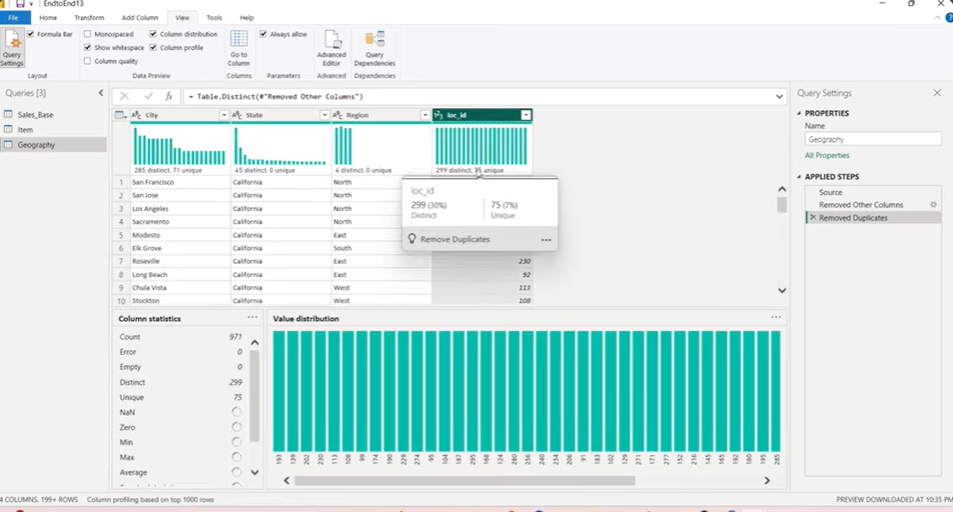

3. Build the Geography Dimension

Next, we create a table with geographic data.

Steps:

- Reference the main table

- Select:

- City

- State

- Location ID

- Remove other columns

- If Region causes duplication, remove it

- Use Remove Duplicates

- Check column distribution to ensure Location ID is unique

- Rename the query to Geography

4. Build Single-Column Dimensions (Optional but Recommended)

Some fields like Payment Method, Order Type, or Region are standalone values that still deserve their own dimensions.

Steps (for each field):

- Reference the main table

- Keep only one column (e.g. Payment Method)

- Remove duplicates

- Add an Index Column (starting from 1) to act as a unique key

- Rename the query to match the column (e.g. Payment Method)

This approach ensures even small lookup tables are normalized.

Designing a data model for Power BI?

Kanerika builds the full stack, from data warehouse to final dashboard

Step 4: Building the Fact Table for the Star Schema Using Power Query

With the dimension tables ready, the next step is to build the fact table. This table holds the transactional data—sales amounts, quantities, costs—and connects to each dimension using ID columns.

We’ll build the fact table by referencing the base table and merging it with the dimension tables.

1. Start from the Base Table

- Go back to the main query (the original flat table)

- Rename it to something like Sales Base

- Do not make any changes to this query, it will serve only as a source

2. Create a Reference for the Fact Table

- Right-click Sales Base

- Choose Reference

- Rename the new query to Sales Fact

- This will become your main fact table

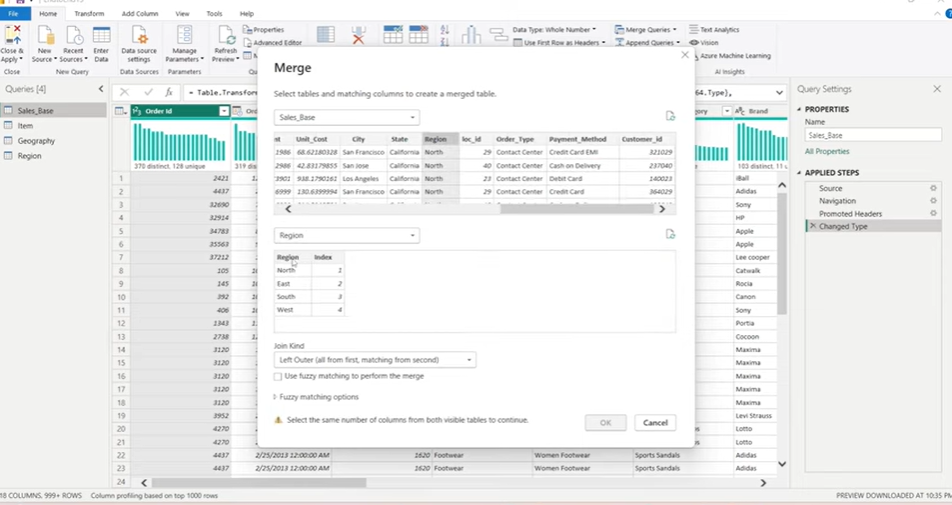

3. Merge Dimension Tables

You’ll now join each dimension to the fact table to bring in the related ID fields.

Example: Merge Geography

- In Sales Fact, go to Home > Merge Queries

- Select the Region column in both tables (Sales Fact and Geography)

- Use Left Outer Join

- Click OK

- Expand the merged column

- Select only the index or ID column (e.g. Region ID)

- Rename the column properly

Repeat the same steps to merge:

- Item table using Item ID

- Payment Method table using Payment Method

- Order Type table if needed

- Any other single-column dimensions you’ve created

4. Finalize the Fact Table

Once all merges are done:

- Keep only necessary fields

- Ensure all dimension IDs are present

- Confirm numeric fields like:

- Unit Price

- Quantity

- Gross Sales

- Discount

- Net Sales

- Optionally, create calculated columns (e.g. Gross Amount = Quantity * Unit Price)

Now the fact table is complete, with proper keys linking it to each dimension.

Need a data engineering team behind your Power BI?

Kanerika builds pipelines, models, and BI layers end-to-end.

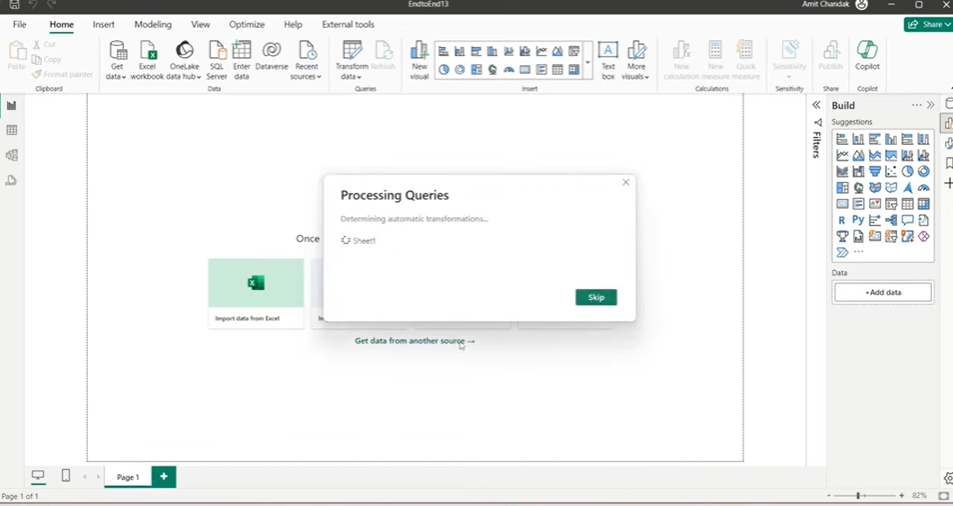

5. Apply the Changes

- Click Close & Apply in Power BI

- Power BI will load all the tables into the data model

- Loading order matters, dimension tables will load first, followed by the fact table

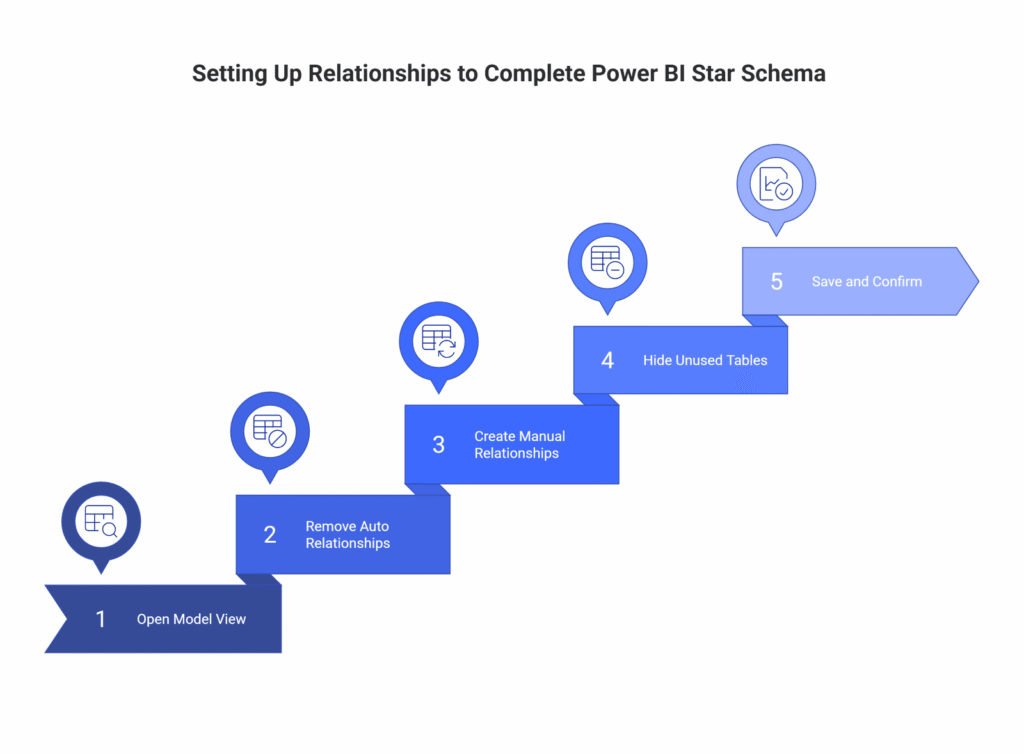

Step 5: Setting Up Relationships to Complete Power BI Star Schema

Once the fact and dimension tables are loaded into Power BI, the final step is to define relationships between them. This creates the actual star schema, a central fact table connected to multiple dimension tables.

Power BI may auto-detect relationships, but it’s best to set them manually to ensure accuracy.

1. Open Model View

- In Power BI Desktop, switch to the Model view from the left sidebar

- You’ll see all your tables laid out as blocks

- If Power BI created relationships automatically, review them carefully

2. Remove Incorrect Auto Relationships

- Select and delete any automatically created relationships

- These are often based on column name matches and may not reflect the actual keys you want to use

- It’s better to define all joins manually

3. Create Manual Relationships

For each dimension, connect its key column to the matching column in the fact table.

Examples:

- Item[Item ID] → Sales Fact[Item ID]

- Geography[Location ID] → Sales Fact[Location ID]

- Payment Method[Payment Method ID] → Sales Fact[Payment Method ID]

- Region[Region ID] → Sales Fact[Region ID] (if applicable)

Settings to use:

- Cardinality: Many-to-One (fact → dimension)

- Cross Filter Direction: Single

Power BI should detect these settings correctly but always double-check.

4. Hide Unused Tables (Optional)

To keep the model clean:

- Right-click on Sales Base (the untouched reference table)

- Select Hide in Report View

- This keeps it in the background for queries but removes clutter from visuals

5. Save and Confirm

- At this point, your star schema is complete

- Save your file

- Test visuals to confirm that relationships work as expected

With all joins defined, Power BI can now read the model efficiently. This improves performance, reduces redundancy, and simplifies visualizations and DAX logic.

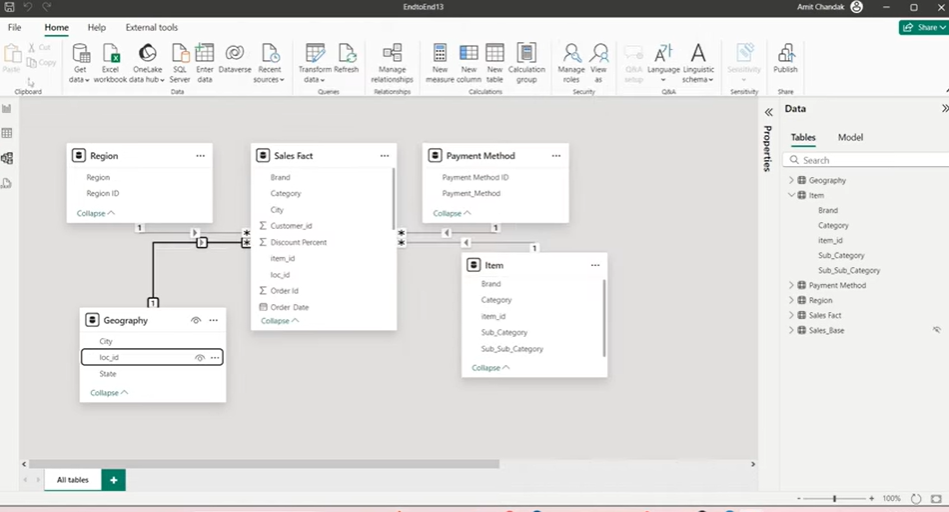

Step 6: Apply and Load Star Schema Model in Power BI

With all tables loaded and cleaned, the final task is to connect the fact table to the dimension tables. This is done in Power BI’s Model View, where you define relationships and complete the star schema.

1. Hide the Base Table (Optional)

The original flat table, now renamed as Sales Base, is no longer needed for reports. To reduce clutter:

- Right-click on Sales Base

- Select Hide in Report View

- This keeps the query for reference but removes it from visual-level tools

2. Define Relationships Manually

Power BI may try to auto-detect joins, but it’s best to create them yourself. Each dimension should connect to the fact table using its ID column.

Set up these joins:

- Sales Fact[Item ID] → Item[Item ID]

- Sales Fact[Location ID] → Geography[Location ID]

- Sales Fact[Payment Method ID] → Payment Method[Payment Method ID]

- Sales Fact[Region ID] → Region[Region ID] (if a Region table was created)

3. Set Relationship Properties

For each join:

- Cardinality: Many-to-One (the fact table has repeated values, the dimension doesn’t)

- Cross Filter Direction: Single (from dimension to fact)

These settings ensure your model behaves predictably in visuals and DAX calculations.

Once relationships are in place, your star schema is complete. The model is now ready for building fast, scalable, and reliable reports in Power BI.

Real-World Use Case: Applying Star Schema Using Power Query in Power BI

Use Case 1: E-commerce Sales Dashboard

Scenario:

An online retail company stores monthly transaction data in a centralized system. The export includes:

- Order ID, Order Date, Product Name, Category, Subcategory

- Quantity, Unit Price, Discount, Total Amount

- Payment Method, Shipping City, Customer ID

All of this is in a single flat Excel or CSV file shared with the BI team.

Problem with the Flat Table:

- Every row repeats product and category info

- City names are spelled inconsistently (e.g. “New York”, “NYC”, “new york”)

- Adding a new metric or dimension becomes harder over time

- Reports get slower as data grows

Star Schema Fix:

Split the data into:

- Fact_Sales: Order-level transactional data (Order ID, Quantity, Unit Price, Discount, Total)

- Dim_Product: Product ID, Product Name, Category, Subcategory

- Dim_Payment: Payment Method, with an assigned PaymentMethodID

- Dim_Geography: City, State, Region, Location ID

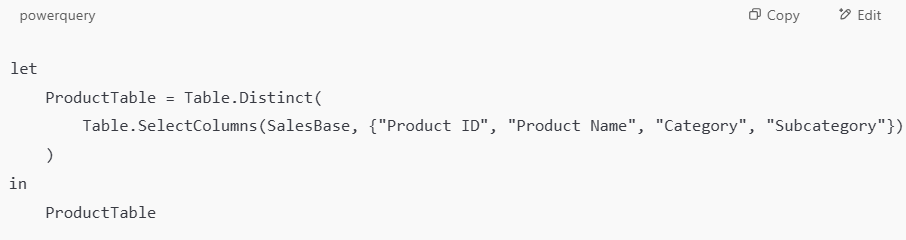

Example Power Query Steps (Product Table):

Business Impact:

- Clean slicers for Category or Payment Method

- Reports like “Top-Selling Products by Region” load instantly

- Easy to add measures like Gross Margin or Average Discount

- Can use Calendar Table and DATEADD() for YTD, MTD, or LY analysis

Use Case 2: Financial Performance Dashboard for a Services Company

Scenario:

A consulting firm tracks billing data across projects. Their Excel report contains:

- Invoice ID, Invoice Date, Client Name, Client Region, Service Type

- Consultant Name, Billing Rate, Hours Worked, Total Billed

The data is flat and includes many repeated names and service types.

Problem with the Flat Table:

- Every invoice row repeats client and service info

- Changing a client region requires updating many rows

- Complex filters (e.g., all hours billed for a certain service in a region) are slow and unreliable

Star Schema Fix:

Split the data into:

- Fact_Billing: Invoice ID, Consultant ID, Service ID, Client ID, Billing Rate, Hours, Total

- Dim_Client: Client ID, Client Name, Region

- Dim_Service: Service ID, Service Name, Type

- Dim_Consultant: Consultant ID, Name, Department

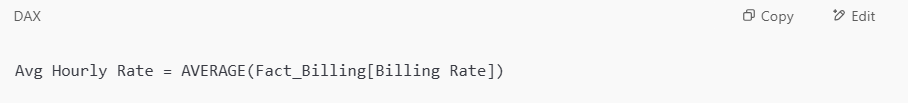

Example Measure in Power BI:

Business Impact:

- Analysts can easily slice by service, region, or consultant

- Drill-throughs to see billing by client or department become fast and reliable

- New service types can be added without touching historical data

- Regional managers get dashboards filtered to their territory with row-level security

These examples help highlight why star schema isn’t just a “best practice”, it’s the difference between a slow, fragile report and a robust analytics solution that scales.

Why Kanerika is Your #1 Choice for Data Modernization Services

As a leading provider of data and AI solutions, Kanerika recognizes the need for businesses to transition from legacy systems to modern data platforms. Upgrading outdated infrastructure enhances data accessibility, improves reporting accuracy, enables real-time insights, and lowers maintenance costs. With modern platforms, businesses can harness advanced analytics, cloud scalability, and AI-powered decision-making to stay ahead in a competitive landscape.

However, manual migration processes can be complex, time-intensive, and prone to errors, often disrupting critical business operations. Even a minor mistake in data mapping or transformation can lead to inconsistencies, historical data loss, or extended system downtime.

To address these challenges, Kanerika has developed custom automation solutions that streamline migrations across multiple platforms with precision and efficiency. Our automated tools ensure seamless transitions from SSRS to Power BI, SSIS and SSAS to Fabric, Informatica to Talend/DBT, and Tableau to Power BI, minimizing manual effort while preserving data integrity.

Partner with Kanerika for a seamless, automated, and risk-free data modernization experience.

Take Your Business to the Next Level with Innovative Power BI Solutions!

Partner with Kanerika today.

FAQs

What is the difference between star schema and snowflake schema in Power BI?

Star schema uses denormalized dimension tables directly connected to a central fact table, while snowflake schema normalizes dimensions into multiple related tables. In Power BI, star schema delivers faster query performance because fewer joins are required. Snowflake schema reduces storage through normalization but increases complexity and slows DAX calculations. Star schema also simplifies report building since relationships remain straightforward. Most Power BI implementations favor star schema for its balance of performance and usability in analytical workloads. Kanerika’s Power BI consultants design optimized data models that maximize dashboard performance—connect with us for a schema assessment.

What is star schema with example?

Star schema is a data modeling approach where a central fact table connects to multiple dimension tables in a star-like pattern. For example, a retail sales model might have a Sales fact table storing transaction amounts and quantities, surrounded by dimension tables for Product, Customer, Date, and Store. Each dimension provides descriptive attributes for slicing and analyzing fact data. This structure enables efficient aggregations and intuitive report building in tools like Power BI. Kanerika helps enterprises implement star schema designs tailored to their analytics requirements—reach out to discuss your data modeling needs.

Is star schema still relevant?

Star schema remains highly relevant and is the recommended data modeling approach for modern BI platforms including Power BI, Tableau, and Looker. Its denormalized structure optimizes query performance for analytical workloads where read speed matters more than storage efficiency. Cloud data warehouses like Snowflake and Databricks continue to leverage star schema principles for dimensional modeling. The pattern’s simplicity also accelerates report development and reduces DAX complexity. Far from outdated, star schema is foundational to enterprise analytics success today. Kanerika’s data architects implement modern star schema designs that scale with your business—let’s discuss your analytics roadmap.

What are the 4 main components of star schema?

The four main components of star schema are fact tables, dimension tables, primary keys, and foreign keys. Fact tables store quantitative metrics like sales amounts or order quantities. Dimension tables contain descriptive attributes such as product names, customer details, or date hierarchies. Primary keys uniquely identify each dimension record, while foreign keys in the fact table establish relationships to dimensions. Together, these components create the characteristic star pattern that enables efficient analytical queries in Power BI and other BI tools. Kanerika’s data engineers build robust star schema architectures optimized for your reporting needs—schedule a consultation today.

How to design a star schema?

Designing a star schema starts with identifying your business process and key metrics for the fact table. Next, determine the granularity—the most detailed level of data stored. Then identify dimensions that provide context for analysis, such as time, product, or customer. Create surrogate keys for each dimension and establish foreign key relationships to the fact table. Finally, denormalize dimension attributes to minimize joins. In Power BI, validate relationships in Model view and test DAX measures against expected results. Kanerika delivers end-to-end star schema design services for Power BI—contact us for expert guidance on your implementation.

Which schema is best for Power BI?

Star schema is the best schema for Power BI because Microsoft’s VertiPaq engine is optimized for this denormalized structure. Star schema reduces table joins, accelerates DAX calculations, and simplifies relationship management in Power BI Desktop. The pattern also improves compression ratios, reducing memory consumption for large datasets. While snowflake schema works, it introduces unnecessary complexity and slower performance for most reporting scenarios. Power BI documentation explicitly recommends star schema for optimal results. Kanerika specializes in Power BI data modeling and can design the ideal schema for your enterprise analytics—request a free assessment to get started.

Does Power BI prefer star schema?

Power BI strongly prefers star schema for data modeling. Microsoft explicitly recommends this approach because the VertiPaq in-memory engine performs optimally with denormalized dimension tables connected to central fact tables. Star schema enables faster DAX query execution, better compression, and simpler relationship navigation. The pattern also aligns with how business users naturally think about data—analyzing measures by various dimensions. Power BI’s automatic relationship detection works best with properly structured star schemas. Kanerika’s Power BI experts build high-performance data models using star schema best practices—connect with us to optimize your reports.

Which is better, star or snowflake schema?

Star schema is better for most Power BI and analytics use cases due to faster query performance and simpler maintenance. Its denormalized dimensions require fewer joins, accelerating report refresh and DAX calculations. Snowflake schema suits scenarios requiring strict normalization, minimal storage, or complex hierarchical dimensions with frequent updates. However, the performance trade-off rarely justifies snowflake complexity in modern BI environments where storage costs are low. For analytical workloads prioritizing speed and usability, star schema consistently outperforms. Kanerika evaluates your specific requirements to recommend the optimal schema approach—reach out for a personalized data architecture review.

What are the disadvantages of a star schema?

Star schema disadvantages include data redundancy from denormalized dimensions, which increases storage requirements. Updates to dimension attributes must propagate across redundant records, complicating ETL processes. The structure also lacks flexibility for complex many-to-many relationships without bridge tables. Additionally, star schema requires careful upfront design—changes to granularity or dimensions can necessitate significant rework. For highly normalized transactional systems, maintaining a separate star schema adds transformation overhead. Despite these limitations, the performance benefits typically outweigh drawbacks for Power BI analytics. Kanerika helps enterprises navigate these trade-offs with proven data modeling strategies—contact us to optimize your architecture.

When should you not use star schema?

Avoid star schema when building OLTP systems requiring frequent inserts, updates, and deletes where normalization prevents anomalies. It’s also unsuitable for applications needing real-time transactional consistency or when storage constraints prohibit denormalized redundancy. Complex many-to-many relationships without clear dimensional hierarchies may require alternative approaches. Additionally, if your data source already provides pre-aggregated flat files with minimal analytical dimensions, star schema overhead adds unnecessary complexity. For operational reporting directly against source systems, normalized schemas may suffice. Kanerika assesses your specific use case to determine the right modeling approach—schedule a consultation to explore your options.

What is a good alternative to star schema?

Good alternatives to star schema include snowflake schema for storage-optimized scenarios, Data Vault for auditable enterprise data warehouses, and flat wide tables for simple analytical datasets. One Big Table approaches work when dimension cardinality is low and query simplicity matters most. For graph-based analytics, property graph models outperform dimensional structures. In Power BI specifically, composite models combining DirectQuery and import can supplement star schema when real-time data access is required. The best alternative depends on your analytical requirements and performance priorities. Kanerika evaluates your data landscape to recommend the optimal modeling approach—let’s discuss your specific needs.

Is star schema faster than snowflake?

Star schema is faster than snowflake schema for analytical queries because it requires fewer table joins. In Power BI, the VertiPaq engine processes denormalized star schema dimensions more efficiently, resulting in quicker DAX calculations and report rendering. Snowflake schema’s normalized structure demands multiple joins to reconstruct dimension attributes, adding computational overhead. While snowflake saves storage through normalization, modern columnar compression in Power BI minimizes this advantage. For dashboard performance where query speed directly impacts user experience, star schema consistently delivers superior results. Kanerika optimizes Power BI data models for maximum performance—connect with our team to accelerate your reports.

Why is it called star schema?

Star schema gets its name from its visual appearance when diagrammed. The central fact table sits at the core with dimension tables radiating outward, creating a star-like pattern. Each dimension connects directly to the fact table through foreign key relationships, forming distinct points around the center. This intuitive layout makes star schema easy to understand and navigate in data modeling tools like Power BI’s Model view. The name reflects both the structure and the simplicity that makes dimensional modeling accessible to business analysts. Kanerika designs clear, well-structured star schemas for Power BI—reach out to streamline your data architecture.

What is the difference between 3NF and star schema?

Third Normal Form (3NF) eliminates data redundancy through full normalization, optimizing for transactional write operations in OLTP systems. Star schema intentionally denormalizes data to optimize read performance for analytical queries. 3NF structures data integrity and update efficiency, while star schema prioritizes query speed and simplicity for BI tools like Power BI. Converting 3NF source systems to star schema requires ETL transformations that flatten hierarchies and pre-aggregate metrics. Each serves different purposes—3NF for operations, star schema for analytics. Kanerika transforms normalized source data into optimized star schemas for Power BI reporting—contact us to accelerate your data pipeline.

Can you have multiple fact tables in a star schema?

Yes, you can have multiple fact tables in a star schema, creating what’s called a galaxy schema or fact constellation. This design accommodates different business processes sharing common dimensions—for example, Sales and Inventory facts both relating to Product and Date dimensions. In Power BI, multiple fact tables connect to shared dimensions through separate relationships, enabling cross-functional analysis. The key is ensuring conformed dimensions with consistent keys and attributes across fact tables. Proper grain alignment prevents ambiguous relationships and calculation errors. Kanerika architects complex multi-fact Power BI models that deliver reliable insights—reach out for expert data modeling support.

What is the purpose of a star schema?

The purpose of star schema is to optimize data structures for analytical queries and business intelligence reporting. It organizes data into intuitive fact and dimension tables that mirror how users analyze information—measuring metrics across various business dimensions. Star schema accelerates query performance by minimizing joins and enabling efficient aggregations. For Power BI specifically, it improves DAX calculation speed, reduces model complexity, and enhances report development productivity. The pattern bridges technical data storage with business-friendly analytics, making insights accessible to non-technical users. Kanerika implements purpose-built star schemas that unlock your data’s full analytical potential—let’s discuss your BI objectives.

Is star schema OLAP or OLTP?

Star schema is designed for OLAP (Online Analytical Processing) workloads, not OLTP (Online Transaction Processing). OLAP systems prioritize read-heavy analytical queries, aggregations, and reporting—exactly what star schema optimizes through denormalization. OLTP systems handle high-volume transactional writes requiring normalized structures to maintain data integrity and minimize redundancy. Star schema’s fact and dimension model supports the multidimensional analysis characteristic of OLAP cubes and BI tools like Power BI. Data typically flows from normalized OLTP sources through ETL into star schema structures for analytics. Kanerika builds OLAP-optimized star schemas that transform your transactional data into actionable insights—contact us to get started.

What is the difference between star schema and wide table?

Star schema separates data into normalized fact and dimension tables connected by relationships, while a wide table consolidates everything into a single denormalized structure. Wide tables eliminate joins entirely but create massive redundancy and complicate maintenance when dimension attributes change. Star schema balances performance with manageability, allowing dimension updates without touching fact data. In Power BI, star schema enables proper relationship modeling and efficient VertiPaq compression, whereas wide tables can bloat memory usage. Star schema also supports reusable dimensions across multiple facts. Kanerika designs efficient Power BI data models using proven dimensional modeling techniques—reach out for architecture guidance.