Businesses are increasingly adopting real-time data processing and artificial intelligence (AI) to enhance decision-making and operational efficiency. A recent Deloitte report highlights the growing importance of real-time data, with companies building advanced data pipelines to make swift, data-driven decisions.

To meet these evolving demands, Microsoft has enhanced Microsoft Fabric, a cloud-native platform that streamlines data analytics. Fabric Runtime 1.3, released in September 2024, integrates Apache Spark 3.5, offering improved performance and scalability for data processing tasks.

This blog will guide you through loading data into a Lakehouse using Spark Notebooks within Microsoft Fabric. Additionally, we’ll demonstrate how to leverage Spark’s distributed processing power with Python-based tools to manipulate data and securely store it in the Lakehouse for further analysis.

What is Microsoft Fabric?

Microsoft Fabric is an integrated, end-to-end analytics platform that covers the entire data lifecycle. It brings together the tools and services a business needs to integrate, transform, analyze, and visualize data within one ecosystem, so organizations no longer need to manage multiple platforms or services for their data management and analytics needs. The entire process, from data storage to advanced analytics and business intelligence, can be accomplished in one place.

What are Spark Notebooks in Microsoft Fabric?

One of the standout features of Microsoft Fabric is the integration of Spark Notebooks. These interactive notebooks are designed for large-scale data processing, which is essential for modern data analytics, especially when working with big data.

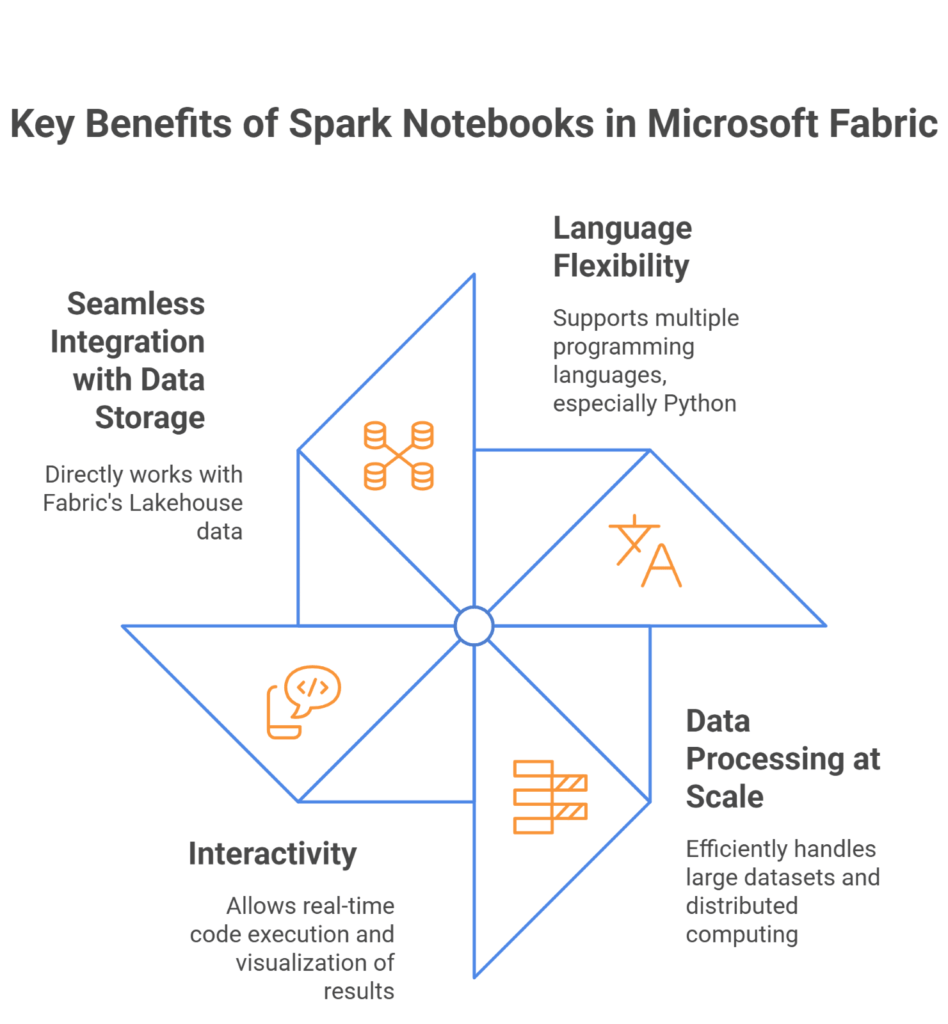

Key Benefits of Spark Notebooks in Fabric:

- Language Flexibility: Spark Notebooks in Microsoft Fabric support multiple programming languages, including PySpark (Python), Spark (Scala), Spark SQL, and SparkR. Python is the most widely used of these, especially within the data science and analytics community.

- Data Processing at Scale: The underlying engine, Apache Spark, is built for large datasets and distributed computing. Spark Notebooks let users write code that loads, processes, and analyzes large volumes of data in parallel across a cluster. This is particularly useful for businesses whose data is too large to process on a single machine in a reasonable time.

- Interactivity: Spark Notebooks are highly interactive. Users write code in cells and run them one at a time, seeing each result immediately. This lets users inspect intermediate results, test different approaches, and iterate quickly on their work.

- Seamless Integration with Data Storage: One of the major advantages of Spark Notebooks in Microsoft Fabric is their built-in integration with the underlying data storage solutions, like the Lakehouse. This integration allows users to work directly with data from Fabric’s Lakehouse, efficiently manipulating and transforming data without cross-platform data movement.

How to Set Up Microsoft Fabric Workspace

Step 1: Sign in to Microsoft Fabric

Access Microsoft Fabric

- Open a web browser and go to app.powerbi.com.

- Sign in with your organizational (work or school) account. If you're new to Microsoft Fabric, you can sign up for a free trial.

Navigate to Your Workspace

After logging in, you will be directed to the Microsoft Fabric workspace. This is where all your projects, datasets, and tools are housed.

Step 2: Create a New Lakehouse

Why Create a New Lakehouse?

Creating a fresh Lakehouse ensures your current project remains separate from any previous data experiments or projects. It’s a clean slate that makes managing your data and processes more efficient.

Navigate to the Workspace

In your workspace, find the option to create a new Lakehouse. Microsoft Fabric offers an easy-to-use interface that guides you through the creation process.

Ensure the Lakehouse is Empty

When creating a new Lakehouse, make sure it’s set up as an empty environment. This ensures that you don’t have any legacy data or configurations interfering with your current data processing tasks.

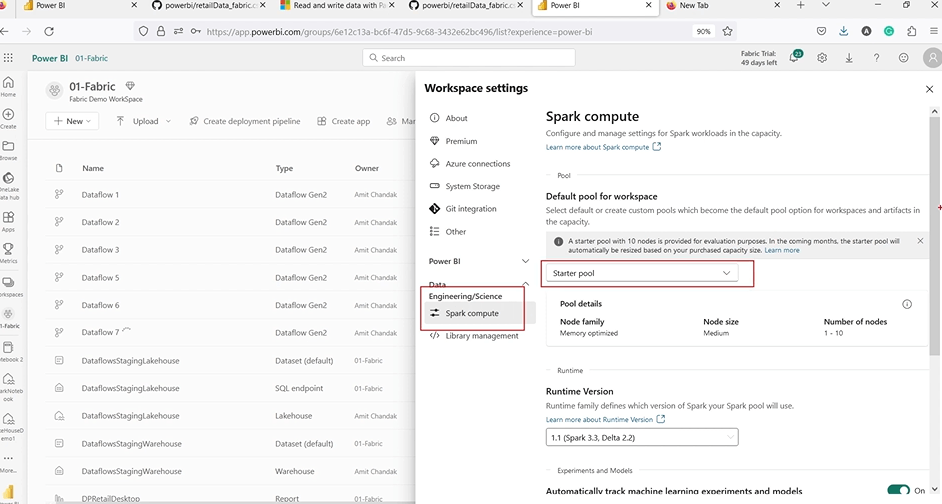

Step 3: Configure Your Spark Pool

Setup Spark in Fabric

Before you use Spark Notebooks for data manipulation, check the Spark pool configuration in your Fabric environment. Spark pools allocate the compute resources used for distributed data processing.

Check Available Options

- Every workspace includes a starter pool with default settings, which is what trial users typically work with. On a paid capacity, you may also be able to customize your Spark pool configuration, such as adjusting the node size or selecting the runtime (and therefore Spark) version.

- Verify that the settings are correct for your workload and ensure that Spark is ready for use in your Lakehouse.

Step 4: Start Your First Notebook

Create a New Notebook

- Once the Spark pool is configured, open a Spark Notebook from your Lakehouse workspace.

- Notebooks in Microsoft Fabric allow you to run code interactively. You’ll use this notebook to perform tasks like data cleaning, transformation, and analysis, all within the same environment.

Select the Language and Spark Session

- Choose Python (or another supported language) for your Spark Notebook, depending on your preference and the tasks you plan to perform.

- Run your first code cell to start the Spark session. Fabric starts the session automatically on first execution and connects it to the configured Spark pool; inside the notebook, the session is available as the predefined spark variable.

Step 5: Verify Environment and Test Setup

- After setting up your environment and creating your Lakehouse, it’s important to test that everything is working as expected.

- Try loading a sample dataset into the Lakehouse and running a basic query or transformation in your notebook. This helps verify that your Spark pool is properly connected and that your data is loading correctly.

How to Configure Spark in Microsoft Fabric

Spark Settings

Spark runs on clusters, and in Microsoft Fabric you can configure the Spark pool for your environment. If you're using a trial account, only a limited set of configuration options is available, so review them before planning larger workloads.

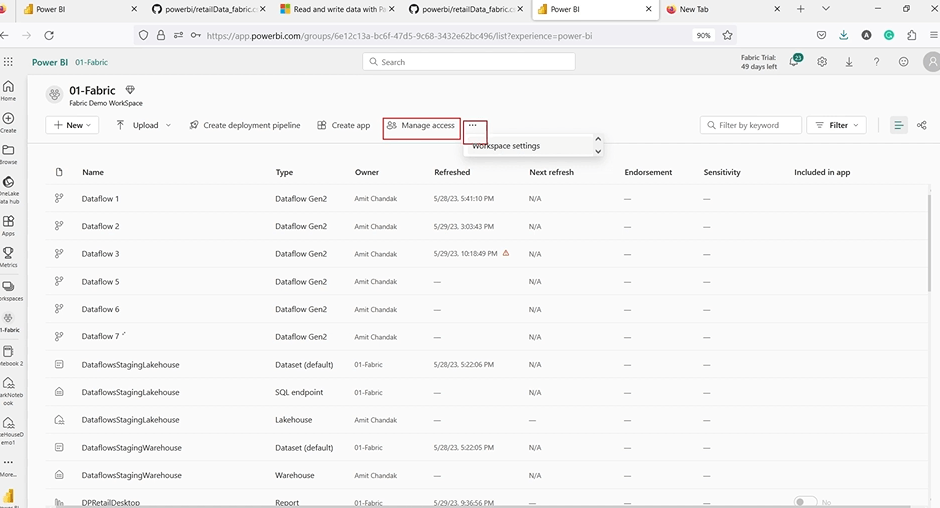

Step 1: Access Spark Settings

To configure Spark in Microsoft Fabric, you’ll need to access the workspace settings where you can modify the Spark configuration. Here’s how you can do it:

Go to Your Workspace: Navigate to the Microsoft Fabric workspace where your data resides.

Click the Three Dots Next to Your Workspace Name: In the workspace menu, click on the three vertical dots (also known as the ellipsis).

Select Workspace Settings: From the dropdown menu, choose Workspace Settings to access all available configuration options for your workspace.

Find the Spark Compute Section

- Inside the workspace settings, locate the Spark Compute section. This is where you can configure the Spark clusters that will run your data processing tasks.

- The Spark Compute section allows you to create, manage, and monitor the Spark pools available in your workspace.

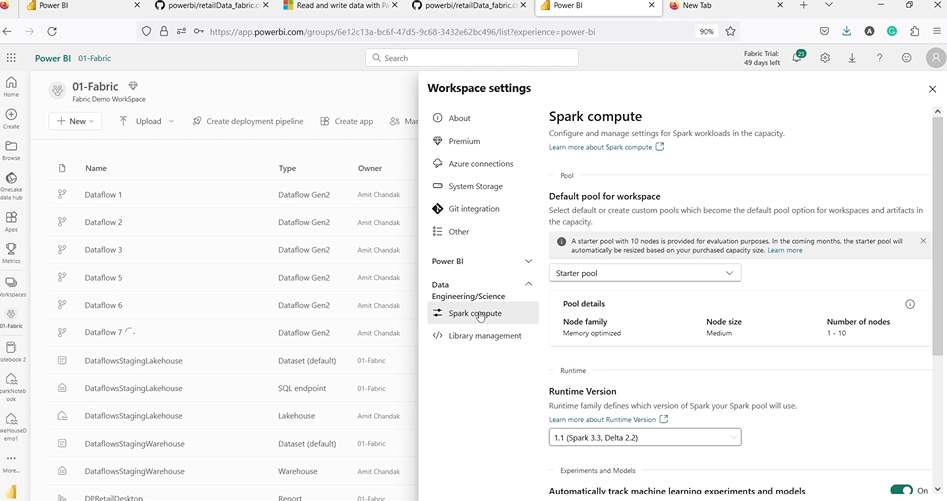

Step 2: Selecting the Spark Pool

Once you’re in the Spark Compute section of the workspace settings, you can configure and select the Spark pool that suits your needs. Here’s what you can do:

Choose the Appropriate Spark Pool

If you’re a trial user, you’ll typically have access to a starter pool. This default pool is fine for smaller data processing tasks and experiments but has limited resources compared to a full Spark pool. You can select the starter pool for testing purposes or for small-scale operations.

Configure Spark Version and Properties

- In the Spark settings, you can choose the Fabric runtime version, which determines the Spark version (for example, Runtime 1.3 includes Spark 3.5). Different versions offer varying performance improvements, features, and compatibility with specific tools or libraries.

- Additionally, you can adjust other properties of the Spark pool, such as node size, the number of nodes (with autoscaling), and executor allocation. These settings matter most for larger datasets or more complex processing tasks, although they are restricted on trial accounts.

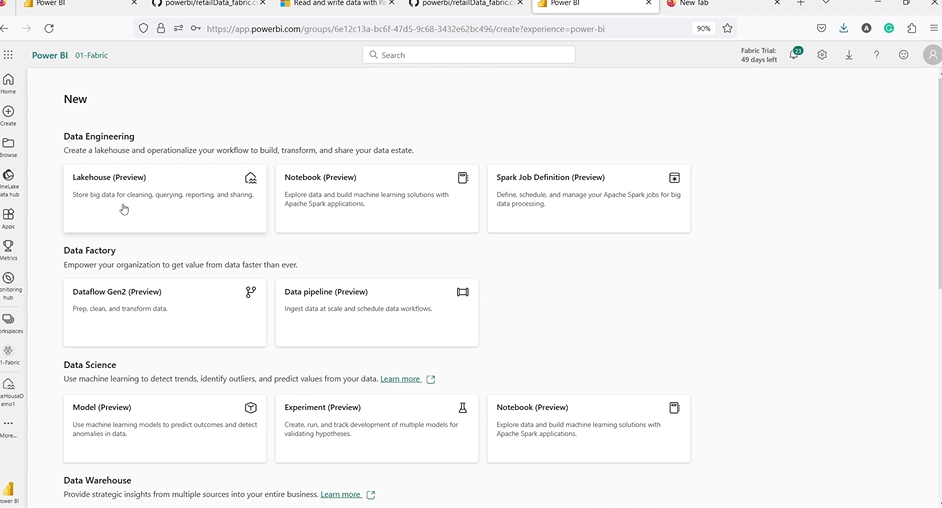

Creating and Opening Spark Notebooks in Microsoft Fabric

Step 1: Open a New Notebook

Navigating to the Notebook Interface

- Within Microsoft Fabric, you can create a new Spark Notebook by first navigating to your Lakehouse or Workspace.

- Once there, locate the option to create a new notebook. The platform provides an intuitive interface for creating and managing notebooks, which will allow you to execute code and interact with your data.

Choosing Between a New or Existing Notebook

You can choose to start a new notebook from scratch, which is ideal for starting fresh and writing new code, or you can open an existing notebook if you want to continue with previous work or use pre-written code for your analysis.

Setting Up the Environment

- When creating a new notebook, you’ll select the Spark pool where the code will be executed. If you’re just testing or running smaller workloads, you can use the default starter pool available for trial users.

- The notebook interface allows you to write and execute code cells interactively, making it easier to test snippets of code and see results instantly.

Step 2: Writing Python Code

Spark and Python Integration

- Once you set up your notebook, you write code directly within it using Python. While Spark Notebooks support multiple languages, Python is commonly used for its simplicity and powerful data manipulation libraries.

- In this notebook, you'll use Pandas, a popular Python library, to read, manipulate, and process data. Pandas runs on the notebook's driver node, which makes it convenient for small and medium datasets, while Spark handles large-scale, distributed processing. Combining the two makes the notebook a flexible tool for analytics.

Python Code Execution

- With Spark running in the background, operations on Spark DataFrames (PySpark) are executed on Spark's distributed compute infrastructure, which is what lets a notebook handle large datasets efficiently. Plain Pandas code runs on the driver node only, so it is limited by that node's memory.

- Every time you execute a code cell in the notebook, its output, including the progress of any Spark jobs it triggered, appears directly beneath the cell.

Steps to Load Data Using Spark Notebooks in Microsoft Fabric

Step 1: Importing Pandas

- To begin working with data in Python, you need to import Pandas. This library provides easy-to-use data structures, such as DataFrames, that are perfect for working with tabular data.

- Importing Pandas allows you to load, clean, and manipulate the data efficiently within the notebook.

Step 2: Reading Data from an External Source

- Often, your data won't reside within the Microsoft Fabric environment, so you'll need to load it from an external source, such as a CSV file hosted on GitHub or a file in another cloud storage location.

- Here’s an example of loading a CSV file directly from a URL:
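
The original file URL is not reproduced here, so this sketch uses a hypothetical placeholder, CSV_URL; replace it with your own link (for a GitHub file, use its "Raw" URL). pd.read_csv accepts URLs and local paths alike. The helper load_sales and the check for Quantity and UnitPrice columns (used in the transformation step later) are assumptions for illustration.

```python
import pandas as pd

# Hypothetical placeholder: point this at your own raw CSV file.
CSV_URL = "https://example.com/path/to/sales.csv"


def load_sales(source: str) -> pd.DataFrame:
    """Read a CSV from a URL or local path into a pandas DataFrame."""
    df = pd.read_csv(source)
    # Fail early if the columns used in later steps are missing.
    missing = {"Quantity", "UnitPrice"} - set(df.columns)
    if missing:
        raise ValueError(f"CSV is missing expected columns: {sorted(missing)}")
    return df


# In the notebook:
# df = load_sales(CSV_URL)
# df.head()
```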

This reads the data into a Pandas DataFrame (df), which is a Python object that holds the data in a tabular format, making it easy to manipulate.

Step 3: Running Spark Jobs

- Executing Code on Spark: After loading your data, you can process and analyze it. Operations on Spark DataFrames are split into tasks and run in memory across the Spark pool's executors, which is how Spark handles large datasets. Pandas operations, by contrast, run on the driver node only; for large data, convert the Pandas DataFrame to a Spark DataFrame (or use the pandas API on Spark) before doing heavy processing.

- Interactive Execution: Results appear directly in your notebook as each cell finishes. Spark evaluates transformations lazily and only runs a job when an action such as show(), count(), or a write is called; at that point it manages the heavy lifting behind the scenes.

How to Transform Data Using Spark Notebooks in Microsoft Fabric

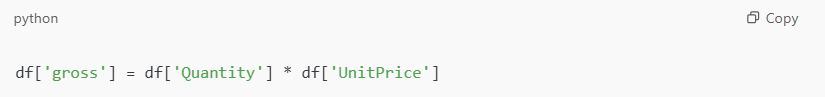

Adding a New Column

Data Transformation Example

One common task in data manipulation is adding new columns or features to your dataset. For instance, if you want to create a new column that represents the total gross amount (calculated by multiplying the quantity and price), you can easily do this with Pandas:
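
A one-line version of this transformation is sketched below. It assumes df holds Quantity and UnitPrice columns; a small DataFrame with made-up values stands in for the loaded CSV so the snippet runs on its own.

```python
import pandas as pd

# Made-up sample rows standing in for the CSV loaded earlier.
df = pd.DataFrame({"Quantity": [2, 5, 1], "UnitPrice": [3.50, 1.25, 10.00]})

# Element-wise (vectorised) multiplication; the result is stored as a new column.
df["gross"] = df["Quantity"] * df["UnitPrice"]
```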

This code multiplies the Quantity and UnitPrice columns and stores the result in a new column called gross.

Verifying the Transformation

After transforming the data, you can display the DataFrame again to ensure the new column was added correctly:
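
Beyond eyeballing the output with df.head() (or Fabric's display(df)), you can check the new column programmatically. This is a sketch; check_gross is a hypothetical helper that compares gross against a fresh Quantity * UnitPrice calculation.

```python
import pandas as pd


def check_gross(df: pd.DataFrame) -> None:
    """Raise AssertionError if 'gross' is missing or not Quantity * UnitPrice."""
    assert "gross" in df.columns, "gross column was not added"
    expected = df["Quantity"] * df["UnitPrice"]
    # Floats are compared with a small tolerance, not exact equality.
    pd.testing.assert_series_equal(df["gross"], expected, check_names=False)
```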

Steps to Save Transformed Data in Microsoft Fabric

Step 1: Save Data as CSV

After manipulating the data, you may want to save the transformed DataFrame for later use. You can easily save the data as a CSV file using the .to_csv() method:
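
A minimal sketch, assuming a default lakehouse is attached to the notebook: Fabric mounts its Files section at /lakehouse/default/Files/, so Pandas can write there like a local folder. The file name sales_gross.csv and the helper save_csv are hypothetical; index=False keeps the Pandas row index out of the file.

```python
from pathlib import Path

import pandas as pd

# Mount point of the attached default lakehouse's Files section.
LAKEHOUSE_FILES = Path("/lakehouse/default/Files")


def save_csv(df: pd.DataFrame, folder: Path = LAKEHOUSE_FILES,
             name: str = "sales_gross.csv") -> Path:
    """Write df as CSV (without the pandas index) and return the file path."""
    folder.mkdir(parents=True, exist_ok=True)
    path = folder / name
    df.to_csv(path, index=False)
    return path
```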

When the path points to the Lakehouse Files section, the file is saved in your Lakehouse and stays accessible for future analysis or reporting.

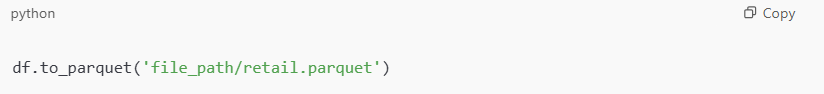

Step 2: Save Data as Parquet

- For larger datasets, the Parquet format is more efficient than CSV as it is a columnar storage format optimized for analytics.

- You can save your data as a Parquet file:

Parquet is especially well suited to big data workloads: its columnar layout and compression let query engines read only the columns they need, so data is processed quickly and efficiently.

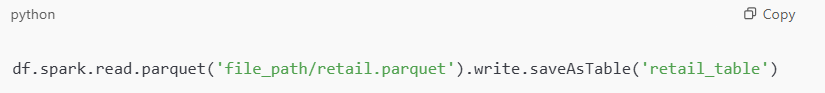

Converting Parquet Data into a Lakehouse Table

- After you save your data as a Parquet file, you can convert it into a table in the Lakehouse. Lakehouse tables are stored in Delta Lake format and are optimized for querying from Spark, the SQL analytics endpoint, and Power BI.

- You can convert the Parquet file into a table using Spark code:

Alternatively, you can right-click the Parquet file in your Lakehouse and select “Load to Tables” from the context menu.

Analyzing Data in Power BI

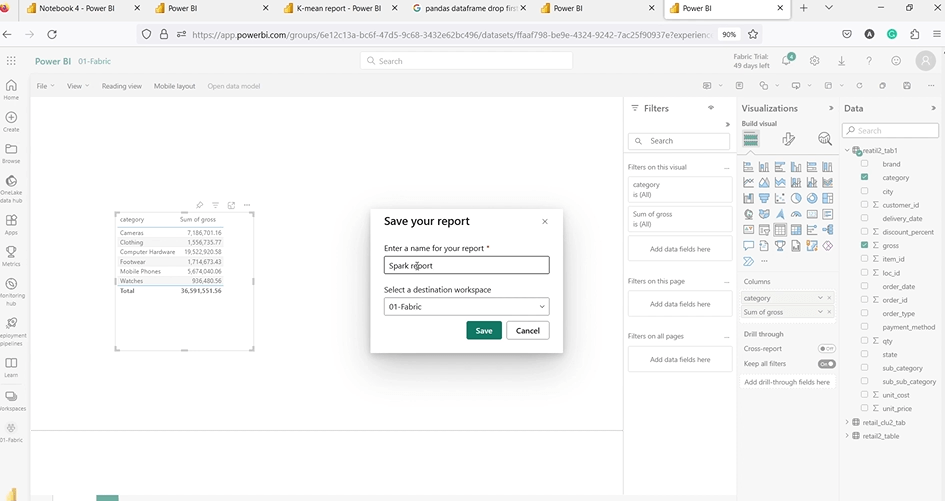

Step 1: Create a Power BI Report

- Once your data is transformed and stored in the Lakehouse as a table, you can easily connect it to Power BI for visualization.

- Power BI allows you to create reports and dashboards based on the data stored in the Lakehouse, helping you analyze trends, create charts, and share insights with stakeholders.

Step 2: Visualize and Explore

- Inside Power BI, you can use different visuals like bar charts, line graphs, and tables to explore your data. The tool lets you build interactive dashboards where users can filter information, drill into specific sections, and uncover more detailed insights.

- These features make it easier to spot trends, track performance, and understand what’s really going on with your data.

Partner with Kanerika to Unlock the Full Potential of Microsoft Fabric for Data Analytics

Kanerika is a leading provider of data and AI solutions, specializing in maximizing the power of Microsoft Fabric for businesses. With our deep expertise, we help organizations seamlessly integrate Microsoft Fabric into their data workflows, enabling them to gain valuable insights, optimize operations, and make data-driven decisions faster.

As a certified Microsoft Data and AI solutions partner, Kanerika leverages the unified features of Microsoft Fabric to create tailored solutions that transform raw data into actionable business insights.

By adopting Microsoft Fabric early in the process, businesses across various industries have achieved real results. Kanerika’s hands-on experience with the platform has helped companies accelerate their digital transformation, boost efficiency, and uncover new opportunities for growth.

Partner with Kanerika today to elevate your data capabilities and take your analytics to the next level with Microsoft Fabric!

Frequently Asked Questions

What is the difference between a data warehouse and Fabric?

A traditional data warehouse is a structured repository optimized for SQL-based analytics on relational data, while Microsoft Fabric is a comprehensive analytics platform that includes data warehouse capabilities alongside data engineering, data science, and real-time analytics workloads. Fabric provides a unified architecture where the data warehouse component shares OneLake storage with lakehouses and other experiences, eliminating data silos. This integration enables seamless data movement without complex ETL pipelines. Kanerika helps enterprises evaluate whether Fabric’s unified analytics approach aligns with their data strategy—schedule a consultation to explore your options.

What is the data lake in Microsoft Fabric?

The data lake in Microsoft Fabric is OneLake—a single, unified storage layer built on Azure Data Lake Storage Gen2 that automatically provisions with every Fabric tenant. OneLake eliminates the need for separate storage accounts by centralizing all organizational data in one hierarchical namespace using Delta Parquet format. Every Fabric workload, including lakehouses, warehouses, and datamarts, reads and writes directly to OneLake. This architecture ensures consistent governance and eliminates data duplication across analytics experiences. Kanerika’s Fabric implementation specialists can help you architect OneLake for optimal performance—connect with our team today.

What is a Fabric Lakehouse?

A Fabric Lakehouse is a data architecture within Microsoft Fabric that combines the flexibility of data lakes with the performance of data warehouses in a single platform. It stores data in open Delta Lake format on OneLake, supporting both structured and unstructured data while enabling SQL analytics through an auto-generated SQL endpoint. Data engineers use notebooks and Spark for transformation, while analysts query the same data using familiar T-SQL. This eliminates data movement between systems entirely. Kanerika builds production-ready Fabric Lakehouse solutions tailored to enterprise requirements—reach out for a technical assessment.

Is Microsoft Fabric the same as Snowflake?

Microsoft Fabric and Snowflake are not the same—they represent different approaches to cloud analytics. Snowflake is a cloud-native data warehouse focused primarily on structured data storage and SQL analytics across multiple clouds. Microsoft Fabric is a unified analytics platform encompassing data engineering, data science, real-time analytics, and business intelligence within a single SaaS experience. Fabric uses consumption-based capacity units while Snowflake uses compute credits. Organizations deeply invested in Microsoft 365 often find Fabric’s native integration more compelling. Kanerika has implemented both platforms and can guide your selection—request a comparative analysis today.

What are the disadvantages of a data lake?

Traditional data lakes suffer from several limitations that lakehouses address. Without proper governance, data lakes become data swamps with poor discoverability and inconsistent quality. They lack ACID transaction support, making concurrent writes unreliable and potentially corrupting data. Query performance on raw files is significantly slower than optimized warehouse formats, requiring separate processing layers. Schema enforcement is absent, leading to downstream analytics failures. Security management across unstructured files proves complex at scale. Microsoft Fabric Lakehouse solves these issues with Delta Lake’s transactional guarantees. Kanerika helps organizations modernize legacy data lakes into governed lakehouses—let’s discuss your transformation roadmap.

What's the difference between a lakehouse and warehouse in Fabric?

In Microsoft Fabric, both the lakehouse and the warehouse store data in open Delta Parquet format on OneLake. The lakehouse supports both structured and unstructured data and is written primarily through Spark, with a read-only SQL analytics endpoint for T-SQL queries. The warehouse is a fully transactional, T-SQL-first experience with full DML support, including INSERT, UPDATE, and DELETE operations. Lakehouses excel at data engineering and machine learning workloads, while warehouses are geared toward enterprise BI reporting and SQL-centric teams. Because both share OneLake storage, you can query across them without moving data, for example through cross-database queries or shortcuts. Kanerika architects hybrid implementations leveraging both Fabric lakehouse and warehouse capabilities; schedule a design session with our experts.

What is the difference between Azure Lakehouse and Fabric?

Azure Lakehouse typically refers to building lakehouse architecture using separate Azure services—Synapse Analytics, Data Lake Storage Gen2, and Databricks—requiring manual integration and management. Microsoft Fabric delivers a fully integrated SaaS lakehouse experience where compute, storage, governance, and analytics tools work together natively. Fabric eliminates infrastructure provisioning, unifies billing under capacity units, and provides OneLake as automatic storage. Azure-based lakehouses offer more customization but demand significant engineering overhead. Fabric simplifies operations while maintaining enterprise capabilities. Kanerika specializes in migrating Azure analytics workloads to Microsoft Fabric—contact us for a migration assessment.

Does Microsoft have a data lakehouse?

Yes, Microsoft offers a fully managed data lakehouse through Microsoft Fabric. The Fabric Lakehouse combines scalable data lake storage with data warehouse performance using Delta Lake as its underlying format. It provides ACID transaction support, schema enforcement, and time travel capabilities while storing data in open formats on OneLake. Users access data through Spark notebooks for engineering workloads or SQL endpoints for analytics queries. This architecture supports structured, semi-structured, and unstructured data in a single platform with unified governance. Kanerika delivers end-to-end Microsoft Fabric Lakehouse implementations—talk to our team about your data modernization goals.

What is Microsoft Fabric used for?

Microsoft Fabric is used for end-to-end enterprise analytics, unifying data engineering, data integration, data warehousing, data science, real-time analytics, and business intelligence in one platform. Organizations use Fabric to ingest data from diverse sources, transform it using notebooks or pipelines, store it in lakehouses or warehouses, and visualize insights through Power BI. The platform eliminates tool fragmentation by providing integrated experiences that share OneLake storage and common governance. Fabric simplifies analytics operations while reducing total cost of ownership significantly. Kanerika helps enterprises unlock Fabric’s full potential across all analytics workloads—request a platform walkthrough today.

Which is better, Databricks or Microsoft Fabric?

Neither Databricks nor Microsoft Fabric is universally better—the right choice depends on your specific requirements. Databricks excels at advanced data engineering, machine learning workloads, and multi-cloud deployments with mature MLOps capabilities. Microsoft Fabric provides tighter integration with Microsoft ecosystem tools including Power BI, Office 365, and Azure services, with simpler administration through unified capacity billing. Databricks offers more granular compute control while Fabric prioritizes ease of use. Organizations with heavy ML workloads often prefer Databricks; those seeking unified analytics favor Fabric. Kanerika implements both platforms and provides objective recommendations—schedule a discovery call for personalized guidance.

Can a lakehouse replace a data warehouse?

A lakehouse can replace a traditional data warehouse for many use cases, though the decision depends on workload requirements. Modern lakehouses like Microsoft Fabric Lakehouse deliver warehouse-grade SQL performance through optimized query engines while supporting broader data types and processing patterns. Organizations with heavy BI reporting requirements may still benefit from dedicated warehouse structures for predictable performance. However, lakehouse architecture eliminates data duplication between lake and warehouse tiers, reducing costs and simplifying governance. Many enterprises adopt hybrid approaches within Fabric. Kanerika assesses your workloads to determine optimal lakehouse migration strategies—connect with us for an evaluation.

What are the challenges of implementing a lakehouse?

Implementing a lakehouse presents several challenges organizations must address. Data governance across mixed structured and unstructured data requires comprehensive metadata management and access controls. Performance tuning demands expertise in partition strategies, file compaction, and query optimization specific to Delta Lake formats. Migrating existing ETL pipelines and transforming legacy warehouse schemas introduces complexity. Teams need upskilling on both Spark-based processing and SQL analytics paradigms. Cost management requires understanding consumption patterns across compute and storage tiers. Microsoft Fabric reduces many challenges through its integrated platform approach. Kanerika’s lakehouse implementation methodology addresses these challenges systematically—let’s discuss your specific requirements.

What is the purpose of a lakehouse?

The purpose of a lakehouse is to unify data lake flexibility with data warehouse reliability in a single architecture, eliminating the need for separate systems. Lakehouses store all data in open formats while providing ACID transactions, schema enforcement, and governance capabilities previously exclusive to warehouses. This architecture enables data engineers, data scientists, and business analysts to work on the same data without costly movement between systems. Organizations reduce infrastructure complexity, lower storage costs, and accelerate time-to-insight by consolidating analytics workloads. Kanerika designs lakehouse architectures on Microsoft Fabric that align with your business objectives—start with a free consultation.

When to use lakehouse vs warehouse in Fabric?

Use a Fabric Lakehouse when your workloads involve data engineering with Spark, machine learning model development, or processing semi-structured and unstructured data formats. Lakehouses excel when teams need open Delta Lake format access for external tools. Choose a Fabric Warehouse when workloads are exclusively T-SQL based, require frequent UPDATE and DELETE operations, or demand maximum BI query performance for enterprise reporting. Many organizations implement both—storing raw and transformed data in lakehouses while serving curated datasets through warehouses for business users. Kanerika architects optimal Fabric data strategies combining lakehouse and warehouse capabilities—reach out for tailored recommendations.

Is Microsoft Fabric a data warehouse or data lake?

Microsoft Fabric is neither exclusively a data warehouse nor a data lake—it is a unified analytics platform that includes both capabilities plus much more. Fabric provides dedicated warehouse experiences for T-SQL workloads and lakehouse experiences combining lake flexibility with warehouse reliability. Both store data on OneLake, Microsoft’s single storage layer built on Azure Data Lake Storage Gen2. Additionally, Fabric encompasses data engineering, data integration, real-time analytics, data science, and Power BI reporting workloads. This comprehensive approach eliminates the traditional lake-warehouse dichotomy entirely. Kanerika helps enterprises leverage Fabric’s full analytics spectrum—contact us to explore implementation options.

Is Microsoft Fabric the same as Azure Data Factory?

Microsoft Fabric is not the same as Azure Data Factory, though Fabric incorporates data integration capabilities that evolved from ADF. Azure Data Factory is a standalone data integration service for building ETL and ELT pipelines across diverse sources. Microsoft Fabric is a comprehensive analytics platform where Data Factory experiences represent just one component alongside lakehouses, warehouses, notebooks, and Power BI. Fabric pipelines share similar functionality with ADF but operate within the unified OneLake storage environment. Existing ADF pipelines can connect to Fabric workspaces, enabling gradual migration. Kanerika migrates Azure Data Factory workloads to Microsoft Fabric seamlessly—discuss your integration roadmap with our specialists.

What's the difference between a data lake and lakehouse?

A data lake is a storage repository holding raw data in native formats without built-in processing or governance capabilities, often requiring separate tools for transformation and analytics. A lakehouse adds data warehouse features directly on lake storage—ACID transactions, schema enforcement, indexing, and optimized query performance through formats like Delta Lake. Lakehouses support both SQL analytics and data science workloads on the same data without movement. Data lakes offer maximum flexibility but risk becoming ungoverned data swamps; lakehouses maintain that flexibility while ensuring reliability. Microsoft Fabric Lakehouse exemplifies this modern approach. Kanerika transforms data lakes into governed lakehouses—explore our modernization services today.

Is data lakehouse ETL or ELT?

Data lakehouses primarily support ELT (Extract, Load, Transform) patterns where raw data lands in storage first, then transforms occur in place using powerful compute engines. This approach leverages lakehouse scalability for heavy transformations rather than preprocessing data before loading. However, lakehouses accommodate both patterns—ETL remains viable when source system processing proves more efficient or data reduction before loading reduces costs. Microsoft Fabric Lakehouse enables flexible ELT workflows through Spark notebooks and Data Factory pipelines, transforming Delta Lake tables directly. The architecture supports medallion patterns from bronze through gold layers. Kanerika designs ELT pipelines optimized for Fabric Lakehouse performance—let’s architect your data flows together.