Microsoft Fabric has experienced significant growth, with its customer base expanding by nearly 75% over the past year—from 11,000 to over 19,000 organizations. This surge underscores the platform’s appeal as a unified solution for data engineering, analytics, and business intelligence.

A standout feature contributing to this adoption is OneLake Shortcuts. These shortcuts enable organizations to reference data across different domains, clouds, and accounts without the need to move or duplicate data. By creating a single virtual data lake, OneLake Shortcuts facilitate seamless data access and collaboration across various teams and departments.

In this blog, we’ll explore how OneLake Shortcuts work, who pays when data is accessed through them, and how to set them up so teams can share data without duplicating it or competing for compute.

What Is a OneLake Shortcut?

A OneLake Shortcut in Microsoft Fabric is a smart way to link to data without actually moving or copying it. Think of it as a virtual bridge — it connects to data stored in another location (like a different workspace, storage account, or even a separate capacity), letting you access and use that data as if it were local. The actual data never moves. It stays right where it was created. But thanks to the shortcut, you can still explore it, query it, build semantic models, and create Power BI reports on top of it — all from a different workspace.

It works just like a shortcut in Google Drive or a symbolic link in a file system—the same data, different access points.
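To make the symbolic-link analogy concrete, here is a minimal Python sketch that uses an ordinary file-system symlink as a stand-in for a shortcut. It is only an analogy: the folder names `workspace_a` and `workspace_b` are made up for illustration, and nothing here calls Fabric or OneLake.

```python
import os
import tempfile


def demo_symlink():
    """Show that a link exposes the source data without copying it."""
    with tempfile.TemporaryDirectory() as root:
        source_dir = os.path.join(root, "workspace_a")    # hypothetical names
        consumer_dir = os.path.join(root, "workspace_b")
        os.makedirs(source_dir)
        os.makedirs(consumer_dir)

        # The data is written once, in the source location.
        source_file = os.path.join(source_dir, "sales.csv")
        with open(source_file, "w") as f:
            f.write("item,quantity\nwidget,3\n")

        # The "shortcut" is just a pointer to the source file.
        link_path = os.path.join(consumer_dir, "sales.csv")
        os.symlink(source_file, link_path)

        # Reading through the link returns the source data.
        with open(link_path) as f:
            before = f.read()

        # A change at the source is visible through the link right away.
        with open(source_file, "a") as f:
            f.write("gadget,5\n")
        with open(link_path) as f:
            after = f.read()

        return os.path.islink(link_path), before, after


is_link, before, after = demo_symlink()
```

On Windows, creating symlinks may require elevated privileges, so this sketch is easiest to try on Linux or macOS.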

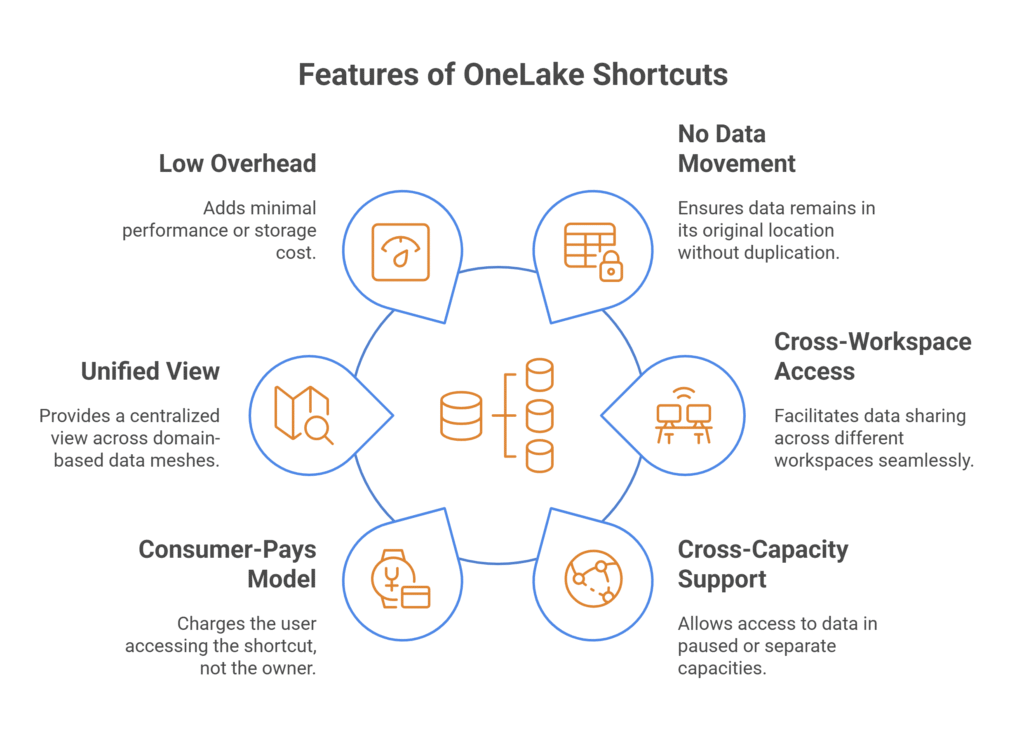

Here are a few features that make OneLake Shortcuts super useful:

- No Data Movement: Data stays in its source location — no duplication, sync jobs, or migration tasks.

- Cross-Workspace Access: Easily bring data from one Fabric workspace into another without importing or copying.

- Cross-Capacity Support: You can access data even if the source is in a paused or separate capacity.

- Consumer-Pays Model: When someone uses the shortcut, their capacity is charged — not the owner’s.

- Unified View Across Domains: Perfect for domain-based data mesh setups — centralize data, decentralize access.

- Low Overhead: Shortcuts are just pointers, so almost no performance or storage cost is added.

Where Can You Use OneLake Shortcuts in Microsoft Fabric?

OneLake Shortcuts are built to be flexible and work across many parts of the Microsoft Fabric ecosystem. Here’s where and how you can use them:

- Across Microsoft Fabric Workspaces: Link data from one workspace to another without importing or copying. This is perfect for keeping your data layer separate from your reporting or modeling layer.

- Across Capacities (Trial, Paid, On-Demand): Access data from a workspace that’s on a paused or different capacity. Shortcuts pull data through the active capacity where the shortcut lives — keeping things running even when the source is offline.

- From Azure Data Lake Storage Gen2 (ADLS Gen2): Create shortcuts to external storage so you can read data files directly without uploading or duplicating them in Fabric.

- Across Domains and Business Units: This allows teams to share certified datasets across domains (great for data mesh setups) without shifting data ownership or causing version chaos.

- In Hybrid Storage Environments: Use shortcuts to unify access to data that may live partly in Fabric and partly in external systems like Azure or other cloud storage.

- No Ownership Conflicts: The original data remains owned and controlled by the source workspace or team. Shortcuts don’t change permissions or access rules, so you avoid accidental edits and permission headaches.

- Lightweight and Non-Intrusive: Since shortcuts are just pointers, they don’t take up extra storage and add very little overhead — making them ideal for scaling access without scaling complexity.

Bottom line: If you’re using Microsoft Fabric across departments, domains, or workloads, OneLake Shortcuts let you share and access data without hassle: no movement, no duplication, and no need to redesign your architecture.

Who Pays for the Data Access?

One of the most important—and often overlooked—features of OneLake Shortcuts is how billing works. It’s not just about linking to data; it’s also about who gets charged when that data is used.

Consumer Capacity Gets Billed, Not the Owner’s

When a user queries data through a shortcut — whether via a Power BI report, a SQL query, or a Spark job — the processing happens in their capacity, not the one that holds the original data. So:

- The source workspace doesn’t take the hit.

- The destination workspace (where the shortcut lives) pays the compute cost.

- The storage charges still apply to the original location — but that’s based on GB stored, not compute used.
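The split described above can be sketched as a toy model in Python. This is not how Fabric meters usage internally; the class names, the `run_query` helper, and the numbers are all hypothetical and exist only to show which side each charge lands on.

```python
from dataclasses import dataclass


@dataclass
class Capacity:
    name: str
    compute_units_used: float = 0.0  # compute consumed by queries
    storage_gb_billed: float = 0.0   # data physically stored under this capacity


@dataclass
class Shortcut:
    source: Capacity    # capacity of the workspace that stores the data
    consumer: Capacity  # capacity of the workspace where the shortcut lives


def run_query(shortcut: Shortcut, compute_units: float) -> None:
    """Charge the compute for a query through a shortcut to the consumer."""
    shortcut.consumer.compute_units_used += compute_units


# Hypothetical setup: Team A stores 500 GB, Team B only holds a shortcut.
team_a = Capacity("team-a", storage_gb_billed=500.0)
team_b = Capacity("team-b")
link = Shortcut(source=team_a, consumer=team_b)

run_query(link, compute_units=12.5)
run_query(link, compute_units=7.5)
```

After the two queries, all compute sits on `team_b`, while `team_a` carries only the storage figure.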

Why This Matters in Practice

- No resource conflicts: Your data engineering team can load and transform data without being slowed down by report users running heavy dashboards simultaneously.

- Cleaner performance management: You can isolate noisy workloads (like large reports or frequent refreshes) to a separate capacity, keeping your main data processing smooth and stable.

- Easier cost tracking: Since compute billing is tied to usage, each team can pay for what they use, which is especially useful in large organizations with multiple business units.

- Works even when source capacity is paused: This is a game-changer. If the original workspace’s capacity is turned off (for example, paused to save costs), shortcuts still work — as long as the consuming capacity is active.

Example Scenario

Let’s say:

- Team A owns a Lakehouse in Workspace A, using a paid capacity.

- Team B wants to build reports off that data, but they’re in a different capacity (maybe a trial or a lower-tier one).

- Instead of duplicating the data, Team B creates shortcuts.

- Any queries, model refreshes, or reports built by Team B use their own capacity — not Team A’s.

So, Team A’s capacity isn’t throttled, and Team B gets the data access they need without stepping on anyone’s toes.

Why Use Multiple Capacities?

In real-world setups, one capacity often can’t handle everything smoothly. When data engineers run heavy jobs like Spark notebooks or SQL pipelines, and analysts are refreshing Power BI reports simultaneously, the system starts to lag.

The better approach is to split workloads:

- Use one capacity for data processing — this handles ETL, data ingestion, and transformations.

- Use another for reporting and analysis. This takes care of semantic models and Power BI dashboards without competing for resources.

This separation improves performance, reduces delays, and helps avoid resource conflicts. Reporting teams get faster results, while data teams can process without interruption.

OneLake Shortcuts make this possible without duplicating data. Using shortcuts, you can keep data in one workspace (on a processing capacity) and access it from another (on a reporting capacity). It keeps everything connected — and keeps your users out of each other’s way.

Shortcuts in Data Mesh & Domain-Driven Architecture

Microsoft Fabric is built to support modern data architecture patterns, two of the most relevant ones today being data mesh and domain-driven design. Both approaches focus on decentralizing data ownership by assigning responsibility to the teams who know the data best—the domain experts.

But they also introduce a new challenge: How do you share and reuse data across domains without duplicating it or losing control? That’s where OneLake Shortcuts step in.

1. How It Works in a Domain-Centric Setup

In a typical domain-driven setup:

- Each team or department (such as Sales, Finance, or Operations) has its own workspace.

- They own and manage the data they create.

- They build pipelines, transform data, and produce certified datasets for others to use.

But instead of everyone downloading or copying the same dataset into their own workspace (leading to multiple versions of the same data), other domains can simply create shortcuts to the certified data in place.

With OneLake Shortcuts:

- Data ownership stays with the producing domain.

- Consuming teams can access the latest version instantly through a virtual link.

- There’s no duplication, no extra storage, and no outdated copies floating around.

2. Why This Matters for Data Mesh

A data mesh rests on four key principles:

- Domain-oriented ownership

- Self-serve data infrastructure

- Data as a product

- Federated governance

OneLake Shortcuts help reinforce all four:

- Domain-oriented ownership: Teams keep control over their data while still enabling access.

- Self-serve infrastructure: Consumers don’t need help from IT to get access — they can create shortcuts in their own workspace.

- Data as a product: Certified, high-quality datasets can be shared broadly without risk of duplication or drift.

- Federated governance: Permissions and access control stay centralized and consistent because the data doesn’t move.

3. Example Use Case

Let’s say the Finance team owns a master dataset of monthly revenue stored in a Fabric Lakehouse. The Marketing team needs to build a report using that data. Instead of exporting it or asking Finance to load it elsewhere, Marketing simply creates a shortcut to the dataset in their own workspace.

Now:

- Finance keeps ownership and maintains data quality.

- Marketing gets live access to the same version of the data.

- There’s no confusion over which version is correct.

- Everyone avoids duplication, sync errors, and redundant storage costs.

How OneLake Storage Billing Works

OneLake in Microsoft Fabric follows a straightforward billing model that separates storage from compute. This gives you the flexibility to scale workloads and manage costs independently. Think of it like a modern cloud storage service — you pay for what you store, not for how often you use it.

1. Storage Is Billed Per GB

OneLake charges based on the volume of data stored, much like Amazon S3 or Azure Blob Storage. If you store 500 GB of data, you’re billed for exactly that. There’s no added cost for simply keeping your data in OneLake. This approach makes it predictable and efficient, especially for teams managing large volumes of structured or semi-structured data.
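As a rough illustration of volume-based billing, the arithmetic is simply stored volume multiplied by a per-GB rate. The rate below is a placeholder, not a real OneLake price; check the current Fabric pricing page for actual figures.

```python
def monthly_storage_cost(stored_gb: float, rate_per_gb_month: float) -> float:
    """Storage cost depends only on the volume stored, not on query activity."""
    if stored_gb < 0 or rate_per_gb_month < 0:
        raise ValueError("stored_gb and rate_per_gb_month must be non-negative")
    return stored_gb * rate_per_gb_month


# Placeholder rate for illustration only; NOT an actual OneLake price.
HYPOTHETICAL_RATE_PER_GB_MONTH = 0.02

cost_500_gb = monthly_storage_cost(500, HYPOTHETICAL_RATE_PER_GB_MONTH)
cost_1000_gb = monthly_storage_cost(1000, HYPOTHETICAL_RATE_PER_GB_MONTH)
```

Doubling the stored volume doubles the storage cost; how often the data is queried does not appear in the formula at all.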

2. No Extra Charges for Read or Write Operations

OneLake doesn’t tack a separate per-transaction fee onto your storage bill when you read from or write to your data. Whether you’re querying data using Power BI, running transformations in Spark, or pushing updates via pipelines, those operations draw on the Fabric capacity you already pay for rather than adding a new line item. This is a big plus for active environments where data is refreshed or reported on frequently: the storage charge depends only on the volume stored, regardless of usage intensity.

3. Storage Remains Active Even When Capacity Is Paused

Your data remains stored, and reachable through shortcuts, even if the capacity it was created in is currently paused. Storage billing continues in the background, but compute usage on that capacity stops, which can help reduce costs. If you have a Lakehouse or Warehouse in a paused capacity, you can’t open it directly, but you can still access its data using a shortcut from a workspace on an active capacity. This makes it easier to manage workloads and budgets without disrupting access to important datasets.

4. Separation of Storage and Compute Enables Flexibility

This design — keeping storage and compute separate — allows teams to plan resources more effectively. You can centralize your storage in OneLake and activate compute only when needed. Shortcuts let you reuse the same datasets across multiple teams and workspaces, all while keeping control of compute consumption.

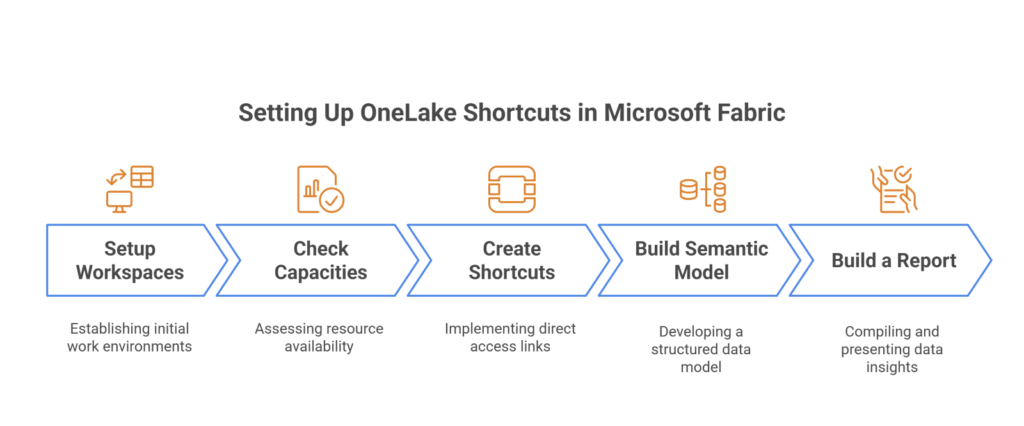

Setting Up OneLake Shortcuts in Microsoft Fabric

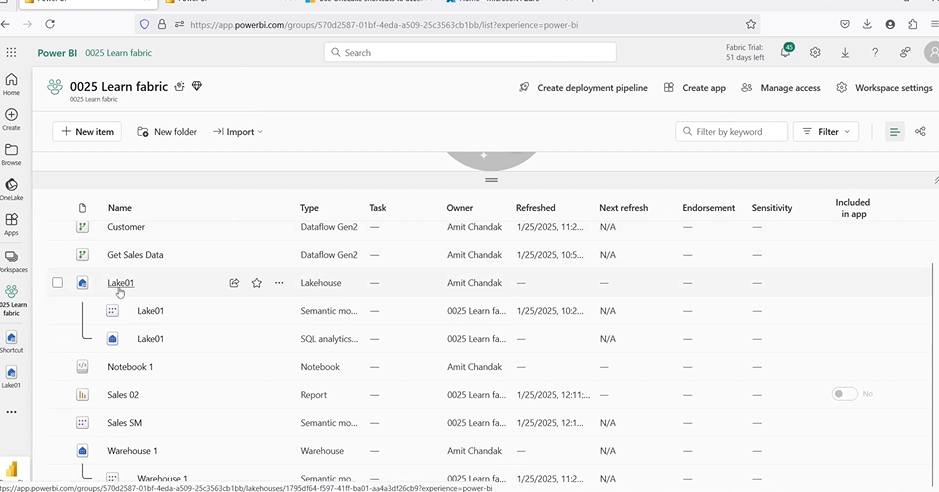

1. Setup Workspaces

Workspace A: Holds the Lakehouse with real data (Lake01). This is where the actual tables like Customer, Date, Geo, Item, and Sales are stored. It’s your core data processing environment.

Workspace B: This workspace will be used for reporting. It starts out empty but will later contain shortcuts to the tables in Workspace A. This setup helps separate compute workloads and avoids putting strain on the data processing layer.

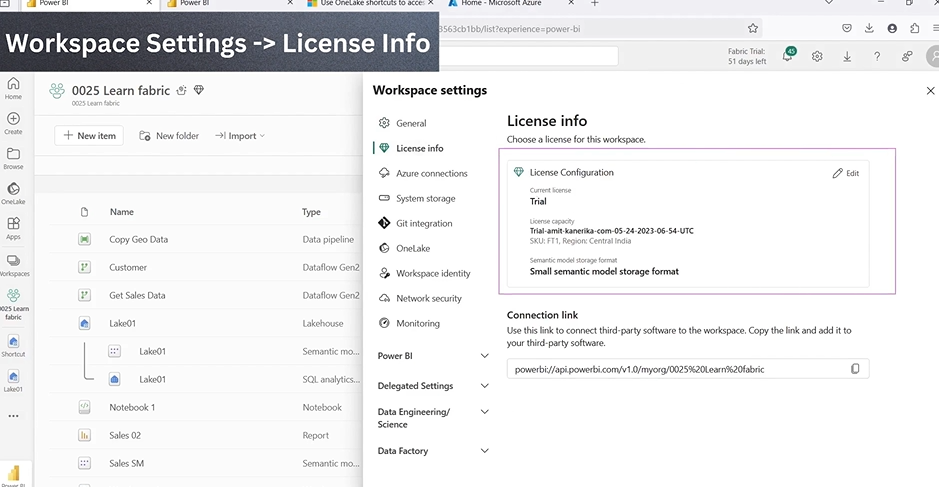

2. Check Capacities

Both workspaces begin on trial capacities, which is common in initial development or testing. Later in the process, you will switch Workspace A to a paid Fabric capacity to handle heavy data loads more reliably. Workspace B can remain on a trial or lighter capacity since it will be used mostly for reading and reporting.

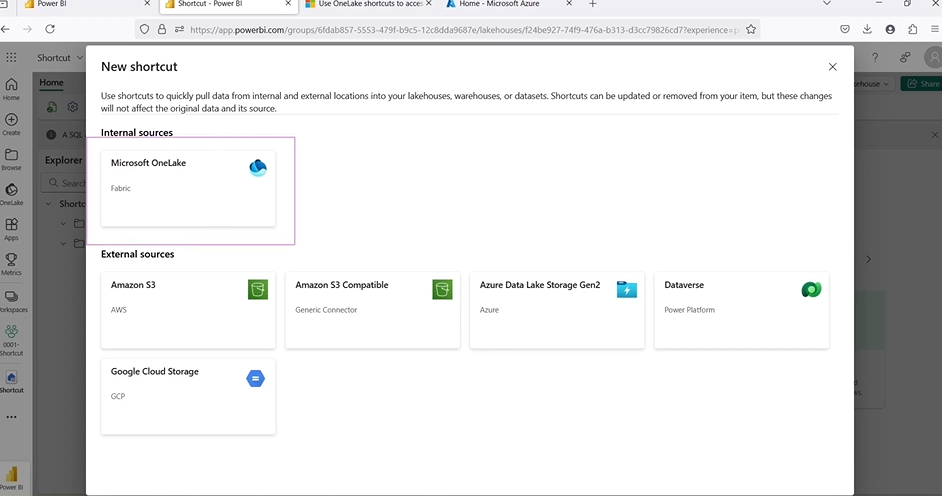

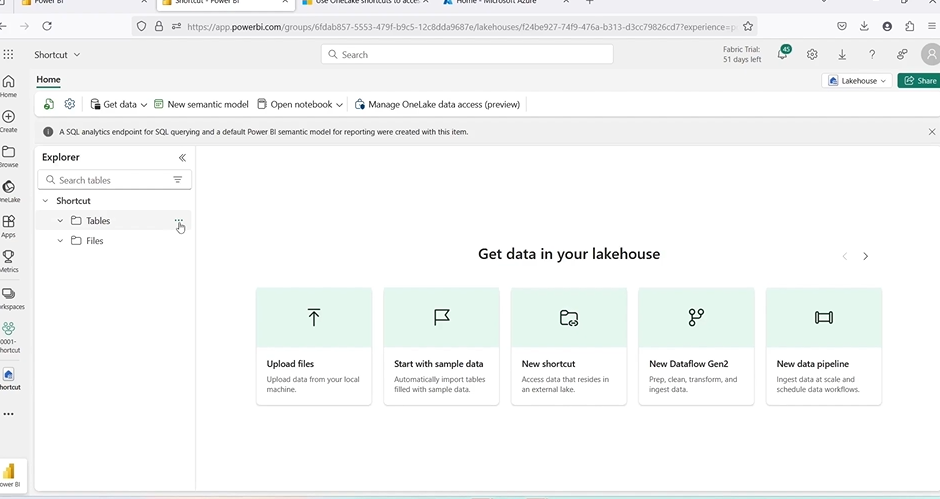

3. Create Shortcuts

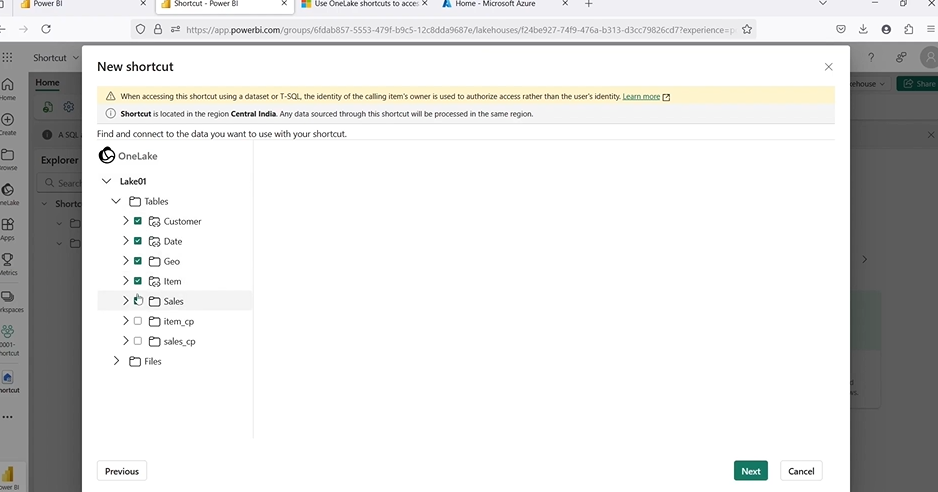

In Workspace B:

- Create a new Lakehouse (this Lakehouse will not store any real data).

- Click on the “New Shortcut” option inside the Lakehouse.

- Point the shortcut to Workspace A’s Lake01.

- Select the required tables: Customer, Date, Geo, Item, and Sales.

- Confirm and create the shortcuts.

At this point, Workspace B will have access to the tables, but they are not duplicated. All data still lives in Workspace A, and Workspace B only references it through shortcuts.
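The same shortcuts can, in principle, be created programmatically instead of through the UI. The sketch below only builds the request URL and JSON body for each table and sends nothing. The endpoint and body shape follow the Fabric REST API's Create Shortcut operation as we understand it, so verify them against the official reference before relying on this. The IDs are placeholders, and authentication is left out entirely.

```python
FABRIC_API = "https://api.fabric.microsoft.com/v1"
TABLES = ["Customer", "Date", "Geo", "Item", "Sales"]


def shortcut_request(consumer_workspace_id, consumer_lakehouse_id,
                     source_workspace_id, source_lakehouse_id, table):
    """Return (url, body) for one table shortcut; nothing is sent."""
    url = (f"{FABRIC_API}/workspaces/{consumer_workspace_id}"
           f"/items/{consumer_lakehouse_id}/shortcuts")
    body = {
        "path": "Tables",        # where the shortcut appears in Workspace B
        "name": table,
        "target": {
            "oneLake": {         # the source is another OneLake location
                "workspaceId": source_workspace_id,
                "itemId": source_lakehouse_id,
                "path": f"Tables/{table}",
            }
        },
    }
    return url, body


# Placeholder IDs; real calls need the actual workspace and lakehouse GUIDs.
requests_to_send = [
    shortcut_request("<workspace-b-id>", "<lakehouse-b-id>",
                     "<workspace-a-id>", "<lake01-id>", table)
    for table in TABLES
]
```

Each `(url, body)` pair would then be sent as an authenticated POST with the HTTP client of your choice.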

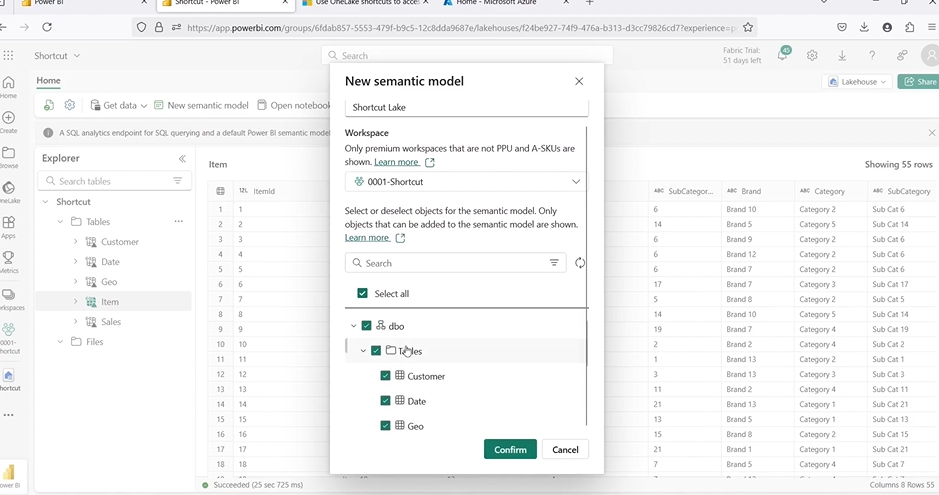

4. Build Semantic Model

Use the shortcut tables inside Workspace B to build a semantic model. This model will serve as the base for your Power BI reports. Set up key relationships such as:

- Item ID in Sales → Item table

- Customer ID in Sales → Customer table

- Geo ID in Sales → Geo table

- Sales Date → Date table

This step ensures your model is logically connected and ready for reporting.
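Before wiring up the relationships, it can help to confirm that every key in Sales has a match in its dimension table. The following is a minimal sketch in plain Python over in-memory rows. The column names (`ItemID`, `CustomerID`, `GeoID`, `SalesDate`, `Date`) and the sample rows are assumptions made for illustration, not the actual schema of Lake01.

```python
# Mirrors the four relationships listed above; column names are assumed.
RELATIONSHIPS = [
    ("Sales", "ItemID", "Item", "ItemID"),
    ("Sales", "CustomerID", "Customer", "CustomerID"),
    ("Sales", "GeoID", "Geo", "GeoID"),
    ("Sales", "SalesDate", "Date", "Date"),
]


def orphan_keys(tables, relationships):
    """Return {(fact, column): missing keys} for keys with no dimension match."""
    problems = {}
    for fact, fact_col, dim, dim_col in relationships:
        dim_keys = {row[dim_col] for row in tables[dim]}
        missing = {row[fact_col] for row in tables[fact]} - dim_keys
        if missing:
            problems[(fact, fact_col)] = missing
    return problems


# Made-up sample rows; the second sale points at an item that does not exist.
sample = {
    "Item": [{"ItemID": 1}, {"ItemID": 2}],
    "Customer": [{"CustomerID": 10}],
    "Geo": [{"GeoID": 100}],
    "Date": [{"Date": "2024-01-01"}],
    "Sales": [
        {"ItemID": 1, "CustomerID": 10, "GeoID": 100, "SalesDate": "2024-01-01"},
        {"ItemID": 3, "CustomerID": 10, "GeoID": 100, "SalesDate": "2024-01-01"},
    ],
}

problems = orphan_keys(sample, RELATIONSHIPS)
```

In this sample, only the Sales-to-Item relationship reports a problem, because item 3 has no row in the Item table.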

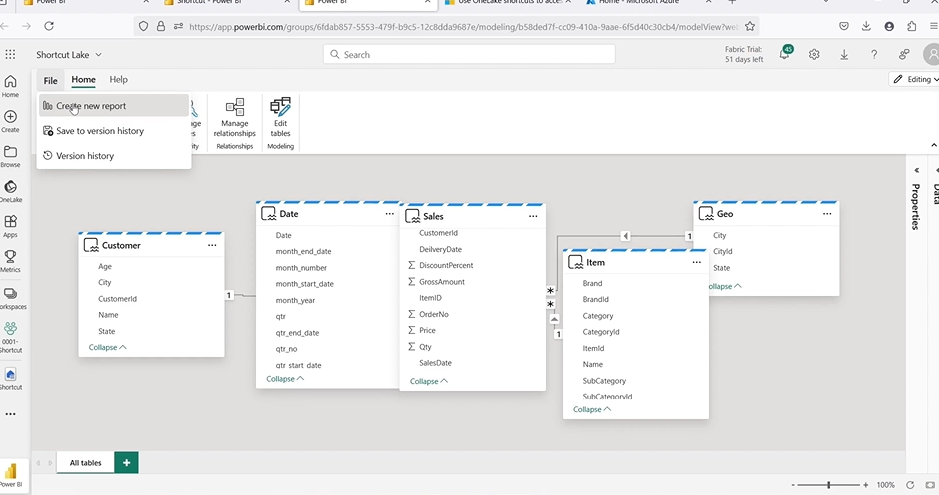

5. Build a Report

Create a simple Power BI report using the semantic model — for example, a chart showing total quantity by item category.

You can save this report in Workspace B, but it’s highly recommended to use a third workspace for reporting. This gives you better control over access, versioning, and user permissions — especially if different teams are handling modeling and reporting.

Switching to Paid Capacity

Once the setup is tested and working on trial capacities, it’s time to move Workspace A — the one holding your actual data — to a paid Fabric capacity. This ensures better performance, more consistent availability, and avoids the limitations of trial usage.

1. Turn on your on-demand Fabric capacity

Go to the Azure portal or Microsoft 365 admin center, depending on how your organization manages Fabric. Locate your on-demand capacity (such as F2, F4, etc.), and start it. You’ll know it’s active when the status changes and you see the “Pause” option enabled — this means the capacity is now running and ready to handle workloads.

2. Assign Workspace A to the paid capacity

Open Microsoft Fabric (app.powerbi.com), navigate to Workspace A, and click the settings icon in the top-right corner. Under “Settings” > “Licenses”, you’ll see the current capacity assignment. Click Edit and, from the dropdown list, choose the active paid capacity that you just started.

This step shifts all compute operations for that workspace — including Spark jobs, SQL queries, and pipeline runs — to the paid capacity. This helps you avoid throttling and resource limits often seen in the trial setup.

3. Confirm it’s active under workspace settings

After assigning the workspace to the new capacity, double-check the change by refreshing the workspace page and going back to the “Licenses” section in settings. You should now see that Workspace A is running on the Fabric capacity you selected.

This change doesn’t affect the data itself — the Lakehouse and its tables remain intact. But from this point forward, any processing done in Workspace A will use the more powerful paid capacity, giving you higher throughput and more stability for production-level workloads.

Testing Access When Source Capacity Is Paused

Now it’s time to see how OneLake Shortcuts handles real-world conditions — like a workspace going offline.

1. Pause the paid capacity assigned to Workspace A

In the Azure portal, pause the Fabric capacity that Workspace A is using. This simulates a scenario where compute is turned off, either to save costs or for planned downtime.

2. Try opening the Lakehouse in Workspace A — it fails

Go to Lake01 in Workspace A and try to open it. You’ll get an error because the workspace no longer has active compute. The data is still stored, but you can’t access it directly without a running capacity.

3. Go to Workspace B (still on trial capacity)

Now switch to Workspace B, which contains the shortcuts pointing to Lake01. This workspace is still running and has compute available.

4. Open the shortcut Lakehouse and report — they work

Open the Lakehouse in Workspace B. The shortcut tables load without issues. Open the Power BI report — it still shows the data and refreshes as expected.
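The outcome of this test boils down to one rule: direct access needs the source workspace's capacity, while access through a shortcut needs only the consumer's. Here is a small Python model of that rule as described in this walkthrough. The class and function names are hypothetical, and this is a sketch of the behavior, not Fabric's actual access logic.

```python
from dataclasses import dataclass


@dataclass
class Workspace:
    name: str
    capacity_active: bool


def can_open_directly(workspace: Workspace) -> bool:
    """Opening an item in its own workspace needs that workspace's capacity."""
    return workspace.capacity_active


def can_read_via_shortcut(source: Workspace, consumer: Workspace) -> bool:
    """Reading through a shortcut needs only the consumer's capacity.

    The source workspace is accepted but deliberately ignored: its capacity
    state does not affect the result in this model.
    """
    return consumer.capacity_active


workspace_a = Workspace("Workspace A", capacity_active=False)  # paused
workspace_b = Workspace("Workspace B", capacity_active=True)   # still running

direct_ok = can_open_directly(workspace_a)
shortcut_ok = can_read_via_shortcut(workspace_a, workspace_b)
```

With Workspace A paused, `direct_ok` is false and `shortcut_ok` is true, matching steps 2 and 4 above.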

Best Practices for Structuring Workspaces with OneLake Shortcuts

For a scalable and clean setup in Microsoft Fabric, it’s a good idea to separate your workloads into purpose-specific workspaces. This approach helps you manage permissions, organize roles, and control performance more effectively — especially when you’re using OneLake Shortcuts to share data across layers.

Workspace 1: Raw Data

Used for storing raw or curated data in Lakehouses or Warehouses. This is where your ingestion, transformation, and ETL tasks run. Data engineers typically manage it, and it is compute-heavy.

Workspace 2: Shortcuts and Semantic Models

This is where you create OneLake Shortcuts pointing to the raw data from Workspace 1. You also build semantic models here, which define tables, relationships, and business logic used by reports. It acts as a bridge between the data layer and reporting.

Workspace 3: Reports

Dedicated to report building and publishing. Analysts and business users use this layer to create dashboards on top of the semantic models. This workspace stays lean and doesn’t run heavy compute workloads.

Kanerika: Helping You Build Smarter Data Architectures with Microsoft Fabric

Implementing Microsoft Fabric the right way — especially with features like OneLake Shortcuts and layered workspace design — can make a big difference in how teams access, manage and act on data. At Kanerika, we help organizations do exactly that.

As a certified Microsoft partner with deep expertise in data and AI, Kanerika works closely with businesses to integrate Fabric into real-world workflows. From setting up multi-capacity environments to designing shortcut-driven models that avoid duplication, we build practical, scalable solutions tailored to your goals.

Our hands-on experience across industries means we don’t just recommend best practices—we implement them fast. Whether you’re modernizing reporting, consolidating data across teams, or building for scale, we ensure your Fabric environment is built to deliver results from day one.

Partner with Kanerika and take the next step toward faster insights, cleaner architecture, and smarter decisions.

Frequently Asked Questions

What is the difference between OneLake and data lake?

OneLake is Microsoft’s unified, SaaS-based data lake built into Microsoft Fabric, whereas a traditional data lake is a generic storage repository requiring separate provisioning and management. OneLake eliminates infrastructure overhead by providing automatic data organization, built-in governance, and seamless integration across all Fabric workloads. Traditional data lakes demand manual configuration for security, access controls, and compute connections. OneLake also supports Delta Parquet format natively, enabling ACID transactions without additional tooling. Kanerika helps enterprises migrate from legacy data lakes to OneLake with minimal disruption—connect with our Fabric specialists today.

What is OneLake in Microsoft Fabric?

OneLake is the foundational storage layer in Microsoft Fabric that serves as a single, unified data lake for your entire organization. It automatically provisions with every Fabric tenant and stores all data in Delta Parquet format, ensuring consistency across analytics workloads. OneLake centralizes data from warehouses, lakehouses, and pipelines while enforcing consistent security and governance policies. Unlike standalone storage accounts, OneLake integrates natively with Power BI, Data Factory, and Synapse experiences. Kanerika’s Microsoft Fabric consultants can architect your OneLake environment for optimal performance—schedule a discovery call.

How to access Fabric OneLake?

You can access Fabric OneLake through multiple pathways including the Microsoft Fabric portal, OneLake file explorer for Windows, Azure Storage Explorer, and APIs compatible with ADLS Gen2. Developers can connect programmatically using REST APIs or SDKs, while business users leverage the browser-based Fabric interface. OneLake shortcuts also enable access to external data sources without copying data. Permissions are managed through Fabric workspace roles and item-level security. Kanerika provides hands-on training and implementation support to help your teams access and utilize OneLake effectively—reach out for a guided walkthrough.

What is the difference between OneLake and lakehouse?

OneLake is the underlying storage infrastructure for all Microsoft Fabric data, while a lakehouse is a specific workload item built on top of OneLake that combines data lake flexibility with data warehouse capabilities. Think of OneLake as the foundation and the lakehouse as an analytics application layer. The lakehouse enables SQL queries, schema enforcement, and BI reporting while storing files in OneLake’s Delta Parquet format. Multiple lakehouses can exist within a single OneLake environment. Kanerika architects lakehouse solutions tailored to your analytics requirements—let us design your optimal Fabric architecture.

Is OneLake part of Fabric?

Yes, OneLake is an integral, inseparable component of Microsoft Fabric that provisions automatically with every Fabric tenant. You cannot deploy Fabric without OneLake—it serves as the unified storage backbone connecting all Fabric experiences including Data Factory, Synapse, and Power BI. This tight integration eliminates data silos and ensures consistent governance across your analytics estate. Unlike add-on storage solutions, OneLake requires no separate licensing or configuration. Every workspace in Fabric stores its data within OneLake by default. Kanerika helps enterprises maximize their Fabric investment by optimizing OneLake configurations—contact us for a free assessment.

What are the benefits of OneLake?

OneLake delivers unified data storage, eliminating silos by centralizing all organizational data in one governed location. Key benefits include automatic provisioning without infrastructure management, native Delta Parquet format for ACID compliance, and single security model across workloads. OneLake shortcuts enable data virtualization from external sources without duplication, reducing storage costs. Built-in integration with Power BI enables Direct Lake mode for faster reporting. Organizations gain simplified governance, reduced data movement, and consistent access patterns across analytics teams. Kanerika’s data platform experts help enterprises unlock these OneLake benefits faster—book a consultation today.

What is the difference between Microsoft Fabric and Azure?

Microsoft Fabric is a unified SaaS analytics platform, while Azure is a comprehensive cloud infrastructure offering hundreds of discrete services. Fabric integrates data engineering, data science, warehousing, and BI into one platform with shared storage via OneLake. Azure requires you to provision and connect separate services like Azure Data Lake, Synapse Analytics, and Data Factory individually. Fabric simplifies licensing with capacity-based pricing, whereas Azure involves multiple service-specific costs. Fabric runs on Azure infrastructure but abstracts away the complexity. Kanerika specializes in both Azure and Fabric migrations—let us assess which path suits your enterprise.

Is Microsoft Fabric the same as Snowflake?

No, Microsoft Fabric and Snowflake are distinct platforms with different architectures. Fabric is an end-to-end analytics platform encompassing data integration, engineering, warehousing, science, and BI—all unified by OneLake storage. Snowflake focuses primarily on cloud data warehousing and data sharing capabilities. Fabric offers native integration with the Microsoft ecosystem including Power BI, Teams, and Microsoft 365. Snowflake provides cross-cloud portability and a consumption-based pricing model. Both support structured and semi-structured data, but Fabric’s lakehouse approach offers broader workload coverage. Kanerika implements both platforms—contact us to determine which aligns with your data strategy.

What is the difference between data lake and lakehouse?

A data lake stores raw, unstructured data without schema enforcement, while a lakehouse combines data lake scalability with data warehouse capabilities like ACID transactions and SQL querying. Lakehouses impose structure through Delta Lake or similar formats, enabling reliable analytics on large datasets. Data lakes often suffer from data swamps due to poor governance, whereas lakehouses enforce schema and data quality. In Microsoft Fabric, the lakehouse workload stores data in OneLake using Delta Parquet, bridging both paradigms. Kanerika designs lakehouse architectures that maximize performance and governance—start with our expert consultation.

Does Microsoft Fabric require OneLake?

Yes, Microsoft Fabric requires OneLake as its foundational storage layer; the two are inseparable by design. OneLake provisions automatically with every Fabric tenant and cannot be disabled or replaced. All Fabric workloads, including lakehouses, warehouses, and pipelines, store data in OneLake by default. This mandatory integration ensures consistent data governance, security, and access patterns across the platform. OneLake shortcuts can reference external data, but the core Fabric storage remains OneLake-based. Kanerika’s Fabric architects help enterprises leverage this unified architecture effectively; reach out for implementation guidance.

Is Microsoft Fabric similar to Databricks?

Microsoft Fabric and Databricks share lakehouse architecture principles but differ significantly in scope and integration. Fabric is an all-in-one SaaS platform covering data integration, warehousing, engineering, science, and BI with OneLake as unified storage. Databricks focuses on data engineering, machine learning, and collaborative notebooks built on Apache Spark. Fabric integrates tightly with Power BI and Microsoft 365, while Databricks offers deeper MLOps capabilities and cross-cloud flexibility. Both use Delta Lake format for reliable data management. Kanerika implements both Databricks and Microsoft Fabric solutions—contact us to evaluate which platform fits your requirements.

What is a OneLake shortcut?

OneLake shortcuts are pointers that give access to data stored elsewhere, whether in another OneLake location (such as a different workspace or lakehouse) or in external storage, without physically copying it. External sources include Azure Data Lake Storage Gen2, Amazon S3, Google Cloud Storage, and Dataverse. This virtualization approach eliminates data duplication, reduces storage costs, and ensures you always query current data. Shortcuts appear as folders within OneLake and support the same security and governance controls as native data. They enable seamless integration of multi-cloud data into your Microsoft Fabric analytics. Kanerika configures OneLake shortcuts to unify your distributed data estate; schedule a technical session with our team.

How is data stored in OneLake?

Data in OneLake is stored in Delta Parquet format, an open-source columnar storage format with ACID transaction support. OneLake organizes data hierarchically by tenant, workspace, and item, similar to ADLS Gen2 folder structures. All Fabric workloads—lakehouses, warehouses, and pipelines—write data directly to OneLake without requiring separate storage configuration. The Delta format enables versioning, time-travel queries, and efficient upserts. OneLake stores both structured tables and unstructured files, accessible via APIs compatible with Azure Data Lake Storage Gen2. Kanerika optimizes OneLake data organization for performance and cost efficiency—talk to our data architects.
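Because OneLake exposes ADLS Gen2-compatible paths, the workspace/item/table hierarchy maps onto a URI. The helper below is a small sketch that assembles such a URI, following the `abfss://` pattern as we understand OneLake to expose it. The function name is our own, and workspace or item names containing spaces may need to be replaced with their GUIDs.

```python
def onelake_table_uri(workspace: str, lakehouse: str, table: str) -> str:
    """Build an ADLS Gen2-style URI for a lakehouse table in OneLake."""
    return (f"abfss://{workspace}@onelake.dfs.fabric.microsoft.com/"
            f"{lakehouse}.Lakehouse/Tables/{table}")


# Names taken from the walkthrough earlier in this post, for illustration.
uri = onelake_table_uri("WorkspaceA", "Lake01", "Sales")
```

A Spark notebook or another ADLS Gen2-aware client could then be pointed at this URI, subject to the caller having permission on the item.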

What is the difference between Direct Lake on OneLake and Direct Lake on SQL?

Direct Lake on OneLake reads Parquet files directly from OneLake storage into Power BI memory, bypassing imports and providing near-real-time reporting. Direct Lake on SQL endpoint queries the lakehouse SQL endpoint, translating DAX to SQL queries against Delta tables. OneLake mode offers faster performance for large datasets since data loads directly without query translation. SQL mode provides fallback capabilities when data requires transformations or when Direct Lake limitations apply. OneLake mode handles billions of rows efficiently, while SQL mode suits complex calculated columns. Kanerika configures optimal Direct Lake modes for your reporting workloads—connect with our Power BI experts.

What is the difference between Microsoft Fabric and Service Fabric?

Microsoft Fabric is a unified data analytics platform featuring OneLake, data integration, and BI capabilities, while Service Fabric is a distributed systems platform for building microservices and containerized applications. They serve entirely different purposes despite the shared naming. Service Fabric manages application deployment, scaling, and failover for developers building cloud-native applications. Microsoft Fabric targets data engineers, analysts, and scientists working on analytics workloads. Service Fabric predates Microsoft Fabric by nearly a decade and powers Azure’s own infrastructure. Kanerika focuses on Microsoft Fabric implementations for analytics modernization—contact us for your data platform needs.

Does Microsoft have a data lake?

Yes, Microsoft offers multiple data lake solutions including OneLake within Microsoft Fabric and Azure Data Lake Storage Gen2 as standalone cloud storage. OneLake is a fully managed, SaaS-based data lake that eliminates infrastructure provisioning and integrates natively with Fabric analytics workloads. Azure Data Lake Storage Gen2 provides scalable, cost-effective storage with hierarchical namespace capabilities for traditional Azure deployments. OneLake represents Microsoft’s latest evolution, designed specifically for unified analytics rather than general-purpose storage. Both support open formats like Parquet and Delta Lake. Kanerika migrates organizations to OneLake from legacy storage solutions—request your migration assessment today.

Is Microsoft Fabric a data lake?

Microsoft Fabric includes a data lake—OneLake—but extends far beyond storage alone. Fabric is a comprehensive analytics platform encompassing data integration, engineering, warehousing, real-time analytics, data science, and business intelligence. OneLake serves as the unified storage foundation, but Fabric adds compute engines, orchestration tools, and visualization capabilities. Calling Fabric just a data lake undersells its scope; it competes with complete analytics stacks rather than simple storage services. OneLake differentiates Fabric by eliminating storage silos across all workloads. Kanerika helps enterprises harness Fabric’s full capabilities beyond basic storage—explore our Fabric implementation services.

What is replacing Azure Synapse?

Microsoft Fabric is positioned as the successor to Azure Synapse Analytics, consolidating its capabilities into a unified SaaS platform with OneLake storage. Fabric incorporates Synapse’s data engineering, warehousing, and integration features while adding native Power BI, real-time analytics, and Data Activator capabilities. Microsoft continues supporting Synapse but encourages new implementations on Fabric. The migration path from Synapse to Fabric benefits from architectural similarities and shared workspace concepts. Organizations gain simplified governance and reduced infrastructure management by moving to Fabric. Kanerika accelerates Synapse to Microsoft Fabric migrations with proven methodologies—let us plan your transition.

Is Synapse end of life?

Azure Synapse Analytics is not officially end of life, but Microsoft is directing strategic investment toward Microsoft Fabric. Synapse remains supported and receives maintenance updates, though major new features appear in Fabric first. Microsoft encourages customers to evaluate Fabric for new projects while existing Synapse workloads continue running. The Synapse workspace experience integrates with Fabric, providing migration pathways when organizations are ready. No formal deprecation timeline has been announced, but Fabric represents Microsoft’s future analytics direction with OneLake at its core. Kanerika guides organizations through Synapse-to-Fabric transitions—contact us for migration planning.

Is Fabric replacing Azure?

No, Microsoft Fabric is not replacing Azure—it runs on Azure infrastructure as a specialized analytics service. Fabric consolidates multiple Azure analytics tools into one unified platform with OneLake storage, but Azure continues offering hundreds of compute, networking, security, and application services. Fabric simplifies analytics deployments that previously required combining Azure Data Factory, Synapse, and Power BI separately. Organizations still use Azure for virtual machines, databases, web apps, and infrastructure beyond analytics scope. Fabric complements Azure rather than replacing it. Kanerika implements both Azure solutions and Microsoft Fabric—discuss your complete cloud strategy with our architects.

What is the difference between data lake and data fabric?

A data lake is a centralized storage repository for raw structured and unstructured data, while a data fabric is an architectural approach that integrates data across distributed systems using metadata-driven automation. Data lakes store information; data fabrics connect and govern information regardless of location. Microsoft Fabric embodies data fabric principles by unifying analytics workloads through OneLake, applying consistent governance, and enabling seamless data access across sources via shortcuts. Data fabric architectures reduce data silos without requiring all data in one physical location. Kanerika designs data fabric solutions leveraging Microsoft Fabric—schedule your architecture workshop today.
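
As a rough illustration of how a shortcut connects data without copying it, the sketch below assembles the kind of request used to create a OneLake-to-OneLake shortcut through the Fabric REST API. The endpoint and body shape follow our understanding of the Create Shortcut operation and should be checked against the current API reference; every ID and name is a hypothetical placeholder, and the code only builds the request rather than sending it (a real call also needs an authenticated bearer token).

```python
# Sketch only: builds, but does not send, a "create shortcut" request.
# Endpoint and body shape are assumptions to verify against the Fabric REST API docs.
import json

FABRIC_API = "https://api.fabric.microsoft.com/v1"


def build_shortcut_request(
    consumer_workspace_id: str,
    consumer_item_id: str,
    shortcut_name: str,
    shortcut_path: str,
    source_workspace_id: str,
    source_item_id: str,
    source_path: str,
) -> tuple[str, dict]:
    """Return (url, json_body) for a shortcut that points at another Fabric item."""
    url = (
        f"{FABRIC_API}/workspaces/{consumer_workspace_id}"
        f"/items/{consumer_item_id}/shortcuts"
    )
    body = {
        "name": shortcut_name,   # how the shortcut appears in the consuming item
        "path": shortcut_path,   # where it is placed, e.g. "Tables" or "Files/shared"
        "target": {
            "oneLake": {         # the data stays here; only a reference is created
                "workspaceId": source_workspace_id,
                "itemId": source_item_id,
                "path": source_path,
            }
        },
    }
    return url, body


if __name__ == "__main__":
    url, body = build_shortcut_request(
        "consumer-workspace-guid", "consumer-lakehouse-guid",
        "orders", "Tables",
        "source-workspace-guid", "source-lakehouse-guid", "Tables/orders",
    )
    print(url)
    print(json.dumps(body, indent=2))
```

Note that the body carries only identifiers and paths. No data is part of the request, which is the practical meaning of connecting rather than storing.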

Is OneLake a data warehouse?

No, OneLake is not a data warehouse. It is a unified data lake that serves as the storage foundation for Microsoft Fabric. Data warehouses in Fabric are separate workload items built on top of OneLake, providing SQL-based schema enforcement and an optimized query engine; unlike a traditional SQL Server database, they do not rely on user-managed indexes, because the data is stored as Delta tables in OneLake. OneLake stores raw files and Delta tables, while the warehouse item adds relational database capabilities with T-SQL support. You can have multiple warehouses and lakehouses within OneLake, each serving different analytical purposes. OneLake provides storage; warehouses provide structured query experiences. Kanerika implements Fabric warehouses optimized for your reporting needs. Connect with our data warehouse specialists.