Organizations today generate massive volumes of data from applications, IoT devices, websites, and enterprise systems. As businesses move toward AI-driven decision-making, the need for structured, reliable, and analytics-ready data has become more critical than ever. This shift has pushed enterprises to modernize their data infrastructure, and data-warehouse architecture has become the backbone of business-intelligence and analytics initiatives.

The importance of data warehousing is reflected in market growth. According to industry reports, the global data warehouse market is expected to exceed $30 billion by 2030, driven by the rapid adoption of cloud platforms, advanced analytics, and AI workloads. Additionally, organizations that implement modern data architectures report significantly faster query performance and improved decision-making capabilities compared to traditional data management approaches.

In this blog, we will explore what data warehouse architecture is, how it works, its key layers and components, and the different architectural models used by modern enterprises.

Modernize Data and RPA Platforms for Enterprise Automation

Learn how organizations modernize legacy data and RPA systems to improve scalability, governance, and operational efficiency.

Key Takeaways

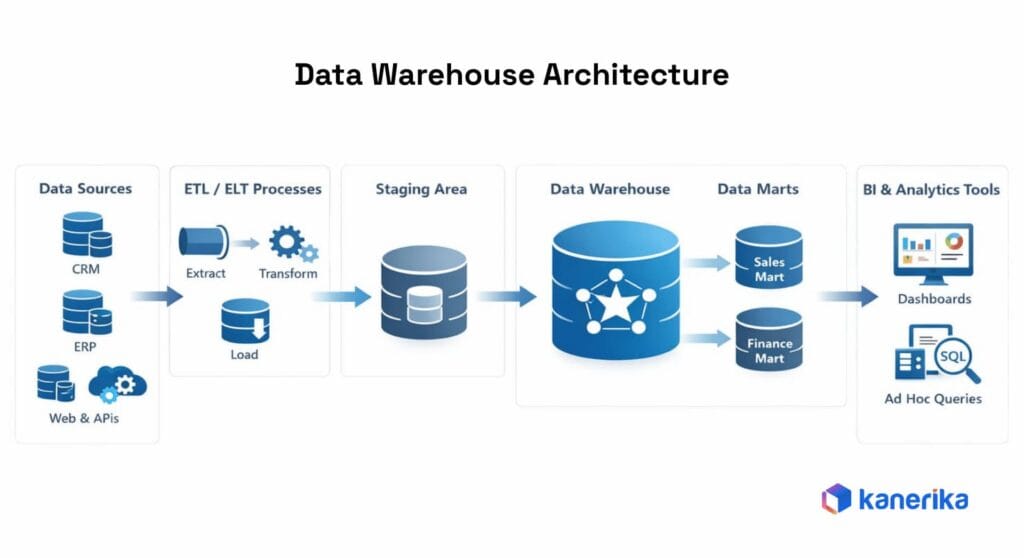

- Data warehouse architecture provides the structural framework for collecting, transforming, storing, and accessing data for analytics and business intelligence.

- Data flows through multiple stages, including ingestion, transformation (ETL/ELT), staging, centralized storage, and finally analytics or BI tools for reporting.

- A layered architecture improves scalability and maintainability, typically consisting of source, integration, staging, storage, and presentation layers.

- Core components such as ETL tools, metadata management, data marts, and BI platforms work together to enable reliable data processing and self-service analytics.

- Modern data warehouse architectures are cloud-native, using scalable platforms, separating compute and storage, enabling real-time data pipelines, and integrating with data lakes or lakehouses.

- Successful data warehouse implementations require strong governance, performance optimization, and scalable design to handle growing data volumes and evolving analytics needs.

What Is Data Warehouse Architecture

Organizations generate data from dozens of systems simultaneously: CRMs, ERPs, IoT devices, web applications, and third-party platforms. None of these systems produces data in a format ready for analysis, and none were built to work together. A data warehouse solves this by acting as a centralized, governed repository where data from all those sources lands in a consistent, queryable structure.

Data warehouse architecture is the design of that entire system. It defines how data moves from source systems into the warehouse, how it gets transformed and stored, and how analysts and BI tools access it. Every component in that chain sits within the architecture: ingestion pipelines, storage schemas, processing layers, and access controls.

A data warehouse is optimized for analytical workloads: historical queries, aggregations, and reporting. It differs fundamentally from an operational database, which handles transactional workloads like processing orders or updating records. Building on the same infrastructure in both directions degrades performance.

Getting the architecture right from the start matters because decisions made here shape query speed, storage costs, governance, and how well the system handles growing data volumes.

How Data Warehouse Architecture Works (End-to-End Data Flow)

Data moves through a defined sequence before it reaches an analyst. Each stage plays a specific role in preparing data for analysis, and each one creates a failure point if it isn’t properly designed.

1. Data Ingestion from Multiple Source Systems

Data enters the warehouse from CRMs, ERPs, transaction databases, marketing platforms, flat files, IoT streams, and external APIs. Each source runs on its own schedule and schema. The ingestion layer extracts data from these systems using either full-load (copying all records periodically) or incremental-load (capturing only new or changed records since the last run).

Tools like Azure Data Factory, Fivetran, and Apache Kafka handle this stage. The ingestion layer’s responsibility is reliability: pulling data in on schedule without losing records, creating duplicates, or silently failing when a source system changes.

2. Data Transformation Through ETL or ELT Pipelines

Raw ingested data is rarely ready for analysis. Transformation cleans the data, resolves field definition conflicts across sources, applies business rules, and restructures it into a consistent format. In ETL (Extract, Transform, Load), transformation runs before data enters the warehouse on a separate infrastructure.

In ELT (Extract, Load, Transform), raw data is loaded first, and transformation runs within the warehouse using its own compute. Cloud warehouses like Snowflake, BigQuery, and Azure Synapse have made ELT the more common approach because warehouse compute scales elastically, and transformation logic is easier to iterate on when it lives inside the platform.

3. Data Staging and Processing Layers

Before reaching final storage, data passes through a staging area. This temporary zone decouples source systems from the warehouse, so a pipeline failure in one place doesn’t cascade across the system. The staging layer also preserves a raw copy of ingested data, which teams can use to reprocess records when transformation logic changes.

Some architectures include an operational data store (ODS) alongside staging, which holds near-real-time data for operational reporting separately from the main analytical warehouse. The processing layer above storage handles compute-heavy aggregations and query preparation, typically through an OLAP engine.

4. Storage in the Centralized Data Warehouse

After transformation, the data is loaded into the core storage layer and organized into fact and dimension tables. Fact tables store measurable events like sales transactions or page views. Dimension tables store descriptive attributes like customer names, product categories, and date hierarchies.

Star schemas structure these into a central fact table surrounded by dimensions, minimizing join complexity for BI queries. Snowflake schemas further normalize dimensions, trading query simplicity for storage efficiency. The storage layer is read-optimized by design, which is fundamentally different from transactional databases tuned for write performance.

5. Data Access Through BI and Analytics Tools

Business users and analysts access the warehouse through BI tools such as Power BI, Tableau, and Looker, as well as SQL clients, data science notebooks, and APIs. A semantic layer often sits in front of the physical schema, mapping technical table and column names to business-friendly terms so analysts work with “monthly recurring revenue” rather than raw schema identifiers. Role-based access controls enforce data visibility rules at this layer, ensuring users see only what their roles permit. The reliability of everything at this layer depends directly on the quality of every layer below it.

Key Layers of Data Warehouse Architecture

A well-structured warehouse separates responsibilities into distinct layers. Each layer has a defined job, and the boundaries between them matter for maintainability and troubleshooting.

1. Data Source Layer

The source layer is everything upstream of the warehouse: operational databases, ERP and CRM platforms, marketing tools, financial systems, APIs, flat files, and event streams. These systems carry their own schema conventions, update frequencies, and data quality characteristics. A “customer” in the CRM is often a different concept than a “customer” in the billing system.

Teams that skip a thorough source inventory before building pipelines spend months later fixing broken joins and mismatched records. The source layer doesn’t belong to the warehouse, but it determines what data is available for ingestion and sets the baseline for data quality throughout the downstream pipeline.

2. Data Integration Layer (ETL or ELT)

The integration layer moves data from source systems into the warehouse. It handles extraction, transformation, scheduling, error handling, and lineage tracking. ETL processes transform data before loading it. ELT loads raw data first and transforms it inside the warehouse.

The integration layer enforces business rules, standardizes field definitions across sources, and resolves duplicate records. Pipeline monitoring belongs here too: most production failures originate at this layer, and without alerting on failures or schema changes, bad data propagates into reports before anyone notices.

3. Staging Layer

The staging layer is a temporary holding area between source systems and the core warehouse tables. Raw data lands here before transformation begins. It serves as a buffer so source system failures don’t directly impact the warehouse, and it preserves a raw snapshot for reprocessing when downstream logic changes. Most production warehouses use a persistent staging area rather than a transient one, keeping a rolling window of raw data available for debugging and audits.

4. Data Warehouse Storage Layer

The core storage layer holds cleaned, transformed data in a structured format optimized for analytical queries. Schema design at this layer determines query performance and long-term maintainability. Star schemas suit most BI workloads because they minimize join complexity.

Snowflake schemas offer greater normalization, but at the cost of query complexity. This layer also houses data marts, which are domain-specific subsets of the warehouse organized by business function. Summary and aggregation tables often live here too, pre-computing expensive recurring queries to keep dashboards fast.

5. Data Presentation or Analytics Layer

The presentation layer is what business users interact with daily. It includes BI tools, SQL interfaces, data science notebooks, and APIs that connect to the warehouse. A semantic layer often sits at this level, translating the physical data model into business-friendly metrics and dimensions so analysts don’t need to understand the underlying schema. Data marts surface at this layer as focused, departmental views. Performance and accuracy here are direct functions of how well the source, integration, staging, and storage layers were built.

Core Components of Data Warehouse Architecture

The layers define the structure. The components are the systems and tools that operate within them.

1. Data Warehouse Database

The warehouse database is the central repository for structured, analytics-ready data. It’s typically a columnar or relational database optimized for read-heavy workloads rather than transactional writes. In cloud-native deployments, teams use managed services like Azure Synapse Analytics, Snowflake, Google BigQuery, or Amazon Redshift.

On-premises options such as Teradata and Oracle remain in use by organizations with strict data residency requirements. The database’s partitioning strategy, indexing approach, and schema design directly determine query speed, concurrency handling, and cost at scale.

2. ETL or ELT Tools

These tools manage the movement and transformation of data from source systems into the warehouse. ETL tools like Azure Data Factory, Informatica, and Talend transform data before it loads. ELT tools like dbt and Apache Spark transform it inside the warehouse after loading.

The right choice depends on data volume, latency requirements, team skill set, and existing infrastructure. Regardless of the approach, pipelines need schema validation during ingestion, automated quality checks in the staging layer, and alerting for failures. Silent pipeline failures are among the most common sources of data quality issues in production warehouses.

3. Metadata Management and Data Catalog

A metadata catalog stores information about the data itself: table names, field definitions, data types, lineage, ownership, and pipeline run history. Tools like Azure Purview, Collibra, and Alation handle this. Without a catalog, analysts spend significant time trying to understand what a field means before they can use it.

Governance becomes nearly impossible to enforce at scale when nobody can trace where data came from or who owns it. A well-maintained catalog also supports compliance: auditors can follow data lineage end-to-end rather than relying on manual documentation.

4. Data Marts for Departmental Analytics

Data marts are focused subsets of the warehouse scoped to specific business functions: finance, sales, HR, or supply chain. Rather than giving every team access to the full warehouse, marts give them a curated, pre-organized view of the data they actually need.

Dependent marts draw from the central warehouse and inherit its governance and data quality standards. Independent marts pull data directly from source systems, which is faster to build but tends to create silos over time. Most mature architectures use dependent marts and treat independent ones as a pattern to avoid.

5. Query Engines and Reporting Tools

The query engine processes analytical requests against the warehouse. In cloud platforms, engines run massively parallel workloads by distributing computation across nodes, which returns results faster at high concurrency. BI tools like Power BI, Tableau, and Looker sit on top of the query engine and give business users visual interfaces for building dashboards and running reports. Query performance depends on schema design, partition configuration, and whether the storage layer is organized around actual query patterns rather than how data originally arrived.

Types of Data Warehouse Architecture

1. Single-Tier Architecture

In a single-tier setup, source data, storage, and analytics share the same infrastructure with no staging and no separation between operational and analytical workloads. Queries and transactions compete for the same compute, slowing both. This model suits only very small deployments or proof-of-concept environments and rarely survives production scale.

2. Two-Tier Architecture

Source systems and the warehouse occupy two separate tiers. Data moves from sources into a central warehouse, and users query it directly through BI tools with basic transformation running in between. This reduces the load on operational systems and allows some data quality work, but the lack of a middle-tier for processing means query concurrency creates bottlenecks as usage grows. Many early enterprise warehouses used this model and outgrew it as data volumes and user counts increased.

3. Three-Tier Architecture

The most widely adopted pattern in enterprise data warehousing. The bottom tier is the storage layer where processed data lives. The middle tier is an OLAP or analytics processing layer that handles aggregations and prepares data for user queries.

The top tier is the presentation layer, where BI tools and end users access dashboards and reports. Because each tier operates independently, teams can change or scale one without rebuilding the others. Cloud-native architectures extend this foundation rather than replace it.

4. Enterprise Data Warehouse Architecture

An enterprise data warehouse (EDW) is a centralized, organization-wide repository that integrates data across all business units into a single governed source of truth. It includes a full governance framework, role-based access controls, data lineage tracking, and connections to domain-specific data marts.

The EDW serves finance, operations, HR, marketing, and executive reporting from one consistent data foundation. Platforms like Azure Synapse, Snowflake, and Microsoft Fabric are common choices for modern EDW deployments. The trade-off is implementation complexity and cost: EDWs take longer to build and require more cross-team coordination than smaller, domain-specific designs.

5. Data Mart Architecture

A data mart is a focused, domain-specific subset of the warehouse built for a particular business function. Finance, marketing, and supply chain teams each get a mart scoped to the data and metrics they need. Dependent data marts draw from the central warehouse and stay in sync with its governance and definitions.

Independent data marts pull directly from source systems, which makes them faster to build but prone to data inconsistencies over time. Organizations that build independent marts without central coordination often end up with conflicting numbers across departments, recreating the same problems a warehouse was built to solve.

Modern Data Warehouse Architecture

The structural goals of data warehousing haven’t changed: centralized, governed, analytics-ready data. What has changed is the infrastructure, the cost model, and the pace at which architectures need to adapt to new requirements.

1. Cloud-Based Data Warehouse Platforms

Cloud-native platforms like Snowflake, Google BigQuery, Amazon Redshift, and Azure Synapse have become the default for new warehouse deployments. These platforms offer elastic scaling, fully managed infrastructure, and pay-as-you-go pricing that on-premises systems can’t match.

For organizations migrating from legacy on-premises warehouses, cloud adoption also removes the burden of hardware provisioning, software patching, and capacity planning from the data team. Most enterprises migrating to cloud warehouses also adopt ELT as their primary transformation pattern, taking advantage of the platform’s elastic compute rather than running separate transformation infrastructure.

2. Separation of Compute and Storage

Traditional warehouse systems coupled compute and storage on the same hardware, meaning scaling one required scaling both. Cloud platforms separate them entirely. Storage scales independently as data volumes grow. Compute scales with query load and idles when teams aren’t running workloads, directly reducing costs.

Teams can also run multiple independent compute clusters against the same storage layer, isolating workloads so heavy analytical jobs don’t degrade the performance of operational dashboards. This separation is one of the primary structural reasons cloud-native platforms have displaced on-premises systems for most new deployments.

3. Integration with Data Lakes

Modern warehouse architectures rarely operate in isolation. Many organizations run a data lake alongside the warehouse, using the lake for raw data storage and ML training sets while the warehouse handles governed BI and compliance reporting. The lakehouse pattern, implemented through platforms like Microsoft Fabric, Databricks, and Delta Lake, blends both into a single architecture.

A lakehouse combines the flexible, low-cost storage of a data lake with the query performance and governance of a warehouse, removing the overhead of managing two separate systems and the pipelines between them.

4. Real-Time Data Processing and Streaming

Batch ingestion, whether once a day or once an hour, is no longer sufficient for many workloads. Financial fraud detection, live operational dashboards, and supply chain monitoring all depend on data that reflects the current state, not last night’s batch run. Streaming platforms like Apache Kafka and Azure Event Hubs enable near-real-time ingestion into the warehouse. This adds pipeline complexity, including the need to handle out-of-order events and late-arriving data, but for time-sensitive use cases, it’s the only architecture that delivers current data at the presentation layer.

5. AI and Machine Learning Integration

Modern data warehouses increasingly serve as the foundation for AI and ML workloads, not just BI reporting. Clean, governed warehouse data feeds model training pipelines, feature stores, and inference workflows. Platforms like Microsoft Fabric and Databricks integrate ML tooling directly with warehouse storage, so data teams can manage both analytical and ML workloads on the same infrastructure.

Metadata management and lineage tracking become especially critical here: ML models are only as reliable as the data they were trained on, and without lineage, it’s impossible to audit model inputs when results are questioned.

Data Warehouse vs Data Lake vs Lakehouse

These three patterns address overlapping but distinct needs. The differences matter when making architecture decisions.

| Functions | Data Warehouse | Data Lake | Lakehouse |

| Data type | Structured only | All types (raw) | All types |

| Schema | Schema-on-write | Schema-on-read | Both supported |

| Users | BI analysts | Data engineers, scientists | All user types |

| Query performance | High (optimized) | Moderate | High |

| Storage cost | Higher | Low | Balanced |

| Governance | Strong | Limited | Strong |

| Best for | Reporting, dashboards | ML, raw storage | Unified analytics |

A data warehouse is the right choice when structured, reliable reporting for business users is the priority. A data lake is well-suited to large volumes of raw data at low cost, particularly for machine learning or exploration workloads. A lakehouse combines both patterns in a single system, supporting structured BI workloads and unstructured data science workflows from the same storage layer.

Many modern enterprises run all three in combination. Data flows from the lake through transformation pipelines into the warehouse, feeds reports and dashboards, and ML outputs go back to the lake for future use. The lakehouse increasingly serves as the consolidation point where that separation becomes unnecessary.

Data Lake vs. Data Warehouse: Which One Powers Better Business Insights?

Explore the key differences between a data lake and a data warehouse to understand which one offers better insights for your business needs.

Common Challenges in Data Warehouse Architecture

1. Integrating Data from Multiple Sources

Source systems use different data formats, naming conventions, and update frequencies. “Customer” in the CRM often means something different than “customer” in the billing system, and reconciling these differences requires both technical work and business alignment. Stakeholders need to agree on canonical definitions before engineers build pipelines. Teams that skip this step spend significant time later debugging contradictory reports and lose trust in the warehouse as the single source of truth.

2. Managing Large Volumes of Data

As data volumes grow, query performance degrades, and storage costs climb unless the architecture was designed for scale. Partitioning strategies, columnar storage formats, and tiered storage policies all help, but teams need to plan them upfront. Retrofitting these into a warehouse that wasn’t built for scale is disruptive and expensive, particularly once BI dashboards and pipelines are already running on top. Tables that return results in seconds at millions of rows can take minutes at billions without proper partitioning and clustering.

3. Ensuring Data Quality and Consistency

Data quality problems at the source amplify in the warehouse. Null values, duplicate records, inconsistent date formats, and mismatched keys across tables corrupt analytical results and erode analyst trust. Automated quality checks at each pipeline stage, schema validation on ingestion, and data lineage tracking catch most issues before they reach reports. Manual review doesn’t scale, so teams that rely on it rather than automated checks consistently face recurring data quality incidents.

4. Performance Optimization for Analytics Queries

Slow queries are usually a schema problem rather than a hardware problem. A warehouse built around how data is stored rather than how teams query it produces complex joins and full table scans that degrade at scale.

Star schemas, proper partitioning, materialized views, and query result caching address most performance issues, but teams must base these decisions on actual query patterns rather than theoretical data structures. Optimizing after dashboards are already running on a poorly designed schema is significantly more disruptive than designing for performance upfront.

5. Managing Infrastructure Costs

Cloud warehouses are elastic by design, which is both a benefit and a risk. Unbounded queries, over-provisioned compute clusters, and storing everything at full granularity when aggregates would suffice all drive costs up quickly. Effective cost management means monitoring query patterns, setting compute auto-suspend policies, tiering infrequently accessed data to lower-cost storage, and regularly reviewing resource consumption. Teams that treat cost governance as an afterthought typically face a billing surprise and scramble to optimize reactively.

Best Practices for Designing Data Warehouse Architecture

1. Choose Scalable Storage and Processing Systems

Select platforms that separate storage from compute and scale each independently. Cloud-native warehouses like Snowflake, Azure Synapse, and BigQuery handle this by design. For organizations with multi-cloud or hybrid requirements, the storage layer needs to grow without requiring a proportional increase in compute spend.

Columnar storage formats and tiered storage policies determine how efficiently teams query large datasets without unnecessary cost. Evaluating platform fit against actual query patterns and data volumes before committing prevents expensive migrations later.

2. Implement Efficient ETL or ELT Pipelines

Pipeline efficiency directly affects data freshness, cost, and reliability. ELT fits cloud-native warehouses well because transformations run on elastic warehouse compute rather than separate infrastructure. Tools like dbt, Azure Data Factory, and Apache Spark handle transformation at scale.

Regardless of tooling choice, pipelines need schema validation at ingestion, automated quality checks at the staging layer, and alerting on failures. Silent pipeline failures are among the most common sources of data quality problems in production warehouses.

3. Design for Performance and Query Optimization

Schema design is the single biggest factor in query performance. Star schemas are the standard for BI-heavy workloads because they minimize join complexity and work well with columnar storage. Partitioning tables on frequently filtered columns, clustering on high-cardinality fields, and building materialized views for expensive recurring aggregations are the primary levers. Teams need to base these decisions on actual query patterns from the business, not theoretical data structures, and revisit them as usage evolves.

4. Establish Strong Data Governance and Security

Governance needs to go into the architecture from the start rather than being added later. Data lineage tracking lets analysts trace the origins of numbers. A metadata catalog keeps field definitions documented and accessible. Role-based access controls apply consistently across all access points, including BI tools, SQL connections, and APIs.

Automated data quality monitoring runs at each pipeline stage. Tools like Microsoft Purview and Collibra integrate with cloud warehouse platforms, making governance operationally sustainable rather than a manual process dependent on individual contributors.

5. Plan for Future Scalability and Data Growth

Architecture decisions made today constrain what’s possible a year from now. Source systems add fields. New data sources come online on short timelines. Query volumes increase as more teams adopt the warehouse. Designing around the current state rather than anticipated growth leads to constant rework.

Data Vault modeling for the core storage layer gives teams the flexibility to add sources without breaking existing structures. Lifecycle policies for moving data from hot to warm to cold storage need to be defined upfront, since retroactively tiering large datasets disrupts running pipelines and dashboards.

How Kanerika Helps with Data Warehouse Architecture

Kanerika helps enterprises across manufacturing, healthcare, financial services, and logistics design and modernize their data warehouse architecture. Many projects begin when organizations face issues such as fragmented data pipelines, inconsistent integrations, governance gaps, or legacy on-premises systems that cannot support modern analytics workloads.

As a Microsoft Solutions Partner for Data & AI and Microsoft Fabric Featured Partner, Kanerika designs and deploys cloud-native warehouse architectures on Azure Synapse, Microsoft Fabric, and Snowflake. The team specializes in data warehouse migration, helping organizations move from legacy systems or data lake environments to modern Fabric Lakehouse architectures using the FLIP platform while maintaining data integrity and lineage.

Governance is built into the architecture from the start. Kanerika implements Microsoft Purview for metadata management, lineage tracking, and compliance. Databricks integration supports large-scale transformations and ML pipelines alongside the warehouse layer. ISO 9001, ISO 27001, and ISO 27701 certifications reflect strong security and compliance standards.

Turn Raw Data Into Insights With End-to-End Analytics Solutions!

Partner with Kanerika Today.

FAQs

1. What is data warehouse architecture?

Data warehouse architecture refers to the overall structure that defines how data is collected, stored, processed, and accessed within a data warehouse system. It acts as a blueprint that organizes components such as data sources, ETL processes, storage layers, and analytics tools. The goal is to transform raw data from multiple systems into a centralized, structured repository that supports reporting and business intelligence. A well-designed architecture ensures data consistency, scalability, and fast query performance for analytical workloads.

2. What are the key layers in data warehouse architecture?

Most modern data warehouse architectures are built using multiple layers that handle different stages of the data pipeline. These typically include the data source layer, staging layer, data warehouse storage layer, and analytics or access layer. Data first enters from operational systems, then passes through a staging area where it is cleaned and transformed before being stored in the warehouse. Finally, BI tools and analytics platforms access the processed data to generate insights, dashboards, and reports.

3. What are the main components of data warehouse architecture?

The core components of data warehouse architecture include a central database, ETL tools, metadata management, and query or reporting tools. ETL processes extract data from multiple sources, transform it into a consistent format, and load it into the warehouse. Metadata stores information about data definitions, sources, and transformations, helping maintain data governance. Query and reporting tools allow analysts and business users to explore the data and generate insights.

4. How does ETL fit into data warehouse architecture?

ETL plays a central role in data warehouse architecture because it manages the flow of data from source systems into the warehouse. During the extract stage, data is collected from databases, applications, or external systems. The transform stage cleans, standardizes, and aggregates the data to ensure quality and consistency. Finally, the load stage moves the processed data into the warehouse where it becomes available for analysis and reporting.

5. Why is data warehouse architecture important for enterprises?

Data warehouse architecture is critical for enterprises because it enables organizations to integrate large volumes of data from multiple systems into a unified analytical platform. By organizing data in a structured way, it improves reporting accuracy, query performance, and decision-making capabilities. A scalable architecture also allows businesses to handle growing data volumes while supporting advanced analytics, machine learning, and business intelligence initiatives across departments.