Ever feel like your business is drowning in data but starving for insight? You’re not alone. In today’s hyper-connected world, organizations collect data from dozens of sources—CRMs, ERPs, marketing platforms, IoT devices, and more. But without a way to unify it all, even the most advanced analytics tools fall short.

That’s where data integration comes in.

According to a recent Gartner report, over 80% of data analytics projects fail due to poor data integration and quality issues. In a landscape where real-time decision-making is critical, siloed data isn’t just inefficient—it’s a liability.

Take Netflix, for example. The streaming giant integrates data from content licensing, user behavior, device logs, and regional trends to deliver hyper-personalized recommendations and make billion-dollar content decisions. Without seamless data integration, that kind of agility would be impossible.

So, what is data integration—and why does it matter more now than ever? In this blog, we’ll break down the concept, explore real-world benefits, share common challenges, and guide you through best practices for integrating data across systems in a scalable, future-proof way.

What is Data Integration?

Data integration is the process of combining data from various sources into a cohesive view across different datasets. Its primary goal is a unified, actionable data landscape for your organization. Because it merges data residing in diverse formats and locations, data integration is a foundational step toward informed decisions and comprehensive insights. Whether you’re analyzing market trends or combining customer information from separate databases, data integration ensures that you have access to consistent, high-quality data.

Understanding the types and techniques of data integration is essential for efficient implementation. You may encounter methodologies like Extract, Transform, Load (ETL) and Extract, Load, Transform (ELT), each with its advantages, depending on your needs for real-time processing and the scale of data operations. Moreover, with tools and services from technology providers like IBM and Google Cloud, you have the option to streamline this process through code-free solutions that can reduce complexity and increase agility. As you explore the world of data integration, consider the challenges, such as data quality and security, alongside the benefits like enhanced data analytics and better strategic decision-making.

Data Integration Techniques

Effective data integration methods streamline your business processes by allowing more efficient access to and analysis of data from disparate sources.

1. ETL Process

Extract, Transform, Load (ETL) is a sequential process where you first extract data from homogeneous or heterogeneous sources. Next, in the transform stage, this data is cleansed, enriched, and converted into a format suitable for analysis. Finally, the processed data is loaded into the target system, such as a data warehouse. ETL is traditionally used in batch integration, where data movement occurs at scheduled intervals.

- Pros:

- Ensures data quality through the transformation stage

- Data is consolidated, which aids in performance and analysis

- Cons:

- Can be time-consuming due to the batch processing model

- Less responsive to real-time data changes
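
To make the three stages concrete, here is a minimal batch ETL sketch in Python using only the standard library. It is illustrative rather than production code. The source is assumed to be a hypothetical CSV export of orders, and an in-memory SQLite database stands in for the data warehouse. The table name `fact_orders` and its columns are invented for the example.

```python
import csv
import io
import sqlite3

# Hypothetical source: a CSV export from an operational system.
SOURCE_CSV = """order_id,customer_email,amount,currency
1, Alice@Example.com ,19.99,usd
2,bob@example.com,5.00,USD
2,bob@example.com,5.00,USD
3,carol@example.com,,USD
"""

def extract(raw: str) -> list[dict]:
    """Extract: read rows from the source into dictionaries."""
    return list(csv.DictReader(io.StringIO(raw)))

def transform(rows: list[dict]) -> list[tuple]:
    """Transform: cleanse, standardize, and deduplicate before loading."""
    seen = set()
    clean = []
    for row in rows:
        if not row["amount"]:  # drop rows that fail a basic completeness rule
            continue
        order_id = int(row["order_id"])
        if order_id in seen:  # deduplicate on the business key
            continue
        seen.add(order_id)
        clean.append((
            order_id,
            row["customer_email"].strip().lower(),
            round(float(row["amount"]), 2),
            row["currency"].strip().upper(),
        ))
    return clean

def load(conn: sqlite3.Connection, rows: list[tuple]) -> None:
    """Load: write the already-transformed rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS fact_orders ("
        "order_id INTEGER PRIMARY KEY, customer_email TEXT, amount REAL, currency TEXT)"
    )
    conn.executemany("INSERT INTO fact_orders VALUES (?, ?, ?, ?)", rows)
    conn.commit()

warehouse = sqlite3.connect(":memory:")  # stand-in for a real data warehouse
load(warehouse, transform(extract(SOURCE_CSV)))
print(warehouse.execute("SELECT * FROM fact_orders ORDER BY order_id").fetchall())
```

Only clean, deduplicated rows ever reach the target. This is the data-quality advantage listed above, and it is also why every change to the rules requires rerunning the batch.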

2. ELT Process

Extract, Load, Transform (ELT), by contrast, reorders the traditional sequence. Here, you extract data and load it immediately into the target system. The transformation happens after loading, using the processing power of modern data platforms. ELT is particularly well suited to large data volumes and to real-time or near-real-time analytics.

- Pros:

- Enables real-time data processing

- More scalable as it handles vast data volumes efficiently

- Cons:

- May have implications for data governance and quality control

- Relies heavily on the capabilities of the target system for transformation
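
For contrast, an ELT sketch loads the raw records untouched and then runs the transformation as SQL inside the target system. In this simplified illustration, SQLite stands in for a cloud warehouse, and the `raw_orders` and `orders_clean` tables are hypothetical. On a real platform, the same pattern would run on the warehouse's own compute.

```python
import sqlite3

# Hypothetical raw records exactly as they arrive from the source (no cleansing yet).
raw_records = [
    (1, " Alice@Example.com ", "19.99", "usd"),
    (2, "bob@example.com", "5.00", "USD"),
    (2, "bob@example.com", "5.00", "USD"),
    (3, "carol@example.com", "", "USD"),
]

target = sqlite3.connect(":memory:")  # stand-in for a cloud data warehouse

# Extract + Load: land the data as-is in a staging table.
target.execute(
    "CREATE TABLE raw_orders (order_id INTEGER, customer_email TEXT, amount TEXT, currency TEXT)"
)
target.executemany("INSERT INTO raw_orders VALUES (?, ?, ?, ?)", raw_records)

# Transform: runs after loading, inside the target, using its SQL engine.
target.execute("""
    CREATE TABLE orders_clean AS
    SELECT DISTINCT
        order_id,
        LOWER(TRIM(customer_email)) AS customer_email,
        CAST(amount AS REAL)        AS amount,
        UPPER(TRIM(currency))       AS currency
    FROM raw_orders
    WHERE amount <> ''
""")

print(target.execute("SELECT * FROM orders_clean ORDER BY order_id").fetchall())
# The untouched raw data stays available for re-transformation later.
print(target.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0])
```

The raw table is retained alongside the cleaned one. Transformations can therefore be revised and rerun without re-extracting from the source. The trade-off is that governance now has to cover uncleansed data sitting in the target.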

3. Data Virtualization

With data virtualization, you integrate data from different sources without physically moving or storing it in a single repository. Instead, it provides an abstracted, integrated view of the data that can be accessed on demand. This approach also supports real-time data integration, often with less overhead than traditional methods.

- Pros:

- No need for a physical data warehouse

- Offers greater agility with real-time access to data

- Cons:

- May face performance issues with complex queries

- Requires a robust network and system infrastructure
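
A virtualization layer can be sketched as an object that holds handles to the underlying sources and resolves each query on demand. It returns a combined view without copying anything into a central store. This is a simplified illustration, not any vendor's API, and several pieces are assumptions:

- `VirtualCustomerView` is a hypothetical class.
- One source is a SQLite "CRM" database.
- The other source is a plain function standing in for a billing API.

```python
import sqlite3
from typing import Callable, Optional

class VirtualCustomerView:
    """Hypothetical virtual layer: joins live sources at query time, stores nothing."""

    def __init__(self, crm: sqlite3.Connection, billing_api: Callable[[int], dict]):
        self.crm = crm                  # source 1: relational database
        self.billing_api = billing_api  # source 2: e.g. a REST service

    def get_customer(self, customer_id: int) -> Optional[dict]:
        # Every call goes back to the sources, so results reflect their current state.
        row = self.crm.execute(
            "SELECT id, name FROM customers WHERE id = ?", (customer_id,)
        ).fetchone()
        if row is None:
            return None
        billing = self.billing_api(customer_id)
        return {"id": row[0], "name": row[1], "balance": billing.get("balance", 0.0)}

# Illustrative sources.
crm = sqlite3.connect(":memory:")
crm.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
crm.execute("INSERT INTO customers VALUES (1, 'Acme Corp')")
balances = {1: 250.0}

def fake_billing_api(customer_id: int) -> dict:
    # Stand-in for an HTTP call to a billing system.
    return {"balance": balances.get(customer_id, 0.0)}

view = VirtualCustomerView(crm, fake_billing_api)
print(view.get_customer(1))  # {'id': 1, 'name': 'Acme Corp', 'balance': 250.0}
balances[1] = 300.0          # the source changes...
print(view.get_customer(1))  # ...and the next query sees it, with no reload step
```

Because each query hits the live sources, complex or frequent queries inherit their latency. This is the performance trade-off noted in the cons above.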

4. Data Federation

Data federation software creates a virtual database that provides an integrated view of data spread across multiple databases. It differs from data virtualization in that federation often targets more complex queries and transactional consistency. The method unifies data from multiple sources while each source retains its physical autonomy.

- Pros:

- Centralized access to data without consolidating into a single physical location

- Useful for complex data environments with diverse data stores

- Cons:

- Can encounter performance bottlenecks

- Data freshness can be a concern, as it typically works better with batch processing than with real-time workloads
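
Many databases support some form of federated querying. SQLite's `ATTACH DATABASE` statement is a small, self-contained way to illustrate the idea. Two physically separate database files stay autonomous, yet a single SQL query joins across them. The file names, schemas, and the `create_source` helper are hypothetical.

```python
import os
import sqlite3
import tempfile

def create_source(path: str, ddl: str, insert_sql: str, rows: list[tuple]) -> None:
    """Create an independent database file owned by one source system."""
    conn = sqlite3.connect(path)
    conn.execute(ddl)
    conn.executemany(insert_sql, rows)
    conn.commit()
    conn.close()

with tempfile.TemporaryDirectory() as tmp:
    sales_path = os.path.join(tmp, "sales.db")
    hr_path = os.path.join(tmp, "hr.db")
    create_source(sales_path, "CREATE TABLE deals (rep_id INTEGER, amount REAL)",
                  "INSERT INTO deals VALUES (?, ?)", [(1, 100.0), (1, 50.0), (2, 75.0)])
    create_source(hr_path, "CREATE TABLE reps (id INTEGER, name TEXT, region TEXT)",
                  "INSERT INTO reps VALUES (?, ?, ?)", [(1, "Dana", "West"), (2, "Lee", "East")])

    # The federated layer: one connection, both databases attached, one query.
    fed = sqlite3.connect(":memory:")
    fed.execute("ATTACH DATABASE ? AS sales", (sales_path,))
    fed.execute("ATTACH DATABASE ? AS hr", (hr_path,))
    result = fed.execute(
        """
        SELECT r.region, SUM(d.amount) AS total
        FROM sales.deals AS d
        JOIN hr.reps AS r ON r.id = d.rep_id
        GROUP BY r.region
        ORDER BY r.region
        """
    ).fetchall()
    fed.close()  # release the files before the temporary directory is removed

print(result)  # [('East', 75.0), ('West', 150.0)]
```

Neither database was copied or merged. Each one could keep being written by its own system while the federated query reads from both.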

The Business Impact of Data Integration

Data integration leverages technology to streamline business processes and improve operational efficiency. Moreover, it is a catalyst in transforming raw data into valuable business insights, fostering collaboration, and driving smarter decision making.

1. Improving Decision Making

Your ability to make informed business decisions is significantly enhanced through data integration. By consolidating data from various sources, you gain a comprehensive view of your business landscape. Additionally, this allows you to identify trends, assess performance metrics, and make decisions backed by data. The speed at which you can access integrated data means you can respond more swiftly to market changes and operational demands.

2. Enhancing Collaboration and Communication

Integrated data breaks down silos and encourages unified collaboration among different departments within your company. When everyone has access to the same information, inter-departmental communication is more efficient, reducing misunderstandings and errors. This unity can lead to improved business processes and operational workflow, which are essential for a well-functioning business environment.

3. Gaining Business Insights and Fostering Innovation

Data integration is a gateway to deeper business insights and a driver of innovation. By analyzing the integrated data, you can uncover patterns and opportunities that may not be visible in isolated datasets. This insight propels strategic initiatives and can lead to innovative solutions to business challenges, directly impacting your company’s growth and adaptability in a competitive market.

4. Calculating the Return on Investment (ROI)

Understanding the ROI of data integration is crucial to justify its implementation. You must consider both tangible benefits, like cost savings from improved operational efficiencies, and intangible benefits, such as enhanced data quality. Measuring ROI involves scrutinizing the costs of data integration against the financial gains—whether through direct revenue increases or indirect cost reductions—to ensure that your investment is yielding a positive return.
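
Comparing costs against gains reduces to the standard formula ROI = (total gains − total costs) / total costs. The sketch below applies it in Python. Every figure is a made-up placeholder, not a benchmark. The split into revenue gains and cost savings simply mirrors the direct and indirect gains described above, and the `IntegrationCase` class is hypothetical.

```python
from dataclasses import dataclass

@dataclass
class IntegrationCase:
    """Hypothetical annual figures for a data integration initiative."""
    licensing_and_infra: float
    implementation_labor: float
    maintenance: float
    revenue_gain: float   # direct gain, e.g. better cross-sell from unified data
    cost_savings: float   # indirect gain, e.g. fewer hours of manual reconciliation

    @property
    def total_cost(self) -> float:
        return self.licensing_and_infra + self.implementation_labor + self.maintenance

    @property
    def total_gain(self) -> float:
        return self.revenue_gain + self.cost_savings

    def roi(self) -> float:
        """ROI as a fraction: (gains - costs) / costs."""
        return (self.total_gain - self.total_cost) / self.total_cost

# Placeholder numbers for illustration only.
case = IntegrationCase(
    licensing_and_infra=60_000,
    implementation_labor=90_000,
    maintenance=30_000,
    revenue_gain=120_000,
    cost_savings=150_000,
)
print(f"Cost: {case.total_cost:,.0f}  Gain: {case.total_gain:,.0f}  ROI: {case.roi():.0%}")
# Cost: 180,000  Gain: 270,000  ROI: 50%
```

Intangible benefits such as improved data quality do not fit this formula directly. They are usually tracked separately or given an estimated monetary value before being added to the gains.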

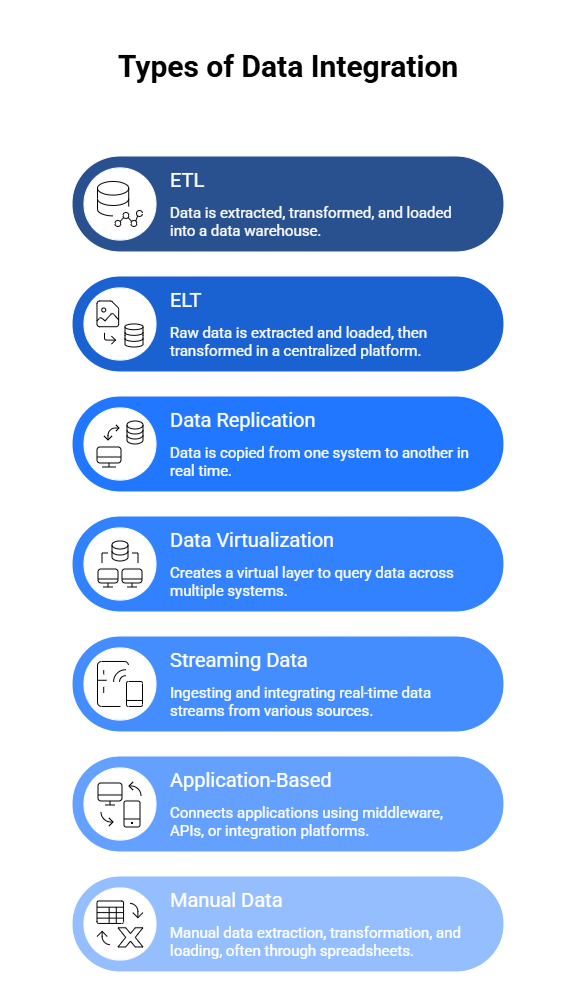

Types of Data Integration

There are several types of data integration that can be used to combine data from multiple sources.

1. ETL (Extract, Transform, Load)

ETL is the most traditional and widely used method. Data is first extracted from multiple sources (databases, CRMs, flat files), then transformed into a standardized format (cleaned, enriched, deduplicated), and finally loaded into a target data warehouse. It’s best suited for structured data and batch processing, such as overnight analytics jobs or monthly reporting.

2. ELT (Extract, Load, Transform)

In this approach, raw data is extracted and loaded directly into a centralized platform like a cloud data lake. The transformation happens after loading, leveraging the target system’s scalable compute. ELT is ideal for modern cloud-first architectures where real-time or near-real-time data is needed, and flexibility in transformation is a priority.

3. Data Replication

This method copies data from one system to another, either in real time or at scheduled intervals. It ensures systems remain in sync, and is commonly used for disaster recovery, failover, or mirroring operational data to analytics systems. It minimizes latency and allows continuous availability of critical data.
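
Scheduled replication is often implemented incrementally. Each run copies only the rows changed since the last successful sync, tracked by a high-water mark such as an `updated_at` column. The sketch below shows that idea between two in-memory SQLite databases. The `accounts` table, its columns, and the `replicate` function are assumptions for illustration. Many production tools instead use log-based change data capture.

```python
import sqlite3

def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE accounts (id INTEGER PRIMARY KEY, status TEXT, updated_at INTEGER)"
    )
    return conn

def replicate(source: sqlite3.Connection, replica: sqlite3.Connection, since: int) -> int:
    """Copy rows changed after `since` and return the new high-water mark."""
    changed = source.execute(
        "SELECT id, status, updated_at FROM accounts WHERE updated_at > ? ORDER BY updated_at",
        (since,),
    ).fetchall()
    # Upsert so that both new rows and updated rows converge on the replica.
    replica.executemany(
        "INSERT INTO accounts (id, status, updated_at) VALUES (?, ?, ?) "
        "ON CONFLICT(id) DO UPDATE SET status = excluded.status, updated_at = excluded.updated_at",
        changed,
    )
    replica.commit()
    return max((row[2] for row in changed), default=since)

primary, replica = make_db(), make_db()
primary.executemany("INSERT INTO accounts VALUES (?, ?, ?)", [(1, "active", 100), (2, "trial", 101)])

mark = replicate(primary, replica, since=0)     # initial sync copies both rows
primary.execute("UPDATE accounts SET status = 'active', updated_at = 102 WHERE id = 2")
mark = replicate(primary, replica, since=mark)  # next run copies only account 2

print(mark, replica.execute("SELECT * FROM accounts ORDER BY id").fetchall())
```

Polling on a timestamp does not see hard deletes in the source. Log-based change data capture avoids this limitation.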

4. Data Virtualization

Unlike other methods, data virtualization does not physically move data. It creates a virtual layer that allows users to query data across multiple systems (databases, APIs, cloud storage) as if it were in one place. It reduces storage redundancy, accelerates access, and supports real-time decision-making.

5. Streaming Data Integration

This method focuses on ingesting and integrating real-time data streams from sources such as IoT devices, sensors, applications, or logs. It is essential for use cases that require low latency, such as fraud detection, dynamic pricing, or live dashboards.
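
Streaming integration processes each event as it arrives instead of waiting for a batch. The sketch below is a simplified, hypothetical fraud-detection rule. It keeps a per-card sliding time window in memory and flags a card when more than three transactions land within 60 seconds. The event shape, window length, threshold, and `flag_bursts` function are all illustrative assumptions. A real deployment would consume from a streaming platform rather than a Python list.

```python
from collections import defaultdict, deque
from typing import Iterable, Iterator

WINDOW_SECONDS = 60  # assumed window length
MAX_TXNS = 3         # assumed threshold: more than this within one window is flagged

def flag_bursts(events: Iterable[dict]) -> Iterator[dict]:
    """Yield an alert whenever a card exceeds MAX_TXNS within WINDOW_SECONDS."""
    windows = defaultdict(deque)  # card -> timestamps currently inside the window
    for event in events:          # events are handled one at a time, in arrival order
        window = windows[event["card"]]
        window.append(event["ts"])
        # Evict timestamps that have slid out of the window.
        while window and event["ts"] - window[0] >= WINDOW_SECONDS:
            window.popleft()
        if len(window) > MAX_TXNS:
            yield {"card": event["card"], "ts": event["ts"], "count": len(window)}

stream = [
    {"card": "A", "ts": 0}, {"card": "B", "ts": 5}, {"card": "A", "ts": 10},
    {"card": "A", "ts": 20}, {"card": "A", "ts": 30}, {"card": "A", "ts": 95},
]
for alert in flag_bursts(stream):
    print(alert)  # {'card': 'A', 'ts': 30, 'count': 4}
```

Because `flag_bursts` is a generator, alerts are emitted the moment the offending event is seen. This low-latency behavior is what streaming use cases depend on.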

6. Application-Based Integration

This uses middleware, APIs, or integration platforms (like MuleSoft or Zapier) to connect applications and ensure smooth data exchange. It’s ideal for synchronizing SaaS tools, automating workflows, or enabling real-time operational insights across systems.
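
At its core, application-based integration maps one application's data model onto another's whenever an event occurs. Integration platforms automate and manage that step. The sketch below shows the mapping for a hypothetical CRM webhook payload converted into the record format a hypothetical billing app expects. Both schemas, the event name, and `FIELD_MAP` are invented for illustration. They do not correspond to MuleSoft, Zapier, or any specific product's API.

```python
import json

# Hypothetical mapping from CRM contact fields to billing customer fields.
FIELD_MAP = {
    "contact_id": "external_ref",
    "full_name": "customer_name",
    "email_address": "billing_email",
}

def crm_to_billing(payload: str) -> dict:
    """Translate a CRM 'contact.created' webhook body into a billing-app record."""
    event = json.loads(payload)
    if event.get("type") != "contact.created":
        raise ValueError(f"unsupported event type: {event.get('type')!r}")
    contact = event["data"]
    record = {target: contact[source] for source, target in FIELD_MAP.items()}
    record["billing_email"] = record["billing_email"].lower()  # normalize on the way through
    record["source_system"] = "crm"                             # keep lineage for auditing
    return record

webhook_body = json.dumps({
    "type": "contact.created",
    "data": {"contact_id": "C-1001", "full_name": "Ana Ruiz", "email_address": "Ana@Example.com"},
})
print(crm_to_billing(webhook_body))
```

In practice, the platform usually also handles delivery concerns such as authentication and retries. The mapping function is the part that encodes business meaning.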

7. Manual Data Integration

Still used in some smaller or legacy environments, this approach involves manual data extraction, transformation, and loading, often through spreadsheets or scripts. It’s labor-intensive, error-prone, and not scalable, but may suffice for limited one-time migrations or non-critical processes.

Popular Tools and Platforms

Choosing the right data integration tool depends on your data architecture, scalability needs, and budget. Here are some widely used platforms:

1. Informatica

Informatica is a market leader in enterprise-grade data integration. It offers powerful ETL capabilities, cloud-native tools, and robust data governance features—ideal for large-scale, complex environments.

2. Talend

Talend is an open-source data integration platform known for its flexibility and user-friendly interface. It supports both batch and real-time processing, with built-in tools for data quality, transformation, and pipeline orchestration.

3. Microsoft Azure Data Factory

Azure Data Factory is a cloud-based ETL and data orchestration service that integrates well with Microsoft’s ecosystem. It allows building scalable data pipelines with minimal code, making it suitable for hybrid and multi-cloud environments.

4. Apache NiFi

An open-source project from the Apache Software Foundation, NiFi offers a visual interface for designing data flows. It excels at streaming data integration and real-time data routing with fine-grained control.

5. Fivetran / Stitch

Both Fivetran and Stitch provide fully managed ELT solutions for quick, code-free integration. They’re ideal for startups and mid-sized businesses that want to move data from multiple sources into a warehouse like Snowflake or BigQuery with minimal setup.

Common Use Cases of Data Integration

Data integration plays a critical role across industries by breaking down silos and making data accessible, accurate, and actionable. Here are some of the most impactful use cases:

1. Customer 360 Views in Marketing and CRM

By integrating data from CRM systems, email platforms, social media, and customer support tools, businesses can create a comprehensive view of each customer. This unified perspective enables personalized marketing, better customer service, and improved retention strategies.
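
Building a customer 360 view usually starts with matching records that describe the same person across systems. The sketch below is deliberately simple and assumes a normalized email address is a reliable match key. The three source extracts and their fields are hypothetical. Real master-data tools use richer matching, such as fuzzy names, phone numbers, and survivorship rules.

```python
from collections import defaultdict

# Hypothetical extracts from three systems.
crm = [{"email": "Jo@Shop.com", "name": "Jo Park", "segment": "VIP"}]
orders = [
    {"email": "jo@shop.com", "order_total": 120.0},
    {"email": "jo@shop.com ", "order_total": 80.0},
]
support = [{"email": "JO@SHOP.COM", "open_tickets": 1}]

def normalize(email: str) -> str:
    """Match key: case-insensitive, whitespace-trimmed email."""
    return email.strip().lower()

def build_customer_360() -> dict:
    profiles = defaultdict(lambda: {"lifetime_value": 0.0, "open_tickets": 0})
    for rec in crm:
        profiles[normalize(rec["email"])].update(name=rec["name"], segment=rec["segment"])
    for rec in orders:
        profiles[normalize(rec["email"])]["lifetime_value"] += rec["order_total"]
    for rec in support:
        profiles[normalize(rec["email"])]["open_tickets"] += rec["open_tickets"]
    return dict(profiles)

print(build_customer_360())
```

All three differently formatted emails collapse into one profile combining CRM attributes, order history, and support activity. A weak match key is the most common reason such views end up with duplicate customers.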

2. Real-Time Analytics in E-Commerce

E-commerce platforms use data integration to combine information from websites, inventory systems, payment gateways, and user behavior analytics tools. This enables real-time dashboards, dynamic pricing, fraud detection, and smarter product recommendations.

3. IoT and Sensor Data in Manufacturing

Manufacturers rely on data integration to collect and unify sensor data from machinery, production lines, and logistics systems. This allows for predictive maintenance, improved quality control, and increased operational efficiency.

4. Healthcare Data Interoperability

Hospitals and healthcare providers integrate data from EMRs, lab systems, and insurance platforms to enable a more complete patient record. This supports better diagnosis, treatment planning, and regulatory compliance.

5. Financial Reporting and Risk Compliance

Financial institutions consolidate data from transactional systems, risk management platforms, and external data sources to ensure accurate reporting, risk assessment, and adherence to reporting standards and regulations such as IFRS and GDPR.

Challenges and Best Practices in Data Integration

In the realm of data integration, you’ll encounter an array of challenges that can impact the efficiency and effectiveness of your systems. Alongside each challenge, it’s crucial to identify the best practices that mitigate it and strengthen your data integration processes.

1. Dealing with Data Silos

Data silos create barriers due to their isolated nature, making it tough to achieve a unified view. A primary challenge you’ll face is the fragmentation of data across multiple sources, which hinders your ability to extract meaningful insights. To counteract this:

- Identify and catalog your data sources

- Implement integration tools that support various data types and sources

- Foster a collaborative culture to reduce resistance to data sharing

2. Integration of Structured and Unstructured Data

You must tackle the complexity of merging structured and unstructured data, as they require different approaches for processing and analysis. The process includes:

- Using ETL (Extract, Transform, Load) practices for structured data

- Applying data mining and NLP (Natural Language Processing) for unstructured data

- Ensuring semantic integration for meaningful aggregation and analysis
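
Semantic integration of the two kinds of data often means pulling structured facts out of free text so they can be joined with tabular records. In the sketch below, a regular expression stands in for the NLP step; a real pipeline would more likely use an entity-extraction model. The ticket text, order IDs, the `ORD-` pattern, and `link_tickets` are all hypothetical.

```python
import re

# Structured data: order records keyed by ID.
orders = {
    "ORD-1042": {"product": "Router X2", "status": "shipped"},
    "ORD-2001": {"product": "Cable Kit", "status": "processing"},
}

# Unstructured data: free-text support tickets.
tickets = [
    "Hi, my order ORD-1042 arrived but the router won't power on.",
    "Where is my package? Order number is ord-2001.",
    "General question about warranty terms.",
]

ORDER_ID = re.compile(r"\bORD-\d{4}\b", re.IGNORECASE)  # stand-in for entity extraction

def link_tickets(ticket_texts: list[str], order_lookup: dict) -> list[dict]:
    """Attach each ticket to the order it mentions, if any."""
    linked = []
    for text in ticket_texts:
        match = ORDER_ID.search(text)
        order_id = match.group(0).upper() if match else None  # canonicalize the key
        linked.append({"ticket": text, "order_id": order_id, "order": order_lookup.get(order_id)})
    return linked

for row in link_tickets(tickets, orders):
    print(row["order_id"], row["order"])
```

Canonicalizing the extracted key (here, upper-casing) is what lets the unstructured side join cleanly to the structured side. Tickets with no recognizable entity remain unlinked rather than guessed.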

3. Technical Challenges and Solutions

Technical challenges in data integration span interoperability, real-time data processing, and maintaining data quality. Your solutions should focus on:

- Adopting interoperable systems that ensure seamless data exchange

- Utilizing middleware or ESB (Enterprise Service Bus) for real-time data processing needs

- Instituting stringent data quality protocols to maintain the integrity of integration
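
A data quality protocol can be expressed as explicit, testable rules that run before data is loaded. Failing records are quarantined with their reasons rather than silently dropped. The sketch below shows three common rule types (completeness, validity, and uniqueness) applied to hypothetical records. The rule names, the simple email pattern, and the `validate` function are assumptions for illustration, and dedicated data quality tools offer far richer checks.

```python
import re
from typing import Callable

Record = dict
Rule = Callable[[Record], bool]

EMAIL = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # deliberately simple pattern

RULES: dict[str, Rule] = {
    "id_present": lambda r: r.get("id") is not None,                 # completeness
    "email_valid": lambda r: bool(EMAIL.match(r.get("email", ""))),  # validity
    "amount_non_negative": lambda r: r.get("amount", 0) >= 0,        # validity
}

def validate(records: list[Record]) -> tuple[list[Record], list[tuple[Record, list[str]]]]:
    """Split records into passed and quarantined (with reasons); ids must be unique."""
    passed, quarantined, seen_ids = [], [], set()
    for rec in records:
        failures = [name for name, rule in RULES.items() if not rule(rec)]
        if rec.get("id") in seen_ids:
            failures.append("id_unique")                              # uniqueness
        if failures:
            quarantined.append((rec, failures))
        else:
            passed.append(rec)
            seen_ids.add(rec["id"])
    return passed, quarantined

batch = [
    {"id": 1, "email": "a@x.com", "amount": 10},
    {"id": 1, "email": "b@x.com", "amount": 5},
    {"id": None, "email": "not-an-email", "amount": -3},
]
good, bad = validate(batch)
print(len(good), [reasons for _, reasons in bad])
```

Recording why each record failed turns the quarantine into a feedback loop for fixing issues at the source systems.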

4. Adaptability and Future-Proofing Your Integration Strategy

The rapidly evolving data landscape requires you to be proactive and adaptable. Future-proof your strategy by:

- Choosing scalable integration platforms that can grow with your data needs

- Planning for emerging data formats and standards to avoid future compatibility issues

- Creating an agile integration framework that permits quick adaptation to new technologies and methodologies

Current Trends and Future Directions

The data integration landscape is rapidly evolving with significant advancements in technology influencing how you manage and leverage data for strategic advantages.

1. Machine Learning and AI

Machine learning (ML) and artificial intelligence (AI) are redefining data integration processes. Your systems are becoming more predictive and intelligent, enabling them to automate data management tasks and provide insights. This integration of ML and AI aids in data quality and governance, ensuring your data is accurate and usable.

- Predictive Analytics: By incorporating ML algorithms, you unlock the ability to forecast trends and behaviors

- Data Enhancement: AI empowers the enrichment of data, providing additional context and relevance

2. Data Integration and the API Economy

The proliferation of the API economy has made API data integration a key element in your digital strategy. APIs simplify the way distinct systems communicate, and they enable a more seamless flow of data across various platforms.

- Standardization: APIs lead to standardized methods of data exchange, enhancing compatibility between systems

- Real-time Access: Through APIs, you gain immediate access to data, thus supporting up-to-date decision-making

3. DevOps and DataOps Integration

Your operational efficiency improves with the integration of DevOps and DataOps. These methodologies focus on streamlining the entire data lifecycle, from creation to deployment and analysis, thus reducing the time-to-market for new features and improving data quality.

- Continuous Deployment: DevOps principles facilitate rapid and reliable software delivery

- Improved Collaboration: DataOps fosters a culture of collaboration, aligning teams toward common data-related goals

4. The Evolution of Data Platforms

Data platforms are becoming more sophisticated, incorporating advanced analytics and machine learning capabilities. The evolution ensures that your data platforms are not just storage repositories but analytical powerhouses that drive decision-making.

- Scalability: Modern data platforms scale on-demand to manage varying data loads

- Integration Capabilities: These platforms now offer built-in support for integrating a wide array of data sources, including IoT devices and unstructured data

Case Studies: Kanerika’s Successful Data Integration Projects

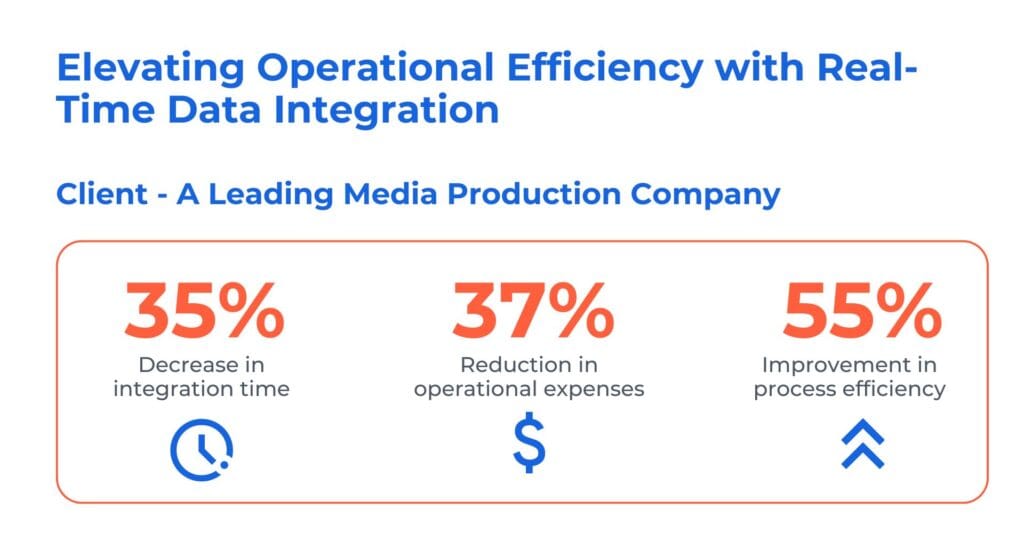

1. Unlocking Operational Efficiency with Real-Time Data Integration

The client is a prominent media production company operating in the global film, television, and streaming industry. The company faced a significant challenge while upgrading its CRM to the new MS Dynamics CRM: having to access multiple systems slowed response times and raised security and efficiency concerns.

Kanerika resolved the problem by leveraging tools like Informatica and Dynamics 365. Here’s how our real-time data integration solution streamlined operations, expedited processing, and reduced operating costs while maintaining data security:

- Implemented iPaaS integration with the Dynamics 365 connector, ensuring future-ready app integration and reducing pension processing time

- Enhanced Dynamics 365 with real-time integration of paginated data, ensuring compliance with PHI and PCI requirements

- Streamlined exception management, enabled proactive monitoring, and automated third-party integration, driving efficiency

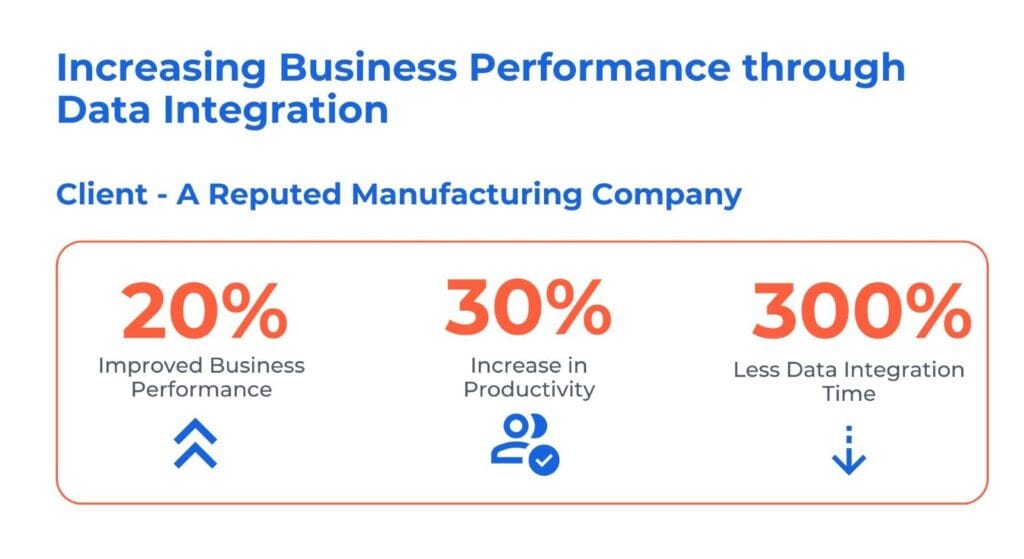

2. Enhancing Business Performance through Data Integration

The client is a prominent edible oil manufacturer and distributor, with a nationwide reach. The usage of both SAP and non-SAP systems led to inconsistent and delayed data insights, affecting precise decision-making. Furthermore, the manual synchronization of financial and HR data introduced both inefficiencies and inaccuracies.

Kanerika addressed the client’s challenges by delivering the following data integration solutions:

- Consolidated and centralized SAP and non-SAP data sources, providing insights for accurate decision-making

- Streamlined integration of financial and HR data, ensuring synchronization and enhancing overall business performance

- Automated integration processes to eliminate manual efforts and minimize error risks, saving cost and improving efficiency

Kanerika: The Trusted Choice for Streamlined and Secure Data Integration

At Kanerika, we excel in unifying your data landscapes, leveraging cutting-edge tools and techniques to create seamless, powerful data ecosystems. Our expertise spans the most advanced data integration platforms, ensuring your information flows efficiently and securely across your entire organization.

With a proven track record of success, we’ve tackled complex data integration challenges for diverse clients in banking, retail, logistics, healthcare, and manufacturing. Our tailored solutions address the unique needs of each industry, driving innovation and fueling growth.

We understand that well-managed data is the cornerstone of informed decision-making and operational excellence. That’s why we’re committed to building and maintaining robust data infrastructures that empower you to extract maximum value from your information assets.

Choose Kanerika for data integration that’s not just about connecting systems, but about unlocking your data’s full potential to propel your business forward.

FAQs

What is an example of data integration?

A common data integration example is consolidating customer records from a CRM, e-commerce platform, and support ticketing system into a unified customer data platform. This integration merges purchase history, service interactions, and demographic information into a single view, enabling personalized marketing and faster support resolution. Retailers frequently use this approach to synchronize inventory data across warehouses, online stores, and point-of-sale systems in real time. Kanerika helps enterprises implement similar data integration solutions that unify disparate sources seamlessly. Connect with our team to explore your use case.

What are the 4 types of system integration?

The four primary types of system integration are point-to-point integration, hub-and-spoke integration, enterprise service bus, and microservices-based integration. Point-to-point connects systems directly but becomes complex at scale. Hub-and-spoke centralizes data flow through a single hub. Enterprise service bus provides middleware-driven communication between applications. Microservices architecture enables modular, API-driven connections that scale independently. Each approach suits different enterprise data integration requirements based on complexity, budget, and scalability needs. Kanerika evaluates your infrastructure to recommend the optimal integration architecture. Schedule a consultation to find your best fit.

Which tool is used for data integration?

Popular data integration tools include Microsoft Fabric, Informatica PowerCenter, Talend, Apache NiFi, and Databricks. Microsoft Fabric offers end-to-end analytics integration with built-in governance, while Databricks excels at large-scale Lakehouse ETL pipelines. Talend provides open-source flexibility, and Informatica delivers enterprise-grade data management capabilities. Selecting the right tool depends on your data volume, existing tech stack, and transformation complexity. Kanerika holds deep expertise across leading integration platforms and helps enterprises select, implement, and optimize tools for their specific environment. Reach out for a personalized recommendation.

What is data integration?

Data integration is the process of combining data from multiple disparate sources into a unified, consistent view for analysis and decision-making. It involves extracting information from databases, applications, and files, then transforming and loading it into a target system like a data warehouse or Lakehouse. Effective data integration eliminates silos, ensures data consistency, and enables real-time business intelligence across the organization. Modern approaches incorporate automation, governance, and quality controls throughout the pipeline. Kanerika delivers comprehensive data integration services that unify your enterprise data. Contact us to start your integration journey.

Why is data integration important?

Data integration is important because it eliminates information silos, enabling organizations to access complete, accurate data for strategic decisions. Without integration, teams work from fragmented datasets that lead to inconsistent reporting, missed insights, and operational inefficiencies. Integrated data accelerates analytics, improves customer experiences, and supports regulatory compliance by maintaining a single source of truth. Enterprises with mature data integration capabilities make faster, more confident decisions and respond to market changes with agility. Kanerika’s integration specialists help organizations unlock these benefits quickly. Talk to us about building your unified data foundation.

What are the main types of data integration?

The main types of data integration include ETL (Extract, Transform, Load), ELT (Extract, Load, Transform), data virtualization, data federation, and application integration. ETL transforms data before loading into warehouses, while ELT leverages modern cloud processing power post-load. Data virtualization provides real-time access without physical movement. Data federation queries distributed sources as a unified dataset. Application integration synchronizes data between business software. Each type addresses specific latency, volume, and transformation requirements. Kanerika implements the integration approach that aligns with your infrastructure and analytics goals. Reach out for expert guidance.

How does data integration improve business performance?

Data integration improves business performance by providing unified, timely insights that drive faster and smarter decisions. When sales, finance, and operations access consistent data, forecasting accuracy increases and cross-functional collaboration strengthens. Integrated data pipelines automate manual reporting tasks, freeing teams to focus on strategic initiatives. Real-time integration supports dynamic pricing, inventory optimization, and personalized customer engagement. Companies with mature integration capabilities report higher operational efficiency and reduced time-to-insight across departments. Kanerika builds integration solutions that directly impact revenue and efficiency. Let us show you measurable ROI through a tailored assessment.

What are the challenges of data integration?

Common data integration challenges include handling diverse data formats, maintaining data quality across sources, managing schema changes, ensuring security compliance, and scaling for growing data volumes. Legacy systems often lack modern APIs, requiring custom connectors. Data silos create governance gaps, while real-time requirements demand robust infrastructure. Organizations also struggle with aligning integration initiatives across departments with different priorities. Addressing these challenges requires strategic planning, the right technology stack, and experienced implementation partners. Kanerika has solved these challenges across industries and can help you navigate complexity. Connect with our team for proven solutions.

What is ETL in data integration?

ETL stands for Extract, Transform, Load: a foundational data integration process that moves data from source systems into target repositories. The extract phase pulls data from databases, applications, or files. The transform phase cleanses, formats, and enriches the data according to business rules. The load phase writes transformed data into a data warehouse or analytics platform. ETL ensures data consistency and prepares information for reporting and analysis. Modern ETL pipelines incorporate automation and monitoring for reliability. Kanerika designs and deploys ETL solutions optimized for your data ecosystem. Contact us to modernize your pipelines.

Is data integration the same as ETL?

Data integration and ETL are not the same, though ETL is one method within the broader data integration discipline. Data integration encompasses all techniques for combining data from multiple sources, including ETL, ELT, data virtualization, APIs, and streaming pipelines. ETL specifically refers to the extract, transform, and load process for batch data movement. Modern integration strategies often combine multiple approaches based on latency requirements and source complexity. Understanding this distinction helps organizations select the right architecture for each use case. Kanerika designs holistic integration strategies beyond ETL alone. Explore your options with our experts.

What is the main goal of data integration?

The main goal of data integration is to create a unified, accurate, and accessible view of enterprise data that supports informed decision-making. By consolidating information from disparate systems into a single source of truth, organizations eliminate inconsistencies and reduce manual reconciliation efforts. This unified view enables faster analytics, improved operational efficiency, and better customer experiences. Effective integration also ensures data governance and compliance by maintaining lineage and quality standards throughout the data lifecycle. Kanerika helps enterprises achieve this goal with tailored integration strategies. Schedule a discovery session to define your path forward.

What are the top 5 data integration patterns?

The top five data integration patterns are migration, broadcast, aggregation, bidirectional sync, and correlation. Migration moves data from legacy to modern systems in bulk. Broadcast replicates data from one source to multiple targets simultaneously. Aggregation combines data from several sources into a central repository. Bidirectional sync maintains consistency between two systems in real time. Correlation matches and merges related records across sources without moving data. Selecting the right pattern depends on data freshness requirements, system architecture, and business processes. Kanerika applies proven integration patterns to enterprise challenges. Reach out to discuss which pattern fits your needs.

What is a common method for data integration?

ETL remains the most common method for data integration, particularly for batch processing into data warehouses. Organizations extract data from operational systems, apply transformation rules for cleansing and standardization, then load results into analytics platforms. API-based integration has grown popular for real-time synchronization between cloud applications. Data virtualization offers another common approach, providing unified access without physical data movement. The best method depends on latency requirements, data volume, and infrastructure maturity. Kanerika implements integration methods aligned with your technical and business requirements. Connect with us for a tailored recommendation.

What are the steps of data integration?

The data integration process follows five key steps: planning, data extraction, data transformation, data loading, and validation. Planning defines source systems, target architecture, and business rules. Extraction pulls data from databases, files, and applications. Transformation cleanses, standardizes, and enriches data according to quality requirements. Loading moves transformed data into the destination system. Validation confirms accuracy, completeness, and consistency through automated testing. Ongoing monitoring ensures pipeline reliability and data freshness over time. Kanerika guides enterprises through each integration step with structured methodologies. Start with a free assessment to map your process.

What is data quality and integration?

Data quality and integration work together to ensure enterprise data is accurate, consistent, and usable for analytics. Data quality involves validating completeness, accuracy, timeliness, and consistency of information. Integration brings data together from multiple sources while applying quality rules during transformation. Poor quality at source systems propagates through pipelines without proper controls. Modern integration platforms embed profiling, cleansing, and monitoring to maintain quality throughout the data lifecycle. This combination delivers trustworthy insights that drive confident business decisions. Kanerika builds integration pipelines with embedded data quality governance. Talk to our team about ensuring clean, reliable data.

How does data integration improve data quality?

Data integration improves data quality by applying standardization, deduplication, and validation rules during the transformation phase. As data moves through pipelines, integration processes identify inconsistencies, correct formatting errors, and merge duplicate records. Centralized integration enables consistent quality standards across all sources rather than addressing issues system by system. Automated profiling detects anomalies before they reach analytics platforms. Master data management integrated with pipelines maintains golden records for critical entities. This systematic approach transforms fragmented, error-prone data into trusted information assets. Kanerika embeds quality controls into every integration pipeline. Let us help you achieve cleaner data faster.

Is data integration only for large enterprises?

Data integration is not exclusive to large enterprises: businesses of all sizes benefit from unified data. Small and mid-sized companies often operate with multiple cloud applications, spreadsheets, and databases that create silos. Integration connects these systems affordably using modern cloud-native tools with usage-based pricing. Unified data enables smaller teams to compete with enterprise-level analytics capabilities without massive IT investments. Scalable integration platforms grow with business needs, making early adoption strategic rather than premature. Organizations that integrate data early build stronger foundations for growth. Kanerika delivers right-sized integration solutions for businesses at every stage. Reach out to explore cost-effective options.

Will ETL be replaced by AI?

AI will augment rather than fully replace ETL processes in data integration. Machine learning automates schema mapping, anomaly detection, and transformation recommendations that previously required manual effort. AI-powered tools accelerate pipeline development and improve data quality through intelligent cleansing. However, ETL’s core functions of extraction, transformation, and loading remain essential for structured data movement. AI enhances these capabilities with predictive monitoring and self-healing pipelines. The future combines traditional ETL reliability with AI-driven intelligence for faster, smarter integration. Kanerika implements AI-enhanced integration solutions that maximize automation. Discover how AI can transform your data pipelines.