Last week, a developer posted a screenshot showing an AI fixing a failing test suite, explaining the bug, and committing the patch in under a minute. The tool running behind the scenes was not a traditional code assistant but a coding agent. Moments like this are driving curiosity about GPT Codex vs. Claude Code, two tools designed to work directly with developers within real coding workflows. Instead of only suggesting snippets, these systems can read repositories, generate functions, debug errors, and help engineers navigate complex codebases.

Interest in AI coding tools is growing fast. Surveys show that more than 70% of developers now use AI assistants for coding tasks, and GitHub research indicates that developers can complete certain tasks up to 55% faster with AI support. As adoption increases, developers are actively comparing GPT Codex and Claude Code to understand which tool works better for debugging, building features, and managing large projects.

In this blog, we break down GPT Codex vs Claude Code, exploring how each tool works, where they perform well, and which one might fit your development workflow better.

Key Takeaways

- GPT Codex and Claude Code represent a new generation of AI coding agents that go beyond code suggestions by reading repositories, debugging issues, and autonomously executing development tasks.

- Codex focuses on speed, token efficiency, and cloud-based execution, making it well-suited for fast feature development, automated code review, and CI/CD-driven workflows.

- Claude Code emphasizes deeper reasoning, large context windows, and local execution, which helps developers navigate large legacy codebases and maintain stronger data residency control.

- The choice between the two often depends on workflow preferences such as terminal-native development, multi-agent coordination, compliance requirements, and team adoption barriers.

- For enterprises, governance and security considerations matter as much as model performance because these agents can access repositories, execute commands, and modify code autonomously.

Why Developers Are Comparing GPT Codex and Claude Code

Both tools crossed a threshold in early 2026 that made direct comparison worth doing for the first time. OpenAI shipped GPT-5.3-Codex in February with a claimed 25% speed improvement and state-of-the-art results on SWE-bench Pro. Within weeks, Anthropic released Claude Opus 4.6 and Claude Sonnet 4.6, introducing a 1-million-token context window in beta and a new multi-agent feature called Agent Teams. Both tools are now running on models released within weeks of each other, making a head-to-head more meaningful than ever.

The tools look similar on the surface: both accept natural language, write and execute code autonomously, and integrate with Git. Developers who’ve used both in production describe them differently, though:

- Codex is fast, concise, and defensive. It adds input validation you didn’t ask for and ships quickly.

- Claude Code is thorough, sometimes verbose, and better at understanding vague or ambiguous prompts.

A 2025 Stack Overflow developer survey found GPT at 82% overall usage but Claude at 45% among professional developers, a gap that points to different use patterns rather than one being definitively better.

One thing most comparison articles skip: tool selection here is a governance decision as much as a capability one. Both tools have file-system access, execute shell commands, and can open pull requests autonomously. That significantly changes the enterprise risk profile. Shadow adoption of AI coding tools is one of the most common governance gaps Kanerika sees across enterprise AI programs, ahead of model selection or infrastructure fit. The right tool depends on what controls are already in place, not just which one benchmarks better.

GPT Codex vs Claude Code: Key Differences

1. Architecture and Execution Model

Codex runs as a cloud-based agent. It accepts goal descriptions and works through them autonomously in a sandboxed environment, planning execution sequences, managing dependencies, and coordinating multi-file changes. Configuration is handled through AGENTS.md files, an open standard already used by tens of thousands of open-source projects — teams already using Cursor or Aider can drop their existing configuration directly into Codex without rewriting anything.

Claude Code takes a different approach entirely. It executes locally: your code stays on your machine, and Claude Code reads your local filesystem, runs commands in your actual terminal, and uses your local git setup. Only the prompt and the context needed to answer it travel to Anthropic’s API for processing. Nothing is sent to a cloud container, which matters significantly for teams with strict data residency requirements. Because inference still depends on that API call, it does not run in fully air-gapped environments.

2. Context Window

Claude Opus 4.6 supports a standard 200,000-token context window, with a 1-million-token window available in beta via the API. Codex operates with up to 192,000 tokens. At the standard tier the two are nearly equal; the 1-million-token beta is where the difference becomes meaningful in practice, particularly when navigating large legacy codebases with tangled dependencies across dozens of files.
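To make the context figures concrete, here is a minimal sketch that estimates whether a repository could fit in each window quoted above. It assumes roughly four characters per token, which is only a rule of thumb (real tokenizers vary by language and content), and the function names and file suffixes are illustrative choices rather than part of either tool.

```python
from pathlib import Path

# Context limits quoted in this article, in tokens.
CONTEXT_LIMITS = {
    "Codex": 192_000,
    "Claude Code (standard)": 200_000,
    "Claude Code (1M beta)": 1_000_000,
}

# Assumption: about 4 characters per token. Treat results as rough estimates.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate derived from character count."""
    return len(text) // CHARS_PER_TOKEN


def estimate_repo_tokens(root: str, suffixes=(".py", ".ts", ".sql")) -> int:
    """Sum rough token estimates for source files under root."""
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            text = path.read_text(encoding="utf-8", errors="ignore")
            total += estimate_tokens(text)
    return total


def fits(token_count: int) -> dict[str, bool]:
    """Report which quoted context windows could hold token_count tokens."""
    return {name: token_count <= limit for name, limit in CONTEXT_LIMITS.items()}
```

Calling `fits(estimate_repo_tokens("."))` from a project root gives a quick first read on whether the whole codebase is even a candidate for single-context analysis. In practice both tools read files selectively, so this is an upper bound on need, not a requirement.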

3. Configurability

Claude Code has significantly more configuration surface: CLAUDE.md files, sub-agents, custom hooks, slash commands, MCP support, and the ability to completely replace the system prompt. Replacing the system prompt is the standout capability — it lets teams build specialized agents in about 20 minutes, something Codex cannot do because it verifies the system prompt hash on the backend.

Codex trades that flexibility for consistency. It tends to produce high-quality results with less setup, which suits teams that want reliable output without spending time tuning configuration files.

4. Agentic Coherence Over Long Task Chains

This is the dimension most reviews skip and the one that separates these tools in production. Codex is faster on the first pass. Claude Code is more consistent when a task runs 20+ autonomous steps. The pattern that surfaces repeatedly in developer forums: Codex loses context on long autonomous tasks and starts making changes that conflict with what it already fixed. This is a predictable behavior rooted in how async parallel execution interacts with task context, not a random bug.

Developer feedback on communication style also diverges. Codex is consistently described as concise and direct. When it makes a mistake, it states what went wrong and offers concrete options. Claude Code can be verbose; some developers report it generating thousands of markdown files or lengthy explanatory responses for simple questions, and its tendency to preface corrections with affirmations irritates engineers who want directness.

Feature Comparison

| Feature | GPT Codex | Claude Code |
| --- | --- | --- |
| Execution environment | Cloud sandbox | Local machine |
| Underlying model | GPT-5.3-Codex | Claude Opus 4.6 / Sonnet 4.6 |
| Context window | 192,000 tokens | 200K standard, 1M beta |
| Configuration | AGENTS.md | CLAUDE.md, hooks, MCP, sub-agents |
| System prompt control | No | Yes |
| Multi-agent support | Limited | Agent Teams (native) |
| IDE integration | ChatGPT, cloud agent | Terminal, VS Code, JetBrains (beta) |
| MCP support | stdio only (no HTTP endpoints) | Full native support |
| Open source CLI | Yes (Codex CLI) | No |
| Data residency | Cloud-dependent | Local execution |
| Token efficiency | 2-4x fewer tokens per task | Higher token usage |
| Code review strength | Strong (Terminal-Bench lead) | Strong (vulnerability detection) |
| Pricing (per million tokens) | $6/$30 | Opus $5/$25; Sonnet $3/$15 |

One number worth flagging on token efficiency: a Figma cloning task run by Composio found that Codex used approximately 1.5 million tokens, while Claude Code used 6.2 million. At scale, that difference compounds into a meaningful cost gap, even though Codex has a slightly higher per-token rate.
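The cost gap can be bounded with a short calculation. This sketch assumes the pricing figures in the table are input/output rates per million tokens, and because the input/output split for the Figma task is not reported, it computes only the two extremes (all tokens billed as input, all billed as output). Which Claude model was used in that test is also not stated here, so both are shown.

```python
# Per-million-token prices from the feature table, in USD.
# Assumption: figures such as "$6/$30" are (input, output) rates.
PRICES = {
    "Codex": (6.0, 30.0),
    "Claude Opus": (5.0, 25.0),
    "Claude Sonnet": (3.0, 15.0),
}

# Token totals reported for the Figma cloning task, in millions.
TOKENS_MILLIONS = {"Codex": 1.5, "Claude Opus": 6.2, "Claude Sonnet": 6.2}


def cost_bounds(tool: str) -> tuple[float, float]:
    """Return (all-input, all-output) cost bounds in USD for the task."""
    input_rate, output_rate = PRICES[tool]
    millions = TOKENS_MILLIONS[tool]
    return round(millions * input_rate, 2), round(millions * output_rate, 2)


for tool in PRICES:
    low, high = cost_bounds(tool)
    print(f"{tool}: ${low:.2f} to ${high:.2f}")
```

Under these assumptions the Codex run lands between $9 and $45, while the same task on Opus lands between $31 and $155. The real figure depends on the input/output mix and on any caching discounts, which this sketch ignores.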

Benchmark scores are close. On SWE-bench Verified, Opus 4.6 scores 80.8%, and Codex 5.3 scores approximately 80%. On SWE-bench Pro, which tests more demanding real-world tasks, the figures cited here are for the earlier Opus 4.5 and Codex 5.2: Opus 4.5 leads with 45.89%, while Codex 5.2 scores 41.04%. Codex holds a noticeable lead on Terminal-Bench 2.0, which specifically tests terminal-style execution tasks. The gap is real but context-dependent.

Which One Fits Better Into a Developer’s Workflow?

The answer comes down to three things: where you work, how you prefer to interact with an agent, and whether you need the agent to understand ambiguous intent or execute clearly defined tasks quickly.

1. Terminal-Native Developers

Claude Code fits naturally here. It reads your actual filesystem, uses your local git, and stays entirely within your existing toolchain. The VS Code and JetBrains integrations mean you never have to leave your editor. The 1-million-token context window in beta makes it the stronger option for navigating large legacy codebases, where relevant context spans dozens of interconnected files.

2. Speed and Token Efficiency

Codex is the practical choice when configuration overhead needs to stay low. The AGENTS.md standard means existing project configurations carry over without rewriting anything. Cloud-based execution handles long-running tasks autonomously without tying up local compute, and the Terminal-Bench lead translates to real-world strength in catching edge cases, logical errors, and security issues during code review.

3. Multi-Agent Work

Claude Code’s Agent Teams feature handles multi-file refactors where a change in one file ripples through many others, using a shared task list that keeps agents from losing track of interdependencies. Codex handles multi-step tasks within a single-agent loop but lacks comparable native orchestration for distributed multi-agent workflows.

4. Mixed Teams with Non-CLI Developers

Codex’s web interface removes real adoption barriers here. QA engineers, data analysts, and technical PMs can use it without touching a terminal. Claude Code requires Node.js 18+, CLI installation, and API key setup — about 10 minutes for CLI-native developers, but a genuine friction point for everyone else, affecting adoption rates and ultimately ROI.

5. Compliance and Data Residency

Claude Code’s local execution model is often the deciding factor in regulated environments. Data never leaves the developer’s machine except for the API call to Anthropic. Codex’s cloud sandbox raises questions that security reviews will ask, and those reviews add time.

A workflow that appears frequently in developer discussions: use Claude Code to generate refactored code, then run Codex as a reviewer before merging. The two tools complement each other more often than they compete.

Use Cases: When to Use GPT Codex vs Claude Code

GPT Codex

- Bug fix sprints: Push 20 well-scoped issues simultaneously. Codex runs each in its own sandbox and returns PR-ready diffs without blocking other work.

- Greenfield microservice scaffolding: Spec out a new service, hand it to Codex, get a working skeleton with routing, error handling, and input validation faster than writing it manually.

- CI/CD-integrated code review: Codex reviews PRs automatically as part of the pipeline, catching edge cases, logical errors, and missing security headers that weren’t in the original requirements.

- High-volume repetitive tasks: Generating unit tests for a defined function, writing boilerplate for API integrations, or reformatting code to match a style guide — bounded tasks where speed matters more than reasoning depth.

- Non-engineering participation: QA engineers writing test scenarios, PMs checking implementation against specs, data analysts running ad hoc scripts — Codex’s web UI makes this accessible without CLI setup.

Claude Code

- Production refactors: Upgrading a major dependency across a 50-file module, where a change in one file has implications three layers deep. Claude Code traces those dependencies before touching anything.

- Legacy codebase navigation: A 200K+ token codebase with undocumented business logic. Claude Code’s extended context and CLAUDE.md memory mean it builds familiarity across sessions rather than starting cold each time.

- Multi-agent pipeline development: Building LangChain or AutoGen workflows where architectural consistency across many files determines whether the system holds together in production.

- Debugging with unclear root cause: Claude Code’s built-in web search lets it pull current docs, check API changelogs, and cross-reference recent GitHub issues in real time — useful when the problem isn’t in your codebase but in a dependency’s behavior.

- Security-sensitive codebases: In evaluations on real-world codebases, Claude Code found significantly more true positives for IDOR bugs and similar vulnerability categories than Codex. Local execution also means code never leaves the machine.

- SQL and data pipeline work: Complex cross-schema transformations, ETL logic, schema-aware query generation — tasks where getting the relationships wrong has downstream consequences.

Some teams structure their workflow around Codex’s token efficiency for generation and Claude Code’s reasoning depth for review and refactoring. This approach costs more in toolchain management but extracts the genuine strengths of each model rather than forcing one tool to cover tasks it handles less well.

GPT Codex vs Claude Code: Which One Should You Choose?

Neither tool is universally better. The benchmarks are close enough that workflow fit matters more than model scores.

GPT Codex

- Primary need is fast, cloud-based, autonomous execution with strong code review

- Your team has clearly defined, bounded tasks where prompt precision is manageable

- Already on ChatGPT Enterprise with SSO and compliance posture in place

- Token efficiency matters at scale, and large context windows aren’t required

- Non-CLI developers like QA, PMs, or analysts need access, and the web UI removes friction

Claude Code

- Local execution is required for data residency, with outbound traffic limited to Anthropic’s API

- The codebase is large or complex and benefits from extended context

- Custom agent behavior matters: replacing the system prompt is a capability Codex doesn’t offer

- Multi-file refactoring and multi-agent coordination are core to your work

- Your team is terminal-native, and the CLI is natural, not a barrier

Running Both

- Codex handles high-velocity bounded feature work; Claude Code handles architectural changes and production-sensitive refactors

- Different teams have different risk profiles — front-end product teams on Codex, platform and data teams on Claude Code
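The checklists above can be read as a rough scoring exercise. The sketch below is one hypothetical way to encode them: the `TeamProfile` fields and the `recommend` function are illustrative names, not part of either tool, and treating local execution as a hard constraint is an assumption drawn from the compliance discussion earlier in this post.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    """Hypothetical checklist mirroring the criteria listed above."""
    needs_local_execution: bool = False
    large_codebase: bool = False
    custom_system_prompt: bool = False
    multi_agent_refactors: bool = False
    terminal_native: bool = False
    bounded_tasks: bool = False
    on_chatgpt_enterprise: bool = False
    token_cost_sensitive: bool = False
    non_cli_users: bool = False


CLAUDE_SIGNALS = (
    "needs_local_execution", "large_codebase", "custom_system_prompt",
    "multi_agent_refactors", "terminal_native",
)
CODEX_SIGNALS = (
    "bounded_tasks", "on_chatgpt_enterprise", "token_cost_sensitive",
    "non_cli_users",
)


def recommend(profile: TeamProfile) -> str:
    """Count matching signals per tool; a non-zero tie suggests running both."""
    if profile.needs_local_execution:
        return "Claude Code"  # treated as a hard constraint in this sketch
    claude = sum(getattr(profile, name) for name in CLAUDE_SIGNALS)
    codex = sum(getattr(profile, name) for name in CODEX_SIGNALS)
    if claude == codex:
        return "Both" if claude > 0 else "Undecided"
    return "Claude Code" if claude > codex else "GPT Codex"
```

Equal weighting is a simplification. A real evaluation would weight each criterion by how much it matters to the team and would likely be run per team rather than per organization.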

The broader point: the harness matters more than the model. SWE-bench Pro data shows a 22-point swing between basic and optimized scaffolds using the same underlying model. Prompt engineering, configuration quality, and workflow design affect output quality more than the tool itself.

Enterprise decisions often come down to security posture and governance before feature comparison. Claude Code’s local execution addresses a real constraint that Codex’s cloud sandbox does not — once that’s settled, the rest is a workflow question. Neither tool includes built-in secret detection or PII scanning, so pre-scan accessible codebases with tooling like TruffleHog or git-secrets, and confirm all credentials are in environment variables or a secrets manager before either tool goes live.
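As a rough illustration of what a pre-scan looks for, here is a minimal sketch of a pattern-based check. It is not how TruffleHog or git-secrets work internally and is no substitute for them; the three patterns and the function names are illustrative assumptions, and dedicated scanners cover far more credential types as well as git history.

```python
import re
from pathlib import Path

# Illustrative patterns only; dedicated scanners cover far more cases.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(
        r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"
    ),
    "hardcoded_credential": re.compile(
        r"(?i)(?:api[_-]?key|secret|password|token)\w*\s*[:=]\s*"
        r"['\"][^'\"]{8,}['\"]"
    ),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for each suspicious line."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, name))
    return findings


def scan_repo(root: str) -> dict[str, list[tuple[int, str]]]:
    """Scan readable text files under root, skipping the .git directory."""
    results = {}
    for path in Path(root).rglob("*"):
        if not path.is_file() or ".git" in path.parts:
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # skip binary or unreadable files
        findings = scan_text(text)
        if findings:
            results[str(path)] = findings
    return results
```

A check like this only inspects the current working tree. Secrets committed in the past remain in git history, which is one reason to run a purpose-built scanner before granting an agent repository access.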

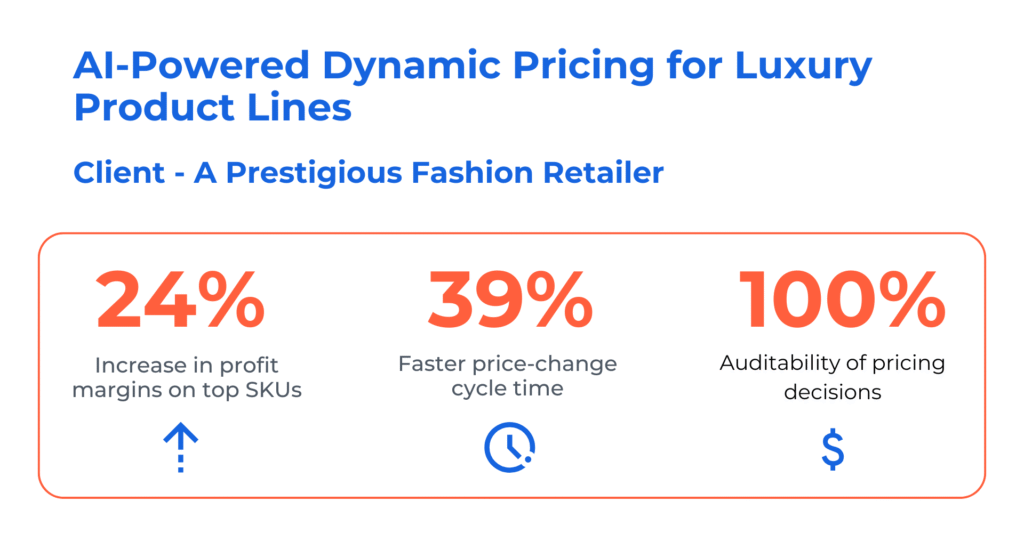

Case Study 1: AI‑Powered Dynamic Pricing for Luxury Product Lines

Challenges

The retailer struggled with inconsistent pricing across regions, causing revenue leakage and mixed customer experiences. Their manual repricing processes were slow, making it difficult to respond quickly to competitor moves or market fluctuations. Limited data visibility also prevented effective pricing for seasonal or limited-edition SKUs, reducing their ability to stay competitive.

Solutions

Kanerika implemented a machine learning pricing engine that combined demand signals, competitor data, and customer lifetime value to automatically optimize pricing. Pricing managers gained real‑time simulation capabilities through Karl, helping them to run simulations before adjusting prices. With FLIP analytics added for governance and traceability, the retailer achieved end‑to‑end visibility into every pricing decision.

Results

- 24% increase in profit margins on top SKUs

- 39% faster price‑change cycle time

- 100% auditability of pricing decisions

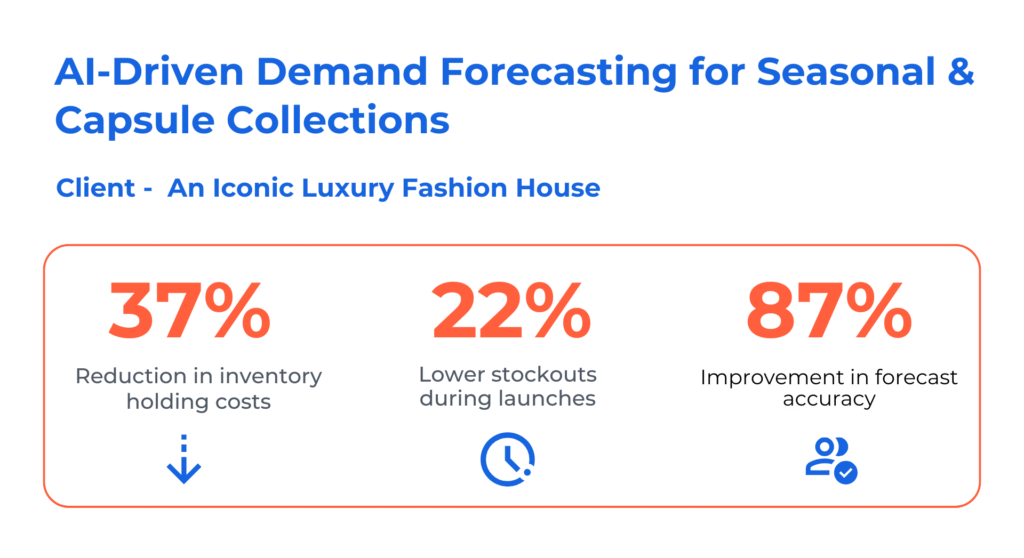

Case Study 2: AI‑Driven Demand Forecasting for Seasonal & Capsule Collections

Challenges

The fashion house faced uncertainty when predicting demand for seasonal and capsule launches, often resulting in overstock, markdowns, or stockouts. Their forecasting relied heavily on intuition, with fragmented coordination between design, merchandising, and retail teams. Without reliable insights into consumer behavior and upcoming trends, planning and production decisions remained reactive.

Solutions

Kanerika deployed machine learning models that merged historical sales, influencer trends, and macro signals to generate accurate forecasts. FLIP processed unstructured inputs like reviews and media mentions to deepen consumer insight. Using Karl for agile forecasting enabled teams to anticipate demand shifts and align inventory, production, and launch planning more effectively.

Results

- 37% reduction in inventory holding costs

- 22% fewer stockouts during launches

- 87% improvement in forecast accuracy

How Kanerika Delivers Agentic AI Solutions for Enterprises

Kanerika builds and deploys production-ready AI agents for enterprises across financial services, healthcare, manufacturing, and logistics. Its agents, including KARL for data insights, DokGPT for document intelligence, Susan for PII redaction, and Alan for legal document summarization, are purpose-built for specific business functions rather than adapted from general-purpose tools. Each one integrates with existing data pipelines, CRMs, ERPs, and cloud platforms, and is trained on structured enterprise data from day one.

What separates Kanerika’s approach is governance-first architecture. Every agent deployment includes role-based access controls, audit trails, and compliance documentation aligned to the client’s regulatory environment. Kanerika holds ISO 9001, ISO 27001, and ISO 27701 certifications, and HIPAA and SOC 2 compliance is built into engagements in regulated industries, not added after the fact.

As a Microsoft Solutions Partner for Data & AI and Microsoft Fabric Featured Partner, Kanerika builds on Azure, Microsoft Fabric, and the broader Microsoft data ecosystem. For enterprises evaluating agentic AI, Kanerika offers a practical path from proof-of-concept to production without the governance retrofit that typically adds months to timelines.

FAQs

Is Codex OpenAI's version of Claude Code?

GPT Codex is OpenAI’s AI coding assistant, but it is not a direct equivalent of Claude Code. While both tools assist developers with code generation and debugging, they differ significantly in architecture, execution model, and integration approach. Codex operates within ChatGPT’s ecosystem using cloud-based task execution, whereas Claude Code functions as a terminal-based agentic coding tool. Each platform reflects its parent company’s distinct AI philosophy and technical priorities. Kanerika helps enterprises evaluate AI coding tools against their specific development requirements—connect with our team for a tailored assessment.

What is the difference between Codex and Claude Code Generation?

Codex and Claude Code differ in execution model, interface, and autonomy level. GPT Codex runs tasks asynchronously in a sandboxed cloud environment, allowing parallel code operations without blocking your workflow. Claude Code operates directly in your terminal as an agentic assistant, providing real-time interaction with local files and repositories. Codex excels at isolated coding tasks, while Claude Code offers deeper codebase navigation and multi-step reasoning. Both support enterprise code generation but suit different development styles. Kanerika’s AI specialists can help you determine which AI code generation tool aligns with your engineering workflows.

Is Claude much better than GPT for coding?

Claude demonstrates strong performance for complex, multi-file coding tasks requiring extended context understanding. Its 200K token context window enables comprehensive codebase analysis that GPT models struggle to match in single interactions. However, GPT Codex offers advantages in parallel task execution and integration within existing OpenAI ecosystems. Performance varies by use case—Claude often excels at refactoring and architectural decisions, while Codex handles isolated code generation efficiently. Neither is universally superior; the best choice depends on your specific development needs. Kanerika evaluates both platforms against enterprise requirements—schedule a consultation to identify your optimal fit.

Is Claude Code better than Codex?

Claude Code outperforms Codex in specific scenarios, particularly for complex refactoring and codebase-wide understanding due to its larger context window and agentic terminal interface. Its ability to navigate repositories, edit files directly, and execute multi-step coding plans gives it an edge for senior developers managing intricate projects. However, Codex offers superior parallel task execution and seamless ChatGPT integration that benefits teams already invested in OpenAI’s ecosystem. The better tool depends on your workflow complexity and integration requirements. Kanerika’s AI implementation team helps enterprises benchmark both solutions—reach out for a comparative analysis.

Which is cheaper, Claude Code or Codex?

Codex is generally more accessible for cost-conscious users, as it’s included with ChatGPT Pro subscriptions at $200/month with usage limits. Claude Code operates on consumption-based API pricing, which can escalate quickly during intensive coding sessions involving large context windows. For light usage, Codex offers predictable costs; for heavy enterprise development, Claude Code’s pricing scales with actual consumption. Total cost depends on task volume, context size, and session frequency. Kanerika helps enterprises model AI coding tool costs against projected usage patterns—contact us for a detailed cost-benefit analysis.

Is Codex a CLI tool like Claude Code?

Codex is not a CLI tool in the same manner as Claude Code. While Claude Code operates natively in your terminal, allowing direct interaction with local files and system commands, Codex functions primarily through ChatGPT’s web interface with cloud-based sandboxed execution. OpenAI does offer a separate Codex CLI for terminal-based access, but it differs architecturally from Claude Code’s deeply integrated agentic approach. Claude Code provides more seamless local development environment interaction, whereas Codex emphasizes isolated task execution. Kanerika assists enterprises in implementing the right AI coding interface for their development environments—let’s discuss your setup.

Why is Codex so slow?

Codex can feel slow because it executes tasks asynchronously in sandboxed cloud environments rather than providing instant responses. Each task involves spinning up isolated containers, processing code, and returning results—adding latency compared to real-time AI assistants. Complex multi-file operations amplify this delay. However, this architecture enables parallel task execution; you can queue multiple coding requests simultaneously while continuing other work. The tradeoff favors throughput over immediate feedback. For time-sensitive development requiring instant AI responses, Claude Code’s real-time terminal interaction may suit better. Kanerika helps teams optimize AI coding tool selection for their speed and workflow requirements.

Is there anything better than Claude Code?

Whether alternatives outperform Claude Code depends on your specific use case. GPT Codex offers superior parallel task execution and better integration with OpenAI’s broader ecosystem. GitHub Copilot provides tighter IDE integration for inline code completion. Cursor delivers an excellent AI-native editor experience. For pure coding benchmark performance, Claude Code ranks among the top, but no single tool dominates every scenario. Enterprise teams often benefit from combining multiple AI coding assistants strategically. Kanerika evaluates AI development tools holistically against your engineering workflows—schedule an assessment to identify your ideal tooling stack.

Is OpenAI Codex good?

OpenAI Codex is a capable AI coding assistant, particularly strong for isolated code generation tasks and developers already embedded in the ChatGPT ecosystem. Its sandboxed execution environment ensures safe code testing without affecting local systems, and parallel task handling boosts productivity for multi-component projects. Codex performs well on standard coding benchmarks and handles common programming languages effectively. Limitations include slower response times compared to real-time tools and less sophisticated codebase navigation than competitors like Claude Code. Overall, it’s a solid choice for specific workflows. Kanerika helps enterprises assess whether Codex fits their development requirements—connect with our AI team.

Is GPT Codex or Claude Code safer for regulated enterprise codebases?

Both GPT Codex and Claude Code offer enterprise-grade security features, but their approaches differ. Codex executes code in isolated cloud sandboxes, preventing direct access to local systems and reducing accidental data exposure risks. Claude Code operates locally, keeping sensitive code on-premises but requiring careful permission configuration. For regulated industries, Claude Code’s local execution may satisfy data residency requirements, while Codex’s isolation appeals to teams prioritizing separation of concerns. Both providers offer enterprise agreements with compliance certifications. Kanerika specializes in implementing AI coding tools within compliant enterprise environments—contact us to discuss your regulatory requirements.

How do Codex and Claude Code compare to GitHub Copilot for enterprise teams?

Codex and Claude Code serve different purposes than GitHub Copilot for enterprise teams. Copilot excels at inline code completion within IDEs, providing real-time suggestions as developers type. Claude Code functions as an autonomous coding agent handling complex multi-file tasks through terminal commands. Codex offers async task execution ideal for parallel code generation workflows. Enterprise teams often benefit from combining Copilot for micro-completions with Claude Code or Codex for larger autonomous tasks. Each tool addresses distinct development needs rather than directly competing. Kanerika architects AI-assisted development environments integrating multiple tools—reach out to design your optimal stack.

Can I run Codex and Claude Code in the same development workflow?

Running Codex and Claude Code together in one development workflow is entirely feasible and often advantageous. Teams can leverage Claude Code’s terminal-based agentic capabilities for complex refactoring and codebase navigation while using Codex for parallel background tasks and isolated code generation. Since they operate through different interfaces—terminal versus web-based ChatGPT—there’s no technical conflict. This hybrid approach lets you capitalize on each tool’s strengths: Claude Code’s deep context understanding and Codex’s async execution model. Kanerika helps engineering teams design integrated AI coding workflows that maximize productivity—start with a workflow consultation.

Which tool scores better on coding benchmarks in 2026?

Current 2026 benchmarks show Claude Code narrowly ahead on complex reasoning tasks like SWE-bench, which measures real-world GitHub issue resolution. GPT Codex, powered by GPT-5.3-Codex, performs competitively on structured coding challenges and leads on terminal-style execution tasks. Benchmark rankings fluctuate as both Anthropic and OpenAI release updates. Importantly, benchmark scores don’t always predict real-world utility: workflow fit, integration capabilities, and team adoption matter equally. Kanerika tracks AI coding benchmark trends and translates them into practical enterprise recommendations. Contact us for current performance insights.

Is GPT Codex the same as the original OpenAI Codex API?

GPT Codex is not the same as the original OpenAI Codex API, which was deprecated in March 2023. The original Codex API powered early GitHub Copilot versions and offered direct programmatic access to code generation. Today’s GPT Codex is a fundamentally different product: a cloud-based coding agent integrated into ChatGPT that runs on newer models such as GPT-5.3-Codex. It operates through task-based async execution rather than API calls. Developers expecting the legacy API experience will find significant architectural differences. Kanerika guides enterprises through OpenAI’s evolving product landscape. Reach out to understand current Codex capabilities.

Is Codex free on ChatGPT?

Codex is not entirely free on ChatGPT. Currently, Codex access is limited to ChatGPT Pro subscribers paying $200/month, with usage caps preventing unlimited operations. OpenAI has announced plans to expand access to Plus, Team, and Enterprise tiers, but with varying usage limits based on subscription level. Free ChatGPT users do not have Codex access. For enterprises requiring predictable AI coding costs, understanding these subscription tiers and usage limits is essential for budgeting. Kanerika helps organizations evaluate ChatGPT subscription options against their development team needs—contact us for guidance on optimizing your AI tool investment.

Is Codex part of ChatGPT?

Codex is integrated within ChatGPT as a specialized coding agent accessible through the ChatGPT interface. Rather than functioning as a separate product, Codex operates as a feature within ChatGPT Pro subscriptions, appearing in the model selector alongside other OpenAI models. Users access Codex directly through their existing ChatGPT environment, where it handles software engineering tasks in sandboxed cloud containers. This integration leverages ChatGPT’s familiar interface while adding autonomous code execution capabilities. Codex will expand to additional ChatGPT subscription tiers progressively. Kanerika assists enterprises in maximizing ChatGPT’s integrated AI coding features—schedule a demo to explore implementation options.

Does Claude Code work offline?

Claude Code does not work fully offline, as it requires API connectivity to Anthropic’s servers for AI model inference. However, its terminal-based architecture means your code and files remain local rather than being uploaded to cloud sandboxes. This hybrid approach keeps sensitive codebases on-premises while only transmitting prompts and context to generate responses. For air-gapped environments, neither Claude Code nor competing AI coding tools currently offer complete offline functionality. Teams with strict network isolation requirements should evaluate on-premises AI model deployment options. Kanerika helps enterprises navigate AI tool deployment constraints—connect with us to explore compliant implementation strategies.

Which AI is better than Claude at coding?

No single AI consistently outperforms Claude across all coding tasks. GPT Codex matches or exceeds Claude in specific scenarios, particularly parallel task execution and integration-heavy workflows. Google’s Gemini models show competitive performance on certain benchmarks. Specialized tools like Cursor and Windsurf offer superior IDE integration that some developers prefer. Claude’s advantages lie in extended context handling and complex multi-file reasoning. Performance leadership shifts with each model update from major AI providers. The optimal choice depends on your specific coding requirements and workflow. Kanerika benchmarks AI coding tools against enterprise use cases—reach out for personalized recommendations based on your development needs.