Are you struggling to decide between a data fabric and a data warehouse for your organization’s data strategy? In 2025, companies like IBM and Snowflake are leading the way with innovative solutions. IBM is enhancing its Data Fabric platform for AI-driven analytics across cloud and on-premises systems, while Snowflake scales its cloud-based data warehouse for faster storage and insights. These developments show how organizations are exploring modern architectures to meet growing data demands.

According to Gartner, the global data fabric market is expected to reach $14.5 billion by 2028, while the data warehouse market is projected to grow from $25 billion in 2024 to $35 billion by 2030. Enterprises using these solutions report faster decision-making, better data accessibility, and significant cost savings, making it crucial to choose the right architecture.

In this blog, we’ll explore the differences between a data fabric and a data warehouse, their unique use cases, and how to select the best solution for your business. Continue reading to see which approach can increase the value of your data.

Key Takeaways

- Data fabric provides a unified, real-time view across distributed systems, while data warehouses centralize structured data for deep analytics.

- A data fabric excels at integration, governance, and agility across hybrid environments.

- Data warehouses remain ideal for historical reporting, batch analysis, and high-performance queries.

- Many organizations will use both: fabric for data access and agility, warehouse for analytics and reporting.

- Choosing the right approach depends on your data volume, real-time needs, technical architecture, and governance requirements.


Data Fabric vs Data Warehouse – Key Differences

Data fabric and data warehouses serve different purposes in modern data management. A data warehouse is a centralized repository optimized for storing structured data and performing historical reporting and analysis. It works best for batch analytics, business intelligence, and compliance reporting. However, traditional warehouses often struggle with real-time processing, unstructured data, and integration across multiple platforms.

On the other hand, a data fabric is an intelligent, unified architecture that connects data across hybrid, multi-cloud, and on-premises environments. It enables easy access to structured and unstructured data. It supports real-time processing and offers dynamic metadata management. Key differences include:

| Feature | Data Fabric | Data Warehouse |
| --- | --- | --- |
| Data Type Support | Handles structured, semi-structured, and unstructured data | Primarily structured data |
| Integration | Connects seamlessly across cloud, on-premises, and hybrid systems | Requires ETL pipelines for cross-platform data |
| Real-Time Processing | Supports streaming and real-time data updates | Mostly batch-oriented updates |
| Scalability | Easily scales horizontally in cloud environments | Vertical scaling, can be costly and slower |
| Governance & Metadata | Centralized metadata management, data lineage, and quality tracking | Limited metadata and governance capabilities |
| Flexibility | Adapts quickly to new data sources and formats | Rigid schema design, less adaptable |
| Cost Efficiency | Pay-as-you-grow model reduces redundant storage | Higher upfront and scaling costs |
| Use Cases | Real-time analytics, operational reporting, cross-platform integration | Historical reporting, business intelligence dashboards, compliance |
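To make the integration row concrete, here is a minimal sketch of the two access patterns. It uses Python's built-in `sqlite3` module with in-memory databases standing in for real systems. The `crm` and `orders` sources, the table names, and the `warehouse_style` and `fabric_style` functions are all hypothetical and only illustrate the idea; real data fabric and warehouse products work at a very different scale.

```python
import sqlite3

# Two independent "source systems", each modelled as its own in-memory database.
crm = sqlite3.connect(":memory:")
crm.execute("CREATE TABLE customers (id INTEGER, name TEXT)")
crm.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Ada"), (2, "Grace")])

orders = sqlite3.connect(":memory:")
orders.execute("CREATE TABLE orders (customer_id INTEGER, amount REAL)")
orders.executemany(
    "INSERT INTO orders VALUES (?, ?)", [(1, 40.0), (1, 60.0), (2, 25.0)]
)


def warehouse_style():
    """Copy both sources into one central store, then query the copy."""
    wh = sqlite3.connect(":memory:")
    wh.execute("CREATE TABLE customers (id INTEGER, name TEXT)")
    wh.execute("CREATE TABLE orders (customer_id INTEGER, amount REAL)")
    wh.executemany(
        "INSERT INTO customers VALUES (?, ?)",
        crm.execute("SELECT id, name FROM customers"),
    )
    wh.executemany(
        "INSERT INTO orders VALUES (?, ?)",
        orders.execute("SELECT customer_id, amount FROM orders"),
    )
    return wh.execute(
        "SELECT c.name, SUM(o.amount) FROM customers c "
        "JOIN orders o ON o.customer_id = c.id "
        "GROUP BY c.name ORDER BY c.name"
    ).fetchall()


def fabric_style():
    """Leave data in place; a thin access layer reads each source on demand."""
    names = dict(crm.execute("SELECT id, name FROM customers"))
    totals = {}
    for customer_id, amount in orders.execute(
        "SELECT customer_id, amount FROM orders"
    ):
        name = names[customer_id]
        totals[name] = totals.get(name, 0.0) + amount
    return sorted(totals.items())
```

Both functions return the same per-customer totals. The difference is where the data lives: the warehouse version answers from a copied, centralized store, while the fabric version joins the sources at query time without moving them.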

Is Data Fabric Better for AI and Machine Learning?

Yes, in most modern enterprise scenarios, data fabric provides a superior foundation for AI and machine learning applications. AI models and machine learning pipelines require large volumes of high-quality, diverse data from multiple sources. Data fabric simplifies this by providing unified access, consistent governance, and real-time availability.

For example, a retail company using a data fabric can combine customer purchase history from a warehouse, real-time online behavior from e-commerce platforms, and social media sentiment data to train more accurate machine learning models. This unified approach allows predictive analytics, personalized recommendations, and adaptive pricing strategies—all in real time.
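As a rough illustration of that retail scenario, the sketch below merges three small, made-up datasets into one feature record per customer. The record layouts, the `build_features` function, and the sentiment score range are assumptions for the example, not the output of any particular data fabric product.

```python
from collections import defaultdict

# Hypothetical records from three separate systems.
purchase_history = [  # historical purchases held in the warehouse
    {"customer_id": "c1", "amount": 120.0},
    {"customer_id": "c1", "amount": 80.0},
    {"customer_id": "c2", "amount": 35.0},
]
clickstream = [  # recent online behavior from the e-commerce platform
    {"customer_id": "c1", "event": "view"},
    {"customer_id": "c2", "event": "view"},
    {"customer_id": "c2", "event": "add_to_cart"},
]
sentiment = {"c1": 0.6, "c2": -0.2}  # assumed social sentiment score in [-1, 1]


def build_features():
    """Combine all three sources into one feature dict per customer."""
    features = defaultdict(
        lambda: {"total_spend": 0.0, "events": 0, "sentiment": 0.0}
    )
    for row in purchase_history:
        features[row["customer_id"]]["total_spend"] += row["amount"]
    for row in clickstream:
        features[row["customer_id"]]["events"] += 1
    for customer_id, score in sentiment.items():
        features[customer_id]["sentiment"] = score
    return dict(features)
```

The resulting dictionary is the kind of unified, per-customer view a model training job would consume. In a real deployment the three inputs would be read through the fabric's access layer rather than hard-coded.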

Key advantages of data fabric for AI/ML:

- Unified Data Access: Connects structured, semi-structured, and unstructured data across multiple platforms.

- Real-Time Data Availability: Enables models to be trained and updated with live data streams, improving accuracy.

- Governance and Compliance: Metadata management ensures data lineage, quality, and compliance for AI workflows.

- Faster Model Deployment: Pre-integrated pipelines reduce preparation time for training and inference.

In contrast, while data warehouses provide reliable historical data, they often fall short for AI and machine learning applications due to slower batch updates, limited support for unstructured data, and rigid schemas. Therefore, enterprises aiming for predictive analytics, advanced AI, and machine learning adoption often rely on data fabric as a strategic choice.


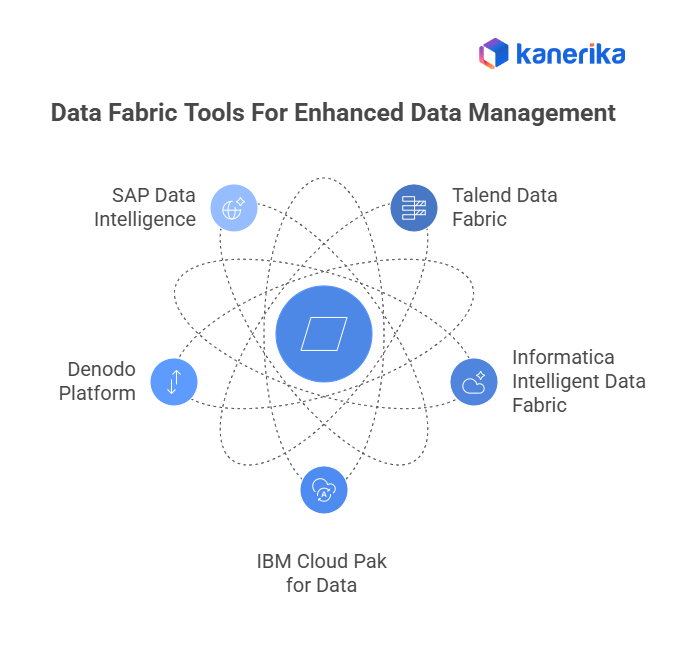

Which Tools Are Used for Data Fabric and Data Warehousing?

Data fabric and data warehouse architectures rely on specialized tools to manage, integrate, and store data efficiently. A data fabric focuses on easy connectivity, real-time integration, unified data access, and automated governance across multiple platforms, including cloud, on-premises, and edge systems. Common data fabric tools include:

- Talend Data Fabric: Connects multiple data sources, manages metadata, ensures data quality, and supports real-time pipelines for analytics and AI.

- Informatica Intelligent Data Fabric: Supports hybrid and multi-cloud environments with automation, governance, and lineage tracking for compliance.

- IBM Cloud Pak for Data: Enables real-time integration, analytics, AI-ready workflows, and operational efficiency.

- Denodo Platform: Provides data virtualization, allowing access to distributed data without duplication.

- SAP Data Intelligence: Integrates, organizes, and governs data from diverse sources, supporting machine learning model deployment.

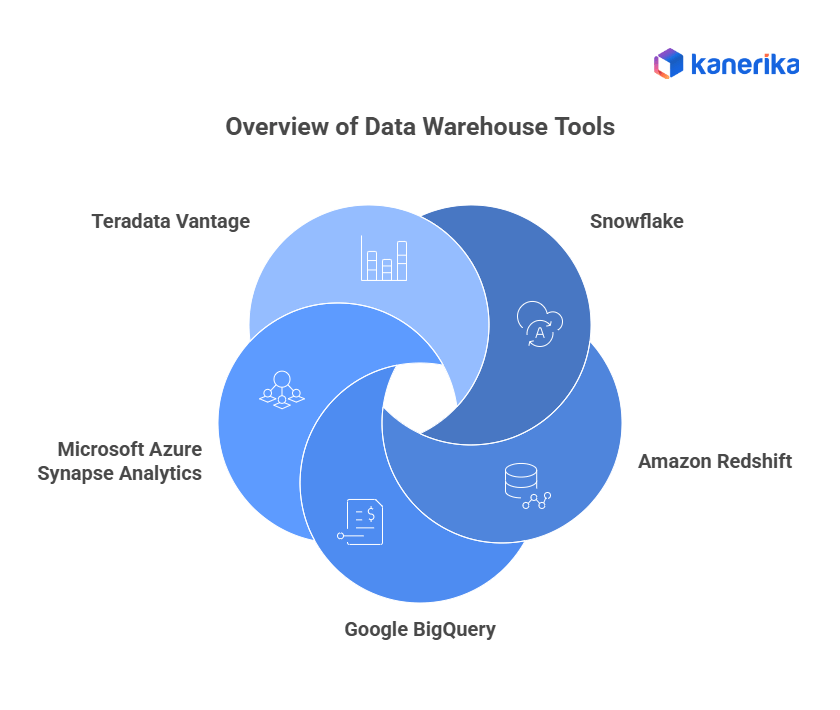

Data warehouse tools, by contrast, are optimized for storing structured data, high-speed querying, batch processing, and business intelligence reporting.

- Snowflake: Cloud-native warehouse for historical structured data with scalable compute and storage, supporting analytics at enterprise scale.

- Amazon Redshift: High-performance analytics and reporting for large datasets, integrated within AWS ecosystems.

- Google BigQuery: Serverless, cloud-based warehouse that auto-scales and enables advanced analytics, SQL queries, and machine learning integration.

- Microsoft Azure Synapse Analytics: Combines data storage, processing, and big data analytics, tightly integrated with Power BI and Azure ML.

- Teradata Vantage: Enterprise-grade warehouse offering advanced analytics, multi-cloud support, and optimized query performance.

In practice, many organizations combine data fabric for integration, governance, and real-time access with a data warehouse for structured reporting, historical analysis, and large-scale analytics. This hybrid setup allows businesses to gain flexibility, speed, and reliable insights from diverse data sources.


Data Fabric vs Data Warehouse: Which One Should You Choose?

Choosing the right system depends on business needs, data complexity, and operational goals. Data fabric is ideal when organizations have a variety of data types—structured, semi-structured, and unstructured—and require real-time integration, flexible access, governance, and AI readiness.

- Supports operational analytics, cross-platform reporting, and AI/ML applications.

- Provides centralized metadata, lineage, and monitoring for compliance.

- Ensures real-time data flow and accessibility across cloud and on-prem systems.

Data warehouses are best suited for structured data analytics, historical reporting, and consistent query performance.

- Optimized for business intelligence dashboards, regulatory compliance, and structured data storage.

- Supports large-scale batch processing and fast, reliable query response.

- Ideal for long-term historical analysis and enterprise reporting.

A hybrid approach often provides the best of both worlds:

- Use a data fabric for integrating distributed sources, real-time updates, and governance.

- Use a data warehouse for storing structured historical data, advanced analytics, and reporting.

Example: A retail enterprise might use Amazon Redshift as a centralized warehouse for sales reporting while using Informatica Data Fabric to connect inventory systems, customer databases, and IoT devices in real time—enabling both BI dashboards and AI-driven predictive analytics.

Data Fabric vs Data Warehouse: Kanerika’s Enterprise Approach

At Kanerika, we help enterprises choose the right data architecture based on their operational needs, data complexity, and long-term analytics goals. While traditional data warehouses are ideal for storing structured, historical data used in reporting and business intelligence, they often fall short in today’s hybrid, real-time environments. That’s where data fabric comes in—a modern architecture that connects, integrates, and governs data across cloud, on-prem, and edge systems without requiring data movement.

Kanerika is a Microsoft Solutions Partner for Data & AI and an early implementer of Microsoft Fabric, enabling us to deliver unified data platforms that combine the strengths of both architectures. For clients needing centralized, schema-based reporting, we implement robust data warehouse solutions. For those managing distributed, real-time, and unstructured data, we design intelligent data fabric layers that provide easy access, automated governance, and AI-ready infrastructure. Our solutions are built to scale, adapt, and support enterprise-wide data democratization.

All Kanerika implementations follow global compliance standards, including ISO 27001, ISO 27701, SOC 2, and GDPR. Whether you’re modernizing legacy systems or building a future-ready data foundation, Kanerika ensures your architecture is secure, compliant, and aligned with business outcomes. With our expertise in both traditional and modern data ecosystems, we help enterprises move from fragmented data to unified intelligence without compromise.

FAQs

Is Fabric a data warehouse?

Microsoft Fabric is not solely a data warehouse but an integrated analytics platform that includes data warehousing capabilities alongside other services. Fabric combines a Synapse-based data warehouse, lakehouse storage, data engineering, and business intelligence into a unified SaaS environment. This makes it more comprehensive than a traditional data warehouse, offering end-to-end analytics from ingestion to visualization. Organizations evaluating Microsoft Fabric for enterprise analytics benefit from understanding how its warehousing component fits their broader data architecture. Kanerika helps enterprises leverage Fabric’s full potential—connect with our specialists for a tailored implementation roadmap.

How is data fabric different from data warehouse?

Data fabric is an architectural approach that connects disparate data sources through metadata-driven integration, while a data warehouse is a centralized repository storing structured, historical data for analytics. The key difference lies in philosophy: data fabric provides virtualized access to data wherever it resides without mandatory replication, whereas data warehouses require physical data movement and transformation. Data fabric excels in distributed environments with diverse data types, while warehouses optimize query performance for structured reporting. Kanerika’s data integration experts help organizations determine the right architecture for their specific analytics requirements—schedule a consultation today.

Can a data fabric replace a data warehouse?

Data fabric cannot fully replace a data warehouse in most enterprise scenarios. While data fabric provides unified data access and integration across sources, data warehouses deliver optimized storage, fast query performance, and robust support for complex analytical workloads. Many organizations use data fabric as a complementary layer that orchestrates data across multiple systems, including warehouses. The choice depends on workload requirements—high-performance structured analytics still benefit from dedicated warehouse infrastructure. Kanerika architects hybrid data solutions that combine both approaches for maximum flexibility—reach out for a comprehensive architecture assessment.

Which is better for real-time analytics: data fabric or data warehouse?

Data fabric generally performs better for real-time analytics because it enables direct access to source systems without waiting for batch ETL processes. Traditional data warehouses rely on scheduled data loads, introducing latency between data generation and availability. However, modern cloud data warehouses now support streaming ingestion, narrowing this gap significantly. Data fabric shines when you need real-time insights across heterogeneous systems without consolidation delays. For sub-second query performance on historical data, warehouses remain superior. Kanerika designs real-time analytics architectures tailored to your latency requirements—let’s discuss your specific use case.
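The latency gap described here can be shown with a toy simulation. The `BatchLoadedCopy` and `DirectView` classes below are hypothetical stand-ins: the first only sees what existed at its last scheduled load, and the second reads the live source every time.

```python
class BatchLoadedCopy:
    """Warehouse-style copy: reflects the source only as of the last load."""

    def __init__(self, source):
        self._source = source
        self._snapshot = []

    def load(self):
        # A scheduled batch job copies the current source contents.
        self._snapshot = list(self._source)

    def count(self):
        return len(self._snapshot)


class DirectView:
    """Fabric-style view: reads the source system at query time."""

    def __init__(self, source):
        self._source = source

    def count(self):
        return len(self._source)


events = ["order-1", "order-2"]     # the live source system
warehouse_copy = BatchLoadedCopy(events)
fabric_view = DirectView(events)

warehouse_copy.load()               # scheduled batch load runs
events.append("order-3")            # a new event arrives after the load

stale = warehouse_copy.count()      # 2: still the last snapshot
fresh = fabric_view.count()         # 3: reads the source directly
warehouse_copy.load()               # the next batch load closes the gap
caught_up = warehouse_copy.count()  # 3
```

Streaming ingestion in modern cloud warehouses amounts to running that load step far more often, which is why the gap narrows.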

Do companies need both data fabric and data warehouse?

Many enterprises benefit from deploying both data fabric and data warehouse within their data ecosystem. Data warehouses handle structured, curated data for business intelligence and regulatory reporting, while data fabric provides the connective tissue linking warehouses with lakes, operational databases, and cloud applications. This combination delivers governed analytics alongside flexible, real-time data access. Organizations with complex, distributed data landscapes particularly gain from this hybrid approach. The investment depends on data diversity, compliance needs, and analytics maturity. Kanerika helps enterprises build integrated data platforms using both technologies—contact us for a strategy session.

What are the main use cases of data fabric vs data warehouse?

Data fabric excels at cross-system data discovery, real-time operational analytics, data virtualization, and enabling self-service access across distributed environments. Data warehouse use cases include enterprise business intelligence, financial reporting, historical trend analysis, and compliance-driven analytics requiring auditable, governed datasets. Data fabric suits organizations managing hybrid cloud environments and needing agile data access without physical consolidation. Data warehouses serve teams requiring consistent, high-performance queries on cleaned, transformed data. Understanding these distinctions helps align technology investments with business outcomes. Kanerika maps your analytics requirements to the optimal architecture—book a discovery workshop with our team.

What is a data fabric example?

A practical data fabric example is a healthcare organization connecting electronic health records, insurance claims databases, IoT medical devices, and research systems through a unified metadata layer. The data fabric enables clinicians to query patient information across all sources without moving data into a central repository, while enforcing HIPAA compliance through automated governance policies. IBM Cloud Pak for Data and Informatica Intelligent Data Management Cloud represent commercial data fabric implementations. These platforms use AI-driven metadata management and knowledge graphs to automate data discovery and lineage. Kanerika implements enterprise data fabric solutions across industries—explore how we can modernize your data architecture.
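A heavily simplified sketch of that idea follows: a small catalog registers datasets together with metadata about sensitive columns, then applies a masking policy when data is read. The `Dataset` and `Catalog` classes, the role names, and the masking rule are all assumptions for illustration. This is a toy policy, not a HIPAA compliance implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Dataset:
    name: str
    rows: list
    # Metadata: which columns the governance policy treats as sensitive.
    sensitive_columns: set = field(default_factory=set)


class Catalog:
    """Hypothetical metadata layer that fronts every registered source."""

    def __init__(self):
        self._datasets = {}

    def register(self, dataset):
        self._datasets[dataset.name] = dataset

    def read(self, name, role):
        dataset = self._datasets[name]
        if role == "clinician":
            # Clinicians see full records (copied so callers cannot mutate the source).
            return [dict(row) for row in dataset.rows]
        # Every other role gets sensitive columns masked.
        return [
            {
                key: ("***" if key in dataset.sensitive_columns else value)
                for key, value in row.items()
            }
            for row in dataset.rows
        ]


catalog = Catalog()
catalog.register(
    Dataset(
        name="ehr",
        rows=[{"patient_id": "p1", "diagnosis": "asthma"}],
        sensitive_columns={"diagnosis"},
    )
)
```

Because every read goes through the catalog, the policy is applied in one place regardless of which underlying system holds the data.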

Why is it called data fabric?

The term data fabric draws from the textile metaphor of interwoven threads creating a unified material. In data architecture, the fabric represents interconnected data services, metadata layers, and integration capabilities woven together to provide seamless access across distributed systems. Just as fabric threads remain distinct yet form a cohesive whole, data fabric keeps data in its source locations while creating a unified access layer. This metaphor emphasizes flexibility, interconnection, and the ability to stretch across diverse environments without breaking continuity. Kanerika weaves together your data sources into a cohesive enterprise fabric—let’s start your integration journey.

Is Fabric a lakehouse?

Microsoft Fabric incorporates lakehouse architecture as one of its core components. The Fabric lakehouse combines the flexibility of data lakes with the structure and performance of data warehouses, storing data in Delta Lake format on OneLake storage. This enables both SQL-based analytics and open data formats for machine learning workloads. Fabric’s lakehouse works alongside its dedicated warehouse service, giving users flexibility based on workload requirements. The lakehouse approach suits exploratory analytics and data science, while the warehouse optimizes structured reporting. Kanerika guides organizations in leveraging Fabric’s lakehouse capabilities effectively—connect with our Microsoft Fabric specialists today.

Is Fabric an ETL tool?

Microsoft Fabric includes ETL capabilities through Data Factory pipelines and dataflows, but it is not primarily an ETL tool. Fabric is a comprehensive analytics platform encompassing data integration, engineering, warehousing, science, and business intelligence. Its ETL functionality enables data extraction, transformation, and loading within the broader platform ecosystem. Data Factory within Fabric provides visual pipeline designers and hundreds of connectors for data movement. However, Fabric’s value extends far beyond ETL into unified analytics and governance. Kanerika implements end-to-end Fabric solutions including optimized ETL pipelines—reach out to accelerate your data platform modernization.

Is Fabric the same as Databricks?

Microsoft Fabric and Databricks are distinct platforms with different architectures and vendor ecosystems. Databricks pioneered the lakehouse concept on Apache Spark, offering an open, multi-cloud platform for data engineering and machine learning. Fabric is Microsoft’s integrated SaaS analytics suite, combining Power BI, Data Factory, Synapse, and new capabilities under unified governance. Databricks emphasizes open-source standards and multi-cloud flexibility, while Fabric prioritizes deep Microsoft 365 integration and simplified licensing. Both support lakehouse workloads but target different organizational preferences. Kanerika implements both Databricks and Fabric solutions—consult our experts to determine the best fit for your environment.

Is Fabric replacing ADF?

Microsoft Fabric incorporates Azure Data Factory capabilities rather than eliminating ADF entirely. Data Factory pipelines and dataflows exist within Fabric as the primary data integration and orchestration tools. Standalone Azure Data Factory remains available for organizations not ready to adopt Fabric’s unified platform. Microsoft positions Fabric as the evolution of its analytics services, with ADF functionality deeply embedded. Existing ADF investments can migrate into Fabric with minimal rework. The transition timeline depends on organizational readiness and licensing considerations. Kanerika streamlines ADF to Fabric migrations with minimal disruption—contact us for a migration assessment and transition plan.

Is Fabric a PaaS or SaaS?

Microsoft Fabric operates as a SaaS platform, distinguishing it from PaaS offerings like Azure Synapse Analytics. As SaaS, Fabric handles infrastructure management, scaling, and maintenance automatically, letting users focus on analytics rather than operations. Licensing follows a capacity-based model where organizations purchase compute units consumed across all Fabric workloads. This differs from PaaS platforms requiring resource provisioning and configuration decisions. The SaaS model simplifies adoption but offers less infrastructure customization than PaaS alternatives. Understanding this distinction affects architecture planning and cost modeling. Kanerika helps organizations optimize Fabric capacity planning and cost management—schedule a Fabric readiness consultation.

Is ETL a part of data warehousing?

ETL is a foundational component of data warehousing, responsible for extracting data from source systems, transforming it to match warehouse schemas, and loading it into the target repository. Without ETL pipelines, data warehouses cannot receive the cleansed, integrated data they need to support analytics. Modern data warehousing also embraces ELT approaches, where transformations occur within the warehouse using its compute resources. Both methodologies serve the same purpose of preparing data for analytical consumption. Robust ETL processes directly impact data warehouse quality and performance. Kanerika builds optimized ETL and ELT pipelines for enterprise data warehouses—explore our data integration services.
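The difference between ETL and ELT is easiest to see side by side. The sketch below uses Python's built-in `sqlite3` module as a stand-in warehouse. The `raw_rows` sample data and the `etl`, `elt`, and `totals` functions are hypothetical names for this example.

```python
import sqlite3

# Messy source rows: inconsistent casing, stray spaces, amounts stored as text.
raw_rows = [("  Alice ", "100"), ("BOB", "250"), ("alice", "50")]


def etl():
    """ETL: transform in application code, then load the cleaned rows."""
    wh = sqlite3.connect(":memory:")
    wh.execute("CREATE TABLE sales (customer TEXT, amount INTEGER)")
    cleaned = [(name.strip().lower(), int(amount)) for name, amount in raw_rows]
    wh.executemany("INSERT INTO sales VALUES (?, ?)", cleaned)
    return wh


def elt():
    """ELT: load raw rows as-is, then transform with the warehouse's SQL engine."""
    wh = sqlite3.connect(":memory:")
    wh.execute("CREATE TABLE raw_sales (customer TEXT, amount TEXT)")
    wh.executemany("INSERT INTO raw_sales VALUES (?, ?)", raw_rows)
    wh.execute(
        "CREATE TABLE sales AS "
        "SELECT lower(trim(customer)) AS customer, "
        "CAST(amount AS INTEGER) AS amount FROM raw_sales"
    )
    return wh


def totals(wh):
    """Report total sales per customer from the cleaned table."""
    return wh.execute(
        "SELECT customer, SUM(amount) FROM sales "
        "GROUP BY customer ORDER BY customer"
    ).fetchall()
```

Both paths end with the same cleaned `sales` table. ELT additionally keeps the raw data in the warehouse, which lets teams re-run or change transformations later without going back to the source.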

What are the 5 types of data warehouse architecture?

The five primary data warehouse architecture types include single-tier architecture minimizing storage through direct querying, two-tier architecture separating sources from the warehouse, and three-tier architecture adding a middle OLAP layer. Hub-and-spoke architecture centralizes data in an enterprise warehouse feeding departmental data marts. Cloud-native architecture leverages elastic compute and storage services from providers like Snowflake or Databricks. Each architecture suits different organizational scales, query patterns, and governance requirements. Selecting the right architecture impacts performance, cost, and scalability significantly. Kanerika architects data warehouse solutions matched to your enterprise requirements—request a data architecture assessment.

Is data warehousing dead?

Data warehousing is far from dead and continues evolving with cloud-native platforms like Snowflake, BigQuery, and Microsoft Fabric. Modern data warehouses offer elastic scaling, separation of storage and compute, and support for semi-structured data that legacy systems lacked. While data lakes and lakehouses expanded architectural options, warehouses remain essential for governed, high-performance business intelligence. The market continues growing as organizations prioritize reliable analytics over experimental approaches. What has changed is how warehouses integrate with broader data ecosystems including data fabric architectures. Kanerika modernizes legacy warehouses to cloud-native platforms—discover how we can transform your analytics infrastructure.

What are the 4 pillars of data mesh?

The four pillars of data mesh are domain-oriented decentralized ownership, data as a product, self-serve data infrastructure platform, and federated computational governance. Domain ownership assigns accountability to business units closest to the data. Treating data as a product emphasizes quality, discoverability, and user experience. Self-serve infrastructure empowers domains to publish and consume data without central team bottlenecks. Federated governance balances autonomy with enterprise-wide standards for interoperability and compliance. Data mesh principles often complement data fabric technology implementations. Understanding mesh concepts helps organizations design scalable data architectures. Kanerika guides enterprises through data mesh adoption aligned with their organizational structure—connect with our data strategy team.

Is data mesh obsolete?

Data mesh is not obsolete, though industry enthusiasm has matured from initial hype. Organizations continue adopting data mesh principles selectively, focusing on domain ownership and data-as-product concepts that improve accountability and quality. Full data mesh implementations require significant organizational change, leading many enterprises to adopt hybrid approaches combining mesh governance with centralized infrastructure. Data fabric technology often supports mesh architectures by providing the connective platform for federated domains. The approach suits large, complex organizations with mature data cultures. Kanerika helps enterprises implement practical data mesh strategies adapted to their organizational readiness—schedule a consultation to evaluate your options.