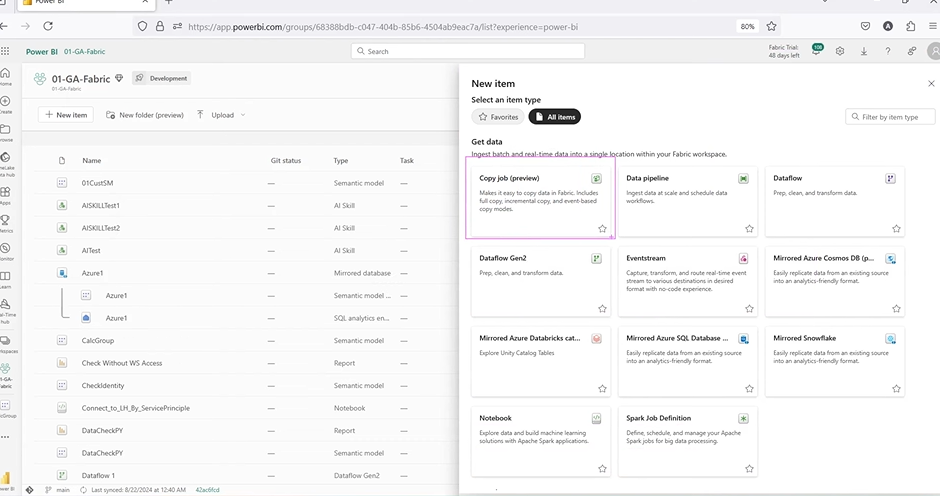

In March 2024, Microsoft quietly rolled out a preview feature in Fabric that didn’t make huge headlines, but probably should have. It’s called Copy Job, and it’s designed to tackle something nearly every data team struggles with: moving data smoothly and reliably from one place to another.

Data engineers and analysts deal with this daily (pulling from Azure SQL, syncing across systems, managing refreshes), usually with scheduled pipelines, manual scripts, and crossed fingers. Even with tools like Data Factory, adding a new table or switching to incremental loading often means redoing big chunks of the setup.

This is where Microsoft Fabric’s Copy Job steps in. It offers a much simpler way to move data, with built-in support for both batch and incremental loads, and without the usual setup overhead. It’s meant to reduce manual work, speed things up, and let you focus on what really matters — the data itself.

In this blog, you’ll get a complete walkthrough of how Copy Job works, what makes it different, and how to set it up from start to finish.

What is the Microsoft Fabric Copy Job?

The Microsoft Fabric Copy Job is a new feature built into the Data Factory experience inside Microsoft Fabric. It simplifies how data is moved between sources and destinations — whether you’re working with SQL databases, OneLake, or other supported systems.

Instead of setting up complex pipelines or writing custom scripts, Copy Job lets you quickly create repeatable, reliable data transfers with just a few clicks.

It supports:

- Full (batch) copy – load the entire dataset, replacing previous data.

- Incremental copy – bring in only new data since the last run, without touching existing rows.

- Column-level mapping – choose and adjust how source and destination columns match up.

- Data type transformation – change column types (e.g., decimal to double) during setup.

- Multi-table support – copy multiple tables in one job, each with its own mapping and settings.

- Manual or scheduled runs – trigger jobs on demand or set them to run automatically on a defined schedule.

- Monitoring and metrics – view rows read/written, job duration, and data throughput per table.

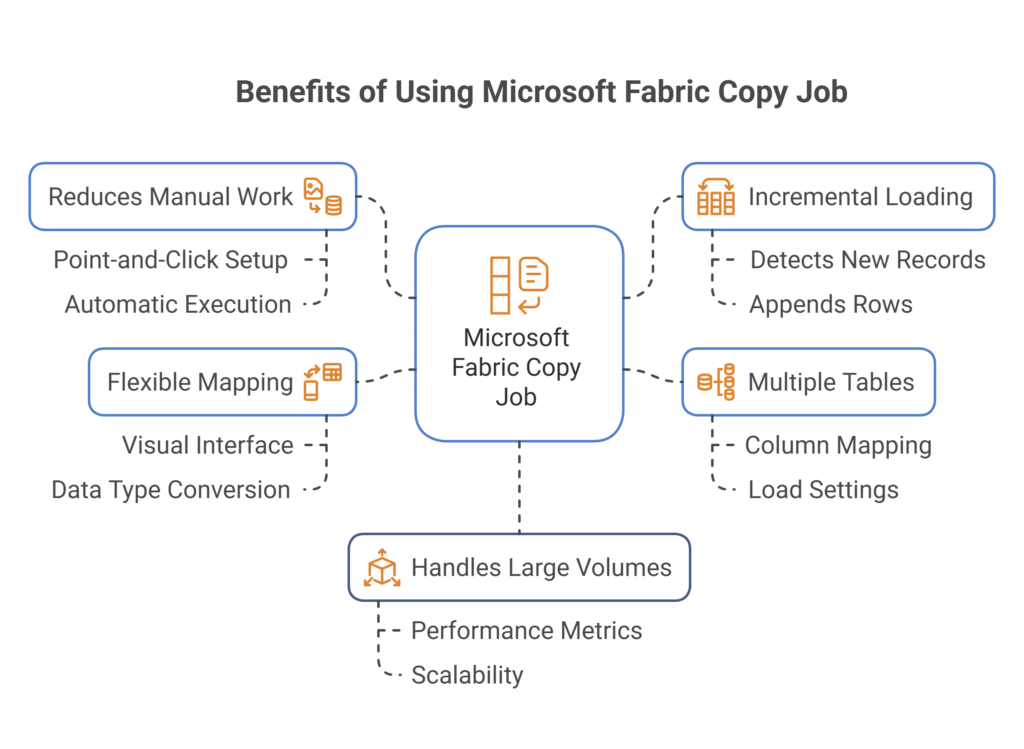

Benefits of Using Microsoft Fabric Copy Job

1. Cuts Down Manual Work

Most data movement processes require a mix of pipeline design, scripting, and error handling — all of which take time and introduce room for mistakes. Copy Job simplifies this by giving you a point-and-click setup that handles common tasks like detecting schema, mapping columns, and capturing new records. Once set up, it runs automatically or on demand, with very little hands-on work needed.

2. Built-In Incremental Loading

One of the most useful features is support for incremental copy. Instead of pulling all records every time (which wastes time and resources), Copy Job can detect what’s new based on a specific column — like a date or an ID — and just append those rows. It’s perfect for keeping large datasets up to date without reloading everything from scratch.

3. Supports Multiple Tables in One Job

You’re not limited to one table at a time. With Copy Job, you can bring in several tables from your source system, each with its own column mapping and load settings. This is useful for syncing multiple related datasets — such as sales, customers, and products — without building separate pipelines for each one.

4. Flexible Column Mapping and Data Types

Copy Job includes a visual mapping interface where you can match columns between source and destination. You can also modify data types if needed — for example, convert a column from decimal to double. This helps avoid compatibility issues later on and gives you control over how data is shaped during the transfer.

5. Designed for Large Volumes

Whether you’re moving thousands of records or millions, Copy Job is built to handle it. It’s optimized for speed and stability, and since it’s backed by Microsoft Fabric’s infrastructure, it can scale to handle heavy loads. You also get visibility into performance metrics like job duration, rows read/written, and throughput, helping you monitor and fine-tune jobs as needed.

Steps to Set Up a Copy Job in Microsoft Fabric

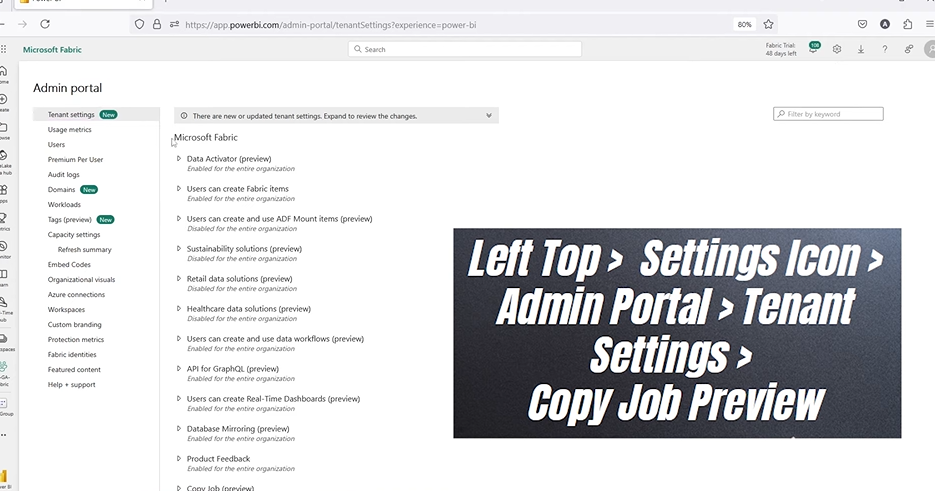

Step 1: Enable Copy Job in Tenant Settings

Before you can create or even view a Copy Job in Microsoft Fabric, you need to enable the feature at the tenant level. This setting controls whether Copy Job is available in your environment, and by default, it may be turned off — especially in enterprise or production environments where features in preview are restricted.

This step is important because if you skip it, you won’t see the Copy Job option in the UI at all. You might also run into errors like “Microsoft Fabric not enabled” or “Copy Job not available,” even if you have Fabric capacity assigned.

Here’s how to enable it:

- Open Microsoft Fabric (Power BI Portal)

Go to app.powerbi.com and sign in with your admin account. This is the same portal used for Power BI and Microsoft Fabric.

- Access the Admin Portal

Click the gear icon in the top-right corner. From the dropdown, choose Admin portal.

If you don’t see this option, your account might not have admin permissions.

- Go to Tenant Settings

Inside the Admin portal, scroll down the sidebar to find Tenant settings. This section controls access to various features across your organization.

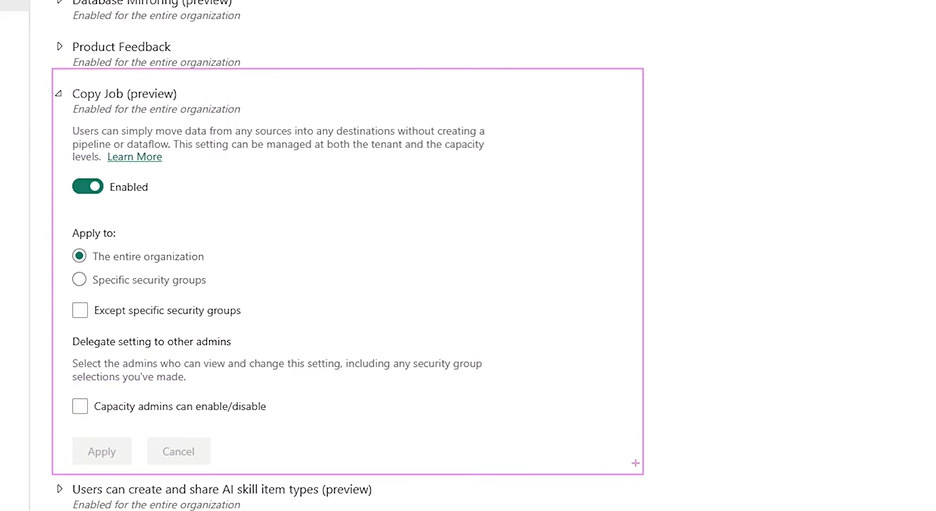

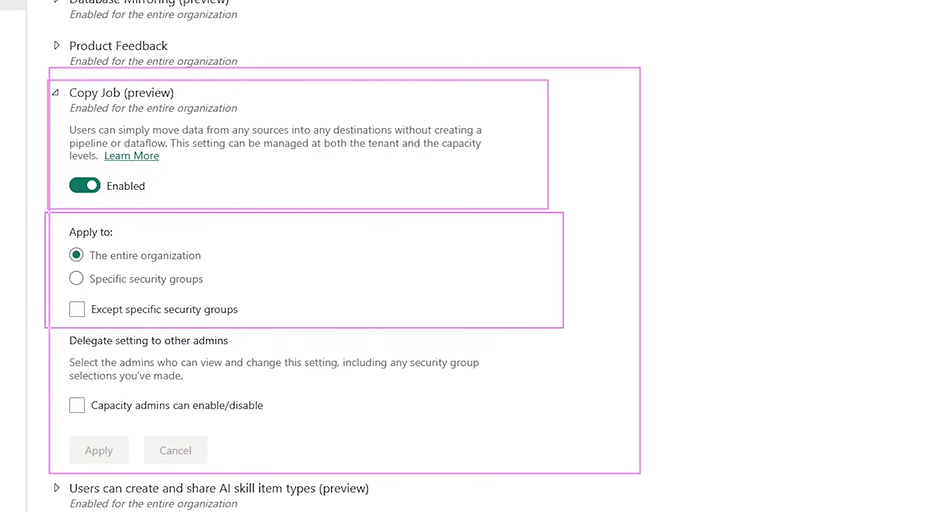

- Enable Copy Job (Preview)

Scroll within Tenant settings to find the feature called Copy Job (Preview). Toggle it on.

You’ll get three visibility options:

- Entire organization: Everyone in the tenant can use Copy Job.

- Specific security groups: Limit access to certain teams or users.

- Except specific groups: Enable for everyone except selected users.

Choose what fits your org’s policy. In most cases, enabling it for selected security groups is a safe approach when testing preview features.

- Save the Changes

After selecting your preferred access level, click Apply to save the setting. It may take a few minutes to propagate across your environment.

Step 2: Prepare Your Source Tables

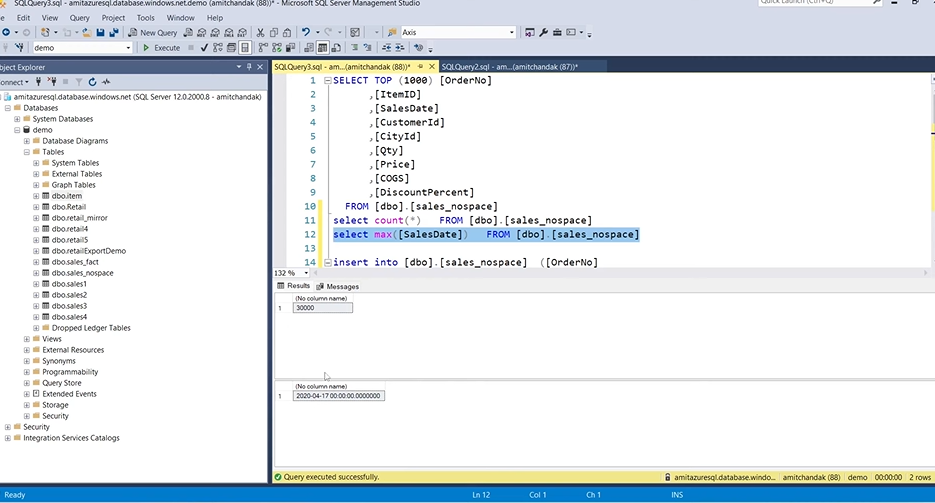

After enabling Copy Job, the next step is to select your source data. In this example, two tables from an Azure SQL Database were used:

- item – a smaller table with 59 rows

- sales – a larger one with 30,000 rows

You can use any supported source, but Azure SQL is commonly used and works well for testing incremental loads.

How to Connect:

- Choose Azure SQL Database as your source type.

- Enter the connection string and authentication details (basic, service principal, or organizational account).

- Once connected, Fabric will auto-load your database schema.

From the list of available tables, select the ones you want to bring into Fabric. You can preview them before selecting.

Step 3: Set the Destination and Map Columns

Once you’ve chosen your source tables, the next step is to define where the data should go inside Microsoft Fabric. The destination is typically a Lakehouse or a Warehouse within your workspace, both of which are built on OneLake — Microsoft Fabric’s unified data storage layer.

Choosing Your Destination

When setting up the Copy Job:

- You’ll be prompted to select a destination from your available Lakehouses or Warehouses.

- Pick the target environment where you want your data to land.

- If needed, create a new Lakehouse directly from the interface.

Each source table you selected will now be paired with a matching destination. Fabric assigns default names, but you can rename the destination tables to something more meaningful. For example:

- Source table item → Destination table dbo.item_CJ

- Source table sales → Destination table dbo.sales_CJ

This helps keep track of which tables are part of your Copy Job and avoids confusion with existing tables.

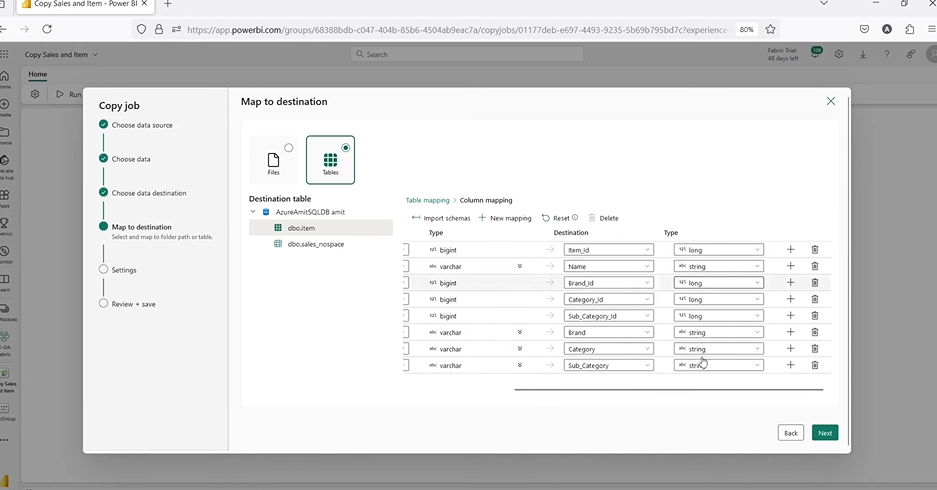

Column Mapping

After setting the destination, you’ll see an option to edit column mappings. This part lets you define how source columns align with destination columns — and it’s available for each table individually.

You can:

- Preview the columns on both sides to ensure they match.

- Change column names in the destination if needed.

- Adjust data types, such as converting a decimal to double or changing a bigint to int (only safe when every value fits the narrower type).

- Skip columns that don’t need to be copied.

This flexibility is useful when:

- Source and destination schemas don’t match exactly.

- You want to standardize data types in Lakehouse.

- You’re combining tables from different systems.

If no changes are needed, you can keep the default mappings and move on. But it’s good practice to review the mappings, especially if your source system has inconsistent naming or legacy column types.
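To make the mapping options concrete, here is a minimal Python sketch of what a per-table mapping does conceptually: rename a column, convert a type, and skip a column. It is not how Copy Job is implemented. The `COLUMN_MAPPING` structure, the `apply_mapping` helper, and the column names other than `item_id` are hypothetical and exist only for illustration.

```python
from decimal import Decimal

# Hypothetical mapping spec: source column -> (destination column, converter).
# Source columns that are not listed are skipped.
COLUMN_MAPPING = {
    "item_id": ("item_id", int),
    "item_name": ("name", str),            # renamed in the destination
    "unit_price": ("unit_price", float),   # decimal -> double
}


def apply_mapping(row: dict, mapping: dict) -> dict:
    """Build a destination row from `row` according to `mapping`."""
    mapped = {}
    for source_col, (dest_col, convert) in mapping.items():
        value = row[source_col]
        # Keep NULLs as NULLs instead of passing None to the converter.
        mapped[dest_col] = None if value is None else convert(value)
    return mapped


source_row = {
    "item_id": 59,
    "item_name": "Widget",
    "unit_price": Decimal("19.99"),
    "legacy_code": "X-1",  # not in the mapping, so it is dropped
}

print(apply_mapping(source_row, COLUMN_MAPPING))
```

Reviewing the mapping one column at a time like this is also a quick way to spot conversions that could lose precision, such as decimal to double.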

Step 4: Choose Between Full and Incremental Copy

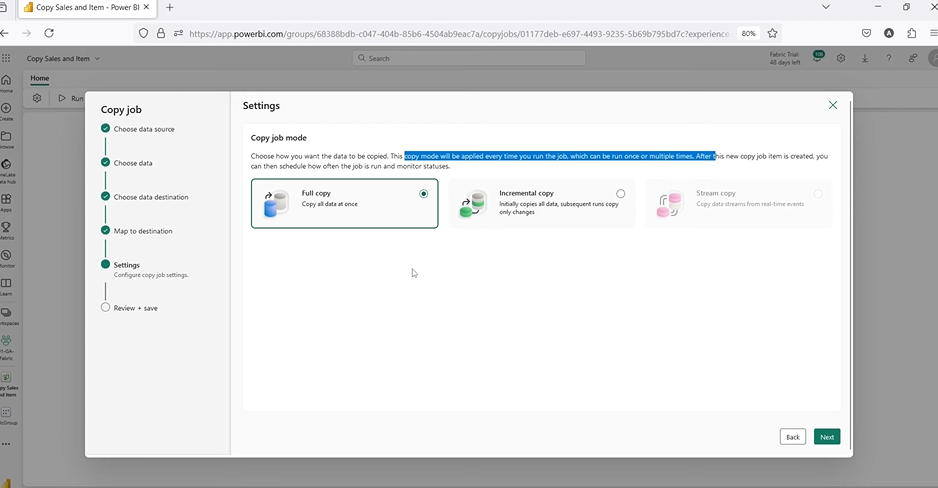

After setting up your source and destination, the next decision is how you want the data to be copied. Microsoft Fabric Copy Job gives you two clear options:

- Full Copy

- Incremental Copy

Each approach has its own use case, and choosing the right one depends on how your source data changes over time and how you want it reflected in your destination.

Full Copy

A full copy means the job will remove the existing data in the destination table and reload everything from the source each time it runs.

When to use:

- The source data changes frequently and in unpredictable ways.

- You don’t have a reliable column to track incremental changes.

- You want a complete refresh on every run (e.g., for testing or one-time migrations).

Key points:

- It’s simple and safe when your tables are small.

- But for larger datasets, it can be resource-heavy and slower, since it reprocesses all rows even if only a few changed.

- Every run wipes and replaces the table in the destination.

This mode is best for small, static datasets or when precision and consistency matter more than performance.
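The wipe-and-reload behavior described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it uses in-memory SQLite databases as stand-ins for the Azure SQL source and the Lakehouse destination, the `full_copy` helper is hypothetical, and the `name` column is invented for the example.

```python
import sqlite3


def full_copy(source: sqlite3.Connection, dest: sqlite3.Connection) -> int:
    """Delete everything in dest.item_CJ and reload every row from source.item."""
    rows = source.execute("SELECT item_id, name FROM item").fetchall()
    with dest:  # the delete and the reload commit together as one transaction
        dest.execute("DELETE FROM item_CJ")
        dest.executemany("INSERT INTO item_CJ (item_id, name) VALUES (?, ?)", rows)
    return len(rows)


# Stand-in source table with 59 rows, matching the example in this walkthrough.
source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE item (item_id INTEGER PRIMARY KEY, name TEXT)")
source.executemany("INSERT INTO item VALUES (?, ?)", [(i, f"item-{i}") for i in range(1, 60)])

dest = sqlite3.connect(":memory:")
dest.execute("CREATE TABLE item_CJ (item_id INTEGER PRIMARY KEY, name TEXT)")

full_copy(source, dest)
full_copy(source, dest)  # a second run replaces the rows rather than duplicating them
print(dest.execute("SELECT COUNT(*) FROM item_CJ").fetchone()[0])
```

Because every run starts from an empty table, the row count stays the same no matter how many times the job runs. The cost is that all rows are reprocessed each time.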

Incremental Copy

An incremental copy only brings in the new records since the last run. It’s faster, more efficient, and ideal for tables where data is added regularly — like logs, sales transactions, or order histories.

To use incremental copy, you need a column that can identify new records, usually a date, timestamp, or auto-incremented ID. This column is known as the incremental column.

Example:

- For the item table, you could use item_id if each new item has a higher ID than the last.

- For the sales table, sales_date works well if new transactions are dated later than previous ones.
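The idea behind an incremental column can be sketched as a simple "high-water mark" filter. The Python below is a conceptual illustration, not Copy Job's actual implementation: the `incremental_copy` helper, the in-memory lists, and the `sale_id` column are assumptions made for the example.

```python
from datetime import date


def incremental_copy(source_rows, destination, column, last_watermark):
    """Append rows whose `column` value exceeds `last_watermark`.

    Returns the watermark to remember for the next run.
    """
    new_rows = [
        row for row in source_rows
        if last_watermark is None or row[column] > last_watermark
    ]
    destination.extend(new_rows)
    if not new_rows:
        return last_watermark  # nothing new, keep the previous watermark
    return max(row[column] for row in new_rows)


sales = [
    {"sale_id": 1, "sales_date": date(2024, 4, 16)},
    {"sale_id": 2, "sales_date": date(2024, 4, 17)},
]
sales_cj = []

# First run: no watermark yet, so both rows are loaded.
watermark = incremental_copy(sales, sales_cj, "sales_date", None)

# A new transaction arrives with a later date.
sales.append({"sale_id": 3, "sales_date": date(2024, 4, 18)})

# Second run: only the row dated after the stored watermark is appended.
watermark = incremental_copy(sales, sales_cj, "sales_date", watermark)

print(len(sales_cj), watermark)
```

One design point the sketch makes visible: with a strict "greater than" comparison on a date-only column, a row that arrives later with the same date as the stored watermark would be missed. A timestamp or an ever-increasing ID avoids that gap.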

Choosing the Right Option

| Use Case | Recommended Copy Type |
| --- | --- |
| One-time load | Full Copy |
| Daily or scheduled updates | Incremental Copy |
| Source data changes unpredictably | Full Copy |
| Source is append-only (e.g., logs) | Incremental Copy |
| No good incremental column | Full Copy |

Step 5: Running the Job and Scheduling Options

Once your Copy Job is fully configured — with source, destination, mappings, and copy mode — you’re ready to run it. This is where you decide how often and when the data transfer should happen.

By default, Microsoft Fabric schedules the Copy Job to run every 15 minutes. While that sounds convenient, it may not always be ideal — especially if you’re using pay-per-query sources like serverless SQL pools, where each scheduled run could rack up unexpected costs.

Two Ways to Run the Job

1. Manual Run

- Best suited for testing, one-time loads, or infrequent updates.

- You trigger the job manually by clicking the Run button.

- Helpful when you’re still fine-tuning mappings or testing incremental logic.

- You stay in control — no automatic refresh, no background activity.

2. Scheduled Run

- Ideal for production workloads where data needs to be kept up to date.

- Once enabled, the job runs automatically at regular intervals.

- You can customize the frequency based on your needs.

Custom Scheduling Options

When configuring the schedule, you can set:

- Frequency: Every X minutes, hourly, daily, weekly

- Start and End Dates: Define when the schedule becomes active

- Start Time: Choose the exact time of day to begin

- Time Zone: Align with your regional or business operating hours

Things to Watch Out For

1. Hidden Costs with Frequent Runs

If your source system charges per query — like serverless SQL or APIs — each run could trigger extra costs. This is especially important when the job runs every 15 minutes, regardless of whether new data is present or not.

2. Overloading the System

Frequent jobs on large tables can add unnecessary load to both source and destination systems. If the data only changes hourly, there’s no need to run it every 5 minutes. Balance refresh needs with system performance.

3. Unintended Overwrites (if Full Copy is used)

If you’re using Full Copy and have it running every few minutes, be cautious. It will delete and reload the data each time — which may slow down performance, create table locks, or impact reporting layers using the destination tables.
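A quick bit of arithmetic shows why the interval matters. The helper below is a hypothetical illustration that simply counts how many runs a fixed interval produces in a day. It says nothing about actual pricing, which depends on your source system.

```python
def runs_per_day(interval_minutes: int) -> int:
    """Number of scheduled runs in 24 hours for a fixed interval."""
    return (24 * 60) // interval_minutes


for interval in (5, 15, 60):
    print(f"every {interval} min -> {runs_per_day(interval)} runs/day")
```

At the 15-minute interval that works out to 96 runs a day, versus 24 for an hourly schedule. If each run triggers a billable query or a full reload, that difference adds up quickly.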

Step 6: Check the Output in the Lakehouse

Once the Copy Job has run, it’s important to verify that the data actually landed in the destination and that it matches what you expected. Microsoft Fabric lets you do this easily using Lakehouse and the SQL endpoint built into the platform.

Whether you’re doing a full copy or incremental load, this step is key to validating:

- The data structure (schema)

- Row counts

- Load completeness

- Whether new records were appended successfully

Where to Check the Output

The destination tables you configured during setup (e.g., item_CJ, sales_CJ) are stored inside your selected Lakehouse.

To verify the load:

1. Go to the Workspace

Navigate to the workspace where the Copy Job was created. This is the same workspace you selected earlier when defining your destination.

2. Open the Lakehouse

Inside the workspace, look for the Lakehouse object (e.g., Lake01) that you set as the target for your data.

You’ll see the list of available tables in the Lakehouse, including the ones loaded by your Copy Job. They’ll appear with the names you gave them — like item_CJ and sales_CJ.

3. Use the SQL Endpoint

Microsoft Fabric provides a built-in SQL endpoint for every Lakehouse. This allows you to run SQL queries directly against the data in your Lakehouse tables.

Click the three dots (⋮) next to your Lakehouse and select Open SQL Endpoint. This opens a query editor where you can run standard SQL commands to inspect your data.

What to Query

Start with something simple, like checking the row count. This tells you whether the expected number of records was copied over.

Run a count query against each destination table, for example `SELECT COUNT(*) FROM dbo.item_CJ;` and `SELECT COUNT(*) FROM dbo.sales_CJ;`.

Each query returns the total number of rows in that table. Compare this to the row count in your source database.

Example:

Let’s say:

- The original item table in Azure SQL had 59 rows

- The sales table had 30,000 rows

After the Copy Job runs:

- dbo.item_CJ should have 59 rows

- dbo.sales_CJ should have 30,000 rows

If you run the job again with incremental mode after inserting one new row into each table:

- item_CJ should now show 60 rows

- sales_CJ should show 30,001 rows

This is how you confirm the incremental load is working correctly.
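If you want to automate this comparison, the check can be sketched as follows. This is a minimal example under stated assumptions: in-memory SQLite databases stand in for the Azure SQL source and the Lakehouse SQL endpoint, and the `row_count` and `counts_match` helpers are hypothetical names, not part of Fabric.

```python
import sqlite3


def row_count(conn: sqlite3.Connection, table: str) -> int:
    # Table names come from a fixed list in this sketch, never from user input.
    return conn.execute(f"SELECT COUNT(*) FROM {table}").fetchone()[0]


def counts_match(source, dest, table_pairs) -> dict:
    """Map each (source_table, dest_table) pair to True when row counts agree."""
    return {
        (src, dst): row_count(source, src) == row_count(dest, dst)
        for src, dst in table_pairs
    }


source = sqlite3.connect(":memory:")
dest = sqlite3.connect(":memory:")
source.execute("CREATE TABLE item (item_id INTEGER)")
source.execute("CREATE TABLE sales (sale_id INTEGER)")
dest.execute("CREATE TABLE item_CJ (item_id INTEGER)")
dest.execute("CREATE TABLE sales_CJ (sale_id INTEGER)")

# Load 59 item rows and 30,000 sales rows on both sides, as in the example above.
for conn, item_table, sales_table in ((source, "item", "sales"), (dest, "item_CJ", "sales_CJ")):
    conn.executemany(f"INSERT INTO {item_table} VALUES (?)", [(i,) for i in range(59)])
    conn.executemany(f"INSERT INTO {sales_table} VALUES (?)", [(i,) for i in range(30_000)])

pairs = [("item", "item_CJ"), ("sales", "sales_CJ")]
print(counts_match(source, dest, pairs))

# A new source row that has not been copied yet shows up as a mismatch.
source.execute("INSERT INTO item VALUES (59)")
print(counts_match(source, dest, pairs))
```

Matching counts are a useful first signal, but they do not prove the contents are identical. For important tables, spot-check a few rows as well.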

Step 7: Test Incremental Copy by Adding Data

After setting up and running a Copy Job with incremental copy mode, you’ll want to verify that it works the way you expect — pulling in only new records, without touching or overwriting existing ones.

This step is critical for two reasons:

- It confirms that your incremental column is working correctly.

- It shows you exactly what Copy Job does (and doesn’t do) during repeat runs.

Let’s go through how to test this in a clear, practical way.

How to Test Incremental Load

1. Add a New Row in the Source Table

Go back to your source database (e.g., Azure SQL), and manually insert a new row into the same table you’ve already loaded with Copy Job.

The new row must include a higher value in the incremental column you selected earlier (e.g., a later date or a larger ID).

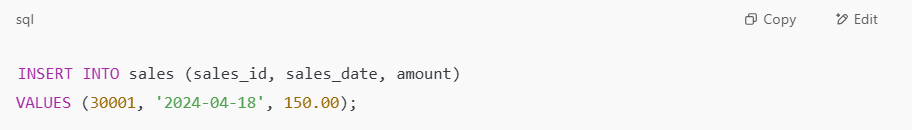

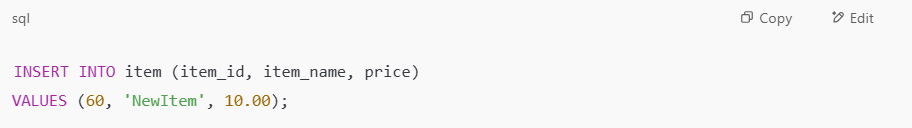

Example for sales table: If your incremental column is sales_date and the last entry was 2024-04-17, insert a new row with 2024-04-18.

Example for item table: If the incremental column is item_id, and your last item had ID 59, insert a row with ID 60.

2. Run the Copy Job Again

Once your new data is in the source table, return to Microsoft Fabric and manually re-run the Copy Job.

Because you’re using incremental mode, the Copy Job will now scan the incremental column for new values and pull in only rows that meet that criterion.

What Should Happen

After the second run completes:

- New rows will be appended to the destination table in the Lakehouse.

- Previously loaded rows will remain unchanged.

- Updated rows in the source will be ignored.

- Deleted rows in the source will not be removed from the destination.

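You can confirm the behavior by:

- Running a simple count check, such as `SELECT COUNT(*) FROM dbo.item_CJ;`

- Querying for the new row, such as `SELECT * FROM dbo.item_CJ WHERE item_id = 60;`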

Note (2025 Update): The behavior described above applies to basic watermark-based incremental copy. If you enable CDC (Change Data Capture) on your source, Copy Job will automatically capture inserts, updates, and deletes — keeping your destination fully synced without manual intervention.
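To see why a plain watermark-based load appends new rows but misses updates and deletes, consider this small simulation. It is an illustrative sketch only: SQLite stands in for both the source and the destination, the `incremental_copy` helper is hypothetical, and the `name` column and sample values are invented for the example.

```python
import sqlite3


def incremental_copy(source, dest, last_id):
    """Append source rows with item_id > last_id; return the new high-water mark."""
    rows = source.execute(
        "SELECT item_id, name FROM item WHERE item_id > ? ORDER BY item_id",
        (last_id,),
    ).fetchall()
    with dest:
        dest.executemany("INSERT INTO item_CJ (item_id, name) VALUES (?, ?)", rows)
    return rows[-1][0] if rows else last_id


source = sqlite3.connect(":memory:")
dest = sqlite3.connect(":memory:")
source.execute("CREATE TABLE item (item_id INTEGER PRIMARY KEY, name TEXT)")
dest.execute("CREATE TABLE item_CJ (item_id INTEGER PRIMARY KEY, name TEXT)")
source.executemany("INSERT INTO item VALUES (?, ?)", [(1, "bolt"), (2, "nut"), (3, "washer")])

watermark = incremental_copy(source, dest, 0)  # initial load: 3 rows

source.execute("INSERT INTO item VALUES (4, 'screw')")                # new row
source.execute("UPDATE item SET name = 'hex nut' WHERE item_id = 2")  # update
source.execute("DELETE FROM item WHERE item_id = 1")                  # delete

watermark = incremental_copy(source, dest, watermark)  # second run

print(dest.execute("SELECT item_id, name FROM item_CJ ORDER BY item_id").fetchall())
```

After the second run, the destination gains row 4, still shows the old name for row 2, and still contains row 1. Only rows above the stored watermark are ever read, so changes to older rows are invisible to this kind of load.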

Step 8: Add More Tables to the Same Copy Job

You’re not limited to just the tables you started with. One of the useful features of Microsoft Fabric Copy Job is that you can edit an existing job to include more tables later — without having to rebuild or duplicate anything.

How to add more tables:

- Open the existing Copy Job.

- Click Choose Data Source in the job settings.

- Select one or more new tables (e.g., sales_one).

- Configure column mapping as needed — just like you did for the earlier tables.

- If you’re using incremental copy, set the incremental column for each new table.

- Save the job and run it again.

This is especially helpful in projects that grow over time. You might start with two or three tables and later need to add others from the same source. Instead of maintaining multiple jobs, you can manage them all under one job, keeping things organized.

Step 9: Reviewing Job Statistics and Logs

Once a Copy Job runs, Microsoft Fabric gives you a detailed breakdown of what happened during that run. This is important for monitoring, debugging, and understanding performance — especially as your data grows or job complexity increases.

What’s included:

- Rows read and written for each table

- Time taken to read from source and write to destination

- Total duration of the job

- Throughput in kilobytes per second (KB/s)

- Status (e.g., succeeded, failed, in progress)

You can also:

- Filter the view by status or table name — useful when managing large jobs with many tables.

- Use the “More info” tab for additional job-level stats and historical performance data.

These logs make it easy to track down issues (like zero rows written or schema mismatches) and optimize jobs based on run time or throughput.
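If you record these numbers outside Fabric, a small helper can turn them into quick health checks. The sketch below is hypothetical: the `TableRunStats` record, its field names, and the sample values are assumptions for illustration and do not reflect Fabric's actual log schema or API.

```python
from dataclasses import dataclass


@dataclass
class TableRunStats:
    # Hypothetical record shape; field names are illustrative only.
    table: str
    rows_read: int
    rows_written: int
    bytes_written: int
    duration_seconds: float

    @property
    def throughput_kb_per_s(self) -> float:
        """Throughput in kilobytes per second (1 KB = 1024 bytes)."""
        return self.bytes_written / 1024 / self.duration_seconds


def needs_attention(stats: TableRunStats) -> bool:
    """Flag runs that wrote nothing or wrote fewer rows than they read."""
    return stats.rows_written == 0 or stats.rows_written < stats.rows_read


runs = [
    TableRunStats("sales_CJ", 30_000, 30_000, 2_048_000, 4.0),
    TableRunStats("item_CJ", 59, 0, 0, 1.0),
]
for run in runs:
    status = "CHECK" if needs_attention(run) else "ok"
    print(run.table, f"{run.throughput_kb_per_s:.1f} KB/s", status)
```

Keep in mind that zero rows written is not always a failure. An incremental run with no new source data will legitimately write nothing, so treat the flag as a prompt to look, not as an error.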

Enhance Your Analytics with Kanerika’s Microsoft Fabric Expertise

Implementing Microsoft Fabric the right way — especially with features like Copy Job, incremental loading, and seamless data movement into OneLake — can make a big difference in how teams automate pipelines, reduce manual work, and keep data fresh across systems. At Kanerika, we help organizations do exactly that.

As a certified Microsoft partner with deep expertise in data and AI, Kanerika works closely with businesses to integrate Fabric into real-world workflows. From setting up multi-capacity environments to designing shortcut-driven models that avoid duplication, we build practical, scalable solutions tailored to your goals.

Our hands-on experience across industries means we don’t just recommend best practices—we implement them fast. Whether you’re modernizing reporting, consolidating data across teams, or building for scale, we ensure your Fabric environment is built to deliver results from day one.

Partner with Kanerika and take the next step toward faster insights, cleaner architecture, and smarter decisions.

FAQ

What is a Copy Job in Microsoft Fabric?

A Copy Job in Microsoft Fabric is a dedicated data movement workload that transfers data between supported sources and destinations within the Fabric ecosystem. Unlike pipeline-based copy activities, Copy Jobs provide a streamlined interface for bulk data transfers, supporting both full and incremental loads. They simplify data ingestion into OneLake, Lakehouses, and Warehouses without requiring complex orchestration logic. Copy Jobs are ideal for scheduled, repeatable data replication scenarios across cloud and on-premises environments. Kanerika’s Microsoft Fabric experts help enterprises configure Copy Jobs for optimal performance and reliability—connect with us for a consultation.

How do you use copy activity in Microsoft Fabric?

Copy activity in Microsoft Fabric executes within Data Factory pipelines to move data between sources and sinks. You configure it by selecting source and destination connections, mapping columns, and defining transfer behavior such as full or incremental copy. The activity supports over 100 connectors including Azure SQL, Snowflake, and REST APIs. You can enable parallel copy and staging for large datasets to optimize throughput. Copy activity integrates seamlessly with Fabric’s unified analytics platform for end-to-end data workflows. Kanerika builds production-ready Fabric pipelines with optimized copy activities—reach out to accelerate your data integration.

What are Microsoft Fabric jobs?

Microsoft Fabric jobs are discrete workloads that execute specific data operations within the Fabric platform. These include Copy Jobs for data movement, Spark jobs for transformation, and pipeline jobs for orchestration. Each job type serves a distinct purpose in the modern data lifecycle, from ingestion to analytics. Jobs can be scheduled, triggered on-demand, or chained together in workflows. They integrate natively with OneLake, ensuring unified governance across all data assets. Fabric jobs eliminate the need for multiple disconnected tools in your analytics stack. Kanerika helps enterprises architect Fabric job strategies—contact us for a tailored implementation plan.

How do I enable the Copy Job feature in Microsoft Fabric?

Enable Copy Job in Microsoft Fabric through the Admin portal: under Tenant settings, turn the feature on for your tenant. Then navigate to the Data Factory experience within Fabric, select New, and choose Copy Job from the available options. Your Fabric capacity must be active with appropriate permissions assigned to your account. If Copy Job is in preview for your tenant, a tenant administrator may need to enable preview features first. Once enabled, you can configure sources, destinations, and scheduling directly from the interface. Kanerika’s Fabric specialists streamline feature enablement and configuration, so let us handle your setup.

What types of data transfers does the Copy Job support?

Copy Job in Microsoft Fabric supports full load transfers that replicate entire datasets and incremental transfers that capture only changed records. It handles structured data from databases like SQL Server and Oracle, semi-structured formats including JSON and Parquet, and cloud storage sources such as Azure Blob and Amazon S3. The job supports cross-cloud data movement and on-premises to cloud transfers using data gateways. Both batch and scheduled transfer patterns are available for flexible data ingestion strategies. Kanerika designs data transfer architectures using Fabric Copy Jobs for enterprise-scale requirements—schedule a discovery call today.

How does the incremental copy feature work?

Incremental copy in Microsoft Fabric transfers only records added since the last execution, reducing processing time and compute costs. With CDC enabled on the source, it also captures updates and deletes. The feature uses watermark columns such as timestamps or sequential IDs to track changes between runs. You configure the watermark (incremental) column during Copy Job setup, and Fabric automatically maintains state across executions. This approach is essential for near-real-time data synchronization and large-scale data pipelines where full loads are impractical. Incremental copy minimizes network bandwidth usage while keeping destination data current. Kanerika implements incremental copy strategies optimized for your data volume. Talk to our Fabric team.

Can I copy multiple tables in one job?

Yes, Copy Job in Microsoft Fabric supports multi-table copying within a single job configuration. You can select multiple tables from your source connection and map them to corresponding destinations in one operation. This capability streamlines bulk data migration scenarios and reduces administrative overhead compared to creating separate jobs for each table. The job processes tables sequentially or in parallel depending on configuration and capacity constraints. Schema mappings can be applied individually or inherited from source structures. Kanerika accelerates multi-table migrations in Fabric with proven methodologies—contact us for a migration assessment.

How do I set column mappings in a Copy Job?

Column mappings in a Microsoft Fabric Copy Job are configured during the job setup under the mapping section. You can auto-map columns by matching source and destination names or manually define mappings for columns with different names or data types. The interface allows type conversions, column exclusions, and default value assignments for handling schema mismatches. Proper column mapping ensures data integrity during transfers between heterogeneous systems. You can also apply transformations using expressions for basic data manipulation within the mapping layer. Kanerika’s data integration experts configure precise column mappings for complex migration scenarios—get in touch for support.

How do you copy data in a Fabric pipeline?

Copying data in a Fabric pipeline involves adding a copy activity to your pipeline canvas within Data Factory. You configure source and sink connections, define column mappings, and set copy behaviors like parallelism and fault tolerance. The pipeline orchestrates the copy activity alongside other activities such as data flows, notebooks, and stored procedures. You can parameterize connections for dynamic data movement across environments. Pipelines support triggers for scheduled, event-based, or manual execution. Copy activities within pipelines integrate with Fabric monitoring for full observability. Kanerika builds scalable Fabric pipelines for enterprise data movement—connect with our team today.

What is Microsoft Fabric used for?

Microsoft Fabric is an end-to-end analytics platform that unifies data integration, engineering, warehousing, science, and business intelligence in one environment. It consolidates tools like Data Factory, Synapse, and Power BI under a single SaaS offering with OneLake as the central data lake. Organizations use Fabric to eliminate data silos, reduce infrastructure complexity, and accelerate time-to-insight. The platform supports real-time analytics, machine learning workloads, and governed data sharing across teams. Fabric’s consumption-based pricing simplifies cost management for analytics initiatives. Kanerika delivers complete Microsoft Fabric implementations for data-driven enterprises—reach out for a platform assessment.

Is Fabric replacing ADF?

Microsoft Fabric incorporates Azure Data Factory capabilities rather than replacing ADF entirely. Data Factory in Fabric provides enhanced pipeline and copy activity features native to the Fabric ecosystem, while standalone ADF remains available for Azure-centric workloads. Organizations can continue using ADF independently or migrate pipelines to Fabric for unified analytics. Fabric’s Data Factory experience offers tighter integration with OneLake, Power BI, and other Fabric workloads. Microsoft positions Fabric as the evolution of its analytics services without deprecating existing Azure tools. Kanerika guides enterprises through ADF to Fabric transitions—contact us for a migration strategy.

How do you fast copy in Fabric?

Fast copy in Microsoft Fabric leverages optimized data transfer mechanisms including parallel copy, staging through blob storage, and intelligent partitioning. Enable these settings within copy activity or Copy Job configuration to maximize throughput for large datasets. Using Parquet or Delta formats as destinations improves write performance compared to row-based formats. Allocating sufficient Fabric capacity units ensures compute resources match data volumes. Avoiding complex transformations during copy and deferring them to downstream processing also accelerates transfers. Network proximity between sources and Fabric regions further reduces latency. Kanerika optimizes Fabric copy performance for enterprise-scale data—talk to our specialists.

What is copy activity?

Copy activity is a data movement component in Azure Data Factory and Microsoft Fabric that transfers data between source and destination datastores. It supports over 100 connectors spanning cloud services, databases, file systems, and SaaS applications. Copy activity handles schema mapping, data type conversion, and error handling during transfers. You can configure parallelism, fault tolerance thresholds, and staging options for performance tuning. It serves as the foundation for data ingestion pipelines, feeding warehouses, lakehouses, and analytical workloads. Copy activity operates within pipeline orchestration for scheduled or triggered execution. Kanerika implements robust copy activity configurations for reliable data pipelines—schedule a consultation.

How to copy a pipeline in Fabric?

Copying a pipeline in Microsoft Fabric involves using the duplicate or clone function within the Data Factory workspace. Right-click the pipeline in the workspace explorer and select Clone to create an identical copy with a new name. You can also export pipeline JSON definitions and import them into another workspace for cross-environment replication. This approach accelerates development when building similar data workflows or promoting pipelines across development, test, and production environments. Cloned pipelines retain all activities, parameters, and connections from the original. Kanerika establishes DevOps practices for Fabric pipeline management—reach out for CI/CD implementation support.

Is Fabric the same as Databricks?

Microsoft Fabric and Databricks are distinct platforms with different architectures and value propositions. Fabric is Microsoft’s unified SaaS analytics platform combining Data Factory, Synapse, and Power BI under one roof with OneLake storage. Databricks is a lakehouse platform built on Apache Spark, offering advanced data engineering and machine learning capabilities. Fabric provides tighter Microsoft 365 and Azure integration, while Databricks offers multi-cloud flexibility. Both support Delta Lake format and can complement each other in hybrid architectures. Choice depends on existing investments, skill sets, and workload requirements. Kanerika implements both Fabric and Databricks solutions—contact us to evaluate the right fit.

What is the difference between copy activity and data flow in ADF?

Copy activity in ADF performs direct data movement between sources and destinations with minimal transformation capabilities like column mapping and type conversion. Data flows provide a visual, code-free environment for complex transformations including joins, aggregations, pivots, and derived columns during data movement. Copy activity offers higher throughput for simple transfers, while data flows execute on Spark clusters for transformation-heavy workloads. Use copy activity for ELT scenarios where transformation occurs downstream, and data flows when inline transformation is required. Both integrate within ADF pipelines for orchestrated execution. Kanerika architects ADF solutions balancing copy activity and data flow usage. Talk to us today.

Is Fabric going to replace Azure?

Microsoft Fabric is not replacing Azure but operates as a SaaS analytics service built on Azure infrastructure. Fabric consolidates multiple Azure analytics services into a unified platform while Azure continues providing foundational cloud compute, storage, and networking. Organizations can use Fabric alongside other Azure services like Azure SQL, Azure ML, and Azure Functions. Fabric simplifies analytics workloads without requiring deep Azure resource management, making it accessible to broader teams. Azure remains the comprehensive cloud platform, while Fabric focuses specifically on end-to-end analytics capabilities. Kanerika helps enterprises integrate Fabric within their Azure ecosystems—reach out for architectural guidance.

What is copy behavior in ADF?

Copy behavior in Azure Data Factory controls how data writes to destination when files or records already exist. The three primary behaviors are PreserveHierarchy, which maintains source folder structure; FlattenHierarchy, which collapses all files into a single destination folder; and MergeFiles, which combines source files into one destination file. For database destinations, behaviors include Insert, Upsert, and StoredProcedure modes that determine how records are written or updated. Configuring appropriate copy behavior prevents data loss and ensures idempotent pipeline execution. These settings apply equally to copy activities in Microsoft Fabric’s Data Factory. Kanerika configures copy behaviors for reliable, repeatable data pipelines—contact us for expert assistance.