Most AI projects don’t fail at the model level. They fail quietly in production. A 2024 Gartner survey of 400 CIOs found that only 26% are satisfied with their AI investment returns, and 77% struggle to show business value. That gap is usually an observability problem. This guide covers the top 10 AI observability tools for 2025, the metrics to track, and Kanerika’s TRACE framework for choosing the right tool for your stack.

Partner with Kanerika to Modernize Your Enterprise Operations with High-Impact Data & AI Solutions

Key Takeaways

- AI observability tools go beyond monitoring by helping teams understand why a model produced a specific output.

- Selecting the right tool category matters since AI observability tools vary across tracing, monitoring, and governance platforms.

- Multi-agent workflows remain difficult to trace, as only a few tools support distributed observability for complex agent systems.

- RAG systems need stage-level visibility, covering retrieval, ranking, and generation rather than only the final output.

- The AI observability market is growing rapidly, expected to expand from $833M in 2024 to $3.69B by 2029.

- Teams should start with lightweight tools and scale observability platforms as AI systems become more complex.

What AI Observability Actually Means

AI observability gives teams the ability to ask questions about system behavior after the fact, trace root causes, and understand why a model responded a certain way in a specific context. It’s not monitoring with a fancier name.

Generative AI adds another layer of complexity beyond classical machine learning observability. Outputs are non-deterministic, models are sensitive to prompt changes, and multi-step agent behavior means failure modes aren’t predictable upfront. You can’t pre-define a threshold for ‘reasoning went wrong.’ Decision support systems built on LLMs inherit all of this complexity, which is why the observability layer matters so much for trust.

Traditional monitoring asks ‘Is it running?’ and responds to threshold alerts with binary up/down outputs – useful for infrastructure SRE teams. ML monitoring tracks performance degradation through statistical drift alerts, generating score-based outputs that serve MLOps teams. AI and LLM observability goes further: it asks ‘Why did it respond that way?’ using root cause trace analysis across traces, spans, prompt context, and token usage – the layer AI engineering and compliance teams actually need.

The distinction matters most as teams move from single LLM calls to multi-step custom AI agents. One agent that spawns three sub-agents doesn’t just multiply complexity. It multiplies failure modes.

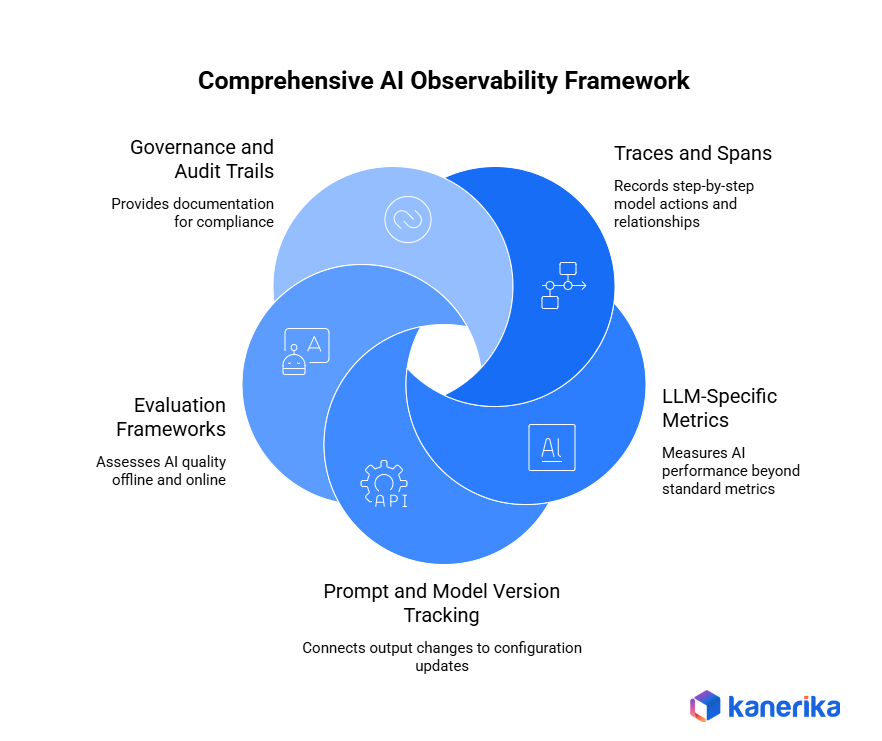

Key Components of AI Observability

Five components make AI observability work in practice. Most tools cover some of these. Only enterprise platforms cover all five.

1. Traces and Spans:

Step-by-step records of what a model or agent did, in what order, with what inputs. For multi-agent workflows, spans capture parent-child relationships between agents, so teams can see exactly which sub-agent produced a bad output and why. OpenTelemetry’s semantic conventions for generative AI aim to standardize how these traces look across tools.
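Here is a minimal Python sketch of the parent-child idea. It is a toy tracer built on the standard library’s `contextvars`, not the API of Langfuse, LangSmith, or OpenTelemetry. The `span` helper, the span names, and the failing writer sub-agent are all hypothetical. Each span records its parent’s ID, so a failure can be located in the agent tree instead of a flat log.

```python
import contextvars
import itertools
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Optional

# Toy tracer for illustration only; not the API of any observability product.
_current_span = contextvars.ContextVar("current_span", default=None)
_next_id = itertools.count(1)
SPANS = []  # finished spans, in completion order


@dataclass
class Span:
    name: str
    span_id: int
    parent_id: Optional[int]
    attributes: dict = field(default_factory=dict)
    status: str = "ok"
    duration_s: float = 0.0


@contextmanager
def span(name, **attributes):
    """Open a span whose parent is whatever span is currently active."""
    parent = _current_span.get()
    s = Span(name, next(_next_id), parent.span_id if parent else None, attributes)
    token = _current_span.set(s)
    start = time.perf_counter()
    try:
        yield s
    except Exception as exc:
        s.status = f"error: {exc}"  # record the failure on this span, then re-raise
        raise
    finally:
        s.duration_s = time.perf_counter() - start
        _current_span.reset(token)
        SPANS.append(s)


# Hypothetical workflow: an orchestrator delegates to two sub-agents.
with span("orchestrator", task="summarize filing"):
    with span("sub_agent.retriever"):
        pass
    try:
        with span("sub_agent.writer", model="model-x"):
            raise ValueError("empty context")
    except ValueError:
        pass  # the orchestrator recovers, but the child span keeps the error

names = {s.span_id: s.name for s in SPANS}
for s in SPANS:
    if s.status != "ok":
        print(f"{s.name} (parent: {names[s.parent_id]}) -> {s.status}")
```

Because the error is stored on the child span, the orchestrator can recover and still leave a trace pointing at `sub_agent.writer` as the source. Real tracers add sampling, export, and cross-process context propagation on top of this idea.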

2. LLM-Specific Metrics:

Standard infrastructure metrics like latency and uptime matter, but they aren’t enough on their own. You also need token usage per request, cost per call by model version, hallucination rate, faithfulness and relevance scores, and prompt injection detection. These signals don’t exist in traditional APM tooling. Text analytics capabilities embedded in observability platforms help surface semantic quality signals that raw logs miss entirely.
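Cost per call is simple arithmetic once token counts are captured. The sketch below assumes a hypothetical price table (`PRICES_PER_MTOK`, in USD per million tokens, with made-up model names) and prices input and output tokens separately, since providers commonly charge different rates for each.

```python
from collections import defaultdict

# Hypothetical USD prices per million tokens. Real prices differ by provider,
# model version, and date, so load them from your own configuration.
PRICES_PER_MTOK = {
    "model-a-v1": {"input": 3.00, "output": 15.00},
    "model-b-v2": {"input": 0.50, "output": 1.50},
}


def call_cost_usd(model, input_tokens, output_tokens):
    """Cost of one call, with input and output tokens priced separately."""
    price = PRICES_PER_MTOK[model]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000


def cost_by_model(calls):
    """Sum cost per model version from (model, input_tokens, output_tokens) tuples."""
    totals = defaultdict(float)
    for model, tokens_in, tokens_out in calls:
        totals[model] += call_cost_usd(model, tokens_in, tokens_out)
    return dict(totals)


calls = [("model-a-v1", 1_200, 300), ("model-b-v2", 1_200, 300), ("model-b-v2", 800, 200)]
print(cost_by_model(calls))
```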

3. Prompt and Model Version Tracking:

A model that worked last week may behave differently today if the prompt changed, the underlying model was updated, or the retrieval context shifted. Versioned prompt tracking connects output changes to specific configuration changes. That’s essential for any quality management system applied to LLM outputs.
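One lightweight way to tie output changes to configuration changes is to derive a version ID from everything that shapes the output and attach it to each trace. This is a sketch under that assumption, not how any specific tool versions prompts; `config_version` and the example template, model name, and retrieval settings are hypothetical.

```python
import hashlib
import json


def config_version(prompt_template, model, retrieval):
    """Short, stable ID for the full configuration that produced an output."""
    payload = json.dumps(
        {"prompt": prompt_template, "model": model, "retrieval": retrieval},
        sort_keys=True,  # key order must not change the ID
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


template = "Answer using only this context:\n{context}\n\nQuestion: {question}"
v1 = config_version(template, "model-a-2024-06", {"index": "policies", "top_k": 5})
v2 = config_version(template, "model-a-2024-06", {"index": "policies", "top_k": 8})
print(v1, v2)  # different IDs: only the retrieval setting changed
```

Grouping evaluation scores by this ID makes a regression traceable to the exact prompt, model, or retrieval change that introduced it.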

4. Evaluation Frameworks:

Offline and online evaluation: comparing outputs against reference answers, running RAG Triad evaluations, or applying LLM-as-judge scoring to sampled production traffic. Evaluation tells teams whether quality is drifting before user complaints do. Named entity recognition and text analytics pipelines often feed evaluation frameworks with structured output signals.
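As a minimal illustration of offline evaluation against reference answers, the sketch below scores outputs with token-overlap F1, a cheap lexical metric common in extractive QA benchmarks. It is a stand-in: LLM-as-judge scoring would replace `token_f1` with a call to a judge model. The evaluation pairs are hypothetical.

```python
import re
from collections import Counter
from statistics import mean


def _tokens(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def token_f1(prediction, reference):
    """Token-overlap F1 between a model output and a reference answer."""
    pred, ref = _tokens(prediction), _tokens(reference)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision, recall = overlap / len(pred), overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


# Hypothetical reference set: (model output, reference answer)
eval_set = [
    ("The refund window is 30 days", "Refunds are accepted within 30 days"),
    ("Orders ship in 2 business days", "Orders ship in 2 business days"),
]
scores = [token_f1(out, ref) for out, ref in eval_set]
print(round(mean(scores), 3))
```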

5. Governance and Audit Trails:

In regulated industries, observability has to produce documentation in a format that compliance teams can actually use: inputs, outputs, decision logic, model version, and data provenance. This is the layer most engineering-focused tools skip. It aligns directly with AI TRiSM, the framework Gartner uses to describe AI Trust, Risk, and Security Management as an enterprise discipline.
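A sketch of what an audit-grade record might contain, assuming a simple append-only list. The field names mirror the items above. The hash chaining (each record stores its predecessor’s SHA-256) is one common way to make after-the-fact edits detectable, not a requirement of AI TRiSM or any regulation. The application ID, labels, file path, and model name are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

REQUIRED_FIELDS = ("inputs", "outputs", "decision_logic", "model_version", "data_provenance")


def _digest(record):
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


def append_audit(log, **fields):
    """Append a record that names its predecessor's hash, so edits break the chain."""
    missing = [f for f in REQUIRED_FIELDS if f not in fields]
    if missing:
        raise ValueError(f"audit record missing: {missing}")
    record = {
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        "prev_sha256": log[-1]["sha256"] if log else None,
        **fields,
    }
    record["sha256"] = _digest(record)
    log.append(record)
    return record


def verify_chain(log):
    """Recompute every hash and check each record points at its predecessor."""
    prev = None
    for record in log:
        body = {k: v for k, v in record.items() if k != "sha256"}
        if record["prev_sha256"] != prev or _digest(body) != record["sha256"]:
            return False
        prev = record["sha256"]
    return True


audit_log = []
append_audit(
    audit_log,
    inputs={"application_id": "A-1001"},
    outputs={"label": "needs_review"},
    decision_logic="classifier score 0.41 below auto-approve cutoff 0.60",
    model_version="doc-classifier-2024-09",
    data_provenance=["applications/A-1001.pdf"],
)
print(verify_chain(audit_log))  # True
```

In production the list would be an append-only store that compliance teams already trust, and the chain check becomes part of the audit procedure.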

Key Metrics Every AI Observability Stack Should Track

Before picking a tool, teams need to be clear on what they’re measuring. Model performance monitoring covers more than latency.

Latency per LLM call (P50, P95, P99) drives user experience and SLA compliance – best tracked in Helicone, Langfuse, and LangSmith. Cost per request and per token matters for budget control and model version comparison, where Helicone, Arize AI, and W&B Weave lead. Hallucination rate measures output trustworthiness and compliance risk; Galileo, TruLens, and Arize AI handle it best.
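Percentiles matter because a few slow calls dominate averages. The sketch below uses the nearest-rank definition of a percentile on illustrative latencies; monitoring tools may interpolate differently, so exact values can differ slightly between products.

```python
import math
from statistics import mean


def percentile(samples, p):
    """Nearest-rank percentile: smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


latencies_ms = [120, 135, 150, 160, 180, 210, 240, 300, 900, 2400]  # illustrative data
summary = {f"P{p}": percentile(latencies_ms, p) for p in (50, 95, 99)}
print(summary, "mean:", mean(latencies_ms))
```

Here P50 is 180 ms while the mean is 479.5 ms, pulled up by two slow calls. With only ten samples, P95 and P99 both collapse to the maximum, which is why tail percentiles need a reasonable sample size per time window.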

Retrieval relevance for RAG pipelines measures whether retrieved context actually answers the right question – TruLens and Arize Phoenix are purpose-built for this. Prompt drift tracks input distribution changes that affect output quality, which Evidently AI covers well. Token utilization efficiency catches cost waste from oversized prompts – Helicone and LangSmith have the clearest visibility here.
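Prompt drift detection compares the current input distribution with a baseline. One common statistic for this is the Population Stability Index (PSI), sketched below on prompt lengths in tokens. The choice of PSI, the bin edges, and the data are assumptions for illustration; Evidently AI and similar tools offer several drift tests, and length is only a crude proxy for content drift.

```python
import bisect
import math


def psi(baseline, current, edges):
    """Population Stability Index between two samples, binned on shared edges."""
    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[bisect.bisect_right(edges, v)] += 1
        # Floor empty bins so log() stays defined.
        return [max(c / len(values), 1e-6) for c in counts]

    b, c = shares(baseline), shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


# Illustrative prompt lengths in tokens: last month vs. this week.
baseline = [200, 230, 260, 300, 350, 380, 420, 500, 650, 700]
current = [240, 300, 420, 450, 520, 580, 640, 800, 900, 1000]
edges = [250, 400, 600]
print(round(psi(baseline, current, edges), 2))
```

These two samples give a PSI of about 0.76. A frequently quoted rule of thumb treats values above 0.25 as a significant shift, though thresholds should be tuned per workload.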

Agent tool call success rate tells you whether multi-agent workflows complete their intended steps – LangSmith, Langfuse, and Arize AI all support this. Model faithfulness and groundedness – whether outputs stay anchored in retrieved context – is best measured in TruLens and Galileo. Bias and fairness scores matter for regulatory compliance and ethical AI, where Fiddler AI and Arize AI have the deepest coverage.
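Tool call success rate is a ratio over events extracted from agent traces. This sketch assumes a hypothetical event shape with `tool` and `ok` keys. Breaking the rate down per tool shows which step of the workflow fails, which a single overall number hides.

```python
from collections import defaultdict


def tool_success_rates(events):
    """Per-tool success rate from tool-call events shaped like {'tool': str, 'ok': bool}."""
    totals, successes = defaultdict(int), defaultdict(int)
    for event in events:
        totals[event["tool"]] += 1
        successes[event["tool"]] += int(event["ok"])
    return {tool: successes[tool] / totals[tool] for tool in totals}


# Hypothetical events pulled from agent traces
events = [
    {"tool": "search_kb", "ok": True},
    {"tool": "search_kb", "ok": True},
    {"tool": "search_kb", "ok": False},
    {"tool": "crm_lookup", "ok": False},
    {"tool": "crm_lookup", "ok": True},
]
rates = tool_success_rates(events)
overall = sum(e["ok"] for e in events) / len(events)
print(rates, overall)
```

The overall rate of 0.6 looks tolerable, but the per-tool view shows `crm_lookup` failing half the time.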

The same rigor that drives Microsoft licensing optimization applies directly to AI API spend. At scale, cost per token is a boardroom-level metric. Data streaming infrastructure plays a direct role here, too: real-time metrics only work if telemetry flows from model endpoints to dashboards with minimal delay.

Three Tool Categories That Matter Before Tool Names

Most teams pick a tool name before picking a category. That’s where misalignment starts.

Category 1:

LLM Tracing and Debugging Platforms. Trace individual LLM calls, prompt chains, and agent steps at the code level. Built for development and QA teams. Tools: Langfuse, LangSmith, Helicone, Traceloop.

Category 2:

Production AI Monitoring and Drift Detection Platforms. Continuous monitoring of model performance, data drift, bias, and output quality at scale. Built for MLOps teams managing live deployments. Tools: Evidently AI, W&B Weave, TruLens.

Category 3:

Enterprise AI Governance and Observability Suites. Full-stack visibility across models, agents, pipelines, and compliance reporting. Built for enterprises with regulatory requirements or multi-model deployments. Tools: Arize AI, Fiddler AI, Galileo. These tools align with AI TRiSM governance frameworks and Gartner’s Magic Quadrant criteria for AI governance platforms.

Open Source vs. Commercial: How to Decide

Go open-source (Langfuse, TruLens, Evidently AI, Traceloop) when: the team is small or the deployment is early-stage; data sovereignty requirements mean SaaS routing isn’t acceptable; the team has engineering capacity to self-host and maintain; or budget is tight and instrumentation is the priority, not analytics.

Go commercial (Arize AI, Fiddler, Galileo, LangSmith) when: active compliance or regulatory requirements need audit-grade logging; multi-model or multi-agent deployments need unified visibility; the team lacks capacity to maintain infrastructure; SLA guarantees and vendor support are required for production systems; or hybrid cloud architectures need observability that spans multiple environments without data routing constraints.

The hybrid approach works well too: open-source tracing (Langfuse or Helicone) for developer-facing visibility, plus a commercial governance layer (Fiddler or Arize) for compliance and audit. For teams currently modernizing data and RPA platforms, AI observability is often the first new discipline added alongside existing monitoring stacks. The open-source route reduces adoption friction at that stage.

Top 10 AI Observability Tools for Enterprise Teams

| Tool | Category | Best For | Open Source? | Pricing Model |
|---|---|---|---|---|
| Langfuse | Tracing | LLM tracing, self-hosted deployments | Yes | Free + Cloud tiers |
| LangSmith | Tracing | LangChain-native apps | No | Usage-based |
| Arize AI | Governance Suite | Enterprise ML + LLM unified observability | Partially (Phoenix is open source) | Enterprise pricing |
| Fiddler AI | Governance Suite | Regulated industries needing explainability | No | Enterprise pricing |
| W&B Weave | Monitoring | ML-to-LLM lifecycle teams | Partially | Free + Pro tiers |
| Evidently AI | Monitoring | Drift detection, batch evaluation | Yes | Free + Cloud |
| Helicone | Tracing | API cost tracking, fast setup | Yes | Free + usage-based |
| Traceloop | Tracing | OTel-native infrastructure teams | Yes | Open source |
| TruLens | Monitoring | RAG pipeline evaluation | Yes | Open source |
| Galileo | Governance Suite | Hallucination detection at scale | No | Enterprise pricing |

That overview gives you the landscape at a glance. But a tool list alone doesn’t answer the most useful question: which capabilities does each tool actually cover, and where are the gaps?

Feature Coverage Matrix

| Tool | LLM Call Tracing | Multi-Agent Tracing | RAG Evaluation | Cost Tracking | Hallucination Detection | Bias / Fairness | Audit-Grade Logging |
|---|---|---|---|---|---|---|---|
| Langfuse | Yes | Yes | Partial | Basic only | No | No | Via export only |
| LangSmith | Yes | Yes (LangGraph only) | Partial | Basic only | No | No | Via export only |
| Arize AI | Yes | Yes | Yes (via Phoenix) | Yes | Yes | Yes | Yes |
| Fiddler AI | Yes | Partial | Partial | Basic only | Yes | Yes | Yes |
| W&B Weave | Yes | Partial | Partial | Yes | Via eval pipeline | No | Via export only |
| Evidently AI | Output-level only | No | Partial | No | Via eval pipeline | Yes (drift-based) | Via export only |
| Helicone | Yes | No | No | Yes | No | No | Basic logs only |
| Traceloop | Yes | Via OTel spans | No | No | No | No | Via OTel backend |
| TruLens | Eval-level only | No | Yes | No | Yes (faithfulness) | No | No |
| Galileo | Yes | Partial | Yes | Basic only | Yes | Partial | Yes (Enterprise tier) |

A few things stand out. No single open-source tool covers all seven capabilities. That’s the main architectural argument for enterprise platforms like Arize AI. Helicone and Traceloop are strong instrumentation layers but weak standalone platforms. And the three tools with real multi-agent tracing support – Langfuse, LangSmith, Arize AI – are the same three that keep coming up in complex agent deployment conversations.

LLM Provider Compatibility

Provider lock-in is a real concern, especially for enterprises running Azure OpenAI, Bedrock, and direct API calls simultaneously. Langfuse, LangSmith, Arize AI, Evidently AI, Traceloop, and TruLens have the broadest provider coverage – all support OpenAI, Anthropic, Azure OpenAI, AWS Bedrock, and Google Vertex natively. Helicone’s proxy-based architecture means Bedrock and Vertex aren’t natively supported, which is a real constraint for AWS-centric or GCP-native teams. Galileo covers OpenAI, Anthropic, Azure OpenAI, and Bedrock well but has only partial support for Google Vertex and no Groq support. For teams reviewing the broader list of generative AI tools, provider compatibility is the first filter to apply before any feature comparison.

1. Langfuse: Open-Source LLM Tracing for Self-Hosted Deployments

Langfuse is an open-source LLM observability platform. It supports prompt tracing, scoring, and evaluation. Teams can run it entirely within their own infrastructure, which matters for regulated industries that can’t route data through third-party SaaS. It’s a natural fit for private cloud and air-gapped enterprise environments.

It’s lighter on enterprise governance features. No native bias detection or audit-grade logging. Teams with active compliance requirements will need to add other tooling.

Best fit: Mid-size engineering teams prioritizing data sovereignty and open-source control. Pricing: Self-hosted free; Cloud Pro ~$59/month; Enterprise custom.

2. LangSmith: Native Observability for LangChain and LangGraph Workflows

LangSmith is LangChain’s native observability platform. It traces LangChain and LangGraph applications with near-zero configuration. For agent workflows built with LangGraph, the agent graph visualization is particularly useful: it shows parent-child span relationships across multi-step agent execution in a way most tools don’t.

The downside: pricing gets steep at scale, and the tool loses a lot of value outside the LangChain ecosystem. If the stack is LangChain-native, this is the obvious choice. If it isn’t, there are better options.

Best fit: Teams building on LangChain/LangGraph who accept the framework dependency. Pricing: Free up to 5,000 traces; Plus ~$39/month; Enterprise custom.

3. Arize AI: Unified Enterprise AI Observability Across ML and LLM

Arize takes a two-product approach. Phoenix is open-source, built for development and evaluation. The Arize cloud platform handles production monitoring. It’s one of the few platforms that covers both classical machine learning observability and LLM/agent observability in a single UI. Multi-agent tracing support is more mature than most competitors.

The trade-off: steep learning curve, opaque enterprise pricing, and some reviewer feedback that LLM-native feature iteration has lagged newer entrants.

Best fit: Larger MLOps teams managing multiple model types across the ML-to-LLM spectrum. Pricing: Phoenix open-source free; Arize cloud estimated $30K-$100K+ annually.

4. Fiddler AI: AI Monitoring and Explainability for Regulated Industries

Fiddler AI was founded in 2018 and is one of the more mature vendors in this space. It was built originally for ML explainability and bias detection. Its compliance and explainability capabilities are the strongest in this list: audit-grade logging, bias detection, and the ability to surface why a model made a specific decision.

The consistent criticism: pricing is firmly enterprise-only, and iteration on LLM-native tracing has been slower than at newer vendors. For customer analytics teams and financial services institutions, Fiddler’s explainability depth often justifies the premium.

Best fit: Financial services, healthcare, and insurance teams with active compliance requirements. Pricing: Enterprise-only; estimated $40K-$150K+ annually.

5. W&B Weave: LLM Tracing and Evaluation for ML-to-LLM Teams

Weights & Biases Weave extends the W&B ML experiment tracking platform into LLM tracing and evaluation. It’s the best option for teams already using W&B for traditional machine learning observability. You get a familiar platform that now covers LLM calls, prompt versioning, and production tracing without introducing a new tool.

It can feel over-engineered for teams with no traditional ML workloads at all. That said, teams weighing small language models against full LLMs will find Weave’s multi-model tracking useful during that comparison phase.

Best fit: Research-to-production teams bridging ML and LLM workloads. Pricing: Free tier; Pro ~$50/seat/month; Enterprise custom.

6. Evidently AI: Open-Source AI Monitoring for Drift Detection and Batch Evaluation

Evidently AI is an open-source ML observability platform with strong batch evaluation, covering data drift, model performance, and data quality reports. Python-native, it integrates cleanly into existing data science workflows and produces reports legible enough to share with non-technical stakeholders.

LLM tracing capabilities are less mature than Langfuse or LangSmith, and real-time alerting for live production is limited. It works at the output level, so it’s provider-agnostic by default. That makes it a natural complement for teams using Databricks Lakeflow or similar pipeline orchestration platforms where output-level quality tracking is the priority.

Best fit: Data science teams running structured ML who need drift reports and model quality documentation. Pricing: Open-source free; Evidently Cloud ~$500/month.

7. Helicone: Lightweight LLM API Cost Tracking and Request Logging

Helicone sits between an application and LLM API providers as a lightweight proxy. It captures every call with near-zero code changes. One URL change in an OpenAI or Anthropic client gets you immediate logging, cost tracking, and latency data.

Helicone gives per-request cost breakdowns, historical spend trends, and model version comparisons out of the box. No custom logging required. But it’s a logging and cost tool, not a full observability platform. For teams with API integration work already underway, the proxy-based setup is usually the least disruptive entry point into LLM observability.

Best fit: Teams at early instrumentation stages who want immediate visibility into LLM API spend. Pricing: Free up to 100K requests/month; Pro $80/month; Enterprise custom.

8. Traceloop: OpenTelemetry-Native LLM Observability for Existing Infrastructure

Traceloop (OpenLLMetry) is an OpenTelemetry-native SDK for LLM tracing. It generates OTel-standard spans from LLM calls that flow into any existing observability backend: Grafana, Datadog, Honeycomb, Jaeger. It supports OpenAI, Anthropic, Cohere, Bedrock, and Vertex AI via SDK.

Platform engineering teams who’ve invested in an OTel stack shouldn’t need a separate AI-specific SaaS. OpenTelemetry’s semantic conventions for generative AI standardize how LLM traces are structured, and Traceloop implements those conventions directly. It has no built-in evaluation, drift detection, or governance features, so it’s not a standalone platform.

Best fit: Platform and SRE teams who want LLM traces inside Grafana or Datadog alongside existing service observability. Pricing: Open-source, free.
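To show what “standardized” means in practice, this sketch builds a span name and a few attributes in the shape the OpenTelemetry GenAI semantic conventions describe (`gen_ai.operation.name`, `gen_ai.request.model`, and the `gen_ai.usage.*` token counts). It is plain Python with no OTel dependency; the helper name and values are hypothetical, and because the GenAI conventions are still evolving, check the current specification for exact attribute names.

```python
def genai_span_fields(operation, request_model, input_tokens, output_tokens):
    """Span name and attributes following the OpenTelemetry GenAI naming pattern."""
    name = f"{operation} {request_model}"  # e.g. "chat model-a"
    attributes = {
        "gen_ai.operation.name": operation,
        "gen_ai.request.model": request_model,
        "gen_ai.usage.input_tokens": input_tokens,
        "gen_ai.usage.output_tokens": output_tokens,
    }
    return name, attributes


name, attrs = genai_span_fields("chat", "model-a", 812, 164)
print(name)
for key, value in sorted(attrs.items()):
    print(f"  {key} = {value}")
```

With the `opentelemetry-api` package, the same values would go on a real span via `tracer.start_as_current_span(name)` and `span.set_attribute(key, value)`. Instrumentation SDKs such as Traceloop’s are meant to emit attributes of this kind for you.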

9. TruLens: Open-Source RAG Evaluation and LLM Quality Assessment

TruLens is an open-source evaluation framework built specifically for LLM and RAG pipeline quality. The RAG Triad evaluation methodology covers context relevance, groundedness, and answer relevance. It’s one of the most widely cited frameworks for RAG quality assurance in the AI engineering community. Provider-agnostic by design, it operates at the evaluation layer rather than the API proxy layer.

Strong for pre-deployment quality gates and sampling-based live evaluation. But it lacks real-time alerting and drift monitoring for sustained production use. It works best as one layer of a broader observability stack.

Best fit: Teams building Advanced RAG pipelines who need structured quality evaluation. Pricing: Open-source, free.
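The RAG Triad is easiest to grasp as three scores computed from the same question, retrieved context, and answer. TruLens typically computes these with LLM-based feedback functions, and the sketch below is not the TruLens API. It uses a toy word-overlap function (`overlap_score`, hypothetical) as a stand-in judge so the structure runs without a model; the `score` parameter is where a real judge would plug in.

```python
import re


def _words(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap_score(a, b):
    """Toy stand-in for an LLM judge: share of words in `a` that also appear in `b`."""
    words_a = _words(a)
    return len(words_a & _words(b)) / len(words_a) if words_a else 0.0


def rag_triad(question, contexts, answer, score=overlap_score):
    """Score the three RAG Triad checks; `score` is any (text, text) -> [0, 1] function."""
    return {
        # Context relevance: is each retrieved chunk about the question?
        "context_relevance": sum(score(question, c) for c in contexts) / len(contexts),
        # Groundedness: is the answer supported by the retrieved context?
        "groundedness": score(answer, " ".join(contexts)),
        # Answer relevance: does the answer address the question?
        "answer_relevance": score(question, answer),
    }


result = rag_triad(
    "What is the refund window?",
    ["The refund window is 30 days from delivery.", "Shipping is free over 50 dollars."],
    "The refund window is 30 days.",
)
print(result)
```

The example scores 0.5 on context relevance because one of the two retrieved chunks is off-topic, even though the answer itself is fully grounded.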

10. Galileo: Enterprise LLM Monitoring for Hallucination Detection at Scale

Galileo is an enterprise LLM quality platform focused on hallucination detection, prompt quality scoring, and production monitoring at scale. Its ChainPoll methodology for hallucination scoring uses a chain-of-thought-based sampling approach and achieves a mean correlation of 0.88 with human raters on the ARES benchmark dataset, according to Galileo’s published research. That’s one of the more rigorously documented methods for hallucination detection. Galileo supports OpenAI, Anthropic, Azure OpenAI, and Bedrock.

Enterprise-only pricing, no self-serve tier, and a thin open-source community compared to Langfuse or TruLens. Teams report long sales cycles before getting platform access.

Best fit: Enterprises running LLMs in high-stakes, customer-facing, or regulated contexts where hallucination risk carries real business or legal consequences. Pricing: Enterprise custom; estimated $25K-$100K+ annually.
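The polling idea behind ChainPoll can be sketched as follows: ask a judge model the same hallucination question several times and report the fraction of runs that flag the completion. This is an illustration under that assumption, not Galileo’s implementation or prompt; `stub_judge` is hypothetical and replays fixed verdicts so the example is deterministic.

```python
from typing import Callable

# judge(prompt, completion) -> True if the judge decides the completion hallucinates.
Judge = Callable[[str, str], bool]


def polled_hallucination_score(prompt: str, completion: str, judge: Judge, n: int = 5) -> float:
    """Ask the same judge n times and return the fraction of 'hallucination' votes."""
    if n < 1:
        raise ValueError("n must be at least 1")
    votes = [judge(prompt, completion) for _ in range(n)]
    return sum(votes) / n


# Stub judge replaying fixed verdicts; a real one would call an LLM with a
# chain-of-thought prompt at non-zero temperature so runs can disagree.
_verdicts = iter([True, False, True, False, False])


def stub_judge(prompt, completion):
    return next(_verdicts)


score = polled_hallucination_score("What year was the policy issued?", "It was issued in 1987.", stub_judge)
print(score)  # 0.4
```

Averaging several runs turns a noisy yes/no judgment into a graded score, which is what makes thresholding and trend tracking possible.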

Best AI Observability Tool by Use Case

For RAG pipeline evaluation, TruLens is purpose-built, with Arize Phoenix as a strong second. Multi-agent workflow tracing points to LangSmith (for LangGraph-based systems) or Langfuse, with Arize AI as support for complex deployments. LLM API cost tracking starts with Helicone; W&B Weave adds depth for teams already on that platform.

Regulated industry compliance needs Fiddler AI as primary, with Arize AI for broader ML coverage. For unified ML and LLM monitoring in a single platform, Arize AI leads with W&B Weave as an alternative. Teams with existing OTel infrastructure should start with Traceloop and add Langfuse for deeper LLM-specific tracing.

Hallucination detection at scale points to Galileo first, with TruLens as an open-source fallback. Open-source self-hosted tracing is best served by Langfuse, complemented by Evidently AI for batch evaluation. LangChain and LangGraph apps have a clear answer in LangSmith; Langfuse works as a framework-agnostic alternative. Data drift and batch evaluation belong to Evidently AI, with W&B Weave for teams that need ML experiment tracking alongside it.

Three AI Observability Gaps Standard Tool Lists Don’t Address

1. Multi-Agent AI Monitoring Is a Different Problem from LLM Tracing

Single LLM call tracing is effectively solved. Multi-agent observability is not. When agents spawn sub-agents, call external tools, pass context across steps, and operate in parallel loops, standard tracing breaks down. A flat log of events doesn’t capture parent-child agent relationships or the causal chain of a 12-step agent workflow.

Right now, only LangSmith (for LangGraph-based agents), Langfuse, and Arize AI have meaningful multi-agent tracing support. Most others are still catching up. The challenges that make agent deployments hard – tool dependency failures, memory drift, non-deterministic routing – are exactly the failure modes that need distributed tracing, not flat logging. Teams building on OpenAI AgentKit or LangGraph face these failure modes directly and need tooling that can follow execution across agent boundaries.

2. RAG Pipeline Observability Needs Coverage at Every Retrieval Stage

RAG failures don’t only happen at the generation step. Wrong documents get retrieved. Irrelevant context gets passed to the model. Hallucination occurs despite successful retrieval. Latency spikes at the vector search layer, not at the LLM call.

Effective RAG observability has to operate at every stage of the pipeline. For embedding quality, Arize Phoenix has full native support; TruLens and Galileo offer partial coverage. Retrieval recall – whether the right documents come back – is fully covered by TruLens and Arize Phoenix; Langfuse and LangSmith can approximate it through custom log scoring. Chunk relevance scoring and context faithfulness are where TruLens’s RAG Triad methodology is strongest, covering both natively. Answer relevance follows the same pattern. Latency broken down by stage, which pinpoints whether bottlenecks sit in retrieval or generation, is where Langfuse, LangSmith, and Arize Phoenix lead.
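Retrieval recall needs a small labeled set of queries with known relevant documents. A minimal sketch of recall@k, assuming hypothetical document IDs and rankings:

```python
from statistics import mean


def recall_at_k(retrieved_ids, relevant_ids, k):
    """Share of the known-relevant documents that appear in the top-k retrieved."""
    if not relevant_ids:
        raise ValueError("need at least one relevant document id")
    return len(set(retrieved_ids[:k]) & set(relevant_ids)) / len(relevant_ids)


# Hypothetical labeled queries: (retrieved ranking, relevant ids)
labeled = [
    (["d7", "d2", "d9", "d4"], {"d2", "d4"}),
    (["d1", "d3", "d8", "d5"], {"d1"}),
]
for k in (2, 4):
    print(k, mean(recall_at_k(ranking, relevant, k) for ranking, relevant in labeled))
```

Tracking recall@k separately from answer quality shows whether a bad answer started with the wrong documents or with a generation failure on the right ones.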

TruLens and Arize Phoenix have the deepest RAG pipeline coverage overall. Both treat retrieval and generation as distinct observability problems rather than collapsing them into a single LLM call. For teams building on Advanced RAG architectures – multi-hop retrieval, hybrid search, and reranking layers – the gap between purpose-built RAG observability and general tracing tools is real.

3. LLM Cost Observability Is Underestimated at Enterprise Scale

Enterprise teams routinely underestimate LLM API spend before implementing observability. Token-level cost attribution by team, product feature, or business unit becomes a genuine finance-level concern as AI workloads scale. Mature cost observability means per-request cost attribution, model version cost comparison, budget alerting by tenant or feature, and cost-per-outcome tracking – not just cost-per-call.
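Attribution, budget alerting, and cost-per-outcome are all aggregations over per-call cost records. The sketch below assumes hypothetical records tagged with a `feature` and a `resolved` outcome flag, plus illustrative monthly budgets; the same functions work for a `tenant` or `team` key.

```python
from collections import defaultdict

# Hypothetical call records; cost_usd would come from per-call cost tracking.
calls = [
    {"feature": "support_bot", "cost_usd": 40.0, "resolved": True},
    {"feature": "support_bot", "cost_usd": 45.0, "resolved": False},
    {"feature": "doc_summary", "cost_usd": 12.0, "resolved": True},
]
budgets = {"support_bot": 100.0, "doc_summary": 10.0}  # monthly USD, illustrative


def spend_by(calls, key):
    """Total spend per value of `key` (feature, tenant, team...)."""
    totals = defaultdict(float)
    for call in calls:
        totals[call[key]] += call["cost_usd"]
    return dict(totals)


def cost_per_outcome(calls, key, outcome="resolved"):
    """Spend divided by successful outcomes, not by calls."""
    spend, wins = defaultdict(float), defaultdict(int)
    for call in calls:
        spend[call[key]] += call["cost_usd"]
        wins[call[key]] += int(call[outcome])
    return {k: spend[k] / wins[k] if wins[k] else float("inf") for k in spend}


def budget_alerts(spend, budgets, warn_ratio=0.8):
    """Flag entries with no budget, over budget, or past warn_ratio of budget."""
    alerts = []
    for name, spent in sorted(spend.items()):
        budget = budgets.get(name)
        if budget is None:
            alerts.append((name, "no budget set"))
        elif spent >= budget:
            alerts.append((name, "over budget"))
        elif spent >= warn_ratio * budget:
            alerts.append((name, "approaching budget"))
    return alerts


spend = spend_by(calls, "feature")
print(spend, cost_per_outcome(calls, "feature"), budget_alerts(spend, budgets))
```

Here `doc_summary` is over its budget and `support_bot` is close to it, while `cost_per_outcome` shows `support_bot` spending 85 USD per resolved request because one of its two calls resolved nothing.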

For organizations with AI in supply chain deployments or customer relationship management workflows where LLM calls happen at volume, cost observability is a direct operational discipline. You can’t optimize what you can’t measure, and attribution is the first measurement that matters.

The TRACE Framework: Kanerika’s Guide to Choosing the Right Tool

Choosing an AI observability tool isn’t a list-reading exercise. It depends on the stack, the regulatory context, the team’s maturity, and what’s actually being observed. TRACE is what Kanerika uses with enterprise clients to make this decision systematically. It’s a sequenced set of filters that narrows the field to a real shortlist.

T – Tech Stack Fit. Building on LangChain/LangGraph? Start with LangSmith or Langfuse. Running raw API calls against OpenAI, Anthropic, or Azure OpenAI? Helicone for cost visibility, Langfuse for deeper tracing. Have existing OpenTelemetry infrastructure? Traceloop brings AI traces into what’s already running. Running custom multi-framework agent architectures? Arize AI or Langfuse for framework-agnostic coverage.

R – Regulatory and Compliance Requirements. Need bias detection, explainability reports, or audit-grade logging? Fiddler AI or enterprise Arize. Operating under the EU AI Act regulatory framework or comparable mandates? Audit trail capability is the deciding factor, not feature count. No active regulatory mandate? Open-source options are viable and often preferable for flexibility.

A – Agent vs. LLM Complexity. Single LLM calls only? Any tool in this list works. Simple tool-calling agents? LangSmith, Langfuse, or Helicone handle this. Complex multi-agent systems with sub-agent spawning, memory, and parallel execution? Only LangSmith (LangGraph), Langfuse, and Arize AI have the distributed tracing support to handle this reliably.

C – Cost and Team Scale. Team under 10 people: open-source self-hosted (Langfuse, TruLens, Evidently). Avoid enterprise pricing at this stage. Team of 10-100: managed cloud tiers (Langfuse Cloud, W&B Weave Pro, Helicone) offer the right capability-to-cost ratio. Enterprise 100+: Arize AI, Fiddler, or Galileo. Budget explicitly for onboarding, integration, and training time, not just license cost.

E – Evaluation vs. Monitoring Priority. Need offline quality evaluation before deployment? TruLens, Evidently AI, W&B Weave. Need live production AI monitoring with alerting and dashboards? Arize AI, Fiddler, Galileo. Need both on one platform? Arize AI is the most complete option. RAG-specific evaluation? TruLens is purpose-built; Arize Phoenix is the strong second option.

TRACE Decision Matrix

Use this sequentially. Start with Tech Stack and work downward. Each answer narrows the shortlist.

| TRACE Factor | Your Situation | Recommended Tools | Tools to Eliminate |
|---|---|---|---|
| T – Tech Stack | LangChain / LangGraph native | LangSmith, Langfuse | Traceloop, TruLens |
| | Raw API calls (OpenAI, Anthropic, Azure) | Helicone, Langfuse | LangSmith |
| | OTel / existing infra backend | Traceloop | Helicone, Galileo |
| | Custom / multi-framework agents | Langfuse, Arize AI | LangSmith |
| R – Regulatory | Active compliance (finance, health, insurance) | Fiddler AI, Arize AI | Helicone, Traceloop, Evidently |
| | EU AI Act or audit trail required | Fiddler AI, Galileo | All open-source options |
| | No active mandate | Any open-source option | Fiddler, Galileo (cost) |
| A – Agent Complexity | Single LLM calls only | Any tool works | – |
| | Tool-calling agents | LangSmith, Langfuse, Helicone | TruLens, Evidently |
| | Multi-agent / distributed systems | LangSmith (LangGraph), Langfuse, Arize AI | All others |
| C – Cost & Scale | Team under 10 / early stage | Langfuse, TruLens, Evidently, Helicone | Fiddler, Galileo, Arize |
| | Team 10-100 / scaling | Langfuse Cloud, W&B Weave Pro, Helicone Pro | Fiddler, Galileo |
| | Enterprise 100+ / mission-critical | Arize AI, Fiddler, Galileo | Free tiers only |
| E – Priority | Offline evaluation / pre-deployment QA | TruLens, Evidently, W&B Weave | Helicone, Traceloop |
| | Live production monitoring | Arize AI, Fiddler, Galileo | TruLens, Evidently |
| | RAG-specific evaluation | TruLens, Arize Phoenix | Helicone, Traceloop, Fiddler |
| | Both evaluation and monitoring needed | Arize AI; or Langfuse + Evidently combo | Single-purpose tools |

At Kanerika, we recommend starting with a lightweight open-source tracer, such as Langfuse or Helicone, to build baseline visibility in the first two weeks. Then layer in a production monitoring platform once failure modes are understood. Buying enterprise observability before knowing what to monitor is the most common mistake we see.

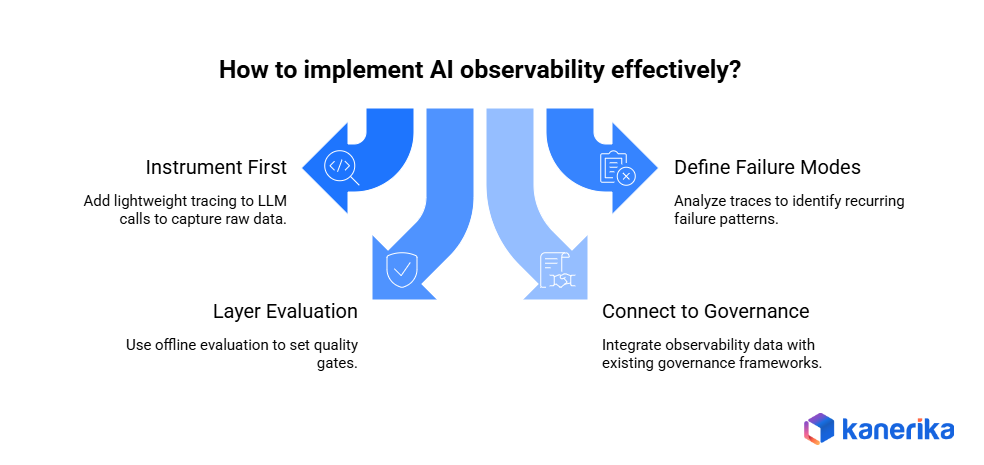

How to Implement AI Observability: Four Stages

Selecting a tool is the easy part. Getting AI observability to work in production requires a sequenced implementation. These four stages reflect what Kanerika deploys with enterprise clients.

Stage 1 – Instrument First (Weeks 1-2):

Add lightweight tracing to all LLM calls before anything else. Use Helicone (one URL change) or Langfuse SDK (10-20 lines of code) to capture inputs, outputs, latency, and token usage. Don’t design dashboards yet. The goal is raw data.
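The goal of this stage can be illustrated with a decorator that wraps every model call and records inputs, outputs, latency, and token usage. This is a generic sketch, not the Langfuse or Helicone SDK: `TRACE_LOG`, `traced_llm_call`, and the response shape returned by `fake_llm` are assumptions. In practice the record would be sent to the chosen tracing tool instead of a list.

```python
import functools
import time

TRACE_LOG = []  # stand-in for a real sink (a tracing SDK, proxy, or OTel exporter)


def traced_llm_call(fn):
    """Record input, output, latency, token usage, and status for every call."""
    @functools.wraps(fn)
    def wrapper(prompt, **kwargs):
        record = {"function": fn.__name__, "input": prompt}
        start = time.perf_counter()
        try:
            response = fn(prompt, **kwargs)
            record.update(
                output=response["text"],
                input_tokens=response["usage"]["input_tokens"],
                output_tokens=response["usage"]["output_tokens"],
                status="ok",
            )
            return response
        except Exception as exc:
            record["status"] = f"error: {type(exc).__name__}"
            raise
        finally:
            record["latency_ms"] = (time.perf_counter() - start) * 1000
            TRACE_LOG.append(record)
    return wrapper


@traced_llm_call
def fake_llm(prompt, **kwargs):
    # Hypothetical provider call; the response shape is an assumption.
    words = len(prompt.split())
    return {"text": prompt.upper(), "usage": {"input_tokens": words, "output_tokens": words}}


fake_llm("summarize this ticket")
print(TRACE_LOG[-1])
```

Recording in `finally` means failed calls are logged too, with their latency, which matters in Stage 2 when you look for failure patterns.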

Stage 2 – Define Failure Modes (Weeks 2-4):

Review the first two weeks of traces. Identify recurring failure patterns: which prompts produce inconsistent outputs, where latency spikes occur, which agent steps fail most often. This analysis determines what metrics need active monitoring – and which tool category is actually needed.

Stage 3 – Layer Evaluation (Month 2):

Add offline evaluation against a reference dataset. Use TruLens for RAG pipelines, W&B Weave for ML-adjacent workloads, or Langfuse’s scoring API for custom quality metrics. Set quality gates that block deployment if evaluation scores drop below an agreed threshold.
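A quality gate reduces to comparing aggregate evaluation scores against minimums and failing the pipeline when any metric falls short. Here is a sketch with illustrative metric names, scores, and thresholds; the scores would come from whichever evaluation tool the team uses.

```python
from statistics import mean


def quality_gate(scores, thresholds):
    """Return one failure message per metric whose mean score is below its minimum."""
    failures = []
    for metric, minimum in thresholds.items():
        values = scores.get(metric, [])
        if not values:
            failures.append(f"{metric}: no scores recorded")
        elif mean(values) < minimum:
            failures.append(f"{metric}: mean {mean(values):.3f} < {minimum}")
    return failures


# Illustrative evaluation results and thresholds
scores = {"groundedness": [0.92, 0.88, 0.95], "answer_relevance": [0.70, 0.65, 0.80]}
thresholds = {"groundedness": 0.85, "answer_relevance": 0.75}
failures = quality_gate(scores, thresholds)
exit_code = 1 if failures else 0  # a CI step would pass this to sys.exit()
print(failures, exit_code)
```

Reporting every failing metric at once, rather than stopping at the first, keeps the CI log useful for whoever has to fix the regression.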

Stage 4 – Connect to AI Governance (Months 2-3):

Connect observability outputs to the organization’s existing data governance and compliance framework. Audit logs should flow to the same systems that handle other regulated data. Alerting logic should route to teams with authority to act, not just engineering. This stage requires real organizational change management – getting compliance, legal, and risk teams aligned on what observability data they’ll receive and how they’ll use it.

This is the stage most implementations skip, and the stage that matters most for decision intelligence at the organizational level. The alarm goes off. The question is whether anyone knows what to do next.

Realistic setup timelines: Helicone or Langfuse basic instrumentation: 1-4 hours. LangSmith for an existing LangChain app: under an hour. Arize AI or Fiddler enterprise deployment with governance integration: 4-8 weeks.

AI Observability in Production: Real Enterprise Examples

ABX Innovative Packaging: Data Pipeline Observability First

When Kanerika led a data management transformation for ABX Innovative Packaging, one of the central lessons was about sequencing. Data pipeline observability has to come before model-level observability. Monitoring model outputs is meaningless if the upstream data feeding those models is inconsistent or untracked.

Kanerika implemented a layered approach – pipeline health monitoring (completeness, freshness, schema consistency) as the foundation, with model output monitoring built on top. The two layers were connected, not siloed.
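The three pipeline health dimensions named here (completeness, freshness, schema consistency) can be sketched as batch checks that run before any model-level monitoring is trusted. This is not the code used in the ABX engagement. The schema, thresholds, and function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Any

# Hypothetical contract for an upstream table that feeds a model.
EXPECTED_SCHEMA = {"order_id": str, "sku": str, "quantity": int, "updated_at": datetime}


def check_batch(rows: list[dict[str, Any]], max_age: timedelta,
                now: datetime) -> dict[str, Any]:
    """Completeness, freshness, and schema checks for one batch of rows."""
    total = len(rows)
    # Completeness: rows with every expected field present and non-null.
    complete = sum(all(r.get(col) is not None for col in EXPECTED_SCHEMA) for r in rows)
    # Schema consistency: rows where a present field has the wrong type.
    schema_violations = sum(
        any(r.get(col) is not None and not isinstance(r[col], typ)
            for col, typ in EXPECTED_SCHEMA.items())
        for r in rows
    )
    # Freshness: is the newest record within the allowed age?
    stamps = [r["updated_at"] for r in rows if isinstance(r.get("updated_at"), datetime)]
    fresh = bool(stamps) and now - max(stamps) <= max_age
    return {
        "completeness": complete / total if total else 0.0,
        "schema_violations": schema_violations,
        "fresh": fresh,
    }


def pipeline_healthy(report: dict[str, Any], min_completeness: float = 0.99) -> bool:
    """Gate: model-output alerts are only meaningful when this returns True."""
    return (report["completeness"] >= min_completeness
            and report["schema_violations"] == 0
            and report["fresh"])


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
rows = [
    {"order_id": "A1", "sku": "BOX-10", "quantity": 5, "updated_at": now - timedelta(minutes=30)},
    {"order_id": "A2", "sku": None, "quantity": 3, "updated_at": now - timedelta(minutes=45)},
    {"order_id": "A3", "sku": "BOX-20", "quantity": "7", "updated_at": now - timedelta(hours=2)},
]
report = check_batch(rows, max_age=timedelta(hours=1), now=now)
print(report, pipeline_healthy(report))
```

Connecting the layers, in this sketch, means that a failing `pipeline_healthy` check explains a model-output anomaly before anyone starts debugging prompts.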

The takeaway: data consolidation work that precedes AI deployment is itself an observability exercise. Organizations that instrument their data pipelines before their models catch issues faster and at lower remediation cost.

Financial Services LLM Deployment: When Monitoring Isn’t Enough

The following is an anonymized composite based on patterns Kanerika observes across financial services AI deployments.

A regional financial institution deployed an LLM-based workflow for loan application document classification. The model performed well in testing. In production, a compliance audit requested decision-level justification for a batch of flagged applications. The team found they had logs but no explainability. Timestamps existed; reasoning traces did not.

The remediation stack: Fiddler AI for bias detection and explainability scoring at the decision layer, Langfuse for prompt-level tracing and input/output logging, and a custom audit log pipeline connecting Langfuse traces to the institution’s existing compliance data warehouse.
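The custom audit log pipeline is described only at the architecture level. One way to shape each decision-level record is sketched below: the record links back to the prompt-level trace, captures model and prompt versions plus data provenance, and is serialized with a SHA-256 digest so later tampering is detectable. The field names and the digest scheme are illustrative assumptions, not a Langfuse or Fiddler schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """One decision-level audit entry (illustrative fields)."""
    trace_id: str            # links back to the prompt-level trace
    model_version: str
    prompt_version: str
    input_ref: str           # pointer to the stored input, not the raw document
    output_label: str        # e.g. the document class assigned
    explanation: dict        # e.g. explainability scores for this decision
    data_sources: list[str]  # provenance of the inputs behind the decision
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())


def to_audit_line(record: AuditRecord) -> str:
    """Serialize deterministically and attach a SHA-256 digest of the body."""
    body = json.dumps(asdict(record), sort_keys=True)
    digest = hashlib.sha256(body.encode("utf-8")).hexdigest()
    return json.dumps({"record": json.loads(body), "sha256": digest}, sort_keys=True)


def verify(line: str) -> bool:
    """Recompute the digest; False means the record changed after it was written."""
    entry = json.loads(line)
    body = json.dumps(entry["record"], sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest() == entry["sha256"]


rec = AuditRecord(
    trace_id="trace-123",
    model_version="doc-classifier-2025-01",
    prompt_version="v7",
    input_ref="doc-store/app-0001",
    output_label="income_verification",
    explanation={"top_signal": "employer letterhead", "confidence": 0.91},
    data_sources=["loan_app_0001/page_2"],
)
line = to_audit_line(rec)
print(verify(line))
```

Each line is self-contained JSON, so it can be loaded into an existing compliance warehouse like any other regulated record.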

Within 60 days, model-related escalations dropped by approximately 30% due to earlier detection of edge-case failures, and the internal compliance review passed without issue. AI deployments in finance consistently require this dual-layer approach: tracing for engineering teams, explainability and audit trails for compliance functions.


How Kanerika Implements AI Observability for Enterprise Clients

Kanerika’s AI practice treats AI systems with the same operational rigor as any mission-critical enterprise system. That means visibility, and visibility means deliberate instrumentation from the first sprint – not the first production incident.

As a Microsoft Solutions Partner for Data & AI, Kanerika deploys observability architectures that integrate with Azure Monitor, Azure OpenAI, and the broader Microsoft data ecosystem. For teams using Microsoft Copilot across business workflows, that telemetry layer extends to Copilot usage patterns and output quality, not just raw model API calls. For organizations deploying KARL, Kanerika’s AI data insights agent, observability is embedded in the architecture from day one.

Three things Kanerika does differently from the standard implementation playbook:

- Stack-matched tool selection: Recommends AI observability tools based on the client’s actual LLM framework, data architecture, and regulatory context – not a generic best practice list.

- Governance-first design: Connects observability outputs directly to existing data governance and compliance frameworks rather than treating them as a separate DevOps concern. This aligns with Kanerika’s broader AI TRiSM approach and the Ethical AI Implementation roadmap applied across regulated engagements.

- Incident response integration: Every AI deployment includes a defined escalation path and model response runbook before go-live. Not after.

Observability tools provide the data. The practice – defined alerting thresholds, clear ownership, regular offline evaluation against production samples, and a documented incident response runbook – is what makes that data actionable.

Conclusion

Most AI observability failures aren’t tool failures. They’re sequencing failures – teams that bought enterprise platforms before understanding their failure modes, or that instrumented production systems but never connected the outputs to anyone with the authority to act on them.

The right tool depends on the stack, the regulatory context, the team’s maturity, and what’s actually being monitored. Start lightweight. Build baseline visibility first. Layer governance and evaluation once the failure modes are understood. That sequencing holds regardless of tool choice.

The LLM observability market is growing fast, and the tooling is maturing. But the most important capability isn’t in any of the platforms on this list. It’s the internal practice – defined ownership, regular evaluation cycles, and a documented response path when something goes wrong.


FAQs

What is AI observability and how is it different from AI monitoring?

Monitoring tracks predefined metrics — latency, error rate, uptime — and tells teams when something breaks. Observability gives teams the ability to ask questions about system behavior after the fact: why did the model produce that output, what was the context, which agent step failed. For generative AI systems, where outputs are non-deterministic and failure modes are non-obvious, observability is what makes diagnosis possible. Not just detection. The distinction maps directly to what decision intelligence requires from AI systems: not just “it ran” but “here’s why it decided what it decided.”

Which AI observability tool is best for teams just getting started?

Langfuse and Helicone are the lowest-friction starting points. Helicone requires only a URL change to start capturing LLM API traces and cost data — no code refactoring needed. Langfuse is open-source, self-hostable, and well-documented for teams needing data sovereignty. Both have meaningful free tiers and can be running within hours.

Which AI observability tools support multi-agent monitoring?

Multi-agent observability requires distributed tracing with parent-child span relationships and tool call attribution across agent steps. LangSmith (specifically for LangGraph-based agents), Langfuse, and Arize AI are currently the strongest options. Most other tools handle single-agent or single-LLM-call scenarios but lack the span correlation needed for complex multi-agent workflows. Teams using OpenAI AgentKit or similar frameworks should validate multi-agent trace support before committing to a platform.
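The parent-child span idea is easier to see in code. The minimal in-memory tracer below gives every span in a run the same trace ID and a pointer to its parent, so a tool call can be attributed to the agent chain above it. It follows the same trace/span/parent model that OpenTelemetry standardizes, but the `Span` and `Tracer` classes are hypothetical and are not the API of LangSmith, Langfuse, or Arize.

```python
import uuid
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    name: str
    kind: str                        # "agent", "llm", or "tool"
    trace_id: str
    parent_id: Optional[str] = None
    span_id: str = field(default_factory=lambda: uuid.uuid4().hex[:8])
    attributes: dict = field(default_factory=dict)


class Tracer:
    """Minimal in-memory tracer: one trace_id per run, parent links per span."""

    def __init__(self) -> None:
        self.trace_id = uuid.uuid4().hex
        self.spans: list[Span] = []

    def start(self, name: str, kind: str, parent: Optional[Span] = None, **attrs) -> Span:
        span = Span(name, kind, self.trace_id,
                    parent.span_id if parent else None, attributes=attrs)
        self.spans.append(span)
        return span

    def ancestry(self, span: Span) -> list[str]:
        """Walk parent links to attribute a span to the agents above it."""
        by_id = {s.span_id: s for s in self.spans}
        path = []
        current: Optional[Span] = span
        while current is not None:
            path.append(current.name)
            current = by_id.get(current.parent_id) if current.parent_id else None
        return list(reversed(path))


# Hypothetical multi-agent run: a planner delegating to two sub-agents.
tracer = Tracer()
planner = tracer.start("planner", "agent")
researcher = tracer.start("researcher", "agent", parent=planner)
search = tracer.start("web_search", "tool", parent=researcher, query="Q3 revenue")
writer = tracer.start("writer", "agent", parent=planner)
draft = tracer.start("draft_llm_call", "llm", parent=writer, model="hypothetical-model")
print(tracer.ancestry(search))
```

Without the `parent_id` links, the `web_search` failure would appear as an isolated tool error. With them, it is attributable to the researcher agent acting on the planner's instructions, which is the span correlation most single-call tools lack.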

How do AI observability tools handle RAG pipeline monitoring?

RAG pipelines need observability at multiple stages — document retrieval, context ranking, chunk relevance scoring, and final generation quality — not just the last LLM call. TruLens is purpose-built for RAG evaluation using the RAG Triad (context relevance, groundedness, answer relevance). Arize Phoenix and Langfuse support multi-step RAG tracing. Most general-purpose AI monitoring tools treat the entire RAG pipeline as a single LLM call and miss the retrieval failure modes. For teams building Advanced RAG systems, that distinction is critical.
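A toy example of why stage-level records matter. The pipeline below logs retrieval, ranking, and generation separately, and `first_failing_stage` checks them in order so a retrieval miss is not blamed on the LLM. The word-overlap relevance score and the groundedness proxy are deliberately crude stand-ins (not TruLens's RAG Triad implementation), and all names are hypothetical.

```python
import re
from typing import Any


def words(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def run_rag(query: str, corpus: dict[str, str], k: int = 2) -> tuple[str, list[dict[str, Any]]]:
    """Toy RAG pipeline that records one entry per stage."""
    stages: list[dict[str, Any]] = []
    q = set(words(query))

    # Retrieval: toy relevance = share of query words found in each document.
    scored = [(doc_id, len(q & set(words(text))) / max(len(q), 1))
              for doc_id, text in corpus.items()]
    stages.append({"stage": "retrieval", "candidates": scored})

    # Ranking: keep the top-k chunks by relevance.
    kept = sorted(scored, key=lambda x: x[1], reverse=True)[:k]
    stages.append({"stage": "ranking", "kept": kept})

    # Generation: hypothetical stand-in for the LLM call.
    context = " ".join(corpus[doc_id] for doc_id, _ in kept)
    answer = context.split(".")[0].strip()
    stages.append({"stage": "generation", "context": context, "answer": answer})
    return answer, stages


def first_failing_stage(stages: list[dict[str, Any]], min_relevance: float = 0.5) -> str:
    """Check stages in pipeline order and name the first one that failed."""
    by_name = {s["stage"]: s for s in stages}
    kept = by_name["ranking"]["kept"]
    if not kept or kept[0][1] < min_relevance:
        return "retrieval"
    gen = by_name["generation"]
    ctx_words = set(words(gen["context"]))
    ans_words = words(gen["answer"])
    # Crude groundedness proxy: share of answer words present in the context.
    grounded = sum(w in ctx_words for w in ans_words) / max(len(ans_words), 1)
    return "generation" if grounded < 0.8 else "none"


corpus = {
    "billing": "Invoices are due within 30 days. Late fees apply after that.",
    "shipping": "Orders ship within 2 business days.",
}
answer, stages = run_rag("when are invoices due", corpus)
print(answer, first_failing_stage(stages))
_, miss = run_rag("what is the refund policy", corpus)
print(first_failing_stage(miss))
```

A tool that only logged the final LLM call would see two plausible-looking answers here. The stage records show that the second request failed at retrieval.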

How long does it take to implement an AI observability platform?

Basic instrumentation with Helicone or Langfuse takes 1–4 hours for most LLM applications. LangSmith integration for an existing LangChain app takes under an hour. Enterprise deployments with Arize AI or Fiddler — including data pipeline connections, governance integration, and stakeholder onboarding — typically take 4–8 weeks. The implementation timeline scales with governance complexity, not technical complexity.

Is AI observability required for regulatory compliance?

In regulated industries — financial services, healthcare, insurance — AI observability is increasingly tied to compliance requirements. AI systems that make or inform automated decisions need audit trails documenting inputs, outputs, decision logic, model version, and data provenance. Fiddler AI and enterprise Arize are built with compliance-grade logging in mind. The EU AI Act regulatory framework creates documentation requirements for high-risk AI systems that observability tooling directly helps fulfill.

How much do enterprise AI observability tools cost?

Open-source tools (Langfuse, TruLens, Evidently AI) are free to self-host, with infrastructure as the main cost. Managed cloud tiers (Helicone Pro ~$80/month, Langfuse Cloud Pro ~$59/month, W&B Weave Pro ~$50/seat/month) suit growing teams. Enterprise platforms (Arize AI, Fiddler AI, Galileo) typically run $25,000–$150,000+ annually depending on scale and support requirements. Most enterprise vendors require a sales conversation before disclosing specific pricing.