TL;DR: Looker and Tableau are both genuinely good business intelligence platforms — but they solve different problems for different organizations. Tableau wins on accessibility, speed, and visualization. Looker wins on governance, consistency, and embedded analytics at scale. The wrong choice isn’t about picking a bad tool — it’s about picking the right tool for the wrong stage of your data journey.

Key Takeaways

- Looker and Looker Studio are two completely different products. This article covers Looker Enterprise vs Tableau — the comparison enterprise buyers actually need.

- Philosophy over features. Tableau is exploration-first. Looker is governance-first. That difference determines fit more than any feature checklist.

- Architecture has consequences. Looker’s in-database model and LookML semantic layer enforce consistency by design. Tableau’s extract model delivers speed and flexibility, but governance requires deliberate process discipline to maintain.

- Total cost of ownership is the real comparison. Published pricing tells half the story. Analytics engineering capacity, implementation timelines, and governance add-ons tell the rest.

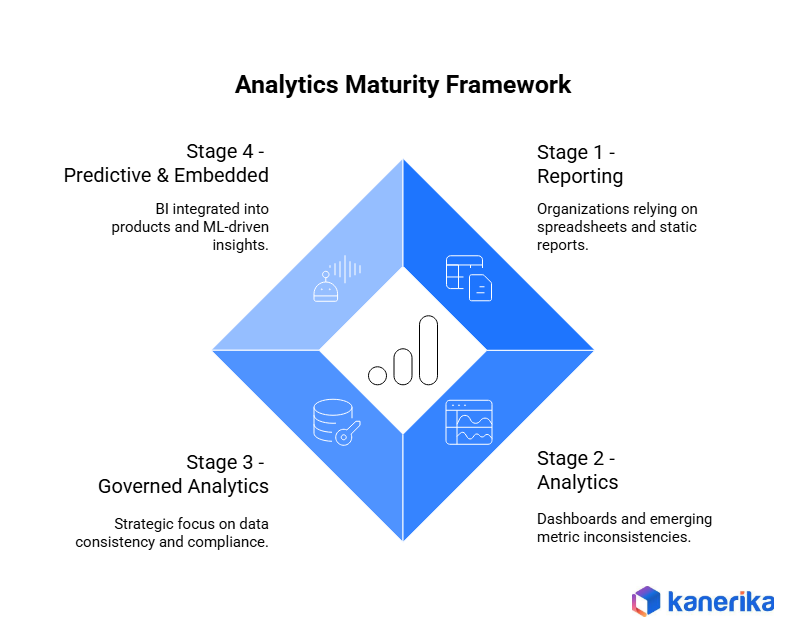

- Maturity stage matters more than company size. A Stage 1 organization deploying Looker isn’t accelerating — it’s overbuilding. A Stage 3 organization still on ad hoc Tableau workbooks is accumulating governance debt.

- The right BI partner is tool-agnostic. Vendors sell products. A partner certified across Tableau, Looker, and Power BI starts with strategy — not a demo.


The Dashboard That Showed Three Different Revenue Numbers

A VP of Analytics at a mid-size logistics firm ran a three-week evaluation. Her team compared features, watched demos, scored vendors — and chose Tableau. It was a reasonable decision, well-researched and defensible.

Twelve months later, her team had built 400 dashboards across six departments. Depending on which one someone opened, “monthly revenue” showed three different numbers. Nobody had done anything wrong. The self-service analytics tool performed exactly as advertised. But the evaluation had focused on features, not fit.

She hadn’t picked a bad business intelligence tool. She had picked the wrong tool for where her organization actually was.

That distinction is what most Looker vs Tableau comparisons miss entirely. The feature lists are accurate. The enterprise ratings are real. But neither tells you what you need to know before making a decision that takes 12–18 months to unwind if it goes sideways.

First: Are You Comparing the Right Products?

Before going further — there’s a clarification that almost every comparison article skips, and it trips up a lot of buyers.

Many people searching “Looker vs Tableau” have Looker Studio in mind. That’s Google’s free, browser-based visualization tool, formerly known as Google Data Studio. Widely used for marketing dashboards and lightweight reporting. No engineering required, costs nothing.

Looker Enterprise is an entirely different product. Google acquired Looker for $2.6 billion, in a deal announced in 2019, and positions it as a full-scale, governance-heavy enterprise analytics platform built around a proprietary semantic modeling language called LookML. The two products share a name and a parent company. They serve completely different audiences.

This article covers Looker Enterprise vs Tableau — the comparison that matters for data and analytics teams making serious decisions about cloud analytics infrastructure.

| | Looker (Enterprise) | Looker Studio |
| --- | --- | --- |
| Cost | Custom enterprise pricing | Free |
| Primary User | Data/analytics engineers | Marketers, analysts |
| Semantic Layer | LookML (proprietary) | None |
| Governance | Enterprise-grade | Minimal |
| Deployment | Cloud-native, GCP-hosted SaaS | Browser-based, no setup |
| Use Case | Governed enterprise BI | Lightweight data visualization |

One more thing worth flagging upfront. Google’s current offering, Looker (Google Cloud core), is a managed SaaS product on Google Cloud Platform. Organizations evaluating Looker today should assume a SaaS deployment by default and confirm directly with Google whether a customer-hosted option is available under their contract. For regulated industries or enterprises with strict data residency requirements, that question needs an answer before the evaluation goes any further.

Two Leaders, One Very Different Philosophy

Both Looker and Tableau sit in Gartner’s Magic Quadrant Leaders quadrant for Analytics and Business Intelligence Platforms. Both hold strong ratings on G2 and Gartner Peer Insights. On paper, they look nearly identical in market standing.

But the gap isn’t in ratings. It’s in philosophy. Tableau has tens of thousands of enterprise customers globally. Its design centers on exploration — letting business users move fast, build dashboards independently, and surface insights without waiting on a data engineering team. Looker’s footprint is more focused, with a sharper emphasis on enterprise governance and the data modeling layer.

Where Tableau gives teams autonomy, Looker gives organizations control. That difference — self-service analytics versus governed analytics — determines fit more reliably than any feature comparison. Research from Forrester consistently shows that a large share of business decisions still aren’t being made using data, despite significant BI investment across enterprises. A tool misaligned with organizational philosophy is one of the most consistent reasons why.

Partner with Kanerika to Modernize Your Enterprise Operations with High-Impact Data & AI Solutions

Architecture Is Where the Real Decision Gets Made

Most enterprise BI comparisons touch on architecture briefly and move on. That’s a mistake. Architectural decisions create consequences that play out over years, not quarters.

How Looker Works: In-Database Analytics

Looker does not extract data into its own store. Queries run against the source database when a dashboard loads, apart from results Looker serves from its query cache. Business logic (what “revenue” means, how “customer lifetime value” is calculated, what counts as an “active user”) is defined once in LookML and enforced everywhere.

The result: every team, every dashboard, every report uses identical metric definitions. The tradeoff is real, though. Building and maintaining LookML data models requires dedicated analytics engineers. Most organizations need at least one to two full-time people before meaningful dashboard coverage is achievable. And because Looker relies on live database queries, performance depends entirely on how well the underlying cloud data warehouse is optimized.
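The “define once, enforce everywhere” idea can be sketched in a few lines. This is a Python analogy, not LookML, and the registry, metric names, table, and column names are all hypothetical. It only illustrates why dashboards that render their queries from one shared definition cannot drift apart.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """One governed metric definition: a name and the SQL expression behind it."""
    name: str
    sql: str


# Hypothetical central registry: each metric is defined exactly once.
METRICS = {
    "monthly_revenue": Metric(
        "monthly_revenue",
        "SUM(CASE WHEN status = 'completed' THEN amount ELSE 0 END)",
    ),
    "active_users": Metric("active_users", "COUNT(DISTINCT user_id)"),
}


def build_query(metric_name: str, table: str, group_by: str) -> str:
    """Render a query from the shared definition; dashboards never restate the logic."""
    metric = METRICS[metric_name]  # raises KeyError for an undefined metric
    return (
        f"SELECT {group_by}, {metric.sql} AS {metric.name} "
        f"FROM {table} GROUP BY {group_by}"
    )


# Two dashboards, different groupings, same revenue logic.
finance_sql = build_query("monthly_revenue", "orders", "order_month")
sales_sql = build_query("monthly_revenue", "orders", "region")
```

Changing the revenue rule means editing one entry in the registry, which is also where the maintenance burden described above concentrates.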

How Tableau Works: Flexible Extract-Based Analytics

Tableau’s drag-and-drop authoring was built for speed and accessibility. Business users can build dashboards without writing SQL or understanding data modeling. It supports both live database connections and Hyper-format data extracts — which dramatically accelerate dashboard performance at the cost of real-time data freshness.

Business logic is defined at the workbook level. That’s flexible, but it creates risk when dozens of teams build independently without governance standards. Governance features exist across Tableau’s product ecosystem, but enterprise-grade data governance requires the Data Management add-on — meaningful additional cost on top of base Tableau Cloud tiers.

Looker vs Tableau Performance: What Patterns Actually Emerge

Performance comparisons between these platforms are heavily context-dependent. Looker’s performance ceiling is set by the underlying cloud data warehouse. Tableau’s Hyper extract architecture buffers against warehouse performance issues, at the cost of data recency. Neither platform is universally faster; each trades something different for its speed.

| Scenario | Looker | Tableau |
| --- | --- | --- |
| Optimized cloud warehouse (BigQuery/Snowflake) | Fast dashboard loads | Sub-second with Hyper extracts |
| High-concurrency, large query volume | Depends on warehouse optimization | Can degrade without extract layer |
| Real-time or near-real-time data requirements | Strong (live query by design) | Limited (extracts refresh on schedule) |
| Under-optimized underlying data models | Noticeable slowdown (fix is in the warehouse) | Less affected (Hyper buffers issues) |
| Small-to-medium dataset, standard dashboards | Comparable | Comparable |

If near-real-time data is a hard requirement — operational monitoring, live AI-based fraud detection, or dynamic pricing — Looker’s live query model fits better by design. If interaction speed and dashboard responsiveness matter more than data freshness, Tableau’s extract architecture wins on user experience.
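The freshness tradeoff comes down to simple arithmetic: data in an extract is as old as the last refresh. A minimal sketch, assuming a hypothetical refresh time and hypothetical tolerance thresholds for two use cases:

```python
from datetime import datetime, timedelta


def extract_age(last_refresh: datetime, now: datetime) -> timedelta:
    """Age of the data a user sees when a dashboard reads from an extract."""
    return now - last_refresh


def meets_freshness(last_refresh: datetime, now: datetime, max_age: timedelta) -> bool:
    """True if the extract is still within the freshness the use case tolerates."""
    return extract_age(last_refresh, now) <= max_age


# Hypothetical schedule: extract refreshed at 06:00, dashboard opened at 09:30.
refreshed = datetime(2025, 1, 15, 6, 0)
opened = datetime(2025, 1, 15, 9, 30)

# Assumed tolerances: a daily report accepts 24 hours, fraud monitoring 5 minutes.
daily_report_ok = meets_freshness(refreshed, opened, timedelta(hours=24))
fraud_monitor_ok = meets_freshness(refreshed, opened, timedelta(minutes=5))
```

With these assumed numbers the same extract is acceptable for the daily report and unusable for fraud monitoring, which is the case where a live query model fits better.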

What the Architecture Difference Means in Practice

Looker’s in-database architecture prevents metric inconsistency by design. Tableau’s workbook-level architecture enables faster exploration but requires disciplined governance processes to prevent the three-revenue-numbers problem. A strong data consolidation strategy should inform which model an organization actually needs before tool selection begins. Getting this wrong at the architecture stage is significantly more expensive than getting it wrong at the feature comparison stage.
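To make the three-revenue-numbers problem concrete, here is a small sketch in which three teams each encode a defensible definition of revenue at the workbook level. The rows, team names, and rules are invented for illustration.

```python
# Hypothetical order rows: (amount, status, is_refunded)
orders = [
    (100.0, "completed", False),
    (250.0, "completed", True),
    (75.0, "pending", False),
]


def revenue_sales(rows):
    """Sales counts every booked order."""
    return sum(amount for amount, _, _ in rows)


def revenue_finance(rows):
    """Finance counts completed orders only."""
    return sum(amount for amount, status, _ in rows if status == "completed")


def revenue_ops(rows):
    """Operations counts completed orders net of refunded ones."""
    return sum(
        amount
        for amount, status, refunded in rows
        if status == "completed" and not refunded
    )


results = {
    "sales": revenue_sales(orders),
    "finance": revenue_finance(orders),
    "ops": revenue_ops(orders),
}
distinct_values = set(results.values())
```

None of the three functions is wrong in isolation. The inconsistency only appears when the results are put side by side, which is why it tends to surface late.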

Looker vs Tableau Head-to-Head: Eight Dimensions That Actually Matter

Feature comparisons are the most-read section of any BI evaluation and often the least useful for making a final decision. That said, knowing where each platform leads and where it trails is a necessary part of any thorough assessment.

The observations below reflect consistent patterns across G2, Gartner Peer Insights, and TrustRadius reviews — not vendor marketing.

| Dimension | Looker | Tableau | Edge |
| --- | --- | --- | --- |
| Ease of Use (Business Users) | Moderate (requires pre-built LookML models) | High (drag-and-drop, no SQL needed) | Tableau |
| Data Visualization Depth | Functional, consistent | Best-in-class, wide chart library | Tableau |
| Data Governance | Best-in-class (LookML enforces it structurally) | Good (add-ons and process discipline required) | Looker |
| Embedded Analytics | Strong (API-first architecture) | Available, more complex setup | Looker |
| AI and ML Integration | Gemini, Vertex AI, BigQuery ML | Tableau AI, Pulse, TabPy | Context-dependent |
| Cloud Ecosystem Fit | Google Cloud-native | Salesforce / multi-cloud | Context-dependent |
| Real-Time Collaboration | Browser-native, Git-integrated version control | Tableau Server/Cloud required for sharing | Looker |
| Self-Service for Business Users | Moderate (needs LookML foundation first) | High (authoring without engineering background) | Tableau |

Data Visualization: Tableau’s Clearest Advantage

Tableau offers a wide library of chart types, advanced geospatial analysis, animation capabilities, and granular formatting control. Its visualization engine has set the industry benchmark for years. Reviewers on G2 and Gartner Peer Insights consistently rate Tableau higher on ease of use and visual output quality — and that gap is real.

Looker’s visualizations are functional and consistent with brand standards. But visualization isn’t Looker’s primary value proposition. Organizations that need to communicate complex data stories to non-technical executive audiences or external stakeholders will find Tableau’s output more compelling and faster to produce.

Governed Analytics: Looker’s Structural Edge

Looker’s LookML semantic layer eliminates the “multiple versions of truth” problem at the architecture level. It’s version-controlled and auditable — data governance is baked into the code, not bolted on as process. This matters particularly for regulated industries — financial services, healthcare, insurance — where metric traceability is a compliance requirement, not a preference.

Tableau can achieve strong governance. But it requires the organizational discipline and operational processes that Looker enforces automatically through architecture. That distinction — structural governance versus process-dependent governance — is the sharpest practical difference between the two platforms.

2024–2025 AI Capabilities: What’s Actually New

Both platforms made meaningful AI moves in the past year.

Tableau launched Tableau Pulse — an AI-driven insights digest that proactively surfaces anomalies and trends to business users via email and Slack, without requiring them to open a dashboard. It also released Tableau AI, built on Salesforce Einstein, which adds natural language query and AI-generated explanations directly into dashboards.

Looker’s AI additions center on Conversational Analytics powered by Google Gemini, which lets users ask natural language questions and receive answers from queries grounded in the LookML model. Gemini assistance within Looker also helps analytics engineers write and validate LookML data models faster, reducing build time on the semantic layer.

The more useful question isn’t which AI feature list is longer. It’s which AI ecosystem the organization is already invested in. Google Cloud shops get more from Looker’s Gemini integration. Salesforce-heavy organizations get more from Tableau AI and Pulse. Organizations should map AI capabilities to existing data stack investments before treating the AI feature comparison as a primary differentiator.


Looker vs Tableau Pricing: The Full Total Cost of Ownership Picture

Published pricing is the starting point, not the answer. The per-user numbers get most of the attention. The real cost gap usually shows up elsewhere.

Tableau’s published tiers are transparent: Creator at $75/user/month, Explorer at $42/user/month, Viewer at $15/user/month. Looker’s pricing operates through custom enterprise agreements with no public pricing page — deployments start at higher price points and scale by users and data volume.

But both sets of numbers miss most of the real total cost of ownership. The table below surfaces what most buyers don’t budget for until they’re already mid-implementation.

| Cost Component | Looker | Tableau | Power BI |
| --- | --- | --- | --- |
| Base licensing | Custom enterprise pricing | $15–$75/user/month | $10–$20/user/month |
| Analytics engineering FTEs | 1–2 FTEs required (hard dependency) | Optional but recommended | Optional |
| Governance add-ons | Included in LookML architecture | Data Management add-on (extra cost) | Premium features vary by tier |
| Implementation timeline | 3–6 months | 4–8 weeks | 2–6 weeks |
| Cloud infrastructure dependency | Best on GCP; additional considerations on AWS/Azure | Multi-cloud friendly | Best on Azure; M365 integration included |
| Desktop authoring license | N/A (fully browser-native) | Requires a Creator seat (Tableau Desktop is bundled with Creator) | Included in base license |
| Training investment | High (LookML data modeling proficiency required) | Moderate (business user accessible) | Moderate |

The hidden cost that surprises most Looker buyers isn’t the platform fee — it’s the analytics engineering requirement. One to two dedicated FTEs to build and maintain LookML data models is a hard dependency, not a recommendation.

For Tableau, the surprise is usually governance. The base product is accessible and fast to deploy. But meaningful data governance requires the Data Management add-on, structured workbook standards, and disciplined ownership models that someone has to maintain. That ongoing effort has a real cost even when it doesn’t appear on a licensing invoice.
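A rough way to see how the hidden components compare with licensing is to add them up. The sketch below uses the Tableau list prices quoted in this article; the seat mix, the loaded FTE cost, the add-on price, and the share of an FTE spent on governance are all assumptions chosen for illustration, not vendor figures.

```python
# Tableau per-user monthly list prices quoted in this article.
TABLEAU_MONTHLY = {"creator": 75, "explorer": 42, "viewer": 15}


def tableau_annual_license(seats: dict[str, int]) -> int:
    """Annual Tableau licensing for a mix of seat types."""
    return 12 * sum(TABLEAU_MONTHLY[tier] * count for tier, count in seats.items())


def annual_tco(license_cost: float, engineering_ftes: float,
               fte_cost: float, addons: float = 0.0) -> float:
    """Licensing plus people plus add-ons: the components most budgets omit."""
    return license_cost + engineering_ftes * fte_cost + addons


# Hypothetical 200-user deployment.
seats = {"creator": 10, "explorer": 40, "viewer": 150}
license_cost = tableau_annual_license(seats)

# ASSUMPTIONS (not from the article): loaded FTE cost, add-on price,
# and half an FTE spent on governance upkeep.
FTE_COST = 150_000
tableau_tco = annual_tco(license_cost, engineering_ftes=0.5,
                         fte_cost=FTE_COST, addons=10_000)
```

Under these assumptions licensing is well under half of the annual total. The same `annual_tco` function works for Looker once a quoted contract figure is available: pass that figure as `license_cost` and set `engineering_ftes` to the one to two FTEs described above.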

Total Cost of Ownership by Organization Scale

| Organization Size | Likely TCO Leader | Key Determining Variable |
| --- | --- | --- |
| Under 50 users | Tableau | Per-user licensing predictable; minimal analytics engineering overhead |
| 50–500 users | Context-dependent | Governance requirements and available technical resources determine the answer |
| 500+ users (strong data engineering team) | Looker | Metric consistency and governance automation reduce long-run operational cost |
| 500+ users (limited data engineering capacity) | Tableau | Looker’s analytics engineering requirements create ongoing FTE cost |
| Microsoft-native infrastructure (any size) | Power BI | Azure/M365 ecosystem integration and licensing bundling create a significant TCO advantage |

Organizations that overspend on BI tools are rarely the ones that chose the wrong license tier. They’re the ones that didn’t account for implementation complexity, training time, and the ongoing engineering support required to keep the platform producing value.

The Analytics Maturity Framework: The Question Every BI Comparison Skips

Most organizations don’t fail at business intelligence because they chose the wrong feature set. They fail because they chose a tool mismatched to their current analytics maturity level.

There are four recognizable stages of BI and analytics maturity. Each maps to a specific tool recommendation — not based on ambition, but on where the organization actually is today.

| Maturity Stage | Organizational Characteristics | Best Fit Tool | Why This Tool Fits |
| --- | --- | --- | --- |
| Stage 1: Reporting | Spreadsheets dominant, static reports, ad hoc data requests | Tableau | Fast time to value; no analytics engineering overhead; designed for business-user access |
| Stage 2: Analytics | Dashboards exist; metric inconsistency emerging; governance gaps becoming visible | Tableau | Governance issues are still manageable through process; Looker’s build overhead not yet justified |
| Stage 3: Governed Analytics | Single source of truth is a strategic priority; compliance requirements real; multiple teams need identical metric definitions | Looker | LookML enforces data consistency architecturally; governance is structural, not a process burden |
| Stage 4: Predictive and Embedded | BI embedded in customer-facing products; ML integration required; API-first architecture a hard requirement | Looker | API-first design, in-database model, and ML platform integration are built into the architecture |

The most common mismatch is a Stage 1 or Stage 2 organization buying Looker because it signals organizational sophistication. Looker at Stage 1 is expensive infrastructure for problems the organization doesn’t have yet.

The second most common mismatch runs in the opposite direction: a Stage 3 or Stage 4 organization still running on ad hoc Tableau workbooks with no governance model. The tool handles it technically. But governance debt compounds every month.

“Deploying a Stage 4 enterprise analytics tool into a Stage 1 organization doesn’t accelerate maturity. It creates an expensive deployment that nobody knows how to maintain.”

Data literacy across the organization shapes which stage a team is actually at — independently of what leadership believes on paper. An honest read of where users actually are matters more than where the roadmap says they’ll be.

Who Should Use Looker vs Tableau: Role-by-Role Breakdown

The maturity framework answers the organizational question. But individual roles inside a single enterprise often pull in different directions — and that internal tension is real in most large-organization BI evaluations.

Every enterprise BI selection surfaces some version of the same conflict: the data engineering team wants one platform, the finance team wants another, and the marketing team has already been using something else for six months.

| Role / Persona | Recommended Platform | Primary Reason |
| --- | --- | --- |
| Data / Analytics Engineer | Looker | LookML maps to existing SQL and data modeling workflows; Git version control built in |
| Business Analyst / Functional Lead | Tableau | Self-service dashboard authoring without SQL or semantic modeling knowledge |
| CFO / Finance Team | Looker | Metric consistency and full auditability by design; one definition of revenue across every report |
| Marketing / Growth Teams | Tableau (or Looker Studio) | Fast iteration, visual storytelling, ad platform connectors; Tableau Pulse for automated anomaly alerting |
| Product / Engineering (Embedded Analytics) | Looker | API-first architecture makes embedding BI in customer-facing products significantly cleaner |
| Operations / Supply Chain Leaders | Context-dependent | Depends on data source complexity and real-time requirements |
| Executive / C-Suite Dashboard Consumer | Either | Both offer executive-layer dashboards; outcome depends on what the data team can sustainably maintain |

The split that appears most often in large enterprises: engineers advocate for Looker, business analysts push for Tableau, and the organization ends up running both simultaneously. That isn’t necessarily wrong — but it requires clear architectural ownership, a deliberate API integration model, and an explicit governance framework covering both. Without those, two tools become two separate data silos with the same metric inconsistency problem the organization was trying to solve.

Looker vs Tableau by Industry

1. Financial Services and Banking

Regulatory reporting and auditability requirements favor Looker’s LookML governance architecture. Consistent, traceable metric definitions matter at the compliance level — not just the analytics level. When “net revenue” means something specific to regulators, it needs to mean the same thing in every dashboard, every quarter.

Kanerika deployed Tableau for integrated financial analytics at a financial services client — reducing reporting time by 55%, improving data accuracy by 40%, and automating compliance reporting that saved 200+ hours monthly.

2. Retail and Consumer Packaged Goods

Fast-moving merchandising decisions require rapid visual exploration and ad hoc analysis across high-SKU inventory environments. Tableau’s flexible extract model handles sales and inventory data well, and Tableau Pulse’s anomaly alerting is a natural fit for retail operations teams.

Kanerika’s AI-powered Tableau solution for a leading retailer delivered a 45% improvement in inventory management efficiency, 35% better sales forecast accuracy, and real-time decision-making across 50+ retail locations.

3. Healthcare and Life Sciences

HIPAA compliance and data privacy requirements favor Looker’s controlled, auditable access model. But clinical and operational staff who aren’t data analysts need dashboards they can actually use — which is where Tableau’s accessibility matters at the point of care.

In practice, many healthcare organizations use Looker as the governance and semantic layer while surfacing data through more accessible front-end tooling for frontline staff.

4. Operations and Supply Chain

Multi-source data complexity across SAP, Salesforce, ERP systems, and operational platforms demands a consolidation-first approach before tool selection. For a large enterprise client, Kanerika consolidated 200+ fragmented reports across supply chain and operations into a unified BI platform — with revenue improvements of 2x to 3x post-implementation.

| Industry | Dominant BI Requirement | Natural Platform Fit | Key Consideration |
| --- | --- | --- | --- |
| Financial Services / Banking | Auditability, compliance, metric traceability | Looker | Regulatory requirements favor centralized semantic control through LookML |
| Retail / Consumer Packaged Goods | Speed, visual flexibility, merchandising analysis | Tableau | Fast iteration and ad hoc visual exploration drive business decisions |
| Healthcare / Life Sciences | Access control, HIPAA compliance, operational usability | Looker (governance) + Tableau (access layer) | Clinical staff need usable dashboards; governance must be structural |
| Operations / Supply Chain | Multi-source consolidation, real-time operational visibility | Context-dependent | Data architecture complexity should precede tool selection |
| Technology / SaaS | Embedded analytics, product usage data, developer-first workflows | Looker | API-first architecture fits product engineering team requirements |
| Mid-Market (Microsoft-native stack) | Cost efficiency, integration, speed to initial value | Power BI | Azure/M365 ecosystem makes Power BI the clear TCO winner in this segment |

Data Source Connectivity

Data source connectivity is among the most frequently asked questions in any BI platform evaluation — and the answer matters differently depending on how heterogeneous the underlying data environment is.

Tableau’s connectivity model is breadth-first. Looker’s is depth-first. Tableau connects to a wider range of source types across cloud warehouses, SaaS platforms, flat files, and on-premises databases. Looker’s connections to SQL-compatible cloud data warehouses are significantly deeper and more tightly optimized — particularly the native BigQuery integration.

| Data Source Category | Tableau | Looker |
| --- | --- | --- |
| Cloud Data Warehouses | BigQuery, Snowflake, Redshift, Databricks, Azure Synapse | BigQuery (first-class, native), Snowflake, Redshift, Databricks, Trino |
| Relational Databases | SQL Server, PostgreSQL, MySQL, Oracle, SAP HANA | PostgreSQL, MySQL, SQL Server, and all SQL-compatible sources |
| SaaS and CRM Platforms | Salesforce, Google Analytics, Marketo, HubSpot (native connectors) | Limited native SaaS connectors (requires upstream data transformation into warehouse) |
| Flat Files and Spreadsheets | Excel, CSV, Google Sheets (native, no engineering required) | Not natively supported (requires warehouse ingestion first) |
| REST APIs | Via Web Data Connector or third-party ETL tools | No direct API connectivity (warehouse-mediated only) |
| On-Premises Databases | Strong support via Tableau Bridge agent | Limited (cloud-native SaaS architecture prioritized) |

The practical implication: organizations with heterogeneous data environments will find Tableau’s connector breadth a meaningful advantage. Organizations running predominantly on BigQuery or Snowflake lose very little from Looker’s narrower model, and gain from the depth of optimization in those native connections.

Implementation: Picking the Tool Is the Easy Part

Both Looker and Tableau underperform when implementation is weak. The tool doesn’t deliver outcomes. The implementation does.

The most common failure modes aren’t surprising in hindsight. Tableau deployed without a dashboard governance model produces hundreds of workbooks with no standards and metric sprawl within 12 months. Looker deployed without analytics engineering capacity produces LookML data models that are never completed, or quietly abandoned after six months.

Learning Curve: Honest Time-to-Proficiency Estimates

| User Type | Tableau | Looker |
| --- | --- | --- |
| Business analyst (basic dashboards, filters, standard charts) | 2–4 weeks | 4–6 weeks via Explore, with pre-built LookML models |
| Analytics engineer / LookML data model developer | Limited applicability | 4–8 weeks to productive output; 3–6 months to production-ready semantic layer |
| Tableau Server / Looker admin | 6–12 months to full proficiency | 3–6 months (SaaS-managed reduces infrastructure admin burden) |
| Executive or C-suite dashboard consumer | Days | Days (comparable experience for consumers) |

The most consequential number in that table is the analytics engineer timeline for Looker: four to eight weeks to productive output, but three to six months to build a production-ready semantic layer from scratch. Organizations that underestimate this are the ones that end up with an expensive deployment and six months of delayed value before anyone in the business sees a useful dashboard.

Change management is consistently underbudgeted in BI implementations. The technical deployment is rarely the hardest part. Getting business users to trust and adopt new dashboards — and abandon the spreadsheets they’ve relied on for years — takes deliberate, sustained organizational effort.

What a BI Platform Migration Actually Costs

Migrating from Tableau to Looker — or vice versa — means rebuilding every dashboard from scratch. LookML data models don’t translate to Tableau workbooks, and the reverse is equally true. Switching enterprise BI platforms mid-journey is a 6–18 month project in a large organization.

Most organizations that end up in this situation share one characteristic: they chose based on a demo, not a data strategy assessment.

Shadow IT is a related risk during BI transitions. When a mandated platform doesn’t meet user needs, teams build workarounds — separate workbooks, Google Sheets dashboards, PowerPoint decks with embedded charts. Those workarounds become the real reporting layer while the official platform sits underused. Choosing the right tool in the first place is the most effective way to prevent shadow BI from emerging.

What Good Enterprise BI Implementation Looks Like

A sound implementation starts with a data architecture and governance review before tool selection — not after. It includes semantic layer design: LookML modeling for Looker, or a structured governance model for Tableau. User adoption and training are planned as part of the implementation scope, not added as afterthoughts once dashboards are built. A dashboard ownership model is established from day one: who creates, who maintains, what gets deprecated, and how metric definitions are managed across teams.


Why Tool-Agnostic BI Implementation Changes the Outcome

Kanerika is certified across Tableau, Looker, and Power BI — which means no vendor affiliation shapes the recommendation a client receives. A partner with a single-vendor certification has a structural incentive to recommend that vendor regardless of organizational fit. A tool-agnostic partner starts with a different question: what does this organization actually need from its business intelligence infrastructure?

The evaluation practice starts with a data strategy assessment: current analytics maturity level, governance requirements today and in 18 months, technical resources available on an ongoing basis rather than just at go-live, and what the underlying data architecture actually looks like in practice.

Outcomes across Kanerika BI implementations reflect that approach:

- 70% reduction in time to data insights — U.S. education company, Power BI and Tableau

- 55% reduction in financial reporting time — financial services client, Tableau

- 45% improvement in inventory management efficiency — retail client, AI-powered Tableau

- 65% reduction in report generation time — education sector, automated pipelines and BI dashboards

- 200+ hours/month saved on compliance reporting automation — financial services, Tableau

The right enterprise BI tool for one client is frequently the wrong tool for another. That’s a reality no single-vendor partner will share with a prospect.

Custom AI agents layered on top of governed BI infrastructure are where several of Kanerika’s most sophisticated client deployments are heading — using LookML or Tableau’s semantic layer as the trusted data foundation that AI agents query rather than building separate data access models for each AI capability.

Kanerika’s recognition as a Microsoft Solutions Partner for Data and AI reflects the cross-platform evaluation discipline that multi-vendor certification enables. Karl, Kanerika’s AI data insights agent, is built on top of this governed data infrastructure — illustrating how a clean BI foundation enables the next layer of AI capability.

Five Questions That Actually Decide the Choice

No framework replaces a proper assessment. But five questions surface the answer in the majority of enterprise BI platform evaluations. Work through them based on where the organization is today — not where leadership intends it to be in two years.

| Evaluation Question | Signals Toward Looker | Signals Toward Tableau |
| --- | --- | --- |
| Is the primary user base technical or business-led? | Data engineers and analytics engineers dominate | Business analysts and functional team leads dominate |
| How critical is metric consistency across departments? | Non-negotiable: finance, compliance, or regulated reporting | Important but manageable through process and workbook standards |
| Which cloud ecosystem is already committed? | Deep GCP investment, BigQuery as primary data warehouse | Salesforce CRM as operational core, multi-cloud, or no dominant cloud |
| Is embedded analytics in customer-facing products required? | Yes: developer-first, API-driven embedding is a hard requirement | No: internal business intelligence dashboards are the primary use case |
| What is the realistic implementation timeline and capacity? | 3–6 months, dedicated analytics engineering available and budgeted | 4–8 weeks, current team capacity without adding engineering headcount |

If multiple answers pull in opposite directions, that tension isn’t a framework problem — it’s a signal that the organization has genuine competing needs across roles or departments. That ambiguity is exactly where an experienced, tool-agnostic implementation partner adds the most value, because a vendor will resolve it by recommending their own product regardless.
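One way to work through the five questions is to record which platform each answer signals and see where the answers disagree. The sketch below is only a tallying aid, not a scoring model from this article. The question keys and the `tally_signals` function are hypothetical names, and every question is weighted equally, which is itself an assumption.

```python
from collections import Counter

# Short keys for the five evaluation questions (names are illustrative).
QUESTIONS = (
    "primary_user_base",
    "metric_consistency",
    "cloud_ecosystem",
    "embedded_analytics",
    "timeline_and_capacity",
)


def tally_signals(answers: dict[str, str]) -> dict:
    """Count signals per platform and list the questions that dissent."""
    for question in QUESTIONS:
        if answers.get(question) not in ("looker", "tableau"):
            raise ValueError(f"answer for {question!r} must be 'looker' or 'tableau'")
    counts = Counter(answers[q] for q in QUESTIONS)
    # Five two-way answers can never tie, so a simple comparison is enough.
    leading = "looker" if counts["looker"] > counts["tableau"] else "tableau"
    dissenting = [q for q in QUESTIONS if answers[q] != leading]
    return {
        "leading": leading,
        "counts": {"looker": counts["looker"], "tableau": counts["tableau"]},
        "dissenting": dissenting,
    }


# Example: a business-led team with strict finance reporting needs.
example = tally_signals({
    "primary_user_base": "tableau",
    "metric_consistency": "looker",
    "cloud_ecosystem": "tableau",
    "embedded_analytics": "looker",
    "timeline_and_capacity": "tableau",
})
print(example)
```

The `dissenting` list is the useful part. A 3–2 split with metric consistency and embedded analytics on the minority side is the kind of tension described above, and it deserves a proper assessment rather than a majority vote.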

How to Evaluate Before Committing

Both platforms offer legitimate entry points for hands-on evaluation before a full commitment.

Tableau Public is a free version that allows full dashboard authoring and publishing, with the limitation that published workbooks and their underlying data are publicly accessible, which rules it out for confidential data. Tableau also offers a 14-day free trial of Tableau Cloud, providing access to the full product including sharing and collaboration capabilities. Most buyers can reach a meaningful proof-of-concept evaluation within two to four weeks.

Looker has no standard free tier or self-serve trial for the Enterprise product. Google Cloud offers a sandbox environment through its console, and Google Cloud partners can facilitate structured proof-of-concept engagements with a defined scope. Buyers evaluating Looker should plan for a formal proof-of-concept with a qualified partner — the LookML semantic layer requires configuration and initial data model build before meaningful evaluation is possible.

This asymmetry shapes enterprise evaluations in a predictable way. Tableau’s lower evaluation barrier means most enterprise buyers have hands-on experience with it before the formal selection process starts. Looker evaluations require more upfront investment in the evaluation itself. That often explains why Tableau enters evaluations with built-in familiarity — not because it’s the better tool in every context, but because it was easier to try first.


Looker vs Tableau vs Power BI: When the Third Option Actually Wins

Most Looker vs Tableau comparisons treat Power BI as an afterthought. That’s a mistake. For a significant segment of enterprise buyers, Power BI is the right answer, and knowing when that is the case changes the entire decision frame.

Power BI is the cost leader at $10–20/user/month — significantly below both Tableau and Looker on per-seat pricing. For organizations already running on Microsoft Azure, Microsoft 365, or Teams, the integration is native and the TCO advantage is substantial. Power BI’s Copilot features — natural language query, automated insight generation, and smart narrative summaries — are integrated directly into the Microsoft ecosystem that many enterprises already use daily.

Power BI trails Tableau on visualization flexibility and Looker on semantic layer rigor. But for mid-market organizations with Microsoft-native infrastructure, or enterprises where BI licensing cost is the overriding constraint, it frequently wins on total cost of ownership and speed to initial deployment.

Power BI integrates cleanly with Azure-hosted private cloud environments. Looker is GCP-native. Tableau’s multi-cloud flexibility makes it the most infrastructure-agnostic of the three — a genuine advantage for organizations without a committed cloud provider or running across multiple cloud environments.

Conclusion

Looker and Tableau are both genuinely excellent enterprise analytics platforms. The peer review ratings are real. The companies that struggle with business intelligence aren’t usually using bad tools.

They’re using the right tool at the wrong time, or the right tool with the wrong implementation behind it.

Looker wins when data governance is non-negotiable, when a dedicated analytics engineering team exists, when the organization is built on GCP and BigQuery, and when embedded analytics in customer-facing products is a real requirement. Tableau wins when business-user autonomy matters, when speed of initial deployment is a priority, and when the team doesn’t have the technical depth to sustain a LookML-based semantic layer long-term. Power BI wins when Microsoft ecosystem integration and licensing cost are the overriding constraints.

The enterprise BI decision shouldn’t start with a product. It should start with an honest assessment of where the organization actually is — not where leadership hopes it will be in two years.

FAQs

Is Looker the same as Looker Studio?

No. Looker is a full enterprise BI platform that Google acquired for $2.6 billion, powered by a proprietary LookML semantic modeling layer. Looker Studio, formerly Google Data Studio, is a separate free data visualization tool designed for marketers and lightweight reporting. They share a name but serve entirely different audiences.

Can you self-host Looker Enterprise?

No. The current version of Looker is a fully cloud-hosted SaaS product running on Google Cloud Platform. The legacy self-hosted on-premises deployment option has been deprecated. Organizations with strict data residency requirements or compliance constraints that prevent cloud-hosted SaaS should factor this in at the start of evaluation — it is a hard architectural constraint, not a configuration option.

Is Tableau easier to learn than Looker for business users?

Yes, significantly easier for most business users without SQL or data modeling backgrounds. Tableau’s drag-and-drop interface is designed for analysts who don’t write code. Looker requires familiarity with LookML, a proprietary semantic modeling language, which makes it better suited to organizations with dedicated analytics engineering resources. Most business users reach productive Tableau proficiency in two to four weeks. Analytics engineers typically need at least four to eight weeks to become proficient in LookML, and three to six months to build a production-ready semantic layer.

Why does Looker cost more than Tableau?

Looker’s pricing reflects its enterprise governance architecture and API-first design. But per-user licensing isn’t the most useful comparison point. Tableau’s costs scale quickly at volume, while Looker requires significant upfront analytics engineering investment regardless of user count. Total cost of ownership, which accounts for implementation timeline, analytics engineering FTEs, and ongoing maintenance, is a more accurate basis for comparison than published per-seat pricing.
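The arithmetic behind that point can be sketched in a few lines. Every figure below is a placeholder chosen for illustration, not a published price for Looker, Tableau, or any other product. The function name and parameters are likewise assumptions.

```python
def total_cost_of_ownership(
    seats: int,
    price_per_seat_month: float,
    engineering_ftes: float,
    cost_per_fte_year: float,
    implementation_cost: float,
    years: int = 3,
) -> float:
    """Sum licensing, engineering staffing, and one-time implementation cost."""
    licensing = seats * price_per_seat_month * 12 * years
    engineering = engineering_ftes * cost_per_fte_year * years
    return licensing + engineering + implementation_cost


# Placeholder scenario A: higher per-seat price, light engineering support.
platform_a = total_cost_of_ownership(
    seats=200, price_per_seat_month=75, engineering_ftes=0.5,
    cost_per_fte_year=150_000, implementation_cost=40_000,
)

# Placeholder scenario B: lower per-seat price, dedicated engineering team.
platform_b = total_cost_of_ownership(
    seats=200, price_per_seat_month=60, engineering_ftes=2,
    cost_per_fte_year=150_000, implementation_cost=120_000,
)

print(f"Platform A, 3-year TCO: {platform_a:,.0f}")
print(f"Platform B, 3-year TCO: {platform_b:,.0f}")
```

With these placeholder inputs, the platform with the cheaper seat ends up costing more over three years because staffing dominates licensing. Swapping in real quotes and salary figures is the only way to know which direction the comparison runs for a given organization.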

Can Looker and Tableau be used together in one organization?

Yes, and it’s more common in large enterprises than most BI comparisons acknowledge. A typical pattern: Looker manages the data governance and semantic layer, while Tableau serves front-line teams that need flexible visual exploration and self-service authoring. The approach works well but requires clear architectural ownership, explicit data governance standards, and coordination between the engineering and business analytics teams maintaining each platform.

How long does enterprise BI implementation take for each platform?

A basic Tableau deployment with training typically takes four to eight weeks from kickoff to initial dashboard delivery. Looker implementations, due to LookML semantic modeling requirements, typically take three to six months before meaningful dashboard coverage is achieved across an organization. Both timelines depend significantly on the complexity of the underlying data environment and the governance model being established.