When working with data, mistakes, unexpected changes, and “what was it like before?” questions are pretty common. That’s where Microsoft Fabric’s time travel feature steps in. Think of it like a rewind button for your data tables.

Fabric Warehouses support time travel as well; in a Lakehouse, the same idea gives you a flexible way to look back at how data used to be. With built-in support from Spark, you can access earlier snapshots of a table without maintaining backups or manual versioning.

Need to recover from a wrong update? Want to audit historical values? Or compare a report from last week with today’s numbers? Instead of rebuilding or reloading data, you just add a timestamp or version number to your query, and that’s it.

This post walks through the feature step by step. Used well, time travel saves time, reduces human error, and simplifies debugging, and it lets data teams answer time-based questions with far less effort.

Setting Up Microsoft Fabric for Time Travel

1. Accessing Fabric Workspace

To begin, head over to app.powerbi.com. From there:

- Go to Workspaces.

- Choose your workspace (e.g., GA10 Fabric Workspace).

- Filter the content type to Lakehouses.

- Open your target Lakehouse (e.g., Lake 02).

2. Exploring the Target Table

- Use the Lakehouse Explorer to view tables.

- The table used for this example is Sales_Delta.

- Right-click the table and select “View files”.

- You’ll notice multiple files, written by the successive updates that created each version of the table.

Steps to Check the Latest Data Snapshot

Before you start exploring older versions of your data, it’s important to first understand what the current state looks like. This gives you a point of reference. You’ll use this snapshot later when comparing results from different timestamps or versions.

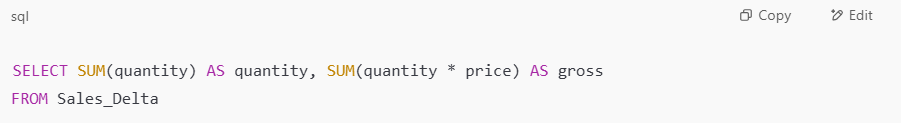

1. Query via SQL Analytics Endpoint

To check the current totals in the Sales_Delta table, go to the SQL Analytics endpoint and run a simple aggregation query like this:
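A minimal sketch of such an aggregation, assuming the columns are named `quantity` and `price` (the calculation is described in this post, but the column names in your copy of Sales_Delta may differ). The statement is held in a Python string so the same text can be pasted into the SQL analytics endpoint or reused from a notebook; the helper name `baseline_totals_query` is hypothetical.

```python
# Assumed column names: quantity, price. Adjust them to match your table.
def baseline_totals_query(table: str = "Sales_Delta") -> str:
    """Build the aggregation that returns total quantity and gross value."""
    return (
        "SELECT SUM(quantity) AS total_quantity, "
        "SUM(quantity * price) AS gross_value "
        f"FROM {table}"
    )

# Print the statement so it can be pasted into the SQL analytics endpoint.
print(baseline_totals_query())
```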

This query returns two key values:

- Total quantity of all items in the table.

- Gross value, calculated by multiplying quantity by price.

These figures help confirm the current data in its most up-to-date form, essentially your “version now” numbers. You’ll be using these same fields in later queries, so knowing their baseline values up front helps make sure time travel queries are working as expected.

2. Referencing GitHub Sales Data

If you’re following along and want to run the same tests, the exact Sales_Delta dataset used in this example is available on GitHub. You can download it and load it into your own Lakehouse or Warehouse environment, so your results will match what’s shown in this blog.

Steps to Run First PySpark Command for Microsoft Fabric Time Travel

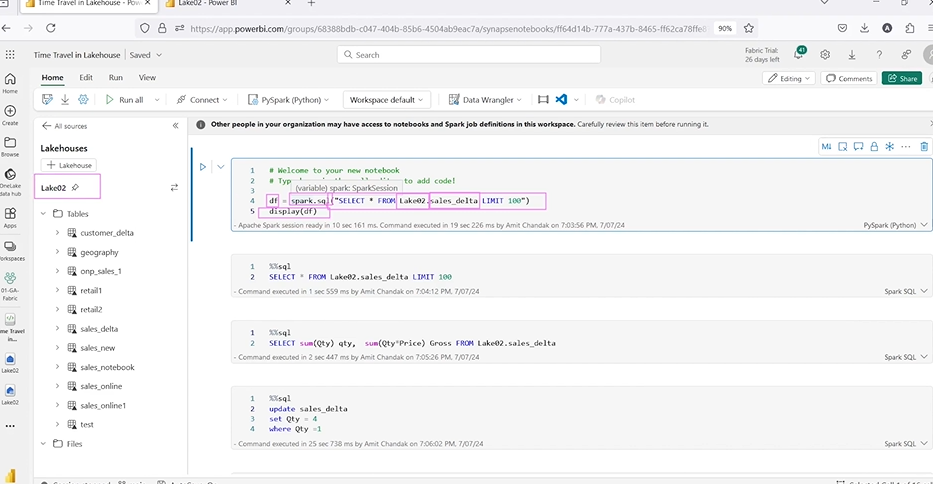

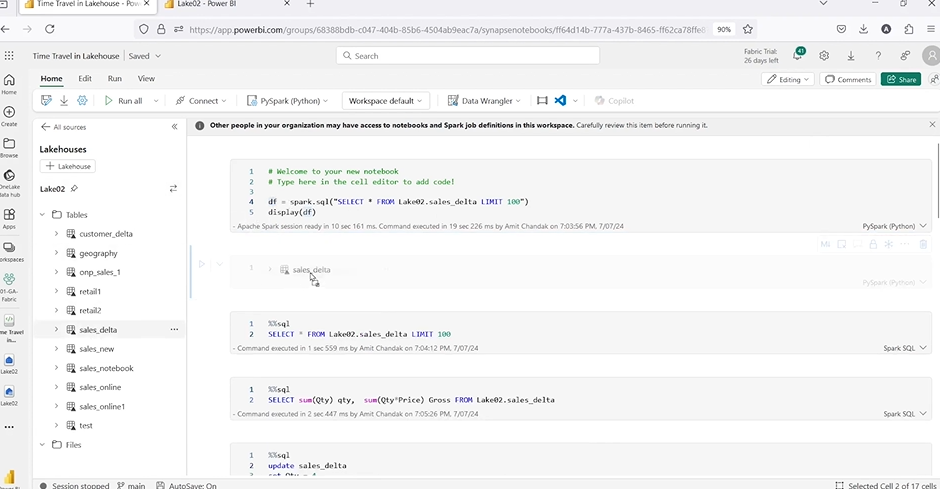

Once you’ve seen your current data snapshot, the next step is to work within a notebook to start running time travel queries. This part happens using Spark, which powers the ability to query different versions of your table.

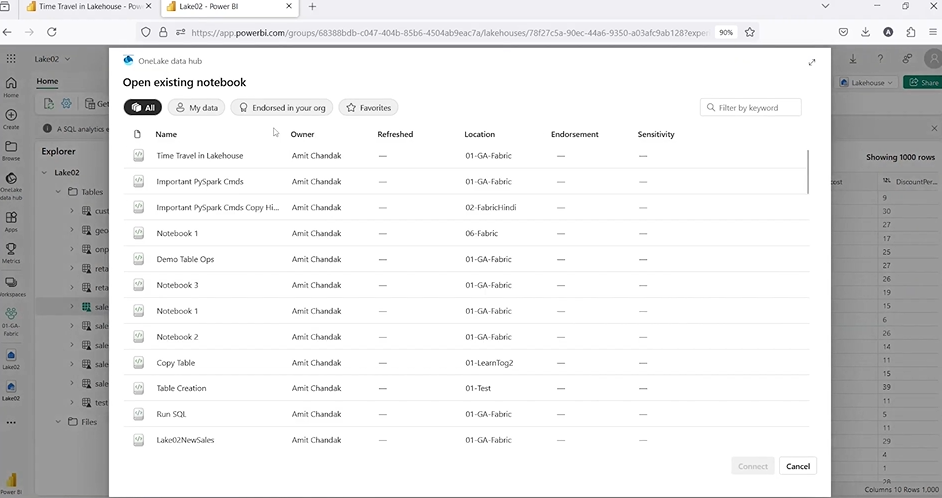

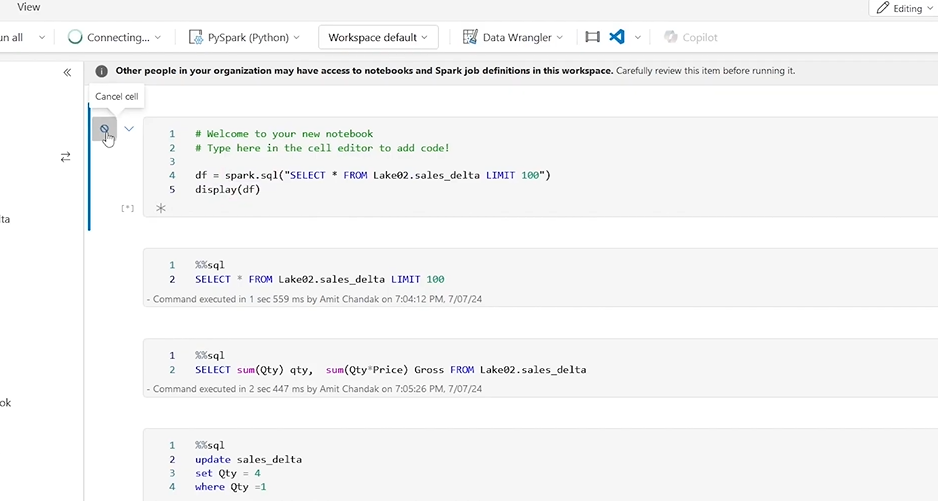

1. Start the Spark Session

To begin, open or create a new notebook in Microsoft Fabric. This notebook is where you’ll run PySpark or Spark SQL commands that access historical versions of your table.

Here’s what to do:

- Open a notebook: Either create a new one or reuse one you’ve already worked with.

- Connect it to your Lakehouse: Make sure the notebook is pointing to the same Lakehouse (e.g., Lake 02) that contains your Sales_Delta table.

- Drag in the table: When you drag the Sales_Delta table into the notebook, Fabric auto-generates starter code that references the table in your PySpark session.

- Start the Spark session: Running any command will trigger the Spark engine to start. This lets you run distributed queries and access features like time travel.

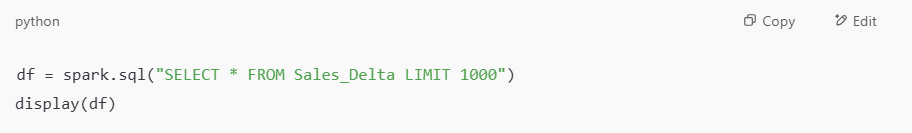

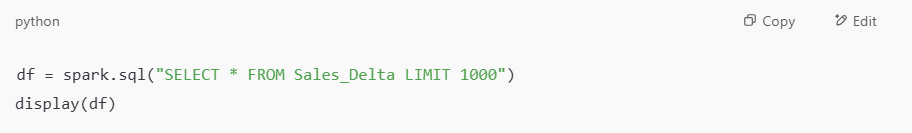

Example code to run:
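The generated cell usually resembles the sketch below. The exact text Fabric produces may differ; the helper name `preview_table` is hypothetical, and `spark` is the session that a Fabric notebook provides.

```python
def preview_table(spark, table: str = "Sales_Delta", limit: int = 1000):
    """Return a DataFrame holding up to `limit` rows of the table."""
    return spark.sql(f"SELECT * FROM {table} LIMIT {limit}")

# In a Fabric notebook cell, `spark` is already defined:
# display(preview_table(spark))
```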

This will return a sample of 1,000 rows from your table. It confirms that the table is accessible and lets you check the structure and sample values before running deeper queries.

2. Switch Between PySpark and SQL

Not everyone prefers PySpark, and that’s okay. You can use SQL-style queries even inside your notebook.

- Just add %%sql at the top of a cell to switch it to SQL mode.

- This is useful if you’re more comfortable writing SQL than Python code.

- It also helps when you want to copy-paste commands like DESCRIBE HISTORY or RESTORE TABLE, which are easier in SQL syntax.

This flexibility means you can run the same logic using the language you’re most comfortable with, and switch back and forth whenever needed.

How to Look at Table History in Microsoft Fabric for Time Travel

Before you work with past data, it helps to see which versions exist. Microsoft Fabric tracks each change to your Delta table and logs it as a version, recording the operation type (such as UPDATE or RESTORE), the timestamp, and the user.

Use DESCRIBE HISTORY

To view this log of past changes, run the following SQL command:
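A sketch of that command wrapped for a notebook cell. In a `%%sql` cell only the statement itself is needed; the `show_history` helper is a hypothetical convenience that keeps the columns discussed here (`version`, `timestamp`, `userName`, and `operation` are standard columns of Delta's history output).

```python
HISTORY_SQL = "DESCRIBE HISTORY Sales_Delta"

def show_history(spark, columns=("version", "timestamp", "userName", "operation")):
    """Run DESCRIBE HISTORY and keep only the selected columns."""
    return spark.sql(HISTORY_SQL).select(*columns)

# In a Fabric notebook:
# display(show_history(spark))
```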

This shows a full history of your table, including:

- Version number

- Timestamp of the change

- User who made the change

- Type of operation (UPDATE, RESTORE, etc.)

You’ll refer to this table often, especially when you want to query older states of the data or restore a specific version.

Performing Updates & Triggering New Versions

Now that you’ve seen your table’s version history, let’s create a new version on purpose, by updating some data.

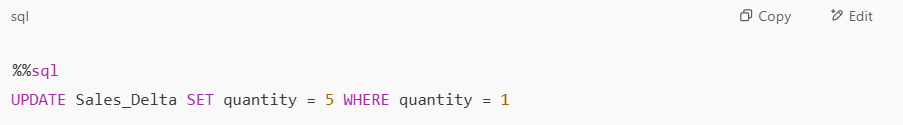

1. Update Records Conditionally

You can simulate a data update by changing values in a controlled way:
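A minimal sketch of such an update, assuming the column is named `quantity`. The helper name is hypothetical. Because the Lakehouse SQL analytics endpoint is read-only, the statement would be run from the notebook, either in a `%%sql` cell or through `spark.sql`.

```python
def update_quantity_sql(table: str = "Sales_Delta", old_qty: int = 1, new_qty: int = 5) -> str:
    """Build an UPDATE that rewrites one quantity value to another."""
    # int() guards against accidentally interpolating non-numeric input.
    return f"UPDATE {table} SET quantity = {int(new_qty)} WHERE quantity = {int(old_qty)}"

print(update_quantity_sql())
# In a Fabric notebook: spark.sql(update_quantity_sql())
```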

This command updates all rows where quantity equals 1, changing them to 5. Once you do this, Fabric automatically logs this as a new version (e.g., version 3).

This is how Fabric tracks every change you make; no manual version control is needed.

2. Re-check History

After the update, run the history command again:
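The statement is the same `DESCRIBE HISTORY` call on Sales_Delta. To confirm the new version without scanning the output by eye, the history rows can be reduced to the most recent entry. The sketch below uses plain dictionaries in place of collected Spark rows, and the sample values are made up for illustration.

```python
def latest_entry(history_rows):
    """Return the history entry with the highest version number."""
    return max(history_rows, key=lambda row: row["version"])

# Illustrative rows only. In a notebook they could be built with:
# [r.asDict() for r in spark.sql("DESCRIBE HISTORY Sales_Delta").collect()]
sample = [
    {"version": 2, "operation": "WRITE"},
    {"version": 3, "operation": "UPDATE"},
]
print(latest_entry(sample)["operation"])  # prints UPDATE
```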

You should see a new row at the top of the output with the latest version. This confirms the update was logged and is now part of your table’s change history.

How Time Travel Works Under the Hood

To make time travel possible, Microsoft Fabric uses Delta Lake under the surface, an open-source storage layer built on top of Parquet files. Every time you update, insert, delete, or restore data, Fabric doesn’t overwrite anything. Instead, it:

- Writes a new version of the data behind the scenes.

- Adds metadata and logs the change in a transaction log (stored as JSON files).

- Preserves earlier versions (until their files are cleaned up, for example by a VACUUM operation), so they can be accessed later using a timestamp or version number.

That’s why new data files appear in your Lakehouse Explorer after each update: they don’t replace the old ones but are added alongside them as part of the version history.

This setup gives you:

- Zero manual backups – versioning happens automatically.

- Consistent reads – even if data is being updated, you can query a clean snapshot from the past.

- Efficient rollbacks – just one line of SQL to undo major changes.

So, when you run a TIMESTAMP AS OF or VERSION AS OF query, Fabric knows exactly where to look in the log and which data files to pull together, giving you a full view of what things looked like at that point in time.
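The idea can be illustrated with a toy model. This is a simplified sketch, not Delta Lake's actual implementation: it replays only the `add` and `remove` actions found in the JSON commit files and ignores checkpoints, metadata, and protocol entries. The file names are made up.

```python
import json

def active_files(commits, version):
    """Replay add/remove actions from commits 0..version and return the live files."""
    files = set()
    for commit in commits[: version + 1]:
        for line in commit.splitlines():
            action = json.loads(line)
            if "add" in action:
                files.add(action["add"]["path"])
            elif "remove" in action:
                files.discard(action["remove"]["path"])
    return sorted(files)

# Each string stands in for one JSON commit file in the _delta_log folder.
commits = [
    '{"add": {"path": "part-000.parquet"}}',
    '{"remove": {"path": "part-000.parquet"}}\n{"add": {"path": "part-001.parquet"}}',
]
print(active_files(commits, 0))  # ['part-000.parquet']
print(active_files(commits, 1))  # ['part-001.parquet']
```

Reading version 0 still resolves to `part-000.parquet` even though version 1 logically removed it, which is why the old file has to remain on disk for time travel to work.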

Querying Historical Data by Timestamp

Let’s say you want to look at what the table looked like before that last update. Instead of manually restoring anything, you can query the past using a timestamp.

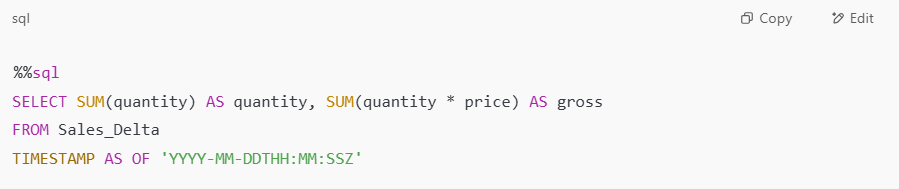

Use TIMESTAMP AS OF
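A minimal sketch in Spark SQL, built from Python so it can be run with `spark.sql` or pasted into a `%%sql` cell. The column names `quantity` and `price` are assumptions, the helper name is hypothetical, and the timestamp shown is a placeholder to be replaced with a value from your own history output.

```python
from datetime import datetime

def totals_as_of(table: str, timestamp: str) -> str:
    """Build a query that aggregates the table as it existed at `timestamp`."""
    datetime.fromisoformat(timestamp)  # raises ValueError if the text is malformed
    return (
        "SELECT SUM(quantity) AS total_quantity, "
        "SUM(quantity * price) AS gross_value "
        f"FROM {table} TIMESTAMP AS OF '{timestamp}'"
    )

# Placeholder timestamp; copy a real one from DESCRIBE HISTORY.
query = totals_as_of("Sales_Delta", "2024-01-01 00:00:00")
# In a Fabric notebook: spark.sql(query).show()
```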

This query returns the data as it existed at that specific moment in time. It’s useful for:

- Comparing values across points in time

- Debugging mistakes

- Auditing older states

Just copy the timestamp from your DESCRIBE HISTORY output and plug it in here.

Querying Data by Version Instead of Time

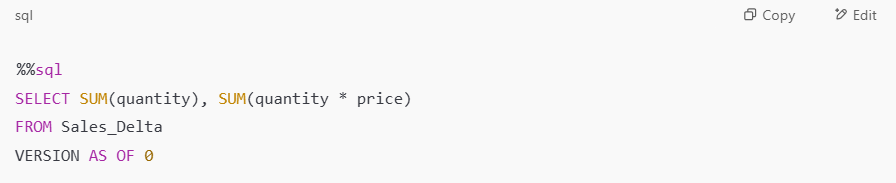

Timestamps can get tricky to manage. If you know the exact version you want, use version numbers instead.

Use VERSION AS OF
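A sketch of the version-based form, again as Spark SQL built from Python. The helper name is hypothetical, and the version number in the usage line is only an example; pick one that appears in your own history log.

```python
def snapshot_by_version(table: str, version: int) -> str:
    """Build a query that reads the table at a specific version number."""
    if version < 0:
        raise ValueError("version must be zero or greater")
    return f"SELECT * FROM {table} VERSION AS OF {int(version)}"

print(snapshot_by_version("Sales_Delta", 2))
# In a Fabric notebook: display(spark.sql(snapshot_by_version("Sales_Delta", 2)))
```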

This returns the same snapshot you would get from a timestamp that falls within that version, but it’s easier when you track version numbers directly from the history log.

How to Access Version History via PySpark

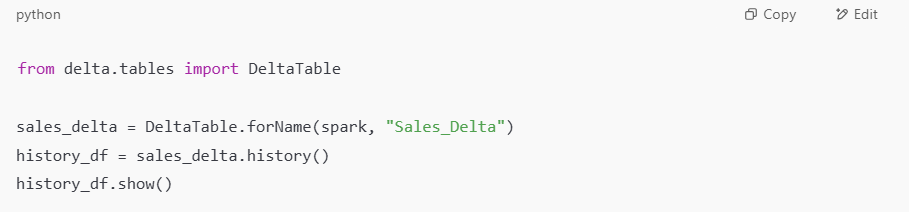

If you prefer working in PySpark or want to automate some of these steps in code, you can access all the same history and query features there too.

1. Get Version History Programmatically
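One way to do this is through the Delta Lake Python API. The sketch below assumes the `delta` package is available in the notebook's Spark runtime and that the table is registered as Sales_Delta; the wrapper name `table_history` is hypothetical.

```python
def table_history(spark, table: str = "Sales_Delta"):
    """Return the table's commit history as a Spark DataFrame."""
    # Imported inside the function so the module loads even where delta is absent.
    from delta.tables import DeltaTable
    return DeltaTable.forName(spark, table).history()

# In a Fabric notebook:
# display(table_history(spark))
```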

This returns the full version history of the table, just like the SQL DESCRIBE HISTORY command.

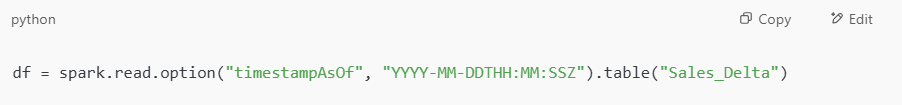

2. Read Data by Timestamp
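A minimal sketch using the Delta reader's `timestampAsOf` option. The relative path `Tables/Sales_Delta` assumes the Lakehouse is attached to the notebook as its default, and the helper name is hypothetical.

```python
def read_as_of_timestamp(spark, timestamp: str, path: str = "Tables/Sales_Delta"):
    """Load the Delta table at `path` as it existed at `timestamp`."""
    return (
        spark.read.format("delta")
        .option("timestampAsOf", timestamp)
        .load(path)
    )

# In a Fabric notebook, with a timestamp copied from the table history:
# df = read_as_of_timestamp(spark, "<timestamp from history>")
```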

Use this if you want to pull in data from a specific moment in time.

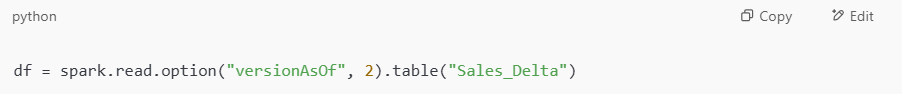

3. Read Data by Version
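The version-based read follows the same pattern with the `versionAsOf` option. As above, the path assumes a default Lakehouse is attached, and the helper name is hypothetical.

```python
def read_version(spark, version: int, path: str = "Tables/Sales_Delta"):
    """Load the Delta table at `path` as it was at the given version."""
    return spark.read.format("delta").option("versionAsOf", version).load(path)

# In a Fabric notebook:
# before = read_version(spark, 2)
# print(before.count())
```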

Use this if you know the version number and want the data from that snapshot.

Restoring Previous Versions in Microsoft Fabric

Time travel isn’t just about looking back; you can also roll back. If an earlier version of your data is the one you want, you can restore it as the new latest state.

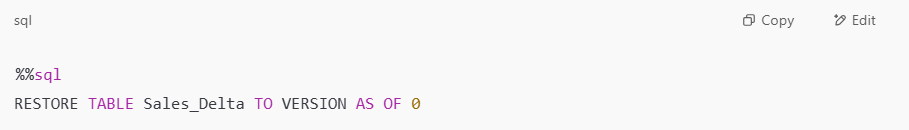

Use the Restore Command
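A sketch of the restore statement in Delta's Spark SQL syntax, built from Python. The helper name is hypothetical. Since a restore changes the table's current state, it is worth checking the target version against the history log before running it.

```python
def restore_sql(table: str = "Sales_Delta", version: int = 0) -> str:
    """Build a RESTORE statement that rolls the table back to `version`."""
    if version < 0:
        raise ValueError("version must be zero or greater")
    return f"RESTORE TABLE {table} TO VERSION AS OF {int(version)}"

print(restore_sql())
# In a Fabric notebook: spark.sql(restore_sql())
```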

This command brings your table back to version 0, and automatically logs a new version (e.g., version 4) showing the restore action.

There is no need to delete or manually overwrite anything. Just restore, and Fabric takes care of the rest.

Checking Delta Log and File Changes

Behind the scenes, every version and update creates new files in your Lakehouse.

Inspect File Changes

- Open the Lakehouse Explorer in Microsoft Fabric.

- Right-click on Sales_Delta, choose View files, then hit Refresh.

- You’ll see new .parquet data files and, in the table’s _delta_log folder, new .json log entries for each version.

This lets you visually confirm that changes are tracked on the file system too, not just through queries.

Kanerika: Your Expert Partner for Microsoft Fabric Success

Kanerika is a leading provider of data and AI solutions, specializing in maximizing the power of Microsoft Fabric for businesses. With our deep expertise, we help organizations seamlessly integrate Microsoft Fabric into their data workflows, enabling them to gain valuable insights, optimize operations, and make data-driven decisions faster.

As a certified Microsoft Data and AI solutions partner, Kanerika leverages the unified features of Microsoft Fabric to create tailored solutions that transform raw data into actionable business insights.

By adopting Microsoft Fabric early in the process, businesses across various industries have achieved real results. Kanerika’s hands-on experience with the platform has helped companies accelerate their digital transformation, boost efficiency, and uncover new opportunities for growth.

Partner with Kanerika today to elevate your data capabilities and take your analytics to the next level with Microsoft Fabric!

FAQs

Does Microsoft Fabric have time travel?

Microsoft Fabric supports time travel through Delta Lake tables in its Lakehouse architecture. This capability lets you query, restore, and audit historical data versions using timestamps or version numbers. Time travel in Fabric enables point-in-time recovery, compliance audits, and debugging data pipeline issues by accessing previous table states. The feature relies on Delta Lake’s transaction log, which tracks all changes to your data. Enterprises use Fabric time travel for regulatory compliance, ML model reproducibility, and rollback scenarios. Kanerika helps organizations implement robust time travel strategies in Microsoft Fabric—connect with us to optimize your Lakehouse data versioning.

What is Microsoft Fabric?

Microsoft Fabric is a unified analytics platform that integrates data engineering, data science, real-time analytics, and business intelligence into a single SaaS solution. Built on a Lakehouse architecture combining data lake storage with data warehouse capabilities, Fabric provides OneLake as its centralized data repository. The platform includes Data Factory for pipelines, Synapse Data Engineering, Power BI, and Real-Time Intelligence workloads. Fabric eliminates data silos by unifying compute and storage across your analytics stack. Kanerika’s Microsoft Fabric specialists accelerate enterprise adoption with proven migration frameworks—schedule a consultation to explore your modernization roadmap.

What is Microsoft Fabric used for?

Microsoft Fabric is used for end-to-end data analytics, spanning data integration, engineering, warehousing, real-time processing, and business intelligence. Organizations leverage Fabric to consolidate fragmented analytics tools into one unified platform, reducing complexity and licensing costs. Common use cases include building enterprise data lakehouses, creating real-time dashboards, running machine learning workloads, and enabling self-service analytics with Power BI. Fabric’s OneLake storage eliminates data duplication while supporting time travel for auditing and recovery. Kanerika delivers tailored Microsoft Fabric implementations across banking, healthcare, and manufacturing—reach out to discuss your specific analytics requirements.

Is Microsoft Fabric the same as Snowflake?

Microsoft Fabric and Snowflake are not the same, though both serve enterprise analytics needs. Snowflake is a cloud data warehouse focused on SQL-based analytics and data sharing, while Fabric is a comprehensive analytics platform covering data engineering, real-time analytics, BI, and data science. Fabric uses Delta Lake format with native time travel capabilities, whereas Snowflake offers its own Time Travel feature. Fabric integrates tightly with the Microsoft ecosystem including Power BI and Azure, making it ideal for Microsoft-centric organizations. Kanerika helps enterprises evaluate Fabric versus Snowflake based on workload requirements—request a comparative assessment today.

Is Microsoft Fabric the same as Databricks?

Microsoft Fabric and Databricks are distinct platforms with overlapping capabilities. Both support Delta Lake format and Lakehouse architecture, enabling time travel and ACID transactions. Databricks excels in advanced data engineering and ML workloads with Apache Spark optimization, while Fabric offers broader integration including native Power BI, Data Factory, and real-time analytics workloads. Databricks runs across multiple clouds, whereas Fabric is Azure-native SaaS. For organizations already invested in Microsoft tools, Fabric provides seamless ecosystem integration. Kanerika implements both Databricks and Microsoft Fabric solutions—let us help you choose the right platform for your data strategy.

Is Microsoft Fabric better than Azure?

Microsoft Fabric is not a competitor to Azure but rather a SaaS analytics platform built on Azure infrastructure. Fabric consolidates multiple Azure services like Synapse Analytics, Data Factory, and Power BI into a unified experience with simplified licensing and management. While Azure offers granular control over individual services, Fabric provides an integrated approach with shared OneLake storage and consistent governance. Organizations using separate Azure analytics services can migrate to Fabric for reduced complexity and features like built-in time travel. Kanerika specializes in Azure to Microsoft Fabric migrations—talk to our experts about consolidating your analytics infrastructure.

Is Microsoft Fabric a replacement for Synapse?

Microsoft Fabric incorporates Synapse capabilities and represents Microsoft’s strategic direction for unified analytics. Synapse Analytics features including data warehousing, Spark pools, and pipelines are integrated into Fabric with enhanced functionality. While Synapse remains supported, new innovations focus on Fabric, including advanced Lakehouse features like time travel and OneLake storage. Organizations running Synapse should plan migration paths to Fabric to access improved governance, real-time intelligence, and Copilot AI capabilities. Microsoft has provided migration tooling to ease this transition. Kanerika accelerates Synapse to Microsoft Fabric migrations with automated conversion frameworks—schedule your migration assessment now.

Is Microsoft Fabric real-time?

Microsoft Fabric fully supports real-time analytics through its Real-Time Intelligence workload. This includes Eventstreams for ingesting streaming data from sources like Azure Event Hubs, IoT devices, and Kafka. KQL databases enable sub-second querying of streaming data, while real-time dashboards in Power BI visualize live insights. Fabric combines streaming and batch processing in one platform, with Delta Lake tables maintaining transaction logs for time travel even on frequently updated data. This hybrid approach supports both operational analytics and historical analysis. Kanerika builds real-time analytics solutions on Microsoft Fabric—contact us to architect your streaming data infrastructure.

What is the difference between data lake and data lakehouse?

A data lake stores raw, unstructured data at scale without enforcing schema, while a data lakehouse combines data lake flexibility with data warehouse reliability. Lakehouses add ACID transactions, schema enforcement, and governance to lake storage. Microsoft Fabric implements the Lakehouse architecture using Delta Lake format, which enables time travel by maintaining versioned data history. Unlike traditional data lakes where data quality issues are common, lakehouses support reliable BI workloads alongside data science. This architecture eliminates the need for separate lake and warehouse systems. Kanerika designs enterprise Lakehouse solutions on Microsoft Fabric—explore how we can modernize your data architecture.

Is Microsoft Fabric the future?

Microsoft Fabric represents Microsoft’s strategic vision for unified enterprise analytics, consolidating previously separate services into one cohesive platform. Heavy investment in Copilot AI integration, real-time intelligence, and Lakehouse capabilities signals long-term commitment. Features like time travel, OneLake storage, and cross-workload governance address modern data challenges comprehensively. Microsoft’s enterprise customer base and Azure ecosystem integration position Fabric for widespread adoption. Early adopters gain competitive advantages through simplified architecture and reduced operational overhead. However, successful implementation requires experienced partners. Kanerika guides enterprises through Microsoft Fabric adoption strategies—let us help you future-proof your analytics investments.

Is Microsoft Fabric an ETL tool?

Microsoft Fabric includes ETL capabilities but is far more than just an ETL tool. Data Factory within Fabric provides robust data integration with pipelines for extract, transform, and load operations. Dataflows Gen2 enables low-code data transformation, while Notebooks support complex transformations using Spark. Fabric’s ETL pipelines write to Delta Lake tables, automatically enabling time travel and version history for all transformed data. The platform spans the entire analytics lifecycle from ingestion through visualization, making it a complete data platform rather than a point solution. Kanerika migrates legacy ETL tools like Informatica to Microsoft Fabric—start your modernization journey with us.

How expensive is Microsoft Fabric?

Microsoft Fabric uses capacity-based pricing measured in Capacity Units, with pay-as-you-go and reserved options available. Costs depend on workload intensity, data volume, and compute requirements across engineering, warehousing, and BI workloads. Entry-level F2 capacity starts at accessible price points, while enterprise deployments scale to F64 and beyond. OneLake storage is billed separately based on data retained, including time travel version history. Fabric can reduce total cost by eliminating multiple tool licenses and data duplication. Accurate cost projection requires workload analysis. Kanerika provides Microsoft Fabric cost assessments and ROI calculations—request your personalized pricing analysis today.

Is Microsoft Fabric a PaaS or SaaS?

Microsoft Fabric is a SaaS platform, distinguishing it from traditional PaaS analytics services. As SaaS, Fabric handles infrastructure management, scaling, security patches, and updates automatically. Users access workloads through a unified web experience without provisioning compute clusters or managing storage tiers. This differs from Azure Synapse or Databricks on Azure, which require more infrastructure decisions. Fabric’s SaaS model simplifies operations while delivering enterprise features like time travel, governance, and real-time analytics out of the box. OneLake storage and capacity management are abstracted for ease of use. Kanerika helps enterprises transition from PaaS analytics to Microsoft Fabric SaaS—discover the operational benefits with our team.

Is Microsoft Fabric considered AI?

Microsoft Fabric is not AI itself but deeply integrates AI capabilities throughout the platform. Copilot in Fabric uses generative AI to assist with code generation, data transformation, and insight discovery. The Data Science workload supports building and deploying machine learning models with MLflow integration. Real-time intelligence leverages AI for anomaly detection and pattern recognition. Fabric’s AI features augment human analysts rather than replacing core analytics functionality. The platform enables AI-powered workflows while maintaining structured data management with features like time travel for ML experiment reproducibility. Kanerika implements AI-enhanced analytics on Microsoft Fabric—explore how Copilot can accelerate your data team’s productivity.