Choosing the right data integration platform is one of the most consequential infrastructure decisions an enterprise can make, and with Informatica, Azure Data Factory, and Talend all positioned as industry leaders, the choice isn’t always clear-cut.

All three platforms handle data integration, but they take fundamentally different approaches. Informatica IDMC is a full data management suite built for governance-heavy enterprises. ADF is a cloud-native pipeline engine wired into the Microsoft ecosystem. Talend offers open-source flexibility and hybrid deployment – though its landscape has shifted significantly since Qlik’s 2023 acquisition.

This guide breaks down the real architectural differences, TCO, compliance fit, and a structured decision framework, so you can move past the vendor demos and make a call grounded in how these tools perform in production.

Key Takeaways

- Informatica, ADF, and Talend operate at different architectural layers – comparing them on feature counts or base license price alone produces the wrong answer.

- Informatica IDMC is built for governed, multi-cloud environments with native MDM and lineage, making it the strongest fit for regulated industries.

- Azure Data Factory has the lowest entry barrier and deepest Azure ecosystem fit, but retires September 30, 2030 – every new ADF deployment needs a Fabric migration plan from day one.

- Talend offers open-source flexibility and strong hybrid deployment, but Open Studio hit end-of-life in March 2024, leaving organizations still running it on unpatched software.

- For enterprises, total cost of ownership and governance architecture matter as much as pipeline performance – the wrong platform choice compounds through migration debt and compliance gaps that surface at audit time.

Informatica vs Azure Data Factory vs Talend: Quick Comparison

| | Informatica IDMC | Azure Data Factory | Talend |

| Best for | Regulated enterprise, multi-cloud governance | Azure-first teams, AI pipeline builders | Hybrid deployment, developer-heavy teams |

| Pricing model | IPU subscription (enterprise contract) | Pay-per-activity consumption | Subscription (post-Qlik, pricing less transparent) |

| Deployment | Cloud-native, multi-cloud (AWS/Azure/GCP) | Azure-native | Cloud, on-prem, hybrid |

| Connector count | 650+ certified connectors | 100+ native connectors | 900+ components |

| AI/ML integration | CLAIRE engine (governance-focused) | Azure OpenAI/ML native | External integrations only |

| Real-time streaming | Via PowerExchange and Mass Ingestion | Via Event Hubs / Databricks | Via Talend Real-Time Big Data |

| Governance depth | Best-in-class (MDM, lineage, catalog native) | Via Purview (separate license) | Basic (Qlik roadmap uncertain) |

| Schema drift | Automated via CLAIRE | Manual per-pipeline configuration | Manual configuration |

| Vendor stability | High | Microsoft-backed – ADF retires Sept 2030 | Uncertain (Qlik acquisition 2023) |

| Compliance | SOC 2, ISO 27001, HIPAA, FedRAMP Moderate | SOC 1/2, ISO 27001, HIPAA, FedRAMP High | SOC 2, ISO 27001 |

| Time to first pipeline | 4-8 weeks (certified implementation) | 1-2 weeks | 2-4 weeks |

| 3-year TCO profile | Predictable but high entry | Low entry, often unpredictable at scale | Mid-range (trajectory unclear) |

- Pick Informatica IDMC: When you’re in a regulated industry, running multi-cloud infrastructure, and governance plus MDM are requirements – not nice-to-haves.

- Pick Azure Data Factory / Microsoft Fabric: When your org is Azure-standardized and building toward Fabric. Accept the 2030 ADF retirement as part of the commitment.

- Pick Talend Cloud or Data Fabric: When your team needs hybrid on-premises deployment and strong developer control – but go in with eyes open on Open Studio’s EOL status and the Qlik acquisition risk.

Redefine Your Business Future with Powerful AI Innovations!

Partner with Kanerika for Expert AI implementation Services

Architecture and Core Capabilities: Informatica vs Azure Data Factory vs Talend

1. Informatica IDMC: Governance-First Data Management

Informatica IDMC offers 650+ certified connectors spanning cloud platforms and on-premises data sources. Its strength is in environments where broad connector depth, automated metadata intelligence, and enterprise-grade governance all need to coexist. Few platforms deliver that combination, and it comes with proportional implementation overhead.

The CLAIRE AI engine is Informatica’s strongest differentiator. It handles:

- Automated schema mapping: Detects structural changes in source systems and adapts – without per-pipeline manual configuration.

- Data quality anomaly detection: Identifies and flags inconsistencies at ingestion, rather than leaving them to be discovered downstream.

- Intelligent metadata management: Surfaces lineage and classification without manual tagging.

- Mapping recommendations: Reduces engineering hours at scale across heterogeneous source systems.
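To make "detects structural changes in source systems" concrete, here is a minimal Python sketch of schema drift detection: comparing the columns a pipeline expects against what a source actually delivers. This is a generic illustration and not CLAIRE's implementation; the names `SchemaDrift` and `detect_schema_drift` and the sample columns are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class SchemaDrift:
    """Differences between an expected schema and an observed one."""
    added: dict = field(default_factory=dict)     # column -> new type
    removed: dict = field(default_factory=dict)   # column -> old type
    retyped: dict = field(default_factory=dict)   # column -> (old type, new type)

    @property
    def has_drift(self) -> bool:
        return bool(self.added or self.removed or self.retyped)


def detect_schema_drift(expected: dict, observed: dict) -> SchemaDrift:
    """Compare two {column: type} mappings and report what changed."""
    drift = SchemaDrift()
    for column, col_type in observed.items():
        if column not in expected:
            drift.added[column] = col_type
        elif expected[column] != col_type:
            drift.retyped[column] = (expected[column], col_type)
    for column, col_type in expected.items():
        if column not in observed:
            drift.removed[column] = col_type
    return drift


# Hypothetical example: the source added a column and widened a key type.
expected = {"customer_id": "int", "email": "string", "balance": "decimal(10,2)"}
observed = {"customer_id": "bigint", "email": "string",
            "balance": "decimal(10,2)", "region": "string"}

drift = detect_schema_drift(expected, observed)
print(drift.added)    # {'region': 'string'}
print(drift.retyped)  # {'customer_id': ('int', 'bigint')}
print(drift.removed)  # {}
```

On a platform with manual drift handling, a check like this has to be written and maintained per pipeline. Automated handling means the platform runs the comparison and adapts the mapping itself.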

Where Informatica creates friction: Steep learning curve, certified implementation requirements, and a broad feature surface mean enterprise deployments typically take four to eight weeks to first pipeline – and several months to full rollout. IPU pricing surprises teams that didn’t model data volume carefully before signing contracts.

2. Azure Data Factory: Azure-Native Pipeline Engine

ADF has the lowest barrier to entry of the three tools. Non-engineers can build and maintain basic pipelines without deep ETL experience. Data engineer onboarding is fastest here – backed by Microsoft’s documentation quality and the largest developer community of the three by volume.

ADF currently supports 100+ native connectors, with additional connectivity through Self-Hosted Integration Runtime. The native integrations that matter most:

- Azure Databricks: Spark-based processing and advanced data transformation.

- Synapse Analytics: Direct warehouse integration without data movement overhead.

- Azure OpenAI and Azure ML: The strongest story for teams building AI-augmented pipelines.

- Power BI: Native semantic model refresh from ADF pipelines.

The Self-Hosted Integration Runtime overhead is frequently underestimated. ADF’s default Azure IR works well for cloud-to-cloud data movement. But as soon as on-premises sources or non-Azure systems enter the picture, the Self-Hosted IR becomes necessary – bringing infrastructure management, VPN configuration, network security rules, and operational overhead that scales non-linearly as source systems multiply. This is ADF’s most cited real-world limitation and is consistently underemphasized in vendor-produced materials.

Where ADF creates friction: Debugging complex pipelines is difficult, and error messages are consistently flagged as cryptic. Data governance requires Purview as a separate product with separate licensing. Schema drift requires pipeline-by-pipeline manual configuration. And the 2030 retirement date is now a real planning consideration.

3. Talend: Open-Source Heritage, Hybrid Deployment

Talend’s architectural differentiator is Java code generation. ETL jobs produce readable, modifiable, version-controllable Java code – a transparency level that visual-first tools don’t match. For teams that need auditable transformation logic or want to modify generated code directly, this is genuinely useful.

Where Talend holds structural advantages:

- Legacy system connectivity: 900+ component library covers mainframe, AS/400, and EDI environments where no equivalent ADF connector exists.

- Hybrid deployment: Native on-premises, cloud, and multi-cloud support without Integration Runtime friction. This is architectural, not a configuration workaround.

- Change Data Capture: Handles both log-based and query-based CDC natively across standard RDBMS sources – more flexible than ADF’s native CDC but narrower than Informatica’s PowerExchange.

Where Talend creates friction: The Studio UI is dated. Native AI and ML integration is limited. Post-Qlik support quality has become a recurring complaint across practitioner communities. The rebranding to ‘Qlik Talend Data Integration’ creates organizational confusion about roadmap and support contacts.

Technical Capabilities Compared

| Technical Dimension | Informatica IDMC | Azure Data Factory | Talend |

| Schema drift | Automated (CLAIRE detects and adapts) | Manual per-pipeline configuration | Manual configuration |

| CDC | PowerExchange: broadest source range (mainframe, DB2, VSAM) | Query-based or via Databricks Delta Live Tables | Log-based and query-based (standard RDBMS) |

| SAP connectivity | Deepest (BW, HANA, ECC, S/4HANA certified) | Native HANA and SAP Table; ECC via App Server | Component library (more manual config) |

| On-prem source connectivity | Secure Agent (low friction) | Self-Hosted IR (significant operational overhead) | Native (architectural advantage) |

| Transformation transparency | Proprietary mapping format | Visual authoring and JSON definitions | Generated Java code (auditable, portable) |

| Pipeline debugging | Good (CLAIRE-assisted monitoring) | Weak (error messages consistently flagged as cryptic) | Adequate |

| Multi-cloud deployment | Equal capability across AWS, Azure, GCP | Azure-native only | Cloud, on-prem, multi-cloud |

| Streaming / real-time ingestion | Mass Ingestion add-on and cloud event services | Event Hubs and Databricks pairing | Talend Real-Time Big Data (Kafka and Spark) |

Schema drift failures, CDC gaps, and SAP integration breakdowns are the three most common triggers for unplanned platform migrations we encounter at Kanerika. Evaluating these explicitly upfront prevents 12-18 months of downstream remediation work.

Pricing and Total Cost of Ownership

Azure Data Factory Pricing (2026)

ADF charges across four dimensions, per Microsoft’s official pricing documentation:

- Pipeline activity runs: Approximately $0.001 per run.

- Data movement (DIU-hours): Approximately $0.25 per DIU-hour for Azure IR; approximately $0.10 per hour for self-hosted IR.

- Mapping Data Flow compute: Approximately $0.198 per vCore-hour for Azure IR.

- Network data transfer (egress): Variable by volume and source-destination pairing.
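As a rough illustration of how these dimensions combine, the sketch below multiplies the approximate rates listed above by a monthly workload. The workload figures are hypothetical, the function name `estimate_monthly_adf_cost` is ours, and the volume-dependent fourth item is left out because no rate is quoted for it.

```python
# Approximate rates quoted in this section (USD); verify against current pricing.
RATE_PER_ACTIVITY_RUN = 0.001
RATE_PER_DIU_HOUR = 0.25      # data movement on Azure IR
RATE_PER_VCORE_HOUR = 0.198   # Mapping Data Flow compute on Azure IR


def estimate_monthly_adf_cost(activity_runs: int, diu_hours: float, vcore_hours: float) -> dict:
    """Return a per-dimension monthly cost breakdown plus the total."""
    breakdown = {
        "activity_runs": activity_runs * RATE_PER_ACTIVITY_RUN,
        "data_movement": diu_hours * RATE_PER_DIU_HOUR,
        "data_flow_compute": vcore_hours * RATE_PER_VCORE_HOUR,
    }
    breakdown["total"] = sum(breakdown.values())
    return breakdown


# Hypothetical workload: 1,000 pipeline runs per day with 5 activities each,
# plus 2,000 DIU-hours and 4,000 vCore-hours over a 30-day month.
cost = estimate_monthly_adf_cost(
    activity_runs=1_000 * 5 * 30,
    diu_hours=2_000,
    vcore_hours=4_000,
)
for item, usd in cost.items():
    print(f"{item:>18}: ${usd:,.2f}")
```

Under these assumed volumes the total is about $1,442 per month, and the per-run charge is the smallest component. Data movement and Data Flow compute dominate, which is why a per-run price alone is a poor predictor of the bill.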

Teams running 1,000+ daily pipeline executions across multi-terabyte workloads consistently see costs three to five times above initial estimates. The full stack changes the calculation substantially:

- Purview: $0.496 per GB per month for data map population.

- Databricks compute: $0.07-$0.55 per DBU depending on tier.

- SHIR infrastructure: On-premises server and network costs not captured in ADF billing.

Teams planning migration to Fabric should note that the Fabric Data Factory pricing model operates under Fabric Capacity Units and needs to be modeled separately.

Informatica IDMC Pricing (2026)

Informatica uses IPU (Informatica Processing Unit) subscription pricing. Different services – data integration, data quality, MDM – draw from the same IPU pool at different rates.

- Entry-level configuration: Approximately $2,000 per month ($24,000 annually).

- Large enterprise contracts: With MDM, catalog, and quality modules active, regularly reach $500,000-$1,000,000+ annually.

- Contract structure: Multi-year with volume locked at signing – costs are predictable, but negotiation at the start matters a lot.

Informatica doesn’t publish IPU pricing publicly. An accurate quote requires a formal sales engagement with detailed volume estimates.

Talend Pricing (2026)

Talend Cloud and Data Fabric pricing is subscription-based and tiered by features and user count. Post-Qlik, pricing transparency has declined.

- Typical mid-market Talend Cloud contracts: Run $50,000-$200,000 annually.

- Direct sales engagement: Required for accurate quotes – no public pricing available.

- Talend Open Studio: Now EOL – should not be evaluated for any new production use.

| Platform | Year 1 TCO | Years 2-3 Annual | Predictability | Full Stack |

| ADF (full stack) | $30K-$150K | $80K-$400K+ (volume-dependent) | Low | ADF + Purview + Databricks + SHIR infrastructure |

| Informatica IDMC | $100K-$600K | $100K-$500K (stable) | High | All modules in IPU subscription |

| Talend Cloud / Data Fabric | $50K-$200K | $50K-$200K (trajectory unclear) | Medium | Base subscription; add-ons vary |

These are illustrative ranges from Kanerika client engagements and publicly referenced deal sizes. Actual costs depend on data volumes, pipeline complexity, module selection, and negotiation.

The pattern we see repeatedly: organizations that chose ADF for its low entry cost found the year-two bill three to four times higher once Purview, Databricks, and SHIR infrastructure were fully operational. Informatica’s year-one sticker shock, by contrast, tends to stabilize as IPU consumption becomes predictable – and the platform’s governance capabilities eliminate manual processes that carry their own hidden costs. Neither trajectory is inherently wrong, but both need to be modeled before signing.
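One way to model both trajectories is to roll the illustrative ranges from the TCO table into three-year totals. The sketch below does only that arithmetic. The dictionary layout and the function name `three_year_tco` are our own, and the ADF upper bound is open-ended in the table ("$400K+"), so its high end here is a floor rather than a cap.

```python
# Illustrative ranges from the TCO table, in thousands of USD: (low, high).
TCO_RANGES = {
    "ADF (full stack)": {"year_1": (30, 150), "years_2_3_annual": (80, 400)},
    "Informatica IDMC": {"year_1": (100, 600), "years_2_3_annual": (100, 500)},
    "Talend Cloud / Data Fabric": {"year_1": (50, 200), "years_2_3_annual": (50, 200)},
}


def three_year_tco(ranges: dict) -> tuple:
    """Sum year 1 plus two further years, for the low and high ends separately."""
    low = ranges["year_1"][0] + 2 * ranges["years_2_3_annual"][0]
    high = ranges["year_1"][1] + 2 * ranges["years_2_3_annual"][1]
    return low, high


for platform, ranges in TCO_RANGES.items():
    low, high = three_year_tco(ranges)
    print(f"{platform}: ${low}K-${high}K over three years")
# ADF (full stack): $190K-$950K over three years
# Informatica IDMC: $300K-$1600K over three years
# Talend Cloud / Data Fabric: $150K-$600K over three years
```

Seen over three years, the gap between ADF's low entry price and Informatica's high one narrows considerably at the upper end of the ranges.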


Data Governance and Compliance: Informatica vs Azure Data Factory vs Talend

For HIPAA, SOX, Basel III, and GDPR requirements, Informatica’s native data lineage tracking, master data management, business glossary, and policy enforcement are purpose-built for regulated environments. These aren’t configurable modules bolted on after the fact – they’re the core architecture. Informatica has been recognized as a Leader in the 2024 Gartner Magic Quadrant for Data Integration Tools, with governance depth as a primary differentiator.

ADF paired with Microsoft Purview is a proven alternative for Azure-standardized organizations – providing unified data catalog, automated lineage, and sensitivity labeling that covers most regulated-industry requirements. But it’s two products, two configuration workstreams, and two licensing conversations. Microsoft was also recognized in the 2024 Gartner Magic Quadrant for Data Integration Tools, validating the Fabric and Purview stack as a serious enterprise option.

Data literacy across the organization matters alongside the technical governance layer. Platforms that surface lineage and catalog information accessibly – as both Informatica and Purview do – reduce the human compliance risk, not just the technical one. And cloud security posture management is what converts platform investment into actual audit-readiness.

Compliance Certifications

| Platform | SOC 2 | ISO 27001 | HIPAA | FedRAMP | PCI DSS |

| Informatica IDMC | Type I and II | Yes | Yes | Moderate (Government Cloud) | Yes |

| Azure Data Factory | Type I and II | Yes | BAA available | High (Azure Government) | Yes |

| Talend Cloud | Type II | Yes | Via configuration | No | Via configuration |

For federal or defense-adjacent workloads, ADF on Azure Government with FedRAMP High is the only realistic path of the three platforms. Informatica offers FedRAMP Moderate via its Government Cloud product for agencies and contractors where Moderate authorization satisfies requirements.

Comparing AI Capabilities of Informatica, Azure Data Factory, and Talend

ADF’s native connections to Azure OpenAI Service, Azure Machine Learning, and Databricks ML make it the strongest choice for teams where data pipelines need to feed LLM-based workflows or real-time AI inference. If building agentic AI systems is a strategic priority, the Azure ecosystem around ADF – and increasingly around Fabric – is the most naturally connected path. Teams building custom AI agents on Azure have the shortest distance from raw data to model input.

Informatica’s CLAIRE is genuinely powerful – but it’s focused on data management automation: quality scoring, anomaly detection, intelligent schema mapping, and metadata enrichment. CLAIRE accelerates governed data operations. It’s the right tool for AI-assisted governance, not for connecting pipelines to external AI orchestration layers.

Talend’s native AI capabilities are limited. Any intelligent pipeline requirement demands external ML platform connections, adding integration complexity that works against one of Talend’s core developer-experience advantages. This gap has widened as AI pipeline demands have accelerated.

| AI Capability | Informatica IDMC | Azure Data Factory | Talend |

| Native AI engine | CLAIRE (built-in) | Azure OpenAI / Azure ML (native) | None natively |

| Primary AI use | Governance automation (quality, lineage, schema) | Pipeline intelligence and LLM connectivity | External via custom connectors |

| LLM / GenAI integration | Limited (governance layer only) | Native Azure OpenAI and Fabric Copilot | Requires external build |

| ML model invocation | Via API calls | Native Azure ML integration | Via custom components |

| Automated schema mapping | Yes (CLAIRE) | No | No |

| Anomaly detection | Yes (CLAIRE data quality) | Via Azure Monitor / Databricks | Via external tools |

| Agentic pipeline support | Not purpose-built | Best fit (Azure AI ecosystem) | Not purpose-built |

| AI-assisted authoring | Limited | Copilot in Microsoft Fabric | No |

The gap between ADF and the other two is widest in the agentic category. For teams building intelligent data workflows where pipelines trigger reasoning, retrieval, or generation steps, ADF’s Azure ecosystem connectivity is a structural advantage. The challenges of deploying AI agents in production – latency, data freshness, context retrieval – all depend on how tightly the data pipeline layer connects to the AI orchestration layer.

For teams thinking about decision intelligence or machine learning model management pipelines, the integration platform choice determines how fast data reaches models – and how reliably it gets there. Tools like KARL, Kanerika’s AI data insights agent, demonstrate what becomes possible when governed pipelines and AI reasoning are tightly integrated from the start.

Use Case Matchups: Which Platform Fits Each Scenario

1. SAP Integration

Informatica has the deepest SAP connectivity – SAP BW, SAP HANA, SAP ECC, and S/4HANA via certified connectors. Complex SAP-to-cloud migrations, particularly ECC to S/4HANA workstreams, are a documented Informatica strength where data quality and lineage need to be validated at each transformation stage.

ADF supports SAP HANA, SAP BW, and SAP Table connectors natively. ECC requires App Server connector configuration. Talend supports SAP via its component library but requires more manual configuration for complex environments.

2. Change Data Capture (CDC)

Informatica PowerExchange handles CDC across the broadest source range – DB2, IMS, VSAM, Oracle, SQL Server, Sybase – via log-based capture. This is the primary reason Informatica dominates in financial services and mainframe-heavy industries where CDC from core banking systems is a baseline requirement.

ADF requires Azure Database CDC features or Databricks Delta Live Tables for log-based CDC. Native ADF CDC is query-based and higher-latency. Talend covers log-based and query-based modes across standard RDBMS sources.
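The difference between the two CDC modes is easier to see in code. The following is a minimal sketch of query-based CDC using Python's built-in `sqlite3` module and a watermark column. The table, column names, and `poll_changes` helper are hypothetical, and this is not how any of the three platforms implements it.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE accounts (id INTEGER PRIMARY KEY, balance REAL, updated_at INTEGER)"
)
conn.executemany(
    "INSERT INTO accounts VALUES (?, ?, ?)",
    [(1, 100.0, 10), (2, 250.0, 11), (3, 75.0, 12)],
)


def poll_changes(conn, last_watermark):
    """Query-based CDC: fetch rows modified since the previous poll."""
    rows = conn.execute(
        "SELECT id, balance, updated_at FROM accounts "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_watermark,),
    ).fetchall()
    new_watermark = rows[-1][2] if rows else last_watermark
    return rows, new_watermark


# First poll picks up all three rows.
first_changes, watermark = poll_changes(conn, last_watermark=0)

# Between polls, one update and one delete happen at the source.
conn.execute("UPDATE accounts SET balance = 300.0, updated_at = 13 WHERE id = 2")
conn.execute("DELETE FROM accounts WHERE id = 3")

# Second poll sees the update but has no way to see the delete.
changes, watermark = poll_changes(conn, watermark)
print(changes)  # [(2, 300.0, 13)]
```

Two limits of the query-based approach show up here: latency is bounded by the polling interval, and deleted rows simply vanish from the result. Log-based capture reads the database's transaction log instead, so deletes and intermediate updates are visible.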

3. Real-Time Data Streaming

None of the three is a streaming-native platform. Each requires pairing with dedicated streaming infrastructure:

- Informatica: Pairs with Mass Ingestion for CDC-based streaming and cloud event services.

- Azure Data Factory: Pairs with Azure Event Hubs, IoT Hub, or Databricks Structured Streaming.

- Talend: Pairs with Real-Time Big Data components for Kafka and Spark.

4. Multi-Cloud Data Architecture

Informatica is the clear choice. Equal support for AWS, Azure, and GCP with no capability degradation across cloud providers. The Secure Agent provides consistent source connectivity regardless of which cloud hosts the compute. Organizations running data workloads across multiple cloud providers, or operating under cloud-agnostic infrastructure policy, have a genuine Informatica advantage here.

ADF is Azure-native. Cross-cloud connectivity requires SHIR configuration and adds meaningful operational complexity. It’s not architecturally suited for multi-cloud data estate management.

| Use Case | Best Fit | Works With Effort | Poor Fit |

| SAP integration (BW, ECC, S/4HANA) | Informatica IDMC | ADF (HANA and Table connectors) | Talend |

| Mainframe CDC (DB2, IMS, VSAM) | Informatica (PowerExchange) | Talend | ADF |

| Multi-cloud data estate | Informatica IDMC | Talend | ADF |

| Azure-native AI pipeline | ADF / Fabric | – | Informatica, Talend |

| On-premises hybrid deployment | Talend | Informatica (Secure Agent) | ADF (SHIR overhead) |

| Real-time streaming | ADF + Event Hubs/Databricks | Talend (Kafka/Spark) | Informatica (add-on) |

| Regulated industry (HIPAA/SOX/Basel III) | Informatica IDMC | ADF + Purview | Talend |

| Developer team, open code transparency | Talend (Java generation) | ADF (JSON pipelines) | Informatica |

| FedRAMP High and federal workloads | ADF (Azure Government) | Informatica (FedRAMP Moderate) | Talend |

| Master Data Management | Informatica IDMC | – | ADF, Talend |

| Fast initial deployment | ADF | Talend | Informatica |

| Customer 360 and CRM integration | Informatica IDMC | ADF + Purview | Talend |

Forcing ADF into a multi-cloud governance role, or Informatica into a fast-start greenfield project, carries hidden costs that don’t show up in vendor comparisons. Clear business process modeling before platform selection is what prevents these mismatches.

Cross-Platform Migration: Informatica vs ADF vs Talend

Platform Re-Evaluation Signals

Informatica IDMC:

- Licensing cost: Growing faster than value extracted, particularly when MDM and governance modules are underutilized.

- Azure standardization: Organization has moved toward Microsoft Fabric, making Informatica’s multi-cloud flexibility redundant.

- Talent availability: Certified IDMC talent is difficult to hire and retain in your market.

Azure Data Factory:

- Non-Azure sources dominating: Making SHIR management an escalating operational burden.

- Governance requirements scaling: Beyond what Purview and ADF provide without heavy customization.

- Pipeline complexity: Growing beyond what visual authoring handles cleanly.

Talend:

- Still running Open Studio in production: Now EOL and unpatched.

- Post-Qlik support quality: Deteriorating for your contract tier.

- Cloud-native scaling: Requirements hitting architectural limits.

- Pricing transparency issues: Emerging during renewal conversations.

The Business Logic Problem

Informatica mappings embed complex transformation rules, business calculations, and data quality conditions in proprietary formats that don’t translate cleanly into ADF pipelines or Fabric workflows. A single complex Informatica session can contain hundreds of individual transformation steps built over years by engineers who may no longer be with the organization.

Manual migration of a complex enterprise ETL codebase – typically 500-2,000+ mappings – takes 12-24 months. That’s the reality most platform migration projects discover after they’ve already committed to a switch.

Financial planning and analysis teams who depend on accurate pipelines for month-end close processes experience this most painfully when a migration destabilizes production mid-cycle. IT service management processes built around established pipeline SLAs break when those pipelines are disrupted.

FLIP: An Intelligent Workflow Platform Powering Migrations

FLIP is a low-code/no-code, AI-powered platform that simplifies and automates data transformation pipelines, helping businesses gain valuable insights from their data faster through:

- Business logic translation: Converts proprietary Informatica mapping formats into target platform equivalents.

- Field mapping: Automated field-level mapping across source and target schemas.

- Dependency analysis: Surfaces pipeline dependencies and downstream impacts before migration begins.

- End-to-end validation: Confirms output parity between source and migrated pipelines.
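Output parity, the last item above, can be checked in a platform-independent way by fingerprinting the rows each pipeline produces. The sketch below is a generic illustration under our own assumptions, not a description of how FLIP validates migrations. The function names and sample rows are hypothetical.

```python
import hashlib


def dataset_fingerprint(rows, key_columns):
    """Return (row_count, digest) for a list of dict rows, independent of row order."""
    def row_hash(row):
        # Serialize columns in sorted order so dict ordering does not affect the hash.
        canonical = "|".join(f"{col}={row[col]!r}" for col in sorted(row))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    ordered = sorted(rows, key=lambda r: tuple(r[k] for k in key_columns))
    combined = "".join(row_hash(r) for r in ordered)
    return len(rows), hashlib.sha256(combined.encode("utf-8")).hexdigest()


def outputs_match(legacy_rows, migrated_rows, key_columns):
    """True when both pipelines produced the same rows, regardless of order."""
    return (dataset_fingerprint(legacy_rows, key_columns)
            == dataset_fingerprint(migrated_rows, key_columns))


# Hypothetical outputs from the legacy and migrated pipelines.
legacy = [{"id": 1, "total": 10.5}, {"id": 2, "total": 7.0}]
migrated_ok = [{"id": 2, "total": 7.0}, {"id": 1, "total": 10.5}]   # same rows, new order
migrated_bad = [{"id": 1, "total": 10.5}, {"id": 2, "total": 7.01}]  # rounding difference

print(outputs_match(legacy, migrated_ok, ["id"]))   # True
print(outputs_match(legacy, migrated_bad, ["id"]))  # False
```

A real validation would also need tolerance rules for floating-point and timestamp differences, since an exact hash flags even harmless rounding changes.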

Supported migration paths: Informatica to Microsoft Fabric, Informatica to Azure Databricks, Informatica to Talend, Informatica to Alteryx, and Azure Data Factory to Microsoft Fabric.

Complex Informatica codebases that would take 18-24 months through manual migration have been completed in 90-day engagements using FLIP – across multiple validated enterprise implementations. FLIP doesn’t eliminate human judgment on complex business rules, but it eliminates the mechanical conversion work that consumes most of a migration timeline.

| Migration Path | Complexity | Typical Manual Timeline | FLIP-Accelerated | Primary Risk |

| Informatica to Microsoft Fabric | High | 18-24 months | 90 days | Business logic translation, proprietary mapping formats |

| Informatica to Azure Databricks | High | 12-18 months | 60-90 days | Spark transformation equivalence, dependency mapping |

| Informatica to Talend | Medium-High | 12-18 months | 60-90 days | Component compatibility, scheduling logic |

| Informatica to Alteryx | Medium | 9-12 months | 60 days | Workflow format translation |

| ADF to Microsoft Fabric | Low-Medium | 3-6 months | 4-8 weeks | Fabric feature parity gaps (minor) |

| PowerCenter to IDMC | Medium-High | 12-18 months | Not FLIP-supported | Platform architecture differences (not a version upgrade) |

| Talend to ADF | Medium | 6-12 months | Not FLIP-supported | Visual mapping equivalence, SHIR configuration |

The FLIP-accelerated timelines are documented across actual client engagements, not projections. PowerCenter to IDMC and Talend to ADF are not currently FLIP-supported paths – that’s worth knowing before setting expectations.

The PRISM Framework: Choosing Between Informatica, ADF, and Talend

PRISM: Platform ecosystem, Regulatory requirements, Integration complexity, Scale trajectory, Migration readiness.

| PRISM Dimension | Questions to Ask | What It Points To |

| P – Platform Ecosystem | Azure-first or multi-cloud? Fabric roadmap adopted? ADF 2030 retirement factored in? | Azure-first + Fabric plan: ADF/Fabric; Multi-cloud: Informatica; Heavy on-prem: Talend |

| R – Regulatory Requirements | HIPAA/SOX/Basel III compliance? Data lineage for audits? Self-service governance needed? | Heavy regulated: Informatica; Azure + moderate requirements: ADF + Purview; Minimal: Any |

| I – Integration Complexity | SAP? Mainframe CDC? Legacy source diversity? | Complex heterogeneous sources: Informatica; Cloud-native sources: ADF; Legacy + hybrid: Talend |

| S – Scale Trajectory | Volume predictable or bursty? Engineering depth for optimization? | Predictable high-volume: Informatica; Bursty variable: ADF; Engineering-optimized: Talend |

| M – Migration Readiness | Current platform? Business logic complexity? Talent availability? | On Informatica + Azure standardizing: evaluate Fabric via FLIP; On ADF + governance scaling: add Purview or evaluate Informatica |

- Azure-first + building toward Fabric + no major compliance burden: ADF / Microsoft Fabric Data Factory (plan explicitly for 2030 ADF retirement).

- Multi-cloud + heavy data governance + regulated industry (HIPAA/SOX/Basel III): Informatica IDMC.

- Hybrid on-premises + developer-heavy team + open transformation code required: Talend Cloud or Data Fabric (Open Studio is EOL; Qlik risk is real).

- Already on PowerCenter, re-evaluating against Azure ecosystem: Evaluate Microsoft Fabric migration via FLIP before committing.

- Already on ADF, data governance requirements scaling up: Add Microsoft Purview first; if still insufficient, evaluate Informatica IDMC.
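The five recommendations above can be written down as explicit rules, which makes it easy to see when a profile matches more than one of them. This is a minimal sketch: the `OrgProfile` fields and the `prism_shortlist` function are hypothetical names, and the boolean flags simplify what is a more nuanced assessment in practice.

```python
from dataclasses import dataclass


@dataclass
class OrgProfile:
    azure_first: bool = False
    fabric_roadmap: bool = False
    multi_cloud: bool = False
    regulated: bool = False          # e.g. HIPAA / SOX / Basel III
    hybrid_on_prem: bool = False
    developer_heavy: bool = False
    current_platform: str = ""       # "powercenter", "adf", or ""
    governance_scaling: bool = False


def prism_shortlist(p: OrgProfile) -> list:
    """Return every recommendation from the list above that matches the profile."""
    matches = []
    if p.azure_first and p.fabric_roadmap and not p.regulated:
        matches.append("ADF / Microsoft Fabric Data Factory")
    if p.multi_cloud and p.regulated:
        matches.append("Informatica IDMC")
    if p.hybrid_on_prem and p.developer_heavy:
        matches.append("Talend Cloud or Data Fabric")
    if p.current_platform == "powercenter" and p.azure_first:
        matches.append("Evaluate Microsoft Fabric migration via FLIP")
    if p.current_platform == "adf" and p.governance_scaling:
        matches.append("Add Microsoft Purview first; then evaluate Informatica IDMC")
    return matches


# A multi-cloud, regulated organization gets a single clear answer.
bank = OrgProfile(multi_cloud=True, regulated=True)
print(prism_shortlist(bank))  # ['Informatica IDMC']

# An Azure-first team with heavy on-premises needs matches two rules at once.
mixed = OrgProfile(azure_first=True, fabric_roadmap=True,
                   hybrid_on_prem=True, developer_heavy=True)
print(len(prism_shortlist(mixed)))  # 2
```

More than one match is the signal that PRISM dimensions conflict, and that the decision needs a closer look than a rule table can give.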

Most evaluation teams find that two or three PRISM dimensions point to the same platform. The cases where dimensions genuinely conflict – an Azure-first organization with heavy SAP and mainframe integration needs, for example – are exactly where an independent assessment prevents a costly mismatch.

Kanerika’s AI-driven business transformation approach applies this kind of structured analysis before recommending any platform. The data integration layer isn’t an isolated IT decision. It shapes IT service management capabilities, analytics maturity, and the organization’s ability to operationalize AI at scale.

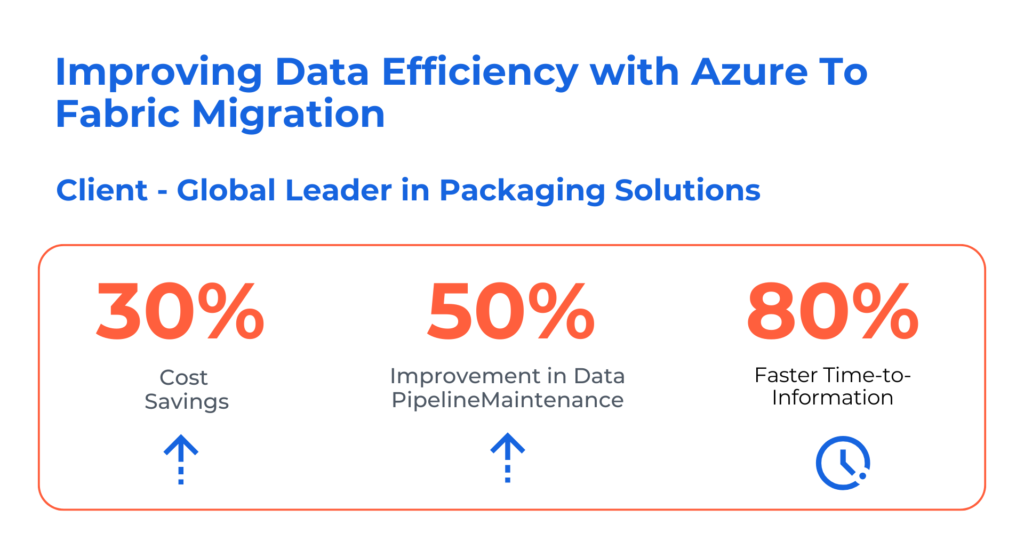

Case Study: Improving Data Efficiency with Azure to Fabric Migration For A Global Leader in Packaging Solutions

Challenges

- Scattered workflows across ADF & Synapse fragmented data operations, reducing efficiency & visibility across the pipeline

- An intermediate Parquet conversion introduced latency, failure, & architectural bloat – delaying ingestion, increasing risk

- The absence of a unified governance model led to inconsistent setups, redundant processes, & limited data scalability

Solutions

- Migrated ADF/Synapse assets to Fabric using Kanerika’s utility, accelerating transition while preserving code quality

- Enabled direct SAP C4C–Fabric connection, cutting unnecessary processing steps and improving data flow reliability

- Implemented a unified governance framework that standardized naming, versioning, and documentation

Results

- 30% Cost Savings

- 50% Improvement in Data Pipeline Maintenance

- 80% Faster Time-to-Information

How Kanerika Accelerates Enterprise Data Integration

Kanerika is a premier provider of data-driven software solutions and services that facilitate digital transformation. Specializing in Data Integration, Analytics, AI/ML, and Cloud Management, Kanerika prides itself on its expertise in employing cutting-edge technologies and agile methodologies to ensure exceptional outcomes.

As a Microsoft Solutions Partner for Data and AI, a certified Databricks partner, and a Snowflake partner, Kanerika brings platform-neutral expertise to data integration engagements. Platform recommendations are based on enterprise context, existing technology stack, and workload type, not commercial preference. Whether the environment is SAP-heavy, Azure-native, or multi-cloud, the architecture is designed around what the organization actually needs.

Migration complexity is almost always underestimated. A complex Informatica codebase takes 12 to 24 months to migrate manually. FLIP reduces that to 90 days by automating business logic translation, field mapping, and dependency analysis – converting what looks like a two-year program into a 90-day technical engagement followed by business validation.


FAQs

Which is better, Talend or Informatica?

Informatica outperforms Talend for large-scale enterprise data integration requiring robust governance, metadata management, and complex transformation capabilities. Talend offers stronger appeal for organizations seeking open-source flexibility and faster deployment cycles. Informatica excels in regulated industries needing audit trails and compliance features, while Talend suits mid-market companies prioritizing cost control. The right choice depends on your data volume, existing infrastructure, and long-term integration strategy. Kanerika has executed dozens of ETL platform evaluations and can help you identify which tool aligns with your enterprise requirements.

What is the difference between Azure Data Factory and Talend?

Azure Data Factory is a cloud-native, serverless data integration service designed for Azure ecosystems, while Talend provides a hybrid ETL platform supporting on-premises, multi-cloud, and open-source deployments. ADF uses a pay-per-use consumption model with deep Microsoft integration, whereas Talend offers code-generation flexibility and broader connector coverage across non-Microsoft environments. ADF requires minimal infrastructure management; Talend demands more hands-on configuration but delivers greater customization. Kanerika helps enterprises navigate Azure Data Factory vs Talend decisions through structured assessments tailored to your architecture.

What is the main difference between Informatica and Azure Data Factory?

Informatica delivers an enterprise-grade data management suite with advanced data quality, MDM, and governance capabilities across hybrid environments. Azure Data Factory focuses specifically on cloud-first data orchestration within Microsoft’s ecosystem, offering serverless scalability without infrastructure overhead. Informatica suits organizations needing comprehensive data lifecycle management; ADF appeals to Azure-centric teams wanting tight integration with Synapse, Fabric, and Power BI. Pricing models differ significantly, with Informatica using license-based structures versus ADF’s consumption billing. Kanerika’s data platform experts can map your requirements to the right Informatica vs Azure Data Factory choice.

Who is the competitor of Azure Data Factory?

Azure Data Factory competes primarily with Informatica IDMC, Talend, AWS Glue, Google Cloud Dataflow, and Databricks Workflows in the enterprise data integration market. Each competitor has a different strength: Informatica leads in governance depth, Talend in open-source flexibility, AWS Glue in serverless ETL for Amazon environments, and Databricks in unified analytics pipelines. Organizations often evaluate these platforms based on existing cloud investments, connector requirements, and pricing models. Kanerika provides vendor-neutral platform assessments to identify which ADF competitor best fits your data infrastructure strategy.

Is Talend used for ETL?

Talend is widely used for ETL operations, providing extract, transform, and load capabilities across cloud, on-premises, and hybrid data environments. Its open-source foundation allows developers to build custom data pipelines with extensive connector libraries for databases, SaaS applications, and big data platforms. Talend Data Integration supports batch processing, real-time streaming, and data quality functions within unified workflows. Enterprises leverage Talend for data warehouse loading, migration projects, and API-based integrations. Kanerika’s integration specialists implement Talend ETL solutions optimized for performance and maintainability across complex enterprise architectures.

Is Informatica end of life?

Informatica is not end of life; the company continues active development on Informatica Intelligent Data Management Cloud (IDMC) as its flagship platform. However, legacy products like PowerCenter face reduced investment as Informatica shifts focus toward cloud-native offerings. Organizations running older on-premises versions should evaluate migration paths to IDMC or alternative platforms like Databricks, Microsoft Fabric, or Talend. Informatica remains a viable enterprise choice, though modernization planning is essential for long-term sustainability. Kanerika helps enterprises assess Informatica migration options and build transition roadmaps with minimal business disruption.

What is replacing Informatica?

Organizations are replacing legacy Informatica PowerCenter with modern platforms including Microsoft Fabric, Databricks, Azure Data Factory, Talend, and Informatica’s own cloud-native IDMC. Microsoft Fabric attracts enterprises consolidating analytics infrastructure, while Databricks appeals to data engineering teams building lakehouse architectures. The choice depends on existing ecosystem investments, real-time processing needs, and governance requirements. Many enterprises take a hybrid approach, migrating workloads incrementally rather than attempting a complete replacement in one step. Kanerika specializes in Informatica to Databricks and Informatica to Microsoft Fabric migrations, preserving business logic while modernizing your data stack.

Which ETL platform is best for data integration?

The best ETL platform for data integration depends on your cloud strategy, data complexity, and governance needs. Azure Data Factory excels for Microsoft-centric environments requiring serverless orchestration. Informatica leads in enterprise data governance and complex transformation scenarios. Talend offers flexibility for multi-cloud and hybrid deployments with open-source foundations. Databricks suits organizations building unified analytics and AI pipelines. Evaluate platforms based on connector coverage, pricing models, team expertise, and long-term vendor roadmaps. Kanerika conducts platform evaluations across these ETL tools, helping you select the right data integration solution faster.

Is Talend outdated?

Talend is not outdated but faces strategic uncertainty following its acquisition by Qlik in 2023. The platform continues receiving updates, and existing deployments remain functional for enterprise ETL workloads. However, organizations evaluating new data integration investments should consider Qlik’s integration roadmap and whether Talend will maintain independent development momentum. Competitors like Azure Data Factory, Databricks, and Microsoft Fabric now offer compelling alternatives with stronger cloud-native architectures. Teams currently using Talend should assess modernization paths proactively. Kanerika helps enterprises evaluate Talend alternatives and execute seamless ETL platform transitions.

Will Azure Data Factory be replaced by Microsoft Fabric?

Azure Data Factory is not being dropped outright; its capabilities are being carried into Microsoft Fabric, where Data Factory serves as the data orchestration layer. Microsoft positions Fabric as the evolution of its analytics stack, combining ADF, Synapse, and Power BI capabilities under one governance layer. Standalone ADF remains usable today, but as noted earlier in this guide it is scheduled to retire on September 30, 2030, so new ADF deployments should include a Fabric migration plan from the start. Migration effort depends on how each pipeline is built, so inventory and test existing pipelines in Fabric before committing to a timeline. Kanerika executes Azure to Microsoft Fabric migrations that protect existing pipeline investments.

Can you migrate from Informatica to Azure Data Factory or Microsoft Fabric?

Migration from Informatica to Azure Data Factory or Microsoft Fabric is achievable with proper planning, though it requires careful mapping of transformations, workflows, and metadata structures. ADF and Fabric handle data orchestration differently than Informatica PowerCenter, so direct lift-and-shift approaches often fail. Successful migrations involve assessing existing mappings, redesigning complex transformations for cloud-native execution, and validating data quality throughout the transition. Automated migration accelerators can reduce manual effort significantly. Kanerika’s Informatica to Microsoft Fabric migration accelerator streamlines this process while preserving business logic and minimizing downtime.
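The assessment step described above usually starts with an inventory of mappings and the order in which they depend on each other. The sketch below is one way to turn such an inventory into migration waves using Python's standard `graphlib` module. The mapping names and the `migration_waves` helper are hypothetical, chosen only for illustration; this is not how FLIP or any vendor tool works internally.

```python
from graphlib import TopologicalSorter

# Hypothetical inventory: each mapping lists the mappings it depends on.
dependencies = {
    "load_customers": set(),
    "load_products": set(),
    "load_orders": {"load_customers", "load_products"},
    "load_invoices": {"load_orders"},
    "build_sales_mart": {"load_orders", "load_invoices"},
}


def migration_waves(deps):
    """Group mappings into waves; each wave depends only on earlier waves."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything unblocked right now
        waves.append(ready)
        sorter.done(*ready)  # mark the wave as migrated, unblocking the next
    return waves


for number, wave in enumerate(migration_waves(dependencies), start=1):
    print(f"Wave {number}: {', '.join(wave)}")
```

Mappings in the same wave have no dependencies on each other, so they can be migrated and validated in parallel, while later waves wait until their upstream mappings have been verified.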

Is Talend still a good ETL platform choice after the Qlik acquisition?

Talend remains functional as an ETL platform after the Qlik acquisition, but strategic uncertainty affects long-term planning decisions. Qlik has integrated Talend into its broader analytics portfolio, potentially shifting development priorities toward Qlik’s data integration roadmap rather than standalone Talend evolution. Existing Talend deployments continue operating effectively, and enterprise support persists. However, organizations starting new data integration projects should evaluate whether Talend’s future aligns with their multi-year technology strategy. Consider platforms like Databricks or Microsoft Fabric for clearer vendor roadmaps. Kanerika provides objective assessments to determine if Talend still fits your enterprise needs.

What is the future of Talend?

Talend’s future depends on Qlik’s strategic direction following the 2023 acquisition. Qlik has positioned Talend as part of its end-to-end data analytics stack, focusing on data integration capabilities that complement Qlik’s visualization strengths. Standalone Talend product development may slow as resources shift toward unified Qlik offerings. Organizations currently invested in Talend should monitor roadmap announcements and evaluate whether continued investment aligns with enterprise data strategy. Alternative platforms like Microsoft Fabric and Databricks offer clearer independent trajectories. Kanerika helps enterprises plan Talend modernization or migration strategies based on evolving market dynamics.

Does Informatica have a future?

Informatica maintains a strong market position with continued investment in Informatica Intelligent Data Management Cloud (IDMC) as its strategic platform. The company focuses on AI-driven data management, governance automation, and cloud-native capabilities to compete with Microsoft Fabric and Databricks. However, legacy PowerCenter users face pressure to modernize as on-premises product investment declines. Informatica’s future favors organizations committed to its cloud transformation, while those seeking alternatives have viable migration paths. Evaluate whether Informatica’s roadmap matches your hybrid or cloud-first strategy. Kanerika advises enterprises on Informatica modernization paths tailored to long-term business goals.

Why is Informatica the best ETL tool?

Informatica earns recognition as a leading ETL tool due to its comprehensive metadata management, robust data quality capabilities, and enterprise-grade governance features. Its transformation engine handles complex business logic across high-volume data environments reliably. Informatica supports extensive connector coverage spanning legacy systems, cloud platforms, and SaaS applications. The platform’s lineage tracking and impact analysis provide visibility essential for regulated industries. However, cost and complexity make it better suited for large enterprises than mid-market organizations. Kanerika implements Informatica solutions optimized for scalability and helps enterprises determine if Informatica fits their integration requirements.

How does Azure Data Factory pricing compare to Informatica IDMC?

Azure Data Factory uses consumption-based pricing that charges for data movement, pipeline orchestration, and integration runtime hours, so costs track actual usage and suit variable workloads. Informatica IDMC employs license-based pricing with compute units, typically requiring higher upfront commitments suited for stable, high-volume processing. ADF costs less for intermittent or scaling workloads; Informatica proves more economical at sustained enterprise scale with predictable volumes. Both platforms include additional charges for premium connectors and advanced features. Evaluate total cost of ownership across your specific usage patterns. Kanerika builds pricing models comparing Azure Data Factory vs Informatica IDMC for your data volumes.
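The trade-off between pay-per-use and committed pricing comes down to a break-even point. The sketch below shows that arithmetic in a deliberately simplified form. The rate and fee are placeholder numbers, not published prices for either platform, and real bills include more line items than a single hourly rate.

```python
def consumption_cost(runtime_hours: float, rate_per_hour: float) -> float:
    """Monthly bill under pay-per-use pricing."""
    return runtime_hours * rate_per_hour


def break_even_hours(committed_monthly_fee: float, rate_per_hour: float) -> float:
    """Runtime hours per month at which both pricing models cost the same."""
    if rate_per_hour <= 0:
        raise ValueError("rate_per_hour must be positive")
    return committed_monthly_fee / rate_per_hour


def cheaper_model(runtime_hours: float, committed_monthly_fee: float,
                  rate_per_hour: float) -> str:
    """Return which pricing model is cheaper for a given monthly usage."""
    usage_bill = consumption_cost(runtime_hours, rate_per_hour)
    if usage_bill < committed_monthly_fee:
        return "consumption"
    if usage_bill > committed_monthly_fee:
        return "committed"
    return "equal"


# Placeholder figures for illustration only, not vendor prices.
RATE_PER_HOUR = 2.0
COMMITTED_MONTHLY_FEE = 1000.0

print("Break-even hours:", break_even_hours(COMMITTED_MONTHLY_FEE, RATE_PER_HOUR))
for hours in (100, 500, 900):
    print(hours, cheaper_model(hours, COMMITTED_MONTHLY_FEE, RATE_PER_HOUR))
```

Plugging in your own measured runtime hours and quoted rates shows on which side of the break-even point a workload sits, which is the starting point for a fuller TCO comparison.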

Is Azure Data Factory an ETL tool?

Azure Data Factory functions as an ETL and ELT tool, providing cloud-native data integration and orchestration capabilities within Microsoft’s Azure ecosystem. ADF supports extracting data from diverse sources, transforming it through data flows or external compute services, and loading results into destinations like Azure Synapse, Data Lake, or SQL databases. Its serverless architecture scales automatically without infrastructure management. ADF also orchestrates complex workflows involving multiple Azure services, Databricks notebooks, and third-party tools. For organizations embedded in Microsoft environments, ADF serves as the primary ETL engine. Kanerika implements Azure Data Factory pipelines designed for performance and maintainability.

Which data integration platform handles schema drift best?

Azure Data Factory and Databricks handle schema drift most effectively among leading data integration platforms. ADF’s mapping data flows include built-in schema drift detection that automatically accommodates new columns without pipeline failures. Databricks Auto Loader manages schema evolution in streaming workloads, merging new fields into Delta Lake tables seamlessly. Informatica IDMC offers schema change handling but requires more manual configuration. Talend addresses schema drift through design patterns rather than native automation. Choose platforms with dynamic schema capabilities for fast-changing data sources. Kanerika architects data integration solutions with schema drift management built into pipeline design from the start.
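To make the term concrete, schema drift is any difference between the columns a pipeline expects and the columns that actually arrive. The sketch below is a platform-independent illustration of that check in plain Python. It does not use or reproduce the ADF or Databricks features mentioned above, and the `detect_schema_drift` function and column names are hypothetical.

```python
def detect_schema_drift(expected: dict, incoming: dict) -> dict:
    """Compare two {column_name: type_name} schemas and report differences."""
    added = {col: typ for col, typ in incoming.items() if col not in expected}
    removed = sorted(col for col in expected if col not in incoming)
    retyped = {
        col: (expected[col], incoming[col])
        for col in expected
        if col in incoming and expected[col] != incoming[col]
    }
    return {"added": added, "removed": removed, "retyped": retyped}


# Hypothetical schemas for illustration.
expected_schema = {"order_id": "int", "amount": "float", "region": "string"}
incoming_schema = {"order_id": "int", "amount": "string", "channel": "string"}

drift = detect_schema_drift(expected_schema, incoming_schema)
print(drift)
```

A pipeline with drift handling would typically accept the `added` columns automatically and raise an alert on `removed` or `retyped` columns, since those are the changes most likely to break downstream consumers.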

Which ETL tool is in demand in 2026?

Microsoft Fabric, Databricks, and Azure Data Factory lead ETL tool demand in 2026, driven by enterprise cloud migration and unified analytics adoption. Fabric’s integrated approach combining ingestion, transformation, and BI attracts organizations consolidating data stacks. Databricks remains dominant for data engineering teams building lakehouse architectures with AI workloads. Snowflake’s native transformation capabilities are gaining ground in Snowflake-centric environments. Traditional tools like Informatica and Talend maintain enterprise presence but face competitive pressure from cloud-native alternatives. Skills in these modern platforms command premium market rates. Kanerika trains teams on in-demand ETL platforms while implementing production-ready data pipelines.

How do I choose between these platforms without a year-long evaluation?

Accelerate your ETL platform decision by defining clear evaluation criteria upfront: cloud strategy, existing infrastructure, governance requirements, team skills, and budget constraints. Run focused proof-of-concept projects on two or three shortlisted platforms using representative data workloads rather than exhaustive feature comparisons. Leverage vendor reference architectures and partner expertise to avoid common pitfalls. Prioritize platforms aligning with your three-year technology roadmap over feature checklists. Document decision factors for stakeholder alignment. Kanerika’s migration ROI calculator and rapid assessment methodology help enterprises make confident platform choices in weeks, not months.
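One lightweight way to apply the criteria above is a weighted scoring matrix filled in after the proof-of-concept runs. The sketch below assumes hypothetical weights and 1-to-5 scores for two unnamed platforms. The numbers are placeholders to show the mechanics, not ratings of any real product.

```python
# Hypothetical weights for the evaluation criteria; they must sum to 1.0.
weights = {
    "cloud_strategy": 0.30,
    "governance": 0.25,
    "team_skills": 0.20,
    "existing_infrastructure": 0.15,
    "budget": 0.10,
}

# Placeholder 1-5 scores recorded after each proof of concept.
poc_scores = {
    "platform_a": {"cloud_strategy": 4, "governance": 5, "team_skills": 2,
                   "existing_infrastructure": 3, "budget": 2},
    "platform_b": {"cloud_strategy": 5, "governance": 3, "team_skills": 4,
                   "existing_infrastructure": 5, "budget": 4},
}


def weighted_score(scores: dict, criteria_weights: dict) -> float:
    """Sum of score * weight across all criteria."""
    return sum(scores[name] * weight for name, weight in criteria_weights.items())


def rank_platforms(all_scores: dict, criteria_weights: dict) -> list:
    """Return (platform, score) pairs sorted from best to worst."""
    totals = {
        platform: weighted_score(scores, criteria_weights)
        for platform, scores in all_scores.items()
    }
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


for platform, total in rank_platforms(poc_scores, weights):
    print(f"{platform}: {total:.2f}")
```

Agreeing on the weights with stakeholders before scoring keeps the decision documented and makes it harder for a single impressive demo to outweigh the criteria that matter most.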

What is Kanerika's FLIP platform?

Kanerika’s FLIP is a unified data platform with built-in governance, data quality, and AI capabilities designed to accelerate enterprise data integration and migration projects. FLIP includes migration accelerators that automate transitions from legacy platforms like Informatica, Talend, and Alteryx to modern environments including Microsoft Fabric, Databricks, and Azure Data Factory. The platform preserves business logic during migration while reducing manual effort significantly. FLIP’s enterprise workflow automation handles complex data pipelines with compliance and security controls embedded throughout. Contact Kanerika to see how FLIP accelerates your data platform modernization with reduced risk.

What tools are top alternatives to Informatica and Talend for ETL?

Top alternatives to Informatica and Talend for ETL include Microsoft Fabric, Azure Data Factory, Databricks, Snowflake with dbt, and AWS Glue. Microsoft Fabric offers unified analytics with integrated data orchestration ideal for Microsoft-centric enterprises. Databricks excels for organizations building Lakehouse architectures combining ETL with machine learning workloads. Azure Data Factory provides serverless orchestration with consumption pricing. Snowflake paired with dbt delivers transformation capabilities for warehouse-first strategies. AWS Glue suits Amazon ecosystem deployments. Each alternative addresses different architectural preferences and cloud strategies. Kanerika evaluates these Informatica and Talend alternatives against your specific requirements for informed platform decisions.