When Zuellig Pharma migrated its SAP landscape across 40 integrated systems into Microsoft Azure, the work involved staged extraction, quality checks, transformation rules, and governance controls running in parallel across 13 Asian markets.

That structure is what separates teams that finish on time from ones that don’t. Gartner research finds that 83% of data migration projects fail outright or exceed budgets and schedules, and across Kanerika’s 100+ enterprise migrations the failure patterns are consistent regardless of source platform. A defined lifecycle is the difference between a controlled rollout and a 90-day emergency.

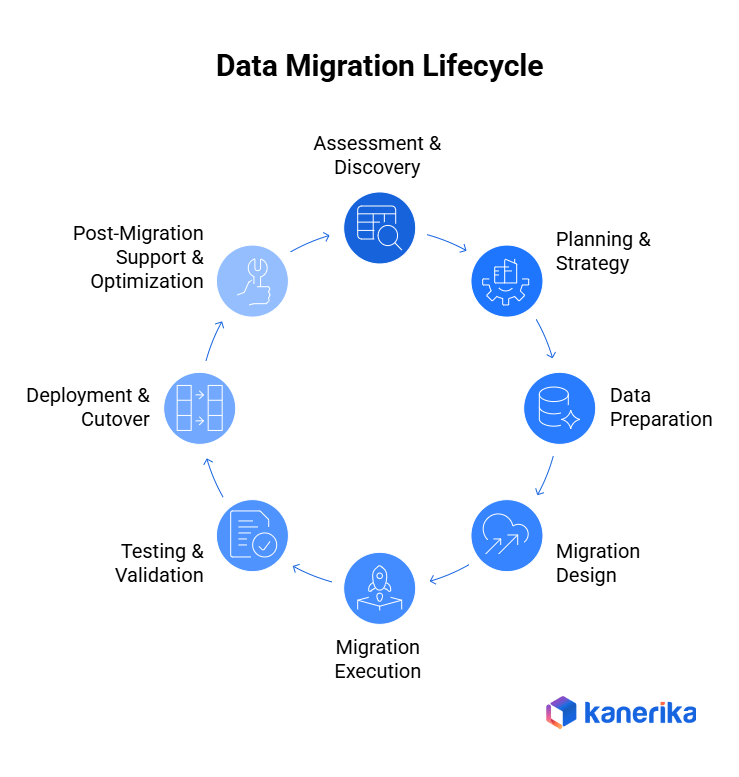

In this article, we’ll cover what the data migration lifecycle is, walk through each of the 8 phases with the failure pattern that derails it, and share the practices that keep enterprise migrations predictable.

Key Takeaways

- Enterprise data migration is a structured lifecycle involving assessment, planning, execution, validation, and optimization, not a one-time technical task.

- Skipping early activities like data discovery, profiling, and cleansing is a key reason many migration projects run over budget or miss deadlines.

- Breaking the migration into clear phases reduces risk, improves data quality, and builds confidence in the target system.

- Ongoing testing, reconciliation, and business user involvement ensure the migrated data supports real-world workflows.

- Governance, automation, and security must be part of the process throughout the lifecycle for compliance and scalability.

- Kanerika’s FLIP platform helps automate the migration lifecycle, enabling faster and lower-risk enterprise migrations.

What Is the Data Migration Lifecycle?

The data migration lifecycle is an end-to-end procedure for assessing, preparing, moving, validating, and stabilizing data when transitioning between systems. It treats migration as a controlled business process with checkpoints at each phase, not a single technical handoff.

The lifecycle differs from a migration framework or a migration strategy in scope. A framework defines governance, ownership, and decision rights. A strategy defines the approach (big bang, phased, hybrid). The lifecycle is the operational sequence of phases that executes both. For broader context that covers all three angles, see Kanerika’s complete data migration guide.

The lifecycle approach works because it forces teams to validate at each step. Issues caught in Phase 1 cost a fraction of what the same issues cost when caught in Phase 7. According to the IBM Institute for Business Value 2025 report, more than 25% of organizations now estimate annual losses exceeding $5 million from data quality issues alone, much of which compounds during migrations when validation is skipped.

Phase 1: Assessment and Discovery

The assessment phase establishes the foundation for the migration project by mapping the current data landscape before strategic decisions get made. Industry analysis consistently finds that around a third of enterprise data is redundant, obsolete, or trivial (ROT) and adds no business value, with the Veritas Global Databerg study being one widely cited benchmark. Discovery is what surfaces this.

A complete discovery covers source systems, data assets, dependencies, and quality posture before any migration design begins. In practice, this means combining automated profiling tools like Microsoft Purview, Talend Data Profiler, or IBM InfoSphere with manual interviews of legacy system owners, since automated tools catalog tables but rarely surface business rules buried in stored procedures.

- Catalog all data repositories including databases, ERP systems, CRM platforms, file shares, cloud applications, and third-party integrations.

- Document structured, semi-structured, and unstructured data types with their volumes, formats, and retention needs.

- Map information flow between systems to identify dependencies that could disrupt operations during cutover.

- Profile data for completeness, accuracy, consistency, and duplication using automated profiling tools.

- Classify regulated and sensitive information to establish appropriate security and access controls.
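
As a rough illustration of the profiling step in the list above, the sketch below computes per-field completeness and a duplicate rate over a handful of in-memory rows. The function name `profile_rows`, the field names, and the sample data are hypothetical; a real profile would run against the source system with one of the tools named earlier.

```python
from collections import Counter


def is_present(value):
    """Treat None and blank strings as missing; anything else counts as filled."""
    return value is not None and str(value).strip() != ""


def profile_rows(rows, key_field):
    """Return per-field completeness and the duplicate rate on key_field."""
    total = len(rows)
    if total == 0:
        return {"row_count": 0, "completeness": {}, "duplicate_rate": 0.0}

    fields = sorted({name for row in rows for name in row})
    completeness = {
        name: sum(1 for row in rows if is_present(row.get(name))) / total
        for name in fields
    }

    # Every occurrence of a key beyond the first one is counted as a duplicate.
    key_counts = Counter(row.get(key_field) for row in rows)
    duplicates = sum(count - 1 for count in key_counts.values())

    return {
        "row_count": total,
        "completeness": completeness,
        "duplicate_rate": duplicates / total,
    }


# Hypothetical sample rows standing in for a source table extract.
sample = [
    {"customer_id": "C1", "email": "a@example.com"},
    {"customer_id": "C2", "email": ""},
    {"customer_id": "C1", "email": "a@example.com"},
    {"customer_id": "C3", "email": None},
]
report = profile_rows(sample, key_field="customer_id")
```

Here `report["completeness"]["email"]` comes out at 0.5 and `report["duplicate_rate"]` at 0.25, the kind of baseline numbers the later preparation phase can be measured against.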

Common Failure Pattern In Phase 1: Teams underestimate undocumented dependencies in legacy systems. Years of stored procedures, batch jobs, and informal data feeds rarely have owners, and they only surface when something breaks mid-migration. Build the dependency map by tracing actual data flows in production, not by trusting tribal knowledge or outdated architecture diagrams.

Phase 2: Planning and Strategy

Planning translates discovery into an executable roadmap. The plan defines how the migration proceeds, who owns each decision, and what success looks like in measurable terms.

The strategy choice in this phase shapes everything that follows. Big bang migrations execute in a single cutover window with high speed but high risk. Phased migrations move data in logical groups, allowing validation between stages. Zero-downtime migrations synchronize source and target in real time and are common in 24/7 environments like financial services and healthcare.

- Select between big bang, phased, hybrid, or zero-downtime approaches based on risk tolerance and business continuity needs.

- Build realistic timelines aligned with business calendars, with buffer time between phases for unexpected issues.

- Define ownership across IT, business, and compliance, with clear escalation paths and decision authority.

- Establish KPIs including data accuracy thresholds, acceptable downtime, and performance benchmarks.

- Document risk mitigation plans for downtime, data loss, and rollback scenarios.
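
One way to make "success in measurable terms" concrete is to record the KPIs as data and check measured results against them. The sketch below assumes three KPIs (accuracy, downtime, and a query latency benchmark); the class name, field names, and every threshold value are illustrative assumptions, not figures from any specific project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MigrationKpis:
    min_accuracy: float        # share of records that must reconcile
    max_downtime_minutes: int  # longest acceptable outage window
    max_p95_query_ms: int      # performance benchmark on the target


def evaluate(kpis, measured):
    """Return the names of breached KPIs; an empty list means all passed."""
    breaches = []
    if measured["accuracy"] < kpis.min_accuracy:
        breaches.append("accuracy")
    if measured["downtime_minutes"] > kpis.max_downtime_minutes:
        breaches.append("downtime")
    if measured["p95_query_ms"] > kpis.max_p95_query_ms:
        breaches.append("performance")
    return breaches


# Illustrative thresholds and pilot measurements (assumed values).
kpis = MigrationKpis(min_accuracy=0.999, max_downtime_minutes=240, max_p95_query_ms=800)
pilot = {"accuracy": 0.9995, "downtime_minutes": 310, "p95_query_ms": 620}
breaches = evaluate(kpis, pilot)
```

With these assumed numbers, `breaches` contains only `"downtime"`, which gives the go/no-go discussion a specific item to resolve.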

Common Failure Pattern In Phase 2: Picking the strategy before completing discovery. Teams default to “phased” because it sounds safer, then discover their data dependencies require a different approach. The strategy decision should follow the dependency map, not precede it.

Phase 3: Data Preparation

Data preparation determines migration quality. The principle is simple: migrating bad data into a modern system makes the bad data faster, not better.

Preparation focuses on cleansing, standardization, transformation logic, and security controls before any pipeline runs.

- Identify and remove duplicates, missing fields, inconsistent formats, and obsolete records flagged during profiling.

- Standardize reference values, naming conventions, and data formats so the target schema receives consistent inputs.

- Document transformation rules including field mappings, calculated values, and data type conversions.

- Apply data masking, encryption, and role-based access controls for sensitive and regulated data.

- Test prepared data against quality thresholds before initiating any migration workflow.
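
The cleansing and standardization steps above might look roughly like the following for a small customer dataset. The field names, the `COUNTRY_CODES` lookup, and the choice of email as the deduplication key are all assumptions made for the example; real rules come from the transformation documentation agreed with the business.

```python
import re

# Hypothetical reference mapping from free-text country values to ISO codes.
COUNTRY_CODES = {"usa": "US", "us": "US", "united states": "US", "india": "IN"}


def standardize(record):
    """Return a cleaned copy of one customer record."""
    cleaned = dict(record)
    cleaned["name"] = " ".join(record["name"].split())      # collapse whitespace
    cleaned["email"] = record["email"].strip().lower()      # case-insensitive key
    country = record["country"].strip()
    cleaned["country"] = COUNTRY_CODES.get(country.lower(), country.upper())
    cleaned["phone"] = re.sub(r"\D", "", record["phone"])    # digits only
    return cleaned


def deduplicate(records, key):
    """Keep the first record seen for each value of key."""
    seen = set()
    unique = []
    for record in records:
        if record[key] not in seen:
            seen.add(record[key])
            unique.append(record)
    return unique


raw = [
    {"name": "  Ada   Lovelace", "email": " Ada@Example.com ", "country": "usa", "phone": "(555) 010-1234"},
    {"name": "Ada Lovelace", "email": "ada@example.com", "country": "United States", "phone": "555-010-1234"},
]
prepared = deduplicate([standardize(r) for r in raw], key="email")
```

Standardizing before deduplicating matters here: the two raw rows only collapse into one because their emails match after trimming and lowercasing.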

Common Failure Pattern In Phase 3: Treating cleansing as optional because the timeline is tight. Skipping cleansing on the assumption that “we’ll fix it after go-live” is the most expensive trade-off in the lifecycle. Post-migration cleanup typically costs 10x what pre-migration cleanup would have, and it happens with users actively complaining.

Phase 4: Migration Design

Migration design is the technical blueprint for moving data in a controlled, repeatable manner. It covers extraction logic, transformation pipelines, loading sequences, and orchestration.

Modern cloud environments favor ELT (Extract-Load-Transform) over ETL because platforms like Snowflake, Databricks, and Microsoft Fabric handle transformation natively at scale. ETL still matters for complex cleansing or when working with legacy systems that can’t tolerate raw loads.

Most enterprise migrations land on a hybrid approach: ELT for high-volume operational data using tools like Azure Data Factory or Fivetran, ETL for compliance-sensitive datasets that need pre-load transformations.

- Choose between ETL and ELT based on target platform capability and data complexity.

- Document field-level source-to-target mappings and load sequencing to preserve referential integrity.

- Build error handling, retry logic, and checkpoint management for recovery without restarting full processes.

- Deploy orchestration tools to manage scheduling, dependencies, monitoring, and automated alerts.

- Design validation checkpoints throughout the pipeline to catch errors at the source, not the destination.
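
Load sequencing that preserves referential integrity can be derived from the foreign-key relationships themselves. The sketch below uses `graphlib.TopologicalSorter` from the Python standard library (3.9+); the table names and their dependencies are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical schema: each table maps to the parent tables it references.
foreign_keys = {
    "customers": set(),
    "products": set(),
    "orders": {"customers"},
    "order_items": {"orders", "products"},
}


def load_order(dependencies):
    """Return a table order in which every parent loads before its children."""
    return list(TopologicalSorter(dependencies).static_order())


order = load_order(foreign_keys)
```

`static_order()` raises `graphlib.CycleError` if the dependencies are circular, which is a useful early signal that some tables need deferred constraints or a two-pass load.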

Common Failure Pattern In Phase 4: Designing pipelines that work for the happy path but have no error recovery. When a single transformation fails at hour 14 of a 16-hour load, teams without checkpoint management restart from zero. Build for partial recovery from day one.
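
A minimal sketch of partial recovery, assuming the load is already split into identifiable batches: completed batch IDs are written to a checkpoint file, so a rerun skips them instead of starting from zero. The function name, the JSON checkpoint format, and the `load_batch` callable are assumptions for illustration; orchestration tools generally offer their own equivalents.

```python
import json
import time
from pathlib import Path


def run_with_checkpoint(batches, load_batch, checkpoint_path,
                        max_retries=3, backoff_seconds=0.0):
    """Load batches in order, skipping any already recorded in the checkpoint."""
    path = Path(checkpoint_path)
    done = set(json.loads(path.read_text())) if path.exists() else set()

    for batch_id, rows in batches:
        if batch_id in done:
            continue  # finished in an earlier run
        for attempt in range(1, max_retries + 1):
            try:
                load_batch(rows)
                break
            except Exception:
                if attempt == max_retries:
                    raise  # the checkpoint still holds everything completed so far
                time.sleep(backoff_seconds * attempt)  # simple linear backoff
        done.add(batch_id)
        path.write_text(json.dumps(sorted(done)))  # persist after each batch
    return done
```

This only avoids repeating finished batches. It assumes `load_batch` is safe to retry, for example because each batch loads in its own transaction or the target write is idempotent.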

Phase 5: Migration Execution

Execution is the actual movement of data from source to target. It requires precision, real-time monitoring, and rapid issue resolution.

A controlled execution sequence runs through test, pilot, and full-scale loads, with each layer catching different issue types.

- Run test migrations in controlled environments to validate mappings, transformation logic, and workflow design.

- Execute pilot migrations with subsets of real data to expose performance bottlenecks and edge cases.

- Coordinate full-scale loads during approved downtime windows with business stakeholders informed.

- Monitor system performance, error logs, and data throughput continuously throughout the load.

- Implement clear escalation procedures for rapid issue resolution during the load window.

Common Failure Pattern In Phase 5: Skipping pilot loads to save time. The pilot is where format mismatches and performance issues surface in conditions that mirror production. Going directly from test to full-scale load is the fastest way to turn a 6-hour cutover into a 36-hour incident.

Phase 6: Testing and Validation

Validation confirms that migrated data is accurate, complete, and functional in the target system. Research from the SAP Insights Institute consistently shows that inadequate validation is the leading cause of migration rollbacks.

Validation covers integrity checks, application testing, performance verification, and compliance documentation.

- Compare record counts, validate key fields, and confirm no data loss or duplication using automated reconciliation.

- Test applications and reports to confirm workflows, calculations, and integrations function in the new environment.

- Run parallel testing where source and target systems operate simultaneously to compare outputs.

- Measure query response times, transaction speed, and concurrent user load against established benchmarks.

- Generate audit trails, validation reports, and compliance documentation for regulatory review.
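
The automated reconciliation in the first bullet can be sketched as a count comparison plus a per-row fingerprint keyed on the primary key. The example below works on in-memory rows with hypothetical `id` and `amount` fields; at enterprise volumes the same idea is usually pushed down into SQL on each side and only the hashes are compared.

```python
import hashlib


def row_fingerprint(row, fields):
    """Hash the chosen fields of one row in a fixed order."""
    joined = "\x1f".join(str(row[name]) for name in fields)
    return hashlib.sha256(joined.encode("utf-8")).hexdigest()


def reconcile(source_rows, target_rows, key, fields):
    """Compare source and target by key and by per-row fingerprint."""
    source = {row[key]: row_fingerprint(row, fields) for row in source_rows}
    target = {row[key]: row_fingerprint(row, fields) for row in target_rows}
    shared = source.keys() & target.keys()
    return {
        "source_count": len(source_rows),
        "target_count": len(target_rows),
        "missing_in_target": sorted(source.keys() - target.keys()),
        "unexpected_in_target": sorted(target.keys() - source.keys()),
        "mismatched": sorted(k for k in shared if source[k] != target[k]),
    }


# Hypothetical extracts from the source and target systems.
source_rows = [
    {"id": 1, "amount": "10.00"},
    {"id": 2, "amount": "20.00"},
    {"id": 3, "amount": "30.00"},
]
target_rows = [
    {"id": 1, "amount": "10.00"},
    {"id": 2, "amount": "20.50"},
]
result = reconcile(source_rows, target_rows, key="id", fields=["id", "amount"])
```

For this sample, the report flags ID 3 as missing in the target and ID 2 as mismatched, which is more actionable than a bare count difference of one.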

Common Failure Pattern In Phase 6: Validating only at the end of the migration. Concentrating risk at the moment when stakes are highest is the opposite of what continuous validation is designed to do. Validation should run inside every phase, not as a single late checkpoint.

Phase 7: Deployment and Cutover

Cutover marks the transition from legacy to the new environment. It demands precision, coordination, and clear communication with affected users.

A successful cutover combines a final go/no-go review, user transition planning, and tested rollback procedures.

- Conduct final validation checks against predetermined success criteria with all stakeholders present.

- Migrate users according to a defined communication and training plan, with support channels open during the transition.

- Maintain tested rollback plans with clear triggers and decision authority for activation.

- Provide support teams with runbooks, escalation paths, and contact information for the first 72 hours post-cutover.

- Communicate timelines, changes, and impacts in advance to reduce user anxiety and unplanned tickets.

Common Failure Pattern In Phase 7: Untested rollback plans. Teams document rollback procedures but never run them, and they discover the procedures don’t work at the worst possible moment. Run a rollback dry run during the pilot phase.

Phase 8: Post-Migration Support and Optimization

Migration success extends beyond go-live. Phase 8 covers ongoing monitoring, issue resolution, knowledge transfer, and performance optimization based on real usage patterns.

This phase converts a successful migration into sustained business value, not just a completed project.

- Implement continuous monitoring for data quality degradation, performance issues, and anomalies before users notice.

- Prioritize and resolve post-migration defects systematically with severity-based triage.

- Conduct user training and knowledge transfer with detailed documentation and runbooks.

- Fine-tune system performance based on actual usage patterns, not theoretical benchmarks.

- Capture lessons learned to refine the migration framework for the next project.

Common Failure Pattern In Phase 8: Declaring victory at go-live and pulling the team off to the next project. Migration issues compound in the first 30 days post-cutover. A team that disbands on day one is rebuilt under pressure on day fifteen.

Cognos vs Power BI: A Complete Comparison and Migration Roadmap

A comprehensive guide comparing Cognos and Power BI, highlighting key differences, benefits, and a step-by-step migration roadmap for enterprises looking to modernize their analytics.

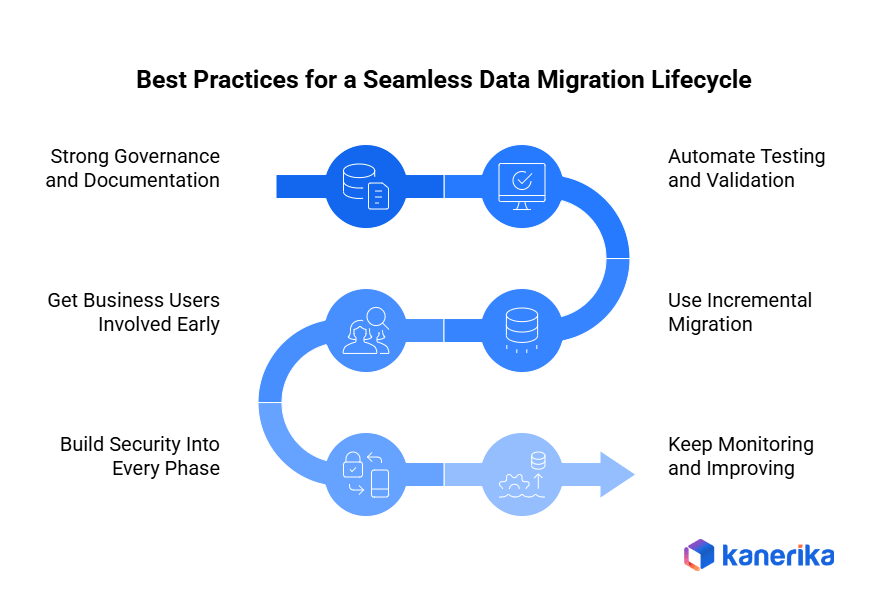

Best Practices for a Seamless Data Migration Lifecycle

Even carefully designed migrations can fall apart without proper execution. Following proven practices helps you reduce risk, maintain quality, and keep business operations running.

1. Strong Governance and Documentation

You need good governance for clarity and accountability. When data ownership is clear, decisions about scope, quality, and approvals happen fast. Getting IT teams and business stakeholders aligned cuts down on miscommunication and delays.

Documentation matters just as much. Capture the critical stuff like data mappings, transformation rules, validation criteria, and operational procedures. When docs are well-maintained, future audits and troubleshooting get much easier. Plus, you can repeat the process for other migrations without starting from scratch.

2. Automate Testing and Validation

Manual testing becomes a problem as your data grows. It’s unreliable and takes forever. Automated testing keeps things consistent across multiple runs. Human error goes down, too.

With automation, teams can run the same integrity checks every time. Source and target reconciliation happens faster. Performance validation becomes routine. The big win? You catch issues early, when they’re easier to fix, not during cutover, when everything’s on the line.

3. Use Incremental Migration

Moving everything at once is risky. A phased approach is usually smarter. You migrate data in stages, which lets teams validate each chunk, get feedback, and fix problems before moving forward. The whole system doesn’t blow up if something goes wrong.

Downtime gets minimized. Business disruption decreases. You maintain better control when things get complex. For enterprise systems that need to stay up and running, this approach makes way more sense.

4. Get Business Users Involved Early

Business users are the ones who know if migrated data actually works. Get them involved during discovery, not after everything’s done. Keep them engaged through validation and post-migration support.

When they join early, you can confirm business rules are correct. They spot data problems that technical teams miss. They help define what success looks like in practical terms. Their ongoing involvement builds confidence in the new system. Adoption rates after launch improve significantly.

5. Build Security Into Every Phase

Don’t bolt security on at the end. Build it into each phase from the start. Find sensitive data early. Protect it with encryption, masking, and proper access controls.

This keeps you compliant with the regulations that apply to your business and lowers the risk of data exposure during migration. Customers, partners, and regulators keep their trust in how you handle information.

6. Keep Monitoring and Improving

Deployment isn’t the finish line. Keep monitoring after launch. Data quality issues pop up. Performance gaps appear. Unexpected behavior happens. Catch these things quickly.

Document what you learn. Refine your processes based on real experience. Future migrations get smoother. What starts as a risky event becomes a standard capability your organization can handle confidently.

Why Data Migration with Kanerika Is Faster and More Accurate

Kanerika offers end-to-end data migration services with a focus on speed, accuracy, and minimal business disruption. Our approach uses automated migration accelerators built for specific paths like Tableau to Power BI, SSIS to Microsoft Fabric, Informatica to Talend, and UiPath to Power Automate. Each accelerator is purpose-built rather than a generic script, which is why our migrations run faster and with fewer surprises than manual rebuilds.

Our FLIP platform is an AI-powered, low-code system that automates conversion, mapping, and validation while preserving business logic. FLIP supports 12 migration paths across data platform, BI, and RPA categories. It is listed on the Azure Marketplace and is eligible for Microsoft Azure Committed Spend. Our governance suite (KANGovern, KANComply, KANGuard), built on Microsoft Purview, carries existing access controls and permissions through the transition so data stays protected end-to-end.

As a Microsoft Solutions Partner for Data and AI, Microsoft Fabric Featured Partner, and Snowflake Consulting Partner, Kanerika brings credentialed expertise across the modern data stack. We were also one of the earliest Microsoft Purview implementors globally, and we bring that governance experience to every migration we run. Kanerika serves 100+ enterprise clients with 98% client retention.

Case Study: Elevating Data Maturity for a Global Packaging Leader

A global leader in packaging solutions partnered with Kanerika to consolidate fragmented data operations and modernize its analytics stack. The migration moved Azure Data Factory and Synapse workloads to Microsoft Fabric while keeping operations running.

Challenges:

- Scattered workflows across Azure Data Factory and Synapse fragmented data operations and reduced visibility across teams.

- An intermediate Parquet conversion process introduced latency, periodic failures, and architectural bloat that delayed ingestion.

- Inconsistent setups, redundant processes, and no unified governance model limited scalability across business units.

Solutions:

- Migrated Azure assets to Microsoft Fabric using Kanerika’s FLIP migration accelerator, preserving code integrity and business logic.

- Enabled direct SAP C4C to Fabric integration, removing redundant processing layers and improving data flow reliability.

- Established a unified governance framework with standardized naming conventions, version control, and documentation.

Results:

- 30% reduction in cloud and data costs.

- 50% improvement in data pipeline performance.

- 80% faster business insights and reporting delivery.

Conclusion

The data migration lifecycle works because it forces structure where teams default to improvisation. Each of the 8 phases has a specific job, a specific risk, and a specific failure pattern.

The early phases are where most projects break without warning. Discovery and preparation deserve more time than they typically get. Validation belongs inside every phase, not just at the end.

The work doesn’t end at cutover. Teams that treat the lifecycle as a repeatable capability instead of a one-time event compound their advantage with every subsequent migration.

FAQs

What is the data migration lifecycle?

The data migration lifecycle is a structured framework that guides organizations through moving data from source systems to target environments. It encompasses planning, profiling, design, extraction, transformation, loading, validation, and go-live phases. Each stage builds on the previous one to ensure data integrity, minimize downtime, and reduce business disruption. A well-defined migration lifecycle helps enterprises avoid costly errors and ensures compliance with data governance standards throughout the transition. Kanerika’s data platform migration experts design lifecycle strategies tailored to your infrastructure—connect with us to plan your migration journey.

What are the stages of migration?

The stages of migration typically include assessment, planning, data profiling, design, extraction, transformation, loading, testing, validation, and cutover. During assessment, teams evaluate source systems and data quality. Planning establishes timelines, resources, and risk mitigation strategies. Extraction pulls data from legacy systems, transformation cleanses and reformats it, and loading transfers it to the target platform. Testing and validation ensure accuracy before final cutover to production. These migration stages create a repeatable process that reduces errors. Kanerika accelerates each stage with proven migration accelerators—reach out to streamline your next project.

What are the steps for data migration?

The steps for data migration begin with discovery and assessment to understand source data structures and quality issues. Next comes planning, where teams define scope, timelines, and success criteria. Data profiling identifies anomalies requiring cleansing. The design phase establishes mapping rules between source and target schemas. Extraction, transformation, and loading move data while applying business logic. Testing validates accuracy, and cutover completes the transition. Post-migration monitoring ensures ongoing data integrity. Each data migration step demands precision to prevent business disruption. Kanerika delivers end-to-end migration services—talk to our specialists for a detailed roadmap.

What are the 6 R's of data migration?

The 6 R’s of data migration are Rehost, Replatform, Repurchase, Refactor, Retain, and Retire. Rehosting lifts and shifts applications without changes. Replatforming makes minor optimizations during migration. Repurchasing replaces legacy systems with new solutions. Refactoring involves re-architecting applications for cloud-native capabilities. Retaining keeps certain workloads on-premises temporarily. Retiring decommissions obsolete systems entirely. Selecting the right R depends on business goals, cost constraints, and technical debt. These migration strategies help enterprises prioritize workloads effectively. Kanerika evaluates your portfolio against the 6 R’s to build a migration strategy that maximizes ROI—schedule your assessment today.

What are the key stages in a data migration lifecycle?

The key stages in a data migration lifecycle include assessment, planning, data profiling, architecture design, extraction, transformation, loading, validation, cutover, and post-migration support. Assessment evaluates existing systems and data quality. Planning defines scope, resources, and timelines. Data profiling uncovers inconsistencies requiring remediation. Architecture design maps source-to-target schemas. ETL processes move and transform data accurately. Validation confirms completeness and accuracy before cutover transitions users to the new environment. Post-migration monitoring catches residual issues. These lifecycle stages ensure controlled, predictable outcomes. Kanerika’s migration lifecycle expertise minimizes risk at every phase—contact us for a consultation.

What is the best approach for data migration?

The best approach for data migration combines thorough planning, incremental execution, and rigorous validation. Start with comprehensive data profiling to understand quality issues and dependencies. Use a phased migration strategy rather than big-bang cutover to reduce risk and allow iterative testing. Implement automated ETL pipelines for consistency and repeatability. Establish clear rollback procedures before each phase. Validate data accuracy at every stage using checksums and reconciliation reports. The right migration approach balances speed with data integrity to minimize business disruption. Kanerika tailors migration approaches to your specific environment—request a free assessment to define yours.

What are the four types of data migration?

The four types of data migration are storage migration, database migration, application migration, and cloud migration. Storage migration moves data between physical or virtual storage systems without altering structure. Database migration transfers data between database platforms, often requiring schema conversion. Application migration shifts entire applications along with their associated data to new environments. Cloud migration moves on-premises data and workloads to cloud infrastructure like Azure or AWS. Each migration type presents unique challenges around compatibility, performance, and security. Kanerika specializes in all four migration types with accelerators that reduce timelines significantly—let us assess your migration needs.

Is ETL the same as data migration?

ETL is not the same as data migration, though ETL is often a critical component within migration projects. ETL stands for Extract, Transform, Load—a process that pulls data from sources, applies transformations, and loads it into target systems. Data migration is the broader initiative of moving data between environments, which may include ETL alongside planning, validation, cutover, and decommissioning activities. Migration encompasses strategy, governance, and change management beyond technical data movement. Understanding this distinction helps organizations allocate resources correctly across the migration lifecycle. Kanerika builds ETL pipelines as part of comprehensive migration solutions—explore how we integrate both seamlessly.

What is the ETL process of data migration?

The ETL process of data migration involves three core operations: extraction, transformation, and loading. Extraction pulls data from source systems including databases, flat files, or APIs. Transformation applies business rules, cleanses inconsistencies, reformats data types, and maps fields to target schemas. Loading inserts the transformed data into destination platforms like data warehouses or cloud environments. ETL pipelines can run as batch processes or near-real-time streams depending on requirements. Proper ETL design ensures data accuracy and consistency throughout the migration lifecycle. Kanerika engineers robust ETL architectures for complex enterprise migrations—connect with us to modernize your data pipelines.

Why is following a data migration lifecycle important?

Following a data migration lifecycle is important because it provides structure, reduces risk, and ensures predictable outcomes. Without a defined lifecycle, migrations often suffer from scope creep, data quality issues, and extended downtime. A structured approach enforces checkpoints for validation, enabling teams to catch errors before they cascade. It also facilitates stakeholder communication and resource planning. Organizations that skip lifecycle phases frequently experience data loss, compliance violations, and budget overruns. The lifecycle framework transforms complex migrations into manageable, repeatable processes. Kanerika implements proven lifecycle methodologies that deliver migrations on time and within budget—speak with our team to get started.

How long does a typical data migration lifecycle take?

A typical data migration lifecycle takes anywhere from a few weeks to over a year depending on data volume, complexity, and organizational readiness. Small-scale migrations with clean data may complete in four to eight weeks. Enterprise migrations involving multiple legacy systems, complex transformations, and regulatory requirements often span six to eighteen months. Factors affecting timeline include data quality, source system accessibility, target platform readiness, and testing rigor. Rushed migrations frequently result in quality issues that extend overall project duration. Kanerika’s migration accelerators reduce typical timelines by up to forty percent—request a consultation to estimate your project duration accurately.

What are the most common challenges in the data migration lifecycle?

The most common challenges in the data migration lifecycle include poor data quality, inadequate planning, schema incompatibility, and insufficient testing. Legacy systems often contain duplicate, incomplete, or inconsistent data requiring extensive cleansing. Underestimating scope leads to timeline and budget overruns. Differences between source and target schemas create mapping complexities. Insufficient validation causes data integrity issues post-migration. Business disruption during cutover and resistance to change further complicate projects. Addressing these migration challenges requires thorough profiling, stakeholder alignment, and iterative testing throughout the lifecycle. Kanerika’s experienced teams navigate these challenges daily—partner with us to avoid common migration pitfalls.

What are the basics of data migration?

The basics of data migration involve transferring data from one system, storage format, or computing environment to another. Every migration requires understanding source data structures, defining target requirements, and establishing transformation rules. Core activities include data extraction, cleansing, mapping, loading, and validation. Success depends on thorough planning, clear ownership, and rigorous testing. Data migration basics also encompass risk assessment, rollback planning, and stakeholder communication. Whether moving to cloud platforms or consolidating databases, these fundamentals remain consistent across migration types. Kanerika helps organizations master migration basics and scale to enterprise complexity—reach out to build your foundation.

Which tool is used for data migration?

Tools used for data migration include Microsoft Fabric, Azure Data Factory, Informatica, Talend, AWS Database Migration Service, and Databricks. Selection depends on source and target platforms, data volumes, transformation complexity, and budget. Microsoft Fabric excels for organizations standardizing on the Microsoft ecosystem. Informatica and Talend offer robust enterprise ETL capabilities. Cloud-native tools like AWS DMS simplify database migrations to cloud platforms. Many enterprises combine multiple migration tools based on workload requirements. The right tool accelerates the migration lifecycle while ensuring data integrity. Kanerika is certified across leading migration platforms—let us recommend the optimal toolset for your environment.