In August 2025, Lenovo’s GPT-4-powered chatbot was compromised, exposing customer data and highlighting the rapid deployment of AI tools without adequate safeguards. Google also reported a mass data theft incident linked to the Salesloft Drift AI chat agent, prompting emergency shutdowns. These incidents show that AI systems are not just vulnerable; they are being actively targeted.

According to Cisco’s 2025 AI Security Report, 84% of enterprises using AI have experienced data leaks, and 75% cite governance as their top concern. AI is now embedded in fraud detection, diagnostics, and customer service, yet most organizations still rely on security models designed for conventional software. With threats such as prompt injection, model theft, and shadow AI growing rapidly, a structured AI security framework is no longer optional.

In this blog, we’ll break down what an AI security framework actually is, why your enterprise needs one, and which specific frameworks can protect your AI investments. We’ll cover five proven approaches and help you choose the right one for your organization.

What Is an AI Security Framework?

An AI security framework is a structured set of rules, processes, and tools designed to protect AI systems from misuse, adversarial attacks, and compliance risks.

Unlike traditional cybersecurity frameworks, AI security frameworks account for the unique nature of machine learning models, which can drift over time, learn from biased data, and be manipulated in ways that standard software cannot.

Think of them as blueprints for AI protection. They help enterprises:

- Identify AI-specific risks

- Define controls to prevent misuse

- Ensure compliance with laws like the EU AI Act and HIPAA

- Build trust with customers and regulators


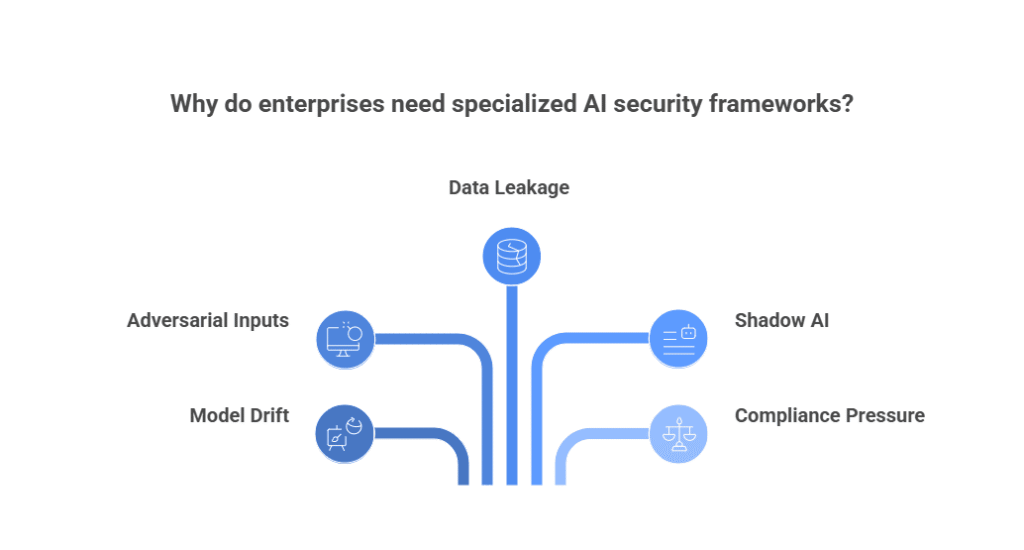

Why Enterprises Need Specialized AI Security Frameworks

AI systems don’t follow fixed rules. They learn, adapt, and sometimes fail in unpredictable ways. That brings new risks:

- Model drift: accuracy degrades as production data shifts away from the data the model was trained on

- Adversarial inputs: attackers craft data that tricks the model into wrong outputs

- Data leakage: sensitive information is exposed through model outputs

- Shadow AI: teams use unapproved AI tools without oversight

- Compliance pressure: laws now demand transparency and accountability

Traditional cybersecurity tools don’t cover these. That’s why enterprises need frameworks built for AI.
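To make one of these risks concrete, here is a minimal sketch of how model drift might be flagged by comparing a feature's distribution at training time with what the model sees in production. It uses the Population Stability Index (PSI), a common drift metric. The sample data, the function name, and the 0.2 threshold are illustrative assumptions, not part of any framework discussed here.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two samples of one numeric feature; larger values mean more drift."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def fractions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the training range land in the edge bins.
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: scores at training time vs. scores seen in production.
training = [i / 100 for i in range(100)]
production_same = [i / 100 for i in range(100)]
production_shifted = [0.5 + i / 200 for i in range(100)]

DRIFT_THRESHOLD = 0.2  # assumed rule-of-thumb cut-off; tune per model

psi_same = population_stability_index(training, production_same)
psi_shifted = population_stability_index(training, production_shifted)
print(psi_same > DRIFT_THRESHOLD)     # no drift expected for identical data
print(psi_shifted > DRIFT_THRESHOLD)  # drift expected for the shifted sample
```

In practice a check like this would run on a schedule for each monitored feature, with alerts routed to whoever owns the model.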

Types of AI Security Frameworks

Different frameworks have been created to help companies protect their AI systems. Each one tackles different problems. Some cover the entire AI lifecycle, while others focus on specific threats, and some address advanced autonomous AI agents.

Here’s a breakdown of the most important AI security frameworks in 2025:

1. NIST AI Risk Management Framework (AI RMF)

The NIST AI RMF is one of the most widely referenced AI governance and security frameworks.

Key features:

- Lifecycle-based: Govern, Map, Measure, Manage

- Provides structured risk assessment for AI projects

- Emphasizes trustworthy AI principles (fairness, transparency, accountability)

Best for:

- Highly regulated industries like healthcare, finance, and government

- Enterprises that need a compliance-focused approach

- Teams seeking a systematic way to measure and reduce AI risk
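One simple way to start working with the four functions is to tag each existing control with the function it supports and then look for functions with no coverage. The sketch below assumes a hypothetical list of internal controls; the `Control` class, the `coverage_gaps` helper, and the control names are illustrative and do not come from NIST.

```python
from dataclasses import dataclass

RMF_FUNCTIONS = ("Govern", "Map", "Measure", "Manage")


@dataclass(frozen=True)
class Control:
    name: str
    function: str  # expected to be one of RMF_FUNCTIONS


def coverage_gaps(controls):
    """Return the RMF functions that have no control mapped to them."""
    covered = {c.function for c in controls}
    unknown = covered - set(RMF_FUNCTIONS)
    if unknown:
        raise ValueError(f"unknown RMF function(s): {sorted(unknown)}")
    return [f for f in RMF_FUNCTIONS if f not in covered]


# Hypothetical internal controls, for illustration only.
controls = [
    Control("AI usage policy approved by risk committee", "Govern"),
    Control("Inventory of models and their data sources", "Map"),
    Control("Incident review and model rollback plan", "Manage"),
]

print(coverage_gaps(controls))  # ['Measure']
```

Here the gap report shows that nothing yet measures model behavior, which would be the next area to address.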

2. Microsoft AI Security Framework

Microsoft has introduced its AI Security Framework to ensure the responsible and secure use of AI.

Key features:

- Covers Security, Privacy, Fairness, Transparency, Accountability

- Works seamlessly with Azure AI and cloud tools, but principles apply to any platform

- Includes best practices for safeguarding data and preventing misuse

Best for:

- Enterprises already using Microsoft Azure

- Organizations prioritizing responsible AI and governance alongside security

- Teams looking for a practical, implementation-ready guide

3. MITRE ATLAS Framework

The MITRE ATLAS (Adversarial Threat Landscape for AI Systems) framework focuses squarely on AI threats and attacker tactics.

Key features:

- Catalogs real-world AI attack methods, including:

  - Model stealing

  - Data poisoning

  - Adversarial evasion

- Supports red teaming and threat modeling for AI systems

- Helps defenders anticipate how adversaries target AI models

Best for:

- Security operations (SOC) teams defending AI systems

- Organizations deploying machine learning models in production

- Enterprises wanting to understand and simulate attacker behavior


4. Databricks AI Security Framework (DASF)

The Databricks AI Security Framework (DASF) bridges the gap between business, data, and security teams.

Key features:

- Lists 62 AI risks and 64 controls

- Platform-agnostic but inspired by NIST AI RMF and MITRE ATLAS

- Provides practical controls that map to enterprise needs

Best for:

- Data-heavy enterprises running large-scale AI pipelines

- Businesses seeking a comprehensive, control-based framework

- Teams wanting actionable steps instead of just principles

5. MAESTRO Framework for Agentic AI

As enterprises adopt autonomous AI systems, like agentic AI, traditional frameworks fall short. That’s where the MAESTRO Framework comes in.

Key features:

- Built specifically for agentic and autonomous AI

- Addresses evolving risks such as:

  - Goal manipulation

  - Synthetic feedback loops

- Includes guidance for AI-driven SOCs, RPA systems, and OpenAI API-based agents

Best for:

- Enterprises experimenting with agentic AI workflows

- Security teams handling autonomous AI deployments

- Early adopters preparing for next-generation AI threats

How to Choose the Right Framework

- Use NIST if you need structured risk management

- Use Microsoft if you care about ethical and responsible AI

- Use MITRE ATLAS if you need deep threat modeling

- Use Databricks DASF for cross-team collaboration

- Use MAESTRO if you’re working with autonomous agents

Many enterprises mix and match based on their AI maturity, risk profile, and compliance needs.

| Framework | Focus Area | Best For | Unique Strength |
| --- | --- | --- | --- |
| NIST AI RMF | Governance & Lifecycle | Regulated industries | Structured, compliance-ready |
| Microsoft AI Security | Responsible AI principles | Azure ecosystems | Balance of ethics + security |
| MITRE ATLAS | Threat modeling | Security teams | Adversarial attack catalog |
| Databricks DASF | Risk + control mapping | Data-driven enterprises | 62 risks, 64 actionable controls |
| MAESTRO | Agentic AI risks | Autonomous systems | Future-proof against evolving threats |
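The selection guidance above can be captured as a small lookup, which is handy when a team wants to record why a given mix of frameworks was chosen. This is only a sketch: the need labels are paraphrased from the list above, and the `recommend` helper is a hypothetical name.

```python
# Need labels are lowercase paraphrases of the guidance in this section.
FRAMEWORK_BY_NEED = {
    "structured risk management": "NIST AI RMF",
    "ethical and responsible ai": "Microsoft AI Security Framework",
    "deep threat modeling": "MITRE ATLAS",
    "cross-team collaboration": "Databricks DASF",
    "autonomous agents": "MAESTRO",
}


def recommend(needs):
    """Return (frameworks, unmatched needs), keeping input order and dropping duplicates."""
    picks, unmatched = [], []
    for need in needs:
        framework = FRAMEWORK_BY_NEED.get(need.strip().lower())
        if framework is None:
            unmatched.append(need)
        elif framework not in picks:
            picks.append(framework)
    return picks, unmatched


picks, unmatched = recommend(
    ["Structured risk management", "deep threat modeling", "quantum safety"]
)
print(picks)      # ['NIST AI RMF', 'MITRE ATLAS']
print(unmatched)  # ['quantum safety']
```

Returning the unmatched needs separately makes it obvious when a requirement falls outside all five frameworks and needs its own analysis.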

Real-World Use Cases

Healthcare: Workday and IBM Using NIST for Patient Data Protection

Workday, a global HR and finance software provider, uses the NIST AI Risk Management Framework to align its internal AI governance processes. Their Privacy and Data Engineering team mapped existing controls to NIST’s Govern, Map, Measure, and Manage functions. They created templates and SOPs to operationalize responsible AI across teams.

IBM also adopted NIST AI RMF. Their Chief Privacy Office led a three-phase audit comparing IBM’s internal Ethics by Design methodology to NIST’s framework. IBM now recommends that government agencies adopt NIST RMF for AI governance.

These efforts help ensure AI systems used in healthcare, HR, patient planning, and data analytics meet ethical and legal standards.

Finance: MITRE ATLAS for Threat Modeling in Fraud Detection

Researchers used adversarial inputs to bypass the machine learning malware scanner built by Cylance, a cybersecurity firm acquired by BlackBerry. The case is documented in MITRE ATLAS and shows how attackers can combine public information with reverse engineering to evade detection.

MITRE ATLAS helped security teams understand tactics like model evasion, data poisoning, and prompt injection. It also guided mitigation strategies like retraining models with adversarial samples and tightening API access.
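As one illustration of what tightening API access can look like, the sketch below limits how many queries each client can send to a model endpoint within a time window. Probing a model for evasion or extraction usually takes many queries, so a per-client cap raises the attacker's cost. The `QueryRateLimiter` class and its parameters are hypothetical, and a real deployment would typically enforce this at an API gateway.

```python
import time
from collections import defaultdict, deque


class QueryRateLimiter:
    """Sliding-window limiter: at most max_queries per client per window."""

    def __init__(self, max_queries, window_seconds, clock=time.monotonic):
        self.max_queries = max_queries
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self._history = defaultdict(deque)

    def allow(self, client_id):
        now = self.clock()
        history = self._history[client_id]
        # Drop timestamps that have fallen out of the window.
        while history and now - history[0] >= self.window_seconds:
            history.popleft()
        if len(history) >= self.max_queries:
            return False
        history.append(now)
        return True


# Usage with a fake clock so the example is deterministic.
fake_now = [0.0]
limiter = QueryRateLimiter(max_queries=3, window_seconds=60, clock=lambda: fake_now[0])

results = [limiter.allow("client-a") for _ in range(4)]
print(results)  # [True, True, True, False]

fake_now[0] = 61.0  # the earlier queries have aged out of the window
print(limiter.allow("client-a"))  # True
```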

Financial institutions now use MITRE ATLAS to model threats against fraud detection systems, ensuring their AI tools can withstand real-world attacks.

Retail: UiPath Maestro for Agentic AI in Customer Service

Abercrombie & Fitch, Johnson Controls, and Wärtsilä use UiPath Maestro, a commercial agentic orchestration product that shares a name with the MAESTRO framework but is a separate offering, to orchestrate agentic AI in customer service and operations.

- Abercrombie & Fitch uses agentic automation to streamline complex workflows like accounts payable.

- Johnson Controls automates end-to-end processes using AI agents and robots, improving speed and accuracy.

- Wärtsilä, a global marine and energy company, integrates agentic orchestration to manage business processes across systems.

- EY and CGI also partner with UiPath to deploy agentic AI for clients, combining automation, AI agents, and human oversight to deliver scalable customer service solutions.

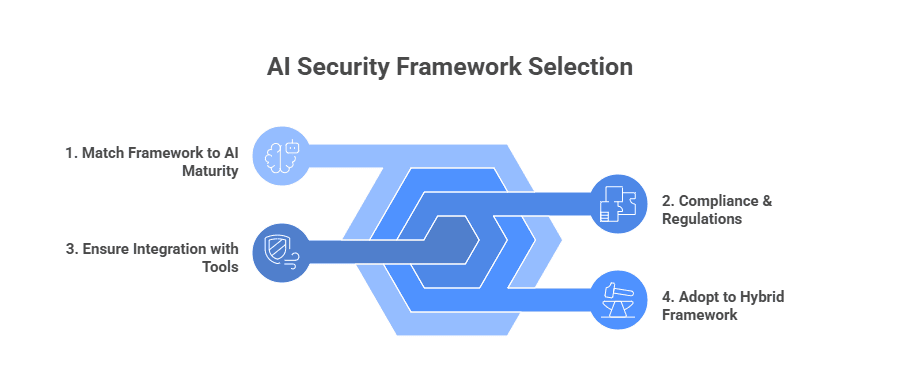

How to Choose the Right AI Security Framework

Selecting the right AI security framework is not a one-size-fits-all decision. The best choice depends on your organization’s AI maturity, regulatory environment, and existing security infrastructure. Here are key considerations:

1. Match the Framework to Your AI Maturity

Early-stage AI adoption: Organizations experimenting with AI pilots should start with flexible frameworks like NIST AI RMF, which provide high-level guidance on managing risks without overwhelming technical requirements.

Advanced AI deployment: Companies already running large-scale AI systems may benefit from more specialized frameworks like MITRE ATLAS for adversarial threats or MAESTRO for multi-agent security.

2. Consider Compliance and Regulatory Needs

If your industry is heavily regulated (healthcare, finance, government), frameworks that align closely with global standards such as ISO/IEC 23894 or NIST are often the safest choices.

These frameworks can be mapped to compliance requirements like GDPR, HIPAA, or PCI DSS, which helps reduce legal risk.

3. Look at Integration with Existing Tools and Processes

Evaluate whether the framework can integrate with your current DevSecOps pipelines, monitoring systems, and governance tools.

For example, MITRE ATLAS aligns well with existing threat modeling tools, making it easier to add AI-specific security without reinventing the wheel.

4. Use Hybrid Approaches if Needed

Many enterprises adopt a hybrid strategy, combining elements from different frameworks.

For instance, a financial institution may use NIST for governance while applying MITRE ATLAS for red-teaming AI models.

This layered approach ensures broader coverage across governance, compliance, and active threat defense.

Case Study: Real-Time Compliance and Risk Detection

Client: A global expert network platform connecting decision-makers with over one million subject-matter experts.

Challenge: The client’s compliance team manually screened experts for negative news across public sources. This caused delays, backlogs, and inconsistent vetting.

Solution: Kanerika built an AI-powered compliance agent that automated expert profiling, scraped news and social media, and applied rule-based logic to flag risks. The agent generated structured reports with citations and mapped findings to compliance rules.

Impact:

- 60% faster screening

- 70% fewer backlog cases

- 40% reduction in event delays

- Standardized and auditable risk assessments
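To show the general shape of rule-based flagging with citations, here is a minimal sketch. It is not the implementation described in the case study: the rule names, keywords, and data classes are all hypothetical, and a production system would need far more careful matching than substring checks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Article:
    url: str
    text: str


@dataclass(frozen=True)
class Finding:
    rule: str  # which compliance rule was triggered
    url: str   # citation back to the source article


# Hypothetical compliance rules: rule name -> trigger keywords.
RULES = {
    "sanctions": ("sanction", "embargo"),
    "fraud": ("fraud", "embezzle"),
    "litigation": ("lawsuit", "indicted"),
}


def screen(articles):
    """Return one Finding per (article, rule) pair whose keywords appear in the text."""
    findings = []
    for article in articles:
        lowered = article.text.lower()
        for rule, keywords in RULES.items():
            if any(keyword in lowered for keyword in keywords):
                findings.append(Finding(rule, article.url))
    return findings


articles = [
    Article("https://example.com/a", "Executive named in fraud lawsuit."),
    Article("https://example.com/b", "Company opens new office."),
]

for finding in screen(articles):
    print(finding.rule, finding.url)
```

Because every finding carries the rule that fired and the source URL, the resulting report can be audited line by line.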

Securing AI Systems with Kanerika’s Proven AI Security Framework

At Kanerika, we design AI security frameworks that help enterprises protect their models, data, and workflows from evolving threats. Our layered approach combines data governance, risk detection, and compliance automation to secure AI systems across industries.

We use tools like Microsoft Purview to classify sensitive data, detect insider risks, and enforce policies automatically. Our framework supports AI TRiSM principles, making sure every AI model we deploy is transparent, accountable, and aligned with ethical standards. This helps our clients stay compliant with regulations like GDPR, HIPAA, and the EU AI Act.

Our partnerships with Microsoft, Databricks, and AWS allow us to deliver scalable, enterprise-grade AI security solutions. With certifications like ISO 27701, SOC 2, and CMMI Level 3, we back our work with proven security and quality standards. Whether you’re working with LLMs, RPA bots, or autonomous agents, our AI security framework is built to adapt and protect.

Partner with us to build a trusted AI security framework that protects your data, ensures compliance, and scales with your enterprise.


FAQs

What is an AI security framework?

An AI security framework is a structured set of guidelines, policies, and best practices designed to protect artificial intelligence systems from threats while ensuring responsible deployment. It addresses unique risks like data poisoning, model theft, adversarial attacks, and bias propagation that traditional cybersecurity frameworks overlook. These frameworks establish governance protocols for the entire AI lifecycle, from data collection through model training to production deployment. They help organizations maintain compliance, build trust, and mitigate emerging vulnerabilities. Kanerika implements enterprise-grade AI security frameworks tailored to your specific risk profile—connect with our specialists to safeguard your AI investments.

What are the 5 pillars of an AI framework?

The five pillars of an AI framework typically include governance, risk management, data security, model integrity, and compliance monitoring. Governance establishes accountability structures and decision-making protocols. Risk management identifies and mitigates threats across the AI lifecycle. Data security ensures training datasets remain protected from tampering and unauthorized access. Model integrity safeguards against adversarial manipulation and drift. Compliance monitoring maintains alignment with regulatory requirements like GDPR and industry standards. Together, these pillars create a comprehensive AI security architecture that organizations can operationalize effectively. Kanerika helps enterprises implement all five pillars cohesively—schedule a consultation to strengthen your AI foundation.

What is the NIST security framework for AI?

The NIST AI Risk Management Framework provides voluntary guidance for organizations to design, develop, and deploy trustworthy AI systems. Released by the National Institute of Standards and Technology, it emphasizes four core functions: govern, map, measure, and manage. The framework addresses AI-specific concerns including bias, explainability, security vulnerabilities, and privacy risks throughout the system lifecycle. Unlike traditional cybersecurity frameworks, NIST AI RMF specifically targets machine learning model risks and sociotechnical considerations. It is designed to complement existing enterprise risk programs. Kanerika aligns AI deployments with NIST standards to ensure regulatory readiness. Reach out for a compliance assessment.

Why do organizations need AI-specific security frameworks?

Organizations need AI-specific security frameworks because traditional cybersecurity measures cannot address unique vulnerabilities in machine learning systems. AI introduces novel attack vectors like data poisoning, model inversion, prompt injection, and adversarial inputs that conventional security tools miss entirely. Additionally, AI systems face regulatory scrutiny around fairness, transparency, and accountability that general IT frameworks ignore. Without dedicated AI security governance, enterprises risk model manipulation, intellectual property theft, compliance violations, and reputational damage from biased outputs. The complexity of AI supply chains further amplifies these concerns. Kanerika designs AI security strategies that protect your models from emerging threats—book a risk assessment today.

What are the 4 types of AI risk?

The four types of AI risk encompass technical, operational, ethical, and regulatory categories. Technical risks include model failures, adversarial attacks, and data quality issues that compromise system performance. Operational risks involve deployment challenges, integration failures, and inadequate monitoring capabilities. Ethical risks cover bias propagation, fairness concerns, and lack of transparency that erode stakeholder trust. Regulatory risks arise from non-compliance with data protection laws, industry mandates, and emerging AI legislation. Effective AI security frameworks must address all four categories simultaneously to ensure comprehensive protection. Kanerika’s risk assessment methodology evaluates your AI systems across every dimension—contact us for a thorough analysis.

Which industries benefit most from AI security frameworks?

Industries handling sensitive data benefit most from AI security frameworks, including healthcare, financial services, insurance, manufacturing, and government sectors. Healthcare organizations must protect patient data while ensuring diagnostic AI models remain tamper-proof. Banks and insurers rely on AI for fraud detection and underwriting, requiring robust model integrity controls. Manufacturing companies using predictive maintenance AI need protection against operational disruptions. Government agencies deploying AI for critical infrastructure face heightened national security requirements. Retail and pharma also gain significant advantages through secure, compliant AI deployments. Kanerika delivers industry-specific AI security solutions across these verticals—explore how we can address your sector’s unique requirements.

What are some core components covered by AI security frameworks?

Core components of AI security frameworks include data protection, model security, access controls, monitoring systems, and incident response protocols. Data protection ensures training datasets are validated, encrypted, and free from poisoning attempts. Model security addresses adversarial robustness, intellectual property protection, and version control. Access controls implement role-based permissions and authentication for AI systems. Continuous monitoring detects drift, anomalies, and potential breaches in real time. Incident response establishes procedures for containing and remediating AI-specific security events. These components work together to create defense-in-depth protection throughout the AI lifecycle. Kanerika integrates these components into cohesive security architectures. Let us evaluate your current AI security posture.

How should enterprises choose the right AI security framework?

Enterprises should choose an AI security framework based on their regulatory environment, industry requirements, risk tolerance, and existing infrastructure. Start by mapping current AI deployments and identifying specific vulnerabilities unique to your models and data pipelines. Evaluate frameworks like NIST AI RMF, ISO 42001, and industry-specific standards against your compliance obligations. Consider integration capabilities with existing security tools and governance programs. Assess whether the framework addresses your priority concerns: bias mitigation, adversarial defense, or data privacy. Implementation complexity and organizational readiness also matter significantly. Kanerika guides enterprises through framework selection and customization—schedule a discovery session to identify your optimal approach.

What standards and frameworks are available for securing AI?

Several standards and frameworks exist for securing AI systems, including the NIST AI Risk Management Framework, ISO/IEC 42001, MITRE ATLAS, and the EU AI Act requirements. NIST AI RMF provides comprehensive risk management guidance across the AI lifecycle. ISO/IEC 42001 establishes an AI management system standard for organizational governance. MITRE ATLAS catalogs adversarial threats specific to machine learning systems. The EU AI Act mandates compliance requirements based on risk classification. Additional guidance comes from IEEE, the OWASP Machine Learning Security Top 10, and sector-specific regulations in healthcare and finance. Kanerika helps organizations navigate this landscape and implement the right combination of standards. Connect with us for expert guidance.

What are AI security tools?

AI security tools are specialized software solutions designed to protect machine learning models and AI systems from threats throughout their lifecycle. These tools include adversarial testing platforms that simulate attacks on models, data validation systems that detect poisoning attempts, model monitoring solutions that identify drift and anomalies, and explainability tools that ensure transparency. Other categories encompass access management for AI infrastructure, encryption for model weights, and automated compliance checkers. Leading tools integrate with MLOps pipelines to provide continuous security without disrupting development workflows. Kanerika implements and configures AI security tooling aligned with enterprise requirements—reach out to modernize your AI protection stack.

What is an example of AI security?

A practical AI security example involves protecting a fraud detection model at a financial institution. The security implementation includes encrypting training data containing transaction records, implementing differential privacy during model training, deploying adversarial testing to verify robustness against evasion attacks, and establishing continuous monitoring for model drift. Access controls restrict who can query or modify the model, while audit logs track all interactions for compliance. If attackers attempt to manipulate inputs to bypass fraud detection, the security controls are designed to identify and block these adversarial attempts in real time. Kanerika has deployed similar AI security architectures for banking clients. Discuss your use case with our team.

Which AI is best for security?

The best AI for security depends on your specific use case and threat landscape. For network security, AI-powered SIEM and threat detection platforms excel at identifying anomalies and zero-day attacks. For protecting AI systems themselves, specialized adversarial defense tools and model monitoring solutions prove most effective. Cloud-native AI security platforms offer scalability for enterprise deployments. Key evaluation criteria include detection accuracy, false positive rates, integration capabilities, and vendor support. No single solution addresses all security needs; layered approaches combining multiple AI-powered tools deliver the strongest protection. Kanerika evaluates and implements AI security solutions matched to your specific environment. Request a personalized recommendation today.

What are the 4 principles of AI?

The four foundational principles of responsible AI are fairness, transparency, accountability, and safety. Fairness ensures AI systems do not discriminate or perpetuate bias against protected groups. Transparency requires that AI decisions can be explained and understood by stakeholders. Accountability establishes clear ownership and responsibility for AI outcomes throughout the organization. Safety guarantees that AI systems operate reliably without causing harm to users or society. These principles underpin AI security frameworks by establishing ethical guardrails alongside technical controls. Organizations embedding these principles build trustworthy AI that meets regulatory expectations. Kanerika integrates these principles into every AI deployment—partner with us for responsible AI implementation.

What is the four-level AI framework?

The four-level AI framework categorizes artificial intelligence capabilities into reactive machines, limited memory, theory of mind, and self-aware AI. Reactive machines respond to inputs without storing experiences, like basic chess programs. Limited memory AI, including current deep learning systems, uses historical data to inform decisions. Theory of mind AI, still largely theoretical, would understand human emotions and intentions. Self-aware AI would possess consciousness and self-understanding. Most enterprise AI today operates at the limited memory level, requiring security frameworks that address data integrity, model protection, and decision transparency. Kanerika secures AI systems across maturity levels—contact us to assess your AI security needs.