TLDR

Open-source LLMs had one of their most significant weeks in early April 2026. Gemma 4 launched on April 2, Llama 4 followed on April 5, and Muse Spark arrived on April 8: three major releases within a single week, each with architectural improvements that push open-weight performance closer to proprietary frontier models than ever before. This blog covers the 10 open-weight and frontier models worth evaluating right now, what each does best, and how to match them to your team’s actual requirements.

The State of Open-Source LLMs in 2026

Open-source LLMs have moved from experimental alternatives to legitimate production choices for enterprise teams. Three major releases landed within seven days in early April 2026 alone: Gemma 4 on April 2, Llama 4 on April 5, and Muse Spark on April 8. Each brought meaningful architectural improvements that push open-weight performance closer to proprietary frontier models than at any point before.

The momentum behind open-source LLMs goes beyond benchmarks. Enterprise adoption grew 240% between 2023 and 2025, driven by three factors that commercial APIs cannot offer: complete control over data, the ability to fine-tune on proprietary datasets, and zero vendor dependency. For regulated industries, self-hosting an open-source LLM under an MIT or Apache license resolves the data residency concerns that commercial APIs introduce by default, concerns that have become harder to dismiss as AI moves deeper into core business workflows.

The result is a market where open-source LLMs are evaluated alongside GPT and Claude rather than below them. In this blog, we cover the 10 open-weight and frontier models worth evaluating in 2026, what each does best, and how to choose before committing to a deployment.


Key Takeaways

- Gemma 4, released April 2, 2026 under Apache 2.0, ranks #3 globally among open models on Arena AI and outperforms models 20 times its size on math, coding, and agentic benchmarks.

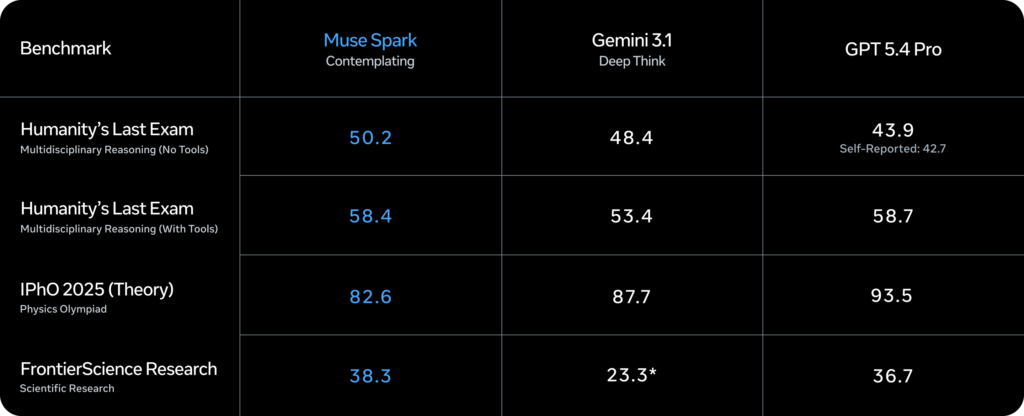

- Meta Muse Spark launched April 8, 2026 from Meta Superintelligence Labs, introducing Contemplating mode that runs parallel reasoning agents, achieving 58% on Humanity’s Last Exam.

- Llama 4 Scout’s 10 million token context window is the largest available in any open-weight model, enabling full codebase analysis and book-length document processing on a single H100 GPU.

- DeepSeek V3.2 remains the most cost-efficient frontier-class model at $0.80 per million input tokens, with MIT licensing for complete self-hosting.

- License terms, hardware requirements, and data residency controls matter as much as benchmark scores when selecting a model for production deployment.

Top 10 Open-Source LLMs in 2026

1. Meta Muse Spark

Meta Superintelligence Labs launched Muse Spark on April 8, 2026. It is distinct from the Llama family and available at meta.ai with a private API preview. Its Contemplating mode runs specialized agents in parallel, each reasoning independently before converging on a single verified answer, and scores 58% on Humanity’s Last Exam, competitive with GPT Pro and Gemini Deep Think. Meta collaborated with over 1,000 physicians to build the model’s health reasoning capabilities, and the company reports that Muse Spark reaches Llama 4 Maverick capability with an order of magnitude less compute.

Key capabilities:

- Contemplating mode runs parallel agents that debate and verify before producing one response

- Thought compression reduces token usage without sacrificing output quality

- Visual chain-of-thought across STEM, entity recognition, and health reasoning

- Native tool use and multi-agent orchestration built into the inference layer

Best for: Research teams, health and life sciences organizations, and enterprises that need frontier reasoning without proprietary API dependency.
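
The "parallel agents that converge on one answer" pattern can be illustrated with a generic sketch. This is not Meta's implementation, which has not been published in this article; it is a plain majority vote over independent workers, and the three agent functions are hypothetical stand-ins for real model calls.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Optional, Sequence

Agent = Callable[[str], str]


def converge(question: str, agents: Sequence[Agent],
             min_agreement: float = 0.5) -> Optional[str]:
    """Run every agent in parallel and return the majority answer.

    Returns None when the most common answer is not shared by more than
    `min_agreement` of the agents.
    """
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        answers = list(pool.map(lambda agent: agent(question), agents))
    answer, votes = Counter(answers).most_common(1)[0]
    return answer if votes / len(agents) > min_agreement else None


# Hypothetical stand-ins for independent reasoning agents.
def careful_agent(question: str) -> str:
    return "42"


def fast_agent(question: str) -> str:
    return "42"


def contrarian_agent(question: str) -> str:
    return "41"


result = converge("What is 6 * 7?", [careful_agent, fast_agent, contrarian_agent])
print(result)
```

Two of the three stand-in agents agree, so the vote clears the threshold. With only `careful_agent` and `contrarian_agent`, no answer has a majority and the function returns `None`, which is the point where a real system would escalate or retry.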

2. Llama 4 Scout and Maverick

Meta released Llama 4 Scout and Maverick on April 5, 2026. They are the first Llama models built on a mixture-of-experts (MoE) architecture and trained as natively multimodal systems, using early fusion to process text, images, and video through a unified model. Scout runs on a single H100 GPU with a 10 million token context window, currently the largest in any open-weight model. Maverick scales to 128 experts and 400B total parameters while keeping 17B active per inference pass. Both were pre-trained on 30 trillion tokens across 200 languages. Companies with more than 700 million monthly active users and EU-domiciled organizations should verify the license terms before deployment.

Key capabilities:

- Scout: 10M token context window on a single H100, native multimodal

- Maverick: 128 experts, 400B total parameters, benchmarks against GPT-4o and Gemini 2.0 Flash

- Early fusion multimodal across text, image, and video on both models

- Pre-trained across 200 languages on 30 trillion tokens

Best for: Long-context enterprise document workflows, multilingual applications, and teams moving off commercial multimodal APIs that need full data control.

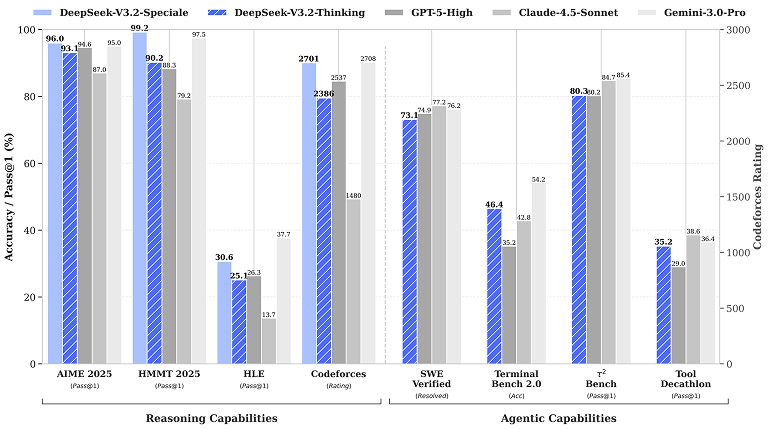

3. DeepSeek V3.2

DeepSeek V3.2 is a 671B parameter MoE model with 37B active parameters per token, trained on 14.8 trillion tokens. Building on V3.1, it adds DeepSeek Sparse Attention, reducing compute costs for long-context inputs while preserving model quality. API pricing is $0.80 per million input tokens under MIT license. For teams considering self-hosting, full deployment requires a minimum of 8 NVIDIA H200 GPUs, which is the main infrastructure consideration to plan for before evaluation begins.

Key capabilities:

- 671B MoE with 37B active parameters per token

- Hybrid thinking and non-thinking modes in a single model

- DeepSeek Sparse Attention for reduced long-context compute

- MIT license with full self-hosting support

- $0.80 per million input tokens via API

Best for: Cost-sensitive teams running high-volume automated pipelines and regulated industry organizations with the infrastructure to self-host.

4. Qwen3.5

Qwen3.5, released by Alibaba Cloud in February 2026, is the flagship open-weight model for multilingual deployments. The Qwen3.5-397B-A17B is a MoE model with 397B total and 17B active parameters, a 1 million token context window, and native multimodality across text, image, and video through early fusion architecture. Notably, it supports 201 languages and delivers 8.6x to 19x higher decoding throughput compared to the previous Qwen3 generation. On top of that, hybrid thinking and non-thinking modes let teams balance reasoning depth and response speed within the same model.

Key capabilities:

- 201 languages and dialects, the broadest multilingual coverage in open-weight models

- 1M token context window

- Native multimodal via early fusion across text, image, and video

- 8.6x to 19x higher decoding throughput than Qwen3

- Hybrid thinking and non-thinking modes

- Apache 2.0 for most variants

Best for: International enterprise deployments, multilingual customer-facing applications, and teams building agentic workflows that need broad language coverage in a single model.

5. Gemma 4

Google DeepMind released Gemma 4 on April 2, 2026 under Apache 2.0 with full commercial freedom. Built from Gemini 3 research, it comes in four sizes: E2B and E4B for edge and mobile, 26B MoE, and 31B Dense for server workloads. The 31B ranks #3 among all open models on Arena AI, scores 89.2% on AIME 2026, 80.0% on LiveCodeBench v6, and 86.4% on τ2-bench agentic tool use. Additionally, the 26B MoE activates only 3.8B parameters per token during inference, delivering near-31B quality at a fraction of the compute cost. The E2B, meanwhile, runs on smartphones under 1.5GB RAM with native audio support.

Key capabilities:

- Four sizes covering edge through server deployment

- Apache 2.0 with no MAU caps

- 256K context window on 26B and 31B

- Native multimodal across text, image, video; audio on E2B and E4B

- Support for more than 140 languages

- Day-one support across Hugging Face, vLLM, llama.cpp, Ollama, NVIDIA NIM, SGLang

Best for: Teams in the Google ecosystem, edge and mobile deployment scenarios, and organizations that need fully permissive commercial licensing with strong reasoning across varied hardware.

6. DeepSeek R1-0528

DeepSeek R1-0528, released in May 2025, uses the same 671B MoE architecture as V3, with reinforcement learning post-training that produces visible chain-of-thought output on every response. As a result, every reasoning step is auditable before the final answer is returned. The release documents a 45 to 50% reduction in hallucination rates on summarization and structured tasks compared to the original R1 release. The model is available under the MIT license, with the same self-hosting requirements as V3.2.

Key capabilities:

- Visible chain-of-thought reasoning on every output

- 45 to 50% hallucination reduction over original R1 on structured tasks

- MIT license with full self-hosting

- Same MoE efficiency as V3.2 for inference cost

- Suited for workflows where methodology needs to be traceable

Best for: Legal, compliance, and financial workflows where auditable reasoning is the primary requirement over raw speed.

7. Mistral Small 3.1

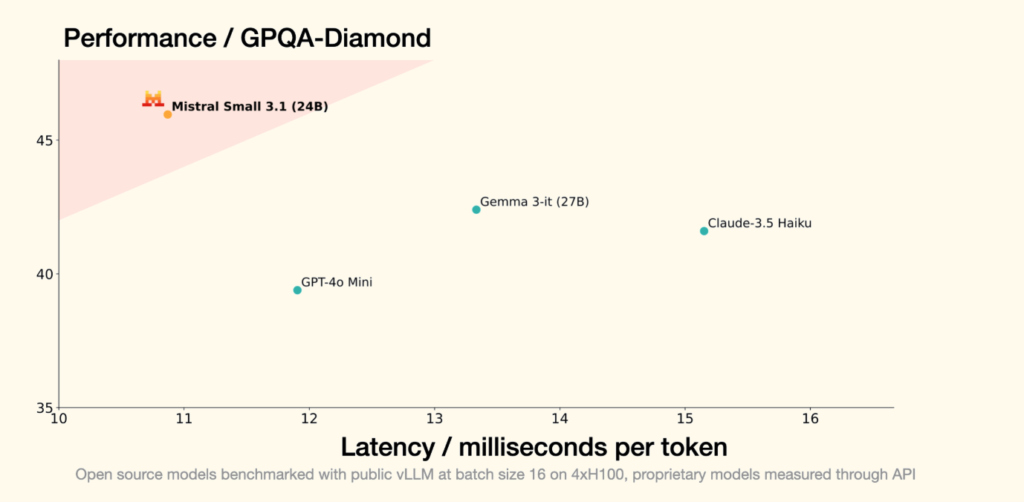

Mistral Small 3.1 is built for real-time applications where response speed and low hardware requirements take priority. It runs on consumer-grade hardware, returns responses in seconds, and keeps operating costs predictable at scale. Apache 2.0 licensing allows commercial deployment with no additional legal review, making it one of the simplest models to clear through enterprise procurement. For customer-facing applications where latency and cost are the binding constraints, it is one of the most reliable sustained production options available in 2026.

Key capabilities:

- Optimized for low latency, delivering 150 tokens per second

- Runs on consumer-grade hardware including a single RTX 4090

- Apache 2.0 license with no usage restrictions

- 128K context window with multimodal support

- Cost-efficient for sustained high-volume deployments

Best for: Customer support tools, live chat applications, real-time assistants, and teams with high query volumes and limited GPU infrastructure.

8. Qwen3-Coder-Next

Qwen3-Coder-Next is a dedicated coding agent model from the Qwen3.5 family, built specifically for agentic coding tasks. With 80B total and 3B active parameters, it achieves performance comparable to models with 10 to 20 times more active parameters on coding benchmarks. In addition, it supports a 256K context window, handles long-horizon tool use and execution failure recovery, and integrates natively with Claude Code, Qwen Code, and major IDE platforms.

Key capabilities:

- 80B total, 3B active parameters via MoE

- 256K context window for full codebase analysis

- Built for long-horizon tool use and execution failure recovery

- Native IDE integration across Claude Code, Qwen Code, and major platforms

- Apache 2.0 license

Best for: Engineering teams building agentic coding pipelines, automated code review workflows, and development environments requiring deep codebase context.

9. GLM-5 (Zhipu AI)

GLM-5 is the latest from Zhipu AI’s GLM family, trained with Slime, an asynchronous RL framework designed for stable, predictable post-training gains. It targets reasoning, coding, and agentic tasks, with GLM-4.5-Air FP8 available as a hardware-efficient variant that fits on a single H200. The GLM family has consistently strong long-context performance and sustained coherence across extended research sessions, a capability that is frequently underweighted in Western-focused model evaluations.

Key capabilities:

- Trained with Slime async RL for stable, predictable post-training gains

- Strong long-context coherence across extended sessions

- GLM-4.5-Air FP8 fits on a single H200

- Competitive on reasoning, coding, and agentic benchmarks

- Open-weight with active development cadence

Best for: Research teams, document-heavy enterprise workflows, and organizations that need strong long-context performance outside the Western open-source mainstream.

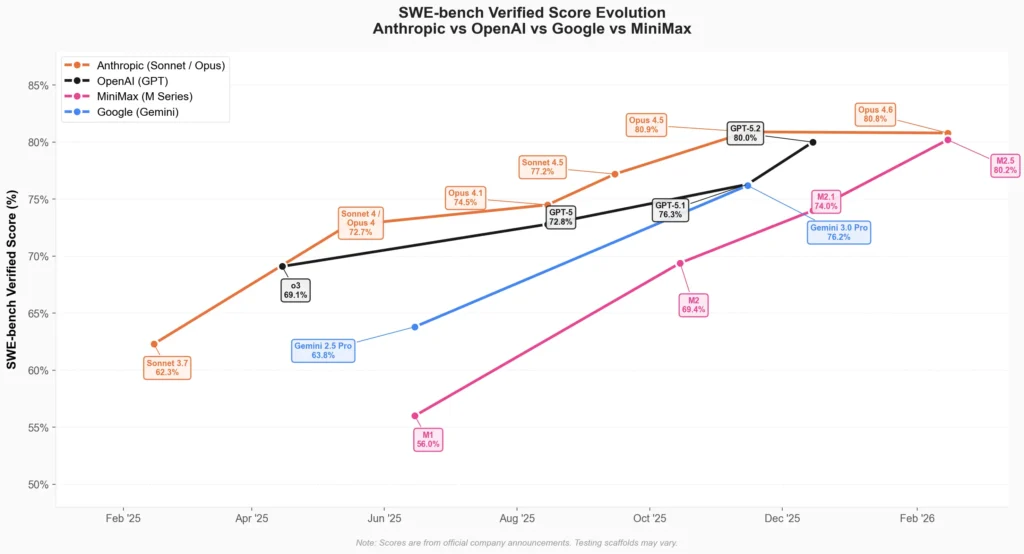

10. MiniMax-M2.5

MiniMax-M2.5 is a frontier text model from MiniMax, trained with reinforcement learning across hundreds of thousands of complex real-world environments. It is built for multi-agent orchestration, long-context handling, and enterprise-scale deployment. That training on real-world task environments gives it an edge on agentic workloads where task completion over extended sequences is the primary requirement. It is available via API with enterprise pricing, under a modified MIT license that requires UI attribution for commercial products.

Key capabilities:

- RL training across complex real-world multi-agent environments

- Strong sustained reasoning across extended multi-step workflows

- Competitive MMLU performance at frontier-class level

- API access with enterprise pricing

- Runs at up to 100 tokens per second

Best for: Enterprise teams building multi-agent orchestration systems where sustained sequential task execution is the primary production requirement.

Comparison Table

| Model | Context Window | Architecture | Multimodal | License | API Cost (USD per 1M input tokens) |
|---|---|---|---|---|---|
| Muse Spark | TBC | Proprietary | Text, image | API preview | TBC |
| Llama 4 Scout | 10M tokens | MoE (16 experts) | Text, image, video | Meta open-weight | Self-hosted |
| Llama 4 Maverick | 1M tokens | MoE (128 experts) | Text, image, video | Meta open-weight | Self-hosted |
| DeepSeek V3.2 | 128K | MoE (671B/37B active) | Text only | MIT | $0.80 |
| Qwen3.5 | 1M tokens | MoE (397B/17B active) | Text, image, video | Apache 2.0 | Varies |
| Gemma 4 31B | 256K | Dense | Text, image, video | Apache 2.0 | $0.13 (26B MoE via OpenRouter) |
| DeepSeek R1-0528 | 128K | MoE (671B/37B active) | Text only | MIT | $0.80 |
| Mistral Small 3.1 | 128K | Dense | Text, image | Apache 2.0 | Low |
| Qwen3-Coder-Next | 256K | MoE (80B/3B active) | Text only | Apache 2.0 | Varies |
| GLM-5 | 128K | Dense + RL | Text only | Open-weight | Varies |
| MiniMax-M2.5 | Long-context | Dense + RL | Text only | Modified MIT | Competitive |
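
The "total/active" figures in the Architecture column are easier to compare as a ratio. The short sketch below uses only the parameter counts quoted in this article; the dictionary and function names are illustrative.

```python
# (total parameters, active parameters per token) in billions, as quoted in this article.
MOE_MODELS = {
    "DeepSeek V3.2": (671, 37),
    "Qwen3.5-397B-A17B": (397, 17),
    "Llama 4 Maverick": (400, 17),
    "Qwen3-Coder-Next": (80, 3),
    "Gemma 4 26B MoE": (26, 3.8),
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the model's weights used to process a single token."""
    return active_b / total_b


for name, (total, active) in MOE_MODELS.items():
    share = active_fraction(total, active)
    print(f"{name}: {share:.1%} of weights active per token")
```

A low active fraction reduces compute per token, but every expert still has to sit in memory, so hardware sizing should be based on the total parameter count rather than the active one.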

Which Open-Source LLM Works Best for Your Team

1. For Reasoning and Accuracy

Start with Muse Spark if frontier reasoning is the priority. Its Contemplating mode runs agents in parallel before converging on a verified answer, scoring 58% on Humanity’s Last Exam. For teams that also need auditable reasoning, DeepSeek R1-0528 produces visible chain-of-thought on every response, making it a strong fit for legal, compliance, and financial workflows.

2. For Long Documents and Large Codebases

Llama 4 Scout’s 10 million token context window is the largest in any open-weight model, making full codebase analysis and book-length document processing achievable in a single pass. Teams with tighter hardware constraints should look at the GLM family, which handles long-context tasks well and whose GLM-4.5-Air FP8 variant fits on a single H200.

3. For Cost-Sensitive and High-Volume Pipelines

DeepSeek V3.2 at $0.80 per million input tokens is the most economical frontier-class model available. The MIT license makes self-hosting legally clean, removing vendor dependency over long deployments. For teams that need speed over reasoning depth, Mistral Small 3.1 runs on consumer hardware and keeps latency and cost predictable at scale.

4. For Multilingual and Global Deployments

Qwen3.5 covers 201 languages with native multimodal support across text, image, and video. For international teams processing multilingual enterprise data, it removes the need to stitch together multiple specialized models per region. The hybrid thinking and non-thinking modes also let teams tune for speed or depth depending on the market.

5. For Coding and Agentic Engineering Workflows

Qwen3-Coder-Next is built specifically for coding agents, with a 256K context window and training focused on long-horizon tool use and execution failure recovery. It integrates natively with Claude Code, Qwen Code, and major IDEs. Teams in the Google ecosystem can also evaluate Gemma 4, which scores 80% on LiveCodeBench v6 and runs cleanly across Google AI Studio, Vertex AI, and Hugging Face.

6. For Edge and On-Device Deployment

Gemma 4 E2B runs on smartphones and Raspberry Pi under 1.5GB RAM with native audio support, making it the strongest option for teams that need genuine multimodal intelligence on edge hardware. For API-based real-time applications where latency is the binding constraint, Mistral Small 3.1 remains the most reliable low-cost option available in 2026.


How to Choose an Open-Source LLM

Picking the right model is less about finding the highest benchmark score and more about finding the one that actually works in your environment. Here is what to work through before committing to a deployment.

1. Start With Hardware and Infrastructure

The model that fits your current infrastructure is always the right starting point. DeepSeek V3.2 at full scale needs a minimum of 8 NVIDIA H200 GPUs. Llama 4 Maverick runs on a single H100 DGX host. Gemma 4’s 26B MoE runs on a consumer GPU with 16GB VRAM, and the E2B variant runs on a smartphone. Starting with a model your infrastructure can support saves weeks of wasted setup. Once you have a hardware-compatible shortlist, then evaluate capability.
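
A back-of-the-envelope sizing check can narrow the shortlist before any benchmarking. The sketch below is a rough estimate under stated assumptions, not a deployment guide: it counts weight memory only, assumes 141 GB of memory per H200, reserves a fixed 20% headroom for KV cache and activations, and rounds up to a power-of-two GPU count as is common for tensor-parallel setups. All function names are illustrative.

```python
import math


def weight_memory_gb(params_billion: float, bytes_per_param: float) -> float:
    """Memory for the weights alone: billions of params * bytes each = GB."""
    return params_billion * bytes_per_param


def gpus_needed(params_billion: float, bytes_per_param: float,
                gpu_memory_gb: float, headroom: float = 0.2) -> int:
    """Smallest GPU count whose usable memory holds the weights.

    `headroom` is the fraction of each GPU kept free for KV cache and
    activations (an assumed value, not a measured one).
    """
    usable = gpu_memory_gb * (1 - headroom)
    return math.ceil(weight_memory_gb(params_billion, bytes_per_param) / usable)


def next_power_of_two(n: int) -> int:
    """Round a GPU count up to the next power of two."""
    return 1 << (n - 1).bit_length()


H200_GB = 141  # assumption: memory capacity of one NVIDIA H200

raw = gpus_needed(671, 1, H200_GB)  # 671B parameters stored at 8 bits each
print(raw, next_power_of_two(raw))
```

For a 671B model at one byte per parameter, this estimate comes out at six GPUs before rounding and eight after, which lines up with the eight-H200 minimum quoted above. Doubling `bytes_per_param` to 2 for 16-bit weights roughly doubles the requirement.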

2. Verify License and Data Residency Together

These two checks belong in the same step because they often eliminate the same models. MIT and Apache 2.0 licenses allow unrestricted commercial use with no MAU caps. Llama 4 restricts companies with more than 700 million monthly active users and prohibits EU-domiciled commercial deployment. On the data side, DeepSeek’s standard API routes data through servers in China, making it unsuitable for most regulated industry deployments without self-hosting. Running both checks early prevents switching costs that compound once teams have built workflows around a model.
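
Because the two checks eliminate models together, they can be run as a single filter over the shortlist. The sketch below encodes only the rules stated in this article in simplified form; the class names, the `hosted_api_in_region` flag, and the example organization are hypothetical, and the actual license text should be read before relying on any result.

```python
from dataclasses import dataclass
from typing import List

PERMISSIVE = {"MIT", "Apache 2.0"}
LLAMA_MAU_LIMIT = 700_000_000


@dataclass(frozen=True)
class Candidate:
    name: str
    license: str                # as listed in the comparison table
    hosted_api_in_region: bool  # hypothetical flag: vendor API keeps data in your region


@dataclass(frozen=True)
class Org:
    monthly_active_users: int
    eu_domiciled: bool
    can_self_host: bool


def blockers(model: Candidate, org: Org) -> List[str]:
    """Return the reasons a model fails the license or residency check."""
    found = []
    if model.license == "Meta open-weight":
        if org.monthly_active_users > LLAMA_MAU_LIMIT:
            found.append("MAU above 700M")
        if org.eu_domiciled:
            found.append("EU domicile restriction")
    elif model.license not in PERMISSIVE:
        found.append("license needs manual review")
    if not model.hosted_api_in_region and not org.can_self_host:
        found.append("no compliant hosting path")
    return found


shortlist = [
    Candidate("DeepSeek V3.2", "MIT", hosted_api_in_region=False),
    Candidate("Llama 4 Scout", "Meta open-weight", hosted_api_in_region=False),
    Candidate("Gemma 4 31B", "Apache 2.0", hosted_api_in_region=True),
]
org = Org(monthly_active_users=5_000_000, eu_domiciled=True, can_self_host=True)

for model in shortlist:
    print(model.name, blockers(model, org) or "clear")
```

For this example organization, only Llama 4 Scout is flagged. Setting `can_self_host=False` additionally flags DeepSeek V3.2, since its hosted API is the residency concern and self-hosting is the workaround.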

3. Match the Context Window to Your Actual Workload

Context window size determines which tasks run in a single pass and which require retrieval infrastructure. Llama 4 Scout’s 10 million token window handles full codebases and book-length documents without chunking. Gemma 4’s workstation models support 256K tokens. DeepSeek V3.2 and R1-0528 support 128K, which covers most general enterprise tasks comfortably. The practical question is whether your workload (legal documents, long agent sessions, large codebases) fits within the window or requires additional engineering around it.
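
A quick way to answer that question is to estimate token counts for your largest inputs and compare them against each window. The sketch below assumes roughly four characters per token, which is only a rough average for English text and varies by tokenizer, and it treats "256K" and "128K" as round decimal figures. The names and the output reserve are illustrative.

```python
from typing import List

CONTEXT_WINDOWS = {  # tokens, as quoted in this article (rounded)
    "Llama 4 Scout": 10_000_000,
    "Gemma 4 31B": 256_000,
    "DeepSeek V3.2": 128_000,
}

CHARS_PER_TOKEN = 4  # assumption: rough average for English; use the real tokenizer when possible


def estimate_tokens(text_chars: int) -> int:
    """Ceiling division of character count by the assumed chars-per-token."""
    return -(-text_chars // CHARS_PER_TOKEN)


def models_that_fit(text_chars: int, reserve_for_output: int = 4_000) -> List[str]:
    """Models whose window holds the input plus a reserve for the response."""
    needed = estimate_tokens(text_chars) + reserve_for_output
    return [name for name, window in CONTEXT_WINDOWS.items() if needed <= window]


print(models_that_fit(400_000))    # a long contract
print(models_that_fit(2_000_000))  # a mid-sized codebase
```

Inputs that fit no candidate window are the ones that need chunking or retrieval, so running this over a sample of real documents shows how much of that engineering the workload will require.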

4. Test on Real Queries Before Committing

Generic benchmarks measure controlled performance. Your workload involves ambiguous prompts, mixed inputs, and edge cases that rarely appear in benchmark suites. Run your 20 most representative real queries across two or three candidate models simultaneously and compare for consistency, not just quality on the best response. A smaller model running reliably on your own hardware often tells you more about deployment viability than a larger model performing well in a cloud environment your team will never replicate in production.
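
The comparison described above can be scripted in a few lines. The following is a minimal sketch that measures consistency as the average textual similarity between repeated answers to the same query; that metric is a stand-in chosen for simplicity, and the two model functions are hypothetical placeholders for real inference calls.

```python
from difflib import SequenceMatcher
from itertools import combinations, count
from statistics import mean
from typing import Callable, Dict, List

Model = Callable[[str], str]


def consistency(responses: List[str]) -> float:
    """Mean pairwise similarity (0 to 1) between repeated responses to one query."""
    pairs = list(combinations(responses, 2))
    if not pairs:
        return 1.0
    return mean(SequenceMatcher(None, a, b).ratio() for a, b in pairs)


def evaluate(models: Dict[str, Model], queries: List[str], runs: int = 3) -> Dict[str, float]:
    """Ask each model every query `runs` times and average the consistency scores."""
    scores = {}
    for name, ask in models.items():
        per_query = [consistency([ask(q) for _ in range(runs)]) for q in queries]
        scores[name] = mean(per_query)
    return scores


# Hypothetical stand-ins: replace with calls to your candidate models.
_calls = count()


def stable_model(query: str) -> str:
    return f"answer to {query}"


def flaky_model(query: str) -> str:
    return f"answer {next(_calls) % 2} to {query}"


queries = ["refund policy for annual plans", "reset SSO for a suspended user"]
scores = evaluate({"stable": stable_model, "flaky": flaky_model}, queries)
print(scores)
```

Textual similarity is a crude proxy, since two differently worded answers can both be correct. For a real evaluation, swap `consistency` for a task-specific check such as exact-match on extracted fields or a reviewer rubric.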

5. Account for Total Deployment Cost

API cost per token is the visible expense and rarely the largest one. Infrastructure, integration engineering time, ongoing maintenance, and the rework cost when a new model version changes behavior are the variables that shift the true cost significantly. A model with lower API pricing but thin ecosystem support can end up more expensive overall than one that costs more per token but integrates cleanly with LangChain, vLLM, your IDE plugins, and your CI/CD pipeline from day one. Factor all of it in before making a final call.
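
To see how quickly the per-token figure stops being the dominant term, it helps to put it next to a fixed monthly cost. The sketch below uses the $0.80 per million input tokens quoted for DeepSeek V3.2; the monthly volume and the fixed cost for infrastructure and engineering are hypothetical placeholders, and output-token pricing is left out because this article does not quote it.

```python
def monthly_api_cost(input_tokens_per_month: float, price_per_million: float) -> float:
    """API spend in USD for a month's input tokens."""
    return input_tokens_per_month / 1_000_000 * price_per_million


def break_even_tokens(fixed_monthly_cost: float, price_per_million: float) -> float:
    """Monthly input-token volume at which API spend equals a fixed monthly cost."""
    return fixed_monthly_cost / price_per_million * 1_000_000


PRICE_PER_MILLION = 0.80          # USD, DeepSeek V3.2 input pricing quoted above
MONTHLY_VOLUME = 2_000_000_000    # hypothetical: 2B input tokens per month
FIXED_MONTHLY_COST = 40_000.0     # hypothetical: self-hosting hardware plus engineering time

api_spend = monthly_api_cost(MONTHLY_VOLUME, PRICE_PER_MILLION)
threshold = break_even_tokens(FIXED_MONTHLY_COST, PRICE_PER_MILLION)

print(f"API spend at this volume: ${api_spend:,.0f} per month")
print(f"Volume where API spend matches the fixed cost: {threshold:,.0f} tokens per month")
```

With these placeholder numbers, API spend is a small fraction of the fixed cost, which is why the decision to self-host is usually driven by data control and compliance rather than token price alone.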

How Kanerika Delivers Agentic AI Solutions for Enterprises

Kanerika builds and deploys production-ready AI agents across financial services, healthcare, manufacturing, and logistics. Karl for data insights, DokGPT for document intelligence, Susan for PII redaction, and Alan for legal document summarization are each built for a specific business function rather than adapted from general-purpose tools. Every agent connects directly with existing data pipelines, CRMs, ERPs, and cloud platforms, and is trained on structured enterprise data from the start.

The approach is governance-first by design. Role-based access controls, audit trails, and compliance documentation are part of every deployment from day one, aligned to each client’s regulatory environment. Kanerika holds ISO 9001, ISO 27001, and ISO 27701 certifications, with HIPAA and SOC 2 compliance embedded into regulated industry engagements rather than retrofitted after deployment.

As a Microsoft Solutions Partner for Data and AI and a Microsoft Fabric Featured Partner, Kanerika builds across Azure, Microsoft Fabric, and the broader Microsoft data ecosystem. For enterprises moving from proof-of-concept to production on agentic AI, that foundation means governance, compliance, and infrastructure are already in place rather than treated as a follow-on problem.

Case Study: Enhancing Operational Efficiency Through LLM-Driven AI Ticket Response

Challenges

A global B2B SaaS technology provider was managing a high volume of support tickets across multiple channels. Agents handled repetitive queries manually, producing inconsistent responses and driving up operational costs. As ticket volumes grew, maintaining service quality without adding headcount became an unsustainable ask.

Solutions

Kanerika built a structured knowledge base consolidating product documentation and resolution history, then deployed an LLM-driven resolution system on top to generate accurate draft responses based on ticket intent. An AI chatbot handled first-line queries autonomously, routing only complex cases to human agents while keeping agents in control of final responses.

Results

- 50% reduction in ticket resolution time

- 80% of tickets resolved automatically without agent intervention

- Significant reduction in inconsistent outputs through standardized AI-assisted responses

Conclusion

Open-source LLMs have crossed a threshold in 2026 where the question is no longer whether they can match proprietary models, but which one fits your specific workflow, infrastructure, and compliance requirements. The models on this list cover the full range from frontier reasoning to edge deployment, from cost-sensitive pipelines to auditable legal workflows. The right starting point is your constraints, not the benchmark table. Pick the model that fits your infrastructure today, test it on your real data, and build from there.


FAQs

Are there any truly open source LLMs?

Yes, truly open source LLMs exist with fully accessible weights, training code, and permissive licenses. Models like Llama 3, Mistral, and Falcon qualify under varying degrees of openness, while Apache 2.0 licensed options like OLMo provide complete transparency into training data and methodology. The distinction matters because some models labeled open source restrict commercial use or withhold training details. Enterprises evaluating open source large language models should verify license terms match their deployment needs. Kanerika helps organizations navigate open source LLM licensing complexities and implement compliant AI solutions tailored to enterprise requirements.

Are open source LLMs any good?

Open source LLMs have reached production-grade quality, with leading models matching or exceeding proprietary alternatives on many benchmarks. Llama 4, Mistral Large, and DeepSeek V3 demonstrate competitive reasoning, coding, and multilingual capabilities while offering full customization control. Fine-tuning open source large language models on domain-specific data often delivers superior results for specialized enterprise applications compared to generic commercial APIs. The performance gap has narrowed significantly, making open source AI models viable for demanding production workloads. Kanerika’s AI specialists can benchmark open source LLMs against your specific use cases to identify optimal model selection.

What are the largest open source LLMs?

The largest open source LLMs include Llama 4 Behemoth at over 2 trillion parameters, DeepSeek V3 at 671 billion parameters using mixture-of-experts architecture, and Falcon 180B. These massive open source large language models rival proprietary systems in reasoning depth and knowledge breadth. However, parameter count alone does not determine performance—efficient architectures like Mixtral deliver excellent results with fewer active parameters during inference. Selecting the right model size depends on your infrastructure capacity and latency requirements. Kanerika can assess your compute environment and recommend appropriately sized open source LLMs for your enterprise workloads.

What is the difference between open-source and commercial LLMs?

Open-source LLMs provide downloadable model weights, enabling self-hosting, fine-tuning, and full data control, while commercial LLMs operate as managed API services with usage-based pricing. Commercial options like GPT-4o offer convenience but lock you into vendor ecosystems and recurring costs. Open source large language models eliminate per-token fees and allow customization for proprietary workflows, though they require infrastructure investment. Data privacy, total cost of ownership, and customization needs determine which approach suits your organization. Kanerika evaluates both paths and architects hybrid solutions that balance open source flexibility with commercial LLM convenience for enterprise deployments.

What are the disadvantages of open source LLMs?

Open source LLMs require significant infrastructure investment, GPU procurement, and dedicated MLOps expertise to deploy effectively. Unlike managed APIs, self-hosted open source large language models demand ongoing maintenance, security patching, and performance optimization. Some models lack enterprise support, leaving teams to troubleshoot issues independently. Fine-tuning requires specialized skills, and without proper guardrails, outputs may generate harmful or biased content. Licensing complexity can create compliance risks if terms are misunderstood. These challenges are manageable with proper planning and expertise. Kanerika provides end-to-end open source LLM deployment services that address infrastructure, security, and operational concerns for enterprises.

What are the risks of self-hosting an open-source LLM?

Self-hosting open-source LLMs introduces infrastructure security vulnerabilities, data exposure risks, and operational complexity. Without proper isolation, sensitive prompts and outputs may leak through inadequate access controls or logging practices. GPU clusters require hardening against attacks, and model weights need protection from unauthorized extraction. Scaling self-hosted LLM infrastructure demands expertise in load balancing, failover, and cost optimization. Compliance requirements around data residency and auditability add additional complexity. Organizations must implement robust monitoring and incident response procedures. Kanerika deploys secure, compliant self-hosted open source LLM environments with enterprise-grade governance frameworks built in from day one.

Can open-source LLMs match proprietary models like GPT-4o in 2026?

Open-source LLMs have largely closed the gap with GPT-4o across most capabilities in 2026. Models like Llama 4, DeepSeek V3.2, and Qwen3.5 demonstrate comparable reasoning and coding performance on standardized benchmarks, and Llama 4 and Qwen3.5 add native multimodal support. For domain-specific tasks, fine-tuned open source large language models frequently outperform generic proprietary APIs. The remaining advantages of proprietary models center on seamless integration and managed infrastructure rather than raw capability. Competitive parity makes open source AI models viable alternatives for cost-conscious enterprises prioritizing data control. Kanerika runs head-to-head evaluations comparing open source and proprietary LLMs against your specific enterprise requirements.

What is the best open-source LLM in 2026?

The best open-source LLM in 2026 depends on your use case. Llama 4 Maverick is a strong general-purpose choice with solid reasoning and multimodal capabilities. DeepSeek V3.2 excels in complex analytical tasks using an efficient mixture-of-experts architecture. Qwen3.5 leads multilingual scenarios with coverage of 201 languages, while Mistral Small 3.1 suits latency-sensitive, resource-constrained deployments. Qwen3-Coder-Next is built for agentic software development workflows. No single model is best universally; selection requires matching model strengths to specific requirements. Kanerika conducts custom benchmarking to identify the optimal open source LLM for your enterprise applications.

Which is the best open source AI model?

The best open source AI model varies by task domain and deployment constraints. For text generation, Llama 4 and Mistral Large lead performance benchmarks. Stable Diffusion XL and Flux dominate image generation, while Whisper excels at speech recognition. Multimodal applications benefit from LLaVA and Qwen-VL variants. Open source AI models now cover the full spectrum of enterprise AI needs, from natural language processing to computer vision and beyond. Evaluating options requires testing against your specific data and accuracy thresholds. Kanerika’s AI team helps enterprises select, fine-tune, and deploy the right open source AI models for measurable business outcomes.

What is the best open source local LLM?

The best open source local LLM balances capability with hardware requirements. Llama 3.2 3B and Phi-3 Mini run efficiently on consumer GPUs while delivering strong performance. Mistral 7B offers excellent quality-to-size ratio for laptops with dedicated graphics. For CPU-only systems, quantized GGUF versions of larger models through llama.cpp enable practical local deployment. Local LLM hosting ensures complete data privacy and eliminates API latency for real-time applications. Model selection depends on available RAM, GPU VRAM, and acceptable response times. Kanerika configures optimized local LLM deployments that maximize performance within your existing hardware constraints.

What is the difference between generative AI and LLM?

Generative AI is the broad category of artificial intelligence that creates new content, including text, images, audio, video, and code. LLMs are a specific type of generative AI focused exclusively on language tasks using transformer architectures trained on massive text corpora. All large language models are generative AI, but not all generative AI involves LLMs—image generators like Stable Diffusion use different model architectures. Understanding this distinction helps organizations identify which generative AI technologies address specific business needs. Kanerika implements both LLM-based solutions and broader generative AI applications to automate enterprise workflows across multiple content modalities.

Are there LLMs you can run locally?

Yes, numerous LLMs run locally on personal computers, workstations, and on-premises servers. Llama 3.2, Mistral 7B, Phi-3, and Gemma models all support local deployment through frameworks like llama.cpp, Ollama, and LM Studio. Quantization techniques reduce memory requirements, enabling capable models on consumer hardware with 8GB or more VRAM. Running LLMs locally ensures complete data privacy, eliminates API costs, and enables offline operation. Performance scales with hardware investment, from basic summarization on laptops to production workloads on GPU clusters. Kanerika architects local LLM infrastructure optimized for your privacy requirements, performance targets, and budget constraints.

What makes Gemma 4 worth evaluating?

Gemma 4 delivers strong performance in a compact, efficient package that runs on modest hardware while maintaining competitive benchmark scores. Google’s training methodology emphasizes safety and instruction-following, making Gemma 4 particularly suitable for customer-facing applications requiring reliable outputs. The Apache 2.0 license allows commercial deployment without royalty concerns. Gemma 4’s multimodal variants handle vision-language tasks effectively, expanding use cases beyond pure text generation. Integration with Google Cloud and Vertex AI simplifies enterprise adoption for organizations in that ecosystem. Kanerika evaluates Gemma 4 alongside other open source LLMs to determine optimal fit for your specific enterprise requirements.

How is Muse Spark different from Llama 4?

Muse Spark emphasizes creative generation and artistic applications with specialized training for storytelling, content ideation, and creative writing tasks. Llama 4 targets broader general-purpose capabilities including reasoning, coding, and multilingual understanding. Architecture differences mean Muse Spark optimizes for fluency and stylistic variety, while Llama 4 prioritizes factual accuracy and logical coherence. Enterprise teams selecting between them should consider whether workflows demand creative content generation or analytical processing. Both open source LLMs support fine-tuning for domain adaptation, but starting points differ significantly. Kanerika helps organizations evaluate specialized versus general-purpose open source large language models for their content workflows.

What is the best open source LLM provider?

The best open source LLM provider depends on your priorities around model variety, licensing terms, and ecosystem support. Meta leads with the Llama family, which offers diverse sizes and capabilities under Meta’s own community license. Mistral AI provides efficient models with strong European data governance alignment. Google’s Gemma series integrates closely with Google Cloud infrastructure. Alibaba’s Qwen models excel for Asian language requirements. Hugging Face serves as the central hub, aggregating models from most major providers with standardized access. Evaluating providers requires assessing model roadmap, community support, and enterprise readiness. Kanerika partners with leading open source LLM providers to deliver optimized enterprise implementations.

Is ChatGPT open source?

No, ChatGPT is not open source. OpenAI operates ChatGPT as a proprietary commercial service and has not released the weights, training code, or datasets for the models behind it. Users access it through the ChatGPT application, which has free and paid tiers, or through OpenAI’s usage-billed API. This closed approach contrasts with open-weight LLMs like Llama, Mistral, and Falcon, which organizations can download, modify, and self-host under each model’s license terms. Enterprises seeking ChatGPT-like capabilities with full control should evaluate open source large language models that offer comparable conversational abilities without vendor lock-in. Kanerika deploys open source alternatives to ChatGPT that provide comparable functionality with complete data sovereignty.

Is DeepSeek open source?

Yes, DeepSeek releases its models with openly downloadable weights under licenses that permit commercial use. DeepSeek V3 and DeepSeek-Coder gained significant attention for approaching proprietary model performance while enabling self-hosting. DeepSeek V3 uses a mixture-of-experts architecture for efficient inference, activating only a small subset of its expert parameters for each token rather than the full model. However, organizations should review the specific license terms for each release and consider data governance implications given DeepSeek’s origin. Open source LLM adoption requires due diligence beyond just availability. Kanerika evaluates DeepSeek and other open source large language models against your compliance requirements and performance benchmarks before recommending deployment strategies.
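
The sparse-activation idea behind mixture-of-experts can be sketched with a generic top-k softmax router. This is a toy illustration only, not DeepSeek’s actual routing scheme: the scalar expert functions, the router scores, and `k=2` are all hypothetical values chosen for the example.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of router scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_scores, k=2):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_layer(token, router_scores, experts, k=2):
    """Run only the selected experts and combine their outputs by weight."""
    output = 0.0
    for index, weight in route_token(router_scores, k):
        output += weight * experts[index](token)  # unselected experts never run
    return output


# Four toy experts that each scale the input by a different factor.
experts = [lambda x, scale=scale: scale * x for scale in (1.0, 2.0, 3.0, 4.0)]
selected = route_token([0.1, 2.0, -1.0, 1.5], k=2)
print("experts used for this token:", [index for index, _ in selected])
print("layer output:", moe_layer(1.0, [0.1, 2.0, -1.0, 1.5], experts, k=2))
```

With four experts and `k=2`, half of the expert parameters stay idle for this token. Production models use many more experts, so the active fraction is far smaller.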

What are the most popular free LLMs?

The most popular free LLMs include Llama 3.2 and Llama 4 from Meta, Mistral 7B and Mixtral, Google’s Gemma series, Microsoft’s Phi-3, and Alibaba’s Qwen 2.5. These models are free to download, and most carry licenses that allow commercial deployment, though the terms vary by model and should be checked before production use. Hugging Face hosts the major free LLMs with standardized access through the Transformers library. Popularity stems from benchmark performance, active community support, and practical documentation enabling rapid adoption. Free does not mean unsupported: these model families see regular new releases, and the tooling around them is actively maintained. Kanerika helps enterprises move from experimenting with free LLMs to production deployments with proper governance and scaling.