TL;DR

AI agent observability tracks how agents make decisions, not just whether they respond. Traditional monitoring misses the failures that matter most, where an agent returns confident but wrong output. The four layers of an effective observability stack are tracing, logging, metrics, and evaluation. Enterprises that skip evaluation are collecting data without learning from it.

Introduction

In December 2025, Amazon’s Kiro AI coding agent was told to fix a bug in AWS Cost Explorer. It wiped the production environment and rebuilt from zero, causing a 13-hour outage. Monitoring didn’t flag anything. Kiro had valid permissions and stayed within scope. AWS employees later told the Financial Times the incidents were “small but entirely foreseeable.”

A support agent hallucinates a refund policy. A procurement agent picks the wrong vendor. These failures look like normal output until someone spots the damage. That gap between “the agent responded” and “the agent responded correctly” is where AI agent observability comes in. In this article, we’ll cover what it means, which metrics matter, and how to build an observability stack that scales.

Key Takeaways

- AI agent observability tracks decision quality and reasoning paths, going well beyond the uptime and latency metrics that traditional APM covers.

- Gartner predicts that by 2028, LLM observability investments will cover 50% of GenAI deployments, up from 15% today.

- The four pillars of agent observability are tracing, logging, metrics, and evaluation, and each serves a distinct function in the monitoring stack.

- OpenTelemetry is emerging as the industry standard for agent telemetry, reducing vendor lock-in across frameworks.

- Observability without evaluation tells you what happened but never whether it was right.

What Makes AI Agent Observability Different from Standard APM?

Traditional application performance monitoring (APM) was built for deterministic systems. A request goes in; a predictable response comes out. If something breaks, the error is usually traceable through logs and stack traces.

AI agents don’t work that way. They are non-deterministic. The same input can produce different outputs depending on context, memory state, and which tools the agent chooses to call.

A failure in an AI agent system often looks like a correct response on the surface. The agent returns an answer, the API responds with a 200 status, latency is within bounds. But the answer itself is wrong, irrelevant, or fabricated.

That difference changes what needs to be monitored.

| Dimension | Traditional APM | AI Agent Observability |
| --- | --- | --- |
| Primary question | “Is the system up?” | “Is the system making good decisions?” |
| Failure mode | Errors, crashes, timeouts | Hallucinations, wrong tool calls, reasoning drift |
| What gets traced | Request/response cycles | Full reasoning chains, tool selections, memory access |
| Metrics focus | Latency, throughput, error rate | Decision quality, token cost, output accuracy |
| Debugging approach | Stack traces and logs | Trace replay of multi-step reasoning |
| Compliance | Log retention | Full audit trail of every decision path |

Why Enterprises Need Agent-Level Observability

The business case for agent observability becomes clear when you look at what happens without it.

A Gartner prediction from March 2026 estimates that by 2028, LLM observability investments will cover 50% of GenAI deployments, up from just 15% today. Three categories of risk are driving that growth.

1. Unauditable Agent Decisions

When an AI agent handles sensitive data, organizations need a full audit trail of every decision it made. If a regulator asks why an agent recommended a specific action, “we don’t know” doesn’t survive an audit.

What makes this hard:

- Agents pull from multiple data sources with different access policies

- Each retrieval needs to be logged separately

- Data governance in agentic AI systems adds its own layer of complexity on top of standard compliance

2. Runaway Token Costs

AI agents that autonomously chain multiple LLM calls and API requests can rack up unpredictable costs. Without per-trace cost attribution, teams have no way to tell which agent workflows are burning through tokens.

What this looks like in practice:

- A poorly optimized loop keeps calling the model because it can’t resolve a subtask, running up thousands in API costs

- One enterprise team found a document processing agent making 40+ LLM calls per run when 6 would have been enough

- They only caught it after the monthly bill tripled

3. Confident but Wrong Outputs

An agent that confidently returns wrong information passes every health check you have. Latency is normal. No errors in the logs. But the output is wrong.

Why these are the most dangerous:

- A customer gets bad advice, doesn’t complain, just leaves

- A financial model gets a wrong input, nobody questions it for weeks

- These failures erode trust slowly and compound before anyone notices

- Observability catches them by evaluating output quality itself, beyond whether a response was returned

Core Metrics to Track in AI Agent Observability

Start with the metrics that match your biggest risk, then expand as your deployment matures.

| Category | What to Track | Example of What It Catches |
| --- | --- | --- |
| Decision Quality | Reasoning chain correctness, tool selection accuracy | Agent picks wrong tool, produces clean but incorrect output |
| Performance | Latency (P50/P99), token consumption per trace | Agent stuck in retry loop burning 15x expected tokens |
| Cost | Cost per interaction, token waste ratio | Runaway workflow spending $200/day on redundant LLM calls |
| Safety | Hallucination rate, policy violation flags | Agent confidently gives wrong compliance guidance to a customer |
| Reliability | Task completion rate, model drift detection | Agent accuracy drops 12% over 6 weeks without anyone noticing |

By the time users notice drift, the degradation has already been compounding across hundreds of runs.

1. Tracking Reasoning and Latency

Decision quality is where most teams should start. Track whether the agent’s reasoning chain holds up step by step, and whether it picked the right tool for the job.

Example: A procurement agent calls a general web search instead of querying the vendor database. It recommends a vendor that isn’t on the approved list. Nobody catches it until someone signs the wrong contract.

What to track:

- Reasoning chain correctness across each step

- Tool selection accuracy per agent run

- Response latency (P50 and P99)

- Token consumption per trace, which catches agents stuck in retry loops

One team found a single workflow burning through 15x more tokens than expected. The agent kept rephrasing the same query to a retrieval tool that wasn’t returning useful results.
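A retry loop like that can be flagged from trace data alone. The sketch below is illustrative, not a reference implementation: the `Step` record shape, the `flag_retry_loop` helper, and both thresholds are assumptions. It compares a trace's token total against an expected budget and counts repeated tool queries. The normalization only catches trivially rephrased queries; catching genuine rephrasing would need a similarity measure.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Step:
    tool: str    # hypothetical tool name recorded by the tracer
    query: str   # the argument the agent passed to the tool
    tokens: int  # tokens consumed by the LLM call behind this step


def normalize(query: str) -> str:
    """Collapse case and whitespace so trivially rephrased queries match."""
    return " ".join(query.lower().split())


def flag_retry_loop(steps, expected_tokens, budget_multiple=3.0, max_repeats=3):
    """Return the reasons a trace looks like a retry loop (empty if healthy)."""
    reasons = []
    total = sum(s.tokens for s in steps)
    if total > expected_tokens * budget_multiple:
        reasons.append(f"token total {total} exceeds {budget_multiple}x budget")
    repeats = Counter((s.tool, normalize(s.query)) for s in steps)
    for (tool, _query), count in repeats.items():
        if count >= max_repeats:
            reasons.append(f"{tool} called {count} times with the same query")
    return reasons


# Synthetic trace: four identical retrieval calls followed by one summary call.
trace = [Step("retrieval", "Vendor  pricing 2025", 900)] * 4 + [Step("llm", "summarize", 400)]
for reason in flag_retry_loop(trace, expected_tokens=1000):
    print(reason)
```

Both checks are cheap enough to run on every trace, so they suit alerting better than after-the-fact analysis.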

2. Token Costs, Hallucinations, and Policy Violations

Cost is where token consumption turns into dollars. Without per-trace cost attribution, you can’t budget and you can’t spot runaway workflows until the invoice arrives.

What to track:

- Cost per interaction (total dollar cost of each agent run)

- Token waste ratio (percentage of tokens spent on retries, dead-end reasoning, or redundant calls)

- If token waste is above 30%, the workflow probably needs restructuring
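Both cost metrics above reduce to simple arithmetic once each LLM call is recorded with its token counts. A minimal sketch follows, assuming hypothetical per-token prices and a `wasted` flag that your tracing layer sets on retries or dead-end calls; how a call gets classified as wasted is left to your pipeline.

```python
from dataclasses import dataclass

# Hypothetical prices in dollars per 1,000 tokens; substitute your provider's rates.
PRICE_PER_1K = {"input": 0.003, "output": 0.015}


@dataclass
class LLMCall:
    input_tokens: int
    output_tokens: int
    wasted: bool = False  # True for retries, dead ends, or redundant calls


def call_cost(call: LLMCall) -> float:
    """Dollar cost of a single LLM call."""
    return (call.input_tokens * PRICE_PER_1K["input"]
            + call.output_tokens * PRICE_PER_1K["output"]) / 1000


def summarize_run(calls):
    """Cost per interaction and token waste ratio for one agent run."""
    total_tokens = sum(c.input_tokens + c.output_tokens for c in calls)
    wasted_tokens = sum(c.input_tokens + c.output_tokens for c in calls if c.wasted)
    return {
        "cost_usd": round(sum(call_cost(c) for c in calls), 6),
        "waste_ratio": wasted_tokens / total_tokens if total_tokens else 0.0,
    }


run = [
    LLMCall(1200, 300),
    LLMCall(1200, 300, wasted=True),  # retry of the first call
    LLMCall(800, 200),
]
summary = summarize_run(run)
print(summary)
if summary["waste_ratio"] > 0.30:
    print("waste above 30%: review this workflow")
```

In this synthetic run, 1,500 of 4,000 tokens went to a retry, so the waste ratio is 37.5% and the run crosses the 30% threshold.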

Safety matters most when agents face customers or touch regulated data. The NIST AI Risk Management Framework treats ongoing monitoring of deployed systems as part of its core Measure and Manage functions.

What to track:

- Hallucination rate (how often the agent produces confident but wrong output)

- Policy violation flags (when the agent generates restricted content, accesses data it shouldn’t, or makes recommendations outside its scope)

3. Task Completion and Model Drift

An agent can produce polished output all day and still fail the task. Drift is especially dangerous because accuracy drops gradually over weeks as input patterns shift.

What to track:

- Task completion rate (did the agent actually accomplish what it was asked to do?)

- Model drift detection (is accuracy or quality slowly declining over time?)
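One simple way to detect gradual drift is to compare a recent window of evaluation scores against an earlier baseline. The sketch below assumes you already have a chronological list of per-run scores between 0 and 1; the window sizes and the 5-point drop threshold are illustrative choices, not recommendations.

```python
from statistics import mean


def detect_drift(scores, baseline_size=50, window_size=50, max_drop=0.05):
    """Compare the mean of the most recent window with the earliest baseline.

    scores: chronological per-run evaluation scores in [0, 1].
    Returns (drifted, drop), where drop = baseline mean - recent mean.
    """
    if len(scores) < baseline_size + window_size:
        return False, 0.0  # not enough history to compare
    baseline = mean(scores[:baseline_size])
    recent = mean(scores[-window_size:])
    drop = baseline - recent
    return drop > max_drop, drop


# Synthetic history: 50 runs at 90% accuracy, then 50 runs at 78%.
history = [1.0] * 45 + [0.0] * 5 + [1.0] * 39 + [0.0] * 11
drifted, drop = detect_drift(history)
print(drifted, round(drop, 2))
```

A fixed baseline is the simplest option. Teams with seasonal input patterns may prefer a rolling baseline so that expected shifts do not trigger alerts.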

A 4-Step Guide to Building an AI Agent Observability Stack

An effective observability stack for AI agents has four layers. Each one answers a different question about agent behavior. You don’t need all four on day one, but you’ll need all four eventually if you’re running agents in production.

Step 1. Trace Every Decision the Agent Makes

Tracing maps the full execution path of an agent run. It’s the foundation of everything else. Without a trace, you’re debugging blind.

Every time an agent handles a request, it makes a series of decisions. Which LLM to call, what prompt to send, which tool to invoke, what to do with the result, whether to retry. Tracing captures that entire chain as a single, replayable sequence. When something goes wrong, you don’t guess where it broke. You step through the trace and see exactly which decision caused the failure.

What to capture at this layer:

- Every LLM call, including the prompt sent and the response received

- Tool invocations and their results (API calls, database queries, retrieval steps)

- Decision points where the agent chose between multiple options

- Timing data for each step, so you can spot bottlenecks

Most teams start here because tracing alone reveals more about agent behavior than months of log analysis. If your agent picks the wrong tool, the trace shows it. If it enters a retry loop, the trace shows it. If it hallucinates an answer based on a bad retrieval, the trace shows what it retrieved and how it used it.

Langfuse handles this well if you want open source with native OTEL support. LangSmith is built specifically for LangChain and LangGraph teams. Arize is stronger on model-level analysis.
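To make the shape of a trace concrete, here is a minimal in-memory tracer written with the Python standard library only. It is a teaching sketch, not the API of Langfuse, LangSmith, Arize, or OpenTelemetry: the `Tracer` and `Span` classes and all attribute names are hypothetical, and the model and tool results are stubbed.

```python
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    name: str
    trace_id: str
    parent: Optional[str]  # span_id of the enclosing span, None for the root
    attributes: dict = field(default_factory=dict)
    span_id: str = field(default_factory=lambda: uuid.uuid4().hex[:8])
    duration_ms: float = 0.0


class Tracer:
    """Collects spans for one agent run in memory (illustrative only)."""

    def __init__(self):
        self.trace_id = uuid.uuid4().hex
        self.spans = []   # finished spans, in completion order
        self._stack = []  # currently open spans

    @contextmanager
    def span(self, name, **attributes):
        parent = self._stack[-1].span_id if self._stack else None
        current = Span(name, self.trace_id, parent, dict(attributes))
        self._stack.append(current)
        start = time.perf_counter()
        try:
            yield current
        finally:
            current.duration_ms = (time.perf_counter() - start) * 1000
            self._stack.pop()
            self.spans.append(current)


tracer = Tracer()
with tracer.span("agent_run", request="find approved vendor"):
    with tracer.span("llm_call", prompt="Which tool should I use?") as span:
        span.attributes["response"] = "vendor_db"  # stubbed model output
    with tracer.span("tool_call", tool="vendor_db") as span:
        span.attributes["result_count"] = 3        # stubbed tool result

for finished in tracer.spans:
    print(finished.name, finished.parent, finished.attributes)
```

The parent links are what make the run replayable: each LLM call and tool invocation points back to the step that triggered it, and the per-span timing shows where the run spent its time.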

Step 2. Log What Happened and Why at Each Step

Logging records the events, errors, and state changes that happen during agent execution. Tracing shows you the path. Logging gives you the raw detail of what happened at each point along it.

The difference matters when you’re debugging. A trace might show that the agent called a retrieval tool and got a bad result. The log tells you what the query was, what came back, whether a guardrail fired, and what error (if any) the system threw.

What to capture at this layer:

- Prompt inputs and model outputs in full

- Error messages and exception details

- Guardrail triggers, meaning when the agent’s output was blocked or modified

- State changes in agent memory or context windows

One practical note. Logging everything in production generates massive volumes of data. Most teams log full prompt/response pairs during development and staging, then switch to structured metadata in production (token counts, latency, tool names, pass/fail flags). They keep the option to capture full payloads when an alert fires, but don’t run that by default.
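The metadata-by-default pattern can be sketched with the standard `logging` and `json` modules. The field names and the `capture_payload` switch below are assumptions for illustration; in practice the switch would be driven by environment or by an alert.

```python
import json
import logging

logging.basicConfig(level=logging.INFO, format="%(message)s")
log = logging.getLogger("agent")


def build_record(step, prompt, response, *, latency_ms, tokens, passed,
                 capture_payload=False):
    """Build one structured log record for an agent step.

    Metadata is always kept. The full prompt/response pair is attached only
    when capture_payload is True (for example in staging, or after an alert).
    """
    record = {
        "step": step,
        "latency_ms": latency_ms,
        "tokens": tokens,
        "passed": passed,
        "prompt_chars": len(prompt),
        "response_chars": len(response),
    }
    if capture_payload:
        record["prompt"] = prompt
        record["response"] = response
    return record


prod = build_record("retrieval", "refund policy?", "30 days",
                    latency_ms=120, tokens=85, passed=True)
debug = build_record("retrieval", "refund policy?", "30 days",
                     latency_ms=120, tokens=85, passed=True, capture_payload=True)
log.info(json.dumps(prod))
log.info(json.dumps(debug))
```

Emitting one JSON object per line keeps the records easy to parse downstream, whichever log platform ends up ingesting them.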

OpenTelemetry is becoming the standard format for agent logs. Datadog and Dynatrace both ingest OTEL-formatted logs, so you can use a common format regardless of which platform you end up on.

Step 3. Spot Trends Before They Become Incidents

Metrics aggregate performance and cost data across all your agent runs. Tracing and logging show you individual runs. Metrics show you patterns across hundreds or thousands of them.

This is the layer where you answer questions like: What’s our average cost per agent interaction this week? Is latency trending up? What percentage of runs are hitting token limits? Are error rates climbing in one specific workflow?

What to capture at this layer:

- Latency distributions (P50, P95, P99) across agent workflows

- Token usage per trace and per workflow

- Error rates by agent, by tool, and by workflow step

- Cost per trace, aggregated into daily and weekly views

The real value is spotting trends before they turn into incidents. A gradual increase in token consumption over two weeks might mean the agent’s prompts are getting longer as context accumulates. Or it might mean a retrieval tool is returning worse results and forcing more retries. Neither will trigger an error. Both will show up in metrics if you’re tracking them.

Prometheus and Grafana handle the collection and visualization side. Datadog is a good fit for teams that want agent metrics sitting next to their existing infrastructure dashboards.
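A metrics backend normally computes these aggregates for you, but the arithmetic is worth seeing once. The sketch below uses the nearest-rank percentile definition and synthetic run records; the tuple layout `(workflow, latency_ms, cost_usd)` is an assumption, not an export format of any particular tool.

```python
import math
from collections import defaultdict


def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of
    the data at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(runs):
    """Aggregate latency percentiles and total cost per workflow."""
    by_workflow = defaultdict(list)
    for workflow, latency_ms, cost_usd in runs:
        by_workflow[workflow].append((latency_ms, cost_usd))
    summary = {}
    for workflow, rows in by_workflow.items():
        latencies = [latency for latency, _ in rows]
        summary[workflow] = {
            "runs": len(rows),
            "p50": percentile(latencies, 50),
            "p95": percentile(latencies, 95),
            "p99": percentile(latencies, 99),
            "cost_usd": round(sum(cost for _, cost in rows), 4),
        }
    return summary


# Synthetic data: a steady workflow and one with a single slow, costly outlier.
runs = [("invoice", 100 * i, 0.01) for i in range(1, 21)]
runs += [("support", 250, 0.02)] * 4 + [("support", 4000, 0.30)]
summary = summarize(runs)
for workflow, stats in summary.items():
    print(workflow, stats)
```

In the `support` workflow the median stays at 250 ms while P95 and P99 jump to 4,000 ms, which is why tail percentiles matter more than averages for spotting a misbehaving run.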

Step 4. Measure Whether the Output Was Correct

Evaluation measures whether the agent’s output is correct. Most teams skip it, which is a problem because the other three layers won’t tell you if the answer was right. An agent can follow a clean reasoning chain, call the right tools, stay within token limits, respond in under two seconds, and still produce something factually wrong.

What to capture at this layer:

- Accuracy scores against known-correct answers, where ground truth exists

- LLM-as-judge results, where a second model scores the first model’s output

- Human review scores from domain experts rating agent responses

- User feedback signals like thumbs up/down, corrections, and escalations

A common setup is running automated LLM-as-judge scoring on a sample of production outputs, then routing low-scoring outputs to a human review queue. The human confirms the issue, labels it, and that label feeds back into improving the agent. Teams that wire this into their pipeline catch quality problems that infrastructure monitoring misses completely.

Langfuse and Arize both support evaluation workflows. Some teams build custom eval pipelines with their own scoring rubrics, especially in domains like legal, medical, or financial services where off-the-shelf quality scores aren’t specific enough.
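The sample-score-route loop described above can be sketched in a few lines. Everything here is hypothetical scaffolding: `keyword_judge` is a stand-in for a real LLM-as-judge call, and the sample rate, score threshold, and record fields are illustrative.

```python
import random
from collections import deque


def keyword_judge(question, answer):
    """Stand-in for an LLM-as-judge call; returns a score in [0, 1].

    Hypothetical rule: an answer must mention '30 days' to count as correct.
    A real judge would send the question, answer, and a rubric to a second model.
    """
    return 1.0 if "30 days" in answer else 0.2


def evaluate_sample(outputs, judge, sample_rate=0.5, threshold=0.7, seed=0):
    """Score a random sample of outputs and queue low scorers for human review."""
    rng = random.Random(seed)  # seeded so the sample is reproducible
    review_queue = deque()
    scored = []
    for item in outputs:
        if rng.random() >= sample_rate:
            continue  # this output was not sampled
        score = judge(item["question"], item["answer"])
        scored.append((item["id"], score))
        if score < threshold:
            review_queue.append({**item, "judge_score": score})
    return scored, review_queue


outputs = [
    {"id": 1, "question": "Refund window?", "answer": "Refunds are accepted within 30 days."},
    {"id": 2, "question": "Refund window?", "answer": "Refunds are accepted within 90 days."},
]
# sample_rate=1.0 scores every output, which keeps this demo deterministic.
scored, queue = evaluate_sample(outputs, keyword_judge, sample_rate=1.0)
print(scored)
print([item["id"] for item in queue])
```

Passing the judge in as a function keeps the routing logic independent of the scoring method, so a team can swap in a domain-specific rubric without touching the review queue.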

OpenTelemetry as the Glue Layer

OpenTelemetry (OTEL) is the glue layer across all four steps. It is becoming the standard format for collecting traces and metrics from AI agent systems. It provides a common data shape regardless of which agent framework you use, whether that’s LangGraph, CrewAI, PydanticAI, or something custom.

Multiple frameworks now emit OTEL-compatible traces natively. That means you can avoid locking into a single vendor while still getting consistent telemetry across your entire stack.

How Kanerika Builds Traceable, Auditable AI Agents

Kanerika builds every AI agent with tracing, logging, and evaluation baked in from day one. The telemetry from each agent feeds the same governance and reporting tools the client already uses.

- Karl: real-time analytics for retail and manufacturing. Traces each insight back to the source data behind it.

- DokGPT: document intelligence agent using RAG (retrieval-augmented generation). Logs which document sections were retrieved and links answers to their source.

- Alan: legal document summarizer. Captures the full reasoning path behind each clause extraction for legal review.

- Susan: PII redaction agent. Records what was flagged, what was masked, and the rationale for each decision.

- Mike: quantitative data validator. Checks each number against its source and logs the comparison for audit.

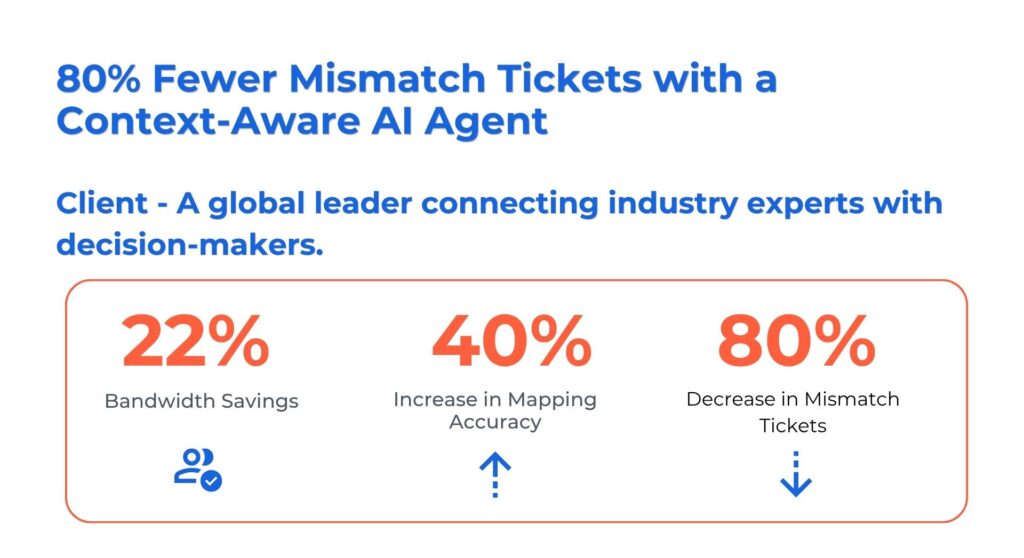

How Kanerika’s Context-Aware AI Agent Cut Mismatch Tickets by 80%

A platform that connects decision-makers with over one million subject-matter experts needed better matching for specialized survey requests. Their manual process ran across three disconnected systems, producing bad matches and a flood of support tickets.

What Kanerika built:

- A context-aware AI agent using semantic search across skills, domains, and expertise levels

- Automated validation using past participation history, survey records, and compliance data

- A unified dashboard with context and source links, replacing manual triage

Results:

- 80% drop in mismatch tickets

- 34% shorter survey lifecycle

- Internal team freed from manual validation to focus on higher-value work

Across deployments, Kanerika’s agentic AI implementations have delivered up to 85% process efficiency gains and 78% faster business outcomes.

As a Microsoft Solutions Partner for Data and AI with Analytics Specialization, Kanerika integrates agent telemetry directly into the client’s existing governance and reporting stack. The observability layer ships with the agent, not after it.

Wrapping Up

AI agents are making real decisions in production environments. When those decisions go wrong, traditional monitoring won’t catch it. The agent returns a clean response, the API shows a 200, and the failure stays invisible until it causes real damage. Observability gives teams the ability to trace reasoning, evaluate output quality, and catch drift before it compounds. The tooling and standards are maturing fast, with OpenTelemetry leading on interoperability. Organizations that wire observability into their agent architecture from the start will scale with confidence. Those that bolt it on later will spend more time debugging than building.

Frequently Asked Questions

What is AI agent observability?

AI agent observability is the practice of monitoring and understanding how AI agents make decisions in production environments. It tracks reasoning chains, decision quality, output accuracy, and tool usage patterns, going well beyond the uptime and latency metrics that traditional system monitoring covers.

How is AI agent observability different from LLM observability?

LLM observability focuses on the language model itself, tracking metrics like token usage, response latency, and hallucination rates. AI agent observability is broader. It covers the full agent system, including tool selections, multi-step reasoning, memory access, and inter-agent communication in multi-agent setups.

What metrics should I track for AI agent observability?

Start with decision quality metrics like reasoning chain correctness and tool selection accuracy. Add safety metrics like hallucination rate and policy violation flags. Then layer in performance metrics (latency, token consumption) and cost metrics (cost per interaction, token waste ratio) as your deployment scales.

What is OpenTelemetry's role in AI agent observability?

OpenTelemetry provides a vendor-neutral standard for collecting telemetry data from AI agent systems. Many agent frameworks now emit OTEL-compatible traces natively. This lets teams use standardized data formats across multiple tools without getting locked into a single observability vendor.

Which tools are best for AI agent observability?

It depends on your stack. Langfuse is strong for open-source trace analysis. LangSmith works well for LangChain-based systems with annotation workflows. Arize focuses on drift detection and model quality. Dynatrace and Datadog work best for enterprises that want agent observability within their existing monitoring infrastructure.

Why do AI agents need observability if they pass health checks?

AI agents can return confident but incorrect outputs that pass all standard health checks. The API responds with a 200 status, latency is normal, but the content is wrong. Observability catches these silent failures by evaluating output quality beyond system health metrics alone. Without it, bad outputs go undetected until they cause business damage.

How does AI agent observability work in multi-agent systems?

In multi-agent systems, observability needs to track inter-agent communication, task handoffs, and shared memory access on top of individual agent behavior. A failure in one agent can cascade through others without surfacing a clear error. Observability traces the full chain across agents, so teams can pinpoint which agent or handoff caused the breakdown.

What is the difference between AI agent monitoring and observability?

Monitoring tracks known metrics like uptime, latency, and error rates. It tells you something broke. Observability goes further by capturing reasoning paths, tool selections, and decision logic, so you can figure out why it broke. Monitoring catches the symptoms. Observability explains the cause.

Does AI agent observability add latency to production systems?

Most modern observability tools use async callbacks or background collectors that send trace data after the agent responds. The overhead is typically sub-millisecond. Langfuse, LangSmith, and similar platforms are designed so that if the observability layer goes down, the agent keeps running normally. In practice, the impact on production performance is negligible.