Did you know that 97% of Fortune 500 companies use Power BI. But ask most analysts what separates it from Power Query, and you’ll get a vague answer. That gap in understanding is where expensive architecture mistakes begin — teams over-investing in Excel-based workflows that can’t scale, or jumping into Power BI without understanding the transformation layer underneath it.

Power Query sits inside Power BI, not beside it. It handles the ETL work: connecting to data sources, cleaning up inconsistencies, and shaping everything before it enters the model. Power BI is the full platform built around that engine. It adds a relational data model, a DAX calculation engine for dynamic business logic, and an interactive reporting layer that non-technical stakeholders can actually use without analyst involvement every time they need a number.

The practical question most teams face isn’t really “Power BI or Power Query.” It’s whether to keep working in Excel or move to the full Power BI platform, and that choice has real consequences for governance, scale, and how long those reports stay useful. This article breaks down what each tool actually does, where they fit together, and how to make the right call for your environment, whether you’re an individual analyst, a team lead, or an enterprise architect evaluating Microsoft’s data stack.

Key Takeaways

- Power Query runs inside Power BI. Framing these as competing tools is the most common misconception in the Microsoft analytics stack.

- The actual comparison worth making is Power Query in Excel (personal, local, ungoverned) vs the full Power BI platform (cloud, collaborative, governed).

- M language shapes data. DAX measures it. Mix up which tool handles which job, and you’ll have performance problems that compound quietly until a production report breaks.

- Query folding is the hidden performance lever. When Power Query pushes transformation steps back to the source database, everything scales. When it breaks, it silently destroys your refresh architecture.

- Storage mode sets your ceiling. Import, DirectQuery, and Composite each change how Power Query and DAX interact, and the choice determines your data freshness and performance limits.

Why Teams Keep Confusing Power BI and Power Query

Picture a data analyst who spent months building Power Query transformations in Excel. Four source systems, clean connections, reports that work. Stakeholders trust them. Then the business asks for something Excel can’t do: interactive dashboards, automated refresh, access for fifty people across two departments.

The analyst searches for the difference between Power BI and Power Query. Most articles hand them a tangle of definitions, with both tools described as separate platforms. That framing is wrong from the first sentence.

Power Query is not Power BI’s rival. It runs inside Power BI as one of its core engines. The real question is whether to stay with Power Query in Excel or move to the full Power BI platform, and that distinction changes everything about how the decision should be made.

This confusion is expensive. Some teams over-invest in Excel Power Query well past the point where it can scale. Others jump to Power BI without understanding the transformation layer underneath it, then write DAX measures that should have been M transformations, and wonder why reports run slowly. Both errors are avoidable once the architecture is clear.

How Power Query Fits Into the Power BI Architecture

Power Query is a data connectivity and transformation engine. It handles the extract-transform-load layer, pulling data from sources, cleaning it, and shaping it into something a data model can use. Power BI is the complete self-service analytics platform that contains Power Query, plus a relational data model, a DAX calculation engine, and an interactive reporting and publishing layer.

Comparing Power BI vs Power Query is like asking whether a car is better than its engine. One contains the other.

The table below maps each component in Microsoft’s analytics stack to its actual job, because ‘Power BI’ in common usage is shorthand for several distinct pieces working together, and that ambiguity is where most architectural mistakes start.

| Component | Where it lives | What it actually does |

| Power Query | Excel, Power BI Desktop, Microsoft Fabric | Data extraction, transformation, loading |

| Power Pivot | Excel | In-memory data modeling inside Excel |

| DAX | Power BI + Excel Power Pivot | Calculated measures, KPIs, time intelligence |

| Power BI Desktop | Standalone app | Connect, model, and build reports |

| Power BI Service | Cloud (powerbi.com) | Publish, share, govern, and schedule refresh |

| Dataflows Gen2 | Microsoft Fabric | Cloud-native, shared Power Query for enterprise data prep |

| Microsoft Fabric | Cloud platform | Unified data engineering and BI platform |

Same Engine, Different Context: Excel vs Power BI

Power Query in Excel is confined to the workbook. It refreshes manually or through a gateway schedule, and it has real limits above one to two million rows. Power Query inside Power BI connects to a live data model, supports incremental refresh, handles significantly larger datasets, and works with both Import and DirectQuery storage modes.

The M code you build in Excel Power Query transfers directly into Power BI Desktop. That portability is one of the most underappreciated advantages of staying in the Microsoft stack, and one of the strongest reasons to learn Power Query properly before making the move to Power BI.

How to Find Power Query Inside Power BI Desktop

Open Power BI Desktop, connect to any data source, and click Transform Data in the Home ribbon. This opens the Power Query Editor, a separate windowed interface where every transformation step appears visually in the Applied Steps panel on the right. Behind each step is M code you can view or edit directly.

You haven’t left Power BI. You’ve moved one layer deeper into the same application. Many analysts treat Power Query as a separate tool used before opening Power BI. It isn’t. It’s embedded inside it, and understanding that changes how you design the whole pipeline.

Power Query Capabilities: Strengths and Limitations

Power Query’s job is taking data from wherever it lives, messy, multi-source, inconsistent, and shaping it into something a data model can use. It does this through a step-by-step visual interface that generates M language scripts in the background. Analysts who don’t write code can work effectively through the interface. Those who do can go deeper with M directly.

Where Power Query Performs Well

- Connecting to 100+ sources: SQL databases, SharePoint, Excel files, APIs, ERP systems, cloud storage, web endpoints

- Transforming data visually, pivoting, unpivoting, merging, appending, without SQL

- Handling common data quality problems: duplicates, wrong data types, inconsistent formatting, nulls

- Merging datasets from multiple heterogeneous sources in a single query

- Acting as the last-mile transformation layer inside Power BI when upstream pipelines already handle heavy processing

Where It Falls Short

- Batch processing only, not built for real-time or data streaming use cases

- Performance degrades above one to two million rows in Excel

- Complex M logic has a steep learning curve

- No version control, audit trail, or governance in standalone Excel use

- Cannot produce interactive dashboards, calculated KPIs, or shared reports on its own

- Not a substitute for enterprise ETL when complex scheduling, error handling, and SLA requirements exist

Power Query handles data preparation. It’s not an analytics destination, and treating it as one creates structural problems that compound as the business grows.

What M Code Looks Like in Practice

Here’s a minimal but realistic example, connecting to a SQL Server table and filtering to active customers only:

let

Source = Sql.Database("server-name", "database-name"),

CustomerTable = Source{[Schema="dbo", Item="Customers"]}[Data],

FilteredRows = Table.SelectRows(CustomerTable, each [Status] = "Active"),

RemovedColumns = Table.RemoveColumns(FilteredRows, {"InternalID", "LegacyCode"})

in

RemovedColumnsEvery step in the Applied Steps panel generates a line like one of these. The visual interface writes it; advanced users edit it directly. The let…in structure is standard M. Each named step builds on the previous one, and the in clause specifies which step’s output becomes the query result.

What Power BI Does That Power Query Alone Can’t

Power BI is the full business intelligence environment. It includes Power Query for data prep, a relational data model for structuring table relationships, DAX for business calculations, and an interactive report authoring layer that non-technical stakeholders can use without an analyst involved in every request.

What Power BI Adds

- Relationships between multiple tables using star schema data modeling

- DAX measures: time intelligence, running totals, year-over-year comparisons, KPIs that respond dynamically to user filters

- Published reports accessible across the organization, not just whoever built them

- Governance through workspace roles, Row-Level Security, and Microsoft Purview integration

- Live connections to Azure data sources via DirectQuery for near-real-time reporting

- Automated refresh schedules without manual analyst involvement each cycle

Power BI has real limits too. DAX has a steep learning curve, and filter context evaluation is the concept that trips up almost every analyst coming from Excel formulas. Performance can bottleneck with very large datasets unless the data model is properly built with star schema, aggregations, and incremental refresh. And Power BI is primarily a read layer. Write-back capability is limited by default, which matters for planning, budgeting, and forecasting scenarios.

What DAX Actually Looks Like

Where M transforms rows of data, DAX calculates across them dynamically. Here’s a DAX measure for year-over-year revenue, the kind of business logic Power Query simply can’t express:

Revenue YoY % =

VAR CurrentRevenue = [Total Revenue]

VAR PriorYearRevenue =

CALCULATE(

[Total Revenue],

SAMEPERIODLASTYEAR('Date'[Date])

)

RETURN

DIVIDE(CurrentRevenue - PriorYearRevenue, PriorYearRevenue, 0)When a user filters this report to a specific region or product, DAX recalculates dynamically based on the new filter context. Power Query cannot do that. M runs at data load time, not at filter time. This is the core architectural reason you can’t substitute one language for the other.

M Language vs DAX: Understanding the Role of Each in Power BI

Most articles mention both languages without explaining the practical line between them. That line matters enormously in production Power BI environments, and crossing it in the wrong direction creates performance and maintenance problems that compound quietly until something breaks under real data volumes.

M language is generated and executed by Power Query. It handles source connections, data type changes, column operations, merges, appends, and conditional logic at the data preparation stage. M runs before data enters the model, at load time.

DAX works on top of already-clean data to calculate measures, build KPIs, and express business logic that responds to report filters and slicers. DAX runs inside the model, at query time, triggered by each user interaction.

The rule that prevents most common mistakes: Power Query shapes the data. DAX measures it. Doing heavy business calculations in Power Query, or heavy data reshaping in DAX, creates performance problems that neither language was designed to absorb.

At Kanerika, we apply a framework called the Layer-First Design Check before writing any M or DAX in a client engagement. The decision table below captures the core logic:

| Question | Use M (Power Query) | Use DAX |

| Does the result need to respond to report filters or slicers? | No | Yes |

| Is this a data cleaning or reshaping task? | Yes | No |

| Does the value change when a user changes a date range? | No | Yes |

| Is this joining tables from different data sources? | Yes | No |

| Does the formula use time intelligence (YoY, MTD, rolling average)? | No | Yes |

| Will this value aggregate differently depending on which visual uses it? | No, bake it into M as a fixed column | Yes, it’s a DAX measure |

The Most Costly Anti-Pattern: Calculating in the Wrong Layer

Calculating revenue by region as a fixed column in Power Query locks that aggregation. When a stakeholder filters to a different region or time period, Power BI can’t recalculate it. The report either shows the wrong number or needs a full data refresh to update. That’s a process control failure built into the architecture from day one.

This mistake is common when analysts migrate from Excel, where a single formula in a cell often handles both transformation and calculation. In Power BI, those are two different engines. The separation isn’t optional, and the habit of collapsing both into one step is the most frequent source of performance problems Kanerika diagnoses in inherited Power BI environments.

Query Folding in Power BI: What It Is and Why It Affects Performance

Query folding is one of the most important performance concepts in Power Query, and it’s almost never covered in basic comparison articles. Getting this right is the difference between a Power BI environment that scales and one that quietly degrades as data volumes grow.

When Power Query connects to a relational database like SQL Server or PostgreSQL, it can push transformation steps back to the database as native SQL queries. The database does the heavy lifting. Only the filtered, cleaned result comes back to Power BI. This is query folding, and when it works, it’s one of the strongest performance optimizations in the Microsoft data stack.

When folding breaks, because a transformation step can’t be translated into native SQL, Power Query downloads the entire source table and processes it locally. For large tables, that’s a serious problem that doesn’t announce itself. Reports just get slower, and the cause isn’t obvious.

Why Query Folding Matters in Production

- Incremental refresh in Power BI requires query folding. If the fold chain breaks, Power BI falls back to full data reloads on every scheduled refresh, potentially processing tens of millions of rows when only a fraction needed updating.

- You can verify whether a step folds by right-clicking it in the Applied Steps panel. If ‘View Native Query’ is available and not grayed out, that step folds correctly.

- A single non-foldable step inserted early in the pipeline breaks folding for every subsequent step, a cascading problem that’s easy to create and hard to trace.

| Transformation type | Folds? | Why |

| Filter rows | Yes | Translates to SQL WHERE |

| Remove columns | Yes | Translates to SQL SELECT |

| Change data types | Usually | Handled at source level |

| Sort rows | Usually | Translates to SQL ORDER BY |

| Basic aggregations | Usually | Translates to SQL GROUP BY |

| Custom column with M logic | No | M functions have no SQL equivalent |

| Merge across different source types | No | Can’t fold across heterogeneous sources |

| Pivot or unpivot | Rarely | Depends heavily on source capabilities |

| External function calls mid-pipeline | No | Breaks the fold chain entirely |

| Incremental refresh filter (RangeStart/RangeEnd) | Must fold | Non-negotiable for incremental refresh to work |

The last row is the most operationally important. If incremental refresh is part of your architecture, and for large fact tables it should be, every step in the query must preserve folding. One non-foldable step eliminates incremental refresh as an option and forces full reloads every time the dataset refreshes.

Move Beyond Legacy Systems and Embrace Power BI for Better Insights!

Partner with Kanerika Today.

Power BI Storage Modes: Choosing Between The Key Types

Power BI supports three storage modes, and the choice fundamentally changes how Power Query transformations and DAX calculations behave. Most teams pick Import mode by default and never revisit the decision, but understanding all three is essential when designing for scale.

- Import mode: In this mode, Power Query transformations run at refresh time and the results are cached in Power BI’s in-memory VertiPaq engine. DAX operates against that cached data, with very fast query performance and full feature support across both languages. This is the most common choice and the right default for most scenarios.

- DirectQuery mode: In this mode, Power Query transformations are translated into source-native queries that run live with each report interaction. Not all Power Query steps can be translated, and complex M code may be unsupported or perform poorly. DAX also has limitations here because every measure calculation triggers a live database query, which means the source system absorbs the full load of every user interaction.

- Composite mode: This mode mixes in a single dataset. This is a pattern Kanerika commonly applies for supply chain planning and financial services clients who need live operational data alongside historical trend analysis from an imported model. It’s the most flexible option but requires careful data model design to avoid unexpected query fan-out against source systems.

| Feature | Import mode | DirectQuery mode | Composite mode |

| Data freshness | Scheduled refresh only | Near real-time | Both, by table |

| Query performance | Fastest, in-memory VertiPaq | Slower, live source queries | Mixed by table |

| Full DAX support | Yes | Limited | Depends on table type |

| Power Query transformation support | Full M supported | Limited to foldable steps | Mixed |

| Data volume handling | Practical limits apply | Source handles volume | Flexible |

| Source system load | At refresh only | Every user interaction | Mixed |

| Incremental refresh support | Yes, requires query folding | Not applicable | For Import tables only |

| Best fit | Most scenarios | Real-time operational requirements | Historical + live data combined |

Kanerika’s default recommendation across enterprise deployments: start with Import mode for all tables unless there’s a specific, documented reason to deviate. DirectQuery’s performance characteristics and DAX limitations catch teams off guard when they choose it out of convenience rather than necessity. Composite models add architectural complexity that needs deliberate planning, not something you want to discover reactively when report performance degrades under production load.

Modernize Your Analytics Stack with Microsoft Fabric

Learn how Microsoft Fabric levels up your analytics strategy and delivers better ROI for your business.

Power Query in Excel vs Full Power BI: Feature-by-Feature Comparison

The honest framing here isn’t ‘Power Query vs Power BI.’ It’s Power Query in Excel (local, personal, ungoverned) versus the full Power BI platform (cloud, collaborative, governed). The distinction sounds semantic until you try to share a report with fifty people and realize your entire analytics infrastructure lives in a single Excel file on someone’s laptop.

That’s not a hypothetical. It’s the starting point for the majority of Kanerika’s enterprise analytics engagements.

| Power Query in Excel | Full Power BI platform | |

| Primary use case | Data cleaning and ad-hoc transformation | End-to-end self-service analytics and BI |

| Visualization | None (PivotCharts only) | 30+ interactive visual types with drill-through |

| Practical data volume | ~1M rows comfortably | 10M+ rows with proper star schema modeling |

| Collaboration | Manual file sharing only | Cloud-based, role-controlled report sharing |

| Data refresh | Manual or gateway-scheduled | Automated cloud refresh with incremental support |

| Governance | None built-in | Row-Level Security, workspace roles, Purview integration |

| License cost | Included in Microsoft 365 | Additional per-user or capacity licensing required |

| Real-time connectivity | Not supported | DirectQuery and live connections to Azure sources |

| Audit trail and lineage | None | Full lineage tracking with Microsoft Purview |

| Query folding | Yes, where source allows | Yes, plus required for incremental refresh |

| Shared data prep | Not available | Dataflows Gen2 in Microsoft Fabric |

| Microsoft Fabric integration | Limited | Native, first-class |

The column that captures the fundamental difference is governance. Power Query in Excel has none, not limited governance, none. No audit trail, no access control beyond file permissions, no version history, no lineage tracking. For individual analysts doing their own work, that’s fine. For organizations building trustworthy, scalable business intelligence in regulated industries, it’s a structural vulnerability that eventually creates a crisis.

Cognos vs Power BI: A Complete Comparison and Migration Roadmap

A comprehensive guide comparing Cognos and Power BI, highlighting key differences, benefits, and a step-by-step migration roadmap for enterprises looking to modernize their analytics.

When to Use Power Query vs Power BI: A Practical Framework

Stay with Power Query in Excel when:

- The work is genuinely ad-hoc, clean once, use once, done

- The dataset stays under 500,000 rows with no real growth expected

- There’s no sharing requirement beyond sending a file by email

- The output is a static table, not a live dashboard

Move to the full Power BI platform when:

- Reports need to reach five or more stakeholders regularly

- Data refresh needs to happen automatically from source systems

- Three or more data sources need to be combined into a single analytical view

- Business users need to explore data themselves without analyst involvement each time

- Governance, audit trails, or compliance-grade access control are organizational requirements

- The report will still be actively used six months from now

Use both together: the standard enterprise pattern

Most mature analytics deployments land here. Power Query handles the ETL work: source connections, cleaning, joining, shaping. Power BI’s data model and DAX handle the analytics layer on top. Neither replaces the other. They each do their job, in sequence. This combined approach is the foundation of AI-driven business transformation when organizations want to layer intelligent analytics on governed data infrastructure.

Dataflows Gen2 vs Power Query in a Report File

Here’s the scenario that hits every growing Power BI environment eventually: ten different reports all run their own Power Query transformations against the same source tables. Each reconnects, re-transforms, and re-loads independently. Source system load spikes. Refresh times compound. When transformation logic needs to change, it changes in ten separate files with no consistency guarantee.

Dataflows Gen2 inside Microsoft Fabric solves this. It moves Power Query transformations into the cloud, runs them on a schedule, and stores the results in a managed Lakehouse. Every downstream Power BI report connects to the Dataflow output instead of hitting the source directly. Power Query becomes a shared organizational asset.

| Feature | Power Query in report file | Dataflows Gen2 (Microsoft Fabric) |

| Execution environment | Local machine at report refresh | Cloud compute on schedule |

| Output destination | That report’s data model only | Fabric Lakehouse / Warehouse, shareable |

| Reusability across reports | One file only | Multiple reports share one clean output |

| Governance and versioning | None | Managed, versioned, monitored centrally |

| Compute scalability | Limited by local machine | Fabric cloud compute scales out |

| Incremental refresh support | Requires query folding | Supported natively |

| Maintenance overhead | Duplicated across every report file | Centralized single source of truth |

| Enterprise readiness | Good for individual analysts | Built for organization-wide deployment |

For organizations with five or more reports sharing data sources, Dataflows Gen2 is the architectural step that converts individual Power Query work into governed, reusable data infrastructure. It’s effectively the enterprise version of Power Query, and it’s the direction Microsoft is pushing the entire platform through Fabric. Organizations also evaluating Databricks Lakeflow for upstream data engineering can use Dataflows Gen2 as a bridge layer between raw Lakehouse data and Power BI’s reporting layer.

Power Query vs Power BI: Which One Actually Governs Your Data?

Excel Power Query creates invisible data pipelines nobody outside the analyst’s laptop can see, audit, or maintain. When the analyst who built those queries leaves, the business logic walks out with them. Scheduled refreshes break. Reports go dark. Nobody knows why, or how to fix it. The organization has lost not just a report but the institutional knowledge of how a metric was defined.

Power BI, deployed correctly with Microsoft Purview, creates auditable, version-tracked data lineage that survives personnel changes. Organizations building toward real data literacy across business units need this transition to happen deliberately, not reactively when something breaks the night before a board presentation.

Data consolidation is a real driver here too. Excel-based reporting tends to produce multiple conflicting versions of the same metric, with each analyst applying slightly different transformation logic to the same source data. Power BI with a shared, governed data model creates one version of truth. In regulated industries, that’s not a convenience. It’s a compliance requirement.

Power BI Licensing in 2026

| License tier | Cost | What it enables |

| Power BI Free | $0 | Personal use only, can’t publish or share reports |

| Power BI Pro | ~$10/user/month | Share with other Pro users; included in Microsoft 365 E5 |

| Power BI Premium Per User (PPU) | ~$20/user/month | Larger datasets, AI features, paginated reports |

| Microsoft Fabric Capacity (F64) | ~$4,592/month | Replaces Power BI Premium P1; full Fabric platform including Dataflows Gen2 |

One important 2026 update: Microsoft has replaced standalone Power BI Premium P SKUs with Fabric F SKUs. Organizations evaluating enterprise-tier Power BI should look at Fabric capacity rather than legacy Premium P licensing. The F64 tier is roughly equivalent to the former P1 and unlocks the full Fabric platform, Dataflows Gen2, Lakehouse, Data Factory, and not just Premium reporting features.

Learning Path for Power BI: Do You Need Both M and DAX?

For professional Power BI work, eventually yes, but the sequence matters, and skipping steps in the wrong order wastes months.

Recommended Learning Path

Step 1: Start with Power Query’s visual interface. Get comfortable connecting to sources, applying transformation steps, and reading the Applied Steps panel. Significant work is possible without writing M directly, and building this visual intuition first makes the M code far more readable when you encounter it.

Step 2: Learn M language basics. Focus on the let…in structure and common table functions (Table.SelectRows, Table.RemoveColumns, Table.TransformColumnTypes). Understanding M at this level unlocks query folding optimization and custom transformation logic the visual interface can’t express.

Step 3: Build DAX fundamentals. Master filter context first. It’s the hardest conceptual shift from Excel, and everything else in DAX depends on it. Start with basic measures, then move to time intelligence functions like SAMEPERIODLASTYEAR, TOTALYTD, and CALCULATE with date filters.

Step 4: Study data modeling. Star schema, dimension tables, fact tables, and relationship cardinality are the structural foundation that makes DAX work correctly and efficiently. Bad modeling causes bad DAX, and no formula cleverness compensates for a poorly structured relational data model.

For professional certification: the Microsoft PL-300 Power BI Data Analyst Associate exam allocates roughly 25 to 30% of its weight to Power Query and data preparation, another 25 to 30% to data modeling and DAX, with the remainder covering reporting, visualization, and Power BI Service governance. Passing PL-300 validates both sides of the stack and signals that the candidate can build complete Power BI solutions, not just visuals on top of someone else’s data model.

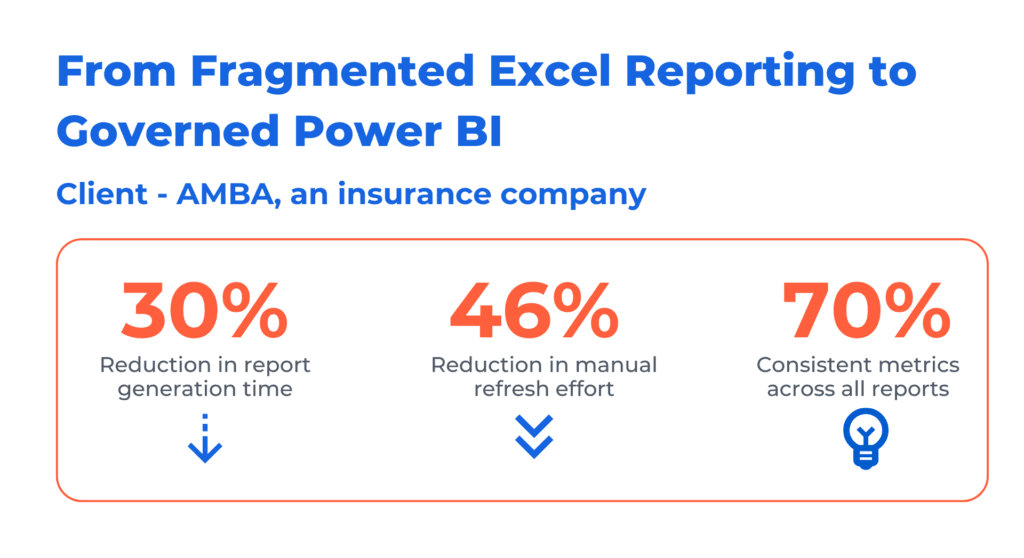

Case Study: From Fragmented Excel Reporting to Governed Power BI: The AMBA Insurance Case

Challenge

AMBA Insurance ran its reporting across dozens of fragmented Excel files, each built by individual analysts pulling from multiple source systems and refreshed manually before key business reviews. Data was owned by individuals rather than the organization. When analysts changed roles or left, reports broke and institutional knowledge went with them, leaving the business one resignation away from a reporting crisis.

Solution

Kanerika deployed a governed Power BI environment for AMBA, migrating fragmented Excel-based reporting into structured, cloud-connected dashboards with automated data refresh. The engagement covered data model design, governance configuration, and stakeholder adoption, consolidating multiple source systems into a single, centrally managed reporting environment.

Results

- 30% reduction in report generation time

- 46% reduction in manual refresh effort

- 70% consistent metrics across all reports

Power BI vs Power Query: Where BI Ends and Data Engineering Begins

Power BI is an analytics and visualization layer. It’s not a full data pipeline platform, a data warehouse, or a governance engine on its own. For enterprises dealing with tens of millions of rows refreshing at high frequency, complex multi-source ETL with SLA requirements, or regulatory reporting tied to certified data lineage, the right architecture puts Microsoft Fabric, Azure Synapse, or Databricks Lakeflow upstream of Power BI.

Power Query inside Power BI handles final report-level transformations well. The actual data engineering happens earlier in the stack. Treating Power Query as a substitute for a full ETL pipeline is one of the most common and costly architecture mistakes enterprise teams make, and it creates technical debt that compounds quickly as data volumes and complexity grow.

This is where decision intelligence architecture becomes practical. Knowing which layer of the data stack each tool belongs to separates ad-hoc analytics from production-grade decision support systems that executives actually trust and act on. In supply chain, insurance, and financial services, the verticals where Kanerika operates most frequently, this architecture decision carries real downstream business impact. Getting it wrong early means expensive rework later.

Power Query and Power BI Mistakes That Cost Teams the Most

Most of the friction Kanerika encounters in Power BI engagements traces back to the same handful of mistakes. They’re not edge cases, they repeat across industries and team sizes.

| Mistake | Why it happens | The right pattern |

| Doing business KPI calculations in Power Query (M) | Analysts used to Excel where one formula does everything | Business calculations belong in DAX; M handles only data shape and cleaning |

| Breaking query folding with custom M columns early in the pipeline | Not knowing which steps fold | Filter and reduce data before adding any non-foldable steps |

| Using DirectQuery everywhere for real-time data | Misunderstanding what real-time actually requires | Use Import for most tables; DirectQuery only where sub-hourly freshness is genuinely required |

| Loading intermediate staging tables into the data model | Over-engineering transformation inside Power BI | Keep staging in Power Query; only load analysis-ready tables into the model |

| Separate Power Query logic in every report file | Each analyst builds their own version | Centralize shared data prep in Dataflows Gen2 |

| No star schema, everything in one flat table | Excel habit; simpler to load initially | Star schema is required for DAX to work correctly at scale |

| Ignoring Row-Level Security until after deployment | RLS feels like a governance detail, not a design requirement | Design RLS rules during the data model phase, not as a post-launch patch |

| Skipping incremental refresh on large fact tables | Not knowing the feature exists | Enable incremental refresh for any fact table exceeding 1M rows with a reliable date column |

| Calculated columns instead of measures | Comfortable with Excel’s column-based logic | Calculated columns increase model size; measures are evaluated at query time and scale better |

| No process mapping before building the data model | Teams jump straight to connecting data | Map business questions to data structure before writing any M or DAX |

The thread connecting most of these mistakes: treating Power BI as a smarter version of Excel when it’s architecturally a different kind of system. Excel is a personal productivity tool. Power BI is an organizational data platform. Habits that work well in Excel systematically undermine Power BI deployments when they’re not consciously left behind.

Why Choose Kanerika for Power BI and Data Modernization

Kanerika is a data and AI solutions company that helps businesses get the most out of their data through advanced analytics. We help organizations pull fast, accurate, and actionable insights from large data sets, so decisions are grounded in real intelligence.

As a Microsoft Solutions Partner for Data and AI, Kanerika helps businesses migrate from legacy systems to modern data platforms. Custom automation tools handle migrations across SSRS to Power BI, SSIS and SSAS to Fabric, Informatica to Talend/DBT, and Tableau to Power BI, cutting manual effort while keeping data intact.

For teams adding AI on top of Power BI, Kanerika’s KARL AI Data Insights Agent enables natural language querying across governed data models, turning a well-architected Power BI environment into a conversational analytics platform without rebuilding the underlying infrastructure.

Partner with Kanerika for a seamless, automated, and risk-free data modernization experience.

Modernize Your Enterprise Operations with Impactful Data & AI Solutions

FAQs

Is Power Query the same thing as Power BI?

No. Power Query is a data transformation engine embedded inside Power BI. It’s also available separately in Microsoft Excel. Power BI is the full self-service analytics platform — it includes Power Query for data prep, plus a relational data model, a DAX calculation engine, and an interactive reporting and sharing layer. Treating them as the same tool is the most common misconception in the Microsoft analytics ecosystem.

Can Power Query be used without Power BI?

Yes. Power Query is available inside Microsoft Excel for Microsoft 365 subscribers. It can connect to data sources, clean and transform data, and load results into Excel worksheets or a Power Pivot data model without ever opening Power BI. It’s also available in Microsoft Fabric as the transformation layer within Dataflows Gen2.

Do you need to learn both M language and DAX for Power BI?

For professional Power BI work, eventually yes — but they serve completely different purposes. M handles data transformation at load time. DAX calculates measures that respond dynamically to filters at query time. You can get started through the visual interface without writing either language, but meaningful business intelligence work eventually requires functional DAX knowledge, and performance optimization requires M knowledge.

What is query folding in Power Query and why does it matter?

Query folding is Power Query’s ability to push transformation steps back to the source database as native SQL queries. When it works, the database does the heavy lifting and only clean, filtered data comes back to Power BI. When it breaks, Power Query downloads entire source tables locally — a serious performance problem for large datasets. Query folding is also a prerequisite for incremental refresh. If the fold chain breaks anywhere in the pipeline, Power BI falls back to full data reloads on every refresh cycle.

What is the difference between Import, DirectQuery, and Composite mode in Power BI?

Import mode copies data into Power BI’s in-memory VertiPaq engine at refresh time — fastest query performance, full DAX and Power Query support, the most common choice. DirectQuery sends queries live to the source system on every report interaction — near-real-time freshness but limited DAX and higher source system load. Composite mode mixes both in a single dataset, useful for combining historical trend data with live operational data.

What are Dataflows Gen2 and when should I use them?

Dataflows Gen2 in Microsoft Fabric runs Power Query transformations in the cloud on a schedule and stores results in a managed Lakehouse that multiple Power BI reports can share. Use them when multiple reports need the same cleaned data, when centralized governance over data prep logic is required, or when per-report Power Query maintenance has become a duplication problem. For organizations with five or more reports sharing the same source data, Dataflows Gen2 is the architectural step that converts individual Power Query work into governed, reusable organizational data infrastructure.

Which handles large datasets better — Power Query in Excel or Power BI?

Neither is a database. Power Query in Excel degrades above one to two million rows. Power BI handles larger datasets better with Import mode and a properly optimized star schema data model. For datasets requiring truly industrial-scale processing, the right architecture uses Microsoft Fabric, Azure Synapse, or Databricks for upstream data engineering, with Power Query inside Power BI handling only final report-level transformations.

Can Power Query replace dedicated ETL tools like Azure Data Factory or Informatica?

For simple, low-volume transformations, it can serve a similar function. For enterprise-scale ETL with complex scheduling, error handling, data lineage tracking, and SLA requirements — no. Power Query works best as a last-mile transformation layer inside the analytics platform. For teams evaluating migration from legacy ETL platforms, Kanerika’s guide on migrating from Informatica to Talend covers the broader ETL platform landscape in detail.

What does Microsoft Fabric change about Power Query and Power BI?

Microsoft Fabric unifies Power BI, Azure Synapse, Data Factory, and related services into one platform. Power Query is now available as a transformation layer within Fabric Dataflows Gen2 and Pipelines — with enterprise-grade scheduling, governance, and compute scale well beyond standalone Excel or traditional Power BI Desktop. Power BI Premium P SKUs have been replaced by Fabric F SKUs. Organizations evaluating the Microsoft data and AI stack today should treat Fabric as the destination architecture, with Power BI as the reporting and analytics layer within it.

How does Power BI support text analytics and AI-driven insights?

Power BI includes native AI visuals — Key Influencers, Decomposition Tree, and Q&A natural language querying — at the Pro level. Premium Per User and Fabric capacity tiers unlock additional AI features including AutoML, cognitive services integration, and smart narratives. For more advanced natural language and text analytics use cases on top of Power BI data, Kanerika’s KARL AI agent integrates with Power BI datasets to enable conversational querying across governed data models without requiring users to understand DAX or navigate reports themselves.

How long does a Power BI migration from Excel typically take?

A focused engagement covering 20 to 40 reports typically runs six to twelve weeks, including data model design, DAX development, governance configuration, and user training. Kanerika’s FLIP migration platform reduces that timeline by automating the technical conversion work — particularly for organizations migrating simultaneously from legacy environments like Crystal Reports, Cognos, or SSRS alongside their Excel-based reports. The change management and stakeholder adoption work typically takes as long as the technical migration. Sometimes longer.