Data transformation is the systematic process of converting raw data from various sources into a clean, consistent, and analytically-ready format that drives business intelligence and strategic decision-making. It’s the difference between having data and having actionable insights that fuel growth, efficiency, and competitive advantage. Modern data transformation tools have become the cornerstone of this process—sophisticated platforms that automate, streamline, and scale the conversion of raw information into strategic business assets.

In today’s data-driven economy, organizations that fail to harness their information assets risk being left behind. With global digital transformation spending reaching $2.5 trillion in 2024 and projected to reach $3.9 trillion by 2027, the race for competitive advantage has never been more intense. At the heart of this transformation lies a critical capability that determines success or failure: the strategic deployment of data transformation tools.

Think of data transformation as the refinery that converts crude oil into high-octane fuel. Your organization generates massive amounts of raw data daily—customer interactions, financial transactions, operational metrics, market intelligence—but this data remains largely unusable until it’s transformed into a format that can power your most critical business decisions.

The Data Transformation Process: Your Competitive Engine

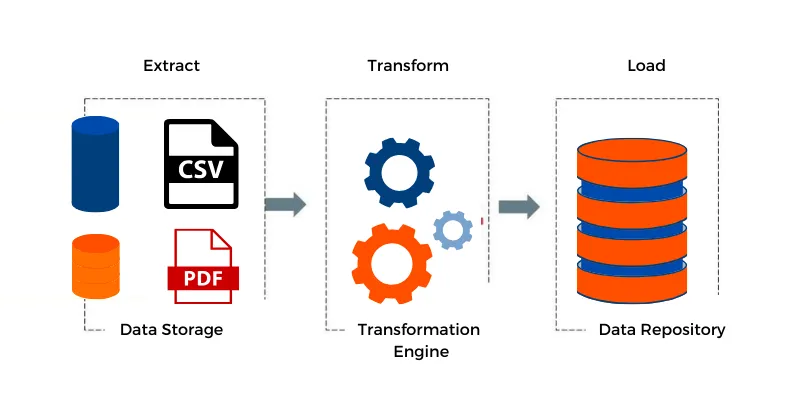

The data transformation process follows a proven methodology known as ETL (Extract, Transform, Load), which serves as the backbone of modern data operations:

Extract: Capturing Value from Every Source

Your organization’s data exists in silos—CRM systems, ERP platforms, social media feeds, IoT sensors, and third-party APIs. The extraction phase systematically pulls this disparate information from multiple sources, ensuring no valuable insight is left behind. This comprehensive data collection forms the foundation for enterprise-wide intelligence.

Transform: Converting Chaos into Clarity

Raw data is often inconsistent, incomplete, or incompatible across systems. The transformation phase applies business rules, data quality standards, and analytical frameworks to convert this information into a unified, reliable format. This includes standardizing formats, removing duplicates, validating accuracy, and enriching data with additional context that drives deeper insights.

Load: Delivering Intelligence Where It Matters

The final phase delivers transformed data to target systems—data warehouses, analytics platforms, and business intelligence tools—where it becomes immediately accessible for strategic analysis, reporting, and decision-making.
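To make the three phases concrete, here is a minimal sketch of an ETL run using only the Python standard library. The sample CSV, the `customers` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for a real data warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; in practice this would come from a CRM, ERP, or API.
RAW_CSV = """customer_id,email,signup_date
1, Alice@Example.com ,2024-01-15
2,bob@example.com,2024-02-01
2,bob@example.com,2024-02-01
3,not-an-email,2024-03-10
"""


def extract(text):
    """Extract: read rows from the source into dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: standardize formats, drop duplicates, reject invalid rows."""
    seen = set()
    clean = []
    for row in rows:
        email = row["email"].strip().lower()  # standardize format
        if "@" not in email:  # deliberately minimal validity check
            continue
        key = (row["customer_id"], email)
        if key in seen:  # remove duplicates
            continue
        seen.add(key)
        clean.append((int(row["customer_id"]), email, row["signup_date"]))
    return clean


def load(rows, conn):
    """Load: write the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers "
        "(customer_id INTEGER, email TEXT, signup_date TEXT)"
    )
    conn.executemany("INSERT INTO customers VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")  # stand-in for a data warehouse
load(transform(extract(RAW_CSV)), conn)
loaded = conn.execute("SELECT * FROM customers ORDER BY customer_id").fetchall()
print(loaded)
```

Keeping each phase in its own function mirrors how most tools separate connectors, transformation logic, and destinations, so one stage can change without touching the others.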

Why This Matters to Your Bottom Line

ETL improves business intelligence and analytics by making the process more reliable, accurate, detailed, and efficient, directly impacting operational effectiveness and strategic outcomes. As organizations save time, effort, and resources, the ETL process ultimately helps increase ROI while improving business intelligence to boost profits.

The financial impact extends beyond cost savings. ETL feeds sophisticated analytical processes such as machine learning, enabling prescriptive analytics and advanced statistical models that rely on clean and readily available data. This capability transforms your organization from reactive to predictive, positioning you ahead of market trends and customer needs.

For decision-makers evaluating transformation investments, the question isn’t whether to implement data transformation—it’s how quickly you can deploy it to maintain competitive advantage. Organizations that master data transformation don’t just process information more efficiently; they fundamentally transform how they compete, innovate, and deliver value to customers.

The companies leading tomorrow’s markets are those making strategic investments in data transformation today. The question for your organization is simple: Will you be among them?

Transform Your Business with Smart Solutions!

Partner with Kanerika for Expert Data Transformation Capabilities

What are Data Transformation Tools?

Data transformation tools help convert raw data into usable and structured formats to suit different analytical and reporting purposes. This software includes features like data extraction, data transformation, and data loading (ETL), helping users source data from various sources, applying transformations like filtering, aggregation, and data type conversions, and loading the transformed data into target databases or systems.
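As a rough illustration of the three transformations named above (filtering, data type conversion, and aggregation), here is a short Python sketch. The order records and field names are made up for the example; a real tool would apply the same ideas through its own interface.

```python
from collections import defaultdict

# Hypothetical raw order records, with amounts still stored as strings.
raw_orders = [
    {"region": "EU", "amount": "120.50", "status": "paid"},
    {"region": "EU", "amount": "80.00", "status": "cancelled"},
    {"region": "US", "amount": "200.00", "status": "paid"},
    {"region": "US", "amount": "50.25", "status": "paid"},
]

# Filtering: keep only paid orders.
paid = [o for o in raw_orders if o["status"] == "paid"]

# Data type conversion: parse the amount strings into floats.
typed = [{**o, "amount": float(o["amount"])} for o in paid]

# Aggregation: total revenue per region.
totals = defaultdict(float)
for o in typed:
    totals[o["region"]] += o["amount"]

revenue_by_region = dict(totals)
print(revenue_by_region)
```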

Let’s take a look at the 10 best data transformation tools you can get your hands on in 2025.

Best Data Transformation Tools

1. FLIP

FLIP is an AI-powered data operations platform that helps businesses scale operations, streamline data transformation, ensure quality, and achieve end-to-end visibility. By automating processes, enriching data, validating accuracy, and providing comprehensive data lineage, FLIP boosts efficiency and productivity. Its features cater to diverse enterprise needs, and with AI, low-code development, and cloud compatibility, FLIP stands out as a comprehensive platform in the market.

The tool also offers KPI-driven dashboards, pre-built transformation functions, templates, and validation rules for ease of use, and sends out real-time alerts for missed or delayed feeds.

It has an intuitive drag-and-drop feature that helps map elements and establish business rules, ensuring you stay on top of your transformation process. FLIP is the way to go if you’re looking for an automated data transformation tool with flexible implementation options and a seamless interface.

What makes it stand out?

- Drag and drop data mapping and version control

- Proactive alerting and data lineage visibility

- Pre-built RPA connectors and OCR capability

- Pre-built industry-specific templates

- Enterprise-grade security

- Kubernetes orchestration

To learn more about FLIP and how it can set you up for success, book a free demo today!

2. Matillion

Matillion makes your data work more productive and stress-free. Designed for coders and non-coders, this platform offers instant deployment, allowing you to move, orchestrate, and transform data pipelines at scale.

It’s built for cloud data platforms like Snowflake, Databricks, Amazon Redshift, Microsoft Azure Synapse, and Google BigQuery. Its visual designer lets you build complex ELT pipelines without writing any code, while coders can use SQL, dbt, and Python for the same tasks, so there is a lot of flexibility available.

3. dbt Labs

dbt has revolutionized data transformation with its SQL-first transformation workflow. Whether you store your data in the cloud, a data lake, or a data warehouse, dbt allows you to transform it with ease. It supports both Python and SQL.

With provisions for version control, testing, logging, and sending out notifications, you can get rid of data doubt and deploy confidently. However, it may not be an ideal solution for teams with varied technical abilities, and more out-of-the-box solutions for managing industry-specific procedures would be nice.

4. Fivetran

Fivetran is an automated data platform providing ELT (extract, load, and transform) functions to businesses. It is handy when you want to move data into, within, or across the cloud. The tool is heavy on automation, which helps reduce the tedious workload of data engineers.

The platform can centralize your ELT pipelines and turn the resulting data into insights without the help of any third-party software. If you’re looking to speed up data transformation at your company, this is a tool you might want to consider.

5. Keboola

Keboola is a comprehensive data platform where you can get end-to-end ETL, and build data pipelines, all in one place. Designed to speed up the work of data analysts and engineers, this tool promotes automation to reduce dependency on human labor.

When it comes to data transformation, Keboola offers a no-code approach, which is ideal for non-tech teams. If your team is familiar with coding, you could opt for SQL, Python, or R, depending on your preference. It comes with 250+ built-in integrations and fits into your workflow seamlessly, whether you use Snowflake, Airflow, GitHub, Spark, or any other tool.

6. Datameer

Datameer is a data transformation tool designed to make life easier for data engineers and analysts. With this software, you can create new datasets and data pipelines, transform data in Snowflake, and reduce data engineering time at your company. Additionally, the tool streamlines complex SQL operations and gives you visibility into Snowflake’s analytics resources and their costs. It helps you embrace innovation without exceeding your budget and allows you to automate data analysis with AI.

The simple-to-use canvas interface can be scaled depending on your team’s technical knowledge, ensuring every member can analyze data and have access to insights. The tool offers an option to go with no-code Drag-and-Drop or use SQL code to transform data, fostering a collaborative environment between business users and engineers. Be it creating ad-hoc data flows or advanced pipelines, this tool can do it all. If you’re facing long development cycles at your organization, your team members have different skill sets and preferences, or you want to centralize your analytics, Datameer is a good option.

7. Talend

Talend is a data management solution that brings together data integration, data quality, and data governance under one roof. This end-to-end data management solution supports integrations with Snowflake, MS Azure, AWS, and more, offering ample flexibility. This is a low-code platform, so your team doesn’t have to use complex coding to facilitate data transformation processes.

It’s a great platform for enterprises handling massive volumes of data, businesses rapidly scaling up, and companies looking to invest in advanced data analytics. Talend improves operational efficiency across departments and provides greater visibility into data.

However, it can be a pretty expensive data transformation tool for businesses scaling up rapidly, especially if the budget is one of the major constraints.

FLIP, on the other hand, is a less expensive alternative to Talend and has a more intuitive interface.

8. SAP Data Services

SAP Data Services is a versatile data transformation and integration tool that helps improve data quality. It empowers enterprises to transform structured and unstructured data by reducing duplicates and fixing quality issues.

When you gain access to contextualized insights, it’s easier to understand the true value of the data you have at hand. You can centralize this data in the cloud or on big data platforms and discover insights to facilitate better decision-making. The tool is particularly suitable for enterprises, offering features like parallel processing and bulk data loading to improve scalability.

9. CloverDX

CloverDX is a tool that makes automation and data pipeline management seem like a cakewalk. This software prioritizes two goals: control and accessibility. It empowers your developers and allows business users to access relevant data.

With readymade templates and automated transformation, this tool can reduce the workload of busy teams, and improve efficiency and scalability simultaneously. It integrates smoothly with your current IT environment, allows you to monitor or troubleshoot processes in the cloud, on-premise, or hybrid setups, and enables you to publish your data at a desired destination, whether at an API, app, or storage.

10. Informatica

If your company is looking to work across multiple databases, Informatica could be your choice of data transformation tool. This cloud-native software helps you extract, transform, and load data into data warehouses. Depending on your company’s needs and preferences, you can choose between PowerCenter (end-to-end ETL designed for enterprises) or Cloud Data Integration (iPaaS).

As far as data transformation is concerned, you can give it any data, and it will transform it into a usable format. Thanks to its low-code, no-code approach, the tool democratizes data across all teams, irrespective of their technical knowledge. Informatica’s Intelligent Data Management Cloud™ utilizes artificial intelligence, helping enterprises stay ahead of the curve and enhance business results.

Which is the Best Data Transformation Tool for my Business?

When you have the right data transformation tool, your business will have access to high-quality data with minimal or no mistakes or duplicates, along with enhanced retrieval times, and you’ll be better equipped to manage and organize data. It can be overwhelming to choose a tool when so many great solutions are available in the market.

The key is to understand and evaluate what you’re being offered, your requirements, the price you’re paying, and whether the tool is seamless to use for all of your team members.

Rethink Data Transformation with FLIP

FLIP emerges as the best data transformation tool available in 2025. The no-code tool democratizes data across teams, and it allows you to unlock the real potential of your data pipelines in less time and at minimal cost. Here’s what our clients have achieved after switching to FLIP:

- Based in the USA, a telemetry analysis platform used FLIP to transform messages according to customer requirements.

- FLIP helps a global consumer goods company gain real-time visibility into its supply chain by integrating data from suppliers, logistics partners, and production systems. This enables better inventory management and demand forecasting.

- A US-based logistics company experienced a remarkable 63% increase in productivity and a 38% reduction in processing costs through FLIP-empowered proactive alerting and AI-enabled processing.

The exciting part is that you can replicate this success for your business using the same tool!

FAQs

What are data transformation tools?

Data transformation tools are software solutions that convert raw data from one format, structure, or value system into another for analysis and business intelligence. These tools automate processes like cleaning, normalizing, aggregating, and enriching data across multiple sources. Modern data transformation platforms support both batch and real-time processing, enabling enterprises to prepare data for analytics, machine learning, and reporting workflows. Popular options include cloud-native solutions, ETL platforms, and code-based frameworks that handle complex data pipelines. Kanerika implements enterprise-grade data transformation solutions tailored to your specific infrastructure—connect with our team to modernize your data workflows.

What are the four types of data transformation?

The four primary types of data transformation are constructive, destructive, aesthetic, and structural transformations. Constructive transformation adds new data through calculations or derivations. Destructive transformation removes fields or records to reduce dataset size. Aesthetic transformation standardizes formats like dates and addresses for consistency. Structural transformation reorganizes data architecture through actions like joining tables or pivoting rows to columns. Each type serves distinct purposes within data pipelines and analytics workflows. Kanerika’s data engineers help enterprises implement the right transformation approach for every use case—schedule a consultation to optimize your data strategy.
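The sketch below applies one transformation of each type to a tiny, made-up dataset so the differences are easier to see. The table contents and field names are hypothetical, and each step is only one possible instance of its category.

```python
# Hypothetical sample tables used only for illustration.
orders = [
    {"order_id": 1, "customer_id": 10, "qty": 2, "unit_price": 5.0,
     "note": "gift", "state": " ca "},
    {"order_id": 2, "customer_id": 11, "qty": 1, "unit_price": 20.0,
     "note": "", "state": "Ny"},
]
customers = {10: "Acme Corp", 11: "Globex"}

# Constructive: derive a new field from existing ones.
step1 = [{**o, "total": o["qty"] * o["unit_price"]} for o in orders]

# Destructive: drop a field that the target does not need.
step2 = [{k: v for k, v in o.items() if k != "note"} for o in step1]

# Aesthetic: standardize how a value is presented.
step3 = [{**o, "state": o["state"].strip().upper()} for o in step2]

# Structural: join with another table to change the shape of the record.
result = [{**o, "customer_name": customers[o["customer_id"]]} for o in step3]

for row in result:
    print(row)
```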

What are examples of data transformation?

Common data transformation examples include converting date formats from MM/DD/YYYY to ISO standard, aggregating transactional records into daily summaries, normalizing customer names for deduplication, and joining data from CRM and ERP systems into unified views. Other examples include currency conversion, null value handling, encoding categorical variables for machine learning, and pivoting sales data from rows to columns for reporting. These transformations ensure data consistency across enterprise analytics platforms and business intelligence dashboards. Kanerika delivers automated data transformation pipelines that handle these operations at scale—reach out to streamline your data preparation processes.
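A few of these examples can be sketched in plain Python: date format conversion, null value handling, daily aggregation, and pivoting rows to columns. The transaction rows are invented, and treating a missing amount as 0.0 is an assumption made for this sketch rather than a general rule.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical transactional rows with US-style dates and a missing amount.
transactions = [
    {"date": "03/01/2025", "product": "widget", "amount": 10.0},
    {"date": "03/01/2025", "product": "gadget", "amount": None},
    {"date": "03/01/2025", "product": "widget", "amount": 5.0},
    {"date": "03/02/2025", "product": "gadget", "amount": 7.5},
]


def to_iso(us_date):
    """Convert MM/DD/YYYY to ISO 8601 (YYYY-MM-DD)."""
    return datetime.strptime(us_date, "%m/%d/%Y").date().isoformat()


# Aggregate and pivot: one entry per day, one column per product.
# Null handling: a missing amount counts as 0.0 (assumption for this sketch).
daily = defaultdict(lambda: defaultdict(float))
for t in transactions:
    daily[to_iso(t["date"])][t["product"]] += t["amount"] or 0.0

pivoted = {day: dict(cols) for day, cols in sorted(daily.items())}
print(pivoted)
```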

What is ETL in data transformation?

ETL stands for Extract, Transform, Load—a data integration methodology where data is pulled from source systems, transformed in a staging area, and loaded into a target destination like a data warehouse. The transformation phase applies business rules, cleanses records, and restructures data before loading. ETL pipelines have powered enterprise data warehousing for decades and remain essential for batch processing workloads requiring pre-load data quality controls. Modern ETL tools offer visual interfaces, pre-built connectors, and automation capabilities for complex data transformation workflows. Kanerika builds robust ETL solutions on platforms like Informatica and Microsoft Fabric—let us architect your data integration strategy.

Is dbt an ETL tool?

dbt is not a traditional ETL tool; it handles only the transformation layer in an ELT workflow. dbt assumes data has already been extracted and loaded into a warehouse like Snowflake or Databricks, then applies SQL-based transformations directly within that environment. Unlike full ETL platforms, dbt does not extract data from sources or load it into destinations. This focused approach makes dbt powerful for analytics engineering but requires complementary tools for complete data pipelines. Organizations often pair dbt with Fivetran or Airbyte for extraction and loading. Kanerika helps enterprises architect comprehensive ELT stacks with dbt at the core. Contact us to evaluate your transformation strategy.

What are different types of ETL tools?

ETL tools fall into several categories: enterprise platforms like Informatica PowerCenter and IBM DataStage for complex integrations, cloud-native solutions like Azure Data Factory and AWS Glue for scalable workloads, open-source options like Apache NiFi and Talend Open Studio for cost-conscious teams, and code-first frameworks like Apache Spark for developer-centric workflows. Some tools specialize in real-time streaming while others excel at batch processing. Selection depends on data volume, transformation complexity, existing infrastructure, and team skill sets. Kanerika evaluates your requirements and implements the optimal ETL toolset for your enterprise—request a free assessment to identify your best fit.

Which is the most popular ETL tool?

Informatica PowerCenter consistently ranks as the most widely adopted enterprise ETL tool due to its comprehensive connector library, robust transformation capabilities, and proven scalability. However, cloud-native platforms like Azure Data Factory and AWS Glue are rapidly gaining market share as organizations migrate to cloud infrastructure. For analytics-focused teams, dbt has become the dominant transformation tool in modern data stacks. Popularity ultimately depends on context—legacy enterprises favor Informatica while cloud-first companies prefer managed services. Kanerika holds expertise across all leading ETL platforms and helps you select the right solution for your environment—speak with our specialists today.

Will ETL be replaced by AI?

AI will augment ETL rather than replace it entirely. Machine learning already enhances data transformation through automated schema mapping, intelligent data quality detection, and anomaly identification. AI-powered tools can suggest transformation logic and auto-generate pipeline code, dramatically reducing manual development time. However, ETL fundamentals—extraction, transformation, and loading—remain necessary; AI simply makes these processes smarter and faster. Expect AI to handle routine mapping tasks while humans focus on complex business logic and governance. Kanerika integrates AI capabilities into enterprise data pipelines for intelligent automation—connect with us to explore AI-enhanced data transformation for your organization.

Is ETL obsolete?

ETL is not obsolete but has evolved significantly. Traditional batch ETL remains essential for regulated industries requiring strict data validation before loading into warehouses. What has shifted is the rise of ELT patterns where cloud warehouses handle transformation after loading, reducing pre-processing bottlenecks. Real-time streaming architectures also complement batch ETL for time-sensitive use cases. The transformation step itself is more critical than ever as data volumes explode. Modern enterprises typically blend ETL, ELT, and streaming approaches based on specific workload requirements. Kanerika helps organizations modernize legacy ETL infrastructure while preserving business logic—reach out to plan your data platform evolution.

What will replace ETL?

ETL is being complemented rather than replaced by several approaches: ELT pushes transformation into cloud data warehouses, data virtualization reduces physical data movement, and real-time streaming processes events as they occur. Zero-ETL integrations, such as the Amazon Aurora integration with Amazon Redshift, bypass traditional pipelines entirely for specific use cases. AI-driven data integration platforms also automate much of the manual ETL development work. Most enterprises will use hybrid architectures combining these methods based on latency requirements and data complexity. The transformation function persists regardless of methodology. Kanerika architects modern data integration strategies that leverage the right approach for each workload. Schedule a consultation to future-proof your pipelines.

Can dbt replace Informatica?

dbt cannot fully replace Informatica because they serve different purposes. Informatica is a complete data integration platform handling extraction, transformation, loading, data quality, and governance across enterprise systems. dbt focuses exclusively on SQL-based transformations within data warehouses, assuming data is already loaded. Organizations migrating from Informatica to dbt typically need additional tools for extraction, orchestration, and data quality management. dbt excels for analytics engineering in cloud-native stacks while Informatica suits complex enterprise integration scenarios. Kanerika has executed numerous Informatica to modern stack migrations and can assess whether dbt fits your transformation needs. Contact us for a migration readiness evaluation.

Is dbt the same as Databricks?

dbt and Databricks are fundamentally different tools that often work together. Databricks is a unified analytics platform providing compute infrastructure, storage, and processing capabilities for data engineering and machine learning workloads. dbt is a transformation framework that runs SQL models against data warehouses and lakehouses, including Databricks. You can use dbt to define and execute transformations on data stored in Databricks, making them complementary rather than competing solutions. Databricks handles infrastructure and processing while dbt manages transformation logic and version control. Kanerika implements integrated Databricks and dbt architectures for modern data platforms. Let us design your optimal stack.

Is SQL a data transformation tool?

SQL is a data transformation language rather than a standalone tool, but it powers most transformation platforms. SQL statements perform essential transformations including filtering, joining, aggregating, pivoting, and calculating derived fields. Modern transformation tools like dbt use SQL as their core transformation syntax, while platforms like Snowflake and Databricks execute SQL transformations at scale. Writing raw SQL for transformations works but lacks features like version control, testing, and documentation that dedicated tools provide. SQL expertise remains foundational for any data transformation role regardless of tooling choice. Kanerika’s engineers leverage SQL across enterprise transformation platforms—reach out to build scalable, SQL-powered data pipelines.
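As a small illustration, the sketch below runs a single SQL statement that filters, joins, aggregates, and calculates a derived field. It uses Python's built-in `sqlite3` module as a stand-in engine; the `customers` and `orders` tables and their contents are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER,
                         qty INTEGER, unit_price REAL, status TEXT);
    INSERT INTO customers VALUES (1, 'Acme'), (2, 'Globex');
    INSERT INTO orders VALUES
        (1, 1, 2, 10.0, 'paid'),
        (2, 1, 1, 5.0, 'paid'),
        (3, 2, 4, 2.5, 'cancelled'),
        (4, 2, 3, 4.0, 'paid');
    """
)

# Filter (WHERE), join (JOIN), derive a field (qty * unit_price), aggregate (SUM).
query = """
    SELECT c.name, SUM(o.qty * o.unit_price) AS revenue
    FROM orders AS o
    JOIN customers AS c ON c.id = o.customer_id
    WHERE o.status = 'paid'
    GROUP BY c.name
    ORDER BY c.name
"""
revenue = conn.execute(query).fetchall()
print(revenue)
```

The same statement would need only minor dialect changes to run on most warehouses, which is part of why SQL sits at the core of so many transformation tools.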

Is Excel a data transformation tool?

Excel can perform basic data transformation through features like Power Query, pivot tables, formulas, and text functions. Power Query specifically enables extraction from multiple sources and applies transformation steps like filtering, merging, and reshaping data. However, Excel lacks scalability for large datasets, version control for transformation logic, and automation capabilities for production pipelines. It serves well for ad-hoc analysis and prototyping transformations but falls short for enterprise data integration requirements. Organizations often use Excel for initial exploration before implementing transformations in dedicated platforms. Kanerika helps enterprises graduate from Excel-based processes to automated, scalable transformation pipelines—contact us to modernize your data workflows.

Is ETL a data transformation?

ETL includes data transformation as its middle step but encompasses more than transformation alone. The complete ETL process extracts data from source systems, transforms it according to business rules and target requirements, then loads it into a destination like a data warehouse. Transformation within ETL handles data cleansing, format standardization, aggregation, and business logic application. While transformation is central to ETL, the extraction and loading phases address data movement challenges that pure transformation tools do not solve. Understanding this distinction helps organizations select appropriate tooling for complete data pipelines. Kanerika implements end-to-end ETL solutions with robust transformation layers—connect with us to architect your data integration strategy.

Is dbt for ETL or ELT?

dbt is designed specifically for ELT workflows, not traditional ETL. In ELT architectures, raw data is first extracted and loaded into a cloud data warehouse, then transformed in place using the warehouse’s compute power. dbt handles this in-warehouse transformation layer by executing SQL models against platforms like Snowflake, BigQuery, or Databricks. Unlike ETL tools that transform data before loading, dbt assumes data already resides in the target system. This approach leverages modern warehouse scalability and eliminates separate transformation infrastructure. dbt pairs with extraction tools like Fivetran to complete the ELT stack. Kanerika builds comprehensive ELT pipelines with dbt transformations. Reach out to modernize your data architecture.
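The load-first, transform-later ordering can be sketched as follows. This is not dbt itself: an in-memory SQLite database stands in for the cloud warehouse, and the `raw_sales` and `daily_sales` tables are hypothetical. The point is only that raw data lands untouched and the modeled table is then built inside the same engine with a SELECT.

```python
import sqlite3

warehouse = sqlite3.connect(":memory:")  # stand-in for a cloud data warehouse

# Extract + Load: land the raw records as-is, with every value stored as text.
raw_rows = [
    ("1", "2025-03-01", "19.50"),
    ("2", "2025-03-01", "5.50"),
    ("3", "2025-03-02", "10.00"),
]
warehouse.execute("CREATE TABLE raw_sales (id TEXT, sold_on TEXT, amount TEXT)")
warehouse.executemany("INSERT INTO raw_sales VALUES (?, ?, ?)", raw_rows)

# Transform: build a modeled table in place from a SELECT over the raw table,
# loosely similar in spirit to a SQL model in an ELT transformation tool.
warehouse.execute(
    """
    CREATE TABLE daily_sales AS
    SELECT sold_on, SUM(CAST(amount AS REAL)) AS total
    FROM raw_sales
    GROUP BY sold_on
    """
)
daily_sales = warehouse.execute(
    "SELECT sold_on, total FROM daily_sales ORDER BY sold_on"
).fetchall()
print(daily_sales)
```

Because the raw table is preserved, the modeled table can be rebuilt with different logic later without extracting the data again.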

Is ETL the same as API?

ETL and APIs serve different purposes in data architecture. ETL is a methodology for moving and transforming data in batch processes between systems, while APIs are interfaces that enable real-time data exchange between applications. APIs can serve as data sources for ETL pipelines—extraction tools pull data through APIs before transformation and loading occur. Some modern architectures use API-based integration for real-time needs alongside ETL for batch analytics workloads. APIs handle point-to-point connectivity while ETL manages bulk data consolidation and transformation for warehousing. Understanding both concepts helps design comprehensive data integration strategies. Kanerika implements both API integrations and ETL pipelines for enterprise data needs—contact us to unify your data architecture.

What skills are needed for data transformation?

Effective data transformation requires proficiency in SQL for writing transformation logic, understanding of data modeling concepts for designing target structures, and familiarity with ETL or ELT tools like Informatica, dbt, or Azure Data Factory. Additional skills include Python or Scala for complex transformations, data quality principles for validation rules, and knowledge of source system schemas. Business acumen matters equally—understanding what transformations deliver analytical value requires collaboration with stakeholders. Version control with Git and testing methodologies ensure transformation reliability in production environments. Kanerika’s certified data engineers bring deep expertise across transformation technologies—partner with us to accelerate your team’s capabilities.