Did you know that humans create approximately 2.5 quintillion bytes of data every day? Data is the new oil, but it’s useless without refinement. ETL and ELT are the refineries of the information age. Now, the big question is which of these two data processing techniques is suitable for your business. This sparks the ETL vs ELT debate.

ELT (Extract, Load, and Transform) and ETL (Extract, Transform, and Load) are two different approaches to data integration. Each has advantages and trade-offs that organizations need to weigh when improving their data handling processes. The choice between ETL and ELT is more significant now than ever because it determines how quickly, flexibly, and effectively raw data can be turned into actionable insights.

Data landscapes are dynamic and constantly expanding. The battle between ETL vs ELT in data management stimulates innovation and pushes businesses to adapt to the ever-evolving demands of modern data environments.

What is ETL?

ETL stands for Extract, Transform, Load. It is a fundamental process in data warehousing and integration. It functions as a pipeline, receiving raw data from multiple sources, transforming and cleaning it, and then delivering it to a predetermined destination for analysis. ETL involves three primary stages: extraction, transformation, and loading. Let’s look at each stage in turn:

Extraction

Extraction is the initial step where data is gathered from various sources, such as databases, spreadsheets, or flat files, and transferred to a staging area. This is similar to a library with books scattered across different rooms. Extraction involves retrieving this data from its original locations. ETL tools can connect to various sources, schedule automated extractions, and handle different data formats.

Data is read from source systems and stored in a staging area for further processing. This phase ensures that data is collected accurately and completely from different sources before any modifications are made. The retrieved data might be inconsistent, incomplete, or incompatible.

Transformation

Transformation involves converting and organizing the extracted data into a format suitable for loading into the data warehouse. Transformation is where the magic happens. Think of it as organizing the library books. Data is cleaned by removing duplicates, correcting errors, and filling in missing values. It can also be standardized to a consistent format and structure.

Additionally, transformations like filtering, aggregation, and calculations can be applied to meet specific analytical needs. Transformation ensures that the data is structured, formatted, and cleansed for accurate analysis and reporting.
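
To make the cleaning steps above concrete, here is a minimal sketch in Python. The field names (`order_id`, `country`, `amount`) and the sample rows are made up for illustration, and a real pipeline would likely use a dedicated ETL tool rather than hand-written loops.

```python
from collections import defaultdict

def transform(rows):
    """Clean raw order rows, then aggregate revenue per country."""
    seen_ids = set()
    cleaned = []
    for row in rows:
        order_id = row["order_id"]
        if order_id in seen_ids:  # remove duplicates
            continue
        seen_ids.add(order_id)
        # Fill a missing country, then standardize its format.
        country = (row.get("country") or "UNKNOWN").strip().upper()
        try:
            amount = round(float(row["amount"]), 2)  # normalize the type
        except (TypeError, ValueError):
            continue  # filter out rows whose amount cannot be parsed
        cleaned.append({"order_id": order_id, "country": country, "amount": amount})

    totals = defaultdict(float)  # aggregation for a specific analytical need
    for row in cleaned:
        totals[row["country"]] += row["amount"]
    return cleaned, dict(totals)

# Hypothetical extracted rows, including a duplicate and two bad values.
raw_rows = [
    {"order_id": 1, "country": " us ", "amount": "19.99"},
    {"order_id": 1, "country": " us ", "amount": "19.99"},
    {"order_id": 2, "country": None, "amount": "5"},
    {"order_id": 3, "country": "de", "amount": "n/a"},
    {"order_id": 4, "country": "US", "amount": 10},
]
cleaned_rows, revenue_by_country = transform(raw_rows)
```

Whether an unparseable row should be dropped, as it is here, or routed to a review queue is a design choice that depends on your data quality rules.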

Loading

Loading is the final step, where the transformed data is loaded into the target data warehouse, which is similar to carefully placing the organized books on shelves in the library. The loading process ensures the data arrives at the target system efficiently and without errors.

In this step, data is physically structured and loaded into the warehouse, making it available for analysis and reporting. The ETL process is iterative, repeating as new data is added to the warehouse to maintain accuracy and completeness.
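
Because the process repeats as new data arrives, the load step is usually written so that re-running it does not create duplicate rows. The sketch below shows one way to do that, using an in-memory SQLite database as a stand-in for the warehouse. The `orders` table and its columns are assumptions for illustration.

```python
import sqlite3

def load(conn, rows):
    """Load transformed rows; rows with an existing key are replaced, not duplicated."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders ("
        "order_id INTEGER PRIMARY KEY, country TEXT NOT NULL, amount REAL NOT NULL)"
    )
    with conn:  # commit on success, roll back if any insert fails
        conn.executemany(
            "INSERT OR REPLACE INTO orders (order_id, country, amount) "
            "VALUES (:order_id, :country, :amount)",
            rows,
        )

conn = sqlite3.connect(":memory:")  # stand-in for the target warehouse
load(conn, [{"order_id": 1, "country": "US", "amount": 19.99}])

# A later run delivers a correction for order 1 plus a new order.
load(conn, [
    {"order_id": 1, "country": "US", "amount": 24.99},
    {"order_id": 2, "country": "DE", "amount": 5.0},
])
row_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
```

After both runs the table holds two rows, with order 1 carrying the corrected amount.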

A Glimpse of Kanerika’s Data Integration Project

Business Context

The client is a leading technology company offering spend management solutions. They struggled to manage end-to-end invoice processing, as handling multiple file formats proved cumbersome and time-consuming.

We standardized the invoice data exchange to reduce errors and enhance business transaction efficiency. With automated validation and data transformation, we achieved streamlined communication, accelerated data exchange, and improved accuracy.

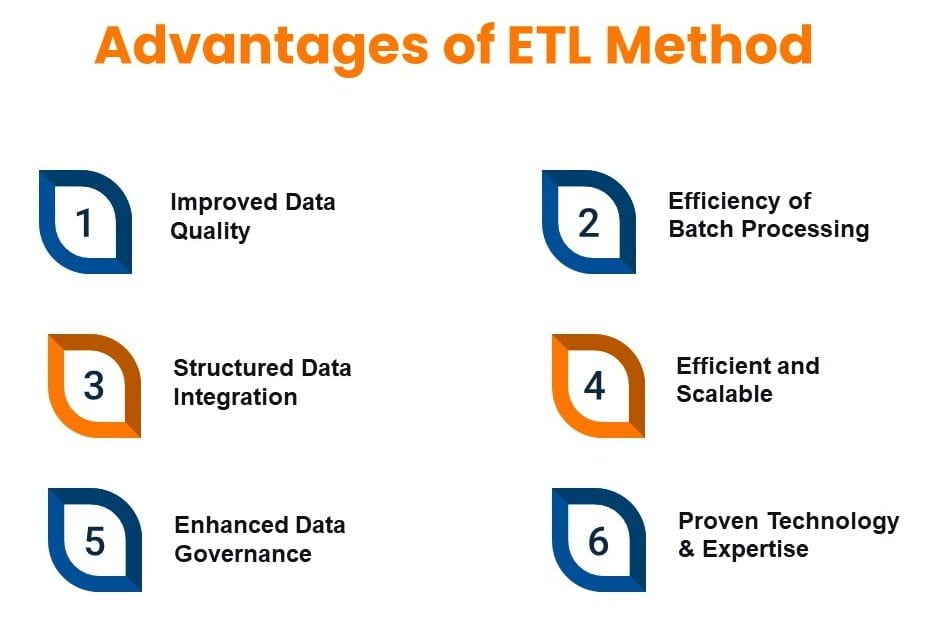

Advantages and Limitations of ETL

Advantages

1. Improved Data Quality

ETL tools enhance data quality by removing inconsistencies and anomalies. They allow for thorough data cleansing and transformation before the data is loaded into the data warehouse. This ensures the data is accurate, complete, and consistent, leading to reliable insights. Techniques like deduplication, error correction, and standardization happen during the transformation stage.

2. Structured Data Integration

ETL simplifies the integration of data from multiple sources, making it more accessible and usable for analysis. This method excels at handling well-defined, structured data from traditional sources like relational databases. The transformation step allows for conversion into a format optimized for data warehouse queries and analysis.

3. Efficiency of Batch Processing

ETL works effectively for processing massive volumes of data in batches. To minimize interference with operational systems, scheduled ETL jobs can run during off-peak hours or overnight.

4. Improved Data Governance

Since ETL procedures are usually clearly defined and documented, it is simpler to keep track of data lineage (where each piece of data originated and how it was transformed along the way). Data security and regulatory compliance rely on this transparency.

5. Proven Technology and Expertise

ETL has been around for many years, and there are several trustworthy and well-developed ETL technologies out there. Building and managing ETL pipelines is a skill that many data professionals have.

6. Efficient and Scalable

Automation in ETL processes reduces manual effort, leading to quicker and error-free workflows. Moreover, modern ETL tools, especially cloud-based solutions, offer scalability to handle large volumes of data effectively.
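
The batch-processing advantage described above can be sketched in a few lines of Python. This is an illustrative helper, not the API of any particular ETL tool. The names `batches` and `run_batch_job` are hypothetical, and the scheduling itself (for example, an overnight trigger) is left out.

```python
from itertools import islice

def batches(records, size):
    """Yield successive lists of at most `size` records from any iterable."""
    iterator = iter(records)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk

def run_batch_job(records, size, process):
    """Process records one batch at a time and return how many batches ran."""
    count = 0
    for chunk in batches(records, size):
        process(chunk)  # e.g. transform and load this chunk
        count += 1
    return count

loaded = []
batch_count = run_batch_job(range(10), size=4, process=loaded.extend)
```

Working in fixed-size chunks keeps memory use bounded no matter how large the source is, which is part of why batch jobs cope well with high volumes.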

Limitations

1. Latency in Insights

Since ETL processes data in batches, it may cause delays in data updates. This might not be the best option for situations requiring real-time or almost real-time insights.

2. Complexity and Development Time

Creating and managing intricate ETL pipelines can take a lot of effort and specific expertise. Costs for development and continuous maintenance may increase as a result.

3. Limited Flexibility for Unstructured Data

Unstructured data, such as direct sensor data or social media feeds, is difficult for ETL to handle directly. Additional pre-processing or conversion operations may be necessary.

4. Resource Intensive for Large Data Volumes

Huge datasets may require a lot of processing and storage power to transform before loading, which becomes a major concern in the big data era.

What is ELT?

ELT stands for Extract, Load, Transform. It’s a data processing approach where data is first extracted from various sources, then loaded into a data warehouse or data lake in its raw format, and finally transformed as needed for analysis. This is in contrast to ETL (Extract, Transform, Load) where transformation happens before loading. Let’s discuss the three main stages: extraction, loading, and transformation.

Extraction

During the extraction phase, data is collected from multiple sources so that it can be loaded directly into the target system. Similar to ETL, ELT starts by retrieving data from various sources, including databases, customer relationship management (CRM) systems, social media platforms, and more. This phase focuses on efficiently moving data from source to target without any intermediate processing.

Loading

This is the stage where the extracted data is loaded into the target system, typically a data warehouse or data lake, a vast storage repository for all your data, structured or unstructured. Think of dumping all the library books in a central location. Loading ensures that data is efficiently stored in the target system for subsequent analysis and reporting.

Transformation

Transformation occurs after the data has been loaded into the target system, where it is then processed and transformed as needed. Data manipulation happens within the data lake. Cleaning, standardization, filtering, aggregation, and calculations can all be performed on-demand for specific queries. Imagine having librarians sort and organize the books only when a specific topic is requested. Transformation post-loading allows for flexibility in data processing, enabling organizations to adapt data for various analytical needs.
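
The load-first, transform-later flow can be sketched as follows. An in-memory SQLite database stands in for the cloud warehouse or data lake, and the `raw_orders` table, its columns, and the sample records are assumptions for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for the target system

# Load: land the extracted records untouched, every field kept as raw text.
conn.execute("CREATE TABLE raw_orders (order_id TEXT, country TEXT, amount TEXT)")
extracted = [
    ("1", " us ", "19.99"),
    ("1", " us ", "19.99"),  # duplicate delivered by the source
    ("2", None, "5"),
    ("3", "US", "10"),
]
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", extracted)

# Transform: defined later and executed inside the target by its own SQL engine.
conn.execute("""
    CREATE VIEW revenue_by_country AS
    SELECT country, ROUND(SUM(amount), 2) AS revenue
    FROM (
        SELECT DISTINCT
            CAST(order_id AS INTEGER) AS order_id,
            UPPER(TRIM(COALESCE(country, 'UNKNOWN'))) AS country,
            CAST(amount AS REAL) AS amount
        FROM raw_orders
    )
    GROUP BY country
    ORDER BY country
""")
result = conn.execute("SELECT country, revenue FROM revenue_by_country").fetchall()
raw_count = conn.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0]
```

The raw table is never modified, so a different view can reshape the same records for another question later without re-extracting anything from the source.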

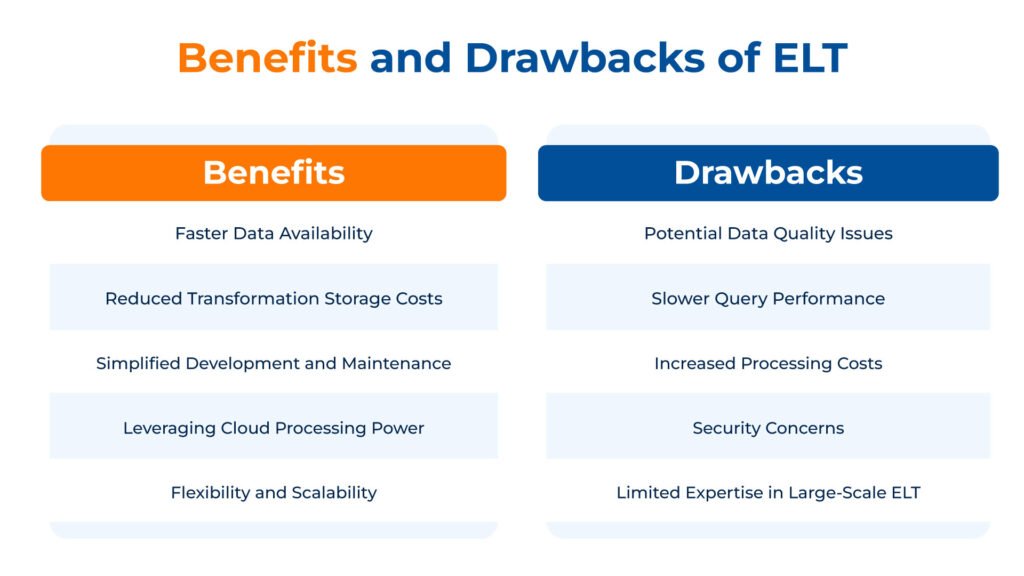

Benefits and Drawbacks of ELT

Benefits

1. Faster Data Availability

By loading data first, ELT provides quicker access to raw information for exploration and analysis. This is crucial for real-time or near-real-time insights.

2. Flexibility and Scalability

ELT excels at handling large and diverse datasets, including unstructured data like social media feeds or sensor readings. The raw data in the data lake can be transformed for various purposes later, providing greater flexibility for evolving analytical needs.

3. Reduced Storage Costs for Transformation

ELT avoids pre-transformation, potentially reducing storage requirements for intermediate processed data. This can be a significant cost-saving for massive datasets.

4. Simplified Development and Maintenance

ELT pipelines can be simpler to set up initially compared to complex ETL transformations. This reduces development time and ongoing maintenance overhead.

5. Leveraging Cloud Processing Power

Cloud data warehouses and data lakes often provide on-demand processing power for data transformation. This allows scaling processing resources to handle large data volumes efficiently.

Drawbacks

1. Potential Data Quality Issues

Since data is loaded raw, data quality checks and transformations happen later. This can lead to issues with data consistency and accuracy if not addressed properly within the data lake.

2. Slower Query Performance

Raw data in the data lake may require additional processing before analysis, potentially impacting query performance compared to pre-transformed data in ETL.

3. Increased Processing Costs

While storage costs may be lower, complex transformations within the data lake can incur processing costs depending on the cloud platform used.

4. Security Concerns

Loading raw data can raise security concerns if sensitive information isn’t anonymized or filtered before entering the data lake. Proper data governance practices are crucial.

5. Limited Expertise in Large-Scale ELT

While ELT tools are emerging, expertise in managing and optimizing large-scale data lakes for transformation is still developing.
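
One common way to manage the data quality drawback listed above is to run checks against the raw tables right after loading. The sketch below counts duplicate keys and missing values. SQLite stands in for the data lake's query engine, and the function name `quality_report` and the table layout are hypothetical.

```python
import sqlite3

def quality_report(conn, table, key, required):
    """Count total rows, duplicate keys, and missing values in a raw table."""
    # Table and column names are interpolated directly, so they must come
    # from trusted configuration, never from user input.
    rows, duplicate_keys = conn.execute(
        f"SELECT COUNT(*), COUNT({key}) - COUNT(DISTINCT {key}) FROM {table}"
    ).fetchone()
    missing = {}
    for column in required:
        missing[column] = conn.execute(
            f"SELECT COUNT(*) FROM {table} "
            f"WHERE {column} IS NULL OR TRIM({column}) = ''"
        ).fetchone()[0]
    return {"rows": rows, "duplicate_keys": duplicate_keys, "missing": missing}

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_customers (customer_id TEXT, email TEXT)")
conn.executemany("INSERT INTO raw_customers VALUES (?, ?)", [
    ("c1", "a@example.com"),
    ("c1", "a@example.com"),  # duplicate key
    ("c2", None),             # missing email
    ("c3", "  "),             # blank email
])
report = quality_report(conn, "raw_customers", key="customer_id", required=["email"])
```

A report like this can gate downstream transformations, so that a bad load is flagged before it reaches dashboards.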

ETL vs ELT: Key Differences

| Aspect | ETL | ELT |
| --- | --- | --- |
| Order of Operations | Extract -> Transform -> Load | Extract -> Load -> Transform |
| Data Staging | Requires a separate staging area for data transformation | Data is loaded directly into the target system |
| Data Transformation | Transformations occur before data is loaded, ensuring data quality upfront | Transformations happen on-demand within the target system |
| Data Latency | Can have higher latency due to upfront transformations | Offers lower latency as data is readily available for analysis |
| Data Quality | Generally higher data quality due to pre-processing | Requires additional data governance measures within the data lake |
| Scalability | Can struggle with very large datasets | Scales well for big data volumes |
| Flexibility | Less flexible as transformations are pre-defined | More flexible as transformations can be adapted for specific needs |
| Cost | Can be expensive for complex ETL processes and large datasets | Potentially more cost-effective, especially for big data |
| Suitability | Ideal for situations requiring high data quality and pre-defined analysis needs | Well-suited for big data environments, real-time analytics, and agile data exploration |

ETL vs ELT: Integration with Modern Technologies

ETL and ELT strategies align with emerging technologies like AI, machine learning, and IoT in different ways. ETL is better suited to structured data and can help with data privacy and compliance by cleaning sensitive data before it is loaded into the data warehouse. ELT is more flexible in handling unstructured data and can be more cost-effective, especially with cloud-based data warehouse solutions.

AI & Machine Learning

ETL:

- ETL can be used to prepare high-quality training data for machine learning models.

- By transforming data upfront in the ETL process, you can ensure data consistency and remove anomalies that might impact model training.

- However, ETL might introduce latency, delaying the availability of data for training models requiring real-time updates.

ELT:

- ELT allows for storing all data (including potentially useful data for future models) in the data lake.

- Machine learning pipelines can then access and transform this data on-demand for training various models.

- This flexibility is beneficial for exploring new machine learning use cases without pre-defining transformations in ETL.

IoT (Internet of Things)

ETL:

- ETL can be used to extract data from diverse IoT sensors and devices, potentially performing initial transformations to ensure compatibility with the data warehouse schema.

- This structured data can then be readily used for traditional data warehousing and business intelligence tasks.

ELT:

- ELT is well-suited for handling the high volume and velocity of data generated by IoT devices.

- The raw data can be loaded into the data lake, and transformations can be applied later based on specific analytics needs.

- This is useful for exploratory analysis of sensor data or identifying patterns that might not be initially apparent.

Cloud Platforms & Data Lakes

ETL:

- Modern ETL tools are increasingly cloud-based, offering scalability and easier integration with cloud data warehouses like Snowflake or Google BigQuery.

- ETL pipelines can extract data from various cloud sources and transform it before loading it into the target data warehouse.

ELT:

- ELT leverages cloud data lakes like Amazon S3 or Azure Data Lake Storage.

- These platforms offer flexible storage for the raw, semi-structured, and unstructured data that ELT excels at handling.

- Cloud-based data lake management tools can then be used to catalog, secure, and transform data within the lake for further analysis.

ETL vs ELT: Powering Data Integration Across Industries

ETL and ELT play crucial roles in transforming raw data into valuable insights across various industries. Here’s a glimpse into how these approaches are utilized in different sectors:

1. Finance

Financial institutions heavily rely on ETL for regulatory compliance. Consolidating data from trading platforms, customer accounts, and risk management systems requires data cleansing, standardization, and transformation before loading into data warehouses for reporting and audit purposes.

Fraud detection and real-time market analysis benefit from ELT. Raw transaction data can be quickly loaded into a data lake, allowing for near real-time analysis to identify fraudulent activities and capitalize on market fluctuations.

2. Healthcare

ETL ensures patient data privacy and adherence to HIPAA regulations. Extracting data from Electronic Health Records (EHRs), lab results, and billing systems requires transformation and cleaning before loading into data warehouses for research, quality improvement initiatives, and billing analysis.

Pharmaceutical companies leverage ELT for clinical trial data analysis. Large datasets from wearables, patient sensors, and medical imaging can be readily available in a data lake for faster analysis and drug development processes.

3. Retail

ETL streamlines customer data management for targeted marketing campaigns. Extracting data from CRM systems, loyalty programs, and point-of-sale systems allows for data enrichment and segmentation before loading into data warehouses for customer behavior analysis and targeted promotions.

Real-time customer behavior analysis thrives with ELT. Website clickstream data, social media interactions, and in-store sensor data can be loaded into a data lake, enabling near real-time personalization of product recommendations and in-store promotions.

4. Manufacturing

ETL ensures quality control and production efficiency. Extracting data from machine sensors, production lines, and quality control checks requires transformation and validation before loading into data warehouses for identifying production bottlenecks and optimizing manufacturing processes.

Predictive maintenance benefits from ELT. Sensor data from equipment can be readily available in a data lake for real-time monitoring and analysis, allowing for preventive maintenance and avoiding costly downtime.

5. Media & Entertainment

ETL streamlines audience analysis for marketers. Extracting data from streaming platforms, social media, and content distribution networks requires transformation and filtering before loading into data warehouses for optimizing content delivery, personalization, and audience segmentation for advertising campaigns.

Real-time social media sentiment analysis utilizes ELT. Social media data can be quickly loaded into a data lake, allowing for near real-time analysis of audience reactions to content and identifying emerging trends.

Factors to Consider While Choosing Between ETL and ELT

1. Data Size and Complexity

ETL: Well-suited for smaller, well-structured datasets where complex transformations are required to ensure data quality before loading into the warehouse.

ELT: Ideal for massive and diverse datasets (structured, semi-structured, unstructured) where initial transformations might not be necessary.

2. Data Quality Requirements

ETL: Offers more control over data quality upfront during the transformation stage, filtering anomalies and ensuring consistency before loading.

ELT: Requires additional attention to data quality within the data lake, potentially involving separate processes after loading.

3. Speed of Insights

ELT: Provides faster access to raw data since transformation happens later, enabling near-real-time analytics for time-sensitive scenarios.

ETL: Can introduce latency due to the upfront transformation step, potentially delaying the availability of insights.

4. Technical Expertise

ETL: Requires expertise in designing and maintaining complex ETL pipelines, especially for intricate transformations.

ELT: ELT pipelines might be simpler to set up initially, but managing large-scale data lakes for efficient transformation demands expertise in data lake management tools and big data processing engines.

5. Security Considerations

ETL: Allows for data filtering and anonymization during transformation, potentially enhancing data security before loading into the warehouse.

ELT: Requires robust security measures for the data lake, as sensitive information might be present in the raw data before any transformations occur.
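
As a rough illustration of the anonymization step mentioned for ETL, the sketch below replaces sensitive fields with a keyed hash before a record is loaded. The field names, the `SECRET_KEY` value, and the helper names are hypothetical. In practice the key would come from a secrets manager, and the choice of technique should follow your compliance requirements.

```python
import hashlib
import hmac

# Hypothetical key for illustration only; keep real keys out of source code.
SECRET_KEY = b"replace-with-a-managed-secret"

def pseudonymize(value, key=SECRET_KEY):
    """Replace an identifier with a keyed hash: stable for joins, hard to reverse."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()

def mask_record(record, sensitive_fields=("email", "ssn")):
    """Return a copy of the record with sensitive string fields pseudonymized."""
    return {
        field: pseudonymize(value)
        if field in sensitive_fields and value is not None
        else value
        for field, value in record.items()
    }

record = {"customer_id": 42, "email": "jane@example.com", "plan": "pro"}
masked = mask_record(record)
```

Because the same input always maps to the same hash, masked records can still be joined on the pseudonymized field. In an ELT setup, the same function could run at ingestion time so that raw identifiers never land in the lake.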

Our Case Study Video

Business Context

The client is a multi-site global IT enterprise operating in various geographies. They needed help with fragmented HR systems and data islands, primarily resulting from varying local regulations and M&As.

We implemented a common and integrated Data Warehouse on Azure SQL and enabled a Power BI dashboard, consolidating HR data and providing the client with a comprehensive view of their human resources.

Kanerika: Empowering Businesses with Expert Data Processing Services

Kanerika, one of the globally recognized technology consulting firms, offers exceptional data processing, analysis, and integration services that help businesses address their data challenges and utilize the full potential of data. Our team of skilled data professionals is equipped with the latest tools and technologies, ensuring top-quality data that’s both accessible and actionable.

Our flagship product, FLIP, an AI-powered data operations platform, revolutionizes data transformation with its flexible deployment options, pay-as-you-go pricing, and intuitive interface. With FLIP, businesses can streamline their data processes effortlessly, making data management a breeze.

Kanerika also offers exceptional AI/ML and RPA services, empowering businesses to outsmart competitors and propel towards success. Experience the difference with Kanerika and unleash the true potential of your data. Let us be your partner in innovation and transformation, guiding you towards a future where data is not just information but a strategic asset driving your success.

Frequently Asked Questions

What is the difference between ETL and ELT?

ETL transforms data before loading it into the target system, while ELT loads raw data first and transforms it within the destination. ETL processes data in a staging area using dedicated transformation servers, making it ideal for structured data and legacy warehouses. ELT leverages the processing power of modern cloud data platforms like Snowflake or Databricks to handle transformations post-load, enabling faster ingestion of large volumes. The choice between ETL vs ELT depends on your infrastructure, data complexity, and performance requirements. Kanerika helps enterprises evaluate both approaches and design the optimal data integration strategy for their environment.

Why use ELT instead of ETL?

ELT delivers superior performance for large-scale data processing by leveraging the computational power of modern cloud data warehouses. Unlike traditional ETL, ELT eliminates the transformation bottleneck by loading raw data directly and transforming it in-place using platforms like Databricks or Snowflake. This approach reduces data latency, supports schema flexibility, and simplifies pipeline architecture. ELT also enables data teams to iterate on transformations without re-extracting source data, accelerating analytics delivery. Organizations modernizing their data infrastructure increasingly prefer ELT for its scalability. Kanerika’s data engineers can help you migrate from legacy ETL to modern ELT pipelines seamlessly.

Is data lake ETL or ELT?

Data lakes primarily use ELT because they store raw, unstructured data in its native format before transformation. Unlike traditional data warehouses that require ETL preprocessing, data lakes ingest everything first and apply schema-on-read transformations when data is queried or analyzed. This approach supports diverse data types including JSON, logs, images, and streaming data. Modern data lake platforms like Azure Data Lake and Databricks Lakehouse optimize for ELT workflows, enabling scalable analytics on massive datasets. If you’re building or migrating to a data lake architecture, Kanerika can design ELT pipelines that maximize your platform’s capabilities.

Is Databricks ETL or ELT?

Databricks supports both ETL and ELT but is optimized for ELT workflows within its Lakehouse architecture. The platform’s distributed processing engine handles transformations efficiently after data lands in Delta Lake, making ELT the preferred pattern. Databricks also accommodates traditional ETL when integrating with legacy systems or applying complex pre-load transformations. With features like Delta Live Tables and structured streaming, Databricks enables real-time ELT pipelines at enterprise scale. Many organizations use Databricks to modernize from legacy ETL tools like Informatica. Kanerika specializes in Informatica to Databricks migrations, helping you build modern Lakehouse ETL pipelines efficiently.

Is Snowflake an ETL or ELT tool?

Snowflake is a cloud data warehouse that natively supports ELT rather than functioning as a standalone ETL tool. The platform excels at post-load transformations using its powerful SQL engine and elastic compute resources. Data teams typically use external tools like Fivetran or dbt for extraction and orchestration, then leverage Snowflake’s processing capabilities for transformation. This ELT approach maximizes Snowflake’s separation of storage and compute, enabling cost-efficient scaling. While Snowflake handles the T in ELT exceptionally well, you’ll need complementary data integration tools for complete pipelines. Kanerika builds end-to-end ELT solutions on Snowflake tailored to your analytics requirements.

Will ETL be replaced by AI?

AI will not replace ETL entirely but will significantly enhance and automate data integration processes. Machine learning already powers intelligent data mapping, anomaly detection, and self-healing pipelines within modern ETL and ELT platforms. AI-driven tools can auto-generate transformation logic, optimize query performance, and predict pipeline failures before they occur. However, human oversight remains essential for complex business rules, data governance, and compliance requirements. The future combines AI-augmented automation with traditional ETL and ELT patterns, not wholesale replacement. Kanerika integrates AI capabilities into data pipelines, helping enterprises achieve intelligent automation while maintaining control over critical data workflows.

Is ETL obsolete?

ETL is not obsolete but has evolved alongside modern data architectures. Traditional ETL remains relevant for regulated industries requiring strict data quality controls before loading, legacy system integrations, and scenarios with limited target system compute resources. Many enterprises still operate hybrid environments where ETL handles structured operational data while ELT processes analytical workloads. The perception of obsolescence stems from ELT’s dominance in cloud-native platforms, but ETL patterns persist in on-premises systems and compliance-heavy use cases. Smart organizations choose the right approach based on specific requirements rather than following trends blindly. Kanerika assesses your data landscape to recommend whether ETL, ELT, or a hybrid approach fits best.

What will replace ETL?

ELT has become the dominant successor to traditional ETL for cloud-based analytics, though complete replacement is unlikely. Modern data integration trends include real-time streaming pipelines, reverse ETL for operational analytics, and data mesh architectures with decentralized ownership. Platforms like Databricks and Snowflake have shifted transformation workloads into the destination, reducing reliance on separate ETL servers. Additionally, AI-powered data integration tools are automating routine mapping and cleansing tasks. The future points toward composable data architectures combining ELT, streaming, and AI rather than any single replacement technology. Kanerika helps organizations navigate this evolution with modernization strategies aligned to their specific data ecosystem.

Which is faster, ETL or ELT?

ELT is typically faster for large-scale data processing because it eliminates the transformation bottleneck before loading. By leveraging the parallel processing capabilities of cloud data warehouses like Snowflake or Databricks, ELT handles massive volumes more efficiently than traditional ETL servers. However, ETL can be faster when dealing with smaller datasets or when pre-load filtering significantly reduces data volume before transfer. Network bandwidth and source system constraints also influence overall pipeline speed. The performance advantage shifts based on data volume, transformation complexity, and infrastructure capabilities. Kanerika benchmarks both approaches against your actual workloads to determine the optimal architecture for your performance requirements.

What are ELT tools?

ELT tools are platforms that extract data from sources, load it directly into a target system, and transform it within that destination. Popular ELT tools include Fivetran and Airbyte for extraction and loading, dbt for in-warehouse transformations, and cloud platforms like Databricks and Snowflake that provide native transformation capabilities. Unlike ETL tools that require dedicated transformation servers, ELT tools leverage the compute power of modern data warehouses and lakes. This architecture simplifies pipeline management and scales with your cloud infrastructure. When evaluating ELT tools for your data stack, Kanerika provides expert guidance on selecting and implementing the right combination for your enterprise needs.

Which ETL tool is in demand in 2026?

Microsoft Fabric leads ETL tool demand in 2026, integrating data engineering, warehousing, and analytics in a unified platform. Databricks continues strong growth for enterprises requiring advanced Lakehouse capabilities and AI-powered data processing. Snowflake remains highly demanded for cloud-native ELT workflows, while dbt dominates transformation layer tooling. Azure Data Factory and Informatica PowerCenter maintain enterprise adoption, particularly in hybrid cloud environments. Demand increasingly favors platforms offering both ETL and ELT flexibility with built-in governance features. Kanerika holds deep expertise across these leading platforms and can accelerate your migration to the ETL or ELT tool that matches your strategic roadmap.

What is the best ETL tool?

The best ETL tool depends on your specific infrastructure, budget, and technical requirements rather than universal rankings. Microsoft Fabric excels for Microsoft-centric enterprises seeking unified analytics. Databricks leads for organizations prioritizing AI and machine learning integration with data engineering. Informatica remains strong for complex enterprise integrations requiring extensive connector libraries. Talend suits mid-market companies needing open-source flexibility, while Azure Data Factory provides cost-effective orchestration for Azure workloads. Evaluating tools against your data volumes, transformation complexity, and team skills matters more than industry rankings. Kanerika evaluates your environment and recommends the ETL or ELT platform that delivers maximum value for your investment.

Is Informatica ETL or ELT?

Informatica supports both ETL and ELT depending on the product and configuration. Traditional Informatica PowerCenter operates as an ETL tool, transforming data on dedicated servers before loading. Informatica’s cloud offerings, including Intelligent Data Management Cloud, support ELT pushdown optimization that executes transformations within target databases like Snowflake or Databricks. This flexibility lets enterprises choose the optimal pattern per use case. Many organizations are modernizing from Informatica’s ETL architecture to cloud-native ELT platforms for improved scalability and reduced infrastructure costs. Kanerika executes Informatica to Databricks and Informatica to Microsoft Fabric migrations, preserving your business logic while modernizing your data integration approach.

Is Azure Data Factory an ETL tool?

Azure Data Factory functions as a hybrid ETL and ELT orchestration platform rather than a pure transformation tool. ADF excels at extracting data from diverse sources, orchestrating pipeline workflows, and loading data into Azure destinations. For transformations, ADF offers data flows for ETL-style processing and supports ELT pushdown to destinations like Azure Synapse or Databricks. This flexibility makes ADF a versatile data integration hub within Microsoft’s ecosystem. Many enterprises pair ADF with Microsoft Fabric for end-to-end analytics pipelines combining orchestration with advanced transformation capabilities. Kanerika architects Azure Data Factory solutions optimized for your specific ETL or ELT requirements across hybrid environments.

Is dbt for ETL or ELT?

dbt is specifically designed for the T in ELT, handling transformations after data has been loaded into your data warehouse. Unlike full ETL tools, dbt does not extract or load data—it focuses exclusively on SQL-based transformations within platforms like Snowflake, Databricks, or BigQuery. This specialization makes dbt the standard for analytics engineering in modern ELT stacks. Teams pair dbt with extraction tools like Fivetran or Airbyte for complete pipelines. dbt’s version control, testing, and documentation features bring software engineering practices to data transformation workflows. Kanerika implements dbt alongside your data platform to establish scalable, maintainable ELT pipelines with full lineage tracking.

What are the steps of ETL?

ETL follows three sequential steps: Extract, Transform, and Load. During extraction, data is pulled from source systems including databases, APIs, flat files, and applications. Transformation then cleanses, validates, and restructures this data in a staging environment—applying business rules, deduplication, aggregation, and format standardization. Finally, the transformed data loads into the target data warehouse or database. Unlike ELT, all transformation logic executes before reaching the destination. This process typically involves connection management, error handling, scheduling, and monitoring components. Well-designed ETL pipelines ensure data quality and consistency throughout. Kanerika designs robust ETL workflows with comprehensive error handling and data validation to ensure reliable data delivery.

What is an example of ETL?

A classic ETL example involves consolidating sales data from multiple regional ERP systems into a central data warehouse. The ETL pipeline extracts transaction records from each source database nightly, then transforms this data in a staging server—standardizing currency formats, mapping regional product codes to global identifiers, calculating aggregated metrics, and validating data quality rules. After transformation completes, cleansed data loads into the warehouse’s fact and dimension tables for reporting. This approach ensures consistent, analysis-ready data before it reaches the destination. Retail and manufacturing enterprises commonly use such ETL patterns for financial consolidation and operational reporting. Kanerika builds custom ETL solutions for complex multi-source data consolidation across enterprise systems.
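
The transformation step in this example might look roughly like the sketch below. The product code mapping, the exchange rate, and the record layout are all invented for illustration. Real pipelines would pull them from reference tables.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical reference data: regional product codes and currency rates.
PRODUCT_CODE_MAP = {("EU", "P-100"): "GLOBAL-1", ("US", "SKU9"): "GLOBAL-1"}
RATES_TO_USD = {"USD": Decimal("1"), "EUR": Decimal("1.10")}

def standardize(record):
    """Map a regional product code to its global ID and convert the amount to USD."""
    product_id = PRODUCT_CODE_MAP[(record["region"], record["product_code"])]
    amount_usd = Decimal(record["amount"]) * RATES_TO_USD[record["currency"]]
    return {"product_id": product_id, "amount_usd": amount_usd.quantize(Decimal("0.01"))}

def consolidate(records):
    """Aggregate standardized sales per global product ID."""
    totals = defaultdict(Decimal)
    for record in records:
        row = standardize(record)
        totals[row["product_id"]] += row["amount_usd"]
    return dict(totals)

regional_sales = [
    {"region": "EU", "product_code": "P-100", "amount": "100.00", "currency": "EUR"},
    {"region": "US", "product_code": "SKU9", "amount": "50.00", "currency": "USD"},
]
totals = consolidate(regional_sales)
```

`Decimal` is used instead of floating point so that currency amounts stay exact through conversion and aggregation.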

What is an example of ELT?

A typical ELT example involves streaming clickstream data from a web application into Snowflake for real-time analytics. The pipeline extracts raw event data via Kafka, loads JSON records directly into Snowflake’s staging tables without pre-processing, then transforms this data using dbt models within the warehouse. Transformations include sessionization, user attribution, and funnel analysis—all executed using Snowflake’s compute resources. This ELT approach handles high-volume data ingestion without bottlenecking on transformation servers. E-commerce and SaaS companies frequently use this pattern for customer behavior analytics and product insights. Kanerika implements production-ready ELT pipelines on Snowflake and Databricks that scale with your data growth.
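
In the pipeline described here, sessionization would run as SQL inside the warehouse. The Python sketch below only illustrates the underlying logic. The 30-minute inactivity gap and the `(user, timestamp)` event shape are assumptions.

```python
from datetime import datetime, timedelta

def sessionize(events, gap=timedelta(minutes=30)):
    """Assign a per-user session number; a new session starts after `gap` of inactivity."""
    last_seen = {}
    session_no = {}
    out = []
    for user, ts in sorted(events, key=lambda e: (e[0], e[1])):
        if user not in last_seen or ts - last_seen[user] > gap:
            session_no[user] = session_no.get(user, 0) + 1
        last_seen[user] = ts
        out.append((user, ts, session_no[user]))
    return out

# Hypothetical clickstream events as (user, timestamp) pairs.
events = [
    ("u1", datetime(2024, 1, 1, 10, 0)),
    ("u2", datetime(2024, 1, 1, 10, 5)),
    ("u1", datetime(2024, 1, 1, 10, 10)),
    ("u1", datetime(2024, 1, 1, 11, 0)),
]
sessions = sessionize(events)
```

Here the third `u1` event arrives 50 minutes after the previous one, so it opens a second session for that user.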