A senior AI engineer at a healthcare technology company put it bluntly during a post-mortem: “We spent three months building on LangChain before realizing our actual bottleneck was retrieval quality. We picked the framework before we understood the problem.”

His team was building a clinical documentation assistant — a system that needed to surface accurate information from thousands of unstructured medical records. They chose LangChain because the tutorials were everywhere and the community was enormous. What they hadn’t thought through: LangChain’s retrieval abstractions, while flexible, needed significant custom engineering to handle dense, structured medical text well.

The project didn’t fail. But it took longer and cost more than it should have.

That story plays out more often than teams admit. The RAG framework decision gets made early, sometimes casually, and then the team builds around it. Gartner has predicted that at least 30% of generative AI projects will be abandoned after proof of concept by the end of 2025, citing poor data quality, inadequate risk controls, escalating costs, or unclear business value rather than the algorithms themselves. By the time a framework mismatch shows up, switching has real cost.

This comparison is about catching those mismatches before they happen — across retrieval quality, production observability, token costs, agentic behavior, and compliance requirements.

Key Takeaways

- LlamaIndex is purpose-built for retrieval and indexing — deepest capabilities for document-heavy, knowledge base applications.

- LangChain offers the broadest ecosystem and fastest prototyping speed, but that flexibility adds complexity in production RAG pipelines.

- Haystack prioritizes pipeline auditability and production monitoring — the right fit when compliance and observability come first.

- Token costs shift meaningfully depending on retrieval strategy, and the framework you pick determines how easy those optimizations are.

- Agentic RAG behavior differs significantly across all three frameworks; agent reliability under production load varies too.

- Framework-agnostic teams — or those combining tools deliberately — tend to outperform teams locked into one RAG tool.

- Evaluation and observability should factor into the framework decision from day one, not after the first production incident.


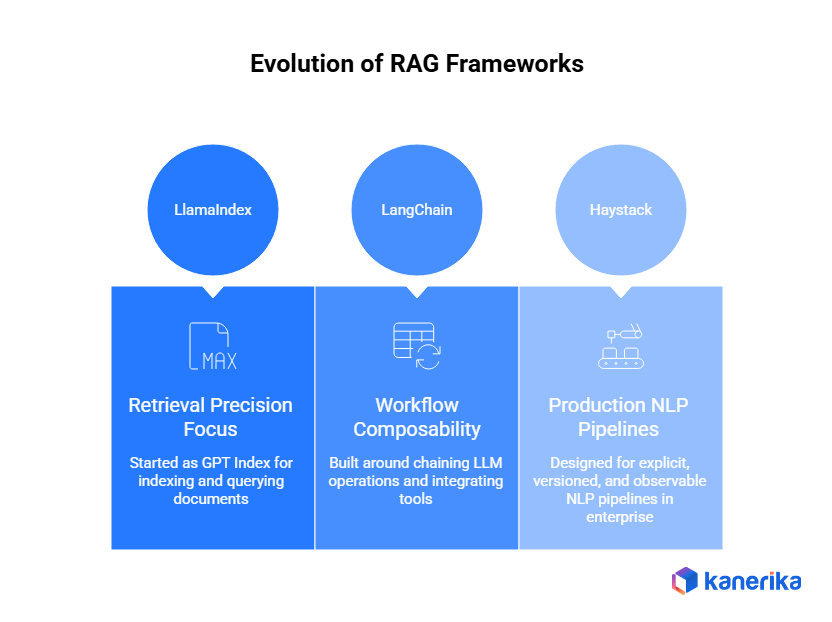

What Each RAG Framework Was Actually Built to Do

Before comparing capabilities, it helps to understand what problem each framework was originally solving. These aren’t interchangeable tools that differ only in syntax — they come from different design philosophies.

1. LlamaIndex: Built for Retrieval Precision

LlamaIndex started as GPT Index, a focused project for indexing documents and making them queryable with large language models. Retrieval is its core competency, and that origin shapes everything — the indexing abstractions, the query engine architecture, how it handles document hierarchies and metadata filtering. If an application’s central challenge is finding the right information from a large document corpus, LlamaIndex was designed for that problem.

2. LangChain: Built for Workflow Composability

LangChain was built around a different idea: LLM applications are chains of operations — retrieve, transform, generate, evaluate, route. Its strength is composability. The ecosystem of integrations across hundreds of tools, APIs, vector databases, and LLM providers makes it possible to wire together complex workflows quickly. This is genuinely valuable for prototyping. It also means the framework carries abstraction overhead that can complicate debugging when something breaks mid-chain in production.

3. Haystack: Built for Production NLP Pipelines

Haystack by deepset comes from an NLP pipeline background, and it shows. The framework treats pipelines as first-class citizens — explicitly defined, versioned, and observable. For teams that need to explain to a compliance officer exactly what happens when a query runs through the document retrieval system, Haystack’s architecture makes that conversation much easier. It was designed to run in production enterprise environments, not just demos.

Side-by-Side Comparison

With those design philosophies in mind, here’s how the three frameworks compare across what actually matters in a real engineering decision.

| Dimension | LlamaIndex | LangChain | Haystack |
| --- | --- | --- | --- |
| Primary strength | Retrieval precision, indexing depth | Ecosystem breadth, workflow orchestration | Pipeline auditability, production observability |
| Best for | Document-heavy RAG, complex knowledge bases | Multi-step agents, rapid prototyping | Regulated industries, compliance-sensitive deployments |
| GitHub repository | run-llama/llama_index | langchain-ai/langchain | deepset-ai/haystack |
| Learning curve | Moderate | Moderate to high | Moderate |
| Production observability | Good (requires setup) | Good via LangSmith | Native |
| Agent support | Strong (retrieval-focused) | Most mature | Structured and auditable |
| On-premises / private deploy | Strong | Possible, requires audit | Strong |
| Typical stack role | Retrieval layer | Orchestration layer | Compliance pipelines |

LangChain’s wider adoption reflects its head start and use cases well beyond RAG — not RAG-specific superiority. The more useful signal is where each framework sits in mature production stacks: LlamaIndex in the retrieval layer, LangChain in orchestration, Haystack where audit trails are required.

Ecosystem Maturity: What Actually Matters

Framework selection is also a bet on long-term community support — and on finding help when a production pipeline breaks late at night.

| Signal | LlamaIndex | LangChain | Haystack |
| --- | --- | --- | --- |
| Community size | Large, growing fast | Largest | Smaller, highly specialized |
| Community character | Enterprise practitioners | Broad: researchers to developers | NLP engineers, production teams |
| Commercial backing | LlamaIndex Inc. (funded) | LangChain Inc. (well-funded) | deepset (commercial + open-source) |
| Managed cloud offering | LlamaCloud | LangSmith / LangChain Hub | deepset Cloud |
| Enterprise support | Yes | Yes | Yes (deepset) |

Community size matters less than community quality for specific use cases. A smaller, expert Haystack community around production NLP pipeline patterns often beats a massive LangChain forum when the specific problem is retrieval observability in a regulated environment. Deepset’s commercial support model also gives Haystack something pure open-source projects can’t match: vendor accountability at the enterprise level.

Retrieval Quality: Where Most Framework Comparisons Go Wrong

Most comparisons focus on integrations and setup ease. The more important question is which framework produces better retrieval results for a specific document type and query pattern.

Retrieval quality is a function of chunking strategy, embedding model integration, metadata filtering, and reranking — not just which vector database it connects to. Advanced RAG techniques like sentence window retrieval, hybrid search, and cross-encoder reranking can improve retrieval quality meaningfully, but only if the framework supports them without excessive custom engineering.


Chunking and Indexing

How a framework chunks documents before indexing has an outsized effect on quality. The three frameworks approach this very differently.

- LlamaIndex offers the most granular control. Native support for sentence window retrieval, hierarchical node parsing, and recursive document agents means domain knowledge can be encoded into the indexing layer rather than patched in afterward. For complex document structures — legal contracts, clinical notes, technical manuals with nested sections — this matters.

- LangChain supports multiple chunking strategies through its text splitter modules. These are capable tools, but they operate at the orchestration layer rather than as native retrieval primitives. Custom chunking logic requires more assembly work.

- Haystack handles chunking through explicit, versioned pipeline components — DocumentSplitter nodes that are defined, auditable, and reproducible. For teams running systematic retrieval experiments, being able to version a chunking strategy as a pipeline configuration is a real operational advantage.
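To make sentence window retrieval concrete, here is a minimal, framework-agnostic sketch of the idea: each sentence is the unit that gets matched, while a window of neighbouring sentences is what would be passed to the LLM. The `WindowedSentence` class, the `build_sentence_windows` helper, and the sample note are hypothetical names invented for illustration. This is not how any of the three frameworks implements the technique, and the regex splitter is deliberately naive.

```python
import re
from dataclasses import dataclass


@dataclass
class WindowedSentence:
    sentence: str  # the unit that would be embedded and matched
    window: str    # surrounding context that would be handed to the LLM


def build_sentence_windows(text: str, window_size: int = 1) -> list[WindowedSentence]:
    """Split text into sentences and attach neighbouring sentences as context."""
    # Naive splitter for illustration; a real pipeline would use a proper tokenizer.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    nodes = []
    for i, sentence in enumerate(sentences):
        start = max(0, i - window_size)
        end = min(len(sentences), i + window_size + 1)
        nodes.append(WindowedSentence(sentence, " ".join(sentences[start:end])))
    return nodes


note = "Patient reports mild nausea. Dose was reduced to 5 mg. Symptoms resolved within two days."
nodes = build_sentence_windows(note, window_size=1)
for node in nodes:
    print(f"match on: {node.sentence!r}\n  context: {node.window!r}")
```

The design point is that matching happens on a small, precise unit while generation sees enough surrounding text to stay grounded. A larger `window_size` trades token cost for context.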

| Retrieval capability | LlamaIndex | LangChain | Haystack |
| --- | --- | --- | --- |
| Hierarchical / structured chunking | Native (NodeParser, HierarchicalNodeParser) | Manual assembly (TextSplitter modules) | Explicit pipeline nodes (DocumentSplitter) |
| Hybrid search (dense + sparse) | Native | Composable (requires assembly) | Declarative component |
| Reranking integration | Native (Cohere, ColBERT, cross-encoders) | ContextualCompressionRetriever (manual) | TransformersSimilarityRanker component |
| Metadata filtering | Deep (query-time filter expressions) | Supported via vector store APIs | Filter component |
| Sentence window retrieval | Native | Custom implementation required | Supported |

LlamaIndex tends to deliver stronger retrieval out of the box, before any extra tuning effort, which matters when the team is also trying to ship. All three frameworks support hybrid search combining BM25 and vector search, but the implementation friction varies.
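One common way to merge a BM25 result list with a vector-search result list is reciprocal rank fusion (RRF). The sketch below shows the arithmetic in plain Python so the idea is visible independent of any framework. The document ids are made up, `k=60` is a conventional default rather than a tuned value, and none of this is claimed to match how LlamaIndex, LangChain, or Haystack fuse results internally.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document ids into one fused ranking."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Each list contributes more for documents it ranks higher.
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by fused score (descending), breaking ties by id for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


bm25_hits = ["doc_a", "doc_b", "doc_c"]    # hypothetical sparse (keyword) results
vector_hits = ["doc_b", "doc_d", "doc_a"]  # hypothetical dense (embedding) results
fused = reciprocal_rank_fusion([bm25_hits, vector_hits])
print(fused)
```

Documents that appear in both lists rise to the top, which is the behaviour hybrid search is usually after. A reranker can then trim the fused list to the few chunks that actually go into the prompt.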

Code Reality: What RAG Implementation Actually Looks Like

Framework comparisons that skip code miss the most useful signal available. Here’s how each framework handles a basic RAG query — not a full production system, but enough to reveal architectural philosophy.

LlamaIndex: Retrieval-First Architecture

```python
from llama_index.core import VectorStoreIndex, SimpleDirectoryReader
from llama_index.core.retrievers import VectorIndexRetriever
from llama_index.core.query_engine import RetrieverQueryEngine
from llama_index.core.postprocessor import SentenceTransformerRerank

# Load and index documents
documents = SimpleDirectoryReader("./docs").load_data()
index = VectorStoreIndex.from_documents(documents)

# Configure retrieval with reranking
retriever = VectorIndexRetriever(index=index, similarity_top_k=10)
reranker = SentenceTransformerRerank(
    model="cross-encoder/ms-marco-MiniLM-L-2-v2", top_n=3
)

query_engine = RetrieverQueryEngine(
    retriever=retriever,
    node_postprocessors=[reranker]
)

response = query_engine.query("What are the contraindications for Drug X?")
```

Reranking is a first-class parameter, not an afterthought. The retrieval layer is the primary design surface; everything else flows from it.

LangChain: Chain Assembly Pattern

```python
from langchain_community.vectorstores import Chroma
from langchain_openai import OpenAIEmbeddings, ChatOpenAI
from langchain.retrievers import ContextualCompressionRetriever
from langchain.retrievers.document_compressors import CohereRerank
from langchain_core.prompts import ChatPromptTemplate
from langchain_core.runnables import RunnablePassthrough
from langchain_core.output_parsers import StrOutputParser

# Build retriever with reranking via compression
# (the vector store is assumed to be populated with documents elsewhere)
vectorstore = Chroma(embedding_function=OpenAIEmbeddings())
base_retriever = vectorstore.as_retriever(search_kwargs={"k": 10})
compressor = CohereRerank(top_n=3)
retriever = ContextualCompressionRetriever(
    base_compressor=compressor,
    base_retriever=base_retriever
)

# Prompt that receives the retrieved context and the question
# (illustrative wording; substitute your own template)
prompt_template = ChatPromptTemplate.from_template(
    "Answer using only this context:\n{context}\n\nQuestion: {question}"
)

# Assemble RAG chain
llm = ChatOpenAI(model="gpt-4o")
rag_chain = (
    {"context": retriever, "question": RunnablePassthrough()}
    | prompt_template
    | llm
    | StrOutputParser()
)

response = rag_chain.invoke("What are the contraindications for Drug X?")
```

The assembly pattern is explicit. But reranking enters as a “compression” layer, a less intuitive abstraction for engineers new to the framework. The flexibility is real; so is the conceptual overhead.

Haystack: Explicit Pipeline Graph

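```python
from haystack import Pipeline
from haystack.components.retrievers.in_memory import (
    InMemoryBM25Retriever, InMemoryEmbeddingRetriever
)
from haystack.components.embedders import SentenceTransformersTextEmbedder
from haystack.components.joiners import DocumentJoiner
from haystack.components.rankers import TransformersSimilarityRanker
from haystack.components.builders import PromptBuilder
from haystack.components.generators import OpenAIGenerator
from haystack.document_stores.in_memory import InMemoryDocumentStore

# Document store, assumed to be filled with embedded documents elsewhere
store = InMemoryDocumentStore()

# Illustrative prompt; the wording is a placeholder
template = """Answer using only these documents:
{% for doc in documents %}{{ doc.content }}
{% endfor %}
Question: {{ query }}"""

# Define pipeline as explicit graph
pipeline = Pipeline()
# The query embedder should use the same model that embedded the documents
pipeline.add_component("text_embedder", SentenceTransformersTextEmbedder())
pipeline.add_component("bm25_retriever", InMemoryBM25Retriever(document_store=store, top_k=10))
pipeline.add_component("embedding_retriever", InMemoryEmbeddingRetriever(document_store=store, top_k=10))
pipeline.add_component("joiner", DocumentJoiner())
pipeline.add_component("ranker", TransformersSimilarityRanker(top_k=3))
pipeline.add_component("prompt_builder", PromptBuilder(template=template))
pipeline.add_component("llm", OpenAIGenerator(model="gpt-4o"))

# Connect components
pipeline.connect("text_embedder.embedding", "embedding_retriever.query_embedding")
pipeline.connect("bm25_retriever", "joiner")
pipeline.connect("embedding_retriever", "joiner")
pipeline.connect("joiner", "ranker")
pipeline.connect("ranker", "prompt_builder.documents")
pipeline.connect("prompt_builder", "llm")

# Each component that needs the query receives it explicitly
query = "What are the contraindications for Drug X?"
result = pipeline.run({
    "text_embedder": {"text": query},
    "bm25_retriever": {"query": query},
    "ranker": {"query": query},
    "prompt_builder": {"query": query},
})
```

Every component is named, connected, and independently inspectable. The verbosity is intentional; it is a feature. You can serialize this pipeline, version it, and hand it to someone who has never seen Python. In regulated deployments, that capability has real commercial value.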

The three code patterns reveal each framework’s philosophy more clearly than any feature matrix. LlamaIndex keeps retrieval at the center. LangChain assembles from composable pieces. Haystack declares an explicit graph where every data flow decision is visible.

Token Cost Math: The Number That Changes at Scale

Framework selection affects token costs in ways that don’t show up until production traffic arrives. The retrieval strategy that a framework makes easy to implement directly determines how many context-window tokens each query consumes. OpenAI’s current pricing makes this math consequential at meaningful query volume.

Consider an enterprise running 50,000 queries per day against an internal knowledge base. The difference between returning 3 tight, relevant context chunks versus 8 broader ones — multiplied across daily volume — is not a rounding error.

| Retrieval chunks per query | Approx. tokens per query | Relative daily cost |
| --- | --- | --- |
| 3 chunks (precise retrieval) | ~1,800 | Low |
| 5 chunks (moderate) | ~2,800 | Moderate |
| 8 chunks (broad retrieval) | ~4,200 | High |

The cost gap across these scenarios is driven entirely by retrieval quality decisions, not infrastructure. LlamaIndex’s precision tooling gives the most direct control over context window usage. LangChain makes cost optimization possible — but it requires deliberate engineering rather than leaning on framework defaults. Haystack’s pipeline structure makes it easy to benchmark retrieval strategies against both cost and quality tradeoffs side by side.
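The arithmetic behind that table is easy to run for your own numbers. The sketch below uses the 50,000 queries per day and the approximate token counts from the scenario above. The per-token price is a placeholder assumption, not a quoted rate from any provider, and output tokens are left out for simplicity.

```python
QUERIES_PER_DAY = 50_000

# Placeholder price in USD per one million input tokens. This figure is an
# assumption for illustration; substitute the current rate for your model.
ASSUMED_USD_PER_MILLION_INPUT_TOKENS = 2.50

TOKENS_PER_QUERY = {"3 chunks": 1_800, "5 chunks": 2_800, "8 chunks": 4_200}


def daily_input_cost(tokens_per_query: int) -> float:
    """Daily input-token spend in USD for one retrieval configuration."""
    daily_tokens = tokens_per_query * QUERIES_PER_DAY
    return daily_tokens / 1_000_000 * ASSUMED_USD_PER_MILLION_INPUT_TOKENS


costs = {name: daily_input_cost(tokens) for name, tokens in TOKENS_PER_QUERY.items()}
for name, cost in costs.items():
    print(f"{name}: ${cost:,.2f} per day")
```

Whatever price you plug in, the ratio between scenarios stays the same: 4,200 tokens versus 1,800 tokens is roughly 2.3 times the input spend for every query.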


Multimodal RAG: Increasingly Non-Optional

Enterprise document corpora rarely contain only text. Financial filings have tables. Technical manuals have diagrams. Clinical documents have annotated images. Multimodal RAG — processing and retrieving across text, images, and structured data — has become a practical requirement for many deployments.

| Capability | LlamaIndex | LangChain | Haystack |
| --- | --- | --- | --- |
| Native multimodal indexing | Yes (MultiModalVectorStoreIndex) | Partial (via LLM integrations) | Growing |
| Image + text retrieval | Native | Manual assembly | Configurable |
| Vision model integration (GPT-4V, LLaVA) | Native | Supported | Supported |
| Table and chart extraction | Strong | Moderate | Moderate |
| Structured data + text hybrid | Strong | Composable | Explicit pipeline |

LlamaIndex has the strongest native multimodal support. Its MultiModalVectorStoreIndex handles both text and image embeddings without additional glue code, with clean integrations for vision models. For teams dealing with mixed-content enterprise documents (common in manufacturing, legal, and healthcare), this advantage is worth weighing heavily.

RAG Evaluation and Observability: Don’t Skip This

A framework comparison without evaluation coverage is incomplete. Teams that skip systematic evaluation in staging consistently face production quality surprises — usually at the worst possible moment. The Microsoft Azure RAG architecture guide identifies production monitoring and retrieval evaluation as the two capabilities most frequently underinvested in enterprise RAG implementations.

The leading RAG evaluation tool is RAGAS, introduced in a 2023 paper. It measures faithfulness, answer relevancy, context precision, and context recall. All three frameworks integrate with RAGAS, but the experience differs.

| Evaluation capability | LlamaIndex | LangChain | Haystack |
| --- | --- | --- | --- |
| RAGAS integration | Native evaluation module | Via ragas compatibility layer | Via EvaluationHarness component |
| Setup overhead | Low | Moderate | Low |
| Faithfulness / context precision scoring | Yes | Yes | Yes |
| Batch evaluation | Yes | Yes | Yes |
| Primary observability tool | Arize Phoenix, TruLens | LangSmith | Native pipeline logging, deepset Cloud |
| Tracing maturity | Good | Highest (LangSmith) | Strong |

LangSmith is genuinely excellent for LangChain applications — the most mature tracing tool across the three ecosystems. If tracing and evaluation infrastructure are primary concerns, this is one area where LangChain’s ecosystem investment shows clearly.

For Haystack, evaluation as a pipeline component fits naturally into how the framework already thinks about production workflows — there’s no separate system to wire up.
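For intuition about what context precision and context recall are measuring, here is a deliberately simplified sketch that works on chunk ids against a hand-labelled set of relevant chunks. It is not how RAGAS computes its scores, and the ids are invented. Treat it as a quick sanity check you could run in staging before wiring up a full evaluation tool.

```python
def context_precision(retrieved: list[str], relevant: set[str]) -> float:
    """Share of retrieved chunk ids that are actually relevant."""
    if not retrieved:
        return 0.0
    return sum(1 for chunk_id in retrieved if chunk_id in relevant) / len(retrieved)


def context_recall(retrieved: list[str], relevant: set[str]) -> float:
    """Share of relevant chunk ids that the retriever returned."""
    if not relevant:
        return 0.0
    return len(set(retrieved) & relevant) / len(relevant)


retrieved_ids = ["c1", "c4", "c7", "c9"]  # hypothetical retriever output
relevant_ids = {"c1", "c2", "c7"}         # hypothetical hand-labelled ground truth
precision = context_precision(retrieved_ids, relevant_ids)
recall = context_recall(retrieved_ids, relevant_ids)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Low precision points at wasted context tokens; low recall points at answers the model cannot ground. Tracking both per query type makes chunking and reranking changes comparable across experiments.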


Production Operations: Where RAG Prototypes Go to Die

Building a proof of concept in LangChain over a weekend is genuinely easy. Running that same application reliably at enterprise scale is a different project entirely.

Three dimensions separate production-ready RAG from extended prototypes: observability, debugging, and deployment support.

Observability varies significantly. Haystack’s pipeline architecture makes it straightforward to log inputs and outputs at every stage — it’s structural, not configured. LangChain has LangSmith for tracing, which is powerful but requires a separate setup and cost. LlamaIndex integrates with observability platforms through callback systems, but production-grade monitoring requires deliberate configuration work.

Debugging follows a similar pattern. Haystack pipelines fail explicitly at defined nodes with traceable errors. LangChain chains can fail in ways that are harder to attribute when the chain involves multiple custom components and third-party integrations.

Deployment also differs meaningfully. Haystack ships with Docker-native deployment support and REST API endpoints as part of its design. LangChain and LlamaIndex are more framework-agnostic, which means teams make more infrastructure decisions themselves.

| Production dimension | LlamaIndex | LangChain | Haystack |
| --- | --- | --- | --- |
| Observability (native) | Moderate | Good (LangSmith) | Strong |
| Debugging complexity | Moderate | High | Low |
| Deployment support | DIY | DIY + LangServe | Docker-native, REST API |
| Pipeline versioning | Manual | Manual | Native |
| Error traceability | Good | Variable | Strong |
| Component-level logging | Via callbacks | Via LangSmith | Structural |

Haystack wins on production operations by design. LangChain compensates with tooling. LlamaIndex sits in the middle for teams willing to invest in an observability setup. None of these frameworks deploys itself, but some start closer to production-ready than others.
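What "structural" logging means in practice can be shown without any framework at all. In the sketch below, a pipeline is just an ordered list of named stages, and the runner records every stage's input and output and names the stage that failed. The `run_pipeline` helper and the stand-in stages are hypothetical; they only illustrate the pattern and do not reflect any framework's internals.

```python
import logging
from typing import Any, Callable

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("rag_pipeline")

Stage = tuple[str, Callable[[Any], Any]]


def run_pipeline(stages: list[Stage], payload: Any) -> tuple[Any, list[dict]]:
    """Run stages in order, recording each stage's input and output."""
    trace = []
    for name, stage in stages:
        try:
            result = stage(payload)
        except Exception:
            # The failing stage is named explicitly, so errors are attributable.
            logger.exception("stage %s failed", name)
            raise
        trace.append({"stage": name, "input": payload, "output": result})
        logger.info("stage=%s input=%r output=%r", name, payload, result)
        payload = result
    return payload, trace


stages = [
    ("normalize", lambda q: q.strip().lower()),
    ("retrieve", lambda q: [f"chunk about {q}"]),                    # stand-in retriever
    ("generate", lambda docs: f"answer from {len(docs)} chunk(s)"),  # stand-in LLM call
]
answer, trace = run_pipeline(stages, "  Drug X  ")
```

Because the trace is produced by the runner rather than by each stage, logging cannot be forgotten when a new component is added. That is the property the comparison above is pointing at.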

Security and Compliance: The Requirement That Changes Everything

For enterprises in regulated industries, RAG framework selection intersects directly with data governance, audit requirements, and vendor security posture. The questions are concrete: Can the retrieval pipeline be audited? Can sensitive documents stay on-premises? Does the framework expose data to third-party services during inference?

Haystack has the strongest enterprise security story. The pipeline architecture makes audit trails natural — every component is a defined, loggable step. For teams that need to satisfy quality management systems requirements in pharmaceutical or manufacturing contexts, Haystack’s reproducibility is directly relevant.

LlamaIndex supports private deployments well, particularly combined with local embedding models and self-hosted vector stores. The indexing architecture keeps sensitive documents within the defined infrastructure perimeter.

LangChain is capable of secure deployments, but the breadth of its integration ecosystem means teams need to audit which integrations are active and whether any introduce unexpected data flows. This is a consequence of flexibility, not a flaw — but it requires deliberate security review.

| Industry | Primary compliance concern | Recommended starting point |
| --- | --- | --- |
| Healthcare (HIPAA) | PHI data boundaries, audit trail | LlamaIndex (retrieval) + Haystack (pipeline) |
| Financial services (SOC2, FINRA) | Query auditability, explainability | Haystack |
| Government / Defense | On-premises, air-gapped deployment | LlamaIndex |
| Legal | Document confidentiality, chain of custody | Haystack |
| Pharmaceuticals (GxP) | Validation, reproducibility | Haystack |
| General enterprise | Flexibility, development velocity | LangChain |

Agentic RAG: Where Framework Differences Get Most Visible

Agentic RAG systems — where an LLM autonomously decides which retrieval steps to take, in what order, and when to stop — are where framework differences become most pronounced under load. Agent reliability depends heavily on how the framework manages tool execution, error recovery, and loop prevention.

LlamaIndex has invested heavily in agentic retrieval. Its ReAct agent patterns are specifically designed for retrieval tasks — an agent that decides which index to query, in what order, and with what metadata filters. For retrieval-first agentic applications, this is a meaningful advantage.

LangChain has the most mature general agent ecosystem, including LangGraph for stateful multi-agent workflows. For complex orchestration — an agent that retrieves from a vector database, calls external APIs, transforms data, and generates structured output — LangChain’s tooling is extensive and production-proven.

Haystack introduced agent capabilities more recently. Its pipeline architecture means agents are defined as explicit, observable graphs rather than dynamic runtime decisions — easier to audit, but less flexible for highly dynamic retrieval patterns.

| Agent dimension | LlamaIndex | LangChain | Haystack |
| --- | --- | --- | --- |
| Agent maturity | High (retrieval-focused) | Highest (general-purpose) | Moderate |
| Multi-agent orchestration | Growing | LangGraph (mature) | Experimental |
| Auditability of agent decisions | Moderate | Moderate | High |
| Loop prevention | Manual | Built-in (LangGraph) | Structural |
| Tool call support | Strong | Strongest | Growing |
| Stateful workflows | Limited | LangGraph (strong) | Pipeline-based |
| Best for | Retrieval-first agentic systems | Complex workflow agents | Auditable, regulated agents |

If the agentic system’s primary job is deciding what to retrieve and how, LlamaIndex is the right foundation. If the agent needs to retrieve, then call APIs, transform data, and route to different handlers — that’s LangChain’s domain. If every agent decision needs to be logged and explainable to a compliance function, Haystack’s structural approach is worth the flexibility tradeoff.
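Loop prevention is worth seeing in miniature, because it is the part teams most often leave to chance. The sketch below is a generic agent loop with two guards: a step budget and a check for the same action being chosen twice in a row. The `run_agent` function, the policies, and the tool names are all hypothetical. It is an assumption-laden illustration, not the mechanism any of the three frameworks uses.

```python
from typing import Callable, Optional


def run_agent(
    choose_action: Callable[[list[str]], Optional[str]],
    tools: dict[str, Callable[[], str]],
    max_steps: int = 5,
) -> tuple[list[str], str]:
    """Call tools picked by `choose_action` until it returns None or a guard trips."""
    history: list[str] = []
    for _ in range(max_steps):
        action = choose_action(history)
        if action is None:
            return history, "finished"
        if history and history[-1] == action:
            # Same tool twice in a row: treat it as a stuck loop and stop.
            return history, "stopped: repeated action"
        tools[action]()  # tool output is ignored in this sketch
        history.append(action)
    return history, "stopped: step budget exhausted"


def stuck_policy(history: list[str]) -> Optional[str]:
    return "search_index"  # a policy that never changes its mind


def planned_policy(history: list[str]) -> Optional[str]:
    plan = ["search_index", "search_guidelines"]
    return plan[len(history)] if len(history) < len(plan) else None


tools = {"search_index": lambda: "index hits", "search_guidelines": lambda: "guideline hits"}
stuck_history, stuck_status = run_agent(stuck_policy, tools)
planned_history, planned_status = run_agent(planned_policy, tools)
print(stuck_status, planned_status)
```

Returning the history alongside the stop reason keeps every agent decision available for logging, which matters as soon as someone outside the engineering team asks why the agent did what it did.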

Team Maturity and Framework Fit: The Variable Most Articles Ignore

Framework selection doesn’t happen in a vacuum. It happens inside an organization with a specific team, specific existing skills, and a specific relationship with production risk. This is one of the highest-value inputs to the decision, and almost no comparison addresses it directly.

| Team profile | Primary risk | Recommended starting framework |
| --- | --- | --- |
| Early-stage team, first RAG build | Over-engineering | LangChain (fastest to testable prototype) |
| Strong NLP background, no MLOps | Deployment complexity | Haystack (production-ready structure) |
| ML-heavy team, document retrieval focus | Retrieval quality at scale | LlamaIndex |
| Enterprise team, compliance-first | Audit failure | Haystack |
| Full-stack team, broad integration needs | Framework lock-in | LangChain with LlamaIndex retrieval layer |
| Small team, limited framework expertise | All-in bet on one tool | LangChain (largest community, most answers available) |
| Team already running LangChain in production | Switching cost vs. gain | Stay on LangChain; add LlamaIndex retrieval module |

The last row is often the most practically relevant. Switching RAG frameworks carries real cost. For teams already running production workloads on one of these three, the question is usually “should we add a specialized layer” rather than “should we rebuild from scratch.” The answer is almost always: add the layer.

When to Combine Frameworks: Patterns That Work in Production

The most sophisticated production RAG systems often don’t make a binary choice. They combine frameworks deliberately, each doing what it does best.

A common enterprise pattern: LlamaIndex handles retrieval and indexing — where its precision matters most — while LangChain orchestrates the broader application workflow, handling tool calls, routing logic, and output formatting. This isn’t theoretical. Engineering teams at enterprises with complex AI workloads frequently run multiple RAG frameworks in the same production environment.

| Combination pattern | Use case | What each framework contributes |
| --- | --- | --- |
| LlamaIndex + LangChain | Document Q&A with external tool calls | LlamaIndex: retrieval and indexing; LangChain: orchestration, API calls, routing |
| Haystack + LangChain | Compliance pipeline inside a broader application | Haystack: auditable retrieval pipeline; LangChain: application orchestration |
| LlamaIndex + Haystack | High-precision retrieval in regulated environments | LlamaIndex: retrieval quality; Haystack: pipeline auditability and logging |
| LlamaIndex alone | Pure document retrieval, no complex orchestration | Single-framework simplicity when retrieval is the entire problem |
| LangChain alone | Multi-tool agentic system, retrieval secondary | Single-framework simplicity when orchestration is the entire problem |

Running three frameworks poorly costs more than any performance benefit is worth. This path makes sense only for teams with genuine depth across multiple frameworks, or with an implementation partner who operates across the full RAG ecosystem.
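Combining frameworks stays manageable when the orchestration code depends on a narrow retrieval interface rather than on a specific library. The sketch below shows that boundary in plain Python. The `Retriever` protocol, `KeywordRetriever`, and `answer` are hypothetical names; in a real system the retriever class would wrap whichever framework owns the retrieval layer.

```python
from typing import Protocol


class Retriever(Protocol):
    def retrieve(self, query: str, top_k: int) -> list[str]: ...


class KeywordRetriever:
    """Stand-in retrieval layer; in practice this would wrap a framework's retriever."""

    def __init__(self, corpus: list[str]) -> None:
        self.corpus = corpus

    def retrieve(self, query: str, top_k: int) -> list[str]:
        terms = set(query.lower().split())
        # Score each document by how many query terms it shares.
        scored = [(len(terms & set(doc.lower().split())), doc) for doc in self.corpus]
        ranked = sorted(scored, key=lambda pair: -pair[0])
        return [doc for score, doc in ranked if score > 0][:top_k]


def answer(query: str, retriever: Retriever, top_k: int = 2) -> str:
    """Orchestration layer: it only knows the Retriever interface."""
    context = retriever.retrieve(query, top_k)
    return f"{len(context)} passage(s) used for: {query}"


corpus = [
    "Drug X interacts with warfarin",
    "Drug Y dosing schedule",
    "Storage guidance for vaccines",
]
retriever = KeywordRetriever(corpus)
hits = retriever.retrieve("drug x interactions", top_k=2)
summary = answer("drug x interactions", retriever)
print(hits, summary)
```

With this shape, swapping the retrieval implementation means writing one new class that satisfies `Retriever`, while the orchestration code stays untouched.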


What Framework Migration Actually Costs

Teams occasionally discover midway through a project that they’ve chosen the wrong framework. The switching cost is real and worth understanding before committing.

| Migration path | Typical effort | What changes | What stays |
| --- | --- | --- | --- |
| LangChain → LlamaIndex | 4–8 weeks | Retrieval layer, indexing strategy | Orchestration logic (often reusable) |
| LlamaIndex → LangChain | 4–8 weeks | Orchestration, tool integration | Retrieval layer (usable as LangChain tool) |
| LangChain → Haystack | 6–12 weeks | Pipeline architecture, all components | Core business logic, prompt templates |
| Haystack → LangChain | 6–12 weeks | Pipeline definitions, component structure | Prompt templates, LLM integrations |
| LlamaIndex → Haystack | 5–10 weeks | Pipeline definitions, retrieval components | Document processing logic |
| Adding LlamaIndex to LangChain | 2–4 weeks | Retrieval layer only | Entire LangChain application |
| Adding Haystack compliance pipeline | 3–6 weeks | Specific sensitive retrieval flows | Main application architecture |

Adding a specialized layer is always cheaper than a full migration. “LangChain to LlamaIndex” as a full rebuild is a 4–8 week project. “Adding LlamaIndex as the retrieval layer inside an existing LangChain application” is a 2–4 week project that preserves everything else. Treat orchestration framework choice as the higher-stakes commitment — it’s harder to swap. Start with the retrieval layer as the variable; it’s the cheaper, faster thing to change.

How to Choose: Four Questions Before Committing

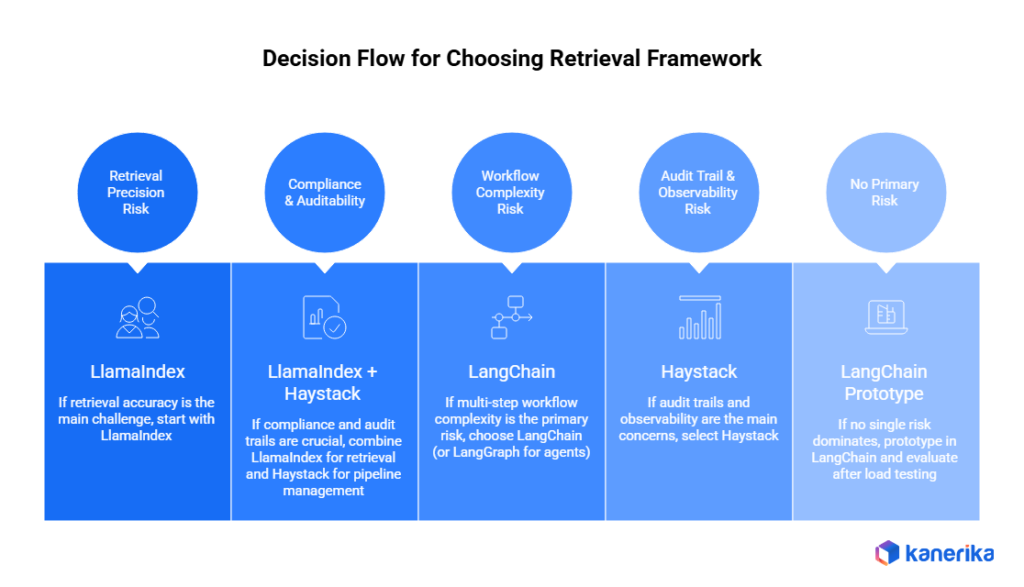

Rather than a generic feature matrix, four questions consistently surface the right answer for a specific team and workload.

- Question 1: What is the primary retrieval challenge? If the core problem is surfacing accurate information from large, complex document corpora — especially in regulated industries — start with LlamaIndex. If the application requires orchestrating retrieval alongside external APIs, data transformations, and conditional logic, LangChain’s composability is more relevant.

- Question 2: What does the production environment look like? Teams that need explicit audit trails, versioned pipeline definitions, and built-in monitoring should weight Haystack heavily. Teams optimizing for development velocity should weight LangChain. Teams optimizing for retrieval precision at scale against a complex knowledge base should weight LlamaIndex.

- Question 3: What are the data governance requirements? Regulated industries need to map data flows explicitly. Haystack and LlamaIndex both support private, on-premises deployment more cleanly than frameworks that rely on broad third-party integration chains. Ethical AI implementation requirements may also constrain which frameworks are permissible under internal governance policies.

- Question 4: What does the team actually know how to operate? The best framework on paper is irrelevant if the team lacks operational depth. A team that has run LangChain in production for two years should probably stay on LangChain — unless there’s a compelling technical reason to switch. This is the variable most framework evaluations ignore entirely.

- Decision flow: Is retrieval precision your primary technical risk? If yes, start with LlamaIndex. Does compliance or pipeline auditability also matter? If yes, LlamaIndex for retrieval plus Haystack for pipeline management. If not, LlamaIndex alone or with LangChain for orchestration. If retrieval precision is not the primary risk, is multi-step workflow complexity your primary risk? If yes, LangChain (consider LangGraph for agentic workflows). If not, is audit trail or observability your primary risk? If yes, Haystack. If not, prototype in LangChain and evaluate after the first load test.
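The decision flow can be written down as a small function, which makes the order of the questions explicit and easy to argue about in a design review. The function name and parameter names below are invented for this sketch; the branches and the returned recommendations mirror the flow described in this section.

```python
def recommend_framework(
    retrieval_precision_is_primary_risk: bool,
    compliance_or_auditability_matters: bool = False,
    workflow_complexity_is_primary_risk: bool = False,
    audit_or_observability_is_primary_risk: bool = False,
) -> str:
    """Walk the four-question decision flow and return a starting point."""
    if retrieval_precision_is_primary_risk:
        if compliance_or_auditability_matters:
            return "LlamaIndex (retrieval) + Haystack (pipeline management)"
        return "LlamaIndex alone, or with LangChain for orchestration"
    if workflow_complexity_is_primary_risk:
        return "LangChain (consider LangGraph for agentic workflows)"
    if audit_or_observability_is_primary_risk:
        return "Haystack"
    return "Prototype in LangChain and evaluate after the first load test"


# Example: a clinical documentation team with audit requirements.
clinical = recommend_framework(
    retrieval_precision_is_primary_risk=True,
    compliance_or_auditability_matters=True,
)
print(clinical)
```

The value is less in the code than in forcing the team to answer the questions in order: retrieval risk first, then workflow complexity, then auditability.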

How Kanerika Approaches Enterprise RAG Framework Selection

Kanerika operates as a framework-agnostic implementation partner — a position grounded in actual delivery experience across production environments. As a Microsoft Solutions Partner for Data and AI, Kanerika’s engineering teams have deployed RAG applications using LangChain, LlamaIndex, and Haystack for clients across healthcare, financial services, and manufacturing.

The evaluation starts with workload characteristics — document types, query patterns, governance requirements, infrastructure constraints, and team skill profile. Framework selection follows from that analysis. Data consolidation work often precedes RAG implementation in enterprise contexts, and the shape of the underlying data directly influences which retrieval architecture performs best.

For a healthcare client building a clinical documentation system, Kanerika’s evaluation surfaced retrieval quality on dense clinical text as the primary technical risk. LlamaIndex’s indexing capabilities were selected for the retrieval layer, with LangChain handling broader application orchestration. The separation of concerns reduced debugging complexity and lowered the cost of iterating on retrieval quality independently — without touching the orchestration layer.

Teams working on AI in fraud detection or AI in supply chain contexts face similar architectural decisions, where retrieval precision and pipeline auditability both matter and the right answer depends on which risk is harder to recover from in production.


FAQs

Is LlamaIndex better than LangChain?

Neither is universally better. LlamaIndex is stronger for retrieval-heavy applications where document indexing precision matters most. LangChain is stronger for complex multi-step workflows and applications that need to integrate many external tools. The better question is: which is better for your specific workload?

Can you use LlamaIndex and LangChain together?

Yes, and many production teams do. A common pattern is using LlamaIndex as the retrieval layer and LangChain for orchestrating the broader application. LlamaIndex query engines can be wrapped as LangChain tools, keeping the boundary clean.

Is Haystack good for production RAG?

Yes — it’s arguably the best starting point for teams with strict production requirements. Its explicit pipeline architecture makes observability, debugging, and audit trails structurally easier than the other two frameworks. For regulated industries especially, Haystack’s design philosophy fits well.

Which framework is easiest to learn?

LangChain has the most tutorials and community content, so it’s often the fastest to get something running. LlamaIndex has excellent documentation focused on retrieval patterns. Haystack has the steepest initial learning curve for teams unfamiliar with pipeline-oriented frameworks — but that investment pays off in production.

Which RAG framework has the best community support?

LangChain has the largest community by volume. LlamaIndex’s community has grown substantially and skews toward enterprise practitioners. Haystack’s community is smaller but highly specialized in production NLP pipeline use cases. The right community depends on your specific problem.