As organizations deploy AI models and autonomous agents across applications, a new challenge has emerged: understanding what these systems are actually doing in production. Unlike traditional software, AI systems can behave unpredictably, making it difficult to trace errors, detect hallucinations, or diagnose failures. This is where AI observability tools come in. These platforms provide visibility into model inputs, outputs, prompts, and reasoning chains, helping teams monitor performance, identify anomalies, and maintain trust in AI-driven systems.

The demand for AI observability is rising quickly as enterprises scale AI deployments. The global AI observability solutions market is expected to grow from $1.7B in 2025 to $12.5B by 2034, at a 22.5% CAGR. Organizations are increasingly investing in these tools to track model behavior, detect data drift, monitor latency and costs, and ensure AI systems remain reliable and compliant.

Continue reading this blog to explore the leading AI observability tools, how they work, and the key capabilities organizations should look for when monitoring AI models and agent-based systems in production.

Key Takeaways

- AI observability tools help organizations monitor and understand how AI models and agent systems behave in production by tracking inputs, outputs, prompts, latency, and costs.

- Unlike traditional monitoring, AI observability focuses on explaining why a model produced a specific result, enabling teams to debug hallucinations, detect data drift, and analyze failures.

- Enterprises adopt these tools to improve reliability, manage token costs, meet regulatory compliance requirements, and maintain trust in AI-driven systems.

- The AI observability ecosystem includes three main categories: LLM tracing tools, production monitoring platforms, and enterprise governance solutions for regulated environments.

- Choosing the right tool depends on factors such as the existing tech stack, complexity of AI workflows, compliance requirements, and whether the priority is evaluation, monitoring, or governance.

- Successful implementation requires a structured approach that starts with instrumentation, adds evaluation layers, and integrates observability with governance and incident response processes.

What Are AI Observability Tools?

AI observability refers to the ability to understand what an AI system is doing, why it produces certain outputs, and whether it performs as expected in production. Unlike traditional software, AI models, especially large language models (LLMs), don’t fail in predictable ways. They can generate plausible but wrong answers, drift from expected behavior over time, or behave differently in production than in testing. Observability tools exist to surface those issues before they cause damage.

Monitoring vs. Observability in AI Systems

Monitoring tracks predefined metrics: whether the system is up, how quickly it responds, and whether error rates are acceptable. Observability goes further. It lets teams ask questions they didn’t know they’d need to ask: Why did this model produce that output? What changed between last week and now? Which prompt version is underperforming? Traditional monitoring asks “Is it running?” and responds to threshold alerts. AI observability asks “Why did it respond that way?” and answers by tracing root causes across prompts, spans, and token usage. In AI systems, that distinction matters because model behavior is often non-deterministic and hard to predict.

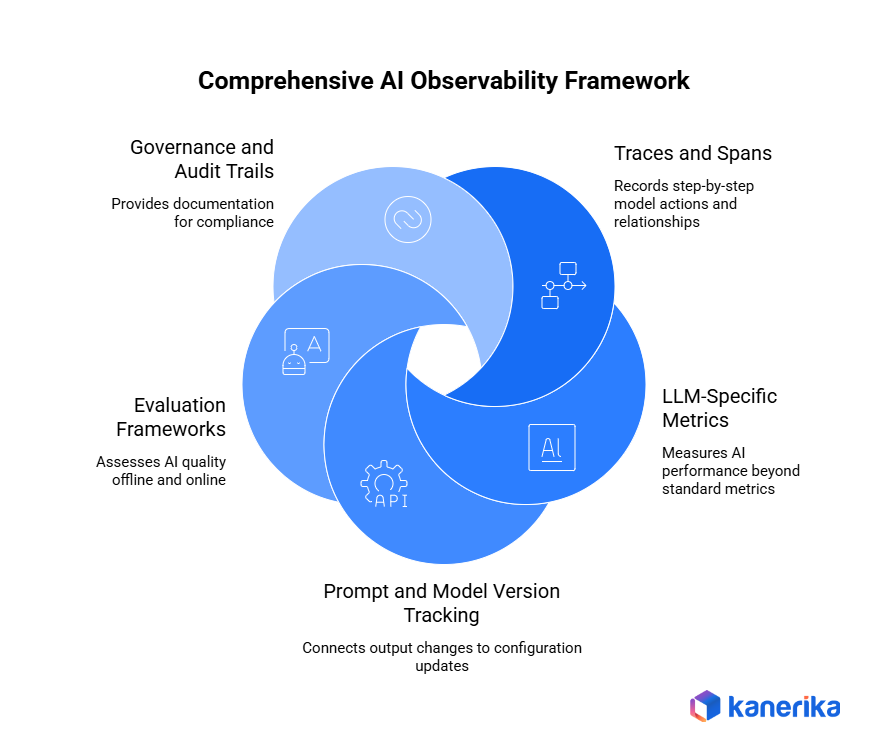

Key Components of AI Observability

- Model performance tracking: Measures accuracy, relevance, and output quality across requests. Tracks how a model performs over time, not just at deployment.

- Data drift monitoring: Detects when incoming data deviates from the distribution the model was trained on. Drift often causes gradual quality degradation that’s hard to catch without automated monitoring.

- Prompt and response tracing: Logs every prompt, tool call, and model response end-to-end. Traces let teams replay a sequence of events to find where something went wrong.

- System reliability insights: Monitors latency, uptime, error rates, and throughput across the AI stack.

- Token usage and cost tracking: Records token consumption per request and aggregates costs across model providers, critical for managing infrastructure spend at scale.
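To make these components concrete, here is a minimal sketch of what a single trace record might hold and how a per-request cost could be derived from it. The `TraceRecord` fields, the `PRICE_PER_1K` table, and the model name are illustrative assumptions, not the schema or pricing of any specific platform.

```python
from dataclasses import dataclass

# Hypothetical per-1K-token prices in USD; real prices vary by provider and model.
PRICE_PER_1K = {"example-model": {"input": 0.50, "output": 1.50}}


@dataclass
class TraceRecord:
    """One logged LLM request: what went in, what came out, and what it cost."""
    trace_id: str
    model: str
    prompt: str
    response: str
    latency_ms: float
    input_tokens: int
    output_tokens: int

    def cost_usd(self) -> float:
        """Cost of this request under the assumed price table."""
        price = PRICE_PER_1K[self.model]
        return (self.input_tokens * price["input"]
                + self.output_tokens * price["output"]) / 1000


record = TraceRecord(
    trace_id="t-1",
    model="example-model",
    prompt="Summarize the report.",
    response="The report says...",
    latency_ms=820.0,
    input_tokens=1200,
    output_tokens=300,
)
print(record.cost_usd())  # 1.05 under the assumed prices
```

Storing tokens rather than a precomputed cost keeps historical records usable when provider prices change.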

Why Enterprises Need AI Observability

1. Debugging AI Systems

AI systems don’t produce error logs the way traditional software does. When a model gives a wrong answer, there’s no stack trace. Observability tools fill that gap by recording the inputs, outputs, and intermediate steps that led to a result. Teams can trace a bad response back to a specific prompt version, a retrieved document, or a model configuration change. Without that visibility, debugging is guesswork.

2. Reliability and Performance Monitoring

Production AI systems face real-time demands: low latency, consistent quality, and high availability. Observability platforms track latency at the request level, flag hallucinations using automated evaluations, and alert teams when response quality drops below a threshold. Hallucination detection is particularly important because models can produce confident-sounding wrong answers that users trust, and catching those outputs requires more than basic error monitoring.
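As a rough illustration of threshold-based quality alerting, the sketch below keeps a rolling window of evaluation scores and signals when the window average falls below a limit. The `QualityAlert` class, the window size, and the 0.8 threshold are assumptions chosen for the example; real platforms expose their own alerting configuration.

```python
from collections import deque


class QualityAlert:
    """Signals when the mean score over the last `window` requests drops below `threshold`."""

    def __init__(self, window: int = 50, threshold: float = 0.8):
        self.scores = deque(maxlen=window)  # oldest scores fall off automatically
        self.threshold = threshold

    def observe(self, score: float) -> bool:
        """Record one evaluation score; return True if an alert should fire."""
        self.scores.append(score)
        window_full = len(self.scores) == self.scores.maxlen
        mean = sum(self.scores) / len(self.scores)
        # Wait for a full window so a single early bad score does not page anyone.
        return window_full and mean < self.threshold


alert = QualityAlert(window=3, threshold=0.8)
results = [alert.observe(s) for s in [0.9, 0.9, 0.9, 0.5, 0.5]]
print(results)  # [False, False, False, True, True]
```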

3. Compliance and Governance

Regulated industries, including healthcare, financial services, and manufacturing, face growing pressure to demonstrate that AI systems operate within defined boundaries. Observability tools provide audit trails of model inputs and outputs, flag policy violations, and support access control requirements. As regulatory frameworks around AI tighten, having documented evidence of how a system behaved becomes a compliance requirement, not a nice-to-have.

4. Cost and Resource Optimization

Token costs compound quickly at scale. A single LLM application making millions of requests per day can generate significant infrastructure spend if token usage isn’t monitored. Observability platforms track token consumption per prompt, per user, and per workflow, giving teams the data to optimize prompts, reduce redundant calls, and right-size model usage.

Key Features of AI Observability Platforms

- LLM tracing and prompt analytics: End-to-end tracing of prompts, completions, tool calls, and agent decisions. Prompt versioning connects output changes to specific configuration changes rather than guessing what shifted. In multi-agent systems, traces capture parent-child relationships among agents, allowing teams to pinpoint exactly which step in a workflow produced a bad output.

- Model evaluation and performance metrics: Automated scoring of outputs using LLM-as-judge evaluations, semantic similarity, and custom metrics. Some platforms support human annotation workflows alongside automated scoring, which matters when quality criteria are subjective or domain-specific. Running evaluation both offline and in production gives teams early warning of quality drift before it shows up in user complaints.

- Data drift and bias detection: Continuous monitoring of input distributions and model outputs to detect shifts that affect quality or fairness. Output quality degrades gradually when incoming data deviates from training distribution, and that’s hard to catch without automated tracking. Bias detection is particularly relevant for regulated industries where discriminatory outputs carry legal and reputational risk.

- Root cause analysis for failures: Correlation of performance issues with specific prompts, model versions, retrieved documents, or infrastructure events. Without this, debugging an AI failure means sifting through logs with no clear path to the cause, which is especially costly in production environments where failures affect real users in real time.

- Security and governance controls: Prompt injection detection, data leakage prevention, role-based access controls, and audit logs. Enterprise platforms typically provide SOC 2 compliance and self-hosted deployment options for organizations that can’t route sensitive data through third-party SaaS.
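Drift detection in particular benefits from a worked example. One widely used statistic is the Population Stability Index (PSI), which compares a baseline distribution with a recent one; the sketch below is a plain-Python version for a single numeric feature. The bin count, the `eps` floor, and the rule of thumb that values above roughly 0.25 indicate significant drift are conventions assumed here, not settings taken from any tool in this article.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width if all baseline values are equal

    def histogram(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = histogram(expected), histogram(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = list(range(100))            # e.g. prompt lengths seen during evaluation
shifted = [v + 50 for v in baseline]   # production inputs that have moved upward

print(psi(baseline, baseline))         # 0.0: identical distributions
print(psi(baseline, shifted) > 0.25)   # True: well past the assumed drift threshold
```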

Categories of AI Observability Tools

1. LLM Tracing Platforms

These tools focus on logging and tracing prompts, outputs, and agent workflows. They’re built for teams that need visibility into how LLMs and multi-agent systems behave at the request level. LangSmith, Langfuse, and Helicone fall into this category. They capture inputs, outputs, tool calls, latency, and costs and surface them in dashboards that let developers debug and improve model behavior.

2. AI Monitoring Platforms

These platforms monitor production AI systems at a broader level, including model performance, data quality, and infrastructure reliability. Arize AI and WhyLabs are examples. They’re designed for organizations running models in production that need continuous tracking of drift, accuracy, and reliability, not just request-level tracing.

3. Enterprise AI Governance Suites

These tools prioritize compliance, security, and responsible AI usage. Fiddler AI and Galileo are examples. They support explainability, bias detection, regulatory audit trails, and access controls. They serve organizations in regulated industries where accountability for model behavior is a legal or contractual requirement.

Top 10 AI Observability Tools for Enterprise Teams

1. LangSmith

Built by the LangChain team, LangSmith provides native tracing for LangChain and LangGraph applications. For teams building multi-step agent workflows, the graph visualization is genuinely useful: it shows parent-child span relationships across agent execution in a way most tools don’t. Setup is near-zero configuration for LangChain-native stacks. The trade-off is that the tool loses most of its value outside that ecosystem, and per-seat pricing gets expensive at scale.

2. Arize AI

Arize AI takes a two-product approach. Phoenix is open-source and built for development and evaluation, while the Arize cloud platform handles production monitoring. It’s one of the few platforms that covers both classical ML and LLM observability in a single interface, and its multi-agent tracing support is more mature than most competitors. It is used in production by organizations including Siemens and PepsiCo. The learning curve is steep, and enterprise pricing is opaque, but the capability breadth is hard to match.

3. WhyLabs

WhyLabs is an open-source observability platform built on OpenTelemetry with a strong focus on data and model drift monitoring. It supports a wide range of LLM providers and vector databases and can export telemetry to existing observability stacks. Teams that have already invested in OTel infrastructure will find it integrates cleanly without requiring a separate SaaS layer.

4. Weights & Biases

Weights & Biases started as an ML experiment tracking tool and has expanded into LLM observability through its Weave product. It’s the most practical option for teams already using W&B for traditional machine learning work, since they get LLM tracing and prompt versioning inside a familiar platform without adding a new tool. Teams with no classical ML workloads will find it over-engineered for their needs.

5. Fiddler AI

Fiddler AI was built originally for ML explainability and bias detection and is one of the more mature vendors in this space. Its compliance capabilities are the strongest in this list: audit-grade logging, bias detection, and the ability to surface why a model made a specific decision. It tracks both traditional ML and LLMs, which is practical for financial services and healthcare organizations running mixed AI workloads under active regulatory requirements. Enterprise-only pricing puts it out of reach for smaller teams.

6. TruEra

TruEra focuses on AI quality and safety evaluation, offering automated testing and monitoring for LLM accuracy, safety, and performance. It’s useful for teams that need to validate model behavior against defined quality standards before and after deployment, particularly in production environments where output reliability directly affects user trust.

7. Helicone

Helicone sits between an application and LLM API providers as a lightweight proxy. One URL change in an OpenAI or Anthropic client gives immediate logging, cost tracking, and latency data with near-zero code changes. Per-request cost breakdowns, historical spend trends, and model version comparisons come out of the box. It’s a logging and cost tool rather than a full observability platform, but for teams at early instrumentation stages who want immediate visibility into LLM API spend, it’s the lowest-friction entry point available.
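The "one URL change" looks roughly like the sketch below for an OpenAI client. The proxy base URL and the `Helicone-Auth` header follow Helicone's documented OpenAI integration as best understood at the time of writing and should be checked against the current docs. The helper name `helicone_openai_kwargs` and the placeholder keys are assumptions for illustration.

```python
import os


def helicone_openai_kwargs(openai_key: str, helicone_key: str) -> dict:
    """Build constructor arguments that route OpenAI traffic through the Helicone proxy."""
    return {
        "api_key": openai_key,
        # The URL change: requests go to the proxy instead of api.openai.com.
        "base_url": "https://oai.helicone.ai/v1",
        # Identifies the Helicone account that should receive the logs.
        "default_headers": {"Helicone-Auth": f"Bearer {helicone_key}"},
    }


kwargs = helicone_openai_kwargs(
    os.environ.get("OPENAI_API_KEY", "sk-placeholder"),
    os.environ.get("HELICONE_API_KEY", "hk-placeholder"),
)
# With the openai package installed, the client would be created as:
#   from openai import OpenAI
#   client = OpenAI(**kwargs)
print(kwargs["base_url"])
```

Application code that calls the client stays unchanged, which is why this approach works well at the early instrumentation stage.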

8. HoneyHive

HoneyHive is an evaluation and observability platform with agent simulation capabilities. It supports automated evaluation workflows and human annotation queues, which is useful for teams iterating on prompt quality and agent behavior, where engineering and product teams need to collaborate on what “good” output actually looks like.

9. PromptLayer

PromptLayer is focused on prompt management and versioning. It logs requests and responses, tracks performance by prompt version, and supports collaboration between engineering and product teams on prompt development. For teams where prompt iteration is frequent and multiple people are touching prompt configurations, the version control and comparison features address a real workflow gap.

10. Galileo

Galileo is an enterprise LLM quality platform focused on hallucination detection, prompt quality scoring, and production monitoring at scale. Its ChainPoll methodology for hallucination scoring uses a chain-of-thought-based sampling approach and achieves a mean correlation of 0.88 with human raters on the ARES benchmark, according to Galileo’s published research. That’s one of the more rigorously documented methods for hallucination detection available. It supports OpenAI, Anthropic, Azure OpenAI, and Bedrock. Enterprise-only pricing and no self-serve tier mean long sales cycles before teams get platform access.

| Tool | Category | Best For | Multi-Agent Support | Hallucination Detection | Compliance/Audit | Pricing Model |
|---|---|---|---|---|---|---|
| LangSmith | Tracing | LangChain/LangGraph teams | Yes (LangGraph only) | No | Via export only | Free tier; $39/user/month+ |
| Arize AI | Governance Suite | Enterprise ML + LLM observability | Yes | Yes | Yes | Enterprise custom |
| WhyLabs | Monitoring | Drift detection, OTel-native teams | No | No | Via export only | Free + cloud tiers |
| Weights & Biases | Monitoring | ML-to-LLM lifecycle teams | Partial | Via eval pipeline | Via export only | Free tier; $50/seat/month+ |
| Fiddler AI | Governance Suite | Regulated industries | Partial | Yes | Yes | Enterprise custom |
| TruEra | Monitoring | LLM quality and safety validation | No | Yes | Partial | Custom |
| Helicone | Tracing | API cost tracking, fast setup | No | No | Basic logs only | Free; $80/month+ |
| HoneyHive | Tracing | Prompt iteration, human annotation | Partial | Via eval pipeline | Partial | Free tier; custom |
| PromptLayer | Tracing | Prompt versioning, team collaboration | No | No | No | Free tier; custom |
| Galileo | Governance Suite | Hallucination detection at scale | Partial | Yes | Yes (Enterprise) | Enterprise custom |

How to Choose the Right AI Observability Tool

No single tool fits every team. The right choice depends on the workload, the stack, and the organizational requirements. Key factors to evaluate:

- LLM compatibility: Does the tool support the models and frameworks already in use, such as OpenAI, Anthropic, AWS Bedrock, LangChain, and LlamaIndex? Broad compatibility reduces integration friction. For enterprises running Azure OpenAI, Bedrock, and direct API calls simultaneously, provider lock-in is a real concern. Proxy-based tools like Helicone have gaps here, with limited native support for Bedrock and Vertex AI.

- Integration with ML pipelines: Enterprise teams often run classical ML models alongside LLMs. Tools like Fiddler AI and Weights & Biases handle both, which simplifies toolchain management and avoids running separate observability stacks for different model types. For teams in the middle of an ML-to-LLM transition, unified platforms reduce the overhead of learning and maintaining multiple tools.

- Scalability for enterprise workloads: High-volume applications generate millions of traces. The platform needs to handle ingestion, storage, and querying at that scale without degrading performance or inflating costs. Some tools are well-suited for development and early production but struggle under sustained enterprise load, so testing against realistic traffic volumes before committing matters.

- Governance and security capabilities: Regulated industries need audit trails, role-based access, SOC 2 compliance, and often the option to self-host. Not all tools offer this, and the gap between a tracing tool and a governance suite is significant. For teams under the EU AI Act or comparable mandates, audit trail capability should be the first filter applied, not an afterthought.

- Real-time monitoring features: Some tools are better suited for post-hoc analysis than live monitoring. Production systems need alerting, anomaly detection, and dashboards that reflect current state, not just historical logs. The distinction matters most when AI failures affect users in real time: a tool that surfaces issues hours after they occur has limited operational value compared to one that fires an alert when a quality threshold is crossed.

Challenges in AI Observability

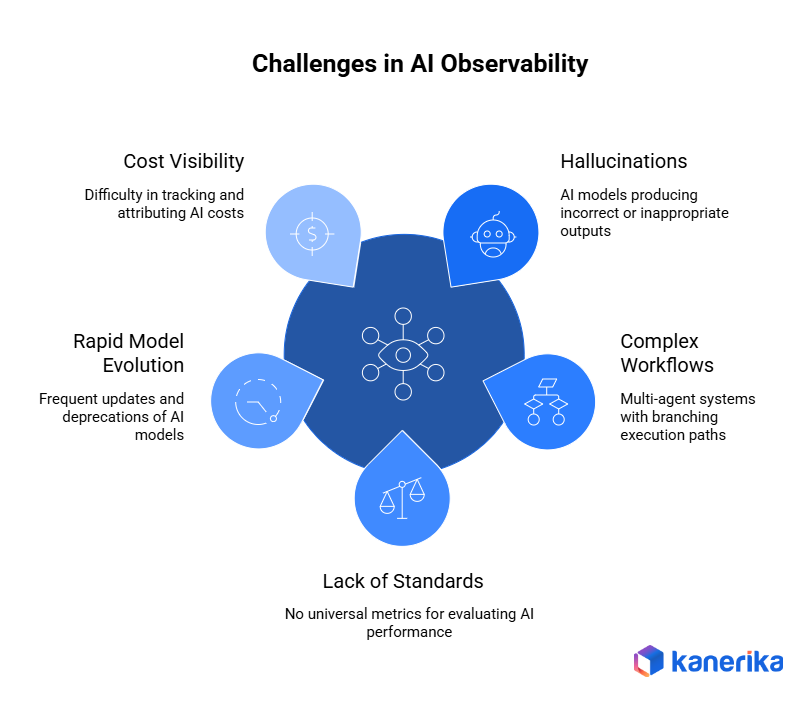

1. Hallucinations and Unpredictable Outputs

Language models can produce factually wrong or contextually inappropriate outputs with no signal that anything went wrong. Detecting hallucinations requires automated evaluation layers, including LLM-as-judge scoring, semantic similarity checks, or custom metrics, on top of basic logging. Building reliable evaluation pipelines is non-trivial and adds operational overhead.
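To show the shape of such an evaluation layer, the sketch below scores how much of an answer is supported by the retrieved context. It uses simple word overlap as a deliberately crude stand-in for the embedding-based similarity or LLM-as-judge scoring a production pipeline would use. The function names and the 0.6 support cutoff are assumptions.

```python
import re


def _tokens(text: str) -> set:
    """Lowercased alphanumeric words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def groundedness(answer: str, context: str, min_overlap: float = 0.6) -> float:
    """Fraction of answer sentences whose words mostly appear in the context."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    if not sentences:
        return 0.0
    context_words = _tokens(context)
    supported = 0
    for sentence in sentences:
        words = _tokens(sentence)
        if words and len(words & context_words) / len(words) >= min_overlap:
            supported += 1
    return supported / len(sentences)


context = "The invoice was issued on 3 March and is due in 30 days."
answer = "The invoice is due in 30 days. The customer already paid in full."
print(groundedness(answer, context))  # 0.5: the second sentence is unsupported
```

Lexical overlap misses paraphrases and can be fooled by negation, so a score like this is best treated as a cheap first filter that routes suspicious outputs to a stronger evaluator.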

2. Monitoring Complex AI Agent Workflows

Single-model applications are relatively straightforward to trace. Multi-agent systems, where models call tools, query external APIs, and hand off tasks between agents, generate complex, branching execution graphs. Mapping those workflows, identifying where failures originate, and attributing costs to specific agent decisions requires observability tooling built specifically for agentic architectures. Standard tracing breaks down when agents spawn sub-agents and operate in parallel loops because a flat log of events doesn’t capture parent-child relationships or the causal chain of a multi-step workflow.
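The difference between a flat log and a trace with parent-child relationships can be sketched in a few lines. The span fields (`id`, `parent`, `name`, `tokens`, `ok`) and the agent names below are hypothetical and far simpler than real tracing schemas, but they show how parent links let a tool attribute token usage to a subtree and recover the causal path to a failing step.

```python
from collections import defaultdict

# A flat list of spans, as they might arrive from parallel agents.
spans = [
    {"id": "root", "parent": None, "name": "planner", "tokens": 400, "ok": True},
    {"id": "a", "parent": "root", "name": "research_agent", "tokens": 900, "ok": True},
    {"id": "b", "parent": "a", "name": "search_tool", "tokens": 0, "ok": False},
    {"id": "c", "parent": "root", "name": "writer_agent", "tokens": 700, "ok": True},
]


def build_children(spans):
    """Index spans by parent id so the execution tree can be walked."""
    children = defaultdict(list)
    for span in spans:
        children[span["parent"]].append(span)
    return children


def subtree_tokens(span, children):
    """Tokens used by a span plus everything it spawned."""
    return span["tokens"] + sum(subtree_tokens(c, children) for c in children[span["id"]])


def failure_paths(span, children, path=()):
    """Paths from the root to every failed span."""
    path = path + (span["name"],)
    found = [path] if not span["ok"] else []
    for child in children[span["id"]]:
        found += failure_paths(child, children, path)
    return found


children = build_children(spans)
root = children[None][0]
print(subtree_tokens(root, children))  # 2000
print(failure_paths(root, children))   # [('planner', 'research_agent', 'search_tool')]
```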

3. Lack of Standardized Metrics

There’s no universal definition of “good” LLM output. Teams define quality differently depending on the application: a customer service bot has different success criteria than a code generation tool. The absence of standard benchmarks makes it hard to compare tools, track progress consistently, or communicate performance to stakeholders outside the engineering team.

4. Managing Rapidly Evolving AI Models

Model providers update and deprecate models on short cycles. A prompt optimized for one model version may behave differently on the next. Observability tools need to track performance across model versions, flag regressions after updates, and support A/B testing of different model configurations in production.
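A regression check across model versions can be as simple as comparing mean evaluation scores per metric. The sketch below assumes scores are already collected per version. The version names, metric names, and 0.02 tolerance are illustrative, and a production check would also want a significance test on larger samples.

```python
from statistics import mean


def regressions(scores_by_version, baseline, candidate, tolerance=0.02):
    """Metrics where the candidate's mean score falls more than `tolerance` below the baseline's."""
    flagged = {}
    for metric, base_scores in scores_by_version[baseline].items():
        cand_scores = scores_by_version[candidate].get(metric)
        if not cand_scores:
            continue  # metric not measured for the candidate
        delta = mean(cand_scores) - mean(base_scores)
        if delta < -tolerance:
            flagged[metric] = round(delta, 3)
    return flagged


scores = {
    "model-v1": {"relevance": [0.90, 0.80, 0.85], "format_valid": [1, 1, 1]},
    "model-v2": {"relevance": [0.70, 0.75, 0.80], "format_valid": [1, 1, 1]},
}
flagged = regressions(scores, baseline="model-v1", candidate="model-v2")
print(flagged)  # relevance dropped by about 0.1; format_valid is unchanged
```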

5. Cost Visibility and Attribution

Token costs are often distributed across multiple workflows, teams, and use cases within an organization. Without granular attribution, finance and engineering teams struggle to understand where spend is going, identify wasteful patterns, or justify infrastructure investments. Connecting observability data to cost accounting requires tooling that tracks usage at the request level and rolls it up into meaningful reports by team, product feature, or business unit.
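The roll-up itself is straightforward once each request carries attribution tags. The sketch below assumes every logged request already has `team`, `feature`, and `cost_usd` fields. Those names and the sample values are hypothetical.

```python
from collections import defaultdict

# Request-level cost records with attribution tags (sample data).
requests = [
    {"team": "support", "feature": "chat", "cost_usd": 0.012},
    {"team": "support", "feature": "summarize", "cost_usd": 0.030},
    {"team": "sales", "feature": "email_draft", "cost_usd": 0.008},
    {"team": "support", "feature": "chat", "cost_usd": 0.010},
]


def roll_up(requests, key):
    """Total cost per value of `key`, largest spender first."""
    totals = defaultdict(float)
    for request in requests:
        totals[request[key]] += request["cost_usd"]
    ranked = sorted(totals.items(), key=lambda item: -item[1])
    return {name: round(total, 4) for name, total in ranked}


print(roll_up(requests, "team"))     # {'support': 0.052, 'sales': 0.008}
print(roll_up(requests, "feature"))  # {'summarize': 0.03, 'chat': 0.022, 'email_draft': 0.008}
```

The hard part in practice is upstream: making sure every call site attaches the tags, since untagged requests end up in an unattributable bucket.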

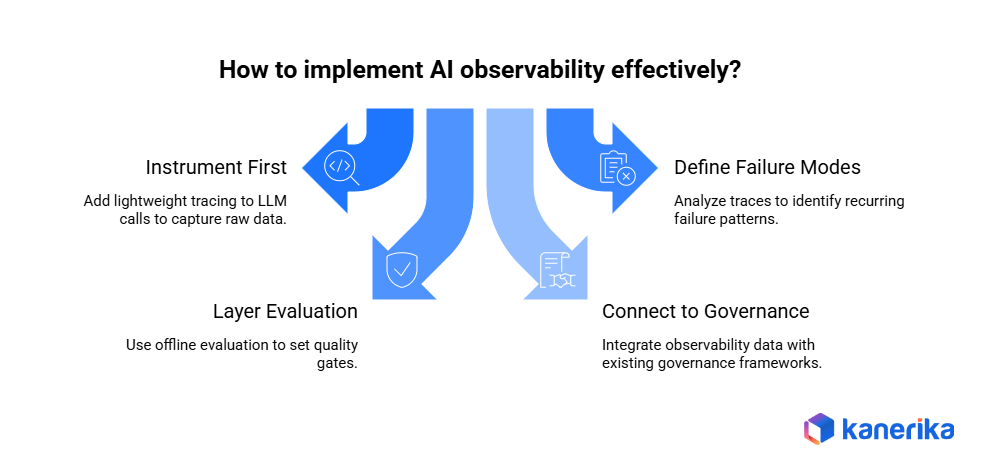

How to Implement AI Observability: Four Stages

Selecting a tool is the easy part. Getting AI observability to work in production requires a sequenced implementation. These four stages reflect what Kanerika deploys with enterprise clients.

Stage 1 – Instrument First (Weeks 1-2):

Add lightweight tracing to all LLM calls before anything else. Use Helicone (one URL change) or Langfuse SDK (10-20 lines of code) to capture inputs, outputs, latency, and token usage. Don’t design dashboards yet. The goal is raw data.
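For teams that want to see what this instrumentation amounts to before adopting a tool, the sketch below wraps an LLM call in a decorator that records inputs, outputs, latency, and token usage. It is a hand-rolled illustration, not the Helicone or Langfuse API. The `traced` decorator, the in-memory `TRACES` list, and the stubbed `call_llm` function are all assumptions.

```python
import functools
import time

TRACES = []  # in-memory sink; a real setup would ship records to an observability backend


def traced(fn):
    """Record input, output, latency, and token usage for each call to `fn`."""
    @functools.wraps(fn)
    def wrapper(prompt, **kwargs):
        start = time.perf_counter()
        result = fn(prompt, **kwargs)
        TRACES.append({
            "name": fn.__name__,
            "input": prompt,
            "output": result["text"],
            "latency_ms": (time.perf_counter() - start) * 1000,
            "input_tokens": result["input_tokens"],
            "output_tokens": result["output_tokens"],
        })
        return result
    return wrapper


@traced
def call_llm(prompt, **kwargs):
    # Stand-in for a real provider call; word counts approximate token counts here.
    text = prompt.upper()
    return {
        "text": text,
        "input_tokens": len(prompt.split()),
        "output_tokens": len(text.split()),
    }


call_llm("hello observability")
print(TRACES[0]["input_tokens"], TRACES[0]["output_tokens"])  # 2 2
```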

Stage 2 – Define Failure Modes (Weeks 2-4):

Review the first two weeks of traces. Identify recurring failure patterns: which prompts produce inconsistent outputs, where latency spikes occur, which agent steps fail most often. This analysis determines what metrics need active monitoring – and which tool category is actually needed.

Stage 3 – Layer Evaluation (Month 2):

Add offline evaluation against a reference dataset. Use TruLens for RAG pipelines, W&B Weave for ML-adjacent workloads, or Langfuse’s scoring API for custom quality metrics. Set quality gates that block deployment if evaluation scores drop below the threshold.
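A quality gate of this kind can be sketched as a small function that a CI pipeline calls after the offline evaluation run. The metric names, thresholds, and scores below are made up for illustration, and the scores would normally come from whichever evaluation tool is in use.

```python
def quality_gate(scores, thresholds):
    """Return the metrics whose mean score is below the required minimum."""
    failures = {}
    for metric, minimum in thresholds.items():
        values = scores.get(metric, [])
        average = sum(values) / len(values) if values else 0.0  # missing metric counts as failing
        if average < minimum:
            failures[metric] = round(average, 3)
    return failures


def gate_exit_code(scores, thresholds):
    """0 lets the deployment proceed; 1 blocks it."""
    return 1 if quality_gate(scores, thresholds) else 0


thresholds = {"groundedness": 0.8, "answer_relevance": 0.7}
scores = {
    "groundedness": [0.9, 0.6, 0.7],
    "answer_relevance": [0.8, 0.9],
}
print(quality_gate(scores, thresholds))    # {'groundedness': 0.733}
print(gate_exit_code(scores, thresholds))  # 1
```

In a pipeline, the exit code would be passed to `sys.exit` so that a failing gate stops the deployment job.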

Stage 4 – Connect to AI Governance (Months 2-3):

Connect observability outputs to the organization’s existing data governance and compliance framework. Audit logs should flow to the same systems that handle other regulated data. Alerting logic should route to teams with authority to act, not just engineering. This stage requires real organizational change management – getting compliance, legal, and risk teams aligned on what observability data they’ll receive and how they’ll use it.

This is the stage most implementations skip, and the stage that matters most for decision intelligence at the organizational level. The alarm goes off. The question is whether anyone knows what to do next.

The TRACE Framework: Kanerika’s Guide to Choosing the Right Tool

Choosing an AI observability tool depends on the stack, the regulatory context, the team’s maturity, and what’s actually being monitored. TRACE is a sequenced set of filters Kanerika uses with enterprise clients to narrow the field to a real shortlist, rather than picking a tool name from a blog post.

T: Tech Stack Fit

The starting point is always the LLM framework already in use. Teams building on LangChain or LangGraph should start with LangSmith or Langfuse. Teams making raw API calls against OpenAI, Anthropic, or Azure OpenAI get the most immediate value from Helicone for cost visibility and Langfuse for deeper tracing. Existing OpenTelemetry infrastructure? Traceloop routes AI traces into what’s already running without adding a new platform. Custom multi-framework agent architectures point to Arize AI or Langfuse for framework-agnostic coverage.

R: Regulatory and Compliance Requirements

If the organization needs bias detection, explainability reports, or audit-grade logging, Fiddler AI or enterprise Arize are the realistic options. For teams operating under the EU AI Act or comparable mandates, audit trail capability is the deciding factor, not feature count. Where there’s no active regulatory mandate, open-source options are viable and often preferable for flexibility and data sovereignty.

A: Agent vs. LLM Complexity

Single LLM calls are the simplest case and any tool on this list handles them. Simple tool-calling agents work well with LangSmith, Langfuse, or Helicone. Complex multi-agent systems with sub-agent spawning, memory, and parallel execution are a different problem. Only LangSmith (for LangGraph-based systems), Langfuse, and Arize AI have the distributed tracing support to map those workflows reliably.

C: Cost and Team Scale

Teams under 10 people should use open-source self-hosted options: Langfuse, TruLens, or Evidently AI. Enterprise pricing doesn’t make sense at this stage. Teams of 10 to 100 get the right capability-to-cost ratio from managed cloud tiers like Langfuse Cloud, W&B Weave Pro, or Helicone. Enterprises at 100-plus should budget for Arize AI, Fiddler, or Galileo, and budget explicitly for onboarding, integration, and training time alongside license cost.

E: Evaluation vs. Monitoring Priority

Teams that need offline quality evaluation before deployment should look at TruLens, Evidently AI, or W&B Weave. Live production monitoring with alerting and dashboards points to Arize AI, Fiddler, or Galileo. For teams that need both in one platform, Arize AI is the most complete option. RAG-specific evaluation is where TruLens is purpose-built, with Arize Phoenix as the strong second choice.

How Kanerika Implements AI Observability for Enterprise Clients

Kanerika’s AI practice treats observability as infrastructure, not an afterthought. That means instrumentation is in place from the first sprint, not after the first production incident.

As a Microsoft Solutions Partner for Data & AI, Kanerika builds observability architectures that integrate with Azure Monitor, Azure OpenAI, and the broader Microsoft data ecosystem. For teams running Microsoft Copilot across business workflows, that telemetry layer covers Copilot usage patterns and output quality, not just raw model API calls. For organizations deploying KARL, Kanerika’s AI data insights agent, observability is part of the architecture from day one.

Three things Kanerika does differently:

- Stack-matched tool selection: Tool recommendations are based on the client’s actual LLM framework, data architecture, and regulatory context. Generic best practice lists don’t account for what’s already running.

- Governance-first design: Observability outputs connect directly to existing data governance and compliance frameworks, rather than sitting as a separate DevOps concern. This is central to Kanerika’s AI TRiSM approach across regulated engagements.

- Incident response integration: Every AI deployment includes a defined escalation path and model response runbook before go-live. The alarm going off is only useful if someone knows what to do next.

Observability tools provide the data. The practice around them, defined alerting thresholds, clear ownership, and regular offline evaluation against production samples, is what makes that data actionable.

FAQs

What is AI observability and how is it different from AI monitoring?

Monitoring tracks predefined metrics such as latency, error rate, and uptime, and tells teams when something breaks. Observability gives teams the ability to ask questions about system behavior after the fact: why did the model produce that output, what was the context, which agent step failed. For generative AI systems, where outputs are non-deterministic and failure modes are non-obvious, observability is what makes diagnosis possible, not just detection. The distinction maps directly to what decision intelligence requires from AI systems: not just “it ran” but “here’s why it decided what it decided.”

Which AI observability tool is best for teams just getting started?

Langfuse and Helicone are the lowest-friction starting points. Helicone requires only a URL change to start capturing LLM API traces and cost data — no code refactoring needed. Langfuse is open-source, self-hostable, and well-documented for teams needing data sovereignty. Both have meaningful free tiers and can be running within hours.

Which AI observability tools support multi-agent monitoring?

Multi-agent observability requires distributed tracing with parent-child span relationships and tool call attribution across agent steps. LangSmith (specifically for LangGraph-based agents), Langfuse, and Arize AI are currently the strongest options. Most other tools handle single-agent or single-LLM-call scenarios but lack the span correlation needed for complex multi-agent workflows. Teams using OpenAI AgentKit or similar frameworks should validate multi-agent trace support before committing to a platform.

How do AI observability tools handle RAG pipeline monitoring?

RAG pipelines need observability at multiple stages (document retrieval, context ranking, chunk relevance scoring, and final generation quality), not just the last LLM call. TruLens is purpose-built for RAG evaluation using the RAG Triad (context relevance, groundedness, answer relevance). Arize Phoenix and Langfuse support multi-step RAG tracing. Most general-purpose AI monitoring tools treat the entire RAG pipeline as a single LLM call and miss the retrieval failure modes. For teams building advanced RAG systems, that distinction is critical.

How long does it take to implement an AI observability platform?

Basic instrumentation with Helicone or Langfuse takes 1–4 hours for most LLM applications. LangSmith integration for an existing LangChain app takes under an hour. Enterprise deployments with Arize AI or Fiddler — including data pipeline connections, governance integration, and stakeholder onboarding — typically take 4–8 weeks. The implementation timeline scales with governance complexity, not technical complexity.

Is AI observability required for regulatory compliance?

In regulated industries — financial services, healthcare, insurance — AI observability is increasingly tied to compliance requirements. AI systems that make or inform automated decisions need audit trails documenting inputs, outputs, decision logic, model version, and data provenance. Fiddler AI and enterprise Arize are built with compliance-grade logging in mind. The EU AI Act regulatory framework creates documentation requirements for high-risk AI systems that observability tooling directly helps fulfill.

How much do enterprise AI observability tools cost?

Open-source tools (Langfuse, TruLens, Evidently AI) are free to self-host, with infrastructure as the main cost. Managed cloud tiers (Helicone Pro ~$80/month, Langfuse Cloud Pro ~$59/month, W&B Weave Pro ~$50/seat/month) suit growing teams. Enterprise platforms (Arize AI, Fiddler AI, Galileo) typically run $25,000–$150,000+ annually depending on scale and support requirements. Most enterprise vendors require a sales conversation before disclosing specific pricing.