Did you know that poor data quality costs businesses an average of $12.9 million annually? As businesses grow and data sources multiply, managing and integrating the flood of information has become a critical challenge. Whether you’re pulling data from cloud apps, on-premises systems, or third-party platforms, data integration tools are essential for making sense of it all.

These powerful solutions act as the backbone of modern data ecosystems, enabling businesses to consolidate, transform, and leverage their data assets for improved decision-making and operational efficiency. From breaking down data silos to ensuring real-time insights, data integration tools are revolutionizing how companies harness the power of their information.

With the right data integration tool, you can turn scattered data into actionable insights, streamline workflows, and boost operational efficiency, but how do you choose the right one?

Enhance Data Accuracy and Efficiency With Expert Integration Solutions!

Partner with Kanerika Today.

What Are Data Integration Tools?

Data integration tools are specialized software solutions that combine data from multiple sources into a unified view. These platforms enable businesses to extract data from diverse systems, transform it into consistent formats, and load it into target destinations like data warehouses or applications.

Modern integration tools support various integration patterns (ETL, ELT, real-time), offer pre-built connectors to common systems, and provide features for data quality management, workflow automation, and governance—all essential for creating reliable data pipelines that power analytics and business processes.

Key Features of Data Integration Tools

1. Data Extraction Capabilities

Data extraction capabilities encompass the methods and technologies used to retrieve data from diverse source systems. Modern integration tools offer robust connectors for various data sources, enabling efficient and reliable data acquisition regardless of format or location.

- Comprehensive source system support (databases, applications, files, APIs, etc.)

- Change data capture (CDC) functionality to identify and process only modified data

- Parallel and incremental extraction options to optimize performance and reduce load
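To make incremental extraction concrete, here is a minimal sketch that pulls only rows changed since the last run by comparing a timestamp column against a stored watermark. The `customers` table, its `updated_at` column, and the sample rows are assumptions made up for illustration. This timestamp approach is a simple stand-in for CDC: log-based CDC tools read the database transaction log instead, and this sketch would not notice deleted rows.

```python
import sqlite3

def extract_incremental(conn, last_watermark):
    """Return rows modified after last_watermark, plus the new watermark."""
    rows = conn.execute(
        "SELECT id, name, updated_at FROM customers "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_watermark,),
    ).fetchall()
    # Advance the watermark only if something new was found.
    new_watermark = rows[-1][2] if rows else last_watermark
    return rows, new_watermark

# Hypothetical source table, for illustration only.
source = sqlite3.connect(":memory:")
source.execute(
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT)"
)
source.executemany(
    "INSERT INTO customers VALUES (?, ?, ?)",
    [
        (1, "Acme", "2025-01-01T09:00:00"),
        (2, "Globex", "2025-01-02T10:30:00"),
        (3, "Initech", "2025-01-03T08:15:00"),
    ],
)

# Only rows changed after the stored watermark are extracted.
changed, watermark = extract_incremental(source, "2025-01-01T12:00:00")
```

Persisting `watermark` between runs is what keeps each extraction small; a second call with the returned value yields no rows until the source changes again.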

2. Transformation Functionalities

Transformation functionalities convert raw data into formats suitable for analysis and business use. These features enable organizations to cleanse, enrich, and standardize data while applying business rules and logic to create valuable information assets.

- Data cleansing and quality tools to handle missing values, duplicates, and inconsistencies

- Advanced mapping capabilities with support for complex transformations and calculations

- Schema mapping and metadata management to maintain data consistency
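The cleansing steps listed above can be sketched in a few lines of plain Python. The field names (`email`, `country`), the `UNKNOWN` default, and the choice of email as the deduplication key are assumptions for illustration; a real tool would make these rules configurable.

```python
def cleanse(records):
    """Standardize fields, fill missing values, and drop duplicate records."""
    seen = set()
    cleaned = []
    for rec in records:
        # Standardize the key so that case and whitespace variants match.
        email = (rec.get("email") or "").strip().lower()
        if not email:
            continue  # no usable key, so the record is skipped
        if email in seen:
            continue  # duplicate once standardized
        seen.add(email)
        cleaned.append({
            "email": email,
            # Fill a missing country with an explicit placeholder.
            "country": (rec.get("country") or "UNKNOWN").strip().upper(),
        })
    return cleaned

raw = [
    {"email": " Ana@Example.com ", "country": "us"},
    {"email": "ana@example.com", "country": "US"},
    {"email": "raj@example.com", "country": None},
    {"email": None, "country": "DE"},
]
result = cleanse(raw)
```

Standardizing before deduplicating matters here: the first two records only count as duplicates after trimming and lowercasing.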

3. Loading Mechanisms

Loading mechanisms determine how processed data is written to destination systems. Effective loading features balance speed, reliability, and system impact while ensuring data arrives intact and usable at its destination.

- Bulk and batch loading options for efficient handling of large data volumes

- Transaction management with commit/rollback capabilities for data integrity

- Target system optimizations including partitioning and indexing support
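As a rough illustration of batch loading with commit/rollback, the sketch below writes each batch inside a single transaction using Python’s built-in `sqlite3` module. The `orders` table and the sample batches are hypothetical; the point is only that a failed batch leaves the target unchanged.

```python
import sqlite3

def load_batch(conn, rows):
    """Insert all rows in one transaction; roll back if any row fails."""
    try:
        with conn:  # commits on success, rolls back on an exception
            conn.executemany(
                "INSERT INTO orders (order_id, amount) VALUES (?, ?)", rows
            )
        return True
    except sqlite3.IntegrityError:
        return False

target = sqlite3.connect(":memory:")
target.execute(
    "CREATE TABLE orders (order_id INTEGER PRIMARY KEY, amount REAL NOT NULL)"
)

ok = load_batch(target, [(1, 19.99), (2, 5.00)])
# The second batch repeats order_id 2, so the whole batch is rejected.
failed = load_batch(target, [(3, 7.50), (2, 1.00)])
count = target.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
```

Because the rejected batch is rolled back as a unit, order 3 is not left behind as a partial load.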

4. Automation and Scheduling

Automation and scheduling features enable organizations to create reliable, repeatable data integration processes with minimal manual intervention. These capabilities ensure timely data delivery while optimizing resource utilization and operational efficiency.

- Flexible scheduling options including time-based, event-driven, and dependency-based triggers

- Workflow orchestration to manage complex multi-step integration processes

- Error handling with retry logic and exception management
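Retry logic is easy to picture with a small example. The sketch below retries a failing task with exponential backoff and re-raises once the attempts run out, so that exception handling or alerting can take over. The function name `run_with_retry`, its defaults, and the `flaky_job` task are all illustrative assumptions, not part of any particular product.

```python
import time

def run_with_retry(task, max_attempts=3, base_delay=0.01):
    """Run task(); on failure wait base_delay * 2**n, then try again."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: escalate to the caller
            time.sleep(base_delay * 2 ** (attempt - 1))

# Hypothetical flaky job: fails twice, then succeeds.
calls = {"count": 0}

def flaky_job():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("source temporarily unavailable")
    return "loaded"

outcome = run_with_retry(flaky_job)
```

In practice the delays would be seconds or minutes rather than milliseconds, and only transient errors (such as timeouts) would usually be retried.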

5. Monitoring and Logging

Monitoring and logging capabilities provide visibility into integration processes, enabling proactive management and troubleshooting. These features help organizations ensure data reliability, meet SLAs, and quickly resolve issues when they arise.

- Real-time dashboards showing integration job status and performance metrics

- Comprehensive logging of all integration activities with configurable detail levels

- Alerting systems for critical failures and performance degradations
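A minimal sketch of what job-level logging and alerting might look like, using Python’s standard `logging` module. The job names, the log format, and the `alerts` list (a stand-in for an email or pager notification) are assumptions for illustration only.

```python
import logging
import time

logging.basicConfig(
    level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s"
)
log = logging.getLogger("integration")

def run_job(name, job, alerts):
    """Run job(), log status and duration, and record an alert on failure."""
    start = time.perf_counter()
    try:
        rows = job()
        status = "success"
    except Exception as exc:
        rows = 0
        status = "failed"
        log.error("job=%s failed: %s", name, exc)
        alerts.append(name)  # stand-in for a real notification channel
    duration = time.perf_counter() - start
    log.info(
        "job=%s status=%s rows=%d duration=%.3fs", name, status, rows, duration
    )
    return {"job": name, "status": status, "rows": rows}

alerts = []

def broken_job():
    raise RuntimeError("target unreachable")

good = run_job("daily_sales", lambda: 120, alerts)
bad = run_job("crm_sync", broken_job, alerts)
```

Emitting the same key=value fields for every run is what lets a dashboard aggregate job status and duration later.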

Types of Data Integration Tools

1. ETL (Extract, Transform, Load) Tools

ETL tools extract data from source systems, transform it according to business rules, and load it into target destinations like data warehouses. This traditional approach handles data processing before loading.

Use Cases:

- Data warehousing projects requiring significant transformations

- Complex business logic implementation

- Legacy system integration

- Compliance and data cleansing requirements

Popular Tools: Informatica PowerCenter, IBM DataStage, Microsoft SSIS, Talend Open Studio, Oracle Data Integrator

2. ELT (Extract, Load, Transform) Tools

ELT tools extract data from sources and load it into the target system before transformation, leveraging the target system’s processing power for transformations.

Differences from ETL:

- Transforms data after loading (not before)

- Utilizes target system computing power

- Better for large datasets and cloud data warehouses

- More flexible for iterative analytics
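The load-first, transform-later order can be sketched with an in-memory SQLite database standing in for a cloud data warehouse. The table names and sample rows are hypothetical; what matters is that the raw data lands untouched and the cleanup runs as SQL inside the target.

```python
import sqlite3

warehouse = sqlite3.connect(":memory:")

# Load: land the raw records as-is, with no cleanup on the way in.
warehouse.execute(
    "CREATE TABLE raw_orders (order_id TEXT, amount TEXT, status TEXT)"
)
warehouse.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [
        ("1", "19.99", "shipped"),
        ("2", "5.00", "CANCELLED"),
        ("3", "42.50", "Shipped"),
    ],
)

# Transform: runs inside the target, using its own SQL engine.
warehouse.execute(
    """
    CREATE TABLE shipped_orders AS
    SELECT CAST(order_id AS INTEGER) AS order_id,
           CAST(amount AS REAL) AS amount
    FROM raw_orders
    WHERE LOWER(status) = 'shipped'
    """
)

shipped_count = warehouse.execute(
    "SELECT COUNT(*) FROM shipped_orders"
).fetchone()[0]
total = warehouse.execute(
    "SELECT SUM(amount) FROM shipped_orders"
).fetchone()[0]
```

Since `raw_orders` is kept, a changed business rule only requires rerunning the transform step, not re-extracting from the source.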

Use Cases:

- Cloud data warehouse integration

- Big data scenarios

- Analytics where transformation needs may change

- Real-time or near-real-time reporting

Popular Tools: Fivetran, Stitch, Matillion, Snowflake, Azure Data Factory

3. Data Virtualization Tools

Data virtualization creates an abstraction layer that allows applications to access and query data without knowing its physical location, format, or how it’s stored.

Use Cases:

- Real-time access requirements

- Federated queries across multiple sources

- When physical data movement is impractical

- Prototyping before physical integration
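As a toy analogy for the abstraction layer, the sketch below attaches two separate in-memory SQLite databases and exposes a view that joins them at query time. The `crm` and `billing` databases and their tables are made up for illustration. Commercial virtualization products federate across heterogeneous systems, which this example does not attempt.

```python
import sqlite3

# Two separate in-memory databases stand in for independent source systems.
hub = sqlite3.connect(":memory:")
hub.execute("ATTACH DATABASE ':memory:' AS crm")
hub.execute("ATTACH DATABASE ':memory:' AS billing")

hub.execute("CREATE TABLE crm.customers (id INTEGER, name TEXT)")
hub.execute("CREATE TABLE billing.invoices (customer_id INTEGER, amount REAL)")
hub.executemany(
    "INSERT INTO crm.customers VALUES (?, ?)", [(1, "Acme"), (2, "Globex")]
)
hub.executemany(
    "INSERT INTO billing.invoices VALUES (?, ?)",
    [(1, 100.0), (1, 50.0), (2, 75.0)],
)

# The view is the "virtual" layer: it stores no data, only the query.
# A TEMP view is used because it may reference several attached databases.
hub.execute(
    """
    CREATE TEMP VIEW customer_revenue AS
    SELECT c.name, SUM(i.amount) AS revenue
    FROM crm.customers AS c
    JOIN billing.invoices AS i ON i.customer_id = c.id
    GROUP BY c.name
    """
)

revenue = dict(
    hub.execute("SELECT name, revenue FROM customer_revenue ORDER BY name")
)
```

Consumers query `customer_revenue` without knowing which database holds which table, and no rows are copied to build it.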

Popular Tools: Denodo, TIBCO Data Virtualization, IBM Cloud Pak for Data, Oracle Data Service Integrator, Red Hat JBoss Data Virtualization

4. Data Replication Tools

Data replication tools create and maintain copies of databases or data sets across different locations, ensuring consistency between source and target systems.

Use Cases:

- Disaster recovery and high availability

- Distributed data access to improve performance

- Data migration projects

- Cross-regional synchronization

Popular Tools: Oracle GoldenGate, AWS Database Migration Service, Qlik Replicate, HVR, Striim

5. iPaaS (Integration Platform as a Service) Solutions

iPaaS solutions provide cloud-based platforms for building and deploying integrations between cloud and on-premises applications and data sources.

Use Cases:

- SaaS application integration

- Hybrid cloud/on-premises environments

- API management and orchestration

- Business process automation

- Event-driven architectures

Popular Tools: MuleSoft Anypoint Platform, Boomi (formerly Dell Boomi), Jitterbit, Workato, SnapLogic, Tray.io, Microsoft Power Automate

Top 10 Data Integration Tools in 2025

1. Informatica PowerCenter

A powerful enterprise-grade ETL (Extract, Transform, Load) platform, Informatica PowerCenter is designed for large-scale data integration and data management. It supports real-time and batch data integration, enabling businesses to handle high-volume data across different systems.

Key Features

- Enterprise-grade ETL and ELT capabilities.

- Advanced data transformation and data cleansing.

- Scalable architecture with metadata-driven automation.

Use Cases

- Building and managing large data warehouses.

- Ensuring data governance and compliance in sectors like healthcare.

- Real-time data streaming and integration for analytics.

2. Talend

Talend is a data integration platform with open-source roots that provides a suite of cloud and on-premises solutions covering data integration, data transformation, and data governance. It lets users access, transform, and integrate data from a wide range of sources, making it a popular choice for both small and large enterprises.

Key Features

- Open-source flexibility with enterprise capabilities.

- Cloud-native, with support for real-time data processing.

- Built-in data quality and governance tools.

Use Cases

- Seamlessly integrating data from cloud-based applications.

- Improving data quality for marketing analytics.

- Data migration and syncing for ERP systems.

3. Microsoft Azure Data Factory

A cloud-based ETL and data integration service, Microsoft Azure Data Factory enables users to create data-driven workflows for orchestrating and automating data movement and transformation across cloud and on-premises environments.

Key Features

- Managed, serverless data integration.

- Native integration with Azure services.

- Drag-and-drop interface for building data pipelines.

Use Cases

- Migrating on-premises data to the Azure cloud.

- Building data pipelines for real-time analytics.

- Integrating data from multiple SaaS applications into Azure.

4. Boomi (formerly Dell Boomi)

Boomi, formerly Dell Boomi, is a cloud-based integration platform-as-a-service (iPaaS) that simplifies data integration for both cloud and on-premises applications. Known for its user-friendly interface, it helps organizations integrate applications, data, and processes quickly and efficiently.

Key Features

- API-led connectivity with drag-and-drop design.

- Pre-built connectors for CRM, ERP, and cloud applications.

- Real-time data synchronization and monitoring.

Use Cases

- Syncing data between cloud-based apps like Salesforce and SAP.

- Automating workflows for HR systems.

- Integrating customer data from multiple systems for unified insights.

5. Fivetran

Fivetran provides automated data pipelines that enable data movement from various sources to data warehouses. It focuses on eliminating the complexity of data extraction and transformation, making data analytics seamless for businesses.

Key Features

- Fully automated ELT pipelines.

- Pre-built connectors for a wide variety of data sources.

- Continuous, real-time data synchronization.

Use Cases

- Streaming real-time marketing data into a data warehouse.

- Automating data extraction for finance teams.

- Synchronizing data from multiple SaaS platforms for business intelligence.

6. Hevo Data

Hevo Data is a no-code data integration platform that helps businesses automate data flows from multiple sources to a data warehouse without the need for coding. It supports real-time data streaming and offers a robust ETL/ELT solution.

Key Features

- No-code, automated ETL and ELT pipelines.

- Real-time data replication across platforms.

- Automated schema mapping for easy integration.

Use Cases

- Migrating marketing and sales data to cloud-based analytics platforms.

- Real-time customer analytics in e-commerce.

- Syncing data from databases to business intelligence tools.

7. MuleSoft Anypoint Platform

MuleSoft’s Anypoint Platform provides an API-led approach to data integration, focusing on enabling organizations to connect applications, data, and devices through APIs. It is well-suited for businesses heavily reliant on API-driven ecosystems.

Key Features

- API-led connectivity and data integration.

- Unified platform for designing, managing, and securing APIs.

- Scalable architecture for enterprise-level integrations.

Use Cases

- Connecting legacy systems with modern cloud applications.

- Managing and securing APIs for seamless data exchange.

- Integrating customer data from multiple touchpoints for unified experiences.

8. CData Sync

CData Sync is a data replication solution that synchronizes data across multiple platforms, including cloud applications and on-premises systems. It supports over 250 connectors and offers both real-time and scheduled sync options.

Key Features

- Real-time, bi-directional data synchronization.

- Supports over 250 connectors for various data sources.

- Visual mapping tools for easy data transformation.

Use Cases

- Synchronizing data between on-premise and cloud databases.

- Real-time data replication for analytics platforms.

- Connecting legacy ERP systems with cloud-based apps.

9. Astera Centerprise

Astera Centerprise is a powerful end-to-end data integration platform, offering a no-code, drag-and-drop interface to simplify the integration of data across different systems. It is ideal for businesses looking for user-friendly, scalable data integration solutions.

Key Features

- No-code platform with drag-and-drop functionality.

- Supports complex data transformations and ETL workflows.

- Real-time and batch processing capabilities.

Use Cases

- Integrating disparate business applications for unified data access.

- Data transformation for analytics and reporting.

- Real-time data syncing between on-premise and cloud-based systems.

10. Jitterbit

Jitterbit is an iPaaS platform that offers quick and easy data integration with a focus on API connectivity. It helps businesses automate workflows and manage data across systems with pre-built connectors and templates.

Key Features

- API integration with pre-built connectors.

- Drag-and-drop interface for data integration workflows.

- AI-powered automation tools.

Use Cases

- Integrating cloud applications for e-commerce platforms.

- Automating workflows for marketing and sales systems.

- Managing API connections between CRM and ERP systems.

Simplify Your Data Management With Powerful Integration Services!

Partner with Kanerika Today.

Benefits of Implementing Data Integration Tools

1. Streamlined Workflows and Better Efficiency

By automating the processes of acquiring, converting, and importing data from several sources, data integration solutions minimize errors and manual labor. Teams can focus on key business tasks and streamline workflows through automation, which accelerates results and increases productivity.

2. Improved Decision-making with Access to Real-time Data

Organizations can obtain real-time insights by combining data from several sources and making sure it is up to date. Leaders can now make better and faster decisions because they have access to timely and reliable data, which makes it possible for them to respond swiftly and effectively to changes in the market.

3. Scalability for Growing Data Needs

As businesses grow, so do their data requirements. Cloud-based data integration tools in particular provide the flexibility to scale operations without significant infrastructure costs, giving organizations the capacity to handle growing data volumes without compromising performance.

4. Enhanced Security and Governance

Many data integration tools include built-in features that support data privacy and regulatory compliance. With encryption, access control, and audit trails, organizations can manage data security, meet industry-specific regulations, and lower the risk of data breaches and penalties.

Case Studies: Kanerika’s Successful Data Integration Projects

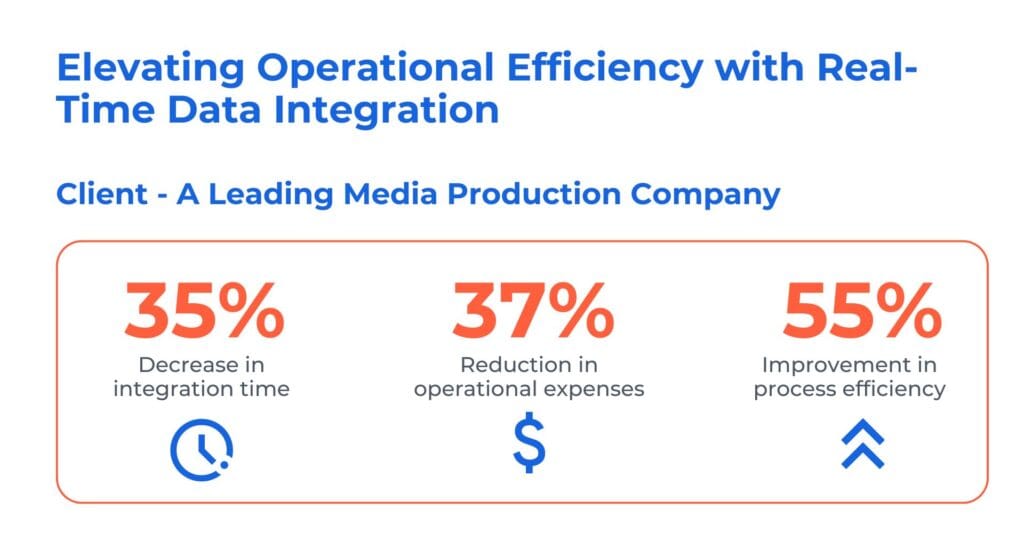

1. Unlocking Operational Efficiency with Real-Time Data Integration

The client is a prominent media production company operating in the global film, television, and streaming industry. They faced a significant challenge while upgrading their CRM to the new MS Dynamics CRM. The complexity of accessing multiple systems slowed response times and raised security and efficiency concerns.

Kanerika resolved the problem by leveraging tools such as Informatica and Dynamics 365. Here’s how our real-time data integration solution streamlined and expedited operations and reduced operating costs while maintaining data security:

- Implemented iPaaS integration with Dynamics 365 connector, ensuring future-ready app integration and reducing pension processing time

- Enhanced Dynamics 365 with real-time data integration to paginated data, guaranteeing compliance with PHI and PCI

- Streamlined exception management, enabled proactive monitoring, and automated third-party integration, driving efficiency

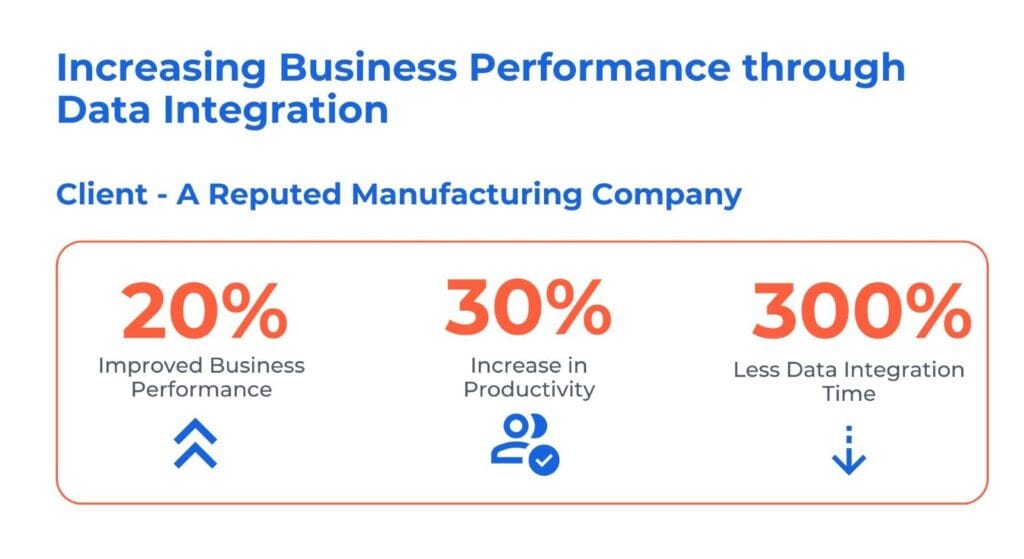

2. Enhancing Business Performance through Data Integration

The client is a prominent edible oil manufacturer and distributor, with a nationwide reach. The usage of both SAP and non-SAP systems led to inconsistent and delayed data insights, affecting precise decision-making. Furthermore, the manual synchronization of financial and HR data introduced both inefficiencies and inaccuracies.

Kanerika addressed the client’s challenges by delivering the following data integration solutions:

- Consolidated and centralized SAP and non-SAP data sources, providing insights for accurate decision-making

- Streamlined integration of financial and HR data, ensuring synchronization and enhancing overall business performance

- Automated integration processes to eliminate manual efforts and minimize error risks, saving cost and improving efficiency

Kanerika: The Trusted Choice for Streamlined and Secure Data Integration

At Kanerika, we excel in unifying your data landscapes, leveraging cutting-edge tools and techniques to create seamless, powerful data ecosystems. Our expertise spans the most advanced data integration platforms, ensuring your information flows efficiently and securely across your entire organization.

With a proven track record of success, we’ve tackled complex data integration challenges for diverse clients in banking, retail, logistics, healthcare, and manufacturing. Our tailored solutions address the unique needs of each industry, driving innovation and fueling growth.

We understand that well-managed data is the cornerstone of informed decision-making and operational excellence. That’s why we’re committed to building and maintaining robust data infrastructures that empower you to extract maximum value from your information assets.

Choose Kanerika for data integration that’s not just about connecting systems, but about unlocking your data’s full potential to propel your business forward.

Frequently Asked Questions

Is ETL the same as API?

ETL and API are fundamentally different concepts serving distinct purposes in data integration. ETL (Extract, Transform, Load) is a process methodology for moving and transforming data between systems, while an API (Application Programming Interface) is a defined interface through which software applications exchange information. APIs often serve as the extraction mechanism within ETL pipelines, pulling data from source systems. Modern data integration tools leverage APIs to connect disparate applications, but the transformation and loading logic remains separate. Kanerika designs ETL architectures that maximize API efficiency while ensuring seamless data flow. Connect with our integration specialists today.

Is dbt an ETL tool?

dbt (data build tool) is not a traditional ETL tool but rather a transformation-focused solution operating within the ELT paradigm. dbt handles the T in ELT, executing SQL-based transformations directly inside your data warehouse after data has already been loaded. It lacks native extraction and loading capabilities, meaning you need separate data integration tools like Fivetran or Airbyte to move data first. This makes dbt ideal for analytics engineering teams managing complex transformation logic in modern cloud warehouses. Kanerika helps enterprises architect complete data pipelines combining dbt with robust ingestion solutions—reach out for a tailored assessment.

What are examples of data integration tools?

Popular data integration tools span multiple categories based on enterprise needs. Enterprise-grade platforms include Informatica PowerCenter, Talend, and Microsoft Azure Data Factory. Cloud-native solutions feature Fivetran, Airbyte, and Matillion. Modern analytics platforms like Databricks, Snowflake, and Microsoft Fabric offer built-in integration capabilities alongside processing power. For business intelligence workflows, tools like Alteryx combine data preparation with analytics. Open-source options include Apache NiFi and Apache Kafka for streaming integration. Each tool excels in specific scenarios depending on data volume, complexity, and cloud strategy. Kanerika evaluates your ecosystem to recommend the optimal integration stack—schedule a consultation to explore your options.

What is a data integration tool?

A data integration tool is software that connects, combines, and unifies data from multiple disparate sources into a cohesive, accessible format for analysis and operations. These platforms automate the movement of information across databases, applications, cloud services, and on-premises systems while maintaining data quality and consistency. Core capabilities include extraction from source systems, transformation of data formats, and loading into target destinations like data warehouses or lakes. Modern integration tools also provide data governance, real-time syncing, and pre-built connectors for common applications. Kanerika implements enterprise-grade data integration solutions tailored to your infrastructure—let us streamline your data landscape.

Which is the best data integration tool?

The best data integration tool depends entirely on your specific requirements, existing infrastructure, and scalability needs. Microsoft Fabric excels for organizations invested in the Microsoft ecosystem, offering unified analytics with built-in governance. Databricks provides superior performance for large-scale data engineering and lakehouse architectures. Informatica remains the enterprise standard for complex, hybrid environments requiring extensive transformation logic. Talend offers flexibility with both open-source and commercial editions. Snowflake simplifies cloud data warehousing with native integration features. The right choice balances cost, connector availability, and team expertise. Kanerika assesses your unique environment to identify the optimal platform—request a free evaluation today.

What is the difference between ETL and ELT?

ETL transforms data before loading it into the target system, while ELT loads raw data first and transforms it inside the destination. Traditional ETL processes data on a separate server, making it ideal when target systems have limited processing power or strict schema requirements. ELT leverages the computational strength of modern cloud data warehouses like Snowflake, Databricks, or Microsoft Fabric, enabling faster ingestion and on-demand transformations. ELT suits large-scale, flexible analytics workloads; ETL remains valuable for compliance-heavy environments requiring pre-validated data. Your choice impacts architecture, cost, and agility. Kanerika architects ETL and ELT pipelines matched to your performance and governance needs—connect with us to optimize your approach.

Is ETL a form of data integration?

ETL is a specific method of data integration, not the entirety of it. Extract, Transform, Load represents one approach to unifying data from multiple sources into a centralized repository. Data integration as a discipline encompasses broader techniques including real-time streaming, data virtualization, application integration, and master data management. ETL specifically focuses on batch-oriented movement with transformation logic applied during transit. While ETL remains foundational for data warehousing projects, modern integration strategies often combine multiple methods depending on latency requirements and use cases. Kanerika designs comprehensive data integration architectures leveraging ETL alongside complementary approaches—reach out to build your unified data strategy.

What are the types of data integration?

Data integration encompasses several distinct approaches suited to different enterprise needs. ETL and ELT handle batch-based movement and transformation for analytics workloads. Data virtualization creates unified views without physically moving data, enabling real-time access across sources. Application integration connects software systems through APIs and middleware for operational workflows. Data replication synchronizes information across databases for redundancy and distributed access. Change data capture tracks incremental updates for near-real-time syncing. Master data management ensures consistency of critical business entities across systems. Each type addresses specific latency, governance, and scalability requirements. Kanerika implements multi-modal integration strategies aligning with your business objectives—talk to our experts to identify your optimal mix.

What are the four types of data integration methodologies?

The four primary data integration methodologies are manual integration, middleware-based integration, application-based integration, and uniform data access. Manual integration involves custom scripts and direct database queries, offering flexibility but poor scalability. Middleware integration uses dedicated platforms like Informatica or Talend to orchestrate data movement with pre-built connectors. Application-based integration embeds data sharing logic within software applications themselves. Uniform data access employs data virtualization to present a unified view without physical consolidation. Each methodology balances cost, complexity, and real-time capability differently depending on organizational maturity and data volume. Kanerika helps enterprises select and implement the right integration methodology for sustainable growth—schedule your architecture review today.

Which is the most popular ETL tool?

Informatica PowerCenter historically dominates enterprise ETL adoption, trusted by large organizations for complex transformation requirements and robust governance. However, market dynamics are shifting toward cloud-native solutions. Microsoft Azure Data Factory leads in Microsoft-centric environments, while AWS Glue captures Amazon ecosystem users. Talend maintains strong presence across hybrid deployments with open-source flexibility. For modern data stacks, Fivetran and Airbyte gain rapid adoption for automated ELT pipelines. Databricks increasingly handles ETL workloads through its unified lakehouse platform. Popularity varies by industry, cloud strategy, and team expertise. Kanerika specializes in migrating legacy ETL workflows to modern platforms—explore our migration accelerators to reduce transition risk.

Will ETL be replaced by AI?

AI will augment rather than fully replace ETL processes in data integration. Machine learning already enhances ETL through intelligent schema mapping, automated data quality detection, and anomaly identification during transformations. AI-powered tools can suggest optimization rules, auto-generate transformation logic, and predict pipeline failures before they occur. However, ETL fundamentals—extraction, transformation, and loading—remain essential for moving and preparing data reliably. AI makes these processes smarter and more self-healing rather than obsolete. Enterprises should expect AI-enhanced ETL platforms that reduce manual configuration while maintaining data governance. Kanerika integrates AI capabilities into data pipelines for intelligent automation—discover how we modernize ETL with purposeful AI.

Is Talend a data integration tool?

Talend is a comprehensive data integration tool offering ETL, data quality, and data governance capabilities in a unified platform. Originally launched as open-source software, Talend now provides both community and enterprise editions supporting cloud, on-premises, and hybrid deployments. Its visual interface enables developers to build complex integration pipelines without extensive coding. Talend supports hundreds of pre-built connectors for databases, SaaS applications, and cloud services. Key strengths include data profiling, cleansing, and master data management alongside core ETL functionality. Organizations use Talend for batch processing, real-time streaming, and API-based integration scenarios. Kanerika delivers Talend implementations and migrations to modern platforms like Microsoft Fabric. Contact us for expert guidance.

Can dbt replace Informatica?

dbt cannot directly replace Informatica because they serve different functions in data integration architecture. Informatica handles end-to-end ETL including extraction from source systems, complex transformations, and loading to targets. dbt focuses exclusively on SQL-based transformations within the data warehouse, requiring separate tools for data ingestion. Organizations migrating from Informatica to modern stacks typically pair dbt with ingestion platforms like Fivetran or Azure Data Factory. This combination achieves similar outcomes with cloud-native efficiency but requires rearchitecting existing workflows. The decision depends on transformation complexity, team SQL proficiency, and cloud strategy. Kanerika executes Informatica migrations to Databricks, Microsoft Fabric, and dbt-based architectures—start with our migration assessment to evaluate your path forward.

Is dbt the same as Databricks?

dbt and Databricks are distinct tools serving complementary roles in modern data integration stacks. dbt is an open-source transformation framework executing SQL models inside data warehouses, focusing purely on the transformation layer. Databricks is a comprehensive unified analytics platform combining data engineering, data science, and machine learning on a lakehouse architecture. Databricks handles storage, compute, ETL orchestration, and advanced analytics, while dbt provides version-controlled transformation workflows. Many organizations run dbt transformations on Databricks, using Databricks for infrastructure and dbt for modeling discipline. Together they create powerful, governed data pipelines. Kanerika architects solutions combining Databricks infrastructure with dbt best practices—reach out to optimize your lakehouse strategy.

What is ETL in data integration?

ETL stands for Extract, Transform, Load—the foundational process for consolidating data from multiple sources into analytical systems. Extraction pulls data from databases, APIs, files, and applications. Transformation cleanses, validates, and reshapes data to match target schema requirements, applying business rules and aggregations. Loading delivers processed data into data warehouses, lakes, or marts for reporting and analytics. ETL enables organizations to create unified, trustworthy datasets from fragmented operational systems. Traditional ETL processes data in batches on dedicated servers before loading, ensuring quality before arrival. Modern data integration tools automate and orchestrate these steps efficiently. Kanerika builds scalable ETL pipelines across leading platforms—talk to us about accelerating your data warehouse initiatives.

What are the 5 steps of ETL?

The five steps of ETL extend beyond the basic three-phase acronym to include data extraction, data validation, data transformation, data loading, and data verification. Extraction connects to source systems and retrieves raw data. Validation checks extracted data for completeness and basic integrity before processing. Transformation applies cleansing, standardization, deduplication, and business logic to prepare data for analysis. Loading transfers transformed data into the target data warehouse or lake. Verification confirms successful loading through record counts, checksums, and quality audits. These steps form a continuous cycle in production data integration pipelines. Kanerika implements end-to-end ETL workflows with built-in quality gates—connect with us to ensure reliable data delivery.
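The five steps can be sketched as five small functions chained together. Everything here is a simplified assumption for illustration: the sample feed, the `contacts` table, and a verification step that only compares record counts (real pipelines may also use checksums and quality audits, as noted above).

```python
import sqlite3

def extract():
    # Hypothetical raw feed; a real pipeline would read from a source system.
    return [
        {"id": 1, "email": "ANA@example.com"},
        {"id": 2, "email": None},
        {"id": 3, "email": "raj@example.com "},
    ]

def validate(rows):
    # Keep only rows that have the fields later steps depend on.
    return [r for r in rows if r.get("id") is not None and r.get("email")]

def transform(rows):
    # Standardize emails by trimming whitespace and lowercasing.
    return [(r["id"], r["email"].strip().lower()) for r in rows]

def load(conn, rows):
    with conn:  # one transaction per load
        conn.executemany("INSERT INTO contacts VALUES (?, ?)", rows)

def verify(conn, expected):
    # Confirm the target holds as many records as were sent.
    actual = conn.execute("SELECT COUNT(*) FROM contacts").fetchone()[0]
    return actual == expected

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE contacts (id INTEGER PRIMARY KEY, email TEXT)")

clean = transform(validate(extract()))
load(conn, clean)
verified = verify(conn, expected=len(clean))
```

Keeping validation separate from transformation makes it clear which records were rejected as incomplete and which were merely reshaped.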

Is Tableau a data integration tool?

Tableau is primarily a data visualization and business intelligence platform, not a dedicated data integration tool. While Tableau connects to numerous data sources and offers basic data preparation through Tableau Prep, its core strength lies in creating interactive dashboards and analytical reports. Tableau Prep provides data cleaning and joining capabilities for analysis-ready datasets, but lacks the robust transformation, orchestration, and governance features of enterprise data integration platforms. Organizations typically use dedicated integration tools like Informatica, Talend, or Azure Data Factory to prepare data before visualization in Tableau. Kanerika helps enterprises build complete pipelines feeding clean, governed data into Tableau—explore our Power BI migration services for Microsoft-aligned alternatives.

What are the 4 types of system integration?

The four types of system integration are point-to-point integration, hub-and-spoke integration, enterprise service bus (ESB), and microservices-based integration. Point-to-point creates direct connections between systems, simple but difficult to scale. Hub-and-spoke centralizes integration through a single hub managing all connections, improving maintainability. ESB architecture provides a middleware layer handling message routing, transformation, and protocol conversion across enterprise applications. Microservices integration uses APIs and event-driven patterns for loosely coupled, scalable connections suited to cloud-native environments. Each approach balances complexity, flexibility, and operational overhead differently. Kanerika evaluates your application landscape to recommend the optimal integration architecture—schedule a consultation to modernize your systems connectivity.
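A quick calculation shows why point-to-point integration becomes hard to scale. The sketch below assumes the worst case, where every system must exchange data with every other system, and counts one connection per pair; the function names are made up for this example.

```python
def point_to_point_links(systems):
    """Connections needed when every pair of systems is wired together directly."""
    return systems * (systems - 1) // 2

def hub_and_spoke_links(systems):
    """Connections needed when each system connects only to a central hub."""
    return systems

for n in (5, 10, 20):
    print(n, "systems:", point_to_point_links(n), "direct links vs",
          hub_and_spoke_links(n), "hub links")
```

With 10 systems, a full point-to-point mesh needs 45 connections while a hub needs 10, and the gap widens quickly as more systems are added.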

Is SQL a data integration tool?

SQL itself is a query language, not a data integration tool, though it serves as the foundation for many integration processes. SQL enables data extraction, transformation, and manipulation within relational databases and modern cloud warehouses. Data engineers use SQL extensively to write transformation logic executed by integration platforms. Tools like dbt rely primarily on SQL for in-warehouse transformations, while traditional ETL platforms embed SQL within visual workflows. Stored procedures and database scripts can perform basic integration tasks, but they lack the orchestration, scheduling, and connector capabilities of dedicated data integration tools. SQL proficiency remains essential for anyone working in data integration. Kanerika’s engineers build optimized SQL-based pipelines across leading platforms. Contact us for expert implementation support.
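The division of labor can be illustrated with a short Python script: the transformation logic is plain SQL, while the surrounding code plays the part of the tool that connects to the database and runs it. The `raw_orders` and `customer_revenue` tables are hypothetical, and SQLite stands in for a warehouse purely for the sake of a runnable example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Hypothetical raw table, as it might arrive from a source system.
conn.executescript("""
CREATE TABLE raw_orders (order_id INTEGER, customer TEXT, amount REAL, status TEXT);
INSERT INTO raw_orders VALUES
  (1, 'alice', 20.0, 'paid'),
  (2, 'alice', 5.0,  'refunded'),
  (3, 'bob',   12.5, 'paid');
""")

# The transformation itself is ordinary SQL: filter, aggregate, materialize.
TRANSFORM_SQL = """
CREATE TABLE customer_revenue AS
SELECT customer,
       SUM(amount) AS revenue,
       COUNT(*)    AS paid_orders
FROM raw_orders
WHERE status = 'paid'
GROUP BY customer;
"""

# Everything outside the SQL (connecting, running, checking results) is the
# part a dedicated integration tool would schedule and monitor.
conn.executescript(TRANSFORM_SQL)
result = conn.execute(
    "SELECT customer, revenue, paid_orders FROM customer_revenue ORDER BY customer"
).fetchall()
print(result)
```

The SQL statement would look much the same inside a transformation framework or a stored procedure; what changes is who schedules it, retries it, and tracks its lineage.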

What are examples of data integration?

Common data integration examples include consolidating CRM and ERP data into a unified customer view, synchronizing inventory across e-commerce platforms and warehouses, and aggregating financial data from multiple subsidiaries for consolidated reporting. Healthcare organizations integrate electronic health records with billing systems for complete patient profiles. Retailers combine point-of-sale transactions with marketing data for customer analytics. Manufacturing companies merge IoT sensor data with production systems for predictive maintenance. Data lake implementations integrate structured database records with unstructured logs and documents. Each scenario requires connecting disparate sources, transforming formats, and delivering trusted data. Kanerika implements these integration patterns across industries—share your use case to explore tailored solutions.
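As a small illustration of the first scenario, the sketch below merges CRM and ERP records into a unified customer view. The field names (`customer_id`, `cust_no`, `balance`) and the sample records are invented for this example; real systems would differ and usually need more careful key matching.

```python
# Hypothetical CRM export: who the customer is and who owns the account.
crm_records = [
    {"customer_id": "C1", "name": "Acme Corp", "owner": "dana"},
    {"customer_id": "C2", "name": "Globex", "owner": "lee"},
]

# Hypothetical ERP export: same customers under a different key name and casing.
erp_records = [
    {"cust_no": "c1", "open_invoices": 2, "balance": 1500.0},
    {"cust_no": "C3", "open_invoices": 1, "balance": 75.0},
]

def unify(crm_rows, erp_rows):
    """Join CRM and ERP rows on a normalized customer key, keeping every CRM customer."""
    erp_by_key = {row["cust_no"].upper(): row for row in erp_rows}
    unified = []
    for customer in crm_rows:
        key = customer["customer_id"].upper()
        finance = erp_by_key.get(key, {})
        unified.append({
            "customer_id": key,
            "name": customer["name"],
            "owner": customer["owner"],
            "open_invoices": finance.get("open_invoices", 0),
            "balance": finance.get("balance", 0.0),
        })
    return unified

customer_view = unify(crm_records, erp_records)
for row in customer_view:
    print(row)
```

Even this toy case shows the three recurring tasks: matching keys across sources, reconciling formats, and deciding what to do when one system has no record for a customer.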

Is Excel an ETL tool?

Excel is not a true ETL tool, though it can handle basic data extraction, transformation, and loading for small-scale needs. Power Query within Excel can connect to external sources, clean data, and load results into worksheets. However, Excel lacks the automation, scheduling, version control, and scalability that enterprise data integration requires. A worksheet holds at most 1,048,576 rows, so Excel cannot handle large data volumes efficiently, and it does not maintain the audit trails needed for governance compliance. Excel serves well for ad-hoc analysis and for prototyping transformation logic before implementing it in a proper ETL platform. Production data pipelines require dedicated data integration tools with orchestration and monitoring capabilities. Kanerika helps organizations graduate from Excel-based workflows to scalable, governed integration platforms. Reach out to modernize your data operations.