Enterprise analytics is going through one of the fastest technology shifts in a decade. With AI agents, Model Context Protocol (MCP) servers, semantic-layer APIs, and real-time decision flows moving from demos into production stacks, the pressure on traditional BI systems has never been higher. In this environment, agentic BI is becoming a strategic priority rather than a reporting upgrade.

Today’s enterprises generate enormous volumes of operational data across sales, finance, supply chain, and customer systems. Yet data analysts in high-demand industries such as finance, retail, and tech spend 50 to 70 percent of their time on ad-hoc requests rather than planned analytical work, according to research from Wren AI. Most of those requests are variations of questions that existing dashboards cannot answer without a human translating them into SQL.

This article covers what agentic BI is, how it works, where it breaks, and how to build it on Microsoft Fabric without sacrificing the accuracy of the numbers.

Key Takeaways

- Agentic BI uses AI agents to query data, build visualizations, and act on insights without dashboard navigation.

- The semantic layer is the single biggest determinant of agentic BI accuracy.

- Production benchmarks show text-to-SQL accuracy drops from 85% on clean schemas to 52% on real enterprise ones.

- Microsoft’s MCP Server for Power BI makes agent access to semantic models a shipped capability, not a concept.

- Kanerika deploys agentic BI on Microsoft Fabric using Karl, its named data insights agent.

What Is Agentic BI?

Agentic BI is a category of business intelligence where AI agents autonomously handle the analytics workflow. Instead of building dashboards and writing queries, users describe what they need in plain English. Agents find the data, build the query, generate the visualization, explain the result, and trigger actions downstream.

An agent plans a multi-step task, picks the data source, writes the query, checks the result, and hands back an answer with reasoning, all without the user touching a visualization or a dashboard. Agentic BI removes the dashboard as the primary interface and replaces it with a conversation that can take action. BI adoption statistics show most enterprises still rely on earlier generations of BI, which is exactly why the shift to agents is needed now.

Three capabilities separate agentic BI from earlier generations:

- Autonomy: The agent decides how to approach a question rather than only how to display an answer.

- Context: Memory persists across a conversation, so follow-up questions build on earlier steps.

- Action: The system can write back to other tools, trigger alerts, and update models within defined guardrails.

The practical consequence is that business users can work with data directly, without filing a ticket with the analytics team and waiting three days for a report.
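The context capability above can be illustrated with a minimal sketch. It assumes a hypothetical `SessionContext` class that stores the filters resolved so far, so a follow-up question only has to state what changed. The class, field names, and filter keys are illustrative and not taken from any specific product.

```python
from dataclasses import dataclass, field


@dataclass
class SessionContext:
    """Hypothetical per-conversation memory: the filters resolved so far."""
    filters: dict[str, str] = field(default_factory=dict)

    def apply_follow_up(self, new_filters: dict[str, str]) -> dict[str, str]:
        # Later turns override earlier values for the same key and keep the rest.
        self.filters = {**self.filters, **new_filters}
        return dict(self.filters)


session = SessionContext()
# First question: "What was revenue in EMEA for 2024-Q4?"
first = session.apply_follow_up(
    {"metric": "revenue", "region": "EMEA", "period": "2024-Q4"}
)
# Follow-up: "And what about APAC?" only changes the region.
second = session.apply_follow_up({"region": "APAC"})
print(second)
```

A real agent would likely keep richer state (prior results, chosen tables, clarifications), but the merge-and-override pattern is the core of how a follow-up builds on an earlier step.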

How Agentic BI Functions Behind the Chat Box

Agentic BI is an orchestration problem wrapped in an accuracy problem. The user sees a chat box. Underneath, several agents hand work to each other, and every step depends on a clean semantic layer. The request first reaches a planning agent, which decomposes the question into sub-tasks. For a question about conversion rates, for example, it pulls conversion data, filters by region and date, compares against prior periods, detects anomalies, and explains the cause.

1. Agents Reading Enterprise Data

Agents connect to warehouses, lakehouses, SaaS APIs, and document stores. In a Microsoft shop, that usually means OneLake, Fabric warehouses, and Power BI semantic models. In a mixed environment, it extends to Databricks, Snowflake, or operational databases.

2. Planning and Decomposing Queries

A large language model turns the natural-language request into an execution plan. Simple questions work well because the plan is short. Compound questions with joins, filters, and comparisons are where most systems start failing, because the AI agent architecture underneath has to handle more reasoning steps without losing the thread.
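As a rough sketch of what an execution plan can look like, the example below models the conversion-data decomposition described earlier as steps with explicit dependencies. The `Step` class and the step names are hypothetical. The ordering uses `graphlib.TopologicalSorter` from the Python standard library (3.9+).

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter


@dataclass(frozen=True)
class Step:
    """One sub-task in a plan (hypothetical structure for illustration)."""
    name: str
    depends_on: tuple[str, ...] = ()


# Hypothetical plan for a question about conversion rates.
PLAN = [
    Step("pull_conversion_data"),
    Step("filter_region_and_date", ("pull_conversion_data",)),
    Step("compare_prior_period", ("filter_region_and_date",)),
    Step("detect_anomalies", ("compare_prior_period",)),
    Step("explain_cause", ("detect_anomalies",)),
]


def execution_order(plan: list[Step]) -> list[str]:
    """Order steps so each one runs after everything it depends on."""
    graph = {step.name: set(step.depends_on) for step in plan}
    # static_order() raises graphlib.CycleError if the plan is circular.
    return list(TopologicalSorter(graph).static_order())


print(execution_order(PLAN))
```

Making dependencies explicit is one way to keep longer plans from "losing the thread": each step can be checked and retried on its own, and a circular or incomplete plan fails before any query runs.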

3. Turning Answers Into Action

Good agentic BI doesn’t stop at the answer. It can push an alert to Teams, update a Power BI report, or open a ticket in a downstream system. The action layer is what separates BI from decision automation, and it’s what agentic AI companies are competing on now.
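Because the action layer can change things outside the BI tool, it is usually wrapped in guardrails. The sketch below assumes a simple policy: some actions run automatically, some wait for a human, and anything else is rejected. The action names and the `route_action` function are hypothetical, not part of any Microsoft API.

```python
from dataclasses import dataclass

# Hypothetical policy: which actions an agent may take on its own.
ALLOWED_ACTIONS = {"send_teams_alert", "open_ticket"}
# Hypothetical policy: actions that require human sign-off first.
NEEDS_APPROVAL = {"update_report"}


@dataclass
class ActionRequest:
    action: str
    payload: dict


def route_action(request: ActionRequest) -> str:
    """Return 'execute', 'hold_for_approval', or 'reject' for a proposed action."""
    if request.action in ALLOWED_ACTIONS:
        return "execute"
    if request.action in NEEDS_APPROVAL:
        return "hold_for_approval"
    # Anything not explicitly listed is denied by default.
    return "reject"


print(route_action(ActionRequest("send_teams_alert", {"channel": "ops"})))
print(route_action(ActionRequest("update_report", {"report": "weekly_sales"})))
print(route_action(ActionRequest("drop_table", {"table": "fact_sales"})))
```

The deny-by-default branch is the important design choice here: an agent that proposes an unexpected action gets a refusal rather than an attempt.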

4. The Semantic Layer and Accuracy

The semantic layer is where raw tables become business meaning. It maps customer_id to “customer.” It defines “gross margin” the same way finance defines it. It enforces which filters apply to which measures, and it’s the single biggest predictor of whether an agentic BI deployment succeeds.

Enterprise text-to-SQL benchmarks show that adding a semantic layer moves production accuracy from around 16% to above 90%. The jump comes from giving the model business definitions, not just from writing better prompts. Microsoft’s MCP Server for Power BI matters because it exposes those semantic models directly to agents, which means the business definitions travel with every query.
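To make the idea concrete, here is a minimal sketch of a semantic layer as a lookup of governed measure definitions. It assumes a hypothetical `SEMANTIC_MODEL` dictionary; the table name, column names, and the "gross margin" expression are placeholders, not definitions from any real model. The point is that the agent asks for a measure by its business name and never writes the expression itself.

```python
# Hypothetical semantic model: one governed definition per business measure.
SEMANTIC_MODEL = {
    "gross margin": {
        "expression": "SUM(revenue - cost_of_goods_sold)",
        "table": "fact_sales",
        "allowed_filters": {"region", "order_date"},
    },
}


def build_query(measure: str, filters: dict[str, str]) -> tuple[str, list[str]]:
    """Build parameterized SQL for a governed measure, enforcing its allowed filters."""
    definition = SEMANTIC_MODEL.get(measure.lower())
    if definition is None:
        raise ValueError(f"Unknown measure: {measure!r}")
    blocked = set(filters) - definition["allowed_filters"]
    if blocked:
        raise ValueError(f"Filters not allowed for {measure!r}: {sorted(blocked)}")
    sql = f"SELECT {definition['expression']} AS value FROM {definition['table']}"
    if filters:
        # Values are passed as parameters, never interpolated into the SQL text.
        sql += " WHERE " + " AND ".join(f"{column} = ?" for column in filters)
    return sql, list(filters.values())


sql, params = build_query("Gross Margin", {"region": "EMEA"})
print(sql)
print(params)
```

An unknown measure or a filter outside the allowed set raises an error instead of producing a guess, which is the behavior that keeps a number consistent with the way finance defines it.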

Where Agentic BI Falls Short

Agentic BI failures repeat in predictable ways, and they usually come down to one thing: the data layer wasn’t in shape when the agent was deployed.

Three issues show up most often:

Hallucinated columns and joins: The model produces a column or join that sounds right but doesn’t match the schema. A column that doesn’t exist at all usually fails at execution, which is at least visible. The more damaging case is a plausible-looking column or join that does exist: the query executes, pulls from the wrong place, and returns a confident, incorrect result. This happens more often when table names are unclear or the prompt doesn’t tie the model closely to the actual schema.

Ambiguous metric definitions: A user asks for “revenue,” but the warehouse holds several versions of that metric. The agent picks one without context. The number looks reasonable, yet it doesn’t match any existing report, and teams end up arguing over which figure is correct.

Accuracy drops on complex queries: On the BIRD benchmark, GPT-5 reaches about 74.1% accuracy on straightforward enterprise queries and falls to around 35% as complexity increases. Most real-world schemas look more like BIRD than the simplified setups shown in demos, so this decline shows up quickly in practice.
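One inexpensive way to ground generated SQL in the real schema is to compile it against the database before running it. The sketch below uses SQLite from the Python standard library as a stand-in for a warehouse; the `orders` table and the `check_against_schema` helper are hypothetical. In SQLite, prefixing a statement with `EXPLAIN` compiles it without running it against the data, so an unknown column surfaces as an error.

```python
import sqlite3
from typing import Optional


def check_against_schema(conn: sqlite3.Connection, sql: str) -> Optional[str]:
    """Return None if SQLite can compile the query, else the compile error text."""
    try:
        # EXPLAIN compiles the statement and returns its plan instead of its rows.
        conn.execute(f"EXPLAIN {sql}")
    except sqlite3.OperationalError as exc:
        return str(exc)
    return None


# Stand-in schema for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (order_id INTEGER, customer_id INTEGER, net_amount REAL)"
)

ok = check_against_schema(conn, "SELECT SUM(net_amount) FROM orders")
bad = check_against_schema(conn, "SELECT SUM(total_revenue) FROM orders")
print(ok)
print(bad)
```

This check only catches names that do not exist. A query that uses a real but wrong column still compiles, which is why schema grounding is usually paired with governed metric definitions and a validation step on the result.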

| Failure mode | Root cause | Fix |
| --- | --- | --- |
| Hallucinated columns | No schema grounding | Expose schema through MCP or retrieval layer |
| Wrong metric definition | No business glossary | Semantic layer with defined measures |
| SQL accuracy collapse | Complex joins, no semantic views | Pre-built views for common query patterns |
| Wrong numbers, confidently | No validation step | Post-query validation before results reach the user |
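The last row, post-query validation, can be as simple as comparing the agent's number with a trusted reference before showing it. The sketch below assumes a hypothetical control total taken from an existing governed report and a 1% relative tolerance; both the `validate_result` helper and the tolerance are illustrative choices, not a standard.

```python
import math
from typing import Optional


def validate_result(
    value: Optional[float], control_total: float, rel_tolerance: float = 0.01
) -> list[str]:
    """Return a list of problems; an empty list means the result may be shown."""
    if value is None or math.isnan(value):
        return ["result is empty"]
    problems = []
    # Compare against a trusted figure, allowing a small relative difference.
    if not math.isclose(value, control_total, rel_tol=rel_tolerance):
        problems.append("result differs from the control total beyond tolerance")
    return problems


print(validate_result(1000.0, control_total=1004.0))
print(validate_result(1250.0, control_total=1004.0))
print(validate_result(None, control_total=1004.0))
```

A control total is not available for every question, so in practice this kind of check tends to cover the high-stakes measures first, with weaker checks (row counts, null rates, value ranges) for the rest.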

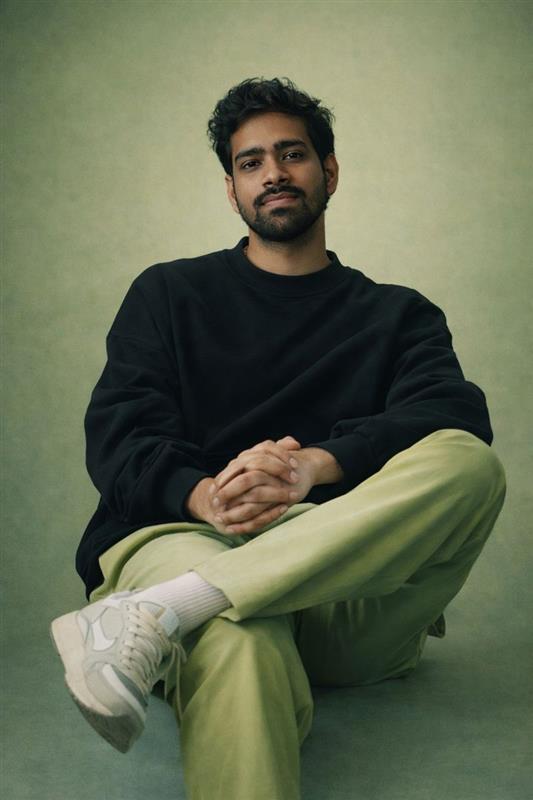

Kanerika’s Karl as Agentic BI

Kanerika is an AI-first data and analytics consulting firm that builds production agentic AI on Microsoft Fabric, Databricks, and Snowflake, covering data integration, semantic modeling, governance, and agent deployment as a single engagement. It is a Microsoft Solutions Partner for Data and AI with the Analytics Specialization, a Microsoft Fabric Featured Partner, and one of the earliest Microsoft Purview implementers globally, with a Microsoft MVP on staff. The practice is ISO 27001, SOC 2 Type II, and CMMI Level 3 certified and GDPR compliant. Karl is the data insights agent that comes out of that work.

What Karl Does

Karl answers questions in plain English, returns a chart with a written explanation, and keeps a follow-up ready. No SQL, no dashboard navigation, no ticket to the analytics team.

Karl connects to SQL, NoSQL, cloud warehouses, and live data streams. He queries data where it lives, so there’s no pipeline project to run before the first useful answer.

Four things that separate Karl from a chatbot bolted onto a dashboard:

- No pipeline project upfront. Karl works against existing data sources directly. First useful answer in days, not months.

- Context across the session. Follow-up questions build on prior answers rather than treating every question as the first.

- Charts generated on demand. The visualization fits the question, not a default template.

- Data stays in place. Karl runs inside the client environment, which matters in healthcare, finance, and any regulated industry.

Karl has active deployments across finance, retail, and manufacturing. Operations teams pull live inventory numbers during meetings instead of filing a request afterward. Executives who used to wait days for analyst-built decks now ask Karl directly before board calls.

Case Study: Up to 30% Faster Inventory Reconciliation for a UK Manufacturer

A UK-based manufacturer of building products runs windows, doors, and cladding production across multiple sites. Inventory moved constantly through Microsoft Navision, with transactions logged as coded Item Ledger Entries that business users could not read without a technical translator. Variance analysis took days. Reconciliation relied on SQL experts. Operations, warehouse, and finance teams regularly disagreed on which number was correct.

Challenge

- Complex ERP data structures in Microsoft Navision made variance analysis time-intensive

- Manual reconciliation led to delays and inconsistent interpretations across teams

- Business users depended on technical teams to write SQL queries, which slowed every resolution

- Over 400 recurring reports reflected these mismatches, making accuracy and compliance harder to maintain

Solution

- Kanerika deployed Karl, its data insights agent, to interpret ERP data and answer variance questions in natural language

- Karl was trained on real transaction data and business logic so variance detection was automated, accurate, and explainable

- A Data Dictionary framework translated coded ERP fields into business-readable terms, turning cryptic Navision entries into self-service insights

- Karl surfaced the top 5 to 10 recurring variance patterns proactively, so teams could act on root causes instead of investigating the same mismatches each week
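The Data Dictionary idea in the solution above can be sketched as a pair of lookups: one for field names and one for coded values. Everything in the example is an invented placeholder for illustration, including the field names, the codes, and the labels. It does not reproduce the client's dictionary or the actual Navision schema.

```python
# Placeholder mappings for illustration only; not the real ERP schema.
FIELD_LABELS = {
    "ItemNo": "Item number",
    "Qty": "Quantity",
    "EntryType": "Movement type",
}
ENTRY_TYPE_LABELS = {
    0: "Purchase",
    1: "Sale",
    2: "Positive adjustment",
    3: "Negative adjustment",
}


def translate_entry(raw: dict) -> dict:
    """Rename coded fields and decode coded values into business-readable terms."""
    readable = {}
    for field_name, value in raw.items():
        # Fields without a dictionary entry keep their original name.
        label = FIELD_LABELS.get(field_name, field_name)
        if field_name == "EntryType":
            # Unknown codes are flagged rather than silently passed through.
            value = ENTRY_TYPE_LABELS.get(value, f"Unknown code {value}")
        readable[label] = value
    return readable


print(translate_entry({"ItemNo": "W-1001", "Qty": -4, "EntryType": 3}))
```

Keeping the dictionary as data rather than logic means business users can review and correct the labels without touching the agent itself.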

Result

- Weekly inventory reconciliation time dropped by 20 to 30%, tightening the closing cycle and freeing finance and operations teams from repetitive investigation work

- Time-to-insight fell by over 50% as business users pulled answers directly from Karl using natural-language queries, cutting dependency on the BI team

- Over 10 recurring variance patterns were detected automatically, turning repeated manual investigations into one-time fixes at the source

- Improved accuracy carried across 400+ recurring reports, bringing operations, warehouse, and finance teams onto the same numbers and reducing compliance risk

- ERP interpretation was standardized across the business, so a variance meant the same thing in a finance report as it did on the warehouse floor

Wrapping Up

Agentic BI is moving from keynote slide to shippable feature fast, and Microsoft’s MCP Server announcement is the clearest proof so far. The teams that win with it will be the ones that treat it as an architecture problem, not a product purchase.

Semantic layer work, governance, and honest benchmarking against your own schemas decide whether agents become trusted analysts or expensive liabilities. Start with a clean model, pick one workflow, measure accuracy against ground truth, and expand only when the numbers hold up.
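Measuring accuracy against ground truth can start small. The sketch below assumes a hand-written set of question pairs, each with the SQL the agent produced and a reviewed "gold" query, and scores them by whether both return the same rows. It uses SQLite as a stand-in for a warehouse; the `sales` table, the queries, and the helper names are hypothetical.

```python
import sqlite3
from collections import Counter


def same_result(conn: sqlite3.Connection, candidate_sql: str, gold_sql: str) -> bool:
    """True if both queries return the same rows, ignoring row order."""
    try:
        candidate = conn.execute(candidate_sql).fetchall()
    except sqlite3.Error:
        # A query that fails to run counts as a miss.
        return False
    gold = conn.execute(gold_sql).fetchall()
    return Counter(candidate) == Counter(gold)


def execution_accuracy(conn: sqlite3.Connection, cases: list[tuple[str, str]]) -> float:
    """Share of (candidate, gold) pairs whose results match."""
    hits = sum(same_result(conn, cand, gold) for cand, gold in cases)
    return hits / len(cases)


# Stand-in data for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount INTEGER)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)", [("EMEA", 100), ("EMEA", 50), ("APAC", 70)]
)

GOLD_BY_REGION = "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"
CASES = [
    # Same answer, written differently.
    ("select sum(amount) from sales where region = 'EMEA'",
     "SELECT SUM(amount) FROM sales WHERE region = 'EMEA'"),
    # Same rows in a different order.
    ("SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region DESC",
     GOLD_BY_REGION),
    # Missing filter: runs fine, wrong number.
    ("SELECT SUM(amount) FROM sales",
     "SELECT SUM(amount) FROM sales WHERE region = 'APAC'"),
    # Hallucinated column: fails to run.
    ("SELECT SUM(total_revenue) FROM sales",
     "SELECT SUM(amount) FROM sales"),
]

accuracy = execution_accuracy(conn, CASES)
print(accuracy)
```

Comparing results rather than SQL text avoids penalizing queries that are written differently but correct. Ignoring row order is a simplification, so questions where ordering matters (such as a top-N ranking) would need a stricter comparison.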

Frequently Asked Questions

What is agentic BI in simple terms?

Agentic BI is business intelligence where AI agents handle the work of querying data, building visualizations, and explaining results. Users ask questions in plain English. Agents find the data, run the analysis, return an answer with reasoning, and can trigger downstream actions like alerts or report updates without manual dashboard navigation or SQL.

How is agentic BI different from Copilot in Power BI?

Copilot in Power BI answers questions about data already modeled in a specific report. Agentic BI agents go further. They plan multi-step workflows, access multiple data sources, execute actions downstream, and carry context across conversations. Copilot is one component of a full agentic BI stack, not the whole system on its own.

Does agentic BI replace data analysts?

The short answer is no, though the job shifts noticeably. Agents handle routine reporting and ad-hoc queries against existing models. Analysts shift toward defining metrics, building semantic models agents depend on, designing experiments, and investigating the harder questions agents cannot answer reliably. The work moves up the value chain toward data product ownership rather than disappearing.

What is the biggest reason agentic BI fails in production?

A weak semantic layer. Without clean table and column names, business definitions, synonyms, and relationships, agents guess the structure and meaning of data. Benchmarks show text-to-SQL accuracy drops from above 90% with a strong semantic layer to near 16% without one. Fix the semantic model before deploying the agent, not after.

Is agentic BI accurate enough for enterprise use?

It depends on the data foundation. On clean, well-modeled schemas with a governed semantic layer, production accuracy exceeds 90% on common query patterns. On messy enterprise warehouses without semantic tuning, accuracy can drop below 50% on moderate-complexity queries. Architecture decides the outcome, not the model, which is why benchmark results rarely predict real performance.

How does Microsoft Fabric support agentic BI?

Microsoft Fabric provides the unified data layer (OneLake), semantic modeling (Power BI), conversational interface (Copilot), agent access (Power BI Modeling MCP Server), and governance (Purview). Together they form the most complete agentic BI stack in the Microsoft ecosystem, released progressively through 2025 and 2026. The pieces depend on each other, so teams usually deploy them together.

What is the Power BI MCP Server and why does it matter?

The Power BI Modeling MCP Server, announced in November 2025, lets AI agents interact with Power BI semantic models through the Model Context Protocol. Agents can build, modify, and query models using natural language. It matters because it makes agentic BI a shipped capability inside Microsoft’s stack, not a concept.

How long does it take to deploy agentic BI?

A pilot with one workflow on a clean semantic model takes four to eight weeks. Enterprise-wide rollout with multiple agents, Purview governance, and legacy BI migration typically takes three to six months. Deployments that skip the semantic layer work run longer and fail audits, so most time is spent on foundations rather than agents.