AI solutions have come a long way in a remarkably short time. Sure, ChatGPT got all the headlines and captured the public’s imagination. But the real story? It’s playing out in hospitals, clinics, and healthcare organizations where enterprise-focused AI is quietly delivering results that actually matter—better clinical workflows, genuine operational efficiency, and measurably improved patient care.

Take Microsoft Azure, for instance. Microsoft has rolled out AI capabilities across healthcare systems that do something pretty remarkable: generate intelligent patient timelines. These systems dig through mountains of unstructured clinical data, pull out what matters, and organize it chronologically. The result? Clinicians finally get comprehensive patient histories right when they need them most: at the point of care.

Now, anyone who’s worked in healthcare knows the industry hasn’t exactly been quick to embrace new technology. That’s changing, though. Financial pressures and operational headaches are pushing healthcare organizations to take AI seriously in ways they never have before.

Here’s something that should get the attention of any healthcare executive: research from McKinsey and Harvard University shows that if we actually deploy AI widely across healthcare, we’re looking at potential annual savings between $200 billion and $360 billion for the U.S. system alone. That’s not a small number. And it’s not just theoretical anymore. At major medical conferences recently, doctors have watched AI systems perform real-time clinical documentation. What used to be reactive, fragmented care is starting to look more proactive and connected.

Of course, there’s a catch—there always is. Successfully deploying AI in healthcare means paying serious attention to data security, clinical accuracy, and the maze of regulatory compliance. Despite all the advances, we still need humans in the loop to ensure patient safety and maintain the quality of care that patients deserve.

Transform Your Business with AI-Powered Solutions!

Partner with Kanerika for Expert AI Implementation Services

Why Healthcare Organizations Are Turning to AI to Cut Administrative Costs

Let’s talk about money for a moment, because the financial squeeze on healthcare organizations just keeps getting tighter. According to PwC’s annual analysis, medical costs jumped 7% in 2024. The culprits? Persistent inflation, workforce shortages that show no signs of letting up, pharmaceutical prices that keep climbing, and providers consolidating left and right. These aren’t temporary blips—they’re fundamental economic challenges forcing C-suite executives to seriously consider technologies that can move the needle on operational efficiency.

McKinsey’s research paints an interesting picture: AI tools can automate roughly 45% of administrative tasks across healthcare organizations. When you do the math, that translates to something like $150 billion in potential annual savings. And that’s just administrative work. Even something as straightforward as AI-enabled chatbots could shave $3.6 billion off global healthcare spending by 2025.

What’s actually happening behind these numbers? The technology is processing all that messy, unstructured data—patient records, medical images, clinical documentation—and using it to streamline workflows that used to eat up enormous amounts of time. Beyond just admin work, it’s enhancing how we train medical professionals, helping doctors make more accurate diagnoses, and even speeding up how quickly we can develop new drugs.

A Real Example: How Navina Built a Clinical Intelligence Platform That Works

Let’s look at a concrete example. Navina, a healthcare AI startup, pulled in $55 million in Series C funding in 2025. Not bad for a company that’s essentially trying to solve one of healthcare’s most persistent problems: information overload.

Their AI assistant does something physicians desperately need. It gathers patient data from everywhere—electronic health records, insurance claims, multiple care touchpoints—and turns it into something actually useful: actionable insights that doctors can use during patient encounters. No more hunting through dozens of screens or trying to piece together a patient’s story from fragments.

The American Academy of Family Physicians Innovation Lab evaluated Navina’s platform and found something pretty impressive: it cuts physician pre-visit preparation time by 61%. Think about what that means in practical terms. Instead of spending an hour before seeing patients just trying to get up to speed, doctors can spend that time actually taking care of people. The system even handles the tedious stuff—referral letters, progress notes, clinical summaries—automatically.

Solving the Privacy Puzzle with Synthetic Data

Here’s a thorny problem that keeps healthcare IT executives up at night: patient data privacy. On one hand, you need massive amounts of real patient data to train AI systems effectively. On the other hand, you have very real legal, ethical, and practical obligations to protect that data.

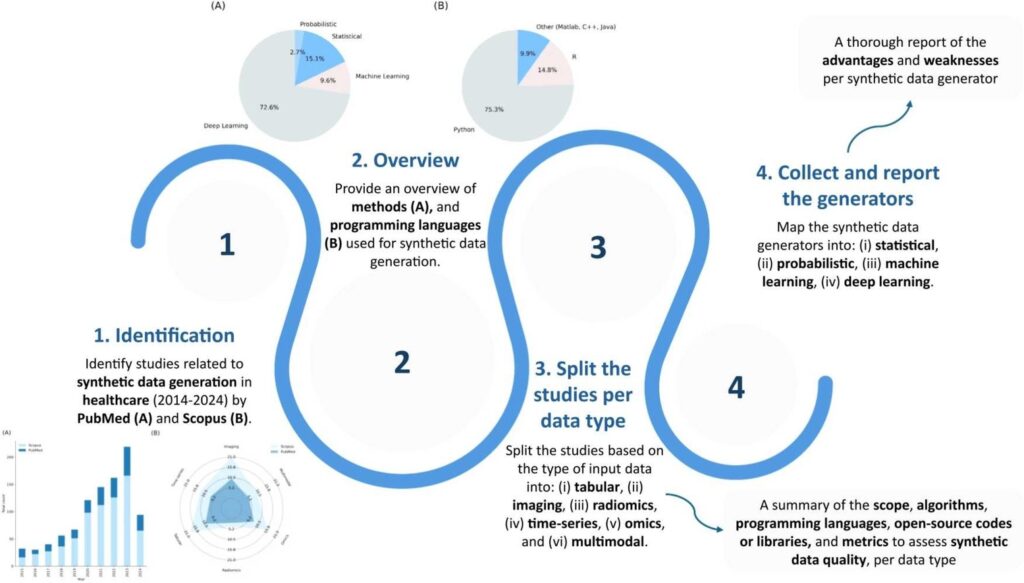

The National Library of Medicine has pointed to an interesting solution: synthetic data generation. Basically, AI creates fake patient data that looks and behaves like the real thing, statistically speaking. Researchers can use this synthetic data to study drug interactions, model disease outbreaks, and develop new algorithms without ever touching actual patient information. It’s not a perfect solution, but it’s proving to be a viable one.

This approach is already accelerating medical research by providing the scalable datasets researchers need to train machine learning models. Healthcare institutions are putting synthetic data to work across all sorts of applications—epidemiological studies, clinical trial design, personalized medicine research. The list keeps growing.

There’s a particularly neat example from Germany. A team of scientists developed something called GANerAid. It’s an AI model that uses Generative Adversarial Network methodology to create synthetic patient data for clinical studies. What makes it especially useful is that it can generate clinically relevant datasets even when you’re starting with limited initial training data. That’s huge for smaller research institutions or studies of rare diseases.

Source: ScienceDirect
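To make the idea of statistically similar fake records concrete, here is a minimal Python sketch. It is not GANerAid and not a GAN at all: it swaps in a much simpler technique, fitting a mean and standard deviation to each numeric column and then sampling each column independently from a normal distribution. The toy records, column names, and function names are all hypothetical, and the approach ignores correlations between columns, which real generators are designed to capture.

```python
import random
import statistics

# Hypothetical toy "real" records; the values are made up for illustration.
REAL_ROWS = [
    {"age": 54, "systolic_bp": 132},
    {"age": 61, "systolic_bp": 141},
    {"age": 47, "systolic_bp": 125},
    {"age": 70, "systolic_bp": 150},
    {"age": 58, "systolic_bp": 137},
]


def fit_column_stats(rows):
    """Return {column: (mean, sample standard deviation)} for numeric rows."""
    stats = {}
    for col in rows[0]:
        values = [row[col] for row in rows]
        stats[col] = (statistics.mean(values), statistics.stdev(values))
    return stats


def sample_synthetic(stats, n, seed=0):
    """Draw n synthetic rows, sampling each column independently.

    Assumption: columns are treated as independent Gaussians, so any
    relationship between columns in the real data is lost.
    """
    rng = random.Random(seed)
    return [
        {col: rng.gauss(mu, sigma) for col, (mu, sigma) in stats.items()}
        for _ in range(n)
    ]


stats = fit_column_stats(REAL_ROWS)
synthetic_rows = sample_synthetic(stats, n=1000, seed=42)
```

None of the synthetic rows corresponds to a real record, yet the per-column averages stay close to the originals. A production generator would also need to preserve cross-column structure and be checked for re-identification risk.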

Making Insurance Operations Less Painful

Anyone who’s dealt with medical insurance knows it can be a bureaucratic nightmare. AI is starting to chip away at that problem, particularly in claims processing and prior authorization.

Prior authorization is a perfect example. Under the current system, it takes an average of ten days to get authorization. Ten days where a patient might be waiting for treatment they need. AI-powered systems are cutting those timelines substantially. We’re not talking about shaving off a few hours here and there—we’re talking about fundamental improvements in how quickly patients can access necessary care.

Kanerika recently worked with a major U.S. insurance company on exactly this kind of challenge. The engagement wasn’t just about implementing some AI tool and calling it done. We built out comprehensive financial analysis and forecasting capabilities that helped them identify critical trends they’d been missing, assess operational risks more accurately, and make data-driven decisions about growth. That’s the kind of work that actually enables strategic expansion rather than just keeping the lights on.

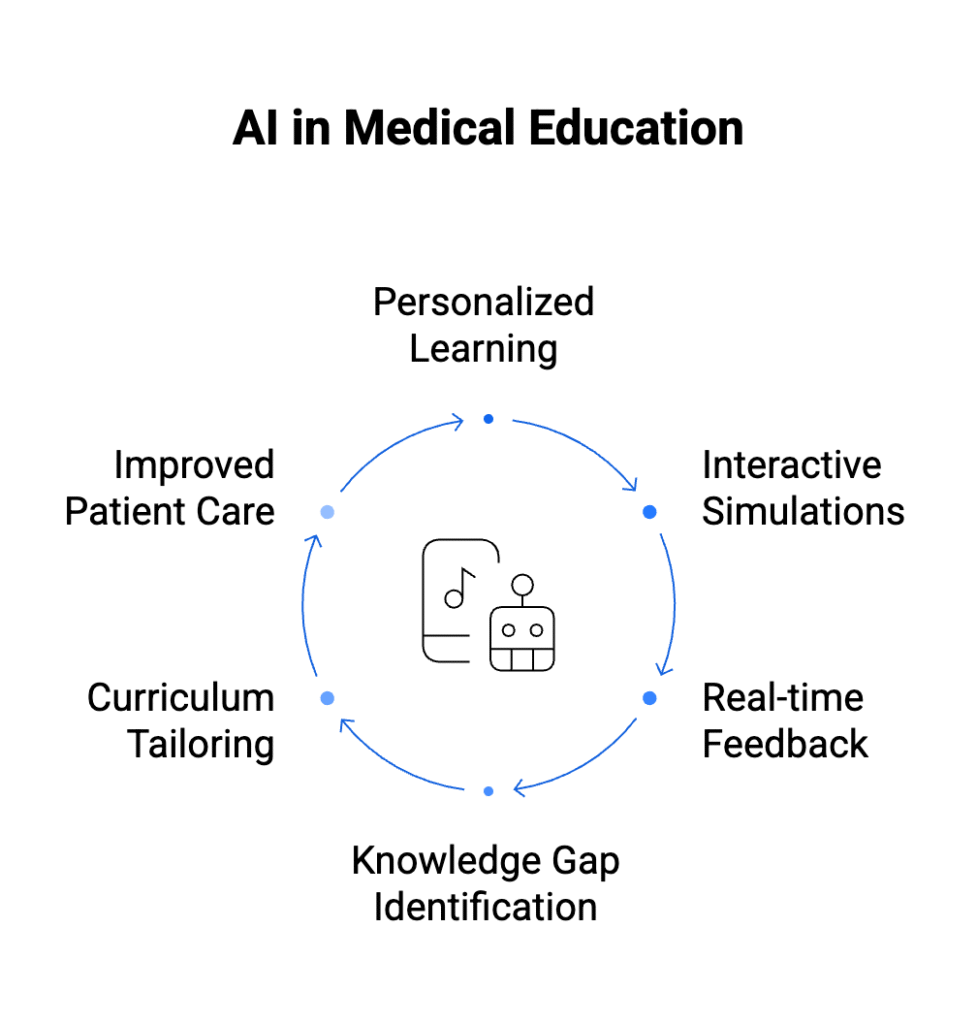

How AI Is Reshaping Medical Education and Clinical Training

Let’s address something uncomfortable but important: medical errors kill people. Johns Hopkins research estimates that medical errors contribute to over 250,000 deaths every year in the United States. Now, these figures are hotly debated among healthcare researchers, and there are methodological questions about how you even count something like this. Other studies put the number somewhere between 200,000 and 400,000 hospitalized patients experiencing some form of preventable harm annually.

Whatever the exact number, it’s too high. And one promising avenue for bringing it down is better training for healthcare professionals.

Traditional medical education has always relied on simulation, but it’s been limited by being, well, pre-programmed. You run through Scenario A, then Scenario B, and so on. The scenarios don’t change based on what you do. They can’t surprise you the way real patients constantly surprise doctors.

AI-powered training systems are different. They dynamically generate patient cases that actually adapt in real-time based on what the trainee decides to do. Make one decision, and the patient’s condition evolves one way. Make a different decision, and you’re dealing with a completely different situation. It’s closer to the messy, unpredictable reality of actual clinical practice, which means it prepares healthcare professionals better for the complex situations they’ll face.
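The branching behavior described above can be sketched in a few lines of Python. This is a deliberately simple, rule-based stand-in rather than a generative model: the patient state, the action names, and every number in it are hypothetical and chosen only to show how the same starting case diverges depending on what the trainee does.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PatientState:
    heart_rate: int
    systolic_bp: int
    on_antibiotics: bool = False


def step(state, action):
    """Apply one trainee action, then let the simulated condition evolve.

    All effect sizes are made-up illustration values, not clinical data.
    """
    hr, bp, abx = state.heart_rate, state.systolic_bp, state.on_antibiotics
    if action == "give_fluids":
        hr, bp = hr - 5, bp + 10
    elif action == "start_antibiotics":
        abx = True
    elif action != "wait":
        raise ValueError(f"unknown action: {action}")
    # Assumption: without antibiotics, the simulated patient worsens each step.
    if not abx:
        hr, bp = hr + 8, bp - 6
    return PatientState(hr, bp, abx)


def run_case(initial, actions):
    """Play a sequence of actions and return the final patient state."""
    state = initial
    for action in actions:
        state = step(state, action)
    return state


start = PatientState(heart_rate=110, systolic_bp=95)
treated = run_case(start, ["start_antibiotics", "give_fluids"])
untreated = run_case(start, ["wait", "wait"])
```

Here `treated` and `untreated` begin from the same patient but end in very different states. An AI-driven simulator would replace the hand-written rules in `step` with a learned or generated model of how the patient responds.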

According to Software Advice’s 2026 Medical Software Trends survey, more than half of healthcare providers (52%) believe AI will have its greatest impact on clinical decision-making, yet most care teams are still learning how to use it effectively. Among practices already using AI, 88% report positive ROI, with NLP (42%) and large language models (36%) being the most adopted tools in clinical workflows. But with insufficient AI skills cited as a key barrier, the real work lies in training care teams to use these tools confidently and consistently.

Universities Leading the Way on AI Medical Simulation

The University of Michigan built an AI model specifically for sepsis training. Sepsis is tricky—it can kill quickly if you don’t catch it early and treat it aggressively. The research team noted something important: AI applications in sepsis management aren’t just about one thing. They span both predictive analytics (identifying which patients are at risk of developing sepsis) and case identification (recognizing when a patient showing up in the emergency department is already septic).

Meanwhile, the University of Pennsylvania took a different angle during the COVID-19 pandemic. They deployed AI simulation models to analyze transmission patterns and evaluate whether public health interventions were actually working. Social distancing protocols, vaccination strategies—they could model all of it and see what made a difference and what didn’t.

Using AI to Catch Diseases Earlier and Diagnose Them More Accurately

In medicine, timing matters enormously. This is especially true in oncology, where catching cancer early can mean the difference between a treatable condition and a terminal diagnosis. AI algorithms trained on huge medical imaging datasets are now matching—and in some specific applications, exceeding—the diagnostic capabilities of experienced clinicians.

These AI systems analyze CT scans looking for lung cancer. They evaluate dermatological images for signs of melanoma. They assess cardiac imaging for cardiovascular disease. What started as experimental research tools have become essential clinical support systems that radiologists, dermatologists, and cardiologists are actually using in their daily practice. The result? Better diagnostic accuracy, which translates directly to better patient outcomes.

But AI in medical imaging isn’t just about interpretation. It’s also improving image quality itself. Hospitals are deploying AI tools that take low-resolution scans and transform them into high-definition images. For radiologists trying to spot subtle abnormalities, that improvement in image quality can make all the difference.

Real Breakthroughs in Medical Imaging

Researchers have been doing fascinating work with Generative Adversarial Network models. These systems take low-resolution medical scans and convert them into high-resolution images. And we’re not talking about one type of scan—this works across multiple imaging modalities. Brain MRIs, dermoscopy, retinal fundoscopy, cardiac ultrasound. The approach has shown significant improvements in how accurately clinicians can detect abnormalities. That’s setting new quality benchmarks for medical imaging across the board.

Then there’s Google’s Med-PaLM 2. When Google trained it on comprehensive medical datasets and tested it on answering medical questions, it hit 85% accuracy. Is it perfect? No. But it represents real, meaningful progress toward AI systems that can support clinical decision-making.

Speeding Up Drug Development Without Compromising Safety

Here’s a sobering fact about pharmaceutical development: bringing a new drug to market can cost up to $2 billion and takes years, often more than a decade, of research and clinical trials. The process is slow, expensive, and filled with failures. Most drug candidates that look promising in early research never make it to patients.

AI is starting to change that equation, though the industry is still figuring out exactly how.

The market for AI-powered drug discovery is expected to hit $4 billion by 2028. According to Forbes, AI solutions are already cutting drug screening timelines by something like 40% to 50%. When you’re talking about a process that normally takes years, shaving off 40% to 50% of that time is transformative. It means drugs reaching patients faster. It means lower development costs. It means pharmaceutical companies can take more shots on goal.

How Big Pharma Is Investing in AI Capabilities

Recursion Pharmaceuticals made headlines when they dropped $88 million to acquire two Canadian AI startups. One of them, Valence, specializes in something particularly interesting: designing drug candidates from datasets that traditional pharmaceutical development approaches can’t really handle. Essentially, they can work with less data and still generate viable drug candidates.

Meanwhile, over at the University of Toronto, researchers developed something called ProteinSGM. It’s an AI system that generates novel protein structures at speeds that would have seemed impossible just a few years ago. Then another AI model—OmegaFold—evaluates those AI-generated proteins to figure out whether they might actually work as therapeutic targets or pharmaceutical compounds. It’s AI systems checking other AI systems’ work, and it’s moving incredibly fast.

The Ethical Minefield of Healthcare AI

There’s a quote from Harvey Cushing, one of the pioneers of neurosurgery, that’s worth remembering: “A physician is obligated to consider more than a diseased organ, more than even the whole man—he must view the man in his world.” That perspective becomes even more critical when we’re deploying AI systems that directly affect patient care, privacy, and clinical outcomes.

There are four big ethical dimensions that healthcare organizations need to grapple with: algorithmic bias, regulatory frameworks (or the lack thereof), clinical accuracy, and figuring out who’s accountable when things go wrong.

Let’s start with algorithmic bias, because frankly, it’s the scariest one. If you train an AI model on data that doesn’t represent the full diversity of the patient population, that model is going to perform worse for underrepresented groups. This isn’t theoretical—it’s already happened. There have been documented cases of AI systems that worked great for some patient populations and delivered inferior care for others, perpetuating and sometimes amplifying existing healthcare disparities.
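One common way to surface this kind of gap is to compute the same performance metric separately for each patient group. The sketch below does that for sensitivity (the share of true cases the model catches). The group labels, the records, and the function names are hypothetical, and a real audit would use far more data and more than one metric.

```python
from collections import defaultdict

# Hypothetical evaluation records: (group, has_condition, model_flagged).
RECORDS = (
    [("A", True, True)] * 4 + [("A", True, False)] * 1
    + [("B", True, True)] * 2 + [("B", True, False)] * 3
    + [("A", False, False)] * 5 + [("B", False, False)] * 5
)


def sensitivity_by_group(records):
    """Return {group: true positives / actual positives} for each group."""
    caught = defaultdict(int)
    missed = defaultdict(int)
    for group, has_condition, model_flagged in records:
        if not has_condition:
            continue  # sensitivity only looks at patients who have the condition
        if model_flagged:
            caught[group] += 1
        else:
            missed[group] += 1
    groups = set(caught) | set(missed)
    return {g: caught[g] / (caught[g] + missed[g]) for g in groups}


def sensitivity_gap(records):
    """Difference between the best- and worst-served group."""
    rates = sensitivity_by_group(records)
    return max(rates.values()) - min(rates.values())


rates = sensitivity_by_group(RECORDS)
gap = sensitivity_gap(RECORDS)
```

In this made-up data the model catches 4 of 5 cases in group A but only 2 of 5 in group B, so a single overall accuracy figure would hide the fact that one group is served far worse than the other.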

Then there’s the regulatory situation, which is… well, let’s just say it’s evolving. The oversight frameworks for healthcare AI are still being developed. Organizations are operating in a lot of gray area, trying to figure out compliance requirements that haven’t been fully defined yet.

Clinical accuracy is non-negotiable, obviously. In most industries, if an AI system makes a mistake, maybe it costs you some money or creates some inconvenience. In healthcare? AI errors can result in serious patient harm or death. The stakes couldn’t be higher.

And finally, there’s accountability. When an AI system contributes to a bad outcome for a patient, who’s responsible? The treating physician who relied on the AI’s recommendation? The healthcare organization that deployed the system? The company that implemented it? The developers who built the algorithm? These aren’t just philosophical questions—they have serious legal and ethical implications that the industry is still working through.

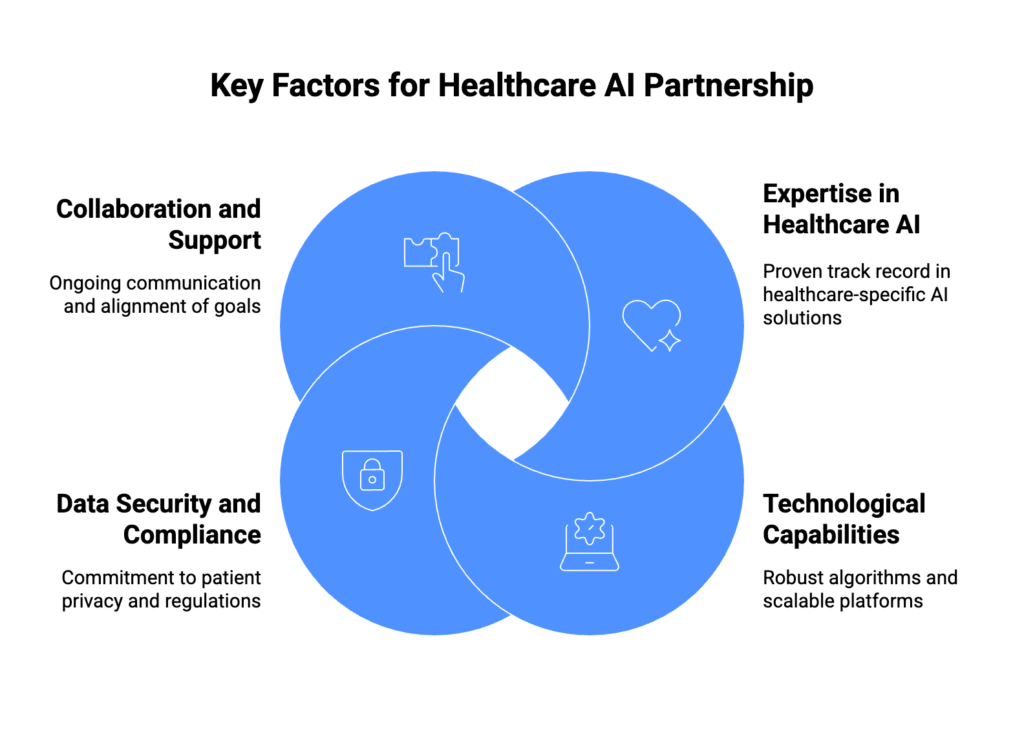

Finding the Right Partner for Your AI Implementation

If your healthcare organization is serious about implementing AI, you need to get really clear on what you’re actually trying to accomplish. Vague goals like “we need AI” or “we should use machine learning” aren’t going to cut it. You need AI solutions tailored to your specific clinical workflows, built around your organizational constraints, and trained on your data.

The implementation process touches everything. You’re selecting algorithms. You’re integrating new systems with existing electronic health records and clinical workflows that people have been using for years. You’re ensuring HIPAA compliance and data security. Depending on what you’re building, you might be dealing with FDA regulatory requirements. It’s complex, it’s technical, and it requires deep expertise in both healthcare and AI.

That’s why the partner you choose matters so much.

What to Look For in a Healthcare AI Partner

Healthcare Domain Expertise Combined with Technical Chops

You need a partner with a proven track record in healthcare AI specifically, not just AI in general. Healthcare is different. The regulatory environment is different. The workflows are different. The stakes are different. Your implementation partner needs to understand clinical workflows, regulatory requirements, and the operational challenges that health systems, physician groups, and payers face every single day.

The methodology matters too. Reliable partners have structured processes they’ve refined through multiple successful deployments in healthcare settings. This isn’t their first rodeo. They know where the pitfalls are, they know what works, and they can move faster because they’ve done it before.

Technology Infrastructure That Fits Your Needs

Partners who’ve built established frameworks and pre-built integration tools can customize solutions to address what you specifically need rather than trying to force-fit a generic solution. These tools and frameworks streamline everything from data aggregation and algorithm training to ongoing performance monitoring and system maintenance. The difference between a partner with these capabilities and one without them can be years of development time and millions of dollars.

Change Management Support

Here’s something a lot of organizations underestimate: the technology deployment is actually the easy part. Getting your organization to actually adopt and use the AI system? That’s where things get hard. People don’t like change, especially when it affects how they do their jobs. Clinical staff have learned workflows that work for them. You’re asking them to change those workflows.

Effective partners provide real change management support. They help you manage the organizational transition. They train your clinical staff. They work with you on workflow optimization. They help shift your organizational culture to embrace AI-augmented care delivery rather than resist it.

Why Healthcare Organizations Choose Kanerika for AI Implementation

Patient data privacy isn’t just a concern—it’s a massive implementation challenge that can derail healthcare AI projects before they even get off the ground. Research published in BMC Medical Ethics lays it out pretty clearly: the huge volumes of data you need to train AI models create serious patient privacy risks. If your organization doesn’t have deep expertise in healthcare data governance and AI security protocols, you’re facing heightened compliance risks and, more importantly, potential patient harm.

This is exactly the kind of challenge Kanerika was built to solve. We’ve spent over two decades working in data management, analytics, and AI/ML technologies. That experience translates into solutions that meet stringent healthcare regulatory requirements while maintaining the ethical standards that patients have a right to expect.

How Kanerika Approaches Healthcare AI Projects

Our team brings together 100+ technology professionals with expertise across cloud infrastructure, business intelligence, AI/ML, and healthcare-specific applications. That might sound like marketing speak, but it matters because healthcare AI implementations fail when you’re missing any one of those pieces. You need people who understand the technology and people who understand healthcare, working together.

What that multidisciplinary capability actually means in practice is that we can architect AI solutions that integrate seamlessly with whatever healthcare IT ecosystem you already have in place while delivering operational improvements you can actually measure. We’re not ripping out systems that work and starting from scratch. We’re making what you have work better.

Proof Points from Real Healthcare Projects

We can talk about capabilities all day, but what have we actually done? Here are some concrete examples:

One major healthcare provider came to us with system performance problems that were affecting patient care. We re-architected their system using Microsoft Azure and delivered a 30% increase in system performance, 35% reduction in support costs, and 80% improvement in response time. Those aren’t small numbers. That’s the difference between a system that frustrates clinical staff and one that actually supports them.

For a global clinical research organization, the challenge was data processing. They had mountains of data but couldn’t process it fast enough to be useful. We implemented data wrangling solutions that cut their data processing time by 60% while simultaneously improving the quality of data they were using for research. Faster processing plus better quality—that’s the kind of outcome that actually changes what an organization can accomplish.

We’ve also deployed intelligent automation for invoice processing across a major North American healthcare provider’s network of 30+ remote healthcare centers. The manual process was causing payment delays and burying staff in data entry. The automated system eliminated those delays and freed up staff to focus on work that actually requires human judgment.

What We Offer Beyond Just Implementation

Kanerika’s healthcare AI practice isn’t just about building technology and walking away. We work with you across the entire lifecycle:

Strategic AI Consulting: We start by assessing where your organization actually is with AI readiness. Then we work with you to identify use cases that align with your strategic objectives and build a roadmap that makes sense for your organization. Not some generic best practices roadmap—one built around your specific situation.

Custom AI Development: We design and implement AI models trained on your data. This matters because off-the-shelf AI solutions often don’t perform well in healthcare settings where each organization’s data, workflows, and patient populations are different. Custom models trained on your specific data deliver performance that generic solutions can’t match.

Healthcare Data Infrastructure: You can’t do AI without solid data infrastructure. We build secure, HIPAA-compliant data platforms that aggregate all those disparate clinical and operational data sources into something AI systems can actually use. If your data is scattered across a dozen different systems, we bring it together.

Regulatory Compliance and Security: This is non-negotiable in healthcare. We implement comprehensive security controls, audit trails, and compliance frameworks that meet HIPAA, HITECH, and FDA requirements for AI-enabled medical devices. You’re not just getting technology—you’re getting technology that meets the regulatory requirements you’re accountable for.

Change Management and Training: We run organizational change programs designed specifically to drive clinical adoption. The best AI system in the world doesn’t deliver any value if your clinical staff won’t use it. We work with you to maximize ROI by ensuring your people actually adopt and effectively use the solutions we build.

Our Commitment to Quality and Security

Look, anyone can say they take security seriously. We back it up with ISO 27701 certification, SOC 2 compliance, and GDPR adherence. These aren’t just checkboxes—they represent the rigorous quality and security standards we maintain across every engagement. For healthcare organizations deploying AI solutions, that commitment to information security and privacy protection means you can move forward with confidence that the data governance and regulatory compliance pieces are handled properly.

Why Healthcare Leaders Choose Kanerika

Healthcare organizations looking to implement AI solutions that actually deliver measurable clinical and operational improvements need partners who bring more than just technical skills to the table. You need deep domain expertise in healthcare. You need proven implementation methodologies. You need comprehensive technology capabilities. And honestly, you need a partner who’s been through this enough times to know where the problems are going to come from before they show up.

Kanerika’s track record across healthcare AI implementations, combined with our commitment to ethical AI development and regulatory compliance, gives healthcare organizations a partner they can actually trust for digital transformation work.

The solutions we build are designed to scale and deliver value over the long term. We’re not interested in delivering something that works great for six months and then becomes a maintenance headache. We build solutions that continue delivering value as your organizational needs evolve and as healthcare technology itself advances.

If you’re ready to move beyond pilot projects and PowerPoints to actual AI implementation that improves how your organization operates, let’s talk.

FAQs

How is generative AI used in healthcare?

Generative AI in healthcare automates clinical documentation, synthesizes patient data for faster diagnoses, and accelerates drug discovery by generating molecular structures. Hospitals deploy these models to draft discharge summaries, create personalized treatment plans, and streamline administrative workflows that burden clinicians. Gen AI also powers conversational assistants that triage patient inquiries and supports medical imaging analysis by enhancing scan quality. These applications reduce physician burnout while improving care delivery speed and accuracy. Kanerika helps healthcare organizations implement generative AI solutions tailored to clinical workflows—connect with our team to explore your use case.

Is generative AI worth the hype in healthcare?

Generative AI delivers measurable ROI in healthcare when deployed strategically. Organizations using gen AI for clinical documentation report up to 40% time savings for physicians, while drug discovery teams cut early-stage research timelines significantly. The technology excels at processing unstructured data like physician notes and radiology reports, extracting insights that traditional analytics miss. However, success depends on proper data governance, HIPAA-compliant infrastructure, and domain-specific model training. Generic implementations often underdeliver. Kanerika specializes in building healthcare AI solutions that balance innovation with compliance—request a free assessment to evaluate your organization’s readiness.

What are the negatives of AI in healthcare?

AI in healthcare faces legitimate concerns including algorithmic bias from training data that underrepresents certain populations, leading to diagnostic disparities. Data privacy risks intensify when models process protected health information without proper safeguards. Clinician over-reliance on AI recommendations can erode diagnostic skills, while opaque black-box models complicate accountability when errors occur. Integration challenges with legacy EHR systems create workflow disruptions, and high implementation costs exclude smaller practices. Regulatory uncertainty around AI-generated medical decisions adds compliance complexity. Kanerika addresses these challenges through governed AI deployments with built-in bias detection and HIPAA-compliant architectures—let us help you implement AI responsibly.

Does AI in healthcare violate HIPAA?

AI in healthcare does not inherently violate HIPAA, but improper implementation certainly can. HIPAA compliance requires that any vendor whose AI system processes protected health information (PHI) signs a Business Associate Agreement (BAA), and that the system implements encryption at rest and in transit, maintains audit trails, and enforces strict access controls. Organizations using cloud-based AI models must ensure PHI is never used to train external models without authorization. The risk emerges when organizations deploy consumer-grade AI tools without evaluating their data handling practices. Compliant healthcare AI requires purpose-built infrastructure and governance frameworks. Kanerika builds HIPAA-compliant AI solutions with embedded security controls. Schedule a consultation to ensure your AI deployment meets regulatory requirements.

Is ChatGPT for healthcare HIPAA compliant?

Standard ChatGPT is not HIPAA compliant and should never process protected health information directly. OpenAI’s consumer product does not come with a Business Associate Agreement, does not guarantee PHI isolation, and may use inputs to train models. However, OpenAI offers enterprise solutions with enhanced security controls that can support HIPAA compliance when properly configured. Healthcare organizations that want ChatGPT-style capabilities should use OpenAI models through Azure OpenAI Service or equivalent HIPAA-eligible infrastructure, with signed BAAs, encryption, and access controls. Using consumer ChatGPT for patient data creates serious compliance violations and breach risks. Kanerika implements secure generative AI solutions for healthcare with full HIPAA compliance. Contact us to build a compliant deployment.

What is the future of AI in healthcare?

The future of AI in healthcare centers on ambient clinical intelligence, where AI passively documents patient encounters, and autonomous agents that handle administrative tasks end-to-end. Multimodal models will integrate imaging, genomics, and clinical notes for comprehensive diagnostic support. Predictive AI will shift care from reactive treatment to proactive intervention, identifying disease risk years earlier. Federated learning will enable model training across institutions without sharing raw patient data. Regulatory frameworks will mature, establishing clear accountability standards. Within five years, AI copilots will assist most clinical decisions. Kanerika helps healthcare organizations prepare for this future with scalable AI infrastructure—talk to us about your roadmap.

What is one of the biggest challenges of AI in healthcare?

Data quality and interoperability represent the biggest challenge for AI in healthcare. Medical data sits fragmented across EHRs, imaging systems, labs, and wearables in inconsistent formats. AI models require clean, standardized, representative datasets to perform reliably, yet most health systems struggle with incomplete records, coding inconsistencies, and siloed repositories. Without proper data integration, even sophisticated algorithms produce unreliable outputs that clinicians cannot trust. Privacy regulations further complicate data aggregation across institutions. Solving this requires robust data engineering before any AI deployment. Kanerika’s data integration and governance expertise ensures your healthcare AI foundation is solid—reach out to assess your data readiness.
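A data readiness assessment often starts with simple counts of missing fields and malformed codes. The sketch below assumes records arrive as plain dictionaries; the field name `diagnosis_code` and the simplified ICD-10-style shape check are illustrative assumptions, not a validation against the actual code set.

```python
import re

# Simplified shape check for ICD-10-style codes (assumption): a letter, a digit,
# an alphanumeric character, then an optional dot and up to four characters.
CODE_SHAPE = re.compile(r"^[A-Z][0-9][0-9A-Z](\.[0-9A-Z]{1,4})?$")


def readiness_report(records, required_fields):
    """Count missing required fields and malformed diagnosis codes."""
    missing = {name: 0 for name in required_fields}
    malformed_codes = 0
    for rec in records:
        for name in required_fields:
            if rec.get(name) in (None, ""):
                missing[name] += 1
        code = rec.get("diagnosis_code")
        # Only present codes are shape-checked; absent ones count as missing.
        if code and not CODE_SHAPE.match(code):
            malformed_codes += 1
    return {
        "total": len(records),
        "missing": missing,
        "malformed_codes": malformed_codes,
    }


# Hypothetical sample: one clean record, one free-text code, one incomplete record.
sample = [
    {"patient_id": "1", "diagnosis_code": "E11.9"},
    {"patient_id": "", "diagnosis_code": "diabetes"},
    {"patient_id": "3"},
]
report = readiness_report(sample, ["patient_id", "diagnosis_code"])
```

Counts like these give a rough baseline for how much cleanup a dataset needs before any model is trained on it.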

Where is AI used most in healthcare?

AI sees its heaviest adoption in medical imaging, where algorithms detect anomalies in radiology scans, pathology slides, and ophthalmology images with accuracy that can approach specialist performance on specific, well-defined tasks. Administrative automation ranks second, with AI handling prior authorizations, claims processing, and clinical documentation. Drug discovery leverages AI for molecular design and clinical trial optimization. Predictive analytics identifies high-risk patients for early intervention, while virtual health assistants manage patient triage and appointment scheduling. Revenue cycle management also benefits from AI-driven coding and denial prediction. These high-volume, data-rich areas offer the clearest ROI for healthcare AI investments. Kanerika deploys AI across these domains with proven implementation frameworks. Explore how we can accelerate your AI adoption.

What is generative AI for disease detection?

Generative AI for disease detection uses deep learning models to identify patterns in medical data that indicate disease presence or progression. Unlike traditional diagnostics, generative models can synthesize training data to improve detection in rare conditions with limited samples. These systems analyze imaging scans, lab results, and clinical notes simultaneously, flagging early-stage cancers, cardiac abnormalities, and neurological conditions before symptoms manifest. Gen AI also generates explanations for its findings, helping clinicians understand diagnostic reasoning. The technology augments specialist expertise, particularly in underserved areas lacking subspecialty access. Kanerika builds custom generative AI disease detection solutions integrated with your clinical workflows—schedule a discovery session to learn more.

What is generative AI in medical imaging?

Generative AI in medical imaging enhances diagnostic capabilities by reconstructing low-quality scans, generating synthetic training data, and producing detailed annotations that guide radiologists. These models can improve the resolution of MRI, CT, and X-ray images. For CT and X-ray, which use ionizing radiation, they can also help reduce patient exposure by reconstructing usable images from lower-dose acquisitions and by reducing the need for repeat scans. Generative adversarial networks synthesize realistic medical images representing rare pathologies, enabling better model training without additional patient data. AI also automates preliminary readings, flagging urgent findings for immediate review and generating structured reports. This technology can reduce interpretation time while improving detection sensitivity for subtle abnormalities. Kanerika implements medical imaging AI solutions that integrate seamlessly with existing PACS systems. Connect with our healthcare AI specialists today.

What is the difference between predictive and generative AI in healthcare?

Predictive AI in healthcare analyzes historical data to forecast outcomes such as patient readmission risk, disease progression, or treatment response, and it outputs probabilities and classifications. Generative AI creates new content: clinical documentation, synthetic patient data for research, drug molecule structures, or personalized care plans. Predictive models answer questions about likelihood; generative models produce original artifacts. Many healthcare applications combine both: predictive AI identifies high-risk patients while generative AI drafts intervention recommendations. Predictive AI typically relies on structured, labeled datasets, while generative models are usually trained on large volumes of unstructured text and images. The two complement each other in comprehensive healthcare AI strategies. Kanerika implements both predictive and generative AI solutions tailored to your clinical objectives. Let us design your integrated AI roadmap.

What is the main advantage of AI in healthcare?

The main advantage of AI in healthcare is its ability to process vast amounts of complex medical data faster and more consistently than humans, enabling earlier diagnoses and more personalized treatments. AI analyzes thousands of variables across imaging, genomics, and clinical records simultaneously, identifying patterns invisible to clinicians. This capability translates into faster time-to-diagnosis, reduced medical errors, and treatments optimized for individual patient characteristics. For healthcare systems, AI automates administrative burden, allowing clinicians to focus on patient care rather than paperwork. The scale of analysis AI enables was simply impossible with traditional approaches. Kanerika helps healthcare organizations capture these advantages through purpose-built AI implementations—reach out for a consultation.

How can generative AI improve public health?

Generative AI improves public health by accelerating disease surveillance, creating targeted health communications, and modeling intervention outcomes at population scale. Public health agencies use gen AI to synthesize epidemiological reports, generate multilingual health education materials, and simulate disease spread under various scenarios. These models analyze social determinants of health data to identify vulnerable populations and draft personalized outreach strategies. Generative AI also speeds vaccine development and optimizes resource allocation during outbreaks by generating predictive scenarios. The technology democratizes public health intelligence, enabling smaller agencies to leverage capabilities previously available only to large institutions. Kanerika partners with public health organizations to deploy generative AI for population health management—contact us to explore solutions for your agency.

How is generative AI used in clinical research?

Generative AI transforms clinical research by automating literature synthesis, designing trial protocols, and identifying optimal patient cohorts from EHR data. Researchers use gen AI to draft regulatory submissions, generate synthetic control arms that reduce placebo group sizes, and summarize thousands of publications into actionable insights. In drug discovery, generative models design novel molecular structures with desired therapeutic properties, which can accelerate early-stage research. AI also monitors trial data in real time, flagging safety signals and suggesting protocol modifications. Natural language processing extracts structured endpoints from unstructured clinical notes, improving data quality. Together, these applications can shorten research timelines considerably, in some stages from years to months. Kanerika supports life sciences organizations with generative AI solutions that accelerate clinical development. Schedule a briefing to learn how.

What is Gen AI in healthcare in 2025?

Gen AI in healthcare in 2025 is in a maturation phase, with generative AI moving from pilots to production-scale deployments across clinical and operational workflows. Leading health systems now deploy ambient documentation that automatically generates visit notes from physician-patient conversations. Multimodal models process imaging, pathology, and genomics data together for comprehensive diagnostic support. Regulatory guidance for AI-enabled medical devices is taking shape at the FDA, although pathways specific to generative AI are still developing. Enterprise adoption focuses on administrative automation, reducing staff burnout and operational costs. Integration with EHR systems is becoming smoother, and models tuned on institution-specific data can deliver higher accuracy. Kanerika helps organizations navigate this evolved landscape with proven healthcare AI implementations. Talk to our experts about your 2025 AI strategy.

Can medical devices use generative AI technology?

Medical devices can incorporate generative AI technology, though they face considerably heavier regulatory scrutiny than non-device software. The FDA evaluates AI-enabled medical devices through its Software as a Medical Device framework, requiring manufacturers to demonstrate safety, efficacy, and continuous performance monitoring. Generative AI in devices must produce consistent, explainable outputs, which is a challenge given model variability. FDA-cleared AI applications to date are concentrated in areas such as imaging analysis and clinical decision support. Manufacturers must implement robust validation protocols, version control, and post-market surveillance. A predetermined change control plan, once authorized by the FDA, allows the specific AI updates it describes to be made without a new submission. Embedded generative AI remains emerging, with most cleared devices using traditional ML approaches. Kanerika guides medical device companies through AI integration with regulatory-compliant development processes. Connect with us to discuss your device roadmap.

Is it legal to use AI in healthcare?

AI in healthcare is legal when deployed within established regulatory frameworks. The FDA regulates AI-enabled medical devices, requiring clearance or approval depending on risk classification. HIPAA governs AI systems processing protected health information, mandating specific security and privacy controls. State medical practice laws may restrict AI-generated diagnoses without physician oversight. Liability frameworks continue evolving, with providers generally remaining accountable for AI-assisted decisions. Informed consent requirements vary by jurisdiction and use case. Organizations must document AI governance policies, ensure algorithmic transparency for clinical decisions, and maintain human oversight for patient-facing applications. Compliance is achievable but requires deliberate planning. Kanerika implements legally compliant healthcare AI solutions with embedded governance—reach out for guidance on your regulatory requirements.

How big is the Gen AI market in healthcare?

The generative AI healthcare market is projected to exceed $17 billion by 2030, growing at over 35% annually. Current spending concentrates on clinical documentation automation, drug discovery acceleration, and administrative workflow optimization. North America leads adoption, driven by EHR infrastructure and reimbursement pressures. Investment flows heavily into foundation models trained on medical data, ambient clinical intelligence platforms, and clinical decision support systems. Large health systems and pharmaceutical companies drive enterprise spending, while venture capital fuels healthcare AI startups. The market expansion reflects demonstrated ROI in reducing physician burnout and accelerating research timelines. Kanerika helps organizations capture their share of healthcare AI value with implementation expertise—request a strategic assessment to identify your highest-impact opportunities.
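The two figures above, a 2030 value and an annual growth rate, together imply a starting market size through ordinary compound-growth arithmetic. The sketch below works that out under a stated assumption: the five-year horizon (2025 to 2030) is chosen for illustration and is not given in the projection itself.

```python
def implied_base(future_value, annual_growth, years):
    """Starting value that compounds to future_value at the given annual rate."""
    return future_value / (1 + annual_growth) ** years


def project(base_value, annual_growth, years):
    """Value after compounding base_value for the given number of years."""
    return base_value * (1 + annual_growth) ** years


# Assumed five-year horizon; 17.0 is in billions of dollars, 0.35 is 35% per year.
base_2025 = implied_base(17.0, 0.35, 5)
```

Under that assumption the implied starting point is roughly $3.8 billion, since 1.35 compounded over five years is a factor of about 4.48. This is an arithmetic illustration only, not an independent market estimate.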