As of 2025, businesses continue to face a major disconnect between data collection and actual insight. According to recent research, 68% of enterprise data still goes unused in analytics workflows. This isn’t due to lack of access; it’s because the data often lives in flat, all-in-one tables that aren’t fit for analysis at scale.

Compounding the issue, 71% of organizations now report that their business intelligence tools struggle to scale with growing data, and 76% say slow or cluttered dashboards are affecting decision-making. The root cause? Poor data modeling. Without clear fact and dimension separation, even modern BI tools can’t perform efficiently.

Addressing these challenges, Microsoft Build 2025 introduced significant enhancements to Power BI and to Microsoft Fabric, the unified data platform Microsoft first announced in 2023 to streamline data management and analytics. These advancements aim to help organizations transform raw data into structured, insightful information efficiently.

That’s why, in this blog, we focus on one of the most practical and powerful modeling approaches available in Power BI—creating a Star Schema from a Single Table using Power Query. We’ll show you how to take a flat table and break it into a clean, scalable data model, step by step, using native Power BI tools.

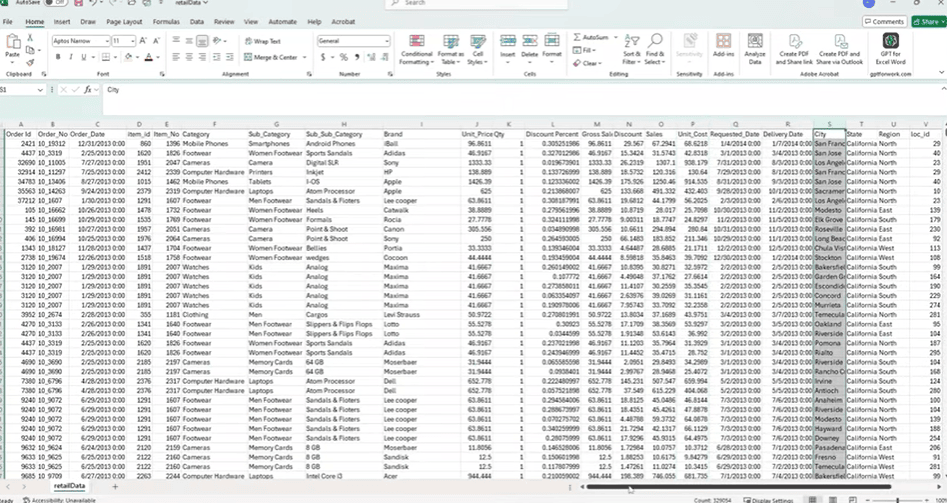

Step 1: Understanding the Dataset to Create Star Schema

Many business datasets are delivered as flat tables—single sheets containing a mix of transactional and descriptive data. These tables often include sales values, product details, customer information, payment methods, and geographic fields, all combined in one structure.

While this format is easy to work with initially, it creates several issues in Power BI. Mixing repeated descriptive fields with transactional data leads to larger file sizes, unnecessary redundancy, and slower performance. It also complicates relationship management and limits the flexibility of the data model.

To resolve this, the data needs to be restructured into a star schema. In this approach:

- A fact table holds transactional data such as sales, quantity, cost, and discount.

- Dimension tables store descriptive attributes like product categories, regions, or payment methods.

The dataset used in this example contains:

- Sales details: Order ID, Order Date, Unit Price, Quantity, Discount

- Product attributes: Item ID, Category, Subcategory, Brand

- Geography: City, State, Region, Location ID

- Business descriptors: Payment Method, Order Type, Customer ID

This structure makes it suitable for building a star schema, which improves performance and supports scalable reporting in Power BI. In the next steps, Power Query will be used to transform this flat table into a clean, relational model.

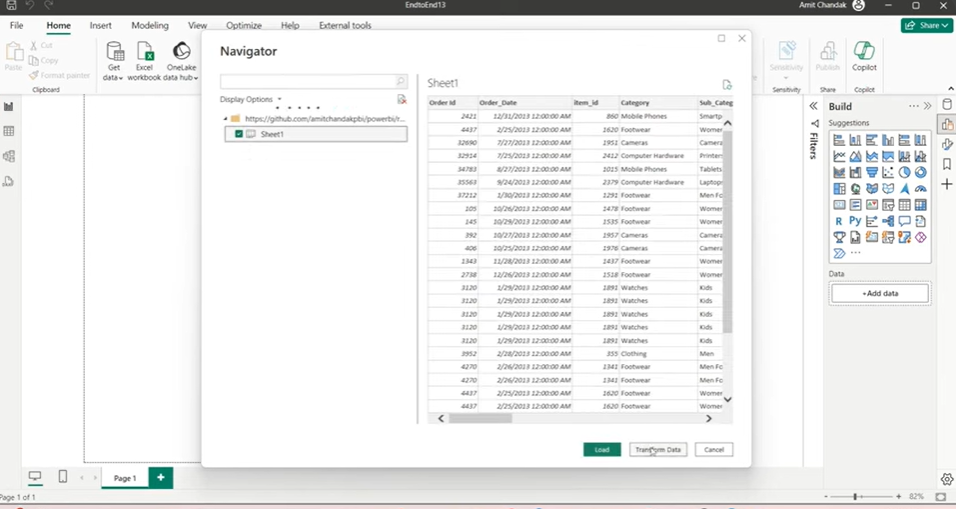

Step 2: Loading the Flat File into Power BI Using Power Query

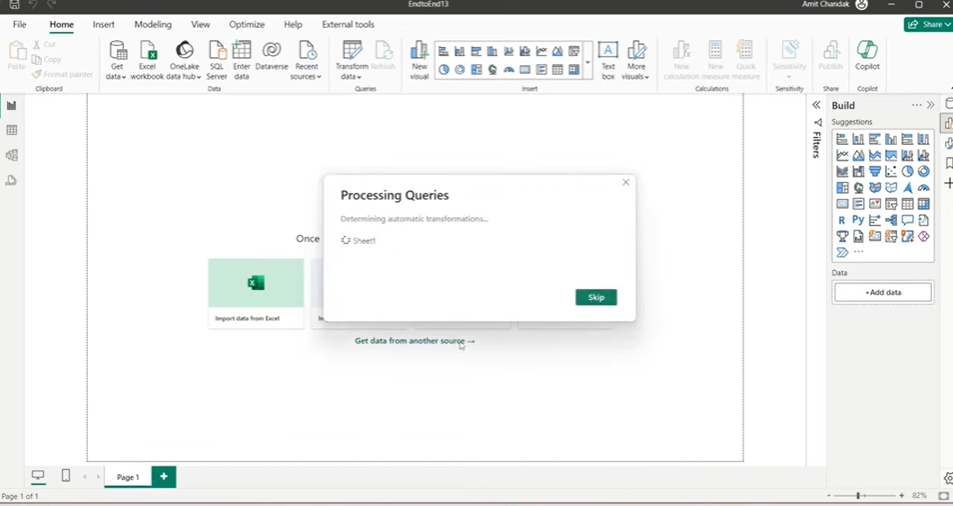

To begin building the star schema, the first step is importing the dataset into Power BI using Power Query. Here’s how the process works, broken down for clarity.

1. Open Power BI and Get the Data

- Launch Power BI Desktop.

- Go to the Home tab and select Get Data.

- Choose your data source:

- Use Web if the file is hosted online (e.g. a GitHub or Dropbox link).

- Use Excel if it’s stored locally.

2. Select the Table

- After connecting, Power BI will show a list of available sheets or tables.

- Select the one that contains the raw data.

- Do not load the data yet—click Transform Data instead.

- This opens the Power Query Editor.

3. Review the Table Structure

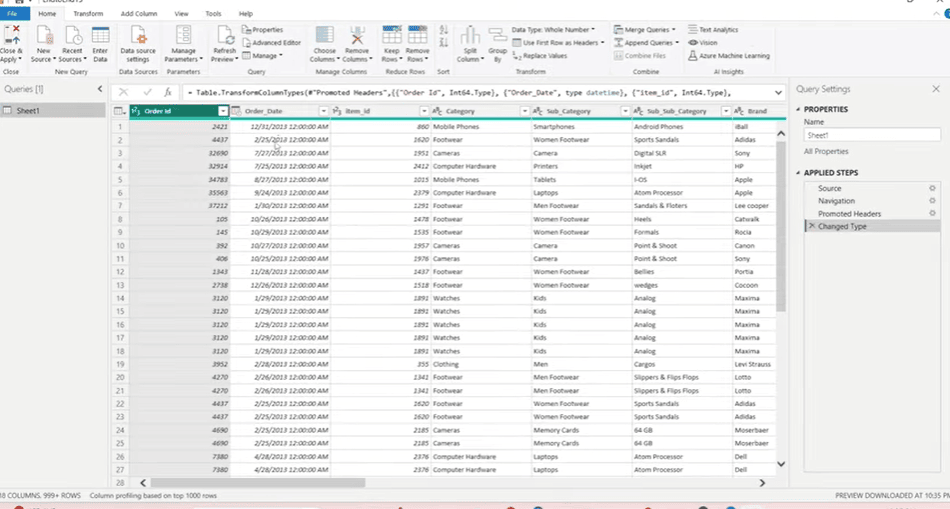

The table should now be open in Power Query. It contains:

- Transactional fields – Order ID, Order Date, Unit Price, Quantity, Discount

- Product attributes – Item ID, Category, Subcategory, Brand

- Geographic fields – City, State, Region, Location ID

- Business fields – Payment Method, Order Type, Customer ID

This flat structure includes both descriptive fields and numeric values. It’s not optimized for reporting but provides everything we need to build a structured model.

4. Why Use Power Query

Power Query lets you:

- Split one table into many (facts and dimensions)

- Remove duplicates

- Create index columns

- Merge and join tables

- Apply all transformations before the data reaches the model

This gives you full control over how the data is shaped and ensures the final model in Power BI is clean, fast, and reliable.

Step 3: Creating Dimension Tables for Power BI Star Schema

Once the data is loaded into Power Query, the next step is to break the flat table into dimension tables. These dimensions will store repeated descriptive values such as product info, locations, and payment types. This improves performance and helps create a proper star schema.

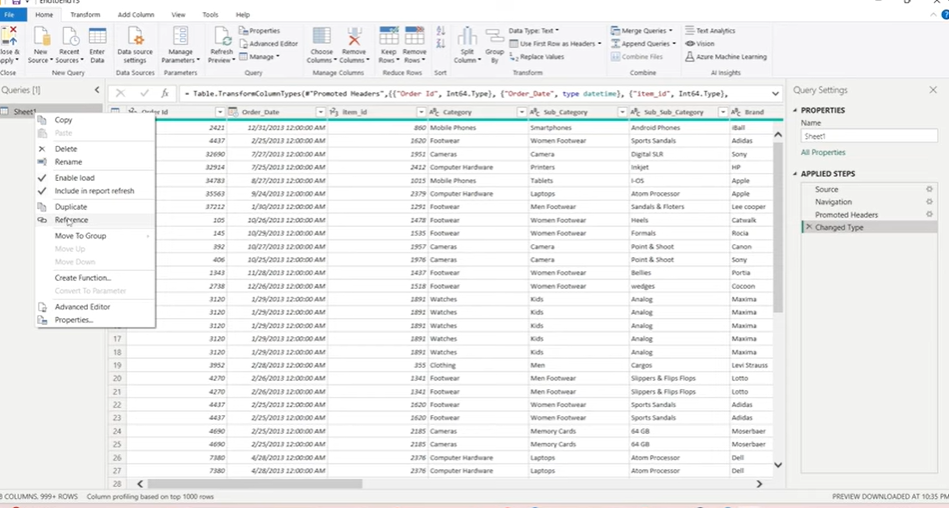

We’ll create dimension tables using the Reference option in Power Query.

1. Use “Reference” Instead of “Duplicate”

In Power Query, you can either:

- Duplicate a table – creates a separate copy with no link to the original

- Reference a table – creates a linked version that shares the data source

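Prefer Reference when building dimensions. A referenced query inherits every step of the base query, so source and cleanup logic live in one place and each dimension stays in sync with it. Note that Reference does not guarantee the source is read only once: on refresh, Power BI may evaluate each loaded query separately.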

To create a reference:

- Right-click the main table

- Select Reference

- A new query will be created, linked to the original

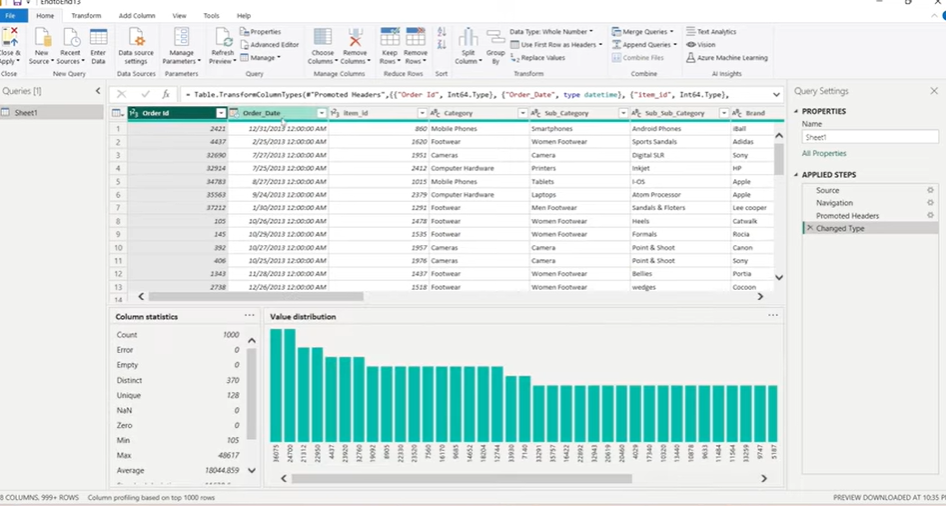

2. Build the Product (Item) Dimension

This table contains product-related attributes.

Steps:

- Reference the main table

- Select the following columns:

- Item ID

- Category

- Subcategory

- Sub-subcategory (if your dataset has one)

- Brand

- Right-click → Remove Other Columns

- Use Remove Duplicates to ensure uniqueness

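- Go to View > Column Distribution to confirm Item ID is unique (profiling covers only the first 1,000 rows by default; switch it to the entire data set in the status bar for a full check)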

- Rename this query to Item

This becomes your Item Dimension.
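To make the logic of these steps concrete outside the Power Query UI, here is a minimal Python sketch. It is not what Power Query runs internally; the sample rows and the helper names `build_dimension` and `is_unique` are made up for illustration. It keeps the chosen columns, drops duplicate rows, and checks that Item ID can act as a key.

```python
# Hypothetical sample rows standing in for the flat sales table.
flat_rows = [
    {"Order ID": 1, "Item ID": "I-01", "Category": "Audio",
     "Subcategory": "Headphones", "Brand": "Acme", "Quantity": 2},
    {"Order ID": 2, "Item ID": "I-02", "Category": "Audio",
     "Subcategory": "Speakers", "Brand": "Acme", "Quantity": 1},
    {"Order ID": 3, "Item ID": "I-01", "Category": "Audio",
     "Subcategory": "Headphones", "Brand": "Acme", "Quantity": 5},
]


def build_dimension(rows, columns):
    """Keep only `columns`, then drop duplicate rows (first occurrence wins)."""
    seen = set()
    dimension = []
    for row in rows:
        key = tuple(row[c] for c in columns)
        if key not in seen:
            seen.add(key)
            dimension.append(dict(zip(columns, key)))
    return dimension


def is_unique(rows, column):
    """True when no value of `column` repeats, so it can serve as a key."""
    values = [row[column] for row in rows]
    return len(values) == len(set(values))


item_dim = build_dimension(flat_rows, ["Item ID", "Category", "Subcategory", "Brand"])
print(len(item_dim), is_unique(item_dim, "Item ID"))
```

If `is_unique` returned False, it would mean the same Item ID appears with different attribute values, which is worth cleaning up before the table is used as a dimension.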

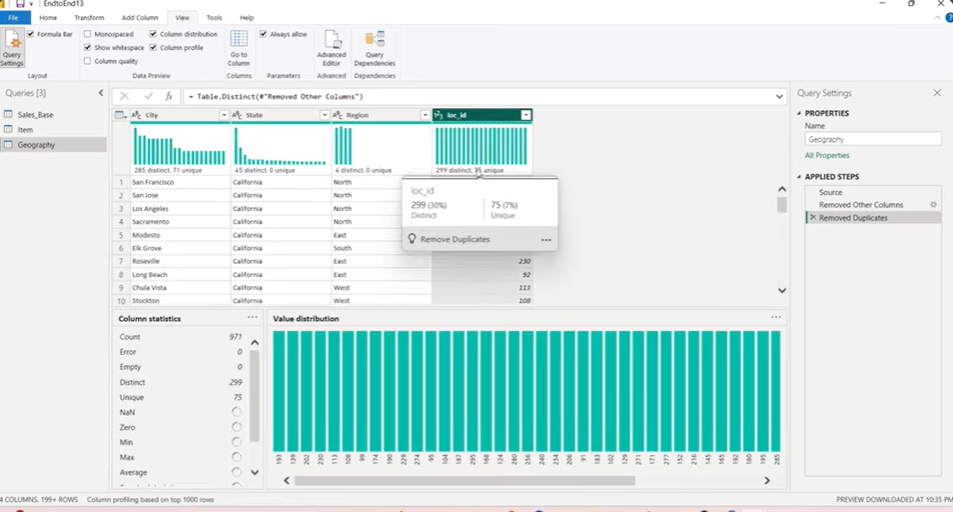

3. Build the Geography Dimension

Next, we create a table with geographic data.

Steps:

- Reference the main table

- Select:

- City

- State

- Location ID

- Remove other columns

- Leave Region out if including it would make Location ID non-unique; it can become its own dimension in the next step

- Use Remove Duplicates

- Check column distribution to ensure Location ID is unique

- Rename the query to Geography

4. Build Single-Column Dimensions (Optional but Recommended)

Some fields like Payment Method, Order Type, or Region are standalone values that still deserve their own dimensions.

Steps (for each field):

- Reference the main table

- Keep only one column (e.g. Payment Method)

- Remove duplicates

- Add an Index Column (starting from 1) to act as a unique key

- Rename the query to match the column (e.g. Payment Method)

This approach ensures even small lookup tables are normalized.
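The same idea can be sketched in a few lines of Python. This is only an illustration with invented rows, and `build_lookup` is a hypothetical helper name: it collects the distinct values of one column and gives each a 1-based surrogate key, which is what the index column does.

```python
def build_lookup(rows, column, id_column):
    """Distinct values of `column`, each given a 1-based surrogate key."""
    distinct = []
    for row in rows:
        value = row[column]
        if value not in distinct:  # comparison is case-sensitive
            distinct.append(value)
    return [{id_column: i, column: v} for i, v in enumerate(distinct, start=1)]


# Hypothetical rows; only the column being turned into a dimension matters.
flat_rows = [
    {"Order ID": 1, "Payment Method": "Card"},
    {"Order ID": 2, "Payment Method": "Cash"},
    {"Order ID": 3, "Payment Method": "Card"},
]

payment_dim = build_lookup(flat_rows, "Payment Method", "Payment Method ID")
print(payment_dim)
```

Because the comparison is case-sensitive, values such as "Card" and "card" would get separate keys, so it helps to standardize text before removing duplicates.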

Step 4: Building the Fact Table for the Star Schema Using Power Query

With the dimension tables ready, the next step is to build the fact table. This table holds the transactional data—sales amounts, quantities, costs—and connects to each dimension using ID columns.

We’ll build the fact table by referencing the base table and merging it with the dimension tables.

1. Start from the Base Table

- Go back to the main query (the original flat table)

- Rename it to something like Sales Base

- Do not make any changes to this query—it will serve only as a source

2. Create a Reference for the Fact Table

- Right-click Sales Base

- Choose Reference

- Rename the new query to Sales Fact

- This will become your main fact table

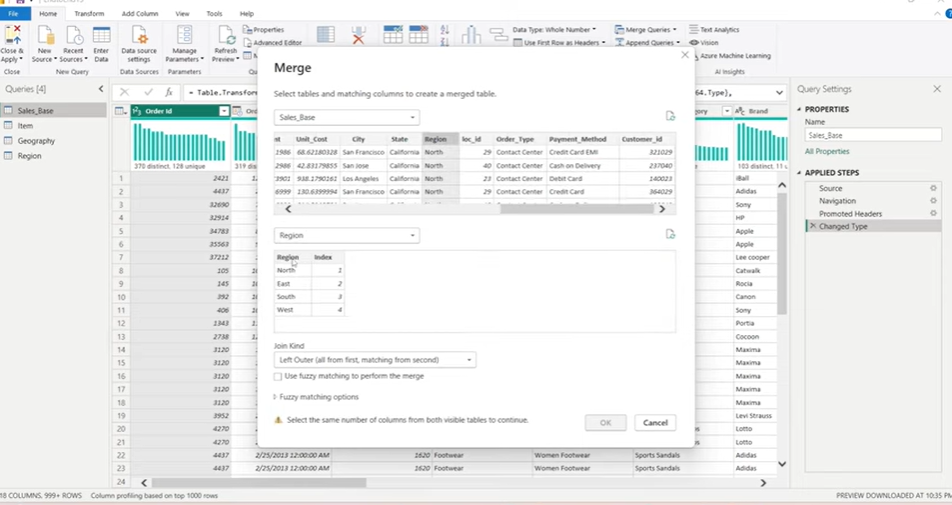

3. Merge Dimension Tables

You’ll now join each dimension to the fact table to bring in the related ID fields.

Example: Merge Region

- In Sales Fact, go to Home > Merge Queries

- Select the Region column in both tables (Sales Fact and the Region dimension)

- Use Left Outer Join

- Click OK

- Expand the merged column

- Select only the index or ID column (e.g. Region ID)

- Rename the new column to match the dimension key (e.g. Region ID)

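Repeat the same steps for each dimension whose key was generated with an index column (Item ID and Location ID already exist in the flat table, so the Item and Geography dimensions need no merge):

- Payment Method table using Payment Method

- Order Type table if needed

- Any other single-column dimensions you’ve created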
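As a rough picture of what each merge accomplishes, the Python sketch below performs a left outer join by hand. The data and the `merge_key` helper are hypothetical: every fact row is kept, the dimension's ID is added, and the original text column is dropped.

```python
def merge_key(fact_rows, dim_rows, on, id_column):
    """Left outer join: add `id_column` from the dimension, drop the text column."""
    lookup = {d[on]: d[id_column] for d in dim_rows}
    merged = []
    for row in fact_rows:
        new_row = {k: v for k, v in row.items() if k != on}
        # A left join keeps unmatched fact rows; their key is simply empty.
        new_row[id_column] = lookup.get(row[on])
        merged.append(new_row)
    return merged


# Hypothetical dimension and flat rows.
payment_dim = [
    {"Payment Method ID": 1, "Payment Method": "Card"},
    {"Payment Method ID": 2, "Payment Method": "Cash"},
]
sales_rows = [
    {"Order ID": 1, "Payment Method": "Card", "Quantity": 2},
    {"Order ID": 2, "Payment Method": "Cash", "Quantity": 1},
    {"Order ID": 3, "Payment Method": "Voucher", "Quantity": 4},  # no match
]

sales_fact = merge_key(sales_rows, payment_dim, "Payment Method", "Payment Method ID")
for row in sales_fact:
    print(row)
```

A row that comes back with an empty key, like the "Voucher" order here, signals a value missing from the dimension and is worth investigating before loading the model.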

4. Finalize the Fact Table

Once all merges are done:

- Keep only necessary fields

- Ensure all dimension IDs are present

- Confirm numeric fields like:

- Unit Price

- Quantity

- Gross Sales

- Discount

- Net Sales

- Optionally, add custom columns in Power Query (e.g. Gross Sales = Quantity * Unit Price)

Now the fact table is complete, with proper keys linking it to each dimension.
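For the optional derived columns, the arithmetic can be sketched as below. Two assumptions are not confirmed by the dataset description: Discount is stored as a fraction (0.10 means 10%), and Net Sales is Gross Sales reduced by that fraction. The `add_sales_columns` helper and the sample order are hypothetical.

```python
from decimal import Decimal


def add_sales_columns(row):
    """Return a copy of `row` with Gross Sales and Net Sales added."""
    gross = row["Quantity"] * row["Unit Price"]
    # Assumption: Discount is a fraction of the gross amount, not a currency value.
    net = gross * (Decimal("1") - row["Discount"])
    return {**row, "Gross Sales": gross, "Net Sales": net}


# Hypothetical order line; Decimal avoids float rounding on currency values.
order = {
    "Order ID": 1,
    "Quantity": 3,
    "Unit Price": Decimal("20.00"),
    "Discount": Decimal("0.10"),
}

result = add_sales_columns(order)
print(result["Gross Sales"], result["Net Sales"])
```

If your Discount column holds a currency amount instead, the net figure would be the gross amount minus the discount rather than a multiplication.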

5. Apply the Changes

- Click Close & Apply in Power BI

- Power BI will load all the tables into the data model

- You do not need to manage the load order; Power BI evaluates the queries and loads the dimension and fact tables for you

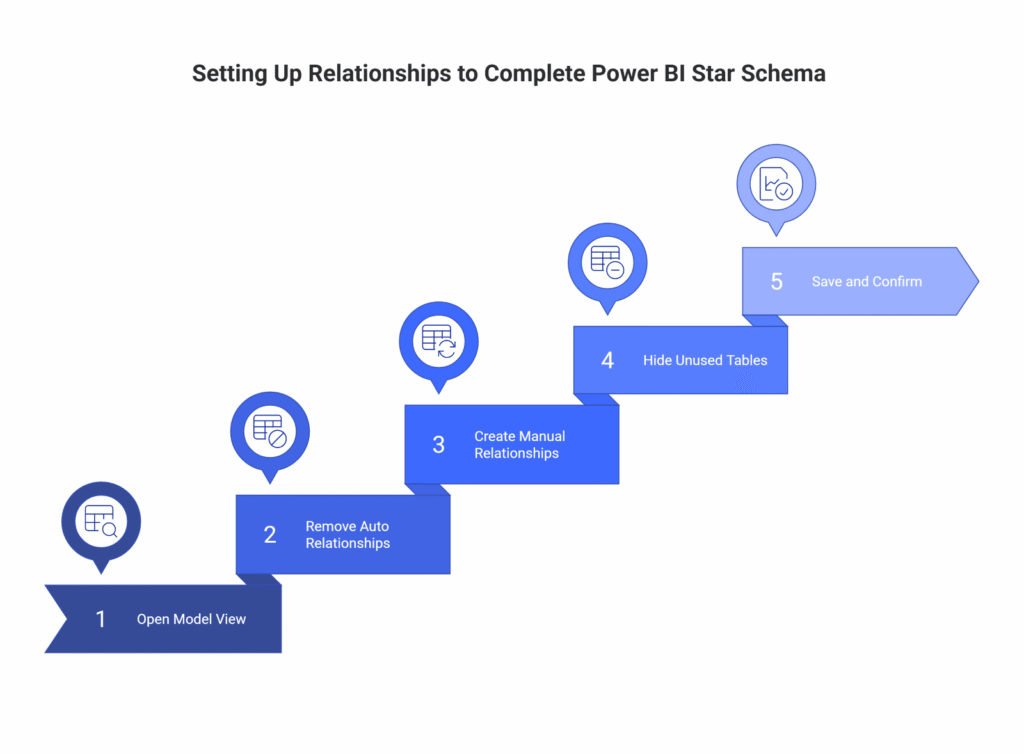

Step 5: Setting Up Relationships to Complete Power BI Star Schema

Once the fact and dimension tables are loaded into Power BI, the final step is to define relationships between them. This creates the actual star schema—a central fact table connected to multiple dimension tables.

Power BI may auto-detect relationships, but it’s best to set them manually to ensure accuracy.

1. Open Model View

- In Power BI Desktop, switch to the Model view from the left sidebar

- You’ll see all your tables laid out as blocks

- If Power BI created relationships automatically, review them carefully

2. Remove Incorrect Auto Relationships

- Select and delete any automatically created relationships

- These are often based on column name matches and may not reflect the actual keys you want to use

- It’s better to define all joins manually

3. Create Manual Relationships

For each dimension, connect its key column to the matching column in the fact table.

Examples:

- Item[Item ID] → Sales Fact[Item ID]

- Geography[Location ID] → Sales Fact[Location ID]

- Payment Method[Payment Method ID] → Sales Fact[Payment Method ID]

- Region[Region ID] → Sales Fact[Region ID] (if applicable)

Settings to use:

- Cardinality: Many-to-One (fact → dimension)

- Cross Filter Direction: Single

Power BI should detect these settings correctly but always double-check.
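A many-to-one relationship only holds if the key is unique on the dimension side, and fact keys with no match in the dimension tend to surface as blank entries in visuals. The Python sketch below, using invented rows and a hypothetical `check_many_to_one` helper, shows the two checks worth running on each key.

```python
from collections import Counter


def check_many_to_one(fact_rows, dim_rows, key):
    """Report dimension keys that repeat and fact keys missing from the dimension."""
    dim_counts = Counter(d[key] for d in dim_rows)
    duplicates = sorted(k for k, n in dim_counts.items() if n > 1)
    orphans = sorted({f[key] for f in fact_rows} - set(dim_counts))
    return {"duplicate_dim_keys": duplicates, "orphan_fact_keys": orphans}


# Hypothetical tables with one problem of each kind.
item_dim = [{"Item ID": "I-01"}, {"Item ID": "I-02"}, {"Item ID": "I-02"}]
sales_fact = [{"Item ID": "I-01"}, {"Item ID": "I-03"}]

report = check_many_to_one(sales_fact, item_dim, "Item ID")
print(report)
```

Both lists should be empty before you rely on the relationship.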

4. Hide Unused Tables (Optional)

To keep the model clean:

- Right-click on Sales Base (the untouched base table)

- Select Hide in Report View

- This keeps it in the background for queries but removes clutter from visuals

5. Save and Confirm

- At this point, your star schema is complete

- Save your file

- Test visuals to confirm that relationships work as expected

With all joins defined, Power BI can now read the model efficiently. This improves performance, reduces redundancy, and simplifies visualizations and DAX logic.

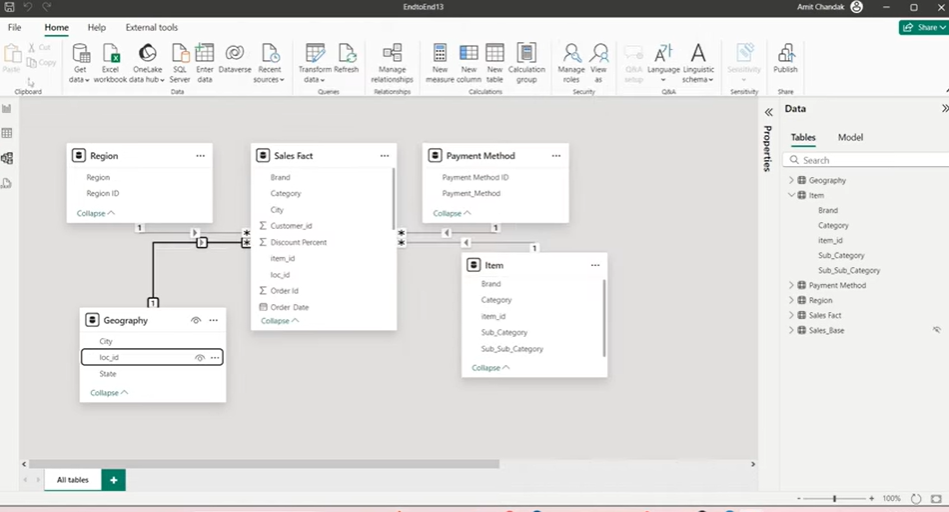

Step 6: Apply and Load Star Schema Model in Power BI

With all tables loaded and cleaned, use this step as a final checklist in Power BI’s Model View: hide the base table, confirm each relationship from Step 5, and verify its properties.

1. Hide the Base Table (Optional)

The original flat table, now renamed as Sales Base, is no longer needed for reports. To reduce clutter:

- Right-click on Sales Base

- Select Hide in Report View

- This keeps the query for reference but removes it from visual-level tools

2. Define Relationships Manually

Power BI may try to auto-detect joins, but it’s best to create them yourself. Each dimension should connect to the fact table using its ID column.

Set up these joins:

- Sales Fact[Item ID] → Item[Item ID]

- Sales Fact[Location ID] → Geography[Location ID]

- Sales Fact[Payment Method ID] → Payment Method[Payment Method ID]

- Sales Fact[Region ID] → Region[Region ID] (if a Region table was created)

3. Set Relationship Properties

For each join:

- Cardinality: Many-to-One (the fact table has repeated values, the dimension doesn’t)

- Cross Filter Direction: Single (from dimension to fact)

These settings ensure your model behaves predictably in visuals and DAX calculations.

Once relationships are in place, your star schema is complete. The model is now ready for building fast, scalable, and reliable reports in Power BI.

Real-World Use Cases: Applying Star Schema Using Power Query in Power BI

Use Case 1: E-commerce Sales Dashboard

Scenario:

An online retail company stores monthly transaction data in a centralized system. The export includes:

- Order ID, Order Date, Product Name, Category, Subcategory

- Quantity, Unit Price, Discount, Total Amount

- Payment Method, Shipping City, Customer ID

All of this is in a single flat Excel or CSV file shared with the BI team.

Problem with the Flat Table:

- Every row repeats product and category info

- City names are spelled inconsistently (e.g. “New York”, “NYC”, “new york”)

- Adding a new metric or dimension becomes harder over time

- Reports get slower as data grows

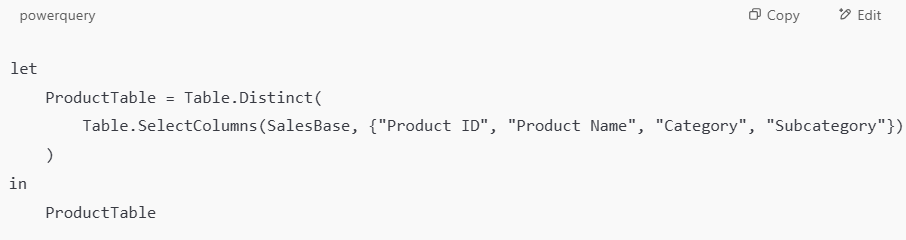

Star Schema Fix:

Split the data into:

- Fact_Sales: Order-level transactional data (Order ID, Quantity, Unit Price, Discount, Total)

- Dim_Product: Product ID, Product Name, Category, Subcategory

- Dim_Payment: Payment Method, with an assigned PaymentMethodID

- Dim_Geography: City, State, Region, Location ID

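Example Power Query Steps (Product Table): reference the flat query, keep Product Name, Category, and Subcategory, remove the other columns, remove duplicates, and add an index column to serve as Product ID, since the export has no product key.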

Business Impact:

- Clean slicers for Category or Payment Method

- Reports like “Top-Selling Products by Region” load noticeably faster

- Easy to add measures like Gross Margin or Average Discount

- Can use a calendar table with time-intelligence functions such as TOTALYTD(), TOTALMTD(), and DATEADD() for YTD, MTD, or LY analysis

Use Case 2: Financial Performance Dashboard for a Services Company

Scenario:

A consulting firm tracks billing data across projects. Their Excel report contains:

- Invoice ID, Invoice Date, Client Name, Client Region, Service Type

- Consultant Name, Billing Rate, Hours Worked, Total Billed

The data is flat and includes many repeated names and service types.

Problem with the Flat Table:

- Every invoice row repeats client and service info

- Changing a client region requires updating many rows

- Complex filters (e.g., all hours billed for a certain service in a region) are slow and unreliable

Star Schema Fix:

Split the data into:

- Fact_Billing: Invoice ID, Consultant ID, Service ID, Client ID, Billing Rate, Hours, Total

- Dim_Client: Client ID, Client Name, Region

- Dim_Service: Service ID, Service Name, Type

- Dim_Consultant: Consultant ID, Name, Department

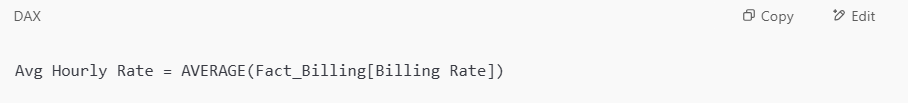

Example Measure in Power BI:
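A measure over this model typically aggregates the fact rows that survive whatever filters the dimensions apply. In DAX that would usually be a SUM or SUMX over Fact_Billing. The Python sketch below only mimics that behavior with invented rows and a hypothetical `total_billed` helper: a Region filter on Dim_Client narrows the Client IDs, and the fact rows are summed through that relationship.

```python
# Hypothetical dimension and fact rows for the billing model.
dim_client = [
    {"Client ID": 1, "Client Name": "Northwind", "Region": "East"},
    {"Client ID": 2, "Client Name": "Contoso", "Region": "West"},
]
fact_billing = [
    {"Invoice ID": 101, "Client ID": 1, "Billing Rate": 150, "Hours": 10},
    {"Invoice ID": 102, "Client ID": 2, "Billing Rate": 200, "Hours": 4},
    {"Invoice ID": 103, "Client ID": 1, "Billing Rate": 150, "Hours": 6},
]


def total_billed(fact_rows, client_rows, region=None):
    """Sum Billing Rate * Hours over fact rows whose client passes the region filter."""
    allowed = {
        c["Client ID"]
        for c in client_rows
        if region is None or c["Region"] == region
    }
    return sum(
        f["Billing Rate"] * f["Hours"]
        for f in fact_rows
        if f["Client ID"] in allowed
    )


print(total_billed(fact_billing, dim_client))
print(total_billed(fact_billing, dim_client, region="East"))
```

The filter is applied to the small dimension table first and then carried to the fact table by key, which is the single-direction filtering the relationships in this model are set up for.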

Business Impact:

- Analysts can easily slice by service, region, or consultant

- Drill-throughs to see billing by client or department become fast and reliable

- New service types can be added without touching historical data

- Regional managers get dashboards filtered to their territory with row-level security

These examples help highlight why star schema isn’t just a “best practice”—it’s the difference between a slow, fragile report and a robust analytics solution that scales.

Why Kanerika is Your #1 Choice for Data Modernization Services

As a leading provider of data and AI solutions, Kanerika recognizes the need for businesses to transition from legacy systems to modern data platforms. Upgrading outdated infrastructure enhances data accessibility, improves reporting accuracy, enables real-time insights, and lowers maintenance costs. With modern platforms, businesses can harness advanced analytics, cloud scalability, and AI-powered decision-making to stay ahead in a competitive landscape.

However, manual migration processes can be complex, time-intensive, and prone to errors, often disrupting critical business operations. Even a minor mistake in data mapping or transformation can lead to inconsistencies, historical data loss, or extended system downtime.

To address these challenges, Kanerika has developed custom automation solutions that streamline migrations across multiple platforms with precision and efficiency. Our automated tools ensure seamless transitions from SSRS to Power BI, SSIS and SSAS to Fabric, Informatica to Talend/DBT, and Tableau to Power BI, minimizing manual effort while preserving data integrity.

Partner with Kanerika for a seamless, automated, and risk-free data modernization experience.

FAQs

What is the difference between star schema and wide table?

Wide tables are less flexible and harder to maintain than a star schema. If the number of data sources increases, the whole table must be rebuilt. A wide table also handles changes in dimensions poorly, whereas a star schema can capture both the latest values and values at any given point in time.

What are the two types of tables in a star schema called?

Fact tables and dimension tables.

A star schema has a single fact table in the center, containing business “facts” (like transaction amounts and quantities). The fact table connects to multiple dimension tables along “dimensions” like time or product.

Can you have multiple fact tables in a star schema?

Yes. A model can hold several fact tables that share dimension tables, an arrangement often called a galaxy or fact constellation schema. The first step in handling multiple fact tables is to identify the grain, or level of detail, of each one. The grain determines the key attributes that uniquely identify each row in the fact table, such as transaction ID, order ID, or invoice ID.

What is star schema with an example?

A star schema is a data warehouse design where a central fact table connects to multiple dimension tables, resembling a star shape. The fact table holds measurable, quantitative data like sales amounts or order quantities, while dimension tables store descriptive attributes like customer names, product categories, or dates. For example, consider a retail sales database. The fact table, Sales, contains numeric metrics such as revenue, units sold, and discount amounts, along with foreign keys linking to surrounding dimension tables. Those dimension tables might include DimCustomer (customer ID, name, region), DimProduct (product ID, category, brand), DimDate (date ID, month, quarter, year), and DimStore (store ID, location, manager). Each dimension table connects to the Sales fact table through a shared key, forming that characteristic star shape. In Power BI, this structure directly improves report performance because DAX calculations and filter propagation work more efficiently across well-defined relationships than against a single flat table. Instead of scanning one wide table with redundant data, Power BI queries compact dimension tables and the lean fact table separately, reducing both model size and query execution time. Breaking a single flat source table into this structure using Power Query is a common data modeling task that produces cleaner, faster, and more maintainable Power BI reports.

What is the difference between star schema and snowflake schema in Power BI?

A star schema connects all dimension tables directly to a central fact table, while a snowflake schema normalizes those dimension tables further by splitting them into sub-dimensions, creating a more complex multi-level structure. In Power BI, star schemas are generally preferred because they produce simpler DAX calculations, faster query performance, and cleaner relationships in the model view. When all dimensions connect directly to the fact table, Power BI’s VertiPaq engine can compress and scan data more efficiently, which translates to faster report load times. A snowflake schema, by contrast, reduces data redundancy through normalization, which can be useful in transactional databases. However, in Power BI, the additional table joins required to resolve a query across multiple relationship levels can slow down performance and make measures harder to write and maintain. For most Power BI projects, the recommended practice is to flatten snowflake structures into a star schema during the data transformation stage, typically using Power Query. This means merging sub-dimension tables into a single dimension before loading the model, so the reporting layer stays simple and performant even when the source data is more complex.

What are the 4 types of data models?

The four main types of data models are flat (single table), star schema, snowflake schema, and galaxy schema (also called a fact constellation schema). A flat model stores all data in one table, which is simple but becomes slow and redundant at scale. A star schema organizes data into a central fact table surrounded by dimension tables, making it the most common choice for Power BI reporting due to its query performance and DAX compatibility. A snowflake schema extends the star schema by normalizing dimension tables into sub-dimensions, reducing redundancy but adding join complexity. A galaxy schema connects multiple fact tables sharing common dimension tables, suited for enterprise-level reporting across different business processes. For Power BI specifically, the star schema is the recommended model because it aligns with how DAX calculates measures, reduces model size, and improves filter propagation across visuals. Kanerika’s Power BI implementations consistently use star schema design as the baseline data modeling standard, since it balances simplicity, performance, and scalability for most business intelligence use cases.

What are the 4 types of fact tables?

The four types of fact tables in dimensional modeling are transaction fact tables, periodic snapshot fact tables, accumulating snapshot fact tables, and factless fact tables. Transaction fact tables record individual events at the most granular level, such as a single sale or a customer support ticket. These are the most common type and work well when you need detailed, row-level analysis. Periodic snapshot fact tables capture the state of a measure at regular intervals, like weekly inventory levels or monthly account balances. They make it easy to track trends over time without querying transactional history. Accumulating snapshot fact tables track the lifecycle of a process from start to finish, recording key milestone dates and measures in a single row. Order fulfillment pipelines and loan processing workflows are typical use cases. Factless fact tables contain no numeric measures at all. They record the occurrence of an event or the relationship between dimensions, such as student class attendance or promotional coverage. These are useful when the mere existence of a relationship carries analytical value. When building a star schema in Power BI using Power Query, understanding which fact table type fits your data determines how you structure your central table and what dimension tables you need to surround it with. Most single-table datasets that get transformed into a star schema follow the transaction fact table pattern, since raw operational data typically stores individual events at the row level.

What are three types of schema?

The three main types of schema used in data warehousing are star schema, snowflake schema, and galaxy schema (also called a fact constellation schema). A star schema organizes data into a central fact table connected directly to multiple dimension tables, forming a star-like structure. It is the most common choice for Power BI models because it simplifies queries and improves performance. A snowflake schema extends the star schema by normalizing dimension tables into sub-dimensions, creating a more complex, multi-level structure. While it reduces data redundancy, it requires more joins and can slow down query performance in reporting tools. A galaxy schema contains multiple fact tables that share dimension tables across a single data warehouse. It suits complex enterprise environments where different business processes need to share common reference data, such as a shared customer or product dimension used by both sales and inventory fact tables. For most Power BI projects, the star schema remains the recommended approach because Power BI’s DAX engine and VertiPaq storage are specifically optimized for it. Kanerika’s data modeling work consistently applies star schema design principles to ensure clean relationships, faster report rendering, and easier maintenance across client BI environments.

What are the 4 components of data warehouse?

A data warehouse is built on four core components: a central data repository, ETL (extract, transform, load) processes, a metadata layer, and an access/query layer. The central repository stores integrated, historical data from multiple source systems in a structured format. ETL processes handle the movement and transformation of raw data into the warehouse, cleaning inconsistencies and enforcing business rules along the way. The metadata layer documents data definitions, lineage, and transformation logic, making it easier for analysts to understand and trust the data. The access layer includes query engines, reporting tools, and BI platforms like Power BI that end users interact with directly. In the context of star schema design, these components work closely together. The fact and dimension tables you build in Power Query live in the repository layer, while Power BI’s DAX engine and report canvas form the access layer. Understanding all four components helps you make better structural decisions, like when to split a flat table into a fact table and star schema dimensions, which is exactly the kind of transformation covered in this guide. Kanerika applies this layered data warehouse thinking when designing scalable BI solutions, ensuring each component is properly aligned before building reporting structures on top.

Is star schema faster than snowflake?

Star schema is generally faster than snowflake schema for query performance in Power BI and most analytical workloads. Because star schema stores dimension data in a single, denormalized table rather than splitting it across multiple related tables, the query engine executes fewer joins to retrieve results. This directly reduces query complexity and improves DAX measure calculation speed in Power BI’s VertiPaq engine. Snowflake schema normalizes dimension tables into sub-dimensions, which saves storage space and reduces data redundancy, but at the cost of requiring additional joins at query time. In a reporting environment where users run frequent, complex aggregations, those extra joins accumulate into noticeable performance overhead. For Power BI specifically, star schema aligns with how the VertiPaq columnar storage engine compresses and scans data. Denormalized dimensions compress efficiently, and the engine can resolve relationships between a central fact table and flat dimension tables with minimal overhead. This is why Microsoft recommends star schema as the preferred data model for Power BI reports. Snowflake schema makes more sense when storage efficiency is a priority or when source data normalization needs to be preserved for data integrity reasons. But if query speed and report responsiveness are the primary goals, star schema consistently outperforms snowflake schema in analytical and business intelligence contexts.

What is the purpose of a star schema?

A star schema organizes data into a central fact table surrounded by dimension tables, making it easier to query, analyze, and visualize large datasets efficiently. The fact table stores measurable, transactional data like sales amounts or order quantities, while dimension tables hold descriptive attributes like customer names, product categories, or dates. This separation reduces data redundancy and improves query performance because analytical tools like Power BI only need to join relevant dimension tables to the fact table rather than scanning a single bloated, denormalized table. For reporting purposes, star schemas dramatically simplify DAX calculations and measure creation in Power BI. Relationships between tables become clean and predictable, which helps Power BI’s query engine optimize filter propagation across visuals. Users building reports can also navigate the model more intuitively since each dimension represents a clear business concept. When working with a single flat table in Power Query, splitting it into a star schema structure means your Power BI model becomes more scalable, performs faster under large data volumes, and produces more accurate results when applying filters or slicers across multiple dimensions.

Is star schema OLAP or OLTP?

A star schema is an OLAP (Online Analytical Processing) structure, not OLTP (Online Transaction Processing). It is specifically designed for analytical workloads (fast querying, aggregation, and reporting across large datasets) rather than for recording individual transactions in real time. OLTP databases are normalized to minimize data redundancy and support high-speed inserts, updates, and deletes. Star schemas take the opposite approach: they intentionally denormalize data into a central fact table surrounded by dimension tables, which reduces join complexity and speeds up read performance for analytical queries. In Power BI, building a star schema from a single flat table using Power Query means you are transforming an OLTP-style or raw data structure into an OLAP-ready model. This separation of measures (facts) from descriptive attributes (dimensions) allows Power BI’s VertiPaq engine to compress and scan data more efficiently, delivering faster DAX calculations and more responsive reports. If your source table mixes transactional detail with descriptive data, which flat exports often do, restructuring it into a star schema before building visuals is a best practice that directly improves model performance and maintainability.

What is the difference between 3NF and star schema?

Third normal form (3NF) and star schema serve different purposes: 3NF is designed for transactional databases to eliminate data redundancy, while star schema is optimized for analytical queries and reporting in data warehouses. In a 3NF structure, data is broken into many normalized tables with strict rules to prevent duplicate values and update anomalies. This works well for OLTP systems where data is frequently inserted, updated, or deleted. However, running analytical queries across dozens of joined tables becomes slow and complex. Star schema deliberately accepts some redundancy to improve query performance. It organizes data into a central fact table surrounded by denormalized dimension tables, reducing the number of joins needed for reporting. A query that might require 10 table joins in 3NF can often be answered with just 2 or 3 joins in a star schema. The practical trade-off comes down to use case. If you are building a source system that handles live transactions, 3NF keeps data consistent and storage efficient. If you are building a Power BI model or any analytical layer where end users run aggregations, filters, and time-based comparisons, star schema delivers faster query execution and simpler DAX measures. In Power BI specifically, the star schema approach aligns with how the VertiPaq engine processes data, making it the recommended modeling pattern for performance-oriented reporting. Kanerika’s data engineering work regularly involves transforming normalized source data into star schema structures using Power Query to support scalable BI solutions.

What are the 5 types of data warehouse architecture?

Five commonly cited types of data warehouse architecture are single-tier, two-tier, three-tier, data mart, and cloud-based. Single-tier architecture stores data in one layer, minimizing redundancy but offering limited query performance. Two-tier architecture separates the data source from the warehouse, improving access but creating scalability challenges. Three-tier architecture is the most common enterprise approach, with a bottom tier (relational database), a middle tier (OLAP server), and a top tier (reporting and analytics tools like Power BI); this is the architecture star schemas are most often designed to support. Data mart architecture breaks the warehouse into smaller, department-specific subsets, making it easier for teams to access relevant data without querying an entire enterprise database. Cloud-based architecture hosts the warehouse on platforms like Azure Synapse Analytics, Amazon Redshift, or Google BigQuery, offering elastic scaling and reduced infrastructure overhead.

For Power BI implementations specifically, the three-tier and cloud-based architectures are most relevant, because star schemas, with their fact and dimension tables, are optimized for the OLAP layer that sits between raw data storage and front-end reporting. Kanerika works across these architecture types, helping organizations design data models that align warehouse structure with reporting performance goals in Power BI and similar platforms.

What is a star schema?

A star schema is a data modeling structure where a central fact table connects to multiple surrounding dimension tables, forming a shape that resembles a star. The fact table holds measurable, quantitative data like sales amounts or transaction counts, while dimension tables store descriptive attributes like customer names, product categories, or date details. This structure is widely used in data warehousing and business intelligence because it simplifies queries, improves report performance, and makes data easier for end users to understand. Instead of storing everything in one flat table, the star schema separates what happened (facts) from who, what, when, and where (dimensions). In Power BI specifically, building a star schema from a single flat table using Power Query means splitting that source data into separate, related tables before it reaches the report layer. This reduces data redundancy, enables efficient DAX calculations, and ensures relationships between tables work correctly in the model. A well-structured star schema is one of the most important foundations for accurate, high-performing Power BI reports.

What is a real-life example of a star schema?

A retail sales database is one of the most common real-life examples of a star schema: a central fact table stores transactional data like sales amounts, quantities, and discounts, surrounded by dimension tables for customers, products, stores, dates, and promotions.

For instance, a supermarket chain might structure its data warehouse so the fact table holds millions of individual sales transactions, each linked to a customer ID, product ID, store ID, and date key. The customer dimension table contains demographic details like name, location, and loyalty tier. The product dimension holds category, brand, and supplier information. The date dimension enables time-based analysis across days, weeks, quarters, and years. This structure lets analysts answer questions like “What was total revenue from organic products in the Northeast region during Q3?” without complex joins across multiple transactional tables.

The same star schema pattern applies across industries, including healthcare (a fact table of patient visits with dimensions for doctors, diagnoses, facilities, and dates), e-commerce (order transactions with dimensions for customers, SKUs, shipping methods, and campaigns), and manufacturing (production runs linked to machines, operators, shifts, and materials). In Power BI specifically, replicating this structure using Power Query, even when your source data starts as a single flat table, improves DAX performance and simplifies measure writing because relationships between tables are clean and direct.
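
As a rough illustration of the supermarket question above, the following Python sketch filters a tiny fact table through three dimensions and sums the result. Every table, key, and value here is made up for the example (including the `is_organic`, `region`, and `quarter` attributes); in Power BI the same logic would normally be expressed through relationships and a DAX measure rather than a loop.

```python
# Hypothetical dimension tables, keyed by surrogate key.
dim_product = {
    1: {"name": "Oat milk", "is_organic": True},
    2: {"name": "Cola", "is_organic": False},
}
dim_store = {
    10: {"region": "Northeast"},
    20: {"region": "South"},
}
dim_date = {
    20240715: {"quarter": "Q3"},
    20240210: {"quarter": "Q1"},
}

# Hypothetical fact table: only keys and measures, no descriptive text.
fact_sales = [
    {"product_key": 1, "store_key": 10, "date_key": 20240715, "amount": 12.0},
    {"product_key": 1, "store_key": 20, "date_key": 20240715, "amount": 8.0},
    {"product_key": 2, "store_key": 10, "date_key": 20240715, "amount": 5.0},
    {"product_key": 1, "store_key": 10, "date_key": 20240210, "amount": 7.0},
    {"product_key": 1, "store_key": 10, "date_key": 20240715, "amount": 3.0},
]


def total_revenue(fact, organic, region, quarter):
    """Sum fact amounts whose dimension attributes match all three filters."""
    total = 0.0
    for row in fact:
        matches = (
            dim_product[row["product_key"]]["is_organic"] == organic
            and dim_store[row["store_key"]]["region"] == region
            and dim_date[row["date_key"]]["quarter"] == quarter
        )
        if matches:
            total += row["amount"]
    return total


organic_northeast_q3 = total_revenue(fact_sales, True, "Northeast", "Q3")
```

Each filter touches exactly one dimension, and each dimension is one hop from the fact table, which is what keeps this kind of question simple to express.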

How do you generate a star schema?

A star schema is generated by decomposing a single flat table into one central fact table and multiple dimension tables, then linking them through foreign key relationships.

Here is the process in practical terms. Start by identifying which columns contain measurable numeric data (sales amount, quantity, revenue); these form your fact table. The remaining descriptive columns (customer name, product category, region, date details) become your dimension tables. Each dimension table gets a unique surrogate key, which is then referenced in the fact table as a foreign key.

In Power BI using Power Query, you execute this by duplicating or referencing your original table, removing irrelevant columns from each copy to isolate specific dimensions, removing duplicate rows so each dimension member appears once, adding index columns to generate surrogate keys, and then merging those keys back into the fact table. Once loaded into the data model, you draw relationships between the fact table and each dimension table using those keys, creating the characteristic star pattern.

The result is a central fact table surrounded by dimension tables, visually resembling a star, which improves query performance, simplifies DAX measure writing, and reduces data redundancy. This structure is the foundation of most analytical data models in Power BI, and following a consistent step-by-step approach, like the six-step method Kanerika outlines, ensures the relationships and keys are set up correctly from the start.
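
The decomposition described above can be sketched in a few lines of Python. This is not Power Query code and not the exact method from this blog; it is a minimal illustration of the same idea (isolate the descriptive columns, de-duplicate them, assign an index as a surrogate key, and write that key back to the fact rows). The sample rows, column names, and the helper names `build_dimension` and `build_star` are all hypothetical.

```python
# Hypothetical flat export: every row repeats the descriptive fields.
flat_rows = [
    {"customer": "Ana", "region": "East", "product": "Tea",
     "category": "Drinks", "qty": 2, "amount": 9.0},
    {"customer": "Ben", "region": "West", "product": "Tea",
     "category": "Drinks", "qty": 1, "amount": 4.5},
    {"customer": "Ana", "region": "East", "product": "Rice",
     "category": "Grocery", "qty": 3, "amount": 6.0},
]


def build_dimension(rows, columns, key_name):
    """Return (dimension rows, lookup from attribute tuple to surrogate key)."""
    lookup = {}
    dimension = []
    for row in rows:
        attrs = tuple(row[col] for col in columns)
        if attrs not in lookup:
            # Comparable to adding an index column that starts at 1.
            lookup[attrs] = len(lookup) + 1
            dimension.append({key_name: lookup[attrs], **dict(zip(columns, attrs))})
    return dimension, lookup


def build_star(rows):
    """Split flat rows into a fact table and two dimension tables."""
    customer_cols = ["customer", "region"]
    product_cols = ["product", "category"]
    dim_customer, customer_keys = build_dimension(rows, customer_cols, "customer_key")
    dim_product, product_keys = build_dimension(rows, product_cols, "product_key")
    # The fact table keeps only surrogate keys and numeric measures.
    fact = [
        {
            "customer_key": customer_keys[tuple(row[c] for c in customer_cols)],
            "product_key": product_keys[tuple(row[c] for c in product_cols)],
            "qty": row["qty"],
            "amount": row["amount"],
        }
        for row in rows
    ]
    return fact, dim_customer, dim_product


fact_sales, dim_customer, dim_product = build_star(flat_rows)
```

After this runs, “Ana / East” is stored once in `dim_customer` instead of on every sale, and `fact_sales` carries only keys and measures. In Power Query the equivalent steps are typically done through the Remove Duplicates, Add Index Column, and Merge Queries operations.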

Is star schema still relevant?

Star schema remains one of the most reliable and widely used data modeling approaches in business intelligence today. Despite the rise of modern cloud data warehouses and columnar storage engines, the star schema’s core advantages (fast query performance, simple navigation, and clean separation of facts and dimensions) still hold up across most analytical workloads.

Tools like Power BI are built with star schema in mind. Microsoft explicitly recommends it as a best practice for Power BI data models because it reduces DAX complexity, improves filter propagation, and speeds up report rendering. When you work through Power Query to split a flat table into fact and dimension tables, you are following a pattern that directly aligns with how Power BI’s engine is optimized to work.

Some argue that denormalized flat tables or wide table formats work just as well in modern tools, and for simple, single-source reports they sometimes do. But as data volume grows, relationships become more complex, and multiple teams share the same model, star schema’s clarity and performance consistency become hard to replace. For organizations building scalable, governed analytics environments, star schema continues to be the foundation worth learning. Kanerika applies this modeling approach when designing Power BI solutions for clients, precisely because it keeps models maintainable as reporting needs evolve. The relevance of star schema is not about tradition; it is about building something that performs reliably and scales without constant rework.